2. Methodology

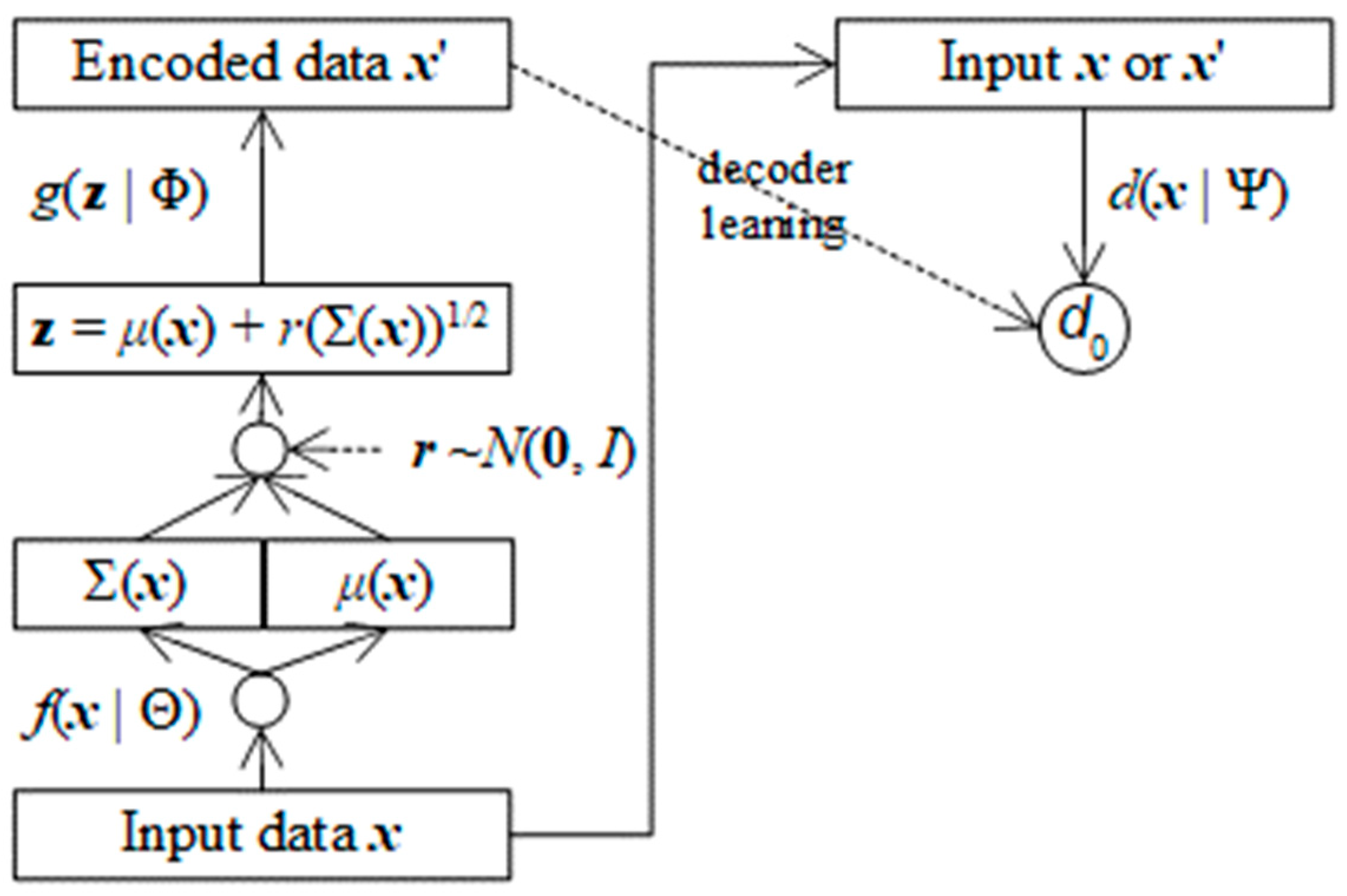

In this research I propose a method and a generative model that incorporate Generative Adversarial Network (GAN) into Variational Autoencoders (VAE) to extend and improve deep generative models. The motivation is that GAN does not concern how to encode original data, whereas VAE lacks a mechanism to assess the quality of generated data. Data encoding is necessary for some essential applications such as image compression and recognition, while quality assessment can improve the accuracy of generated data.

As a convention, let the vector variables x = (x_1, x_2,…, x_m)^T and z = (z_1, z_2,…, z_n)^T be the original data and the encoded data, whose dimensions are m and n (m > n), respectively. A generative model is represented by a function f(x | Θ) = z, f(x | Θ) ≈ z, or f(x | Θ) → z, where f(x | Θ) is implemented by a deep neural network (DNN) whose weights are Θ; it converts the original data x to the encoded data z and is called the encoder in VAE. The decoder in VAE, which is expected to convert the encoded data z back to the original data, is represented by a function g(z | Φ) = x’, where g(z | Φ) is also implemented by a DNN whose weights are Φ, with the expectation that the decoded data x’ approximates the original data x, that is, x’ ≈ x. The essence of VAE is to minimize the following loss function for estimating the encoder parameter Θ and the decoder parameter Φ.

Note that ||x – x’|| is the Euclidean distance between x and x’, whereas KL(μ(x), Σ(x) | N(0, I)) is the Kullback-Leibler divergence between the Gaussian distribution of x, whose mean vector and covariance matrix are μ(x) and Σ(x), and the standard Gaussian distribution N(0, I), whose mean vector and covariance matrix are 0 and the identity matrix I.
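As a minimal sketch of how such a loss can be evaluated for one sample, the Python functions below add half the squared Euclidean distance to the closed-form Kullback-Leibler divergence between N(μ(x), Σ(x)) and N(0, I). The sketch assumes a diagonal Σ(x) stored as a list of variances and a factor 1/2 on the reconstruction term; both are assumptions of this illustration, and the function names are hypothetical.

```python
import math

def kl_to_standard_normal(mu, var):
    """KL( N(mu, diag(var)) || N(0, I) ) in closed form (diagonal case)."""
    return 0.5 * sum(v + m * m - 1.0 - math.log(v) for m, v in zip(mu, var))

def vae_loss(x, x_rec, mu, var):
    """Half squared Euclidean distance plus the KL term (assumed weighting)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, x_rec))
    return 0.5 * sq_dist + kl_to_standard_normal(mu, var)
```

The KL term vanishes exactly when every mean is 0 and every variance is 1, so it penalizes encodings that drift away from the standard Gaussian.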

GAN does not concern the encoder f(x | Θ) = z; instead, it focuses on optimizing the decoder g(z | Φ) = x’ by introducing a so-called discriminator, which is a discrimination function d(x | Ψ): x → [0, 1] that maps the data concerned, x or x’, to the range [0, 1] so that d(x | Ψ) can distinguish fake data from real data. In other words, the larger the result that the discriminator d(x’ | Ψ) produces, the more realistic the generated data x’ is. The function d(x | Ψ) is implemented by a DNN whose weights are Ψ; this DNN has only one output neuron, denoted d_0. The essence of GAN is to optimize mutually the following target function for estimating the decoder parameter Φ and the discriminator parameter Ψ.

Such that Φ and Ψ are optimized mutually as follows:
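In the standard GAN formulation, which matches the description above, the target function for a single sample and the mutual optimization can be written as follows. This is a reconstruction under that assumption (the name b_GAN is illustrative and expectations over the data are omitted), not necessarily the exact notation of this paper.

```latex
b_{GAN}(\Phi, \Psi) = \log d(x \mid \Psi) + \log\bigl(1 - d(g(z \mid \Phi) \mid \Psi)\bigr)

\Phi^{*} = \underset{\Phi}{\mathrm{argmin}}\; b_{GAN}(\Phi, \Psi), \qquad
\Psi^{*} = \underset{\Psi}{\mathrm{argmax}}\; b_{GAN}(\Phi, \Psi)
```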

The proposed generative model in this research is called Adversarial Variational Autoencoders (AVA) because it combines VAE and GAN by a fusing mechanism in which a loss function and a balance function are optimized in parallel. The AVA loss function carries loss information from the encoder f(x | Θ), the decoder g(z | Φ), and the discriminator d(x | Ψ) as follows:

The balance function of AVA, which supervises the decoding mechanism, is the GAN target function as follows:

The key point of AVA is that the discriminator function occurs in both the loss function and the balance function via the expression log(1 – d(g(z | Φ) | Ψ)), which means that the discriminator's capacity to distinguish fake data from real data affects the decoder DNN. As a result, the three parameters Θ, Φ, and Ψ are optimized mutually according to both the loss function and the balance function as follows:
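One reading of the description above is that the AVA loss adds the discriminator term log(1 – d(g(z | Φ) | Ψ)) to the VAE loss, while the balance function is the usual GAN target. The sketch below evaluates both for a single sample under that assumption; the function names, the diagonal-covariance KL term, and the factor 1/2 are choices made for this illustration rather than the paper's exact definitions.

```python
import math

def ava_loss(x, x_rec, mu, var, d_fake):
    """VAE-style loss plus log(1 - d(x')) for one sample.

    d_fake is the discriminator output d(g(z | Phi) | Psi), assumed in (0, 1).
    """
    recon = 0.5 * sum((a - b) ** 2 for a, b in zip(x, x_rec))
    kl = 0.5 * sum(v + m * m - 1.0 - math.log(v) for m, v in zip(mu, var))
    return recon + kl + math.log(1.0 - d_fake)

def balance(d_real, d_fake):
    """GAN-style target log d(x) + log(1 - d(x')) for one sample."""
    return math.log(d_real) + math.log(1.0 - d_fake)
```

Because log(1 – d_fake) decreases as d_fake grows, lowering this loss pushes the decoder toward outputs that the discriminator scores as more realistic, which is the coupling the paragraph above describes.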

Because the encoder parameter Θ is independent from both the decoder parameter Φ and the discriminator parameter Ψ, its estimate is specified as follows:

Because the decoder parameter Φ is independent from the encoder parameter Θ, its estimate is specified as follows:

Note that the Euclidean distance ||x – x’|| is only dependent on Θ. Because the discriminator tries to increase the credibility of real data and decrease the credibility of fake data, its parameter Ψ has the following estimate:

By applying the stochastic gradient descent (SGD) algorithm within the backpropagation algorithm, these estimates are determined based on the gradients of the loss function and the balance function as follows:

where γ (0 < γ ≤ 1) is the learning rate. Let a_f(.), a_g(.), and a_d(.) be the activation functions of the encoder DNN, decoder DNN, and discriminator DNN, respectively, and let a_f’(.), a_g’(.), and a_d’(.) be the derivatives of these activation functions, respectively. The encoder gradient regarding Θ is (Doersch, 2016, p. 9):

The decoder gradient regarding Φ is:

where,

The discriminator gradient regarding Ψ is:

As a result, SGD algorithm incorporated into backpropagation algorithm for solving AVA is totally determined as follows:

where the notation [i] denotes the i-th element of a vector. Please pay attention to the derivatives a_f’(.), a_g’(.), and a_d’(.) because they are helpful techniques to consolidate AVA. The two different occurrences of the derivatives a_d’(d(x’ | Ψ*)) and a_g’(x’) in the decoder gradient regarding Φ are nontrivial: the unique output neuron of the discriminator DNN is considered as the effect of the output layer of all output neurons in the decoder DNN.
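The update pattern described above can be sketched generically: a parameter that minimizes a function takes a step against its gradient, while a parameter that maximizes a function, as the discriminator parameter is assumed to do here for the balance function, takes a step along it. The helper names and the flat-list representation of weights below are hypothetical, and the gradients are passed in as plain numbers rather than computed by the paper's formulas.

```python
def sgd_descent(params, grads, gamma):
    """One descent step: p <- p - gamma * g, with 0 < gamma <= 1."""
    if not 0.0 < gamma <= 1.0:
        raise ValueError("learning rate gamma must lie in (0, 1]")
    return [p - gamma * g for p, g in zip(params, grads)]

def sgd_ascent(params, grads, gamma):
    """One ascent step: p <- p + gamma * g, used when a function is maximized."""
    if not 0.0 < gamma <= 1.0:
        raise ValueError("learning rate gamma must lie in (0, 1]")
    return [p + gamma * g for p, g in zip(params, grads)]

# Toy check: minimizing (p - 3)^2 moves p toward 3.
p = [0.0]
for _ in range(50):
    p = sgd_descent(p, [2.0 * (p[0] - 3.0)], gamma=0.1)
```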

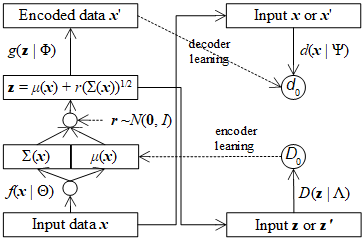

Figure 1. Causality effect relationship between decoder DNN and discriminator DNN.

When weights are assumed to be 1, the error of a causal decoder neuron is the error of the discriminator neuron multiplied by the derivative at the decoder neuron; moreover, the error of the discriminator neuron is, in turn, the product of its minus bias –d’(.) and its derivative a_d’(.).
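With all weights equal to 1, the rule above reduces to two multiplications. The sketch below keeps the bias and the derivative values as plain inputs, so it does not commit to any particular activation function; the function names and the numbers are hypothetical.

```python
def discriminator_error(bias_d, deriv_d):
    """Error of the single discriminator output neuron: (-bias) * derivative."""
    return -bias_d * deriv_d

def decoder_errors(err_d, derivs_g):
    """Errors of the decoder output neurons when all connecting weights are 1."""
    return [err_d * dg for dg in derivs_g]

err_d = discriminator_error(bias_d=0.5, deriv_d=0.25)
errs_g = decoder_errors(err_d, derivs_g=[1.0, 0.5, 0.0])
```

A decoder neuron whose derivative is 0 receives no error at all, which illustrates why the derivative at each decoder output neuron matters in the decoder gradient.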

It is necessary to describe the AVA architecture because skillful techniques cannot be applied to AVA without a clear and solid architecture. The key point in incorporating GAN into VAE is that the error of generated data is included in both the decoder and the discriminator; in addition, the decoded data x’, which is the output of the decoder DNN, becomes the input of the discriminator DNN.

The AVA architecture follows an important aspect of VAE in which the encoder f(x | Θ) does not directly produce the encoded data z as f(x | Θ) = z. Instead, it produces the mean vector μ(x) and the covariance matrix Σ(x) belonging to x. In this research, μ(x) and Σ(x) are flattened into an array of neurons in the output layer of the encoder f(x | Θ).

The actual encoded data z is calculated randomly from μ(x) and Σ(x) along with a random vector r, as z = μ(x) + (Σ(x))^(1/2) r with element-wise multiplication, where r follows the standard Gaussian distribution with mean vector 0 and identity covariance matrix I, and each element of (Σ(x))^(1/2) is the square root of the corresponding element of Σ(x). This is an excellent invention in the traditional literature, which makes the calculation of the Kullback-Leibler divergence much easier without loss of information.
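The sampling step above can be sketched in a few lines. The example assumes Σ(x) is handled element-wise and stored as a list of variances, which matches the element-wise square root described in the text; the function name and the sample values are hypothetical.

```python
import math
import random

def reparameterize(mu, var, rng=random):
    """Draw z = mu + sqrt(var) * r element-wise, with r ~ N(0, I)."""
    r = [rng.gauss(0.0, 1.0) for _ in mu]
    return [m + math.sqrt(v) * ri for m, v, ri in zip(mu, var, r)]

rng = random.Random(0)  # fixed seed so the draw is repeatable
z = reparameterize([1.0, -2.0], [0.25, 0.0], rng)
```

The randomness enters only through r, so z stays an explicit function of μ(x) and Σ(x); a component with zero variance simply returns its mean.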

The balance function b_AVA(Φ, Ψ) aims to balance the decoding task and the discrimination task without partiality, but it can lean toward the decoding task to improve the accuracy of the decoder by including the error between the original data x and the decoded data x’ in the balance function as follows:

As a result, the estimate of discriminator parameter Ψ is:

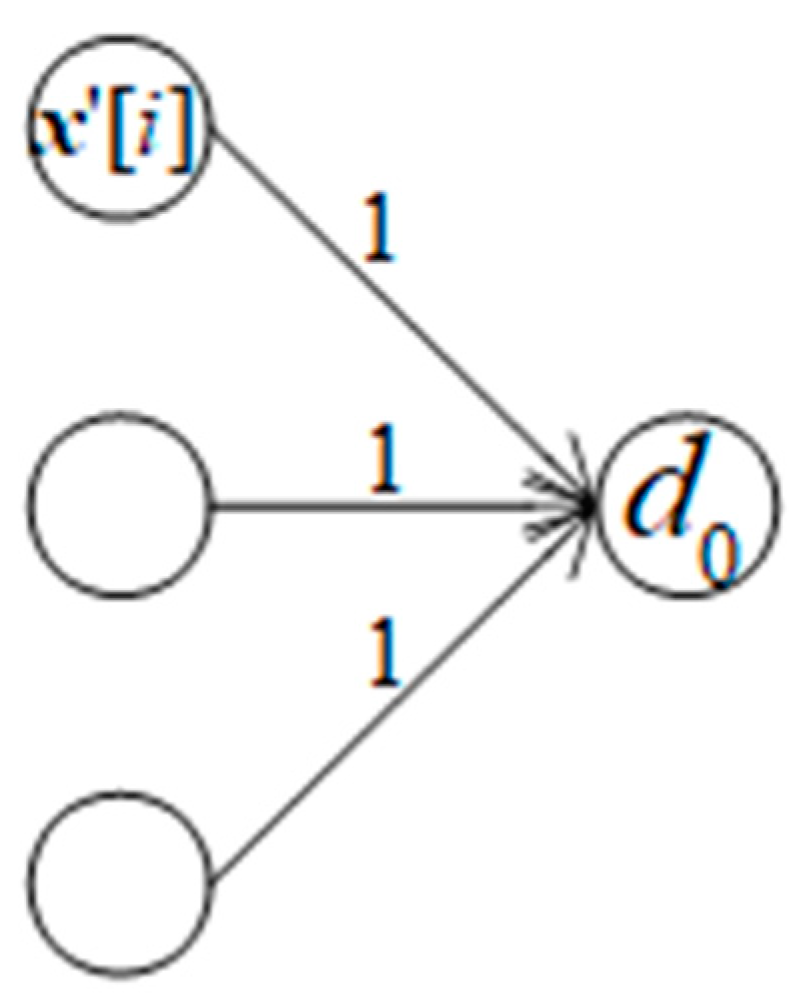

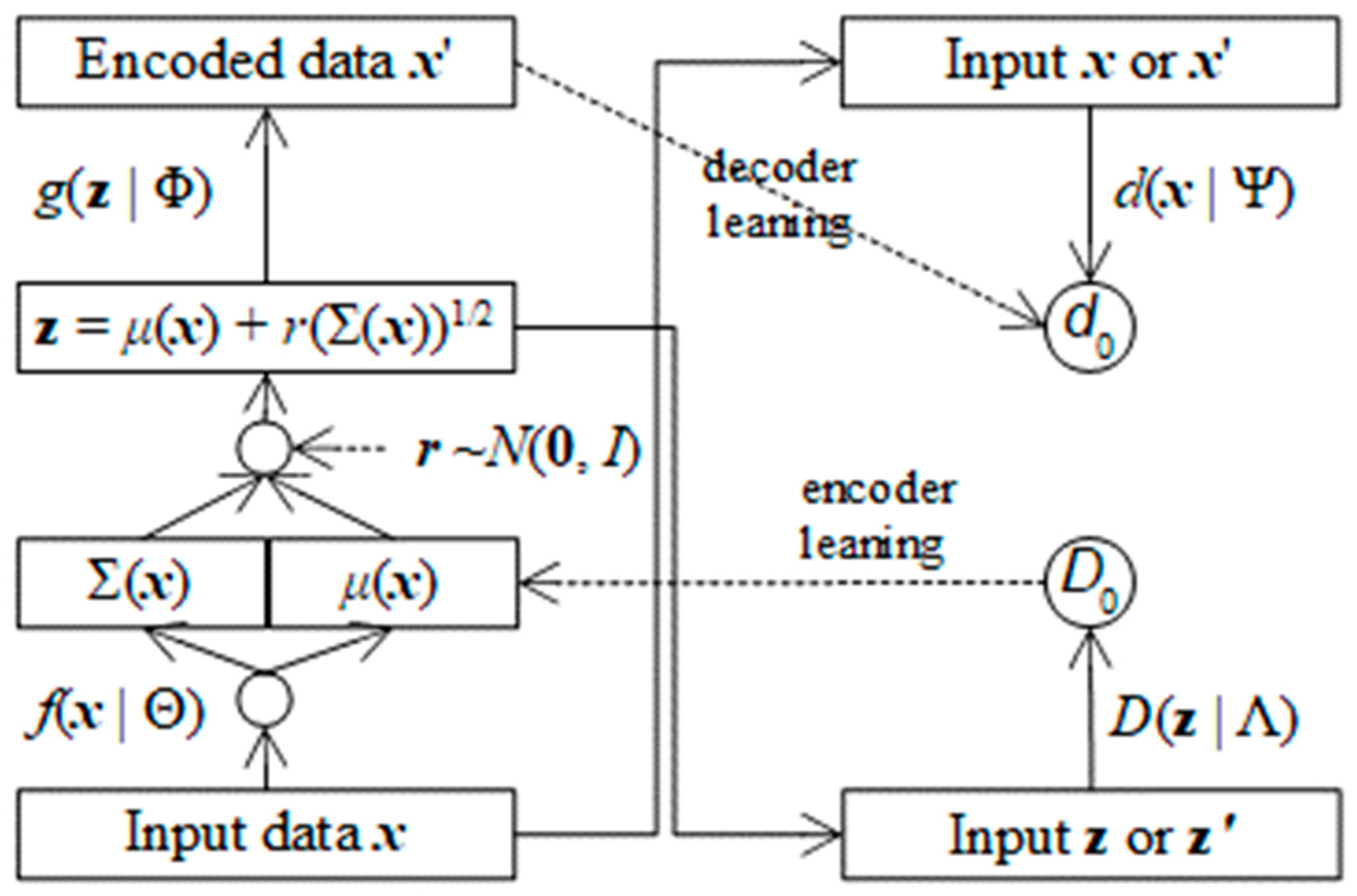

Figure 3 shows a reverse causality effect relationship in which the unique output neuron of the discriminator DNN is the cause of all output neurons of the decoder DNN.

Figure 3. Reverse causality effect relationship between discriminator DNN and decoder DNN.

Suppose the bias of each decoder output neuron is bias[i]. The error of the discriminator output neuron, error[i], is the sum of weighted biases, which is in turn multiplied by the derivative at the discriminator output neuron; note that every weighted bias is also multiplied by the derivative at each decoder output neuron. Supposing all weights are 1, we have:
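Read literally, and with all weights set to 1, the description above corresponds to an expression of the following shape. This is a hedged reconstruction from the prose only: the argument of a_d’ is left unspecified, the argument x’[i] of a_g’ is an assumption, and the index i runs over the decoder output neurons.

```latex
\mathrm{error} = a_d'(\cdot) \sum_{i} \mathrm{bias}[i] \, a_g'\bigl(x'[i]\bigr)
```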

Because the balance function b_AVA(Φ, Ψ) aims to improve the decoder g(z | Φ), it is possible to improve the encoder f(x | Θ) by a similar technique, noting that the output of the encoder is the mean vector μ(x) and the covariance matrix Σ(x). In this research, I propose another balance function B_AVA(Θ, Λ) to assess the reliability of the mean vector μ(x), because μ(x) is most important for randomizing z and μ(x) is linear. Let D(μ(x) | Λ) be the discrimination function for the encoder DNN, from μ(x) to the range [0, 1], in which D(μ(x) | Λ) can distinguish the fake mean μ(x) from the real mean μ(x’). The function D(μ(x) | Λ) is implemented by a so-called encoding discriminator DNN whose weights are Λ; this DNN has only one output neuron, denoted D_0. The balance function B_AVA(Θ, Λ) is specified as follows:

The AVA loss function is modified with regard to the balance function B_AVA(Θ, Λ) as follows:

By applying the SGD algorithm in a similar way, the encoding discriminator parameter Λ is estimated as follows:

where a_D(.) and a_D’(.) are the activation function of the discriminator D(μ(x) | Λ) and its derivative, respectively.

The encoder parameter Θ consists of two separate parts Θ_μ and Θ_Σ because the output of the encoder f(x | Θ) consists of the mean vector μ(x) and the covariance matrix Σ(x).

When the balance function B_AVA(Θ, Λ) is included in the AVA loss function, the part Θ_μ is recalculated whereas the part Θ_Σ is kept intact as follows:
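A minimal way to picture this split is to store Θ as two blocks and apply the extra gradient contributed by the balance function only to the Θ_μ block, while Θ_Σ keeps its original update. This is one interpretation of the sentence above; the dictionary layout, the function name, and the gradient values are hypothetical, and how the extra gradient is derived is not shown.

```python
def update_encoder(theta, base_grads, extra_grad_mu, gamma):
    """Descent step in which only the mu block receives the extra gradient."""
    new_mu = [p - gamma * (g + e)
              for p, g, e in zip(theta["mu"], base_grads["mu"], extra_grad_mu)]
    new_sigma = [p - gamma * g
                 for p, g in zip(theta["sigma"], base_grads["sigma"])]
    return {"mu": new_mu, "sigma": new_sigma}

theta = {"mu": [1.0, 1.0], "sigma": [2.0]}
grads = {"mu": [0.5, 0.0], "sigma": [1.0]}
theta_new = update_encoder(theta, grads, extra_grad_mu=[0.5, 1.0], gamma=0.5)
```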

Figure 4 shows the AVA architecture with support for assessing the encoder.

Similarly, the balance function B_AVA(Θ, Λ) can lean toward the encoding task to improve the accuracy of the encoder f(x | Θ) by taking into account the error between the original mean μ(x) and the decoded data mean μ(x’) as follows:

Without repeating explanations, the estimate of discriminator parameter Λ is modified as follows:

These variants of AVA are summarized, and their tests are described in the next section.