Submitted: 30 June 2023

Posted: 04 July 2023


Abstract

Keywords:

1. Introduction

2. Background

3. Related Works

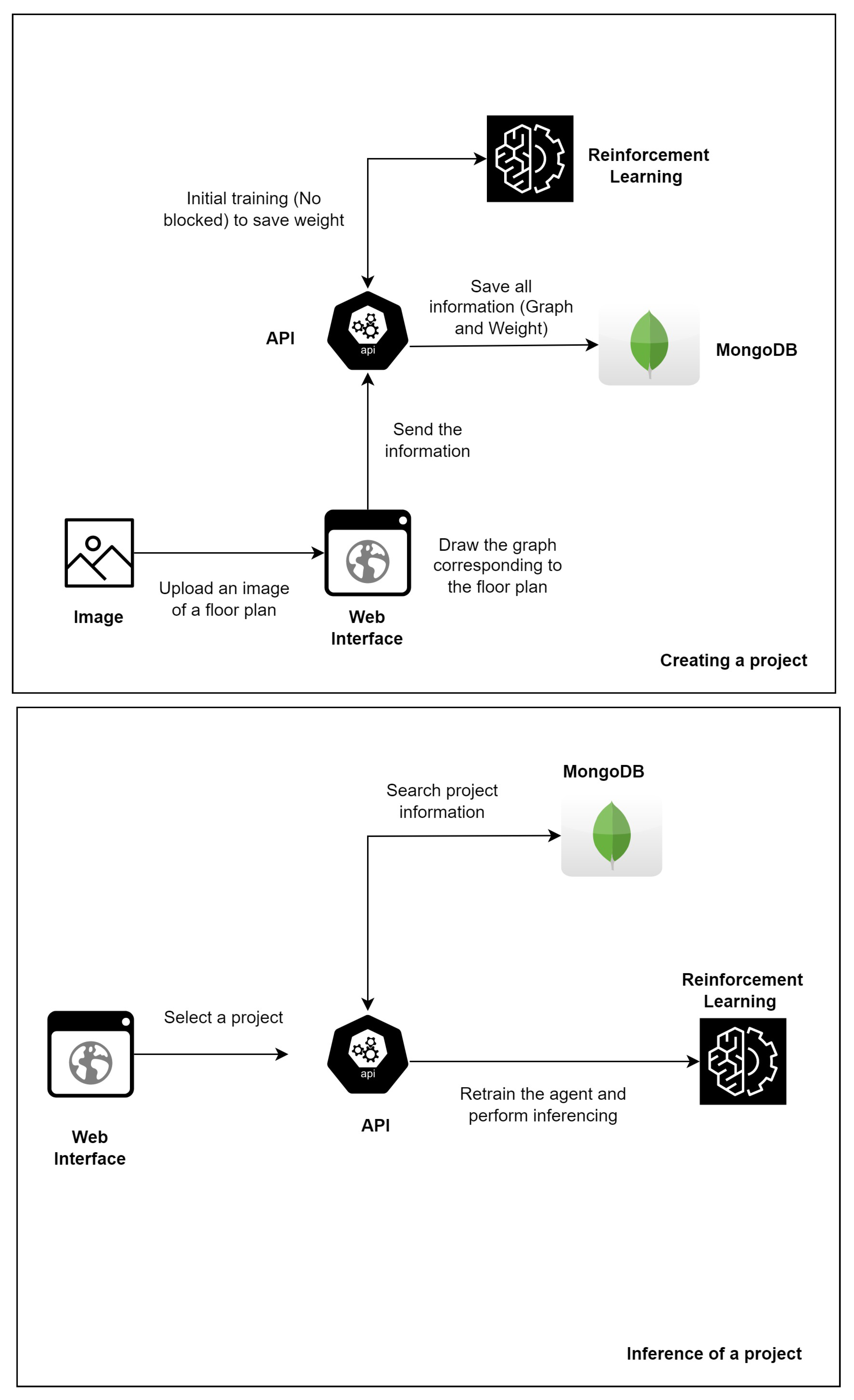

4. Proposed Solutions

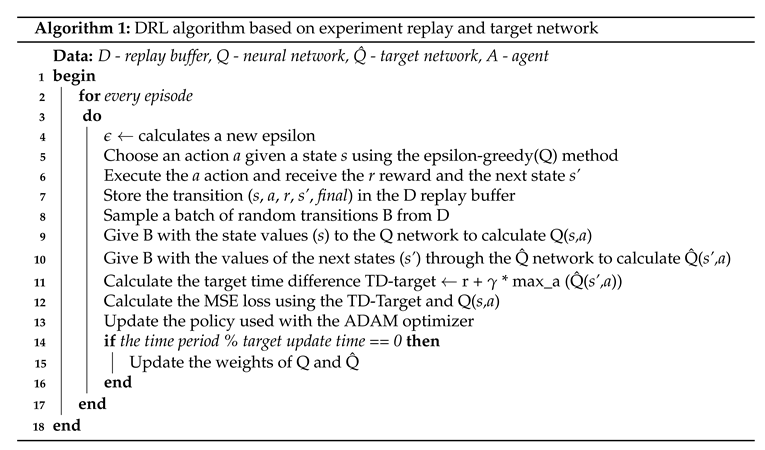

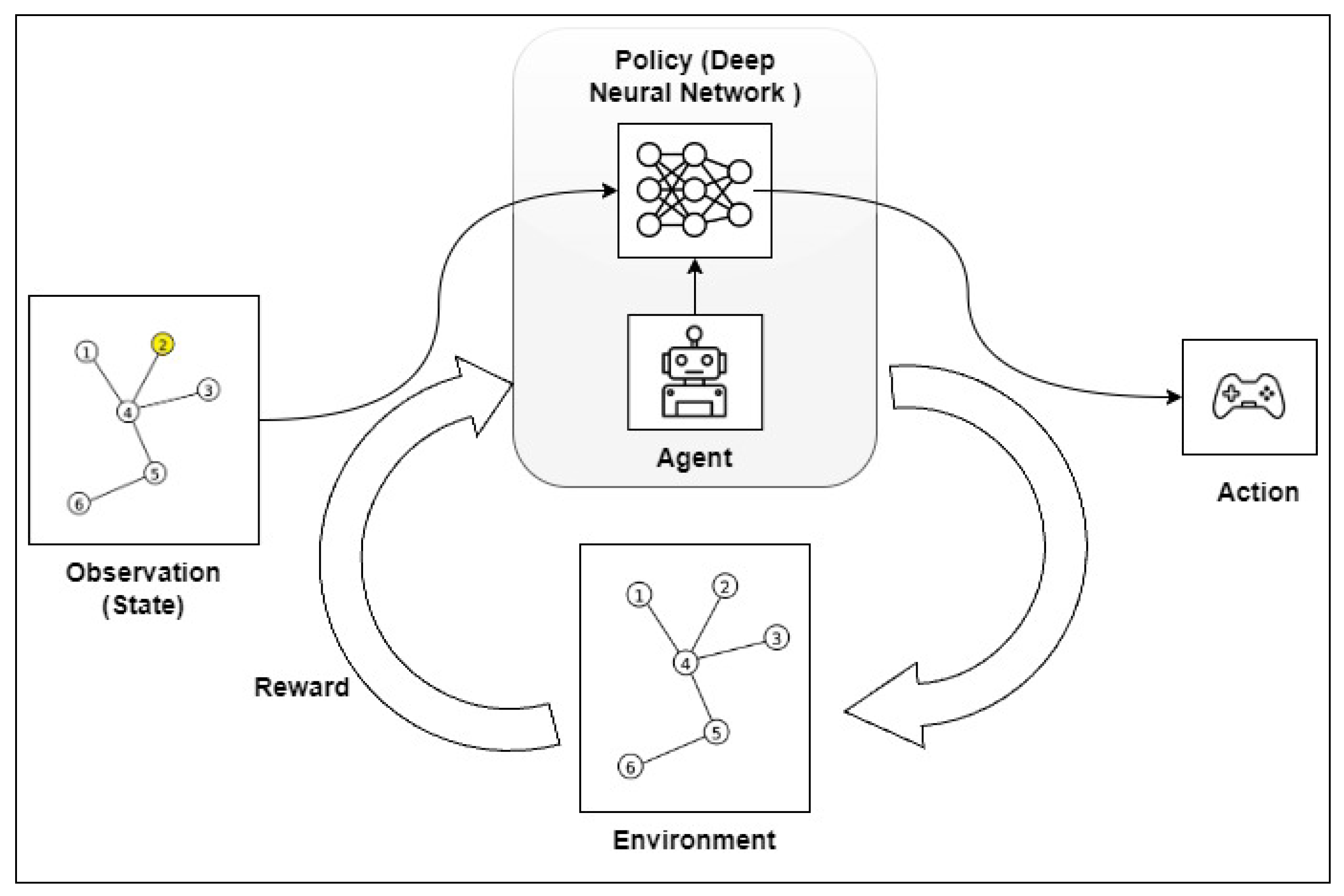

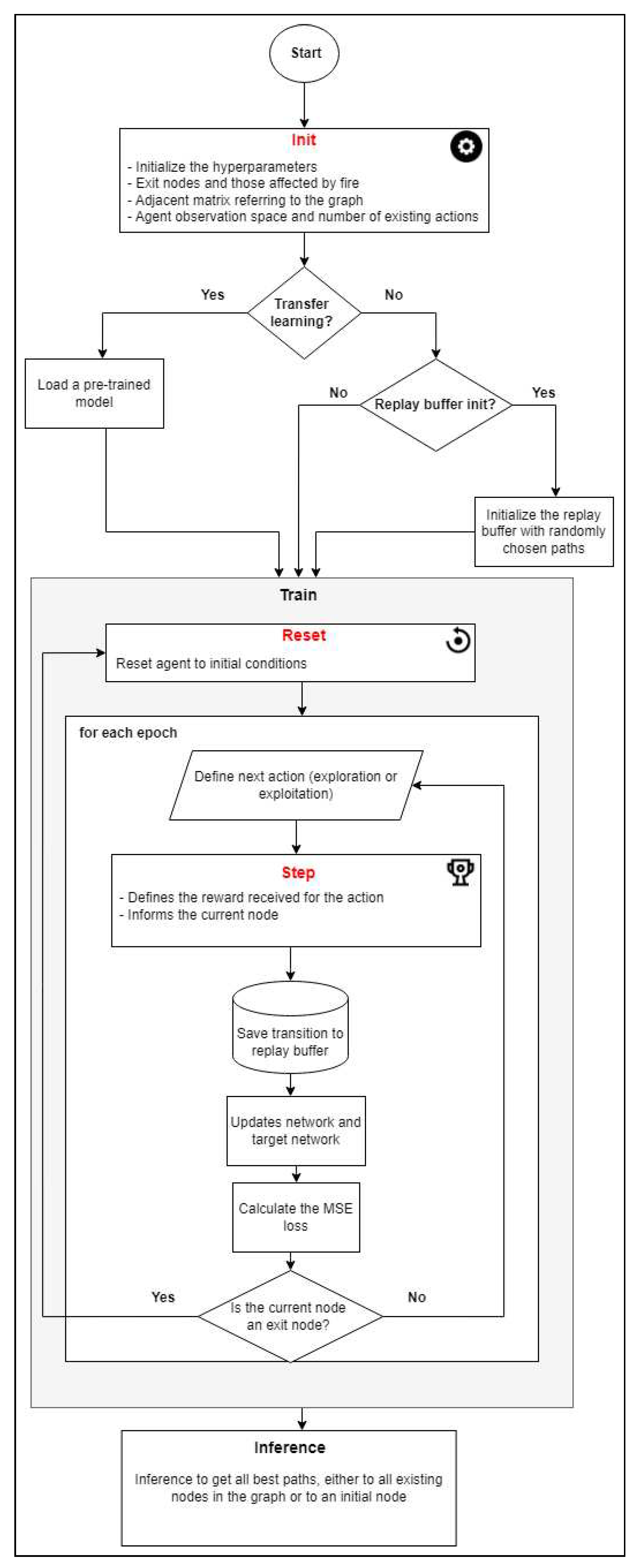

4.1. The Use of Deep Reinforcement Learning for defining the best emergency exit routes

- The init method initializes the variables the environment needs. It receives a graph representing the floor plan. From the input graph, the exit nodes and the nodes affected by fire, it then builds an adjacency matrix in which the spatial distance between two nodes is used as the edge weight. The number of possible actions equals the number of nodes in the graph, and the size of the observation space is twice the number of possible actions.

- The reset method restores the environment to its initial conditions. It defines the agent's initial state, either at a predefined node or, if no start node has been provided, at a randomly chosen node. Using random initial states during training allows the agent to learn the path from every node of the graph to an exit node, although this also makes training considerably longer.

- The step method is the core of the algorithm: it uses the neural network to determine the next action, the next state and the reward. It receives the current state and the action as parameters and then calculates the reward from the adjacency matrix, which encodes both whether two nodes are adjacent and the distance between them. The reward rules are:

  - If the next state is a node on the list of nodes affected by fire, the agent incurs a severe penalty (i.e., a negative reward) of -10,000.

  - If the next node is not adjacent to the current node (i.e., they are not neighbors), the agent incurs a penalty (negative reward) of -5,000.

  - If the next node is adjacent to the current node (i.e., they are neighbors) and is not an exit node, the agent incurs a penalty (negative reward) equal to the weight of the edge connecting the two nodes.

  - If the next node is an exit node, the agent receives a positive reward of 10,000, indicating that it has reached its goal.
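The init, reset and step methods described above can be sketched as a small Gym-style class. This is a minimal, dependency-free sketch under stated assumptions, not the EvacuAI implementation: the class name `EvacuationEnv`, the `coords` input format (one (x, y) position per node), the observation encoding (a one-hot vector for the current node followed by a binary fire mask, chosen only so that its length is twice the number of actions), and the behaviour on invalid or fire moves (a non-adjacent move leaves the agent in place; entering a fire node ends the episode) are all hypothetical. `step` returns a simplified `(observation, reward, done)` tuple rather than the full Gym return signature.

```python
import math
import random


class EvacuationEnv:
    """Gym-style evacuation environment (hypothetical sketch, not the EvacuAI code)."""

    FIRE_PENALTY = -10_000
    NON_ADJACENT_PENALTY = -5_000
    EXIT_REWARD = 10_000

    def __init__(self, coords, edges, exit_nodes, fire_nodes, start_node=None):
        # coords[k] = (x, y) position of node k on the floor plan (assumed format)
        self.n_nodes = len(coords)
        self.exit_nodes = set(exit_nodes)
        self.fire_nodes = set(fire_nodes)
        self.start_node = start_node
        # Adjacency matrix: 0.0 = no edge, otherwise the spatial (Euclidean) distance
        self.adj = [[0.0] * self.n_nodes for _ in range(self.n_nodes)]
        for u, v in edges:
            self.adj[u][v] = self.adj[v][u] = math.dist(coords[u], coords[v])
        # One action per node ("move to node k"); the observation is twice that size
        self.n_actions = self.n_nodes
        self.observation_size = 2 * self.n_actions
        self.state = None

    def _observe(self):
        # Assumed encoding: one-hot current node, then a binary fire mask
        obs = [0.0] * self.observation_size
        obs[self.state] = 1.0
        for node in self.fire_nodes:
            obs[self.n_nodes + node] = 1.0
        return obs

    def reset(self):
        if self.start_node is not None:
            self.state = self.start_node
        else:
            # Random start lets the agent learn routes from every safe node,
            # at the cost of longer training
            safe = [k for k in range(self.n_nodes)
                    if k not in self.fire_nodes and k not in self.exit_nodes]
            self.state = random.choice(safe)
        return self._observe()

    def step(self, action):
        nxt = action
        weight = self.adj[self.state][nxt]
        if nxt in self.fire_nodes:
            # Severe penalty; ending the episode here is an assumption
            self.state = nxt
            return self._observe(), self.FIRE_PENALTY, True
        if weight == 0.0:
            # Not a neighbour: penalise and keep the agent where it is (assumed)
            return self._observe(), self.NON_ADJACENT_PENALTY, False
        self.state = nxt
        if nxt in self.exit_nodes:
            return self._observe(), self.EXIT_REWARD, True
        # Ordinary move: the penalty equals the length of the edge taken
        return self._observe(), -weight, False
```

For a corridor 0–1–2–3 with edge lengths 3, 4 and 3 and the exit at node 3, trying to jump from node 0 to node 2 costs -5,000, while the route 0→1→2→3 collects -3, -4 and finally +10,000.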

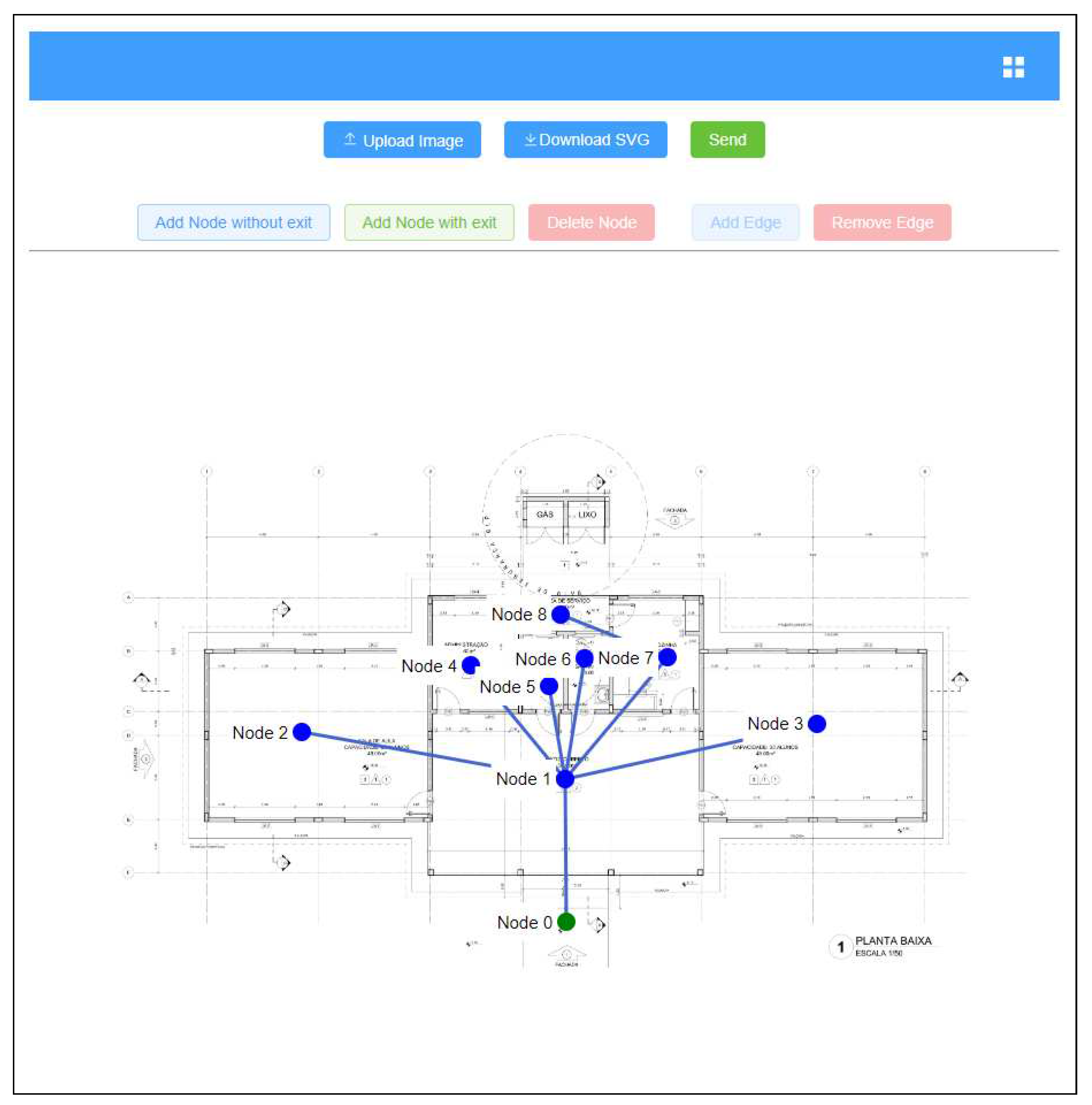

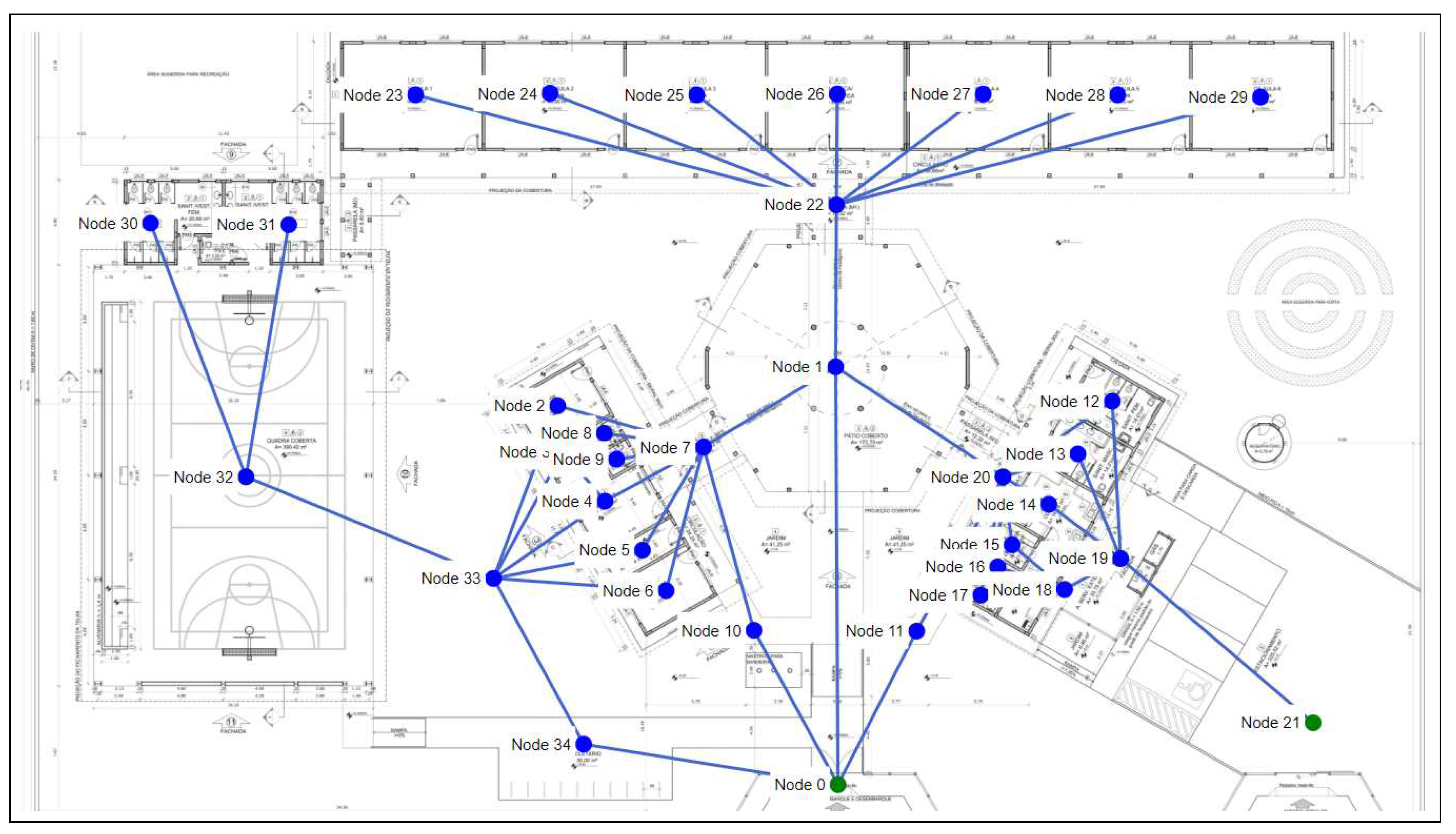

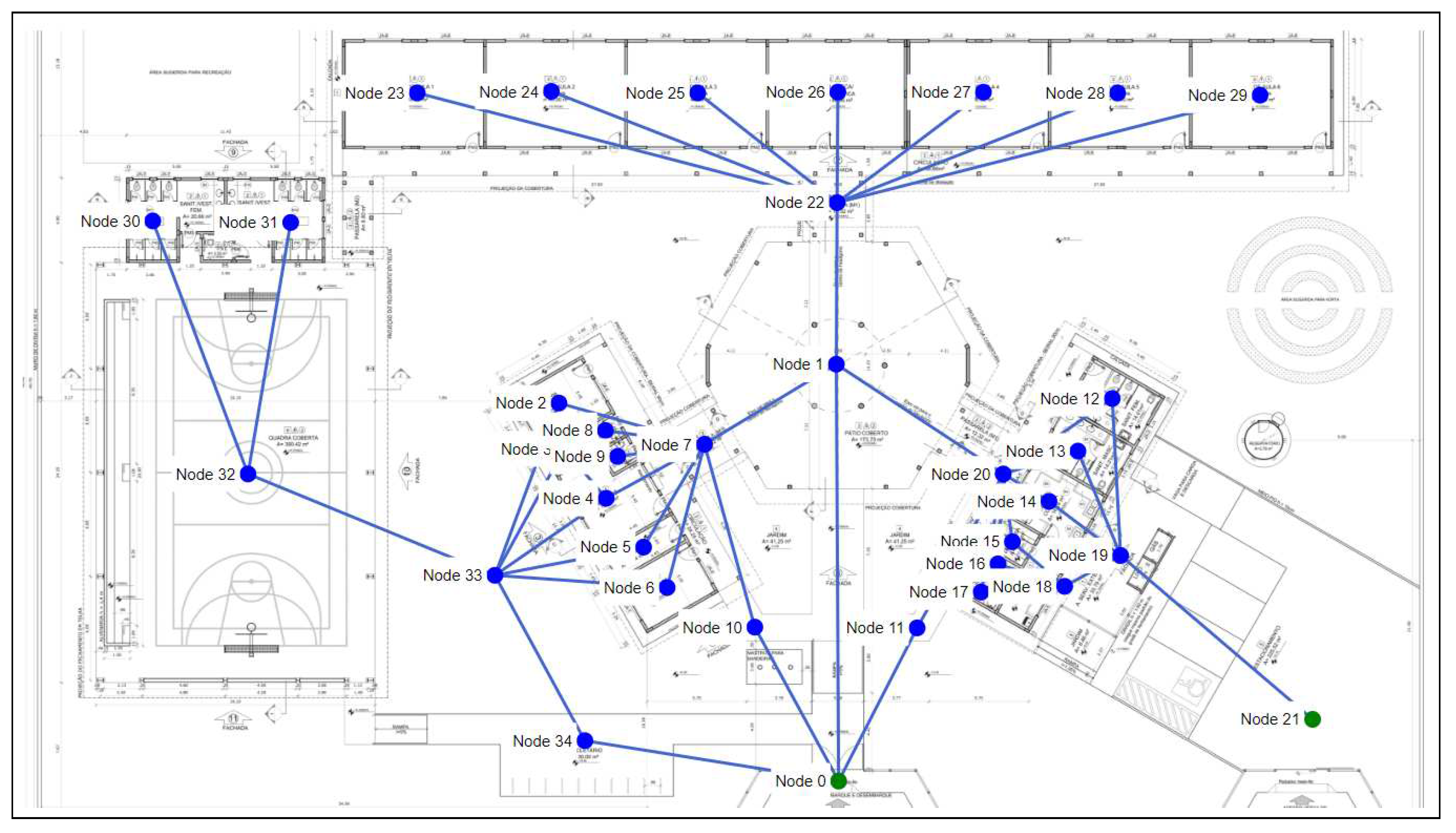

4.2. Representing and using floor plan images

4.3. Settings for training

5. Projects, Results and Discussion

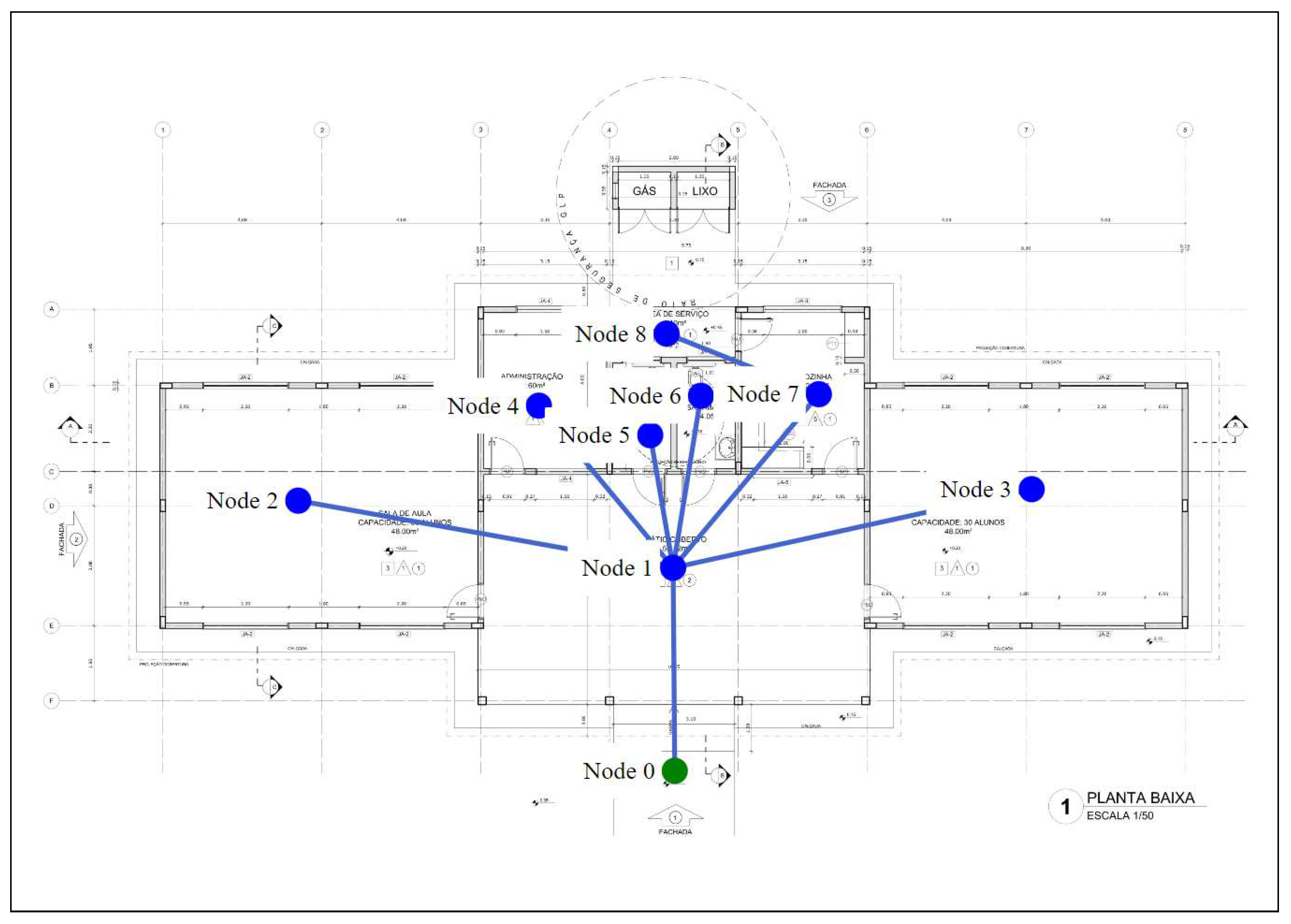

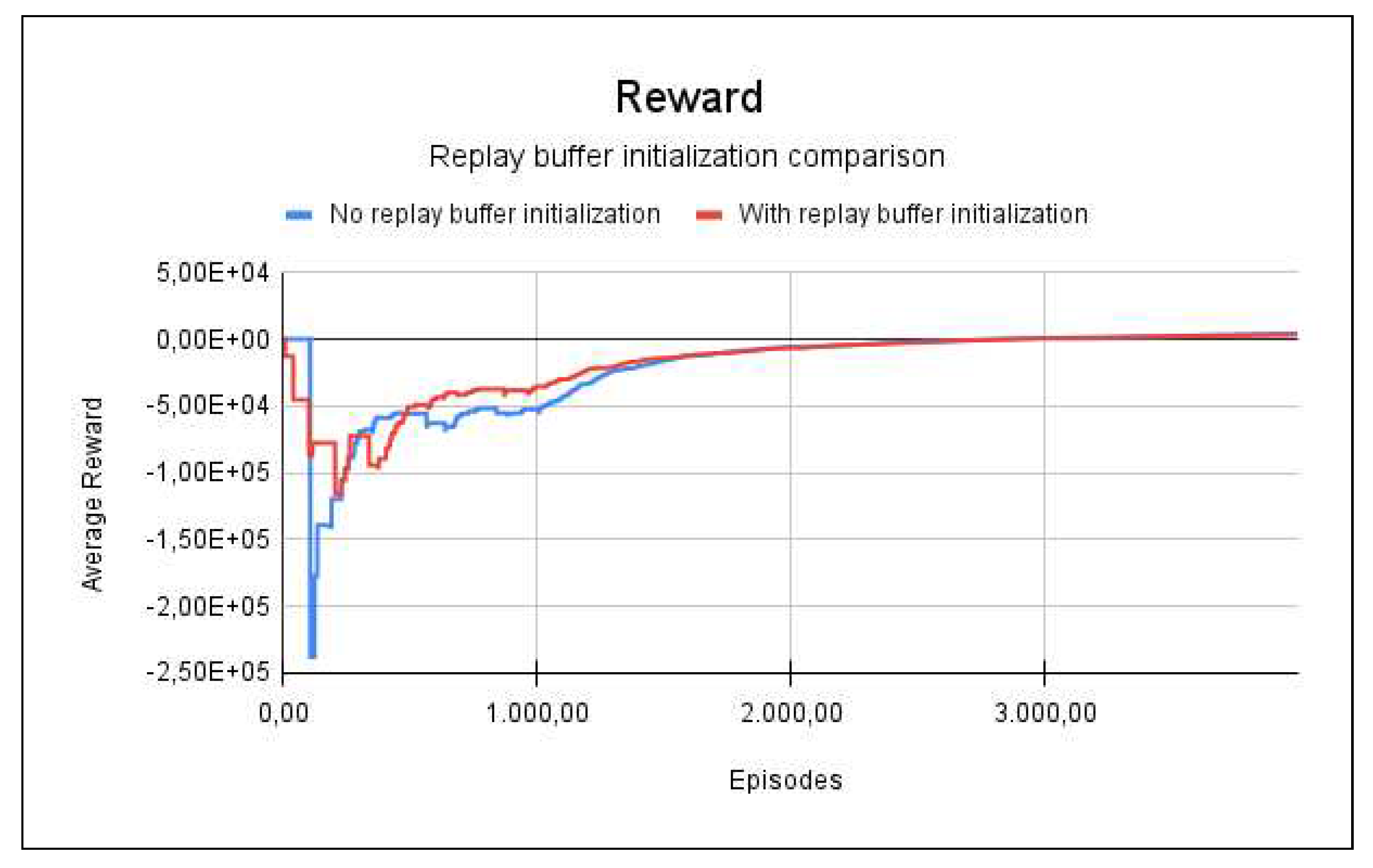

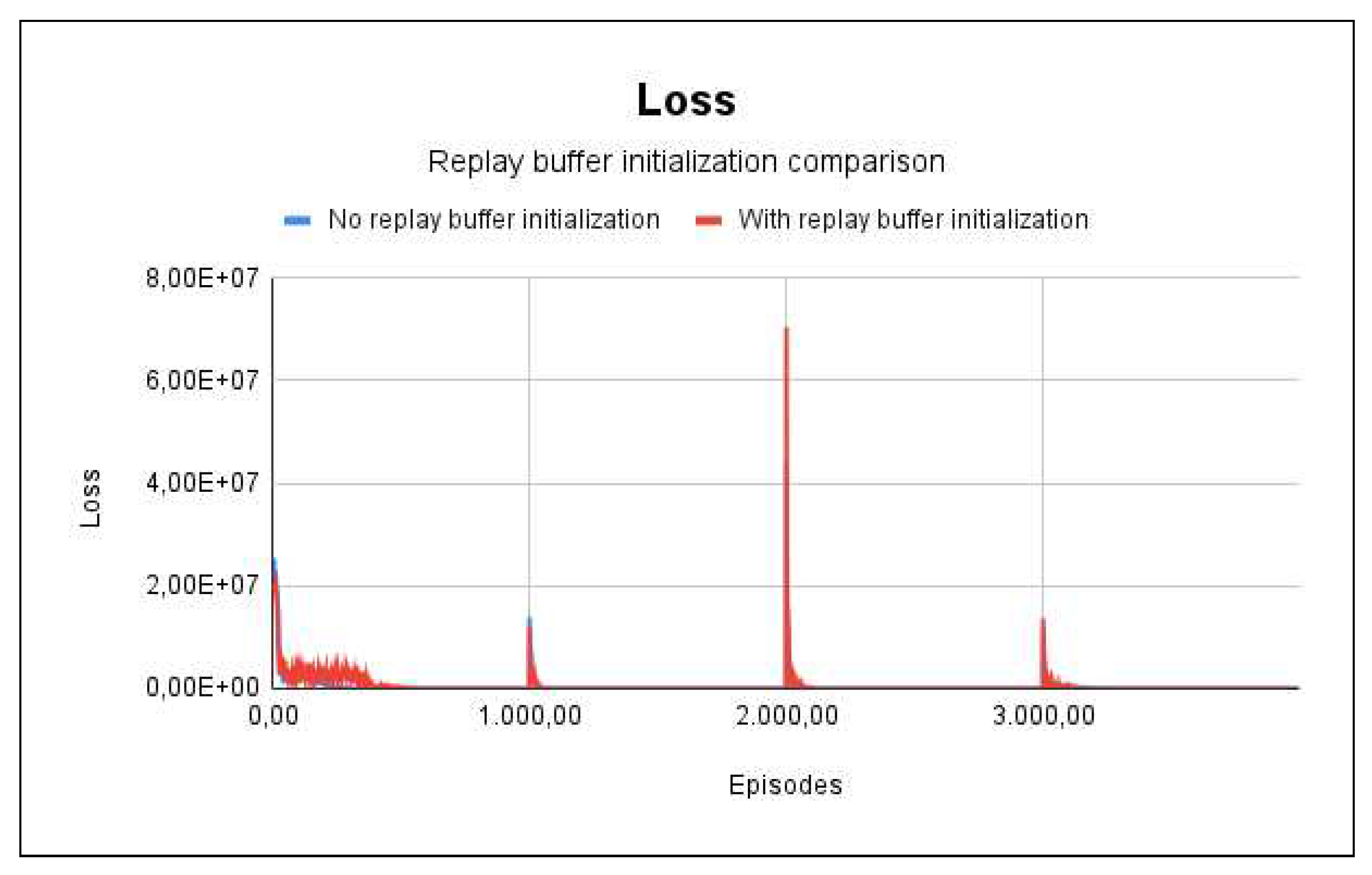

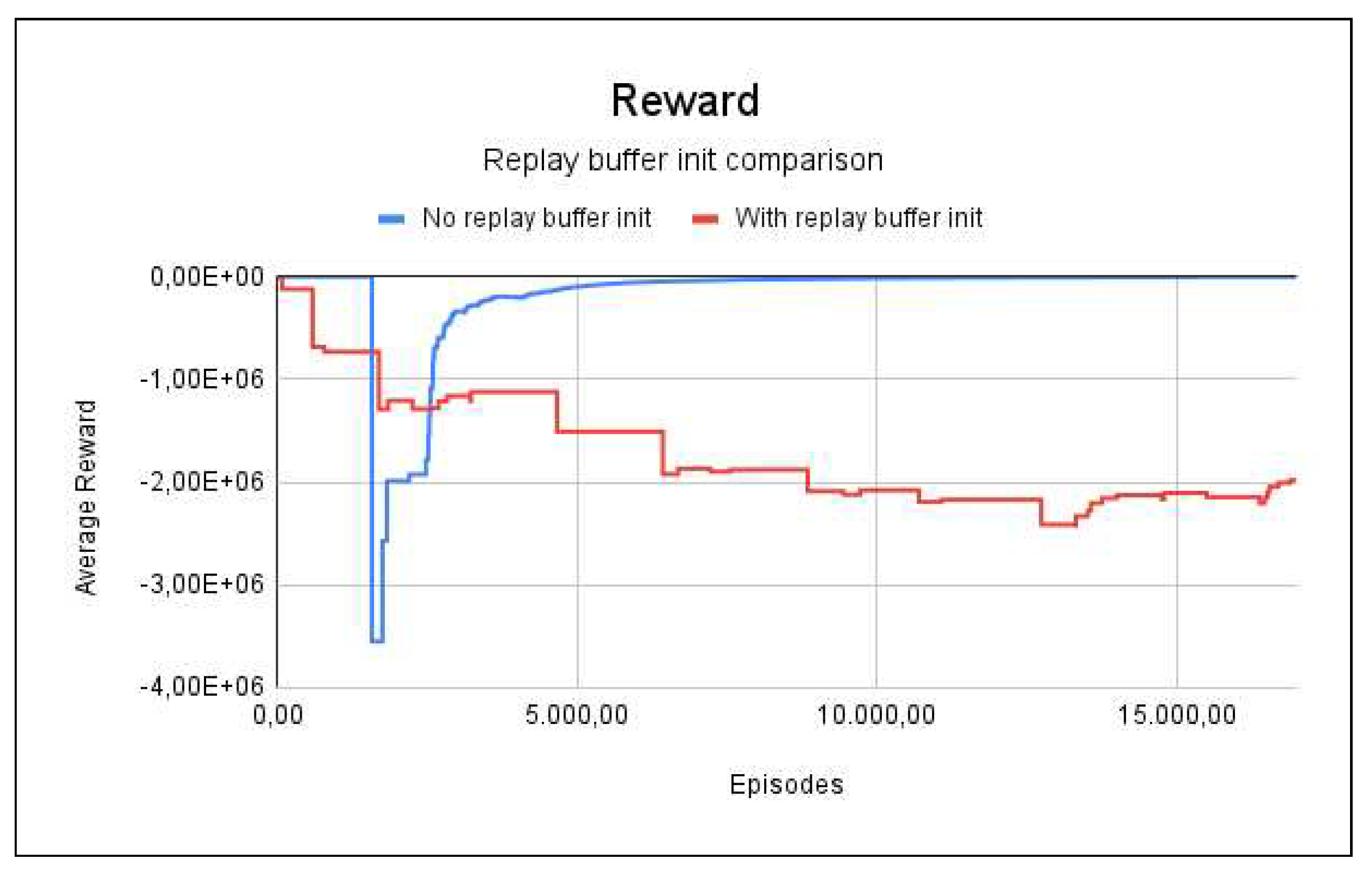

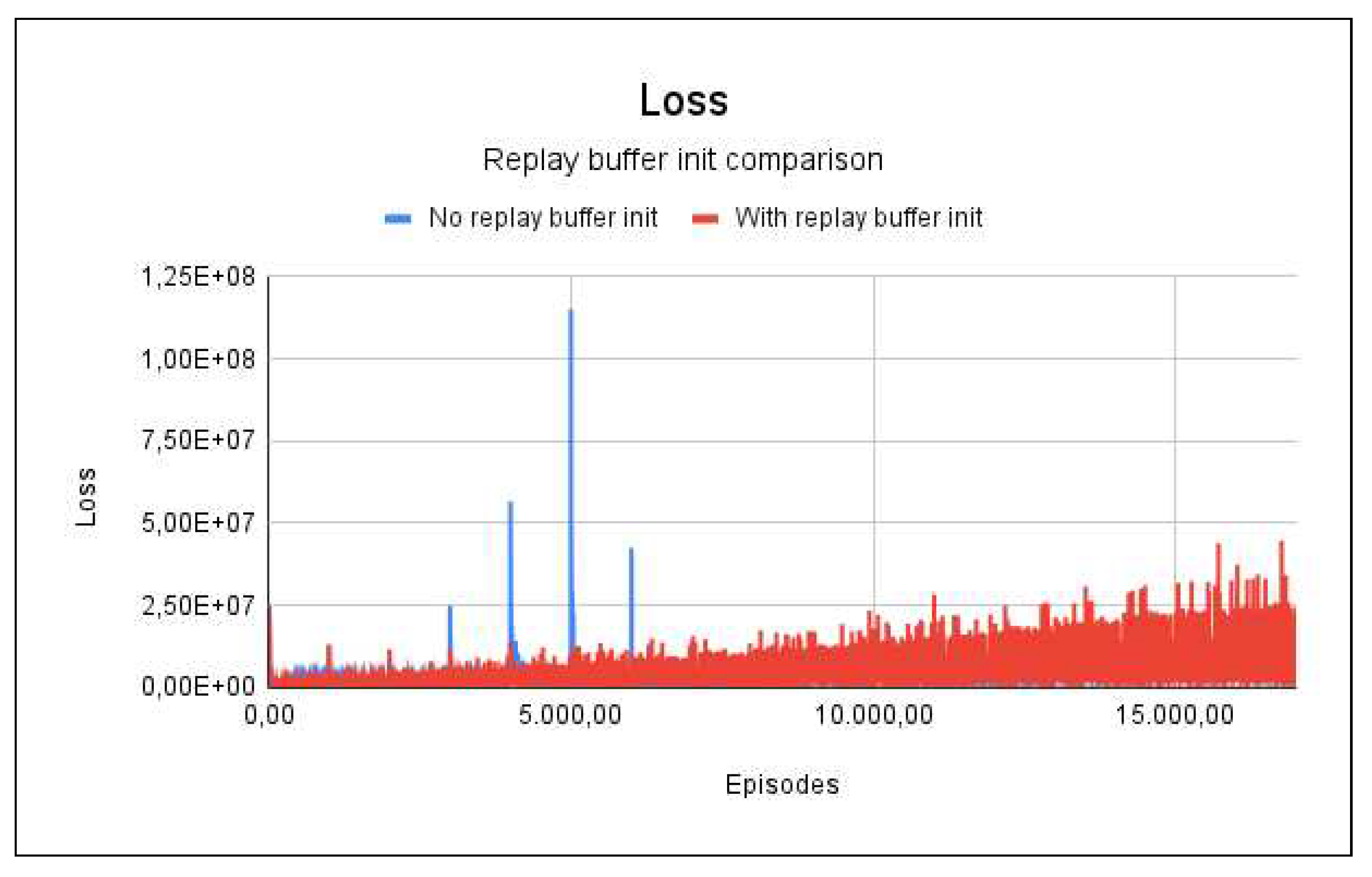

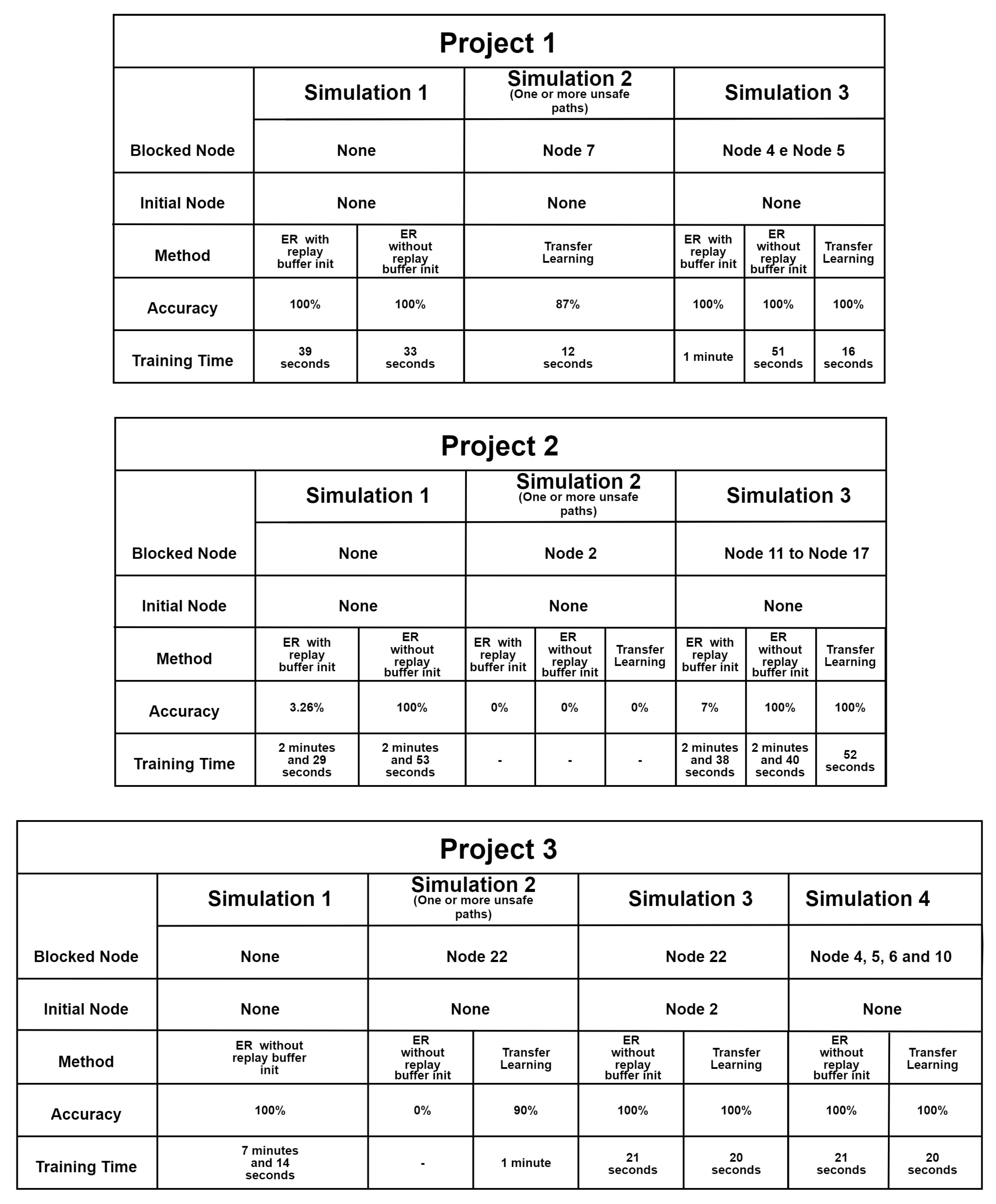

5.1. Project 1

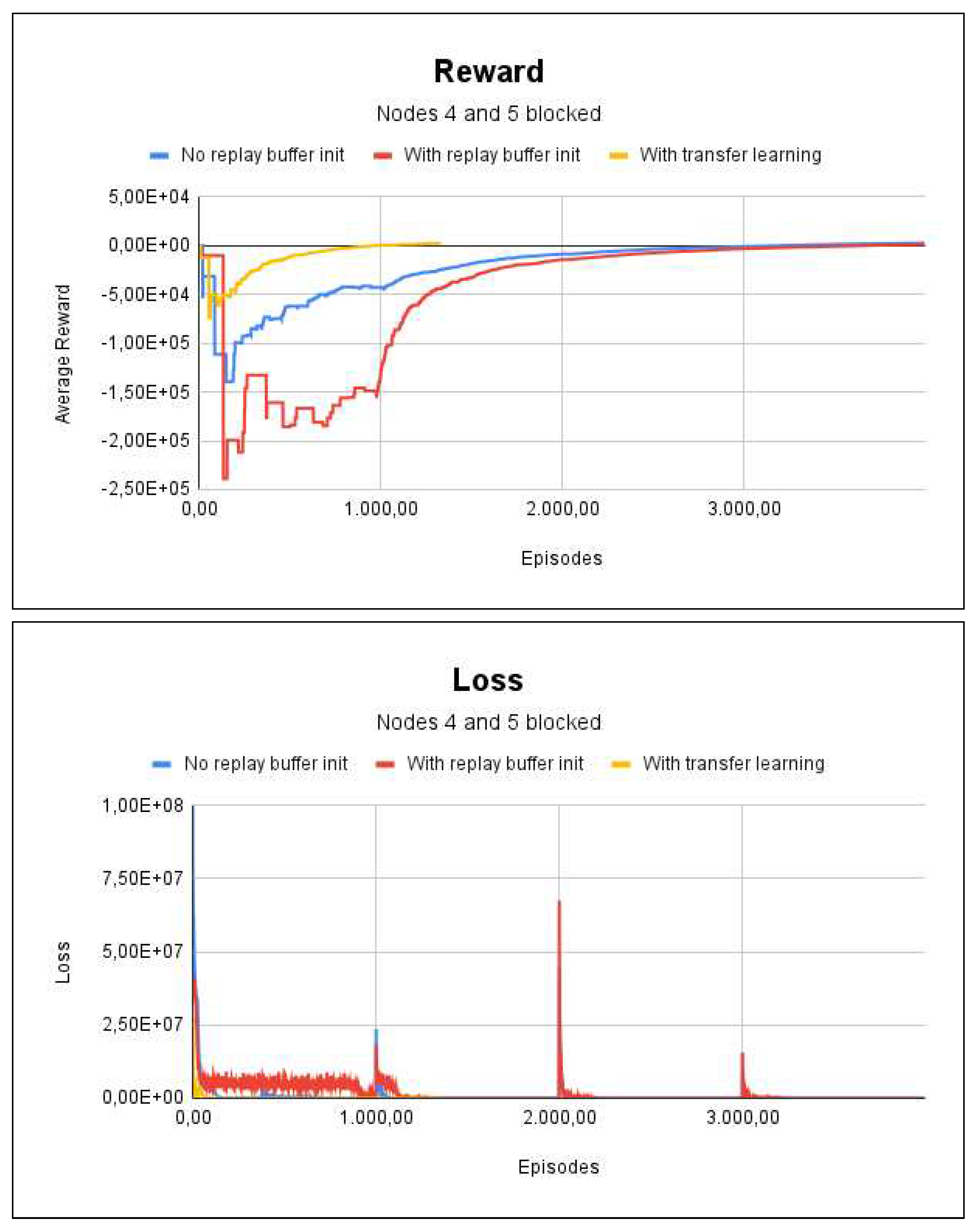

5.2. Project 2

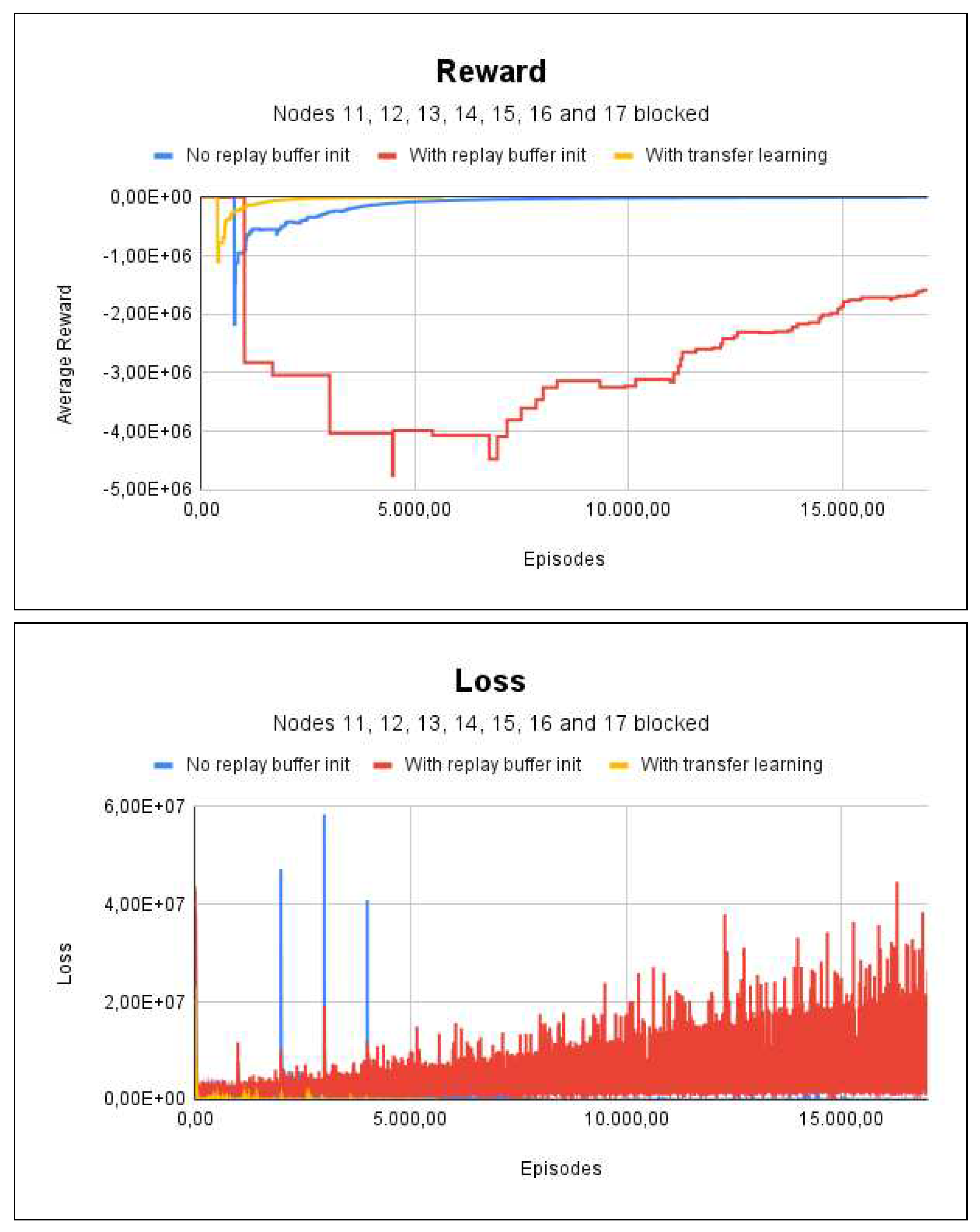

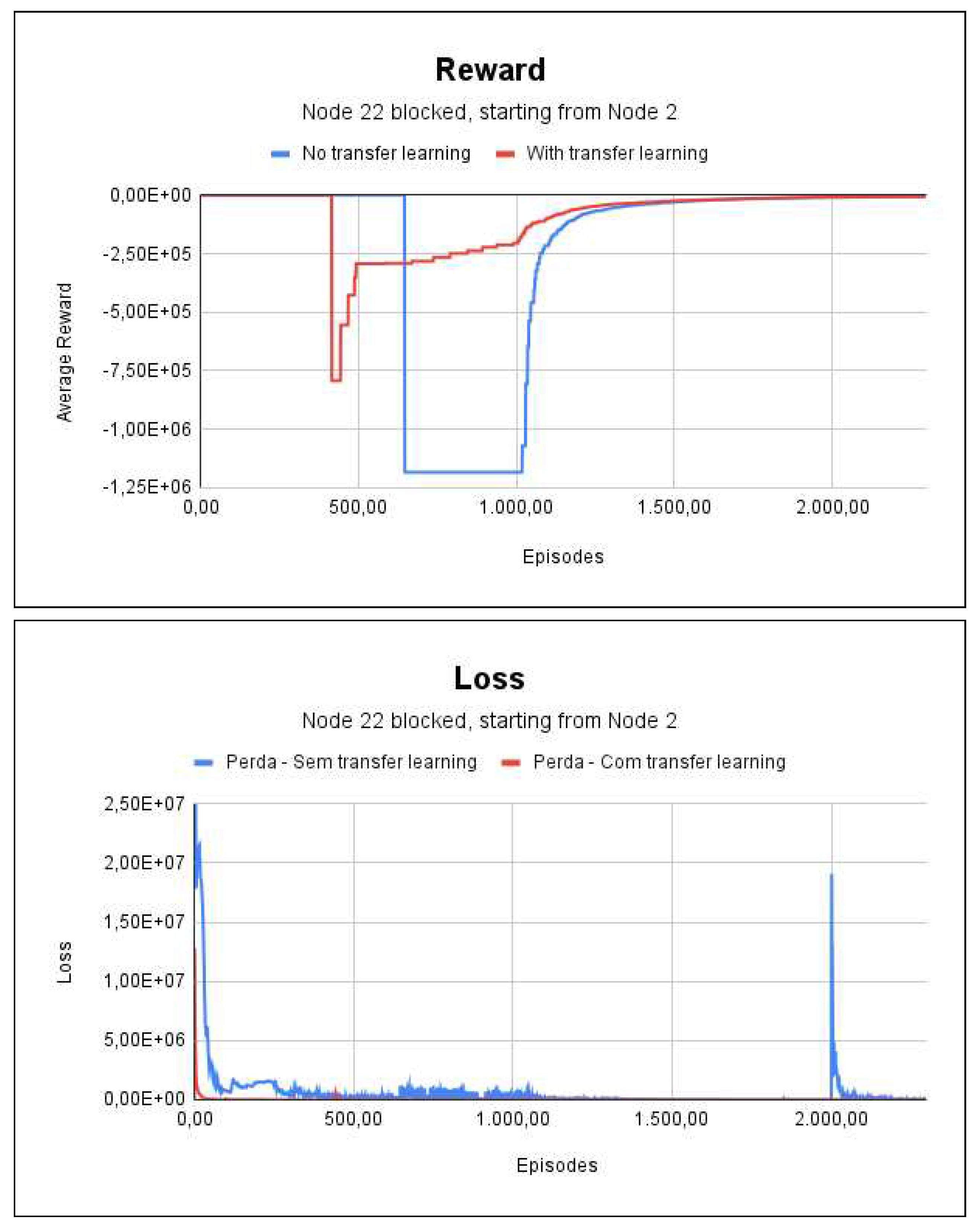

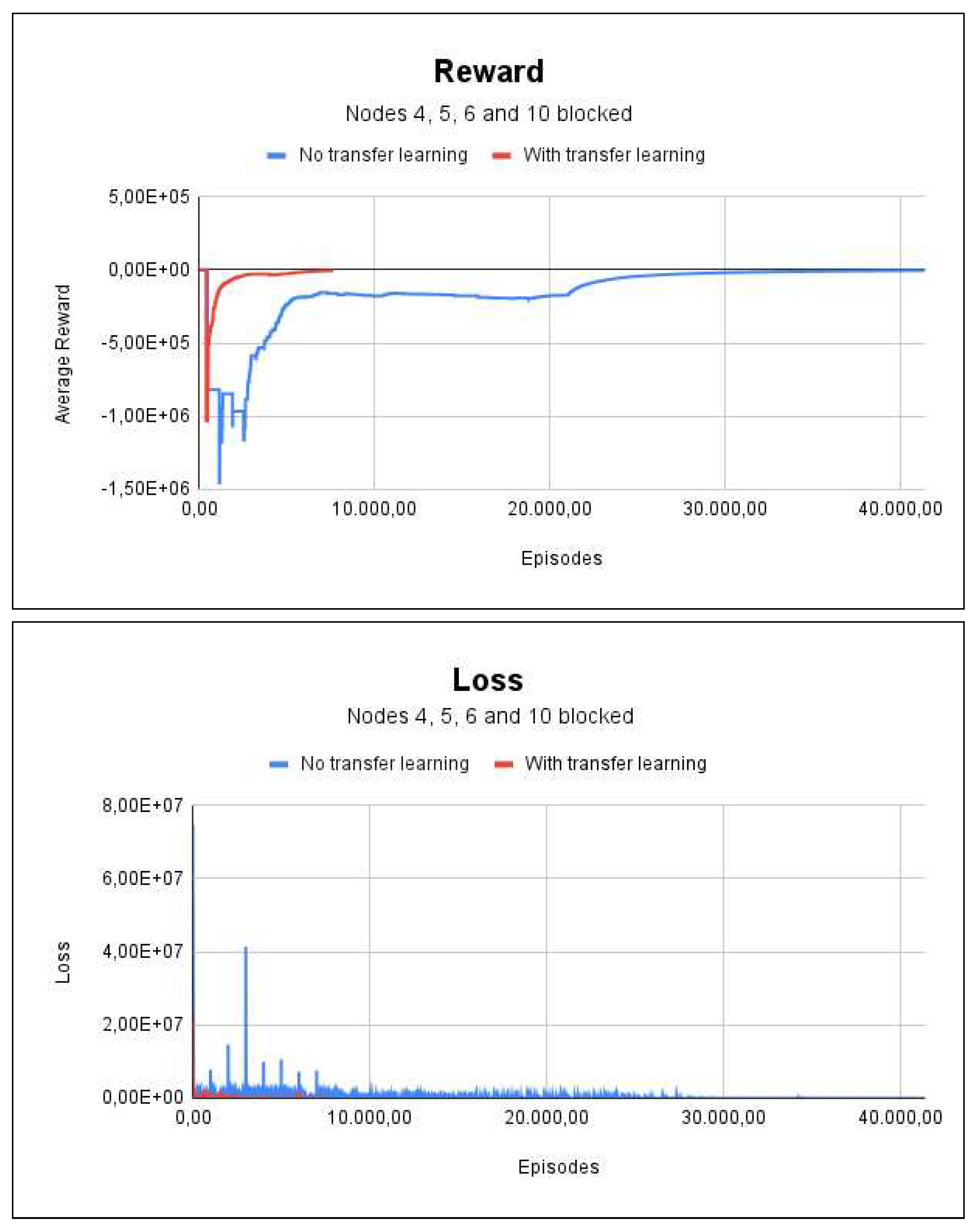

5.3. Project 3

5.4. Improvements of the System, Discussion and Challenges

5.4.1. Improvements of the System

5.4.2. Discussion

6. Conclusion and Recommendations for Future Work

References

1. TodayShow. Newer homes and furniture burn faster, giving you less time to escape a fire, 2017.

2. Emergency Exit Routes. https://www.osha.gov/sites/default/files/publications/emergency-exit-routes-factsheet.pdf, 2018. Accessed: 2022-04-22.

3. Brasil é o 3º país com o maior número de mortes por incêndio [Brazil is the third country with the most fire deaths] (newsletter nº 5), 2015.

4. Conselho Nacional do Ministério Público - Início [National Council of the Public Prosecutor's Office - Home].

5. Residential fire estimate summaries, 2022.

6. Crispim, C.M.R. Proposta de arquitetura segura de centrais de incêndio em nuvem [Proposal for a secure architecture for cloud-based fire alarm control panels], 2021.

7. Sharma, J.; Andersen, P.A.; Granmo, O.C.; Goodwin, M. Deep Q-Learning With Q-Matrix Transfer Learning for Novel Fire Evacuation Environment. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2021, 51, 7363–7381.

8. Agnihotri, A.; Fathi-Kazerooni, S.; Kaymak, Y.; Rojas-Cessa, R. Evacuating Routes in Indoor-Fire Scenarios with Selection of Safe Exits on Known and Unknown Buildings Using Machine Learning. 2018 IEEE 39th Sarnoff Symposium, 2018, pp. 1–6.

9. Xu, S.; Gu, Y.; Li, X.; Chen, C.; Hu, Y.; Sang, Y.; Jiang, W. Indoor emergency path planning based on the Q-learning optimization algorithm. ISPRS International Journal of Geo-Information 2022, 11, 66.

10. Bhatia, S. Survey of shortest path algorithms, 2019.

11. Sutton, R.S.; Barto, A.G. Chapter 1.7 - Introduction, Early History of Reinforcement Learning. In Reinforcement Learning: An Introduction; The MIT Press, 2018; pp. 13–22.

12. Sutton, R.S.; Barto, A.G. Chapter 1.1 - Introduction, Reinforcement Learning. In Reinforcement Learning: An Introduction; The MIT Press, 2018; pp. 1–4.

13. Pacelli Ferreira Dias Junior, E. Aprendizado por reforço sobre o problema de revisitação de páginas web [Reinforcement learning applied to the web page revisitation problem]. Dissertation, 2012, p. 27.

14. Sutton, R.S.; Barto, A.G. Chapter 1.3 - Introduction, Elements of Reinforcement Learning. In Reinforcement Learning: An Introduction; The MIT Press, 2018; pp. 6–7.

15. Prestes, E. Capítulo 1.1 - Conceitos Básicos [Chapter 1.1 - Basic Concepts]. In Introdução à Teoria dos Grafos [Introduction to Graph Theory]; 2020; pp. 2–7.

16. Sutton, R.S.; Barto, A.G. Chapter 3 - Policies and Value Functions. In Reinforcement Learning: An Introduction; The MIT Press, 2018; pp. 58–62.

17. Sutton, R.S.; Barto, A.G. Chapter 6 - Temporal-Difference Learning. In Reinforcement Learning: An Introduction; The MIT Press, 2018; pp. 119–138.

18. Fedus, W.; Ramachandran, P.; Agarwal, R.; Bengio, Y.; Larochelle, H.; Rowland, M.; Dabney, W. Revisiting Fundamentals of Experience Replay. CoRR 2020, abs/2007.06700.

19. TORRES.AI, J. Deep Q-Network (DQN)-II, 2021.

20. Deep Q-learning: An introduction to deep reinforcement learning, 2020.

21. Agnihotri, A.; Fathi-Kazerooni, S.; Kaymak, Y.; Rojas-Cessa, R. Evacuating Routes in Indoor-Fire Scenarios with Selection of Safe Exits on Known and Unknown Buildings Using Machine Learning. 2018, pp. 1–6.

22. Deng, H.; Ou, Z.; Zhang, G.; Deng, Y.; Tian, M. BIM and computer vision-based framework for fire emergency evacuation considering local safety performance. Sensors (Basel) 2021, 21, 3851.

23. Selin, J.; Letonsaari, M.; Rossi, M. Emergency exit planning and simulation environment using gamification, artificial intelligence and data analytics. Procedia Computer Science 2019, 156, 283–291. 8th International Young Scientists Conference on Computational Science, YSC2019, 24–28 June 2019, Heraklion, Greece.

24. Wongsai, P.; Pawgasame, W. A Reinforcement Learning for Criminal’s Escape Path Prediction. 2018, pp. 26–30.

25. Schmitt, S.; Zech, L.; Wolter, K.; Willemsen, T.; Sternberg, H.; Kyas, M. Fast routing graph extraction from floor plans. 2017, pp. 1–8.

26. Lam, O.; Dayoub, F.; Schulz, R.; Corke, P. Automated topometric graph generation from floor plan analysis. 2015, pp. 1–8.

27. Hu, R.; Huang, Z.; Tang, Y.; van Kaick, O.; Zhang, H.; Huang, H. Graph2Plan: Learning Floorplan Generation from Layout Graphs. CoRR 2020, abs/2004.13204.

28. Kalervo, A.; Ylioinas, J.; Häikiö, M.; Karhu, A.; Kannala, J. CubiCasa5K: A Dataset and an Improved Multi-Task Model for Floorplan Image Analysis. CoRR 2019, abs/1904.01920.

29. Lu, Y.; Tian, R.; Li, A.; Wang, X.; del Castillo Lopez, J.L.G. CubiGraph5K - Organizational Graph Generation for Structured Architectural Floor Plan Dataset. 2021.

30. He, K.; Gkioxari, G.; Dollár, P.; Girshick, R.B. Mask R-CNN. CoRR 2017, abs/1703.06870.

31. Sandelin, F. Semantic and Instance Segmentation of Room Features in Floor Plans using Mask R-CNN. Dissertation, 2019.

32. Gym is a standard API for reinforcement learning, and a diverse collection of reference environments. https://www.gymlibrary.dev.

33. Vue.js: The progressive JavaScript framework. https://vuejs.org.

34. FastAPI framework, high performance, easy to learn, fast to code, ready for production. https://fastapi.tiangolo.com/.

35. MongoDB: The developer data platform that provides the services and tools necessary to build distributed applications fast, at the performance and scale users demand. https://www.mongodb.com/.

36. v-network-graph: An interactive network graph visualization component for Vue 3. https://dash14.github.io/v-network-graph/.

37. Huang, B.; Wu, Q.; Zhan, F.B. A shortest path algorithm with novel heuristics for dynamic transportation networks. International Journal of Geographical Information Science 2007, 21, 625–644.

38. Machado, A.F.d.V.; Santos, U.O.; Vale, H.; Gonçalvez, R.; Neves, T.; Ochi, L.S.; Clua, E.W.G. Real Time Pathfinding with Genetic Algorithm. 2011 Brazilian Symposium on Games and Digital Entertainment, 2011, pp. 215–221.

39. Sigurdson, D.; Bulitko, V.; Yeoh, W.; Hernandez, C.; Koenig, S. Multi-agent pathfinding with real-time heuristic search. 2018 IEEE Conference on Computational Intelligence and Games (CIG), 2018.

40. Thombre, P. Multi-objective path finding using reinforcement learning.

41. Konar, A.; Chakraborty, I.G.; Singh, S.J.; Jain, L.C.; Nagar, A.K. A deterministic improved Q-learning for path planning of a mobile robot. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2013, 43, 1141–1153.

¹ Brazil’s Unified Health System is one of the largest public health systems in the world. It is designed to provide universal, free access to both basic and complex healthcare throughout the country.

| Reference | Machine Learning | Reinforcement Learning | Real Time | Technique for representing the floor plan (graph or matrix) |
|---|---|---|---|---|
| [8] | Yes | No | Not specified | No |
| [23] | Yes | No | No | Yes |
| [9] | Yes | Yes | Not specified | No |
| [7] | Yes | Yes (DRL) | Yes | No |
| EvacuAI (this study) | Yes | Yes (DRL + TL) | Yes | Yes |

| Name | Type | In Features | Out Features | Activation |
|---|---|---|---|---|
| F1 | Linear | Observation size | 256 | ReLU |
| F2 | Linear | 256 | 128 | ReLU |
| F3 | Linear | 128 | 64 | ReLU |
| F4 | Linear | 64 | Number of actions | Linear |
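The layer table describes a fully connected network of four linear layers that maps an observation to one Q-value per action. The dependency-free sketch below reproduces only those layer sizes and activations; the function names `build_q_network`, `q_values` and `count_parameters`, the uniform weight initialisation and the use of plain Python lists are assumptions for illustration, since the table does not state which framework or initialisation the study used.

```python
import random


def build_q_network(observation_size, n_actions, seed=0):
    """Hypothetical pure-Python MLP with the F1-F4 layer sizes from the table."""
    rng = random.Random(seed)
    sizes = [observation_size, 256, 128, 64, n_actions]
    layers = []
    for fan_in, fan_out in zip(sizes[:-1], sizes[1:]):
        bound = 1.0 / fan_in ** 0.5  # uniform initialisation range (assumption)
        weights = [[rng.uniform(-bound, bound) for _ in range(fan_in)]
                   for _ in range(fan_out)]
        biases = [rng.uniform(-bound, bound) for _ in range(fan_out)]
        layers.append((weights, biases))
    return layers


def q_values(layers, observation):
    """Forward pass: ReLU after F1-F3, linear (identity) output at F4."""
    x = observation
    for i, (weights, biases) in enumerate(layers):
        x = [sum(w * xi for w, xi in zip(row, x)) + b
             for row, b in zip(weights, biases)]
        if i < len(layers) - 1:
            x = [max(0.0, v) for v in x]
    return x


def count_parameters(layers):
    """Number of trainable weights and biases across all layers."""
    return sum(len(w) * len(w[0]) + len(b) for w, b in layers)
```

With Project 1's sizes (observation size 18, 9 actions) the network has 18·256 + 256 + 256·128 + 128 + 128·64 + 64 + 64·9 + 9 = 46,601 parameters, and a greedy policy picks the index of the largest entry returned by `q_values`.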

| Condition | Number of episodes |
|---|---|
| There is one start node | (Number of edges) × 100 |
| Transfer learning is used | (Number of edges / 3) × 500 |
| The number of edges is greater than 40 | (Number of edges) × 900 |
| For the other cases | (Number of edges) × 500 |
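The table leaves the precedence between overlapping conditions implicit. Checking it against the project tables that follow suggests that transfer learning takes priority, then a single start node, then the more-than-40-edges rule, with the fractional result of (edges / 3) × 500 truncated (8 edges gives 1333). The hypothetical helper `training_episodes` below encodes that inferred order; it is a sketch of the rule, not code from the study.

```python
def training_episodes(n_edges, single_start_node, transfer_learning):
    """Episode budget from the condition table (precedence inferred, hypothetical)."""
    if transfer_learning:
        # (edges / 3) * 500, truncated; integer arithmetic avoids float rounding
        return n_edges * 500 // 3
    if single_start_node:
        return n_edges * 100
    if n_edges > 40:
        return n_edges * 900
    return n_edges * 500
```

Applied to the three projects (8, 34 and 46 edges), this reproduces every episode count in their tables; for example, 46 edges gives 4,600 episodes with one start node, 41,400 for all nodes and 7,666 with transfer learning.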

| Project 1 | With TL | Without TL |
|---|---|---|
| Number of Nodes | 9 | 9 |
| Number of Edges | 8 | 8 |
| Observation Space Size | 18 | 18 |
| Number of Episodes (start node) | 1333 | 800 |
| Number of Episodes (all nodes) | 1333 | 4000 |

| Project 2 | With TL | Without TL |
|---|---|---|
| Number of Nodes | 31 | 31 |
| Number of Edges | 34 | 34 |
| Observation Space Size | 62 | 62 |
| Number of Episodes (start node) | 5666 | 3400 |
| Number of Episodes (all nodes) | 5666 | 17000 |

| Project 3 | With TL | Without TL |
|---|---|---|
| Number of Nodes | 34 | 34 |
| Number of Edges | 46 | 46 |
| Observation Space Size | 68 | 68 |
| Number of Episodes (start node) | 7666 | 4600 |
| Number of Episodes (all nodes) | 7666 | 41400 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).