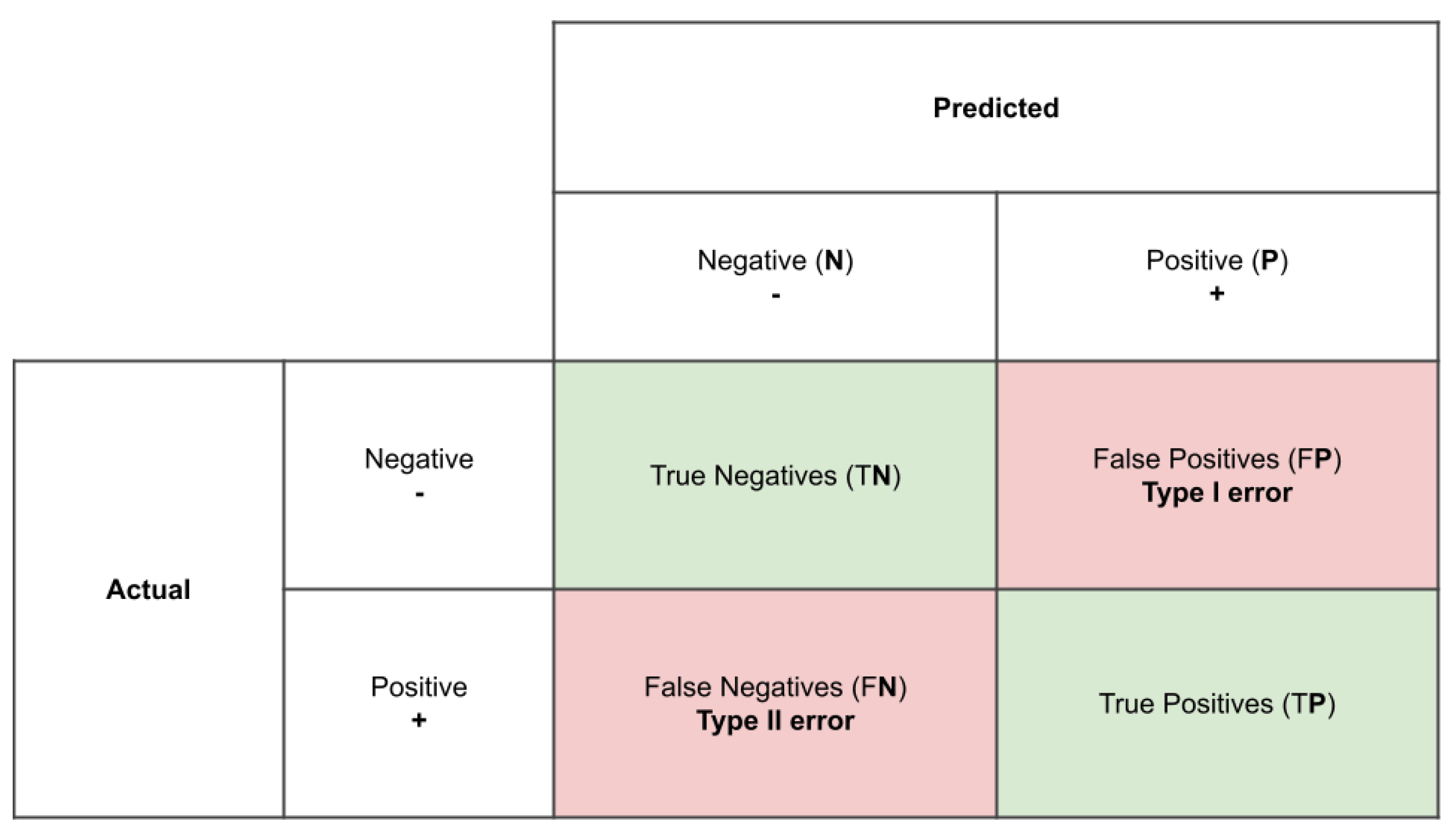

1. Introduction

A growing consensus among governments and civil engineering communities worldwide has identified Building Information Modelling (BIM) as a highly efficient method within the construction industry that offers enhanced ways of working throughout the life cycle of a structure [1, 2]. This process aims to generate an information model that functions as a digital description of the individual components of the structure. The model is built on information generated collaboratively and updated throughout the key stages of the project. While BIM in the planning and design phase is initially based on virtual models that describe the building to be realized (Project Information Models), these models must be further developed over the life cycle into as-built or as-is models that reflect the actual built condition of the structure and that also form the basis of Asset Information Models for operation. For operations, however, the concept of the digital twin is currently being discussed intensively. In a digital twin, the real asset is coupled with its digital representation. A bidirectional connection enables the exchange of information and knowledge between both representations. A digital twin is constructed by integrating different digital models (such as information models, physical models and sensor models). An essential model can be the as-is BIM model, which describes the actual geometric-semantic situation as well as the position and height of the structure [3].

The complexity of major infrastructure projects has long demonstrated the need for a single model that reflects all components of the entire project. Consequently, one can argue that applying BIM in infrastructure projects provides a much-needed solution to this problem. This is similar to the single source of truth (SSOT) concept applied in information science, where data from many systems is aggregated and edited in one place. When needed, this data can also be recalled by reference. The advantages of this procedure include avoiding duplicate data entries and building a complete view of the project’s performance.

The main modelling scenarios of an infrastructure project can be categorized into greenfield and brownfield.

Greenfield is characterized by creating new information about built assets from design through construction, resulting in what is called an as-built model. Ideally, the as-built model is identical to the as-planned model. However, in most cases there are deviations between the two models [4], which can be uncovered by comparing the as-built model with the as-planned model. In brownfield scenarios, on the other hand, the information on an already existing asset must be captured with regard to the nature and quality of the information required for the project. Given the advanced age of most infrastructure and its relevance in terms of safety, this is a very common scenario. In rare cases, digital models of existing assets are available, but they need to be updated due to modifications, damage and repairs [3].

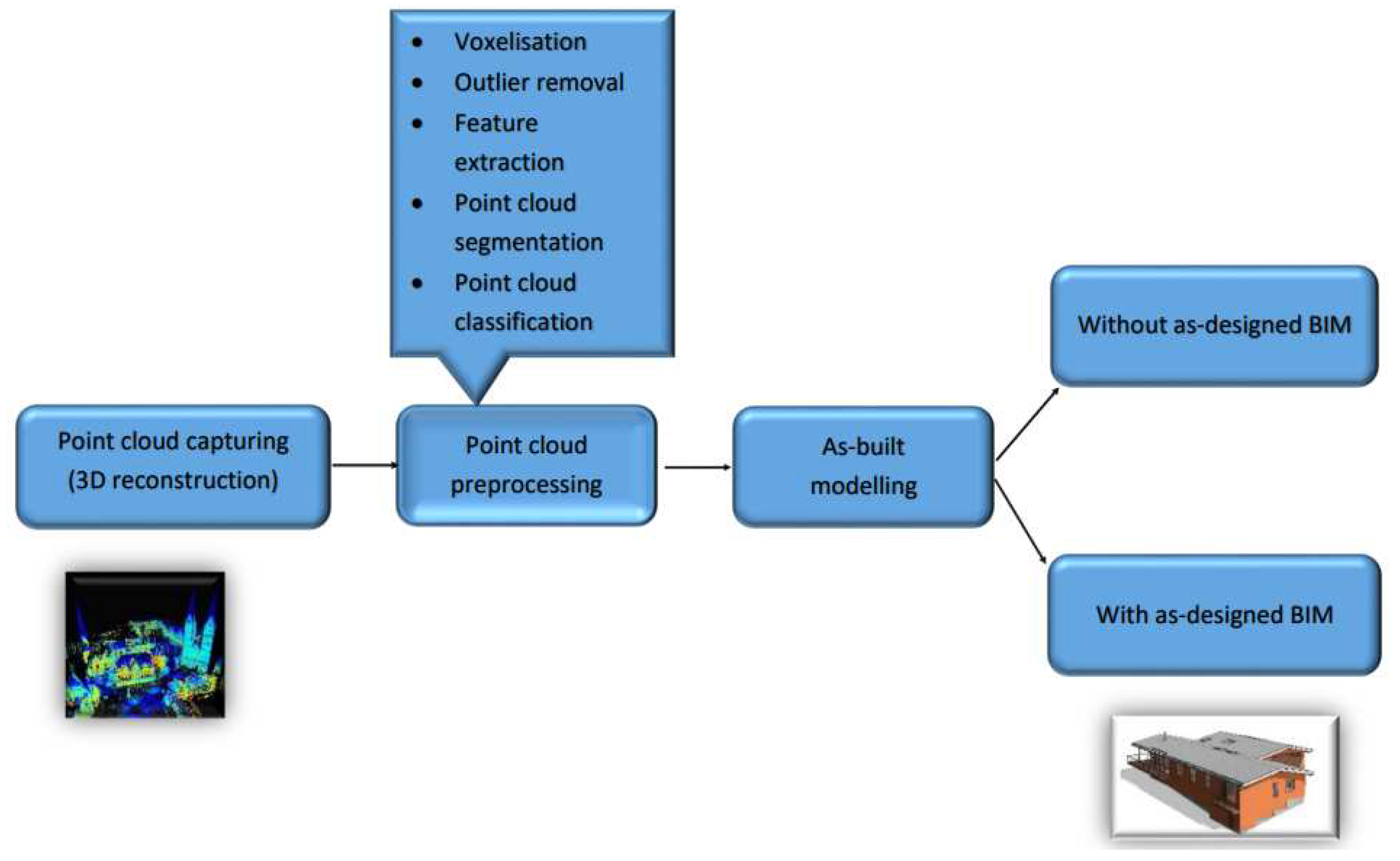

To update the as-planned model to an as-built model during construction in the greenfield scenario, the geometry of an existing structure can today be captured at high resolution using 3D laser scanning or photogrammetry and then imported into specialized software to create the 3D as-is model. This crucial step is often called Scan to BIM (Scan2BIM). The Scan2BIM workflow, taken from the literature, is shown in Figure 1. First, various capturing devices are employed to collect 3D points of an object. However, the resulting 3D representation typically includes redundant points, which cause noise in the scene, and offers limited semantic information. Second, further processing is conducted to obtain a more informative 3D representation of the scene, which involves different point cloud preprocessing techniques. This preprocessing stage begins with converting the unordered 3D points into structured 3D grids known as voxels, which provide a regular and efficient way of processing and analyzing 3D point clouds. Voxelization can also be used for point cloud downsampling, whereby the number of points is reduced by averaging the values of the points within a voxel. Additionally, preprocessing involves the elimination of outliers that are not part of the 3D points representing the object, and the extraction of semantic information via feature extraction. The point cloud is then segmented and classified into distinct categories to represent the entire scene. Using these classified segments, various surface construction algorithms are employed to generate 3D models of the object [5, 6]. Extracting geometric-semantic models from the point cloud is the fundamental aspect of 3D modelling for BIM. Finally, an as-is model of the structure is generated. An overview of the as-is modelling process is presented in [7].
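The voxel-based downsampling and outlier elimination described above can be illustrated with a minimal sketch. It is not the implementation used in the cited works: the function names, the toy coordinates, and the outlier criterion (mean distance to the `k` nearest neighbours compared with a multiple of the median) are assumptions chosen for illustration, and only the Python standard library is used.

```python
import math
from collections import defaultdict


def voxel_downsample(points, voxel_size):
    """Replace all points that fall into the same voxel by their average."""
    voxels = defaultdict(list)
    for p in points:
        # Integer voxel index of the point along x, y and z.
        key = tuple(math.floor(c / voxel_size) for c in p)
        voxels[key].append(p)
    return [tuple(sum(axis) / len(group) for axis in zip(*group))
            for group in voxels.values()]


def remove_outliers(points, k=2, ratio=2.0):
    """Keep points whose mean distance to their k nearest neighbours is at
    most `ratio` times the median of that value (an assumed criterion)."""
    def mean_knn_distance(i):
        dists = sorted(math.dist(points[i], q)
                       for j, q in enumerate(points) if j != i)
        return sum(dists[:k]) / k

    scores = [mean_knn_distance(i) for i in range(len(points))]
    median = sorted(scores)[len(scores) // 2]
    return [p for p, s in zip(points, scores) if s <= ratio * median]


# Hypothetical toy cloud: five points near the origin and one stray point.
cloud = [(0.1, 0.1, 0.0), (0.3, 0.2, 0.0), (1.2, 0.1, 0.0),
         (1.4, 0.3, 0.0), (0.2, 1.1, 0.0), (9.0, 9.0, 9.0)]
down = voxel_downsample(cloud, voxel_size=1.0)
clean = remove_outliers(down, k=2, ratio=2.0)
```

In this toy case the two pairs of points that share a voxel are merged into their averages, and the isolated point far from the rest is discarded. The brute-force neighbour search is only suitable for very small inputs; real point clouds require a spatial index.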

Figure 1. Scan to BIM workflow to create an as-built model starting from point cloud capturing; after [7].


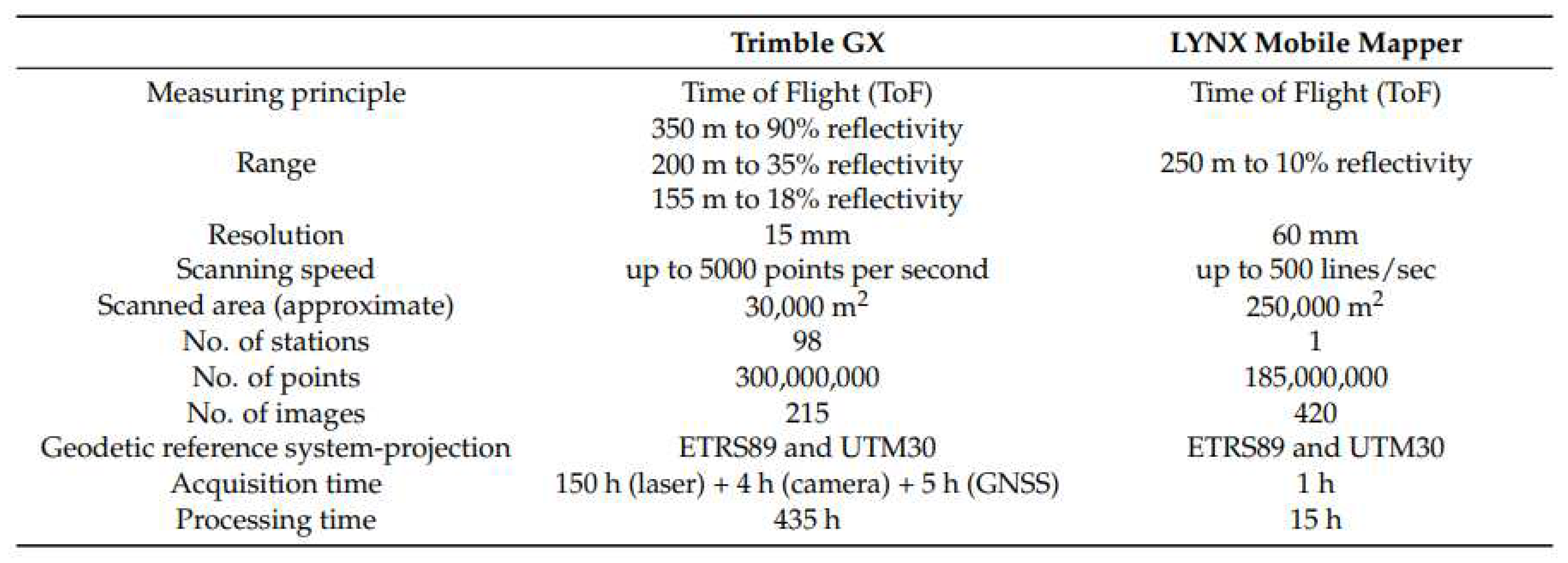

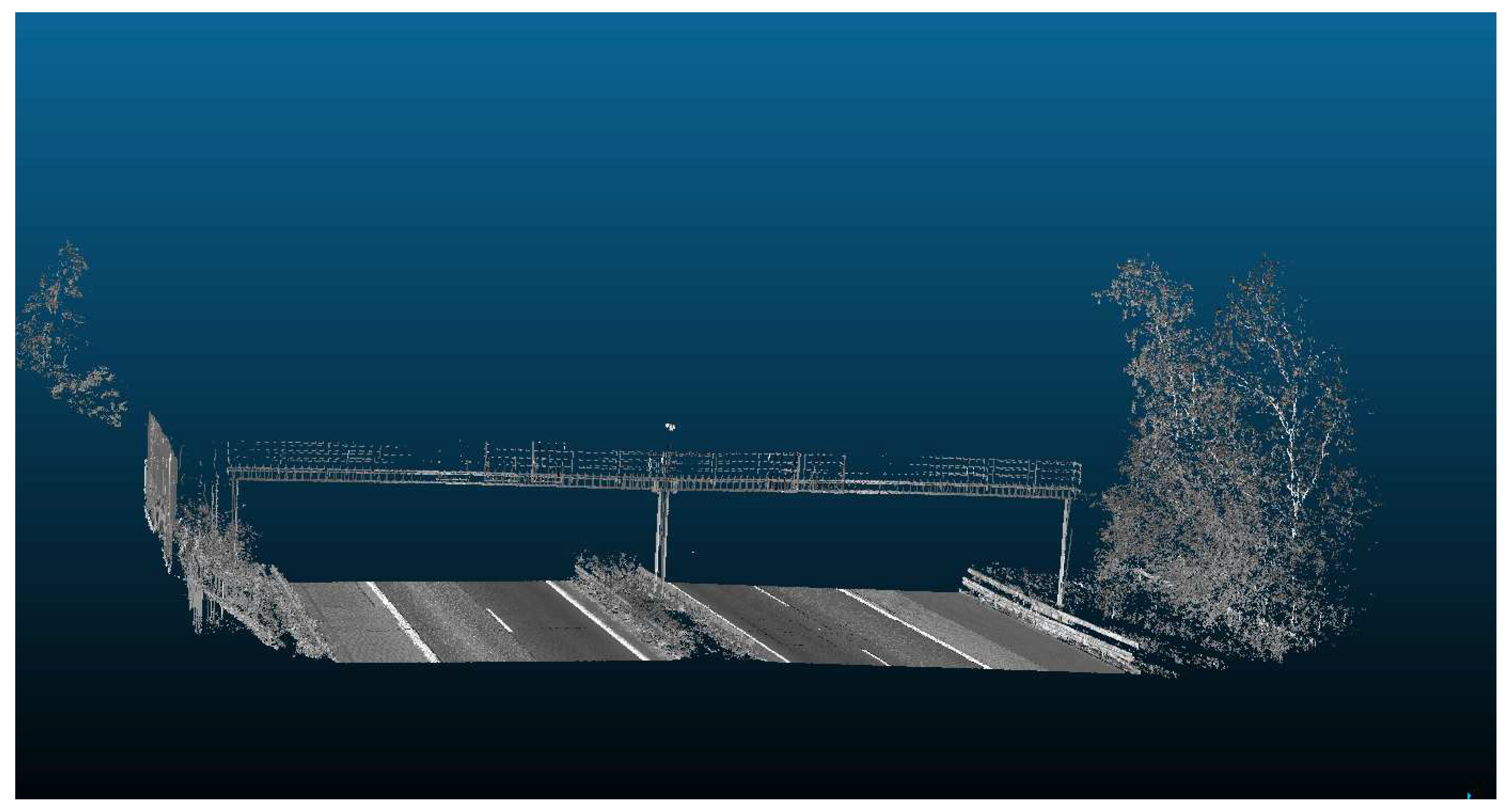

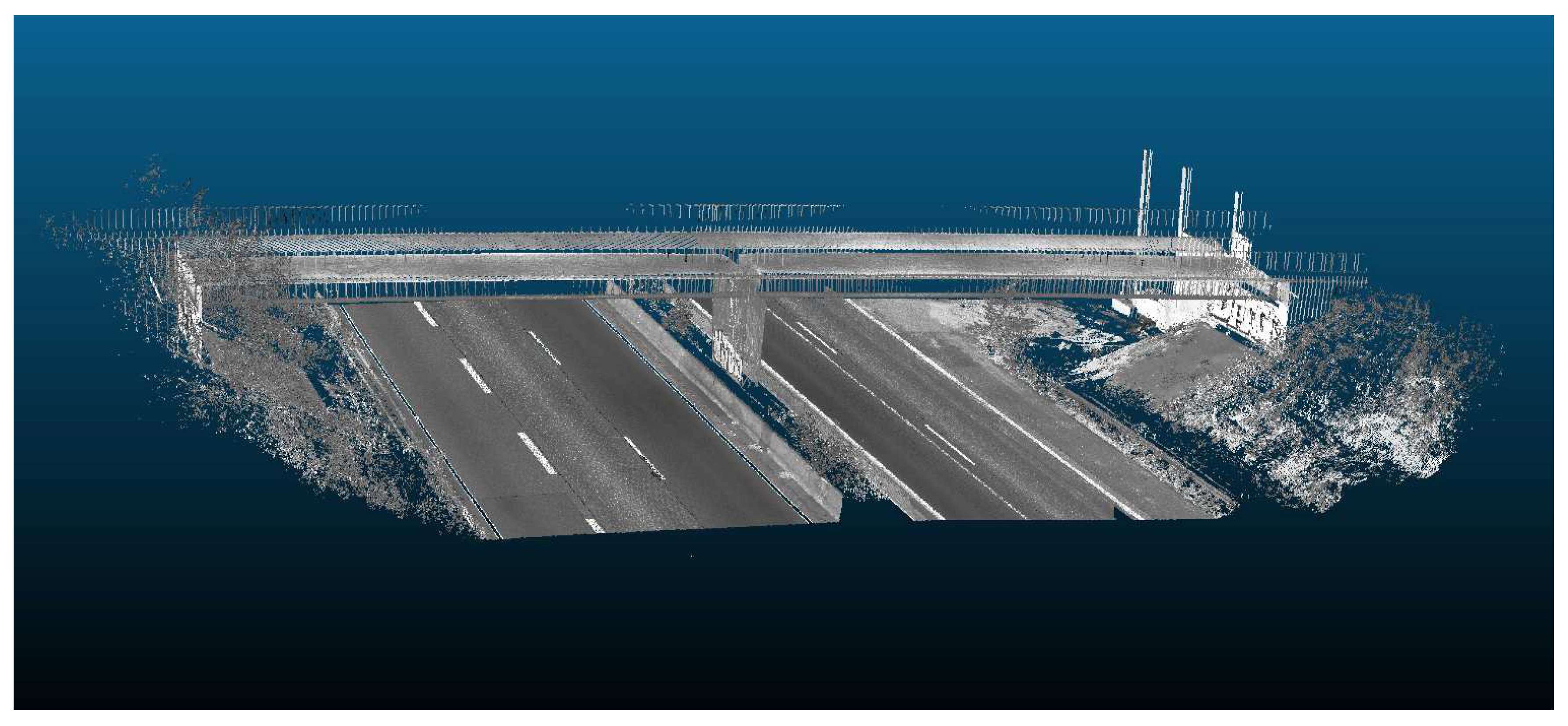

One widespread technology for Scan2BIM is 3D laser scanning. There are different techniques for capturing 3D data of the built environment using 3D laser scanners. Two of the most frequently used are Terrestrial Laser Scanning (TLS) and Mobile Laser Scanning (MLS), whose advantages vary with the application. TLS is a ground-based reality capture system. Placed on a static tripod, these systems scan from multiple positions and are therefore particularly useful for capturing engineering structures, building interiors or areas with especially dense vegetation. MLS, on the other hand, is a surveying method in which laser systems are mounted on moving vehicles or carried by hand. It is useful for kinematically capturing large structures and areas, whereas TLS delivers point clouds at a much higher resolution and with less noise. MLS is thus suitable for large infrastructure objects, while TLS is to be preferred in scenarios with good visibility (fewer scan positions required) and high accuracy requirements. Figure 2 compares, for two example scanner systems, the acquisition and processing time as well as the resolution, based on the application of both methods at cultural heritage sites.

Figure 2. Exemplary comparison of acquisition and processing time, as well as resolution, between MLS (Lynx mobile mapper) and TLS (Trimble GX) on 3D point clouds from cultural heritage sites [8].


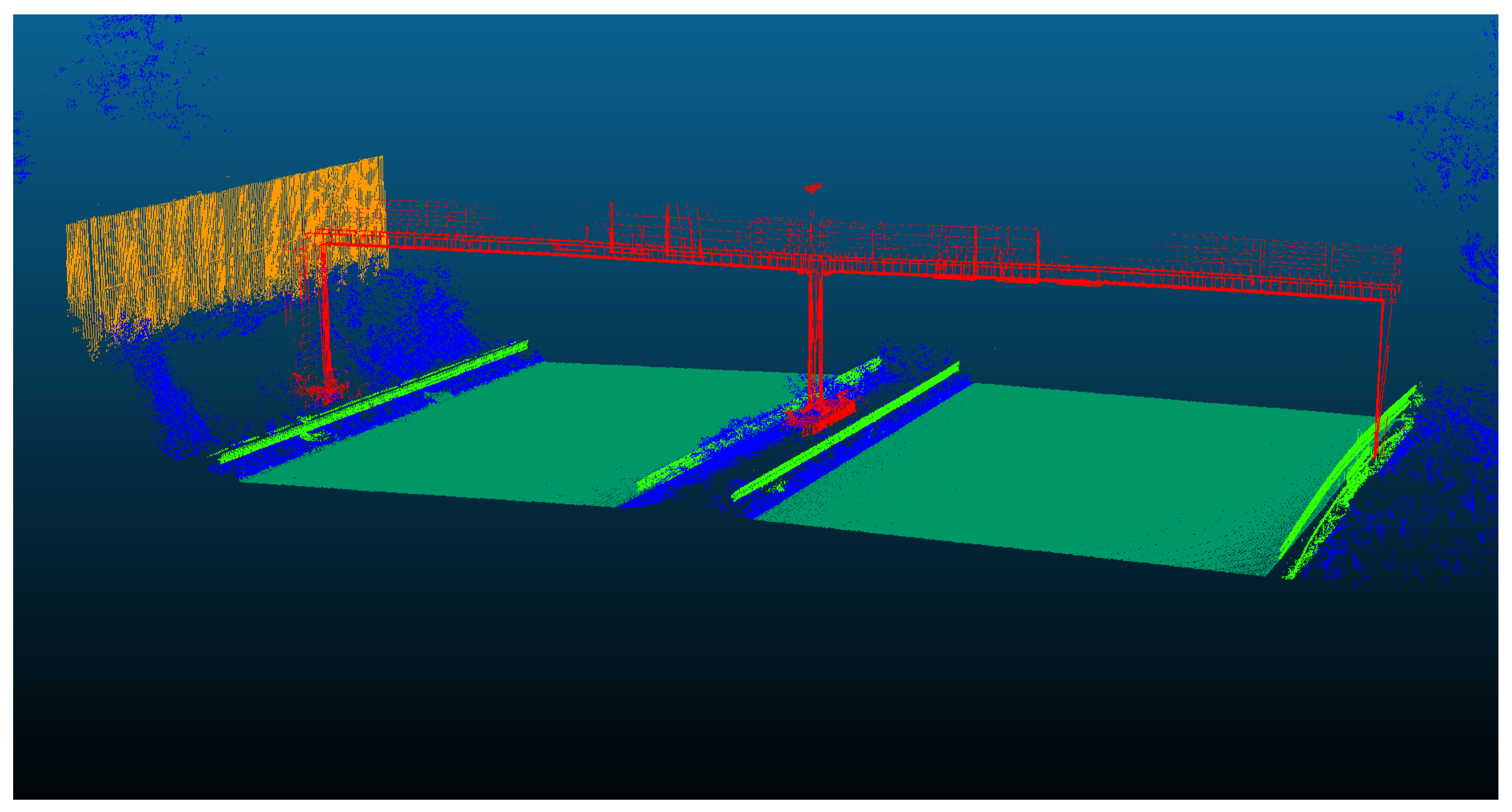

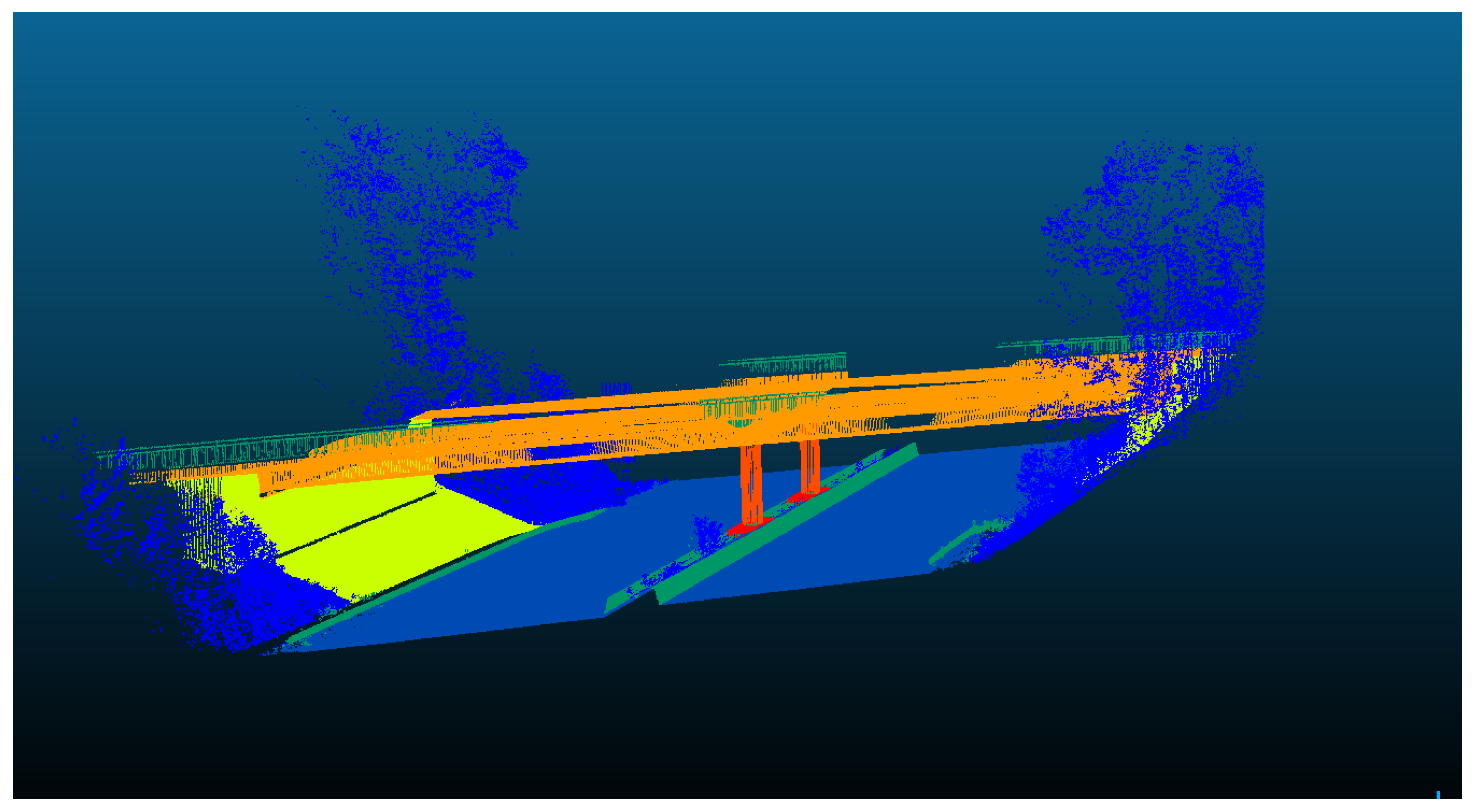

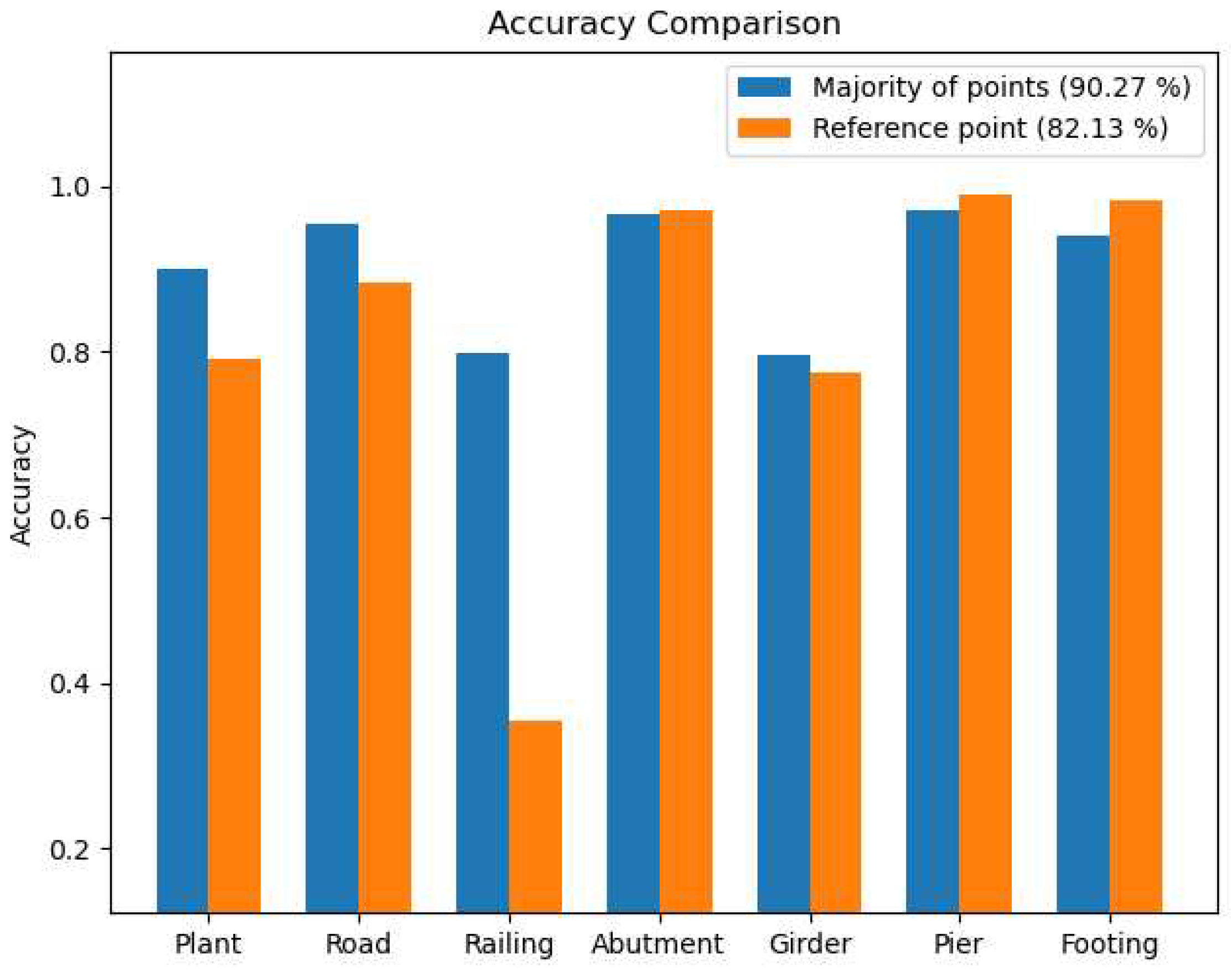

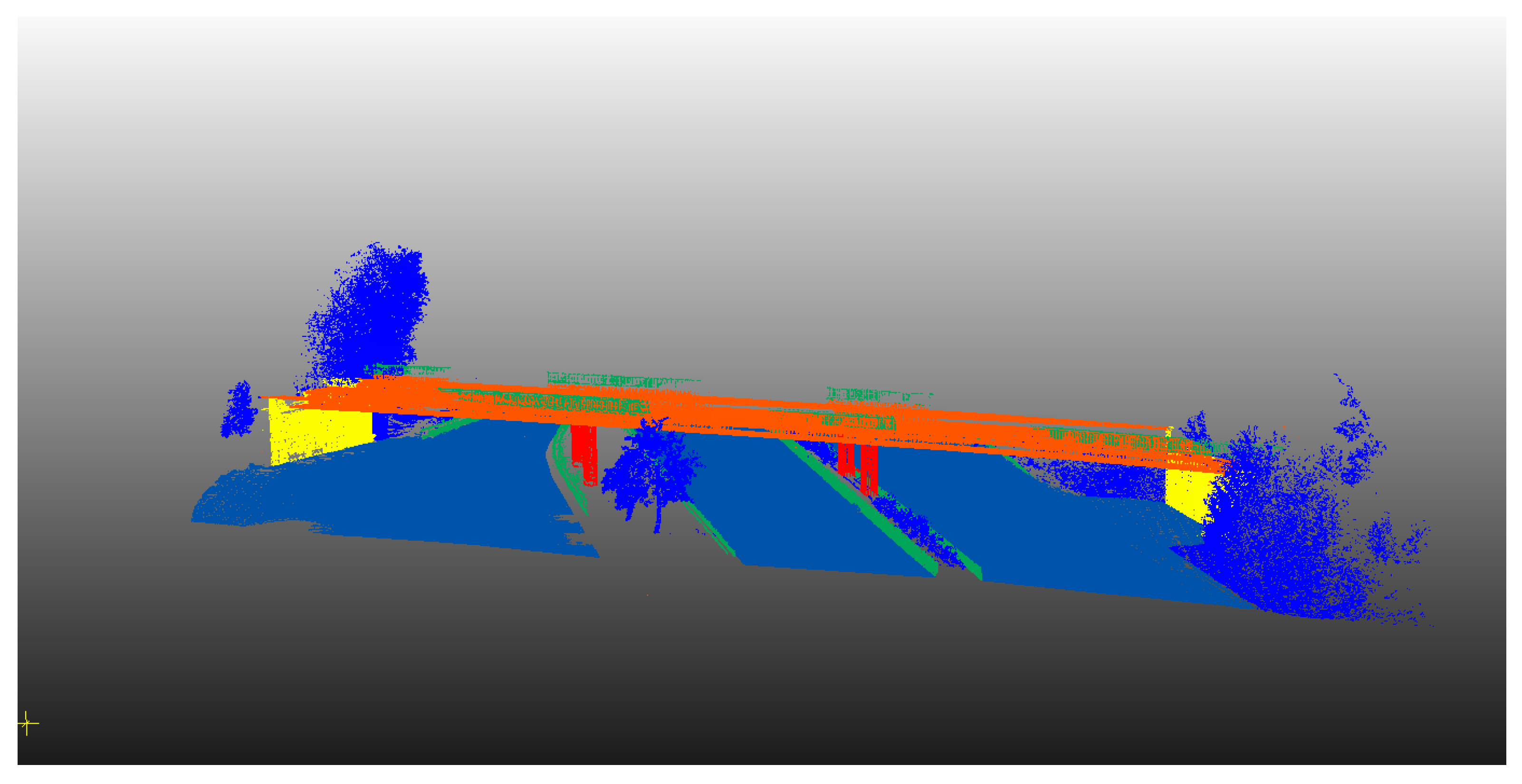

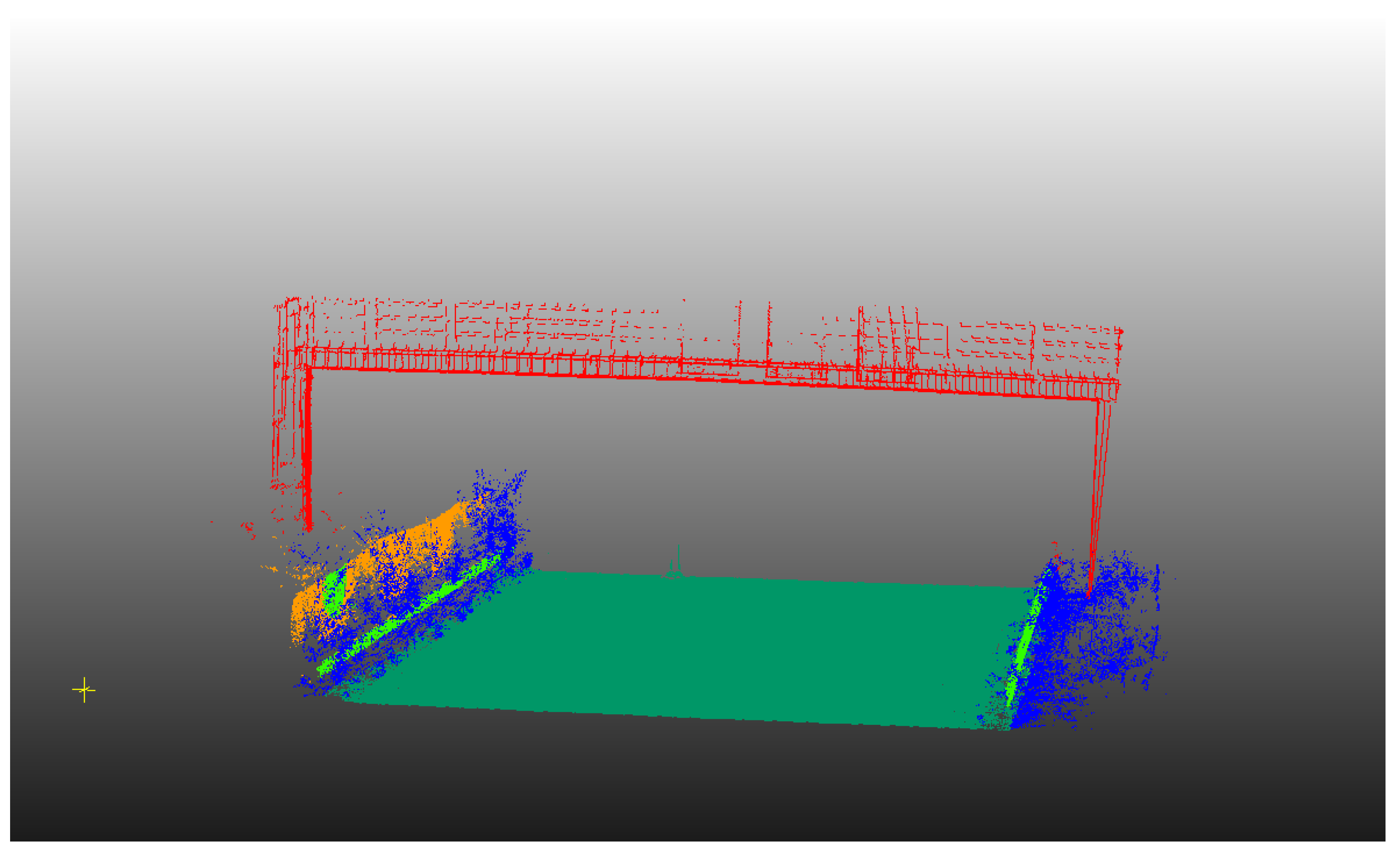

The process of creating a 3D model from a point cloud in practice is a highly manual-driven task. Considering how much time is required to conduct this step manually in order to represent complex geometries accurately, one could conclude that manual Scan2BIM is particularly difficult work [

9]. The aim of this study is to automate this process through the segmentation and classification of captured points, which in turn reduces, at least partially, the manual work involved. Segmentation focuses on partitioning the scene into multiple segments without understanding their meaning. This step is crucial for subsequent modeling efforts, as it provides a way to filter out irrelevant points and focus on the Region of Interests (ROI). On the other hand, classification assigns each point to a specific label based on the meaningful representation of its segment in the scene to give semantic meaning to the segment. Various names have been used in the literature to refer to segmentation and classification of point cloud, including semantic segmentation, instance segmentation, point labeling, and point-wise classification [

10,

11,

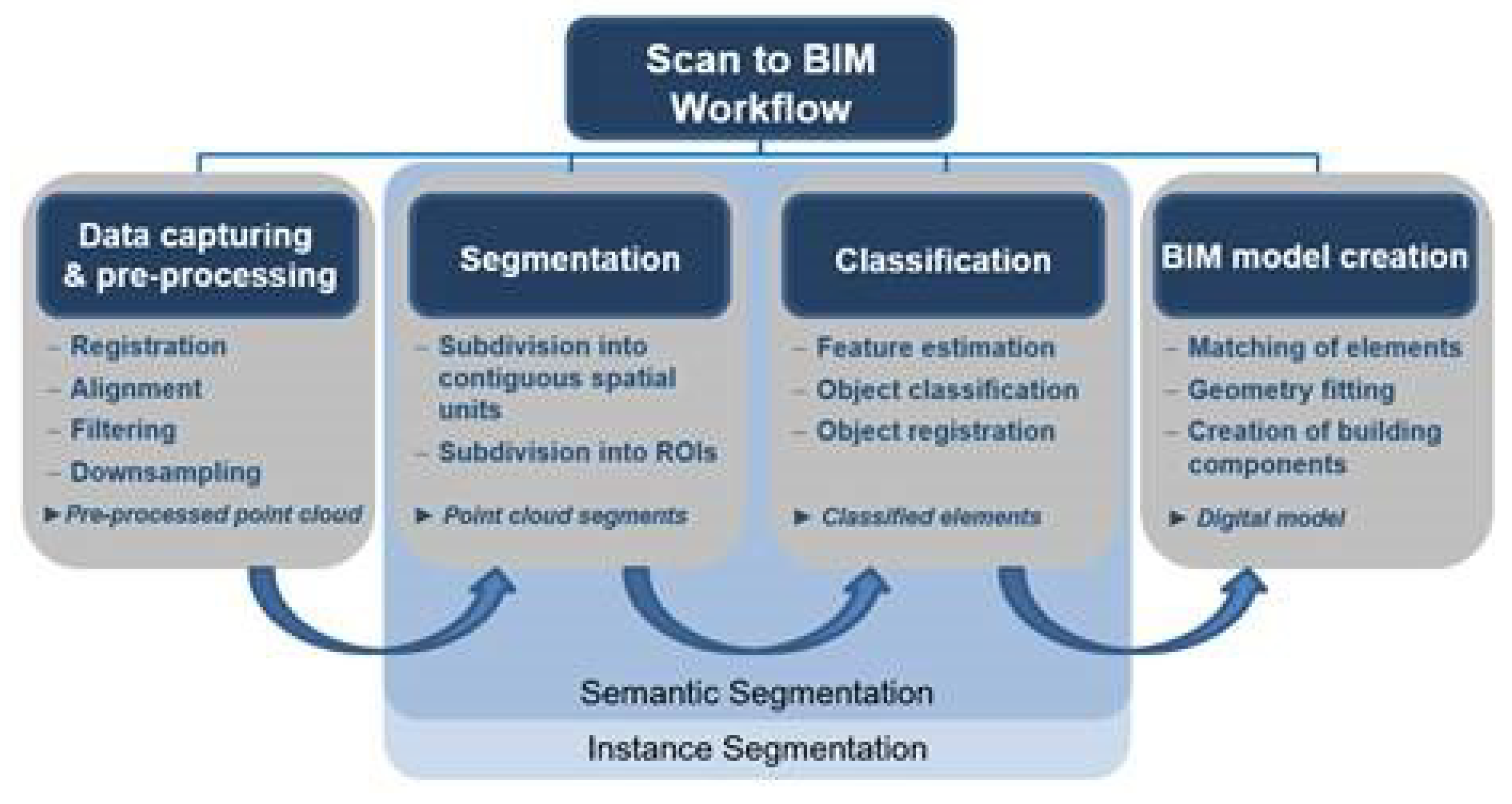

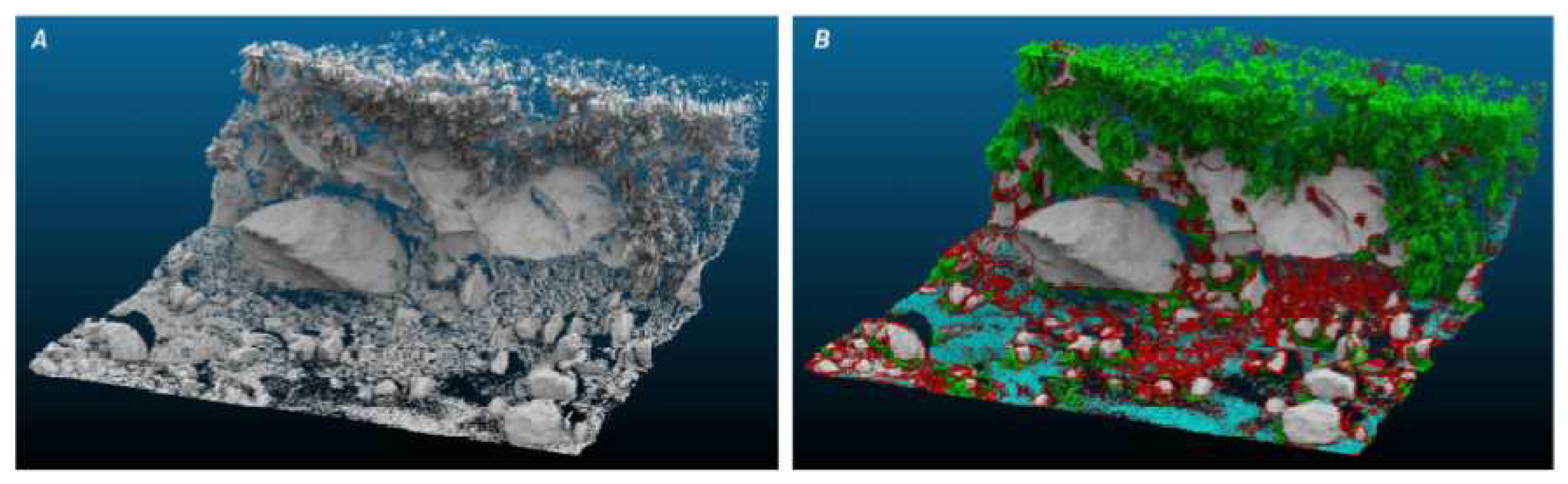

12]. The main difference between semantic segmentation and instance segmentation of 3D point clouds is that semantic segmentation focuses on assigning semantic labels to points based on their object or class, while instance segmentation goes a step further by distinguishing individual instances of objects within the same class, providing a more detailed scene understanding. However,for the sake of simplification, we will adopt the term "semantic segmentation" throughout this work. Figure 1.3 illustrates the workflow of Scan2BIM showing where our approach for the automatic semantic segmentation of the 3D points fits. A general overview of the as-is modelling process is provided showing the capability of various techniques from different research fields (computer vision, surveying and geoinformatics, construction informatics, architecture,

.) to automate the as-is modelling process [

7]. The overview is based on different relevant works, that discussed the potential of these techniques and the large overlap of the modelling process with the geometry processing field. As a result these approaches will be discussed in detail in this work..
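The distinction between semantic and instance segmentation can be made concrete with a small sketch. The example below is illustrative only: the helper `instance_ids`, the distance threshold `max_gap`, and the toy scene are assumptions, and grouping same-class points by spatial connectivity is just one simple way of deriving instances from semantic labels.

```python
import math


def instance_ids(points, labels, max_gap):
    """Give every point an instance id. Points share an id if they carry the
    same semantic label and are linked by a chain of neighbours that are
    never farther apart than max_gap."""
    ids = [-1] * len(points)
    next_id = 0
    for seed in range(len(points)):
        if ids[seed] != -1:
            continue  # already part of an earlier instance
        ids[seed] = next_id
        stack = [seed]
        while stack:
            i = stack.pop()
            for j in range(len(points)):
                if (ids[j] == -1 and labels[j] == labels[i]
                        and math.dist(points[i], points[j]) <= max_gap):
                    ids[j] = next_id
                    stack.append(j)
        next_id += 1
    return ids


# Hypothetical scene: two separate cars and one point on a bridge.
points = [(0.0, 0.0, 0.0), (0.5, 0.0, 0.0),    # first car
          (10.0, 0.0, 0.0), (10.5, 0.0, 0.0),  # second car
          (5.0, 0.0, 6.0)]                     # bridge
semantic = ["car", "car", "car", "car", "bridge"]
instances = instance_ids(points, semantic, max_gap=1.0)
```

Here `semantic` gives all four car points the same label, whereas `instances` additionally separates the two cars into different ids.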

Figure 3. Steps of Scan to BIM showing where our approach fits in the automation of the semantic segmentation stage for 3D point clouds.


Recently, there has been increasing interest in techniques that automate the Scan2BIM process by automating the stages of point cloud semantic segmentation. One notable study explored different approaches for segmenting point cloud data and generating BIM models from it. The study introduced the VOX2BIM method, which converts point clouds into voxel representations and employs diverse techniques to automate BIM model generation [13]. Other investigations have explored automating the workflow for point cloud processing, which is an essential step in the Scan2BIM process [14]. The automation targeted in our work can be achieved by segmenting and classifying the point cloud using machine learning algorithms, which can complete this task efficiently and in reasonable time [15, 16]. Machine learning algorithms (including deep learning and neural networks) generate models that are based on, but not specific to, a training data set that allows the algorithms to learn how to classify information.

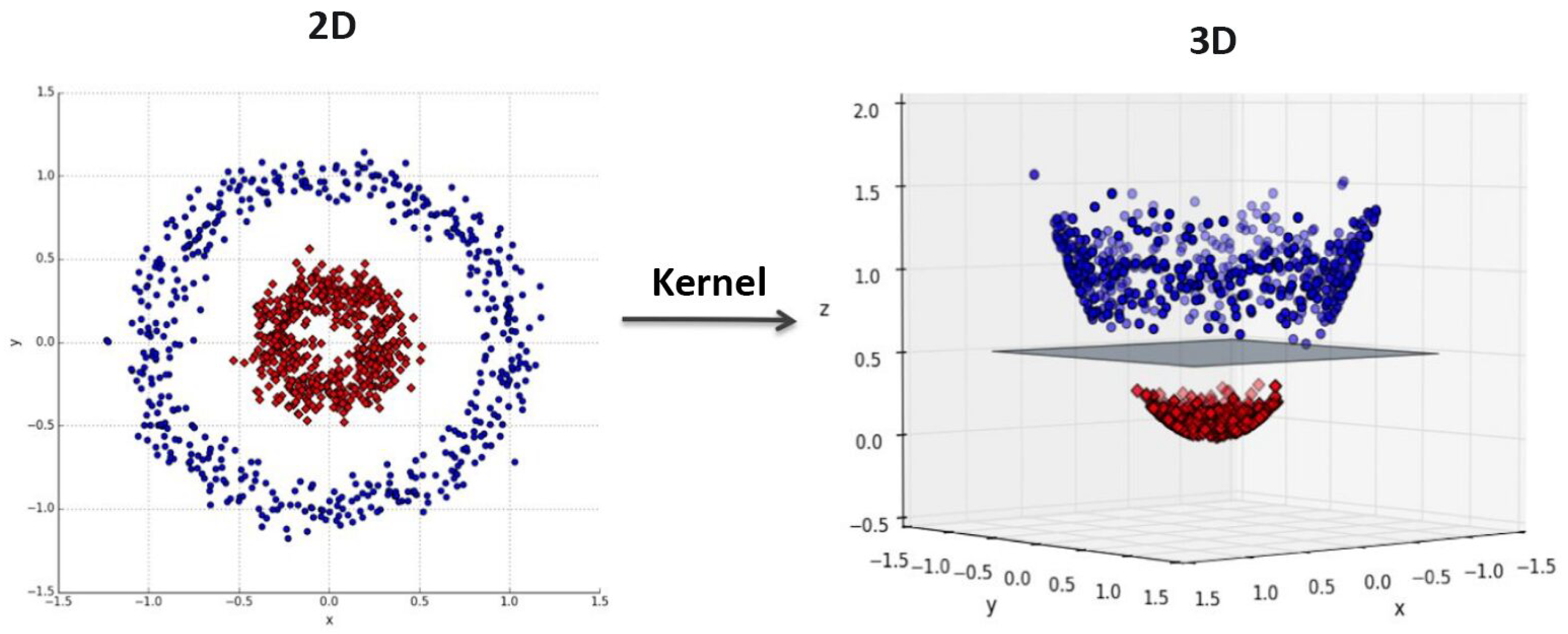

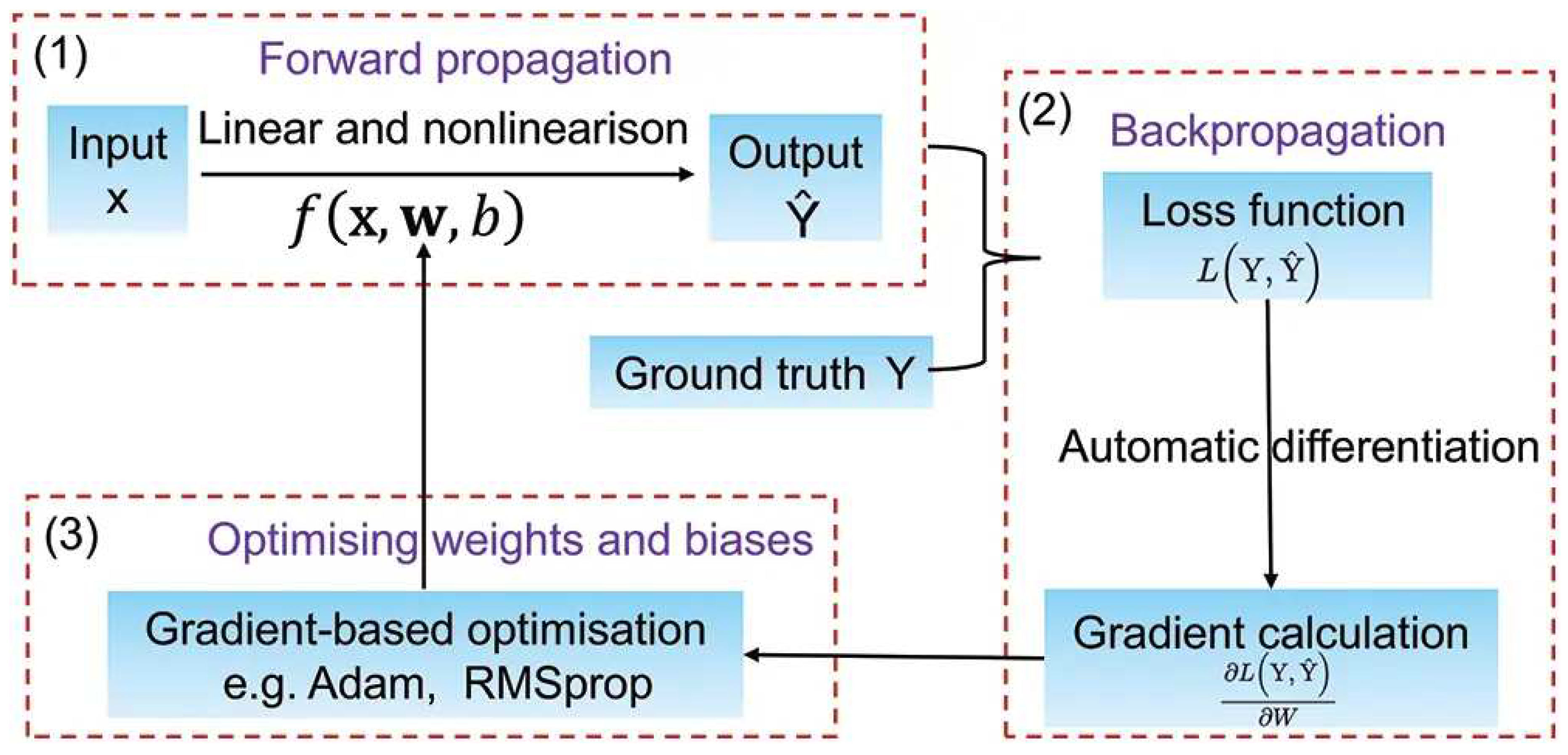

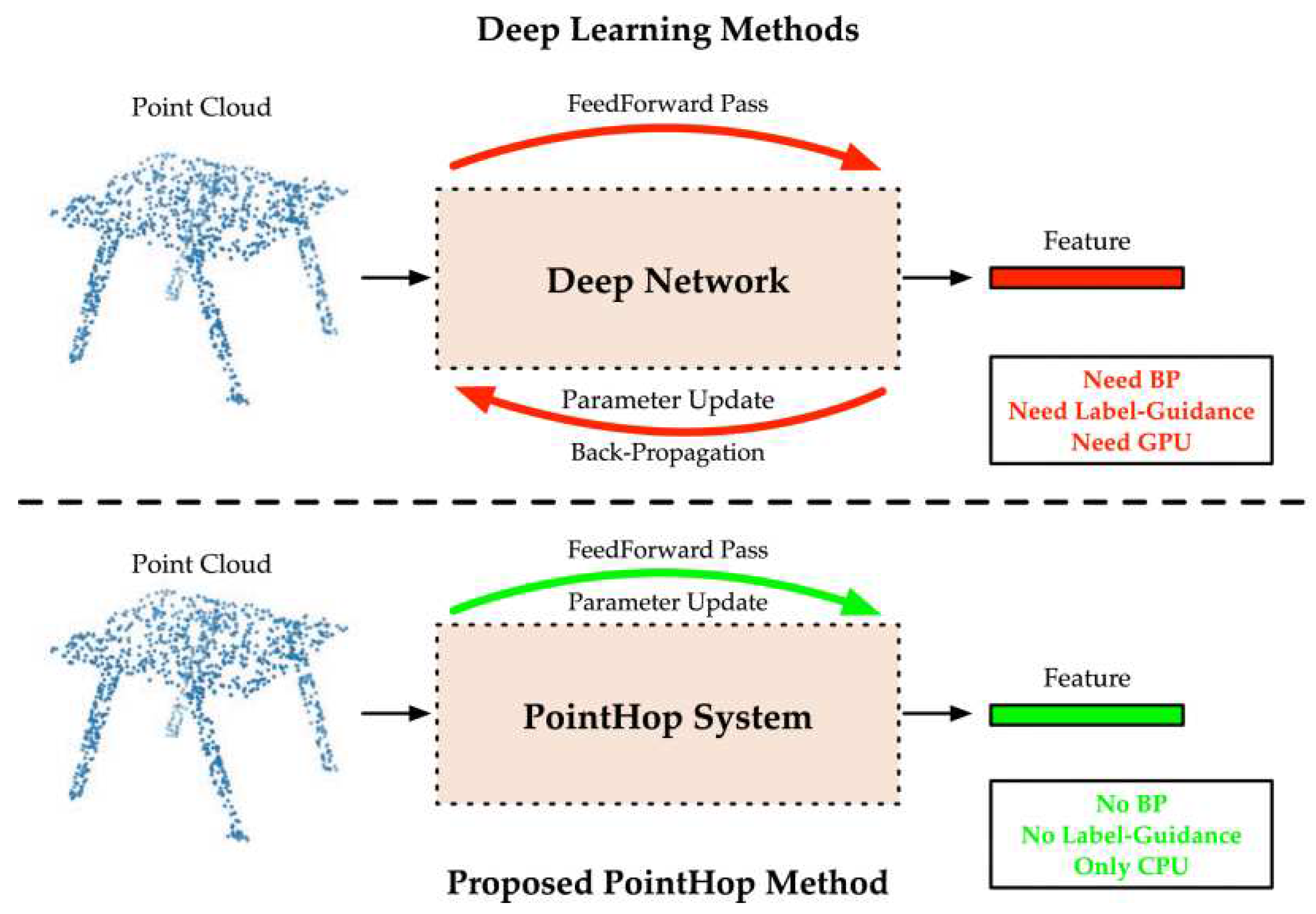

Two broad categories of machine learning are often discussed: classical machine learning and deep learning [17]. Classical machine learning refers to traditional machine learning algorithms that typically rely on handcrafted features and are trained on data sets using statistical models. In contrast, deep learning involves neural networks that automatically learn features from raw data and are trained on larger data sets using optimization techniques.

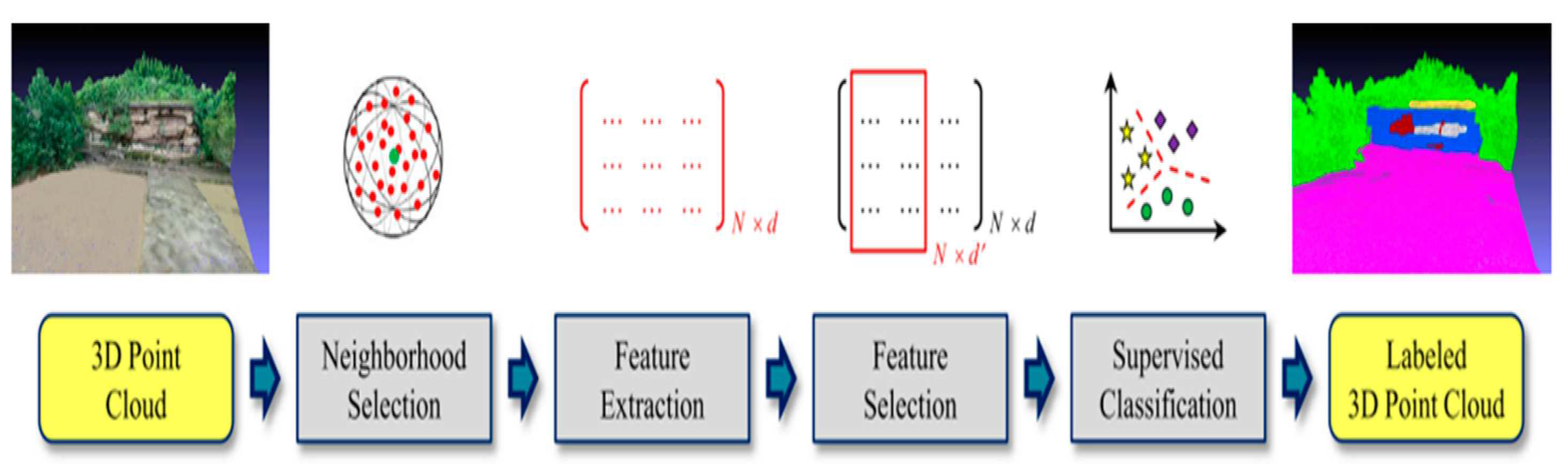

In point cloud semantic segmentation, one of the challenges is to assign every 3D point to the correct label or class in the observed scene (e.g., car or bridge). After the segmentation, the 3D point cloud can be classified into the different categories present in the scene [18]. Our process for the automatic semantic segmentation of 3D point clouds employs classical machine learning methods and can be broken down into three primary stages. The first stage is selecting a group of points around a 3D point, a step that can be completed manually or automatically. Each group contains information about its points and the spatial relations between them, which should be described using different methods. The second stage is extracting different features from each group and passing these features to the machine learning algorithm for training. The key factor in this stage is to discover, based on a good understanding of the scene, which features are most characteristic of each group. In the third stage, the training stage, a training data set is used to train the classification model. The training data set is fed to the model in the form of features with assigned class labels. Here the model learns the correlations between these features and is then able to separate the different classes in the feature space. Finally, a new 3D point cloud, a so-called test set, can be segmented and classified automatically based on the learned features. The classified test set reflects the performance of the model, which can then be used to segment and classify other data sets captured by comparable sensors.
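The three stages can be sketched end to end on a synthetic scene. Everything specific in this example is an assumption made for illustration and not the configuration used in this work: the group is the `k` nearest neighbours of a point, the features are eigenvalue-based linearity, planarity and sphericity of the group's covariance matrix, the classifier is a simple nearest-centroid model, and the "deck" and "cable" classes are hypothetical.

```python
import math


def knn_group(points, i, k):
    """Stage 1: the k points nearest to point i (brute force, includes i)."""
    order = sorted(range(len(points)),
                   key=lambda j: math.dist(points[i], points[j]))
    return [points[j] for j in order[:k]]


def covariance(group):
    """3x3 covariance matrix of a group of 3D points."""
    n = len(group)
    mean = [sum(p[a] for p in group) / n for a in range(3)]
    return [[sum((p[a] - mean[a]) * (p[b] - mean[b]) for p in group) / n
             for b in range(3)] for a in range(3)]


def eigenvalues_sym3(m):
    """Eigenvalues of a symmetric 3x3 matrix, descending (closed form)."""
    q = (m[0][0] + m[1][1] + m[2][2]) / 3.0
    off = m[0][1] ** 2 + m[0][2] ** 2 + m[1][2] ** 2
    p = math.sqrt((sum((m[a][a] - q) ** 2 for a in range(3)) + 2.0 * off) / 6.0)
    if p < 1e-15:  # all three eigenvalues coincide
        return [q, q, q]
    b = [[(m[r][c] - (q if r == c else 0.0)) / p for c in range(3)]
         for r in range(3)]
    det = (b[0][0] * (b[1][1] * b[2][2] - b[1][2] * b[2][1])
           - b[0][1] * (b[1][0] * b[2][2] - b[1][2] * b[2][0])
           + b[0][2] * (b[1][0] * b[2][1] - b[1][1] * b[2][0]))
    phi = math.acos(max(-1.0, min(1.0, det / 2.0))) / 3.0
    e1 = q + 2.0 * p * math.cos(phi)
    e3 = q + 2.0 * p * math.cos(phi + 2.0 * math.pi / 3.0)
    return [e1, 3.0 * q - e1 - e3, e3]


def features(points, i, k=9):
    """Stage 2: linearity, planarity and sphericity around point i."""
    eig = eigenvalues_sym3(covariance(knn_group(points, i, k)))
    s1, s2, s3 = (math.sqrt(max(e, 0.0)) for e in eig)
    if s1 == 0.0:
        return [0.0, 0.0, 0.0]
    return [(s1 - s2) / s1, (s2 - s3) / s1, s3 / s1]


def train(rows, labels):
    """Stage 3: learn one mean feature vector per class."""
    model = {}
    for label in set(labels):
        members = [r for r, l in zip(rows, labels) if l == label]
        model[label] = [sum(col) / len(members) for col in zip(*members)]
    return model


def classify(model, row):
    """Assign the class whose centroid is closest in feature space."""
    return min(model, key=lambda label: math.dist(model[label], row))


def make_scene(origin):
    """Hypothetical scene: a flat 6x6 'deck' grid and a vertical 'cable'."""
    ox, oy, oz = origin
    deck = [(ox + x, oy + y, oz) for x in range(6) for y in range(6)]
    cable = [(ox + 20.0, oy + 20.0, oz + 0.25 * t) for t in range(36)]
    return deck + cable, ["deck"] * 36 + ["cable"] * 36


train_pts, train_labels = make_scene((0.0, 0.0, 0.0))
model = train([features(train_pts, i) for i in range(len(train_pts))],
              train_labels)

# A shifted copy of the scene plays the role of the test set.
test_pts, test_labels = make_scene((100.0, 50.0, 2.0))
predicted = [classify(model, features(test_pts, i)) for i in range(len(test_pts))]
accuracy = sum(p == t for p, t in zip(predicted, test_labels)) / len(test_labels)
```

The scene is deliberately idealized and noise-free, so `accuracy` says little about real scans. In practice the neighbourhood search, the feature set and the classifier would each be replaced by more capable components.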