Submitted: 26 June 2023

Posted: 26 June 2023

Abstract

Keywords:

1. Introduction

1.1. Current State of Computer Vision in Horticulture

1.1.1. Plant Growth Monitoring

1.1.2. Pest and Disease Detection

1.1.3. Yield Estimation

1.1.4. Quality Assessment

1.1.5. Automated Harvesting

1.2. Deep Learning Practices

2. Materials and Methods

2.1. Visual Signal Data and Quality

| Imaging Method | Characteristics | Data and Representation |
|---|---|---|
| 2D Images | Planar representations of objects captured from a single viewpoint or angle. Their height and width dimensions define the resolution. | Typically represented as matrices or tensors, with each element representing the pixel value at a specific location. Colour images have multiple channels (e.g., red, green, blue), while greyscale images have a single channel. |
| 2D Videos | A sequence of images captured over time, creating a temporal dimension. Each frame is a 2D image. | Composed of multiple frames, typically represented as a series of 2D image matrices or tensors. The temporal dimension adds an extra axis to represent time. |
| RGB-D Images | Same 2D spatial dimensions as colour images, but include depth information. | Consist of colour channels (red, green, blue) for visual appearance and a depth channel represented as distance or disparity values. Can be stored as multi-channel matrices or tensors. |
| 3D Point Clouds | Represent the spatial coordinates of individual points in a 3D space. | Consist of a collection of points, where each point is represented by its x, y and z coordinates in the 3D space. |
| Thermal Imaging | Same 2D spatial dimensions as regular images, but represent temperature values instead of colour or greyscale pixel values. | Stored as matrices or tensors with scalar temperature values at each pixel location. |
| Fluorescence Imaging | Captures and visualises the fluorescence emitted by objects, typically using specific excitation wavelengths. | Represented as images or videos with intensity values corresponding to the emitted fluorescence signal. These can involve the use of specific fluorescence channels or spectra. |
| Transmittance Imaging | Measures the light transmitted through objects, providing information about their transparency or opacity. | Represented as images or videos with pixel values indicating the amount of light transmitted through each location. |
| Multi-spectral Imaging | Captures images at multiple discrete wavelengths or narrow spectral bands. | Represented as multi-dimensional arrays, where each pixel contains intensity values at different wavelengths or spectral bands. Provides detailed spectral information for analysis. |
| Hyperspectral Imaging | Same 2D spatial dimensions as regular images, but each pixel contains a spectrum of reflectance values across the spectral bands. | Represented as multi-dimensional arrays, where each pixel contains a spectrum of reflectance values across the spectral bands. Provides a more comprehensive spectral analysis compared to multi-spectral imaging. |
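To make the representations in the table above concrete, the following minimal sketch lists one plausible array shape per imaging method. The channel-last layout, the function names `example_shapes` and `element_count`, and the sample sizes (frame count, band count, point count) are assumptions chosen for illustration, not values taken from any dataset in this paper.

```python
from math import prod


def example_shapes(height, width, frames=1, bands=1, points=0):
    """Return illustrative channel-last array shapes for several imaging methods."""
    return {
        "greyscale_image": (height, width),        # single channel
        "rgb_image": (height, width, 3),           # red, green, blue
        "rgb_video": (frames, height, width, 3),   # extra leading time axis
        "rgbd_image": (height, width, 4),          # colour channels plus depth
        "point_cloud": (points, 3),                # x, y, z per point
        "thermal_image": (height, width),          # scalar temperature per pixel
        "spectral_cube": (height, width, bands),   # one value per spectral band
    }


def element_count(shape):
    """Number of scalar values stored in an array of the given shape."""
    return prod(shape)


# Hypothetical sizes, used only to show how storage grows with each extra axis.
shapes = example_shapes(height=1080, width=1920, frames=30, bands=200, points=50_000)
for name, shape in shapes.items():
    print(f"{name:16s} shape={shape} values={element_count(shape):,}")
```

Comparing the `values` column for the video and spectral cube entries against the single RGB image shows how quickly the temporal and spectral axes increase the amount of data per capture.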

| Dataset | Imaging | Quality | Quantity | Application |
|---|---|---|---|---|
| [35] | RGB Image | Varied Resolution | 587 images | Detection of sweet pepper, rock melon, apple, mango, orange and strawberry. |
| [36] | RGB Image | px | 270 images | Fruit maturity classification for pineapple. |
| [37] | RGB Image | | 1357 images | Fruit maturity classification and defect detection for dates. |
| [38] | RGB Image | px | 764 leaves | Growth classification for Okinawan spinach. |
| [39] | Greyscale Image | px | 13,200 | Prediction of white cabbage seedling’s chances of success. |
| [40] | Hyperspectral | px px, 2.8 nm | 45 plants | Estimation of strawberry ripeness. |
| [41] | 2D Video | px | 11 videos, >8000 frames | Segmentation of apple and ripening stage classification. |
| [42] | Timelapse Images | px | 1218 images | Monitoring growth of lettuce. |
| [43] | RGB Images | px | 1350 images | Monitoring growth of lettuce. |
| [44] | 2D Video | px | 20 videos | Detection of apple and fruit counting. |
| [45] | Timelapse Images | px | 724 images | Estimation of compactness, maturity and yield count for blueberry. |
| [45] | RGB-D Images | px | 123 images | Detection of ripeness of tomato fruit. |
| [46] | RGB-D Images | px | 123 images | Detection of ripeness of tomato fruit and counting. |
| [47] | RGB Images | px | 480 images | Detection of growth stage of apple. |
| [48] | RGB Images | px, px | 108 images | Classification of panicle stage of mango. |
| [49] | RGB Images | px, px | 3704 images | Detection and segmentation of apple, mango and almond. |
| [50] | RGB Images | px | 8079 images | Classification of fruit maturity and harvest decision. |
| [51] | RGB-D and IRS | px | 967 multimodal images | Detection of Fuji apple. |
| [52] | RGB Images | px | 49 images | Detection of mango fruit. |
| [53] | Thermal Images | px | - | Detection of bruise and its classification in pear fruit. |
| [54] | RGB Images | px | 1730 images | Detection of mango fruit and orchard load estimation. |
| [55] | RGB Images | Varied Resolution | 2298 images | Robotic harvesting and yield estimation of apple. |
| [56,57] | RGB Images, Multiview Images and 3D Point Cloud | px for images | 288 images | Detection, localisation and segmentation of Fuji apple. |

2.2. Data Requirement Analysis

2.2.1. Deep Feature Entropy Analysis

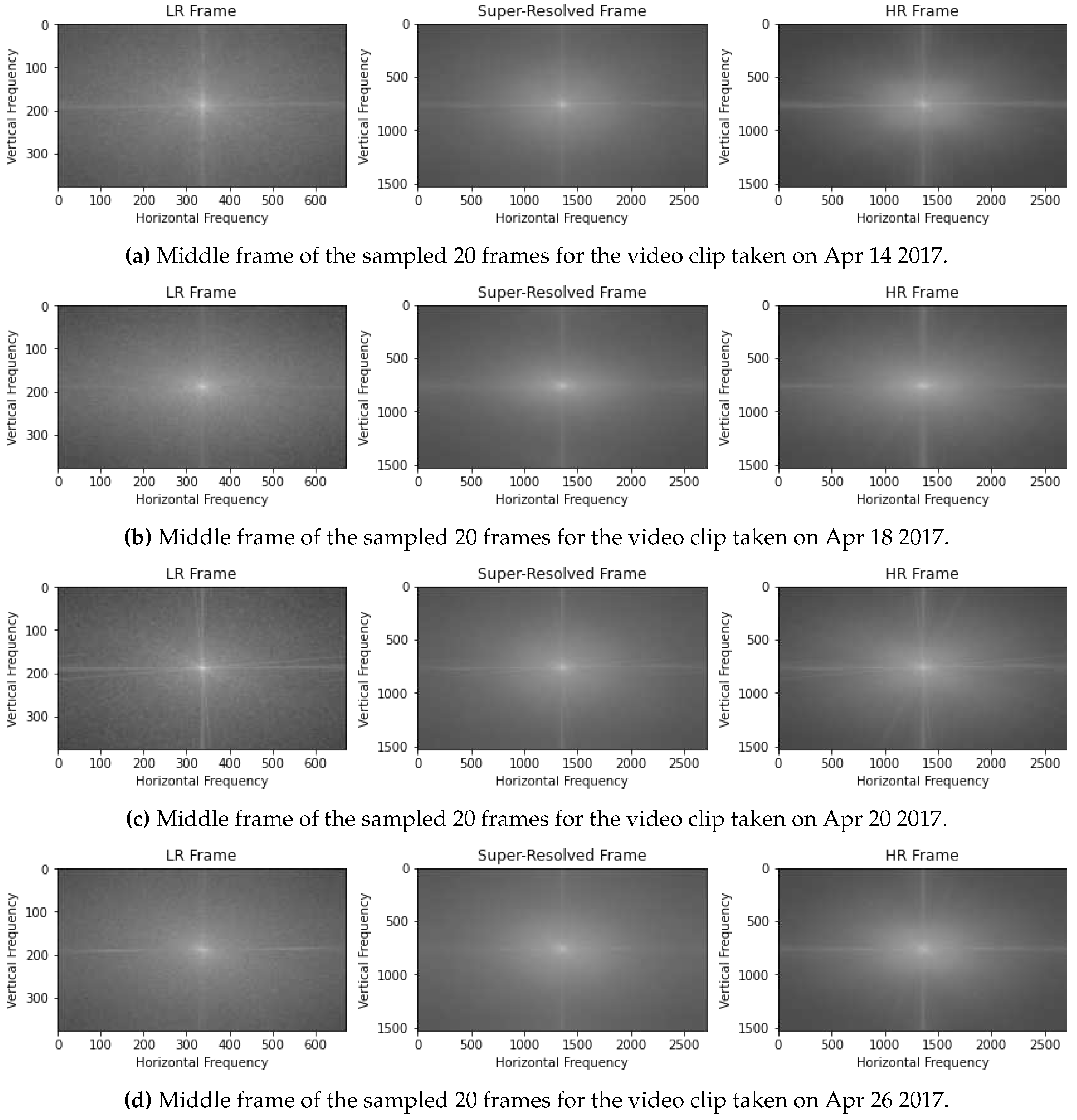
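As a rough illustration of the kind of measure this section's title refers to, the sketch below computes the Shannon entropy of a single 2D feature map from a histogram of its values. The min-max binning scheme, the function name `shannon_entropy`, and the toy inputs are assumptions made for illustration; this is a generic sketch and not necessarily the procedure used in this work.

```python
import math
from collections import Counter


def shannon_entropy(feature_map, bins=256):
    """Shannon entropy (in bits) of a 2D feature map given as a list of rows.

    Values are min-max scaled and quantised into `bins` levels (an assumed
    binning choice), then entropy is computed from the level frequencies.
    """
    flat = [value for row in feature_map for value in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        return 0.0  # a constant map carries no information
    scale = (bins - 1) / (hi - lo)
    counts = Counter(int((value - lo) * scale) for value in flat)
    total = len(flat)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Toy feature maps (hypothetical activations, not network outputs).
flat_map = [[0.5, 0.5], [0.5, 0.5]]
two_level_map = [[0.0, 0.0], [1.0, 1.0]]
four_level_map = [[0.0, 1.0], [2.0, 3.0]]

for name, fmap in [("flat", flat_map), ("two-level", two_level_map), ("four-level", four_level_map)]:
    print(f"{name:10s} entropy = {shannon_entropy(fmap):.2f} bits")
```

Under this definition a map with more distinct, evenly used activation levels scores higher, which is the intuition behind using entropy as a proxy for how much information a feature map retains.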

2.2.2. Fourier Transform Analysis
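The magnitude spectrum named in this section and in Section 3.2 can be illustrated with a direct 2D discrete Fourier transform. The sketch below is a minimal, standard-library version under stated assumptions: the function name `dft2_magnitude` and the 4×4 toy images are hypothetical, and the naive summation is only practical for tiny inputs (a real pipeline would typically use an FFT library instead).

```python
import cmath
import math


def dft2_magnitude(image):
    """Magnitude of the 2D discrete Fourier transform of a small greyscale image.

    `image` is a list of equal-length rows. The result has the same shape,
    with the zero-frequency (DC) term at index [0][0].
    """
    rows, cols = len(image), len(image[0])
    spectrum = []
    for u in range(rows):
        out_row = []
        for v in range(cols):
            total = 0j
            for y in range(rows):
                for x in range(cols):
                    angle = -2 * math.pi * (u * y / rows + v * x / cols)
                    total += image[y][x] * cmath.exp(1j * angle)
            out_row.append(abs(total))
        spectrum.append(out_row)
    return spectrum


# A constant image has all of its energy at zero frequency, while a
# checkerboard concentrates it at the highest representable frequency.
constant = [[1.0] * 4 for _ in range(4)]
checkerboard = [[(-1.0) ** (x + y) for x in range(4)] for y in range(4)]

constant_spec = dft2_magnitude(constant)
checker_spec = dft2_magnitude(checkerboard)
print("constant DC term:", round(constant_spec[0][0], 6))
print("checkerboard peak at (2, 2):", round(checker_spec[2][2], 6))
```

The contrast between the two toy images shows why the spectrum is informative about image detail: fine spatial structure appears as energy away from the zero-frequency term.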

2.3. Super-Resolution as a Generative AI Solution

3. Results and Discussion

3.1. Entropy of Deep Feature Maps

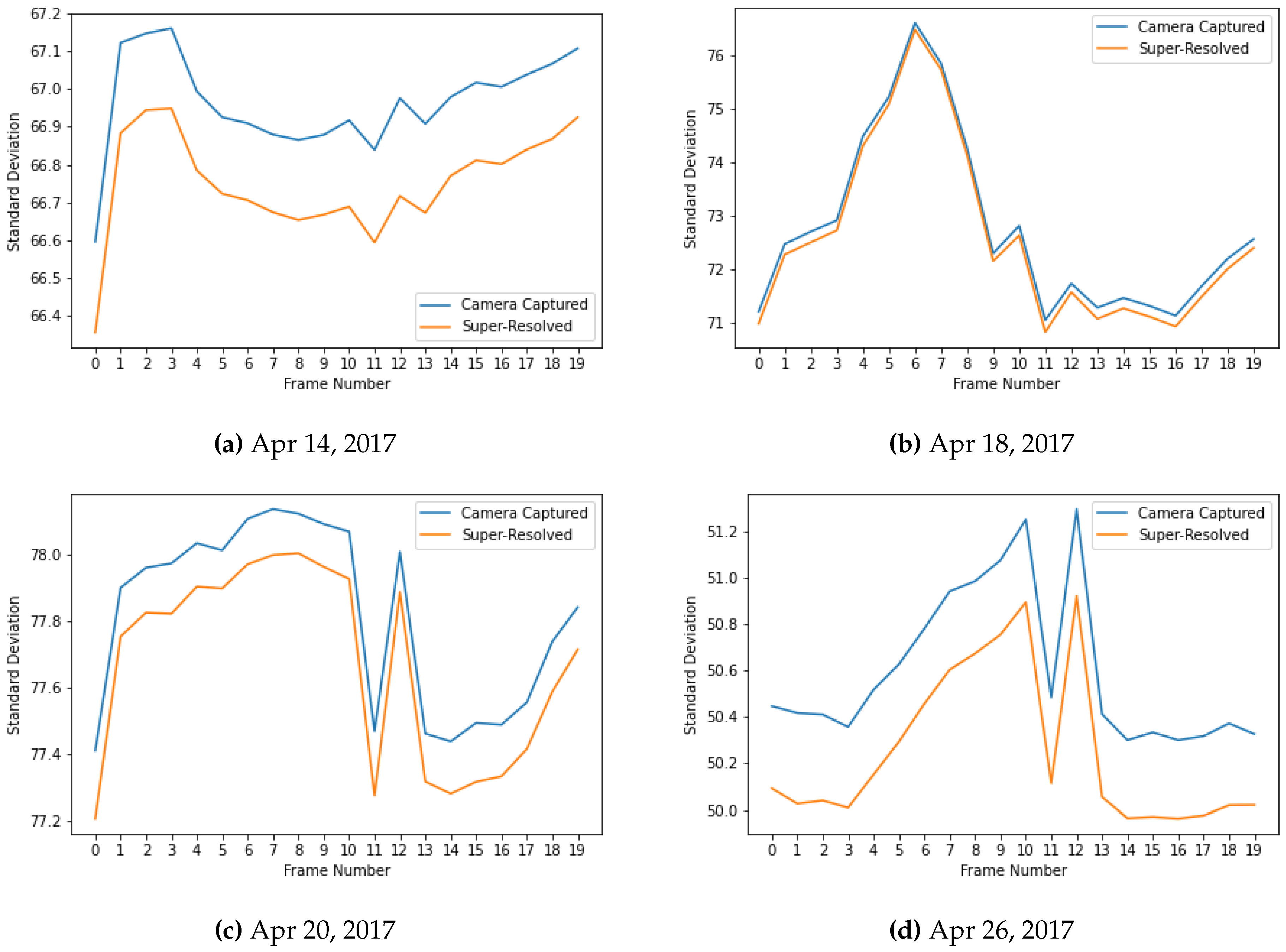

3.2. Magnitude Spectra

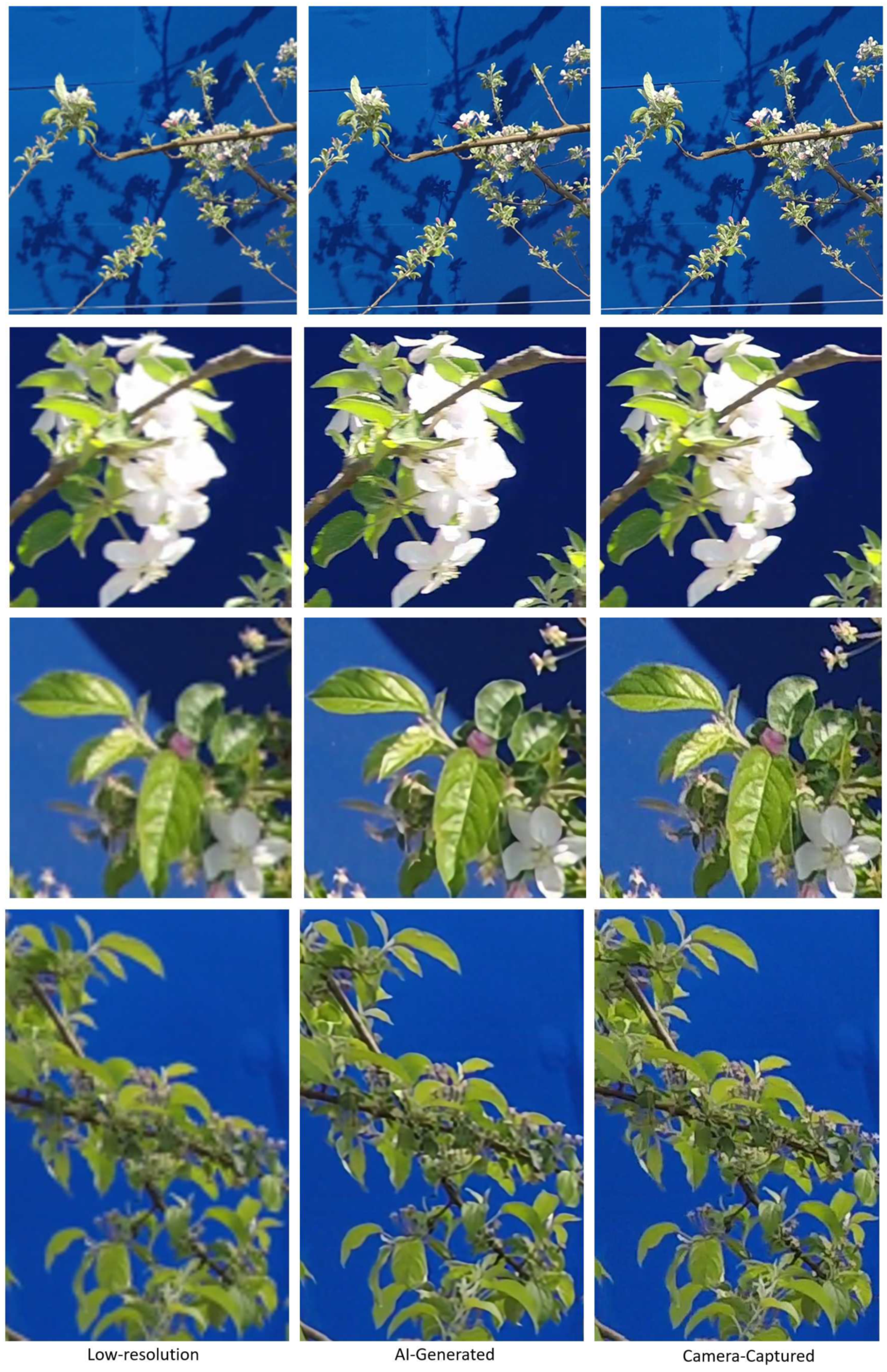

3.3. AI Generated vs Camera Captured – Quality and Cost

| Model | Video Resolution (px) | ≈Price (USD) | ≈Cost (USD) |
|---|---|---|---|
| Canon EOS 2000D | 1920×1080 | 440 | 1159 |
| Canon EOS 90D | 3840×2160 | 1599 | |
| Nikon D5200 | 1920×1080 | 695 | 2102 |
| Nikon D780 | 3840×2160 | 2797 | |
| Fujifilm XF1 | 1920×1080 | 499 | 1750 |
| Fujifilm XT5 | 6240×3510 | 2249 | |
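The ≈Cost column in the table above appears to be the price gap between the lower- and higher-resolution model of each brand. That reading is an assumption inferred from the figures rather than something stated in the table, and the short check below reproduces it from the listed prices. The names `CAMERA_PAIRS` and `price_gaps` are introduced here for illustration only.

```python
# Each entry: (lower-resolution model, price USD,
#              higher-resolution model, price USD, listed ≈Cost USD)
CAMERA_PAIRS = [
    ("Canon EOS 2000D", 440, "Canon EOS 90D", 1599, 1159),
    ("Nikon D5200", 695, "Nikon D780", 2797, 2102),
    ("Fujifilm XF1", 499, "Fujifilm XT5", 2249, 1750),
]


def price_gaps(pairs):
    """Price difference between the higher- and lower-resolution model in each pair."""
    return [high_price - low_price for _, low_price, _, high_price, _ in pairs]


gaps = price_gaps(CAMERA_PAIRS)
for (low, _, high, _, listed), gap in zip(CAMERA_PAIRS, gaps):
    print(f"{low} -> {high}: gap {gap} USD, listed {listed} USD")
```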

4. Conclusions

Author Contributions

Conflicts of Interest

References

1. Yang, B.; Xu, Y. Applications of deep-learning approaches in horticultural research: A review. Horticulture Research 2021, 8.

2. Spalding, E.P.; Miller, N.D. Image analysis is driving a renaissance in growth measurement. Curr. Opin. Plant Biol. 2013, 16, 100–104.

3. Hussain, M.; He, L.; Schupp, J.; Lyons, D.; Heinemann, P. Green fruit segmentation and orientation estimation for robotic green fruit thinning of apples. Comput. Electron. Agric. 2023, 207, 107734.

4. Girshick, R.; Donahue, J.; Darrell, T.; Malik, J. Rich feature hierarchies for accurate object detection and semantic segmentation. Proceedings of the IEEE conference on computer vision and pattern recognition, 2014, pp. 580–587.

5. Yang, X.; Sun, M. A survey on deep learning in crop planting. IOP conference series: Materials science and engineering. IOP Publishing, 2019, Vol. 490, p. 062053.

6. Pound, M.P.; Atkinson, J.A.; Townsend, A.J.; Wilson, M.H.; Griffiths, M.; Jackson, A.S.; Bulat, A.; Tzimiropoulos, G.; Wells, D.M.; Murchie, E.H.; et al. Deep machine learning provides state-of-the-art performance in image-based plant phenotyping. Gigascience 2017, 6, gix083.

7. Tong, Y.S.; Tou-Hong, L.; Yen, K.S. Deep Learning for Image-Based Plant Growth Monitoring: A Review. Int. J. Eng. Technol. Innov. 2022, 12, 225.

8. Colaço, A.F.; Molin, J.P.; Rosell-Polo, J.R.; Escolà, A. Application of light detection and ranging and ultrasonic sensors to high-throughput phenotyping and precision horticulture: Current status and challenges. Horticulture Research 2018, 5, 35.

9. Pujari, J.D.; Yakkundimath, R.; Byadgi, A.S. Image processing based detection of fungal diseases in plants. Procedia Comput. Sci. 2015, 46, 1802–1808.

10. Tsaftaris, S.A.; Minervini, M.; Scharr, H. Machine learning for plant phenotyping needs image processing. Trends Plant Sci. 2016, 21, 989–991.

11. Fuentes, A.; Yoon, S.; Park, D.S. Deep learning-based techniques for plant diseases recognition in real-field scenarios. Advanced Concepts for Intelligent Vision Systems: 20th International Conference, ACIVS 2020, Auckland, New Zealand, February 10–14, 2020, Proceedings 20. Springer, 2020, pp. 3–14.

12. Indrakumari, R.; Poongodi, T.; Khaitan, S.; Sagar, S.; Balamurugan, B. A review on plant diseases recognition through deep learning. Handb. Deep Learn. Biomed. Eng.

13. Liu, J.; Wang, X. Plant diseases and pests detection based on deep learning: A review. Plant Methods 2021, 17, 1–18.

14. Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv Prepr. 2014, arXiv:1409.1556.

15. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. Proceedings of the IEEE conference on computer vision and pattern recognition, 2016, pp. 770–778.

16. Krizhevsky, A.; Sutskever, I.; Hinton, G.E. Imagenet classification with deep convolutional neural networks. Commun. ACM 2017, 60, 84–90.

17. Saleem, M.H.; Potgieter, J.; Arif, K.M. Plant disease detection and classification by deep learning. Plants 2019, 8, 468.

18. Chlingaryan, A.; Sukkarieh, S.; Whelan, B. Machine learning approaches for crop yield prediction and nitrogen status estimation in precision agriculture: A review. Comput. Electron. Agric. 2018, 151, 61–69.

19. He, L.; Fang, W.; Zhao, G.; Wu, Z.; Fu, L.; Li, R.; Majeed, Y.; Dhupia, J. Fruit yield prediction and estimation in orchards: A state-of-the-art comprehensive review for both direct and indirect methods. Comput. Electron. Agric. 2022, 195, 106812.

20. Girshick, R. Fast r-cnn. Proceedings of the IEEE international conference on computer vision, 2015, pp. 1440–1448.

21. Rebortera, M.; Fajardo, A. Forecasting Banana Harvest Yields using Deep Learning. 2019 IEEE 9th International Conference on System Engineering and Technology (ICSET), 2019, pp. 380–384.

22. Koirala, A.; Walsh, K.B.; Wang, Z.; McCarthy, C. Deep learning–Method overview and review of use for fruit detection and yield estimation. Comput. Electron. Agric. 2019, 162, 219–234.

23. Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You only look once: Unified, real-time object detection. Proceedings of the IEEE conference on computer vision and pattern recognition, 2016, pp. 779–788.

24. Redmon, J.; Farhadi, A. YOLO9000: Better, faster, stronger. Proceedings of the IEEE conference on computer vision and pattern recognition, 2017, pp. 7263–7271.

25. Redmon, J.; Farhadi, A. Yolov3: An incremental improvement. arXiv Prepr. 2018, arXiv:1804.02767.

26. Bochkovskiy, A.; Wang, C.Y.; Liao, H.Y.M. Yolov4: Optimal speed and accuracy of object detection. arXiv Prepr. 2020, arXiv:2004.10934.

27. Nturambirwe, J.F.I.; Opara, U.L. Machine learning applications to non-destructive defect detection in horticultural products. Biosystems Engineering 2020, 189, 60–83.

28. Arendse, E.; Fawole, O.A.; Magwaza, L.S.; Opara, U.L. Non-destructive prediction of internal and external quality attributes of fruit with thick rind: A review. J. Food Eng. 2018, 217, 11–23.

29. Wieme, J.; Mollazade, K.; Malounas, I.; Zude-Sasse, M.; Zhao, M.; Gowen, A.; Argyropoulos, D.; Fountas, S.; Van Beek, J. Application of hyperspectral imaging systems and artificial intelligence for quality assessment of fruit, vegetables and mushrooms: A review. Biosystems Engineering 2022, 222, 156–176.

30. Lu, Y.; Saeys, W.; Kim, M.; Peng, Y.; Lu, R. Hyperspectral imaging technology for quality and safety evaluation of horticultural products: A review and celebration of the past 20-year progress. Postharvest Biol. Technol. 2020, 170, 111318.

31. Zujevs, A.; Osadcuks, V.; Ahrendt, P. Trends in Robotic Sensor Technologies for Fruit Harvesting: 2010-2015. Procedia Comput. Sci. 2015, 77, 227–233, ICTE in regional Development 2015 Valmiera.

32. Barbashov, N.; Shanygin, S.; Barkova, A. Agricultural robots for fruit harvesting in horticulture application. IOP Conference Series: Earth and Environmental Science. IOP Publishing, 2022, Vol. 981, p. 032009.

33. Zhang, X.; Karkee, M.; Zhang, Q.; Whiting, M.D. Computer vision-based tree trunk and branch identification and shaking points detection in Dense-Foliage canopy for automated harvesting of apples. J. Field Robot. 2021, 38, 476–493.

34. Benavides, M.; Cantón-Garbín, M.; Sánchez-Molina, J.; Rodríguez, F. Automatic tomato and peduncle location system based on computer vision for use in robotized harvesting. Applied Sciences 2020, 10, 5887.

35. Sa, I.; Ge, Z.; Dayoub, F.; Upcroft, B.; Perez, T.; McCool, C. Deepfruits: A fruit detection system using deep neural networks. Sensors 2016, 16, 1222.

36. Azman, A.A.; Ismail, F.S. Convolutional neural network for optimal pineapple harvesting. Elektr. -J. Electr. Eng. 2017, 16, 1–4.

37. Nasiri, A.; Taheri-Garavand, A.; Zhang, Y.D. Image-based deep learning automated sorting of date fruit. Postharvest Biol. Technol. 2019, 153, 133–141.

38. Hao, X.; Jia, J.; Khattak, A.M.; Zhang, L.; Guo, X.; Gao, W.; Wang, M. Growing period classification of Gynura bicolor DC using GL-CNN. Comput. Electron. Agric. 2020, 174, 105497.

39. Perugachi-Diaz, Y.; Tomczak, J.M.; Bhulai, S. Deep learning for white cabbage seedling prediction. Comput. Electron. Agric. 2021, 184, 106059.

40. Gao, Z.; Shao, Y.; Xuan, G.; Wang, Y.; Liu, Y.; Han, X. Real-time hyperspectral imaging for the in-field estimation of strawberry ripeness with deep learning. Artif. Intell. Agric. 2020, 4, 31–38.

41. Sabzi, S.; Abbaspour-Gilandeh, Y.; García-Mateos, G.; Ruiz-Canales, A.; Molina-Martínez, J.M.; Arribas, J.I. An automatic non-destructive method for the classification of the ripeness stage of red delicious apples in orchards using aerial video. Agronomy 2019, 9, 84.

42. Lu, J.Y.; Chang, C.L.; Kuo, Y.F. Monitoring growth rate of lettuce using deep convolutional neural networks. 2019 ASABE Annual International Meeting. American Society of Agricultural and Biological Engineers, 2019, p. 1.

43. Reyes-Yanes, A.; Martinez, P.; Ahmad, R. Real-time growth rate and fresh weight estimation for little gem romaine lettuce in aquaponic grow beds. Comput. Electron. Agric. 2020, 179, 105827.

44. Gao, F.; Fang, W.; Sun, X.; Wu, Z.; Zhao, G.; Li, G.; Li, R.; Fu, L.; Zhang, Q. A novel apple fruit detection and counting methodology based on deep learning and trunk tracking in modern orchard. Comput. Electron. Agric. 2022, 197, 107000.

45. Ni, X.; Li, C.; Jiang, H.; Takeda, F. Deep learning image segmentation and extraction of blueberry fruit traits associated with harvestability and yield. Horticulture Research 2020, 7.

46. Afonso, M.; Fonteijn, H.; Fiorentin, F.S.; Lensink, D.; Mooij, M.; Faber, N.; Polder, G.; Wehrens, R. Tomato fruit detection and counting in greenhouses using deep learning. Front. Plant Sci. 2020, 11, 571299.

47. Tian, Y.; Yang, G.; Wang, Z.; Wang, H.; Li, E.; Liang, Z. Apple detection during different growth stages in orchards using the improved YOLO-V3 model. Comput. Electron. Agric. 2019, 157, 417–426.

48. Koirala, A.; Walsh, K.B.; Wang, Z.; Anderson, N. Deep learning for mango (Mangifera indica) panicle stage classification. Agronomy 2020, 10, 143.

49. Bargoti, S.; Underwood, J. Deep fruit detection in orchards. 2017 IEEE international conference on robotics and automation (ICRA). IEEE, 2017, pp. 3626–3633.

50. Altaheri, H.; Alsulaiman, M.; Muhammad, G. Date fruit classification for robotic harvesting in a natural environment using deep learning. IEEE Access 2019, 7, 117115–117133.

51. Gené-Mola, J.; Vilaplana, V.; Rosell-Polo, J.R.; Morros, J.R.; Ruiz-Hidalgo, J.; Gregorio, E. Multi-modal deep learning for Fuji apple detection using RGB-D cameras and their radiometric capabilities. Comput. Electron. Agric. 2019, 162, 689–698.

52. Kestur, R.; Meduri, A.; Narasipura, O. MangoNet: A deep semantic segmentation architecture for a method to detect and count mangoes in an open orchard. Eng. Appl. Artif. Intell. 2019, 77, 59–69.

53. Zeng, X.; Miao, Y.; Ubaid, S.; Gao, X.; Zhuang, S. Detection and classification of bruises of pears based on thermal images. Postharvest Biol. Technol. 2020, 161, 111090.

54. Koirala, A.; Walsh, K.; Wang, Z.; McCarthy, C. Deep learning for real-time fruit detection and orchard fruit load estimation: Benchmarking of ‘MangoYOLO’. Precision Agriculture 2019, 20, 1107–1135.

55. Bhusal, S.; Karkee, M.; Zhang, Q. Apple Dataset Benchmark from Orchard Environment in Modern Fruiting Wall 2019.

56. Gené-Mola, J.; Sanz-Cortiella, R.; Rosell-Polo, J.R.; Morros, J.R.; Ruiz-Hidalgo, J.; Vilaplana, V.; Gregorio, E. Fruit detection and 3D location using instance segmentation neural networks and structure-from-motion photogrammetry. Comput. Electron. Agric. 2020, 169, 105165.

57. Gené-Mola, J.; Gregorio, E.; Cheein, F.A.; Guevara, J.; Llorens, J.; Sanz-Cortiella, R.; Escolà, A.; Rosell-Polo, J.R. LFuji-air dataset: Annotated 3D LiDAR point clouds of Fuji apple trees for fruit detection scanned under different forced air flow conditions. Data Brief 2020, 29, 105248.

58. Leung, L.W.; King, B.; Vohora, V. Comparison of image data fusion techniques using entropy and INI. 22nd Asian Conference on Remote Sensing, 2001, Vol. 5, pp. 152–157.

59. Tabb, A.; Medeiros, H. Video Data of Flowers, Fruitlets, and Fruit in Apple Trees during the 2017 Growing Season at USDA-ARS-AFRS. Ag Data Commons, 2018.

60. Wang, X.; Chan, K.C.; Yu, K.; Dong, C.; Change Loy, C. Edvr: Video restoration with enhanced deformable convolutional networks. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, 2019, pp. 0–0.

61. Haris, M.; Shakhnarovich, G.; Ukita, N. Recurrent back-projection network for video super-resolution. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019, pp. 3897–3906.

62. Baniya, A.A.; Lee, T.K.; Eklund, P.W.; Aryal, S.; Robles-Kelly, A. Online Video Super-Resolution using Information Replenishing Unidirectional Recurrent Model. Neurocomputing 2023, 126355.

63. Isobe, T.; Jia, X.; Gu, S.; Li, S.; Wang, S.; Tian, Q. Video Super-Resolution with Recurrent Structure-Detail Network. Computer Vision – ECCV 2020; Vedaldi, A., Bischof, H., Brox, T., Frahm, J.M., Eds.; Springer International Publishing: Cham, 2020; pp. 645–660.

64. Isobe, T.; Li, S.; Jia, X.; Yuan, S.; Slabaugh, G.; Xu, C.; Li, Y.L.; Wang, S.; Tian, Q. Video Super-Resolution With Temporal Group Attention. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020.

65. Chan, K.C.; Wang, X.; Yu, K.; Dong, C.; Loy, C.C. BasicVSR: The Search for Essential Components in Video Super-Resolution and Beyond. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2021, pp. 4947–4956.

66. Baniya, A.A.; Lee, T.K.; Eklund, P.W.; Aryal, S. Omnidirectional Video Super-Resolution using Deep Learning. IEEE Trans. Multimed. 2023.

67. Yamamoto, K.; Togami, T.; Yamaguchi, N. Super-resolution of plant disease images for the acceleration of image-based phenotyping and vigor diagnosis in agriculture. Sensors 2017, 17, 2557.

68. Isobe, T.; Zhu, F.; Jia, X.; Wang, S. Revisiting temporal modeling for video super-resolution. arXiv Prepr. 2020, arXiv:2008.05765.

69. Chan, K.C.; Zhou, S.; Xu, X.; Loy, C.C. BasicVSR++: Improving video super-resolution with enhanced propagation and alignment. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 5972–5981.

70. Yi, P.; Wang, Z.; Jiang, K.; Jiang, J.; Lu, T.; Tian, X.; Ma, J. Omniscient video super-resolution. Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 4429–4438.

71. Product Chart. Product Chart. Online, 2023. https://www.productchart.com/cameras/.

72. Shan, T.; Wang, J.; Chen, F.; Szenher, P.; Englot, B. Simulation-based lidar super-resolution for ground vehicles. Robot. Auton. Syst. 2020, 134, 103647.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).