Submitted: 22 June 2023

Posted: 23 June 2023

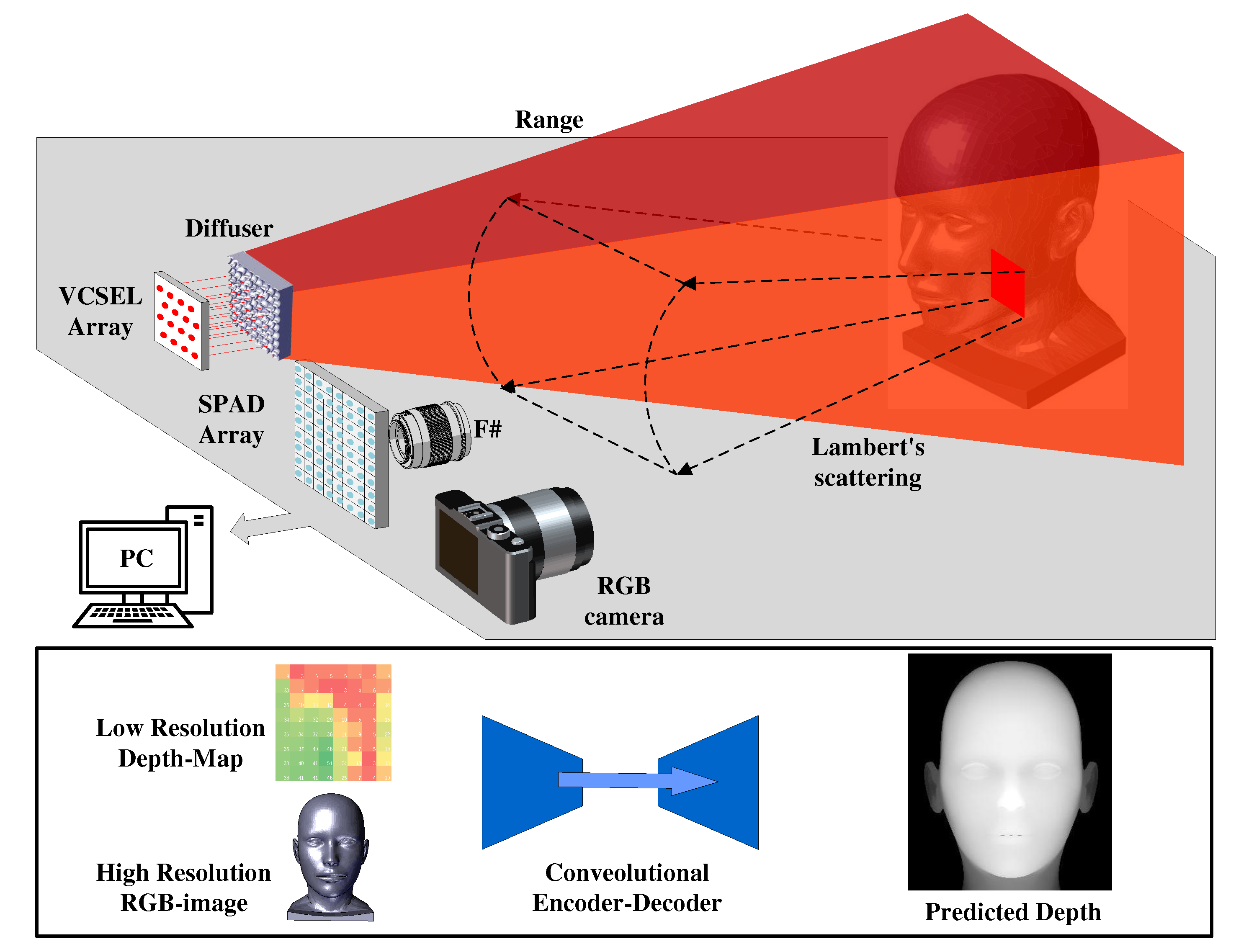


Abstract

Keywords:

1. Introduction

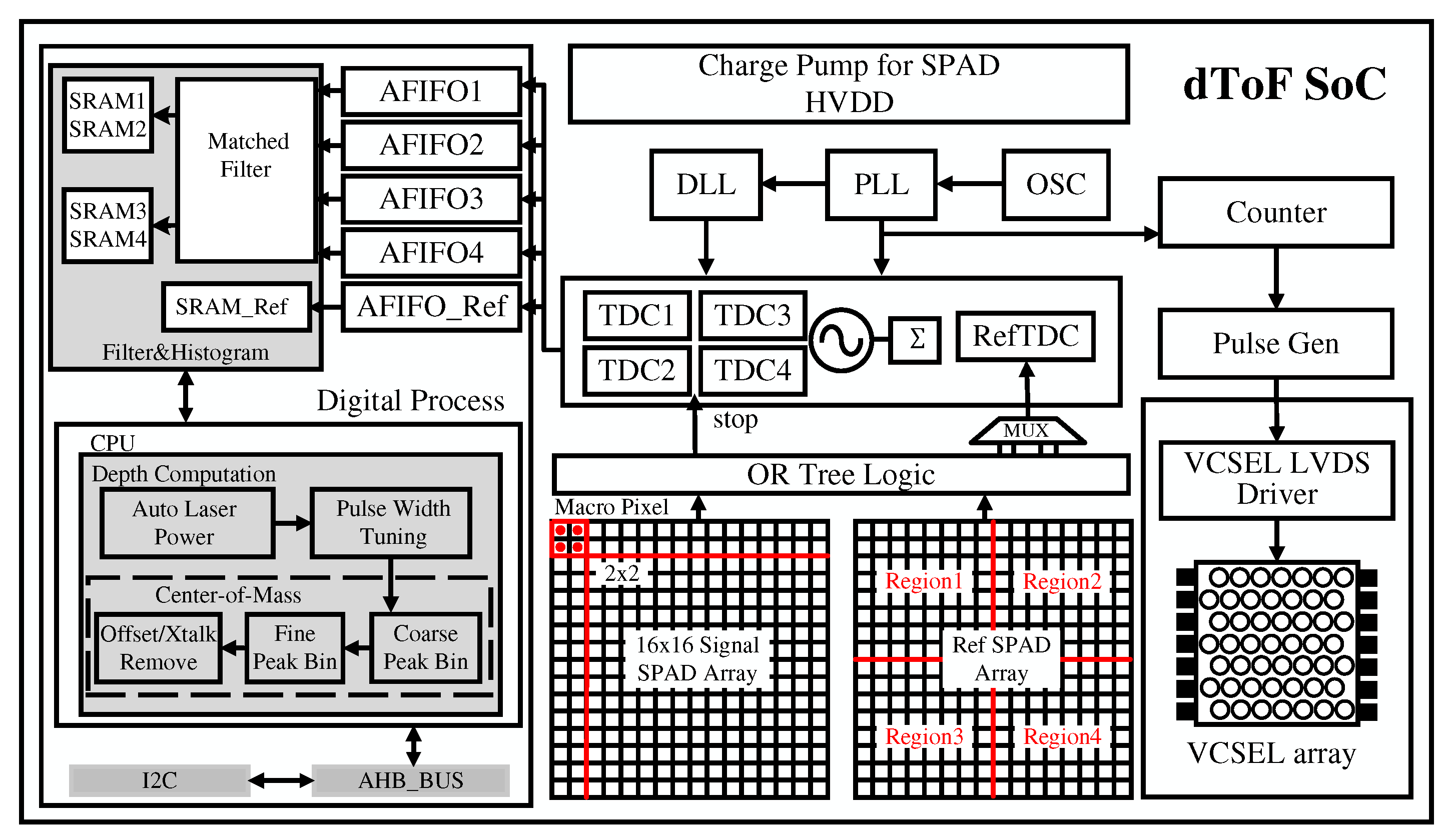

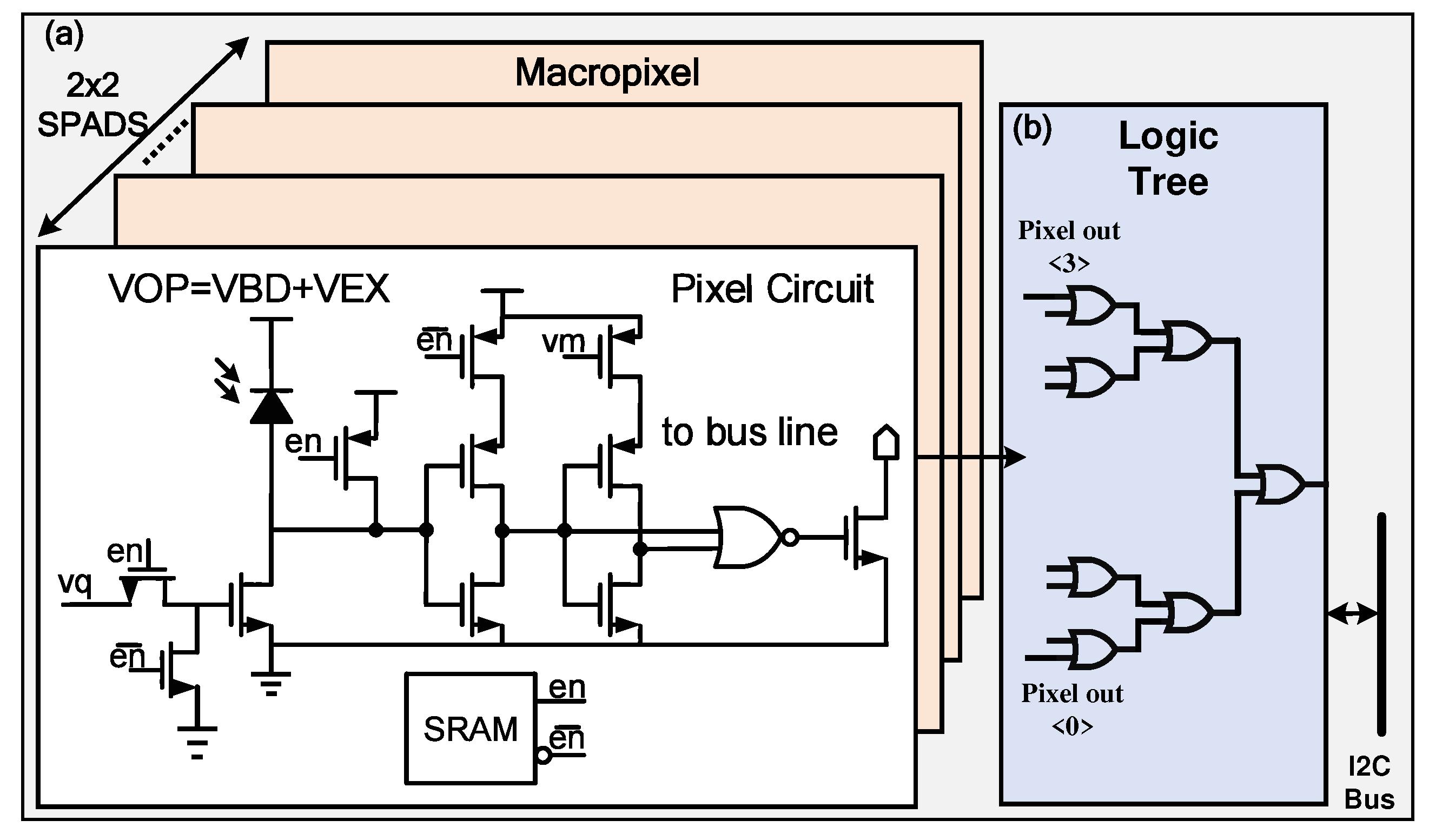

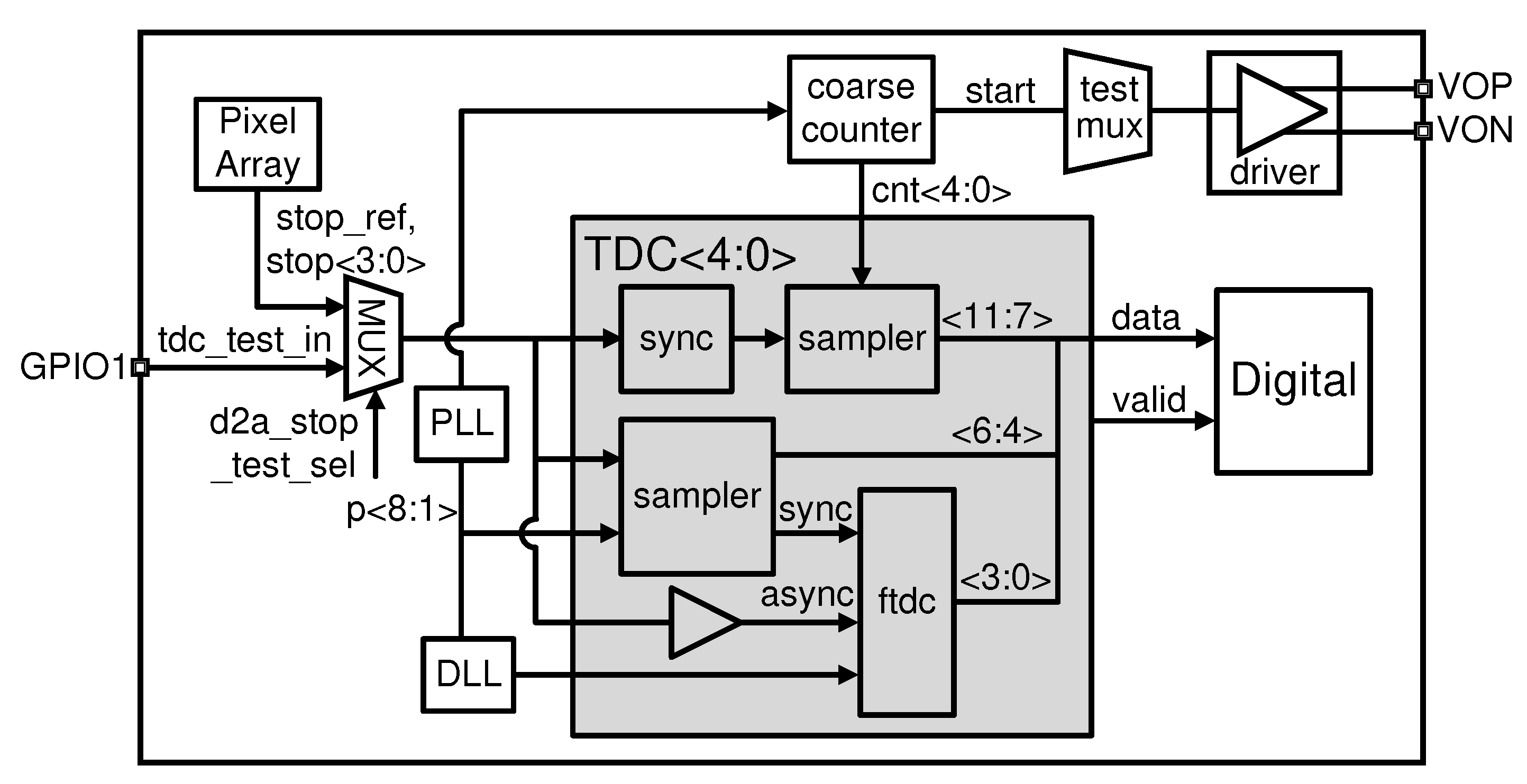

2. SoC Implementation

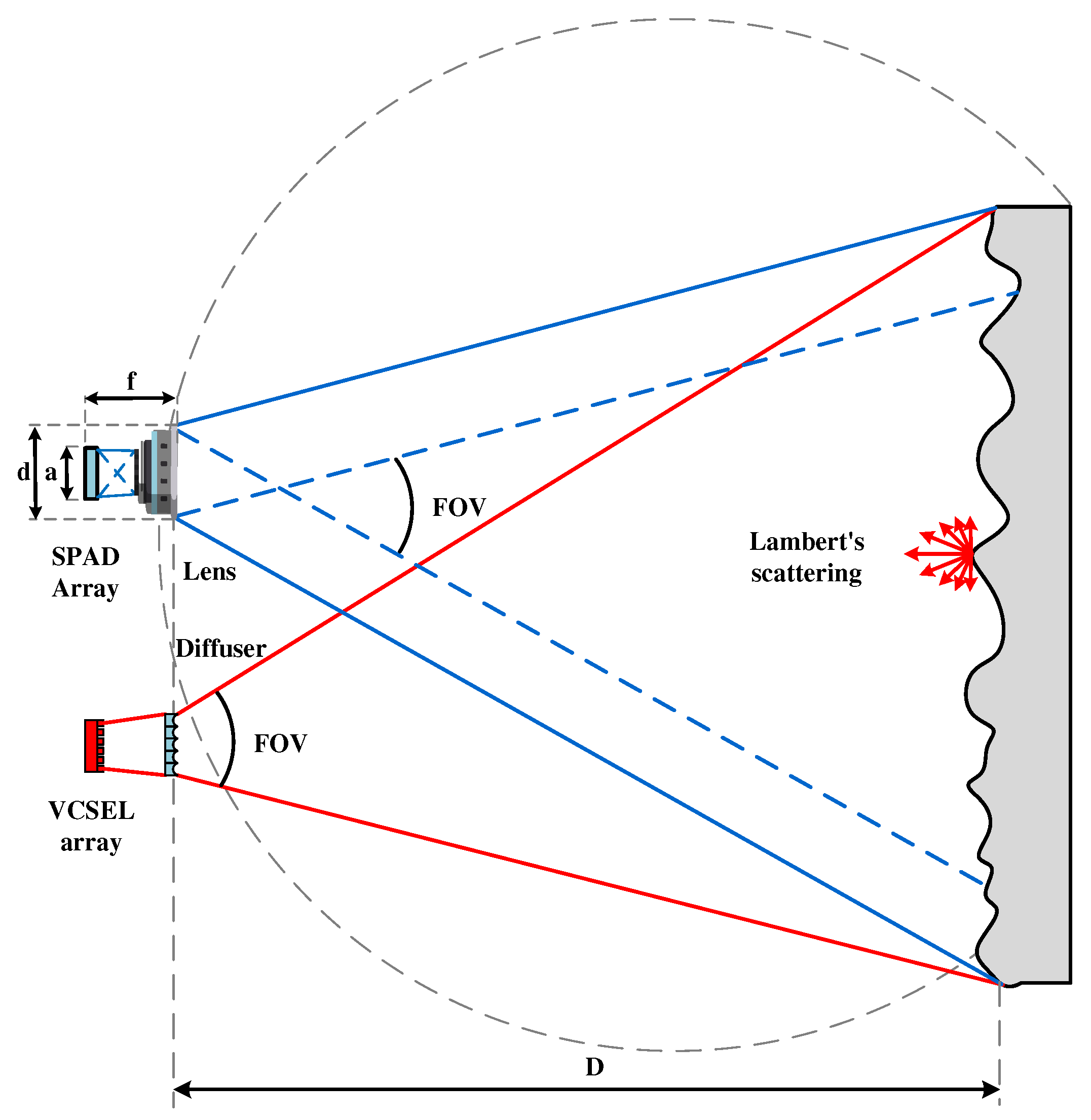

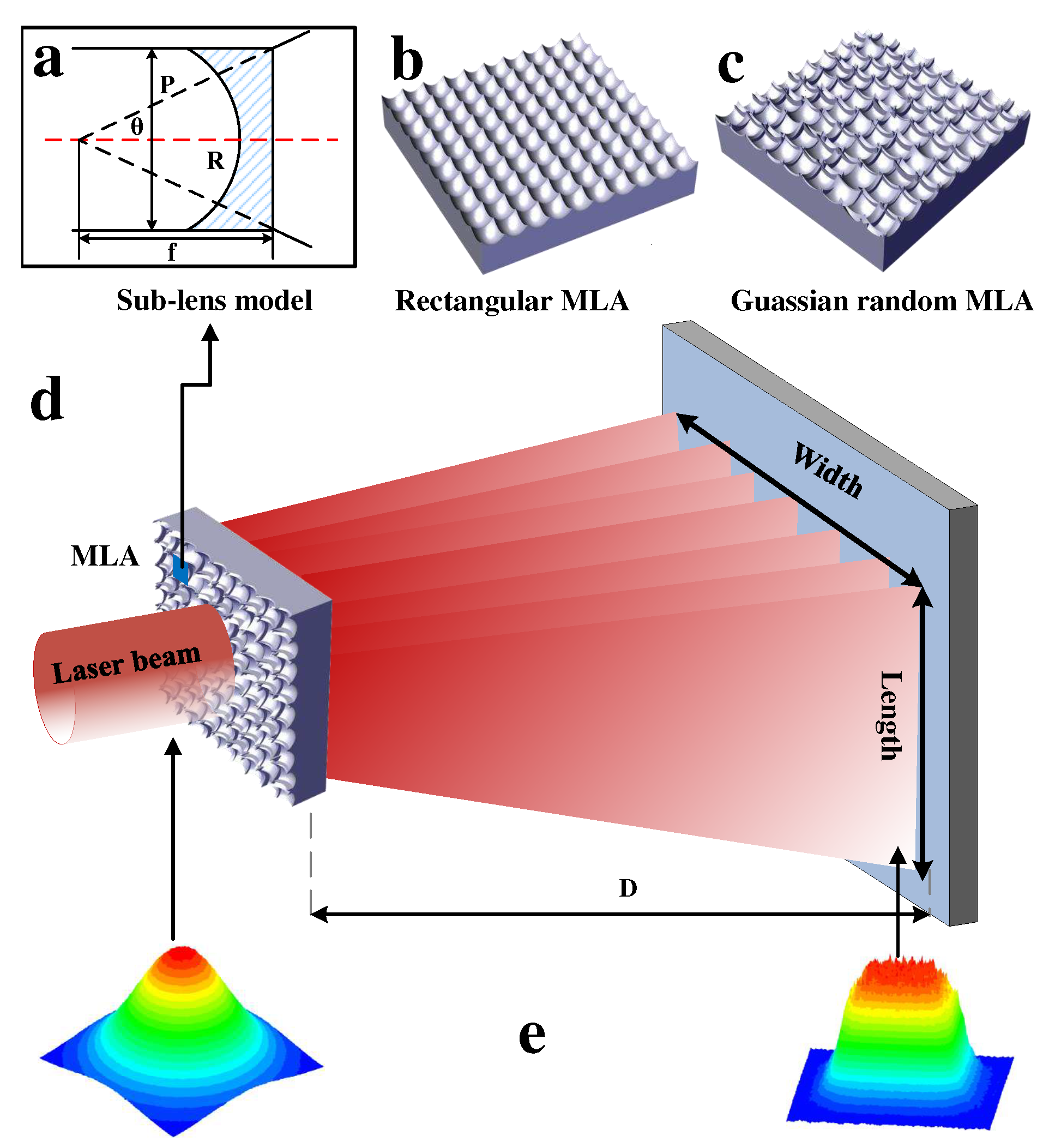

3. Optical System and MLA

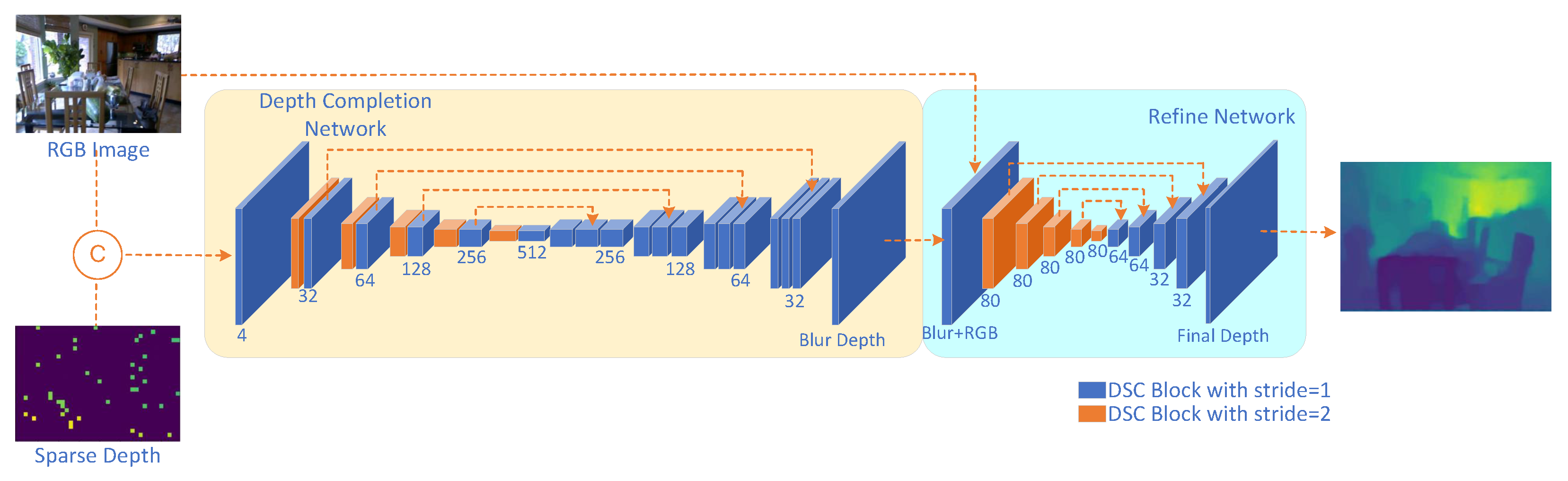

4. Proposed RGB-Guided Depth Completion Neural Network

- (1) We incorporate conv-bn-relu and conv-bn architectures, which are supported by the majority of CNN accelerators and embedded NPUs.

- (2)

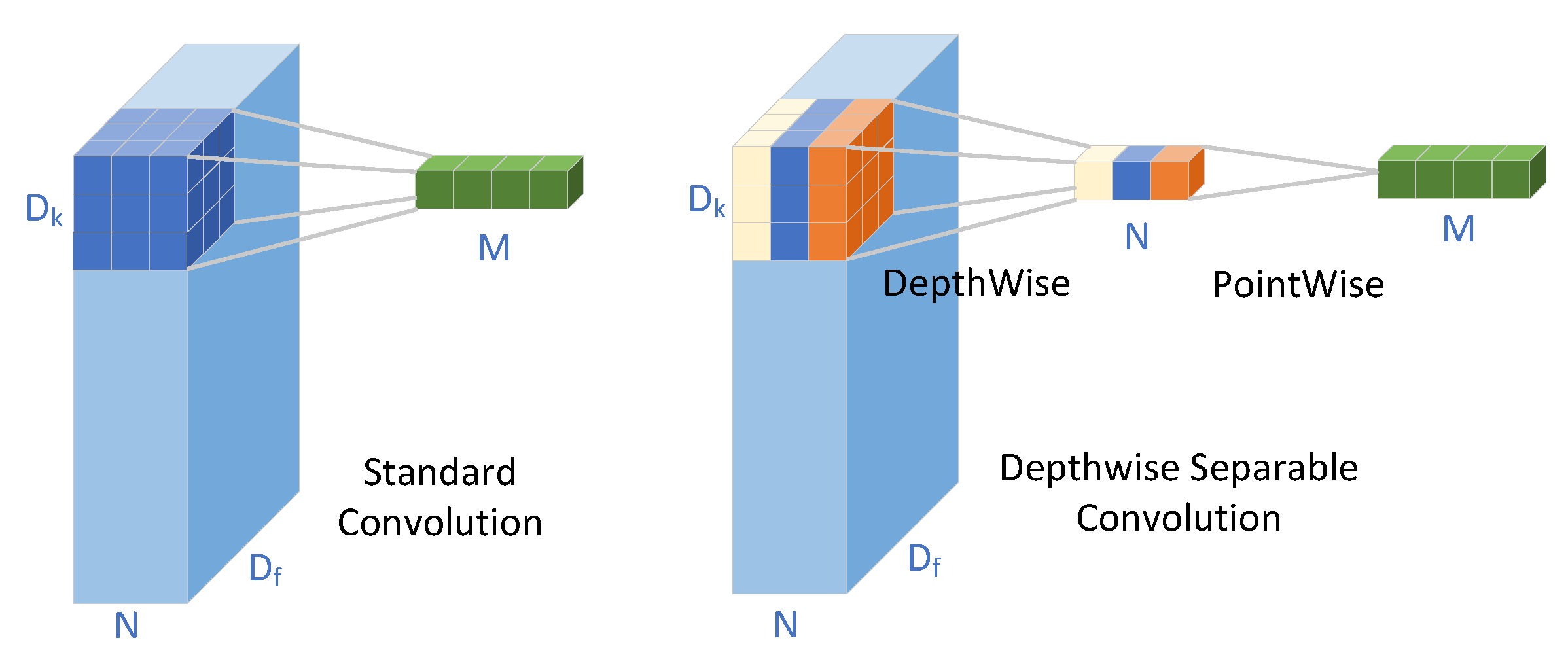

- To reduce the number of parameters, we adopt depthwise separable convolution, as presented in [22], as the foundational convolution architecture.
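As a hedged illustration of item (1): one common reason conv-bn and conv-bn-relu blocks suit accelerators is that, at inference time, batch normalization can be folded into the preceding convolution, leaving a plain convolution followed by ReLU. The source does not state that this folding is used in its deployment flow, so the sketch below is an assumption. All names (`fold_bn`, `conv_bn_relu`, `folded_conv_relu`) and all numeric values are hypothetical, and each kernel is flattened to a list of taps for brevity.

```python
import math

def fold_bn(weights, biases, gamma, beta, mean, var, eps=1e-5):
    """Fold per-channel BN parameters into conv weights and biases.

    weights: one flattened list of kernel taps per output channel.
    biases, gamma, beta, mean, var: one value per output channel.
    """
    folded_w, folded_b = [], []
    for w_c, b_c, g, bt, m, v in zip(weights, biases, gamma, beta, mean, var):
        scale = g / math.sqrt(v + eps)           # per-channel BN scale
        folded_w.append([w * scale for w in w_c])
        folded_b.append((b_c - m) * scale + bt)  # shifted and rescaled bias
    return folded_w, folded_b

def conv_bn_relu(patch, w_c, b_c, g, bt, m, v, eps=1e-5):
    """Reference path: convolution tap sum, then BN, then ReLU."""
    y = sum(w * x for w, x in zip(w_c, patch)) + b_c
    y = g * (y - m) / math.sqrt(v + eps) + bt
    return max(y, 0.0)

def folded_conv_relu(patch, w_c, b_c):
    """Deployed path: a single convolution tap sum, then ReLU."""
    return max(sum(w * x for w, x in zip(w_c, patch)) + b_c, 0.0)

# Hypothetical input patch and parameters for two output channels.
patch = [0.5, -1.0, 2.0]
weights = [[0.2, -0.4, 0.1], [1.0, 0.5, -0.3]]
biases = [0.1, -0.2]
gamma, beta = [1.5, 0.8], [0.05, -0.1]
mean, var = [0.3, -0.6], [0.9, 1.2]

fw, fb = fold_bn(weights, biases, gamma, beta, mean, var)
reference = [
    conv_bn_relu(patch, w, b, g, bt, m, v)
    for w, b, g, bt, m, v in zip(weights, biases, gamma, beta, mean, var)
]
folded = [folded_conv_relu(patch, w, b) for w, b in zip(fw, fb)]
```

Under these assumptions the `reference` and `folded` outputs agree to floating-point precision, so the BN layer adds no extra operation at inference.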

4.1. Depthwise Separable Convolution
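A minimal sketch of why a depthwise separable convolution reduces the parameter count: a standard k × k convolution is replaced by a per-channel k × k depthwise convolution followed by a 1 × 1 pointwise convolution. The function names and layer sizes below are hypothetical and are not taken from the paper's network; bias terms are ignored.

```python
def standard_conv_params(c_in, c_out, k):
    """Weights in a standard k x k convolution (bias ignored)."""
    return k * k * c_in * c_out

def depthwise_separable_params(c_in, c_out, k):
    """Weights in a depthwise k x k conv plus a 1 x 1 pointwise conv."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 conv that mixes channels
    return depthwise + pointwise

# Hypothetical layer: 64 input channels, 128 output channels, 3 x 3 kernel.
c_in, c_out, k = 64, 128, 3
std = standard_conv_params(c_in, c_out, k)
sep = depthwise_separable_params(c_in, c_out, k)
ratio = sep / std  # equals 1 / c_out + 1 / k**2

print(f"standard: {std}, separable: {sep}, ratio: {ratio:.3f}")
```

For this hypothetical layer the separable form needs roughly 12% of the weights of the standard convolution; the ratio approaches 1/k² as the number of output channels grows.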

4.2. Network

4.3. Loss Function

5. Results and Discussion

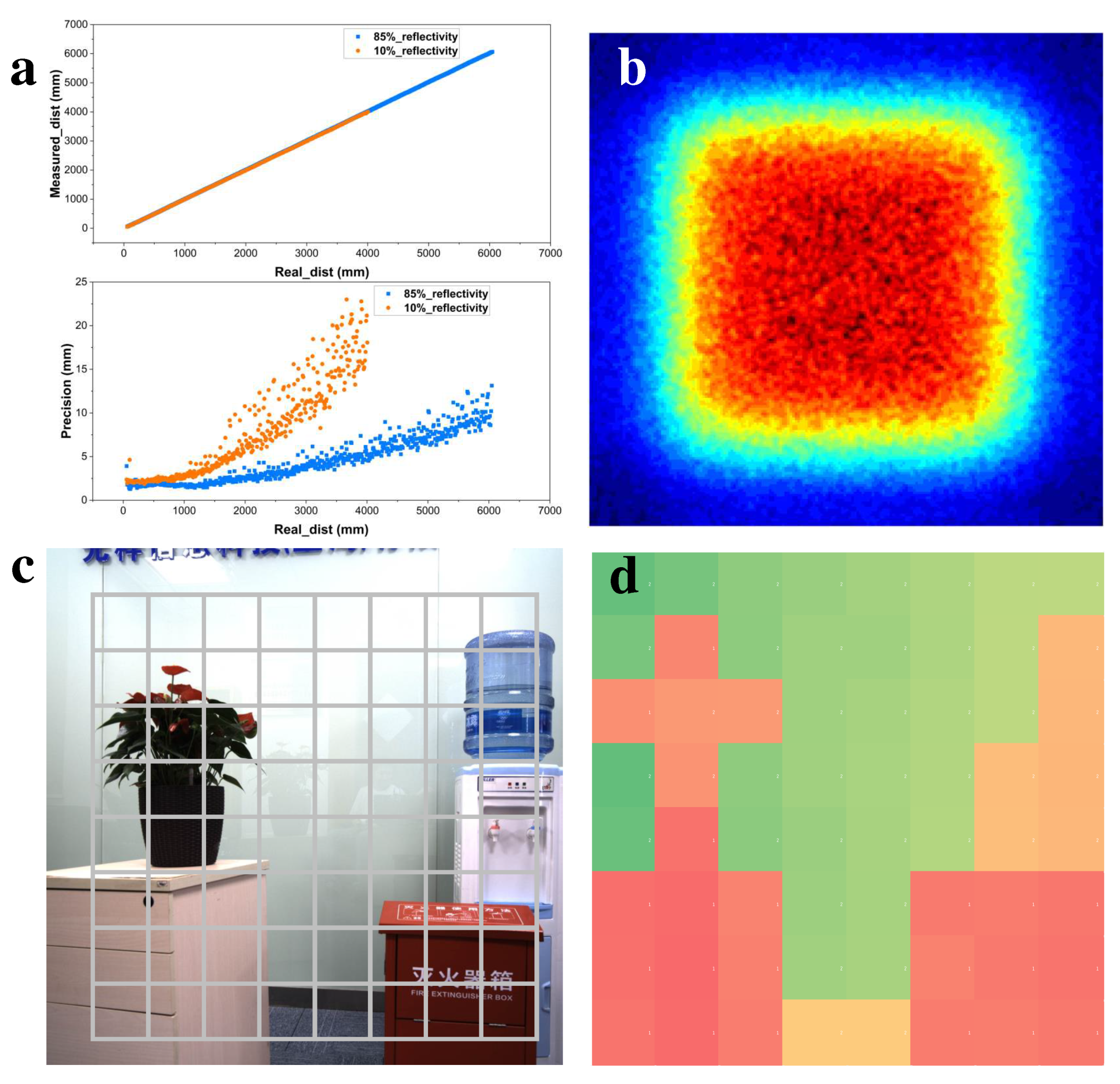

5.1. Hardware System

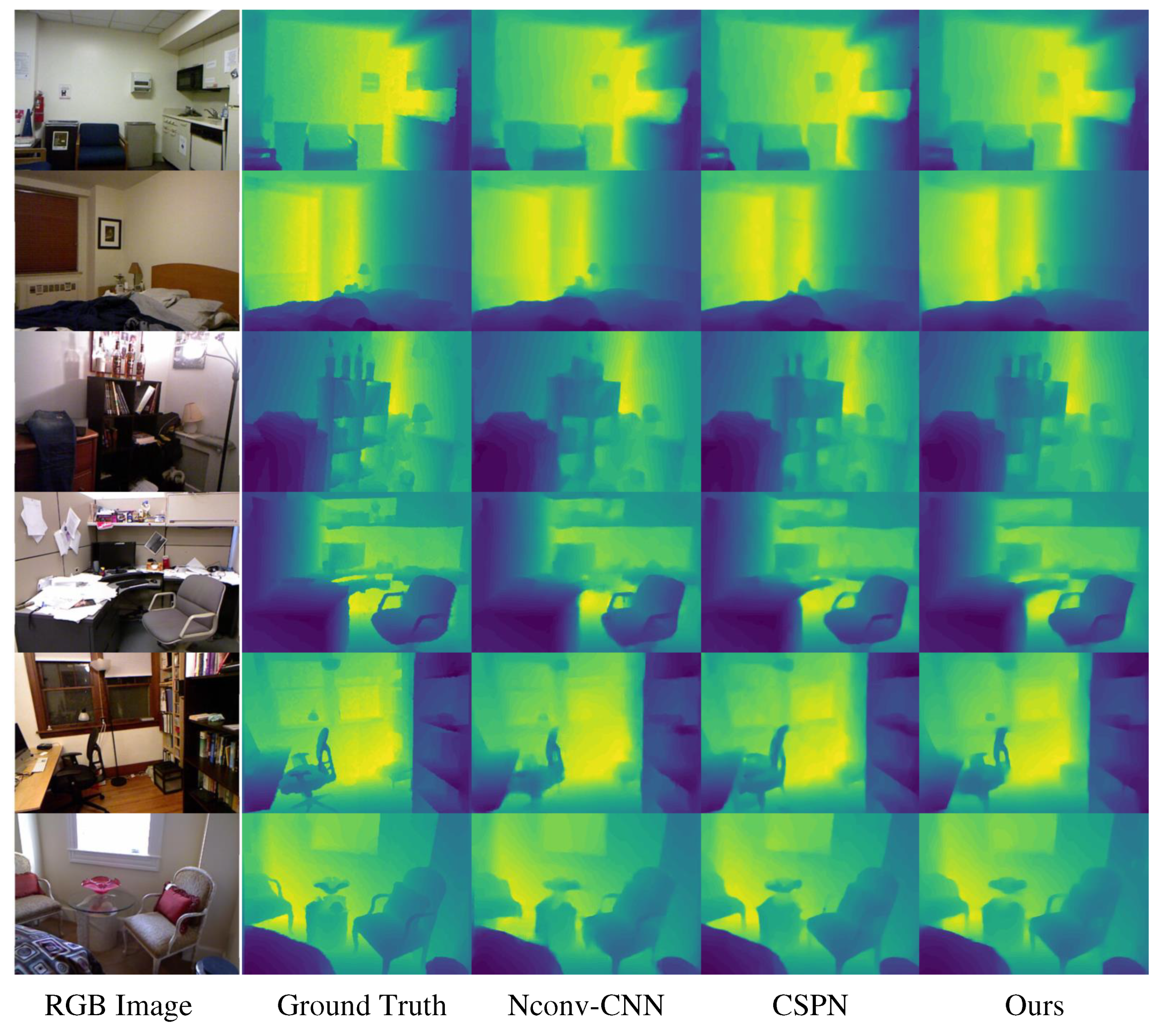

5.2. Comparison of Network Performance

5.2.1. Dataset

5.2.2. Metrics

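- (1) RMSE: $\sqrt{\frac{1}{N}\sum_{i=1}^{N}\left(\hat{d}_i - d_i\right)^2}$

- (2) Abs Rel: $\frac{1}{N}\sum_{i=1}^{N}\frac{\left|\hat{d}_i - d_i\right|}{d_i}$

- (3) $\delta_t$: % of $d_i$, s.t. $\max\left(\frac{\hat{d}_i}{d_i}, \frac{d_i}{\hat{d}_i}\right) < t$

Here $\hat{d}_i$ is the predicted depth at pixel $i$, $d_i$ is the ground-truth depth, $N$ is the number of valid pixels, and $t$ is the accuracy threshold.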
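The three metrics can be sketched in plain Python using their standard definitions in the depth completion literature. The function name `depth_metrics`, the rule that pixels with non-positive ground truth are skipped, the threshold value 1.25, and the sample depths are all assumptions made for illustration; the thresholds used in the paper's table are not recoverable from this text.

```python
import math

def depth_metrics(pred, gt, thresholds=(1.25,)):
    """Return (RMSE, Abs Rel, {threshold: accuracy in %}) over valid pixels.

    A pixel is treated as valid when its ground-truth depth is positive
    (an assumption of this sketch).
    """
    pairs = [(p, g) for p, g in zip(pred, gt) if g > 0]
    n = len(pairs)
    rmse = math.sqrt(sum((p - g) ** 2 for p, g in pairs) / n)
    abs_rel = sum(abs(p - g) / g for p, g in pairs) / n
    delta = {}
    for t in thresholds:
        # A non-positive prediction can never satisfy the ratio test.
        hits = sum(1 for p, g in pairs if p > 0 and max(p / g, g / p) < t)
        delta[t] = 100.0 * hits / n
    return rmse, abs_rel, delta

# Hypothetical depths; the third pixel has no ground truth and is skipped.
pred = [1.0, 2.2, 3.0, 4.0]
gt = [1.0, 2.0, 0.0, 5.0]
rmse, abs_rel, delta = depth_metrics(pred, gt)
print(rmse, abs_rel, delta)
```

Lower RMSE and Abs Rel indicate smaller errors, while a higher threshold accuracy indicates that more pixels fall within the chosen ratio bound.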

5.2.3. Comparison

5.3. Network Implementation on the Hardware System

6. Conclusions

Author Contributions

Funding

Data Availability Statement

References

1. David, R.; Allard, B.; Branca, X.; Joubert, C. Study and design of an integrated CMOS laser diode driver for an iToF-based 3D image sensor. CIPS 2020; 11th International Conference on Integrated Power Electronics Systems. VDE, 2020, pp. 1–6.

2. Bamji, C.; Godbaz, J.; Oh, M.; Mehta, S.; Payne, A.; Ortiz, S.; Nagaraja, S.; Perry, T.; Thompson, B. A Review of Indirect Time-of-Flight Technologies. IEEE Transactions on Electron Devices 2022.

3. Zhang, C.; Zhang, N.; Ma, Z.; Wang, L.; Qin, Y.; Jia, J.; Zang, K. A 240 × 160 3D-stacked SPAD dToF image sensor with rolling shutter and in-pixel histogram for mobile devices. IEEE Open Journal of the Solid-State Circuits Society 2021, 2, 3–11.

4. Li, N.; Ho, C.P.; Xue, J.; Lim, L.W.; Chen, G.; Fu, Y.H.; Lee, L.Y.T. A Progress Review on Solid-State LiDAR and Nanophotonics-Based LiDAR Sensors. Laser & Photonics Reviews 2022, 16, 2100511.

5. Hutchings, S.W.; Johnston, N.; Gyongy, I.; Al Abbas, T.; Dutton, N.A.; Tyler, M.; Chan, S.; Leach, J.; Henderson, R.K. A reconfigurable 3-D-stacked SPAD imager with in-pixel histogramming for flash LIDAR or high-speed time-of-flight imaging. IEEE Journal of Solid-State Circuits 2019, 54, 2947–2956.

6. Ximenes, A.R.; Padmanabhan, P.; Lee, M.J.; Yamashita, Y.; Yaung, D.N.; Charbon, E. A 256 × 256 45/65nm 3D-stacked SPAD-based direct TOF image sensor for LiDAR applications with optical polar modulation for up to 18.6 dB interference suppression. 2018 IEEE International Solid-State Circuits Conference (ISSCC). IEEE, 2018, pp. 96–98.

7. Bantounos, K.; Smeeton, T.M.; Underwood, I. 24-3: Distinguished Student Paper: Towards a Solid-State LIDAR Using Holographic Illumination and a SPAD-Based Time-Of-Flight Image Sensor. SID Symposium Digest of Technical Papers. Wiley Online Library, 2022, Vol. 53, pp. 279–282.

8. Kumagai, O.; Ohmachi, J.; Matsumura, M.; Yagi, S.; Tayu, K.; Amagawa, K.; Matsukawa, T.; Ozawa, O.; Hirono, D.; Shinozuka, Y.; others. 7.3 A 189 × 600 back-illuminated stacked SPAD direct time-of-flight depth sensor for automotive LiDAR systems. 2021 IEEE International Solid-State Circuits Conference (ISSCC). IEEE, 2021, Vol. 64, pp. 110–112.

9. Ximenes, A.R.; Padmanabhan, P.; Lee, M.J.; Yamashita, Y.; Yaung, D.N.; Charbon, E. A modular, direct time-of-flight depth sensor in 45/65-nm 3-D-stacked CMOS technology. IEEE Journal of Solid-State Circuits 2019, 54, 3203–3214.

10. Ulku, A.C.; Bruschini, C.; Antolović, I.M.; Kuo, Y.; Ankri, R.; Weiss, S.; Michalet, X.; Charbon, E. A 512 × 512 SPAD image sensor with integrated gating for widefield FLIM. IEEE Journal of Selected Topics in Quantum Electronics 2018, 25, 1–12.

11. Hu, J.; Bao, C.; Ozay, M.; Fan, C.; Gao, Q.; Liu, H.; Lam, T.L. Deep Depth Completion from Extremely Sparse Data: A Survey. IEEE Transactions on Pattern Analysis and Machine Intelligence 2022.

12. Eldesokey, A.; Felsberg, M.; Khan, F.S. Confidence propagation through CNNs for guided sparse depth regression. IEEE Transactions on Pattern Analysis and Machine Intelligence 2019, 42, 2423–2436.

13. Cheng, X.; Wang, P.; Yang, R. Depth estimation via affinity learned with convolutional spatial propagation network. Proceedings of the European Conference on Computer Vision (ECCV), 2018, pp. 103–119.

14. Liu, L.; Liao, Y.; Wang, Y.; Geiger, A.; Liu, Y. Learning steering kernels for guided depth completion. IEEE Transactions on Image Processing 2021, 30, 2850–2861.

15. Zhu, J.; Xu, Z.; Fu, D.; Hu, C. Laser spot center detection and comparison test. Photonic Sensors 2019, 9, 49–52.

16. Yuan, W.; Xu, C.; Xue, L.; Pang, H.; Cao, A.; Fu, Y.; Deng, Q. Integrated double-sided random microlens array used for laser beam homogenization. Micromachines 2021, 12, 673.

17. Jin, Y.; Hassan, A.; Jiang, Y. Freeform microlens array homogenizer for excimer laser beam shaping. Optics Express 2016, 24, 24846–24858.

18. Cao, A.; Shi, L.; Yu, J.; Pang, H.; Zhang, M.; Deng, Q. Laser Beam Homogenization Method Based on Random Microlens Array. Appl. Laser 2015, 35, 124–128.

19. Liu, Z.; Liu, H.; Lu, Z.; Li, Q.; Li, J. A beam homogenizer for digital micromirror device lithography system based on random freeform microlenses. Optics Communications 2019, 443, 211–215.

20. Xue, L.; Pang, Y.; Liu, W.; Liu, L.; Pang, H.; Cao, A.; Shi, L.; Fu, Y.; Deng, Q. Fabrication of random microlens array for laser beam homogenization with high efficiency. Micromachines 2020, 11, 338.

21. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 770–778.

22. Chollet, F. Xception: Deep learning with depthwise separable convolutions. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017, pp. 1251–1258.

23. Ronneberger, O.; Fischer, P.; Brox, T. U-net: Convolutional networks for biomedical image segmentation. Medical Image Computing and Computer-Assisted Intervention–MICCAI 2015: 18th International Conference, Munich, Germany, October 5–9, 2015, Proceedings, Part III 18. Springer, 2015, pp. 234–241.

24. Hu, J.; Ozay, M.; Zhang, Y.; Okatani, T. Revisiting single image depth estimation: Toward higher resolution maps with accurate object boundaries. 2019 IEEE Winter Conference on Applications of Computer Vision (WACV). IEEE, 2019, pp. 1043–1051.

25. Silberman, N.; Hoiem, D.; Kohli, P.; Fergus, R. Indoor segmentation and support inference from RGBD images. ECCV (5) 2012, 7576, 746–760.

26. Ma, F.; Karaman, S. Sparse-to-dense: Depth prediction from sparse depth samples and a single image. 2018 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2018, pp. 4796–4803.

| Method | Error: RMSE | Error: Abs Rel | Accuracy (%) | | | | Parameters |
|---|---|---|---|---|---|---|---|
| Sparse-to-Dense [26] | 0.230 | 0.044 | 92.6 | 97.1 | 99.4 | 99.8 | 28.4M |
| Unet+CSPN [13] | 0.117 | 0.016 | 97.1 | 99.2 | 99.9 | 100.0 | 256M |
| KernelNet [14] | 0.111 | 0.015 | 97.4 | 99.3 | 99.9 | 100.0 | 16.47M |
| Nconv-CNN [12] | 0.125 | 0.017 | 96.7 | 99.1 | 99.8 | 100.0 | 484K |
| Ours | 0.116 | 0.018 | 96.8 | 99.3 | 99.9 | 100.0 | 1.07M |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).