Submitted:

07 June 2023

Posted:

07 June 2023


Abstract

Keywords:

1. Introduction

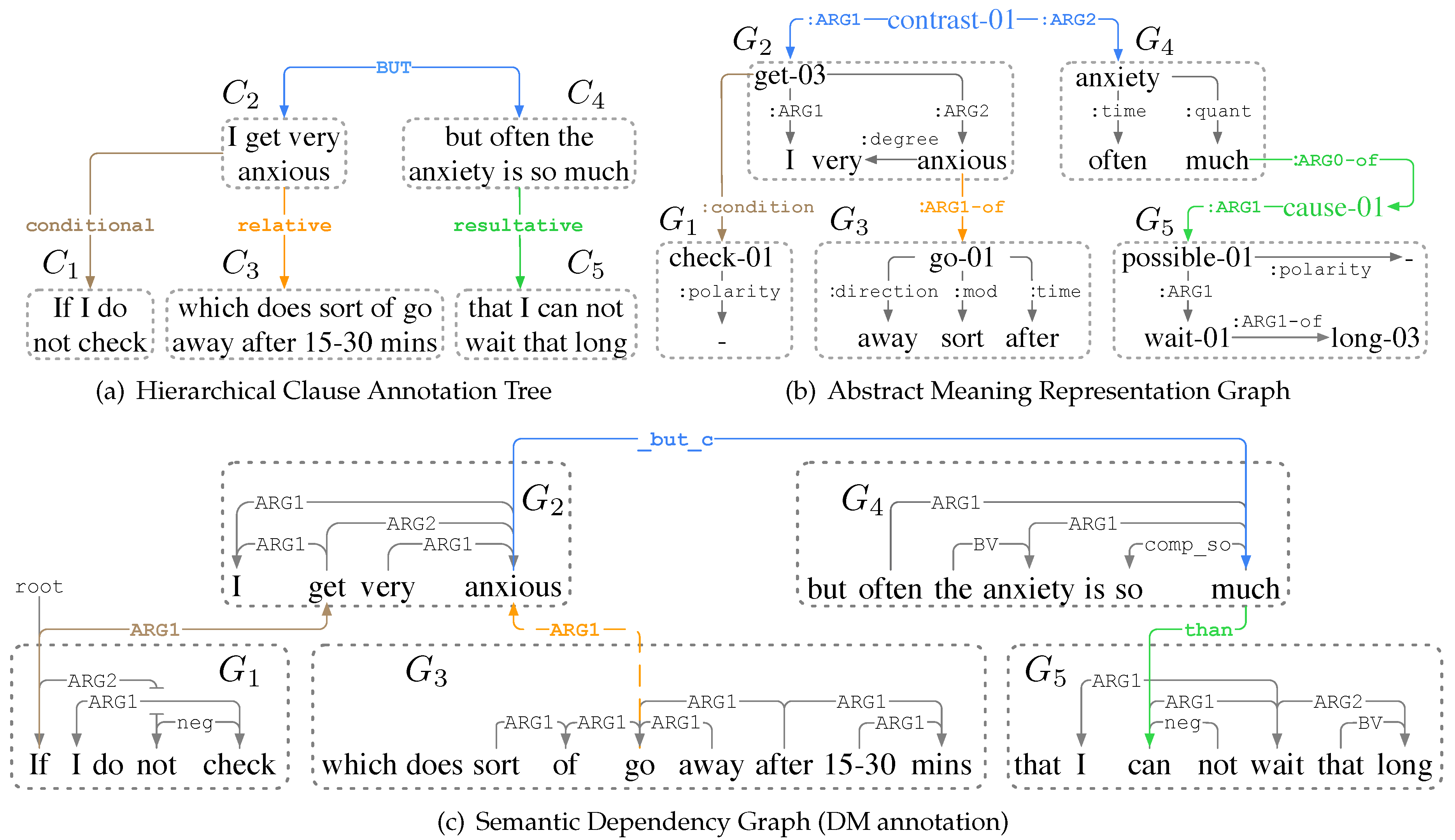

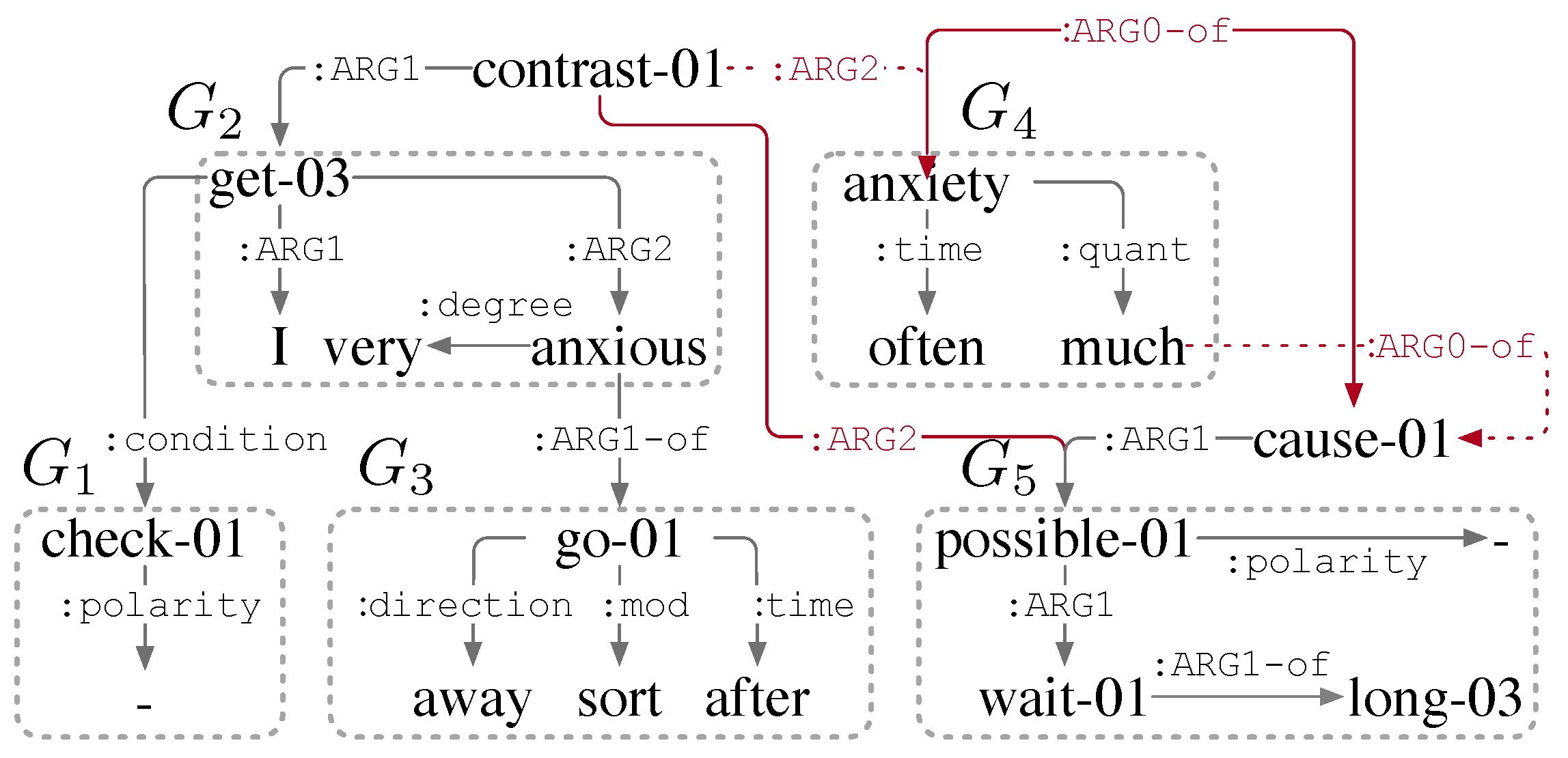

- and are coordinate and contrastive,

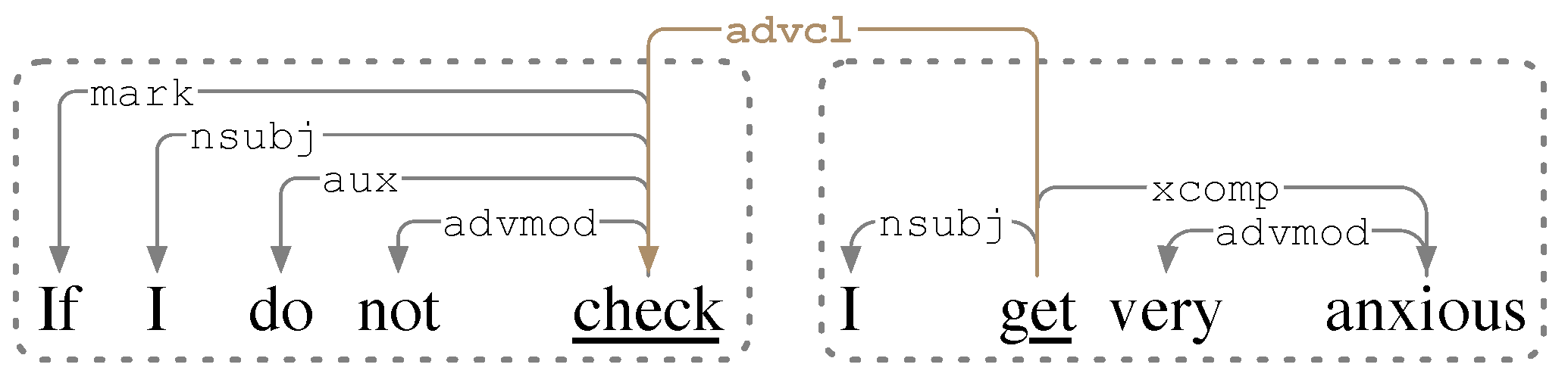

- and are conditional adverbial and relative clauses of , respectively,

- is a resultative adverbial clause of .

- We propose a novel framework, hierarchical clause annotation (HCA), to segment complex sentences into clauses and capture their interrelations based on the linguistic research of clause hierarchy, aiming to provide clause-level structural features to facilitate semantic parsing tasks.

- We elaborate on our experience developing a large HCA corpus, including determining an annotation framework, creating a silver-to-gold manual annotation tool, and ensuring annotation quality. The resulting HCA corpus contains English sentences from AMR 2.0, each including at least two clauses.

- We decompose HCA into two subtasks, i.e., clause segmentation and clause parsing, and adapt discourse segmentation and parsing models to them. Experimental results show that the adapted models perform well enough to provide reliable silver HCA data.

2. Related Work

2.1. RST Parsing

- The elementary units of HCA are clauses, while those of RST parsing are EDUs, which include both clauses and phrases. The blurry definition of an EDU may cause obstacles in RST parsing. For example, the phrases “as a result of margin calls” in (1) and “Despite their considerable incomes and assets” in (2) are segmented as EDUs but are not clauses because they lack a verb. Moreover, although the clause “that they have made it” functions as a predicative of the verb “feel”, it cannot be annotated as an EDU.

- The rhetorical relations in RST parsing characterize the coherence among EDUs, and some of them cannot be mapped to semantic relations. For example, the semantic relation between “feel” and “that they have made it” in (2) is not captured in RST parsing.

2.2. Other Similar Tasks

2.2.1. Clause Identification

2.2.2. Split-and-Rephrase

2.2.3. Text Simplification

2.2.4. Simple-Sentence-Decomposition

2.2.5. Summary

- For clause identification, the coordinator “but” and the subordinators “If”, “which”, and “that” are segmented out as separate units. In addition, non-tensed verb phrases that function as a subject, object, or postmodifier are also target clauses in that task. These cases fall outside the definition of annotated clauses in our HCA framework, because they would introduce redundant hierarchies when capturing inter-clause relations.

- For split-and-rephrase, the granularity of decomposition is coarser than the clause, because the task must preserve the original meaning of the input sentence. Outputs (1) and (3) are still complex sentences with two clauses each. Additionally, output (2) is rewritten from the relative clause that modifies “anxious” in the matrix clause, which changes the syntax.

- For text simplification, dropping the subordinator “If” and the coordinator “but” makes the discourse relations between the output sentences uncertain. Moreover, rewriting (3) and (4) with simpler syntax misaligns the substitute tokens with the substituted ones, so text simplification is unsuitable as a preprocessing step for semantic dependency parsing, which is a token-level task.

- Simple-sentence-decomposition also drops clausal connectives, as text simplification does, which makes some of the discourse relations captured by semantic parsing uncertain.

2.3. Clause Hierarchy

3. Hierarchical Clause Annotation Framework

3.1. Concept

3.1.1. Sentence and Clause

- Finite: clauses that contain tensed verbs;

- Non-finite: clauses that only contain non-tensed verbs like ing-participles, ed-participles, and to-infinitives.
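The finite/non-finite distinction above can be illustrated with a minimal sketch. It assumes that each clause arrives as a list of Penn Treebank POS tags; the tag sets and the function name `clause_finiteness` are illustrative choices, not part of the HCA tooling.

```python
# Assumption: Penn Treebank POS tags. Tensed verbs and modals mark a finite clause;
# base forms and participles on their own mark a non-finite clause.
FINITE_TAGS = {"VBD", "VBP", "VBZ", "MD"}
NON_FINITE_TAGS = {"VB", "VBG", "VBN"}


def clause_finiteness(pos_tags):
    """Classify a clause, given as a list of POS tags, as finite or non-finite."""
    if any(tag in FINITE_TAGS for tag in pos_tags):
        return "finite"
    if any(tag in NON_FINITE_TAGS for tag in pos_tags):
        return "non-finite"
    return None  # no verb at all, so not a clause under this definition


# "I get very anxious" contains a tensed verb (VBP).
print(clause_finiteness(["PRP", "VBP", "RB", "JJ"]))
# "to wait that long" contains only a to-infinitive (TO + VB).
print(clause_finiteness(["TO", "VB", "DT", "JJ"]))
```

This heuristic is deliberately coarse: an imperative, for instance, is tagged VB and would be labeled non-finite here.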

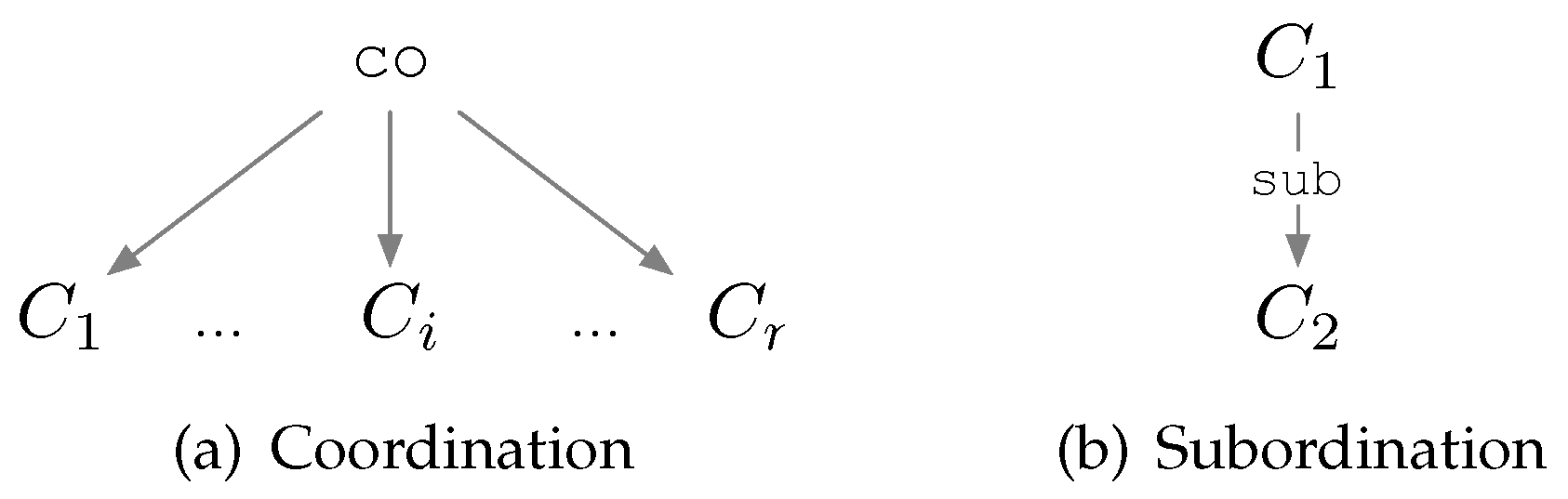

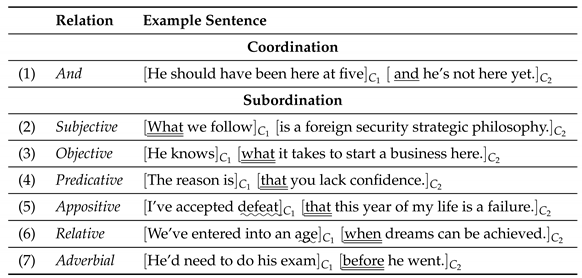

3.1.2. Clause Combination

- (1) Coordination and Coordinator

- (2) Subordination, Subordinator, and Antecedent

- Nominative: Function as clausal arguments or noun phrases in the matrix clause and can be subdivided into Subjective, Objective, Predicative and Appositive.

- Relative: Define or describe a preceding noun head in the matrix clause.

- Adverbial: Function as a Condition, Concession, Reason, and so on for the matrix clause.

3.2. HCA Representation

3.3. HCA corpus

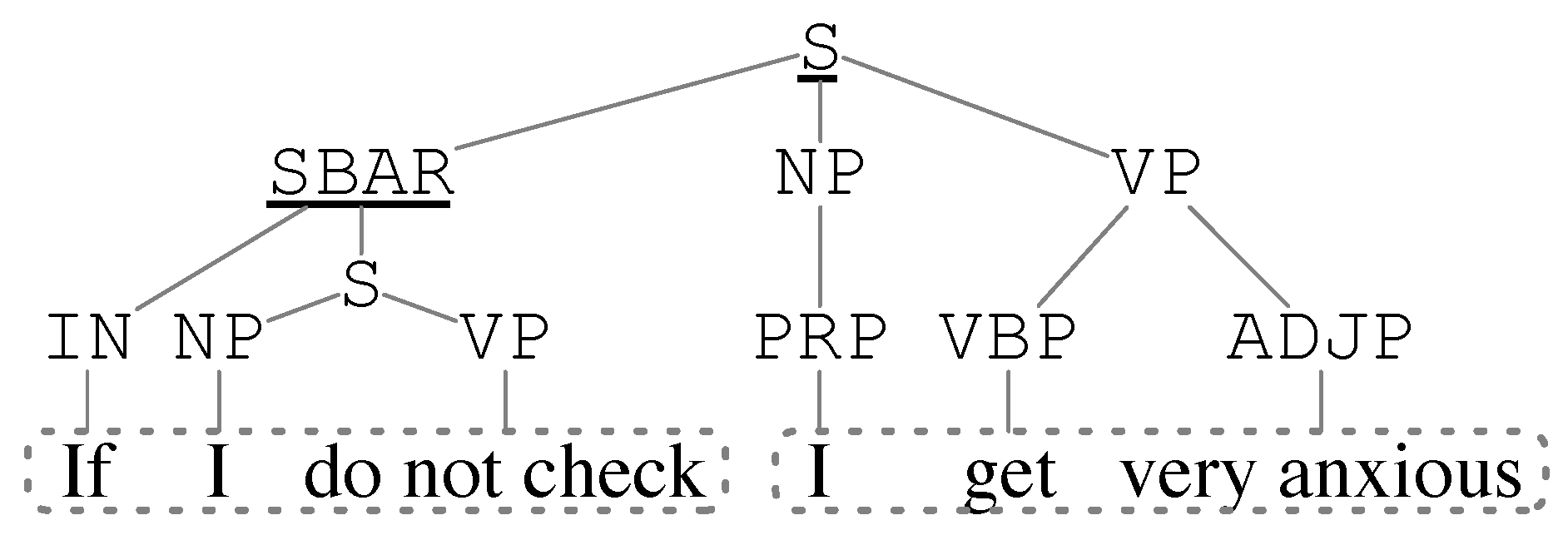

3.3.1. Silver Data from Existing Schemas

- Constituency Parse Tree

- Traverse non-leaf nodes in the CPT and find the clause-type nodes: S, SBAR, SBARQ, SINV, and SQ.

- Identify the tokens dominated by a clause-type node as a clause.

- When a clause-type node dominates another one, an inter-clause relation between them is determined without an exact relation type.
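The three CPT rules above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the tree is given in Penn Treebank bracket notation, the inverted-clause label is read as SINV, and the helper names (`parse_tree`, `leaves`, `find_clauses`) are hypothetical rather than taken from the annotation pipeline.

```python
# Assumption: Penn Treebank clause-level labels (the inverted-clause tag is read as SINV).
CLAUSE_LABELS = {"S", "SBAR", "SBARQ", "SINV", "SQ"}


def parse_tree(text):
    """Parse a bracketed tree into (label, children) tuples; leaves are token strings."""
    tokens = text.replace("(", " ( ").replace(")", " ) ").split()
    pos = 0

    def read():
        nonlocal pos
        pos += 1                      # skip "("
        label = tokens[pos]
        pos += 1
        children = []
        while tokens[pos] != ")":
            if tokens[pos] == "(":
                children.append(read())
            else:
                children.append(tokens[pos])
                pos += 1
        pos += 1                      # skip ")"
        return (label, children)

    return read()


def leaves(node):
    """Return the tokens dominated by a node, left to right."""
    if isinstance(node, str):
        return [node]
    return [tok for child in node[1] for tok in leaves(child)]


def find_clauses(node, parent=None, clauses=None, links=None):
    """Collect clause spans and unlabeled (dominating, dominated) clause index pairs."""
    if clauses is None:
        clauses, links = [], []
    if isinstance(node, str):
        return clauses, links
    label, children = node
    if label in CLAUSE_LABELS:
        clauses.append(" ".join(leaves(node)))
        index = len(clauses) - 1
        if parent is not None:
            links.append((parent, index))   # relation exists, but its type is unknown
        parent = index
    for child in children:
        find_clauses(child, parent, clauses, links)
    return clauses, links


tree = parse_tree("(S (NP (PRP I)) (VP (VBP think) (SBAR (IN that) (S (NP (PRP it)) (VP (VBZ works))))))")
clauses, links = find_clauses(tree)
print(clauses)
print(links)
```

Note that the nested SBAR and S nodes both surface as clauses, which is one source of the hierarchical redundancy that manual proofreading has to remove.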

- Syntactic Dependency Parse Tree

- Use a mapping of dependency relations to clause constituents: subjects (S) and the governor, i.e., a non-copular verb (V), via relations such as nsubj; objects (O) and complements (C) among V’s dependents via relations such as dobj, iobj, xcomp, and ccomp; adverbials (A) among V’s dependents via relations such as advmod, advcl, and prep_in.

- When a verb (see footnote 5) is detected in the sentence, a corresponding clause, consisting of the verb and its dependent constituents, can be identified.

- If a clause governs another clause via a dependency relation, the interrelation between them can be determined by the relation label:

- Coordination: conj:and, conj:or, conj:but

- Subjective: nsubj

- Objective: dobj, iobj

- Predicative: xcomp, ccomp

- Appositive: appos

- Relative: ccomp, acl:relcl, rcmod

- Adverbial: advcl
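The label mapping listed above can be written as a lookup table. The sketch below is illustrative only: it reads the second Predicative label as ccomp, and the names `RELATION_CANDIDATES` and `candidate_relations` are hypothetical. Returning a list of candidates makes the ambiguity of ccomp explicit.

```python
# Dependency label -> candidate inter-clause relations, following the list above.
# Assumption: the second Predicative label is read as "ccomp".
RELATION_CANDIDATES = {
    "conj:and": ["Coordination"],
    "conj:or": ["Coordination"],
    "conj:but": ["Coordination"],
    "nsubj": ["Subjective"],
    "dobj": ["Objective"],
    "iobj": ["Objective"],
    "xcomp": ["Predicative"],
    "ccomp": ["Predicative", "Relative"],   # ambiguous: listed under both relations
    "appos": ["Appositive"],
    "acl:relcl": ["Relative"],
    "rcmod": ["Relative"],
    "advcl": ["Adverbial"],                 # no exact Adverbial subtype is recoverable
}


def candidate_relations(dep_label):
    """Return the candidate inter-clause relations for a dependency label."""
    return RELATION_CANDIDATES.get(dep_label, [])


print(candidate_relations("ccomp"))
print(candidate_relations("advcl"))
```

A label with more than one candidate, or with only a coarse one such as Adverbial, is a case that the silver data cannot resolve on its own.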

3.3.2. Gold Data from Manual Annotator

- Specific inter-clause relations cannot be obtained from the two syntactic structures: the CPT only indicates that a relation exists without labeling it, and the dependency relations in the SDPT map either to multiple HCA relations (e.g., ccomp to Predicative or Relative) or to no exact subtype (e.g., advcl to no specific Adverbial subtype such as Conditional).

- The pre-set transformation rules also identify clauses that fall outside the HCA definition. For example, extracted non-finite clauses (e.g., to-infinitives) embedded in their matrix clause are too short and introduce hierarchical redundancies into the HCA tree.

- The performance of the two syntactic parsers degrades on long and complex sentences, which are the core concern of our HCA corpus.

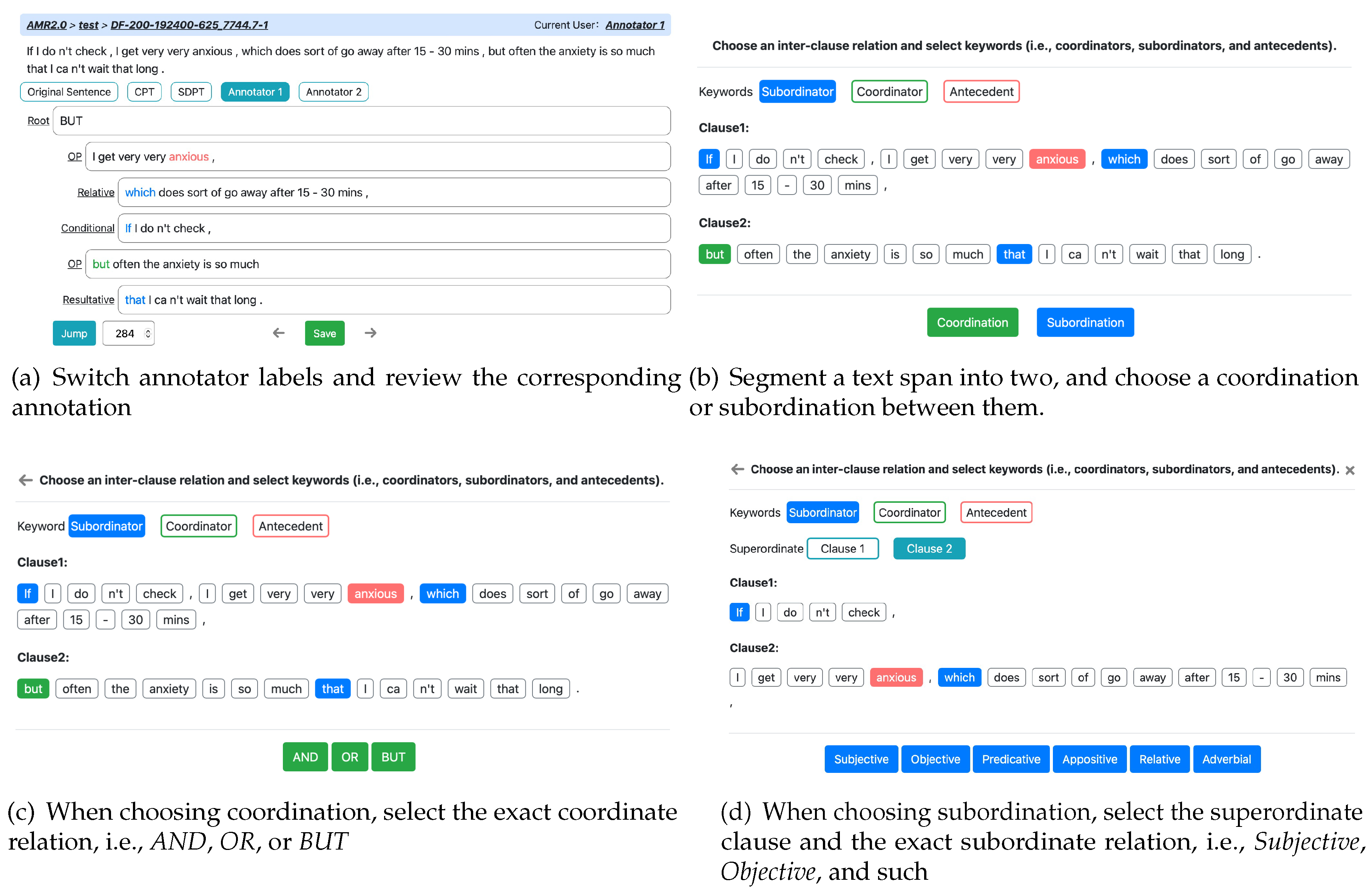

- Review annotations from CPT, SDPT, or other annotators by switching the name tags in Figure 6a.

- Choose an existing annotation to proofread or just start from the original sentence.

- Segment a text span into two by double-clicking the blank space at a split point, and select the relation between them in Figure 6b.

- (a) If the two spans are coordinated, select a specific coordination and label the coordinators that indicate the interrelation in Figure 6c.

- (b) If the two spans are subordinated, designate the superordinate one, select a specific subordination, and label the subordinators that indicate the interrelation in Figure 6d.

- Remerge two text spans into one by clicking the two spans successively.

- Repeat the segmenting and remerging steps until all text spans are segmented into a set of clauses arranged in a correct HCA tree.

3.3.3. Quality Assurance

- P/R/F1 on clauses: precision, recall, and F1 score on the segmented clauses, where a positive match means that the clauses segmented by the two annotators have the same start and end boundaries.

- RST-Parseval [31] on interrelations: the Span, Nuclearity, Relation, and Full metrics evaluate unlabeled, nuclearity-labeled, relation-labeled, and fully labeled interrelations, respectively, between the matched clauses from the two annotators.

- F1 scores on clauses range from 98.4 to 100,

- RST-Parseval scores on interrelations range from 97.3, 97.0, 93.6, 93.4 to 98.1, 97.8, 94.2, 94.1 in Span, Nuclearity, Relation, and Full, respectively.
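The clause-level agreement measure described above (a match requires identical start and end boundaries) can be sketched as follows. Clauses are assumed to be represented as `(start, end)` token offsets, and the function name `clause_prf` is hypothetical; one annotator is arbitrarily treated as the reference.

```python
def clause_prf(reference, predicted):
    """Precision, recall and F1 over clause spans with exact boundary matching."""
    ref, pred = set(reference), set(predicted)
    matched = len(ref & pred)                     # same start AND same end
    precision = matched / len(pred) if pred else 0.0
    recall = matched / len(ref) if ref else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Annotator A splits the sentence into three clauses, annotator B into two.
annotator_a = [(0, 5), (5, 9), (9, 14)]
annotator_b = [(0, 5), (5, 14)]
print(clause_prf(annotator_a, annotator_b))
```

Only the span `(0, 5)` matches here, so precision is 1/2, recall is 1/3, and F1 is 0.4.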

3.3.4. Dataset Detail

4. Model

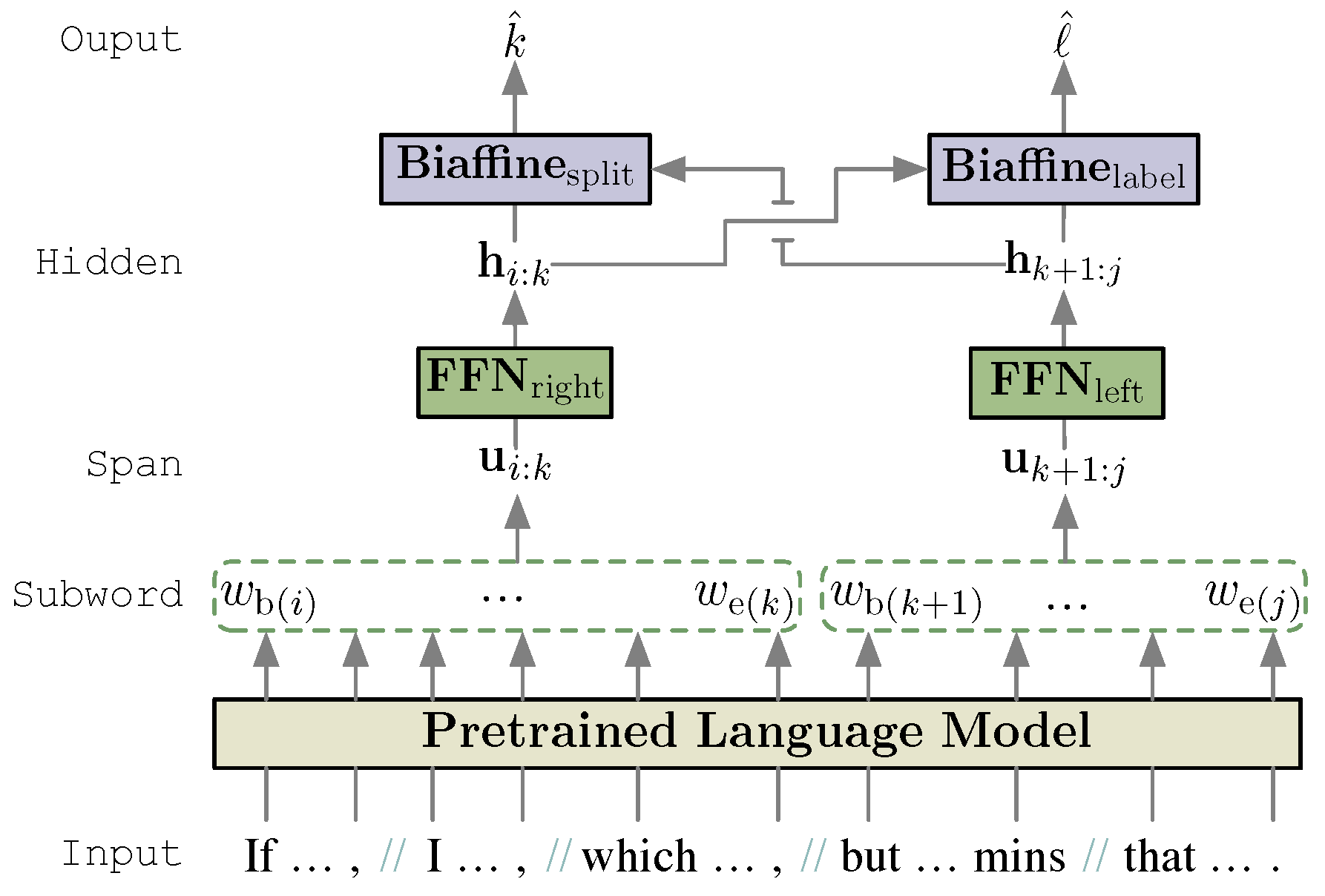

4.1. Clause Segmentation

- (1) Bi-LSTM encoded character embeddings,

- (2) static word embeddings from fastText [32],

- (3) and fine-tuned word embeddings from a pretrained language model (PLM).

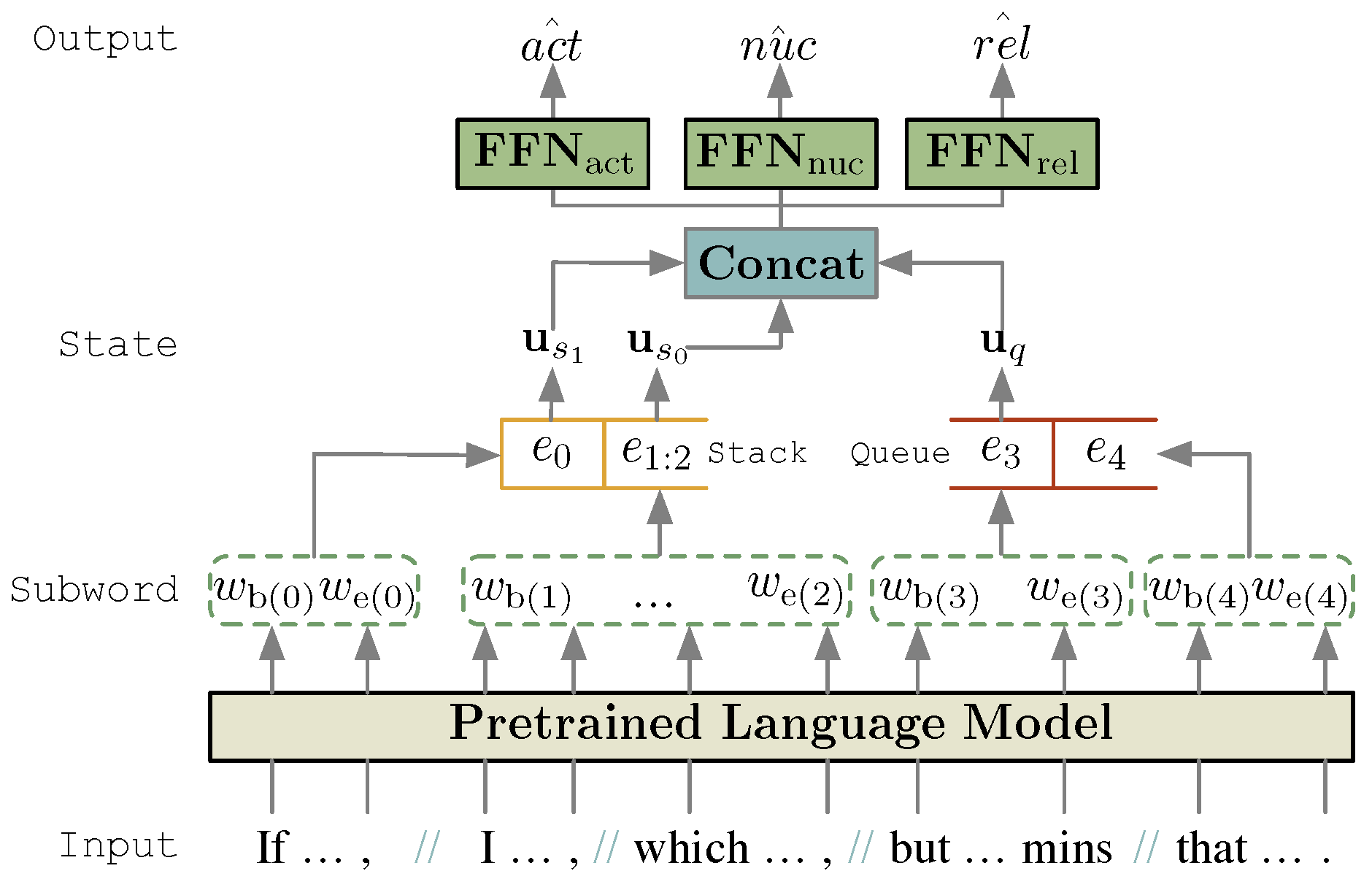

4.2. Clause Parsing

4.2.1. Text Span Embedding

4.2.2. Top-Down Strategy

4.2.3. Bottom-Up Strategy

- Shift: pop the first clause off the queue Q and push it onto the stack S.

- Reduce: pop two elements from S and push onto S a single composite item that has the popped subtrees as its children.
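The two stack and queue actions above can be sketched as a small loop. This is an illustration under assumptions, not the parser used in the experiments: the action names `shift` and `reduce` are the conventional ones, and `choose_action` and `choose_label` are hypothetical placeholders for the scoring components that decide the next action and the relation label.

```python
from collections import deque


def shift_reduce(clauses, choose_action, choose_label):
    """Build a binary clause tree bottom-up from a left-to-right list of clauses."""
    if not clauses:
        raise ValueError("at least one clause is required")
    queue = deque(clauses)   # Q: clauses not yet processed
    stack = []               # S: partial subtrees
    while queue or len(stack) > 1:
        if len(stack) < 2:
            action = "shift"             # nothing to combine yet
        elif not queue:
            action = "reduce"            # nothing left to shift
        else:
            action = choose_action(stack, queue)
        if action == "shift":
            stack.append(queue.popleft())
        else:
            right = stack.pop()
            left = stack.pop()
            stack.append((choose_label(left, right), left, right))
    return stack[0]


# Placeholder policy: always reduce when possible, with a dummy relation label.
tree = shift_reduce(
    ["c1", "c2", "c3"],
    choose_action=lambda stack, queue: "reduce",
    choose_label=lambda left, right: "REL",
)
print(tree)
```

With this greedy placeholder policy the result is a left-branching tree; a trained model would instead choose between the two actions at each step.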

5. Experiments

5.1. Dataset

- HCA-AMR2.0: The first HCA corpus is annotated on sentences in the AMR 2.0 dataset. The source data includes discussion forums collected for the DARPA BOLT and DEFT programs, transcripts and English translations of Mandarin Chinese broadcast news programming from China Central TV, Wall Street Journal text, translated Xinhua news texts, various newswire data from NIST OpenMT evaluations, and weblog data used in the DARPA GALE program.

- GUM: The Georgetown University Multilayer corpus is created as part of the course LING-367 (Computational Corpus Linguistics) at Georgetown University, and its annotation follows the RST-DT segmentation guidelines for English. The text sources consist of 35 documents of news, interviews, instruction books, and travel guides from WikiNews, WikiHow, and WikiVoyage.

- STAC: The Strategic Conversation dataset is a corpus of strategic chat conversations in 45 games annotated with negotiation-related information, dialogue acts, and discourse structures in the Segmented Discourse Representation Theory (SDRT) framework.

- RST-DT: Rhetorical Structure Theory (RST) Discourse Treebank is developed by researchers at the Information Sciences Institute (University of Southern California), the US Department of Defense, and the Linguistic Data Consortium (LDC). It comprises 385 Wall Street Journal articles from the Penn Treebank annotated with discourse structure in the RST framework.

5.2. Experimental Environments

5.3. Hyper-Parameters

5.4. Evaluation Metrics

5.5. Baseline Models

5.6. Experimental Results

5.6.1. Results of Clause Segmentation

- Compared with the IAA F1 scores of 98.4 to 100 on clause segmentation reported in Section 3.3.3, the adapted DisCoDisCo model achieves a satisfactory F1 score of 91.3.

- In the ablation study of the embedded features, the static fastText word embedding contributes the most: removing it costs 4.9 F1 points. The other features also have a positive impact on performance.

- In the discourse segmentation task, the DisCoDisCo model outperforms the GumDrop model on the GUM and RST-DT datasets. Although GumDrop performs better than DisCoDisCo on the STAC dataset, its F1 score drops sharply, by 14.7 points, when the extra gold features are removed. We therefore apply the DisCoDisCo model to the clause segmentation subtask.

- With the same DisCoDisCo model, performance on clause segmentation is about 3 to 5 points lower than on discourse segmentation, which suggests that the gap stems from the corpora. According to the statistics in Table 6, a sentence in HCA-AMR2.0 contains 3.1 clauses on average, more than the per-sentence unit counts in GUM (2.3 EDUs), STAC (1.1 EDUs), and RST-DT (2.6 EDUs). Moreover, only HCA-AMR2.0 contains a certain amount of weblog data, whose English is less formal and may pose more obstacles for the DisCoDisCo model.

5.6.2. Results of Clause Parsing

- Both the top-down and the bottom-up parsers, regardless of the PLM, perform better on clause parsing than on discourse parsing.

- The best clause parsing performance is obtained by the bottom-up parser with pretrained DeBERTa, which scores 97.0 on Parseval-Span and 87.7 on Parseval-Full.

- The best results in both clause parsing and discourse parsing are all obtained by parsers with pretrained XLNet or DeBERTa, suggesting that these two PLMs are better suited to relation classification tasks than the other PLMs.

- With the same model and the same pretrained language model, Parseval-Full scores on clause parsing are about 6-9 points higher than those on discourse parsing, suggesting that the gap stems from differences between the corpora. RST-DT contains 18 classes of relations partitioned from 78 types of blurry rhetorical relations, while HCA has 18 types of distinguishable semantic relations, half of which are subdivided from Adverbial.

- All parsers with the large PLMs perform better than those with the corresponding base PLMs.

- The bottom-up parser with DeBERTa-large performs better, with a 0.8-point improvement on Parseval-Full over the same parser with DeBERTa-base.

6. Discussion

6.1. Potentialities of HCA

6.1.1. Case Study for AMR Parsing

- 1. Transform the inter-clause relation BUT to an AMR node contrast-01 and two AMR edges directing to the root nodes of and .

- 2. Transform the inter-clause relation resultative to an AMR node cause-01 and two AMR edges directing to the root node of and the node much in .
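The two transformation steps above can be sketched as a function that turns an inter-clause relation into AMR triples. This is a hedged illustration: the edge roles (`:ARG1`/`:ARG2` for contrast-01, `:ARG0`/`:ARG1` for cause-01) are assumptions based on common AMR usage and are not stated in the steps themselves, and the names `RELATION_TO_AMR`, `link_clauses`, and the node identifiers are hypothetical.

```python
# Inter-clause relation -> (AMR concept, role of first target, role of second target).
# Assumption: the role labels below follow common AMR usage for these concepts.
RELATION_TO_AMR = {
    "BUT": ("contrast-01", ":ARG1", ":ARG2"),
    "resultative": ("cause-01", ":ARG0", ":ARG1"),
}


def link_clauses(relation, first_target, second_target, node_id):
    """Return triples for a new relation node with edges to two existing AMR nodes."""
    concept, first_role, second_role = RELATION_TO_AMR[relation]
    return [
        (node_id, ":instance", concept),
        (node_id, first_role, first_target),
        (node_id, second_role, second_target),
    ]


# Hypothetical node identifiers for the sub-graphs of two clauses.
for triple in link_clauses("BUT", "a / anxious", "m / much", "c"):
    print(triple)
```

The caller decides which clause node fills which slot; the function only adds the connecting node and its two edges.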

6.1.2. Case Study for Semantic Dependency Parsing

- 1. Transform the inter-clause relation BUT to a dependency edge between root nodes (i.e., anxious and much) of and .

- 2. Transform the inter-clause relation resultative to a dependency edge between root nodes (i.e., much and can) of and .

- 3. Transform the inter-clause relation relative to a dependency edge between the root node go and the node anxious of .

6.2. Future Work

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AMR | Abstract Meaning Representation |
| BiLSTM | Bidirectional Long Short-Term Memory |
| CPT | Constituency Parse Tree |
| FFN | Feed Forward Neural Networks |
| HCA | Hierarchical Clause Annotation |
| IAA | Inter-Annotator Agreement |
| PLM | Pretrained Language Model |
| POS | Part-of-Speech |
| RST | Rhetorical Structure Theory |
| RST-DT | Rhetorical Structure Theory Discourse Treebank |
| SDG | Semantic Dependency Graph |
| SDPT | Syntactic Dependency Parse Tree |
| SPRP | Split-and-Rephrase |
| SSD | Simple Sentence Decomposition |
| TS | Text Simplification |

References

1. Sataer, Y.; Shi, C.; Gao, M.; Fan, Y.; Li, B.; Gao, Z. Integrating Syntactic and Semantic Knowledge in AMR Parsing with Heterogeneous Graph Attention Network. In Proceedings of the ICASSP 2023 - 2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP); 2023; pp. 1–5.

2. Li, B.; Gao, M.; Fan, Y.; Sataer, Y.; Gao, Z.; Gui, Y. DynGL-SDP: Dynamic Graph Learning for Semantic Dependency Parsing. In Proceedings of the 29th International Conference on Computational Linguistics; International Committee on Computational Linguistics: Gyeongju, Republic of Korea, 2022; pp. 3994–4004.

3. Tian, Y.; Song, Y.; Xia, F.; Zhang, T. Improving Constituency Parsing with Span Attention. In Findings of the Association for Computational Linguistics: EMNLP 2020; Association for Computational Linguistics: Online, 2020; pp. 1691–1703.

4. He, L.; Lee, K.; Lewis, M.; Zettlemoyer, L. Deep Semantic Role Labeling: What Works and What’s Next. In Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers); Association for Computational Linguistics: Vancouver, Canada, 2017; pp. 473–483.

5. Tang, G.; Müller, M.; Rios, A.; Sennrich, R. Why Self-Attention? A Targeted Evaluation of Neural Machine Translation Architectures. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing; Association for Computational Linguistics: Brussels, Belgium, 2018; pp. 4263–4272.

6. Xu, J.; Gan, Z.; Cheng, Y.; Liu, J. Discourse-Aware Neural Extractive Text Summarization. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics; Association for Computational Linguistics: Online, 2020; pp. 5021–5031.

7. Carlson, L.; Marcu, D.; Okurowski, M.E. Building a Discourse-Tagged Corpus in the Framework of Rhetorical Structure Theory. In Proceedings of the Second SIGdial Workshop on Discourse and Dialogue; 2001.

8. Narayan, S.; Gardent, C.; Cohen, S.B.; Shimorina, A. Split and Rephrase. In Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing; Association for Computational Linguistics: Copenhagen, Denmark, 2017; pp. 606–616.

9. Zhang, X.; Lapata, M. Sentence Simplification with Deep Reinforcement Learning. In Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing; Association for Computational Linguistics: Copenhagen, Denmark, 2017; pp. 584–594.

10. Gao, Y.; Huang, T.H.; Passonneau, R.J. ABCD: A Graph Framework to Convert Complex Sentences to a Covering Set of Simple Sentences. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers); Association for Computational Linguistics: Online, 2021; pp. 3919–3931.

11. Banarescu, L.; Bonial, C.; Cai, S.; Georgescu, M.; Griffitt, K.; Hermjakob, U.; Knight, K.; Koehn, P.; Palmer, M.; Schneider, N. Abstract Meaning Representation for Sembanking. In Proceedings of the 7th Linguistic Annotation Workshop and Interoperability with Discourse; Association for Computational Linguistics: Sofia, Bulgaria, 2013; pp. 178–186.

12. Oepen, S.; Kuhlmann, M.; Miyao, Y.; Zeman, D.; Cinková, S.; Flickinger, D.; Hajič, J.; Urešová, Z. SemEval 2015 Task 18: Broad-Coverage Semantic Dependency Parsing. In Proceedings of the 9th International Workshop on Semantic Evaluation (SemEval 2015); Association for Computational Linguistics: Denver, Colorado, 2015; pp. 915–926.

13. Mann, W.C.; Thompson, S.A. Rhetorical structure theory: Toward a functional theory of text organization. Text-interdisciplinary Journal for the Study of Discourse 1988, 8, 243–281.

14. Payne, T.E. Understanding English Grammar: A Linguistic Introduction; Cambridge University Press, 2010.

15. Gessler, L.; Behzad, S.; Liu, Y.J.; Peng, S.; Zhu, Y.; Zeldes, A. DisCoDisCo at the DISRPT2021 Shared Task: A System for Discourse Segmentation, Classification, and Connective Detection. In Proceedings of the 2nd Shared Task on Discourse Relation Parsing and Treebanking (DISRPT 2021); Association for Computational Linguistics: Punta Cana, Dominican Republic, 2021; pp. 51–62.

16. Kobayashi, N.; Hirao, T.; Kamigaito, H.; Okumura, M.; Nagata, M. A Simple and Strong Baseline for End-to-End Neural RST-style Discourse Parsing. In Findings of the Association for Computational Linguistics: EMNLP 2022; Association for Computational Linguistics: Abu Dhabi, United Arab Emirates, 2022; pp. 6725–6737.

17. Tjong Kim Sang, E.F.; Déjean, H. Introduction to the CoNLL-2001 shared task: clause identification. In Proceedings of the ACL 2001 Workshop on Computational Natural Language Learning (CoNLL); 2001.

18. Marcus, M.P.; Santorini, B.; Marcinkiewicz, M.A. Building a Large Annotated Corpus of English: The Penn Treebank. Computational Linguistics 1993, 19, 313–330.

19. Al-Thanyyan, S.S.; Azmi, A.M. Automated Text Simplification: A Survey. ACM Comput. Surv. 2021, 54.

20. Givón, T. Syntax: An Introduction. Volume I; John Benjamins, 2001.

21. Matthiessen, C.M. Combining clauses into clause complexes: A multi-faceted view. In Complex Sentences in Grammar and Discourse; John Benjamins, 2002; pp. 235–319.

22. Hopper, P.J.; Traugott, E.C. Grammaticalization; 2003.

23. Aarts, B. Syntactic gradience: The nature of grammatical indeterminacy; Oxford University Press, 2007.

24. Givón, T. On Understanding Grammar: Revised edition; John Benjamins, 2018; pp. 1–321.

25. Carter, R.; McCarthy, M. Cambridge grammar of English: a comprehensive guide; spoken and written English grammar and usage; Cambridge University Press, 2006.

26. Feng, S.; Banerjee, R.; Choi, Y. Characterizing Stylistic Elements in Syntactic Structure. In Proceedings of the 2012 Joint Conference on Empirical Methods in Natural Language Processing and Computational Natural Language Learning; Association for Computational Linguistics: Jeju Island, Korea, 2012; pp. 1522–1533.

27. Del Corro, L.; Gemulla, R. ClausIE: clause-based open information extraction. In Proceedings of the 22nd International Conference on World Wide Web; 2013; pp. 355–366.

28. Vo, D.T.; Bagheri, E. Self-training on refined clause patterns for relation extraction. Information Processing & Management 2018, 54, 686–706.

29. Oberländer, L.A.M.; Klinger, R. Token Sequence Labeling vs. Clause Classification for English Emotion Stimulus Detection. In Proceedings of the Ninth Joint Conference on Lexical and Computational Semantics; Association for Computational Linguistics: Barcelona, Spain (Online), 2020; pp. 58–70.

30. Qi, P.; Zhang, Y.; Zhang, Y.; Bolton, J.; Manning, C.D. Stanza: A Python Natural Language Processing Toolkit for Many Human Languages. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics: System Demonstrations; Association for Computational Linguistics: Online, 2020; pp. 101–108.

31. Morey, M.; Muller, P.; Asher, N. How much progress have we made on RST discourse parsing? A replication study of recent results on the RST-DT. In Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing; Association for Computational Linguistics: Copenhagen, Denmark, 2017; pp. 1319–1324.

32. Bojanowski, P.; Grave, E.; Joulin, A.; Mikolov, T. Enriching Word Vectors with Subword Information. Transactions of the Association for Computational Linguistics 2017, 5, 135–146.

33. Dozat, T.; Manning, C.D. Deep Biaffine Attention for Neural Dependency Parsing. In Proceedings of the International Conference on Learning Representations; 2016; pp. 1–8.

34. Zeldes, A. The GUM Corpus: Creating Multilayer Resources in the Classroom. Lang. Resour. Eval. 2017, 51, 581–612.

35. Asher, N.; Hunter, J.; Morey, M.; Farah, B.; Afantenos, S. Discourse Structure and Dialogue Acts in Multiparty Dialogue: the STAC Corpus. In Proceedings of the Tenth International Conference on Language Resources and Evaluation (LREC’16); European Language Resources Association (ELRA): Portorož, Slovenia, 2016; pp. 2721–2727.

36. Heilman, M.; Sagae, K. Fast Rhetorical Structure Theory Discourse Parsing. CoRR, 1505.

37. Yu, Y.; Zhu, Y.; Liu, Y.; Liu, Y.; Peng, S.; Gong, M.; Zeldes, A. GumDrop at the DISRPT2019 Shared Task: A Model Stacking Approach to Discourse Unit Segmentation and Connective Detection. In Proceedings of the Workshop on Discourse Relation Parsing and Treebanking 2019; Association for Computational Linguistics: Minneapolis, MN, 2019; pp. 133–143.

38. Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers); Association for Computational Linguistics: Minneapolis, Minnesota, 2019; pp. 4171–4186.

39. Liu, Y.; Ott, M.; Goyal, N.; Du, J.; Joshi, M.; Chen, D.; Levy, O.; Lewis, M.; Zettlemoyer, L.; Stoyanov, V. RoBERTa: A Robustly Optimized BERT Pretraining Approach. 2019; arXiv:cs.CL/1907.11692.

40. Joshi, M.; Chen, D.; Liu, Y.; Weld, D.S.; Zettlemoyer, L.; Levy, O. SpanBERT: Improving Pre-training by Representing and Predicting Spans. Transactions of the Association for Computational Linguistics 2020, 8, 64–77.

41. Yang, Z.; Dai, Z.; Yang, Y.; Carbonell, J.; Salakhutdinov, R.R.; Le, Q.V. XLNet: Generalized Autoregressive Pretraining for Language Understanding. In Advances in Neural Information Processing Systems; Curran Associates, Inc., 2019; Vol. 32.

42. He, P.; Liu, X.; Gao, J.; Chen, W. DeBERTa: Decoding-enhanced BERT with Disentangled Attention. 2021; arXiv:cs.CL/2006.03654.

| 1 | Gao et al. have yet to name their task, and thus we summarize their main idea into simple-sentence-decomposition (SSD) for the convenience of writing. |

| 2 | |

| 3 | |

| 4 | |

| 5 | Note that a copular verb in a clause and other constituents are dependants of the complement. |

| 6 |

| Task | Output Description | Output Example: Unit | Output Example: Relation |
|---|---|---|---|
| Hierarchical Clause Annotation | Clause trees built up by clauses and inter-clause relations | (1) [But some big brokerage firms said [they don’t expect major problems as a result of margin calls. (2) [Despite their considerable incomes and assets, one-fourth don’t feel that they have made it. | |
| RST Parsing | Discourse trees built up by EDUs and rhetorical relations | (1) [But some big brokerage firms said [they don’t expect major problems as a result of margin calls. (2) [Despite their considerable incomes and assets, one-fourth don’t feel that they have made it. | |

| Task | Output Description | Output Example |
|---|---|---|
| Hierarchical Clause Annotation | Finite clauses and non-finite clauses separated by a comma | (1) If I do not check, (2) I get very anxious, (3) which does sort of go away after 15-30 mins, (4) but often the anxiety is so much (5) that I can not wait that long. |
| Clause Identification | Finite clauses, non-tensed verb phrases, coordinators, and subordinators | (1) If (2) I do not check, (3) I get very anxious, (4) which (5) does sort of go away after 15-30 mins, (6) but (7) often the anxiety is so much (8) that (9) I can not wait that long. |
| Split-and-Rephrase | Shorter sentences | (1) If I do not check, I get very anxious. (2) The anxieties does sort of go away after 15-30 mins. (3) But often the anxiety is so much that I can not wait that long. |
| Text Simplification | Sentences with simpler syntax | (1) If I do not check. (2) I get very anxious (3) The anxiety lasts for 15-30 mins. (4) But I am often too anxious to wait that long. |
| Simple-Sentence-Decomposition | Simple sentences with only one clause | (1) If I do not check. (2) I get very anxious, (3) The anxiety does sort of go away after 15-30 mins. (4) But Often the anxiety is so much. (5) that I can not wait that long. |

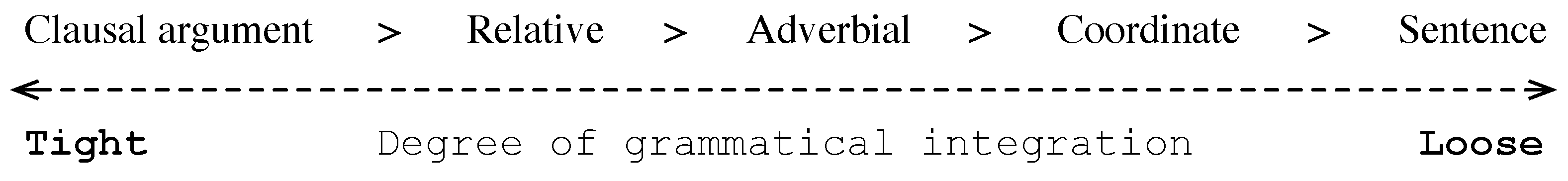

| Type | Cline of Clause Integration Tightness Degree |
|---|---|
| Matthiessen [21] | Embedded > Hypotaxis > Parataxis > Cohesive devices > Coherence |
| Hopper & Traugott [22] | Subordination > Hypotaxis > Parataxis |
| Payne [14] | Compound verb > Clausal argument > Relative > Adverbial > Coordinate > Sentence |

| Annotators | Clause: P | Clause: R | Clause: F1 | Interrelation: Span | Interrelation: Nuc. | Interrelation: Rel. | Interrelation: Full |
|---|---|---|---|---|---|---|---|
| 1, 2 | 99.9 | 99.8 | 99.8 | 97.9 | 97.5 | 94.3 | 94.1 |
| 1, 3 | 100 | 100 | 100 | 98.1 | 97.8 | 94.2 | 94.1 |
| 2, 4 | 99.8 | 99.5 | 99.6 | 97.5 | 97.3 | 93.9 | 93.8 |
| 1, 5 | 99.0 | 98.3 | 98.6 | 98.0 | 97.7 | 94.2 | 94.0 |
| 6, 7 | 99.6 | 99.1 | 99.3 | 97.3 | 97.0 | 93.8 | 93.6 |
| 4, 8 | 99.4 | 99.3 | 99.3 | 97.9 | 93.9 | 93.7 | 93.5 |
| 1, 9 | 99.0 | 98.6 | 98.8 | 97.2 | 97.0 | 93.9 | 93.8 |
| 5, 10 | 98.8 | 98.0 | 98.4 | 97.3 | 97.1 | 93.6 | 93.4 |

| Item | Occurrence | Relation | Occurrence |
|---|---|---|---|
| # Sentences (S) | | MulSnt* | |
| # S with HCA | | And | |
| # S in Train Set | | Or | 974 |
| # S in Dev Set | 740 | But | |
| # S in Test Set | 751 | Subjective | 992 |
| # Tokens (T) | 521,805 | Objective | |
| # Clauses (C) | 57,330 | | |
| # Avg. T/S | 26.9 | Appositive | 667 |
| # Avg. T/C | 9.1 | Relative | |
| # Avg. C/S | 3.1 | Adverbial★ | |

| Dataset | Train (#U/#S) | Dev (#U/#S) | Test (#U/#S) | #Avg. U/S | #Rel. | #Rel. Type | #Avg. Rel./U |
|---|---|---|---|---|---|---|---|
| HCA-AMR2.0 | 52,758/17,885 | 2,222/740 | 2,350/751 | 3.1 | 49,160 | 18 | 0.86 |
| GUM | 14,766/6,346 | 2,219/976 | 2,283/999 | 2.3 | - | - | - |
| STAC | 9,887/8,754 | 1,154/991 | 1,547/1,342 | 1.1 | - | - | - |
| RST-DT | 17,646/6,671 | 1,797/716 | 2,346/928 | 2.6 | 19,778 | 18 | 0.91 |

#U: number of units; #S: number of sentences.

| Environment | Value |
|---|---|
| Hardware | |
| CPU | Intel i9-10900K @ 3.7 GHz (10-core) |
| GPU | NVIDIA RTX 3090 Ti (24 GB) |
| Memory | 64 GB |
| Software | |
| Python | 3.8.16 |
| PyTorch | 1.12.1 |
| Anaconda | 4.10.1 |
| CUDA | 11.3 |
| IDE | PyCharm 2022.2.3 |

| Layer | Hyper-Parameter | Value |
|---|---|---|
| Clause Segmentation Model | | |
| Character Embedding (Bi-LSTM) | layer | 1 |
| | hidden_size | 64 |
| | dropout | 0.2 |
| Word Embedding | fastText | 300 |
| | Electra | 1024 (large) |
| Feature Embedding | POS/Lemma/DP | 100 |
| Bi-LSTM | layer | 1 |
| | hidden_size | 512 |
| | dropout | 0.1 |
| Trainer | optimizer | AdamW |
| | learning rate | 5e-4, 1e-4 |
| | # epochs | 60 |
| | patience of early stopping | 10 |
| | validation criteria | +span_f1 |
| Clause Parsing Model | | |
| Word Embedding | pretrained language model | 768/1024 (base/large) |
| FFN | hidden_size | 512 |
| | dropout | 0.2 |
| Trainer | optimizer | AdamW |
| | learning rate | 2e-4, 1e-5 |
| | weight decay | 0.01 |
| | batch size (# spans/actions) | 5 |
| | # epochs | 20 |
| | patience of early stopping | 5 |
| | gradient clipping | 1.0 |
| | validation criteria | RST-Parseval-Full |

| Task | Dataset | Model | P | R | F1 |
|---|---|---|---|---|---|
| Discourse Segmentation | GUM | GumDrop [37] | 96.5 | 90.8 | 93.5 |
| | | - all feats.* | 97.7 | 87.4 | 92.3 |
| | | DisCoDisCo [15] | 93.9 | 94.4 | 94.2 |
| | | - all feats.* | 92.7 | 92.6 | 92.6 |
| | STAC | GumDrop [37] | 95.3 | 95.4 | 95.3 |
| | | - all feats.* | 85.0 | 76.7 | 80.6 |
| | | DisCoDisCo [15] | 96.3 | 93.6 | 94.9 |
| | | - all feats.* | 91.8 | 92.1 | 91.9 |
| | RST-DT | GumDrop [37] | 94.9 | 96.5 | 95.7 |
| | | - all feats.* | 96.3 | 94.6 | 95.4 |
| | | DisCoDisCo [15] | 96.4 | 96.9 | 96.6 |
| | | - all feats.* | 96.8 | 95.9 | 96.4 |
| Clause Segmentation | HCA-AMR2.0 | DisCoDisCo | 92.9 | 89.7 | 91.3 |
| | | - lem.* | 86.8 | 93.9 | 90.2 |
| | | - dp.∘ | 91.0 | 87.7 | 89.3 |
| | | - pos∘ | 91.4 | 87.6 | 89.4 |
| | | - all feats.∘ | 89.2 | 85.4 | 87.2 |
| | | - fastText | 90.5 | 82.7 | 86.4 |

| Model | PLM | Discourse: Span | Discourse: Nuc. | Discourse: Rel. | Discourse: Full | Clause: Span | Clause: Nuc. | Clause: Rel. | Clause: Full |
|---|---|---|---|---|---|---|---|---|---|
| Top-Down | BERT | 92.6±0.53 | 85.7±0.41 | 75.4±0.45 | 74.7±0.54 | 96.0±0.20 | 92.1±0.27 | 85.8±0.51 | 85.7±0.47 |
| | RoBERTa | 94.1±0.46 | 88.4±0.46 | 79.6±0.17 | 78.7±0.11 | 96.5±0.02 | 93.1±0.09 | 87.1±0.23 | 87.1±0.22 |
| | SpanBERT | 94.1±0.15 | 88.8±0.19 | 79.4±0.49 | 78.5±0.39 | 96.4±0.13 | 92.6±0.12 | 86.1±0.15 | 85.9±0.15 |
| | XLNet | 94.8±0.39 | 89.5±0.39 | 80.5±0.59 | 79.5±0.53 | 96.6±0.07 | 93.5±0.20 | 87.0±0.57 | 86.9±0.56 |
| | DeBERTa | 94.2±0.33 | 89.0±0.16 | 80.1±0.43 | 79.1±0.32 | 96.6±0.02 | 93.3±0.07 | 87.2±0.04 | 87.2±0.10 |
| Bottom-Up | BERT | 91.9±0.34 | 84.4±0.31 | 74.4±0.37 | 73.8±0.30 | 96.3±0.22 | 92.3±0.36 | 86.1±0.37 | 86.0±0.32 |
| | RoBERTa | 94.4±0.12 | 89.0±0.34 | 80.4±0.47 | 79.7±0.51 | 96.4±0.16 | 92.8±0.20 | 86.6±0.56 | 86.6±0.58 |
| | SpanBERT | 93.9±0.24 | 88.2±0.19 | 79.3±0.37 | 78.4±0.29 | 96.5±0.09 | 92.6±0.06 | 86.4±0.20 | 86.2±0.22 |
| | XLNet | 94.7±0.31 | 89.4±0.24 | 81.2±0.27 | 80.4±0.34 | 96.9±0.10 | 93.6±0.09 | 87.4±0.33 | 87.3±0.31 |
| | DeBERTa | 94.6±0.38 | 89.8±0.65 | 81.0±0.64 | 80.2±0.70 | 97.0±0.10 | 94.0±0.17 | 87.8±0.39 | 87.7±0.38 |

| Model | PLM | Span | Nuc. | Rel. | Full |
|---|---|---|---|---|---|
| Top-Down | XLNet | 96.6±0.07 | 93.5±0.20 | 87.0±0.57 | 86.9±0.56 |
| | XLNet† | 96.7±0.27 | 93.6±0.33 | 87.6±0.36 | 87.6±0.37 |
| | DeBERTa | 96.6±0.02 | 93.3±0.07 | 87.2±0.04 | 87.2±0.10 |
| | DeBERTa† | 97.0±0.14 | 94.0±0.29 | 87.6±0.69 | 87.6±0.61 |
| Bottom-Up | XLNet | 96.9±0.10 | 93.6±0.09 | 87.4±0.33 | 87.3±0.31 |
| | XLNet† | 97.0±0.34 | 93.7±0.46 | 87.6±0.67 | 87.6±0.67 |
| | DeBERTa | 97.0±0.10 | 94.0±0.17 | 87.8±0.39 | 87.7±0.38 |
| | DeBERTa† | 97.4±0.08 | 94.5±0.13 | 88.6±0.27 | 88.5±0.28 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).