Submitted: 05 June 2023

Posted: 05 June 2023


Abstract

Keywords:

1. Introduction

2. Related Work

3. Methods

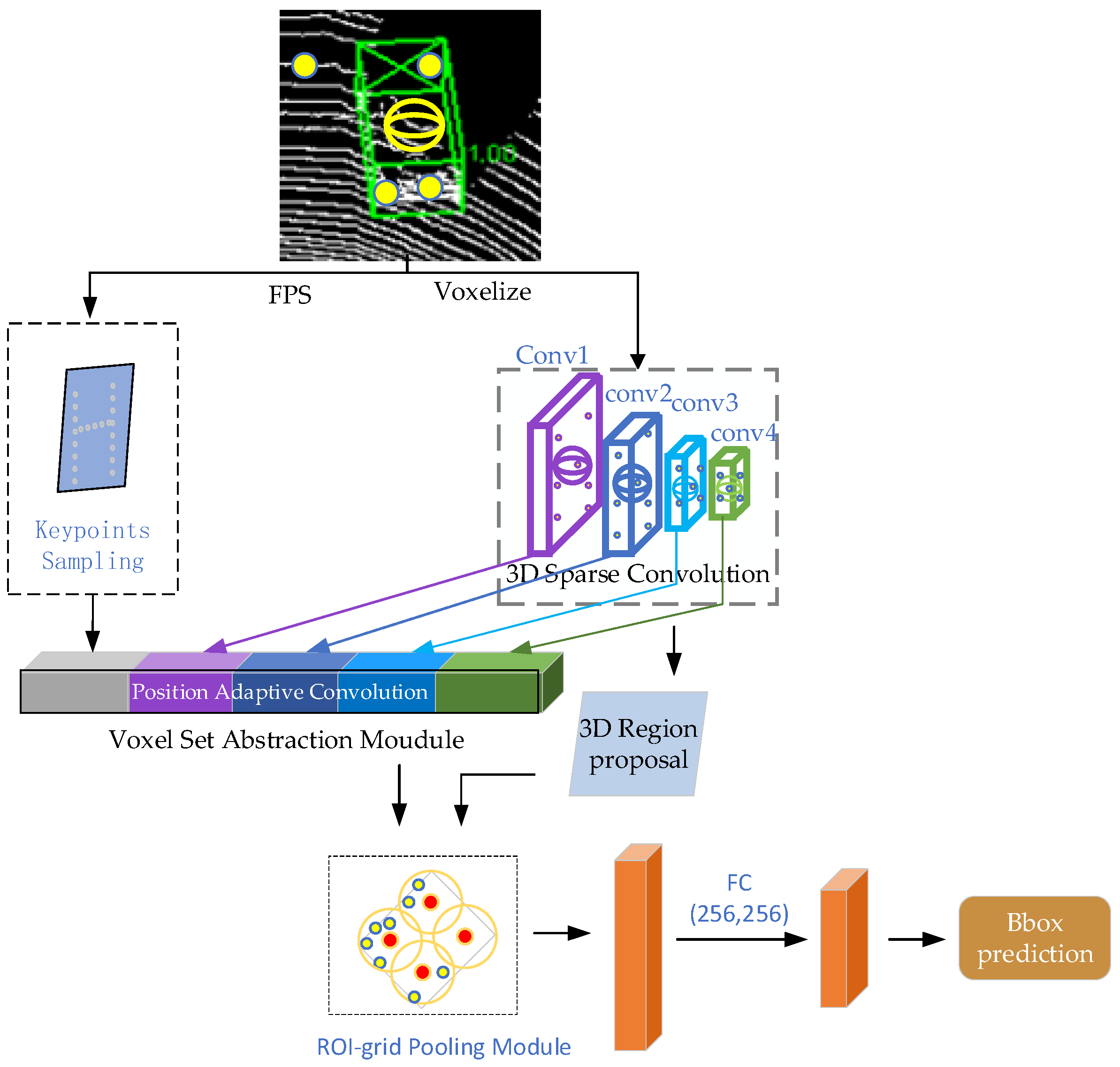

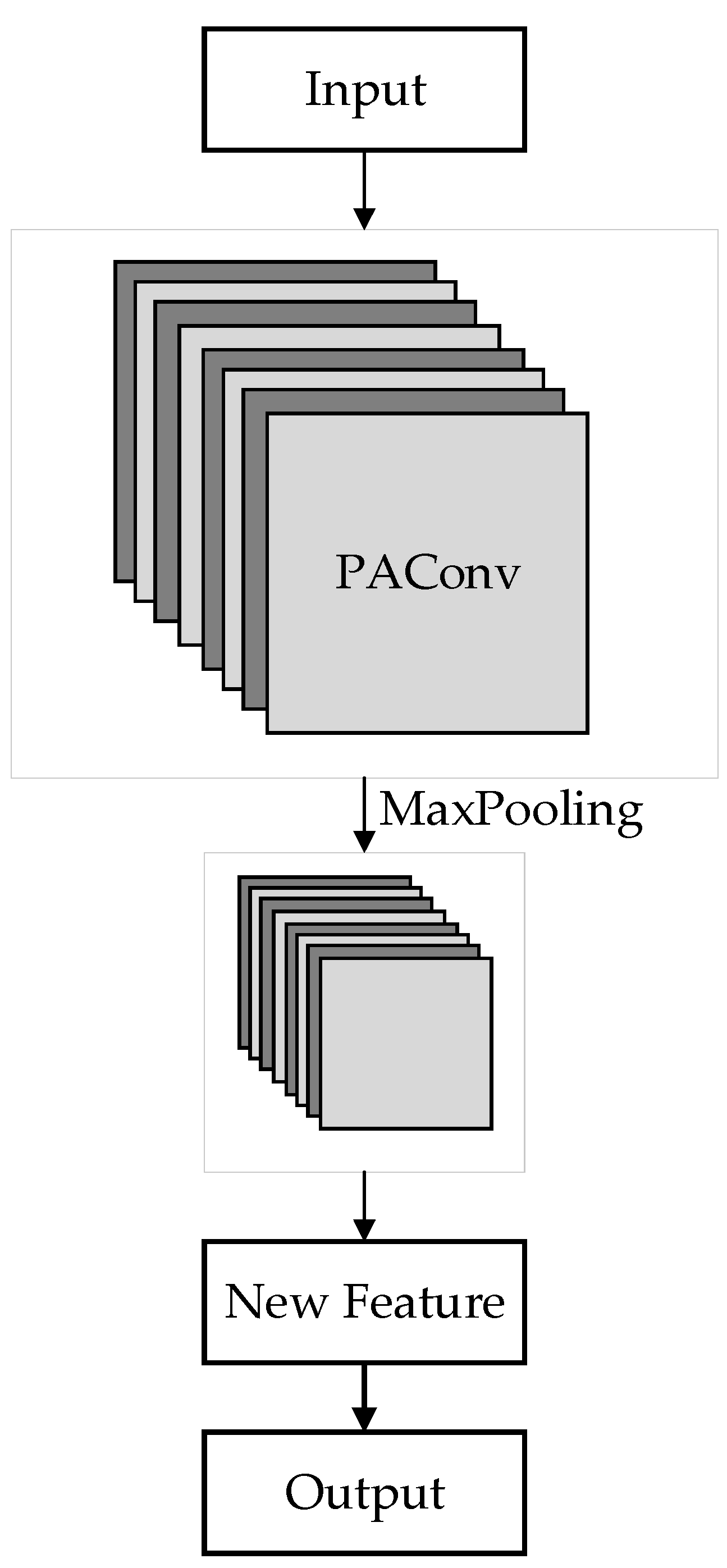

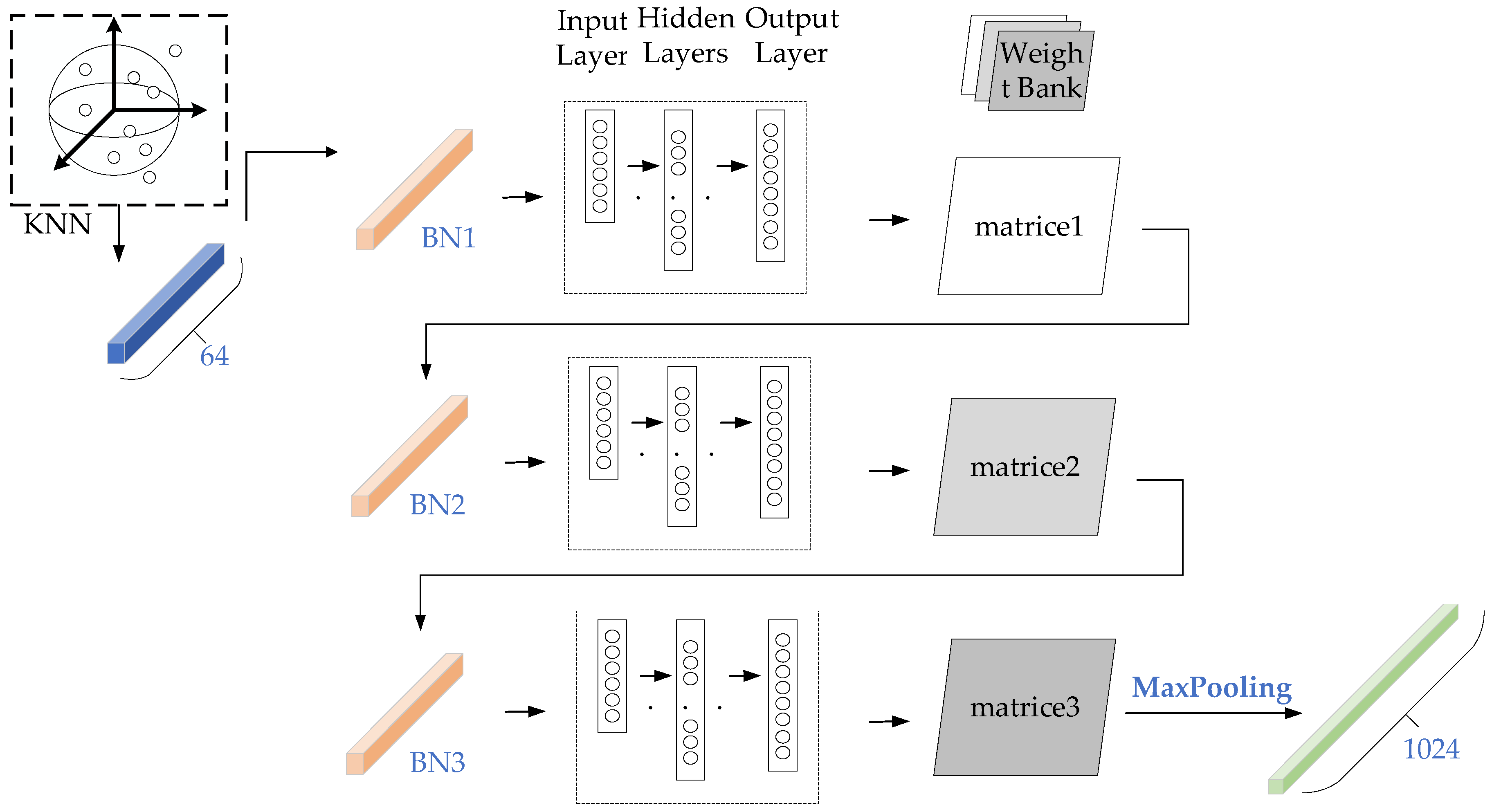

3.1. Position Adaptive Convolution Embedded Network

4. Experimental Results and Analysis

4.1. Dataset

4.2. Evaluation Metrics

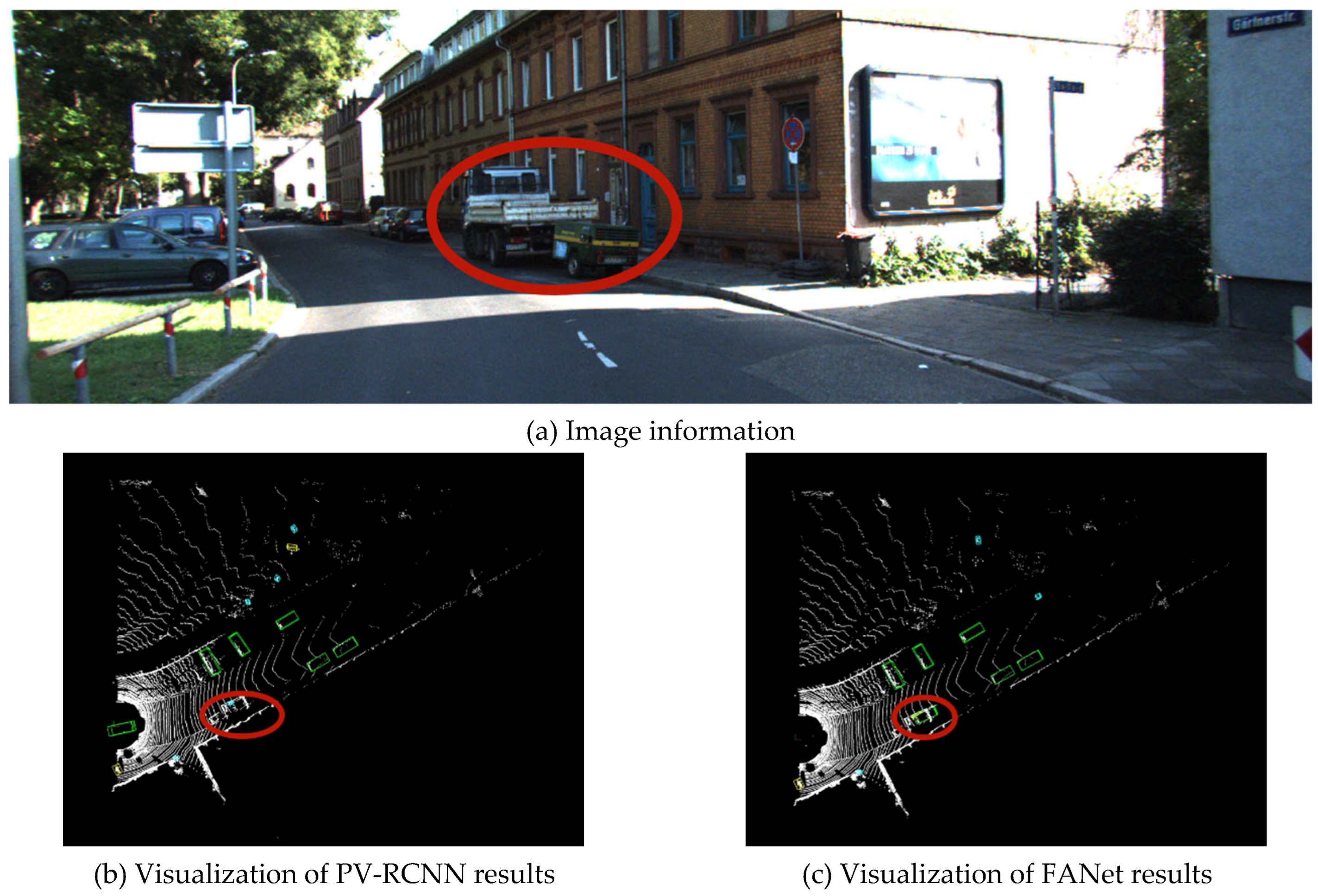

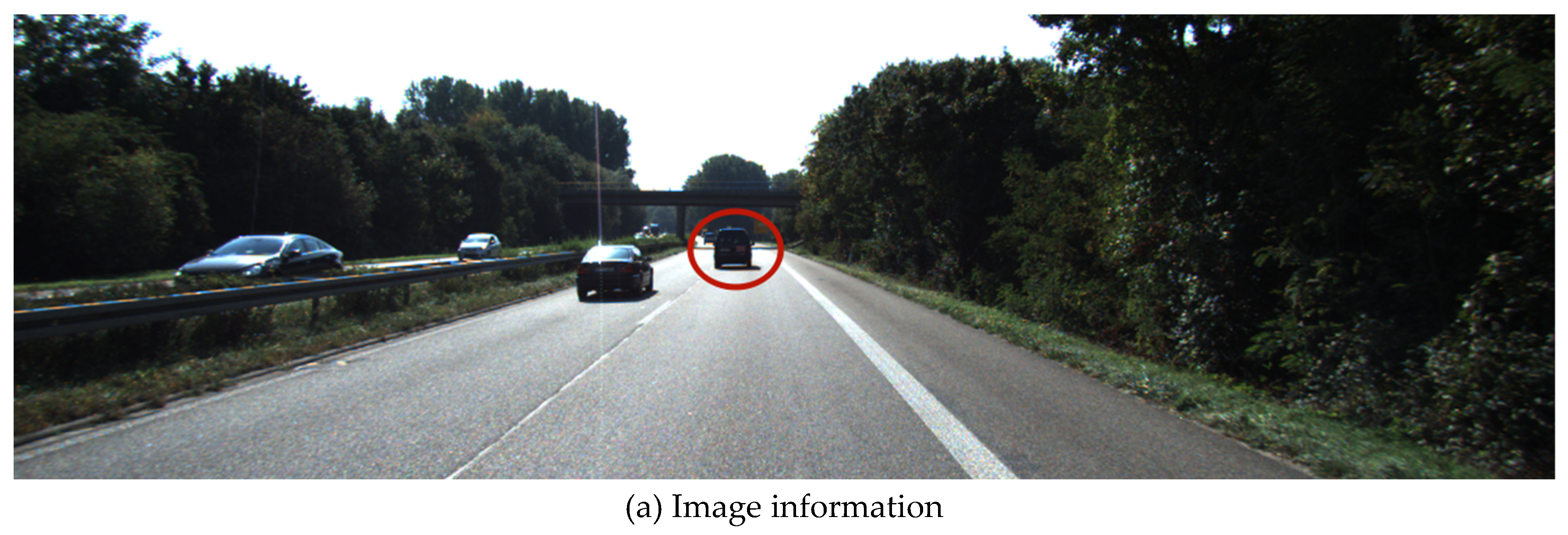

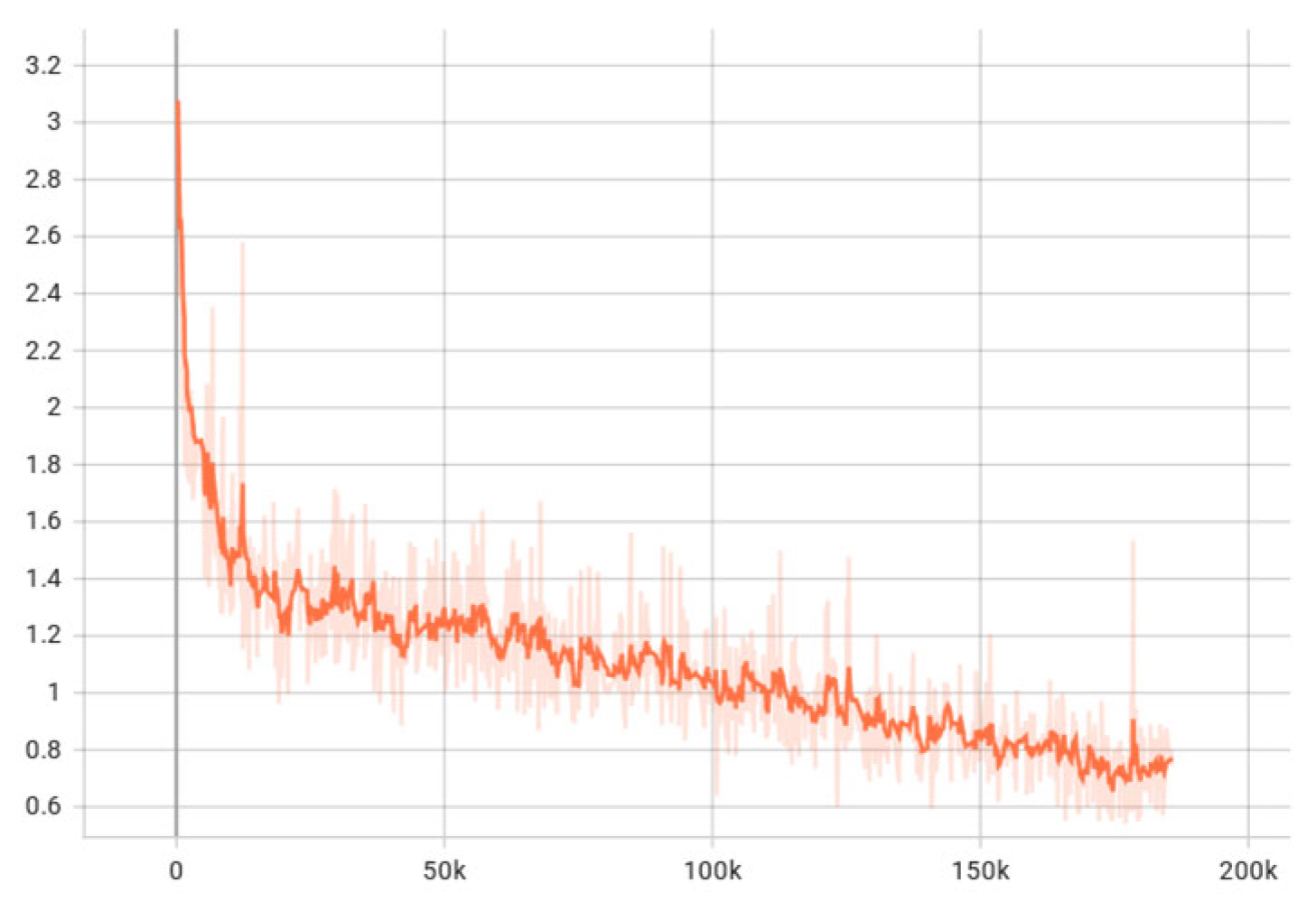

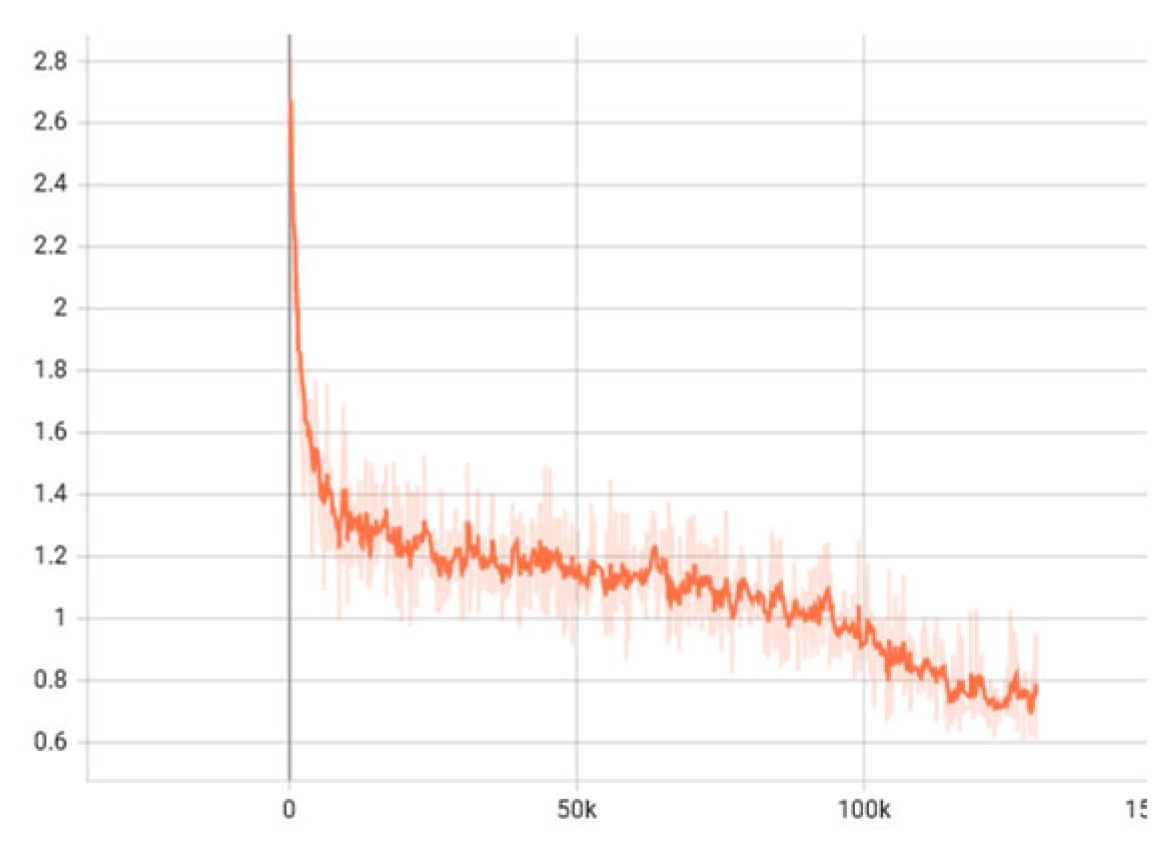

4.3. Data and Analysis

5. Conclusions

Author Contributions

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Symbol | Meaning |
|---|---|
| $W$ | weight bank |
| $W_T$ | weight matrix |
| $C_p$ | input channel |
| $C_q$ | output channel |
| $T$ | number of weight matrices |
| $D_i$ | center point |
| $D_j$ | neighboring point |
| $A^T_{i,j}$ | location adaptive coefficient |
| ReLU | activation function |
| Softmax | normalization function |
| $K$ | dynamic kernel |
| $F_p$ | input feature |
| $\tau_{corr}$ | weight regularization |
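Read together, the symbols above describe how a position adaptive convolution assembles a dynamic kernel: coefficients computed from the center point and each neighboring point weight the T matrices of the weight bank, and the resulting kernel is applied to the input feature. The NumPy sketch below illustrates that idea under stated assumptions. The two-layer score network, its input (the center coordinates concatenated with the relative offset), the max-pooling over neighbors, and the correlation form of the weight regularization follow the published PAConv formulation as commonly described and are not confirmed by this document. All function and parameter names are hypothetical.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable Softmax along one axis."""
    shifted = x - x.max(axis=axis, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=axis, keepdims=True)


def assemble_kernels(weight_bank, d_i, d_j, mlp_w1, mlp_w2):
    """Build one dynamic kernel K per neighboring point.

    weight_bank: (T, C_p, C_q) array, the weight bank W
    d_i:         (3,) center point D_i
    d_j:         (N, 3) neighboring points D_j
    mlp_w1/w2:   weights of an assumed two-layer score network
    """
    # Assumed score-network input: center coordinates plus relative offset.
    rel = np.concatenate([np.broadcast_to(d_i, d_j.shape), d_j - d_i], axis=1)
    hidden = np.maximum(rel @ mlp_w1, 0.0)           # ReLU
    coeff = softmax(hidden @ mlp_w2, axis=1)         # (N, T), rows sum to 1
    # K_j = sum_t coeff[j, t] * W_t
    kernels = np.einsum("nt,tpq->npq", coeff, weight_bank)
    return coeff, kernels


def aggregate(features, kernels):
    """Apply each kernel to its neighbor feature F_p, then max-pool."""
    per_neighbor = np.einsum("np,npq->nq", features, kernels)  # (N, C_q)
    return per_neighbor.max(axis=0)                            # (C_q,)


def correlation_penalty(weight_bank):
    """Assumed weight regularization: summed absolute cosine similarity
    between every pair of distinct weight matrices in the bank."""
    flat = weight_bank.reshape(weight_bank.shape[0], -1)
    flat = flat / np.linalg.norm(flat, axis=1, keepdims=True)
    gram = flat @ flat.T
    return np.abs(np.triu(gram, k=1)).sum()


# Small demonstration with random data (sizes are arbitrary).
rng = np.random.default_rng(0)
T, C_p, C_q, N, H = 4, 8, 16, 5, 12
bank = rng.normal(size=(T, C_p, C_q))
center = rng.normal(size=3)
neighbors = center + 0.1 * rng.normal(size=(N, 3))
coeff, kernels = assemble_kernels(
    bank, center, neighbors,
    rng.normal(size=(6, H)), rng.normal(size=(H, T)),
)
out = aggregate(rng.normal(size=(N, C_p)), kernels)
print(coeff.shape, kernels.shape, out.shape)
```

Because the Softmax makes each row of coefficients sum to one, every dynamic kernel is a convex combination of the bank's matrices. The penalty drops to zero when the matrices are mutually orthogonal, which is the sense in which such a regularizer encourages diversity in the weight bank.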

| Car-3D | Car-BEV | |||||

|---|---|---|---|---|---|---|

| Method | Easy | Mod | Hard | Easy | Mod | Hard |

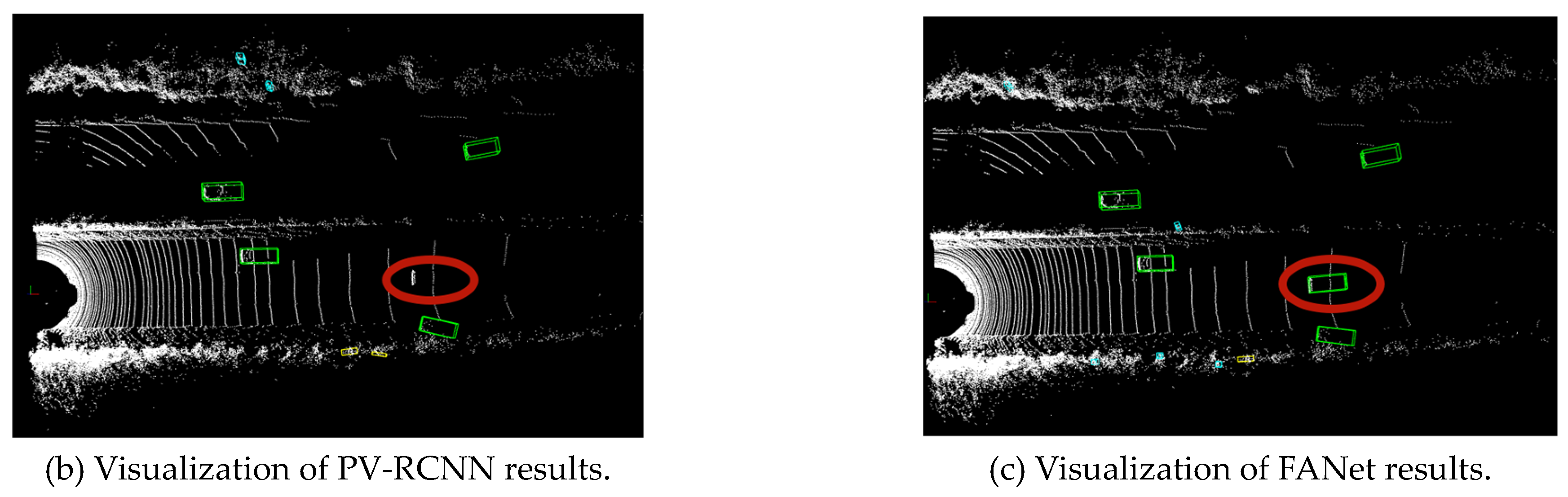

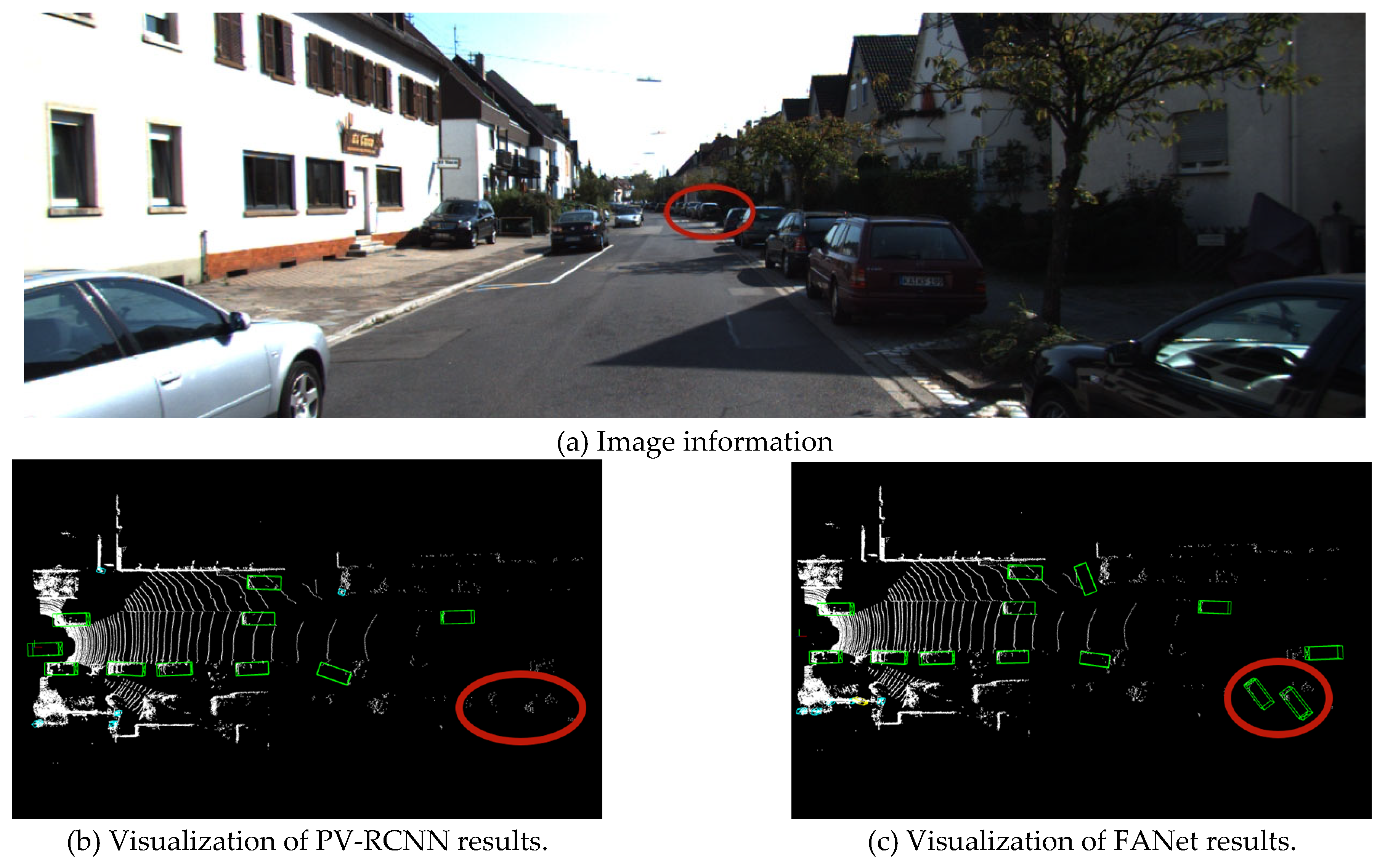

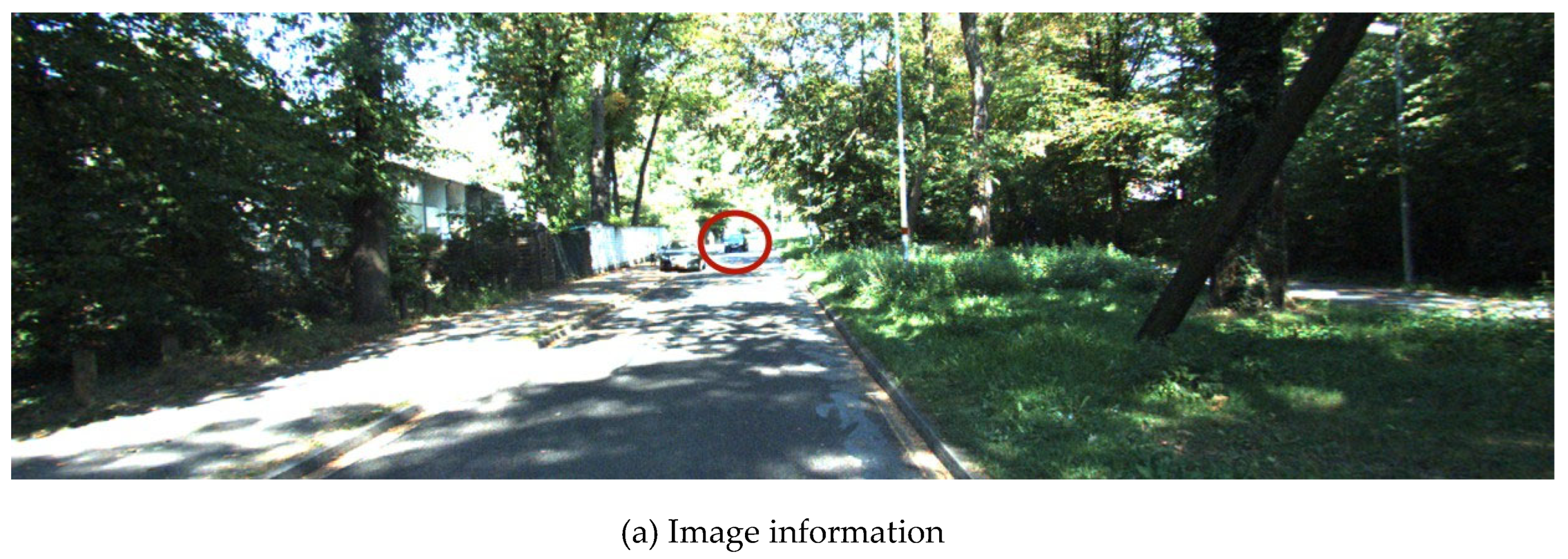

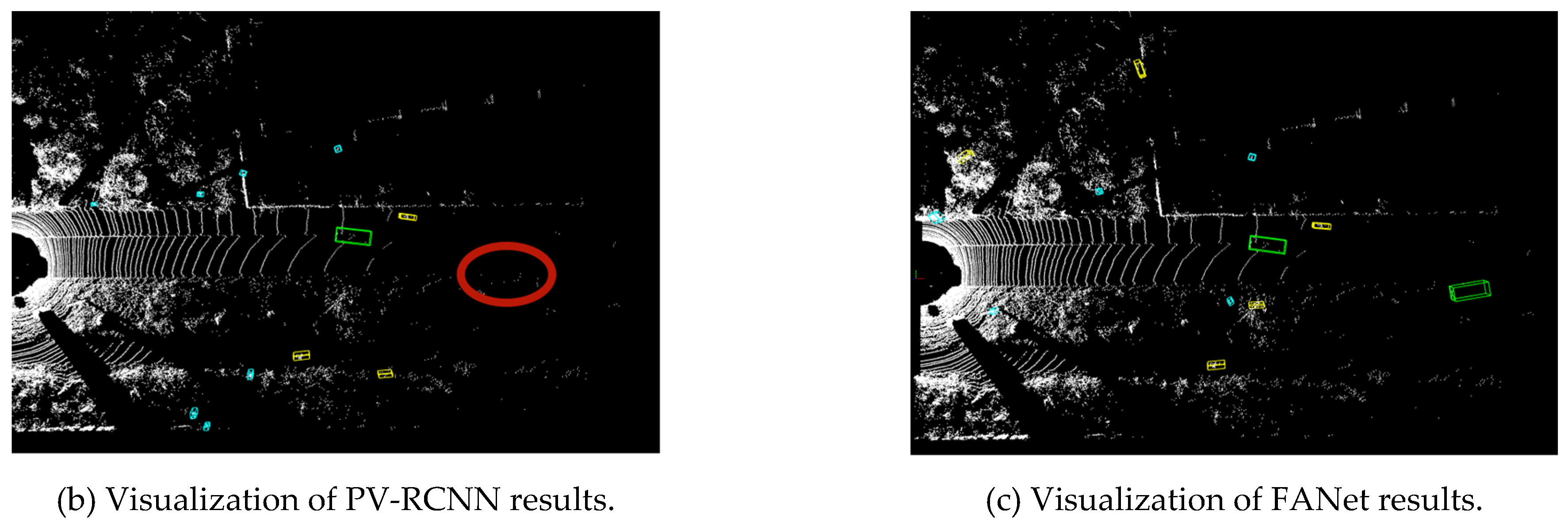

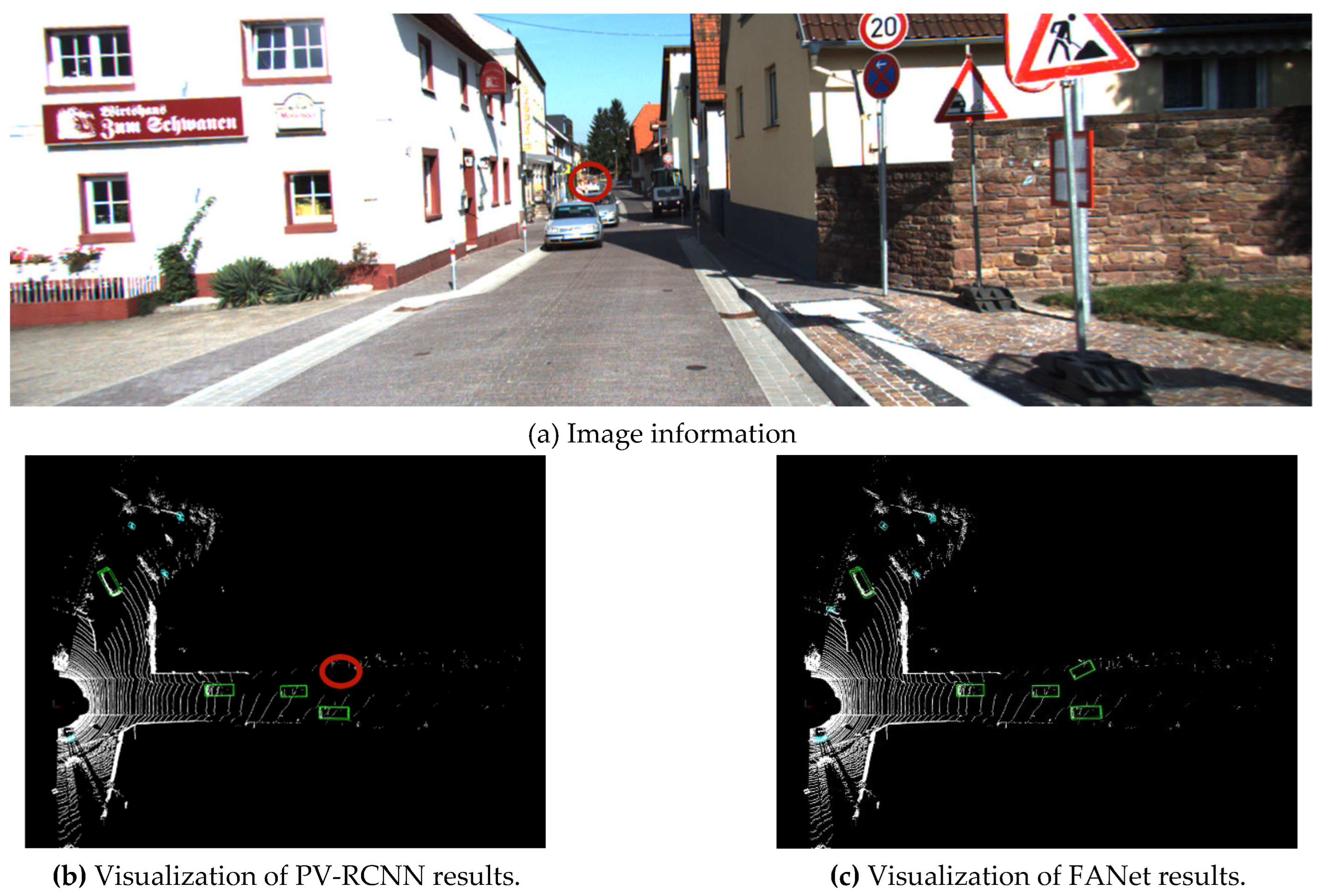

| PV-RCNN | 89.66 | 81.82 | 78.06 | 93.00 | 88.58 | 88.25 |

| FANet | 92.41 | 82.82 | 80.30 | 94.06 | 90.63 | 91.30 |

| Method | Pedestrian-3D Easy | Pedestrian-3D Mod | Pedestrian-3D Hard | Pedestrian-BEV Easy | Pedestrian-BEV Mod | Pedestrian-BEV Hard |
|---|---|---|---|---|---|---|
| PV-RCNN | 63.89 | 56.35 | 51.31 | 66.87 | 59.79 | 55.60 |
| FANet | 65.53 | 58.11 | 52.06 | 66.21 | 60.21 | 55.37 |

| Method | Cyclist-3D Easy | Cyclist-3D Mod | Cyclist-3D Hard | Cyclist-BEV Easy | Cyclist-BEV Mod | Cyclist-BEV Hard |
|---|---|---|---|---|---|---|
| PV-RCNN | 87.03 | 68.70 | 64.38 | 93.32 | 75.07 | 70.49 |
| FANet | 90.10 | 71.27 | 66.42 | 89.53 | 77.34 | 71.10 |

| Method | 3D-mAP Easy | 3D-mAP Mod | 3D-mAP Hard |
|---|---|---|---|
| STD | 77.89 | 67.71 | 62.85 |
| Part-A2 | 77.75 | 66.49 | 61.27 |
| 3DSSD | 78.30 | 67.57 | 62.31 |
| CT3D | 77.77 | 69.77 | 64.92 |
| VoTR | 79.84 | 70.09 | 66.90 |
| PV-RCNN | 80.19 | 68.96 | 64.58 |
| PV-RCNN++ | 80.30 | 69.41 | 64.91 |
| FANet | 82.68 | 70.73 | 66.26 |
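For PV-RCNN and FANet, the 3D-mAP values above are consistent with the arithmetic mean of the per-class 3D results reported in the Car, Pedestrian and Cyclist tables. The short check below reproduces that arithmetic and the resulting gain of FANet over PV-RCNN. It is only a sanity check on the published numbers. The dictionary layout and function names are illustrative, and the averaging rule is inferred from the numbers rather than stated in the text.

```python
# Per-class 3D results (Easy, Mod, Hard) copied from the tables above.
ap_3d = {
    "PV-RCNN": {
        "Car": (89.66, 81.82, 78.06),
        "Pedestrian": (63.89, 56.35, 51.31),
        "Cyclist": (87.03, 68.70, 64.38),
    },
    "FANet": {
        "Car": (92.41, 82.82, 80.30),
        "Pedestrian": (65.53, 58.11, 52.06),
        "Cyclist": (90.10, 71.27, 66.42),
    },
}


def mean_ap(per_class):
    """Average each difficulty level over the classes, rounded to 2 places."""
    columns = zip(*per_class.values())
    return tuple(round(sum(col) / len(col), 2) for col in columns)


def gain(a, b):
    """Element-wise difference a - b, rounded to 2 places."""
    return tuple(round(x - y, 2) for x, y in zip(a, b))


pv_rcnn = mean_ap(ap_3d["PV-RCNN"])
fanet = mean_ap(ap_3d["FANet"])

print(pv_rcnn)               # (80.19, 68.96, 64.58)
print(fanet)                 # (82.68, 70.73, 66.26)
print(gain(fanet, pv_rcnn))  # (2.49, 1.77, 1.68)
```

On these figures FANet improves on PV-RCNN by 2.49, 1.77 and 1.68 points of 3D-mAP at the Easy, Mod and Hard levels respectively.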

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).