3.2.1. Topic similarity calculation

The LDA model is a bag-of-words model: words are treated as independent of each other, so LDA alone cannot solve cross-language problems [34]. In this paper, the training corpus is a bilingual parallel corpus. Because the documents in the two languages share no identical words, the topics of the two languages are also independent of each other, and the bilingual topics exhibit a symmetric distribution. This is equivalent to training two LDA models on a symmetric bilingual corpus, where the topics of one model are all Chinese and the topics of the other are all English.

Suppose the number of topics is $2k$, the Chinese topic sequence is $(c_1, c_2, \dots, c_k)$ and the English topic sequence is $(e_1, e_2, \dots, e_k)$, where $e_i$ is the symmetric topic of $c_i$.

The problem can now be stated as follows: given the bilingual topic sequence $(t_1, t_2, \dots, t_{2k})$, transform it into the sequence $(c_1, e_1, c_2, e_2, \dots, c_k, e_k)$, so that $c_i$ and $e_i$ are bilingual symmetric topics. Suppose there is a matrix $S$ such that $S_{ij}$ is the similarity between topic $i$ and topic $j$; this matrix is called the topic similarity matrix. For any topic $i$, the most similar topic can then be found from row $i$ of $S$, which solves the problem.

Topic similarity can be defined through the similarity of topic words. In LDA, a topic is a probability distribution over words. The words are sorted by probability in descending order, and the top $m$ words with the highest probability are defined as the topic words of the topic [35]. A topic can thus be defined as a set of topic words:

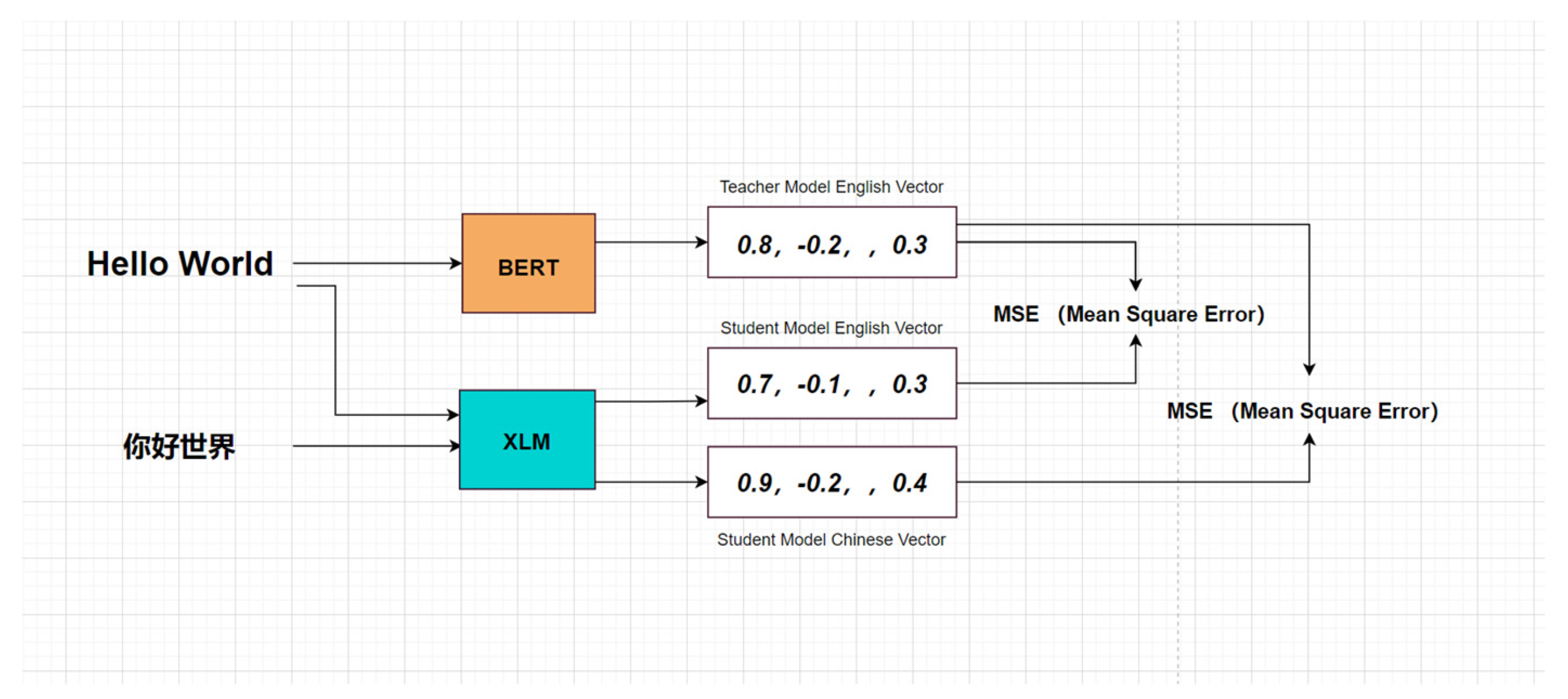

Topic words can be represented directly by a cross-language model. The process of representing a text $x$ as a vector through the cross-language model (CLM) is defined as the following function:

$$\mathbf{v}_x = \mathrm{CLM}(x)$$

The similarity of topic words is defined by cosine similarity, which uses the angle between two vectors in a high-dimensional space to describe how similar they are:

$$\cos(\mathbf{u}, \mathbf{v}) = \frac{\mathbf{u} \cdot \mathbf{v}}{\lVert \mathbf{u} \rVert \, \lVert \mathbf{v} \rVert}$$

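The similarity between a topic word $w$ and a topic $b$ is defined through the similarity between $w$ and the topic words of $b$. The similarity between topic $a$ and topic $b$ is then obtained from the similarities between the topic words of $a$ and topic $b$. To guarantee that the similarity between $a$ and $b$ equals the similarity between $b$ and $a$, the final topic similarity is defined symmetrically in the two topics.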

To distribute the topic similarity between 0 and 1, while making the similarity between asymmetric topics lower and the similarity between symmetric topics higher, the sine function is used to remap the topic similarity [36]. The similarity between all pairs of topics is calculated; let the minimum value be $s_{\min}$ and the maximum value be $s_{\max}$, so that the similarity is evenly distributed between $s_{\min}$ and $s_{\max}$. The values are first rescaled by a linear function $y = ax + b$, whose coefficients $a$ and $b$ are determined by $s_{\min}$ and $s_{\max}$, and the sine function is then used to map and normalize the data to between 0 and 1.

This paper does not map the similarity distribution to a binary 0/1 distribution, because different topics still share a certain similarity, for example technology topics and automobile topics, or military topics and political topics.
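The sine remapping described above can be sketched in Python as follows. The coefficients of the linear step are not preserved in the text, so this sketch assumes that $y = ax + b$ sends $[s_{\min}, s_{\max}]$ onto $[-\pi/2, \pi/2]$ and that the remapped value is $(\sin y + 1)/2$. The function name `sine_remap` and the sample values are hypothetical.

```python
import math


def sine_remap(similarities):
    """Map raw similarities onto [0, 1] with an S-shaped sine curve.

    ASSUMPTION (not confirmed by the source): the linear step y = a*x + b
    sends [s_min, s_max] onto [-pi/2, pi/2]; the output is (sin(y) + 1) / 2.
    """
    s_min, s_max = min(similarities), max(similarities)
    if s_max == s_min:
        # Degenerate input: every value is identical (our own convention).
        return [0.5 for _ in similarities]
    a = math.pi / (s_max - s_min)
    b = -math.pi / 2 - a * s_min
    return [(math.sin(a * x + b) + 1.0) / 2.0 for x in similarities]


raw = [0.2, 0.4, 0.6, 0.8]
remapped = sine_remap(raw)
```

Under this assumption, values below the midpoint are pushed towards 0 and values above it towards 1, while intermediate similarities are kept rather than collapsed to a binary value.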

To sum up, the calculation process of the topic similarity matrix is as follows:

(1) For each LDA topic, take the top $m$ words with the highest probability as the topic words of the topic;

(2) Represent each topic word $w$ as a 768-dimensional vector through the cross-language model;

(3) Calculate the similarity between all topics;

(4) Find the maximum and minimum similarity over all samples, and re-normalize the similarity to between 0 and 1.
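The four steps above can be sketched in Python as follows. Several details are assumptions rather than the paper's confirmed definitions: the word-to-topic similarity is taken as the best cosine match among the other topic's words, the directed topic similarity as the mean of those values, the symmetric similarity as the average of both directions, and the final normalization as a sine map of the min-max rescaled value. The `embed` dictionary is a hypothetical stand-in for the cross-language model and uses toy 2-dimensional vectors instead of 768 dimensions.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def top_words(word_probs, m):
    """Step 1: the m most probable words of one topic."""
    ranked = sorted(word_probs.items(), key=lambda kv: kv[1], reverse=True)
    return [word for word, _ in ranked[:m]]


def directed_similarity(words_a, words_b, embed):
    """ASSUMED aggregation: best match in b for each word of a, averaged."""
    best = [max(cosine(embed[wa], embed[wb]) for wb in words_b) for wa in words_a]
    return sum(best) / len(best)


def topic_similarity_matrix(topics, embed, m):
    words = [top_words(t, m) for t in topics]  # steps 1 and 2 (lookup in embed)
    n = len(words)
    # Step 3: symmetric raw similarity for every pair of topics (ASSUMED average).
    raw = [[(directed_similarity(words[i], words[j], embed)
             + directed_similarity(words[j], words[i], embed)) / 2.0
            for j in range(n)] for i in range(n)]
    # Step 4: normalize with the observed minimum and maximum (ASSUMED sine form).
    flat = [s for row in raw for s in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        return [[1.0] * n for _ in range(n)]
    return [[(math.sin(math.pi * (s - lo) / (hi - lo) - math.pi / 2) + 1.0) / 2.0
             for s in row] for row in raw]


# Hypothetical toy data: two "Chinese" and two "English" topics.
embed = {"qiudui": [1.0, 0.1], "bisai": [0.9, 0.2], "team": [1.0, 0.0], "match": [0.8, 0.3],
         "gupiao": [0.1, 1.0], "shichang": [0.2, 0.9], "stock": [0.0, 1.0], "market": [0.3, 0.8]}
topics = [{"qiudui": 0.5, "bisai": 0.3, "gupiao": 0.01},
          {"team": 0.6, "match": 0.2, "stock": 0.01},
          {"gupiao": 0.5, "shichang": 0.4, "qiudui": 0.01},
          {"stock": 0.4, "market": 0.4, "team": 0.01}]
S = topic_similarity_matrix(topics, embed, m=2)
```

For each row of `S`, the largest off-diagonal entry points to the candidate symmetric topic in the other language.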

3.2.2. News similarity calculation

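A news item can be expressed as the vector representation $\mathbf{v}$ of its title in the semantic space and the probability distribution $\mathbf{p}$ over topics.

For news $A$ and $B$, the headline similarity can be defined directly by the cosine similarity of the title vectors:

$$\mathrm{sim}_{\text{title}}(A, B) = \frac{\mathbf{v}_A \cdot \mathbf{v}_B}{\lVert \mathbf{v}_A \rVert \, \lVert \mathbf{v}_B \rVert}$$

Through the topic similarity matrix $S$, the topic similarity $S_{ij}$ between any two topics $i$ and $j$ can be obtained. The probability of news $A$ belonging to topic $i$ is $p^A_i$ and the probability of news $B$ belonging to topic $j$ is $p^B_j$, so the topic similarity of news $A$ and $B$ is:

$$\mathrm{sim}_{\text{topic}}(A, B) = \sum_{i} \sum_{j} p^A_i \, S_{ij} \, p^B_j$$

Therefore, the similarity of news $A$ and $B$ is:

$$\mathrm{sim}(A, B) = \alpha \, \mathrm{sim}_{\text{title}}(A, B) + \beta \, \mathrm{sim}_{\text{topic}}(A, B)$$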

where α and β are the weight coefficients of title similarity and topic similarity, respectively.
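As an illustration, the news similarity can be sketched in Python as below. The sketch assumes that the topic similarity of two news items is the probability-weighted sum over the topic similarity matrix and that the final score is the weighted sum of the two parts. The dictionary keys `title_vec` and `topic_dist`, the default weights, and the sample values are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def topic_similarity(p_a, p_b, S):
    """ASSUMED form: sum over i, j of p_a[i] * S[i][j] * p_b[j]."""
    return sum(p_a[i] * S[i][j] * p_b[j]
               for i in range(len(p_a)) for j in range(len(p_b)))


def news_similarity(news_a, news_b, S, alpha=0.5, beta=0.5):
    """Weighted sum of title similarity and topic similarity."""
    title_sim = cosine(news_a["title_vec"], news_b["title_vec"])
    topic_sim = topic_similarity(news_a["topic_dist"], news_b["topic_dist"], S)
    return alpha * title_sim + beta * topic_sim


# Hypothetical toy data: 2 topics, 2-dimensional title vectors.
S = [[1.0, 0.2],
     [0.2, 1.0]]
news_a = {"title_vec": [1.0, 0.0], "topic_dist": [0.9, 0.1]}
news_b = {"title_vec": [1.0, 0.0], "topic_dist": [0.8, 0.2]}
score = news_similarity(news_a, news_b, S)
```

Raising `alpha` relative to `beta` makes the score depend more on the headline, which is the adjustment the paper later uses for event-level clustering.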

3.2.3. Chinese-English bilingual news clustering based on improved Single-Pass

The traditional Single-Pass algorithm, applied to the bilingual news clustering scenario of this paper, proceeds as follows:

(1) Given a news text sequence $(d_1, d_2, \dots, d_n)$, specify the news similarity threshold news_threshold;

(2) For each news item $d_i$, calculate its similarity with all clusters and find the cluster with the largest similarity. If the maximum similarity is greater than news_threshold, add the news item to this cluster; otherwise, the news item forms a new cluster of its own.

In the original Single-Pass, the similarity between a sample and a cluster is the maximum similarity between the sample and all news in the cluster. It follows from the above process that the cluster of the current sample can be found by traversing all samples at most once: if the current total number of samples is N, the time complexity is O(N), which is very efficient compared with other, non-incremental clustering algorithms.
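The original procedure can be sketched in Python as follows. This is a minimal illustration, not the paper's implementation: the function name `single_pass`, the one-dimensional toy items, and the toy similarity function are hypothetical.

```python
def single_pass(items, similarity, news_threshold):
    """Original Single-Pass: each new item is compared with every clustered item."""
    clusters = []
    for item in items:
        best_cluster, best_sim = None, None
        for cluster in clusters:
            # Sample-to-cluster similarity is the maximum over the cluster's members.
            sim = max(similarity(item, member) for member in cluster)
            if best_cluster is None or sim > best_sim:
                best_cluster, best_sim = cluster, sim
        if best_cluster is not None and best_sim > news_threshold:
            best_cluster.append(item)
        else:
            clusters.append([item])  # the item forms a new cluster of its own
    return clusters


def toy_similarity(a, b):
    """Hypothetical similarity for 1-D points: 1 for identical, falling with distance."""
    return 1.0 / (1.0 + abs(a - b))


result = single_pass([0.0, 0.1, 5.0, 5.2, 0.2], toy_similarity, news_threshold=0.8)
```

Each incoming item is compared with every item seen so far, which is the O(N) cost per item noted above.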

Although the advantages of applying Single-Pass to news clustering are obvious, its shortcomings should not be ignored:

(1) The O(N) time complexity is still too high, especially for topic-level coarse-grained clustering, where the news falls into only a few clusters;

(2) In event-level fine-grained clustering, the temporal characteristics of streaming data are not reflected;

(3) The accuracy of Single-Pass is not high, because once the cluster of a sample is determined it never changes. Even if two clusters are density-reachable, the number of clusters only increases, and there is no cluster merging process.

To address the three defects of applying the original Single-Pass directly to news clustering scenarios, this paper makes the following improvements to Single-Pass:

(1) Define the mean of the news in a cluster (the mean of the headline vectors and the mean of the topic probability distributions) as the cluster center, and calculate the distance between a news item and the cluster directly from it;

(2) In event-level fine-grained news clustering, add a news release time parameter: among highly similar news items, those released close together in time are more likely to report the same event;

(3) Specify a cluster merging threshold. As news is added continuously, the cluster centers keep changing. After each clustering step, if the distance between two clusters is less than the cluster merging threshold, the two clusters are merged [37].

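Suppose the existing sample point is $m$ and there is a cluster $C = \{c_1, c_2, \dots, c_n\}$. In the original Single-Pass algorithm, the distance between $m$ and $C$ is defined as:

$$d(m, C) = \max_{1 \le i \le n} \mathrm{sim}(m, c_i)$$

A news item can be defined by its title vector plus its topic probability distribution. With title vector $\mathbf{v}_i$ and topic distribution $\mathbf{p}_i$ for news $c_i$, the average of the headline vectors and the average of the topic distributions of all news in the cluster define the following cluster center:

$$\mathrm{center}(C) = \left( \frac{1}{n} \sum_{i=1}^{n} \mathbf{v}_i, \; \frac{1}{n} \sum_{i=1}^{n} \mathbf{p}_i \right)$$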

The cluster center can be regarded as a virtual representative news item, so the distance between a news item and a cluster can be calculated directly with the news similarity formula. If the current number of clusters is k, the time complexity is O(k), which makes real-time clustering achievable.
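A minimal Python sketch of the cluster center follows, assuming each news item is stored as a dictionary with a title vector and a topic distribution. The keys `title_vec` and `topic_dist`, the function names, and the sample values are hypothetical.

```python
def mean_vector(vectors):
    """Component-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[d] for v in vectors) / n for d in range(len(vectors[0]))]


def cluster_center(cluster):
    """Virtual representative news item: mean title vector and mean topic distribution."""
    return {
        "title_vec": mean_vector([news["title_vec"] for news in cluster]),
        "topic_dist": mean_vector([news["topic_dist"] for news in cluster]),
    }


cluster = [
    {"title_vec": [1.0, 0.0], "topic_dist": [0.8, 0.2]},
    {"title_vec": [0.0, 1.0], "topic_dist": [0.6, 0.4]},
]
center = cluster_center(cluster)
```

Because the center has the same shape as a news item, the news similarity function can be applied to it unchanged, so an incoming item needs one comparison per cluster rather than one per stored item.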

When a cluster is represented by its cluster center, the news similarity formula can also be applied to calculate the distance between clusters [38]. Specify the inter-cluster similarity threshold cluster_threshold. After each clustering step, if the similarity between two clusters is greater than cluster_threshold, the two clusters are merged and the cluster center is recalculated.

The topic-level coarse-grained news clustering algorithm proceeds as follows:

(1) Calculate the similarity between the newly added sample a and the center of each cluster, and take the most similar cluster c;

(2) If the similarity between a and c is less than the threshold news_threshold, sample a forms a new cluster of its own; otherwise, add sample a to cluster c, then recalculate and persist the cluster center of c;

(3) If c changes, calculate the similarity between c and the other clusters and take the most similar cluster t. If the similarity between t and c is greater than the threshold cluster_threshold, merge c and t, then recalculate and persist the cluster center of the merged cluster.
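The three steps above can be sketched in Python as follows. For brevity, each news item is reduced to a single vector and the similarity function is passed in. The cluster layout (`members`, `center`), the function names, the toy similarity, and the threshold values are all hypothetical, and persistence of the cluster center is omitted.

```python
def mean_vector(vectors):
    n = len(vectors)
    return [sum(v[d] for v in vectors) / n for d in range(len(vectors[0]))]


def most_similar(center, clusters, similarity, skip=None):
    """Return (cluster, similarity) for the cluster whose center is closest."""
    best, best_sim = None, None
    for c in clusters:
        if c is skip:
            continue
        sim = similarity(center, c["center"])
        if best is None or sim > best_sim:
            best, best_sim = c, sim
    return best, best_sim


def add_news(clusters, vec, similarity, news_threshold, cluster_threshold):
    # Step 1: most similar cluster by center.
    c, sim = most_similar(vec, clusters, similarity)
    # Step 2: new cluster if not similar enough, otherwise join c.
    if c is None or sim < news_threshold:
        clusters.append({"members": [vec], "center": list(vec)})
        return
    c["members"].append(vec)
    c["center"] = mean_vector(c["members"])
    # Step 3: c changed, so check whether it should absorb its nearest neighbour.
    t, sim_ct = most_similar(c["center"], clusters, similarity, skip=c)
    if t is not None and sim_ct > cluster_threshold:
        c["members"].extend(t["members"])
        c["center"] = mean_vector(c["members"])
        clusters[:] = [x for x in clusters if x is not t]


def toy_similarity(u, v):
    """Hypothetical similarity for 1-D vectors."""
    return 1.0 / (1.0 + abs(u[0] - v[0]))


clusters, sizes = [], []
for point in ([0.0], [1.0], [0.4], [0.7], [0.6]):
    add_news(clusters, point, toy_similarity, news_threshold=0.6, cluster_threshold=0.62)
    sizes.append(len(clusters))
```

In this toy run the two clusters drift towards each other as items arrive and are merged on the last insertion, which the original Single-Pass cannot do.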

For event-level fine-grained news clustering, the weight α of title similarity can be increased and the weight of topic similarity reduced, because the title is often a concise summary of the news event [39]. Even so, headlines alone cannot distinguish many similar events. For example, two NBA news items may both carry the headline "Grizzlies beat the Nets" while reporting events from different time periods. Therefore, in event-level clustering the news is input in order of release time, a time interval parameter $\Delta t$ is added, and a news time threshold time_threshold is specified: for any two news items in a cluster, if the time interval $\Delta t$ exceeds time_threshold, the cluster is split into two sub-clusters.

The event-level fine-grained news clustering algorithm proceeds as follows:

(1) Calculate the similarity between the new sample a and the center of each cluster, and arrange the clusters $c_1, c_2, \dots, c_k$ in descending order of similarity, starting with $i = 1$;

(2) If the similarity between a and $c_i$ is greater than the threshold news_threshold and the time interval between a and any news item in $c_i$ is less than the time threshold time_threshold, add sample a to cluster $c_i$, then recalculate and persist its cluster center. Otherwise, set $i = i + 1$ and repeat step (2). If all k clusters have been tried without success, a forms a new cluster of its own;

(3) If a cluster c changes, calculate the similarity between c and the other clusters and take the most similar cluster t. If the similarity between t and c is greater than the threshold cluster_threshold, merge c and t, then recalculate and persist the cluster center of the merged cluster.
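Steps (1) and (2) of the event-level procedure can be sketched in Python as follows; the merge check of step (3) is omitted for brevity. The sketch assumes that a sample may join a cluster only if it lies within time_threshold of every item already in that cluster. Timestamps in hours, the cluster layout, the function names, and the toy data are hypothetical.

```python
import math


def cosine(u, v):
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def mean_vector(vectors):
    n = len(vectors)
    return [sum(v[d] for v in vectors) / n for d in range(len(vectors[0]))]


def add_event_news(clusters, vec, timestamp, similarity, news_threshold, time_threshold):
    # Step 1: rank clusters by similarity to the new sample, most similar first.
    ranked = sorted(((similarity(vec, c["center"]), c) for c in clusters),
                    key=lambda pair: pair[0], reverse=True)
    # Step 2: take the first cluster that passes both the similarity and time checks.
    for sim, c in ranked:
        if sim <= news_threshold:
            break  # every remaining cluster is even less similar
        if all(abs(timestamp - t) < time_threshold for t in c["times"]):
            c["members"].append(vec)
            c["times"].append(timestamp)
            c["center"] = mean_vector(c["members"])
            return c
    new_cluster = {"members": [vec], "times": [timestamp], "center": list(vec)}
    clusters.append(new_cluster)
    return new_cluster


# Four items with the same headline vector, released at hours 0, 2, 100 and 110.
clusters = []
for hour in (0.0, 2.0, 100.0, 110.0):
    add_event_news(clusters, [1.0, 0.0], hour, cosine,
                   news_threshold=0.8, time_threshold=48.0)
```

Although all four headline vectors are identical, the time check separates them into two events, mirroring the "Grizzlies beat the Nets" example.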