Submitted: 02 May 2023

Posted: 03 May 2023


Abstract

Keywords:

1. Introduction

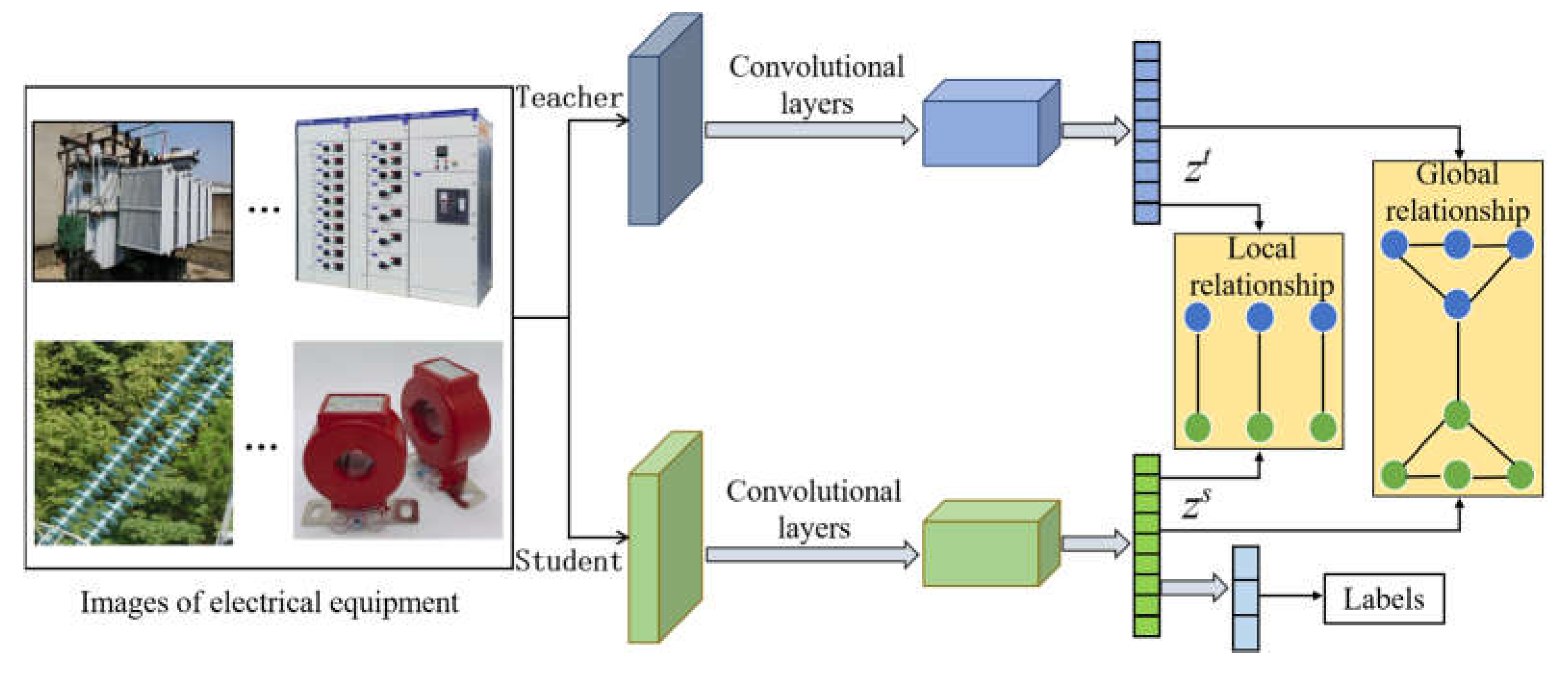

- We present a novel distillation approach that compresses the knowledge of a teacher network into a compact student network for efficient few-shot classification. By distilling both global and local relational knowledge, the method guides the student network toward performance comparable to that of the teacher network.

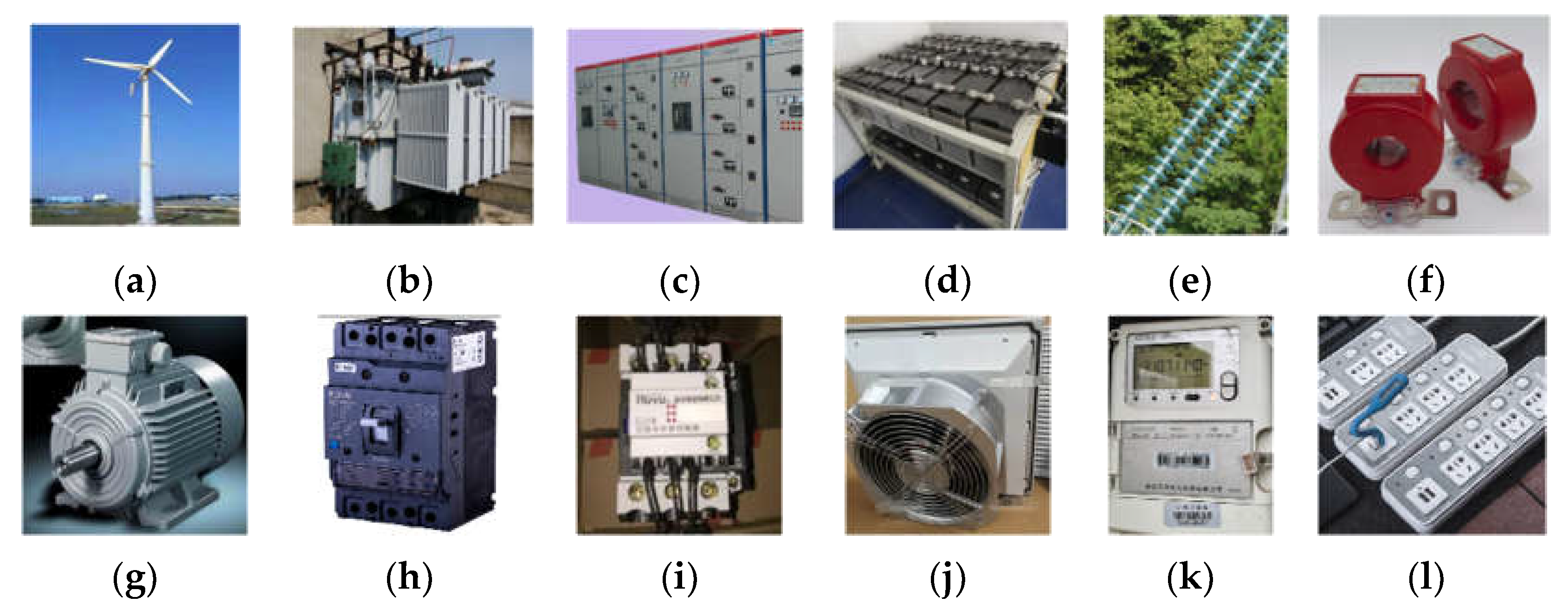

- We contribute a new dataset, EEI-100, containing 4000 images of electrical equipment across 100 classes. It covers a wide range of equipment, including power generation, distribution, industrial, and household electrical equipment.

- We validate the proposed method on three public datasets (MiniImageNet, CIFAR-FS, and CUB) and compare it with state-of-the-art (SOTA) methods on the electrical image dataset we introduce. Our method outperforms all compared methods in both the 1-shot and 5-shot settings.
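The contributions above describe distilling global and local relationships from the teacher into the student. As a minimal sketch under stated assumptions, and not the authors' exact loss: the "global" term below borrows the distance-wise loss of relational knowledge distillation (Park et al., CVPR 2019), matching normalized sample-to-sample distances within a batch, while the "local" term is our own hypothetical choice that matches the cosine-similarity structure among spatial feature vectors inside each image. The function names and the weights `alpha` and `beta` are illustrative only.

```python
import math
from typing import List

Vec = List[float]


def _normalized_distances(embs: List[Vec]) -> List[List[float]]:
    """Pairwise Euclidean distances divided by their mean over distinct pairs
    (the distance-wise normalization used in relational knowledge distillation)."""
    n = len(embs)
    d = [[math.dist(embs[i], embs[j]) for j in range(n)] for i in range(n)]
    off_diag = [d[i][j] for i in range(n) for j in range(n) if i != j]
    mu = max(sum(off_diag) / len(off_diag), 1e-12)  # guard against a collapsed batch
    return [[v / mu for v in row] for row in d]


def _smooth_l1(a: float, b: float) -> float:
    """Huber (smooth L1) penalty with threshold 1."""
    diff = abs(a - b)
    return 0.5 * diff * diff if diff < 1.0 else diff - 0.5


def global_relation_loss(t_embs: List[Vec], s_embs: List[Vec]) -> float:
    """Batch-level term: the student should reproduce the teacher's
    sample-to-sample distance structure (needs at least two samples)."""
    dt = _normalized_distances(t_embs)
    ds = _normalized_distances(s_embs)
    n = len(t_embs)
    pairs = [(i, j) for i in range(n) for j in range(n) if i != j]
    return sum(_smooth_l1(ds[i][j], dt[i][j]) for i, j in pairs) / len(pairs)


def _cosine(a: Vec, b: Vec) -> float:
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(x * x for x in b))
    return sum(x * y for x, y in zip(a, b)) / max(na * nb, 1e-12)


def local_relation_loss(t_maps: List[List[Vec]], s_maps: List[List[Vec]]) -> float:
    """Hypothetical image-level term: for each image, compare the cosine-similarity
    matrices among its spatial feature vectors. Teacher and student maps are
    assumed to have the same number of locations; channel widths may differ."""
    total, count = 0.0, 0
    for tm, sm in zip(t_maps, s_maps):
        for p in range(len(tm)):
            for q in range(len(tm)):
                diff = _cosine(sm[p], sm[q]) - _cosine(tm[p], tm[q])
                total += diff * diff
                count += 1
    return total / count


def distillation_loss(t_embs, s_embs, t_maps, s_maps, alpha=1.0, beta=1.0) -> float:
    """Weighted sum of the two relational terms; alpha and beta are hypothetical
    weights (a supervised classification loss would normally be added as well)."""
    return (alpha * global_relation_loss(t_embs, s_embs)
            + beta * local_relation_loss(t_maps, s_maps))
```

Because both terms compare relations rather than raw activations, the student's feature width does not need to match the teacher's. For example, a student whose embeddings are a uniformly scaled copy of the teacher's incurs a global loss of (numerically) zero.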

2. Related Work

2.1. Electrical Image Classification

2.2. Few-shot Classification

2.3. Knowledge Distillation

3. Methodology

3.1. Problem Definition

3.2. FSC Network based on Global and Local Knowledge Distillation

3.2.1. Pre-training of the Teacher Network

3.2.2. Global and Local Knowledge Distillation

3.2.3. Few-shot Evaluation

4. Experiments

4.1. Experiments on Public Datasets

4.1.1. Experimental Setup

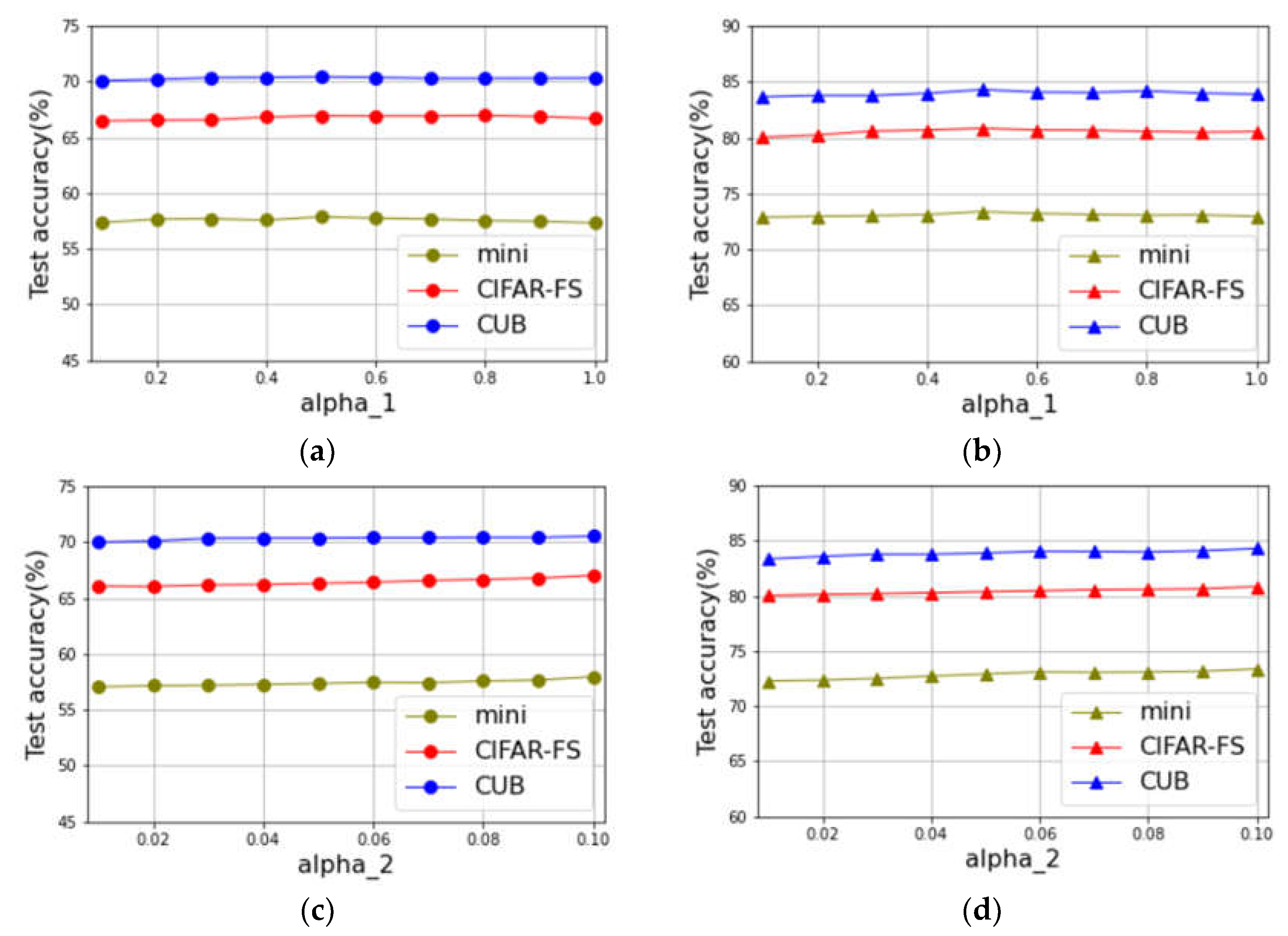

4.1.2. Parametric Analysis Experiment

4.1.3. Ablation Experiment

4.1.4. Comparison Experiment with Existing Methods

4.2. Electrical Image Dataset

4.2.1. EEI-100 Dataset

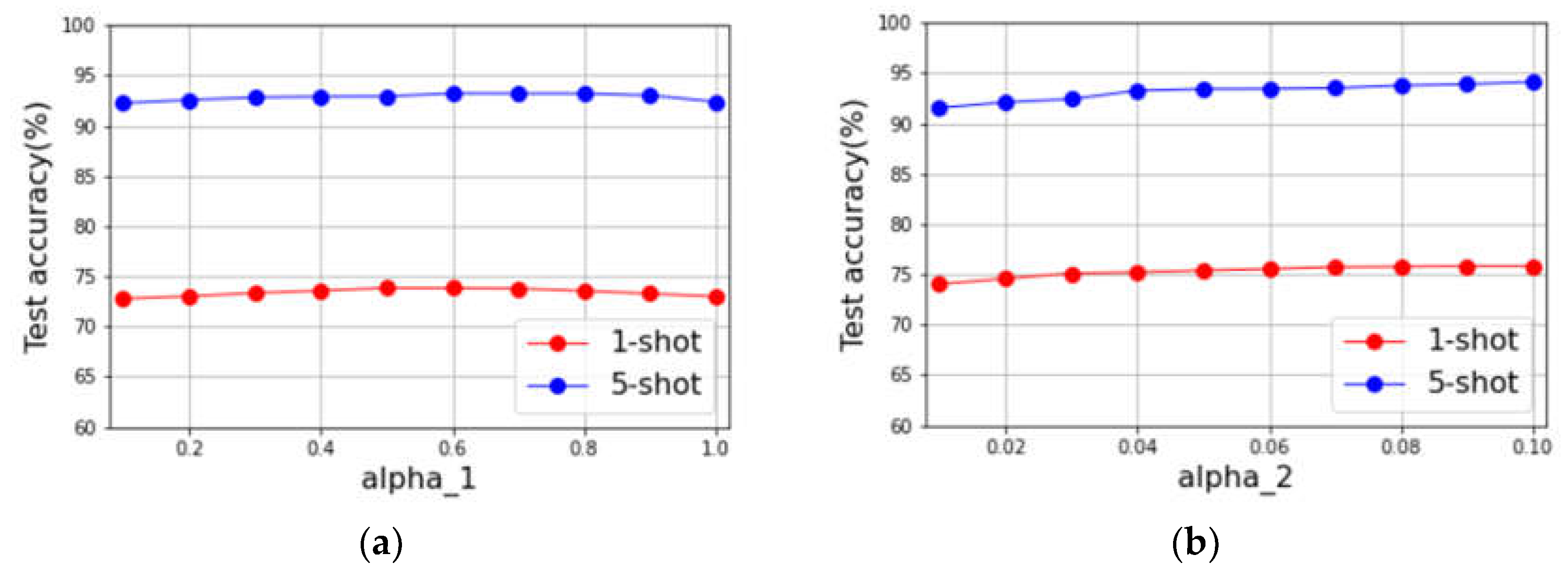

4.2.2. Parametric Analysis Experiment

4.2.3. Comparison Experiment with Existing Methods

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| UAV | Unmanned aerial vehicle |
| CNN | Convolutional neural network |
| FSL | Few-shot learning |
| FSC | Few-shot classification |

Appendix A

References

- Peng, J.; Sun, L.; Wang, K.; Song, L. ED-YOLO power inspection UAV obstacle avoidance target detection algorithm based on model compression. Chinese Journal of Scientific Instrument 2021, 10, 161–170.

- Hinton, G.; Vinyals, O.; Dean, J. Distilling the Knowledge in a Neural Network. Mar 2015. arXiv:1503.02531.

- Romero, A.; Ballas, N.; Kahou, S.E.; Chassang, A.; Gatta, C.; Bengio, Y. FitNets: Hints for Thin Deep Nets. Dec 2014. arXiv:1412.6550.

- Bogdan, T.N.; Bruno, M.; Rafael, W.; Victor, B.G.; Vanderlei, Z.; Lourival, L. A Computer Vision System for Monitoring Disconnect Switches in Distribution Substations. IEEE Transactions on Power Delivery 2022, 37, 833–841.

- Zhang, Z.D.; Zhang, B.; Lan, Z.C.; Lu, H.C.; Li, D.Y.; Pei, L.; Yu, W.X. FINet: An Insulator Dataset and Detection Benchmark Based on Synthetic Fog and Improved YOLOv5. IEEE Transactions on Instrumentation and Measurement 2022, 71, 1–8.

- Xu, Y.; Li, Y.; Wang, Y.; Zhong, D.; Zhang, G. Improved few-shot learning method for transformer fault diagnosis based on approximation space and belief functions. Expert Systems with Applications 2021, 167, 114105.

- Yi, Y.; Chen, Z.; Wang, L. Intelligent Aging Diagnosis of Conductor in Smart Grid Using Label-Distribution Deep Convolutional Neural Networks. IEEE Transactions on Instrumentation and Measurement 2022, 71, 1–8.

- Finn, C.; Abbeel, P.; Levine, S. Model-agnostic meta-learning for fast adaptation of deep networks. Proceedings of the 34th International Conference on Machine Learning (ICML), Sydney, Australia, 7 Aug 2017.

- Li, Z.; Zhou, F.; Chen, F.; Li, H. Meta-SGD: Learning to Learn Quickly for Few-Shot Learning. Jul 2017. arXiv:1707.09835.

- Ravi, S.; Larochelle, H. Optimization as a model for few-shot learning. 5th International Conference on Learning Representations (ICLR), Toulon, France, 24 Apr 2017.

- Wu, Z.; Li, Y.; Guo, L.; Jia, K. PARN: Position-Aware Relation Networks for few-shot learning. Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea, 27 Oct 2019.

- Gidaris, S.; Bursuc, A.; Komodakis, N.; Perez, P.; Cord, M. Boosting few-shot visual learning with self-supervision. Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea, 27 Oct 2019.

- Zhang, H.; Zhang, J.; Koniusz, P. Few-shot learning via saliency-guided hallucination of samples. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), California, USA, 16 June 2019.

- Hou, R.; Chang, H.; Ma, B.; Shan, S.; Chen, X. Cross attention network for few-shot classification. Proceedings of the 33rd International Conference on Neural Information Processing Systems (NeurIPS), Vancouver, Canada, 8 Dec 2019.

- Guo, Y.; Cheung, N. Attentive weights generation for few shot learning via information maximization. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, USA, 13 June 2020.

- Li, H.; Eigen, D.; Dodge, S.; Zeiler, M.; Wang, X. Finding task-relevant features for few-shot learning by category traversal. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), California, USA, 16 June 2019.

- Nguyen, V.N.; Løkse, S.; Wickstrøm, K.; Kampffmeyer, M.; Roverso, D.; Jenssen, R. SEN: a novel feature normalization dissimilarity measure for prototypical few-shot learning networks. Proceedings of the 16th European Conference on Computer Vision (ECCV), Glasgow, Scotland, 23 Aug 2020.

- Wertheimer, D.; Tang, L.; Hariharan, B. Few-shot classification with feature map reconstruction networks. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), online, 19 June 2021.

- Li, W.; Wang, L.; Xu, J.; Huo, J.; Gao, Y.; Luo, J. Revisiting local descriptor based image-to-class measure for few-shot learning. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), California, USA, 16 June 2019.

- Zhang, C.; Cai, Y.; Lin, G.; Shen, C. DeepEMD: few-shot image classification with differentiable Earth Mover’s distance and structured classifiers. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, USA, 13 June 2020.

- Chen, W.-Y.; Liu, Y.-C.; Kira, Z.; Wang, Y.-C.F.; Huang, J.-B. A closer look at few-shot classification. Proceedings of the 7th International Conference on Learning Representations (ICLR), New Orleans, USA, 6 May 2019.

- Liu, B.; Cao, Y.; Lin, Y.; Zhang, Z.; Long, M.; Hu, H. Negative margin matters: understanding margin in few-shot classification. Proceedings of the 16th European Conference on Computer Vision (ECCV), Glasgow, Scotland, 23 Aug 2020.

- Mangla, P.; Singh, M.; Sinha, A.; Kumari, N.; Balasubramanian, V.; Krishnamurthy, B. Charting the right manifold: Manifold mixup for few-shot learning. The IEEE Winter Conference on Applications of Computer Vision (WACV), Hawaii, USA, 7 Jan 2020.

- Su, J.; Maji, S.; Hariharan, B. When does self-supervision improve few-shot learning? Proceedings of the 16th European Conference on Computer Vision (ECCV), Glasgow, Scotland, 23 Aug 2020.

- Shao, S.; Xing, L.; Wang, Y.; Xu, R.; Zhao, C.; Wang, Y.J.; Liu, B. MHFC: multi-head feature collaboration for few-shot learning. Proceedings of the 29th ACM International Conference on Multimedia (MM), Online, 20 Oct 2021.

- Zagoruyko, S.; Komodakis, N. Paying more attention to attention: improving the performance of convolutional neural networks via attention transfer. 5th International Conference on Learning Representations (ICLR), Toulon, France, 24 Apr 2017.

- Park, W.; Kim, D.; Lu, Y.; Cho, M. Relational knowledge distillation. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), California, USA, 16 June 2019.

- Peng, B.; Jin, X.; Liu, J.; Zhou, S.; Wu, Y.; Liu, Y.; Li, D.; Zhang, Z. Correlation congruence for knowledge distillation. Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea, 27 Oct 2019.

- Zhou, B.; Zhang, X.; Zhao, J.; Zhao, F.; Yan, C.; Xu, Y.; Gu, J. Few-shot electric equipment classification via mutual learning of transfer-learning model. IEEE 5th International Electrical and Energy Conference (CIEEC), Nanjing, China, 27 May 2022.

| Method | Backbone | MiniImageNet 1-shot | MiniImageNet 5-shot | CIFAR-FS 1-shot | CIFAR-FS 5-shot | CUB 1-shot | CUB 5-shot |
|---|---|---|---|---|---|---|---|
| Global | Conv4 | 57.32±0.84 | 72.90±0.64 | 66.40±0.93 | 80.44±0.67 | 70.20±0.93 | 83.88±0.57 |
| Local | Conv4 | 57.65±0.83 | 73.06±0.64 | 66.63±0.93 | 80.64±0.67 | 70.12±0.93 | 83.66±0.57 |
| Global-Local | Conv4 | 57.86±0.83 | 73.38±0.62 | 67.04±0.91 | 80.84±0.68 | 70.44±0.92 | 84.19±0.56 |
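To make the ablation comparison concrete, the short script below recomputes, from the table values only, how many percentage points the combined Global-Local variant gains over each single-relation variant. Every gain is positive, ranging from 0.20 to 0.64 points. The names `ABLATION` and `gains` are illustrative.

```python
# Mean accuracies (%) copied from the ablation table, in column order:
# MiniImageNet 1/5-shot, CIFAR-FS 1/5-shot, CUB 1/5-shot (± margins omitted).
ABLATION = {
    "Global": [57.32, 72.90, 66.40, 80.44, 70.20, 83.88],
    "Local": [57.65, 73.06, 66.63, 80.64, 70.12, 83.66],
    "Global-Local": [57.86, 73.38, 67.04, 80.84, 70.44, 84.19],
}


def gains(combined, single):
    """Per-column difference in percentage points, rounded to 2 decimals."""
    return [round(c - s, 2) for c, s in zip(combined, single)]


if __name__ == "__main__":
    for name in ("Global", "Local"):
        print(f"Global-Local vs {name}:", gains(ABLATION["Global-Local"], ABLATION[name]))
```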

| Method | Backbone | MiniImageNet 1-shot | MiniImageNet 5-shot | CIFAR-FS 1-shot | CIFAR-FS 5-shot | CUB 1-shot | CUB 5-shot |
|---|---|---|---|---|---|---|---|
| Meta-learning | | | | | | | |
| Relational | Conv4 | 50.44±0.82 | 65.32±0.70 | 55.00±1.00 | 69.30±0.80 | 62.45±0.98 | 76.11±0.69 |
| MetaOptSVM | Conv4 | 52.87±0.57 | 68.76±0.48 | - | - | - | - |
| PN+rot | Conv4 | 53.63±0.43 | 71.70±0.36 | - | - | - | - |
| CovaMNet | Conv4 | 51.19±0.76 | 67.65±0.63 | - | - | 52.42±0.76 | 63.76±0.64 |
| DN4 | Conv4 | 51.24±0.74 | 71.02±0.64 | - | - | 46.84±0.81 | 74.92±0.64 |
| MeTAL | Conv4 | 52.63±0.37 | 70.52±0.29 | - | - | - | - |
| HGNN | Conv4 | 55.63±0.20 | 72.48±0.16 | - | - | 69.02±0.22 | 83.20±0.15 |
| DSFN | Conv4 | 50.21±0.64 | 72.20±0.51 | - | - | - | - |
| PSST | Conv4 | - | - | 64.37±0.33 | 80.42±0.32 | - | - |
| Transfer-learning | | | | | | | |
| Baseline++ | Conv4 | 48.24±0.75 | 66.43±0.63 | - | - | 60.53±0.83 | 79.34±0.61 |
| Neg-Cosine | Conv4 | 52.84±0.76 | 70.41±0.66 | - | - | - | - |
| SKD | Conv4 | 48.14 | 66.36 | - | - | - | - |
| CGCS | Conv4 | 55.53±0.20 | 72.12±0.16 | - | - | - | - |
| Our method | Conv4 | 57.86±0.83 | 73.38±0.62 | 67.04±0.91 | 80.84±0.68 | 70.44±0.92 | 84.19±0.56 |
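In few-shot papers, "mean±margin" entries like those above are conventionally the mean accuracy over many randomly sampled test episodes with a 95% confidence interval; the table does not state this, so treat it as an assumption. The sketch below illustrates that protocol under further assumptions of our own: N-way K-shot episodes with 15 queries per class, a nearest-prototype (class-mean) classifier on frozen embeddings in place of whatever classifier the authors use, and the normal-approximation half-width 1.96·σ/√n. All names are hypothetical.

```python
import math
import random
from statistics import mean, stdev
from typing import Dict, List, Tuple

Vec = List[float]


def sample_episode(features_by_class: Dict[int, List[Vec]], n_way: int, k_shot: int,
                   n_query: int, rng: random.Random):
    """Draw an N-way K-shot episode; labels are re-indexed 0..n_way-1."""
    classes = rng.sample(sorted(features_by_class), n_way)
    support, query = [], []
    for label, c in enumerate(classes):
        items = rng.sample(features_by_class[c], k_shot + n_query)  # disjoint support/query
        support += [(x, label) for x in items[:k_shot]]
        query += [(x, label) for x in items[k_shot:]]
    return support, query


def prototype_accuracy(support, query, n_way: int) -> float:
    """Nearest-class-mean classifier: each class is represented by the mean of
    its support embeddings; each query goes to the closest prototype (Euclidean)."""
    dim = len(support[0][0])
    sums = [[0.0] * dim for _ in range(n_way)]
    counts = [0] * n_way
    for x, y in support:
        counts[y] += 1
        sums[y] = [s + v for s, v in zip(sums[y], x)]
    protos = [[s / counts[c] for s in sums[c]] for c in range(n_way)]
    correct = sum(
        1 for x, y in query
        if min(range(n_way), key=lambda c: math.dist(x, protos[c])) == y
    )
    return correct / len(query)


def evaluate(features_by_class, n_way=5, k_shot=1, n_query=15,
             n_episodes=600, seed=0) -> Tuple[float, float]:
    """Return (mean accuracy in %, 95% confidence half-width) over episodes."""
    rng = random.Random(seed)
    accs = []
    for _ in range(n_episodes):
        support, query = sample_episode(features_by_class, n_way, k_shot, n_query, rng)
        accs.append(100.0 * prototype_accuracy(support, query, n_way))
    half_width = 1.96 * stdev(accs) / math.sqrt(len(accs))  # normal approximation
    return mean(accs), half_width
```

A result would then be written in the tables' style as `f"{m:.2f}±{hw:.2f}"`, where `m, hw = evaluate(...)`.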

| Method | 1-shot | 5-shot |
|---|---|---|
| CGCS | 72.85±0.68 | 89.68±0.27 |
| Neg-Cosine | 74.57±0.63 | 90.54±0.25 |
| HGNN | 75.61±0.62 | 93.54±0.24 |
| Our method | 75.80±0.67 | 94.12±0.20 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).