Submitted: 08 March 2023

Posted: 09 March 2023

Abstract

Keywords:

1. Introduction

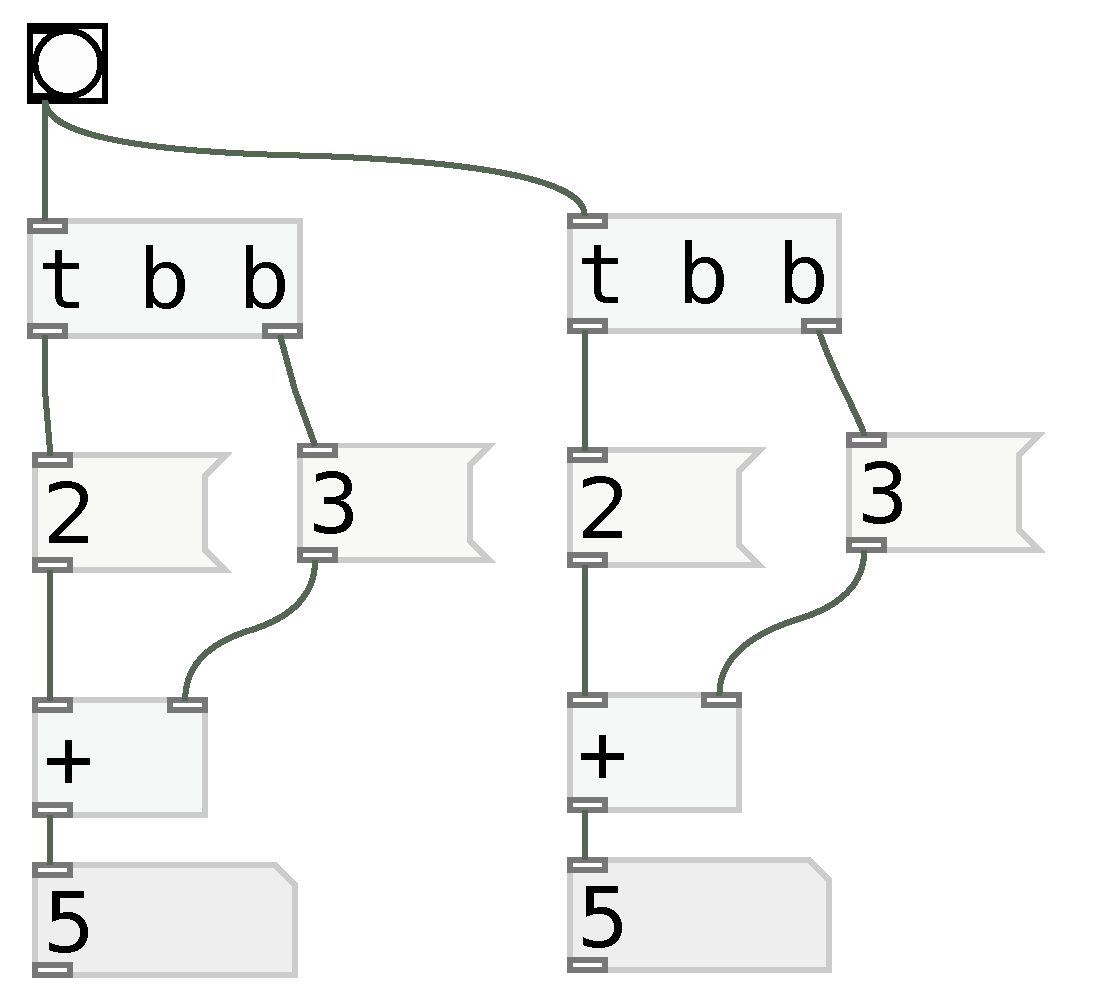

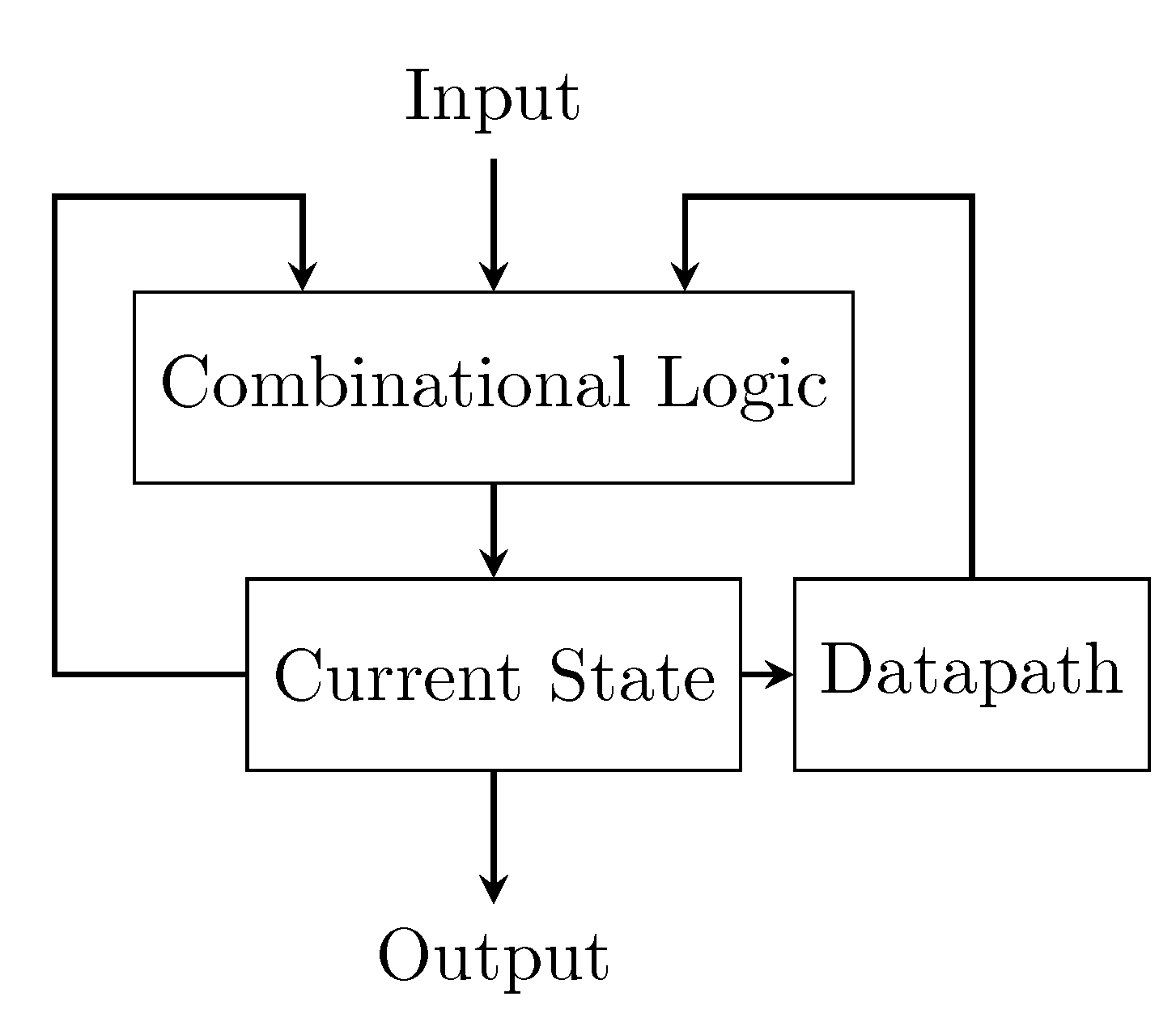

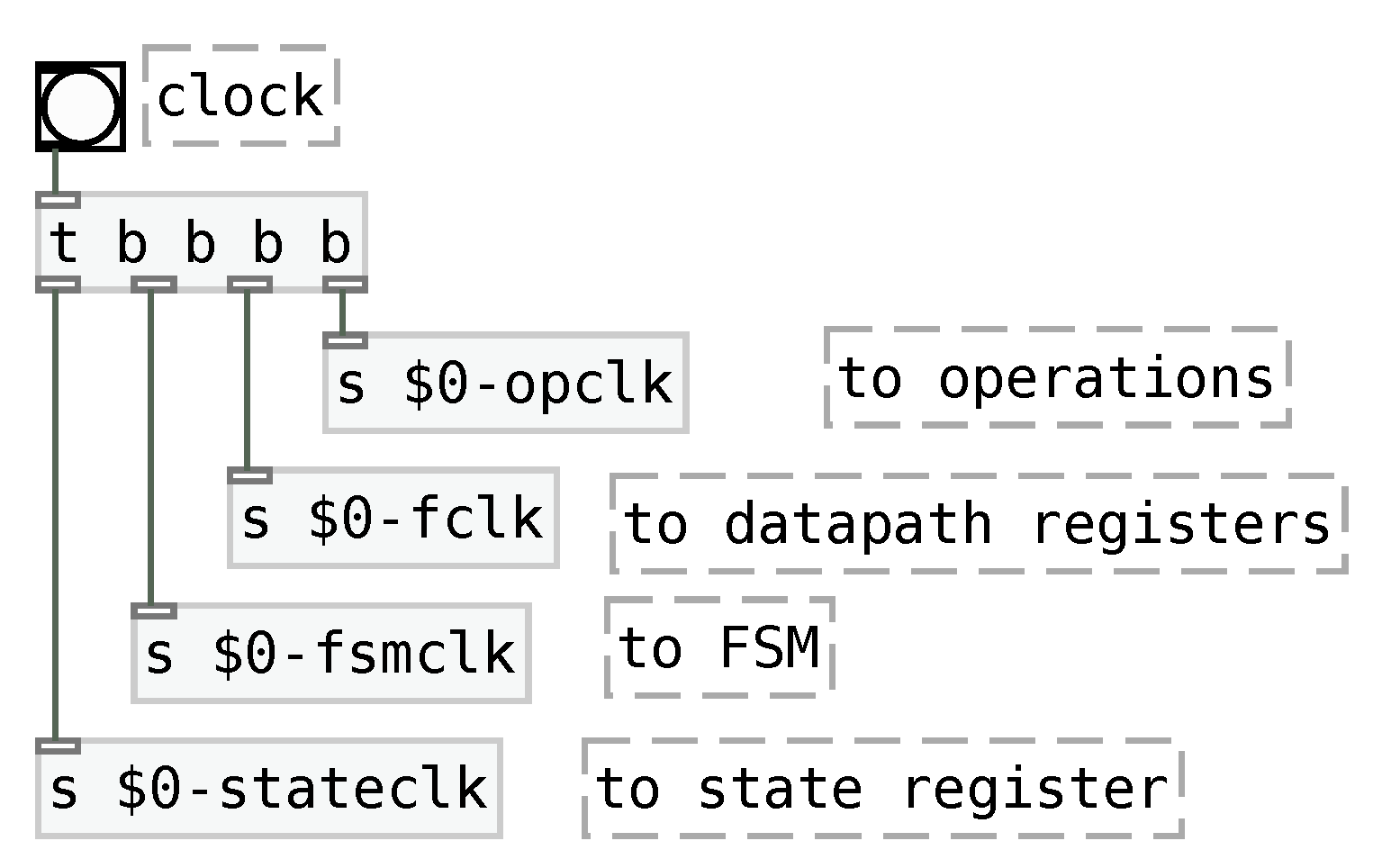

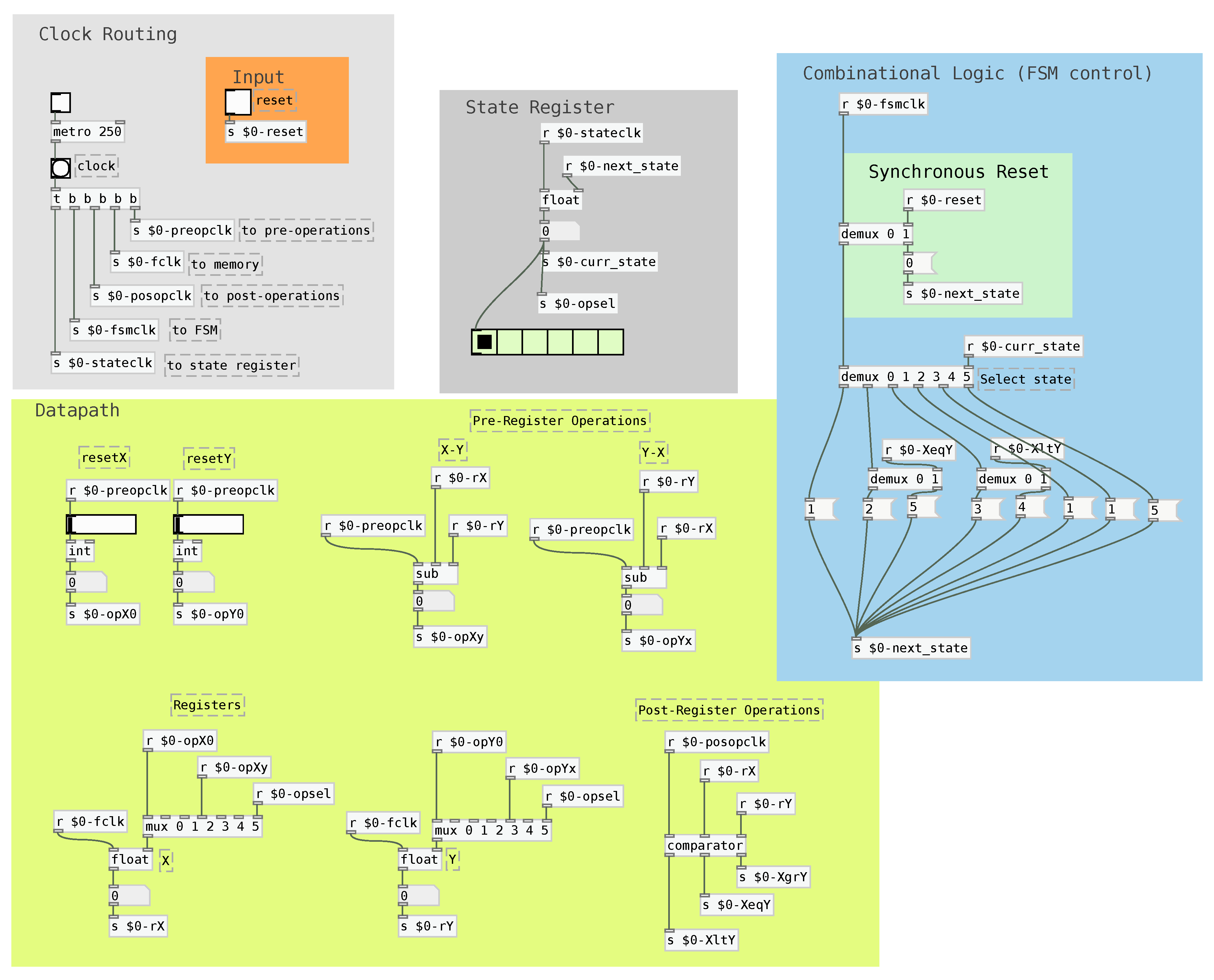

2. Implementing FSMDs

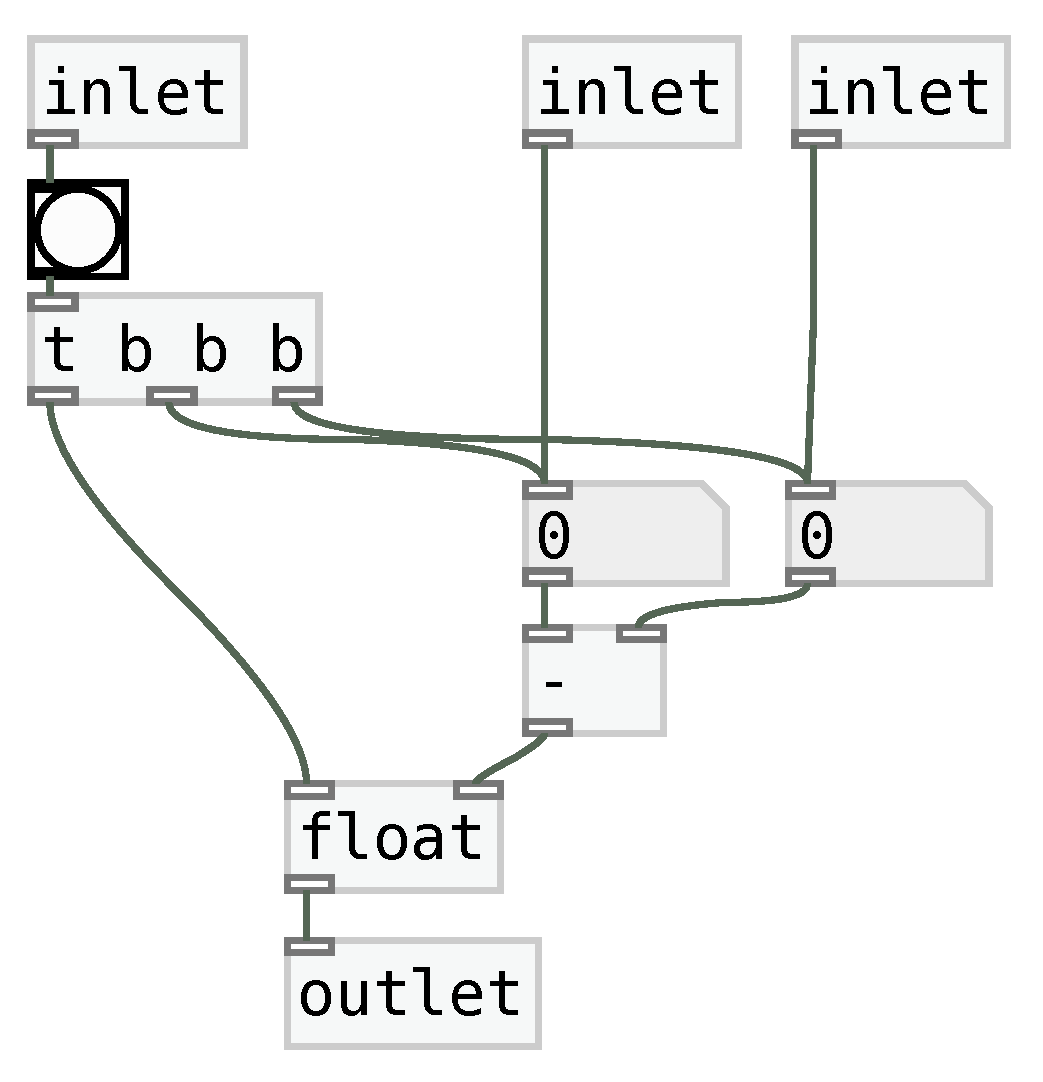

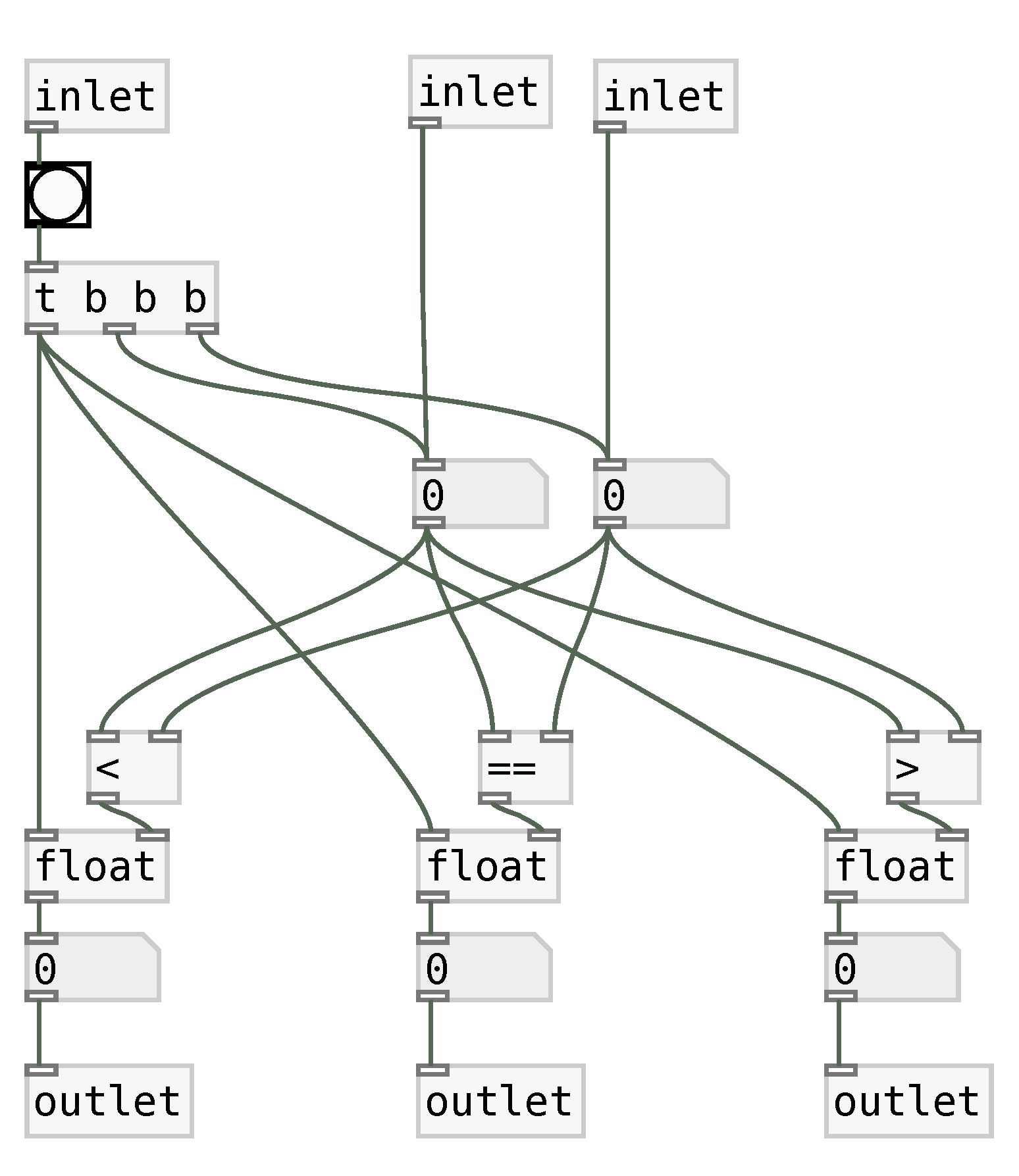

2.1. Elementary blocks

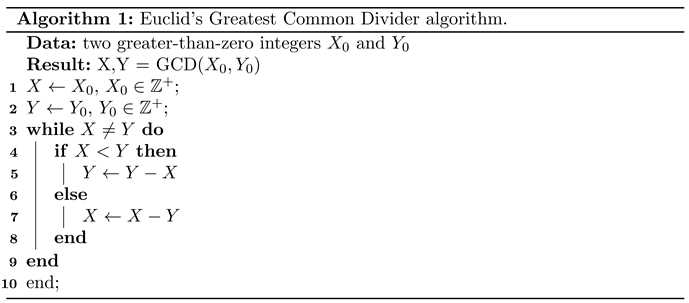

2.1.1. Explicit Operation Precedence

2.1.2. Clock-Synchronous Variable Assignment

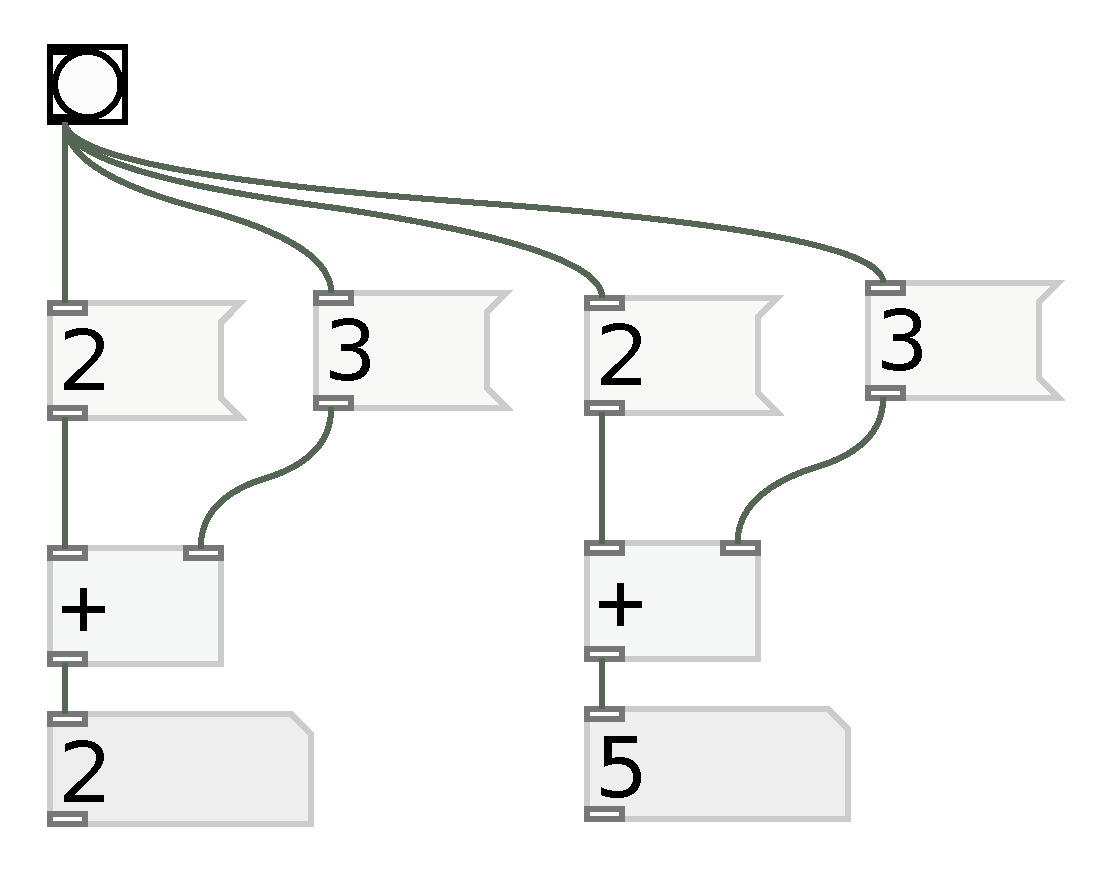

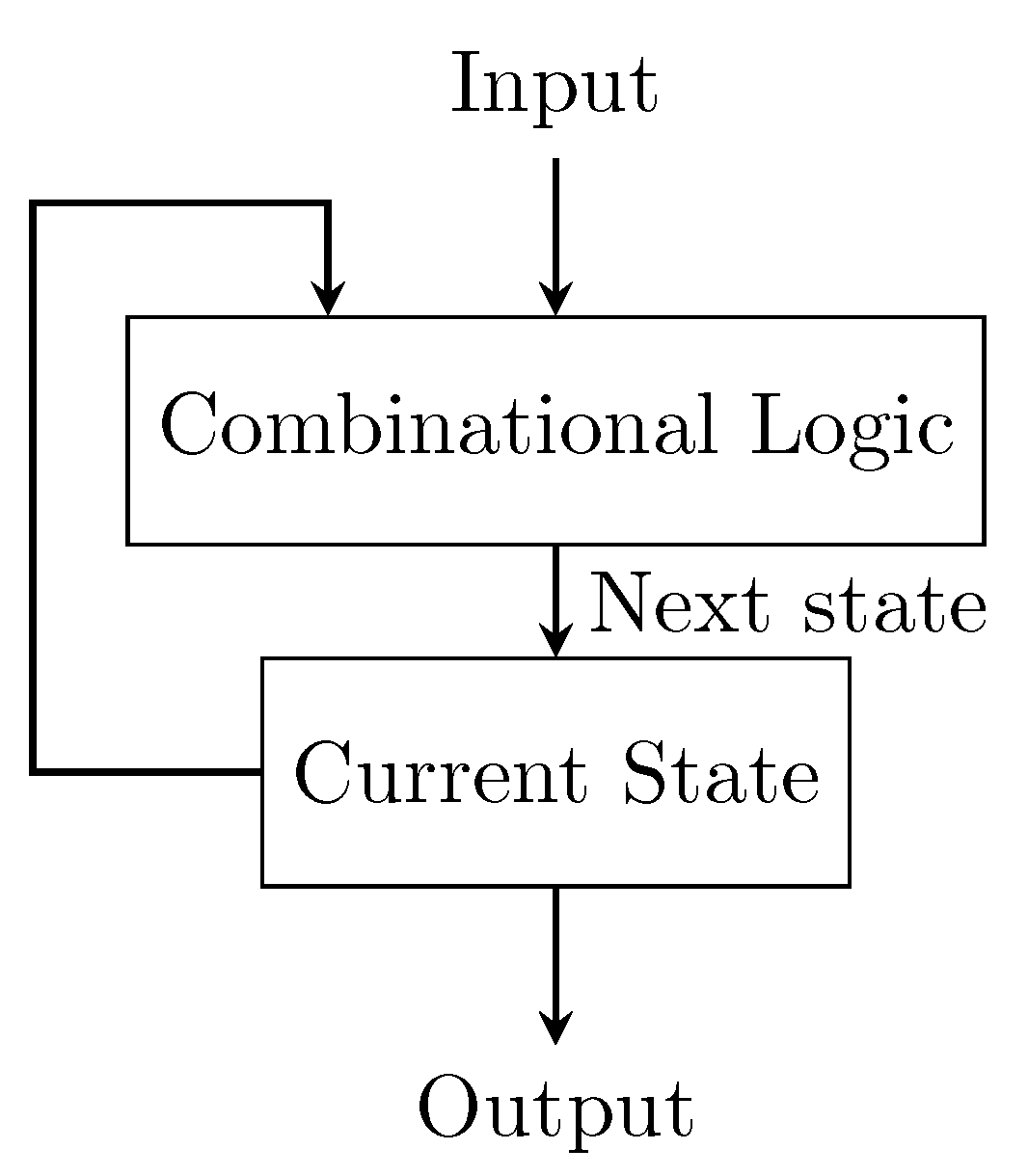

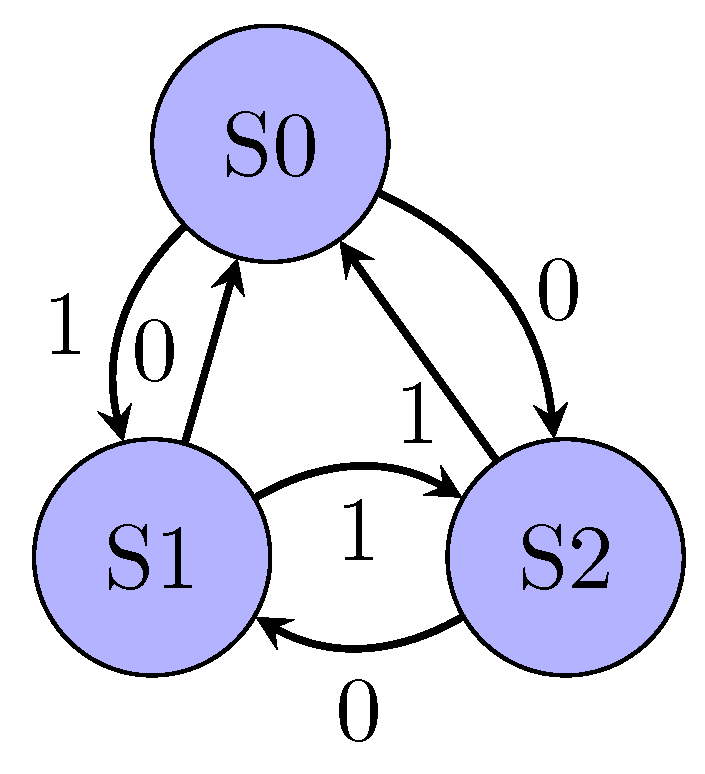

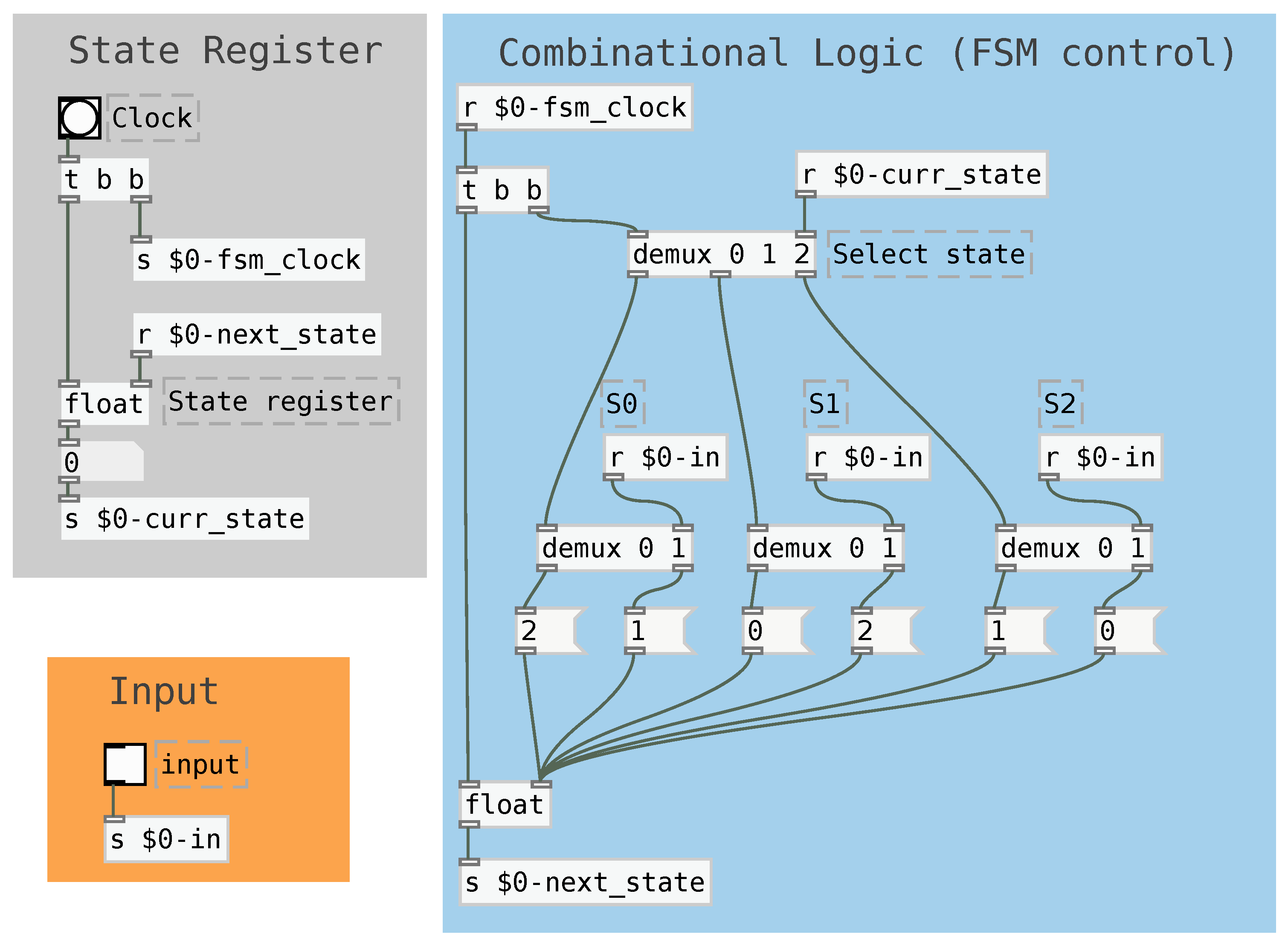

2.2. Finite State Machines

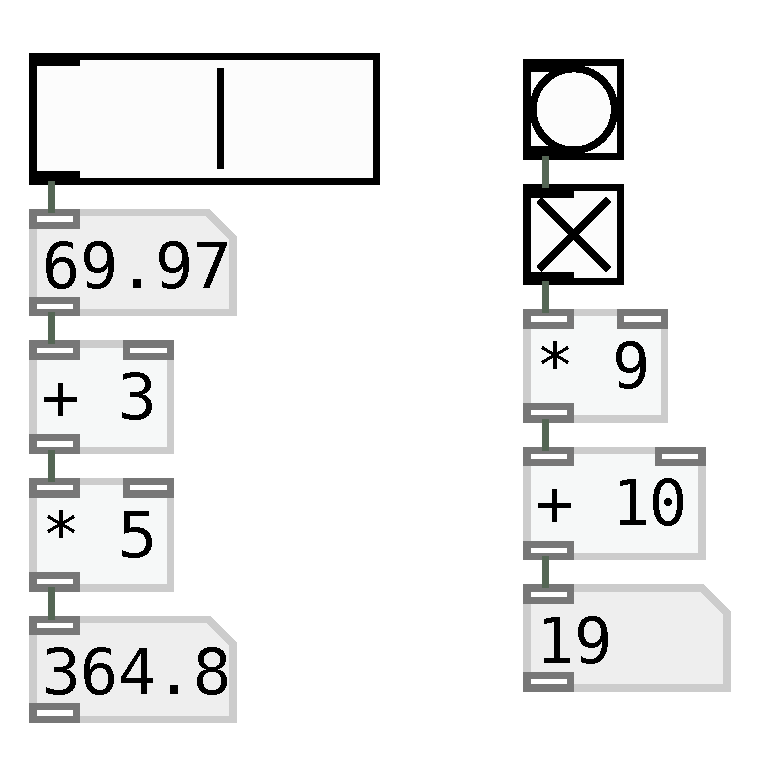

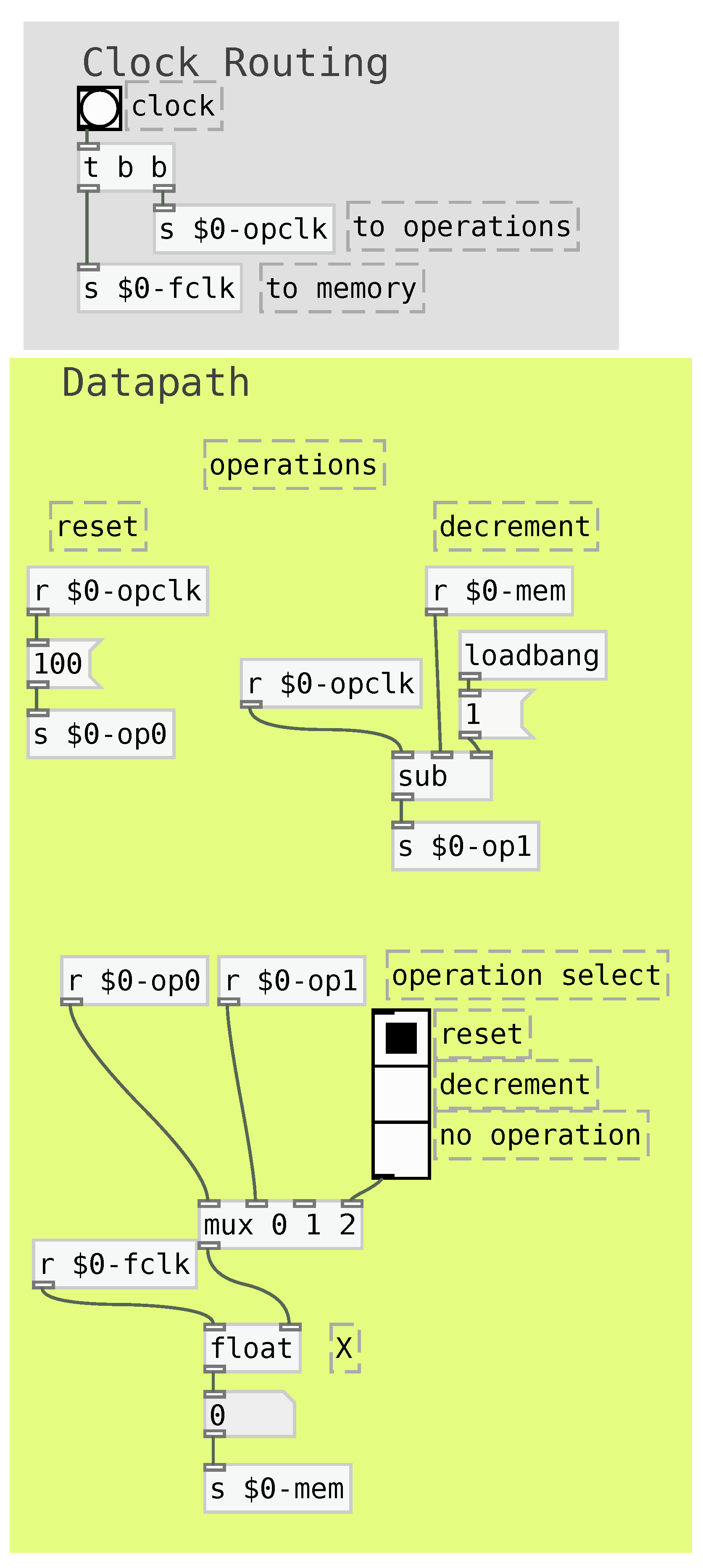

2.3. Datapath

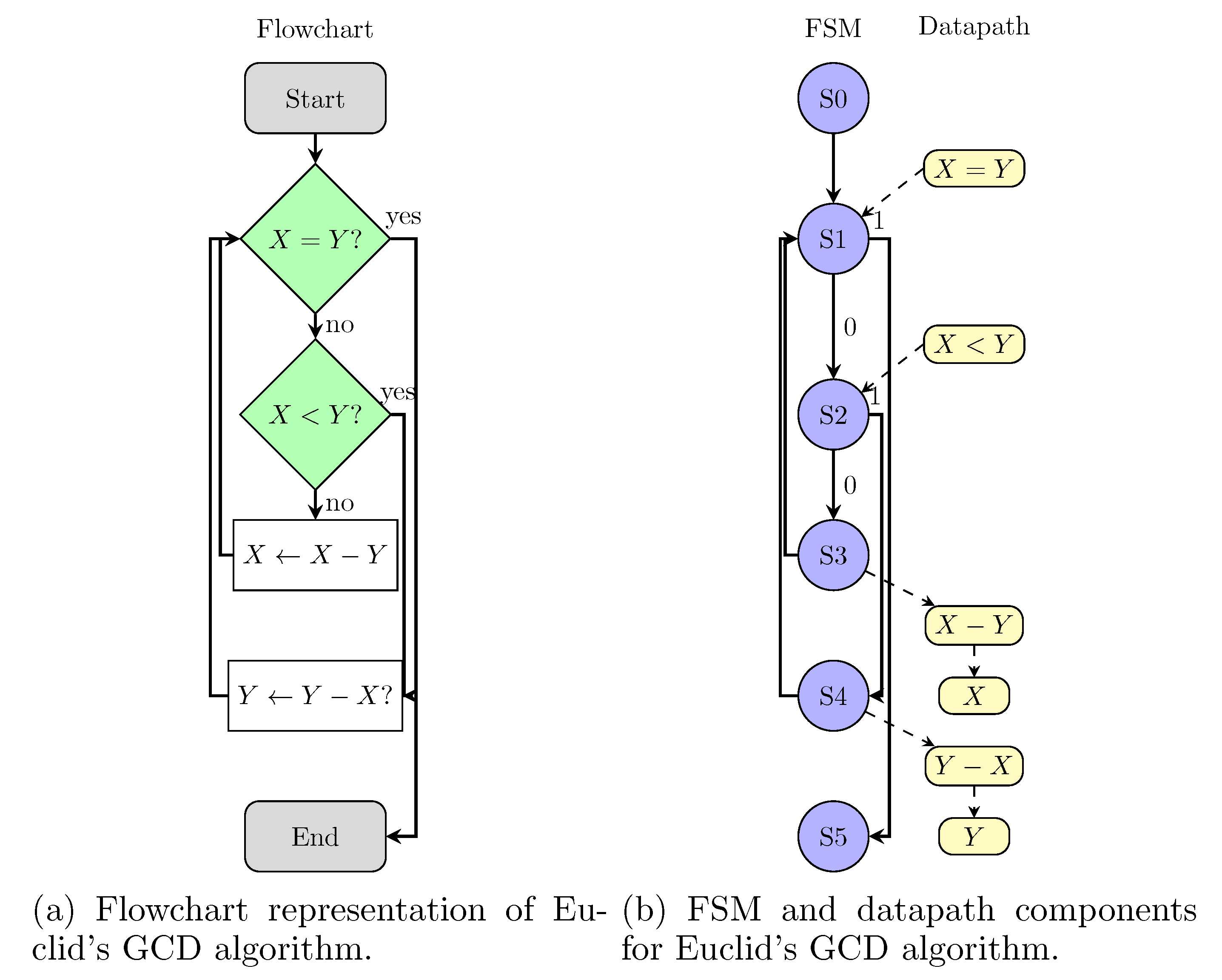

2.4. Demonstration

3. Demonstrations

3.1. Music structure control

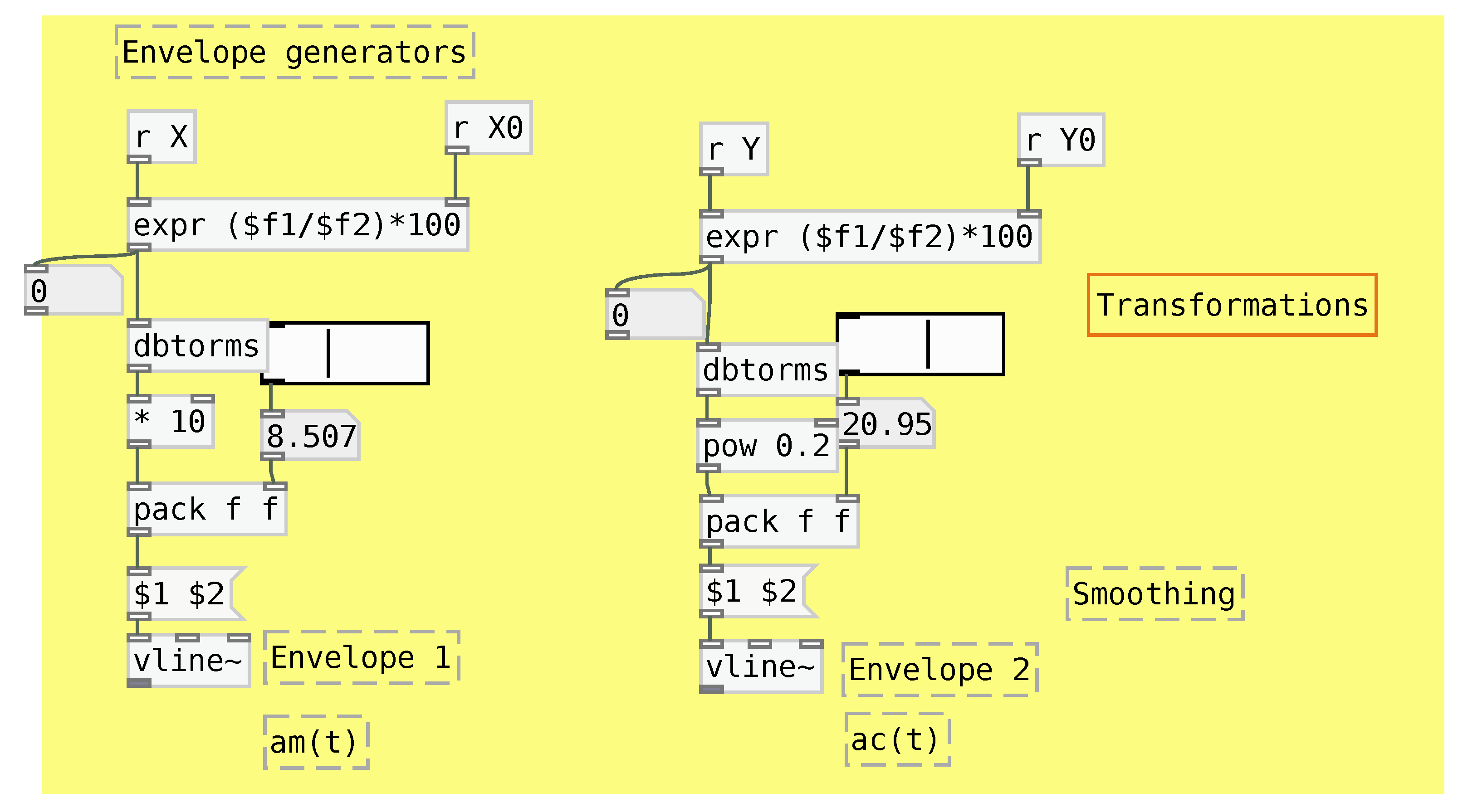

3.2. Sound parameter control

4. Conclusion

References

- M. Puckette. Pure Data. In Proceedings, International Computer Music Conference, 1996.

- M. Puckette. Combining event and signal processing in the Max graphical programming environment. Computer Music Journal, 15(3):68–77, 1991.

- Mark Danks. The graphics environment for Max. In Proceedings of the International Computer Music Conference, 1996.

- Vincent J. Manzo. Max/MSP/Jitter for music: A practical guide to developing interactive music systems for education and more. Oxford University Press, 2016.

- M. Puckette, T. Apel, and D. Zicarelli. Real-time audio analysis tools for Pd and MSP. In Proceedings, International Computer Music Conference, 1998.

- Baptiste Caramiaux, Alessandro Altavilla, Scott G. Pobiner, and Atau Tanaka. Form follows sound. In Proceedings of the 33rd Annual ACM Conference on Human Factors in Computing Systems - CHI 15. ACM Press, 2015.

- Daniele Ghisi and Andrea Agostini. Extending bach: A family of libraries for real-time computer-assisted composition in Max. Journal of New Music Research 2017, 53.

- Stephen A. Hedges. Dice music in the eighteenth century. Music & Letters, 59(2):180–187, 1978.

- John Cage. Silence: Lectures and Writings, chapter Composition as Process I: Changes, pages 18–34. Wesleyan University Press, 1951.

- Iannis Xenakis. Formalized Music: Thoughts in Mathematics and Composition. Pendragon, 1963.

- Roger T. Dean and Jamie Forth. Towards a deep improviser: a prototype deep learning post-tonal free music generator. Neural Computing and Applications, 32(4):969–979, Oct 2018.

- Jean-Pierre Briot and François Pachet. Deep learning for music generation: challenges and directions. Neural Computing and Applications, 32(4):981–993, Oct 2018.

- Curtis Roads and Paul Wieneke. Grammars as representations for music. Computer Music Journal, 3(1):48, Mar 1979.

- Frédérick Duhautpas, Renaud Meric, and Makis Solomos. Expressiveness and meaning in the electroacoustic music of Iannis Xenakis. The case of La Légende d’Eer. In Proceedings of the Electroacoustic Music Studies Network Conference Meaning and Meaningfulness in Electroacoustic Music, 2012.

- Dimitri Bouche, Jérôme Nika, Alex Chechile, and Jean Bresson. Computer-aided composition of musical processes. Journal of New Music Research, 46(1):3–14, 2017.

- Paul Vickers. Sonification and Music, Music and Sonification. In The Routledge Companion to Sounding Art. Routledge, 2016.

- Paul Vickers and James L. Alty. CAITLIN: A Musical Program Auralisation Tool to Assist Novice Programmers with Debugging. In Proceedings of ICAD 96 International Conference of Auditory Display, 1996.

- Paul Vickers and James L. Alty. When bugs sing. Interacting with Computers, 14(6):793–819, December 2002. Publisher: Oxford Academic.

- D.H. Jameson. Building real-time music tools visually with Sonnet. In Proceedings Real-Time Technology and Applications, pages 11–18, June 1996.

- Andy M. Sarroff, Phillip Hermans, and Sergey Bratus. SOS: Sonify Your Operating System. In Proc. of the 10th International Symposium on Computer Music Multidisciplinary Research, Marseille, France, 2013.

- Alexis Kirke and Eduardo Miranda. Pulsed Melodic Affective Processing: Musical structures for increasing transparency in emotional computation. SIMULATION, 90(5):606–622, May 2014. Publisher: SAGE Publications Ltd STM.

- R. Camposano and W. Rosenstiel. Synthesizing circuits from behavioural descriptions. IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems, 8(2):171–180, Feb 1989.

- Frank Vahid. Digital Design with RTL Design, Verilog and VHDL. Wiley Publishing, 2nd edition, 2010.

- Robert Kraemer and Cornelius Poepel. On transformations between paradigms in audio programming. In Proceedings of the Audio Mostly 2018 on Sound in Immersion and Emotion, AM’18, pages 23:1–23:4, New York, NY, USA, 2018. ACM.

- Robert Carl. Terry Riley’s in C. Oxford University Press, 2009.

- John Chowning. The synthesis of complex audio spectra by means of frequency modulation. Journal of the Audio Engineering Society, 21(7):526–534, 1973.

- W. James. The Principles of Psychology. MacMillan, 1980.

- Szelag E., Kanabus M., Kolodziejczyk I., Kowalska J., and Szuchnik J. Individual differences in temporal information processing in humans. Acta Neurobiol, 64:349–366, 2004.

- Marc Wittmann. Moments in time. Frontiers in integrative neuroscience, 5(66), 2011.

- Feldhütter I., Schleidt M., and Eibl-Eibesfeldt I. Moving in the beat of seconds: analysis of the time structure of human action. Ethol Sociobiol, 11:1–10, 1990.

| 1 | For example, https://github.com/tiagosr/pdfsm. |

| 2 | Other objects, such as int, can also be used for the same purpose. |

| Current state | Input | Next state |
|---|---|---|
| S0 | 0 | S2 |
| S0 | 1 | S1 |
| S1 | 0 | S0 |
| S1 | 1 | S2 |
| S2 | 0 | S1 |
| S2 | 1 | S0 |
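
The state-transition table can be read as a lookup from (current state, input) to next state. The following Python sketch is only an illustration of that reading, not the Pure Data implementation discussed in the paper: the names `TRANSITIONS`, `step` and `run` are hypothetical, and the choice of S0 as the initial state is an assumption, since the table itself does not specify one.

```python
# Transition table copied row by row from the state table:
# (current state, input) -> next state.
TRANSITIONS = {
    ("S0", 0): "S2",
    ("S0", 1): "S1",
    ("S1", 0): "S0",
    ("S1", 1): "S2",
    ("S2", 0): "S1",
    ("S2", 1): "S0",
}


def step(state, bit):
    """Return the next state for one input bit (one clock tick)."""
    return TRANSITIONS[(state, bit)]


def run(inputs, start="S0"):
    """Apply a sequence of input bits and return every visited state.

    The default start state S0 is an assumption made for this sketch.
    """
    states = [start]
    for bit in inputs:
        states.append(step(states[-1], bit))
    return states


if __name__ == "__main__":
    # A constant input of 1 cycles S0 -> S1 -> S2 -> S0.
    print(run([1, 1, 1]))
```

With a constant input the machine cycles through all three states, forwards for input 1 and backwards for input 0, so the input bit acts as a direction control over a three-step loop.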

| Parameters | Meaning | Behavior |
|---|---|---|
| f | Fundamental frequency (pitch) | Fixed |
| | Harmonicity and timbre | Fixed |
| Mapping | Sound intensity along time | Progressions |
| Mapping | Timbre complexity along time | Progressions |
| Filter center frequencies | Timbre variations along time | Patterns |
| FSMD clock frequency | Envelope speed | Fixed |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).