Submitted: 25 February 2023

Posted: 27 February 2023

Abstract

Keywords:

1. Introduction

2. Methodology

2.1. YOLO

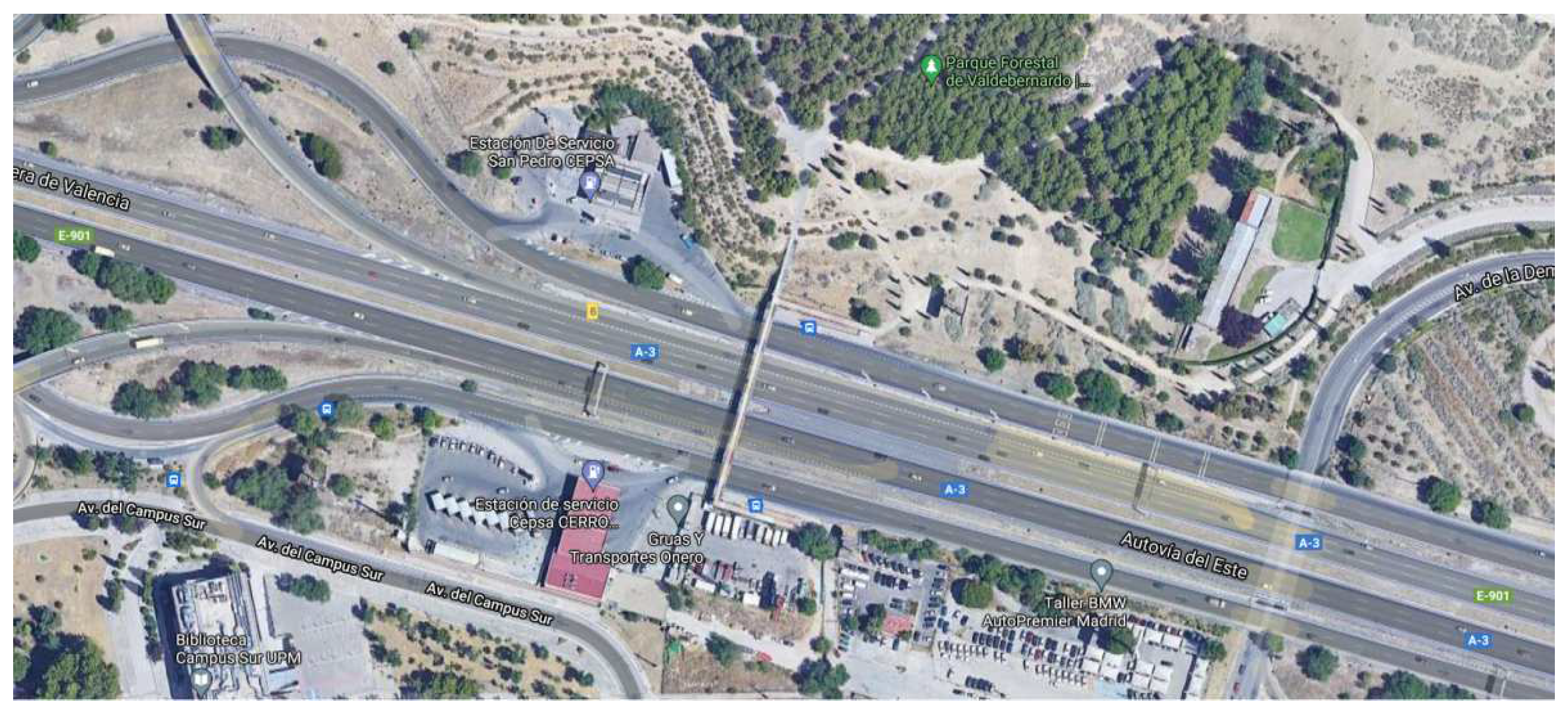

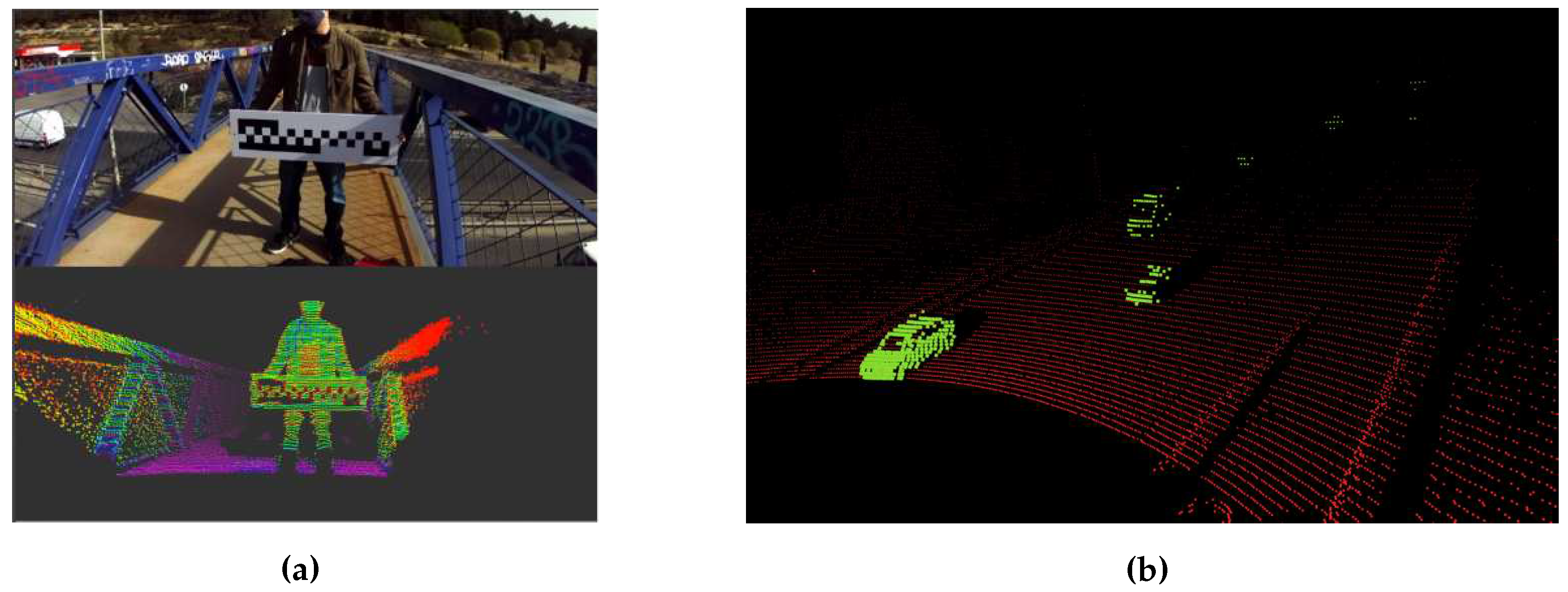

2.2. KITTI

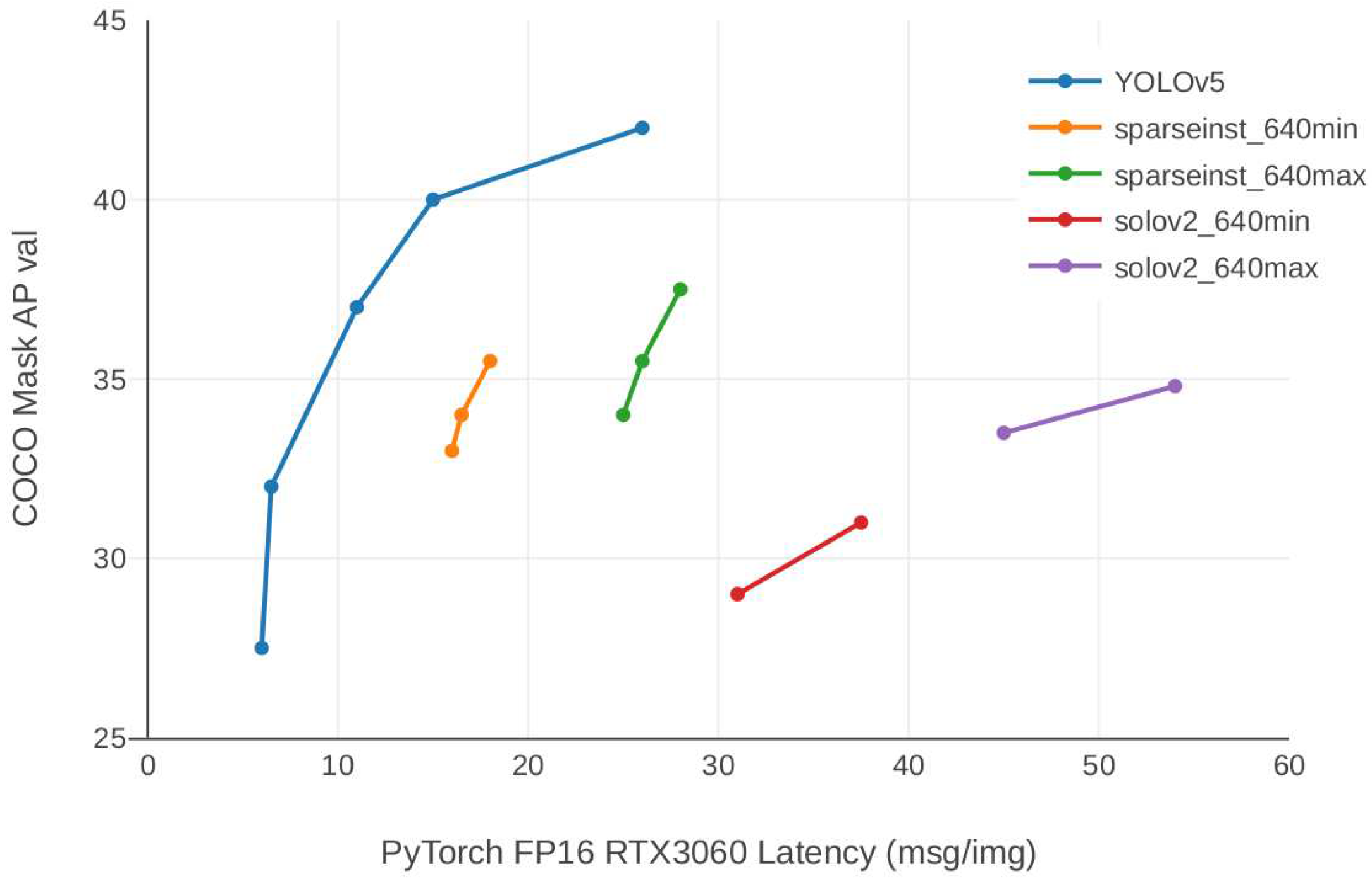

2.3. Validation models

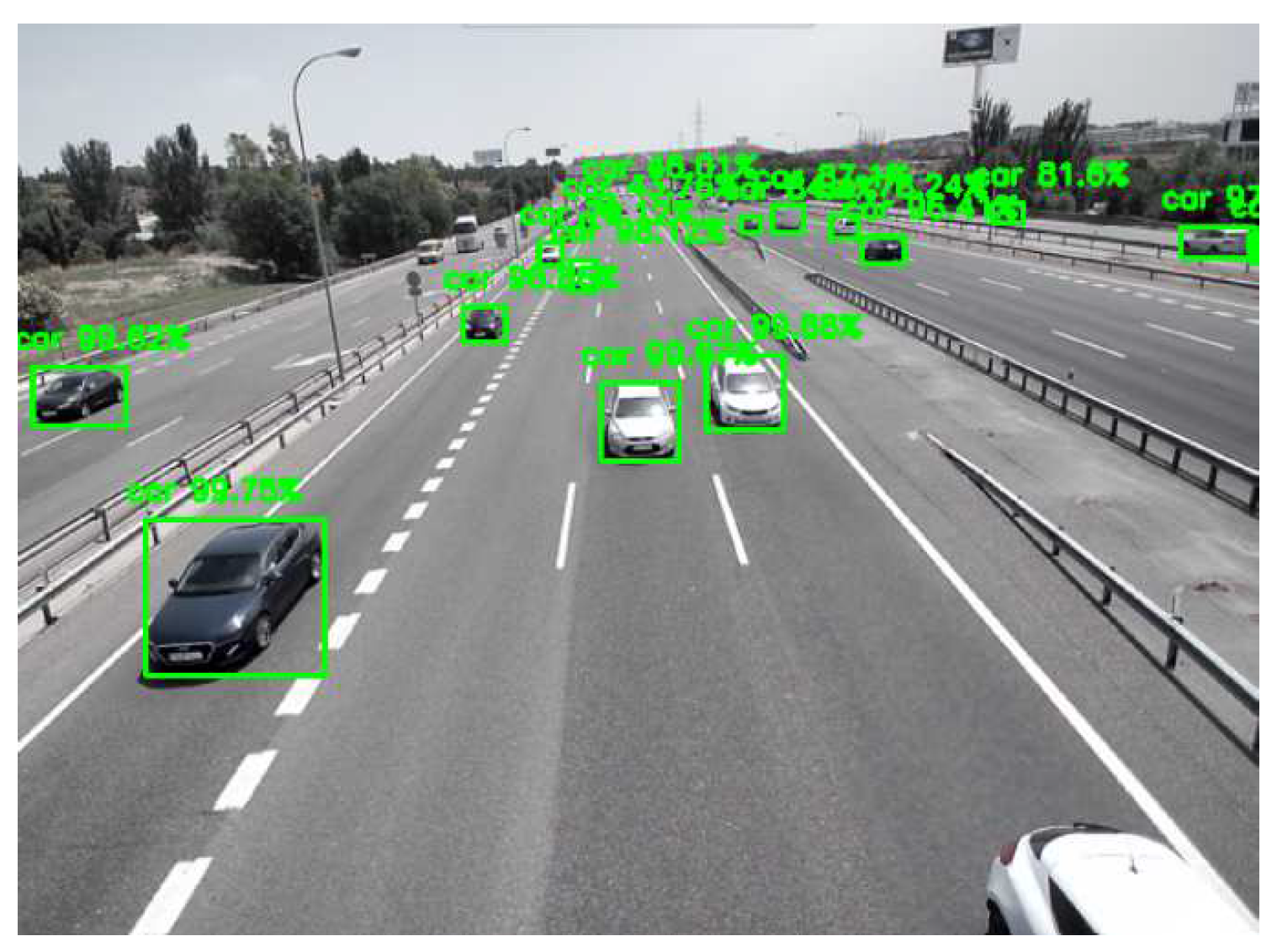

2.4. Auto-label model

2.5. Models trained from scratch

3. Results

- First step: quantify the error with which the images obtained from the sensors are self-labelled.

- Second step: verify that the resulting self-labelled datasets are of sufficient quality to be used as training datasets for DL models from the literature.

3.1. First step validation

| Model | Source | BEV Easy | BEV Moderate | BEV Hard | 3D Detection Easy | 3D Detection Moderate | 3D Detection Hard |
|---|---|---|---|---|---|---|---|
| PointPillars | Lang et al. [13] | 88.35 | 86.10 | 79.83 | 79.05 | 74.99 | 68.30 |
| PointPillars | OpenPCDet | 92.03 | 88.05 | 86.66 | 87.70 | 78.39 | 75.18 |
| PointPillars | OpenPCDet - Auto-labeled | 93.55 | 84.70 | 81.56 | 85.06 | 75.00 | 71.43 |
| Voxel R-CNN | Deng et al. [14] | - | - | - | 90.90 | 81.62 | 77.06 |
| Voxel R-CNN | OpenPCDet | 93.55 | 91.18 | 88.92 | 92.15 | 85.01 | 82.48 |
| Voxel R-CNN | OpenPCDet - Auto-labeled | 95.78 | 90.99 | 88.77 | 92.54 | 83.28 | 82.21 |
| PointRCNN | Shi et al. [12] | - | - | - | 85.94 | 75.76 | 68.32 |
| PointRCNN | OpenPCDet | 94.93 | 89.12 | 86.83 | 91.49 | 80.65 | 78.13 |
| PointRCNN | OpenPCDet - Auto-labeled | 95.57 | 90.96 | 88.80 | 92.19 | 82.89 | 80.33 |
| PV-RCNN | Shi et al. [25] | - | - | - | - | 81.88 | - |
| PV-RCNN | OpenPCDet | 93.02 | 90.32 | 88.53 | 92.10 | 84.36 | 82.48 |
| PV-RCNN | OpenPCDet - Auto-labeled | 92.50 | 86.93 | 85.83 | 90.09 | 78.25 | 75.38 |
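The gap between the "OpenPCDet" and "OpenPCDet - Auto-labeled" rows can be read off more easily as a per-model difference. The following is a minimal sketch of that arithmetic for the 3D Detection columns only; the values are copied from the table, while the names `BASELINE`, `AUTO_LABELED`, and `score_gap` are illustrative and not part of the original work.

```python
# 3D Detection scores (Easy, Moderate, Hard) copied from the table above.
# "Baseline" here means the rows labelled "OpenPCDet"; the dictionary and
# function names are hypothetical and chosen only for this illustration.
BASELINE = {
    "PointPillars": (87.70, 78.39, 75.18),
    "Voxel R-CNN": (92.15, 85.01, 82.48),
    "PointRCNN": (91.49, 80.65, 78.13),
    "PV-RCNN": (92.10, 84.36, 82.48),
}
AUTO_LABELED = {
    "PointPillars": (85.06, 75.00, 71.43),
    "Voxel R-CNN": (92.54, 83.28, 82.21),
    "PointRCNN": (92.19, 82.89, 80.33),
    "PV-RCNN": (90.09, 78.25, 75.38),
}


def score_gap(baseline, auto_labeled):
    """Return auto-labeled minus baseline score for each model and difficulty."""
    return {
        model: tuple(
            round(auto - base, 2)
            for auto, base in zip(auto_labeled[model], baseline[model])
        )
        for model in baseline
    }


gaps = score_gap(BASELINE, AUTO_LABELED)
for model, (easy, moderate, hard) in gaps.items():
    # A positive value means the auto-labeled row scores higher.
    print(f"{model:<12} easy {easy:+.2f}  moderate {moderate:+.2f}  hard {hard:+.2f}")
```

A negative value indicates that the auto-labeled row scores lower than the corresponding OpenPCDet row for that difficulty level.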

3.2. Second step validation

3.2.1. Setup configuration

3.2.2. Experiments

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You Only Look Once: Unified, Real-Time Object Detection. 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2016, pp. 779–788.

2. Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollar, P.; Zitnick, C.L. Microsoft COCO: Common Objects in Context. Computer Vision – ECCV 2014; Fleet, D.; Pajdla, T.; Schiele, B.; Tuytelaars, T., Eds.; Springer International Publishing: Cham, 2014; pp. 740–755.

3. Geiger, A.; Lenz, P.; Stiller, C.; Urtasun, R. Vision meets Robotics: The KITTI Dataset. International Journal of Robotics Research (IJRR) 2013.

4. Sun, P.; Kretzschmar, H.; Dotiwalla, X.; Chouard, A.; Patnaik, V.; Tsui, P.; Guo, J.; Zhou, Y.; Chai, Y.; Caine, B.; Vasudevan, V.; Han, W.; Ngiam, J.; Zhao, H.; Timofeev, A.; Ettinger, S.; Krivokon, M.; Gao, A.; Joshi, A.; Anguelov, D. Scalability in Perception for Autonomous Driving: Waymo Open Dataset. 2020, pp. 2443–2451.

5. Roh, Y.; Heo, G.; Whang, S.E. A Survey on Data Collection for Machine Learning: A Big Data - AI Integration Perspective. IEEE Transactions on Knowledge and Data Engineering 2021, 33, 1328–1347.

6. Chadwick, S.; Maddern, W.; Newman, P. Distant Vehicle Detection Using Radar and Vision. 2019 International Conference on Robotics and Automation (ICRA), 2019, pp. 8311–8317.

7. Dong, X.; Wang, P.; Zhang, P.; Liu, L. Probabilistic Oriented Object Detection in Automotive Radar. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, 2020.

8. Chen, Z.; Liao, Q.; Wang, Z.; Liu, Y.; Liu, M. Image Detector Based Automatic 3D Data Labeling and Training for Vehicle Detection on Point Cloud. 2019 IEEE Intelligent Vehicles Symposium (IV), 2019, pp. 1408–1413.

9. Chen, X.; Ma, H.; Wan, J.; Li, B.; Xia, T. Multi-View 3D Object Detection Network for Autonomous Driving. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2017.

10. Fritsch, J.; Kuehnl, T.; Geiger, A. A New Performance Measure and Evaluation Benchmark for Road Detection Algorithms. International Conference on Intelligent Transportation Systems (ITSC), 2013.

11. Geiger, A.; Lenz, P.; Stiller, C.; Urtasun, R. Vision meets Robotics: The KITTI Dataset. International Journal of Robotics Research (IJRR) 2013.

12. Shi, S.; Wang, X.; Li, H. PointRCNN: 3D Object Proposal Generation and Detection From Point Cloud. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2019.

13. Lang, A.H.; Vora, S.; Caesar, H.; Zhou, L.; Yang, J.; Beijbom, O. PointPillars: Fast Encoders for Object Detection from Point Clouds. CVPR 2019.

14. Deng, J.; Shi, S.; Li, P.; Zhou, W.; Zhang, Y.; Li, H. Voxel R-CNN: Towards High Performance Voxel-based 3D Object Detection. AAAI, 2021.

15. Caesar, H.; Bankiti, V.; Lang, A.H.; Vora, S.; Liong, V.E.; Xu, Q.; Krishnan, A.; Pan, Y.; Baldan, G.; Beijbom, O. nuScenes: A multimodal dataset for autonomous driving. CVPR, 2020.

16. Patil, A.; Malla, S.; Gang, H.; Chen, Y.T. The H3D Dataset for Full-Surround 3D Multi-Object Detection and Tracking in Crowded Urban Scenes. 2019 International Conference on Robotics and Automation (ICRA), 2019, pp. 9552–9557.

17. Yu, W.; Sun, Y.; Zhou, R.; Liu, X. GAN Based Method for Labeled Image Augmentation in Autonomous Driving. 2019 IEEE International Conference on Connected Vehicles and Expo (ICCVE), 2019, pp. 1–5.

18. Simony, M.; Milzy, S.; Amendey, K.; Gross, H.M. Complex-YOLO: An Euler-Region-Proposal for Real-time 3D Object Detection on Point Clouds. Proceedings of the European Conference on Computer Vision (ECCV) Workshops, 2018.

19. Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.Y.; Berg, A.C. SSD: Single Shot MultiBox Detector. Computer Vision – ECCV 2016; Leibe, B.; Matas, J.; Sebe, N.; Welling, M., Eds.; Springer International Publishing: Cham, 2016; pp. 21–37.

20. Neupane, B.; Horanont, T.; Aryal, J. Real-Time Vehicle Classification and Tracking Using a Transfer Learning-Improved Deep Learning Network. Sensors 2022, 22.

21. Imad, M.; Doukhi, O.; Lee, D.J. Transfer Learning Based Semantic Segmentation for 3D Object Detection from Point Cloud. Sensors 2021, 21.

22. Vatani Nezafat, R.; Sahin, O.; Cetin, M. Transfer Learning Using Deep Neural Networks for Classification of Truck Body Types Based on Side-Fire Lidar Data. Journal of Big Data Analytics in Transportation 2019, 1.

23. Team, O.D. OpenPCDet: An Open-source Toolbox for 3D Object Detection from Point Clouds, 2020.

24. Jocher, G. YOLOv5 by Ultralytics. 2020.

25. Shi, S.; Guo, C.; Jiang, L.; Wang, Z.; Shi, J.; Wang, X.; Li, H. PV-RCNN: Point-Voxel Feature Set Abstraction for 3D Object Detection. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020.

26. Geiger, A.; Lenz, P.; Urtasun, R. Are we ready for autonomous driving? The KITTI vision benchmark suite. 2012 IEEE Conference on Computer Vision and Pattern Recognition, 2012, pp. 3354–3361.

27. Zhou, H.; Ge, Z.; Mao, W.; Li, Z. PersDet: Monocular 3D Detection in Perspective Bird’s-Eye-View. arXiv preprint arXiv:2208.09394 2022.

28. Ng, M.H.; Radia, K.; Chen, J.; Wang, D.; Gog, I.; Gonzalez, J.E. BEV-Seg: Bird’s Eye View Semantic Segmentation Using Geometry and Semantic Point Cloud. arXiv preprint arXiv:2006.11436 2020.

29. Simonelli, A.; Bulò, S.R.; Porzi, L.; Lopez-Antequera, M.; Kontschieder, P. Disentangling Monocular 3D Object Detection. 2019 IEEE/CVF International Conference on Computer Vision (ICCV), 2019, pp. 1991–1999.

| | Easy | Moderate | Hard |
|---|---|---|---|
| F-Score | 0.957 | 0.927 | 0.740 |
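The table above reports one F-score per difficulty level, but the text available here does not state how it was computed. As a point of reference, the sketch below assumes the standard F-beta definition built from true-positive, false-positive, and false-negative detection counts, with beta = 1 giving the usual F1 score. The function name `f_score` and the counts in the usage line are hypothetical and are not taken from the paper.

```python
def f_score(tp: int, fp: int, fn: int, beta: float = 1.0) -> float:
    """F-beta score from detection counts; beta=1 gives the usual F1 score.

    Assumption: this is the standard definition, not necessarily the exact
    procedure used to produce the values in the table.
    """
    if tp == 0:
        # No correct detections: precision and recall are both zero.
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Hypothetical counts, for illustration only (not taken from the paper).
example = f_score(tp=90, fp=10, fn=10)
print(round(example, 3))
```

With these illustrative counts, precision and recall are both 0.9, so the F1 score is also 0.9.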

| Model | BEV Easy | BEV Moderate | BEV Hard | 3D Detection Easy | 3D Detection Moderate | 3D Detection Hard |
|---|---|---|---|---|---|---|
| PointPillars | 93.05 | 84.03 | 81.50 | 79.99 | 65.53 | 61.03 |
| Voxel R-CNN | 95.39 | 91.19 | 88.56 | 92.45 | 83.33 | 81.95 |
| PointRCNN | 95.55 | 91.00 | 88.88 | 92.57 | 82.84 | 79.89 |
| PV-RCNN | 93.02 | 87.08 | 85.13 | 89.98 | 79.14 | 75.08 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).