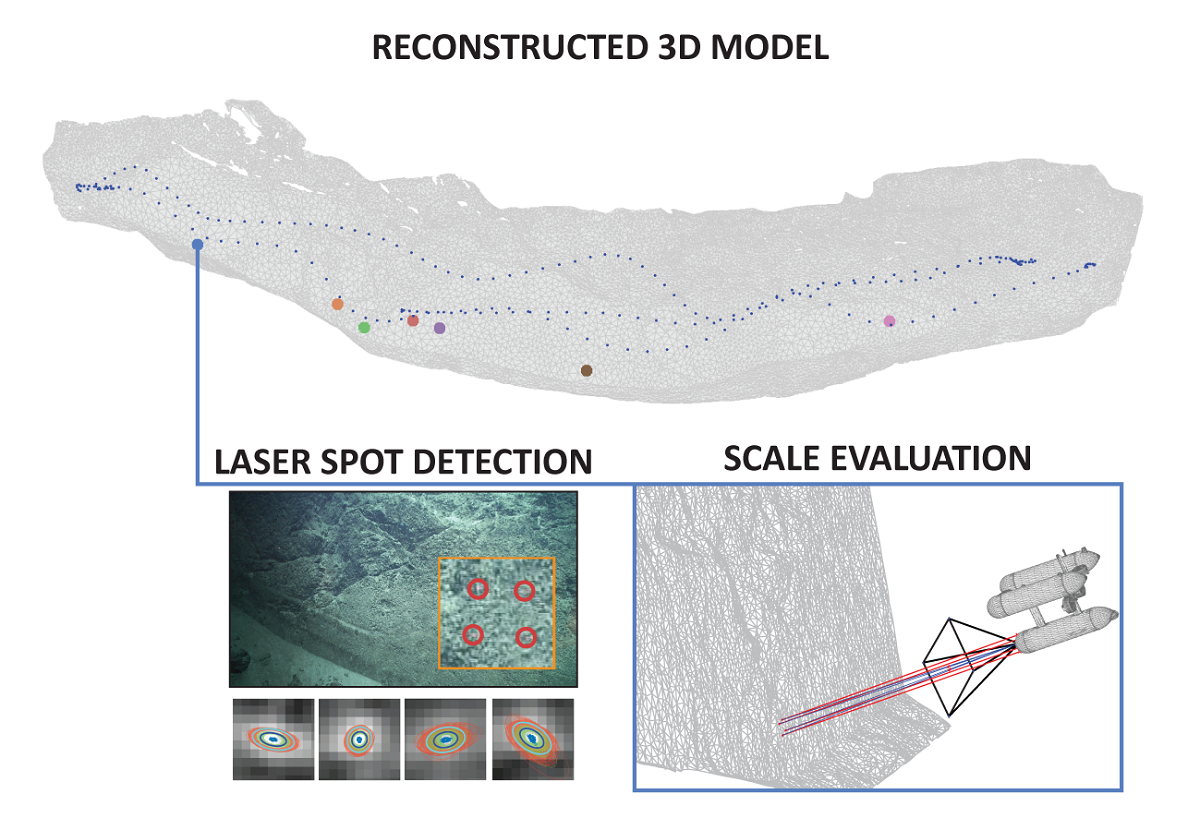

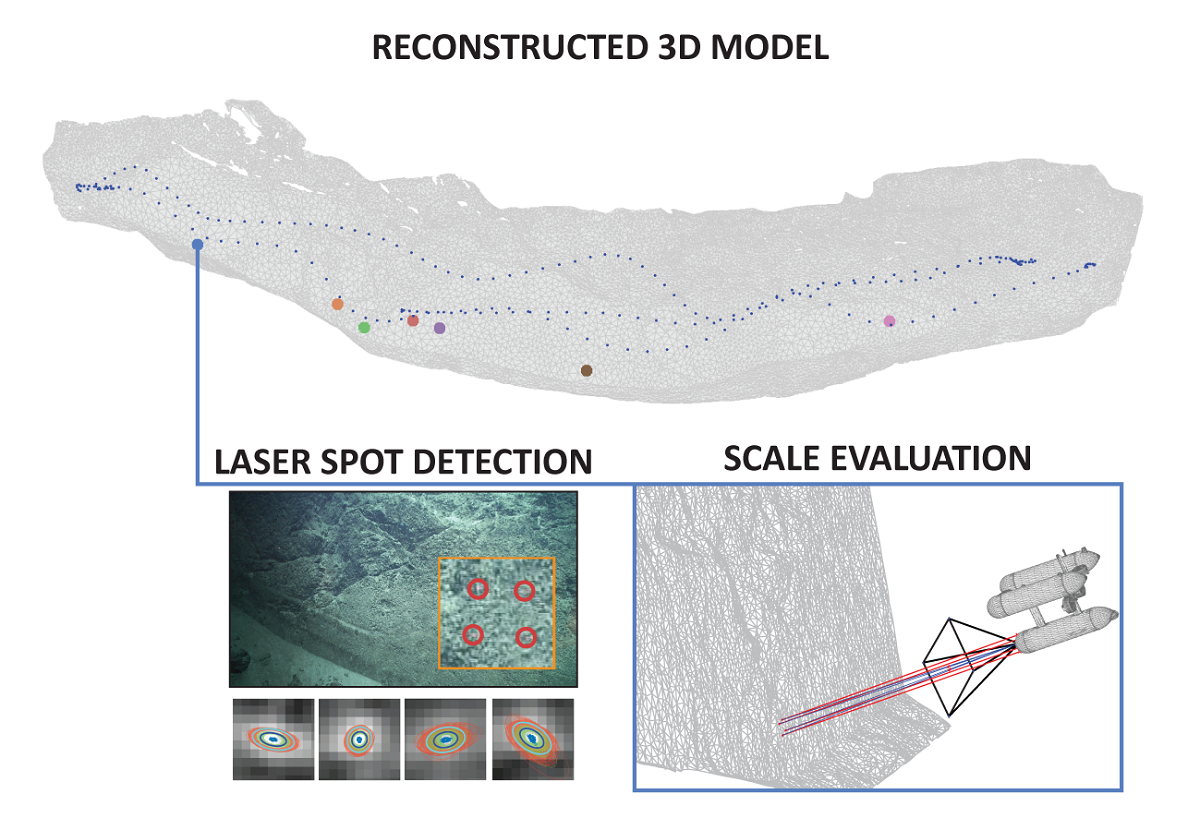

Rapid developments in the field of underwater photogrammetry have given scientists the ability to produce accurate 3-dimensional (3D) models, which are now increasingly used to represent and study local areas of interest. This paper addresses the lack of systematic analysis of 3D reconstruction and navigation fusion strategies, and of the associated error evaluation, for models produced at larger scales in GPS-denied environments using a monocular camera (often in deep-sea scenarios). Building on our prior work on automatic scale estimation of Structure from Motion (SfM)-based 3D models using laser scalers, we present an automatic scale accuracy framework. The confidence level of each scale error estimate is independently assessed by propagating the uncertainties associated with image features and laser spot detections through a Monte Carlo simulation. The number of iterations used in the simulation was validated by analyzing the behaviour of the final estimate. To facilitate the detection and uncertainty estimation of even greatly attenuated laser beams, we developed an automatic laser spot detection method that mitigates the effects of scene texture; its main novelty is that the uncertainties are estimated from the recovered characteristic shapes of laser spots with radially decreasing intensities. The effects of four reconstruction strategies, resulting from the combinations of Incremental/Global SfM and the a priori/a posteriori use of navigation data, were analyzed using two distinct survey scenarios captured during the SUBSAINTES 2017 cruise (doi: 10.17600/17001000). The study demonstrates that surveys with multiple overlaps of non-sequential images yield nearly identical solutions regardless of the strategy (SfM or navigation fusion), whereas surveys with weakly connected, sequentially acquired images are prone to broad-scale deformation (doming effect) when navigation is not included in the optimization.
Thus, scenarios with complex survey patterns benefit substantially from multi-objective bundle adjustment (BA) navigation fusion. In all cases, the errors in the models are below 5%, and often around 1%. The effects of combining data from multiple surveys were also evaluated. Introducing additional vectors in the optimization of multi-survey problems successfully accounted for offset changes present in the underwater ultra-short baseline (USBL) navigation data, and thus minimized the effect of contradicting navigation priors. Our results also illustrate the importance of collecting a multitude of evaluation data at different locations and moments during the survey.
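To make the idea of Monte Carlo uncertainty propagation for a laser-based scale check more concrete, here is a minimal Python sketch. It is not the framework described above: it assumes a deliberately simplified, hypothetical geometry in which two parallel laser beams hit a fronto-parallel plane at a known depth in front of a pinhole camera, so their true separation `laser_sep` is constant. Only the detected spot centres (pixels) and the reconstructed depth are perturbed with Gaussian noise; image-feature uncertainties are not modelled. The function name, parameters, and noise levels are illustrative assumptions.

```python
import numpy as np


def monte_carlo_scale_error(spot1_px, spot2_px, depth, focal_px, principal_pt,
                            laser_sep, sigma_px=0.5, sigma_depth=0.01,
                            n_iter=10_000, seed=0):
    """Return (mean, std) of the relative scale error of a model.

    Hypothetical simplified model: both laser spots lie on a plane at `depth`
    (model units) from a pinhole camera, and the laser beams are parallel,
    so their true separation is `laser_sep` everywhere.
    """
    rng = np.random.default_rng(seed)
    c = np.asarray(principal_pt, dtype=float)

    # Perturb the detected spot centres (pixels) with isotropic Gaussian noise.
    p1 = rng.normal(spot1_px, sigma_px, size=(n_iter, 2))
    p2 = rng.normal(spot2_px, sigma_px, size=(n_iter, 2))

    # Perturb the reconstructed depth of the plane (model units).
    z = rng.normal(depth, sigma_depth, size=n_iter)

    # Back-project each spot onto the plane: X = (u - c) * Z / f.
    x1 = (p1 - c) * z[:, None] / focal_px
    x2 = (p2 - c) * z[:, None] / focal_px

    # Distance between the two spots as measured in the model.
    d_model = np.linalg.norm(x1 - x2, axis=1)

    # Relative scale error: (measured - true) / true, one value per sample.
    err = (d_model - laser_sep) / laser_sep
    return err.mean(), err.std(ddof=1)


if __name__ == "__main__":
    mean, std = monte_carlo_scale_error((900, 540), (1100, 540), depth=1.0,
                                        focal_px=1000.0, principal_pt=(1000, 540),
                                        laser_sep=0.2)
    print(f"scale error: {100 * mean:.2f}% +/- {100 * std:.2f}%")
```

One simple way to check that `n_iter` is large enough, in the spirit of validating the number of iterations, is to rerun with increasing `n_iter` (and different seeds) and confirm that the returned mean and standard deviation no longer change appreciably.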