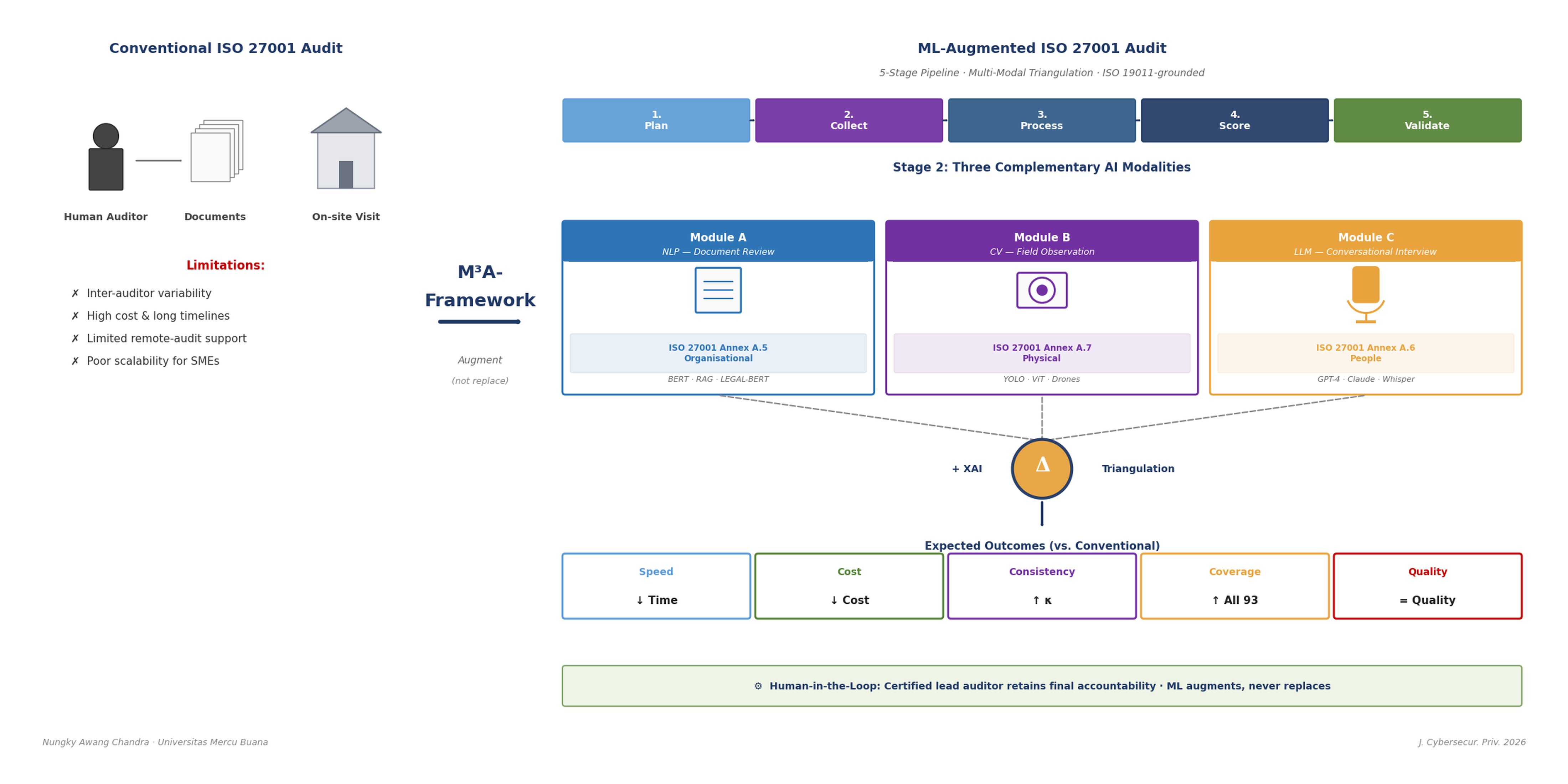

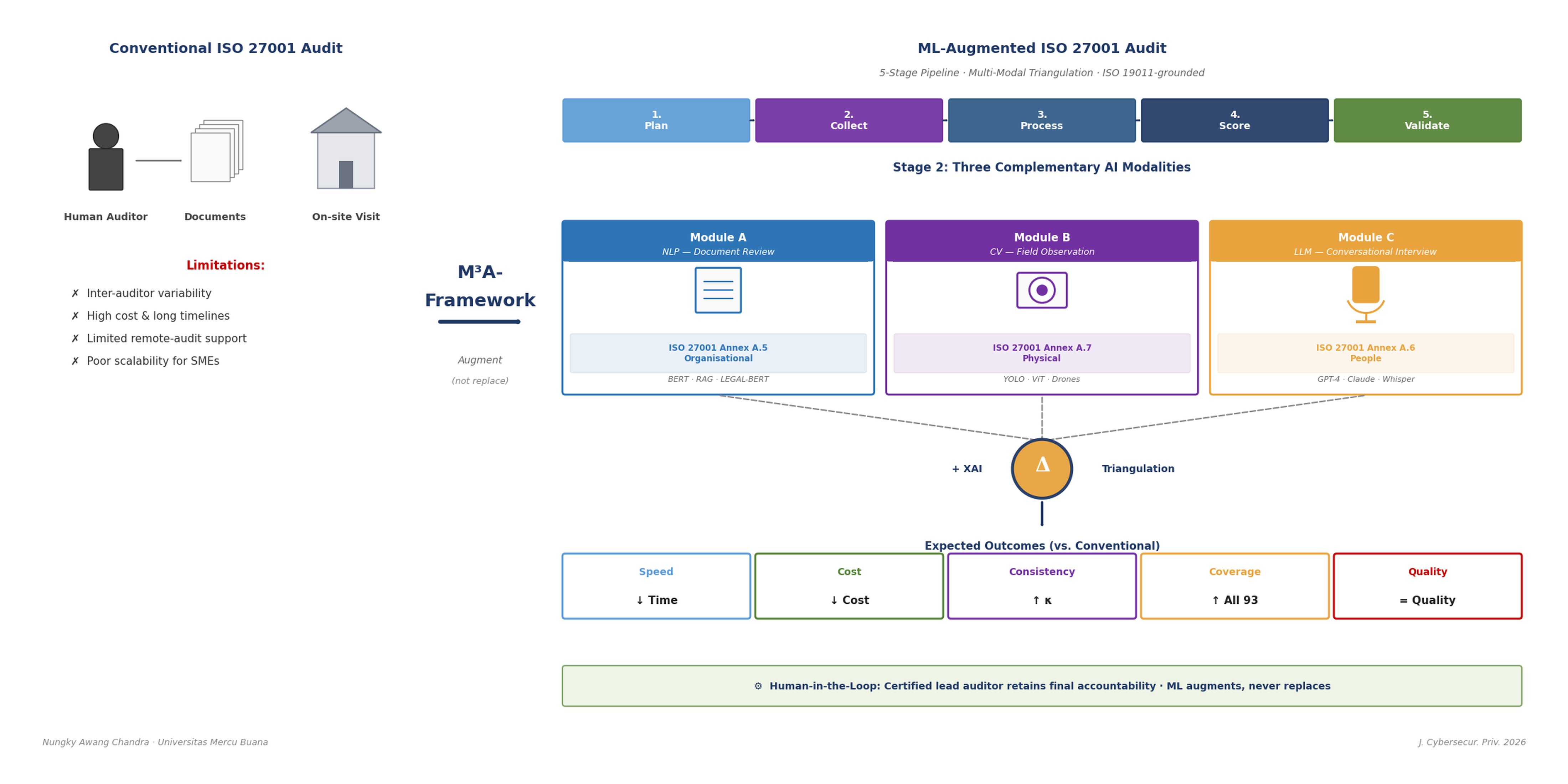

The audit of Information Security Management Systems (ISMS) under ISO/IEC 27001:2022 has traditionally relied on human auditors whose competence, experience, and judgment shape audit outcomes. While effective, this human-centric approach suffers from inter-auditor variability, high cost, scheduling constraints, and limited scalability, challenges magnified by the post-pandemic shift toward remote audits and the growing number of organisations seeking certification. Recent advances in Natural Language Processing (NLP), Computer Vision (CV), and Large Language Models (LLMs) suggest that significant portions of the audit workflow could be augmented by machine learning. However, prior research has examined these technologies in isolation; no integrated conceptual framework yet unifies document review, field observation, and interviewing in a single multi-modal pipeline tailored to ISO/IEC 27001 audits and explicitly grounded in the audit methodology of ISO 19011:2018. This paper proposes such a framework: the Multi-Modal ML-Augmented ISO 27001 Audit Framework (M³A-Framework). We synthesise insights from the ISO 19011:2018 audit guidelines, recent advances in AI-driven assurance, and the design science research paradigm to develop a five-stage conceptual model that augments the seven-step evidence-collection process specified in ISO 19011 Clause 6.4.7 and extends the audit-methods matrix of ISO 19011 Annex A (Table A.1). The framework comprises: (1) audit planning and scoping; (2) multi-modal evidence collection, using NLP for document analysis, CV for physical control verification (supported by inspection robots and drones), and LLM-based conversational AI for interviews; (3) ML-based evidence processing and triangulation; (4) confidence-weighted finding classification using Explainable AI; and (5) human-in-the-loop validation.
The framework explicitly maps each module to the 93 controls in Annex A of ISO/IEC 27001:2022 and to the audit phases defined in ISO 19011. We further propose a set of testable propositions, evaluation metrics, and ethical considerations that ground the framework in both academic rigour and practical deployability.
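
To make stages (3) to (5) more concrete, the following is a minimal illustrative sketch, not the framework's specified implementation. It assumes each modality emits a per-control evidence item with a model confidence in [0, 1]. The `Evidence` type, the `triangulate` function, the confidence-weighted vote, the 0.7 threshold, the two-modality rule, and the three labels are all hypothetical choices made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    """One piece of audit evidence (hypothetical structure)."""
    control_id: str    # Annex A control identifier, e.g. "A.5.1"
    modality: str      # "document", "observation", or "interview"
    supports: bool     # True if the evidence indicates the control is met
    confidence: float  # model confidence in [0, 1]


def triangulate(evidence, review_threshold=0.7):
    """Combine evidence per control with a confidence-weighted vote.

    Assumed rule: a control is escalated when fewer than two modalities
    contributed evidence or when the weighted vote is not decisive.
    """
    by_control = defaultdict(list)
    for item in evidence:
        by_control[item.control_id].append(item)

    findings = {}
    for control_id, items in by_control.items():
        total = sum(e.confidence for e in items)
        support = sum(e.confidence for e in items if e.supports)
        # Share of confidence mass that supports conformity.
        score = support / total if total > 0 else 0.5
        decisiveness = max(score, 1.0 - score)
        modalities = {e.modality for e in items}

        if len(modalities) < 2 or decisiveness < review_threshold:
            label = "needs_human_review"
        elif score >= 0.5:
            label = "conformity"
        else:
            label = "nonconformity"
        findings[control_id] = (label, round(score, 3))
    return findings


# Illustrative (made-up) evidence for three Annex A controls.
evidence = [
    Evidence("A.5.1", "document", True, 0.9),
    Evidence("A.5.1", "interview", True, 0.8),
    Evidence("A.7.2", "observation", False, 0.9),
    Evidence("A.7.2", "document", True, 0.6),
    Evidence("A.8.13", "document", False, 0.8),
    Evidence("A.8.13", "interview", False, 0.9),
]
findings = triangulate(evidence)
for control_id, (label, score) in findings.items():
    print(control_id, label, score)
```

In this sketch, conflicting evidence across modalities (control A.7.2 in the example) yields an indecisive score and is flagged for escalation. Under the framework itself, every finding still passes through human-in-the-loop validation; the label here only marks which findings an auditor might examine first.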