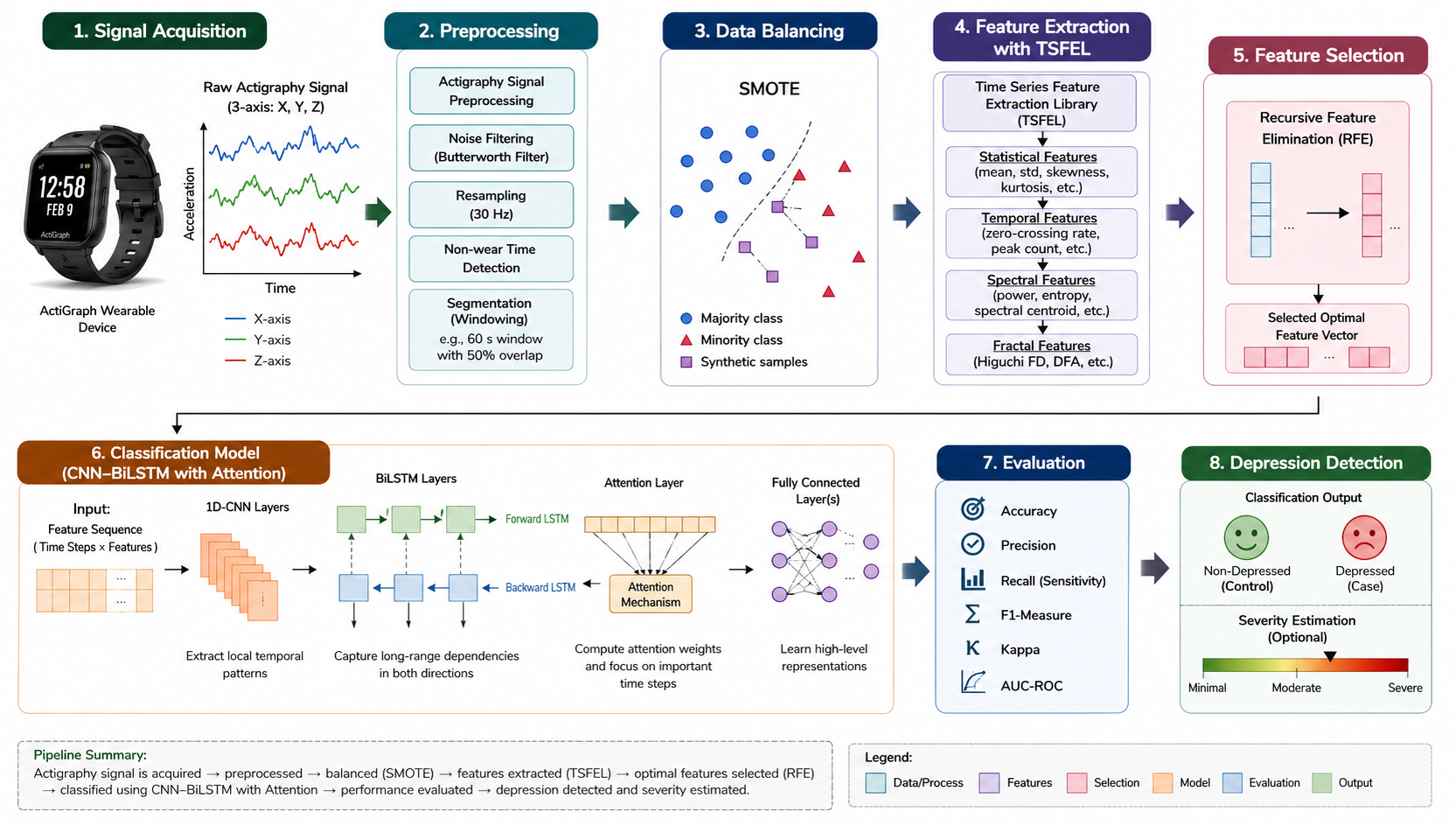

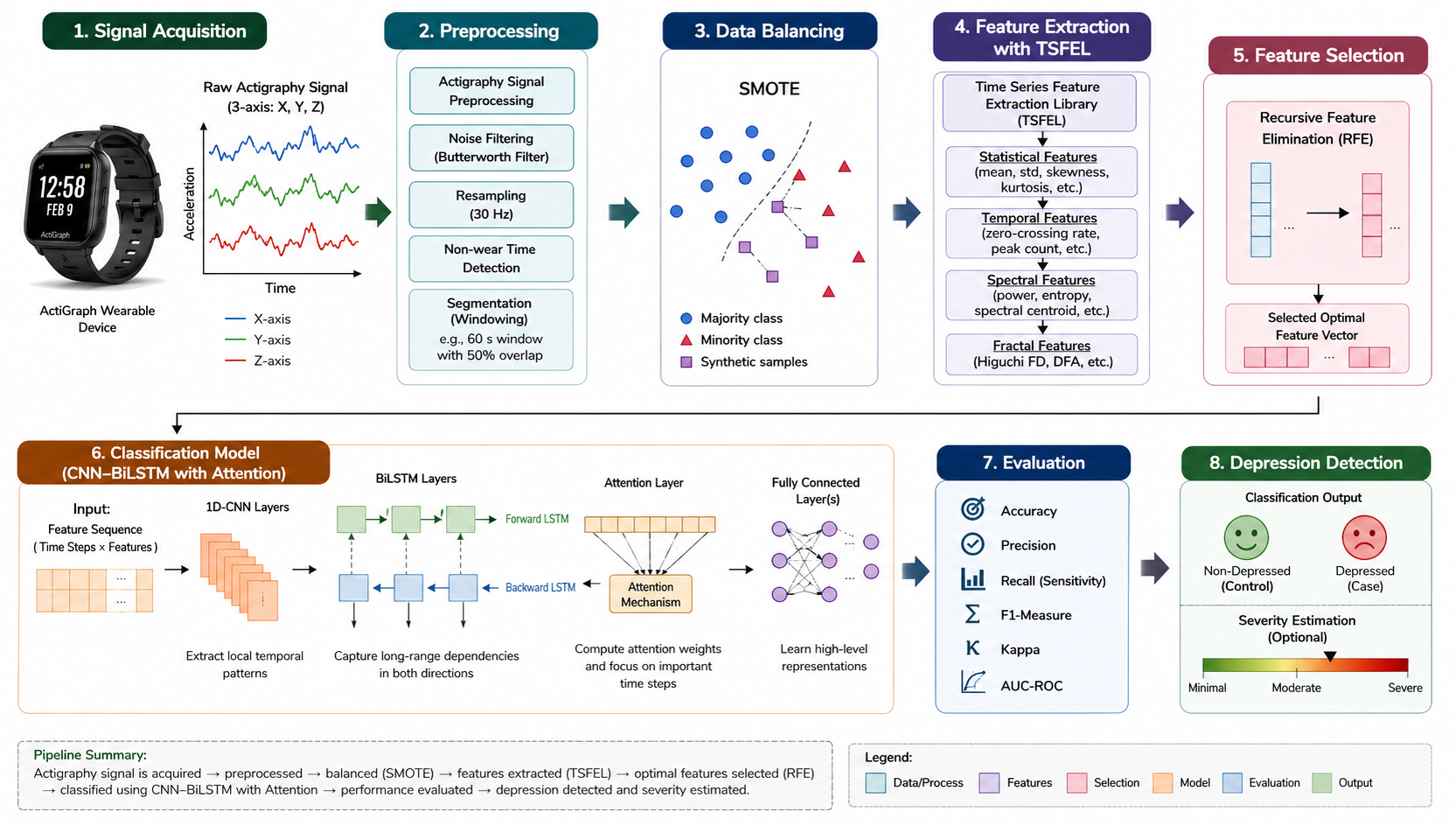

Depression is a widespread mental health disorder with significant personal and societal impacts, yet its early detection remains challenging because diagnosis relies on subjective clinical assessments. Advances in wearable technologies, particularly actigraphy, enable continuous and objective monitoring of behavioral patterns, offering new opportunities for data-driven mental health analysis. In this study, we propose a novel deep learning framework based on a CNN–BiLSTM architecture with an attention mechanism for automated depression detection using Internet of Medical Things (IoMT)-based actigraphy signals. The convolutional layers capture local temporal patterns and the BiLSTM layers capture long-range dependencies, while the attention mechanism enhances interpretability by emphasizing clinically relevant time segments. To improve robustness, the preprocessing stage handles missing data through augmentation and corrects class imbalance using SMOTE. Time-series features capturing statistical, temporal, and spectral characteristics are extracted with TSFRESH, and Recursive Feature Elimination (RFE) then selects the most informative subset. The selected features are used for classification within the proposed architecture. Experimental results show that the model achieves an accuracy of 89.93%, along with strong sensitivity, specificity, F1-score, and AUC. These findings highlight the effectiveness of the proposed approach as a scalable, non-invasive solution for early depression detection.
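As an illustration of the class-balancing step mentioned above, the following is a minimal sketch of the core idea behind SMOTE: a synthetic minority sample is created by interpolating between a minority sample and one of its nearest minority-class neighbors. The function name `smote_oversample`, the parameters `k` and `seed`, and the toy feature vectors are assumptions made for illustration; they are not taken from the study, whose pipeline would more plausibly rely on a library implementation such as `imblearn.over_sampling.SMOTE`.

```python
import random


def smote_oversample(minority, n_new, k=3, seed=0):
    """Create n_new synthetic samples by SMOTE-style interpolation.

    minority: list of feature vectors (lists of floats) from the minority class.
    k: number of nearest minority neighbors to choose from (illustrative default).
    """
    if len(minority) < 2:
        raise ValueError("need at least two minority samples")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        # Rank the other minority samples by squared Euclidean distance to base.
        others = [j for j in range(len(minority)) if j != i]
        others.sort(
            key=lambda j: sum((a - b) ** 2 for a, b in zip(base, minority[j]))
        )
        neighbor = minority[rng.choice(others[:k])]
        gap = rng.random()  # interpolation factor in [0, 1)
        # The new point lies on the segment between base and its neighbor.
        synthetic.append([a + gap * (b - a) for a, b in zip(base, neighbor)])
    return synthetic


# Toy feature vectors (hypothetical values, not data from the study).
minority = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
new_points = smote_oversample(minority, n_new=5)
```

Because every synthetic point lies between two existing minority samples, oversampling of this kind adds no information from outside the observed minority region. It is generally applied to the training split only, so that evaluation data remain free of synthetic samples.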