Submitted: 30 April 2026

Posted: 05 May 2026

Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Related Work

2.1.1. DDoS Detection in Smart Grid and Microgrid Environments

2.1.2. Deep Learning for Network Anomaly Detection

2.1.3. Unsupervised Anomaly Detection for Industrial Control Systems

2.2. System Architecture and Data Generation

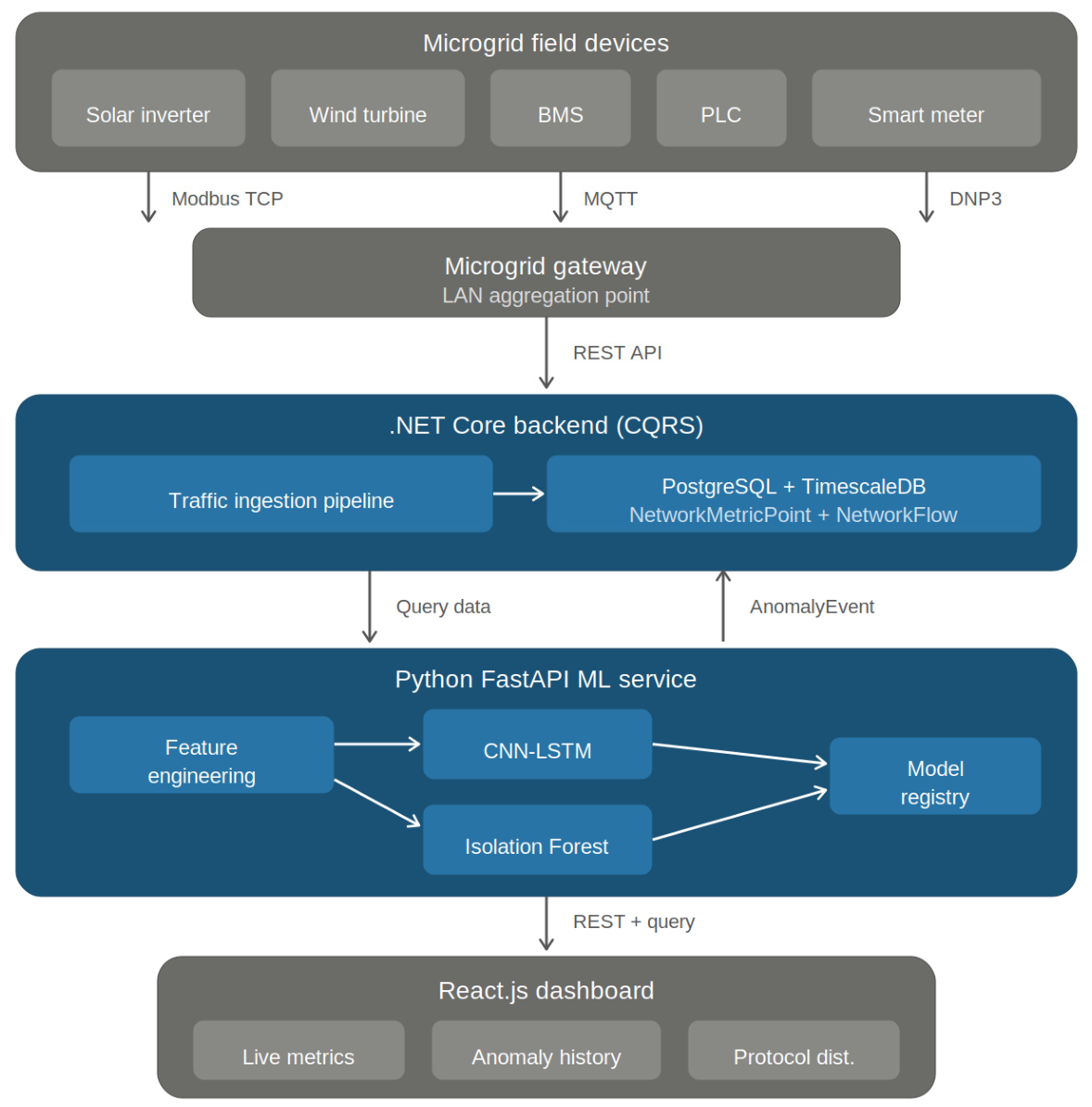

2.2.1. Platform Architecture

2.2.2. Microgrid Communication Model

2.2.3. Synthetic Traffic Generation

2.2.4. Attack Scenario Generation

2.3. Detection Methodology

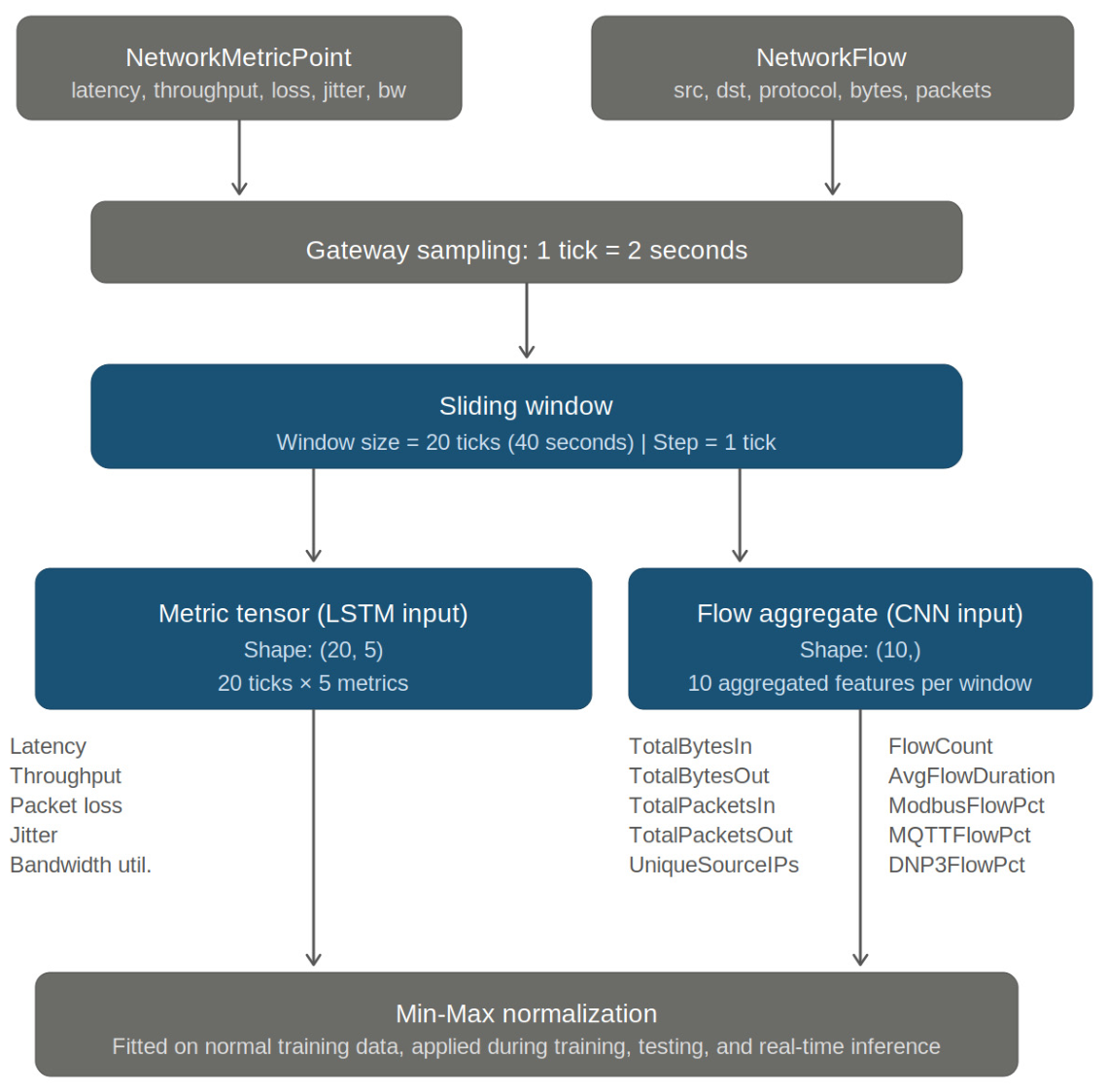

2.3.1. Feature Engineering

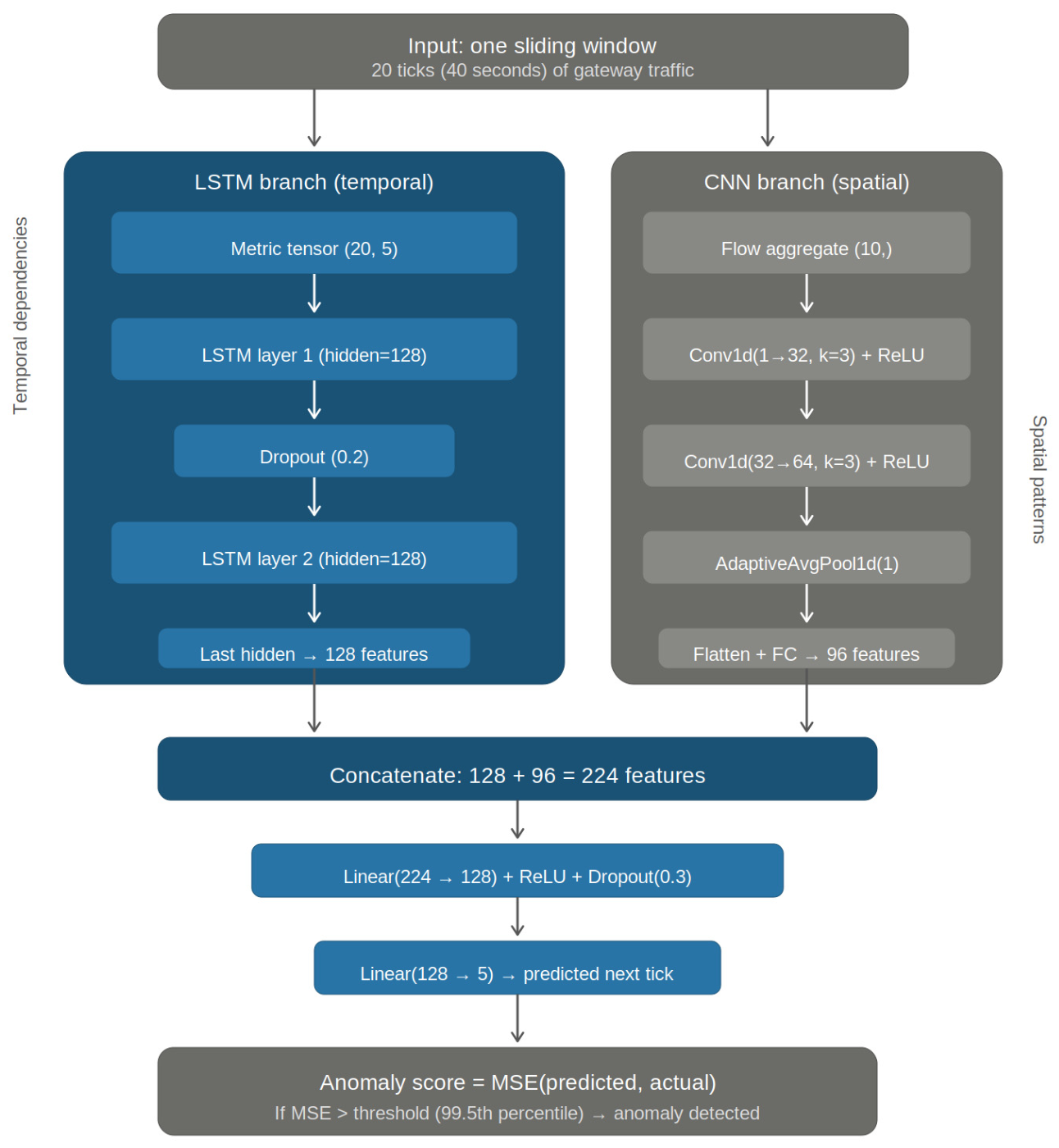

2.3.2. CNN-LSTM Architecture (Proposed Model)

2.3.3. Anomaly Threshold Determination

2.3.4. Isolation Forest Baseline

2.3.5. Ablation Baselines

2.4. Experimental Setup

2.4.1. Dataset Description

2.4.2. Evaluation Protocol

2.4.3. Evaluation Metrics

2.4.4. Implementation Details

| Parameter | Value |
|---|---|
| Learning rate | 0.001 |
| Batch size | 64 |
| LSTM hidden size | 128 |
| LSTM layers | 2 |
| LSTM dropout | 0.2 |
| CNN filters | [32, 64] |
| CNN kernel size | 3 |
| CNN pooling | AdaptiveAvgPool1d |
| CNN output dimension | 96 |
| Fusion input dimension | 224 (128 + 96) |
| Fusion hidden dimension | 128 |
| Fusion dropout | 0.3 |
| Fusion output dimension | 5 |
| Max epochs | 100 |
| Early stopping patience | 10 |
| LR scheduler | None |
| Threshold percentile | 99.5th |
| Window size | 20 ticks |
| Window step | 1 tick |
| Optimizer | Adam |
| Normalization | Min-Max |
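The preprocessing settings in the table above (Min-Max normalization, a window of 20 ticks, a step of 1 tick) can be illustrated with a minimal sketch. The function names are hypothetical, and the choice to fit the scaling bounds on training rows only is an assumption rather than something the table states.

```python
from typing import List, Sequence, Tuple

Row = Sequence[float]  # one tick, e.g. the five network metrics


def fit_min_max(rows: Sequence[Row]) -> Tuple[List[float], List[float]]:
    """Return per-feature minima and maxima (assumed: fitted on training rows)."""
    columns = list(zip(*rows))
    return [min(c) for c in columns], [max(c) for c in columns]


def apply_min_max(rows: Sequence[Row], lo: Sequence[float],
                  hi: Sequence[float]) -> List[List[float]]:
    """Scale each feature to [0, 1] using the fitted bounds.

    Constant features map to 0.0. Values outside the fitted range are not
    clipped, so attack traffic may produce values above 1.0.
    """
    return [
        [(x - a) / (b - a) if b > a else 0.0 for x, a, b in zip(row, lo, hi)]
        for row in rows
    ]


def sliding_windows(rows: Sequence[Row], size: int = 20,
                    step: int = 1) -> List[List[Row]]:
    """Cut a tick sequence into overlapping windows of `size` ticks."""
    return [list(rows[i:i + size]) for i in range(0, len(rows) - size + 1, step)]
```

With a step of 1 tick, a sequence of N ticks yields N − 19 windows, so consecutive windows share 19 ticks.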

| Parameter | Value |
|---|---|
| n_estimators | 100 |
| contamination | auto |
| max_samples | auto |
| max_features | 1.0 |
| random_state | 42 |
| Input dimension | 110 (flattened 20 × 5 + 10) |
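The 110-dimensional Isolation Forest input can be sketched as a flattened window of 20 ticks × 5 metrics followed by 10 flow-level features. The helper below is hypothetical, and the tick-major ordering of the flattened values is an assumption. The dictionary mirrors the table above, with keys that match keyword arguments of scikit-learn's `IsolationForest`.

```python
from typing import List, Sequence

WINDOW_TICKS = 20      # window size in ticks
METRICS_PER_TICK = 5   # network metrics per tick
FLOW_FEATURES = 10     # flow-level features per window

# Hyperparameters from the table; key names follow
# sklearn.ensemble.IsolationForest keyword arguments.
IFOREST_PARAMS = {
    "n_estimators": 100,
    "contamination": "auto",
    "max_samples": "auto",
    "max_features": 1.0,
    "random_state": 42,
}


def flatten_window(metric_window: Sequence[Sequence[float]],
                   flow_features: Sequence[float]) -> List[float]:
    """Build one 110-dimensional sample (assumed order: tick-major, then flows)."""
    if len(metric_window) != WINDOW_TICKS:
        raise ValueError(f"expected {WINDOW_TICKS} ticks, got {len(metric_window)}")
    if any(len(tick) != METRICS_PER_TICK for tick in metric_window):
        raise ValueError(f"each tick needs {METRICS_PER_TICK} metrics")
    if len(flow_features) != FLOW_FEATURES:
        raise ValueError(f"expected {FLOW_FEATURES} flow features")
    flat = [float(v) for tick in metric_window for v in tick]
    flat.extend(float(v) for v in flow_features)
    return flat
```

Where scikit-learn is available, a list of such vectors could be passed to `IsolationForest(**IFOREST_PARAMS).fit(...)`.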

3. Results and Discussion

3.1. Overall Detection Performance

3.2. Per-Attack-Type Analysis

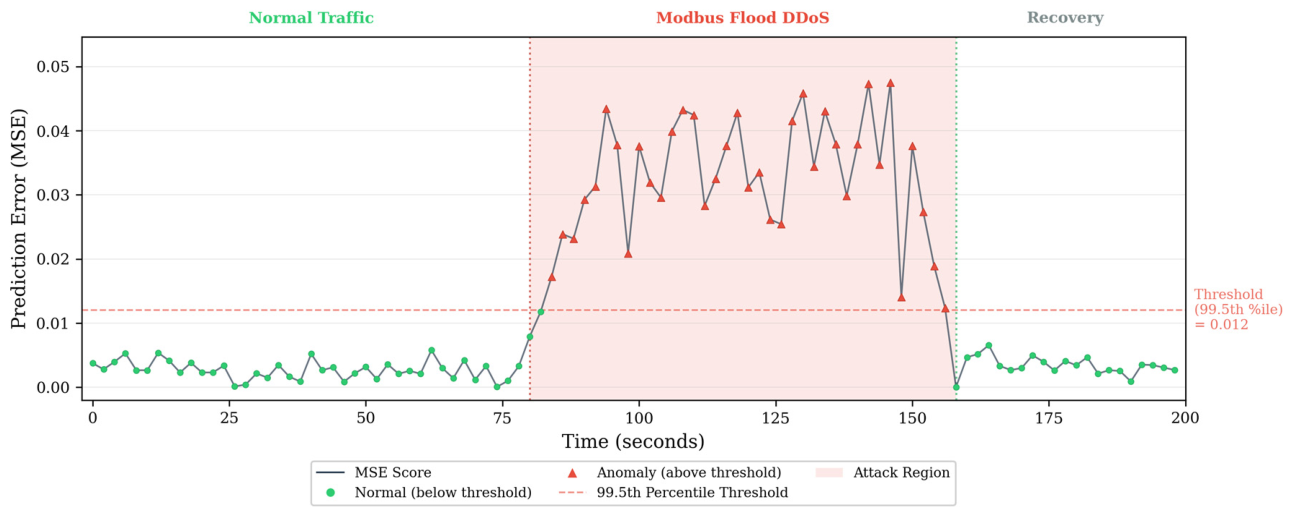

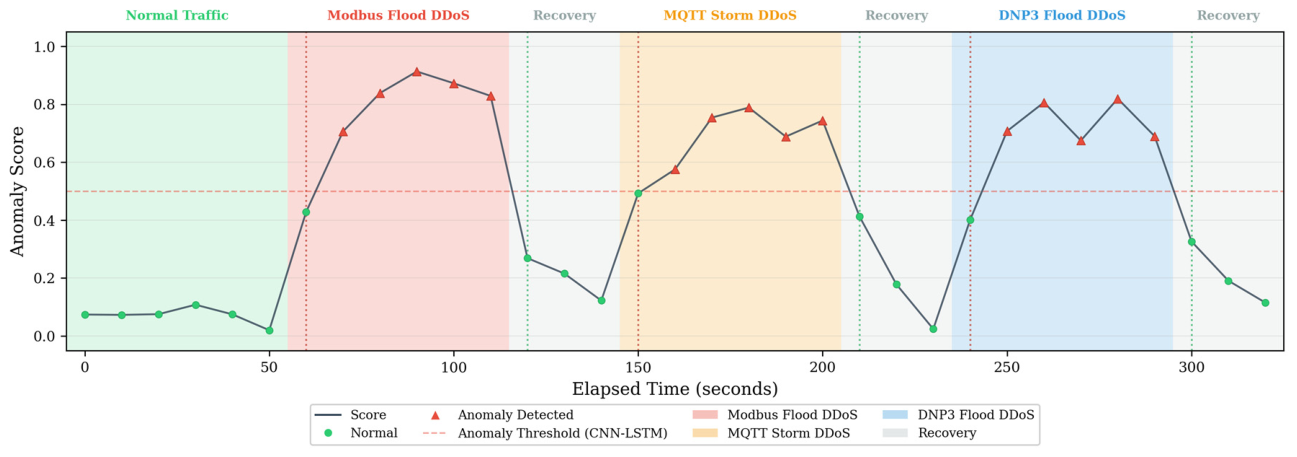

3.3. Anomaly Score Visualization

3.4. Ablation Study

3.5. Computational Cost Analysis

3.6. Operational Deployment Demonstration

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| API | Application Programming Interface |
| BMS | Battery Management System |
| CNN | Convolutional Neural Network |
| CQRS | Command Query Responsibility Segregation |
| DDoS | Distributed Denial of Service |
| DNP3 | Distributed Network Protocol 3 |
| FN | False Negative |
| FP | False Positive |
| GRU | Gated Recurrent Unit |
| ICS | Industrial Control System |
| IP | Internet Protocol |
| LR | Learning Rate |
| LSTM | Long Short-Term Memory |
| ML | Machine Learning |
| MQTT | Message Queuing Telemetry Transport |
| MSE | Mean Squared Error |
| OCSVM | One-Class Support Vector Machine |
| OT | Operational Technology |
| PLC | Programmable Logic Controller |
| QoS | Quality of Service |
| ReLU | Rectified Linear Unit |
| REST | Representational State Transfer |
| SCADA | Supervisory Control and Data Acquisition |
| SDN | Software-Defined Network |
| SHAP | SHapley Additive exPlanations |
| TCP | Transmission Control Protocol |
| TP | True Positive |
| WAMS | Wide-Area Monitoring System |

References

- Haxhismajli, B.; Marinova, G. Enhancing Microgrid Security: Web-Based Anomaly Detection Using Autoencoder. In Proceedings of the International Conference on Telecommunications and Signal Processing (TSP), Skopje, North Macedonia, 2025.

- Diaba, S.Y.; Elmusrati, M. Proposed algorithm for smart grid DDoS detection based on deep learning. Neural Netw. 2023, 159, 175–184. [CrossRef]

- Naqvi, S.S.A.; Li, Y.; Uzair, M. DDoS attack detection in smart grid network using reconstructive machine learning models. PeerJ Comput. Sci. 2024, 10, e1784. [CrossRef]

- AlHaddad, U.; Basuhail, A.; Khemakhem, M.; Eassa, F.E.; Jambi, K. Ensemble model based on hybrid deep learning for intrusion detection in smart grid networks. Sensors 2023, 23, 7464. [CrossRef]

- Hosseini Rostami, S.M.; Pourgholi, M.; Asharioun, H. Enhancing resilience of distributed DC microgrids against cyber attacks using a transformer-based Kalman filter estimator. Sci. Rep. 2025, 15, 6815. [CrossRef]

- Halbouni, A.; Gunawan, T.S.; Habaebi, M.H.; Halbouni, M.; Kartiwi, M.; Ahmad, R. CNN-LSTM: Hybrid deep neural network for network intrusion detection system. IEEE Access 2022, 10, 99837–99849. [CrossRef]

- Altunay, H.C.; Albayrak, Z. A hybrid CNN+LSTM-based intrusion detection system for industrial IoT networks. Eng. Sci. Technol. Int. J. 2023, 38, 101322. [CrossRef]

- Abdallah, M.; Le Khac, N.A.; Jahromi, H.; Jurcut, A.D. A hybrid CNN-LSTM based approach for anomaly detection systems in SDNs. In Proceedings of the 16th International Conference on Availability, Reliability and Security (ARES), Vienna, Austria, 17–20 August 2021. [CrossRef]

- Sinha, P.; Sahu, D.; Prakash, S.; Yang, T.; Rathore, R.S.; Pandey, V.K. A high performance hybrid LSTM CNN secure architecture for IoT environments using deep learning. Sci. Rep. 2025, 15, 9684. [CrossRef]

- Alashjaee, A.M. Deep learning for network security: An Attention-CNN-LSTM model for accurate intrusion detection. Sci. Rep. 2025, 15, 21856. [CrossRef]

- Choi, W.-H.; Kim, J. Unsupervised learning approach for anomaly detection in industrial control systems. Appl. Syst. Innov. 2024, 7, 18. [CrossRef]

- Altaha, M.; Hong, S. Anomaly detection for SCADA system security based on unsupervised learning and function codes analysis in the DNP3 protocol. Electronics 2022, 11, 2184. [CrossRef]

- Zare, F.; Mahmoudi-Nasr, P.; Yousefpour, R. A real-time network based anomaly detection in industrial control systems. Int. J. Crit. Infrastruct. Prot. 2024, 45, 100676. [CrossRef]

- Ha, D.T.; Hoang, N.X.; Hoang, N.V.; Du, N.H.; Huong, T.T.; Tran, K.P. Explainable anomaly detection for industrial control system cybersecurity. IFAC-PapersOnLine 2022, 55, 1183–1188.

- Ghosh, T.; Bagui, S.; Bagui, S.; Kadzis, M.; Bare, J. Anomaly detection for Modbus over TCP in control systems using entropy and classification-based analysis. J. Cybersecur. Priv. 2023, 3, 895–913. [CrossRef]

- Modbus Organization. Modbus Application Protocol Specification V1.1b3, 2012. Available online: https://modbus.org/specs.php (accessed on 02 March 2026).

- OASIS. MQTT Version 3.1.1/5.0 Standard, 2014/2019. Available online: https://docs.oasis-open.org/mqtt/mqtt/v5.0/mqtt-v5.0.html (accessed on 02 March 2026).

- IEEE Std 1815-2012; IEEE Standard for Electric Power Systems Communications—Distributed Network Protocol (DNP3). IEEE: Piscataway, NJ, USA, 2012.

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Comput. 1997, 9, 1735–1780. [CrossRef]

- Liu, F.T.; Ting, K.M.; Zhou, Z.H. Isolation forest. In Proceedings of the 8th IEEE International Conference on Data Mining (ICDM), Pisa, Italy, 15–19 December 2008; pp. 413–422. [CrossRef]

- Paszke, A.; Gross, S.; Massa, F.; Lerer, A.; Bradbury, J.; Chanan, G.; Killeen, T.; Lin, Z.; Gimelshein, N.; Antiga, L.; et al. PyTorch: An imperative style, high-performance deep learning library. In Advances in Neural Information Processing Systems 32 (NeurIPS); Curran Associates: Red Hook, NY, USA, 2019.

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; et al. Scikit-learn: Machine learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830.

| Feature | Description | Unit | Sampling Rate |
|---|---|---|---|
| Latency | Round-trip communication delay | ms | 2 s |
| Throughput | Data transfer rate at gateway interface | Mbps | 2 s |
| Packet Loss | Proportion of packets dropped | % | 2 s |
| Jitter | Inter-arrival time variation | ms | 2 s |
| Bandwidth Utilization | Proportion of available bandwidth in use | % | 2 s |

| Field | Description | Type | Example |
|---|---|---|---|
| SrcIp | Source IP address | string | 192.168.1.10 |
| DstIp | Destination IP address | string | 203.0.113.50 |
| Protocol | OT protocol identifier | string | Modbus/MQTT/DNP3 |
| BytesIn | Bytes received | integer | - |
| BytesOut | Bytes sent | integer | - |
| PacketsIn | Packets received | integer | - |
| PacketsOut | Packets sent | integer | - |
| TsStart | Flow start timestamp | datetime | - |
| TsEnd | Flow end timestamp | datetime | - |
| FlowDuration | TsEnd − TsStart | seconds | - |
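As an illustration of how the flow fields above might be reduced to the ten window-level flow features listed in the feature statistics table (TotalBytesIn through DNP3 %), the following sketch defines a hypothetical `FlowRecord` type and an `aggregate_flows` helper. Computing the protocol percentages as shares of flow count, rather than of bytes or packets, is an assumption.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, Sequence


@dataclass(frozen=True)
class FlowRecord:
    """One flow record with the fields from the table (snake_case names)."""
    src_ip: str
    dst_ip: str
    protocol: str  # "Modbus", "MQTT" or "DNP3"
    bytes_in: int
    bytes_out: int
    packets_in: int
    packets_out: int
    ts_start: datetime
    ts_end: datetime

    @property
    def flow_duration(self) -> float:
        """FlowDuration = TsEnd - TsStart, in seconds."""
        return (self.ts_end - self.ts_start).total_seconds()


def aggregate_flows(flows: Sequence[FlowRecord]) -> Dict[str, float]:
    """Aggregate the flows of one window into ten flow-level features."""
    n = len(flows)
    features = {
        "TotalBytesIn": float(sum(f.bytes_in for f in flows)),
        "TotalBytesOut": float(sum(f.bytes_out for f in flows)),
        "TotalPacketsIn": float(sum(f.packets_in for f in flows)),
        "TotalPacketsOut": float(sum(f.packets_out for f in flows)),
        "UniqueSourceIPs": float(len({f.src_ip for f in flows})),
        "FlowCount": float(n),
        "AvgFlowDuration": sum(f.flow_duration for f in flows) / n if n else 0.0,
    }
    # Assumption: protocol mix is measured as a percentage of flows.
    for proto in ("Modbus", "MQTT", "DNP3"):
        count = sum(1 for f in flows if f.protocol == proto)
        features[f"{proto} %"] = 100.0 * count / n if n else 0.0
    return features
```

An empty window returns zeros for every feature instead of raising, which keeps the feature vector length fixed at ten.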

| Parameter | Modbus TCP | MQTT | DNP3 |
|---|---|---|---|
| Polling interval | 1–5 s | Event-driven | 2–10 s |
| Typical packet size | 60–260 bytes | 50–1500 bytes | 10–292 bytes |
| Function codes / message types | FC 03, 04 (read) | PUBLISH, SUBSCRIBE | Integrity poll, event class |
| Specification reference | [16] | [17] | [18] |

| Attack Scenario | Protocol | Traffic Signature | Metric Impact |
|---|---|---|---|
| Modbus SCADA Flooding | Modbus TCP | Few sources, large flows, bandwidth saturation, disrupted polling regularity | BandwidthUtil 90–100%, high PacketLoss + Latency |
| MQTT Publish Storm | MQTT | Many sources, small flows, packet explosion, QoS shift | Moderate BandwidthUtil, high PacketCount |
| DNP3 Response Flooding | DNP3 | Multi-source burst, small packets, high jitter, timing deviation | Very high Jitter, sharp Latency spike |

| Class | Label | Protocol | Windows | % of Test Set |
|---|---|---|---|---|
| Normal | 0 | All | ~8,600 per fold | ~44% |
| Modbus Flood | 1 | Modbus TCP | ~3,580 | ~19% |
| MQTT Storm | 2 | MQTT | ~3,580 | ~19% |
| DNP3 Flood | 3 | DNP3 | ~3,580 | ~18% |

| Feature | Mean | Std Dev | Min | Max | Skewness |
|---|---|---|---|---|---|
| Latency (ms) | 12.4 | 3.7 | 2.1 | 45.8 | 1.83 |
| Throughput (Mbps) | 4.6 | 1.9 | 0.3 | 12.1 | 0.72 |
| Packet Loss (%) | 0.8 | 0.6 | 0.0 | 4.2 | 2.14 |
| Jitter (ms) | 2.1 | 1.3 | 0.1 | 11.7 | 2.47 |
| Bandwidth Util. (%) | 38.2 | 14.7 | 3.5 | 78.6 | 0.41 |
| TotalBytesIn | 24,580 | 8,430 | 1,240 | 62,100 | 0.89 |
| TotalBytesOut | 18,920 | 7,110 | 890 | 51,300 | 0.94 |
| TotalPacketsIn | 187 | 64 | 12 | 478 | 0.76 |
| TotalPacketsOut | 142 | 53 | 8 | 389 | 0.81 |
| UniqueSourceIPs | 4.2 | 1.1 | 1 | 9 | 0.63 |
| FlowCount | 6.8 | 2.3 | 1 | 18 | 0.71 |
| AvgFlowDuration (s) | 1.87 | 0.42 | 0.3 | 3.9 | 0.38 |
| Modbus % | 34.1 | 8.7 | 0.0 | 100.0 | 0.52 |
| MQTT % | 41.3 | 9.2 | 0.0 | 100.0 | −0.31 |
| DNP3 % | 24.6 | 7.8 | 0.0 | 100.0 | 0.44 |

| Model | Precision | Recall |
|---|---|---|
| CNN-LSTM (proposed) | 0.967 ± 0.012 | 0.953 ± 0.014 |
| Isolation Forest | 0.921 ± 0.018 | 0.894 ± 0.021 |
| LSTM-only | 0.948 ± 0.015 | 0.931 ± 0.017 |
| CNN-only | 0.932 ± 0.019 | 0.907 ± 0.022 |

| Model | Modbus Flood Recall | MQTT Storm Recall | DNP3 Flood Recall |
|---|---|---|---|
| CNN-LSTM | 0.981 ± 0.008 | 0.957 ± 0.013 | 0.923 ± 0.019 |
| Isolation Forest | 0.942 ± 0.016 | 0.897 ± 0.024 | 0.843 ± 0.028 |
| LSTM-only | 0.968 ± 0.011 | 0.939 ± 0.016 | 0.887 ± 0.023 |
| CNN-only | 0.951 ± 0.014 | 0.912 ± 0.020 | 0.857 ± 0.026 |

| Window Size (ticks) | Precision | Recall |
|---|---|---|
| 10 | 0.934 ± 0.021 | 0.917 ± 0.024 |
| 20 (default) | 0.967 ± 0.012 | 0.953 ± 0.014 |
| 30 | 0.971 ± 0.011 | 0.949 ± 0.015 |
| 40 | 0.969 ± 0.013 | 0.944 ± 0.017 |

| Percentile | Precision | Recall |
|---|---|---|
| 90th | 0.891 ± 0.023 | 0.978 ± 0.009 |
| 95th | 0.928 ± 0.017 | 0.971 ± 0.011 |
| 97th | 0.947 ± 0.014 | 0.964 ± 0.013 |
| 99th | 0.961 ± 0.013 | 0.958 ± 0.014 |
| 99.5th (default) | 0.967 ± 0.012 | 0.953 ± 0.014 |
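The percentile sweep above trades recall for precision as the threshold rises. A minimal sketch of percentile-based thresholding follows, assuming the threshold is taken from anomaly scores of normal training windows and that a window is flagged when its score exceeds the threshold. The helper names and the linear-interpolation percentile are illustrative choices, not details given in the table.

```python
import math
from typing import Sequence, Tuple


def percentile(values: Sequence[float], q: float) -> float:
    """q-th percentile (0 to 100) with linear interpolation between ranks."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100.0
    lower, upper = math.floor(pos), math.ceil(pos)
    return ordered[lower] + (ordered[upper] - ordered[lower]) * (pos - lower)


def precision_recall(scores: Sequence[float], labels: Sequence[int],
                     threshold: float) -> Tuple[float, float]:
    """Binary precision and recall; label 1 marks an attack window."""
    tp = fp = fn = 0
    for score, label in zip(scores, labels):
        flagged = score > threshold
        if flagged and label:
            tp += 1
        elif flagged:
            fp += 1
        elif label:
            fn += 1
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall
```

Under these assumptions, a 99.5th-percentile threshold flags roughly 0.5% of normal training windows by construction, which is consistent with the default row having the highest precision in the sweep.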

| Model | Parameters | Model Size (MB) | Training Time/Fold | Inference Time/Window |
|---|---|---|---|---|
| CNN-LSTM | ~243K | ~1.0 | ~8 min | ~2 ms |
| Isolation Forest | N/A | ~15 | ~30 s | ~0.1 ms |
| LSTM-only | ~201K | ~0.8 | ~5 min | ~1.5 ms |
| CNN-only | ~13K | ~0.05 | ~2 min | ~0.5 ms |
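The reported parameter counts can be cross-checked with a short calculation. The sketch below rests on several assumptions the source does not confirm: five input features per tick for both branches, the PyTorch `nn.LSTM` parameter layout (two bias vectors per layer), a linear projection from 64 pooled channels to the 96-dimensional CNN output, no normalization layers, and 32-bit weights. It shows one configuration that agrees with the table, not necessarily the authors' exact architecture.

```python
def lstm_params(input_size: int, hidden_size: int, num_layers: int) -> int:
    """Parameters of a stacked unidirectional LSTM (PyTorch layout assumed)."""
    total = 0
    for layer in range(num_layers):
        in_features = input_size if layer == 0 else hidden_size
        # Four gates, each with input weights, recurrent weights, two biases.
        total += 4 * (hidden_size * in_features
                      + hidden_size * hidden_size
                      + 2 * hidden_size)
    return total


def conv1d_params(in_channels: int, out_channels: int, kernel: int) -> int:
    """Weights plus biases of one 1-D convolution layer."""
    return in_channels * out_channels * kernel + out_channels


def linear_params(in_features: int, out_features: int) -> int:
    """Weights plus biases of one fully connected layer."""
    return in_features * out_features + out_features


# Assumed: 5 input features, hidden size 128, 2 layers.
lstm_branch = lstm_params(5, 128, 2)                      # 201,216
# Assumed: Conv1d 5->32 and 32->64 (kernel 3), then Linear 64->96.
cnn_branch = (conv1d_params(5, 32, 3) + conv1d_params(32, 64, 3)
              + linear_params(64, 96))                    # 12,960
# Fusion head: 224 -> 128 -> 5, as in the hyperparameter table.
fusion_head = linear_params(224, 128) + linear_params(128, 5)  # 29,445

total_params = lstm_branch + cnn_branch + fusion_head     # 243,621
size_mb = total_params * 4 / 1e6                          # float32, about 0.97 MB
```

Under these assumptions the three totals round to the ~201K, ~13K, and ~243K figures in the table, and the float32 footprint rounds to the reported ~1.0 MB.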

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).