2. Introduction

Artificial neural networks rely fundamentally on nonlinear activation functions to learn complex representations. Traditionally, standard activations, such as the Rectified Linear Unit (ReLU) or the hyperbolic tangent (Tanh), apply a fixed mathematical transformation identically across nodes in a network layer.(Nair and Hinton, 2010) However, in complex biological systems, information processing is rarely rigid; neurons can dynamically modulate their responses to regulate information flow, as observed in sensory systems such as the retina.(Perlman and Normann, 1998; Sato and Kefalov, 2025) Inspired by this principle, an ideal trainable activation function should be able to modulate the output signal of each node by learning whether the node should become more sensitive or less sensitive to specific input ranges according to the task.

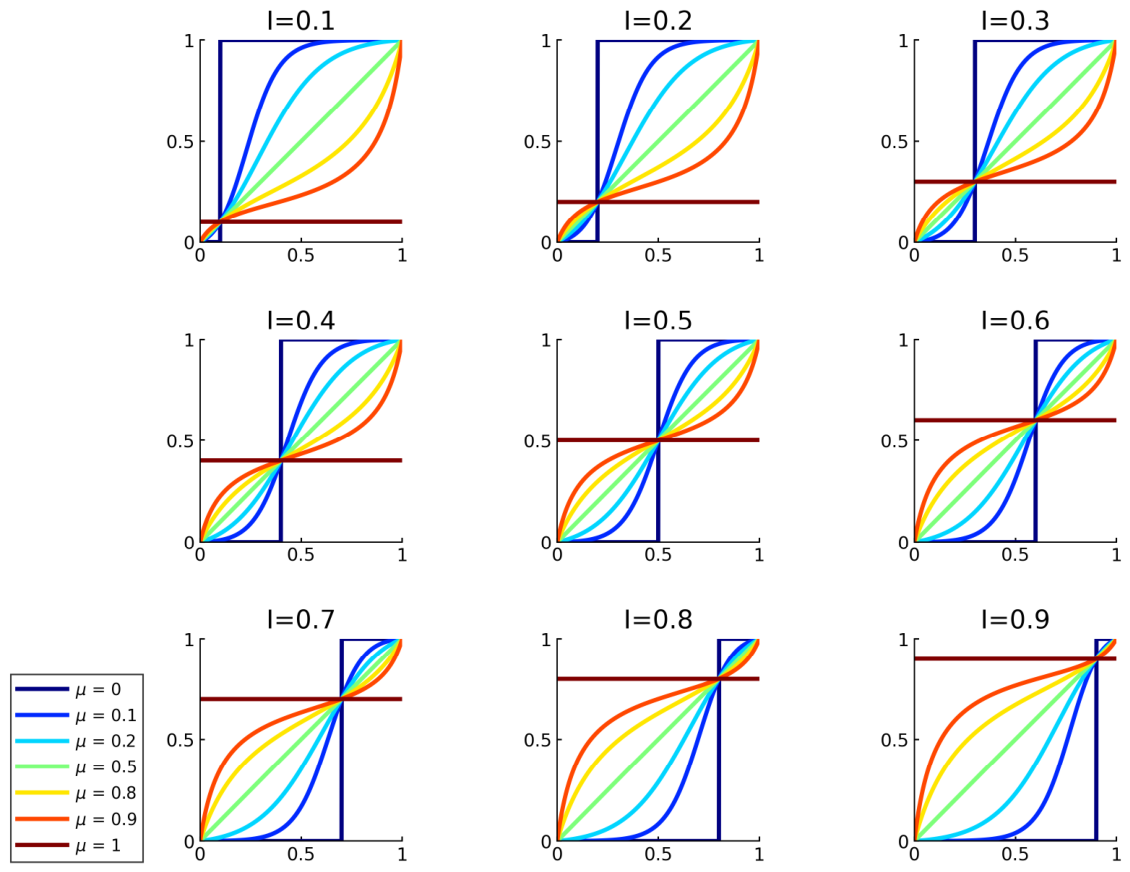

To address this need for flexible signal modulation, the Cannistraci–Muscoloni–Gu Generalized Logistic–Logit Function (CMG-GLLF) was recently introduced.(Gu et al., 2025) CMG-GLLF is a trainable activation function that allows explicit and independent control over steepness, asymmetry, and exact reachable boundaries on both the x- and y-axes. By learning these parameters during backpropagation, CMG-GLLF modulates the signal sensitivity of each node. The learned logistic curves can sharpen the input distribution by increasing local sensitivity in informative regions. Conversely, the learned logit curves can compress differences across selected input ranges, reducing the node’s sensitivity to variation.

Because this generalized function can be deployed at various stages of a neural network, it is important to establish clear terminology regarding its application. In this study, we use the term “Input Feature Modulator” (IFM) specifically to indicate the application of the CMG-GLLF to the input layer of a network. When applied to the hidden layers of a network, it is referred to as a hidden-layer activation function. Regardless of whether it is deployed as an IFM at the input layer or as an activation function in the hidden layers, the underlying mechanism is identical: it is a trainable activation function designed to dynamically modulate the node input representation for better model performance.

Despite these theoretical advantages, the original implementation of the CMG-GLLF (Gu et al., 2025) has practical limitations. Because the generalized logit phase of the curve lacked an explicit analytical expression, the previous implementation relied on an implicit approximation algorithm to invert the logistic curve. This implicit approximation not only increases computational overhead but also requires the implicit function theorem to compute approximate gradients, which can lead to numerical instability during backpropagation. Consequently, its application was limited to simple structures such as multi-layer perceptrons (MLPs), and it could not be tested on more complex architectures.
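To make the cost of implicit inversion concrete, the following is a minimal sketch under stated assumptions. The curve `g` is a hypothetical stand-in (a mixture of two logistics), not the CMG-GLLF itself, and bisection is assumed here only as one example of an iterative inversion routine; the algorithm used in the original implementation is not specified in this section. The gradient of the inverse is obtained from the implicit function theorem as 1 / g'(y*).

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical stand-in curve (NOT the CMG-GLLF): a mixture of two logistics.
# It is strictly increasing with range (0, 1) and has no elementary inverse.
def g(y, k1=1.0, k2=3.0, w=0.5):
    return w * sigmoid(k1 * y) + (1.0 - w) * sigmoid(k2 * y)


def g_prime(y, k1=1.0, k2=3.0, w=0.5):
    s1, s2 = sigmoid(k1 * y), sigmoid(k2 * y)
    return w * k1 * s1 * (1.0 - s1) + (1.0 - w) * k2 * s2 * (1.0 - s2)


def invert_implicit(x, lo=-50.0, hi=50.0, iters=100):
    """Solve g(y) = x for y by bisection (assumed iterative scheme)."""
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if g(mid) < x:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def invert_implicit_grad(x):
    """dy/dx via the implicit function theorem: 1 / g'(y*) at the solution y*."""
    y_star = invert_implicit(x)
    return 1.0 / g_prime(y_star)
```

In this sketch every input value costs up to 100 evaluations of `g`, and the gradient depends on how accurately the solver located y*, which illustrates why an iterative inverse can be both slow and numerically delicate inside backpropagation.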

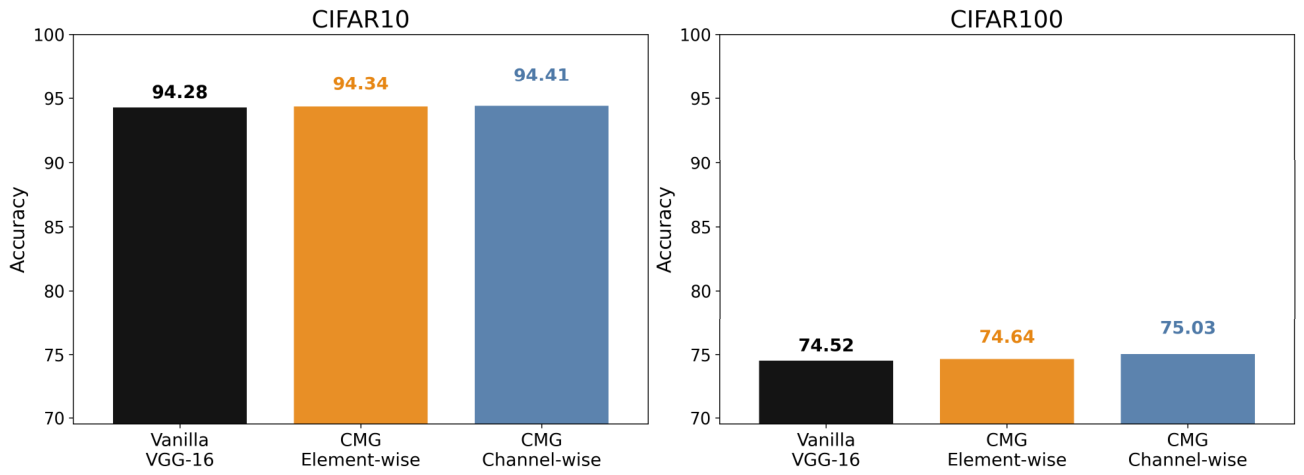

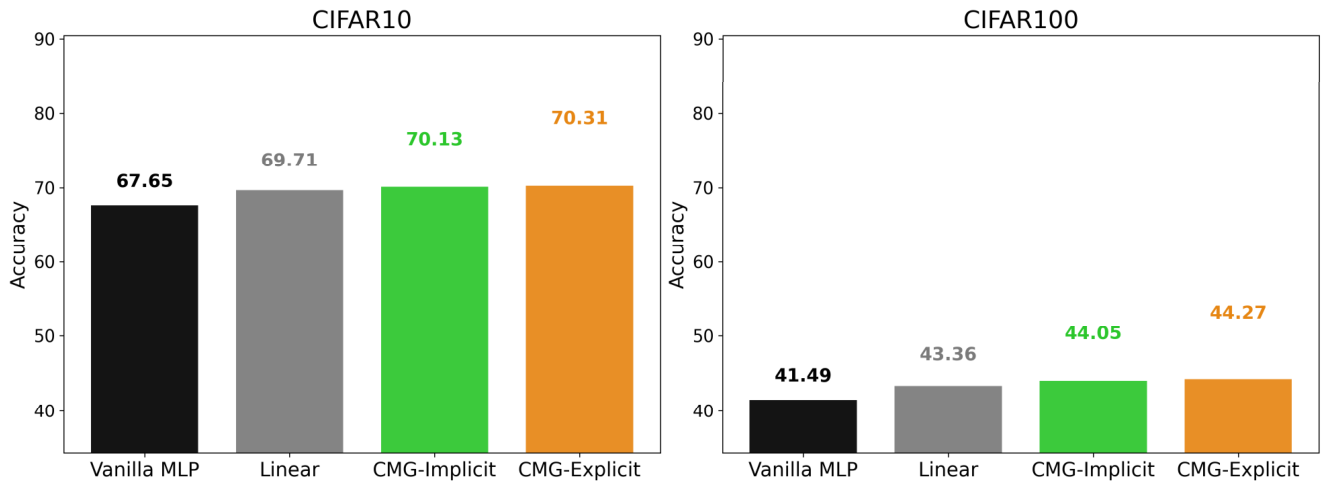

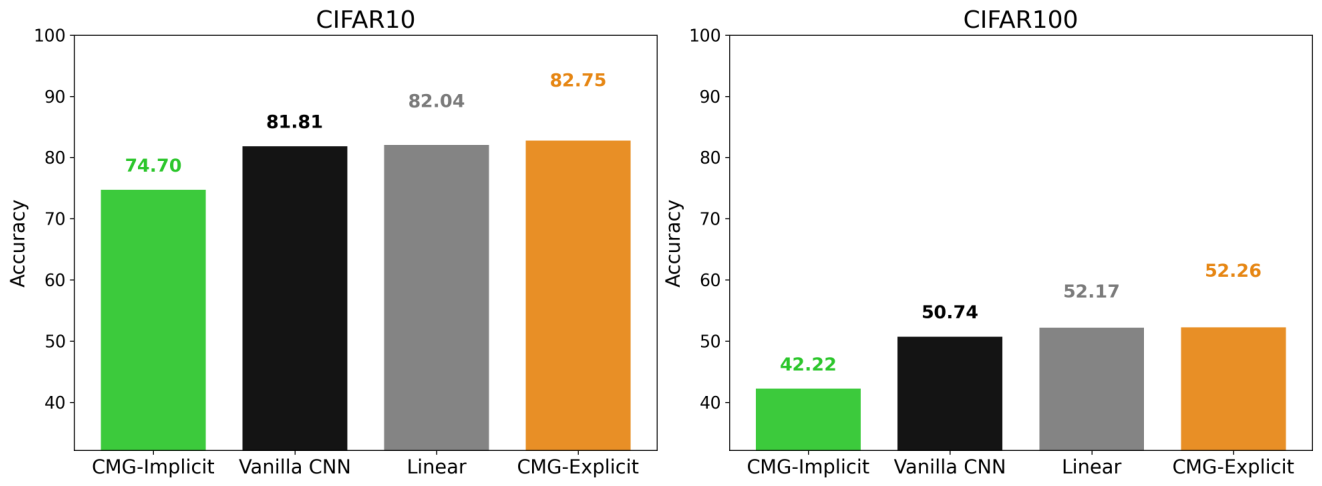

In this study, we overcome these bottlenecks by deriving a fully explicit and differentiable formulation of the CMG-GLLF using a one-step Newton’s method approximation, which we refer to as CMG-Explicit.(Kelley, 2003) This formulation eliminates the need for implicit inversion and implicit-gradient approximation, thereby resolving the numerical-instability issues of the previous CMG-Implicit implementation while reducing GPU memory and training-time overhead to near-vanilla-network levels. Enabled by this scalable formulation, we systematically evaluate CMG-Explicit as both an input feature modulator and a hidden-layer activation function. Across MLPs, simple CNNs, and VGG-16 on CIFAR-10/100, CMG-Explicit, used as an IFM, improves performance over vanilla networks and linear modulators, with the channel-wise VGG-16 strategy achieving strong gains using only six additional learnable parameters.(Simonyan and Zisserman, 2015)
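The idea of replacing an iterative inverse with a single Newton step can be sketched as follows. This is an illustration only: the curve `g` is a hypothetical stand-in (a mixture of two logistics) rather than the CMG-GLLF, and the closed-form starting point `initial_guess` is an assumption made for this example, not the initialization derived in this work. One Newton update, y1 = y0 - (g(y0) - x) / g'(y0), is a fixed composition of elementary operations, so it can be differentiated directly without any solver.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical stand-in curve (NOT the CMG-GLLF): a mixture of two logistics.
def g(y, k1=1.0, k2=3.0, w=0.5):
    return w * sigmoid(k1 * y) + (1.0 - w) * sigmoid(k2 * y)


def g_prime(y, k1=1.0, k2=3.0, w=0.5):
    s1, s2 = sigmoid(k1 * y), sigmoid(k2 * y)
    return w * k1 * s1 * (1.0 - s1) + (1.0 - w) * k2 * s2 * (1.0 - s2)


def initial_guess(x, k1=1.0, k2=3.0, w=0.5):
    """Assumed closed-form start: logit of x scaled by the mean slope."""
    k_mean = w * k1 + (1.0 - w) * k2
    return math.log(x / (1.0 - x)) / k_mean


def invert_one_step(x):
    """Approximate inverse of g: one Newton update from the closed-form guess."""
    y0 = initial_guess(x)
    return y0 - (g(y0) - x) / g_prime(y0)


if __name__ == "__main__":
    for x in (0.3, 0.8):
        y0, y1 = initial_guess(x), invert_one_step(x)
        # Residual |g(y) - x| before and after the single Newton step.
        print(x, abs(g(y0) - x), abs(g(y1) - x))
```

The result is an approximation rather than an exact inverse; its accuracy depends on how close the starting point is to the true solution, which is why the choice of initialization matters in a one-step scheme.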

To evaluate CMG-Explicit as a hidden-layer activation function beyond data-driven image classification, physics-informed neural networks (PINNs) provide a complementary benchmark: multilayer perceptrons are the most common neural architecture in PINN frameworks, and Tanh is widely used and often reported to provide strong and stable performance in PINN applications.(Raissi et al., 2019; Zhao et al., 2024; Fan and Chen, 2026) This makes PINNs a natural setting to evaluate whether CMG-GLLF, a generalized and trainable logistic-logit function, can learn task-adaptive transformations beyond standard choices.

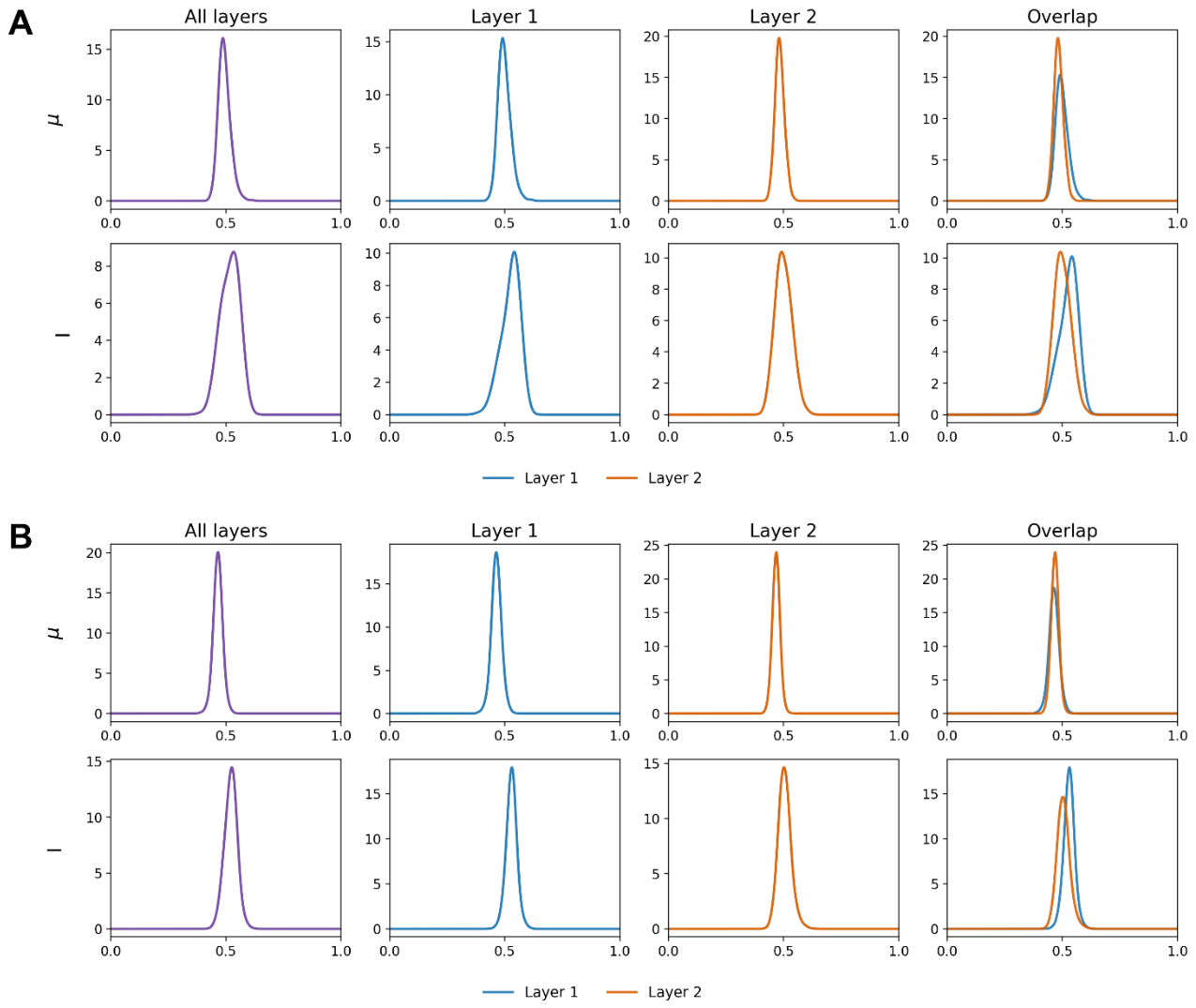

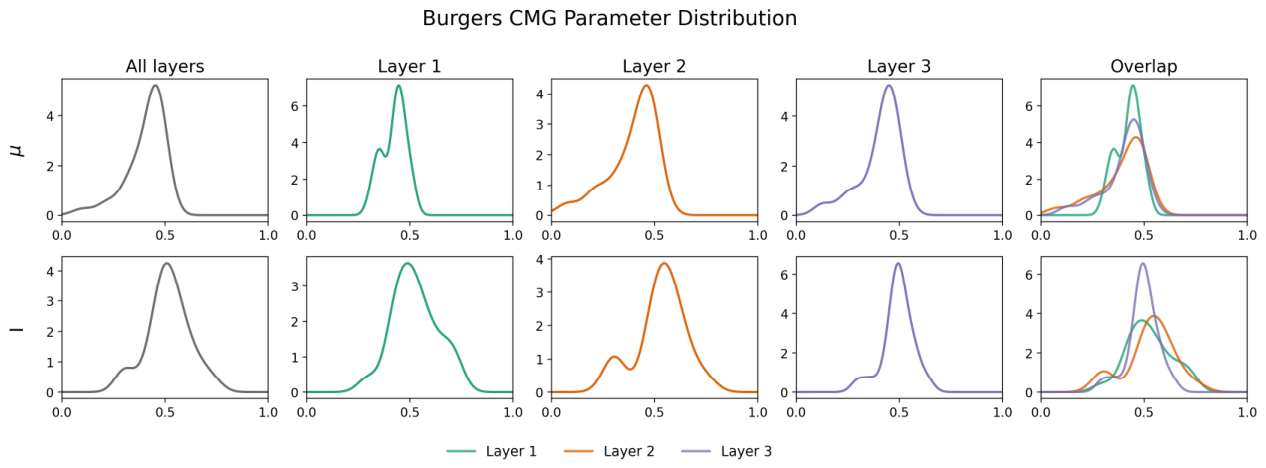

We demonstrate that, when used as a hidden-layer activation function, CMG-Explicit provides explainable functional adaptation. In classical image-classification tasks, the learned CMG-Explicit shape converges toward ReLU-like behavior and thus achieves classification accuracy similar to ReLU, whereas in PINNs it learns more diverse activation shapes that outperform the standard Tanh baseline across multiple PINN benchmarks. These results highlight the explainability potential of CMG-Explicit: by analyzing the parameter distributions learned by the network, researchers can show empirically what functional behavior the nodes adopt to solve a given task. Therefore, the value of the CMG-GLLF lies not only in improving predictive performance but also in its ability to explain the performance gains obtained from node-level activation modulation in neural networks.