3. Methodology

3.1. Problem Definition and Unit of Analysis

The problem addressed is the lack of a measurable and consistent way to operationalise likelihood in cybersecurity risk assessment from heterogeneous evidence sources. The objective is not to claim an exact frequentist probability of cyber incidents, but to define an evidence-based indicator that can support monitoring, control determination and prioritization, and comparison across organizations and repeated observation periods.

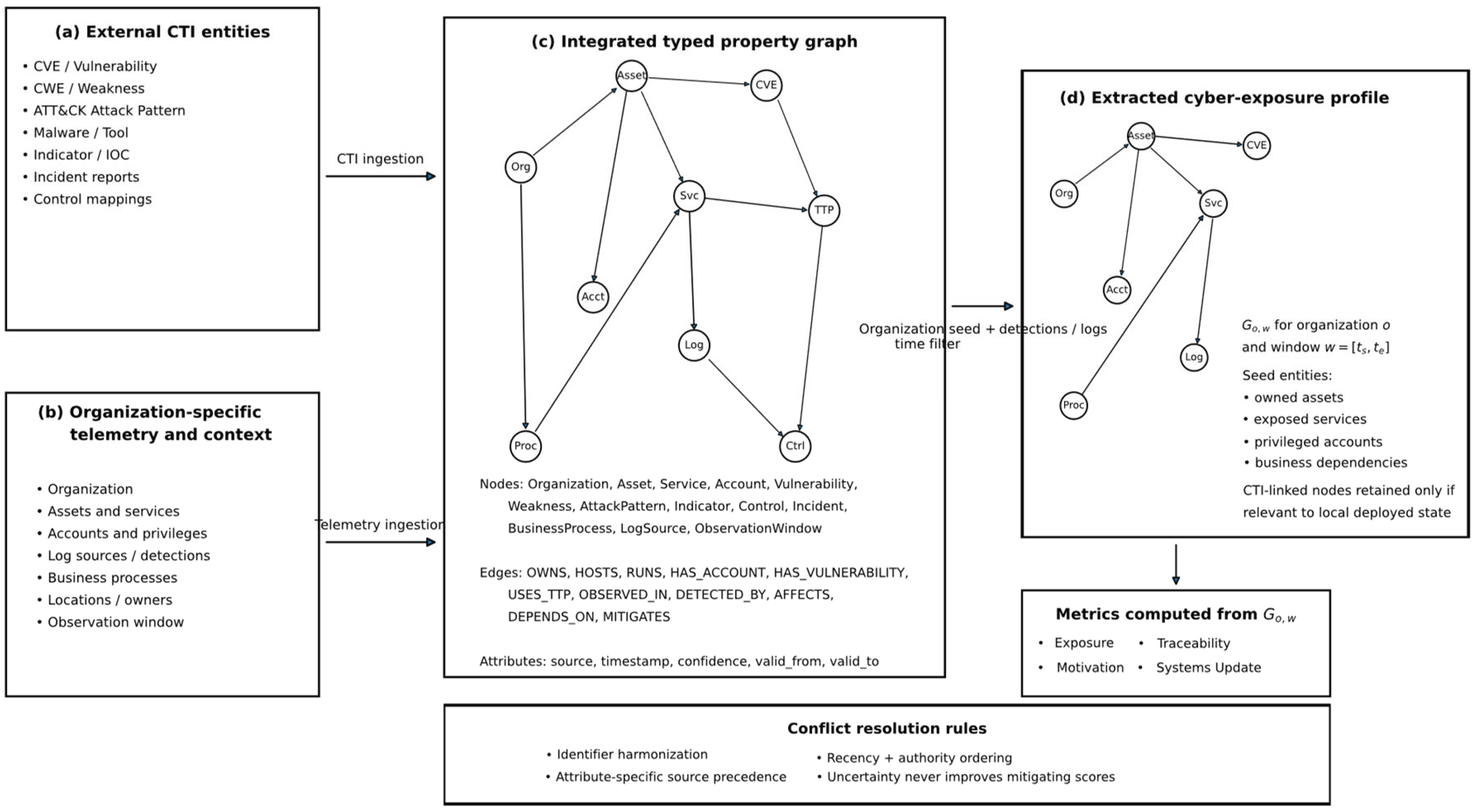

A first layer of the graph is built from structured information about vulnerabilities, weaknesses, adversary behaviour, and defensive techniques. A second layer provides the observations required to compute the metrics in Table 1: asset inventories, configuration-management records, identity and access data, vulnerability scans, operating-system and application logs, network telemetry, patch status, and business-context attributes. The framework defines source classes rather than a mandatory tooling stack so that it can be instantiated across different sectors and maturity levels. Incident data are ingested mainly for grounding, evaluation, and calibration, not as the sole basis for the likelihood score. During ingestion, records are time-bounded, standardized, deduplicated, and mapped to common identifiers; source quality is recorded explicitly so that metric aggregation remains robust when evidence is weaker.

3.2. Framework Artifacts and Workflow

The methodology is organized as an artefact-based workflow consistent with design-science research (Hevner et al., 2004; Peffers et al., 2007). Each stage transforms heterogeneous evidence into a more structured representation until a bounded likelihood indicator and associated control priorities can be computed.

Artefact A1 is a unified cyber risk data model that defines entity types, attributes, and relations required to represent assets, users, services, vulnerabilities, techniques, indicators, controls, incidents, and contextual metadata.

Artefact A2 is the cyber-exposure profile: the organization- and time-bounded subgraph extracted from A1 for the assessed case.

Artefact A3 is the metric registry, specifying how observable evidence is transformed into measurable variables through explicit gathering methods, units, orientations, and normalization rules.

Artefact A4 is the likelihood computation rule, which maps normalized component values to a bounded score while preserving monotonicity and auditability.

Artefact A5 is the control determination output, translating measured conditions into actionable defensive recommendations and closing the loop between measurement and control selection.

The workflow proceeds from source acquisition and standardization to graph construction, profile extraction, metric computation, likelihood scoring, and control interpretation. Although presented sequentially, it is iterative: each intervention cycle adds new data, updated infrastructure conditions, and new outcome evidence, enabling successive approximations rather than one-time assessment.

3.3. Data Sources and Ingestion

Artefact A1 feeds from structured information about vulnerabilities, weaknesses, adversary behaviour, defensive techniques, and exploited conditions. Typical sources include CVE, CWE, NVD, exploited-vulnerability feeds, ATT&CK, and D3FEND, selected because they provide stable identifiers and structured mappings that support graph integration and control reasoning (Kaloroumakis & Smith, 2021; Strom et al., 2020). Where feasible, cyber threat intelligence objects are represented using STIX 2.1 and exchanged using TAXII 2.1 (OASIS Open, 2021a, 2021b).

Several cybersecurity datasets were surveyed as candidates for the incident-narrative corpus (Aldribi et al., 2018, 2019, 2020; Sarker et al., 2020), including the gfek Real-CyberSecurity-Datasets collection, VERIS Community Database, Hackmageddon, APTnotes, and CASIE/CySecED corpora. After running the feature identification and merging process, the following corpora were selected and processed following Abbiati et al. (2021): the VERIS Community Database (VCDB; github.com/vz-risk/VCDB), providing structured JSON incident records each containing a free-text summary field encoding what happened, how, and to which assets; and the Hackmageddon timeline collection (hackmageddon.com), providing bi-weekly curated incident entries with short prose descriptions derived from public news sources and security blogs (Passeri, 2011–2024). Both sources are defender-side, incident-level, and publicly accessible.

Heterogeneous operational sources were also surveyed, including IDS alerts, operating-system logs, PCAPs, malware binaries, malicious-URL datasets, and sensor logs. Of these, the LANL, HIKARI-2021, and ISOT-CID datasets consistently describe the pre-incident organizational conditions needed to explain why an event was more or less likely. These operational datasets were ingested in their native structure and normalized into observation windows by organization, host, asset class, or time interval; the descriptions of log events, ports, and access-control records were retrieved from the dataset documentation to reconstruct the corresponding narratives. LANL netflow contributes device, port, protocol, traffic-volume, and remote-access indicators, while LANL Windows host events contribute successful and failed logons, Kerberos ticket requests, credential validation, explicit credential use, special privileges, session identifiers, process starts, and process ends (Turcotte et al., 2018). HIKARI-2021 is processed as labeled intrusion-detection traffic to derive scan-like behavior, brute-force activity, service targeting, port concentration, and exploit-oriented traffic features (Ferriyan et al., 2022). ISOT-CID is processed as cloud-security telemetry, extracting indicators from VM-level and hypervisor-level traffic, system logs, performance data, and system calls where available (Aldribi et al., 2019).

The merged corpus comprised N = 57,105 records before validation filtering. Near-duplicate removal used MinHash LSH (Jaccard ≥ 0.92, character 5-grams, 256 permutations), eliminating 1,803 records (3.2%), with cross-source duplicates — the same high-profile incident receiving a summary entry in VCDB and a timeline entry in Hackmageddon — accounting for the majority of removals. A known coverage limitation is that Hackmageddon over-represents high-profile, publicly disclosed incidents, inflating motivation- and exposure-relevant vocabulary relative to internal-only events. The NLP pipeline is used here for construct validation rather than incidence estimation, so this skew affects the prominence of specific terms within clusters rather than the existence or separability of the clusters themselves.
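The near-duplicate criterion can be illustrated with a minimal sketch, assuming character 5-gram shingles and 256 salted hash functions standing in for permutations. The function names and the use of BLAKE2b are illustrative assumptions; the LSH banding step that avoids all-pairs comparison is omitted, so this only shows how a pair of narratives would be compared against the 0.92 threshold.

```python
import hashlib

def shingles(text, n=5):
    """Character n-grams over lowercased, whitespace-collapsed text."""
    text = " ".join(text.lower().split())
    return {text[i:i + n] for i in range(max(1, len(text) - n + 1))}

def jaccard(a, b):
    """Exact Jaccard similarity between two shingle sets."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def minhash(shingle_set, num_perm=256):
    """MinHash signature: one salted 64-bit hash per simulated permutation."""
    signature = []
    for seed in range(num_perm):
        salt = seed.to_bytes(8, "little")
        signature.append(min(
            int.from_bytes(
                hashlib.blake2b(s.encode("utf-8"), digest_size=8, salt=salt).digest(),
                "big",
            )
            for s in shingle_set
        ))
    return signature

def estimated_jaccard(sig_a, sig_b):
    """Fraction of signature positions that agree approximates Jaccard similarity."""
    return sum(x == y for x, y in zip(sig_a, sig_b)) / len(sig_a)

def is_near_duplicate(text_a, text_b, threshold=0.92):
    """Flag a pair whose estimated Jaccard similarity meets the threshold."""
    sig_a, sig_b = minhash(shingles(text_a)), minhash(shingles(text_b))
    return estimated_jaccard(sig_a, sig_b) >= threshold
```

With 256 hash functions the standard error of the estimate is at most about 0.03, which is why a threshold as tight as 0.92 remains usable in practice.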

3.4. Feature Extraction and Clustering

The incident corpus comprised N = 57,105 incident records after basic validation. A MinHash LSH near-duplicate filter (Jaccard ≥ 0.92, char 5-grams, 256 permutations) removed 1,803 records (3.2%), leaving 55,302 records. After the NLP normalization length filter (≥ 4 tokens), the final modelling set comprised N = 55,107 incident narratives. The median incident narrative length was 73 tokens (IQR: 16–80; P95 = 86) after tokenization.

A deterministic text normalization pipeline was applied to the incident-description fields, consistent with established NLP practice (Manning et al., 2008; Salton & Buckley, 1988). The pipeline performed Unicode normalization, lowercasing, and rule-based tokenization that preserved cybersecurity-relevant identifiers (CVE IDs, ATT&CK technique IDs, port numbers). High-signal entity types were replaced with typed placeholders (e.g., IPv4/IPv6 addresses → <IP>, URLs → <URL>, file hashes → <HASH>). Standard and domain-specific stopwords were removed (461 items total), while retaining discriminative security terms. Acronym expansion (1,124-entry dictionary) and collocation-based phrase promotion (10,000 accepted phrases) were applied to capture multi-word security concepts such as “privilege escalation” and “lateral movement” as compound tokens. Light lemmatization reduced inflectional variance while exempting preserved identifiers.
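A reduced sketch of the normalization step is given below, under stated assumptions: the regular expressions are simplified illustrations (IPv4 only, MD5/SHA-1/SHA-256 hex digests only), and stopword removal, acronym expansion, phrase promotion, and lemmatization are omitted. It shows the ordering that matters, namely that typed placeholders are substituted before tokenization and that CVE and ATT&CK identifiers survive as single tokens.

```python
import re
import unicodedata

# Simplified patterns, assumed for illustration only.
URL_RE = re.compile(r"https?://\S+")
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
HASH_RE = re.compile(r"\b(?:[a-f0-9]{64}|[a-f0-9]{40}|[a-f0-9]{32})\b")

# Token alternatives are tried in order: placeholders, CVE IDs,
# ATT&CK technique IDs, then ordinary alphanumeric tokens.
TOKEN_RE = re.compile(r"<[A-Z]+>|cve-\d{4}-\d{4,}|t\d{4}(?:\.\d{3})?|[a-z0-9_]+")

def normalize(text):
    """Return the token list for one incident narrative."""
    text = unicodedata.normalize("NFKC", text).lower()
    text = URL_RE.sub(" <URL> ", text)    # URLs first, so embedded IPs are not split out
    text = IPV4_RE.sub(" <IP> ", text)
    text = HASH_RE.sub(" <HASH> ", text)
    return TOKEN_RE.findall(text)

tokens = normalize(
    "Attacker at 10.0.0.5 exploited CVE-2021-44228 (T1059.001) via http://evil.example/x"
)
```

Because every step is a pure function of the input string, the same narrative always yields the same token sequence, which is the property the pipeline relies on for reproducibility.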

3.5. TF-IDF Representation

Incident narratives were represented using the term-frequency–inverse document frequency (TF–IDF) model (Manning et al., 2008; Salton & Buckley, 1988). A smoothed IDF variant and sublinear term-frequency transform were used:

Each document vector was L2-normalized (||wd||2 = 1). The TF–IDF model used a word-level analyser, n-gram range (1,3), minimum document frequency min_df = 20, and maximum document frequency max_df = 0.90. After pruning, the effective vocabulary was V* = 25,022 features. On the final corpus of N = 55,107 incident narratives, the resulting sparse TF–IDF matrix contained 6,591,100 non-zero entries (density 0.478%; sparsity 99.522%). The mean number of non-zero features per narrative was 120 (median 112; 95th percentile 224), consistent with sparse representations of operational text that varies in length between short incident summaries and longer log-derived narratives. Three mechanisms stabilized the representation prior to dimensionality reduction: sublinear TF to temper repeated terms, IDF smoothing to prevent extreme weights for rare terms, and L2 normalization to ensure unit-length document vectors. Globally discriminative features in an illustrative run included tokens/phrases such as “coverage,” “correlation,” “logging,” “credential,” “alert,” “remote_access,” “unmanaged,” “ransomware_attack,” “data breach,” and “patch.”
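The three stabilizing mechanisms can be made concrete with a small sketch. It assumes the common smoothed form idf(t) = ln((1 + N) / (1 + df(t))) + 1 and the sublinear transform 1 + ln(tf); these specific formulas are an assumption about the variant used, and n-gram generation and the `min_df`/`max_df` pruning are left out for brevity.

```python
import math
from collections import Counter

def tfidf_vectors(docs):
    """docs: list of token lists. Returns one {term: weight} dict per document."""
    n_docs = len(docs)
    # Document frequency: number of documents containing each term.
    df = Counter(term for doc in docs for term in set(doc))
    # Smoothed IDF (assumed variant): rare terms cannot receive extreme weights.
    idf = {t: math.log((1 + n_docs) / (1 + d)) + 1.0 for t, d in df.items()}
    vectors = []
    for doc in docs:
        counts = Counter(doc)
        # Sublinear TF: repeated terms grow logarithmically, not linearly.
        weights = {t: (1.0 + math.log(c)) * idf[t] for t, c in counts.items()}
        # L2 normalization: every non-empty document becomes a unit vector.
        norm = math.sqrt(sum(w * w for w in weights.values()))
        vectors.append({t: w / norm for t, w in weights.items()} if norm else {})
    return vectors

docs = [["patch", "patch", "credential"], ["credential", "alert"], ["alert", "logging"]]
vecs = tfidf_vectors(docs)
```

In this toy corpus, "patch" outweighs "credential" in the first document because it is both repeated and rarer across documents.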

3.6. Dimensionality Reduction and Clustering

The TF–IDF matrix $X \in \mathbb{R}^{N \times V^*}$ was reduced via truncated singular value decomposition (SVD), using a randomized SVD solver with oversampling (p = 20) and power iterations to improve accuracy on large sparse inputs (Deerwester et al., 1990; Halko et al., 2011), explaining 37.2% of total variance. SVD was preferred over PCA because it operates efficiently on sparse matrices without requiring mean-centering, which can densify sparse matrices and materially increase computational cost. The rank-$k$ approximation is:

$$X \approx X_k = U_k \Sigma_k V_k^{\top}$$

where $U_k \in \mathbb{R}^{N \times k}$ contains the document factors, $\Sigma_k \in \mathbb{R}^{k \times k}$ the top $k$ singular values, and $V_k \in \mathbb{R}^{V^* \times k}$ the term factors. We set k = 150 latent dimensions, appropriate to the corpus scale and consistent with inspection of the singular value spectrum. Document embeddings $Z = U_k \Sigma_k$ were used as the reduced representation for downstream clustering.

After dimensionality reduction, incident narratives were clustered using spherical k-means, which minimizes cosine dissimilarity and is well-suited for term-weight and latent-semantic representations (Dhillon & Modha, 2001; Hornik et al., 2012), with k-means++ seeding for stability (Arthur & Vassilvitskii, 2007). Twenty-five independent initializations were run with a maximum of 300 iterations each; the solution with the lowest objective value (highest average within-cluster cosine) was retained. The number of clusters K was selected by evaluating K ∈ {2,3,…,12} using silhouette indices computed on the full corpus, repeated across five independent random sub-samples of 10,000 embeddings to estimate variability. The silhouette curve increased from K = 2 to K = 6 (peak score 0.2109 ± 0.0033) and then declined; K = 4 registered a local inflection in the curve (score 0.1932 ± 0.0015) and was selected on two grounds: it aligns with the four-construct theoretical framework, and analyst review of centroid-loading terms and exemplar narratives at K ∈ {4, 5, 6} confirmed that the K = 4 partition yields four internally coherent and interpretively distinct themes, whereas K = 5 and K = 6 primarily subdivide the motivation and exposure/systems update themes without adding new construct coverage. For K ∈ {4, 5, 6}, two analysts reviewed the top 30 centroid-loading terms per cluster and 20 exemplar incidents nearest to each centroid. Cluster coherence rates were 94% at K = 4, 88% at K = 5, and 86% at K = 6, confirming K = 4 as the preferred solution for downstream governance use.
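The clustering step alone can be sketched as follows, assuming dense embeddings held as Python lists. This is a didactic pure-Python version with k-means++-style seeding on cosine dissimilarity; the silhouette-based selection of K and the analyst review are not reproduced, and the function names are illustrative.

```python
import math
import random

def unit(v):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v] if n else list(v)

def cos(a, b):
    """Cosine similarity of two unit vectors (a plain dot product)."""
    return sum(x * y for x, y in zip(a, b))

def spherical_kmeans(points, k, n_init=25, max_iter=300, seed=0):
    """Return (labels, mean within-cluster cosine) of the best of n_init runs."""
    rng = random.Random(seed)
    pts = [unit(p) for p in points]
    best_labels, best_obj = None, -math.inf
    for _ in range(n_init):
        # Seeding: each new center is drawn with probability proportional
        # to its cosine dissimilarity from the nearest existing center.
        centers = [rng.choice(pts)]
        while len(centers) < k:
            d = [max(0.0, min(1.0 - cos(p, c) for c in centers)) for p in pts]
            if sum(d) <= 0:
                centers.append(rng.choice(pts))
            else:
                centers.append(rng.choices(pts, weights=d, k=1)[0])
        labels = None
        for _ in range(max_iter):
            new_labels = [max(range(k), key=lambda j: cos(p, centers[j])) for p in pts]
            if new_labels == labels:
                break  # assignments are stable
            labels = new_labels
            for j in range(k):
                members = [p for p, lab in zip(pts, labels) if lab == j]
                if members:
                    # Centroid is the re-normalized sum of member vectors.
                    centers[j] = unit([sum(col) for col in zip(*members)])
        obj = sum(cos(p, centers[lab]) for p, lab in zip(pts, labels)) / len(pts)
        if obj > best_obj:
            best_labels, best_obj = labels, obj
    return best_labels, best_obj
```

Keeping the run with the highest mean within-cluster cosine corresponds to the retention rule described above.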

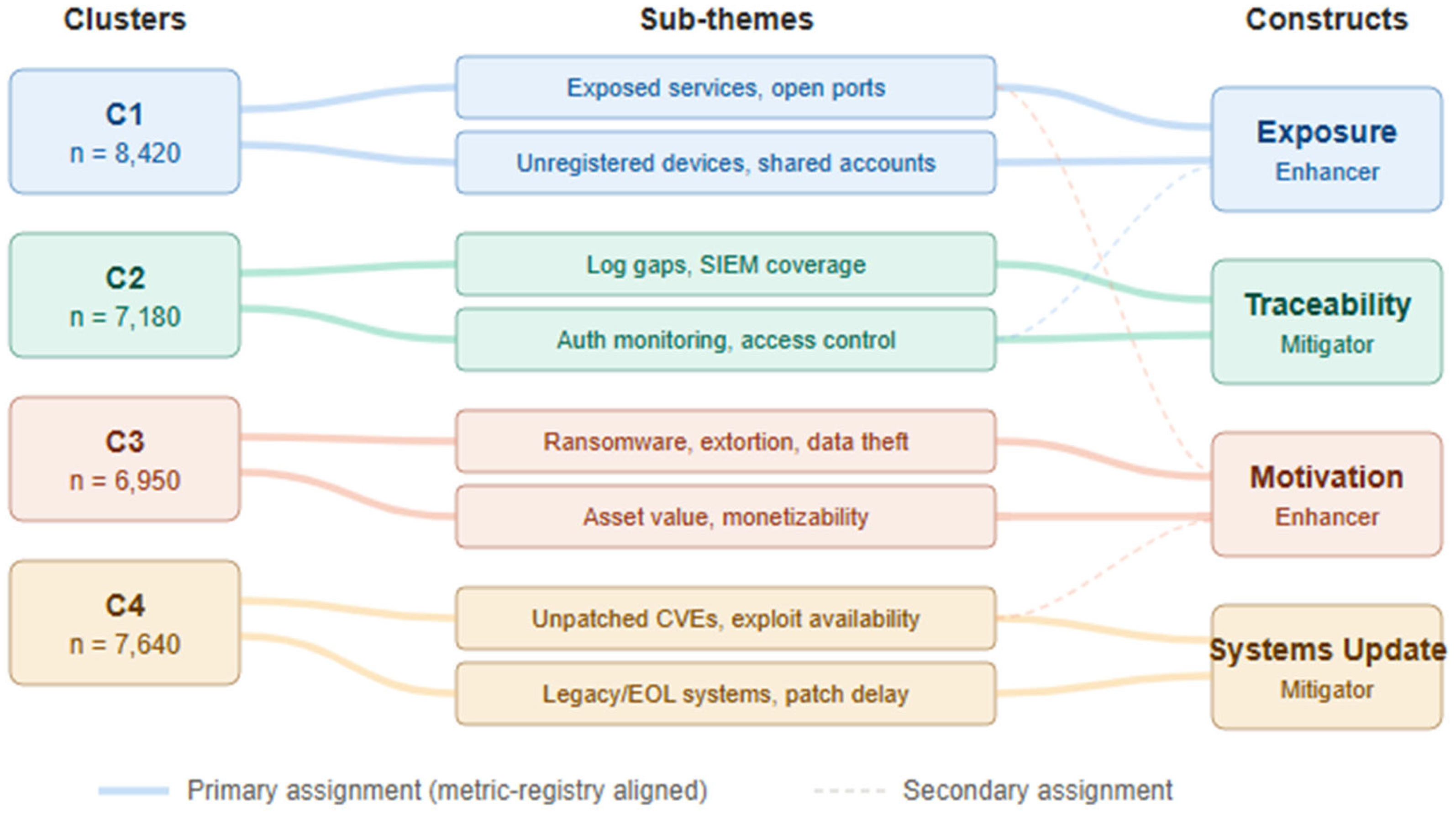

3.7. Cluster-to-Construct Mapping

The resulting four clusters were independently examined by two analysts. Each cluster’s top centroid-loading terms and twenty nearest-centroid exemplar narratives were used to assign a primary framework construct following a pre-specified metric-registry alignment rule: a cluster was assigned to the construct whose Table 1 metrics are most directly informed by the cluster’s top-loading terms. Inter-rater agreement on primary construct assignment was 100% for all four clusters (C1 → Traceability, C2 → Exposure, C3 → Motivation, C4 → Systems Update), and the construct-vocabulary overlap scores were unambiguous (C1: T=31 vs next-best E=0; C2: E=32 vs T=3; C3: M=19 vs E=7; C4: U=25 vs T=3). The only secondary-construct note concerned C3, where seven Exposure-adjacent terms (e.g., “attack,” “hacker,” “website”) were judged to reflect incident context rather than the operationalization of the exposure construct, leaving motivation unambiguous as primary.

Table 1b presents the full cluster-to-variable mapping with rationale; Table 1c presents exemplar incident narratives nearest to each centroid.

This mapping constitutes empirical validation of the constructs: exposure summarizes attack-surface and exploitable-access conditions; traceability summarizes the ability to observe, record, and correlate relevant activity; motivation summarizes the attractiveness of the target from the attacker’s perspective; and systems update summarizes patching and technology-refresh posture.

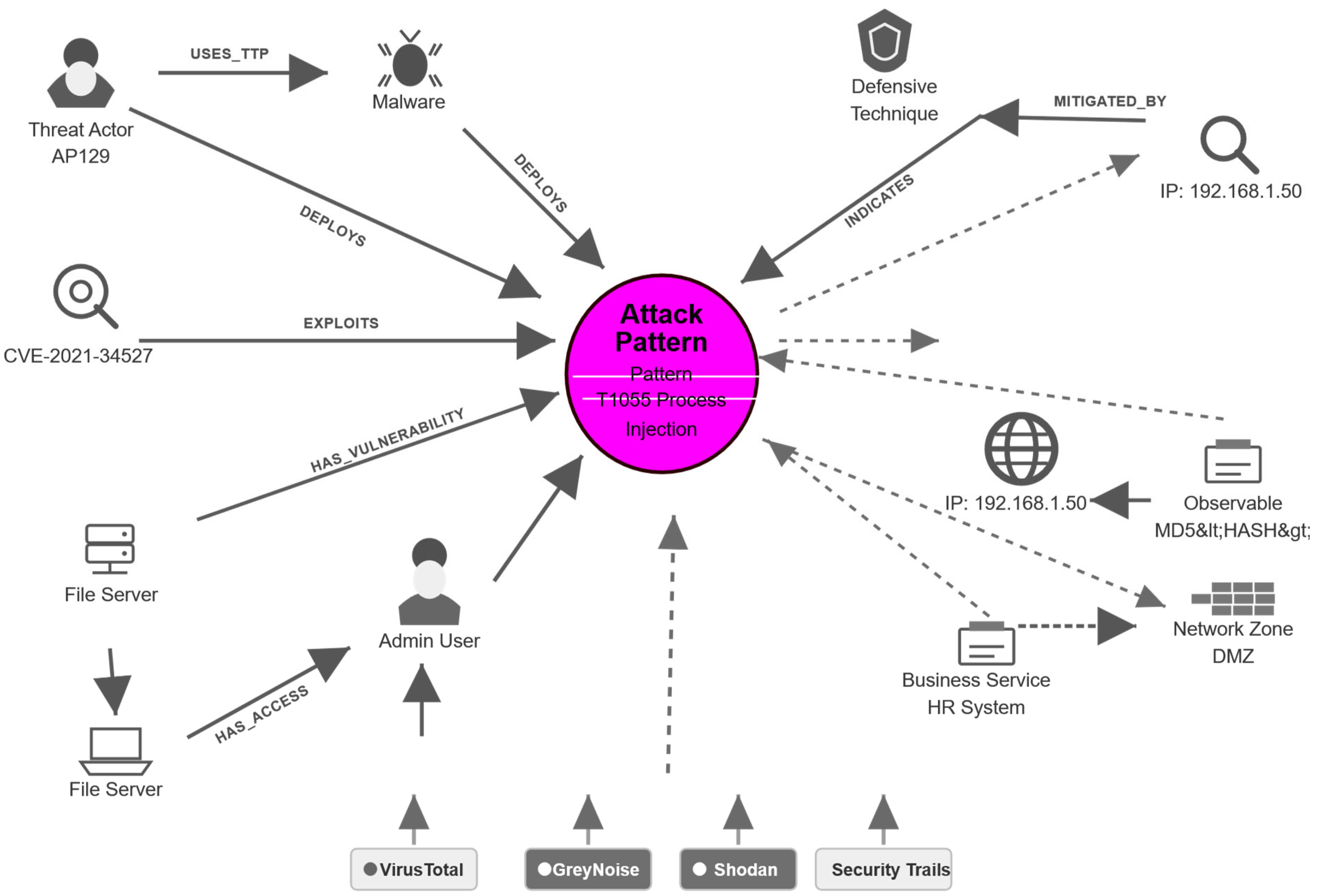

3.8. Knowledge Graph Construction

The merged dataset was formatted in a graph database using variables for measuring likelihood derived from the obtained clusters. The unified graph is defined formally as a typed property graph

$$G = (V, E, \tau_V, \tau_E, A)$$

where $V$ is the set of nodes, $E$ the set of directed typed edges, $\tau_V$ and $\tau_E$ assign node and edge types, and $A$ stores attributes including identifiers, timestamps, provenance, and confidence. The schema is aligned with STIX 2.1/TAXII 2.1 to maximize interoperability (OASIS Open, 2021a, 2021b).

Nodes represent: threat entities (ThreatActor, IntrusionSet, Campaign); TTP entities (AttackPattern, Malware, Tool); vulnerability entities (Vulnerability/CVE, Weakness/CWE); observable/indicator entities; defensive entities (DefensiveTechnique/D3FEND, Control, DetectionRule); and organization overlay entities (Asset, Account, BusinessService, NetworkZone). Edges represent identified relationships (e.g., USES_TTP, DEPLOYS, EXPLOITS, INDICATES), control mappings (MITIGATED_BY), and event propagation paths (OBSERVED_ON, HAS_VULNERABILITY).

The baseline graph contains approximately 1,184,200 vertices and 3,062,500 edges. In terms of vertex composition, Observables account for 612,400 nodes, Vulnerabilities (CVE) for 214,600, Indicators for 146,800, Organization overlay entities (Asset/Account/Service/Zone) for 179,180, and ATT&CK AttackPatterns, Malware, Tool, IntrusionSet/Campaign/ThreatActor, and D3FEND DefensiveTechniques for the remainder. The principal edge types are INDICATES (1,020,000), OBSERVED_WITH (840,000), HAS_VULNERABILITY (520,000), USES_TTP (140,000), EXPLOITS (95,000), and MITIGATED_BY (84,500). The largest connected component covers 91.2% of vertices (mean degree 5.17; 99th percentile degree 248). High-degree hubs are CVEs, common observables, and ATT&CK technique nodes — expected in CTI graphs.

Figure 1 shows an example of the graph for a single attack pattern.

3.9. Metric Computation and Normalization

The metric registry (Artefact A3) converts the organization-specific profile into measurable variables. Each metric records its raw measure type, source, observation window, orientation, and normalization rule so that component scores remain reproducible (ISO/IEC, 2016; NIST, 2011).

Table 1 presents the complete metric registry.

Figure 2. Alluvial diagram for the clustering themes.


Raw metrics are normalized to the unit interval and directionally oriented: higher exposure and motivation indicate worse likelihood conditions, whereas higher traceability and systems update indicate stronger mitigating conditions. Where natural bounds are unavailable, the normalization range is fixed through policy thresholds or empirical study percentiles, and the choice is recorded in the computational trace.
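As an illustration, a bounded min-max normalization with an explicit orientation flag might look like the sketch below. The function name, the `invert` parameter, and the 0 to 90 day patch-latency range are hypothetical; the registry's actual normalization rules are those recorded in Artefact A3.

```python
def normalize_metric(raw, lower, upper, invert=False):
    """Scale a raw metric into [0, 1] using declared bounds.

    Values outside [lower, upper] are clipped. Set invert=True when a larger
    raw value means less of the construct (e.g. patch latency for Systems Update).
    """
    if upper <= lower:
        raise ValueError("upper bound must exceed lower bound")
    scaled = (raw - lower) / (upper - lower)
    scaled = min(1.0, max(0.0, scaled))
    return 1.0 - scaled if invert else scaled

# Hypothetical example: 45 days mean patch latency against a 0-90 day policy range.
systems_update_metric = normalize_metric(45, 0, 90, invert=True)
```

Clipping keeps the output bounded when an observation falls outside the declared policy range, at the cost of losing resolution beyond the bounds.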

Component scores are aggregated from their assigned metrics using a confidence-weighted mean:

$$S = \frac{\sum_i w_i \, c_i \, m_i}{\sum_i w_i \, c_i}$$

where $S$ is the component score, $m_i$ is the normalized metric value, $w_i$ its analytical weight, and $c_i$ a composite evidence-quality score computed from three declared dimensions. The first dimension is completeness, defined as the fraction of a metric’s required data fields populated from primary sources rather than imputed or absent. The second dimension is freshness, defined by the age of the source data relative to the observation window end-date, ranging from within-window to undated or older than 180 days. The third dimension is source authority, defined by the relationship between the data source and the construct being measured: canonical primary sources such as a CMDB for asset inventory or Active Directory for account data receive $s_i = 1.00$; secondary derived sources such as network-scan-inferred asset lists receive $s_i = 0.75$; tertiary or analyst-estimated sources receive $s_i = 0.50$; and unverified or assumed values receive $s_i = 0.20$. The composite score ensures that weakness in any single dimension degrades overall confidence without being offset by strength in another; for example, a complete and authoritative source that is six months stale is appropriately down-weighted. The scoring bands for all three dimensions are recorded in the metric registry (Artefact A3) alongside each metric’s raw value and normalization trace, ensuring that every $c_i$ is recoverable from declared source metadata rather than from undocumented practitioner judgement. Two conservative rules apply irrespective of $c_i$: because uncertainty in a mitigating metric cannot improve the aggregate score, missing logging coverage does not increase traceability, and absent patch records do not improve Systems Update.
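A minimal sketch of the aggregation follows. It assumes the component score is the sum of weight × confidence × value divided by the sum of weight × confidence, and that the composite confidence is the product of the three dimension scores; the product is one non-compensatory choice consistent with the description, not a confirmed formula. The conservative rules for mitigating metrics are not implemented here.

```python
def composite_confidence(completeness, freshness, authority):
    """Assumed multiplicative composite: a weak dimension cannot be offset by a strong one."""
    return completeness * freshness * authority

def component_score(metrics):
    """metrics: iterable of (m_i, w_i, c_i) tuples with m_i and c_i in [0, 1]."""
    metrics = list(metrics)
    denominator = sum(w * c for _, w, c in metrics)
    if denominator == 0:
        raise ValueError("no usable evidence: all weights or confidences are zero")
    return sum(w * c * m for m, w, c in metrics) / denominator

# Two equally weighted metrics; the second rests on stale evidence (confidence 0.5).
score = component_score([(0.8, 1.0, 1.0), (0.2, 1.0, 0.5)])
```

In this example the unweighted mean would be 0.5, whereas the confidence-weighted mean is 0.6, because the better-evidenced metric counts for more.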

In the default configuration, metric weights are equal within each construct unless a justified alternative is reported.

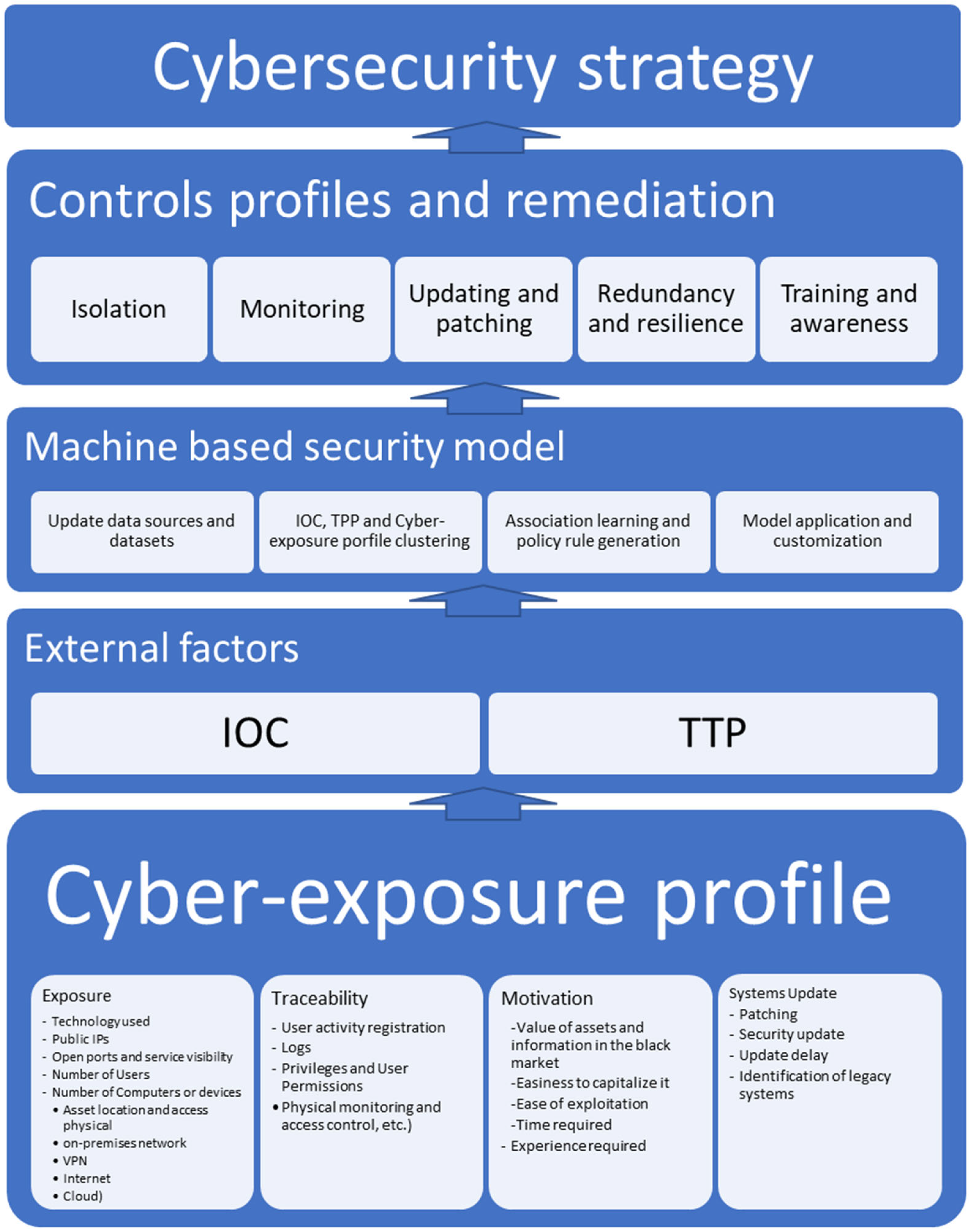

Figure 3 presents the cyber-exposure profile and its attributes.

3.10. Likelihood Computation and Calibration

The likelihood computation rule (Artefact A4) maps the four normalized component scores — exposure (E), traceability (T), motivation (M), and systems update (U) — to a bounded indicator L. The mapping satisfies four design requirements: monotonicity (the score increases when E or M increases and decreases when T or U improves); boundedness (the output remains in (0,1)); robustness to normalization choices; and auditability (each component’s contribution can be inspected transparently).

A log-additive/logistic specification is adopted as the reference form. Its main advantages are that it satisfies all four requirements while preserving an interpretable additive structure in the latent space (Agresti, 2013; McCullagh & Nelder, 1989; OECD & JRC, 2008). Let E, T, M, U ∈ [0,1] denote the normalized component scores, and let ε > 0 be a small positive constant to avoid singularities. The latent score is:

The bounded incident-likelihood indicator is then:

Higher values of exposure and motivation increase the latent score, whereas higher traceability and systems update decrease it. The logistic transformation ensures L ∈ (0,1), making the score suitable for monitoring and longitudinal comparison.
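The stated design requirements can be checked numerically with a sketch of one log-additive/logistic form. The unit coefficients, the intercept `b0`, and the default `eps` are assumptions made for illustration; they are not calibrated values from the framework, and the reference specification may weight the components differently.

```python
import math

def likelihood(E, T, M, U, eps=1e-3, b0=0.0):
    """Bounded likelihood indicator from four component scores in [0, 1].

    Assumed latent score: b0 + ln(E+eps) + ln(M+eps) - ln(T+eps) - ln(U+eps).
    Enhancers (E, M) raise the latent score; mitigators (T, U) lower it.
    """
    for value in (E, T, M, U):
        if not 0.0 <= value <= 1.0:
            raise ValueError("component scores must lie in [0, 1]")
    z = (b0 + math.log(E + eps) + math.log(M + eps)
         - math.log(T + eps) - math.log(U + eps))
    return 1.0 / (1.0 + math.exp(-z))  # logistic map keeps the output in (0, 1)

baseline = likelihood(0.5, 0.5, 0.5, 0.5)
more_exposed = likelihood(0.8, 0.5, 0.5, 0.5)
better_logged = likelihood(0.5, 0.8, 0.5, 0.5)
```

Under these assumptions the odds L/(1 − L) reduce to the ratio (E + ε)(M + ε) / ((T + ε)(U + ε)), which makes each component's multiplicative contribution directly inspectable.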

An equivalent way to view the same model is through the odds form. Because the logistic mapping implies that the log-odds $\ln\big(L/(1-L)\big)$ equal the latent score, the odds of incident likelihood can be written as:

3.11. Control Prioritization Output

Artefact A5 consumes the four component scores and the cyber-exposure profile graph to produce a ranked, graph-traceable control list. It operates in four sequential steps.

Step 1 — Component leverage ranking. The partial derivatives of the logistic function with respect to each component score identify which dimension has the greatest marginal influence on L at its current value. Because L(1−L) is a common factor, the signed marginal leverage for each component simplifies to:

For the enhancers $E$ and $M$, a high $\lambda$ indicates that the component is already near its upper bound and difficult to reduce further through a single control cycle; a low $\lambda$ indicates greater sensitivity to incremental change. For the mitigating components $T$ and $U$, a high value of $\lambda_T$ or $\lambda_U$ indicates that the component remains well below its target level, so that even small improvements can produce comparatively large reductions in $L$. Components are ranked according to their improvement opportunity. For mitigators, this is defined as

whereas for enhancers it is defined as

Here,

denotes the policy threshold or empirical percentile recorded in Artefact A3 for the corresponding component. The component with the highest opportunity score is designated the primary control target for the current observation window.

Step 2 — Metric-level deficit identification. Within the primary target component, individual metrics are ranked according to their normalized deficit. For mitigating metrics, the deficit is defined as

whereas for enhancing metrics it is defined as

Metrics whose deficit exceeds a declared threshold are flagged as high-priority inputs for the graph query in Step 3. Unless otherwise specified, the default threshold is 0.20 in normalized units. This threshold is recorded in Artefact A3 and may be adjusted by an analyst, provided that the adjustment is explicitly justified.
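Step 2 can be sketched as below, assuming the deficit is the shortfall from target for mitigating metrics and the excess over target for enhancing metrics, floored at zero. That definition, the metric names, and the target values are hypothetical illustrations; only the 0.20 default threshold comes from the text.

```python
def metric_deficits(metrics, threshold=0.20):
    """metrics: {name: (value, target, role)} with role 'mitigating' or 'enhancing'.

    Returns (name, deficit) pairs whose deficit exceeds the threshold,
    largest deficit first.
    """
    flagged = []
    for name, (value, target, role) in metrics.items():
        if role == "mitigating":
            gap = target - value      # below target is a deficit
        elif role == "enhancing":
            gap = value - target      # above target is a deficit
        else:
            raise ValueError(f"unknown role for {name}: {role}")
        deficit = max(0.0, gap)
        if deficit > threshold:
            flagged.append((name, round(deficit, 6)))
    return sorted(flagged, key=lambda item: item[1], reverse=True)

# Hypothetical traceability metrics: (normalized value, policy target, role).
traceability = {
    "logging_coverage": (0.55, 0.90, "mitigating"),
    "alert_correlation": (0.80, 0.90, "mitigating"),
    "log_retention": (0.40, 0.80, "mitigating"),
}
high_priority = metric_deficits(traceability)
```

Here `alert_correlation` has a deficit of 0.10 and is not flagged, so only two metrics would be passed on to the graph query in Step 3.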

Step 3 — Graph-based control retrieval. For each high-deficit metric, the cyber-exposure profile G~o,w~ is queried to retrieve applicable defensive controls. The query traverses from the organization's affected assets through the typed-edge structure of the unified graph: for exposure deficits, the path is Asset → (via HOSTS or HAS_VULNERABILITY) → Vulnerability or AttackPattern → (via MITIGATED_BY) → DefensiveTechnique → Control; for traceability deficits, the path traverses LogSource or DetectionRule nodes with the identified gap to D3FEND detection and hardening techniques; for systems update deficits, the path traverses Vulnerability nodes with active EXPLOITS edges and KEV listing or EPSS above threshold to patch and configuration Controls. All retrieved Controls are D3FEND-aligned and carry the MITIGATED_BY provenance chain from the source condition, preserving full traceability from the metric deficit to the recommended action.

Step 4 — Control scoring and ranking. Each candidate control c is scored as:

where coverage(c) is the number of high-deficit metrics addressed by c normalized to [0,1]; severity(c) is the mean CVSS base score or asset-criticality weight of the affected conditions, normalized to [0,1]; and cross(c) is the count of additional components beyond the primary that c also improves, rewarding controls with multi-dimensional impact. Controls are output in descending score order. Each entry in the A5 output records: control identifier, D3FEND and ATT&CK alignment, targeted component(s), metrics addressed, the improvement opportunity score for those metrics, and the expected reduction in L if each addressed metric moves from its current value to its policy threshold.
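The ranking logic of Step 4 can be illustrated with a weighted linear combination of the three terms. The linear form, the weights (0.5, 0.3, 0.2), and the control identifiers are hypothetical; the division by 3 reflects that at most three components exist beyond the primary one.

```python
def score_control(coverage, severity, cross, weights=(0.5, 0.3, 0.2), max_cross=3):
    """Combine coverage and severity (both in [0, 1]) with the cross-component count.

    The weights are illustrative assumptions, not values from the framework.
    """
    w_cov, w_sev, w_cross = weights
    return w_cov * coverage + w_sev * severity + w_cross * (cross / max_cross)

def rank_controls(candidates):
    """candidates: {control_id: (coverage, severity, cross)}; best control first."""
    scored = [(cid, score_control(*features)) for cid, features in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)

# Hypothetical candidates retrieved in Step 3.
ranking = rank_controls({
    "CTRL-A": (1.0, 0.8, 1),   # addresses every high-deficit metric
    "CTRL-B": (0.5, 0.9, 0),   # severe conditions, single component
    "CTRL-C": (0.5, 0.6, 3),   # improves all three other components
})
```

In this example CTRL-C outranks CTRL-B despite lower severity, which shows how the cross term rewards multi-dimensional impact.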

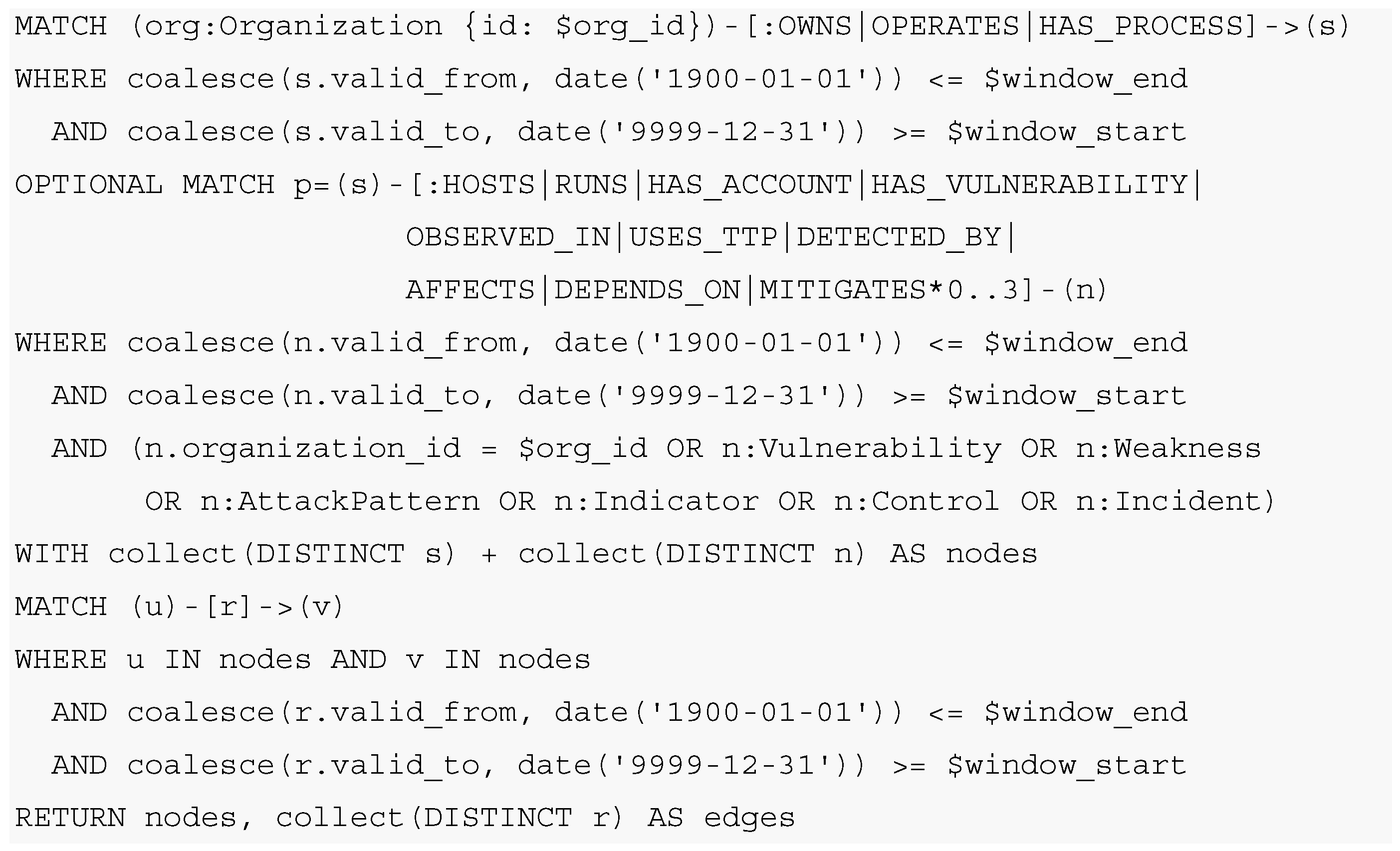

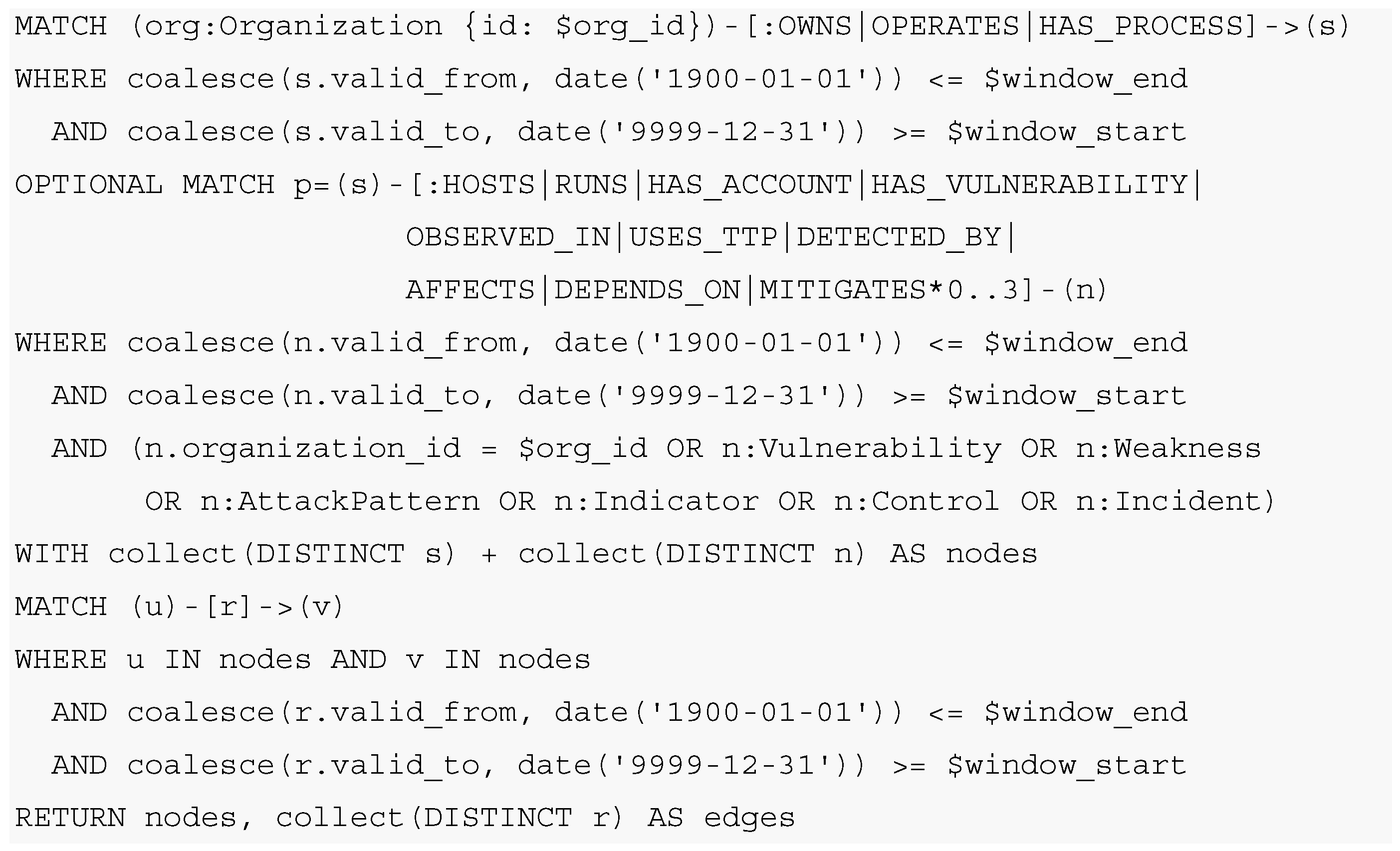

3.12. Graph Querying and Instantiation

Artefact A2 is the instantiation of the graph based on the organization’s telemetry and context. The empirical unit of analysis is the organization–time window pair: for each organization, a fixed observation window is used over which evidence is collected, normalized, and aggregated into the cyber-exposure profile. This unit is necessary because cyber conditions are dynamic — asset inventories change, systems are updated, new exposures emerge, and attacker incentives shift.

Based on the first-layer graph (containing AttackPattern, Malware, Tool, Vulnerability, Weakness, Indicator, Control, and Incident entities), a second layer is built from organization-specific information: Asset, Service, Account, LogSource, DetectionRule, BusinessProcess, Location, and ObservationWindow. Formally, for organization $o$ and observation window $w = [t_s, t_e]$, the cyber-exposure profile is the induced subgraph

$$G_{o,w} = (V_{o,w}, E_{o,w}).$$

The node set of the profile is then

$$V_{o,w} = S_{o,w} \cup \{\, v \in V : \exists\, s \in S_{o,w},\ \exists\, \pi \in \Pi \ \text{such that}\ s \xrightarrow{\pi} v \ \text{and}\ \pi \ \text{overlaps}\ w \,\},$$

where $\Pi$ denotes admissible relation paths used by the framework, such as ownership, hosting, execution, vulnerability association, observed behavior, detection linkage, business dependency, and control mapping. The edge set is

$$E_{o,w} = \{\, (u, v) \in E : u, v \in V_{o,w} \ \text{and}\ (u, v) \ \text{overlaps}\ w \,\}.$$

The seed set $S_{o,w}$ includes the organization’s assets, services, accounts, business processes, and declared observation window; additional nodes are included when reachable via admissible relation paths that overlap the window. Edges are retained when both endpoints are in $V_{o,w}$ and the edge overlaps the window.

In operational terms, the profile therefore contains: (i) organization-local entities and their states within the window; (ii) directly connected CTI entities relevant to the deployed technologies, observed exposures, or incidents; and (iii) the typed relations needed to compute the metrics in Table 1 deterministically.
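The extraction rule can be sketched on an in-memory edge list: start from the organization's seed nodes, follow only admissible edge types whose validity interval overlaps the window, and keep edges whose endpoints both fall inside the resulting node set. The tuple layout, the integer timestamps, and the node identifiers are hypothetical, and traversal follows edge direction only.

```python
from collections import deque

def overlaps(interval, window):
    """True when two closed intervals (start, end) share at least one point."""
    return interval[0] <= window[1] and interval[1] >= window[0]

def extract_profile(edges, seeds, window, admissible):
    """edges: list of (src, relation, dst, (start, end)). Returns (nodes, kept_edges)."""
    adjacency = {}
    for src, relation, dst, span in edges:
        if relation in admissible and overlaps(span, window):
            adjacency.setdefault(src, []).append(dst)
    nodes, queue = set(seeds), deque(seeds)
    while queue:  # breadth-first expansion from the seed set
        current = queue.popleft()
        for neighbour in adjacency.get(current, []):
            if neighbour not in nodes:
                nodes.add(neighbour)
                queue.append(neighbour)
    kept = [e for e in edges
            if e[0] in nodes and e[2] in nodes and overlaps(e[3], window)]
    return nodes, kept

# Hypothetical toy graph; timestamps are day offsets.
edges = [
    ("asset:web01", "HAS_VULNERABILITY", "cve:CVE-2021-44228", (5, 20)),
    ("cve:CVE-2021-44228", "MITIGATED_BY", "d3f:SoftwareUpdate", (0, 100)),
    ("asset:web01", "HAS_VULNERABILITY", "cve:CVE-2019-0708", (0, 3)),    # ends before the window
    ("asset:db01", "HAS_VULNERABILITY", "cve:CVE-2020-1472", (10, 30)),   # other organization's asset
]
nodes, kept = extract_profile(
    edges, seeds={"asset:web01"}, window=(10, 30),
    admissible={"HAS_VULNERABILITY", "MITIGATED_BY"},
)
```

The vulnerability that was closed before the window and the asset outside the seed set are both excluded, so the profile reflects only conditions that applied to the assessed organization during the window.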

An example extraction query in Cypher:

Conflicts between external CTI and internal data collection are handled explicitly through three rules. First, entities are merged only when canonical identifiers exist (CVE IDs, ATT&CK technique IDs, approved asset keys); absent such identifiers, ambiguous entities are retained as distinct nodes with a provisional equivalence annotation pending review. Second, source precedence is attribute-specific: internal data collection is authoritative for organization-local state variables (whether an asset exists, whether a service is currently exposed, whether a patch is installed, whether logging is enabled, whether an account is privileged); external CTI is authoritative for global threat semantics (vulnerability definitions, exploit reports, ATT&CK technique meaning, weakness classification, control mappings). Third, when conflicting assertions remain within the same attribute class, the framework applies recency-plus-authority ordering for computation while preserving both assertions in the provenance layer.

Two conservative scoring rules apply throughout. First, uncertainty in a mitigating attribute cannot improve the score: missing or disputed logging evidence does not increase traceability, and disputed patch evidence does not improve Systems Update. Second, CTI relevance is not confused with local exposure status: when internal evidence indicates a vulnerability has been remediated, the CTI relation is preserved as contextual knowledge while the local exploitable-state metric is computed from current telemetry. This separation ensures that the cyber-exposure profile reflects the organization’s actual current condition rather than the aggregate threat landscape.

Confidence is paired with each metric when evidence quality differs across sources, tempering the contribution of weaker or stale measurements without defining an additional risk construct.

Figure 4 shows the cyber-exposure profile extraction from the unified graph.

3.13. Framework

In line with Peng (2011), the framework is presented as an explicit protocol with configuration capture, versioning, provenance, and preservation of intermediate artifacts:

C1 – Define scope and unit of analysis: organizational context, evaluation time window(s), and event definition.

C2 – Assemble data sources: external cyber knowledge and event datasets, plus organization telemetry and asset context.

C3 – Map sources to the unified data model: transform each source into the entity–relation schema (A1).

C4 – Integrate into a graph instance: create the integrated knowledge graph and validate structural consistency.

C5 – Instantiate the organization profile: extract the organization-constrained subgraph to form the cyber-exposure profile (A2).

C6 – Compute and normalize metrics: generate the metric vector from A2 using A3, normalized to [0,1].

C7 – Compute likelihood and produce recommendations: apply the likelihood computation rule (A4) and generate control prioritization outputs (A5).