1. Introduction

Internet of Medical Things (IoMT) devices are now routine in clinical settings. Patient monitors, infusion pumps, wearable sensors, and diagnostic equipment communicate over Wi-Fi, MQTT, and Bluetooth, generating continuous network traffic. These devices are difficult to patch and often lack built-in security mechanisms, and a compromised endpoint can directly affect patient care. In this setting, intrusion detection is part of operational safety rather than a purely technical add-on.

Machine learning classifiers have made strong progress on this front. Random Forest, XGBoost, and deep learning models have reported very high accuracy, often above 98%, under standard flow-level evaluation settings on IoMT benchmarks [1, 2]. The CICIoMT2024 dataset [3], comprising traffic from 40 IoMT devices under 18 attack scenarios, has attracted considerable attention for this purpose. Published results on its pre-extracted CSV files are consistently high.

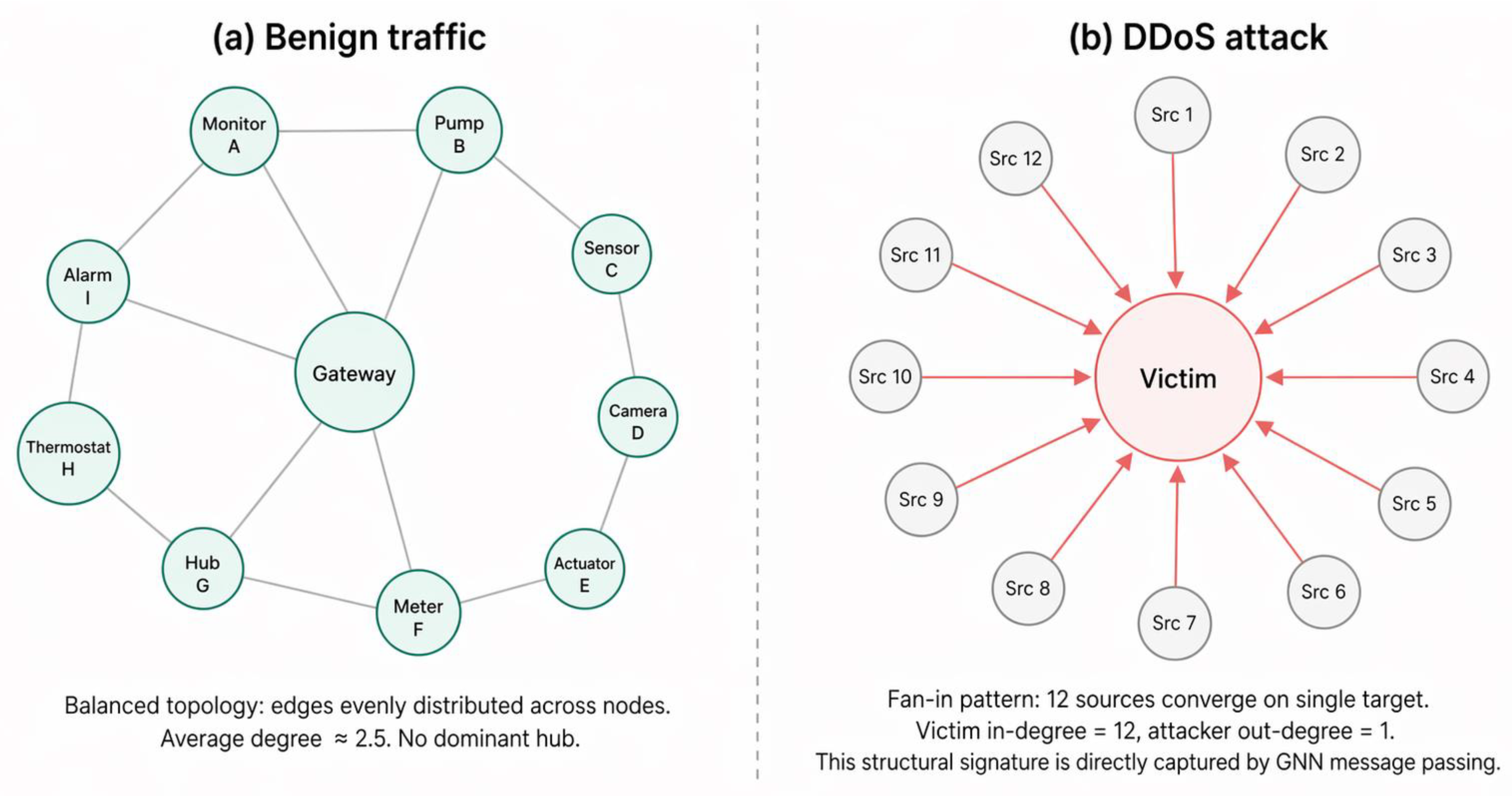

But accuracy on a flow-level tabular task and readiness for deployment are different things. Flow-level classifiers process each record independently. They cannot capture the fact that, say, 30 sources are converging on a single target (a DDoS fan-in signature), because they never see flows in relation to one another. Similarly, a reconnaissance scan, in which one host probes dozens of destinations, produces a fan-out pattern that is visible only when flows are considered as a communication graph. These topological patterns are characteristic of IoMT attacks, but they are not directly represented when flows are treated as isolated rows.
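The fan-in and fan-out patterns described above can be made concrete with a minimal sketch. It assumes flows are available as simple (source, destination) pairs; the function name `fan_degrees`, the record layout, and the example addresses are hypothetical and serve only as illustration, not as the pipeline used in this paper.

```python
from collections import defaultdict


def fan_degrees(flows):
    """Count distinct peers per host from (src, dst) flow pairs.

    Returns (fan_in, fan_out): fan_in[h] is the number of distinct sources
    contacting h, fan_out[h] the number of distinct destinations h contacts.
    The (src, dst) record layout is an assumption made for illustration.
    """
    sources_of = defaultdict(set)  # dst -> distinct sources contacting it
    targets_of = defaultdict(set)  # src -> distinct destinations it contacts
    for src, dst in flows:
        sources_of[dst].add(src)
        targets_of[src].add(dst)
    fan_in = {host: len(peers) for host, peers in sources_of.items()}
    fan_out = {host: len(peers) for host, peers in targets_of.items()}
    return fan_in, fan_out


# Hypothetical traffic: 30 sources converge on one device (DDoS-like fan-in),
# and one host probes 40 destinations (scan-like fan-out).
ddos = [(f"10.0.0.{i}", "10.0.1.5") for i in range(1, 31)]
scan = [("10.0.2.9", f"10.0.3.{i}") for i in range(1, 41)]
fan_in, fan_out = fan_degrees(ddos + scan)
```

Each individual row in `ddos` or `scan` looks unremarkable on its own; the signal appears only in the per-host aggregates, which is precisely the information a row-wise classifier does not see.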

Graph Neural Networks can model such relational structures by representing devices as nodes and traffic flows as edges. Several studies have applied GNNs to intrusion detection on other benchmarks [4, 5, 6, 7, 8], demonstrating value especially when communication structure or inter-flow context is informative. However, a question that has received limited systematic attention is how much the graph construction strategy itself, as opposed to the GNN architecture, determines whether graph-based detection actually works.

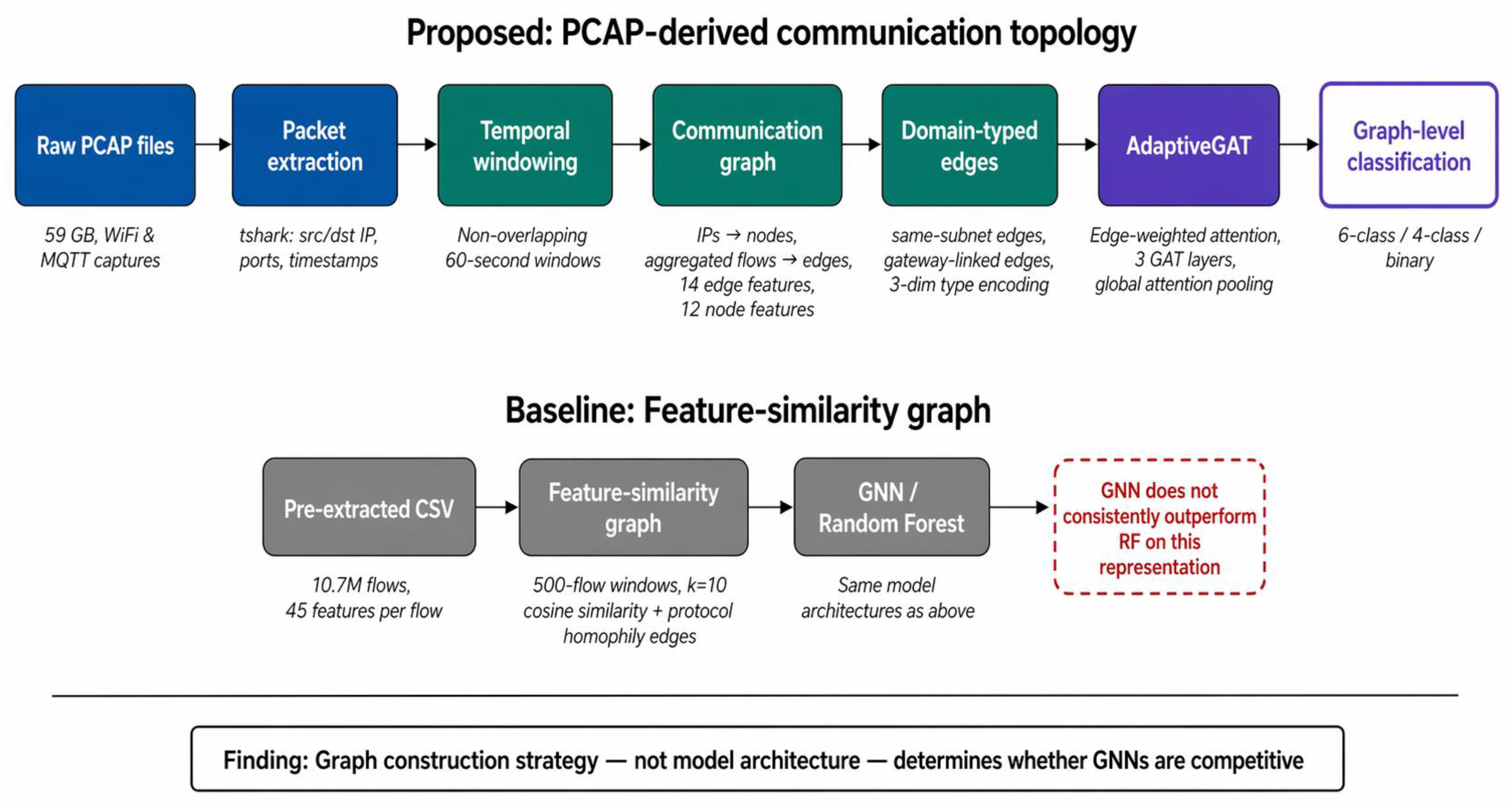

This question turns out to matter more than we initially expected. Our investigation on CICIoMT2024 began with a standard feature-similarity graph formulation: flows as nodes, cosine similarity as edges. GNN models trained on these graphs performed reasonably but never consistently outperformed a window-level Random Forest baseline. The artificial edges did not appear to encode enough structural information to give GNNs a consistent advantage.
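For illustration, a feature-similarity graph of this kind could be built roughly as follows. This is a sketch under stated assumptions: the similarity threshold of 0.9, the function names, and the toy feature vectors are hypothetical, and the source does not specify whether edges were selected by threshold or by nearest neighbours.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def similarity_edges(features, threshold=0.9):
    """Connect flows i < j whose cosine similarity is at least `threshold`.

    Nodes are flow indices. The threshold rule is an assumed edge criterion,
    chosen here only to keep the example small.
    """
    edges = []
    for i in range(len(features)):
        for j in range(i + 1, len(features)):
            if cosine(features[i], features[j]) >= threshold:
                edges.append((i, j))
    return edges


# Toy feature vectors for three flows (values are made up).
flow_features = [
    [1.0, 0.0, 2.0],
    [2.0, 0.0, 4.0],  # same direction as flow 0
    [0.0, 3.0, 0.0],  # orthogonal to flows 0 and 1
]
edges = similarity_edges(flow_features)
```

Note that an edge here says only that two flows have similar statistics. It carries no information about which hosts actually communicated, which is one plausible reading of why such graphs gave the GNNs little to exploit.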

When we reconstructed the communication topology from the raw PCAP files (IPs as nodes, aggregated flows as edges, in 60-second windows), the graph models became substantially more competitive. Adding domain-typed edges (same-subnet, gateway-linked) further improved both performance and stability, consistent with findings that domain-aware graph construction matters in GNN-based IDS [7, 8].
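The windowed topology construction described above can be sketched as follows. The sketch assumes packets have already been parsed from the PCAP files into (timestamp, source IP, destination IP, byte count) tuples; it also assumes a /24 prefix for the same-subnet relation and interprets a gateway-linked edge as a link between a known gateway address and every other node seen in the same window. These definitions, along with all function and parameter names, are illustrative assumptions rather than the exact rules of our pipeline.

```python
import ipaddress
from collections import defaultdict

WINDOW_SECONDS = 60  # window length stated in the text


def same_subnet(ip_a, ip_b, prefix_len):
    """True when both addresses fall in the same network of the given prefix length."""
    net_a = ipaddress.ip_network(f"{ip_a}/{prefix_len}", strict=False)
    net_b = ipaddress.ip_network(f"{ip_b}/{prefix_len}", strict=False)
    return net_a == net_b


def build_window_graphs(packets, gateway_ip, prefix_len=24):
    """Build one typed-edge graph per 60-second window.

    `packets` is an iterable of (timestamp, src_ip, dst_ip, n_bytes) tuples
    (an assumed, already-parsed form of the PCAP data).
    """
    # window index -> (src, dst) -> [packet count, byte count]
    per_window = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for ts, src, dst, n_bytes in packets:
        stats = per_window[int(ts // WINDOW_SECONDS)][(src, dst)]
        stats[0] += 1
        stats[1] += n_bytes

    graphs = {}
    for window, flows in per_window.items():
        nodes = sorted({ip for pair in flows for ip in pair})
        # Observed traffic: one aggregated edge per (src, dst) pair.
        edges = [(s, d, "communication", {"packets": p, "bytes": b})
                 for (s, d), (p, b) in flows.items()]
        # Domain-typed edge: hosts sharing a subnet (assumed /24 by default).
        for i, a in enumerate(nodes):
            for b in nodes[i + 1:]:
                if same_subnet(a, b, prefix_len):
                    edges.append((a, b, "same-subnet", {}))
        # Domain-typed edge: assumed rule linking the gateway to active hosts.
        if gateway_ip in nodes:
            edges.extend((gateway_ip, ip, "gateway-linked", {})
                         for ip in nodes if ip != gateway_ip)
        graphs[window] = {"nodes": nodes, "edges": edges}
    return graphs


# Hypothetical packets: two in the first window, one in the second.
packets = [
    (0.0, "192.168.1.10", "192.168.1.1", 100),
    (10.0, "192.168.1.10", "192.168.1.1", 50),
    (65.0, "192.168.1.11", "10.0.0.5", 20),
]
graphs = build_window_graphs(packets, gateway_ip="192.168.1.1")
```

Unlike similarity edges, every communication edge here corresponds to traffic that was actually observed, and the typed edges add structural context that no single flow record contains.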

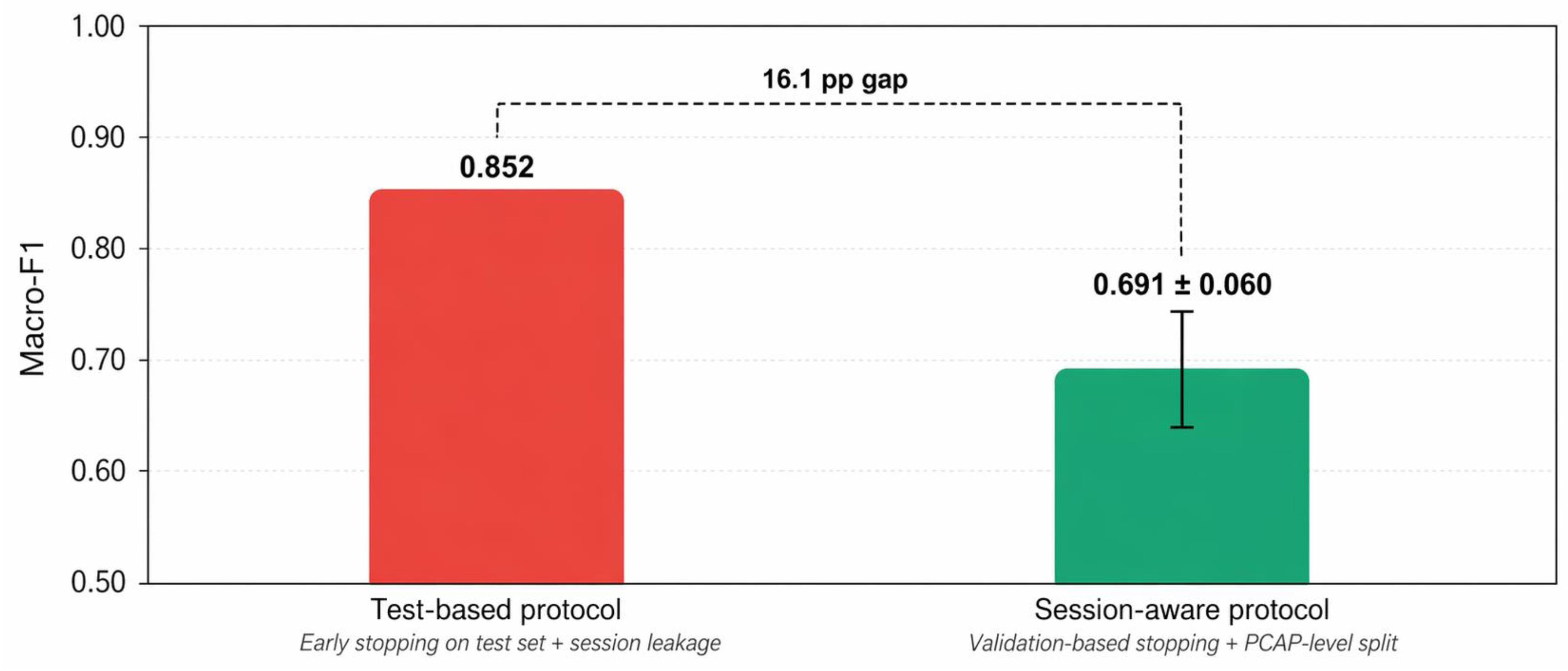

However, under PCAP-level session-aware validation, the performance gains observed with naive splits diminished substantially. In the full 6-class setting, our best GNN model did not consistently outperform Random Forest. It was only when we separated topology-heavy attacks (DDoS, DoS, Recon) from protocol-heavy attacks (MQTT, Spoofing) that GNNs showed a clearer and more stable advantage. Additional architectural complexity did not yield consistent gains over a simpler adaptive baseline.
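The difference between a naive split and a session-aware split can be illustrated with a short sketch. It assumes each sample (for example, a window graph) is tagged with the identifier of the capture it came from, and it holds out whole captures rather than individual samples. The function name, the 30% test fraction, and the capture file names are hypothetical; the exact protocol is described in Section 3.

```python
import random
from collections import defaultdict


def session_aware_split(samples, test_fraction=0.3, seed=0):
    """Split samples so that no capture session appears on both sides.

    `samples` is a list of (session_id, payload) pairs, where session_id
    identifies the source capture (an assumed tagging scheme).
    """
    by_session = defaultdict(list)
    for session_id, payload in samples:
        by_session[session_id].append(payload)

    sessions = sorted(by_session)              # deterministic starting order
    random.Random(seed).shuffle(sessions)      # reproducible shuffle
    n_test = max(1, round(len(sessions) * test_fraction))
    test_sessions = set(sessions[:n_test])

    train = [(s, p) for s, p in samples if s not in test_sessions]
    test = [(s, p) for s, p in samples if s in test_sessions]
    return train, test


# Hypothetical data: 50 windows drawn from 5 capture files.
samples = [(f"capture_{i % 5}.pcap", i) for i in range(50)]
train, test = session_aware_split(samples)
```

A naive random split over `samples` would place windows from the same capture in both sets, so a model could score well by recognising the session rather than the attack. Holding out whole captures removes that shortcut.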

Taken together, the experiments suggest that in IoMT intrusion detection on CICIoMT2024, the primary factors shaping graph-based detection performance are data representation, evaluation protocol, and task formulation — not architectural complexity. The specific contributions of this paper are:

(1) A systematic comparison of three data representations — flow-level tabular, feature-similarity graphs, and PCAP-derived communication-topology graphs — showing that graph construction strategy has a larger effect on GNN performance than model architecture.

(2) A PCAP-to-graph pipeline that extracts natural network topology from raw packet captures and augments it with domain-typed edges (communication, same-subnet, gateway-linked), yielding measurable gains in both performance and stability.

(3) Evidence that evaluation protocol substantially affects reported results: under PCAP-level session-aware validation, gains that appear significant under naive splits diminish considerably.

(4) A demonstration that, in our experiments on this dataset, GNN utility is attack-category dependent: graph models are more competitive for topology-heavy attacks but not for protocol-heavy attacks, motivating task decomposition in future IoMT IDS design.

(5) Negative results from multiple architectural extensions — neuro-symbolic fusion, motif-aware detection heads, and node-role auxiliary supervision — reinforcing that representation matters more than model complexity in this setting.

The rest of this paper is organized as follows. Section 2 reviews related work. Section 3 describes the dataset, representations, models, and evaluation protocol. Section 4 presents results. Section 5 discusses implications and limitations. Section 6 concludes.