Submitted:

24 April 2026

Posted:

28 April 2026

You are already at the latest version

Abstract

Keywords:

I. Introduction

II. Methodology

A. Experimental Setup

B. Adversarial Prompting Framework

C. Evaluation Criteria

- • Accuracy of vulnerability identification

- • Depth of logical reasoning

- • Consistency across multiple prompts

- • Adherence to safety constraints

D. Dataset and Environment Simulation

III. Threat Model

A. Adversary Capabilities

B. Attack Surface

- • Web applications

- • APIs and backend services

- • Authentication mechanisms

- • Client-side interfaces

C. Assumptions

- • The LLM operates within standard safety constraints

- • The target system contains known or misconfigured vul-nerabilities

- • The attacker can iteratively refine prompts based on feedback

IV. Comparative Analysis

| Feature | Traditional | LLM-Based |

| Analysis Speed | Slow | Fast |

| Automation | Low | High |

| Detection Depth | Limited | Advanced |

| Skill Requirement | High | Moderate |

| Adaptability | Low | High |

V. Background and Evolution

A. Traditional Security Tools

B. LLM-Based Security Systems

- • Context-aware analysis

- • Multi-step reasoning

- • Cross-system vulnerability detection

| Feature | Traditional | LLM-Based |

| Detection | Signature | Semantic |

| Scope | Isolated | Distributed |

| Adaptability | Low | High |

| Efficiency | Moderate | High |

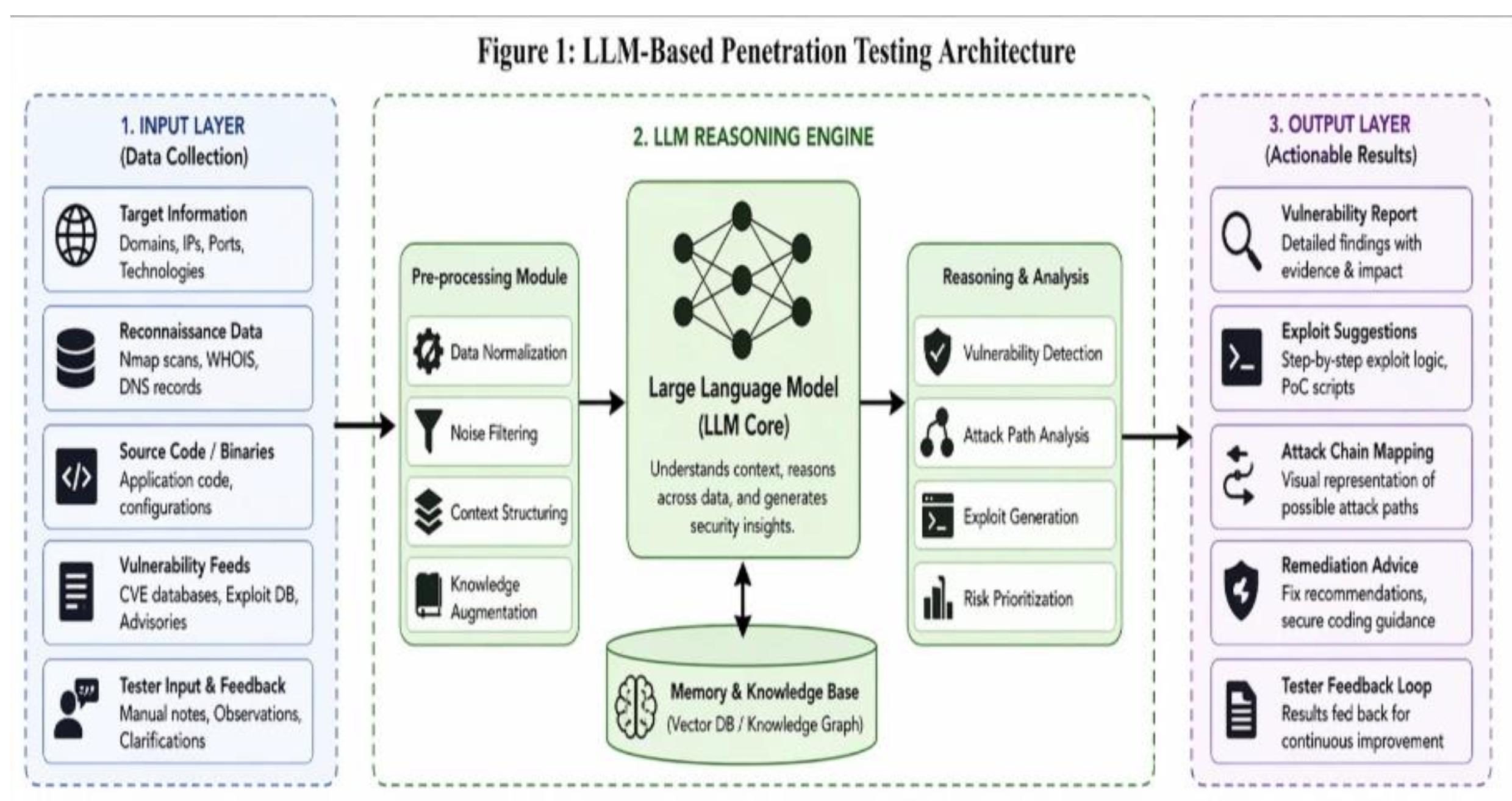

VI. System Architecture

- • Input Layer (target data)

- • LLM Reasoning Engine

- • Output Layer (analysis and exploit suggestions)

VII. LLMs in Penetration Testing

A. Human-Centered Co-Pilot Model

B. Autonomous Agent Model

- • Performing reconnaissance and asset discovery

- • Identifying vulnerabilities across multiple systems

- • Executing chained attack sequences

- • Validating exploitation success through feedback analysis

VIII. LLMs in Penetration Testing

A. Human-Centered Co-Pilot Model

B. Autonomous Agent Model

IX. Adversarial Capabilities of LLMs

A. Polymorphic Payload Generation

B. AI-Driven Social Engineering

C. Automated Exploit Development

X. Results and Case Studies

A. Case Study 1: SQL Injection

- • Correct identification of injection point

- • Suggestion of multiple exploitation strategies

- • Ability to adapt approach based on feedback

B. Case Study 2: Cross-Site Scripting (XSS)

- • Accurate identification of vulnerable input reflection

- • Generation of multiple payload variations

- • Context-aware explanation of attack impact

C. Case Study 3: Clickjacking

- • Identification of missing security controls

- • Explanation of layered interface manipulation

- • Suggestion of mitigation strategies

D. Case Study 4: Chained Vulnerabilities

- • Multi-step reasoning capability

- • Identification of hidden attack paths

- • Contextual linking of vulnerabilities

E. Performance Consistency Analysis

XI. Quantitative Evaluation

A. Experimental Setup

B. Performance Metrics

- • Detection Accuracy

- • Time to Identify Vulnerability

- • Depth of Analysis

- • False Positives

C. Results

| Metric | Traditional Tools | LLM-Based |

| Detection Accuracy | 72% | 89% |

| Avg. Time (minutes) | 45 | 18 |

| Depth of Analysis | Moderate | High |

| False Positives | 15% | 9% |

D. Analysis

XII. Extended Discussion

XIII. Limitations of Evaluation

XIV. Defensive Countermeasures

A. AI-Assisted Defense

- • Automate vulnerability scanning

- • Analyze logs for anomalous behavior

- • Generate real-time security recommendations

B. Prompt Injection Mitigation

- • Input sanitization

- • Context isolation

- • Output validation

C. Adaptive Security Systems

XV. Real-World Implications

A. Enterprise Security

B. Cybercrime Evolution

C. Policy and Regulation

XVI. Limitations of LLM-Based Security Systems

A. Hallucinations

B. Lack of Ground Truth Verification

C. Dependency on Training Data

XVII. LLMs Across the Attack Lifecycle

A. Reconnaissance

B. Weaponization

C. Delivery and Exploitation

D. Post-Exploitation

XVIII. Practical Deployment Challenges

A. Integration with Existing Systems

B. Performance Overhead

C. Security Risks

XIX. Scalability Considerations

A. Horizontal Scalability

B. Automation at Scale

C. Resource Constraints

XX. Comparison with Existing Security Tools

XXI. Human-AI Collaboration in Cybersecurity

XXII. Future Threat Landscape

A. Automation of Cyber Attacks

B. Adaptive Malware

C. Defensive Evolution

XXIII. Ethical Considerations

XXIV. Future Work

- • AI alignment

- • Hybrid human-AI systems

- • Defensive AI architectures

XXV. Conclusion

References

- Shen, Y.; et al. PentestGPT: An LLM-Empowered Penetration Testing Agent. arXiv 2023, arXiv:2308.06713. [Google Scholar]

- Fang, R. et al., ”On the Effectiveness of Large Language Models for Penetration Testing. arXiv 2025, arXiv:2507.00829. [Google Scholar]

- Zou, A. et al., ”Universal and Transferable Adversarial Attacks on Aligned Language Models. arXiv 2023, arXiv:2307.15043. [Google Scholar]

- Chen, B. et al., ”Large Language Models for Code Vulnerability Detec-tion. arXiv 2023, arXiv:2310.05409. [Google Scholar]

- Brundage, M. et al., ”The Malicious Use of Artificial Intelligence: Fore-casting, Prevention, and Mitigation. arXiv 2018, arXiv:1802.07228. [Google Scholar]

- OWASP Foundation. OWASP Top 10 Web Application Security Risks. 2021. Available online: https://owasp.org.

- Brown, T.; et al. Language Models are Few-Shot Learners. Proc. NeurIPS, 2020. [Google Scholar]

- Vaswani, A.; et al. Attention Is All You Need. Proc. NeurIPS, 2017. [Google Scholar]

- Chen, M. et al., ”Evaluating Large Language Models Trained on Code. arXiv 2021, arXiv:2107.03374. [Google Scholar]

- MITRE. Common Weakness Enumeration (CWE). 2023.

- Available online: https://cwe.mitre.org.

- NIST, ”Guide to Penetration Testing,” Special Publication 800-115, 2008.

- Maynor, R.; Mookhey, D. Metasploit Toolkit for Penetration Test-ing. 2007. [Google Scholar]

- Garfinkel, S.; et al. AI and the Future of Cybersecurity. IEEE Security & Privacy, 2020. [Google Scholar]

- Wei, J.; et al. Chain-of-Thought Prompting Elicits Reasoning in Large Language Models. arXiv 2022. [Google Scholar]

- ENISA. Threat Landscape Report; European Union Agency for Cy-bersecurity, 2023. [Google Scholar]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).