Submitted: 24 April 2026

Posted: 27 April 2026


Abstract

Keywords:

1. Introduction

1.1. Purpose

1.2. Related Work

1.2.1. TAIDE Model

1.2.2. Multimodal Learning and Medical Image Diagnosis

1.2.3. Visual RAG

1.2.4. Development of Visual-Language Models (VLMs) in Medical Image Analysis

1.2.5. Skin Lesion Diagnostic Aid Systems

2. Materials and Methods

2.1. Data Source

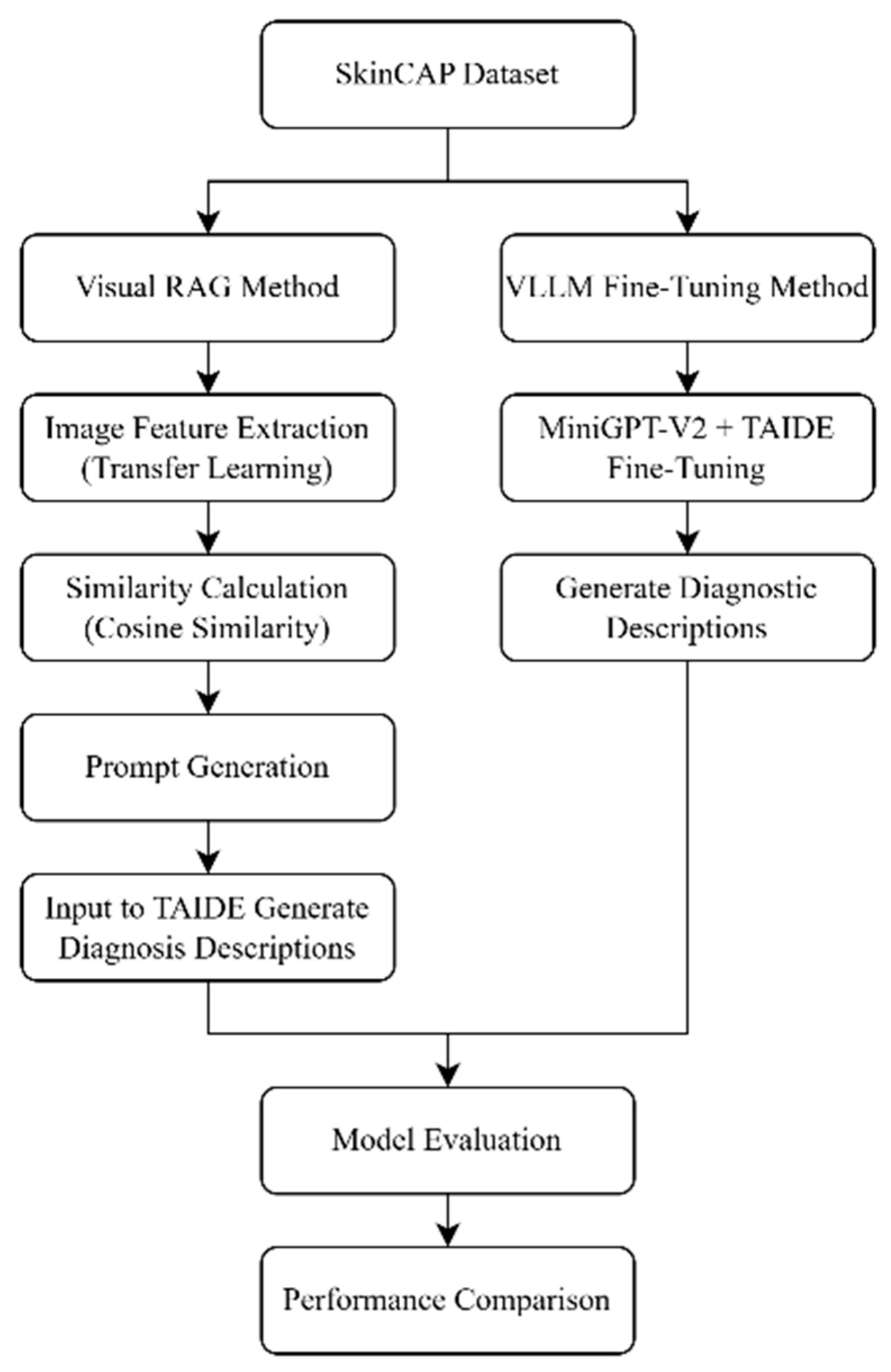

2.2. Methodology

2.2.1. Visual RAG-Based Retrieval with TAIDE for Diagnostic Description Generation

- (1) Pre-trained Feature Extraction: The EfficientNetV2B2 convolutional neural network, pre-trained on a large-scale image dataset, was employed as a feature extractor. It was applied to the 3,200 training images of skin lesions to obtain 1,408-dimensional feature vectors, forming a high-dimensional embedding space for retrieval.

- (2) Fine-Tuned Feature Extraction: The same EfficientNetV2B2 model was fine-tuned on the 3,200 skin lesion images to adapt its representations to the dermatological domain. The resulting domain-specific embeddings were then used to construct an alternative image feature set.
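The retrieval step that both feature sets feed into can be sketched as a nearest-neighbour search over the stored embeddings. This is a minimal illustration under stated assumptions, not the authors' implementation: cosine similarity as the similarity measure, the function names `cosine_similarity` and `retrieve_top_k`, and the random vectors standing in for real EfficientNetV2B2 features are all hypothetical. Only the 1,408-dimensional embedding size comes from the text.

```python
import math
import random

EMBED_DIM = 1408  # feature size reported for EfficientNetV2B2 in the text


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve_top_k(query, gallery, k=3):
    """Return (index, similarity) pairs for the k gallery vectors closest to query."""
    scored = [(i, cosine_similarity(query, vec)) for i, vec in enumerate(gallery)]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy stand-ins for image embeddings (assumption: real ones come from the extractor).
rng = random.Random(0)
gallery = [[rng.gauss(0.0, 1.0) for _ in range(EMBED_DIM)] for _ in range(20)]

# A query that is a slightly perturbed copy of gallery item 7.
query = [v + rng.gauss(0.0, 0.05) for v in gallery[7]]

top_matches = retrieve_top_k(query, gallery, k=3)
best_index = top_matches[0][0]
```

In a Visual RAG pipeline of this kind, the descriptions attached to the retrieved images would presumably be handed to the language model as context for generation; that hand-off is not shown here.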

2.2.2. VLLM Fine-Tuning with MiniGPT-V2 and TAIDE for Multimodal Generation

2.2.3. Evaluation Metrics

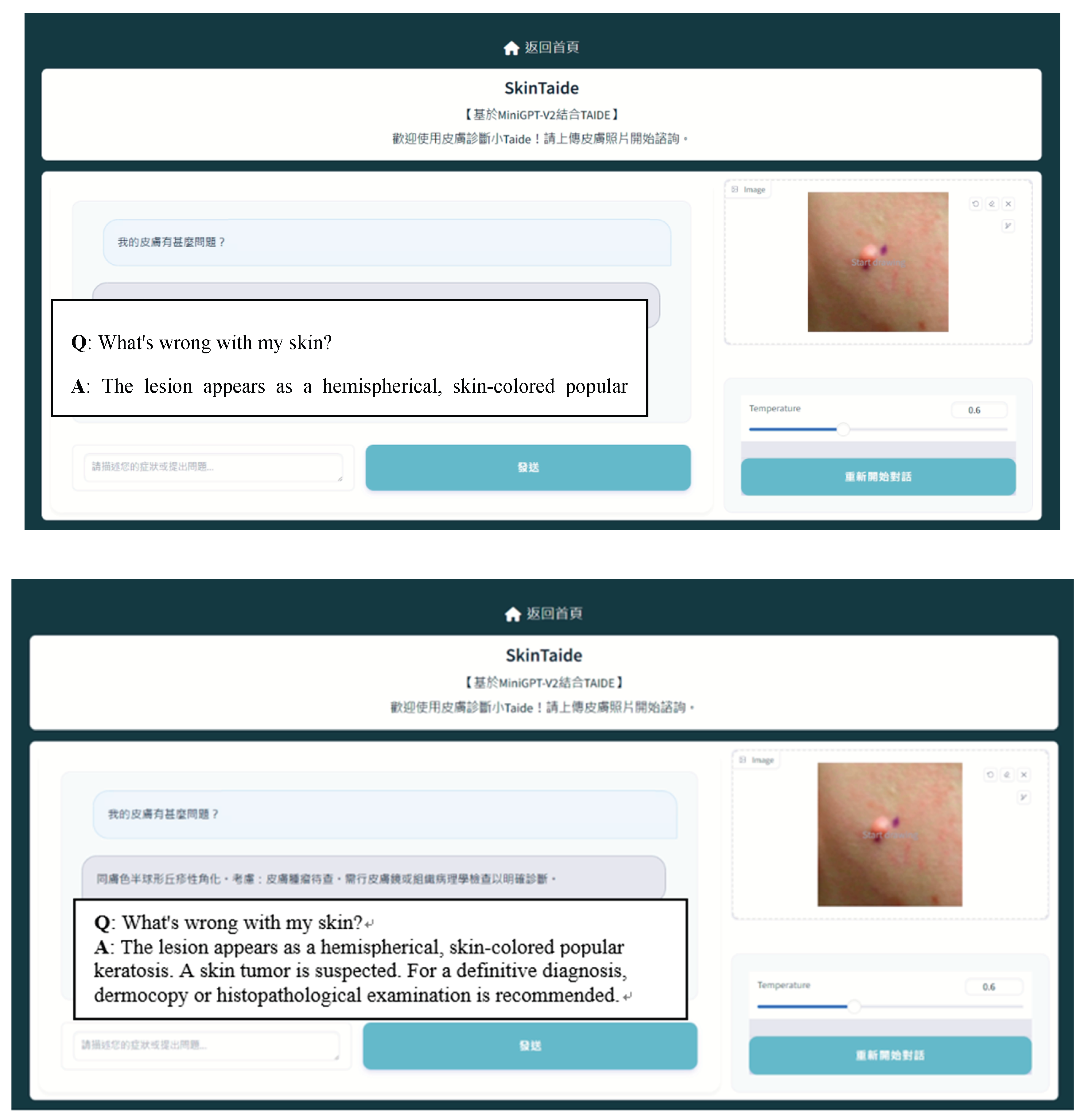

2.3. System Development

3. Results

3.1. Visual RAG-Based Transfer Learning Retrieval Combined with the TAIDE Model for Retrieval-Based Generation

3.2. VLLM Fine-Tuning with the MiniGPT-V2 Framework Combined with the TAIDE Model for Generation

- (1) Full Dataset (4,000 Images, 179 Categories, 8:2 Split): The 4,000 images were partitioned into a training set (80%) and a test set (20%). However, because the categories were unevenly distributed and some disease categories had very few samples, the model could not effectively learn the features of all categories. This resulted in suboptimal performance, with the lowest CIDEr score (0.205) and subjective rating (2.5013/5) of the three configurations.

- (2) Subset Dataset (1,061 Images, 15 Categories, 8:2 Split): Fifteen categories, each containing between 50 and 100 samples (totaling 1,061 images), were selected and divided using the same 80:20 split. Although the data imbalance was alleviated, the relatively small sample size within each category still limited the model’s ability to learn effectively, hindering improvements in classification accuracy.

- (3) Balanced Dataset (515 Images, 4 Categories, 8:2 Split): Four categories, each with more than 100 samples (totaling 515 images), were selected and divided using an 80:20 split. In this configuration, the data imbalance was resolved, and the larger sample size within each category significantly improved the model’s diagnostic capabilities for these specific disease categories. The test results demonstrated that a balanced data distribution notably enhanced model performance, particularly in the generation of diagnostic descriptions: the CIDEr score reached its highest value (0.5672), and the model received the highest subjective rating (3.6923/5) for description quality.
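The arithmetic behind the three configurations can be checked with a short sketch. It assumes a plain shuffled 80:20 split with the training share rounded down; the text does not say how the split was drawn or rounded, so the helper `split_80_20` and its seed are hypothetical. The image and category counts are taken from the list above.

```python
import random


def split_80_20(items, seed=0):
    """Shuffle items and return (train, test) with 80% in train, rounded down."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    cut = len(shuffled) * 8 // 10  # integer arithmetic avoids float rounding
    return shuffled[:cut], shuffled[cut:]


# Image counts of the three configurations reported in the text.
configurations = {"full": 4000, "subset": 1061, "balanced": 515}

split_sizes = {}
for name, count in configurations.items():
    train, test = split_80_20(range(count))
    split_sizes[name] = (len(train), len(test))

# Average images per category before splitting (category counts from the text).
categories = {"full": 179, "subset": 15, "balanced": 4}
mean_per_category = {
    name: configurations[name] / categories[name] for name in configurations
}
```

Under these assumptions the full dataset yields 3,200 training images, matching the figure given in the methodology. It averages roughly 22 images per category, against about 71 in the subset and about 129 in the balanced set, which is consistent with the reported difficulty in learning sparsely populated categories.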

3.3. System Demonstration

4. Discussion

5. Conclusions

Funding

Acknowledgments


| Feature Extractor | Bleu_1 | METEOR | ROUGE_L | CIDEr | SPICE |
| --- | --- | --- | --- | --- | --- |
| Pretrained EfficientNetV2B2 | 0.011 | 0.039 | 0.166 | 0.000 | 0.133 |
| Fine-tuned EfficientNetV2B2 | 0.090 | 0.094 | 0.196 | 0.002 | 0.137 |
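To make the Bleu_1 column easier to interpret, the sketch below computes a simplified sentence-level BLEU-1: clipped unigram precision multiplied by a brevity penalty, against a single reference. It is an illustration only and not the evaluation toolkit used for the reported scores; corpus-level implementations aggregate counts over all samples before dividing. The function name `bleu_1`, the whitespace tokenisation, and the two example sentences are hypothetical.

```python
import math
from collections import Counter


def bleu_1(candidate, reference):
    """Clipped unigram precision times brevity penalty, single reference."""
    cand_tokens = candidate.lower().split()
    ref_tokens = reference.lower().split()
    if not cand_tokens:
        return 0.0
    cand_counts = Counter(cand_tokens)
    ref_counts = Counter(ref_tokens)
    # Each candidate word is credited at most as often as it occurs in the reference.
    clipped = sum(min(n, ref_counts[w]) for w, n in cand_counts.items())
    precision = clipped / len(cand_tokens)
    # Penalise candidates that are shorter than the reference.
    if len(cand_tokens) >= len(ref_tokens):
        brevity_penalty = 1.0
    else:
        brevity_penalty = math.exp(1.0 - len(ref_tokens) / len(cand_tokens))
    return precision * brevity_penalty


# Invented example sentences, used only to exercise the function.
reference = "a red scaly plaque on the left forearm"
candidate = "a red plaque on the arm"
score = bleu_1(candidate, reference)
```

Here five of the six candidate words appear in the reference, so the precision is 5/6, and the shorter candidate is scaled down by the brevity penalty exp(1 - 8/6). Scores near zero, as in the first row of the table, indicate that generated descriptions share almost no words with the reference descriptions.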

| Dataset Split (Image Count) | Bleu_1 | METEOR | ROUGE_L | CIDEr | SPICE | Subjective Rating |
| --- | --- | --- | --- | --- | --- | --- |
| 4000 images (8:2 split) | 0.3167 | 0.2171 | 0.3331 | 0.205 | 0.2362 | 2.5013/5 |
| 1061 images (8:2 split) | 0.318 | 0.2166 | 0.3376 | 0.3413 | 0.2506 | 3.3568/5 |
| 515 images (8:2 split) | 0.3605 | 0.2232 | 0.3369 | 0.5672 | 0.3062 | 3.6923/5 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).