Submitted: 24 April 2026

Posted: 28 April 2026


Abstract

Keywords:

1. Introduction

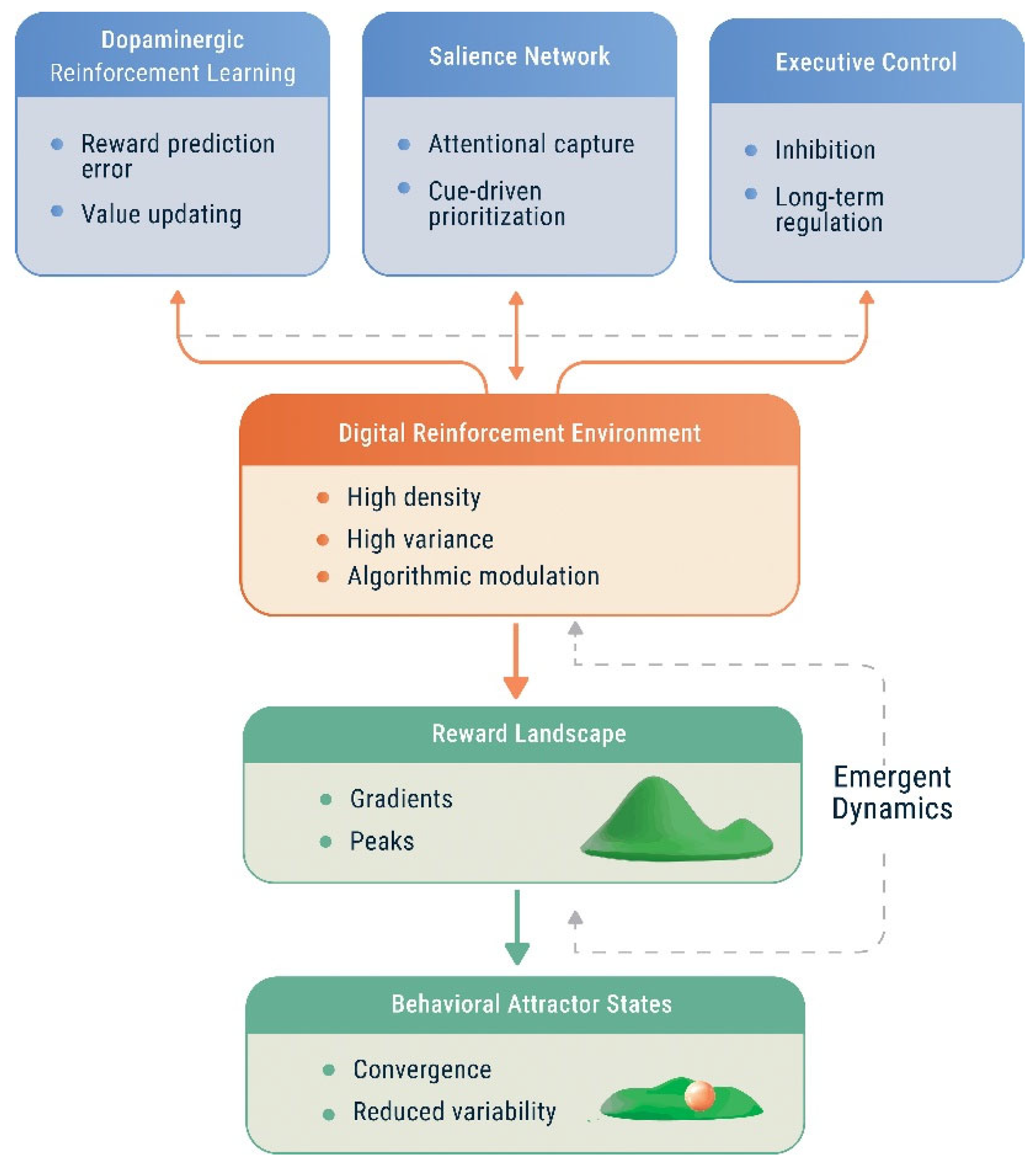

2. Neural Mechanisms of Reward Processing in Digital Addiction

2.1. Dopaminergic Reinforcement Learning Under High-Density Stimulation

2.2. Salience Attribution and Attentional Capture

2.3. Executive Control and System Imbalance

2.4. Individual Differences in Reward System Dynamics

2.5. Integration with Core Theories of Addiction

2.6. Toward a Unified Neurocomputational Perspective

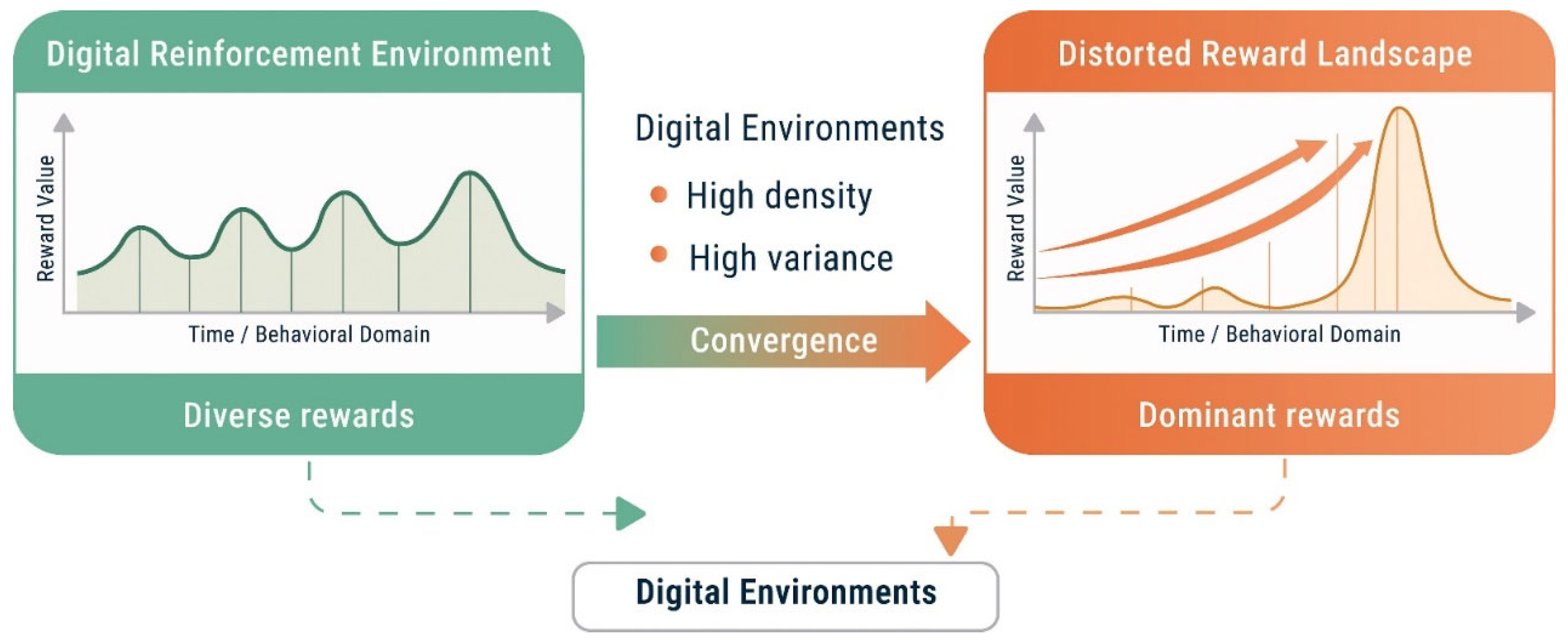

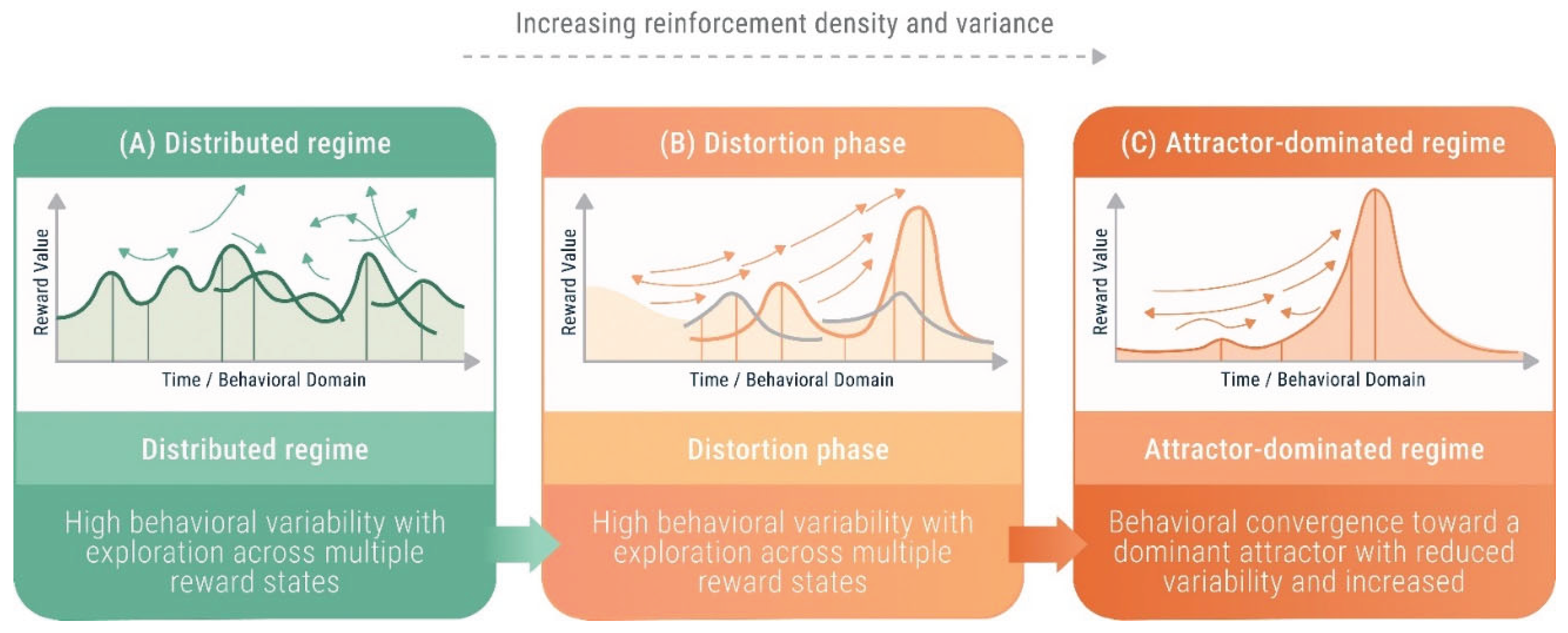

3. Reward Landscape Distortion: A Dynamical Systems Perspective on Behavioral Addiction

3.1. Behavioral Systems as Reward Landscapes

3.2. Distortion Through Reinforcement Density and Variance

3.3. Emergence of Dominant Reward Peaks

3.4. Collapse of Behavioral Entropy

3.5. Attractor Formation and Phase Transition Dynamics

- high entry probability,

- reduced exit probability,

- diminished sensitivity to alternative rewards.
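The three attractor properties above can be made concrete with a two-state Markov abstraction. This is an illustrative sketch, not the authors' model. Behavior is assumed to be either in a dominant "attractor" state or in "alternative" activities, with hypothetical per-step entry and exit probabilities:

- Long-run occupancy of the attractor is `p_entry / (p_entry + p_exit)`.
- Mean dwell time once entered is `1 / p_exit`.

Diminished sensitivity to alternative rewards could be read as an exit probability that stays low even when alternatives improve; that extension is not modeled here. The class and parameter names are assumptions.

```python
from dataclasses import dataclass


@dataclass
class TwoStateChain:
    """Hypothetical two-state chain: 'attractor' behavior vs. 'alternative' behavior."""
    p_entry: float  # assumed P(alternative -> attractor) per time step
    p_exit: float   # assumed P(attractor -> alternative) per time step

    def __post_init__(self) -> None:
        for name in ("p_entry", "p_exit"):
            p = getattr(self, name)
            if not 0.0 < p <= 1.0:
                raise ValueError(f"{name} must be in (0, 1], got {p}")

    def stationary_occupancy(self) -> float:
        """Long-run fraction of time spent in the attractor state."""
        return self.p_entry / (self.p_entry + self.p_exit)

    def mean_dwell_time(self) -> float:
        """Expected consecutive steps in the attractor once entered (geometric dwell)."""
        return 1.0 / self.p_exit

    def occupancy_after(self, steps: int, start_in_attractor: bool = False) -> float:
        """Probability of being in the attractor after `steps` transitions."""
        p = 1.0 if start_in_attractor else 0.0
        for _ in range(steps):
            # stay in the attractor, or enter it from the alternative state
            p = p * (1.0 - self.p_exit) + (1.0 - p) * self.p_entry
        return p


if __name__ == "__main__":
    balanced = TwoStateChain(p_entry=0.1, p_exit=0.1)
    distorted = TwoStateChain(p_entry=0.3, p_exit=0.02)
    for label, chain in (("balanced", balanced), ("distorted", distorted)):
        print(label, round(chain.stationary_occupancy(), 2), round(chain.mean_dwell_time(), 1))
```

With symmetric probabilities of 0.1 the chain spends half its time in each state. Raising entry to 0.3 and lowering exit to 0.02 pushes long-run attractor occupancy to roughly 0.94 and mean dwell time to about 50 steps.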

3.6. Irreversibility and Path Dependence

3.7. Synthesis: Addiction as an Emergent Property of Distorted Landscapes

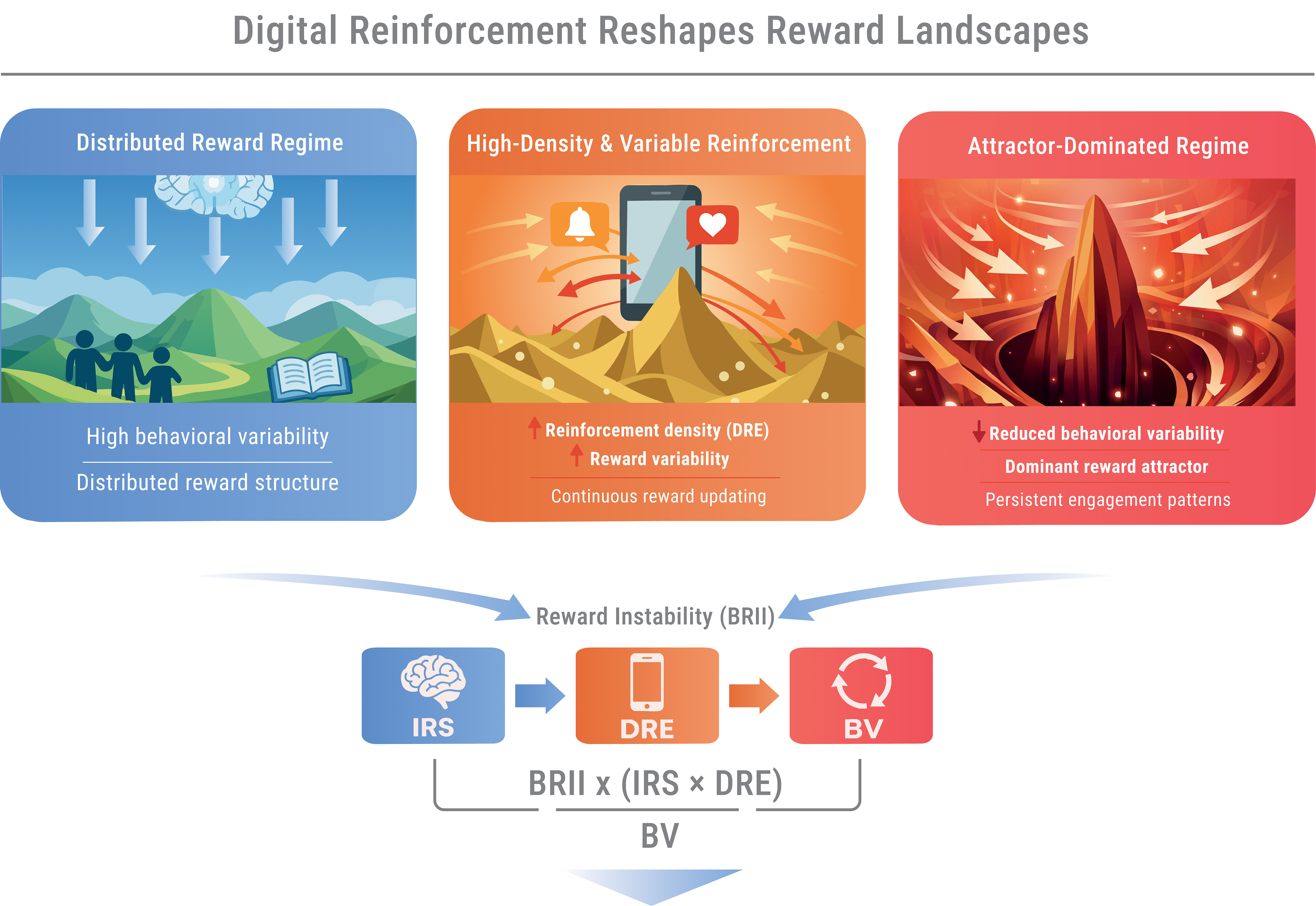

4. Behavioral Reward Instability Index (BRII): A Heuristic Framework for Motivational Instability

4.1. Conceptual Rationale

4.2. Core Dimensions

4.3. Heuristic Non-Linear Formulation

4.4. Operationalization Using Digital Phenotyping

4.5. Limits and Future Validation

5. Toward Operationalization: Digital Phenotyping and Reward Instability

5.1. Digital Phenotyping as an Empirical Substrate

5.2. Measurement Challenges: Noise, Validity, Bias, and Governance

5.3. Non-Linearity and Calibration Requirements

- non-linear modeling approaches (e.g., multiplicative, threshold-based, or sigmoid formulations),

- dynamic time-series analyses capturing trajectory evolution over time,

- identification of thresholds or early warning signals associated with attractor formation.
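The multiplicative and sigmoid options listed above can be contrasted with a linear baseline. The sketch below is hypothetical because the BRII formula itself is not given here. The following are all illustrative assumptions:

- the interaction term IRS × DRE × (1 − BV);
- the gain `k = 12` and threshold `theta = 0.3`;
- the function names;
- the three dimensions (named in the BRII table) normalized to [0, 1].

```python
import math


def brii_additive(irs: float, dre: float, bv: float) -> float:
    """Hypothetical linear baseline: equal-weight mean, with BV entered as protective."""
    return (irs + dre + (1.0 - bv)) / 3.0


def brii_sigmoid(irs: float, dre: float, bv: float, k: float = 12.0, theta: float = 0.3) -> float:
    """Hypothetical multiplicative-sigmoid variant with an assumed gain k and threshold theta."""
    for name, v in (("irs", irs), ("dre", dre), ("bv", bv)):
        if not 0.0 <= v <= 1.0:
            raise ValueError(f"{name} must be normalized to [0, 1], got {v}")
    # Interaction: IRS amplifies DRE, and low BV removes the buffering effect.
    drive = irs * dre * (1.0 - bv)
    # Logistic squashing: the output changes most steeply where drive crosses theta.
    return 1.0 / (1.0 + math.exp(-k * (drive - theta)))


if __name__ == "__main__":
    irs, bv = 0.8, 0.3
    for dre in (0.2, 0.4, 0.5, 0.6, 0.8):
        print(f"DRE={dre:.1f}  additive={brii_additive(irs, dre, bv):.2f}  "
              f"sigmoid={brii_sigmoid(irs, dre, bv):.2f}")
```

Hold IRS = 0.8 and BV = 0.3, then move DRE from 0.4 to 0.6. The sigmoid score shifts by roughly 0.32, while the additive score shifts by only about 0.07. Calibration would need to establish or rule out this kind of threshold sensitivity empirically.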

5.4. Scope and Limits of Operationalization

5.5. Synthesis: Measurement as Model Refinement

- test whether non-linear transitions occur in real-world behavioral dynamics,

- identify conditions under which reward landscapes become progressively distorted,

- refine the structure, calibration, and predictive utility of the BRII framework.
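Testing for non-linear transitions usually starts from generic early-warning indicators computed in a sliding window: rising variance and rising lag-1 autocorrelation (critical slowing down). The BRII table lists both for the temporal dimension. The sketch below computes them on a simulated AR(1) series whose memory parameter drifts upward. The simulation, window length and function names are assumptions. Real behavioral logs would first need detrending, gap handling and resampling to a regular interval.

```python
import numpy as np


def rolling_indicators(x, window):
    """Rolling variance and lag-1 autocorrelation over a sliding window."""
    x = np.asarray(x, dtype=float)
    if window < 3 or window > len(x):
        raise ValueError("window must be between 3 and len(x)")
    n = len(x) - window + 1
    var = np.empty(n)
    ac1 = np.empty(n)
    for i in range(n):
        w = x[i:i + window]
        w = w - w.mean()  # remove the window mean (no further detrending here)
        var[i] = w.var()
        # Pearson correlation between the window and its one-step lag
        ac1[i] = np.corrcoef(w[:-1], w[1:])[0, 1]
    return var, ac1


def simulate_slowing(n=2000, phi_start=0.2, phi_end=0.95, seed=0):
    """Simulated AR(1) series whose memory phi drifts upward, mimicking critical slowing down."""
    rng = np.random.default_rng(seed)
    phi = np.linspace(phi_start, phi_end, n)
    x = np.zeros(n)
    for t in range(1, n):
        x[t] = phi[t] * x[t - 1] + rng.normal()
    return x


if __name__ == "__main__":
    x = simulate_slowing()
    var, ac1 = rolling_indicators(x, window=200)
    print(f"lag-1 AC: early {ac1[:100].mean():.2f}, late {ac1[-100:].mean():.2f}")
    print(f"variance: early {var[:100].mean():.2f}, late {var[-100:].mean():.2f}")
```

On this simulated series both indicators are markedly higher in late windows than in early ones. On real data, a rise should not be read as a warning signal without a trend statistic across windows (for example Kendall's tau) and surrogate-based significance testing.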

6. Discussion

6.1. From Mechanisms to System Dynamics

6.2. Theoretical Contribution and Testable Predictions

6.3. Integration Across Levels of Theory and Early Warning Signals

6.4. Digital Environments and Measurement Implications

6.5. Limitations

6.6. Future Directions

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Factor | Neurobiological Mechanism | System-Level Effects | Reward Landscape Impact |
|---|---|---|---|
| Dopaminergic signaling (e.g., DRD2, SLC6A3) | Modulation of reward prediction error encoding and synaptic plasticity | Modulates reinforcement learning gain and sensitivity to reward gradients | Steepens reward gradients, increasing convergence toward high-reward states |
| Prefrontal regulation (e.g., COMT) | Regulation of executive control and top-down modulation of behavior | Modulates capacity for behavioral inhibition and goal-directed control | Expands or constrains accessibility of alternative behavioral trajectories |
| Impulsivity and delay discounting traits | Steeper delay discounting and increased sensitivity to immediate rewards | Biases decision-making toward short-term reinforcement | Shifts system toward shallow but rapidly accessible reward peaks |
| Stress and allostatic load | Dysregulation of baseline reward processing and stress-related neuroadaptation | Alters baseline reward sensitivity and increases reliance on habitual responding | Globally deforms the reward landscape, reducing salience of alternative rewards |
| Salience attribution networks (dopaminergic–insula interactions) | Enhanced cue-triggered motivational salience | Increases attentional capture and cue-driven behavior | Amplifies prominence of specific reward peaks, reinforcing attractor formation |
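The first row links reinforcement learning gain to convergence toward high-reward states. This can be illustrated with a standard delta-rule (Rescorla-Wagner) value update driven by a reward prediction error, followed by a softmax choice rule. The inverse temperature `beta` stands in for gain. This is an illustrative sketch, not a model taken from the document. The option rewards, learning rate and `beta` values are hypothetical, and every option is updated on every step for simplicity.

```python
import math


def rpe_update(value: float, reward: float, alpha: float = 0.1) -> float:
    """Delta-rule value update driven by the reward prediction error."""
    delta = reward - value  # reward prediction error
    return value + alpha * delta


def softmax(values, beta: float):
    """Choice probabilities; beta (inverse temperature) scales value differences like a gain."""
    m = max(values)
    exps = [math.exp(beta * (v - m)) for v in values]  # subtract max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]


def entropy_bits(probs) -> float:
    """Shannon entropy (bits) of a choice distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


if __name__ == "__main__":
    # Hypothetical options: one high-density digital reward and three alternatives.
    rewards = [1.0, 0.6, 0.5, 0.4]
    values = [0.0] * len(rewards)
    for _ in range(50):
        values = [rpe_update(v, r) for v, r in zip(values, rewards)]
    for beta in (1.0, 5.0, 20.0):
        p = softmax(values, beta)
        print(f"beta={beta:>4}: P(top option)={p[0]:.2f}, choice entropy={entropy_bits(p):.2f} bits")
```

As `beta` increases, choice probability concentrates on the highest-valued option. The entropy of the choice distribution then falls from its 2-bit maximum for four options. This is one simple way to express a steepened reward gradient as a loss of behavioral diversity.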

| BRII Dimension | Candidate Proxies | Data Sources | System Role | Expected Dynamic Effect |
|---|---|---|---|---|
| Individual Reward Sensitivity (IRS) | Impulsivity indices, delay discounting, neurocognitive performance | Behavioral tasks, cognitive testing apps | Modulates sensitivity to reward signals and amplification of reward gradients | Higher IRS may amplify responsiveness to reinforcement under high DRE |
| Digital Reward Exposure (DRE) | Screen time, notification frequency, short-form content exposure | Smartphone logs, app usage analytics | Shapes density and variability of environmental reinforcement | Higher DRE may accelerate convergence toward dominant reward states |
| Behavioral Variability (BV) | Behavioral entropy, activity diversity, sleep regularity | Wearables, GPS, app diversity metrics | Maintains distributed engagement and counteracts attractor formation | Lower BV may reduce resilience and favor convergence |
| Temporal Dynamics (BRII(t)) | Fluctuations in activity patterns, recovery from perturbation, variance shifts | Longitudinal behavioral data | Captures time-dependent evolution of instability | Early warning signals may include increased variance and critical slowing down |
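Behavioral entropy, listed above as a candidate BV proxy, can be computed as the normalized Shannon entropy of time allocated across activity categories. This is a minimal sketch with hypothetical category labels and minute counts. Fixing `n_categories` across participants, rather than counting only the categories observed, is an assumption made so that scores stay comparable between individuals.

```python
import math


def behavioral_entropy(minutes_by_category, n_categories=None):
    """Normalized Shannon entropy: 0 = all time in one category, 1 = evenly spread."""
    total = sum(minutes_by_category.values())
    k = n_categories if n_categories is not None else len(minutes_by_category)
    if total <= 0 or k < 2:
        return 0.0  # entropy is undefined or trivially zero here
    h = 0.0
    for minutes in minutes_by_category.values():
        if minutes > 0:
            p = minutes / total
            h -= p * math.log(p)
    return h / math.log(k)  # scale by the maximum possible entropy for k categories


if __name__ == "__main__":
    # Hypothetical daily logs (minutes per activity category).
    diverse = {"social_media": 60, "messaging": 60, "reading": 60, "exercise": 60}
    concentrated = {"social_media": 210, "messaging": 15, "reading": 10, "exercise": 5}
    print(f"diverse: {behavioral_entropy(diverse):.2f}")
    print(f"concentrated: {behavioral_entropy(concentrated):.2f}")
```

An evenly split day scores 1.0, while a day dominated by one category scores well below 0.5. How such scores map onto BV would itself need validation.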

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).