Submitted: 25 April 2026

Posted: 27 April 2026


Abstract

Keywords:

MSC: 86-10

1. Introduction

2. Related Works

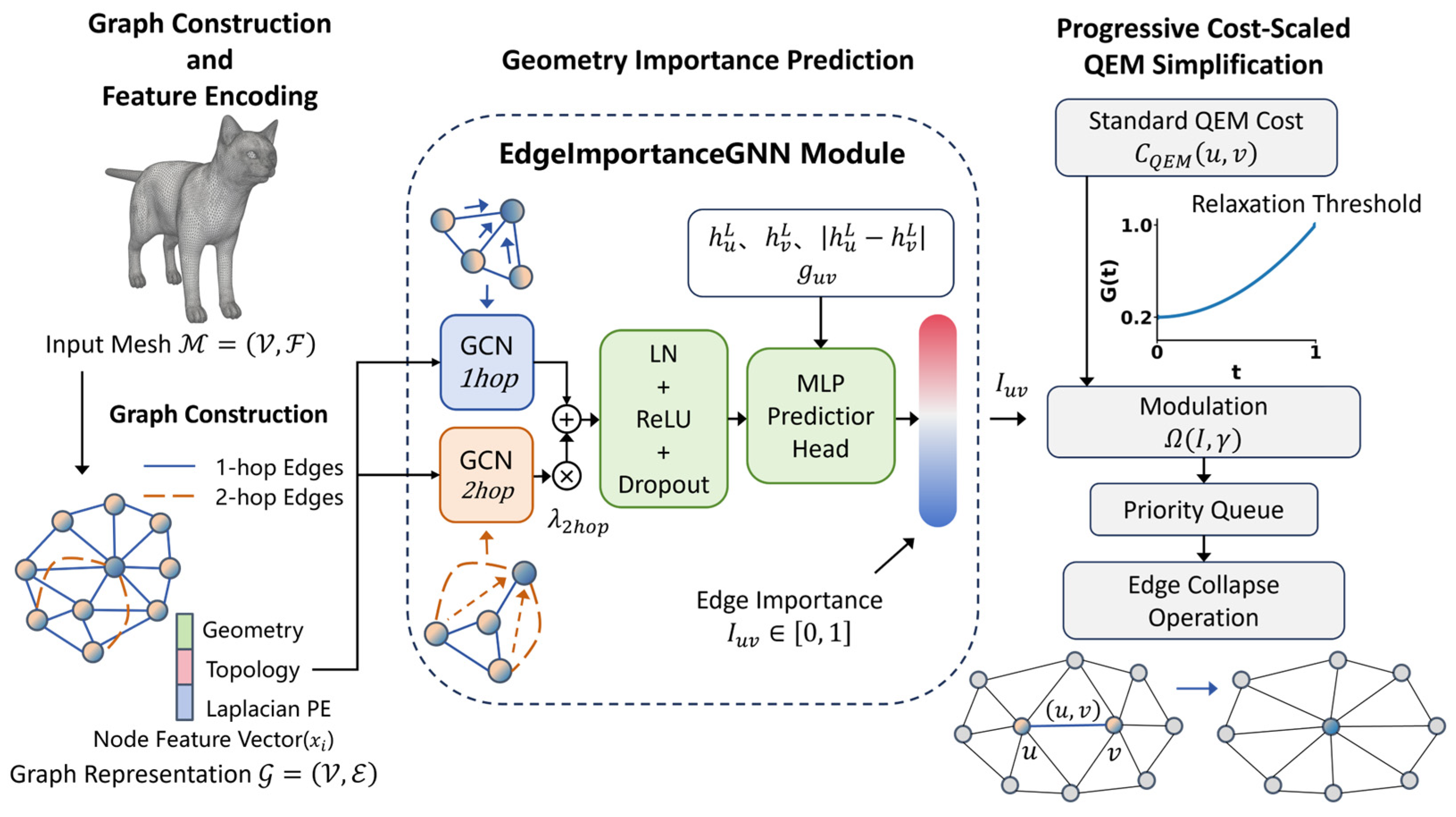

3. Materials and Methods

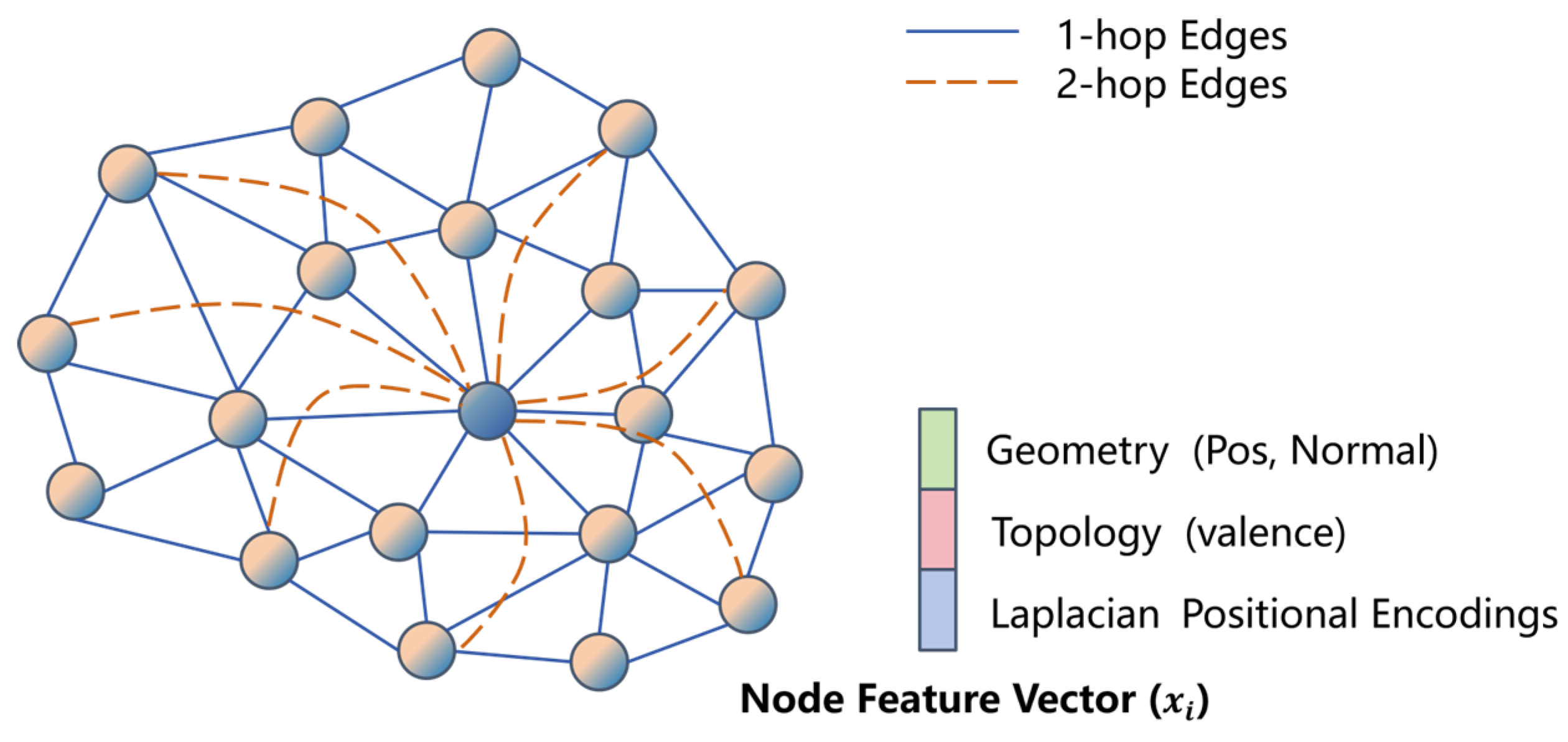

3.1. Graph and Its Features

3.1.1. Node Feature Initialization
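The configuration table lists a 16-dimensional Laplacian positional encoding (PE) among the node features. A minimal NumPy sketch of the usual construction follows. It assumes the encoding is taken from the eigenvectors of the symmetric normalized graph Laplacian with the smallest non-trivial eigenvalues. The function name `laplacian_pe` is hypothetical, and the paper's exact normalization may differ.

```python
import numpy as np

def laplacian_pe(edges, num_nodes, k=16):
    """Return the k eigenvectors of the symmetric normalized Laplacian
    I - D^-1/2 A D^-1/2 with the smallest non-trivial eigenvalues (one row per node)."""
    a = np.zeros((num_nodes, num_nodes))
    for u, v in edges:
        a[u, v] = a[v, u] = 1.0
    deg = a.sum(axis=1)
    # D^-1/2, with isolated vertices mapped to 0 instead of dividing by zero
    d_inv_sqrt = np.where(deg > 0, 1.0 / np.sqrt(np.maximum(deg, 1e-12)), 0.0)
    lap = np.eye(num_nodes) - d_inv_sqrt[:, None] * a * d_inv_sqrt[None, :]
    _, eigvecs = np.linalg.eigh(lap)  # eigenvalues come back in ascending order
    pe = eigvecs[:, 1:k + 1]  # drop the trivial eigenvector
    if pe.shape[1] < k:  # zero-pad small graphs to a fixed width
        pe = np.hstack([pe, np.zeros((num_nodes, k - pe.shape[1]))])
    return pe
```

The dense eigendecomposition only keeps the sketch short. On full-resolution meshes, a sparse solver such as `scipy.sparse.linalg.eigsh` would be the practical choice. Eigenvector signs are arbitrary, which is why random sign flipping is often applied during training.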

3.1.2. Multi-Scale Graph
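The configuration table caps the number of far neighbors at 12 and fuses 2-hop neighbors with a residual weight of 0.5. The sketch below shows one way to build the capped 2-hop neighbor lists. It assumes that far neighbors are vertices exactly two edges away and that, when more than 12 exist, the geometrically nearest are kept. Both the selection rule and the name `two_hop_neighbors` are illustrative assumptions.

```python
import numpy as np

def two_hop_neighbors(edges, positions, max_far=12):
    """For each vertex, list vertices exactly two hops away (excluding 1-hop
    neighbors and the vertex itself), keeping at most max_far, nearest first."""
    n = len(positions)
    nbrs = [set() for _ in range(n)]
    for u, v in edges:
        nbrs[u].add(v)
        nbrs[v].add(u)
    far = []
    for i in range(n):
        cand = set()
        for j in nbrs[i]:
            cand |= nbrs[j]  # neighbors of neighbors
        cand -= nbrs[i]
        cand.discard(i)
        # hypothetical selection rule: rank by Euclidean distance, ties by index
        ranked = sorted(
            cand,
            key=lambda c: (float(np.linalg.norm(positions[c] - positions[i])), c),
        )
        far.append(ranked[:max_far])
    return far
```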

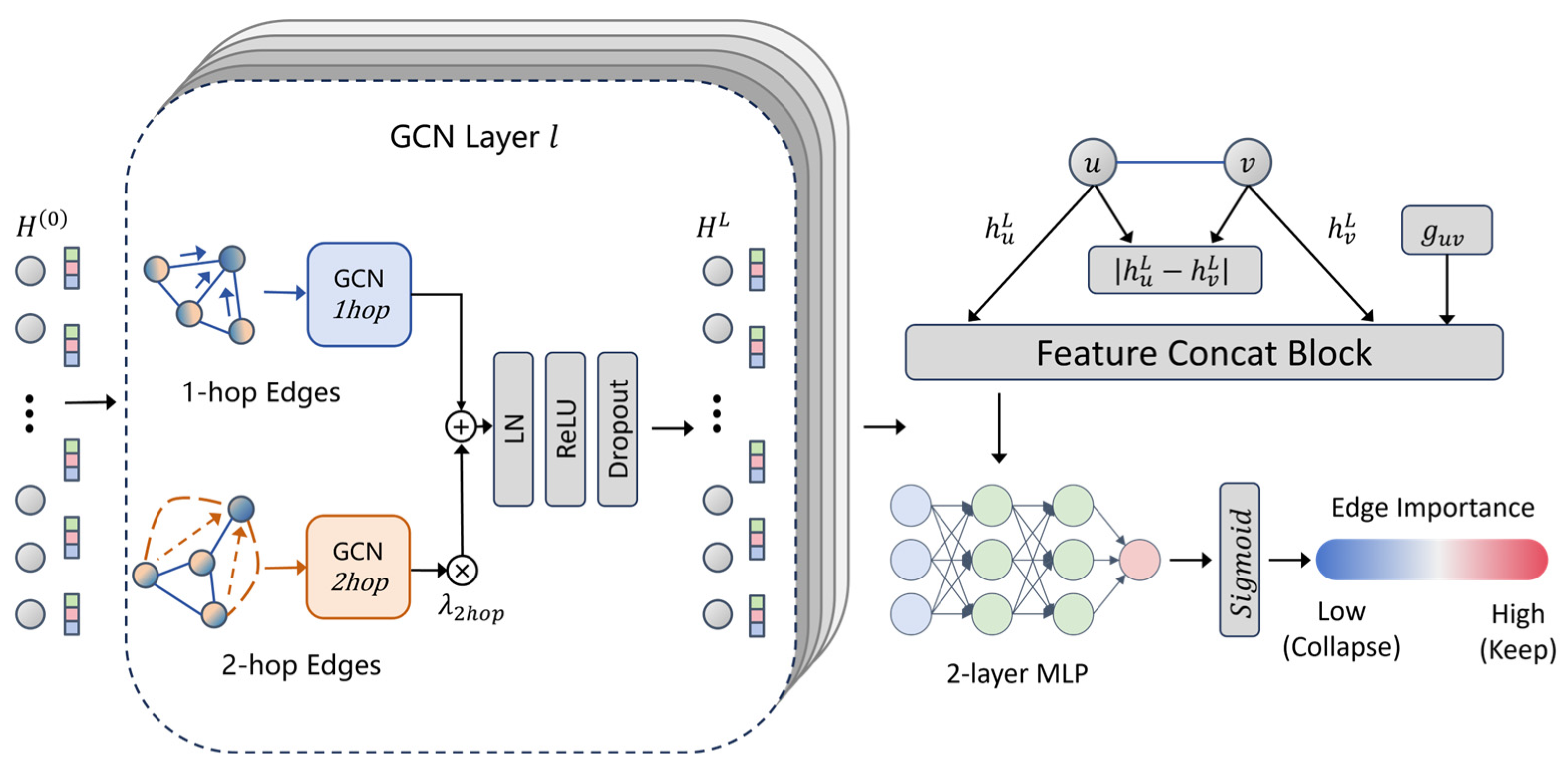

3.2. Edge Importance GNNs

3.2.1. Dual-Branch Message Passing
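The configuration table specifies GCNConv layers and a residual fusion weight of 0.5 for 2-hop neighbors. The NumPy sketch below reproduces the symmetric-normalized propagation rule of Kipf and Welling that GCNConv implements, omitting its bias term. It then combines a 1-hop branch with a 0.5-weighted 2-hop branch. The additive fusion and the names `gcn_propagate` and `dual_branch_layer` are assumptions for illustration, not the paper's confirmed design. LayerNorm and dropout from the table are also left out.

```python
import numpy as np

def gcn_propagate(x, edges, num_nodes, weight):
    """One GCN propagation step (Kipf and Welling): D^-1/2 (A + I) D^-1/2 X W."""
    a = np.eye(num_nodes)  # self-loops
    for u, v in edges:
        a[u, v] = a[v, u] = 1.0
    d_inv_sqrt = 1.0 / np.sqrt(a.sum(axis=1))  # degree >= 1 thanks to self-loops
    a_hat = d_inv_sqrt[:, None] * a * d_inv_sqrt[None, :]
    return a_hat @ x @ weight

def dual_branch_layer(x, near_edges, far_edges, w_near, w_far, alpha=0.5):
    """Hypothetical dual-branch update: 1-hop branch plus an alpha-weighted
    2-hop branch, followed by ReLU."""
    n = x.shape[0]
    h_near = gcn_propagate(x, near_edges, n, w_near)
    h_far = gcn_propagate(x, far_edges, n, w_far)
    return np.maximum(h_near + alpha * h_far, 0.0)
```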

3.2.2. Edge Importance Prediction Head
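A minimal sketch of an edge scoring head follows. It concatenates the two endpoint embeddings, their absolute difference, and the geometric edge features, then passes the result through a two-layer MLP with a sigmoid output. This layout and the function names are assumptions. The layout was chosen because 64-dimensional node embeddings plus 2 geometric edge features give 3 × 64 + 2 = 194 inputs, which matches the Edge MLP input dimension in the configuration table. Other layouts, for example with an element-wise product in place of the difference, would give the same count.

```python
import numpy as np

def edge_features(h, edges, geo):
    """Hypothetical edge descriptor [h_u, h_v, |h_u - h_v|, geometric features]."""
    u, v = edges[:, 0], edges[:, 1]
    return np.concatenate([h[u], h[v], np.abs(h[u] - h[v]), geo], axis=1)

def edge_importance(feat, w1, b1, w2, b2):
    """Two-layer MLP (ReLU hidden layer) with a sigmoid output: one score in (0, 1) per edge."""
    hidden = np.maximum(feat @ w1 + b1, 0.0)
    logits = hidden @ w2 + b2
    return 1.0 / (1.0 + np.exp(-logits[:, 0]))
```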

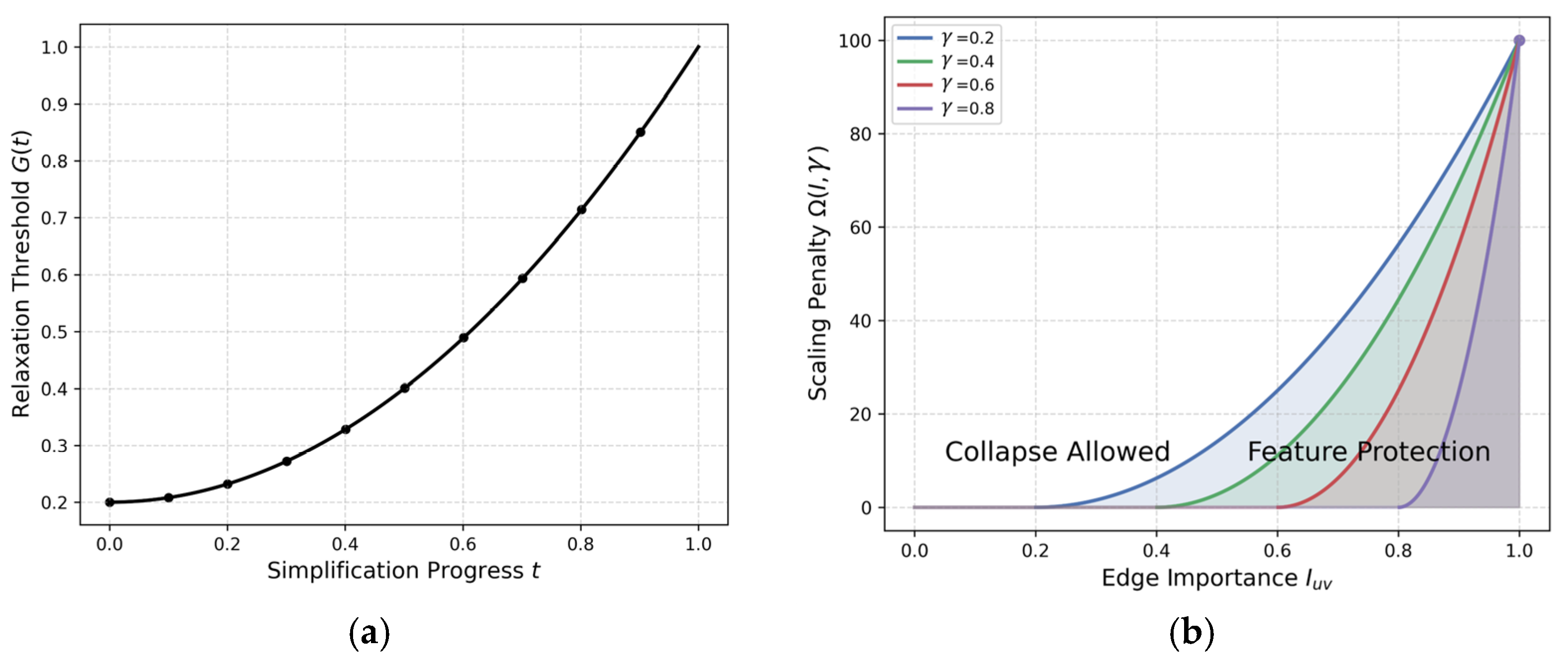

3.3. QEM Simplification with GNN-Guided Dynamic Soft Modulation

3.3.1. QEM Cost Initialization
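For reference, here is a compact NumPy sketch of the standard, unweighted quadric error metric (QEM) of Garland and Heckbert. Each face contributes the quadric of its supporting plane, and each vertex accumulates the quadrics of its incident faces. An edge's cost is the quadric error at the position that minimizes it. The function names are hypothetical. The midpoint fallback for ill-conditioned systems and the absence of area weighting are assumptions, not details confirmed for this paper.

```python
import numpy as np

def face_quadric(p0, p1, p2):
    """Fundamental error quadric K = p p^T of the plane through a triangle."""
    n = np.cross(p1 - p0, p2 - p0)
    n = n / np.linalg.norm(n)
    plane = np.append(n, -np.dot(n, p0))  # (a, b, c, d) with a^2 + b^2 + c^2 = 1
    return np.outer(plane, plane)

def vertex_quadrics(vertices, faces):
    """Q_v = sum of the quadrics of all faces incident to v."""
    q = np.zeros((len(vertices), 4, 4))
    for f in faces:
        k = face_quadric(*vertices[f])
        for vi in f:
            q[vi] += k
    return q

def collapse_cost(q1, q2, p1, p2):
    """Optimal collapse position and its cost v^T (Q1 + Q2) v."""
    q = q1 + q2
    a = q.copy()
    a[3] = [0.0, 0.0, 0.0, 1.0]  # last row forces the homogeneous coordinate to 1
    if np.linalg.cond(a) < 1e10:
        v = np.linalg.solve(a, np.array([0.0, 0.0, 0.0, 1.0]))
    else:
        v = np.append((p1 + p2) / 2.0, 1.0)  # ill-conditioned: use the edge midpoint
    return v[:3], float(v @ q @ v)
```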

3.3.2. Dynamic Cost Soft Modulation
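Algorithm 1 describes the modulation only through two pieces: a threshold that depends on normalized stage progress, and a soft penalty scale that depends on an edge's importance and that threshold. The functional forms below are purely illustrative assumptions: a linear threshold schedule and a sigmoid gate, with made-up constants `tau_start`, `tau_end`, `beta`, and `temperature`. They show how a multiplicative soft penalty can protect important edges early in a stage and relax that protection as the stage proceeds.

```python
import math

def relaxation_threshold(progress, tau_start=0.5, tau_end=0.9):
    """Hypothetical linear schedule: the importance threshold rises from
    tau_start to tau_end as normalized stage progress goes from 0 to 1."""
    p = min(max(progress, 0.0), 1.0)
    return tau_start + (tau_end - tau_start) * p

def penalty_scale(importance, tau, beta=4.0, temperature=0.05):
    """Hypothetical soft gate: close to 1 for edges well below tau and close
    to 1 + beta for edges well above it, with a smooth sigmoid transition."""
    z = max(min((importance - tau) / temperature, 60.0), -60.0)  # avoid overflow
    return 1.0 + beta / (1.0 + math.exp(-z))

def modulated_cost(base_cost, importance, progress):
    """Base QEM cost scaled by the progress-dependent soft penalty."""
    return base_cost * penalty_scale(importance, relaxation_threshold(progress))
```

Because the penalty multiplies the base cost, a zero-cost collapse (for example, on a perfectly flat region) stays free regardless of importance. An additive term would be needed if that behavior were undesirable.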

3.3.3. Overall Implementation Pipeline
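Lines 22 to 33 of Algorithm 1 pop the cheapest edge, skip entries whose edge or vertices are dead, and push recomputed costs for the 1-ring. Python's `heapq` has no decrease-key operation, so a common implementation uses lazy invalidation: every push records a version number, and stale or dead entries are discarded when popped. The class below is a minimal, hypothetical sketch of that bookkeeping only; it does not perform the mesh collapse itself.

```python
import heapq

class CollapseQueue:
    """Min-priority queue of edge collapses with lazy invalidation: stale
    entries stay in the heap and are skipped when popped."""

    def __init__(self):
        self._heap = []
        self._version = {}  # edge -> latest version number
        self._dead = set()  # vertices removed by earlier collapses

    def push(self, cost, edge):
        """Insert or update an edge; any older entry for it becomes stale."""
        edge = tuple(sorted(edge))
        ver = self._version.get(edge, 0) + 1
        self._version[edge] = ver
        heapq.heappush(self._heap, (cost, ver, edge))

    def kill_vertex(self, v):
        self._dead.add(v)

    def pop(self):
        """Return (cost, edge) for the cheapest live entry, or None if empty."""
        while self._heap:
            cost, ver, edge = heapq.heappop(self._heap)
            if ver != self._version.get(edge):
                continue  # superseded by a later push
            if edge[0] in self._dead or edge[1] in self._dead:
                continue  # an endpoint was removed
            return cost, edge
        return None
```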

3.4. Loss Function

3.4.1. Structural Contrastive Loss (

3.4.2. Geometry-Aware Loss (
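The configuration table lists a geometric hinge loss and a pairwise ranking loss among the loss weights, presumably as parts of the geometry-aware term. Below is a generic margin ranking loss of the kind usually meant by the latter. The way ordered pairs are chosen (`pairs`) and the margin value `margin=0.1` are assumptions, and the function name is hypothetical.

```python
import numpy as np

def pairwise_ranking_loss(scores, pairs, margin=0.1):
    """Margin ranking (hinge) loss: for each (i, j) in pairs, edge i should
    score at least `margin` above edge j; shortfalls are penalized linearly."""
    if len(pairs) == 0:
        return 0.0
    i, j = np.asarray(pairs).T
    return float(np.mean(np.maximum(0.0, margin - (scores[i] - scores[j]))))
```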

3.4.3. Local Smoothness Regularization (
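A local smoothness regularizer on edge scores typically penalizes differences between neighboring predictions. The sketch below assumes that two edges are neighbors when they share a vertex, and it uses a mean squared difference. Both choices and the helper names are hypothetical.

```python
from collections import defaultdict
from itertools import combinations

import numpy as np

def adjacent_edge_pairs(edges):
    """All unordered pairs of edge indices whose edges share a vertex."""
    incident = defaultdict(list)
    for e, (u, v) in enumerate(edges):
        incident[u].append(e)
        incident[v].append(e)
    pairs = set()
    for es in incident.values():
        pairs.update(combinations(sorted(es), 2))
    return sorted(pairs)

def local_smoothness(scores, edge_pairs):
    """Mean squared difference of importance scores over adjacent edge pairs."""
    if not edge_pairs:
        return 0.0
    a, b = np.asarray(edge_pairs).T
    return float(np.mean((scores[a] - scores[b]) ** 2))
```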

3.4.4. Total Loss Function
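Suppose the total objective is the weighted sum of the terms in Sections 3.4.1 to 3.4.3, with the geometry-aware term split into the hinge and pairwise-ranking parts that carry separate weights in the configuration table. It would then take the following form. The symbols are placeholders rather than the paper's notation.

$$
\mathcal{L}_{\text{total}} = \lambda_{\text{sc}}\,\mathcal{L}_{\text{sc}} + \lambda_{\text{hinge}}\,\mathcal{L}_{\text{hinge}} + \lambda_{\text{rank}}\,\mathcal{L}_{\text{rank}} + \lambda_{\text{smooth}}\,\mathcal{L}_{\text{smooth}},
\qquad
(\lambda_{\text{sc}}, \lambda_{\text{hinge}}, \lambda_{\text{rank}}, \lambda_{\text{smooth}}) = (1.0,\ 0.7,\ 0.25,\ 0.2)
$$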

4. Results

4.1. Dataset and Baselines

4.2. Experimental Environment and Model Settings

4.2. Evaluation Metrics

4.2.1. Percentage of Wrong Adjacency (

4.2.2. Point-Wise Chamfer Distance (
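Chamfer distance definitions vary between papers: distances may be squared or unsquared, and the two directions may be summed or averaged. Below is a brute-force NumPy sketch of one common symmetric variant, computed on points sampled from the original and simplified surfaces. It assumes unsquared Euclidean distances with the two directional means summed.

```python
import numpy as np

def chamfer_distance(a, b):
    """Symmetric Chamfer distance between point sets a (N, 3) and b (M, 3):
    mean nearest-neighbor distance a -> b plus mean nearest-neighbor distance b -> a."""
    d = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=-1)  # (N, M) pairwise distances
    return float(d.min(axis=1).mean() + d.min(axis=0).mean())
```

The pairwise matrix needs O(NM) memory. For large samples, a k-d tree such as `scipy.spatial.cKDTree` gives the same nearest-neighbor distances far more cheaply.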

4.2.3. Point-Sampled Normal Error (

4.2.4. Laplacian Spectrum Error (
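A spectrum-based error is commonly computed in the spirit of Shape-DNA: it compares the leading eigenvalues of the Laplace–Beltrami operator on the original and simplified meshes. The sketch assumes the cotangent discretization with a lumped (barycentric) mass matrix, solving the generalized problem L φ = λ M φ. The error is the mean relative difference over the first k non-zero eigenvalues. The paper's operator, k, and normalization may differ, all names are hypothetical, and every vertex is assumed to belong to at least one face (otherwise its mass would be zero).

```python
import numpy as np

def cotan_laplacian(vertices, faces):
    """Cotangent stiffness matrix L (positive semi-definite) and lumped mass vector."""
    n = len(vertices)
    lap = np.zeros((n, n))
    mass = np.zeros(n)
    for tri in faces:
        for k in range(3):
            i, j, o = tri[k], tri[(k + 1) % 3], tri[(k + 2) % 3]
            e1, e2 = vertices[i] - vertices[o], vertices[j] - vertices[o]
            w = 0.5 * np.dot(e1, e2) / np.linalg.norm(np.cross(e1, e2))  # half cot of angle at o
            lap[i, j] -= w
            lap[j, i] -= w
            lap[i, i] += w
            lap[j, j] += w
        p0, p1, p2 = vertices[tri[0]], vertices[tri[1]], vertices[tri[2]]
        area = 0.5 * np.linalg.norm(np.cross(p1 - p0, p2 - p0))
        mass[list(tri)] += area / 3.0
    return lap, mass

def lb_spectrum(vertices, faces, k):
    """First k eigenvalues of L phi = lambda M phi with M = diag(mass)."""
    lap, mass = cotan_laplacian(vertices, faces)
    s = 1.0 / np.sqrt(mass)
    return np.linalg.eigvalsh(s[:, None] * lap * s[None, :])[:k]

def spectrum_error(vertices_a, faces_a, vertices_b, faces_b, k=10):
    """Mean relative eigenvalue difference, skipping the zero eigenvalue."""
    ea = lb_spectrum(vertices_a, faces_a, k)
    eb = lb_spectrum(vertices_b, faces_b, k)
    return float(np.mean(np.abs(ea[1:] - eb[1:]) / ea[1:]))
```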

4.3. Model Performances

4.3.1. Model Comparison

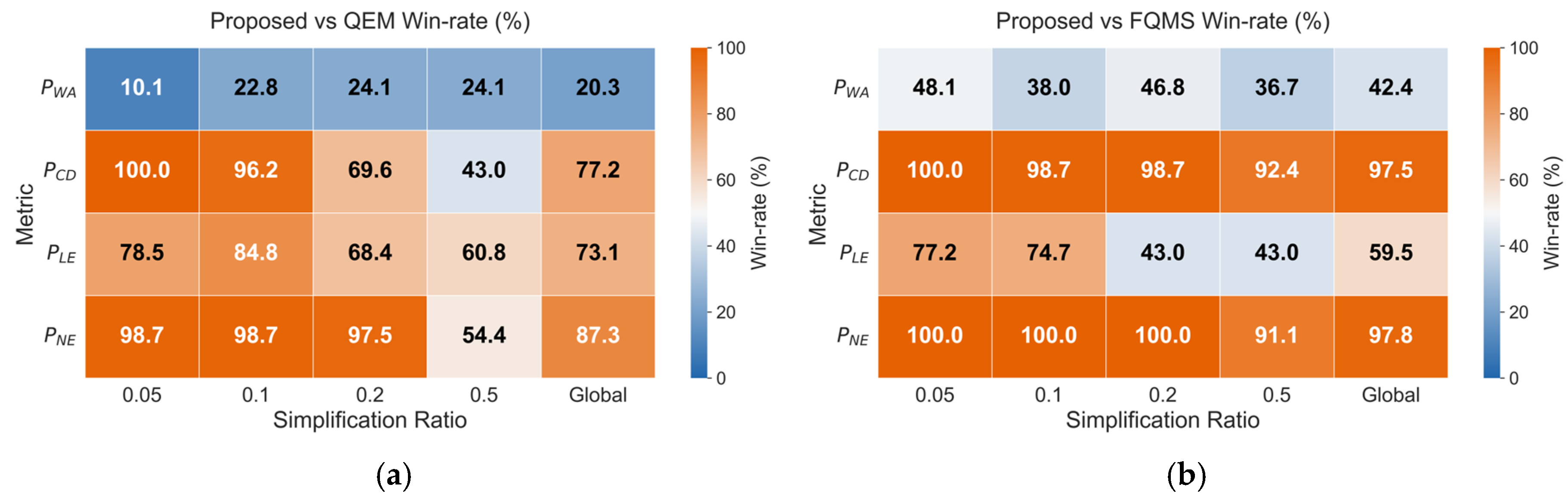

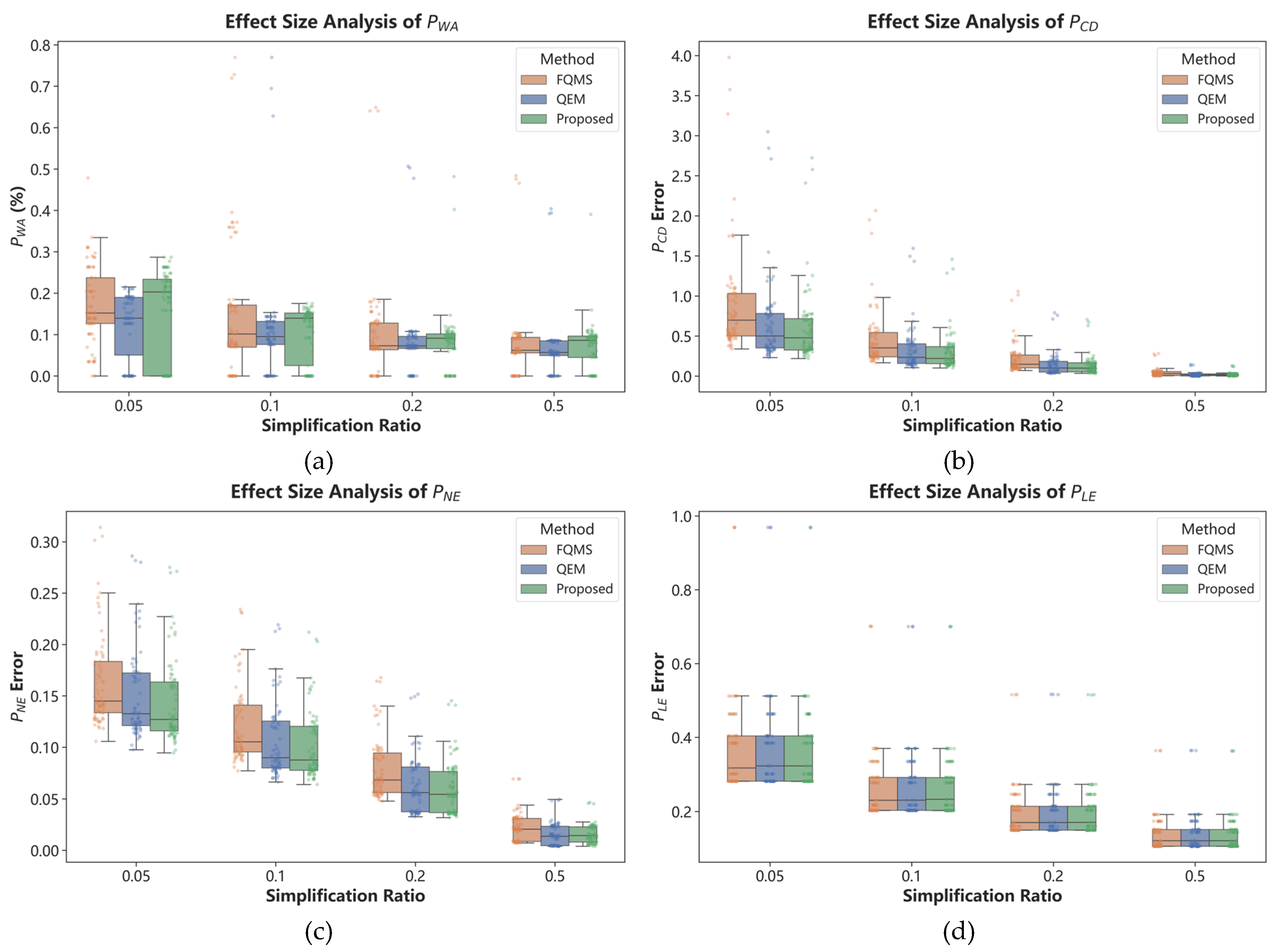

4.3.2. Win-Rates and Effect Size Analysis
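Win rates and effect sizes are typically computed from the per-shape errors of two methods on the same models. The sketch counts a win when the method's error is strictly lower, so ties count as non-wins. It uses the paired Cohen's d_z as the effect size. The paper may use a different effect-size statistic (for example, Cliff's delta), so this is one plausible choice, and the function names are hypothetical.

```python
import numpy as np

def win_rate(errors_method, errors_baseline):
    """Fraction of shapes on which the method's error is strictly lower."""
    a, b = np.asarray(errors_method), np.asarray(errors_baseline)
    return float(np.mean(a < b))

def paired_cohens_d(errors_method, errors_baseline):
    """Cohen's d_z for paired samples: mean(diff) / std(diff, ddof=1), with
    diff = baseline - method, so positive values favor the method."""
    diff = np.asarray(errors_baseline, dtype=float) - np.asarray(errors_method, dtype=float)
    return float(diff.mean() / diff.std(ddof=1))
```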

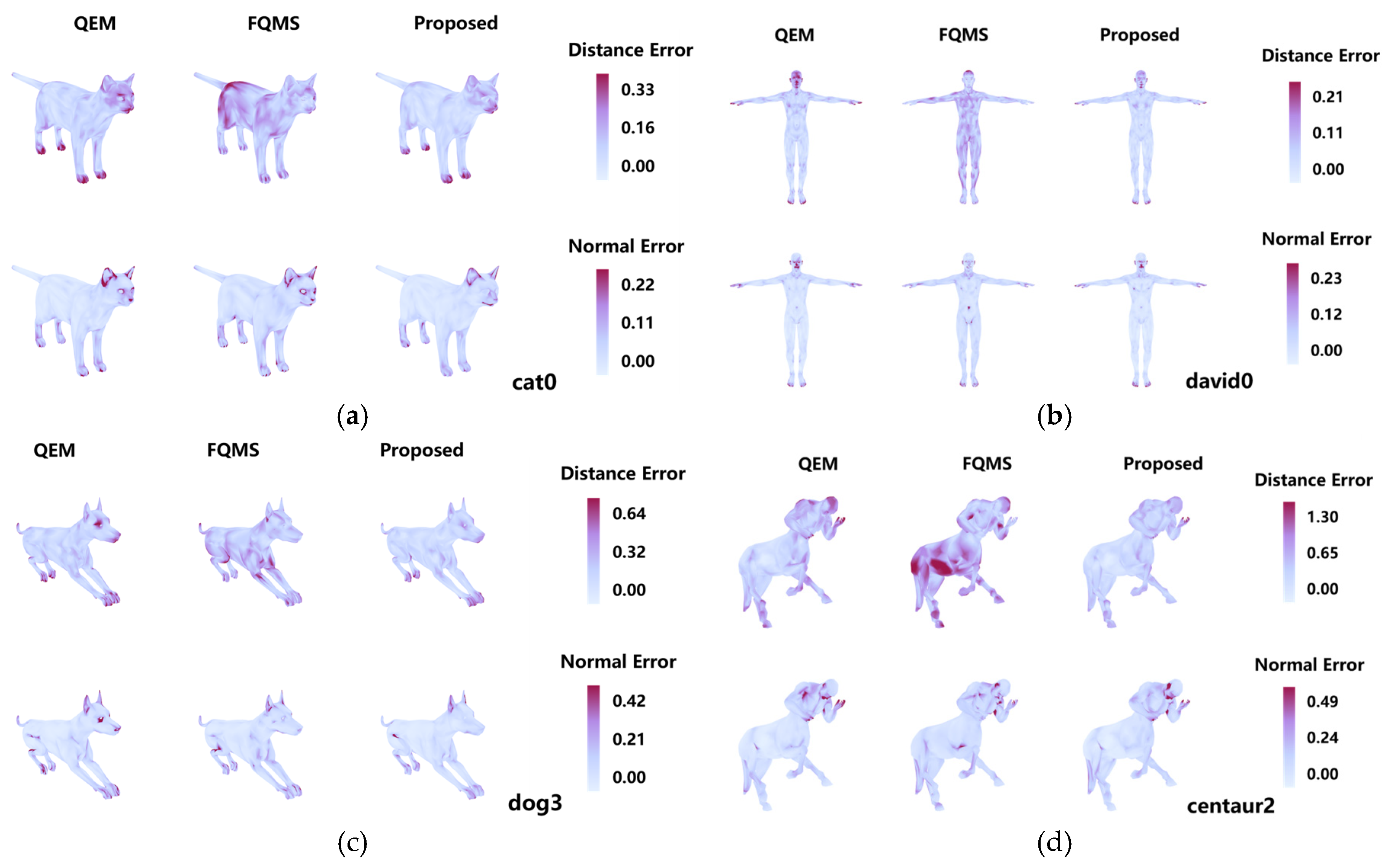

4.3.3. Error Fields

5. Discussions

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Algorithm 1: GNN-Guided Dynamic Soft Modulation QEM Simplification |
|---|
| Input: original 3D mesh; target face count; total number of staged inference steps; trained EdgeImportanceGNN parameters |
| Output: simplified 3D mesh |
| Definitions: |
| GNN inference: returns predicted edge importance scores for the graph |
| Threshold schedule: computes the dynamic relaxation threshold at normalized progress |
| Penalty function: computes the soft penalty scale given importance and threshold |
| Queue pop: removes and returns the edge tuple with the minimum cost |
| Validity check: Boolean check of manifold, normal-flip, quality, and valence constraints |
| Procedure: |
| 1: Initialize the working mesh and its face count |
| 2: Compute the per-stage geometric decay ratio |
| 3: for each stage from 1 to the total number of stages do |
| 4: // Phase 1: Staged GNN Inference |
| 5: Set the stage target face count |
| 6: // Update stage progress and dynamic scheduling threshold |
| 7: Reconstruct the multi-scale graph from the current mesh |
| 8: Extract the node feature matrix (geometric, topological, Laplacian PE) |
| 9: Predict edge importance scores with the GNN |
| 10: // Phase 2: QEM and Priority Queue Initialization |
| 11: Compute base quadric matrices for all vertices |
| 12: Initialize an empty priority queue |
| 13: Compute the stage's initial progress |
| 14: Compute the dynamic relaxation threshold |
| 15: for each valid edge do |
| 16: Solve for the optimal collapse position and compute the base cost |
| 17: Compute the modulated cost from the base cost and the soft penalty scale |
| 18: Insert the edge tuple into the priority queue |
| 19: end for |
| 20: // Phase 3: Dynamic Edge Collapse (Continuous Local Optimization) |
| 21: while the face count exceeds the stage target and the priority queue is not empty do |
| 22: Pop the minimum-cost edge tuple from the priority queue |
| 23: if the edge or its vertices are dead then continue |
| 24: if the validity check fails then continue |
| 25: Collapse the edge and update the mesh connectivity |
| 26: Update the merged vertex's quadric strictly via accumulation |
| 27: // Importance scores remain fixed within the current stage; surviving edges retain their scores |
| 28: Update the normalized stage progress |
| 29: Update the dynamic relaxation threshold |
| 30: for each affected edge in the local 1-ring neighborhood of the merged vertex do |
| 31: Solve for the new optimal position and recompute the base cost |
| 32: Recompute the final (modulated) cost |
| 33: Push the updated edge tuple into the priority queue |
| 34: end for |
| 35: end while |
| 36: end for |
| 37: return the simplified mesh |
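Lines 2, 5, and 28 of Algorithm 1 compute a per-stage geometric decay ratio, a stage target, and the normalized stage progress. The hypothetical helpers below assume that the ratio makes every stage remove the same fraction of faces, r = (target / initial)^(1/S), and that progress is measured as the fraction of the current stage's face reduction already performed.

```python
def stage_targets(initial_faces, target_faces, num_stages):
    """Hypothetical schedule: ratio r = (target / initial) ** (1 / S) and
    stage targets round(initial * r ** s) for s = 1..S."""
    r = (target_faces / initial_faces) ** (1.0 / num_stages)
    targets = [max(target_faces, round(initial_faces * r ** s))
               for s in range(1, num_stages + 1)]
    targets[-1] = target_faces  # the final stage hits the requested count exactly
    return r, targets

def stage_progress(start_faces, current_faces, stage_target):
    """Normalized progress in [0, 1] within one stage (hypothetical definition)."""
    span = start_faces - stage_target
    if span <= 0:
        return 1.0
    return min(max((start_faces - current_faces) / span, 0.0), 1.0)
```

For example, `stage_targets(100000, 5000, 4)` reduces 100,000 faces to 5,000 in four stages, each keeping about 47% of the faces of the previous one.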

| Configuration | Value |
|---|---|
| CPU | Intel(R) Core(TM) i7-10700 @ 2.90 GHz |
| GPU | NVIDIA GeForce RTX 2060 SUPER (8 GB) |
| RAM | 32 GB |
| Operating System | Windows 10 Pro 64-bit |
| DL Framework | PyTorch 2.4.0 + PyTorch Geometric 2.5.3 |
| CUDA Version | 12.1 |

| Category | Configuration Item | Value / Setting |
|---|---|---|
| Input Features | Surface normal dimensionality | 3 |
| | Structural feature dimensionality | 2 |
| | Laplacian positional encoding dimensionality | 16 |
| Network Architecture | GNN convolution operator | GCNConv |
| | Number of GNN layers | 3 |
| | Hidden feature dimensionality | 64 |
| | Normalization strategy | LayerNorm |
| | Dropout rate | 0.15 |
| Multi-scale Graph | Residual fusion weight for 2-hop neighbors | 0.5 |
| | Maximum number of far neighbors | 12 |
| Edge Decoder | Geometric edge feature dimensionality | 2 |
| | Edge MLP input feature dimension | 194 |
| Training Settings | Optimizer | Adam |
| | Initial learning rate | |
| | Training epochs | 50 |
| | Batch size | 1 |
| Loss Weights | Structural contrastive loss weight | 1.0 |
| | Geometric hinge loss weight | 0.7 |
| | Pairwise ranking loss weight | 0.25 |
| | Local smoothness regularization weight | 0.2 |

| Ratio | Method | mean | median | mean | median | mean | median | mean | median |
|---|---|---|---|---|---|---|---|---|---|
| 0.05 | QEM | 0.1234±0.0191 | 0.1428±0.0111 | 0.6632±0.1977 | 0.5144±0.1469 | 0.1494±0.0166 | 0.1379±0.0195 | 0.3761±0.0509 | 0.3329±0.0545 |
| | FQMS | 0.1668±0.0253 | 0.1573±0.0175 | 0.8936±0.2517 | 0.6923±0.1689 | 0.1630±0.0168 | 0.1517±0.0153 | 0.3758±0.0508 | 0.3317±0.0547 |
| | Proposed | 0.1622±0.0270 | 0.1982±0.0205 | 0.6043±0.1750 | 0.4772±0.1288 | 0.1431±0.0154 | 0.1323±0.0174 | 0.3759±0.0510 | 0.3327±0.0544 |
| 0.1 | QEM | 0.1081±0.0257 | 0.1008±0.0170 | 0.3283±0.1041 | 0.2515±0.0782 | 0.1049±0.0137 | 0.0936±0.0170 | 0.2715±0.0367 | 0.2399±0.0393 |
| | FQMS | 0.1488±0.0468 | 0.1178±0.0436 | 0.4575±0.1352 | 0.3445±0.0891 | 0.1193±0.0138 | 0.1091±0.0138 | 0.2714±0.0368 | 0.2397±0.0393 |
| | Proposed | 0.1053±0.0181 | 0.1326±0.0159 | 0.3020±0.0923 | 0.2365±0.0691 | 0.1011±0.0129 | 0.0905±0.0153 | 0.2714±0.0368 | 0.2402±0.0392 |
| 0.2 | QEM | 0.0810±0.0185 | 0.0766±0.0086 | 0.1390±0.0529 | 0.1013±0.0453 | 0.0620±0.0109 | 0.0532±0.0144 | 0.1999±0.0271 | 0.1766±0.0287 |
| | FQMS | 0.1032±0.0287 | 0.0876±0.0239 | 0.2174±0.0716 | 0.1634±0.0523 | 0.0783±0.0112 | 0.0690±0.0134 | 0.1999±0.0271 | 0.1765±0.0287 |
| | Proposed | 0.0825±0.0186 | 0.0880±0.0089 | 0.1294±0.0456 | 0.0970±0.0381 | 0.0598±0.0101 | 0.0514±0.0133 | 0.1999±0.0271 | 0.1766±0.0286 |
| 0.5 | QEM | 0.0641±0.0145 | 0.0562±0.0029 | 0.0200±0.0097 | 0.0128±0.0099 | 0.0149±0.0042 | 0.0115±0.0063 | 0.1412±0.0191 | 0.1249±0.0199 |
| | FQMS | 0.0759±0.0182 | 0.0718±0.0176 | 0.0409±0.0190 | 0.0273±0.0175 | 0.0220±0.0057 | 0.0175±0.0082 | 0.1411±0.0191 | 0.1249±0.0200 |
| | Proposed | 0.0791±0.0213 | 0.0838±0.0134 | 0.0210±0.0079 | 0.0166±0.0084 | 0.0154±0.0035 | 0.0130±0.0049 | 0.1411±0.0190 | 0.1248±0.0199 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).