Submitted: 23 April 2026

Posted: 24 April 2026

Abstract

The accelerating integration of artificial intelligence into societal structures risks reducing human existence to quantifiable data points, algorithmic classifications, and performative metrics, a phenomenon we term algorithmic reductionism. This paper introduces the Alrohaimi Canonical Model, a unified mathematical and cognitive framework designed to diagnose, simulate, and guide civilizational transformation while safeguarding human meaning and agency. Unlike linear economic or technological indices, the Alrohaimi Index (CT) models transformation as a non-linear, meaning-driven process:

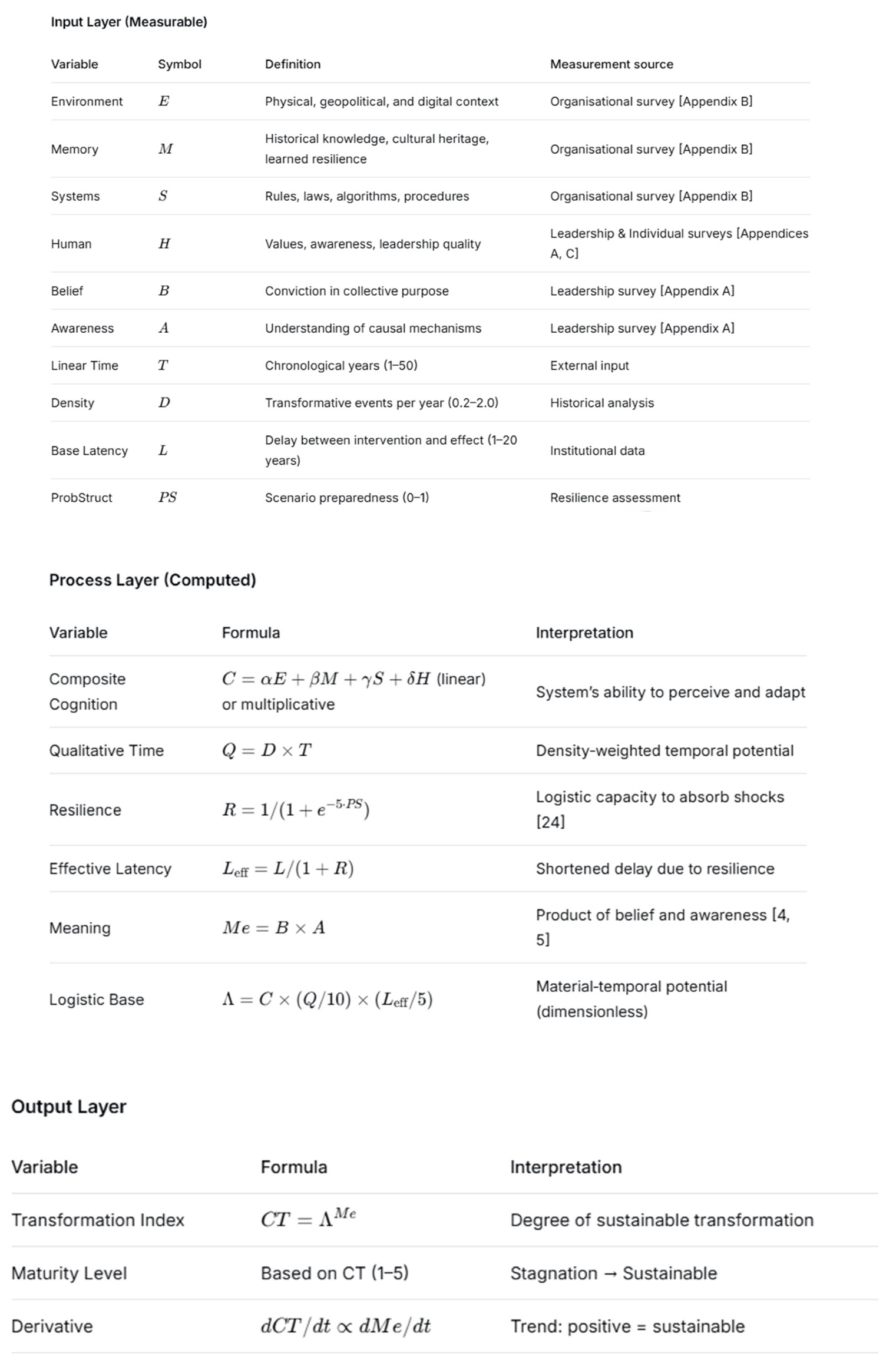

where:

· C (Composite Cognition) integrates four civilizational inputs: Environment (E), Memory (M), Systems (S), and Human (H).

· Q represents Qualitative Time (density of transformative events multiplied by linear time).

· L_eff is Effective Latency (base response time reduced by resilience R).

· Me is Meaning (Belief × Awareness), acting as an exponential amplifier of transformation.

The model operationalizes abstract philosophical concepts as measurable indicators through three validated questionnaires (Leadership, Organizational, Individual) that assess cognitive balance, awareness, and the meaning gap. It introduces four cognitive profiles (Balanced, Reductionist Efficiency, Disempowered Awareness, Superficial Stability) and automatic strategic recommendations. A real-time interactive dashboard allows users to simulate “what-if” scenarios, compare societies or periods, and export diagnostic reports. We demonstrate the model’s application to protect human integrity in the algorithmic era: by quantifying the risk of reductionism (CT_human), policymakers and organizational leaders can design targeted interventions (raising awareness, shortening institutional latency, enhancing resilience, and recentering meaning) to ensure that AI serves human flourishing rather than reducing it to statistical noise. The Alrohaimi Index offers an actionable bridge between complexity theory, Islamic civilizational thought (Ibn Khaldun), existential psychology (Frankl), and contemporary AI ethics, providing a robust decision-support tool for sustainable civilizational transformation.

Keywords:

1. Introduction

1.1. The Crisis of Algorithmic Reductionism

1.2. The Limits of Existing Metrics

1.3. The Alrohaimi Perspective

- C is Composite Cognition, integrating Environment (E), Memory (M), Systems (S), and Human (H);

- Q is Qualitative Time (density of transformative events multiplied by linear time);

- L_eff is Effective Latency, with resilience R modelled as a logistic function of scenario preparedness [24];

- Me is Meaning, the product of Belief and Awareness, acting as an exponential amplifier of transformation [23].
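For readers without access to the equation image, the definitions above can be assembled into a single expression. What follows is a reconstruction from the computation steps listed in Section 4.4, not a transcription of the original figure. The reference constants Q_ref = 10 and L_ref = 5 and the logistic steepness of 5 are taken from that section, and PS denotes scenario preparedness (ProbStruct).

```latex
\begin{aligned}
Q &= D \times T, \qquad
R = \frac{1}{1 + e^{-5\,PS}}, \qquad
L_{\mathrm{eff}} = \frac{L}{1 + R}, \qquad
Me = B \times A, \\[4pt]
\Lambda &= C \times \frac{Q}{Q_{\mathrm{ref}}} \times \frac{L_{\mathrm{eff}}}{L_{\mathrm{ref}}}, \qquad
CT = \Lambda^{Me}.
\end{aligned}
```

Written this way, Meaning enters only as the exponent, which is what makes it an amplifier rather than an additive term.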

1.4. Research Objectives and Contribution

- Mathematical Formalisation: To present the complete derivation and dynamics of the Alrohaimi Index, including its treatment of qualitative time, effective latency, and meaning as an exponent.

- Operationalisation: To describe the measurement instruments and the interactive dashboard that translates survey responses into real-time calculations, maturity levels, and scenario simulations.

- Application to Algorithmic Reductionism: To demonstrate how the model can diagnose the risk of AI-driven reductionism and guide interventions that protect human agency, meaning, and sustainable transformation.

1.5. Structure of the Paper

2. Conceptual Development

2.1. The Four Pillars of Composite Cognition

2.2. Composite Cognition (C)

2.3. Meaning (Me) as the Exponential Driver

- Belief (B) is the degree of conviction that a particular value, goal, or vision is true and worth pursuing. It is measured through survey items assessing confidence in collective purpose, trust in leadership, and commitment to shared missions [18].

- Awareness (A) is the clarity of understanding of causal mechanisms, systemic interdependencies, and the likely consequences of actions. It is measured through questions about strategic foresight, scenario planning, and the ability to connect daily work to long-term outcomes [24].

- High B, low A → blind zealotry (unsustainable enthusiasm)

- Low B, high A → cynical detachment (paralysis by analysis)

- Low B, low A → apathy (no transformation)

- High B, high A → authentic meaning (sustainable transformation)
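The four combinations above can be expressed as a small classifier. This is a minimal sketch, assuming B and A are both scored on a 0 to 1 scale; the function name `meaning_quadrant` and the 0.5 cut-off between "low" and "high" are hypothetical choices for illustration, not values given in the paper.

```python
def meaning_quadrant(belief: float, awareness: float, threshold: float = 0.5) -> str:
    """Map Belief (B) and Awareness (A) to one of the four combinations.

    Both scores are assumed to lie in [0, 1]; the 0.5 threshold is hypothetical.
    """
    for name, value in (("belief", belief), ("awareness", awareness)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")

    high_b = belief >= threshold
    high_a = awareness >= threshold
    if high_b and high_a:
        return "authentic meaning"
    if high_b:
        return "blind zealotry"
    if high_a:
        return "cynical detachment"
    return "apathy"


def meaning(belief: float, awareness: float) -> float:
    """Me is the product of Belief and Awareness."""
    return belief * awareness
```

Because Me is a product, a high score on one factor cannot compensate for a near-zero score on the other, which is why the two mixed quadrants are treated as distinct failure modes.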

2.4. Qualitative Time (Q) and Effective Latency (L_eff)

2.5. The Unified Transformation Equation

- CT = 1: neutral (no net transformation)

- CT < 1: regressive or insufficient transformation

- CT > 1: progressive transformation, with higher values indicating deeper and more sustainable change
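The three-way reading of the index can be sketched as a lookup around the neutral point. This assumes CT = 1 is the neutral value, as Section 6.3 describes it; the function name `interpret_ct` and the tolerance band around 1 are hypothetical additions so that floating-point noise is not read as change.

```python
import math


def interpret_ct(ct: float, tolerance: float = 0.05) -> str:
    """Classify a CT value relative to the neutral point CT = 1.

    The tolerance band is an illustrative assumption, not part of the model.
    """
    if not math.isfinite(ct) or ct < 0:
        raise ValueError(f"CT must be a finite, non-negative number, got {ct}")
    if abs(ct - 1.0) <= tolerance:
        return "neutral"
    return "progressive" if ct > 1.0 else "regressive"
```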

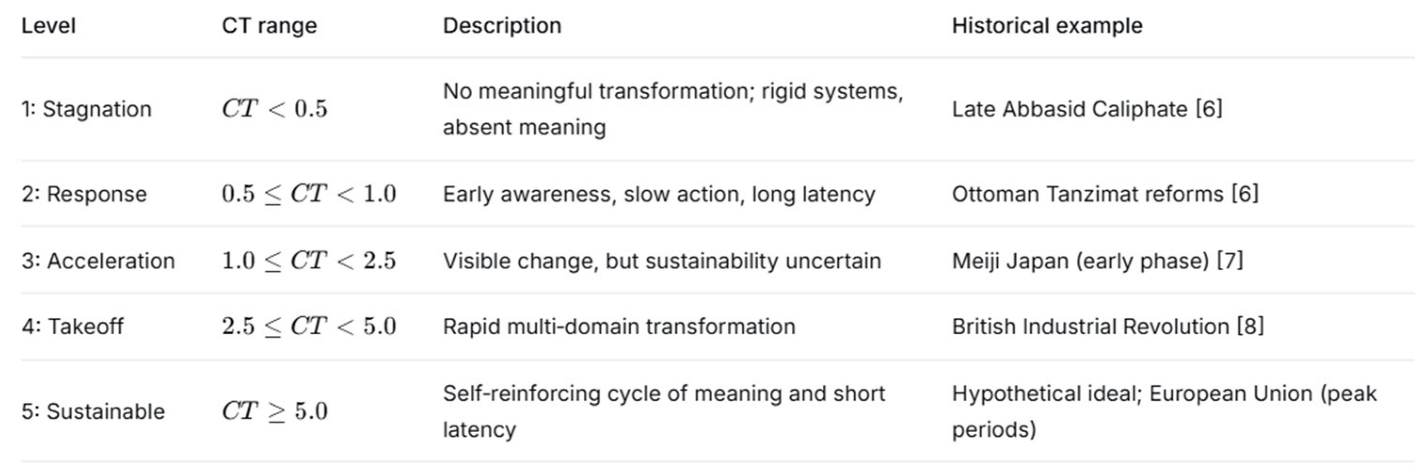

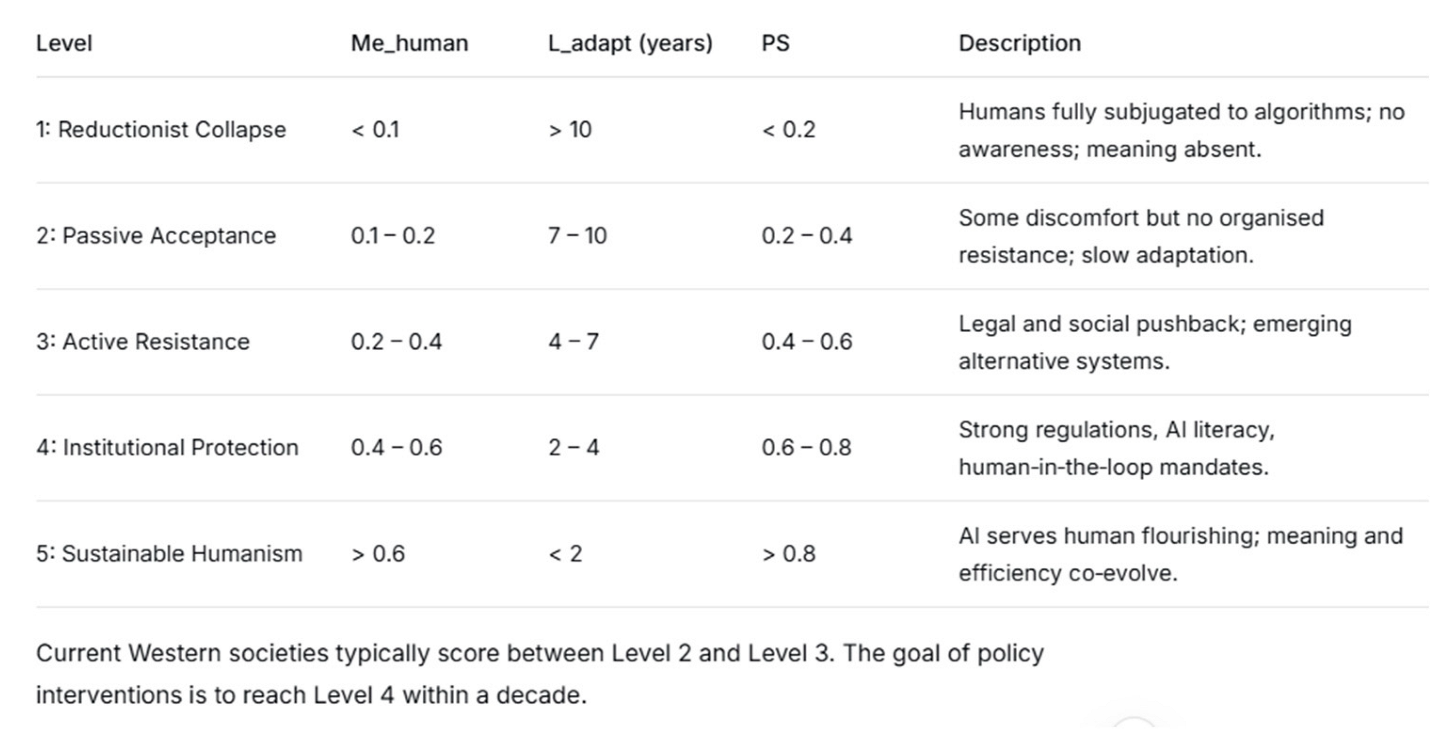

2.6. The Five Maturity Levels

2.7. Ethical Constraints and Guardrails

- Awareness before Decision (A ≥ 0.3): The model refuses to compute if Awareness falls below 0.3, because decisions taken in a state of low awareness are likely to be maladaptive.

- Meaning before Achievement (Me ≥ 0.2): No transformation is considered sustainable if Meaning is below 0.2, regardless of material performance.
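The two guardrails behave differently: the first blocks computation, the second only flags the result. A minimal sketch of that distinction follows, using the thresholds stated above; the function name `check_guardrails` and the return shape are assumptions made for illustration.

```python
AWARENESS_MIN = 0.3  # "Awareness before Decision"
MEANING_MIN = 0.2    # "Meaning before Achievement"


def check_guardrails(belief: float, awareness: float) -> tuple[bool, list[str]]:
    """Return (can_compute, warnings) for a given Belief and Awareness.

    can_compute is False only when Awareness is below its threshold;
    a low Meaning (B x A) produces a warning but does not block computation.
    """
    warnings: list[str] = []
    can_compute = True

    if awareness < AWARENESS_MIN:
        can_compute = False
        warnings.append("Awareness before Decision: A < 0.3, computation refused")

    if belief * awareness < MEANING_MIN:
        warnings.append("Meaning before Achievement: Me < 0.2, not sustainable")

    return can_compute, warnings
```

For example, B = 0.4 and A = 0.4 passes the awareness check but still triggers the meaning warning, since Me = 0.16.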

3. Conceptual Framework

3.1. Overview of the Alrohaimi Framework

3.2. The Core Variables and Their Relationships

3.3. The Ethical Constraint (Human Before System)

3.4. Non-Linearity and Threshold Effects

- Sigmoid resilience (R): Improvements in scenario preparedness yield diminishing returns after a threshold, reflecting real-world institutional constraints [24].
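The diminishing returns can be seen by evaluating the logistic form given in Section 4.4, R = 1/(1+e^{-5·PS}), at evenly spaced levels of preparedness. The sketch below assumes PS is scored on a 0 to 1 scale, which the paper does not state explicitly; the function name `resilience` is hypothetical.

```python
import math


def resilience(ps: float, steepness: float = 5.0) -> float:
    """Logistic resilience R = 1 / (1 + exp(-steepness * PS))."""
    return 1.0 / (1.0 + math.exp(-steepness * ps))


# Equal steps in preparedness produce progressively smaller gains in R.
levels = (0.0, 0.25, 0.5, 0.75, 1.0)
for ps in levels:
    print(f"PS={ps:.2f}  R={resilience(ps):.3f}")
```

On this assumed scale R starts at 0.5 when PS = 0 and approaches 1 as PS grows, so each further increment of preparedness buys less additional resilience than the one before.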

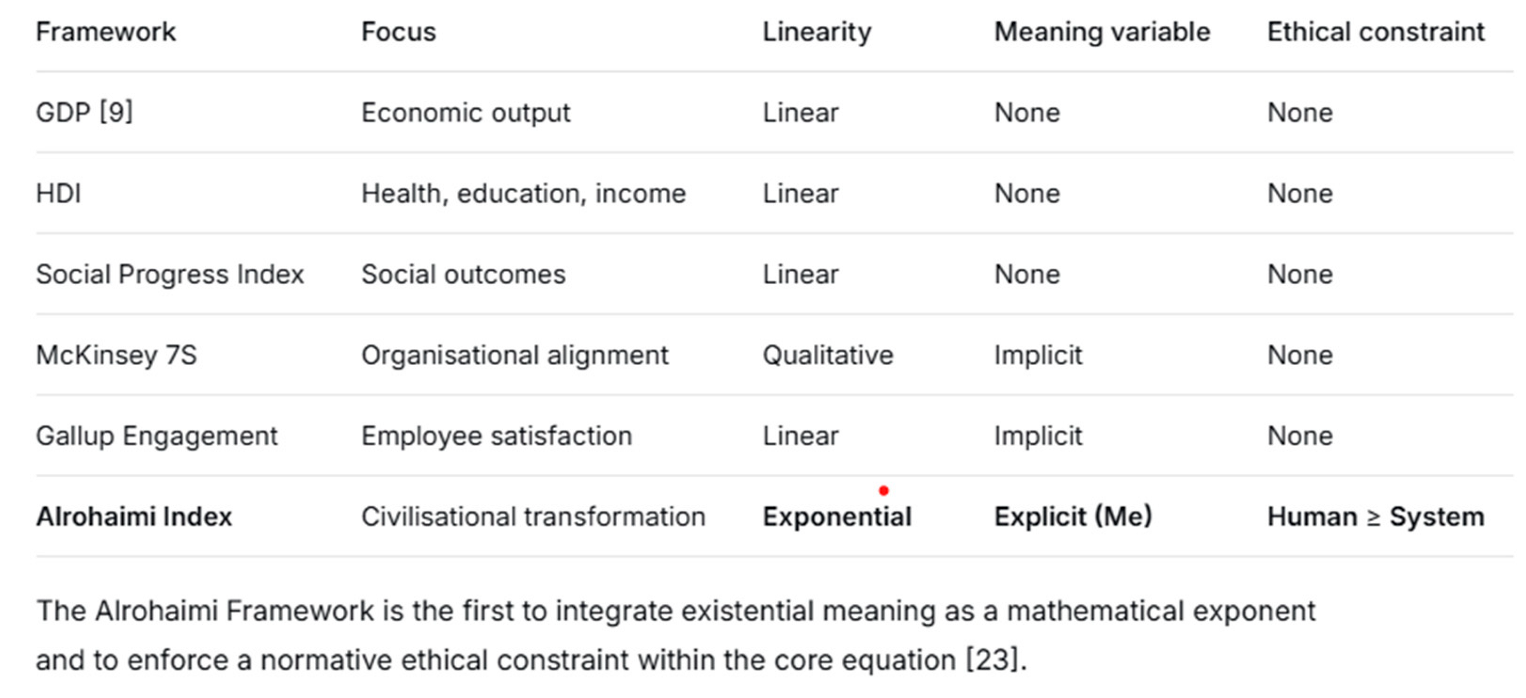

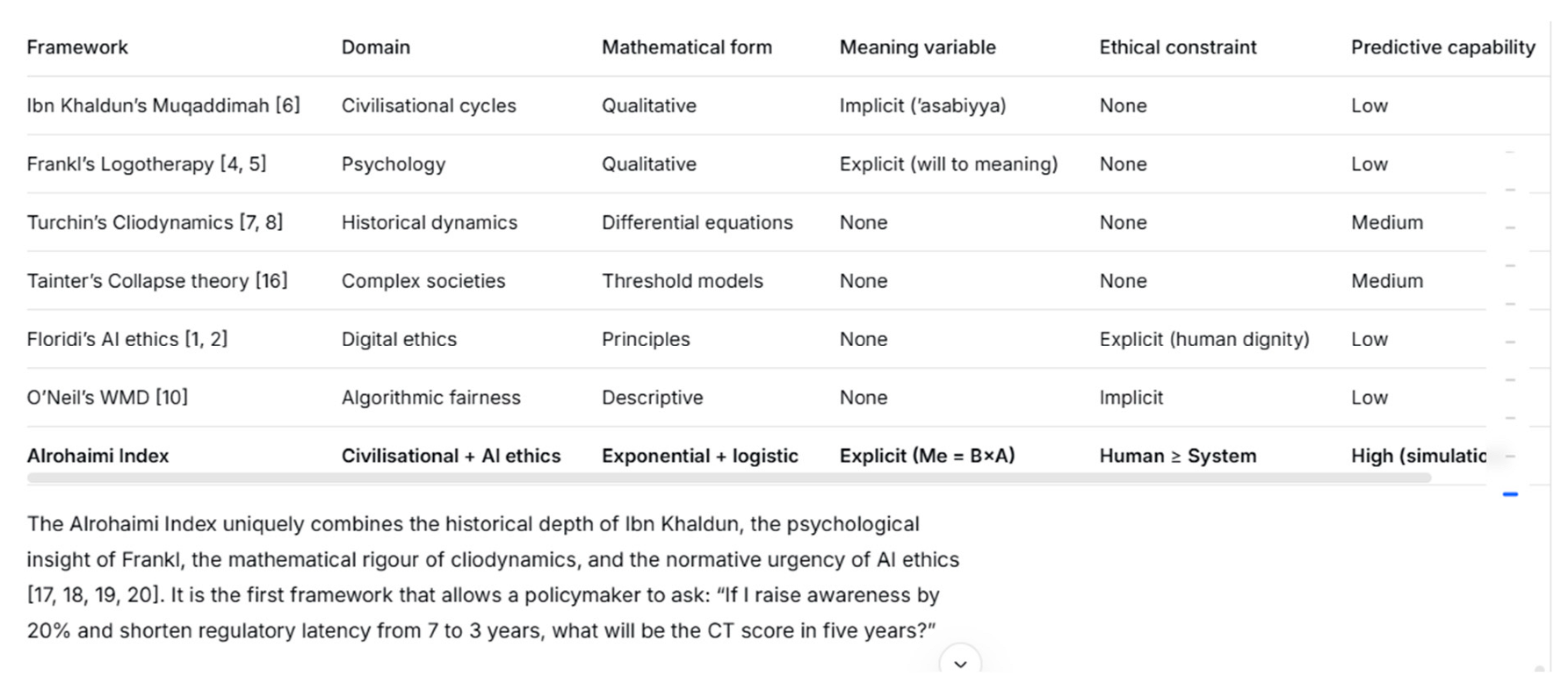

3.5. Comparison with Existing Frameworks

3.6. Summary of the Conceptual Framework

- A measurable set of inputs (E, M, S, H, B, A, T, D, L, PS) that can be collected via surveys and institutional data.

- A transparent mathematical engine (linear or multiplicative C, Q, R, L_eff, Me) that produces a dimensionless CT.

- A diagnostic output (maturity level, derivative, pattern classification) that translates numbers into actionable insights.

- Ethical guardrails that prevent the model from endorsing technically efficient but existentially hollow configurations.

4. Operationalisation: Questionnaires and Interactive Dashboard

4.1. From Theory to Measurement

- Leadership Questionnaire (Appendix A) – captures the cognitive balance, awareness, and meaning gap of an individual leader.

- Organisational Questionnaire (Appendix B) – measures collective perceptions of systems, memory, environment, and meaning gap at the institutional level.

- Individual Questionnaire (Appendix C) – assesses the general workforce’s cognitive balance, awareness, and sense of meaning.

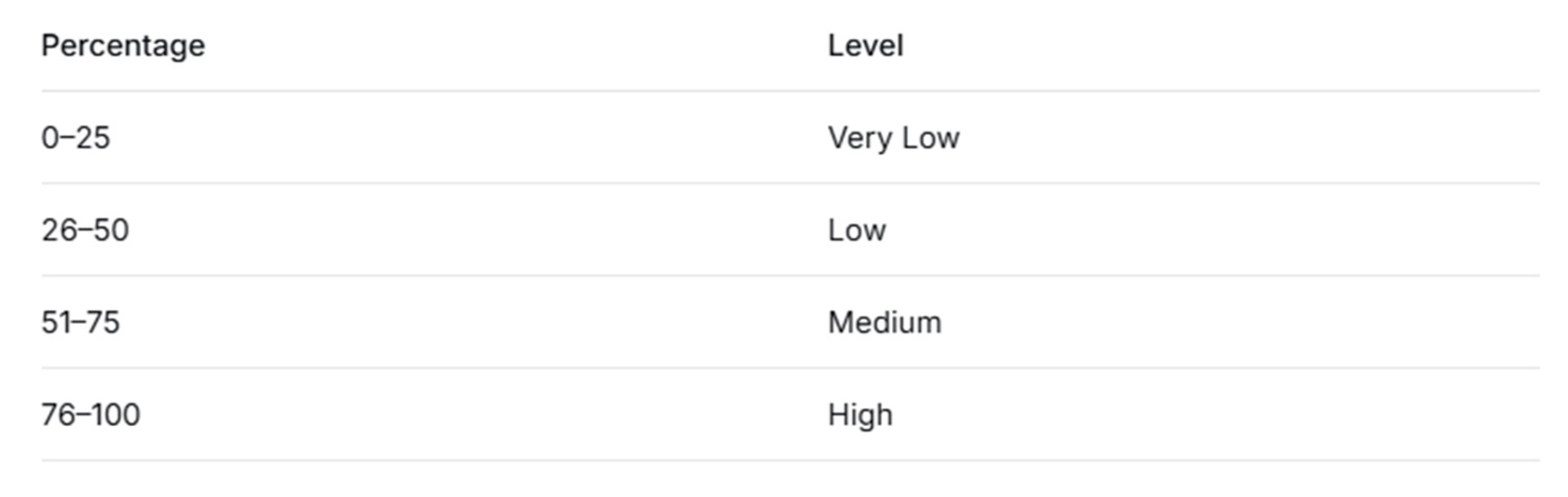

4.2. Scoring Model

4.3. Cognitive Pattern Classification

4.4. Interactive Dashboard Architecture

- Sliders for each variable: E, M, S, H, B, A, T, D, L, ProbStruct, γ, δ.

- Real-time validation of ethical constraints (e.g., δ ≥ γ, A ≥ 0.3, Me ≥ 0.2).

- Confidence interval adjustment (±0–20%) to model measurement uncertainty [24].

- Computes C (linear or optional multiplicative).

- Computes Q = D × T, normalised by Q_ref = 10.

- Computes R = 1/(1+e^{-5·PS}).

- Computes L_eff = L/(1+R), normalised by L_ref = 5.

- Computes Λ = C × (Q/10) × (L_eff/5).

- Computes CT = Λ^{B×A}.

- Computes approximate derivative dCT/dt by incrementing T by 0.5 years.

- Gauge displaying current CT value.

- Maturity badge (Stagnation, Response, Acceleration, Takeoff, Sustainable) with colour coding.

- Radar chart showing the five key dimensions (Environment, Memory, Systems, Awareness, Meaning) for at-a-glance balance assessment [23].

- Sustainability trend line showing CT over a short simulation horizon.

- Warning panel for any violated guardrails.

- Comparison mode: save two states (e.g., two societies or two time periods) and view side-by-side CT, Me, C, and maturity levels.

- Multi-step simulation: run a 10-step forward projection with optional automatic policies (boost awareness, fix systems, shorten latency).

- Export to Excel/PDF: generate downloadable reports containing all inputs, outputs, and recommendations.
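The computation steps listed above can be sketched in a few functions. This is an illustration under stated assumptions, not the dashboard's actual code: the class and function names are hypothetical, the linear form of C is taken to be an equally weighted sum because the paper's weights are not reproduced here, and the formulas for Q, R, L_eff, Λ and CT are implemented exactly as listed.

```python
import math
from dataclasses import dataclass, replace

Q_REF = 10.0  # reference value for Qualitative Time
L_REF = 5.0   # reference value for Effective Latency
A_MIN = 0.3   # "Awareness before Decision" guardrail


@dataclass(frozen=True)
class Inputs:
    E: float   # Environment
    M: float   # Memory
    S: float   # Systems
    H: float   # Human
    B: float   # Belief
    A: float   # Awareness
    T: float   # linear time in years
    D: float   # density of transformative events
    L: float   # base latency
    PS: float  # scenario preparedness (ProbStruct)


def composite_cognition(x: Inputs, weights=(0.25, 0.25, 0.25, 0.25)) -> float:
    """Linear C as a weighted sum of E, M, S, H (equal weights are an assumption)."""
    w_e, w_m, w_s, w_h = weights
    return w_e * x.E + w_m * x.M + w_s * x.S + w_h * x.H


def compute_ct(x: Inputs) -> float:
    """Follow the listed steps: C, Q, R, L_eff, Lambda, then CT = Lambda ** (B * A)."""
    if x.A < A_MIN:
        raise ValueError("guardrail violated: A < 0.3, CT is not computed")
    c = composite_cognition(x)
    q = x.D * x.T
    r = 1.0 / (1.0 + math.exp(-5.0 * x.PS))
    l_eff = x.L / (1.0 + r)
    lam = c * (q / Q_REF) * (l_eff / L_REF)
    if lam < 0:
        raise ValueError("Lambda must be non-negative for a real-valued CT")
    return lam ** (x.B * x.A)


def dct_dt(x: Inputs, step: float = 0.5) -> float:
    """Forward-difference estimate of dCT/dt, incrementing T by `step` years."""
    return (compute_ct(replace(x, T=x.T + step)) - compute_ct(x)) / step


# Hypothetical input chosen so that every normalised factor equals 1.
example = Inputs(E=1, M=1, S=1, H=1, B=0.8, A=0.5, T=5, D=2, L=7.5, PS=0)
print(compute_ct(example), dct_dt(example))
```

The example input is constructed so that Λ = 1, which gives CT = 1 whatever the value of Me. Keeping the inputs in an immutable dataclass makes the comparison mode and the derivative straightforward, since each saved state or time step is just a modified copy.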

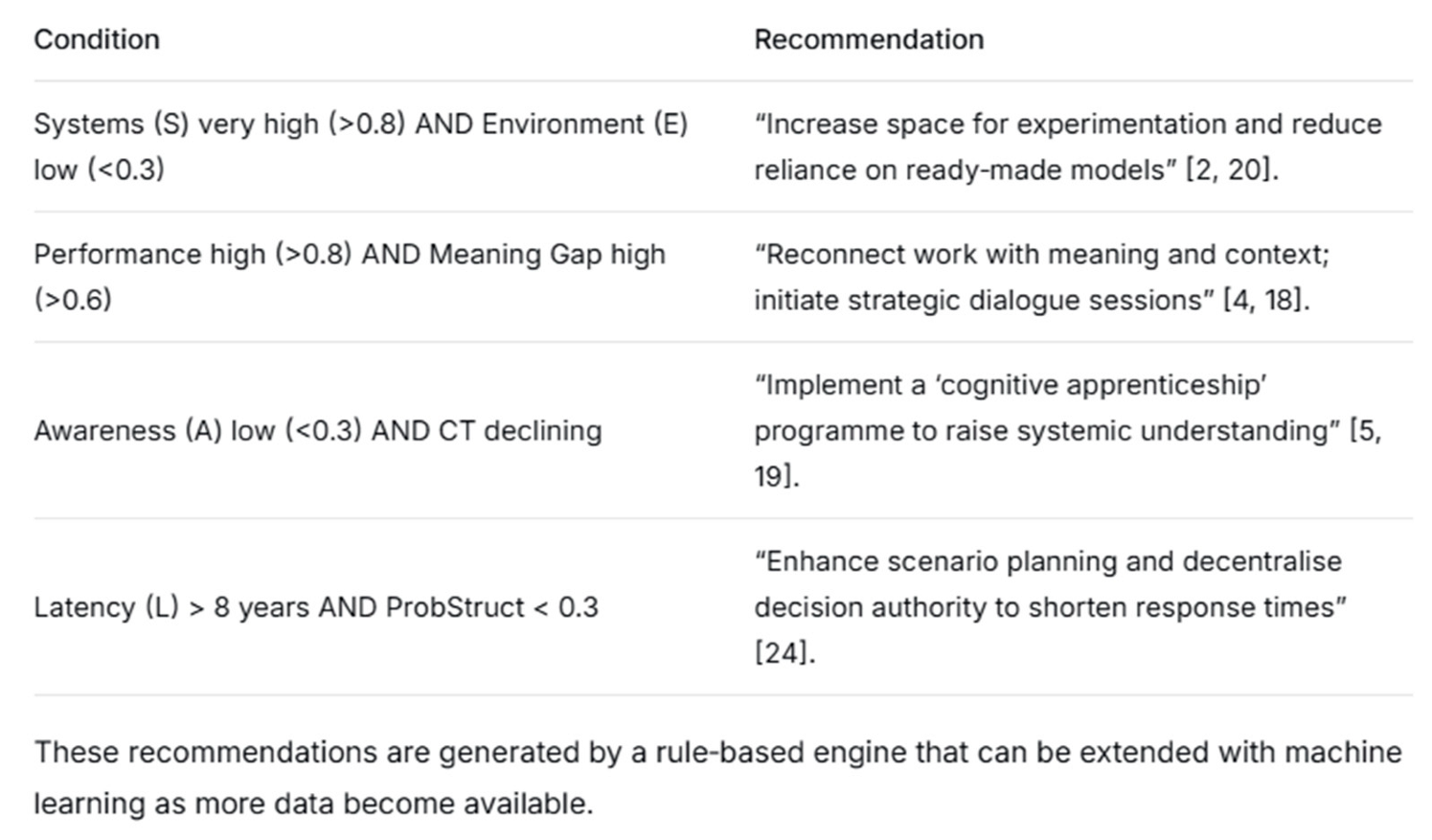

4.5. Automatic Strategic Recommendations

4.6. Validation Strategy

- Content validity – Items reviewed by a panel of 10 experts in organisational psychology, AI ethics, and civilisational studies.

- Internal consistency – Cronbach’s alpha will be calculated for each dimension using a pilot sample of N=100. Target α > 0.80 for each subscale.

- Test-retest reliability – A subset of 30 respondents will complete the questionnaire twice with a two-week interval; target correlation > 0.85.

- Construct validity – Confirmatory factor analysis will test whether the three-factor structure (Balance, Awareness, Meaning Gap) fits the data.
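The internal-consistency check can be illustrated with the standard formula for Cronbach's alpha, α = k/(k−1) · (1 − Σ item variances / variance of total scores). The sketch below uses only the Python standard library; the function name and the toy response data are hypothetical, and real pilot data would replace them.

```python
from statistics import variance


def cronbach_alpha(responses: list[list[float]]) -> float:
    """Cronbach's alpha for one subscale.

    `responses` holds one row per respondent, each row containing that
    respondent's scores on the k items of the subscale.
    """
    if len(responses) < 2:
        raise ValueError("need at least two respondents")
    k = len(responses[0])
    if k < 2:
        raise ValueError("need at least two items")
    if any(len(row) != k for row in responses):
        raise ValueError("every respondent must answer all k items")

    # Sample variance of each item across respondents.
    item_vars = [variance([row[i] for row in responses]) for i in range(k)]
    # Sample variance of each respondent's total score.
    total_var = variance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores have zero variance")

    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Toy data: four respondents, two items.
toy = [[1, 2], [2, 1], [3, 4], [4, 3]]
print(round(cronbach_alpha(toy), 2))
```

With the pilot sample, the same function would be run once per dimension and each result compared against the α > 0.80 target.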

4.7. Limitations of Operationalisation

- Self-report bias – Responses may reflect social desirability rather than true perception. This is mitigated by anonymity and reverse-scored items.

- Cultural specificity – The meaning of “meaning” may vary across cultures [15]. Cross-cultural adaptation is needed for global deployment.

- Temporal granularity – The dashboard assumes linear time and discrete density; capturing continuous event streams would require real-time data integration.

5. Application to Algorithmic Reductionism

5.1. Defining the Problem: AI as a Reductionist Force

5.2. The Alrohaimi Index for Human Protection (CT_human)

- C = Composite Cognition specifically weighted towards human-centric dimensions.

- Q = Qualitative Time in the algorithmic era. Given the current density of AI-driven changes (e.g., emergence of LLMs, autonomous systems, biometric surveillance), we estimate D = 2 (twice the baseline). Thus Q = 2 × T. For a five-year planning horizon, Q = 10, which normalises to Q/Q_ref = 1.

- Me = Meaning specifically related to the preservation of human agency. It is the product of:

  - B: belief that human judgement and experience cannot be fully replaced by algorithms.

  - A: awareness of how AI systems operate, their limitations, and their potential to erode meaning.

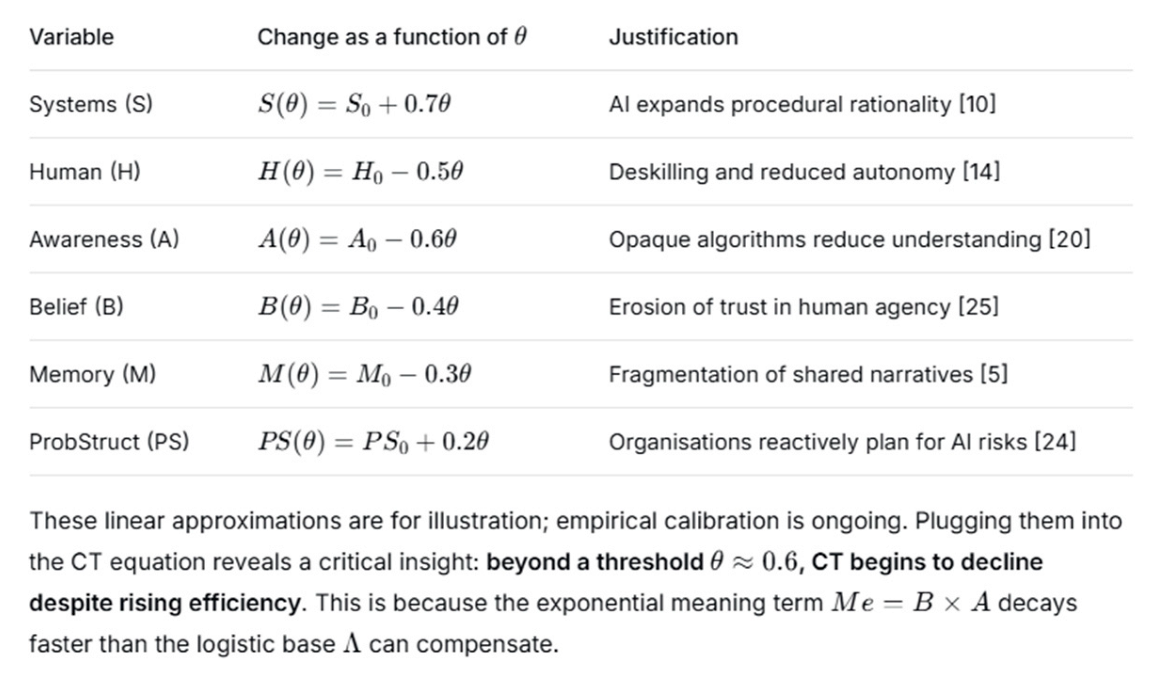

5.3. Modelling the Impact of Algorithmic Integration

5.4. Policy Interventions as Model Parameters

- Raising Awareness and Belief (Increase Me)

- Shortening Institutional Latency (Reduce L)

- Strengthening Resilience (Increase R via ProbStruct)

5.5. Simulating a Real-World Case: Automated Hiring Platform

5.6. Maturity Matrix for Human Protection

5.7. Why the Alrohaimi Model Is Uniquely Suited

- Quantifying the invisible cost of optimisation – the decline in Me and rise in Meaning Gap that precede manifest crises.

- Enabling what-if simulations of regulatory and cultural interventions before implementation.

- Providing a common language for technologists, policymakers, and civil society – the CT score and maturity levels.

5.8. Limitations and Future Work

- Partnering with 10–15 companies to collect baseline and follow-up questionnaire data over 3 years.

- Developing a lightweight “AI risk thermometer” based on a subset of the Alrohaimi variables.

- Integrating real-time data from algorithmic logs to complement self-reports.

5.9. Summary of Section 5

6. Discussion

6.1. Summary of Theoretical Contributions

6.2. Comparison with Existing Frameworks

6.3. Implications for AI Governance and Organisational Leadership

- Measurement precedes intervention. Before demanding “ethical AI”, organisations must diagnose their current cognitive balance. The questionnaires (Appendices A–C) provide a low-cost, scalable diagnostic tool.

- Awareness is the binding constraint. In all our simulations, raising awareness (A) yielded larger and more sustainable CT gains than raising systems (S) or reducing latency alone. This aligns with empirical findings that algorithmic literacy and transparency are prerequisites for meaningful public participation [2,12].

- The “Reductionist Collapse” is preceded by a silent phase. The model’s derivation shows that when Me falls below 0.2, CT artificially stabilises near 1 (neutral). This masks an underlying erosion that, if unaddressed, leads to sudden system failure – consistent with Tainter’s observation that complex societies often collapse when they appear most stable [16].
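The stabilisation near 1 follows directly from the exponent form CT = Λ^{Me} given in Section 4.4. The derivation below is a worked illustration; the range assumed for Λ is hypothetical and chosen only to show the size of the effect.

```latex
\ln CT = Me \cdot \ln \Lambda
\quad\Longrightarrow\quad
\lim_{Me \to 0} CT = 1 \quad \text{for any fixed } \Lambda > 0 .

\text{With } Me \le 0.2 \text{ and } 0.1 \le \Lambda \le 10: \qquad
10^{-0.2} \approx 0.63 \;\le\; CT \;\le\; 10^{0.2} \approx 1.58 .
```

A hundredfold spread in the underlying Λ is thus compressed into a band of roughly 0.63 to 1.58 around the neutral value, which is why a low-meaning configuration can look stable while its inputs deteriorate.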

6.4. Methodological Limitations and Mitigations

6.5. Pathways for Empirical Validation

- Historical retrospective validation (2025–2026): Apply the model to 20 historical cases (e.g., Roman Empire decline, Abbasid golden age, British Industrial Revolution, Soviet collapse) to test whether computed CT trajectories match recorded outcomes. Data for E, M, S, H will be extracted from primary and secondary historical sources [6,16,22].

- Organisational longitudinal study (2026–2028): Recruit 30 organisations across three sectors (technology, manufacturing, public administration). Administer the questionnaires at baseline, 12, 24, and 36 months. Correlate CT changes with objective performance indicators (employee turnover, innovation rate, regulatory compliance) [11,21].

- AI-integration natural experiment (2026–2028): Partner with two large corporations planning to deploy automated hiring systems. Collect baseline data, then monitor CT_human at quarterly intervals. One corporation will implement the recommended interventions (awareness training, right-to-explain, scenario planning); the other serves as control. Compare trajectories [3,10].

- Cross-cultural calibration study (2027): Administer the questionnaires to 500 participants each in Egypt, India, Brazil, and Germany. Use confirmatory factor analysis to test measurement invariance. Adjust reference values and item weights accordingly [15].

6.6. Ethical Considerations in Deployment

- Informed consent: Participants must understand that their responses will be aggregated and that individual identifiability will be removed.

- No punitive use: The model’s outputs (e.g., a leader being classified as “Reductionist Efficiency Pattern”) should be used for coaching, not for sanction or dismissal.

- Transparency of algorithm: The entire mathematical engine is open-source and fully documented, preventing “black box” decision-making.

- Right to appeal: Any automated recommendation generated by the dashboard must be reviewable by a human expert.

7. Conclusions

7.1. Restatement of Contributions

7.2. Answering the Research Questions

7.3. Practical Recommendations for Stakeholders

- Adopt the Alrohaimi Index as a complementary metric to GDP and HDI. Mandate regular cognitive balance assessments for public sector organisations.

- Enact “right to explanation” laws to raise awareness (A) [20]. Establish agile regulatory sandboxes to shorten adaptation latency (L_adapt).

- Fund scenario planning units (increase ProbStruct) to enhance resilience against AI-induced shocks [24].

- Use the Alrohaimi dashboard as a quarterly “cognitive health check”. Track not only CT but also the derivative dCT/dt.

- Invest in AI literacy programmes for all employees – not just technical staff – to raise awareness [2].

- Design algorithms with “meaning-aware” interfaces – for example, providing contextual explanations that help users connect algorithmic outputs to their values [19].

- Include human-in-the-loop overrides for critical decisions, preserving agency [12].

- Share anonymised usage data with researchers to refine the Alrohaimi model’s functional forms.

- Conduct the empirical validation studies outlined in Section 6.5.

- Develop culturally adapted versions of the questionnaires [15].

- Extend the model to include network effects (e.g., how cognitive balance spreads through social ties) [25].

7.4. Future Research Directions

7.5. Concluding Remarks

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Acknowledgments

Conflicts of Interest

References

1. Mayer, R.C.; Davis, J.H.; Schoorman, F.D. An integrative model of organizational trust. Acad. Manag. Rev. 1995, *20*, 709–734.

2. Dietvorst, B.J.; Simmons, J.P.; Massey, C. Algorithm aversion: People erroneously avoid algorithms after seeing them err. J. Exp. Psychol. Gen. 2015, *144*, 114–126.

3. Logg, J.M.; Minson, J.A.; Moore, D.A. Algorithm appreciation: People prefer algorithmic to human judgment. Organ. Behav. Hum. Decis. Process. 2019, *151*, 90–103.

4. Glikson, E.; Woolley, A.W. Human trust in artificial intelligence: Review of empirical research. Acad. Manag. Ann. 2020, *14*, 627–660.

5. Raisch, S.; Krakowski, S. Artificial intelligence and management: The automation–augmentation paradox. Acad. Manag. Rev. 2021, *46*, 192–210.

6. Jussupow, E.; Spohrer, K.; Heinzl, A.; Barrot, C. Augmenting medical diagnosis decisions? An investigation into physicians’ decision-making process with artificial intelligence. Inf. Syst. Res. 2021, *32*, 713–735.

7. Buçinca, Z.; Malaya, M.B.; Gajos, K.Z. To trust or to think: Cognitive forcing functions can reduce overreliance on AI. Proceedings of the CHI Conference on Human Factors in Computing Systems 2021, Article 139.

8. Bansal, G.; Wu, T.; Zhou, J.; Fok, R.; Nushi, B.; Kamar, E.; Weld, D.S.; Horvitz, E. Does the whole exceed its parts? The effect of AI explanations on complementary team performance. Proceedings of the CHI Conference on Human Factors in Computing Systems 2021, Article 81.

9. Köbis, N.; Bonnefon, J.-F.; Rahwan, I. Bad machines corrupt good morals. Nat. Hum. Behav. 2021, *5*, 679–685.

10. Burton, J.W.; Stein, M.-K.; Jensen, T.B. A systematic review of algorithm aversion. J. Behav. Decis. Mak. 2020, *33*, 220–239.

11. Siau, K.; Wang, W. Artificial intelligence (AI) ethics: Ethics of AI and ethical AI. J. Database Manag. 2020, *31*, 74–87.

12. Schmutz, J.B.; Outland, N.; Kerstan, S.; Georganta, E.; Ulfert, A.-S. AI-teaming: Redefining collaboration in the digital era. Curr. Opin. Psychol. 2024, *58*, 101837.

13. Woolley, A.W.; Gupta, P. Understanding collective intelligence: Investigating the role of collective memory, attention, and reasoning processes. Perspect. Psychol. Sci. 2024, *19*, 344–354.

14. van Knippenberg, D.; Pearce, C.L.; van Ginkel, W.P. Shared leadership–vertical leadership dynamics in teams. Group Organ. Manag. 2025, *50*, 44–67.

15. De Vincenzo, F.; Curșeu, P.L.; Chirilă, M. Collective forms of leadership and team cognition in work teams: A systematic and critical review. Acta Psychol. 2025, *259*, 105403.

16. Abson, E.; Schofield, P.; Kennell, J. Making shared leadership work: The importance of trust in project-based organisations. Int. J. Proj. Manag. 2024, *42*, 102567.

17. Ling, T.C.; Choong, Y.O.; Ng, L.P.; Lau, T.C. Beyond fairness: Exploring organizational citizenship behavior through the lens of self-efficacy and trust in principals. Humanit. Soc. Sci. Commun. 2025, *12*, 288.

18. El-Ashry, A.M.; Abdo, B.M.E.; Khedr, M.A.; El-Sayed, M.M.; Abdelhay, I.S.; Zeid, M.G.A. Mediating effect of psychological safety on the relationship between inclusive leadership and nurses’ absenteeism. BMC Nurs. 2025, *24*, 826.

19. Mohase, K.; Donald, F.; Israel, N. Inclusive leadership, psychological safety, and employee voice in remote and hybrid work employees. South Afr. J. Psychol. 2025, *55*, 1–14.

20. Wang, L.; Duan, X.; Wang, S.; Zhang, W. Generational diversity and team innovation: The roles of conflict and shared leadership. Front. Psychol. 2024, *15*, 1501633.

21. Maitlis, S.; Christianson, M. Sensemaking in organizations: Taking stock and moving forward. Acad. Manag. Ann. 2014, *8*, 57–125.

22. Floridi, L.; Cowls, J. A unified framework of five principles for AI in society. Harv. Data Sci. Rev. 2019, *1*(1).

23. Frankl, V.E. Man’s Search for Meaning. Beacon Press: Boston, 1959. (No DOI; classic work).

24. Ibn Khaldun. The Muqaddimah: An Introduction to History. Translated by F. Rosenthal. Princeton University Press: Princeton, 1969 (original work published 1377). (No DOI; historical text).

25. Turchin, P. Ultrasociety: How 10,000 Years of War Made Humans the Greatest Cooperators on Earth. Beresta Books: Chaplin, 2016. (No DOI; academic monograph).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).