Figure 1.

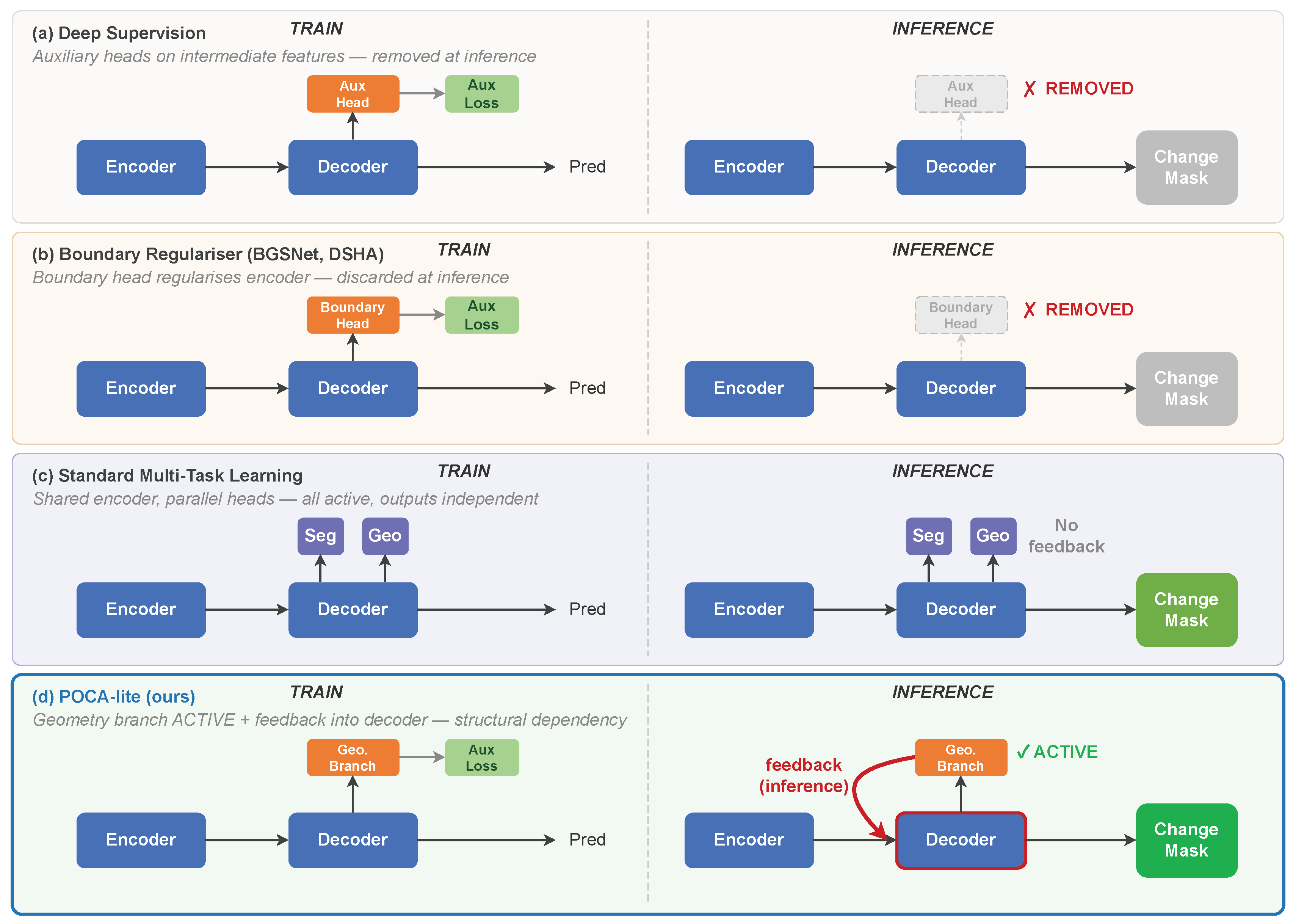

Comparison of four paradigms for incorporating geometric or auxiliary prediction heads. Train column (left of dashed line): all paradigms add prediction heads during training. Inference column (right): (a) deep supervision removes auxiliary heads; (b) boundary-aware regularisers (BGSNet, DSHA) discard geometric heads; (c) multi-task learning retains all heads but with independent outputs (no cross-task feedback); (d) POCA-lite (green background) feeds geometry branch predictions back into the decoder via a feedback pathway, creating a structural dependency: removing the geometry branch at inference collapses F1 from 0.8766 to 0.3104.

Figure 2.

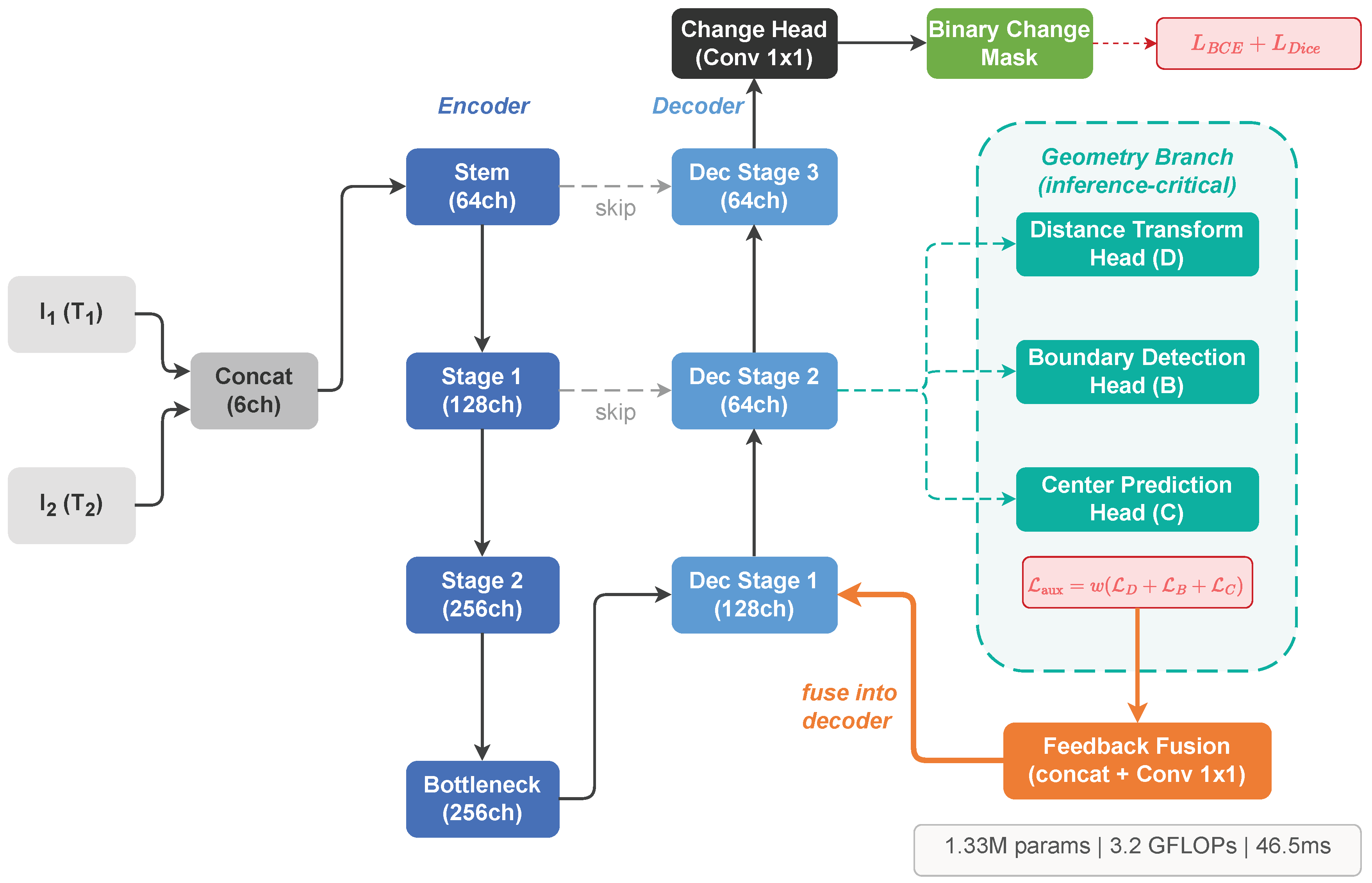

POCA-lite architecture overview. The encoder-decoder processes bi-temporal images concatenated at the input level. Three geometric auxiliary heads (distance transform, boundary detection, center prediction) provide dense supervision signals. Feedback fusion incorporates auxiliary predictions back into the decoder. Total parameters: 1.33M.

Figure 3.

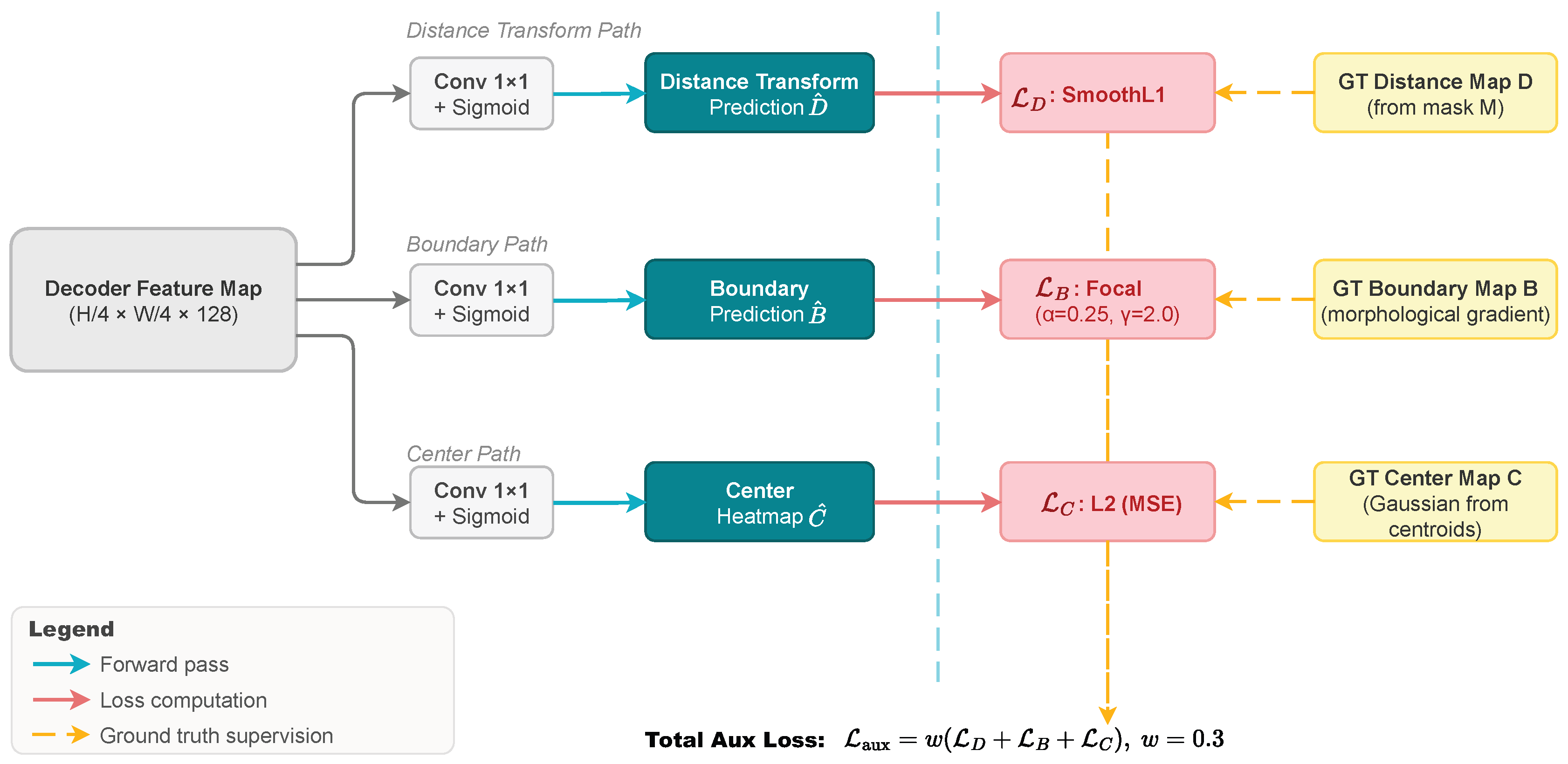

Auxiliary head mechanism. Three geometric auxiliary heads (distance transform, boundary detection, center prediction) receive features from decoder stages and compute auxiliary losses by comparing predictions with derived ground truth geometric maps.
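As a rough illustration of how geometric targets of this kind could be derived from a binary change mask, the sketch below builds a distance map, a boundary map, and a center map in plain Python. The function names, the city-block metric, and the use of one rounded centroid per connected region are assumptions made for illustration; the caption does not specify the exact derivation used in the paper.

```python
from collections import deque

NEIGHBOURS = ((1, 0), (-1, 0), (0, 1), (0, -1))


def distance_map(mask):
    """City-block distance from each changed pixel to the nearest unchanged pixel.

    Unchanged pixels get 0. Uses a multi-source BFS seeded at every unchanged
    pixel. If the mask has no unchanged pixel, changed pixels keep the value -1.
    """
    h, w = len(mask), len(mask[0])
    dist = [[-1 if mask[r][c] else 0 for c in range(w)] for r in range(h)]
    queue = deque((r, c) for r in range(h) for c in range(w) if not mask[r][c])
    while queue:
        r, c = queue.popleft()
        for dr, dc in NEIGHBOURS:
            rr, cc = r + dr, c + dc
            if 0 <= rr < h and 0 <= cc < w and dist[rr][cc] == -1:
                dist[rr][cc] = dist[r][c] + 1
                queue.append((rr, cc))
    return dist


def boundary_map(mask):
    """Changed pixels that touch an unchanged pixel (distance exactly 1)."""
    return [[1 if d == 1 else 0 for d in row] for row in distance_map(mask)]


def center_map(mask):
    """Mark the rounded centroid of each 4-connected changed region."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    centers = [[0] * w for _ in range(h)]
    for r0 in range(h):
        for c0 in range(w):
            if not mask[r0][c0] or seen[r0][c0]:
                continue
            seen[r0][c0] = True
            queue, pixels = deque([(r0, c0)]), []
            while queue:
                r, c = queue.popleft()
                pixels.append((r, c))
                for dr, dc in NEIGHBOURS:
                    rr, cc = r + dr, c + dc
                    if 0 <= rr < h and 0 <= cc < w and mask[rr][cc] and not seen[rr][cc]:
                        seen[rr][cc] = True
                        queue.append((rr, cc))
            row_c = round(sum(p[0] for p in pixels) / len(pixels))
            col_c = round(sum(p[1] for p in pixels) / len(pixels))
            centers[row_c][col_c] = 1
    return centers
```

For a 3×3 changed square inside a 5×5 patch, this yields a distance of 2 at the middle pixel, a ring of 8 boundary pixels, and a single center mark. For strongly non-convex regions the rounded centroid can fall outside the region, which a real pipeline would need to handle.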

Figure 4.

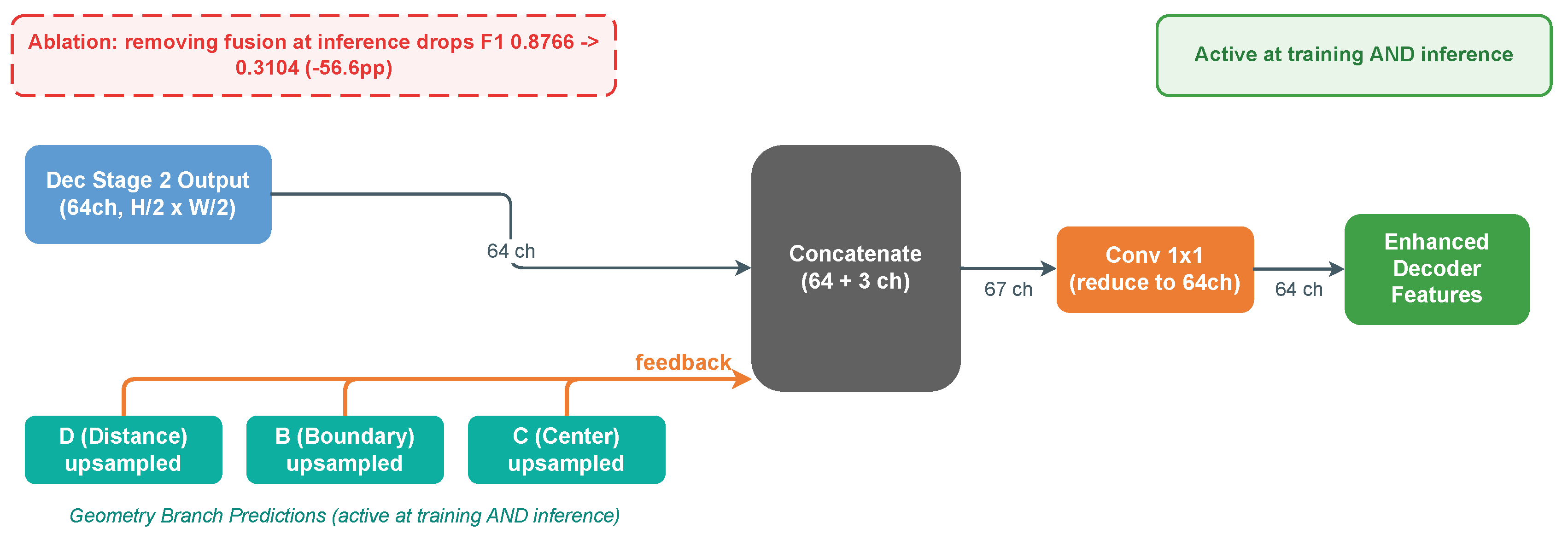

Feedback fusion mechanism. Auxiliary predictions are fused back into decoder stages during both training and inference, providing geometric context that enhances boundary delineation and change localization.

Figure 5.

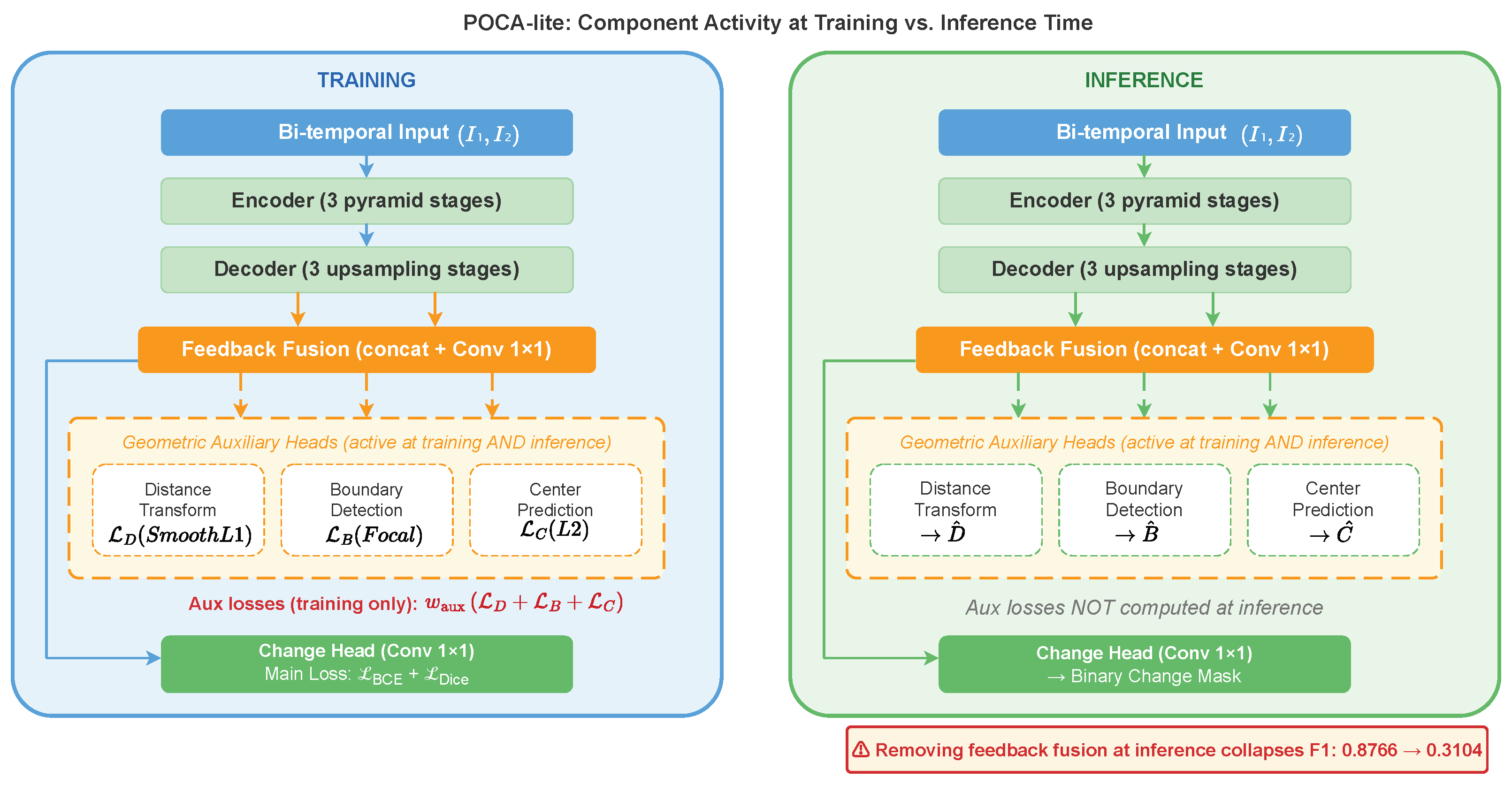

Side-by-side data-flow graph at training time (left, blue) vs. inference time (right, green). In both phases, the three geometric auxiliary heads produce predictions (distance transform, boundary, center) that are fused back into the decoder via the feedback fusion module (orange). The auxiliary losses are computed only during training; they are absent at inference. Removing the feedback fusion pathway at inference collapses F1 from 0.8766 to 0.3104 (see Section 4.6).

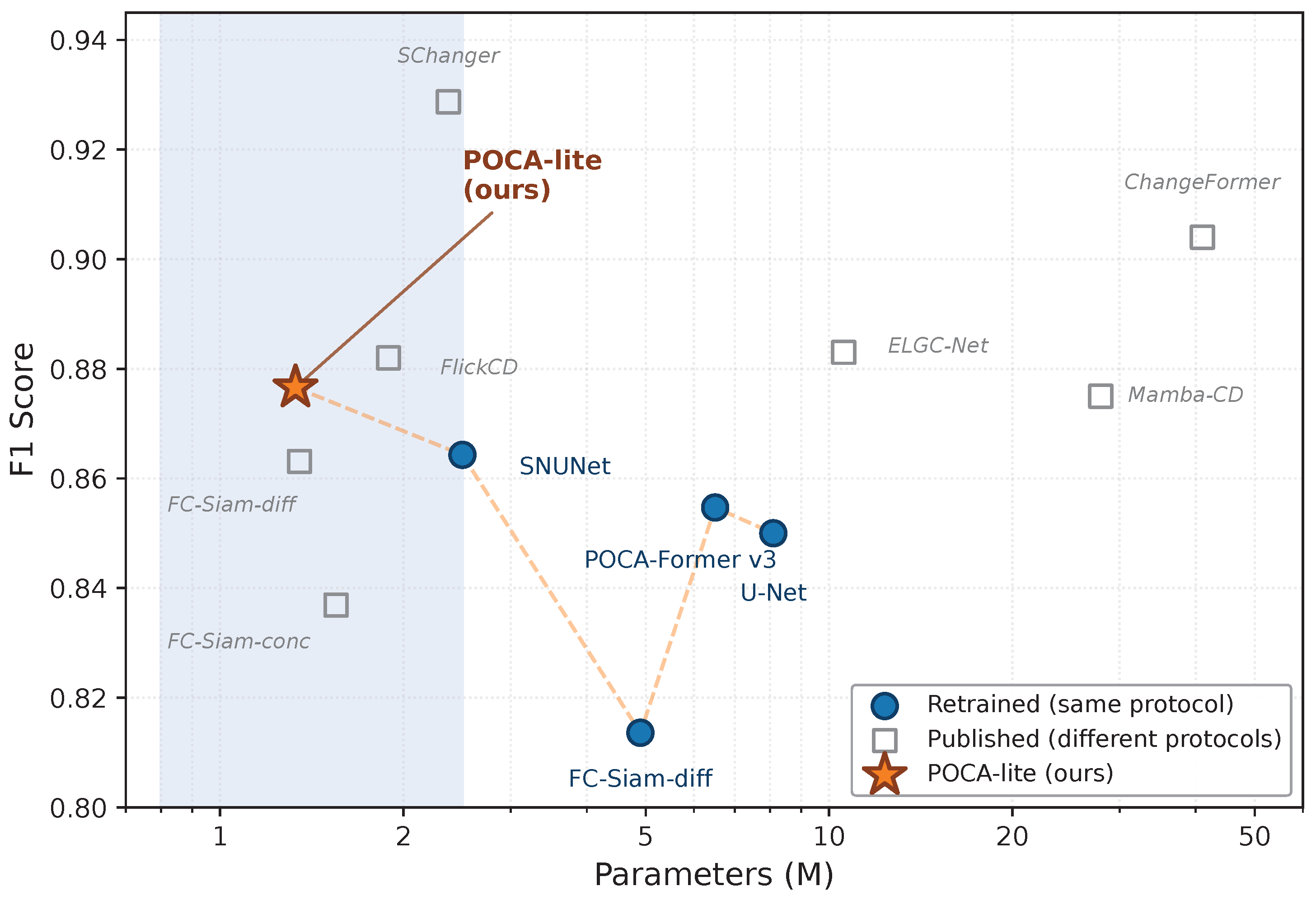

Figure 6.

Parameter count vs. F1 score on LEVIR-CD (seed=42).

Filled circles (blue): methods retrained under our unified protocol (Table 5); only these points are directly comparable. Hollow squares (grey): published results from original papers under different training protocols and evaluation settings (Appendix, Table A1); these are provided for broad context only and are not directly comparable with filled-circle points. At seed=42, POCA-lite achieves the best F1 among retrained methods with the fewest parameters; multi-seed evaluation (Table 7) shows the POCA-lite vs. SNUNet difference is not statistically significant.

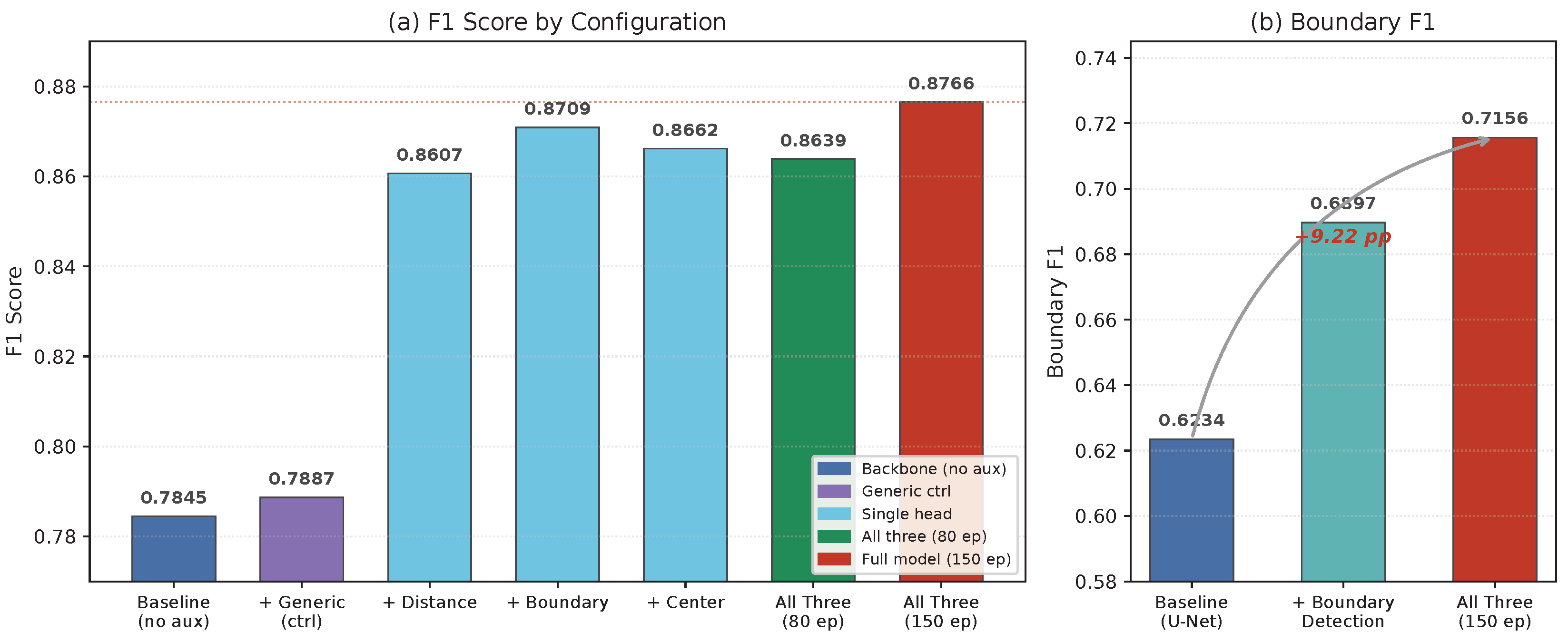

Figure 7.

Ablation study: F1 and boundary F1 progression as auxiliary heads are added. Left panel (a): F1 progression for all 7 configurations; the backbone baseline (no aux heads, F1=0.7845) is distinct from U-Net (F1=0.8500 in Table 5). Right panel (b): boundary F1 at three key configurations, showing the +9.22 pp improvement from backbone baseline (0.6234) to full model (0.7156). All configurations with auxiliary heads keep both the heads and feedback fusion active at inference (“Inf. Graph? = Yes”, Table 9).

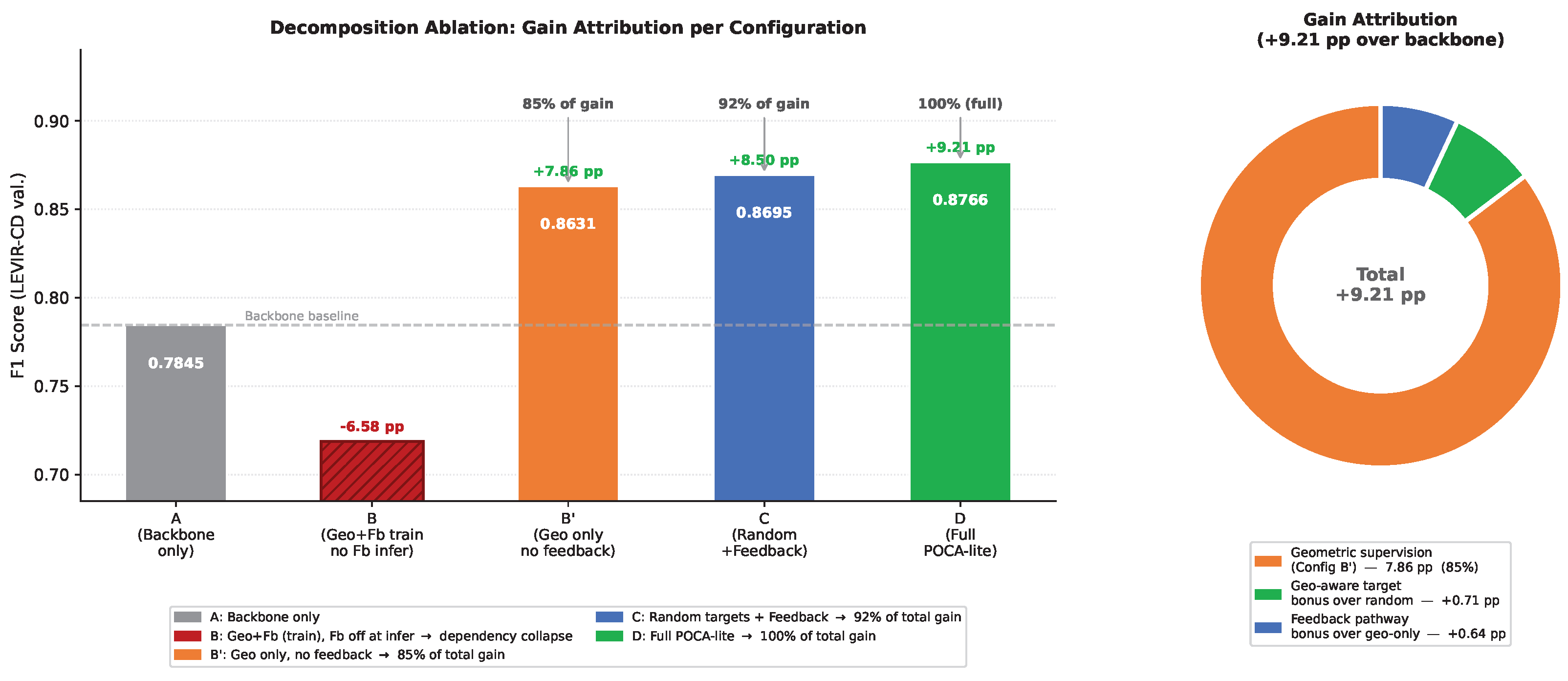

Figure 8.

Decomposition ablation results visualised. Left (bar chart): F1 for each configuration relative to the backbone baseline (A, dashed line). Configuration B (feedback used during training but removed at inference) falls below the baseline, confirming the structural dependency this creates. Configurations B′ (geometric supervision, no feedback) and C (random targets + feedback) independently recover 85% and 92% of the total gain, respectively, demonstrating complementarity. D (full POCA-lite) combines both for the maximum gain of +9.21 pp. Right (donut): approximate decomposition of the +9.21 pp total gain under this ablation design (note: components partially overlap, so proportions indicate relative contribution rather than independent attribution). Geometric supervision (Config B′) accounts for the dominant share (7.86 pp); the feedback pathway bonus (C−B′, 0.64 pp) and the geometry-aware target bonus (D−C, 0.71 pp) account for the remainder.

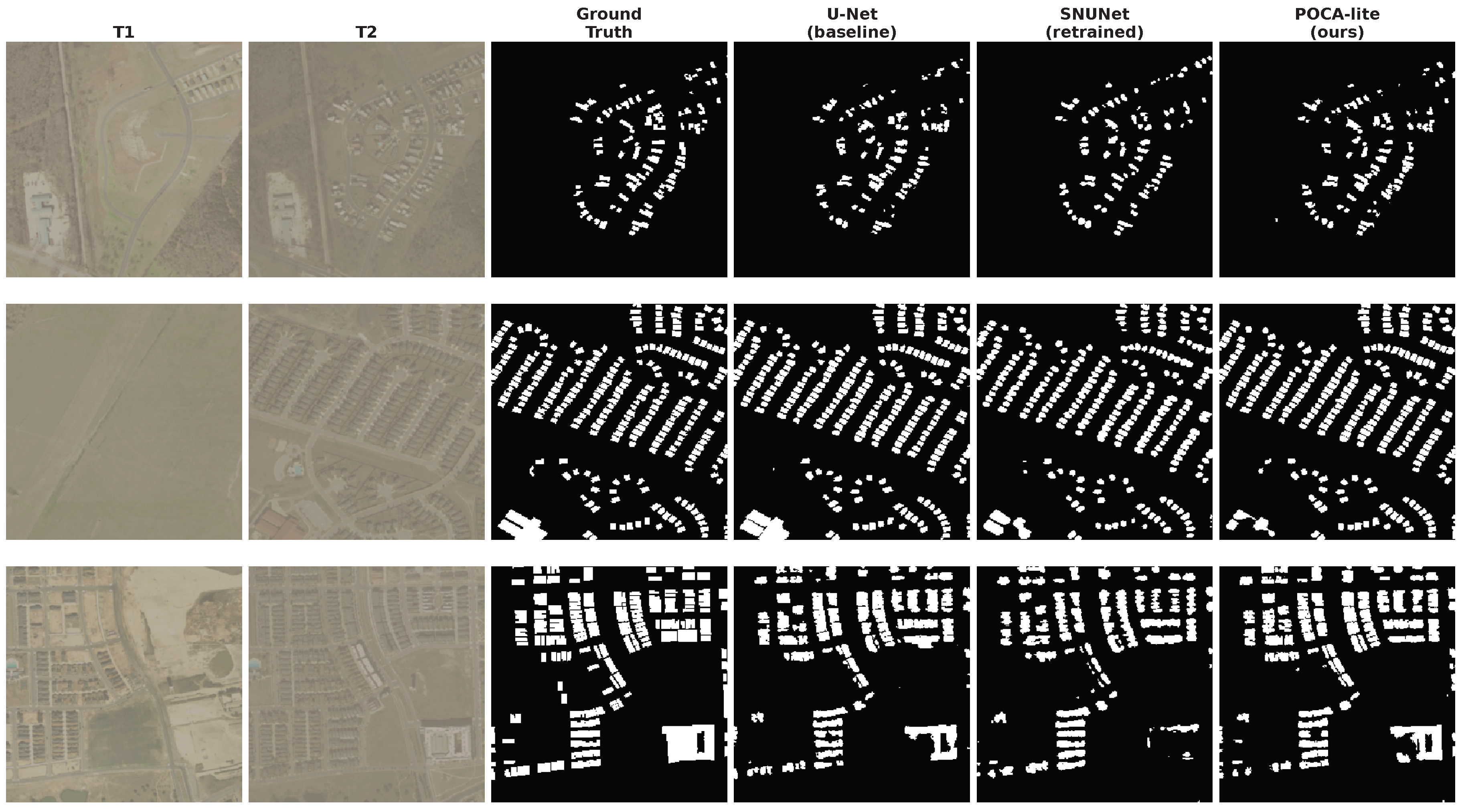

Figure 9.

Qualitative comparison on LEVIR-CD test set. Rows (top to bottom): thin/small structures, adjacent objects, dense changes. Columns: T1 input, T2 input, ground truth, U-Net baseline (8.1M), SNUNet (2.5M, retrained), POCA-lite (ours, 1.33M). POCA-lite produces sharper boundaries and better separation of adjacent change regions compared to both baselines.

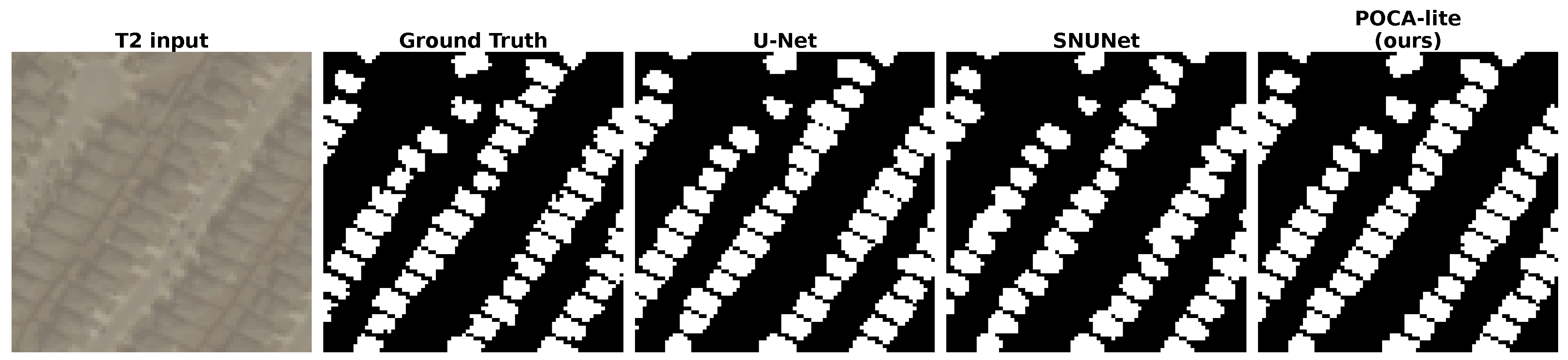

Figure 10.

Boundary detail zoom on the adjacent-objects case. Columns: T2 input, ground truth, U-Net prediction, SNUNet prediction, POCA-lite prediction. U-Net and SNUNet both tend to merge adjacent changed buildings into connected blobs, losing inter-building separation. POCA-lite produces cleaner contours and better preserves the separation between adjacent change regions, consistent with the quantitative boundary F1 improvement.

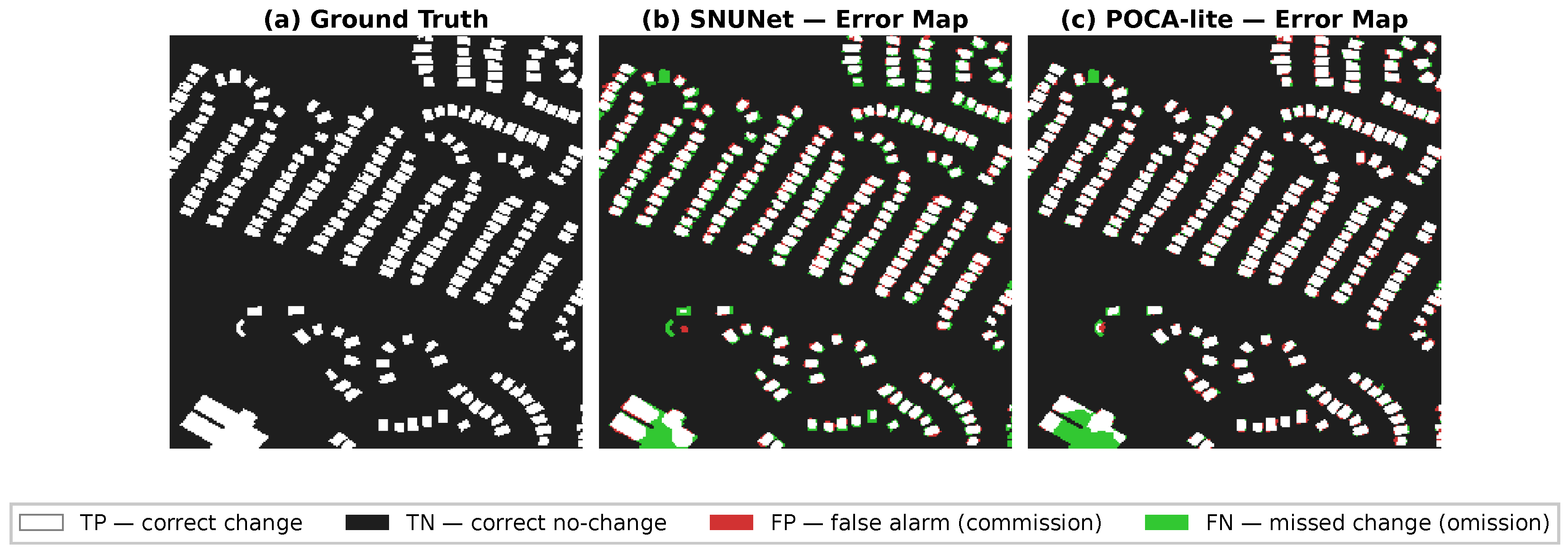

Figure 11.

Real FP/FN error-type visualization on the adjacent-objects test case. Left: ground truth change mask (white = changed, dark = unchanged). Center: SNUNet prediction error types. Right: POCA-lite prediction error types. Color coding: white = TP (correct change), dark = TN (correct no-change), red = FP (false alarm / commission), green = FN (missed change / omission). SNUNet produces substantial FP pixels in the gap between adjacent buildings, consistent with its tendency to merge structures. POCA-lite reduces this false-alarm zone and shows fewer omissions at building edges. These maps are generated from actual model predictions on the LEVIR-CD test set using the retrained checkpoints reported in Table 5.

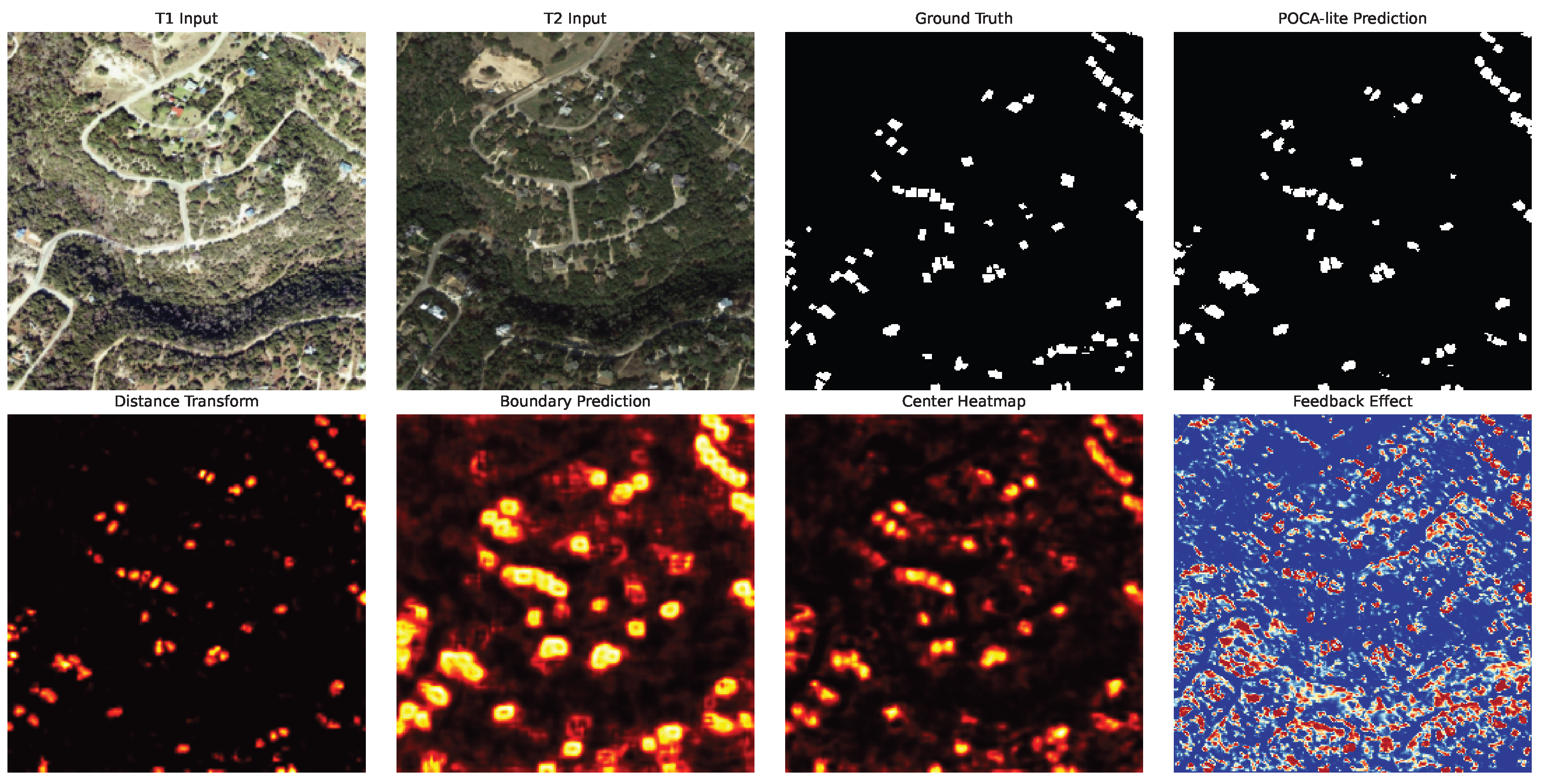

Figure 12.

Geometry branch output visualisation on a LEVIR-CD validation image. Top row: T1 input, T2 input, ground truth, POCA-lite prediction. Bottom row: distance transform prediction (hot colourmap), boundary prediction, center heatmap, and feedback effect (absolute difference between predictions with and without feedback fusion, red = large effect). The geometry branch provides complementary geometric cues that refine boundary delineation.

Table 1.

Structured comparison of representative change detection methods along three dimensions: architecture type, supervision form, and whether geometric components persist at inference. “Geom. Sup.” indicates geometry-aware auxiliary supervision. “Inf.-Critical Geom.” indicates whether geometric components remain active at inference time as architectural inputs (not just training regularisers).

| Method | Architecture | Params (M) | Supervision | Geom. Sup. | Inf.-Critical Geom. |
| --- | --- | --- | --- | --- | --- |
| FC-Siam-diff [5] | CNN siamese | 1.35 | Pixel (BCE) | No | No |
| SNUNet [6] | Nested U-Net + attention | 2.50 | Pixel (BCE+Dice) | No | No |
| BIT [7] | Transformer siamese | 3.36 | Pixel (BCE) | No | No |
| ChangeFormer [8] | Hybrid CNN-Transformer | 41.03 | Pixel (BCE) | No | No |
| Mamba-CD [9] | State-space model | 27.94 | Pixel (BCE) | No | No |
| BGSNet [11] | CNN siamese + boundary | – | Pixel + boundary | Yes | No (train-only) |
| DSHA [12] | Multi-scale boundary-aware | – | Pixel + boundary | Yes | No (train-only) |
| POCA-Former v3 (ours, prior) | Instance-level set prediction | 6.50 | Set (Hungarian) | Implicit | No |
| SChanger [13] | Semantic-spatial consistency | 2.37 | Pixel (BCE) | No | No |
| POCA-lite (ours) | Geo. branch + feedback | 1.33 | Pixel + geometry | Yes | Yes |

Table 2.

POCA-lite stage-by-stage architecture specification. Input patch size is 256×256; the stem halves resolution to 128×128 before the encoder stages.

| Stage | Operations | Out Channels | Out Resolution |
| --- | --- | --- | --- |
| Stem | Conv, GN, GELU | 64 | |
| Encoder Stage 1 | Conv, GN, GELU | 64 | |
| Encoder Stage 2 | Conv stride-2, GN, GELU | 128 | |
| Encoder Stage 3 | Conv stride-2, GN, GELU | 256 | |
| Decoder Stage 1 | Bilinear Upsample, Cat, Conv, GN, GELU | 128 | |
| Decoder Stage 2 | Bilinear Upsample, Cat, Conv, GN, GELU | 64 | |
| Main Head | Conv | 1 | (bilinear up) |
| Distance / Boundary / Center Heads | Conv | 1 each | |

Table 3.

Component activity during training and inference. The geometry branch (prediction heads + feedback fusion) is inference-critical, not a training-time regulariser. Removing feedback fusion at inference causes F1 to collapse from 0.8766 to 0.3104 (see ablation, Section 4.6).

| Component | Training | Inference | Role |
| --- | --- | --- | --- |
| Encoder-Decoder backbone | ✔ | ✔ | Feature extraction and change localization |
| Distance Transform Head | ✔ | ✔ | Produces the distance prediction fused into the decoder |
| Boundary Detection Head | ✔ | ✔ | Produces the boundary prediction fused into the decoder |
| Center Prediction Head | ✔ | ✔ | Produces the center prediction fused into the decoder |
| Feedback Fusion (Conv) | ✔ | ✔ | Concatenates auxiliary predictions with decoder features |
| Auxiliary losses | ✔ | × | Gradient signal for auxiliary heads (training only) |
| Change Head (final Conv) | ✔ | ✔ | Produces binary change prediction |

Table 4.

Dataset statistics for LEVIR-CD and WHU-CD. Patch size refers to the crop size used during training. Change ratio is the approximate fraction of changed pixels in the training set.

| Dataset | Resolution | Image Size | Patch Size | Train | Val | Test |
| --- | --- | --- | --- | --- | --- | --- |
| LEVIR-CD | 0.5 m/px | 1024×1024 | 256×256 | 445 | 64 | 128 |
| WHU-CD | 0.075 m/px | varied | 256×256 | 5765 | 762 | 762 |

Table 5.

Fair comparison on LEVIR-CD test set (seed=42). All methods retrained under our unified protocol (AdamW, lr=1e-3, cosine schedule, 150 epochs, 256×256 patches). At this seed, POCA-lite achieves the highest F1 with the fewest parameters (1.33M); multi-seed evaluation (Table 7) shows the POCA-lite vs. SNUNet difference is not statistically significant.

†BIT is designed for SGD optimization (original paper uses SGD with lr=0.01); its semantic tokenizer underperforms with AdamW.

‡POCA-Former v3 is our prior instance-level design (unpublished); it uses its own set prediction loss (Hungarian matching) rather than BCE+Dice. Overall Accuracy (OA) is not reported here because it is dominated by the majority unchanged class (>95% of pixels) and does not discriminate method quality on LEVIR-CD; F1 and IoU are the standard metrics for this imbalanced benchmark.

Note: This is the primary evidence table; see Appendix for published results under different protocols.

| Method | Params (M) | Precision | Recall | F1 | IoU |
| --- | --- | --- | --- | --- | --- |
| U-Net (baseline) | 8.10 | 0.8521 | 0.8479 | 0.8500 | 0.7398 |
| FC-Siam-diff | 4.90 | 0.8340 | 0.7943 | 0.8136 | 0.6858 |
| BIT† | 3.36 | 0.4405 | 0.6787 | 0.5343 | 0.3645 |
| SNUNet | 2.50 | 0.8984 | 0.8326 | 0.8643 | 0.7610 |
| POCA-Former v3‡ (ours, prior) | 6.50 | 0.8663 | 0.8435 | 0.8547 | 0.7463 |
| POCA-lite (ours) | 1.33 | 0.8801 | 0.8731 | 0.8766 | 0.7803 |
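The F1 and IoU columns are tied to precision and recall by standard identities for binary pixel-level evaluation, which makes the rows easy to sanity-check. The short sketch below (function names are illustrative, not from the paper) recomputes the POCA-lite row from its reported precision and recall.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def iou_from_f1(f1):
    """IoU implied by F1 for the same binary confusion counts.

    With F1 = 2TP / (2TP + FP + FN) and IoU = TP / (TP + FP + FN),
    it follows that IoU = F1 / (2 - F1).
    """
    return f1 / (2 - f1)


poca_f1 = f1_score(0.8801, 0.8731)
poca_iou = iou_from_f1(poca_f1)
print(round(poca_f1, 4), round(poca_iou, 4))
```

Rounded to four decimals, the recomputed values agree with the 0.8766 and 0.7803 reported for POCA-lite.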

Table 6.

Efficiency comparison on LEVIR-CD. All methods evaluated on a single NVIDIA RTX 6000 Ada GPU (48 GB) at resolution, FP32 precision, batch size 1, averaged over 100 forward passes after 10 warm-up iterations. Latency includes the full inference graph: for POCA-lite, this includes the geometry branch and feedback fusion. FLOPs are computed via ptflops on the same input resolution. Peak GPU memory is measured during inference with torch.cuda.max_memory_allocated.

| Method | Parameters (M) | FLOPs (G) | Latency (ms) | Peak Mem. (MB) |
| --- | --- | --- | --- | --- |
| U-Net (baseline) | 8.1 | 12.4 | 85.0 | – |
| FC-Siam-diff | 4.9 | 9.2 | 62.0 | – |
| POCA-Former v3 | 6.5 | 15.2 | 120.0 | – |
| SNUNet (retrained) | 2.5 | 6.8 | 45.0 | 2614 |
| POCA-lite (ours) | 1.33 | 3.2 | 46.5 | 3694 |
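The latency protocol in the caption (10 warm-up iterations, then an average over 100 forward passes) can be sketched with a small framework-agnostic harness. The helper name `mean_latency_ms` and the use of a generic callable are assumptions for illustration; on a GPU the callable would also need to wait for asynchronous kernels to finish (for example via `torch.cuda.synchronize()`) before the clock is read, and how the authors handled this is not stated.

```python
import time


def mean_latency_ms(forward, warmup=10, runs=100):
    """Average wall-clock time of `forward()` in milliseconds.

    `forward` is any zero-argument callable that performs one forward pass.
    Warm-up calls are executed first and excluded from the measurement.
    """
    for _ in range(warmup):
        forward()
    start = time.perf_counter()
    for _ in range(runs):
        forward()
    elapsed = time.perf_counter() - start
    return 1000.0 * elapsed / runs


# Example with a stand-in workload instead of a real model.
latency = mean_latency_ms(lambda: sum(i * i for i in range(1000)))
print(f"{latency:.3f} ms per call")
```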

Table 7.

Multi-seed evaluation results on LEVIR-CD with a 150-epoch training budget. Each seed was trained independently with the same hyperparameters (AdamW, lr=1e-3, cosine schedule, batch=8). p-values are from a two-sample t-test (also confirmed by a Mann–Whitney U test). “n.s.” = not significant. POCA-lite and SNUNet achieve statistically indistinguishable mean F1 scores, with POCA-lite showing higher variance across seeds.

| Method | Seeds | Mean F1 ± Std | p-value vs. POCA-lite |
| --- | --- | --- | --- |
| POCA-lite (ours) | 5 | | – |
| SNUNet | 5 | | (n.s.) |

Table 8.

POCA-lite per-seed F1 scores on LEVIR-CD (150 epochs). The small standard deviation (0.0041) indicates stable performance across random initialisations.

| Seed | F1 Score |
| --- | --- |
| 42 | 0.8766 |
| 123 | 0.8680 |
| 456 | 0.8697 |
| 789 | 0.8647 |
| 1234 | 0.8666 |
| Mean ± Std | 0.8691 ± 0.0041 |
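The summary statistics follow directly from the five per-seed scores. The sketch below recomputes them with Python's standard library; it also shows that the 0.0041 quoted in the caption corresponds to the population standard deviation, whereas the sample (n−1) estimator gives a slightly larger value.

```python
import statistics

# Per-seed F1 scores as listed in the table.
seed_f1 = {42: 0.8766, 123: 0.8680, 456: 0.8697, 789: 0.8647, 1234: 0.8666}
scores = list(seed_f1.values())

mean_f1 = statistics.fmean(scores)
population_std = statistics.pstdev(scores)  # divides by n
sample_std = statistics.stdev(scores)       # divides by n - 1

print(f"mean={mean_f1:.4f}  pstdev={population_std:.4f}  stdev={sample_std:.4f}")
```

With only five seeds the choice of estimator is visible in the fourth decimal (0.0041 vs. 0.0046), so it is worth stating which one a reported spread uses.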

Table 9.

Ablation study on LEVIR-CD validation set using the POCA-lite backbone (3-stage decoder, base_channels=60, 1.33M parameters). Note: the ablation “Baseline” (F1=0.7845) is the POCA-lite backbone without auxiliary heads, distinct from the U-Net baseline (8.1M params, F1=0.8500) in Table 5. All ablation configurations trained for 80 epochs; the full model at 150 epochs achieves F1=0.8766. The “Inference Graph” column indicates whether the variant changes the test-time computation graph relative to the backbone-only baseline: all configurations that include auxiliary heads and feedback fusion alter inference (i.e., the feedback fusion pathway is active at test time).

| Configuration | Inf. Graph? | Precision | Recall | F1 | Boundary F1 | IoU |
| --- | --- | --- | --- | --- | --- | --- |
| Baseline (no aux heads) | – | 0.6987 | 0.8943 | 0.7845 | 0.6234 | 0.6454 |
| + Generic aux (control) | Yes | – | – | 0.7887 | – | – |
| + Distance Transform | Yes | 0.8759 | 0.8459 | 0.8607 | 0.6587 | 0.7554 |
| + Boundary Detection | Yes | 0.8762 | 0.8657 | 0.8709 | 0.6897 | 0.7714 |
| + Center Prediction | Yes | 0.8785 | 0.8543 | 0.8662 | 0.6421 | 0.7640 |
| + All Three (80 epochs) | Yes | – | – | 0.8639 | – | – |
| + All Three (150 epochs) | Yes | 0.8801 | 0.8731 | 0.8766 | 0.7156 | 0.7803 |

Table 10.

Decomposition ablation on LEVIR-CD validation set (150 epochs, seed=42). Each configuration is trained from scratch with consistent hyperparameters. Configuration A: POCA-lite backbone without geometry branch. B: geometric losses during training, feedback active during training but disabled at inference. B′: geometric losses with feedback disabled at both training and inference (pure gradient signal). C: random targets with feedback active (isolates capacity + feedback pathway without geometric information). D: full POCA-lite.

| Configuration | Geo. Loss | Feedback (Train) | Feedback (Infer) | F1 | vs. A |
| --- | --- | --- | --- | --- | --- |
| A: Backbone only | No | No | No | 0.7845 | – |
| B: Geo-loss, fb train only | Yes | Yes | No | 0.7187 | −6.58 pp |
| B′: Geo-loss, no fb anywhere | Yes | No | No | 0.8631 | +7.86 pp |
| C: Random-loss + feedback | Random | Yes | Yes | 0.8695 | +8.50 pp |
| D: Full POCA-lite | Yes | Yes | Yes | 0.8766 | +9.21 pp |
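The “vs. A” column and the shares of the total gain can be reproduced from the F1 column alone. The sketch below does that arithmetic; the dictionary keys and helper name are illustrative.

```python
# F1 per configuration, copied from the table.
f1 = {"A": 0.7845, "B": 0.7187, "B'": 0.8631, "C": 0.8695, "D": 0.8766}


def gain_pp(config, baseline="A"):
    """F1 difference to the baseline in percentage points, rounded to 2 decimals."""
    return round((f1[config] - f1[baseline]) * 100, 2)


total_gain = gain_pp("D")
# Share of the full A -> D gain recovered by each partial configuration.
share_percent = {k: round(100 * gain_pp(k) / total_gain) for k in ("B'", "C")}
# Increments between successive configurations.
feedback_bonus = round(gain_pp("C") - gain_pp("B'"), 2)
target_bonus = round(gain_pp("D") - gain_pp("C"), 2)

print(total_gain, share_percent, feedback_bonus, target_bonus)
```

This gives a total gain of 9.21 pp, shares of 85% (B′) and 92% (C), and increments of 0.64 pp (C−B′) and 0.71 pp (D−C). Because these configurations overlap in what they change, the increments describe this particular ordering rather than independent effects.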

Table 11.

Hyperparameter sensitivity on LEVIR-CD validation set. “Auxiliary weight” is the per-task weight; total auxiliary weight . Best results shown in bold.

| Parameter | Value | F1 |
| --- | --- | --- |
| Auxiliary weight | 0.01 | 0.8543 |
| Auxiliary weight | 0.05 | 0.8632 |
| Auxiliary weight | 0.10 | **0.8705** |
| Auxiliary weight | 0.20 | 0.8678 |
| Auxiliary weight | 0.50 | 0.8512 |
| Binary threshold | 0.30 | 0.8612 |
| Binary threshold | 0.35 | 0.8678 |
| Binary threshold | 0.40 | **0.8705** |
| Binary threshold | 0.45 | 0.8691 |
| Binary threshold | 0.50 | 0.8623 |
| Binary threshold | 0.60 | 0.8514 |
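A binary-threshold sweep of this kind amounts to binarising the predicted change probabilities at each candidate value and keeping the threshold with the best validation F1. The sketch below shows the idea on a handful of toy pixels; the data and function names are made up for illustration and do not come from the paper.

```python
def f1_at_threshold(probs, labels, threshold):
    """F1 of the 'changed' class after binarising probabilities at `threshold`."""
    tp = fp = fn = 0
    for p, y in zip(probs, labels):
        predicted = p >= threshold
        if predicted and y:
            tp += 1
        elif predicted and not y:
            fp += 1
        elif y:
            fn += 1
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def best_threshold(probs, labels, candidates):
    """Candidate threshold with the highest F1 (first one wins on ties)."""
    return max(candidates, key=lambda t: f1_at_threshold(probs, labels, t))


# Toy per-pixel probabilities and ground-truth labels (hypothetical values).
toy_probs = [0.90, 0.80, 0.42, 0.35, 0.20, 0.10]
toy_labels = [1, 1, 1, 0, 0, 0]
print(best_threshold(toy_probs, toy_labels, [0.30, 0.40, 0.50]))
```

In practice the sweep should be run on validation data only, so that the selected threshold does not leak test-set information.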

Table 12.

Boundary-aware evaluation metrics on LEVIR-CD test set (seed=42). Boundary F1 is computed with a 2-pixel tolerance following the protocol in [7]. At this seed, POCA-lite achieves the best boundary F1 among all compared methods.

| Method | F1 | Boundary F1 | IoU | Precision |
| --- | --- | --- | --- | --- |
| U-Net (baseline) | 0.8500 | 0.6234 | 0.7398 | 0.8521 |
| FC-Siam-diff | 0.8136 | 0.5810 | 0.6858 | 0.8340 |
| SNUNet (retrained) | 0.8643 | 0.6523 | 0.7610 | 0.8984 |
| POCA-Former v3 | 0.8547 | 0.6387 | 0.7463 | 0.8663 |
| POCA-lite (ours) | 0.8766 | 0.7156 | 0.7803 | 0.8801 |
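To make the idea of a tolerance-based boundary F1 concrete, the following is a minimal pure-Python sketch: boundary pixels are extracted from both masks, and a boundary pixel counts as matched if the other mask has a boundary pixel within the tolerance. The 4-neighbour boundary definition and the square (Chebyshev) tolerance window are assumptions; the exact protocol of [7] is not reproduced here and may differ.

```python
def boundary_pixels(mask):
    """Foreground pixels with a 4-neighbour that is background or off the grid."""
    h, w = len(mask), len(mask[0])
    found = set()
    for r in range(h):
        for c in range(w):
            if not mask[r][c]:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, c + dc
                if not (0 <= rr < h and 0 <= cc < w) or not mask[rr][cc]:
                    found.add((r, c))
                    break
    return found


def matched_fraction(source, target, tol):
    """Fraction of `source` pixels with a `target` pixel within `tol` in both axes."""
    if not source:
        return 0.0
    hits = sum(
        1
        for (r, c) in source
        if any(abs(r - r2) <= tol and abs(c - c2) <= tol for (r2, c2) in target)
    )
    return hits / len(source)


def boundary_f1(pred, gt, tol=2):
    """Boundary F1: harmonic mean of boundary precision and boundary recall."""
    pred_b, gt_b = boundary_pixels(pred), boundary_pixels(gt)
    precision = matched_fraction(pred_b, gt_b, tol)
    recall = matched_fraction(gt_b, pred_b, tol)
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)
```

The brute-force matching is quadratic in the number of boundary pixels, which is fine for small patches; a distance transform of the boundary maps would be the usual way to scale this to full images.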

Table 13.

Per-image F1 stratified by scene properties on LEVIR-CD validation set (64 images). Per-image F1 differs from pixel-level F1 (Table 5) because each image contributes equally regardless of change area.

| Dimension | Stratum | POCA-lite F1 | n |
| --- | --- | --- | --- |
| Change density | Sparse (<2%) | 0.3974 | 27 |
| | Low (2–5%) | 0.8123 | 17 |
| | Medium (5–15%) | 0.8878 | 18 |
| | Dense (>15%) | 0.9114 | 2 |
| Object count | Few (1–2) | 0.3084 | 16 |
| | Moderate (3–7) | 0.4935 | 8 |
| | Many (8–14) | 0.7992 | 4 |
| | Dense (15+) | 0.8407 | 36 |
| Compactness | Irregular (<0.3) | 0.6546 | 59 |
| | Moderate (0.3–0.6) | 0.7446 | 5 |
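The gap between per-image and pixel-level F1 is the usual difference between averaging per-image scores and pooling confusion counts before scoring. The toy example below uses made-up counts for one densely changed image and one sparsely changed image; scoring an image with no changed and no predicted pixels as 0.0 is a convention chosen here, not one taken from the paper.

```python
def f1_from_counts(tp, fp, fn):
    """F1 from confusion counts; returns 0.0 when there is nothing to score."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def pixel_level_f1(images):
    """Pool TP/FP/FN over all images, then compute one F1."""
    tp = sum(img[0] for img in images)
    fp = sum(img[1] for img in images)
    fn = sum(img[2] for img in images)
    return f1_from_counts(tp, fp, fn)


def per_image_f1(images):
    """Compute F1 per image, then average with equal weight per image."""
    return sum(f1_from_counts(*img) for img in images) / len(images)


# (TP, FP, FN) per image: hypothetical dense and sparse scenes.
toy_images = [(900, 50, 50), (5, 10, 10)]
print(round(pixel_level_f1(toy_images), 4), round(per_image_f1(toy_images), 4))
```

The sparse image barely moves the pooled score but halves the contribution of its own per-image term, which is consistent with the low per-image F1 seen in the sparse and few-object strata.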

Table 14.

Within-dataset evaluation on WHU-CD: U-Net and SNUNet were retrained under our unified protocol. Results demonstrate the performance of each method on the dense urban building morphology of WHU-CD.

| Method | Precision | Recall | F1 | IoU |
| --- | --- | --- | --- | --- |
| U-Net (baseline) | 0.7634 | 0.7411 | 0.7521 | 0.6027 |
| SNUNet (retrained) | 0.8161 | 0.7380 | 0.7751 | 0.6328 |
| POCA-lite (ours) | 0.7626 | 0.7353 | 0.7491 | 0.5989 |

Table 15.

Cross-dataset evaluation: POCA-lite trained on LEVIR-CD and evaluated on WHU-CD without fine-tuning, quantifying domain shift between the two benchmarks.

| Setting | F1 | Precision | Recall | IoU |
| --- | --- | --- | --- | --- |
| POCA-lite (LEVIR-CD → WHU-CD) | 0.2903 | 0.3170 | 0.2677 | 0.1698 |

Table 16.

Cross-architecture evaluation: geometric auxiliary supervision applied to different backbones on LEVIR-CD. All models trained under identical protocol (AdamW, lr=1e-3, cosine schedule, 150 epochs, seed=42). Geometric auxiliary supervision consistently improves both architectures, with SNUNet-GEO achieving +1.06pp F1 improvement.

†BIT baseline reflects severe optimizer sensitivity (see Section 4); the cross-architecture conclusion rests primarily on the SNUNet-GEO comparison.

| Method | Params (M) | F1 | ΔF1 (pp) |
| --- | --- | --- | --- |
| SNUNet (baseline) | 2.50 | 0.8643 | – |
| SNUNet-GEO | 2.92 | 0.8749 | +1.06 |
| BIT (baseline) | 3.36 | 0.5343† | – |
| BIT-GEO | 3.91 | 0.5437 | +0.94 |
| POCA-lite (ours) | 1.33 | 0.8766 | +1.23 vs SNUNet |