Submitted: 20 April 2026

Posted: 21 April 2026

Abstract

Keywords:

1. Introduction

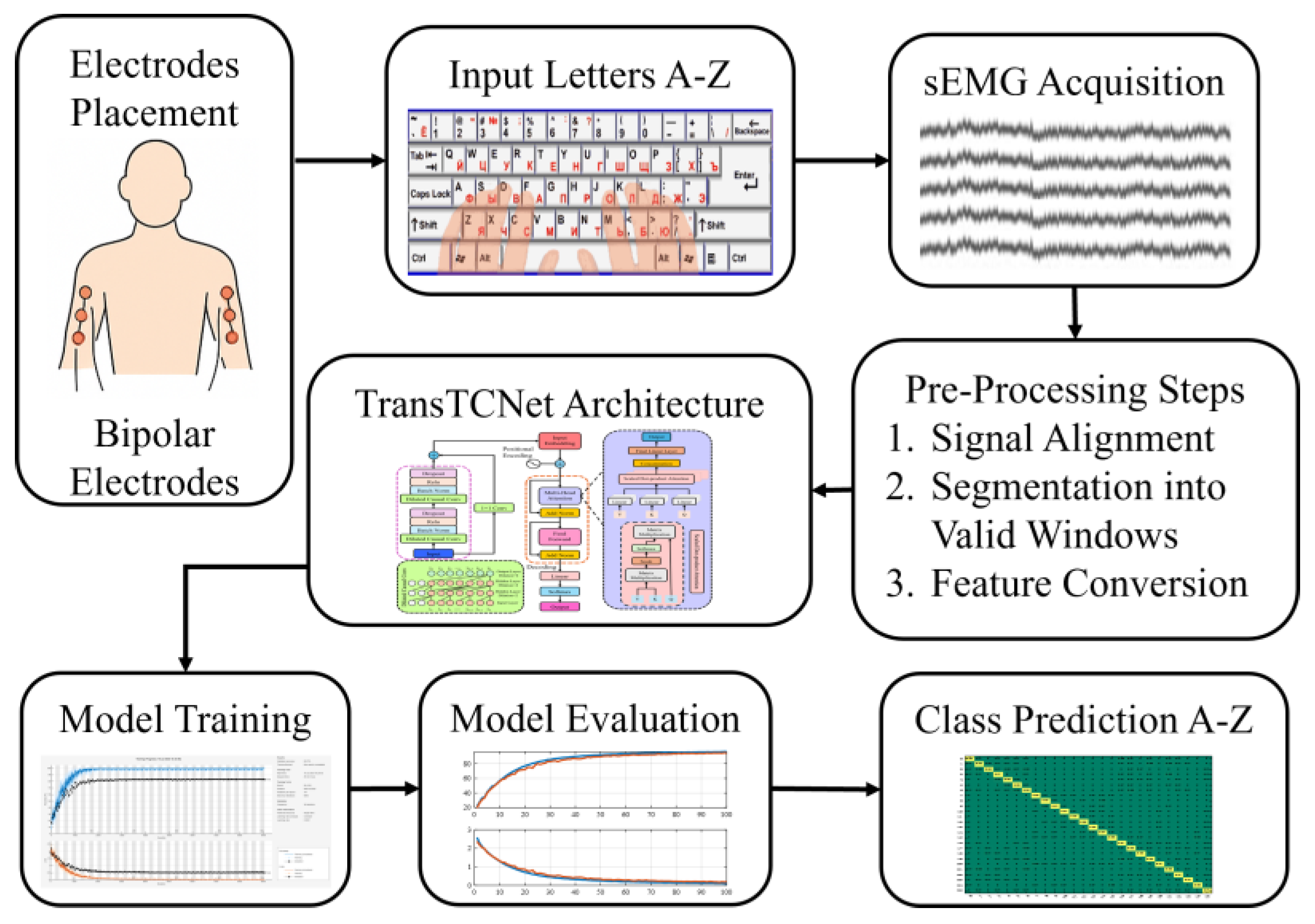

2. Materials and Methods

2.1. Dataset Description

2.1.1. Keyboard Typing sEMG Dataset

2.1.2. Participants

2.1.3. Data Structure

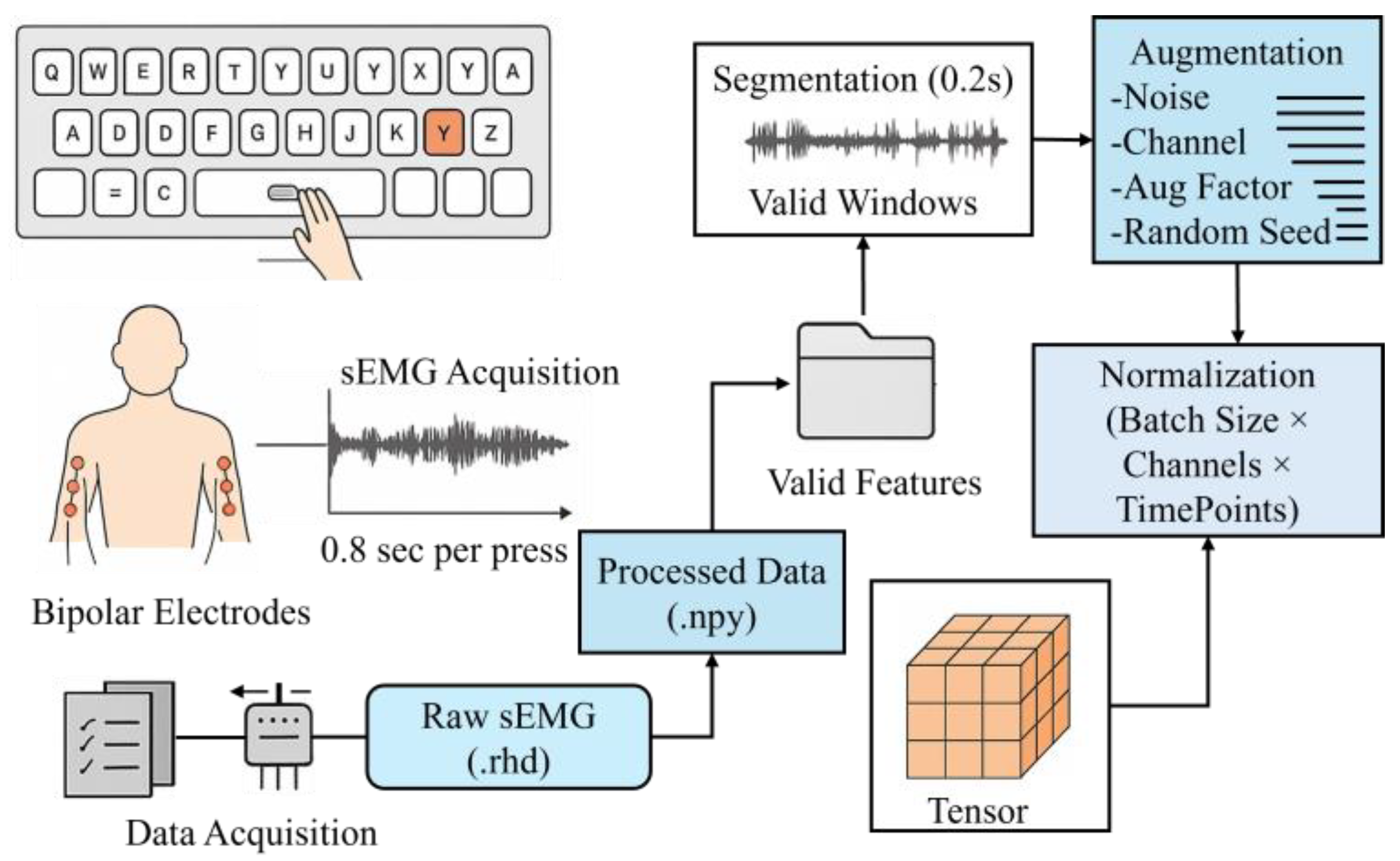

2.2. Data Preprocessing

2.2.1. Valid Session and Window Selection

2.2.2. Data Augmentation

2.2.3. Normalization and Tensor Formation

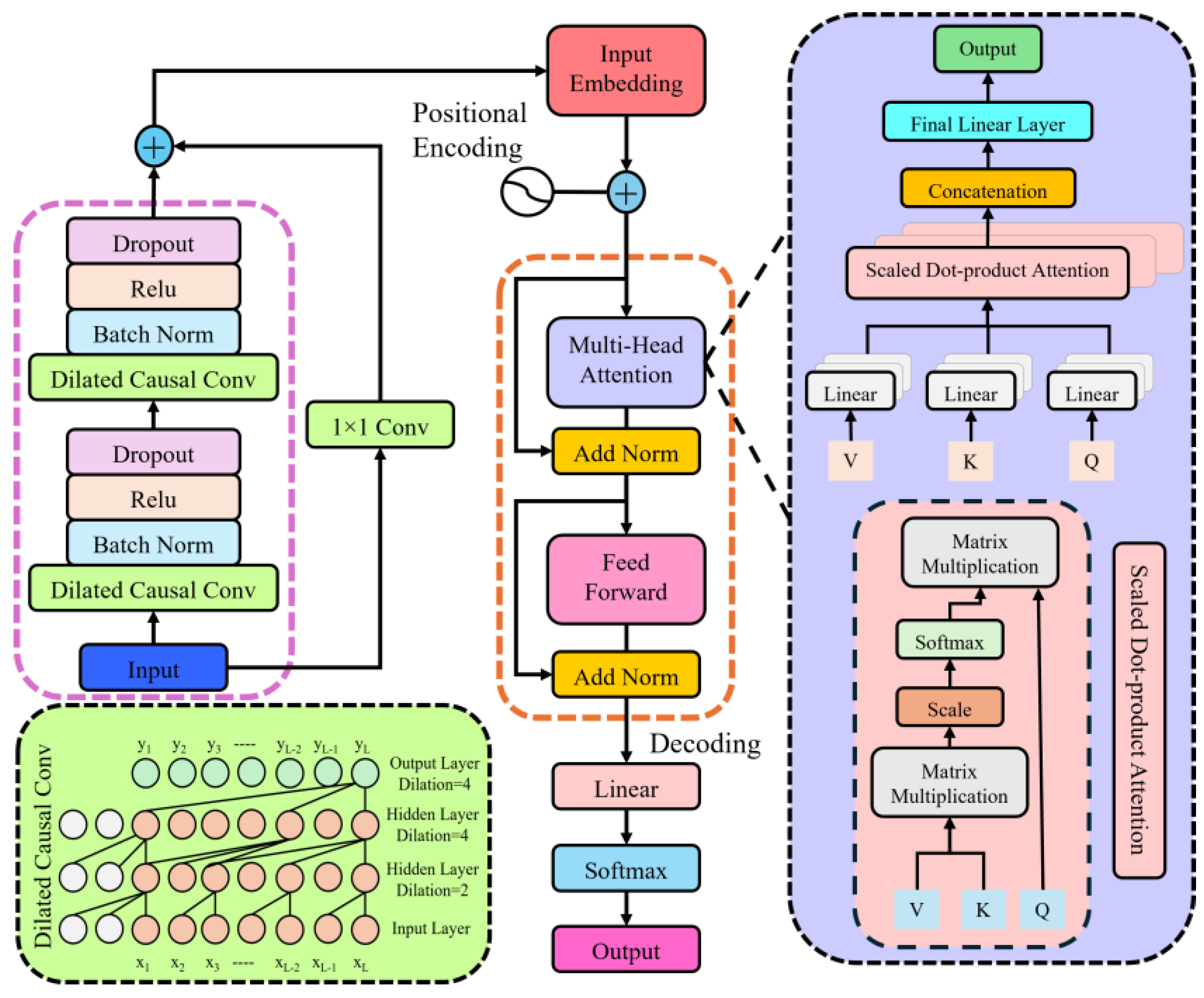

2.3. Neural Network Architecture

2.3.1. TransTCNet Pipeline

2.3.1.1. Local Temporal Feature Encoding

2.3.1.2. Global Contextual Sequence Modeling

Algorithm: TransTCNet Training and Inference

Input: preprocessed sEMG windows X, class labels y, learning rate η, number of epochs N, batch size B

Output: trained model parameters θ*, evaluation metrics

1. Initialize the TransTCNet parameters θ.
2. Initialize the optimizer (Adam) and the loss function (cross-entropy).
3. For epoch ← 1 to N, do:
4. For each minibatch (X_b, y_b) ∈ D_train, do:
5. Z_t ← DilatedCausalConv1D(X_b)
6. Z_c ← SelfAttention(Z_t)
7. ŷ ← Softmax(Classifier(Z_c))
8. ℒ ← CrossEntropy(ŷ, y_b)
9. θ ← θ − η ∇_θ ℒ
   End for (minibatch loop).
10. Evaluate the model (accuracy, F1-score, etc.).
    End for (epoch loop).

Return: final trained model θ*, performance metrics
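Steps 5 to 8 of the algorithm can be sketched as a single forward pass in NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the kernel size (3), window length (64 samples), ReLU activations, single-head attention, mean pooling over time, and the 27-class output (A–Z plus spacebar, inferred from the comparison table) are all hypothetical choices. Only the 16 input channels, the embedding dimension of 128, and the dilations 2 and 4 come from the tables in this document.

```python
import numpy as np

rng = np.random.default_rng(0)

# From the document: 16 input channels, d_model = 128, dilations 2 and 4.
# Assumed for illustration: window length, kernel size, class count.
T, C, D, N_CLASSES, K = 64, 16, 128, 27, 3
DILATIONS = (2, 4)

def dilated_causal_conv1d(x, w, dilation):
    """x: (T, C_in), w: (K, C_in, C_out). Output[t] depends only on x[<= t]."""
    n_steps = x.shape[0]
    pad = (w.shape[0] - 1) * dilation
    xp = np.vstack([np.zeros((pad, x.shape[1])), x])  # left padding only
    out = np.zeros((n_steps, w.shape[2]))
    for k in range(w.shape[0]):
        # Tap k reads the input (K - 1 - k) * dilation steps in the past.
        start = k * dilation
        out += xp[start:start + n_steps] @ w[k]
    return out

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # subtract max for stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(z, wq, wk, wv):
    """Single-head scaled dot-product attention over the time axis."""
    q, k, v = z @ wq, z @ wk, z @ wv
    scores = q @ k.T / np.sqrt(q.shape[-1])
    return softmax(scores) @ v

def forward(x, params):
    z = x
    for w, d in zip(params["conv"], DILATIONS):            # step 5
        z = np.maximum(dilated_causal_conv1d(z, w, d), 0)  # ReLU (assumed)
    zc = self_attention(z, *params["attn"])                # step 6
    pooled = zc.mean(axis=0)                               # pooling (assumed)
    return softmax(pooled @ params["clf"])                 # step 7

def cross_entropy(probs, label):
    return -np.log(probs[label] + 1e-12)                   # step 8

params = {
    "conv": [rng.normal(0, 0.1, (K, C, D)), rng.normal(0, 0.1, (K, D, D))],
    "attn": [rng.normal(0, 0.1, (D, D)) for _ in range(3)],
    "clf": rng.normal(0, 0.1, (D, N_CLASSES)),
}

x = rng.normal(size=(T, C))          # one synthetic sEMG window
probs = forward(x, params)
loss = cross_entropy(probs, label=5)

# Receptive field of the stacked causal convolutions: 1 + sum((K - 1) * d).
receptive_field = 1 + sum((K - 1) * d for d in DILATIONS)
print(probs.shape, float(loss), receptive_field)
```

With the assumed kernel size of 3, the two dilated layers see 13 past samples per output step, while the attention stage lets every time step weigh every other step in the window. That split is what the algorithm describes as local encoding followed by global context.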

3. Experimental Setup

3.1. Training and Validation Split

3.2. Hyperparameters and Evaluation Metrics

3.3. Hardware/Software

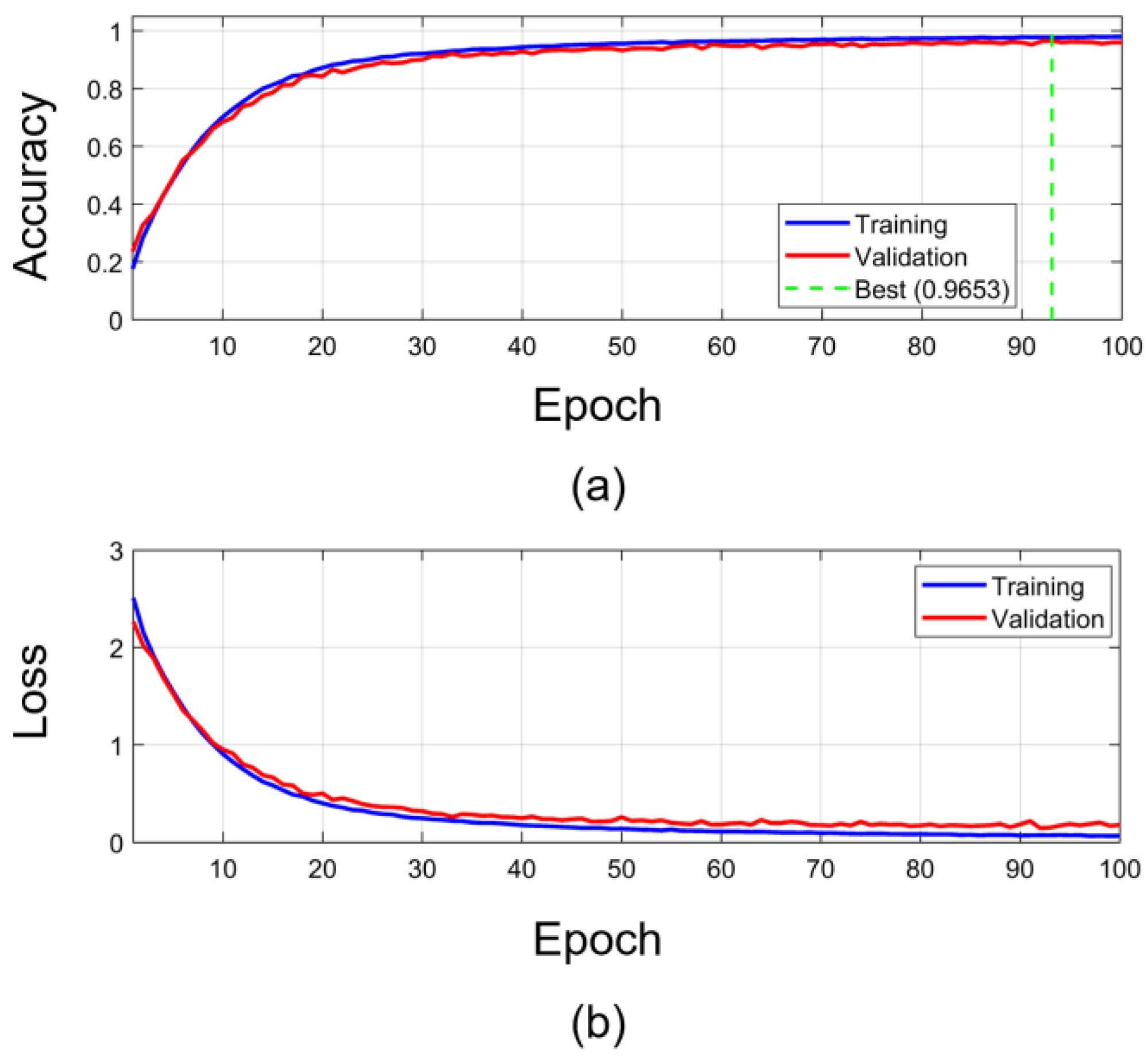

4. Results

4.1. Accuracy and Loss Curves

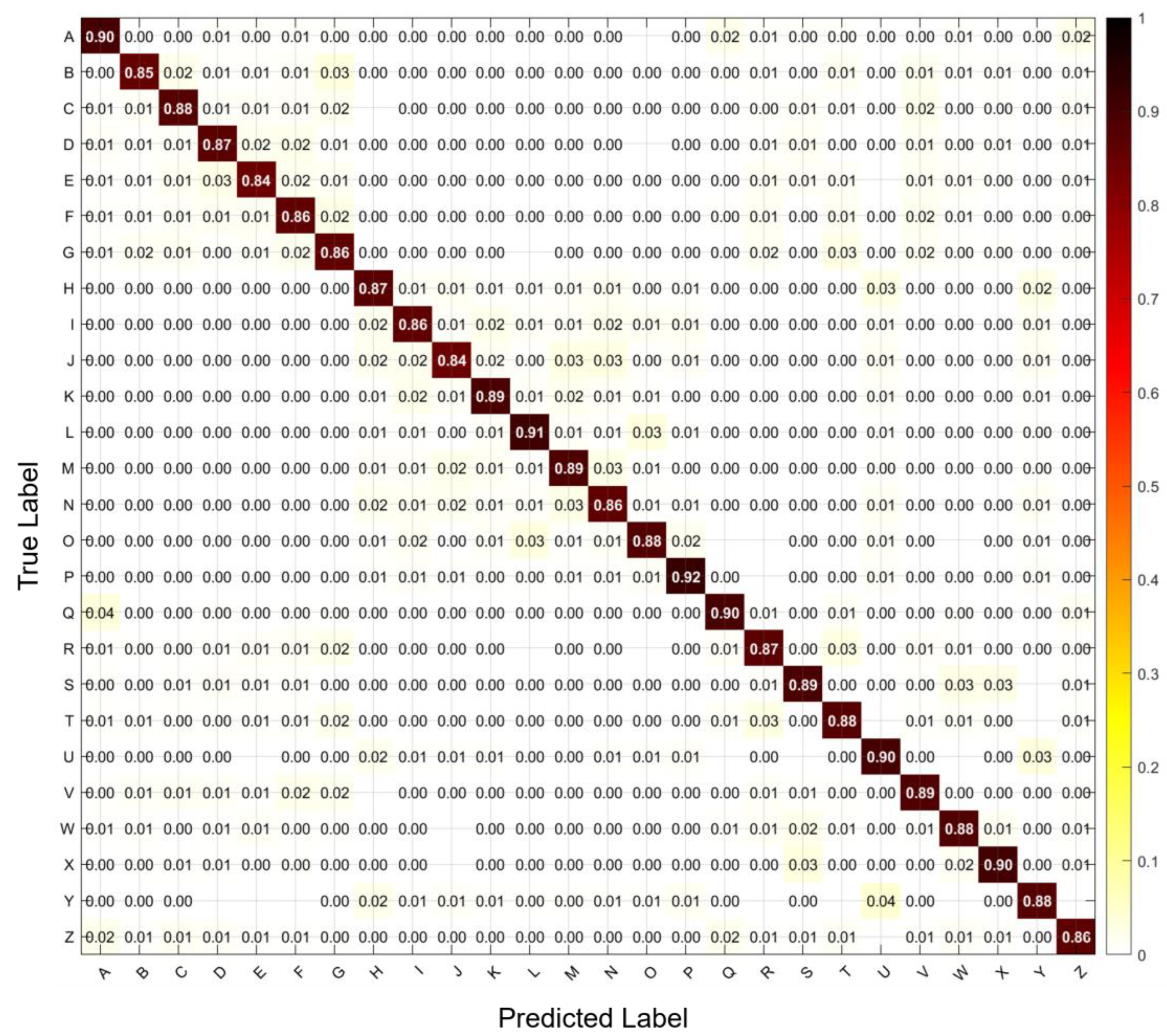

4.2. Confusion Matrix Analysis

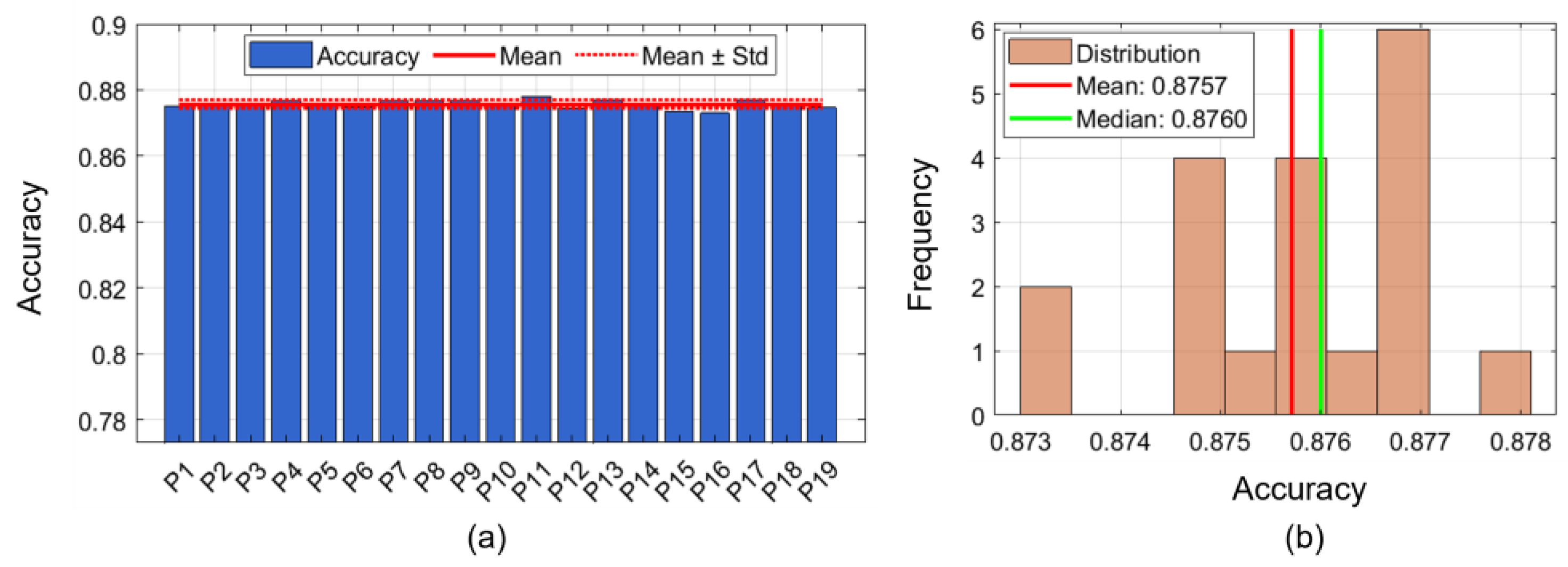

4.3. Participant-Wise Performance Analysis

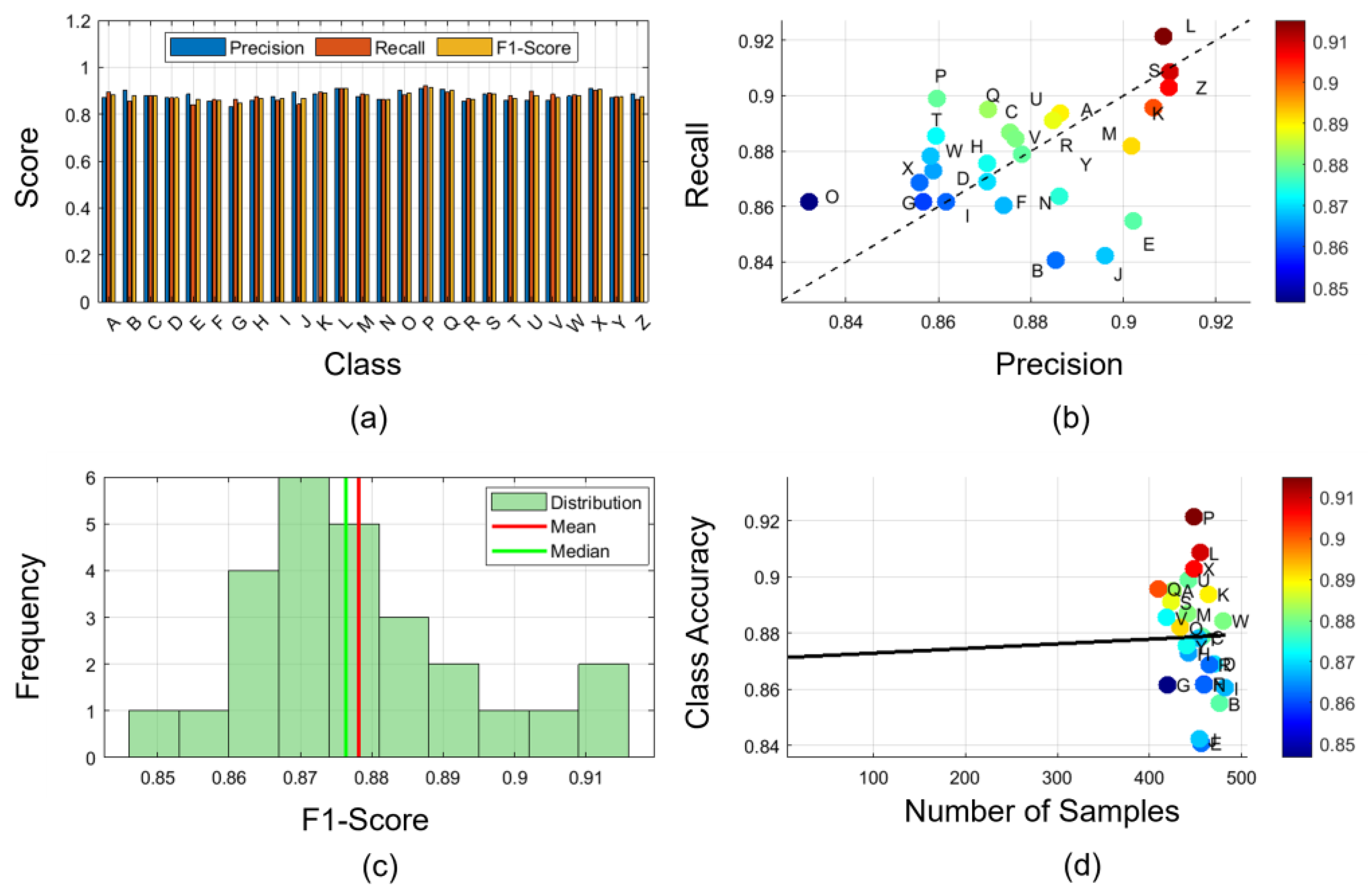

4.4. Class Level Performance

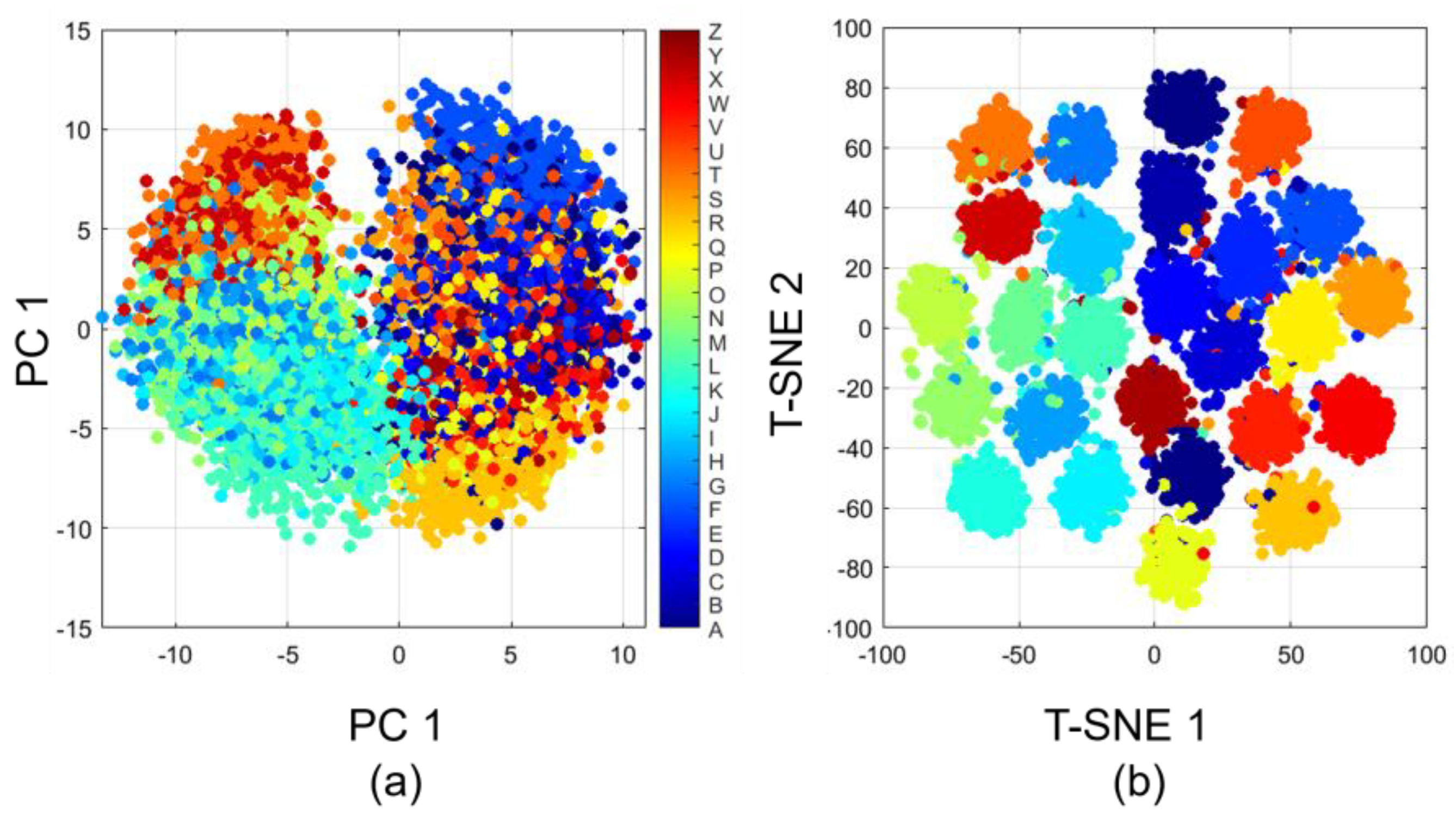

4.5. Feature Space Visualization

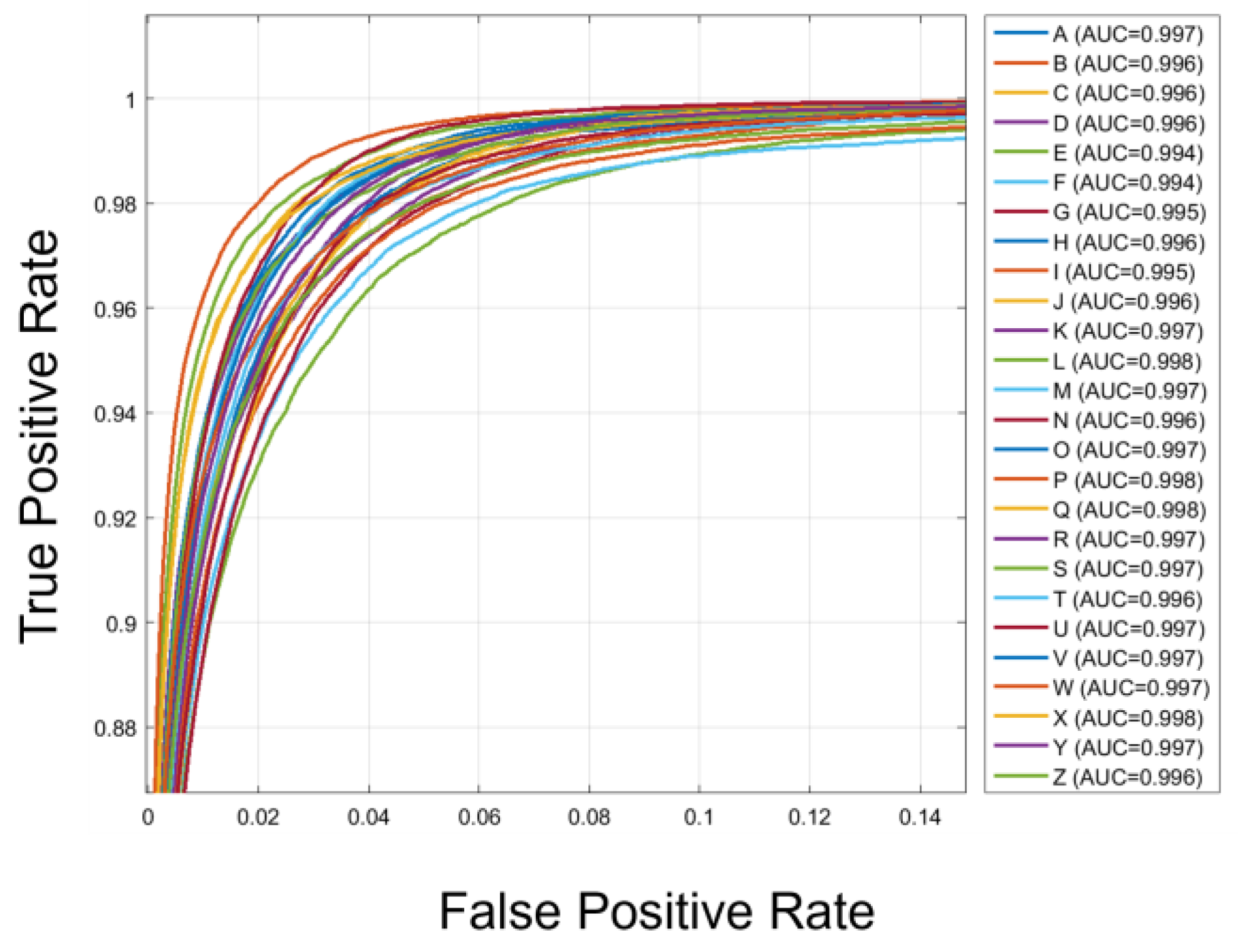

4.6. ROC Curve Analysis

4.7. Prediction Confidence and Calibration Analysis

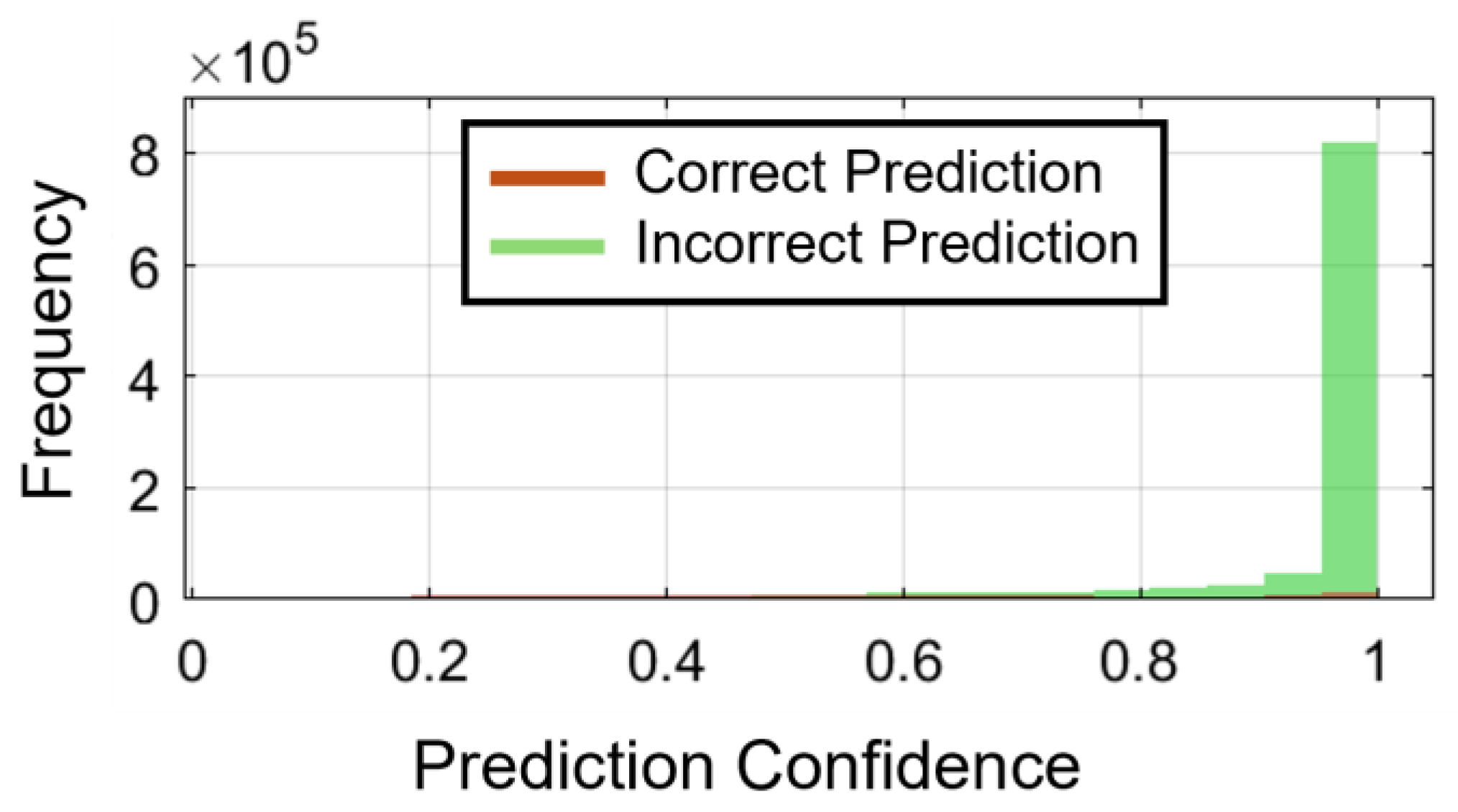

4.7.1. Confidence Distribution Analysis

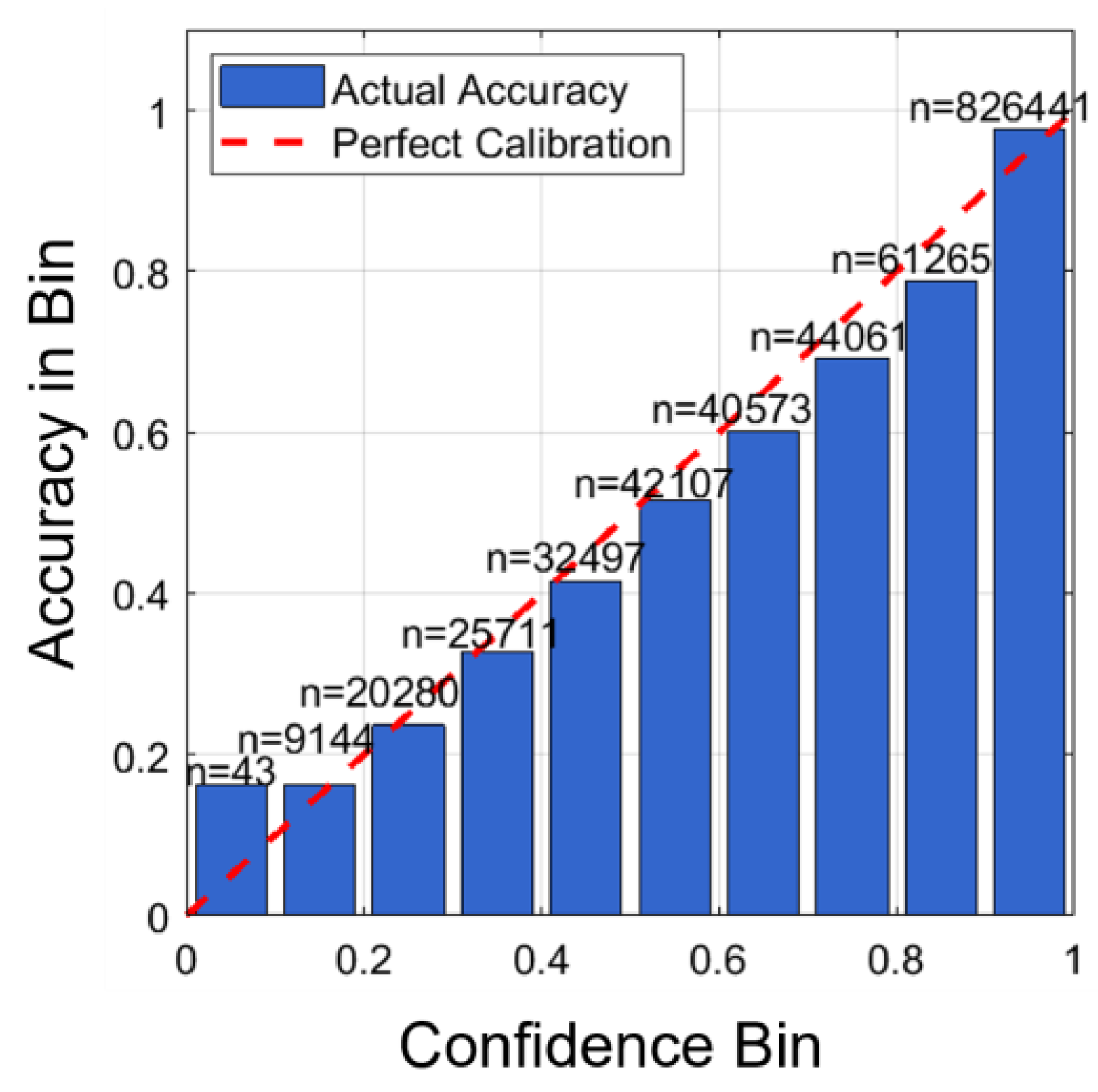

4.7.2. Model Calibration Assessment

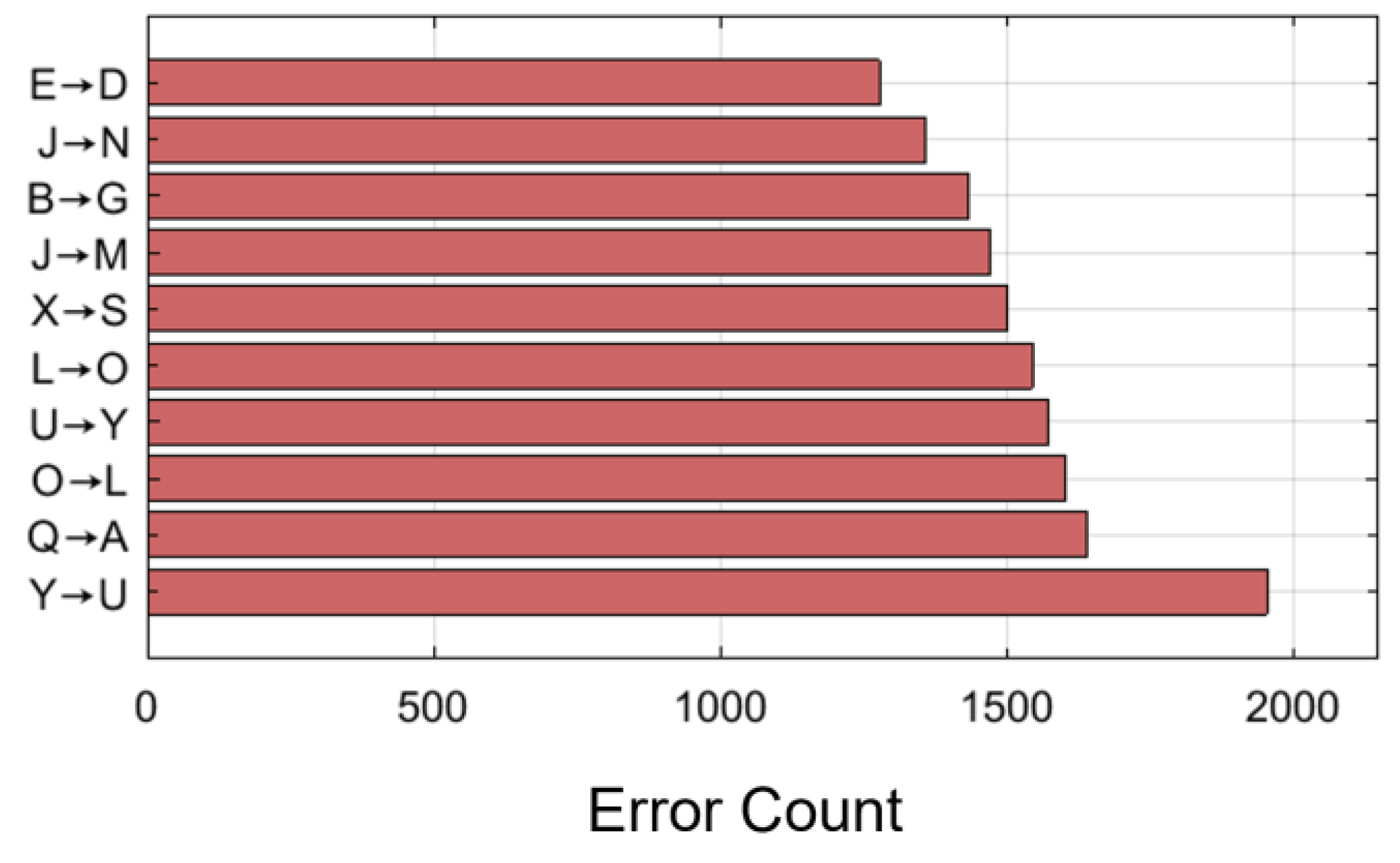

4.8. Error Pattern Analysis

4.9. Ablation Study

4.9.1. Baseline: 1D-CNN

4.9.2. + Temporal Module (Dilated Causal Convolutions)

4.9.3. + Global Context (Transformer Encoder)

4.9.4. TransTCNet: Full Architecture

4.9.5. Architectural Justification for Fine-Grained Discrimination

4.10. Comparison Models

4.11. Statistical Analysis

5. Limitations and Future Work

6. Conclusion

Author Contributions

Funding

Declaration of conflict of interest

| Hyperparameter | Value |
| --- | --- |
| Batch Size | 32 |
| Learning Rate | 1×10⁻⁴ |
| Optimizer | Adam (β₁=0.9, β₂=0.999, ε=10⁻⁸) |
| Epochs | 100 |
| Augmentation Factor | 3 |
| Train/Validation Split | 80:20 |
| Model Architecture | Transformer Encoder |
| Input Channels | 16 |
| Embedding Dimension (d_model) | 128 |
| Attention Heads | 8 |
| Transformer Layers | 4 |
| Dropout Rate | 0.1 |
| Positional Encoding | Learned |
| Feedforward Dimension | 512 |
| Loss Function | Cross-Entropy |
| Gradient Clipping | 1.0 |
| Weight Initialization | Xavier Uniform |
| Model Parameters | 849,818 |
| Training Time | 1.51 hours |
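The hyperparameters above can be collected in a small configuration object, which also makes the derived per-head dimension and a single Adam update easy to check by hand. The sketch below uses only the Python standard library. The class and function names are hypothetical, and treating the "Gradient Clipping | 1.0" entry as a clip on the global gradient norm is an assumption, since the table does not say whether the clip applies to the norm or to individual values.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class TrainConfig:
    # Values copied from the hyperparameter table.
    batch_size: int = 32
    learning_rate: float = 1e-4
    beta1: float = 0.9
    beta2: float = 0.999
    eps: float = 1e-8
    epochs: int = 100
    d_model: int = 128
    n_heads: int = 8
    n_layers: int = 4
    ff_dim: int = 512
    dropout: float = 0.1
    grad_clip: float = 1.0

    @property
    def head_dim(self) -> int:
        # Multi-head attention splits d_model evenly across the heads.
        if self.d_model % self.n_heads != 0:
            raise ValueError("d_model must be divisible by n_heads")
        return self.d_model // self.n_heads

def clip_by_norm(grad, max_norm):
    """Rescale the gradient so its L2 norm is at most max_norm (assumed mode)."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]

def adam_step(theta, grad, m, v, t, cfg):
    """One Adam update with bias correction; t is the 1-based step count."""
    grad = clip_by_norm(grad, cfg.grad_clip)
    m = [cfg.beta1 * mi + (1 - cfg.beta1) * g for mi, g in zip(m, grad)]
    v = [cfg.beta2 * vi + (1 - cfg.beta2) * g * g for vi, g in zip(v, grad)]
    m_hat = [mi / (1 - cfg.beta1 ** t) for mi in m]
    v_hat = [vi / (1 - cfg.beta2 ** t) for vi in v]
    theta = [
        p - cfg.learning_rate * mh / (math.sqrt(vh) + cfg.eps)
        for p, mh, vh in zip(theta, m_hat, v_hat)
    ]
    return theta, m, v

cfg = TrainConfig()
theta, m, v = adam_step([0.0, 0.0], [3.0, -4.0], [0.0, 0.0], [0.0, 0.0], 1, cfg)
print(cfg.head_dim, theta)
```

With 128 embedding dimensions and 8 heads, each head works on 16 dimensions. On the first step the bias-corrected moments reduce to the clipped gradient and its square, so each parameter moves by roughly the learning rate in the direction opposite to its gradient sign.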

| Model Variant | Architectural Components | Validation Accuracy (%) |
| --- | --- | --- |
| Baseline | Standard 1D convolutional layers | 48.66 |
| + Temporal Module | Dilated causal convolutions (dilation=2,4) | 72.67 |
| + Global Context | Multi-head self-attention encoder | 88.39 |
| TransTCNet (Full) | Both temporal + global components integrated | 96.53 |
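The ablation figures are easier to compare as gains over the 1D-CNN baseline. The short sketch below computes those differences from the table values. Each variant is measured against the baseline rather than against the previous row, because the table does not state whether the "+ Global Context" variant also includes the temporal module.

```python
# Validation accuracies (%) copied from the ablation table.
ablation = {
    "Baseline": 48.66,
    "+ Temporal Module": 72.67,
    "+ Global Context": 88.39,
    "TransTCNet (Full)": 96.53,
}

baseline = ablation["Baseline"]

# Gain of each variant over the baseline, in percentage points.
gains = {
    name: round(acc - baseline, 2)
    for name, acc in ablation.items()
    if name != "Baseline"
}

for name, gain in gains.items():
    print(f"{name}: +{gain:.2f} points over baseline")
```

The gains come to 24.01, 39.73, and 47.87 percentage points respectively.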

| Model | Accuracy (%) | Comments |
| --- | --- | --- |
| SVM + Handcrafted Features | 87.4 ± 2.5 | Baseline model using RMS, LOGVAR, WL, WAMP, ZC, AR1, AR2 |
| SVM (Excl. spacebar class) | 90.2 ± 2.1 | 26-class classification (A–Z only) |
| MLP (FedAvg) | 53.3 ± 0.92 | Shared model, no personalization |
| MLP (FedPer) | 66.58 ± 1.01 | Personalized classifier layers |
| MLP (pFedGP) | 74.49 ± 0.72 | Gaussian process with personalized heads |
| TransTCNet (Current model) | 94.72 ± 0.31 | Short-range temporal dependencies and long-range contextual patterns |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).