Submitted: 20 April 2026

Posted: 21 April 2026

Abstract

Keywords:

1. Introduction

1. The design of a Deep Q-Network (DQN) agent capable of modeling the cluster state and dynamically selecting the optimal lightweight cryptographic algorithm from a pool of candidates (ChaCha20, Rabbit, NOEKEON, AES-CTR).

2. The seamless integration of this AI engine into the existing MR-LWT architecture, with a reinforcement learning feedback loop.

3. The experimental validation of the approach on files ranging from 1 MB to 1 GB, demonstrating significant performance gains compared to static approaches.

2. State of the Art (2023–2026)

2.1. Lightweight Cryptography and Big Data Environment Security

2.2. Dynamic Cryptographic Algorithm Selection via Artificial Intelligence

2.3. Evolution towards Post-Quantum Cryptography and New Standards

3. Adaptive-Crypto-RL System Architecture

3.1. Overview

3.2. Description of Architecture Layers

- System State Monitor: Collects cluster metrics in real time (CPU, RAM, network).

- State Vector: Aggregates system metrics and data metadata.

- Deep Q-Network (DQN): Neural network that evaluates Q-values for each algorithm based on the state vector.

- Action Selection: Selects the algorithm with the maximum Q-value.

- Algorithm Pool: The pool of candidate algorithms (ChaCha20, Rabbit, NOEKEON, or AES-CTR).
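The flow through these layers can be illustrated with a minimal sketch. It is not the authors' implementation: the state-vector layout, the normalisation by 1024 MB, the hidden-layer size, and the names `build_state_vector`, `TinyQNetwork`, and `select_algorithm` are all assumptions made for illustration, and the network uses untrained random weights, so the chosen algorithm is arbitrary. Only the pool of candidates and the "pick the maximum Q-value" rule come from the description above.

```python
import random

# Candidate pool named in the architecture description.
ALGORITHM_POOL = ["ChaCha20", "Rabbit", "NOEKEON", "AES-CTR"]


def build_state_vector(cpu, ram, network, file_size_mb):
    """Aggregate system metrics and data metadata into one vector.

    Assumption: utilisation values lie in [0, 1] and the file size is
    scaled by 1024 MB; the paper's actual feature layout is not shown here.
    """
    return [cpu, ram, network, file_size_mb / 1024.0]


class TinyQNetwork:
    """Hypothetical stand-in for the DQN: one hidden ReLU layer, random weights."""

    def __init__(self, n_inputs, n_hidden, n_actions, seed=0):
        rng = random.Random(seed)
        self.w1 = [[rng.uniform(-1, 1) for _ in range(n_inputs)]
                   for _ in range(n_hidden)]
        self.w2 = [[rng.uniform(-1, 1) for _ in range(n_hidden)]
                   for _ in range(n_actions)]

    def q_values(self, state):
        # Hidden layer with ReLU activation, then a linear output per action.
        hidden = [max(0.0, sum(w * x for w, x in zip(row, state)))
                  for row in self.w1]
        return [sum(w * h for w, h in zip(row, hidden)) for row in self.w2]


def select_algorithm(q_values):
    """Action selection: return the pool entry with the maximum Q-value."""
    best = max(range(len(q_values)), key=lambda i: q_values[i])
    return ALGORITHM_POOL[best]


state = build_state_vector(cpu=0.35, ram=0.60, network=0.20, file_size_mb=64)
net = TinyQNetwork(n_inputs=len(state), n_hidden=8, n_actions=len(ALGORITHM_POOL))
q = net.q_values(state)
chosen = select_algorithm(q)
print(chosen, [round(v, 3) for v in q])
```

In a trained agent the weights would come from the reinforcement learning feedback loop rather than from a random seed; the sketch only shows how a state vector is mapped to one Q-value per candidate and how the greedy choice is made.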

3.3. Justification for Decryption at the Reducer Level

3.4. System User Interface

3.5. Compatibility and Backward Compatibility

3.6. Algorithmic Formalization

Algorithm 1. Original MR-LWT Process (Static Selection)

Algorithm 2. Adaptive-Crypto-RL Process (Dynamic Selection)

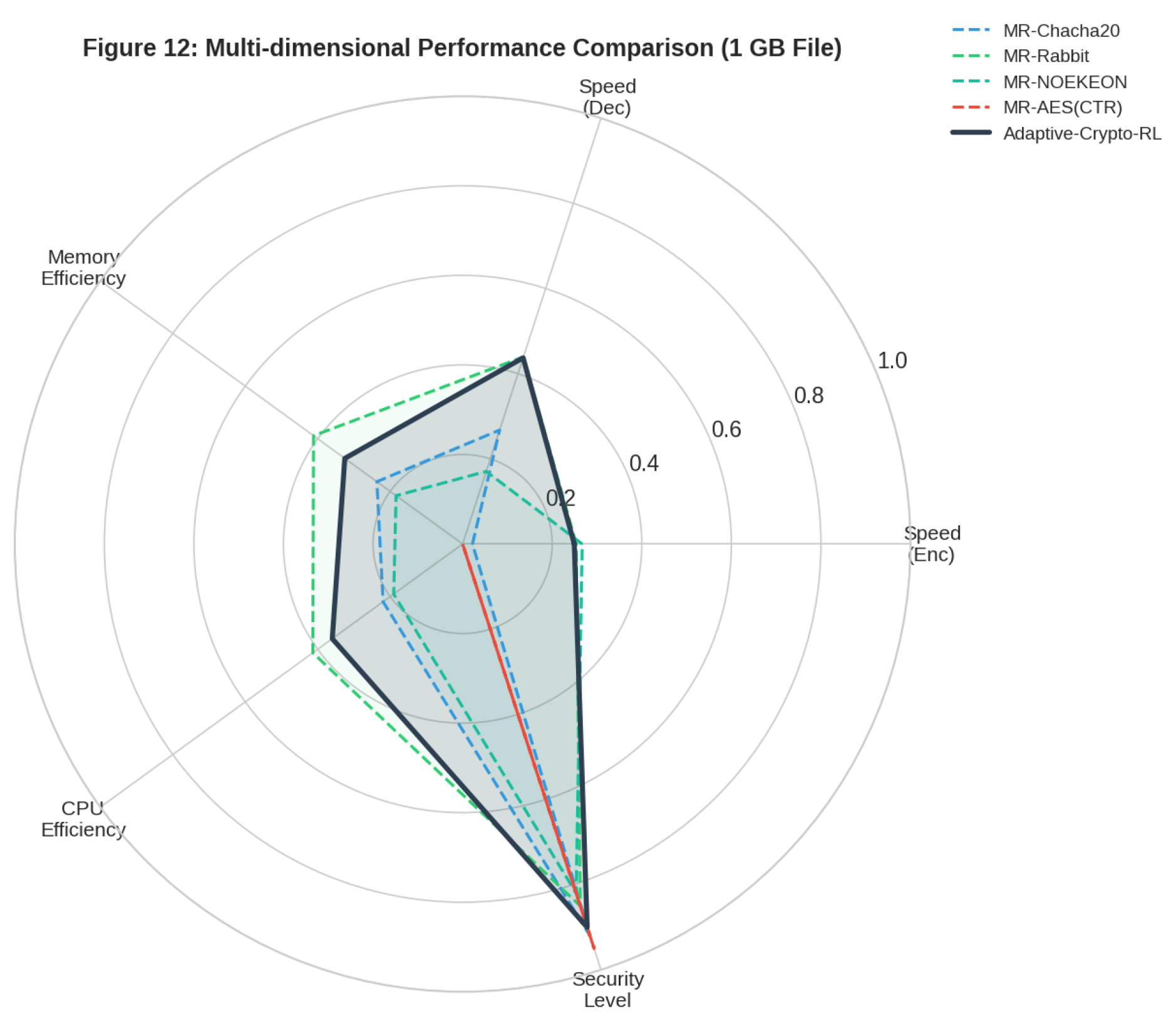

4. Experimental Evaluation and Results

4.1. Test Environment

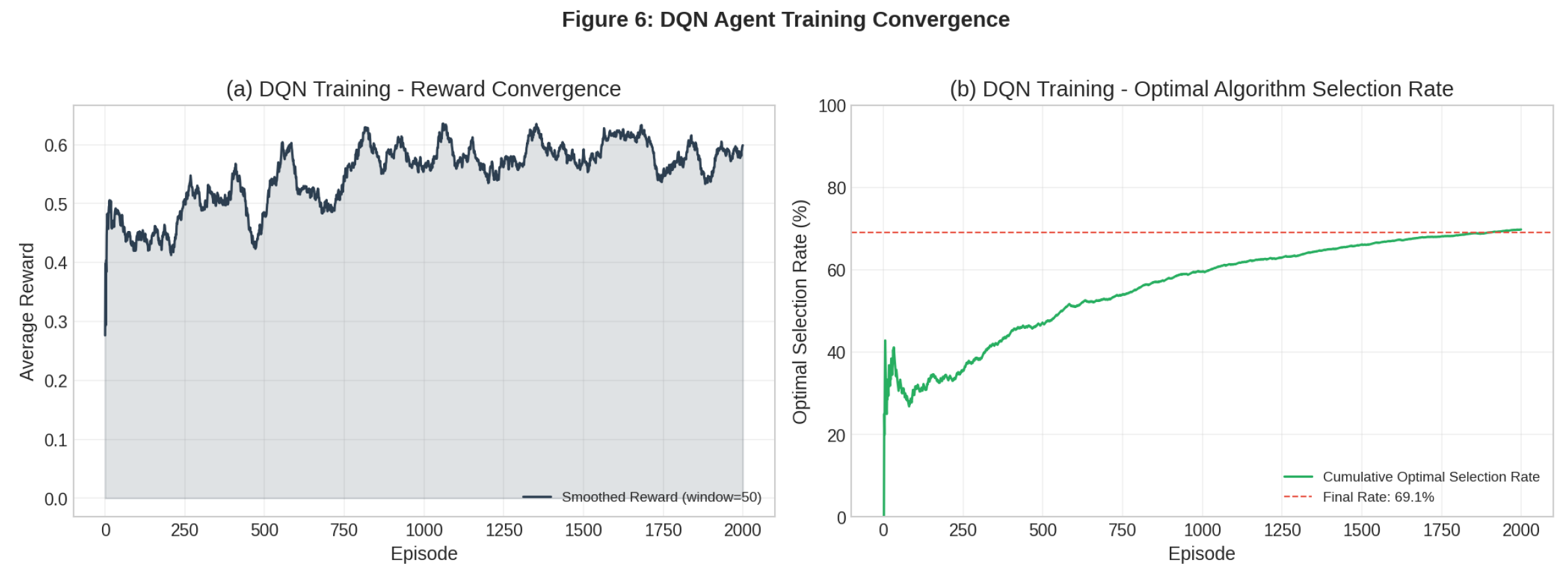

4.2. AI Training Convergence

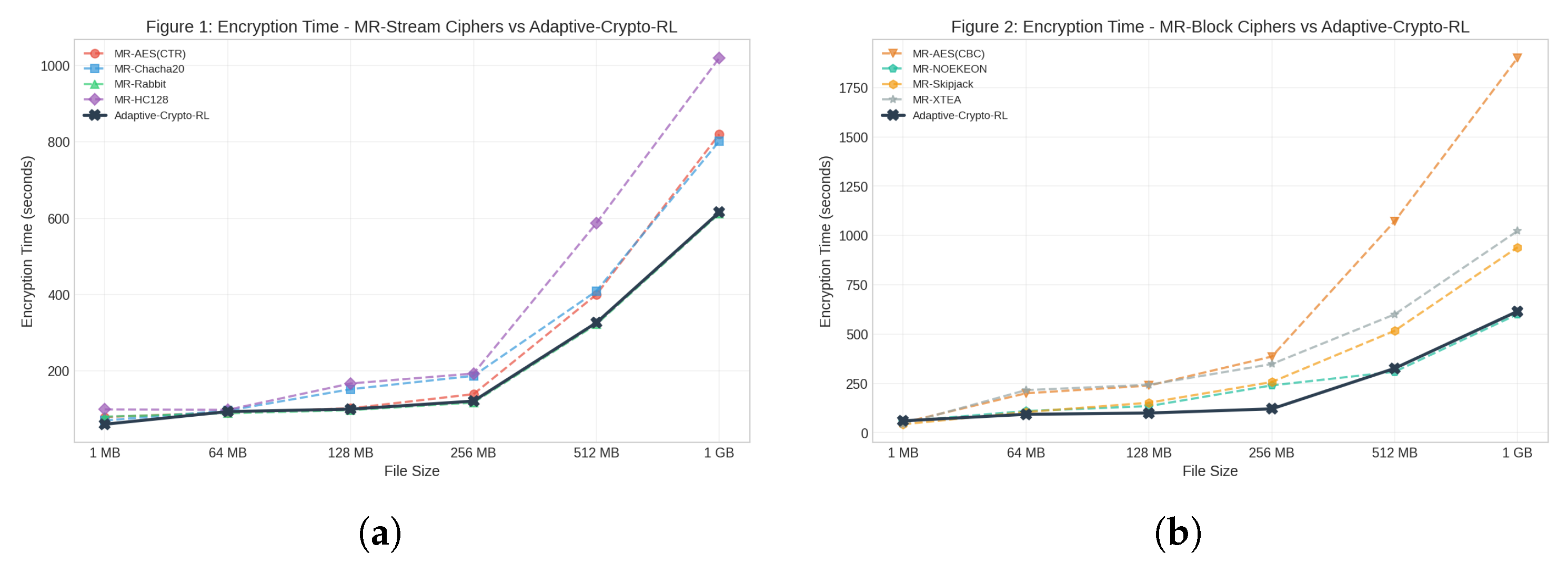

4.3. Encryption and Decryption Results

| File Size | Selected Algorithm | Encryption Time (s) | Decryption Time (s) | Total Time (s) |
|---|---|---|---|---|
| 1 MB | MR-NOEKEON | 60.2 | 55.8 | 116.1 |
| 64 MB | MR-Rabbit | 93.3 | 88.4 | 181.7 |
| 128 MB | MR-Rabbit | 99.8 | 100.0 | 199.9 |
| 256 MB | MR-Rabbit | 120.8 | 124.8 | 245.7 |
| 512 MB | MR-Rabbit | 326.2 | 202.7 | 528.9 |
| 1 GB | MR-Rabbit | 615.6 | 399.6 | 1015.2 |
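To make the scaling in the table easier to read, the timings can be converted into an effective throughput (file size divided by elapsed time). The short script below does only that arithmetic on the reported values. The `throughput` helper and the choice of 1 GB = 1024 MB are assumptions, and the derived figures are not reported by the authors; they include whatever fixed job overhead is contained in the measured times.

```python
# Rows copied from the results table:
# (file size in MB, selected algorithm, encryption time in s, decryption time in s).
RESULTS = [
    (1, "MR-NOEKEON", 60.2, 55.8),
    (64, "MR-Rabbit", 93.3, 88.4),
    (128, "MR-Rabbit", 99.8, 100.0),
    (256, "MR-Rabbit", 120.8, 124.8),
    (512, "MR-Rabbit", 326.2, 202.7),
    (1024, "MR-Rabbit", 615.6, 399.6),  # assumption: 1 GB = 1024 MB
]


def throughput(size_mb, seconds):
    """Effective throughput in MB/s for one phase (hypothetical helper)."""
    return size_mb / seconds


for size_mb, algo, enc_s, dec_s in RESULTS:
    print(
        f"{size_mb:>5} MB  {algo:<11}"
        f" enc {throughput(size_mb, enc_s):6.3f} MB/s"
        f" dec {throughput(size_mb, dec_s):6.3f} MB/s"
        f" enc+dec {enc_s + dec_s:7.1f} s"
    )
```

Summing the rounded encryption and decryption columns can differ from the Total Time column by 0.1 s (for example 60.2 + 55.8 = 116.0 against a reported 116.1), presumably because the totals were computed before rounding.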

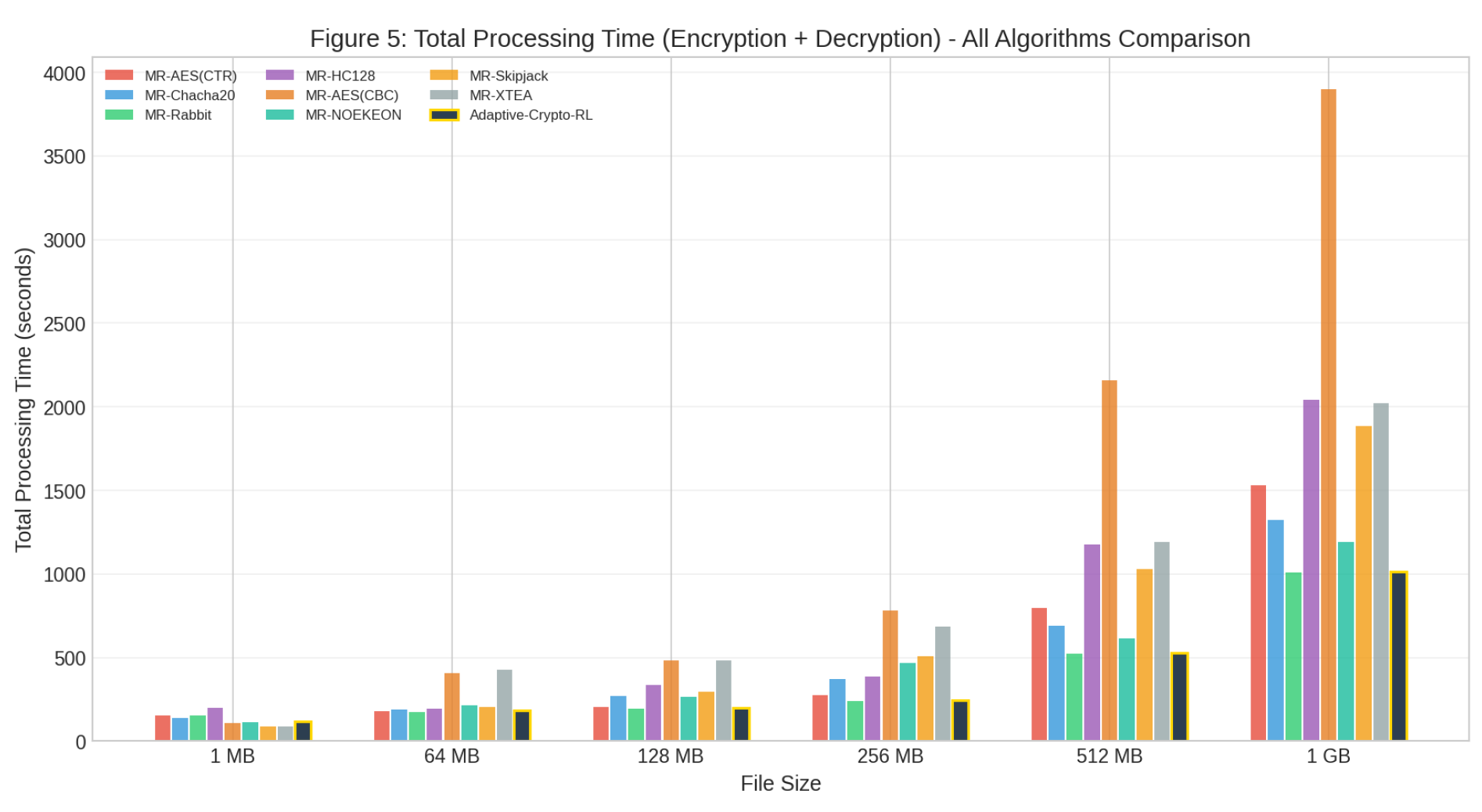

4.4. Total Processing Time

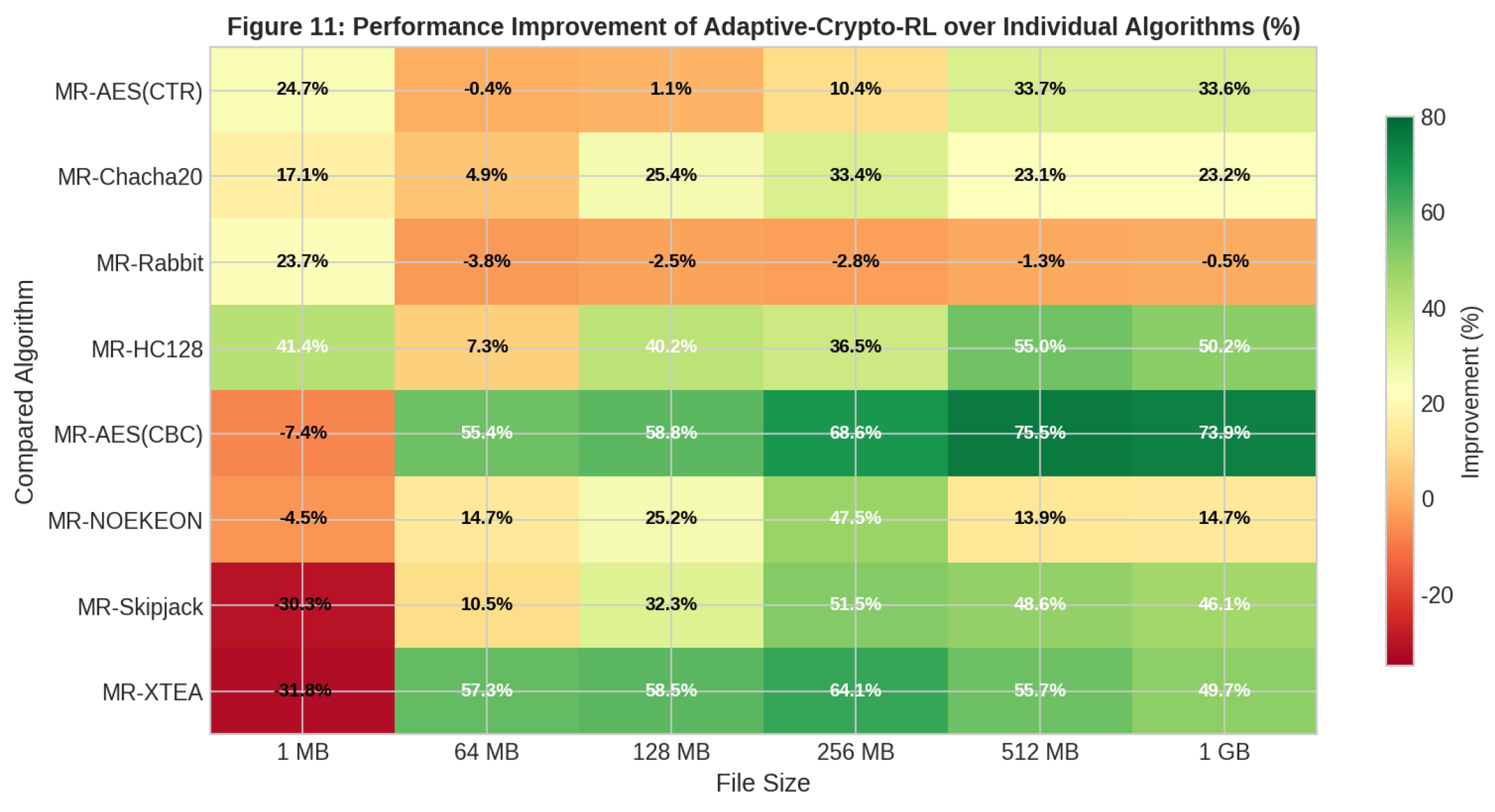

4.5. Performance Improvement Analysis

4.6. Resource Efficiency (Resource-Aware)

5. Conclusions and Perspectives

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Filaly, Y.; Berros, N.; El Mendili, F.; El Bouzekri El Idrissi, Y. A comprehensive survey on big data privacy and Hadoop security: Insights into encryption mechanisms and emerging trends. Results in Engineering 2025, 27, 106203.

- Kamoun-Abid, F.; et al. Enhanced MQTT Protocol for Securing Big Data/Hadoop Data Management. MDPI Journal of Sensor and Actuator Networks 2026, 15, 22.

- Original Author.; et al. Securing large-scale data processing: Integrating lightweight cryptography in MapReduce.

- Qasem, M. A.; Motiram, B. M.; Thorat, S.; Al-Hejri, A. M.; Alshamrani, S. S.; Alshmrany, K. M. Enhancement of cryptography algorithms for security of cloud-based IoT with machine learning models. Scientific Reports 2026, 16, 10972.

- Kumar, P. R.; Goel, S. A secure and efficient encryption system based on adaptive and machine learning for securing data in fog computing. Scientific Reports 2025, 15, 11654.

- Ng, Q. M.; Juremi, J.; Abd Rahman, N. A.; Thiruchelvam, V. Machine Learning–Based Cryptographic Selection System for IoT Devices Based on Computational Resources. Preprint 2025.

- Premakumari, S. B. N.; et al. Reinforcement Q-Learning-Based Adaptive Encryption Model for Cyberthreat Mitigation in Wireless Sensor Networks. Sensors 2025, 25, 2056.

- Guo, H.; et al. Deep Reinforcement Learning for Dynamic Algorithm Selection: A Proof-of-Principle Study on Differential Evolution. IEEE Transactions on Evolutionary Computation 2024.

- NIST. NIST Releases First 3 Finalized Post-Quantum Encryption Standards. 2024. Available online: https://www.nist.gov/news-events/news/2024/08/nist-releases-first-3-finalized-post-quantum-encryption-standards (accessed on 5 April 2026).

- Turan, M. S.; McKay, K.; Chang, D.; Kang, J. SP 800-232, Ascon-Based Lightweight Cryptography Standards for Constrained Devices. NIST Special Publication 800-232 2024/2025.

- Bajwa, M. T. T.; Afzal, M. N.; Afzal, M. H.; Ullah, M. S. Post-quantum cryptography for big data security. Bulletin of Big Data Management 2025.

- Khan, A. A.; Laghari, A. A.; Almansour, H.; Jamel, L. Quantum computing empowering blockchain technology with post quantum resistant cryptography for multimedia data privacy preservation. Journal of Cloud Computing 2025.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).