Submitted: 19 April 2026

Posted: 21 April 2026


Abstract

Keywords:

1. Introduction

2. Related Work

3. Materials and Methods

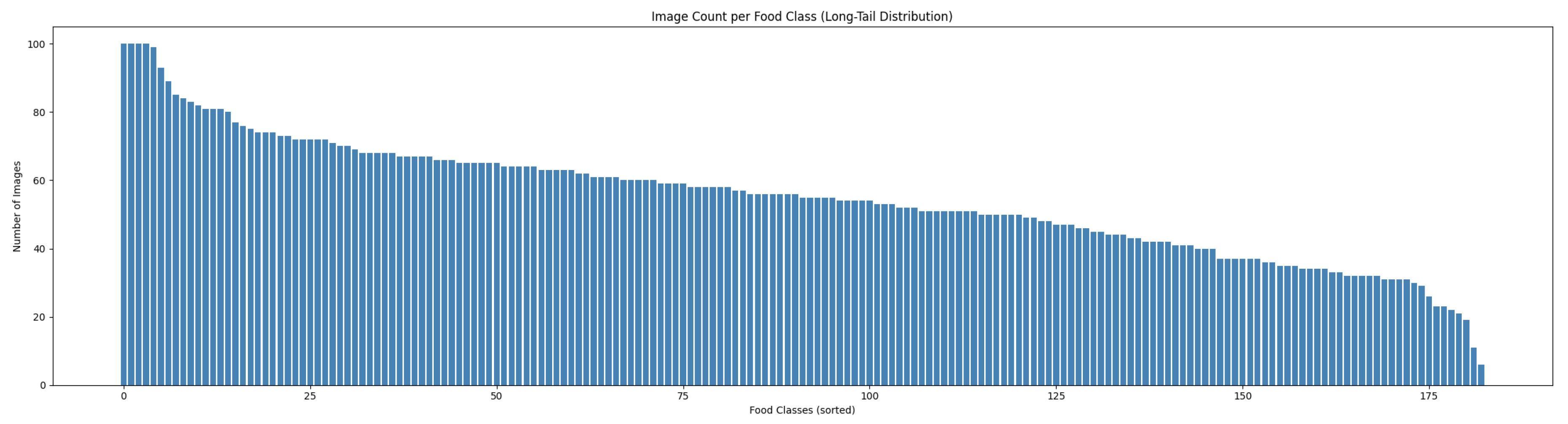

3.1. Dataset Collection

Algorithm 1. Image Scraper Pipeline

3.2. Pre-Processing of Image Data

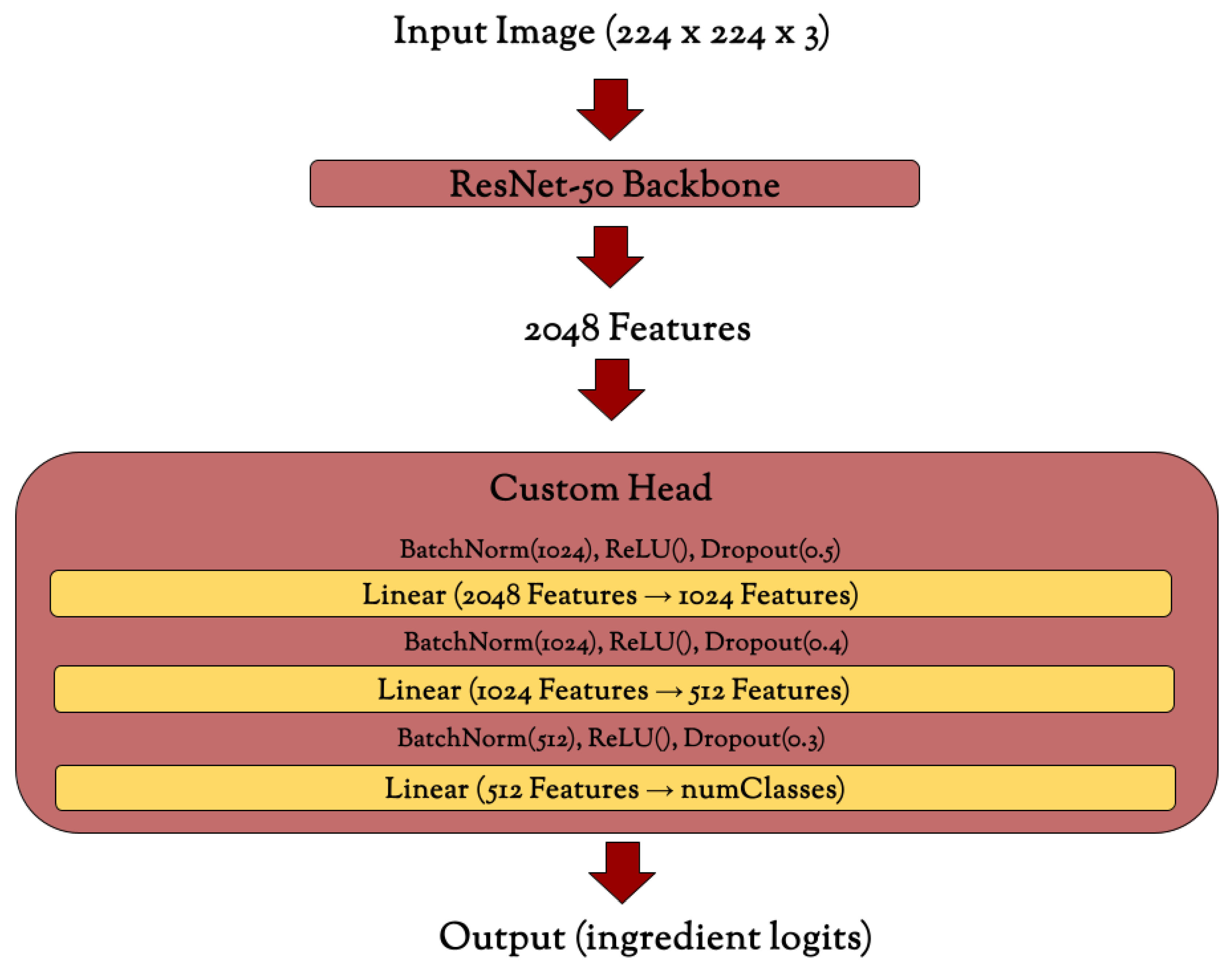

3.3. Model Architecture

3.4. Loss Function

3.5. Model Training

Algorithm 2. Training Loop

4. Results

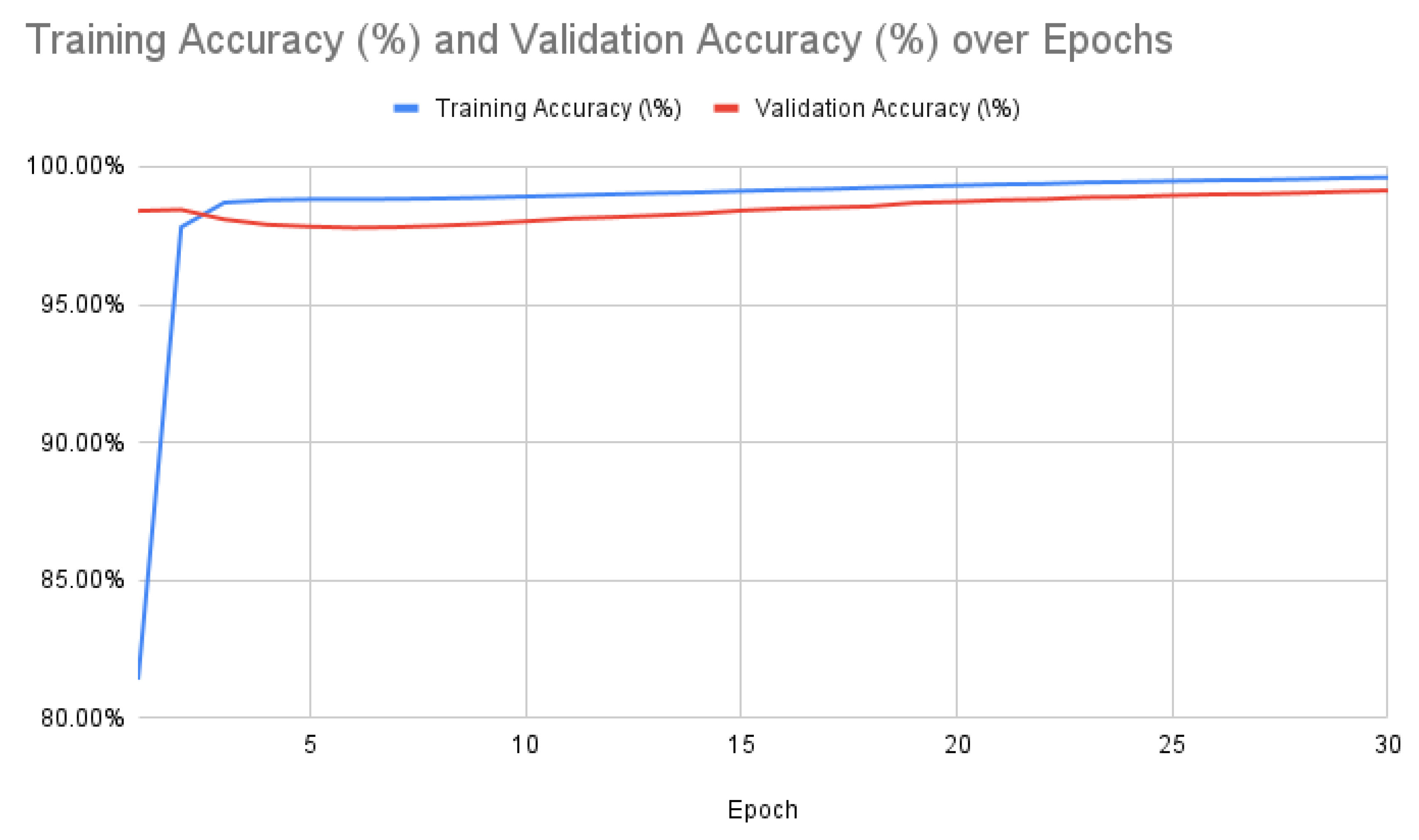

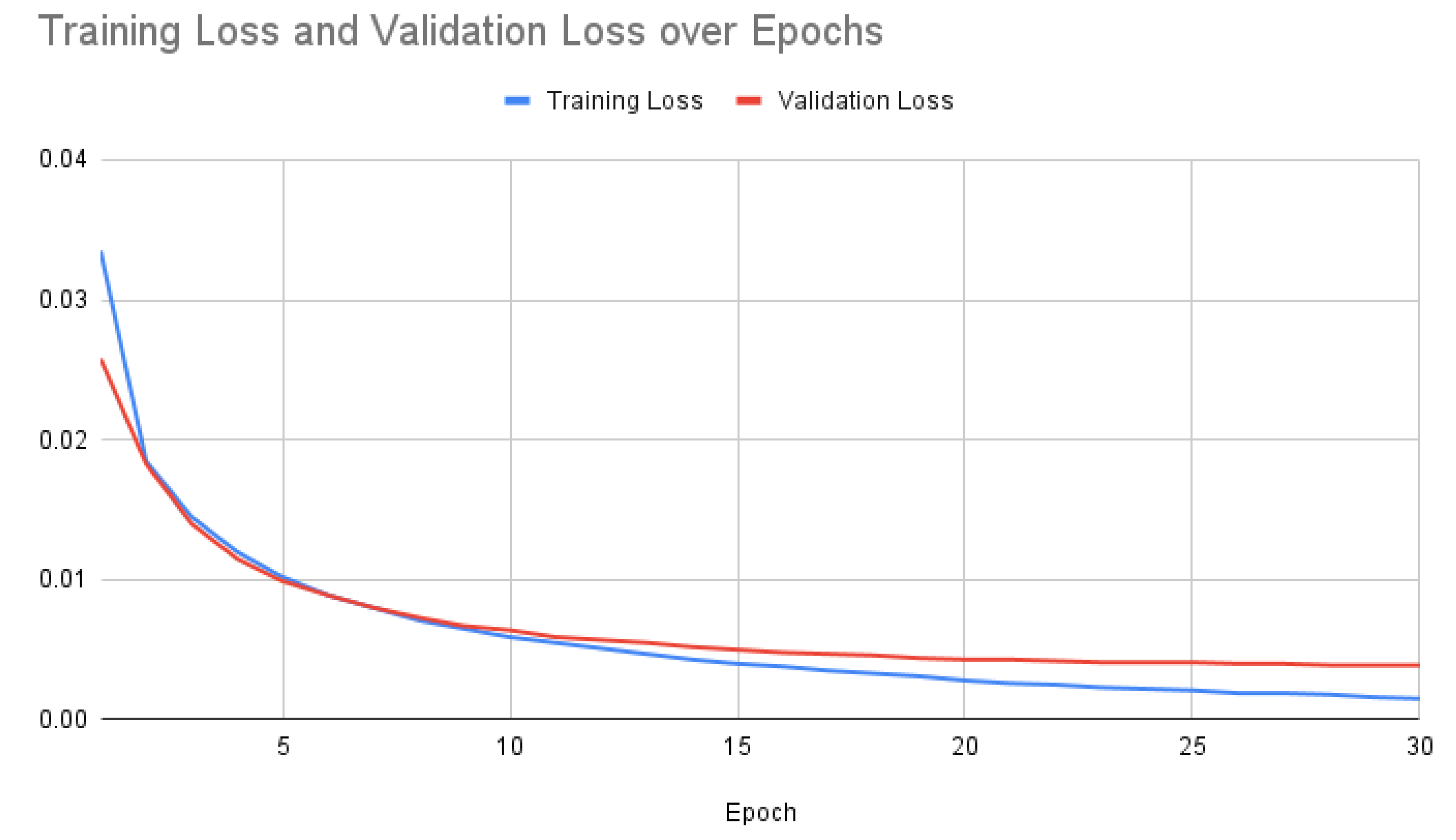

4.1. Training Results

| Epoch | Training Accuracy (%) | Validation Accuracy (%) | Training Loss | Validation Loss |
|---|---|---|---|---|
| 1 | 81.40 | 98.40 | 0.0335 | 0.0258 |
| 2 | 97.81 | 98.44 | 0.0185 | 0.0183 |
| 3 | 98.70 | 98.09 | 0.0145 | 0.0140 |
| 4 | 98.79 | 97.90 | 0.0120 | 0.0115 |
| 5 | 98.82 | 97.83 | 0.0102 | 0.0099 |
| 6 | 98.82 | 97.79 | 0.0089 | 0.0089 |
| 7 | 98.83 | 97.81 | 0.0080 | 0.0080 |
| 8 | 98.85 | 97.86 | 0.0071 | 0.0073 |
| 9 | 98.88 | 97.93 | 0.0065 | 0.0067 |
| 10 | 98.92 | 98.02 | 0.0059 | 0.0064 |
| 11 | 98.96 | 98.12 | 0.0055 | 0.0059 |
| 12 | 99.00 | 98.17 | 0.0051 | 0.0057 |
| 13 | 99.04 | 98.23 | 0.0047 | 0.0055 |
| 14 | 99.07 | 98.30 | 0.0043 | 0.0052 |
| 15 | 99.12 | 98.41 | 0.0040 | 0.0050 |
| 16 | 99.16 | 98.48 | 0.0038 | 0.0048 |
| 17 | 99.19 | 98.52 | 0.0035 | 0.0047 |
| 18 | 99.24 | 98.56 | 0.0033 | 0.0046 |
| 19 | 99.28 | 98.69 | 0.0031 | 0.0044 |
| 20 | 99.32 | 98.73 | 0.0028 | 0.0043 |
| 21 | 99.36 | 98.79 | 0.0026 | 0.0043 |
| 22 | 99.38 | 98.82 | 0.0025 | 0.0042 |
| 23 | 99.43 | 98.89 | 0.0023 | 0.0041 |
| 24 | 99.45 | 98.91 | 0.0022 | 0.0041 |
| 25 | 99.48 | 98.96 | 0.0021 | 0.0041 |
| 26 | 99.51 | 99.00 | 0.0019 | 0.0040 |
| 27 | 99.52 | 99.01 | 0.0019 | 0.0040 |
| 28 | 99.55 | 99.05 | 0.0018 | 0.0039 |
| 29 | 99.59 | 99.10 | 0.0016 | 0.0039 |
| 30 | 99.61 | 99.14 | 0.0015 | 0.0039 |
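The per-epoch trends in the training-results table can be checked with a few lines of code. The Python sketch below re-enters the 30 reported rows as printed and derives the epoch of best validation accuracy, the epoch where validation accuracy bottoms out, the epochs tied at the minimum validation loss, the epochs in which validation accuracy exceeds training accuracy, and the train/validation gaps at the final epoch. The names `EpochMetrics`, `HISTORY`, and `summarize` are hypothetical helpers introduced only for illustration; they are not taken from the authors' training code, and the sketch assumes the tabulated values are exact as reported.

```python
from typing import NamedTuple


class EpochMetrics(NamedTuple):
    """One row of the training-results table (hypothetical helper type)."""
    epoch: int
    train_acc: float   # training accuracy, %
    val_acc: float     # validation accuracy, %
    train_loss: float
    val_loss: float


# Values transcribed from the per-epoch training-results table.
HISTORY = [EpochMetrics(*row) for row in [
    (1, 81.40, 98.40, 0.0335, 0.0258),
    (2, 97.81, 98.44, 0.0185, 0.0183),
    (3, 98.70, 98.09, 0.0145, 0.0140),
    (4, 98.79, 97.90, 0.0120, 0.0115),
    (5, 98.82, 97.83, 0.0102, 0.0099),
    (6, 98.82, 97.79, 0.0089, 0.0089),
    (7, 98.83, 97.81, 0.0080, 0.0080),
    (8, 98.85, 97.86, 0.0071, 0.0073),
    (9, 98.88, 97.93, 0.0065, 0.0067),
    (10, 98.92, 98.02, 0.0059, 0.0064),
    (11, 98.96, 98.12, 0.0055, 0.0059),
    (12, 99.00, 98.17, 0.0051, 0.0057),
    (13, 99.04, 98.23, 0.0047, 0.0055),
    (14, 99.07, 98.30, 0.0043, 0.0052),
    (15, 99.12, 98.41, 0.0040, 0.0050),
    (16, 99.16, 98.48, 0.0038, 0.0048),
    (17, 99.19, 98.52, 0.0035, 0.0047),
    (18, 99.24, 98.56, 0.0033, 0.0046),
    (19, 99.28, 98.69, 0.0031, 0.0044),
    (20, 99.32, 98.73, 0.0028, 0.0043),
    (21, 99.36, 98.79, 0.0026, 0.0043),
    (22, 99.38, 98.82, 0.0025, 0.0042),
    (23, 99.43, 98.89, 0.0023, 0.0041),
    (24, 99.45, 98.91, 0.0022, 0.0041),
    (25, 99.48, 98.96, 0.0021, 0.0041),
    (26, 99.51, 99.00, 0.0019, 0.0040),
    (27, 99.52, 99.01, 0.0019, 0.0040),
    (28, 99.55, 99.05, 0.0018, 0.0039),
    (29, 99.59, 99.10, 0.0016, 0.0039),
    (30, 99.61, 99.14, 0.0015, 0.0039),
]]


def summarize(history: list[EpochMetrics]) -> dict:
    """Derive a few checkpoints from a per-epoch metric history."""
    best = max(history, key=lambda m: m.val_acc)
    lowest = min(history, key=lambda m: m.val_acc)
    min_val_loss = min(m.val_loss for m in history)
    final = history[-1]
    return {
        # Epoch with the highest validation accuracy.
        "best_val_acc_epoch": best.epoch,
        # Epoch at which validation accuracy reaches its lowest value.
        "lowest_val_acc_epoch": lowest.epoch,
        # Every epoch tied at the minimum validation loss.
        "min_val_loss_epochs": [m.epoch for m in history if m.val_loss == min_val_loss],
        # Epochs in which validation accuracy exceeds training accuracy.
        "val_above_train_epochs": [m.epoch for m in history if m.val_acc > m.train_acc],
        # Final-epoch gaps, rounded to suppress floating-point noise.
        "final_acc_gap": round(final.train_acc - final.val_acc, 2),
        "final_loss_gap": round(final.val_loss - final.train_loss, 4),
    }


if __name__ == "__main__":
    for key, value in summarize(HISTORY).items():
        print(f"{key}: {value}")
```

Applied to the reported values, this flags epochs 1 and 2 as the only ones where validation accuracy exceeds training accuracy, a dip in validation accuracy to its minimum at epoch 6, a peak at epoch 30, and a validation loss that stays at 0.0039 across epochs 28–30. One common, though here unverified, explanation for the early validation-above-training pattern is that training accuracy is averaged over an epoch while the weights are still changing, whereas validation accuracy is measured after the epoch ends.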

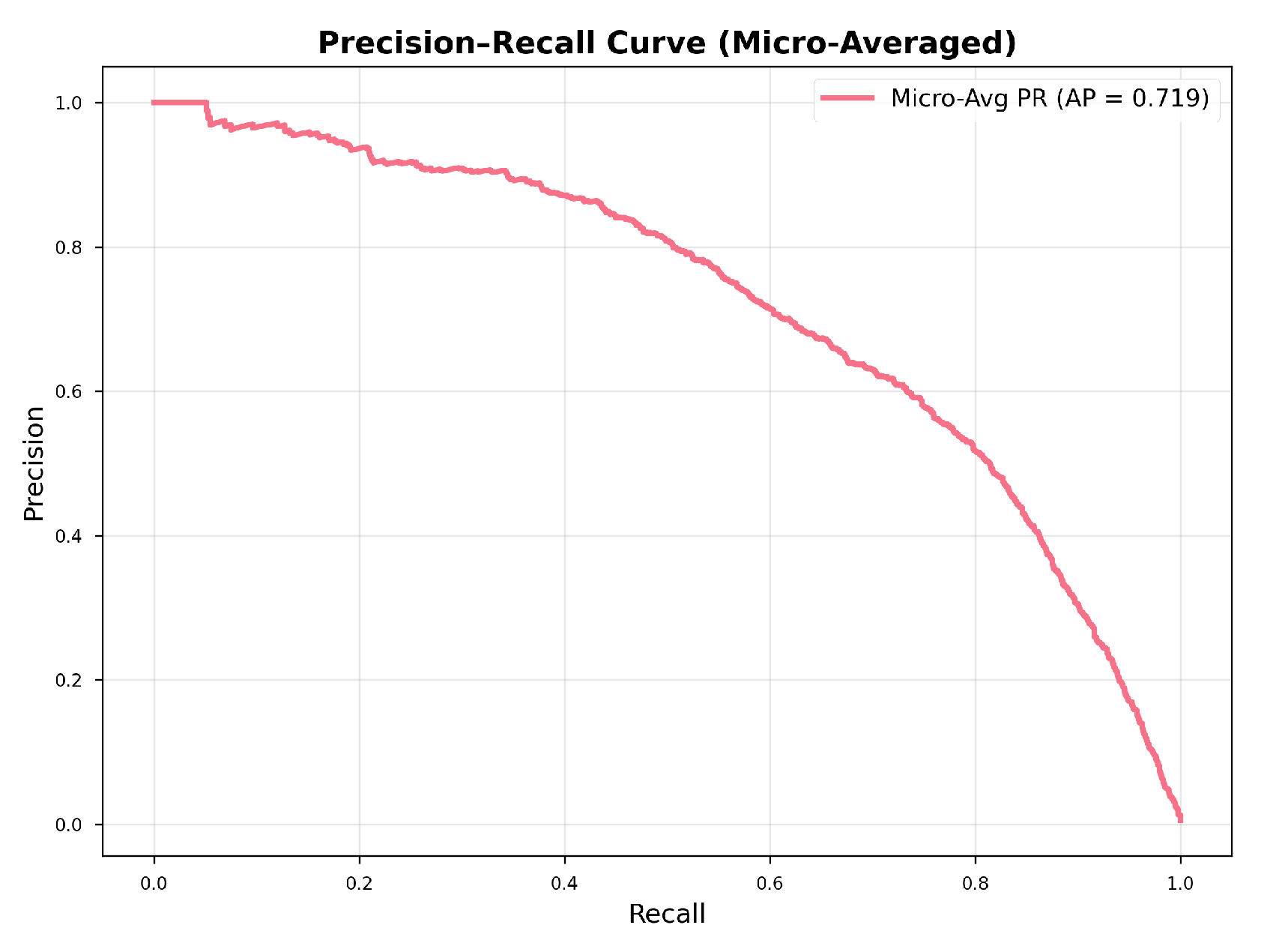

4.2. Error Analysis

4.3. F1 Score

4.4. Optimization

5. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Matthews, E.D.; Kurnat-Thoma, E.L. U.S. Food Policy to Address Diet-Related Chronic Disease. Front. Public Health 2024, 12.

- Afshin, A.; Sur, P.J.; Fay, K.A.; Cornaby, L.; Ferrara, G.; Salama, J.S.; Mullany, E.C.; Abate, K.H.; Abbafati, C.; Abebe, Z.; et al. Health Effects of Dietary Risks in 195 Countries, 1990–2017: A Systematic Analysis for the Global Burden of Disease Study 2017. Lancet 2019, 393, 1958–1972.

- Gropper, S.S. The Role of Nutrition in Chronic Disease. Nutrients 2023, 15, 664.

- Govender, I.; Rangiah, S.; Kaswa, R.; Nzaumvila, D. Malnutrition in Children Under the Age of 5 Years in a Primary Health Care Setting. South Afr. Fam. Pract. 2021, 63, e1–e6.

- Howes, E.; Boushey, C.; Kerr, D.; Tomayko, E.; Cluskey, M. Image-Based Dietary Assessment Ability of Dietetics Students and Interns. Nutrients 2017, 9, 114.

- Ahles, S.; DeWitt, C.A.M.; Hellberg, R.S. A Meta-Analysis of Seafood Species Mislabeling in the United States. Food Control 2025, 171, 111110.

- Brinkley, S.; Gallo-Franco, J.J.; Vázquez-Manjarrez, N.; Chaura, J.; Quartey, N.K.A.; Toulabi, S.B.; Odenkirk, M.T.; Jermendi, E.; Laporte, M.A.; Lutterodt, H.E.; et al. The State of Food Composition Databases: Data Attributes and FAIR Data Harmonization in the Era of Digital Innovation. Front. Nutr. 2025, 12, 1552367.

- Borugadda, P.; Kalluri, H.K. A Comprehensive Analysis of Artificial Intelligence, Machine Learning, Deep Learning and Computer Vision in Food Science. J. Future Foods 2025, 1.

- Zhao, Z.; Wang, R.; Liu, M.; Bai, L.; Sun, Y. Application of Machine Vision in Food Computing: A Review. Food Chem. 2025, 463, 141238.

- Ma, J.; Sun, D.W.; Qu, J.H.; Liu, D.; Pu, H.; Gao, W.H.; Zeng, X.A. Applications of Computer Vision for Assessing Quality of Agri-Food Products: A Review of Recent Research Advances. Crit. Rev. Food Sci. Nutr. 2016, 56, 113–127.

- Amugongo, L.M.; Kriebitz, A.; Boch, A.; Lütge, C. Mobile Computer Vision-Based Applications for Food Recognition and Volume and Calorific Estimation: A Systematic Review. Healthcare 2022, 11, 59.

- Kawano, Y.; Yanai, K. Food Image Recognition with Deep Convolutional Features. In Proceedings of the 2014 ACM International Joint Conference on Pervasive and Ubiquitous Computing: Adjunct Publication, New York, NY, USA, 2014.

- Lin, T.Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal Loss for Dense Object Detection. In Proceedings of the IEEE International Conference on Computer Vision (ICCV); IEEE, Oct 2017; pp. 2980–2988.

- Pouladzadeh, P.; Kuhad, P.; Peddi, S.V.B.; Yassine, A.; Shirmohammadi, S. Food Calorie Measurement Using Deep Learning Neural Network. In Proceedings of the 2016 IEEE International Instrumentation and Measurement Technology Conference (I2MTC), Taipei, Taiwan, May 2016.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR); IEEE, Jun 2016; pp. 770–778.

- Li, X.; Grandvalet, Y.; Davoine, F.; Cheng, J.; Cui, Y.; Zhang, H.; Belongie, S.; Tsai, Y.H.; Yang, M.H. Transfer Learning in Computer Vision Tasks: Remember Where You Come From. Image Vis. Comput. 2020, 93, 103853.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).