Submitted: 21 April 2026

Posted: 21 April 2026


Abstract

Keywords:

1. Introduction

2. Relevant Research Analysis

2.1. Main Limitations of Traditional Abnormal Behavior Detection Methods

2.2. Research Progress and Challenges of Unsupervised Deep Abnormality Detection Methods

2.3. Suitability of Contrastive Learning for the Abnormality Detection Task

3. Model Design

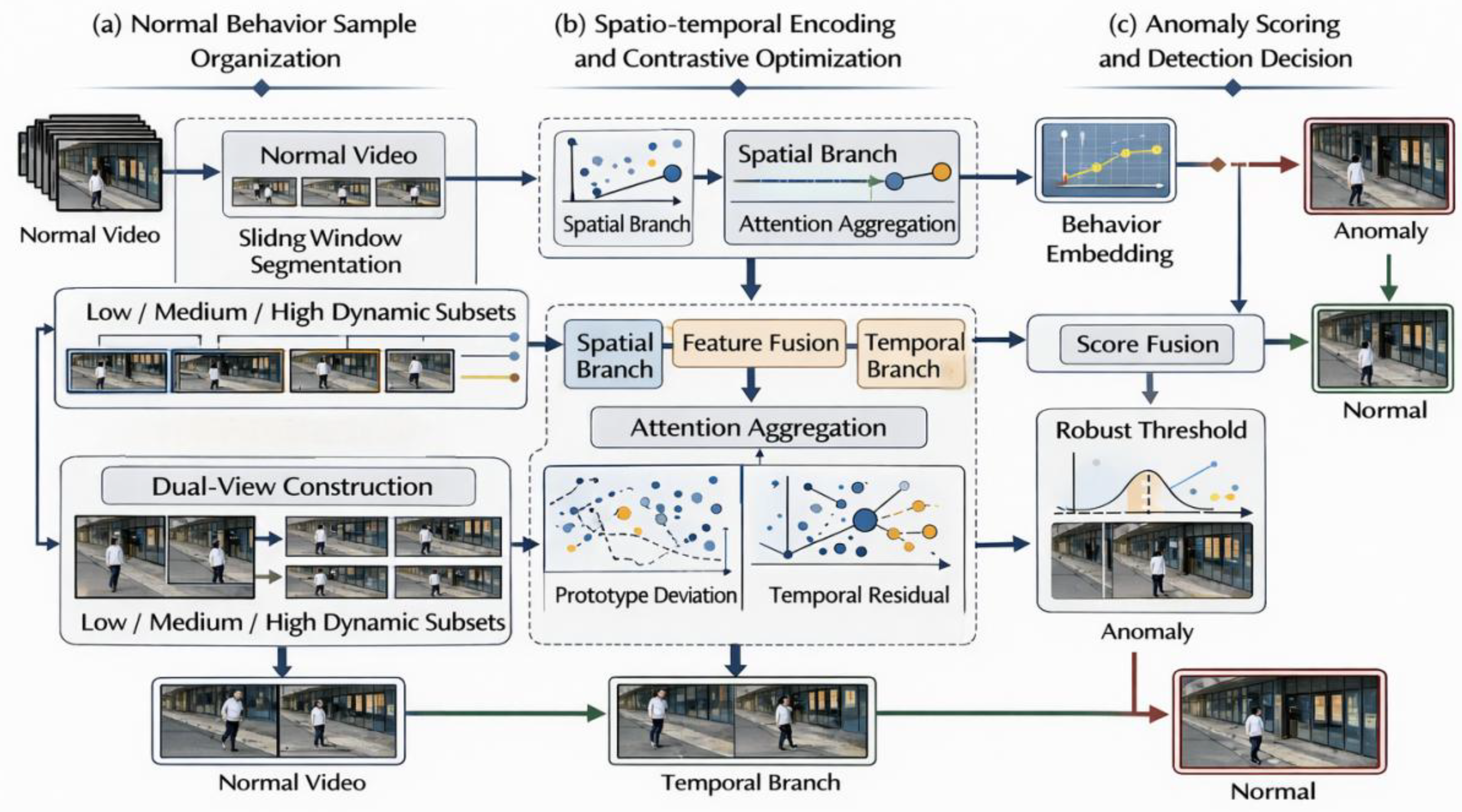

3.1. Unsupervised Behavior Abnormality Detection Framework Based on Contrastive Learning

3.2. Organization of Normal Behavior Samples and Feature Representation Learning

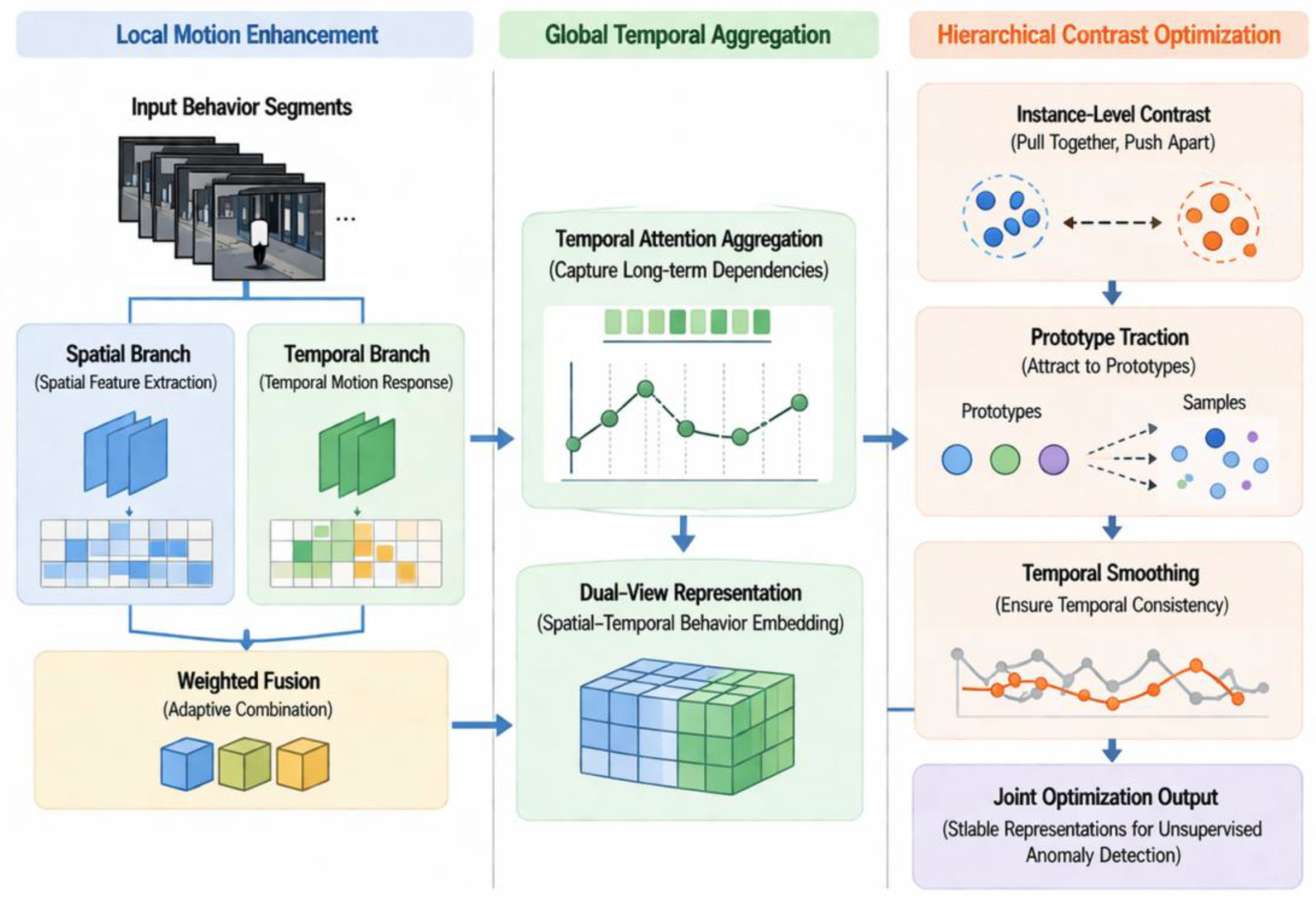

3.3. Spatio-Temporal Feature Encoding and Contrastive Optimization Mechanism

3.4. Abnormal Score Calculation and Detection Decision Method

4. Experiment and Result Analysis

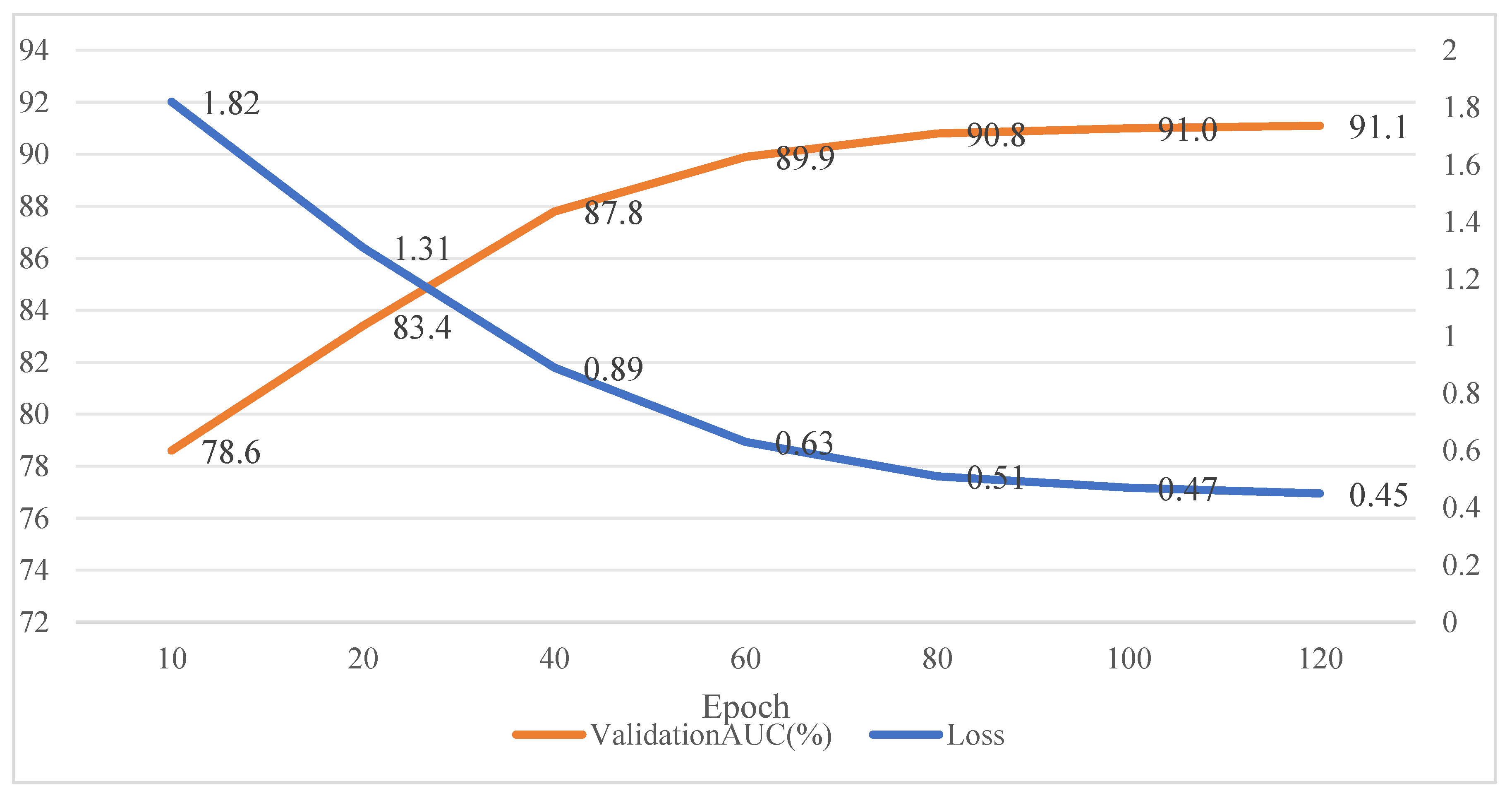

4.1. Experimental Scheme and Parameter Settings

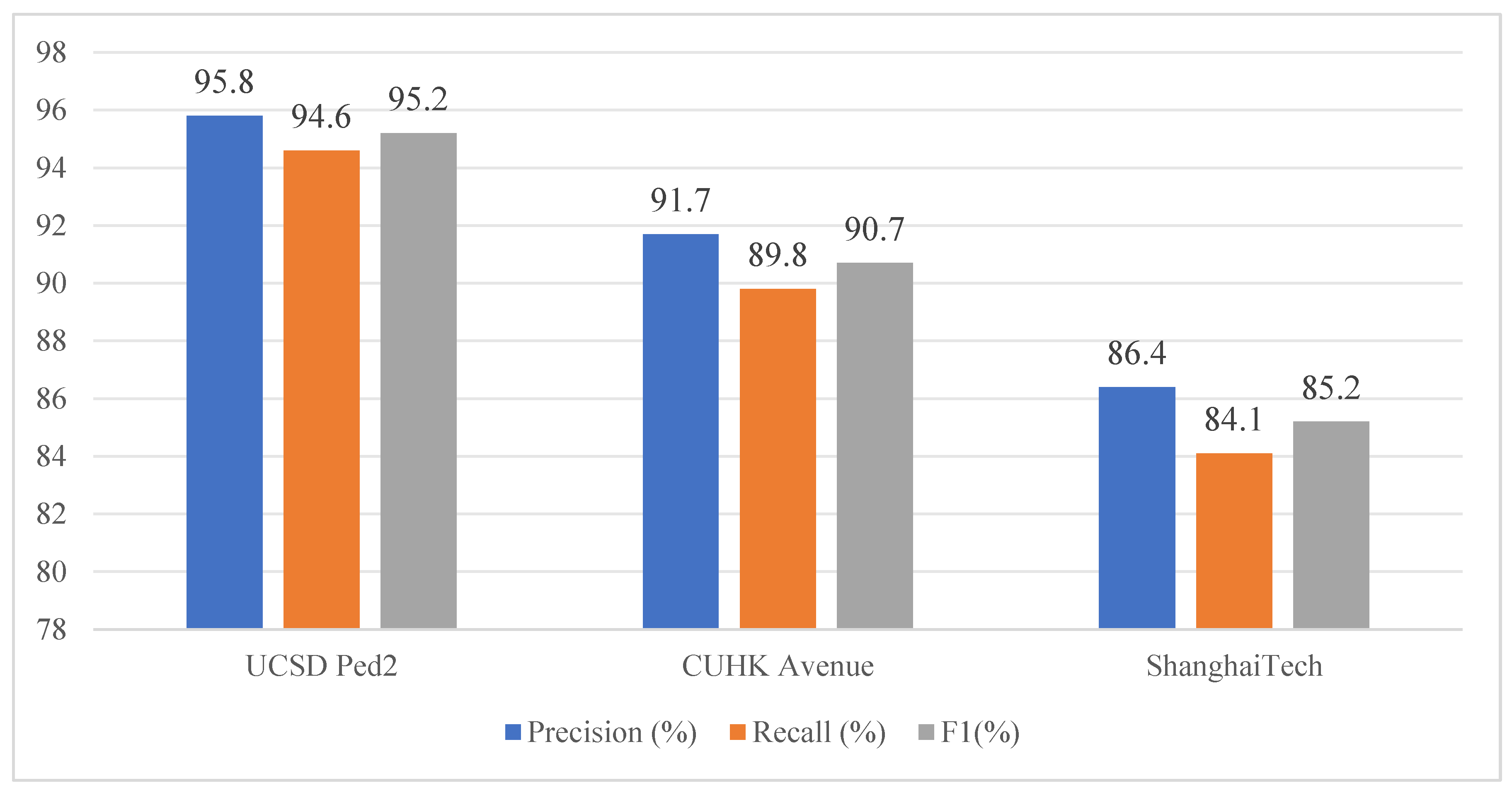

4.2. Detection Results and Performance Comparison

4.3. Ablation Analysis and Result Discussion

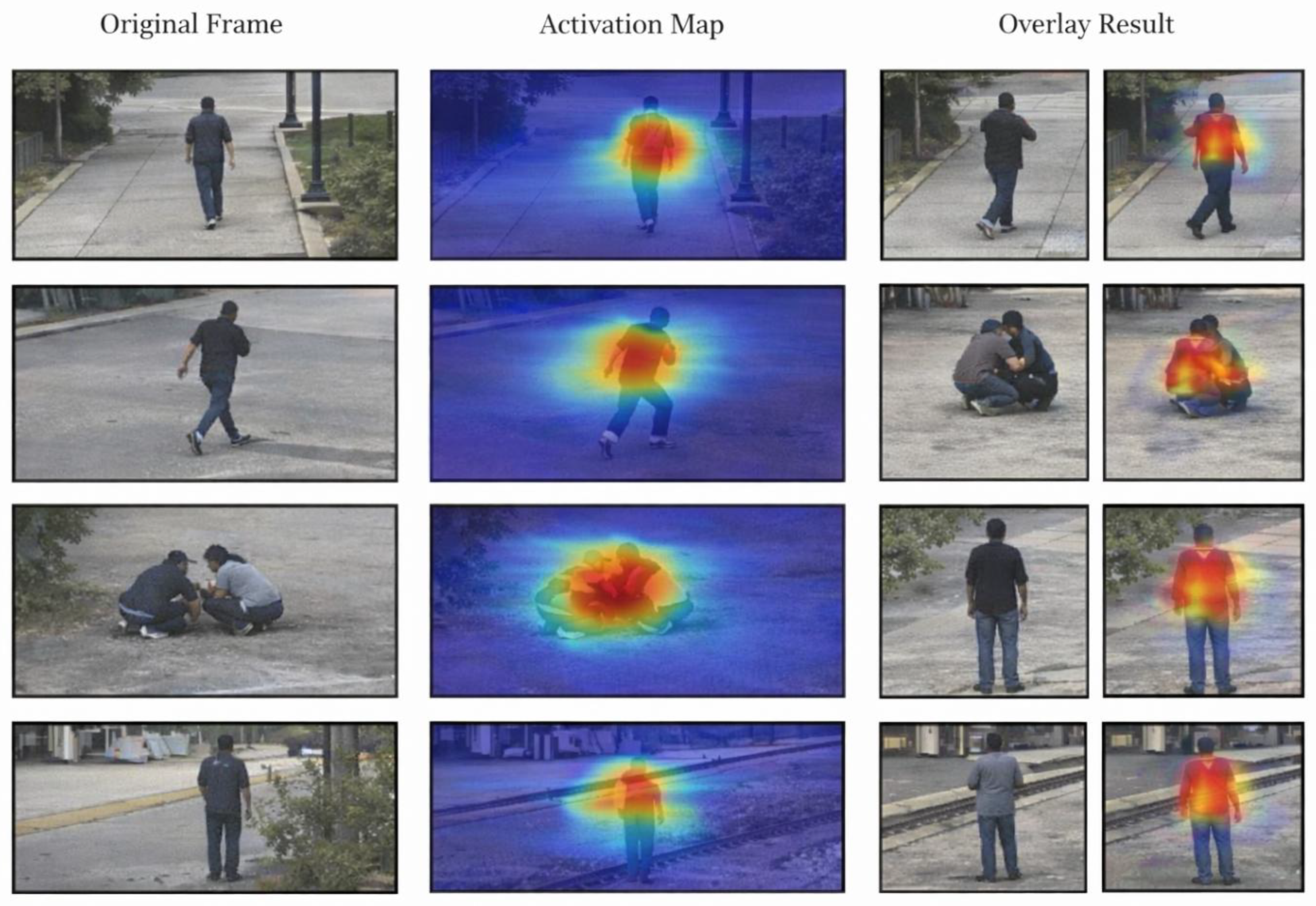

4.4. Interpretability Analysis of Abnormal Concern Regions

5. Conclusions


| Method Type | Core Idea | Extractable Information | Primary Advantage | Primary Limitation |
| --- | --- | --- | --- | --- |
| Inter-frame difference method | Compare pixel changes between adjacent frames | Motion position, rough extent of change | Computationally simple, suitable for rapid detection | Sensitive to noise and illumination changes; struggles to describe complex behaviors |
| Optical flow field analysis method | Compute the direction and speed of pixel or region movement | Local motion intensity, direction distribution | Captures short-term motion characteristics | Sensitive to occlusion, camera jitter, and background disturbances |
| Trajectory statistics method | Track targets and analyze their trajectories | Motion path, dwell time, speed change | Offers some explanatory power for continuous behaviors | Tracking is unstable in multi-target scenes, and lost trajectories lead to wrong decisions |
| Hand-crafted feature methods | Extract oriented gradients, local motion descriptors, etc. | Local texture and action cues | Easy to implement and to combine with classifiers | Shallow feature representation; hard to model long-term dependencies and scene semantics |
| Rule or threshold discrimination method | Define abnormal conditions from experience | Region boundary crossing, abnormal speed, abnormal dwell time | Easy to deploy, suitable for simple monitoring tasks | Rigid rules, weak cross-scene adaptability, and frequent false alarms and missed detections |
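
The inter-frame difference row above can be illustrated with a minimal sketch. It is not taken from the paper: the function names, the toy grayscale frames, and the threshold of 25 are all assumptions chosen for illustration. The last lines show the limitation listed in the table, where a uniform brightness shift flags every pixel even though nothing moved.

```python
def frame_difference_mask(prev_frame, next_frame, threshold=25):
    """Binary mask: 1 where the absolute intensity change exceeds the threshold.

    Frames are plain nested lists of grayscale values; the threshold is a
    hypothetical value, not one reported in the paper.
    """
    return [
        [1 if abs(b - a) > threshold else 0 for a, b in zip(row_prev, row_next)]
        for row_prev, row_next in zip(prev_frame, next_frame)
    ]


def changed_ratio(mask):
    """Fraction of pixels flagged as changed."""
    total = sum(len(row) for row in mask)
    return sum(map(sum, mask)) / total


# Toy 3x4 frames: two pixels change strongly, one changes only slightly.
prev_frame = [[10, 10, 10, 10], [10, 10, 10, 10], [10, 10, 10, 10]]
next_frame = [[10, 10, 10, 10], [10, 200, 210, 10], [12, 10, 10, 10]]

mask = frame_difference_mask(prev_frame, next_frame)
ratio = changed_ratio(mask)

# A global illumination shift of +30 with no motion flags every pixel.
brighter = [[value + 30 for value in row] for row in prev_frame]
illumination_ratio = changed_ratio(frame_difference_mask(prev_frame, brighter))
```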

| Organization Step | Processing Method | Output Result | Main Role |
| --- | --- | --- | --- |
| Normal segment extraction | Split continuous video with a sliding window | Fixed-length behavior segments | Preserve basic temporal information |
| Motion intensity filtering | Compute the degree of motion from the optical flow response | Low-, medium-, and high-dynamic subsets | Reduce sample aliasing |
| Dual-view construction | Feed the original view and a lightly augmented view in parallel | Paired training samples | Improve representation stability |
| Prototype aggregation | Average the features within each group to obtain a center representation | Normal behavior prototype | Enhance distribution compactness |
| Representation learning | Jointly train the spatio-temporal encoder and the mapping | Segment embedding features | Provide a discriminative basis for anomaly detection |
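
Three of the steps in the table above (segment extraction, motion-intensity grouping, and prototype aggregation) can be sketched as follows. This is a simplified illustration under stated assumptions, not the authors' implementation: the window length, stride, and the `low`/`high` thresholds are hypothetical, the motion score is passed in as a ready-made number instead of being computed from optical flow, and the embeddings are toy vectors standing in for encoder outputs.

```python
def sliding_windows(frames, length, stride):
    """Split a frame sequence into fixed-length, possibly overlapping segments."""
    return [frames[i:i + length] for i in range(0, len(frames) - length + 1, stride)]


def dynamic_level(motion_score, low=0.3, high=0.7):
    """Assign a segment to a low/medium/high dynamic subset.

    The thresholds are hypothetical; the paper derives the score from the
    optical flow response, which is replaced here by a given scalar.
    """
    if motion_score < low:
        return "low"
    if motion_score < high:
        return "medium"
    return "high"


def group_prototypes(embeddings, levels):
    """Average the embeddings of each group to obtain one prototype per group."""
    groups = {}
    for embedding, level in zip(embeddings, levels):
        groups.setdefault(level, []).append(embedding)
    return {
        level: [sum(column) / len(column) for column in zip(*members)]
        for level, members in groups.items()
    }


frames = list(range(10))  # stand-in for 10 video frames
segments = sliding_windows(frames, length=4, stride=2)

motion_scores = [0.1, 0.2, 0.9]  # toy per-segment motion scores
levels = [dynamic_level(score) for score in motion_scores]

embeddings = [[1.0, 0.0], [3.0, 2.0], [0.0, 4.0]]  # toy encoder outputs
prototypes = group_prototypes(embeddings, levels)
```

Grouping before averaging keeps slow and fast normal behaviors from being merged into a single center, which is the role the table assigns to motion intensity filtering.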

| Dataset | Training Videos | Testing Videos | Resolution | Dataset Characteristics |
| --- | --- | --- | --- | --- |
| UCSD Ped2 | 16 | 12 | 360×240 | Relatively simple scene with small targets; suitable for testing basic anomaly detection capability |
| CUHK Avenue | 16 | 21 | 640×360 | More diverse scenes and more varied forms of abnormal behavior; moderately complex |
| ShanghaiTech | 274 | 330 | Not uniform | Many scenes, large background differences, and complex anomaly types; suitable for testing model generalization |

| Method | UCSD Ped2 AUC (%) | CUHK Avenue AUC (%) | ShanghaiTech AUC (%) | Average AUC (%) | Average F1 (%) |
| --- | --- | --- | --- | --- | --- |
| Traditional feature method | 89.6 | 81.7 | 71.8 | 81.0 | 75.6 |
| Conv-AE | 91.8 | 84.6 | 74.9 | 83.8 | 80.7 |
| Future Prediction | 93.2 | 86.1 | 76.8 | 85.4 | 82.1 |
| MemAE | 95.1 | 88.4 | 79.3 | 87.6 | 84.5 |
| Baseline contrastive learning method | 95.8 | 89.6 | 80.5 | 88.6 | 85.3 |
| Proposed method | 97.4 | 91.8 | 83.7 | 91.0 | 88.1 |
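
As a quick consistency check on the comparison table above, the average AUC column can be recomputed as the unweighted mean of the three per-dataset values. The snippet below is only a verification sketch: the per-dataset numbers are copied from the table, and the assumption that the average is a simple arithmetic mean rounded to one decimal is ours, although it reproduces every reported value.

```python
# Per-dataset AUC (%) copied from the table: (UCSD Ped2, CUHK Avenue, ShanghaiTech).
auc_by_method = {
    "Traditional feature method": (89.6, 81.7, 71.8),
    "Conv-AE": (91.8, 84.6, 74.9),
    "Future Prediction": (93.2, 86.1, 76.8),
    "MemAE": (95.1, 88.4, 79.3),
    "Baseline contrastive learning method": (95.8, 89.6, 80.5),
    "Proposed method": (97.4, 91.8, 83.7),
}

# Unweighted mean over the three datasets, rounded to one decimal place.
average_auc = {
    method: round(sum(values) / len(values), 1)
    for method, values in auc_by_method.items()
}

# Margin of the proposed method over the baseline contrastive method.
auc_gain = round(
    average_auc["Proposed method"]
    - average_auc["Baseline contrastive learning method"],
    1,
)

for method, value in average_auc.items():
    print(f"{method}: {value}")
print(f"Gain over baseline: {auc_gain} points")
```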

| Model | Spatio-temporal Joint Encoding | Prototype Constraints | Second-order Temporal Residual | Local Neighborhood Support | Average AUC (%) | Average F1 (%) |
| --- | --- | --- | --- | --- | --- | --- |
| M1 (baseline contrastive learning model) | × | × | × | × | 88.6 | 85.3 |
| M2 | √ | × | × | × | 89.7 | 86.2 |
| M3 | √ | √ | × | × | 90.2 | 86.8 |
| M4 | √ | √ | √ | × | 90.7 | 87.4 |
| M5 | √ | √ | × | √ | 90.5 | 87.1 |
| M6 (proposed method) | √ | √ | √ | √ | 91.0 | 88.1 |
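
The ablation table above can be read as a series of differences between variants. The sketch below computes them from the reported averages; the pairing of variants (for example, comparing M4 and M5 against M3) is our own reading of which component each step adds, not an analysis quoted from the paper.

```python
# (Average AUC %, Average F1 %) copied from the ablation table.
results = {
    "M1": (88.6, 85.3),
    "M2": (89.7, 86.2),
    "M3": (90.2, 86.8),
    "M4": (90.7, 87.4),
    "M5": (90.5, 87.1),
    "M6": (91.0, 88.1),
}

# Each comparison isolates the component(s) added between two variants.
comparisons = {
    "spatio-temporal joint encoding (M1 -> M2)": ("M1", "M2"),
    "prototype constraints (M2 -> M3)": ("M2", "M3"),
    "second-order temporal residual (M3 -> M4)": ("M3", "M4"),
    "local neighborhood support (M3 -> M5)": ("M3", "M5"),
    "both scoring terms together (M3 -> M6)": ("M3", "M6"),
}

gains = {}
for label, (before, after) in comparisons.items():
    auc_delta = round(results[after][0] - results[before][0], 1)
    f1_delta = round(results[after][1] - results[before][1], 1)
    gains[label] = (auc_delta, f1_delta)
    print(f"{label}: AUC +{auc_delta}, F1 +{f1_delta}")
```

On these numbers, the two scoring terms add up almost exactly in AUC (0.5 + 0.3 = 0.8), while their combined F1 gain (1.3) exceeds the sum of the individual gains (0.6 + 0.3).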

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).