5. Results

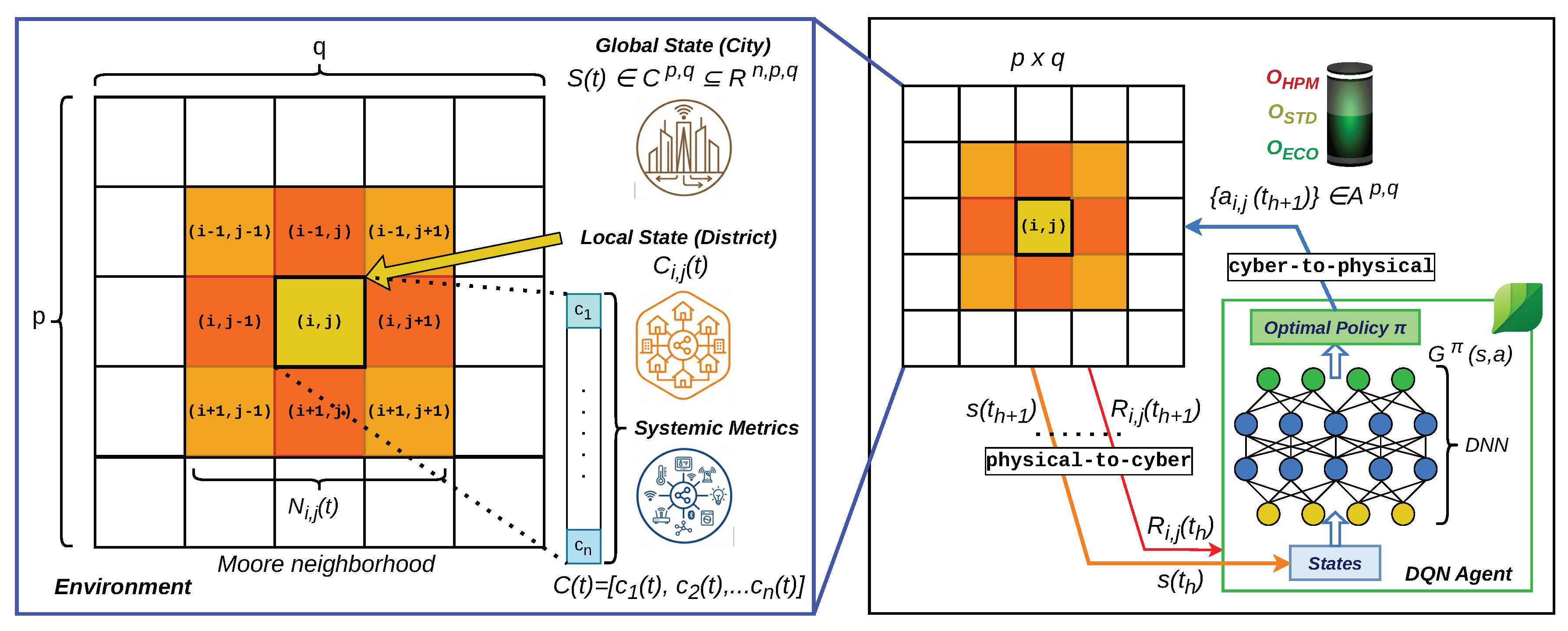

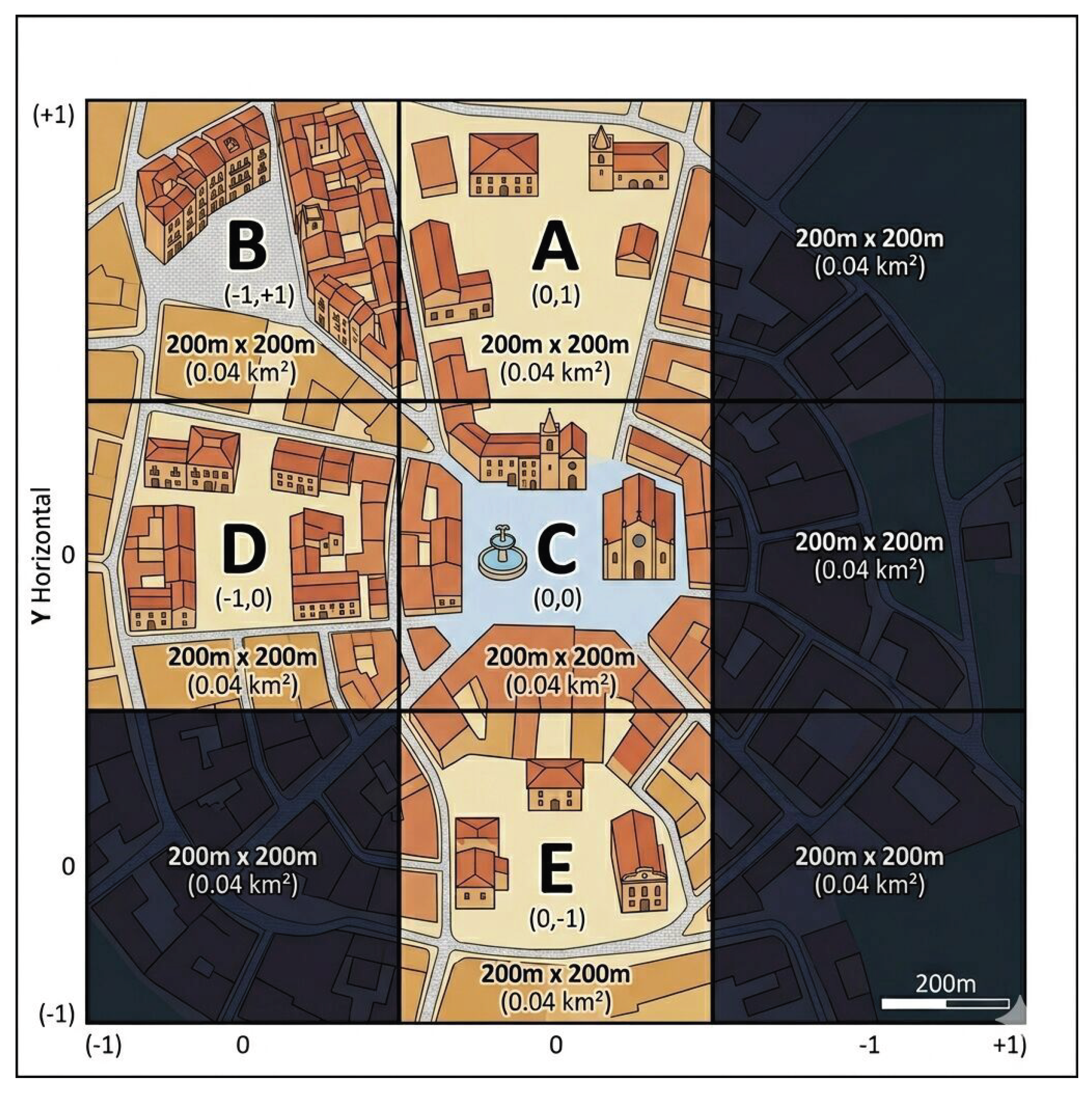

To validate the robustness of the proposed approach, a comprehensive analysis has been conducted on the urban infrastructures described in Table 3. The C district has been selected as the primary reference due to its full-fledged configuration, as shown in Table 4. Such a multi-modal data environment provides a challenging and representative scenario. Three policies, characterizing the agent's behavioral attitude toward urban dynamics, emerge as trade-offs from the multi-objective optimization. We define these policies as lazy, balanced, and responsive.

The lazy policy identifies an energy- and cost-saving profile. In this configuration, the DQN agent keeps the devices in a low-power state to minimize energy consumption and the associated economic expenditure. It also protects device lifespan: by operating primarily in less demanding and less stressful conditions, it preserves the integrity of the hardware electronics. The system thereby ensures long-term operational resilience by avoiding the thermal and computational stress typical of higher-performance modes. Although this policy safeguards hardware longevity and reduces the related costs, it introduces a significant operational risk for the urban scenario: by failing to capture micro-scale traffic fluctuations and peak congestion events, the system underestimates the actual urban stress. As a consequence, it can represent a systemic risk for the community, since decision-makers receive insufficient data that masks pollution hotspots and traffic bottlenecks. In summary, the lazy policy achieves device-level resilience at the expense of city observability and, consequently, of urban sustainability.

The responsive policy identifies a socially oriented profile. This configuration is highly sensitive to fluctuations in urban traffic demand: it accepts a higher energy and economic cost at the device level in exchange for minimal information latency. By operating at peak performance, the system avoids underestimating mobility dynamics, which is crucial to prevent flawed or weak decision-making. In this case study, responsiveness translates into substantial environmental and social benefits: high-resolution monitoring of traffic congestion and parking occupancy enables more effective urban flow management and reduces congestion. Consequently, the ability to detect and react to micro-scale mobility fluctuations allows targeted mitigation of pollution hotspots. In this configuration, local energy consumption and capital expenditure can be regarded as a strategic investment toward a systemic reduction of the overall environmental footprint.

The balanced policy identifies a nominal baseline profile, designed to keep urban monitoring reliable and inclusive without reaching the energy peaks of the responsive mode, while avoiding the information latency of the lazy approach.
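One way to read the three policies as trade-offs of a single multi-objective problem is weighted-sum scalarization: each profile corresponds to a different weighting of an energy cost against a penalty for unobserved traffic demand. The sketch below is a hypothetical illustration under that assumption. The state names, the power and capacity figures in `STATES`, and the weights in `WEIGHTS` are invented, and the choice is one-step greedy rather than the long-horizon Q-values a DQN learns.

```python
# Hypothetical operating states: normalized energy cost and the fraction of
# normalized traffic demand each state can observe (illustrative numbers only).
STATES = {
    "low": {"energy": 0.5, "capacity": 0.2},
    "nominal": {"energy": 0.7, "capacity": 0.8},
    "high": {"energy": 1.0, "capacity": 1.0},
}

# Hypothetical (w_energy, w_service) weight pairs, one per policy profile.
WEIGHTS = {
    "lazy": (1.0, 0.5),
    "balanced": (1.0, 1.5),
    "responsive": (1.0, 4.0),
}


def scalarized_reward(state: str, demand: float, w_energy: float, w_service: float) -> float:
    """Weighted sum of an energy cost and a missed-demand (latency) penalty."""
    profile = STATES[state]
    missed = max(0.0, demand - profile["capacity"])  # demand the state cannot capture
    return -w_energy * profile["energy"] - w_service * missed


def greedy_state(demand: float, w_energy: float, w_service: float) -> str:
    """One-step greedy choice; a DQN would instead learn long-horizon Q-values."""
    return max(STATES, key=lambda s: scalarized_reward(s, demand, w_energy, w_service))


if __name__ == "__main__":
    for name, (w_e, w_s) in WEIGHTS.items():
        print(name, [greedy_state(d, w_e, w_s) for d in (0.1, 0.5, 0.95)])
```

With these illustrative numbers, demands of 0.1, 0.5, and 0.95 yield low/low/nominal for the lazy weighting, low/nominal/nominal for the balanced one, and low/nominal/high for the responsive one. This only qualitatively echoes the behaviors described in this section; increasing the service weight lowers the demand level at which escalation becomes worthwhile.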

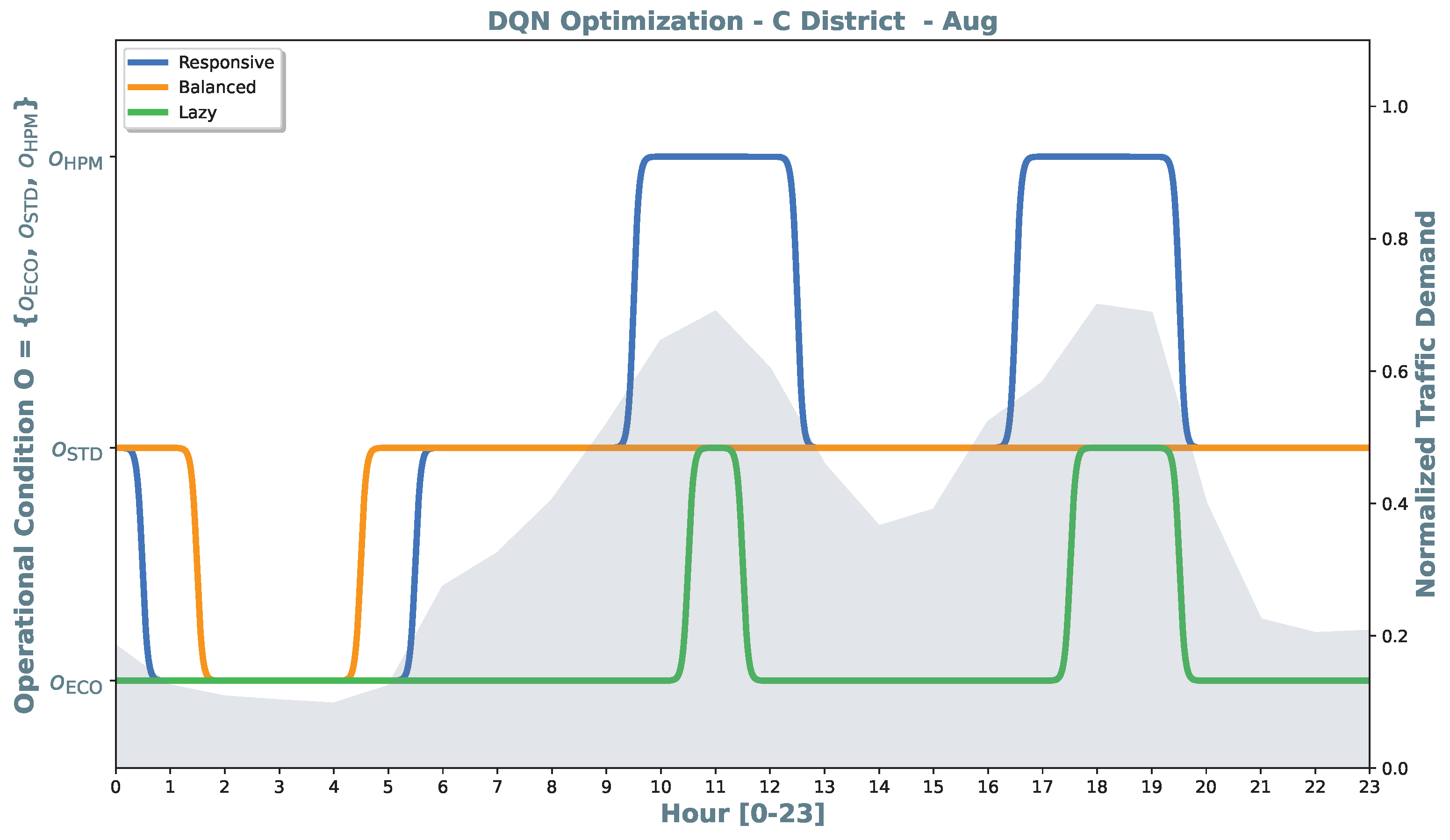

To account for seasonal variations in traffic-parking patterns and environmental conditions, four representative months have been selected to sample the annual operational cycle from August 2025 to March 2026: August (summer), October (autumn), January (winter), and March (spring). This seasonal sampling is intended to ensure that the DQN policy is not overfitted to specific temporal conditions but remains effective across different climatic and social contexts. The following analysis details the behavioral patterns learned by the DQN agent in each of the four representative months, illustrating how the lazy, balanced, and responsive policies map the normalized traffic demand to specific operational states.
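The hourly behaviors discussed for each month can also be summarized quantitatively, for example as the fraction of the day spent in each state, the number of state switches, and the mean normalized demand observed while in each state. The helper below is a minimal sketch assuming one state label and one normalized demand value per hour. The function name `summarize_day`, the state labels, and the example day are hypothetical.

```python
from collections import Counter


def summarize_day(hourly_states, hourly_demand):
    """Return per-state occupancy, number of switches, and mean demand per state."""
    if len(hourly_states) != len(hourly_demand):
        raise ValueError("states and demand must cover the same hours")
    n = len(hourly_states)
    counts = Counter(hourly_states)
    occupancy = {state: c / n for state, c in counts.items()}
    # A switch is any hour-to-hour change of operating state.
    switches = sum(1 for a, b in zip(hourly_states, hourly_states[1:]) if a != b)
    demand_sum = Counter()
    for state, demand in zip(hourly_states, hourly_demand):
        demand_sum[state] += demand
    mean_demand = {state: demand_sum[state] / counts[state] for state in counts}
    return occupancy, switches, mean_demand


# Hypothetical lazy-like day: low-power everywhere except two peak hours.
states = ["low"] * 24
states[12] = states[18] = "high"
demand = [0.2] * 24
demand[12], demand[18] = 0.8, 0.6
occupancy, switches, mean_demand = summarize_day(states, demand)
print(occupancy, switches, mean_demand)
```

For this invented day, the devices spend 22 of 24 hours in the low state, switch four times, and the high state coincides with a mean demand of about 0.7, which is the kind of evidence behind statements such as "for the majority of the day".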

In the August scenario depicted in Figure 6, the agent faces distinct summer traffic peaks, and the policies exhibit highly differentiated behaviors. The lazy policy keeps the devices in the low-power state for most of the day, showing high tolerance for traffic increases and transitioning to a higher-power state only during the mid-day and evening peaks. The balanced policy serves as a stable baseline, remaining in its steady monitoring state for almost the entire 24-hour cycle to ensure constant monitoring. Finally, the responsive policy acts as a vigilant sentinel, proactively switching to the high-performance state during the two main traffic surges.

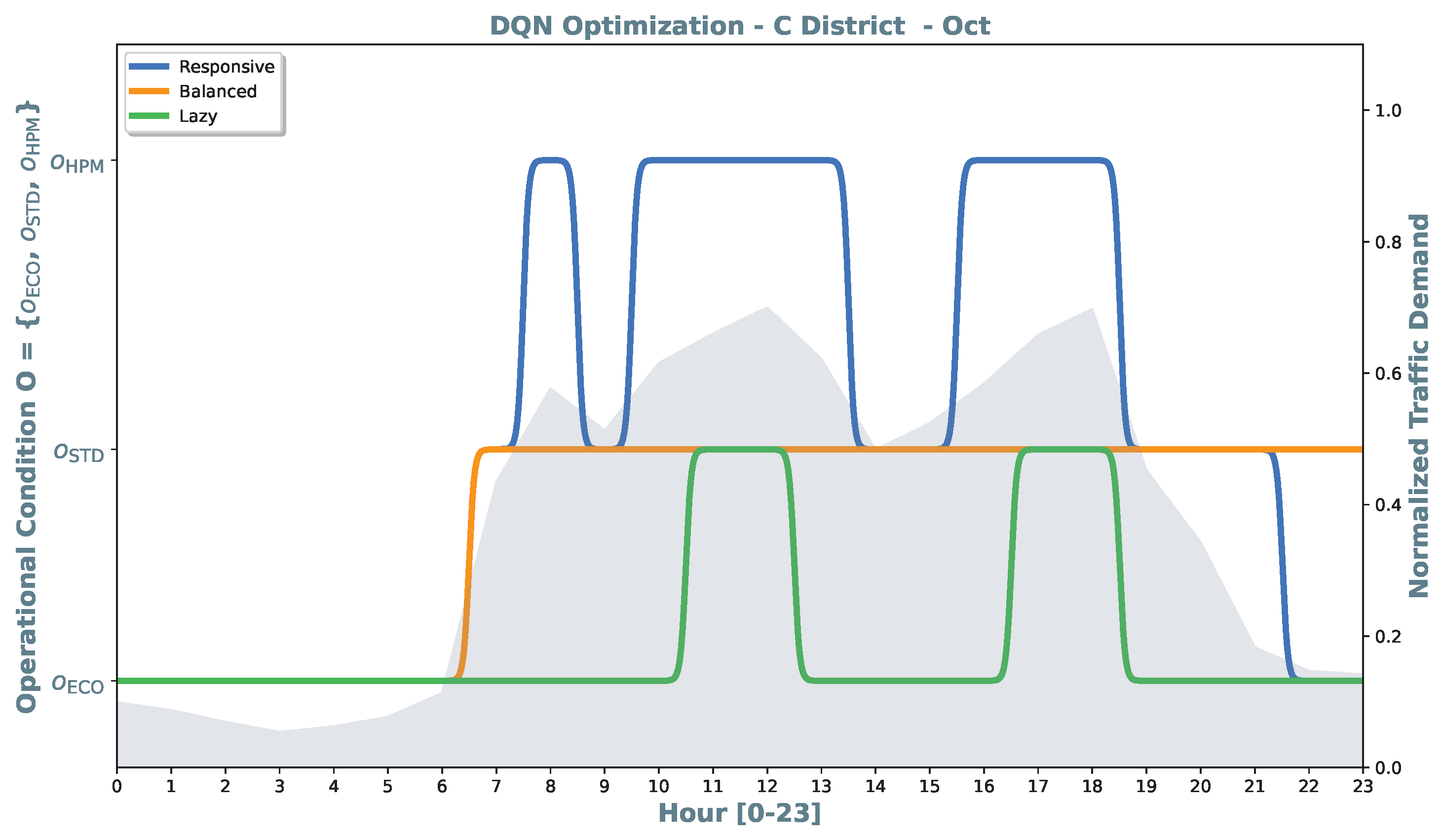

In the October scenario depicted in Figure 7, the lazy policy switches from the low-power mode to a higher-power mode only during the two highest peaks of the day, maintaining the same low-power profile observed in August. The balanced policy remains in its steady monitoring state throughout the active city hours (07:00–21:00) and reverts to a lower-power state only during the deep night. The responsive policy switches to the high-performance state at each traffic peak, specifically targeting the early morning rush, the mid-day plateau, and the evening return, thus maximizing its permanence in the high-performance condition.

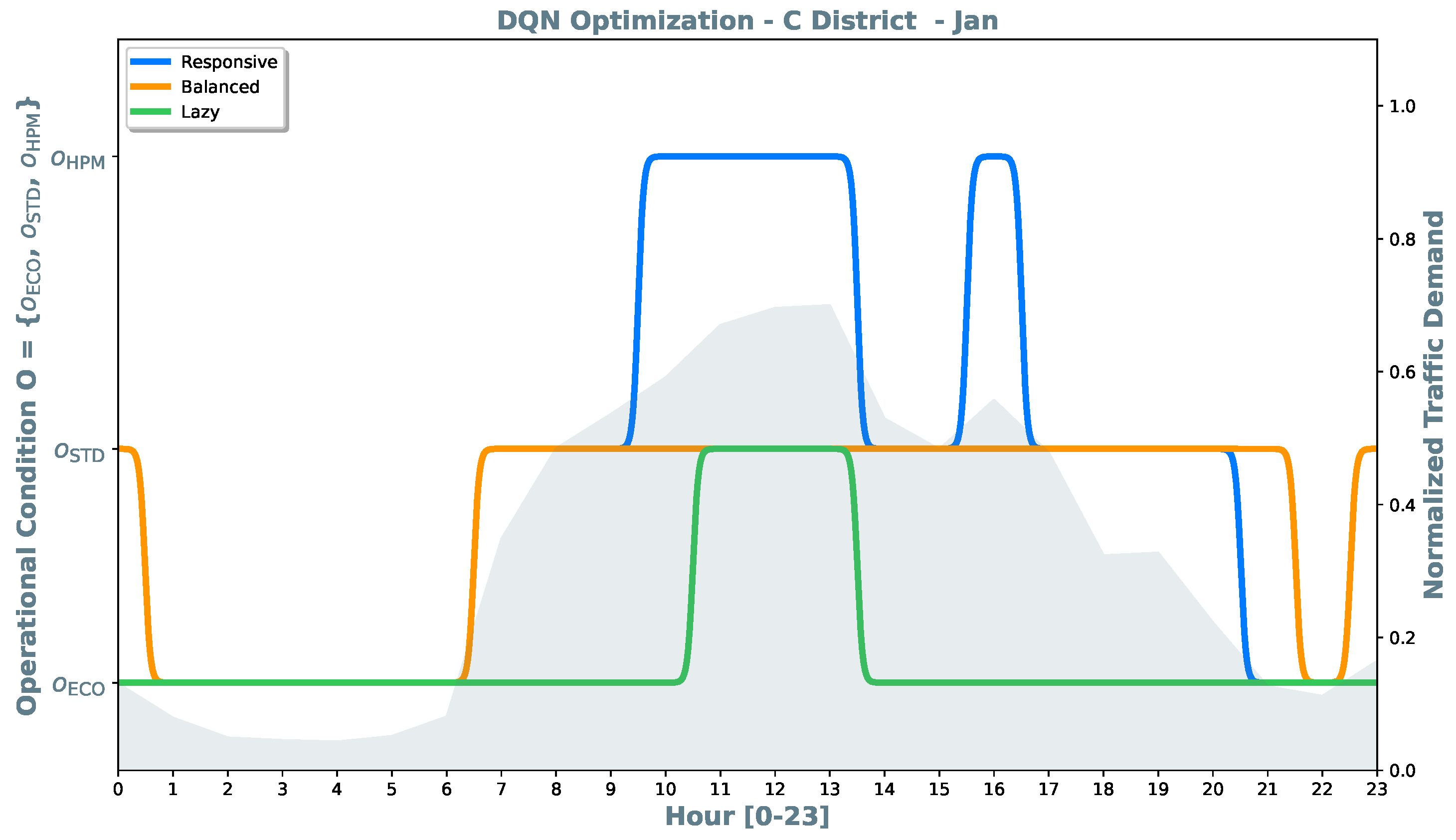

In the January scenario depicted in Figure 8, the analysis reveals how the agent adapts to winter traffic demand. The lazy policy confirms the extreme cost-oriented attitude observed in August and October: devices remain in the low-power state even during significant traffic demand, with only a brief transition to a higher-power state during the late morning. Although the balanced policy is not highly responsive to the mid-day peaks in traffic demand (0.5–0.7), it maintains devices in its steady monitoring state during the 07:00–21:00 period, when the city center is expected to be persistently affected by vehicular traffic flows. Devices are switched to a lower-power state during the early morning hours (normalized traffic demand < 0.1) and around 22:00. The responsive policy, instead, promptly escalates to the high-performance state to cover the broad mid-day traffic plateau and a spike around 16:00.

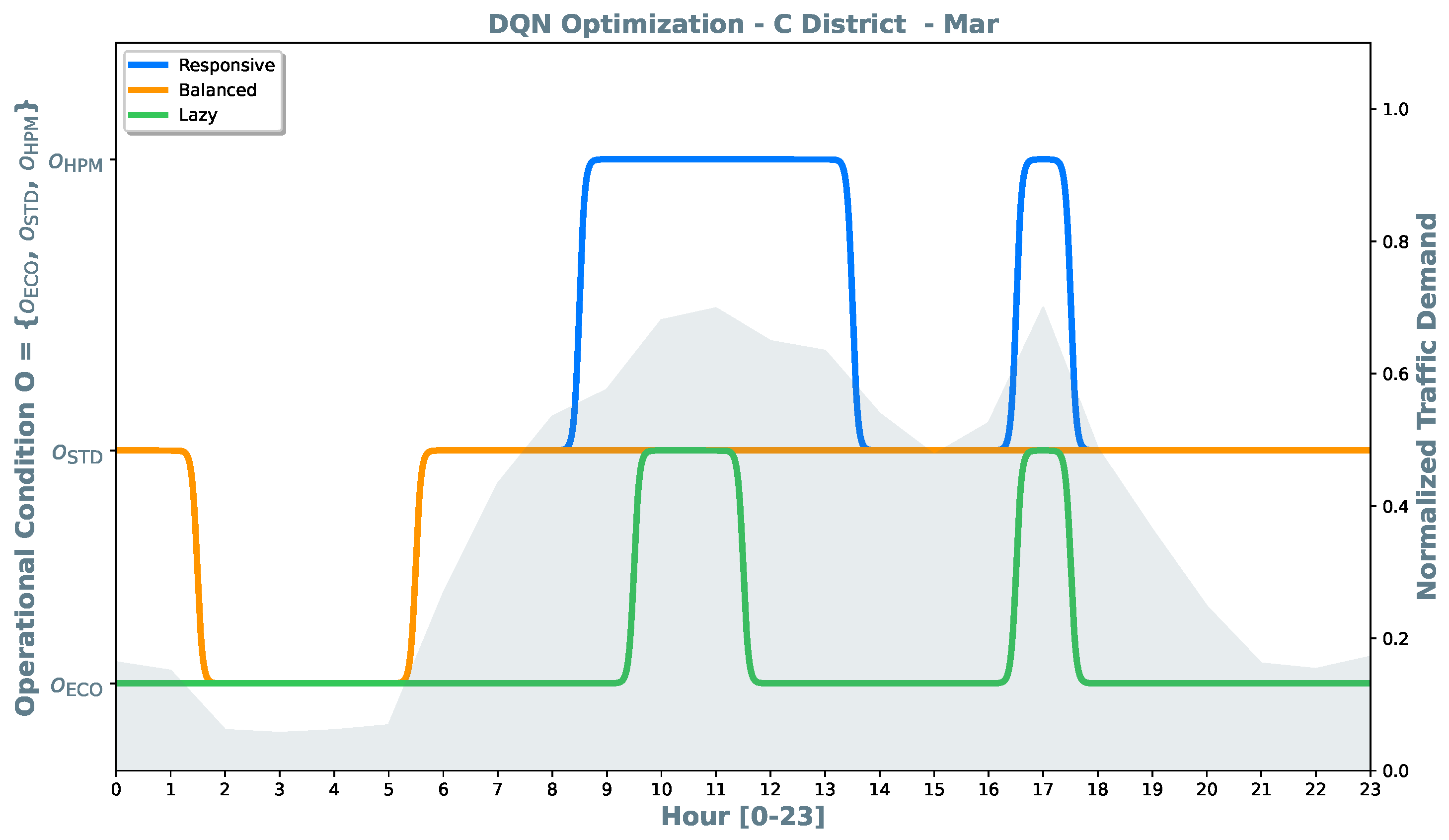

In the March scenario depicted in Figure 9, the analysis confirms the characteristic behavior of the three policies. The lazy policy keeps the devices in the low-power state, activating a higher-power condition only during the demand peaks (around 11:00 and 17:00). The balanced policy maintains its cost-oriented baseline behavior, and the responsive policy confirms its high sensitivity to traffic demand.

The cross-seasonal analysis confirms that the DQN agent has synthesized three distinct optimization policies. The consistency of these policies across the four representative months suggests a high degree of robustness: regardless of the seasonal baseline, the lazy policy consistently acts as a lower bound for energy expenditure, while the responsive policy serves as a high-fidelity upper bound, with a direct benefit in terms of social sustainability.

The balanced policy exhibits an intermediate, stabilizing behavior. It filters out minor traffic fluctuations to keep devices in a steady monitoring state, providing a compromise between social, economic, and environmental sustainability without incurring the economic penalties of the responsive mode. However, the balanced policy is less effective than the responsive policy at addressing environmental sustainability.

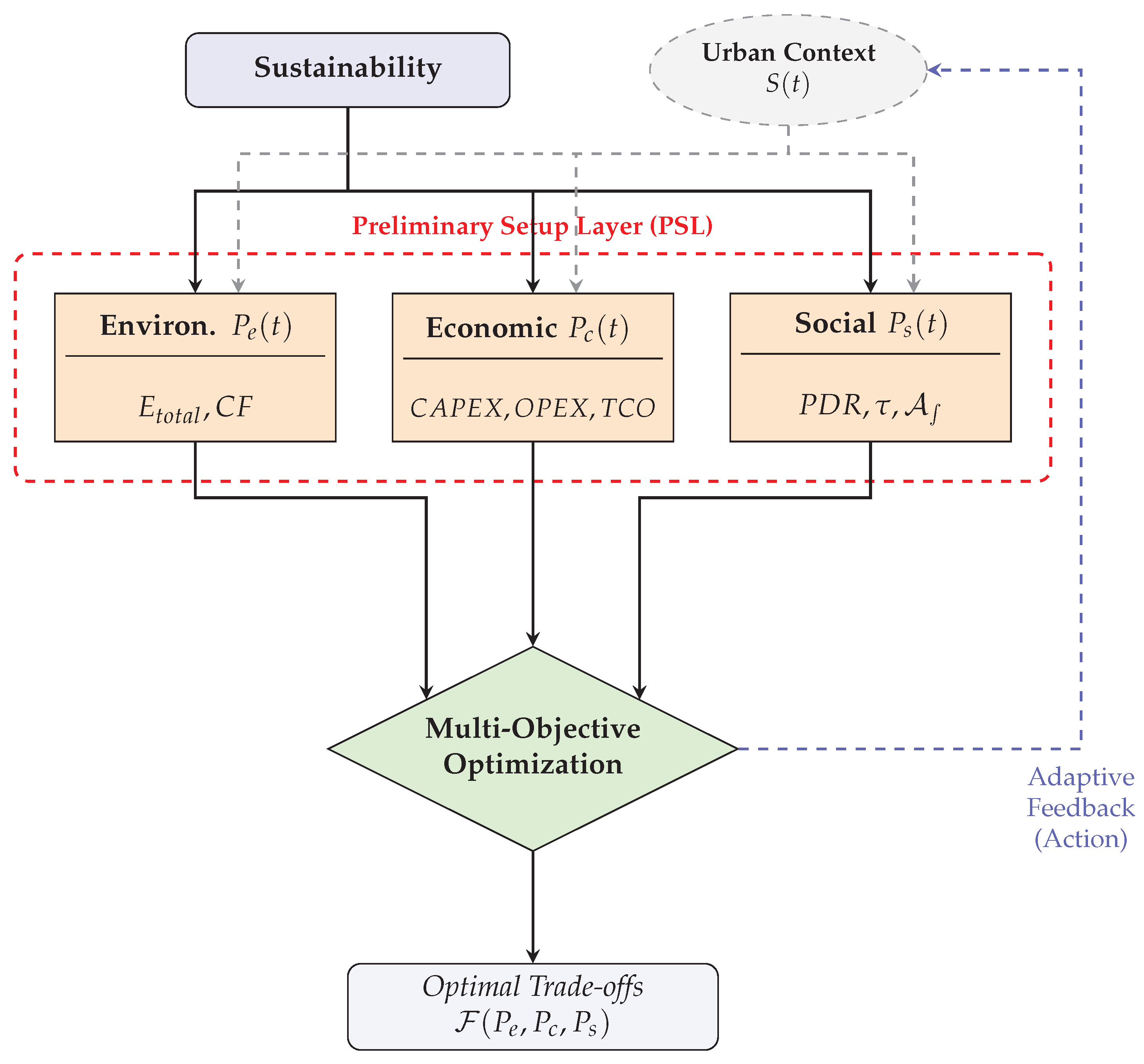

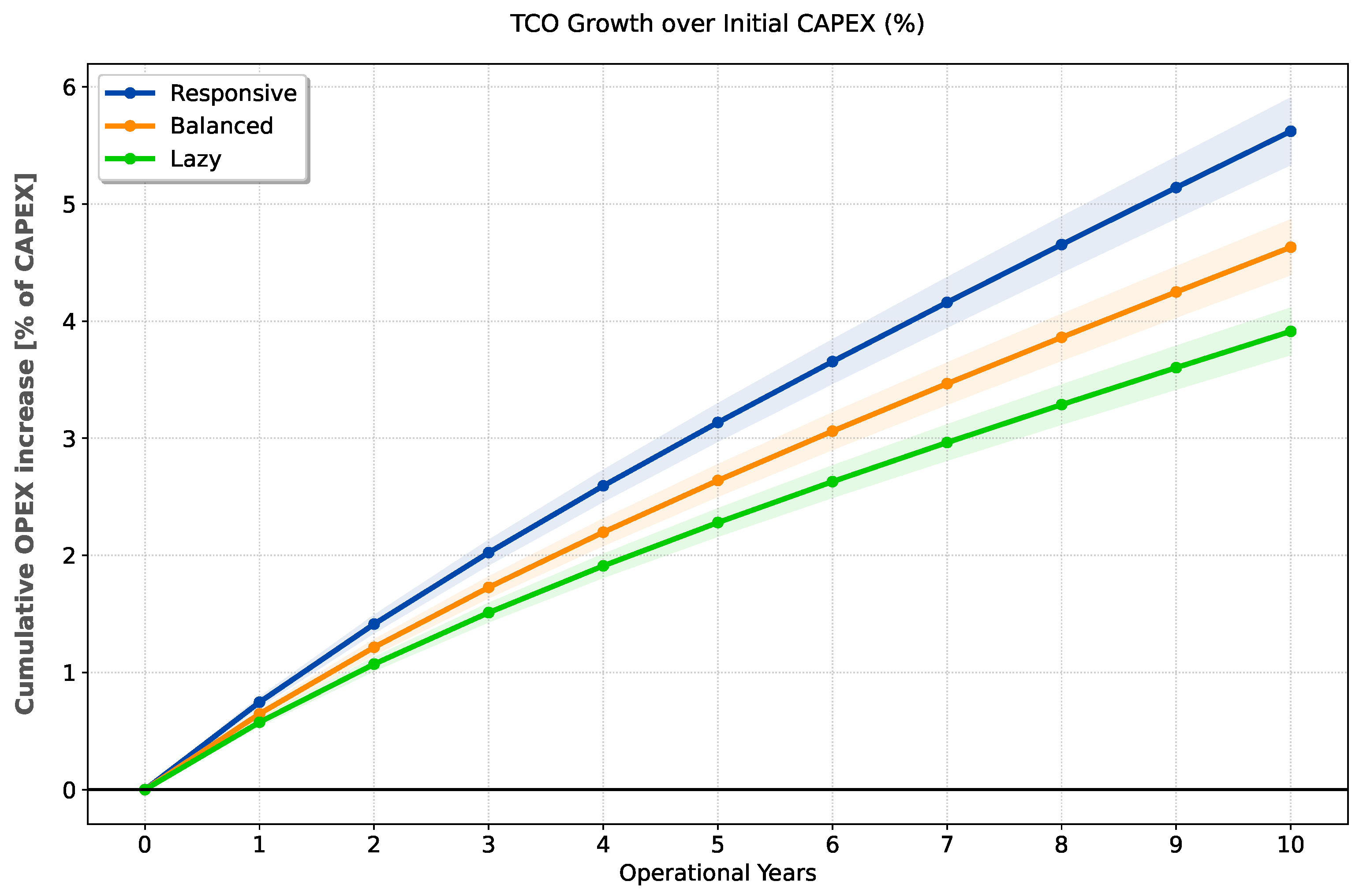

The economic sustainability of the examined CPS is evaluated over a 10-year operational horizon. The initial capital expenditure (CAPEX) is established as the baseline and comprises the acquisition of the equipment shown in Table 4, alongside a centralized gateway, software orchestration platforms, and professional installation costs. Cost growth over the horizon is driven by the operational expenditure (OPEX), which is primarily conditioned by energy consumption costs (assuming a baseline rate of ) and by system maintenance costs.
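Because OPEX is driven mainly by energy consumption, the cost differences between policies follow from how many hours per day each one keeps the devices in each state. The sketch below shows that arithmetic under stated assumptions: the per-state power values in `POWER_W` and the rate of 0.25 per kWh are placeholders (the baseline rate used in this work is not reproduced here), and `annual_energy_cost` is a hypothetical helper. The 5 W and 10 W endpoints echo the Jetson Nano's two default power modes, but assigning them to these states is an assumption.

```python
# Hypothetical average electrical power per operating state, in watts
# (illustrative values only, not measured rail power).
POWER_W = {"low": 5.0, "nominal": 7.5, "high": 10.0}


def annual_energy_cost(hours_per_day, power_w, rate_per_kwh, n_devices=1):
    """Yearly energy cost of a fleet, given the daily hours spent in each state."""
    if abs(sum(hours_per_day.values()) - 24.0) > 1e-9:
        raise ValueError("daily state occupancy must sum to 24 hours")
    daily_wh = sum(hours * power_w[state] for state, hours in hours_per_day.items())
    yearly_kwh = daily_wh * 365 / 1000  # Wh/day -> kWh/year
    return n_devices * yearly_kwh * rate_per_kwh


# Hypothetical lazy-like day: 22 h in the low state, 2 h in the high state.
print(annual_energy_cost({"low": 22, "high": 2}, POWER_W, rate_per_kwh=0.25))
```

For this invented occupancy, a single device draws 130 Wh per day, about 47.45 kWh per year, or roughly 11.86 currency units per year at the placeholder rate.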

The results in Figure 10 allow an investigation of policy sensitivity: the responsive policy exhibits the highest growth, reaching an OPEX-to-CAPEX ratio of approximately % after 10 years. The DQN agent consistently prioritizes the high-performance mode to ensure near-zero latency and high information fidelity during peak traffic demand, which inherently maximizes the power draw across the NVIDIA Jetson Nano rails.

The lazy policy achieves the highest economic sustainability, limiting the 10-year increase to less than 4%. All policies show a subtle transition to a linear trend after the initial five-year phase, reflecting their stabilization and leading to a predictable marginal cost per year.
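The OPEX-to-CAPEX ratio plotted in Figure 10 can be read as cumulative operating cost divided by the initial capital cost, expressed as a percentage. A minimal sketch follows; the function name `opex_to_capex_ratio` and the example figures are hypothetical. A constant annual OPEX produces a straight line, so an early non-linear phase like the one observed before the linear trend must come from year-dependent costs, which the per-year list input accommodates.

```python
def opex_to_capex_ratio(capex, annual_opex):
    """Cumulative OPEX as a percentage of the initial CAPEX, year by year."""
    if capex <= 0:
        raise ValueError("CAPEX must be positive")
    ratios, cumulative = [], 0.0
    for yearly_cost in annual_opex:
        cumulative += yearly_cost
        ratios.append(100.0 * cumulative / capex)
    return ratios


# Hypothetical example: CAPEX of 1000 and a flat OPEX of 10 per year for 10 years.
print(opex_to_capex_ratio(1000.0, [10.0] * 10))
```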

Finally, the balanced policy shows a mid-range trade-off. These cost differences are reported with 95% confidence intervals, which quantify the uncertainty of the economic projections.
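The method behind the 95% confidence intervals is not specified in this section. A minimal sketch, assuming a set of independent simulated 10-year outcomes per policy and a normal approximation of the sampling distribution of the mean, is shown below; `mean_ci95` is a hypothetical helper. With only a few runs, a Student-t quantile would be the more appropriate choice.

```python
import statistics
from statistics import NormalDist


def mean_ci95(samples):
    """Normal-approximation 95% confidence interval for the mean of samples."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    m = statistics.fmean(samples)
    se = statistics.stdev(samples) / len(samples) ** 0.5  # standard error of the mean
    z = NormalDist().inv_cdf(0.975)  # two-sided 95% quantile, about 1.96
    return m - z * se, m, m + z * se


# Hypothetical 10-year OPEX-to-CAPEX ratios (%) from five simulated runs.
print(mean_ci95([1.0, 2.0, 3.0, 4.0, 5.0]))
```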

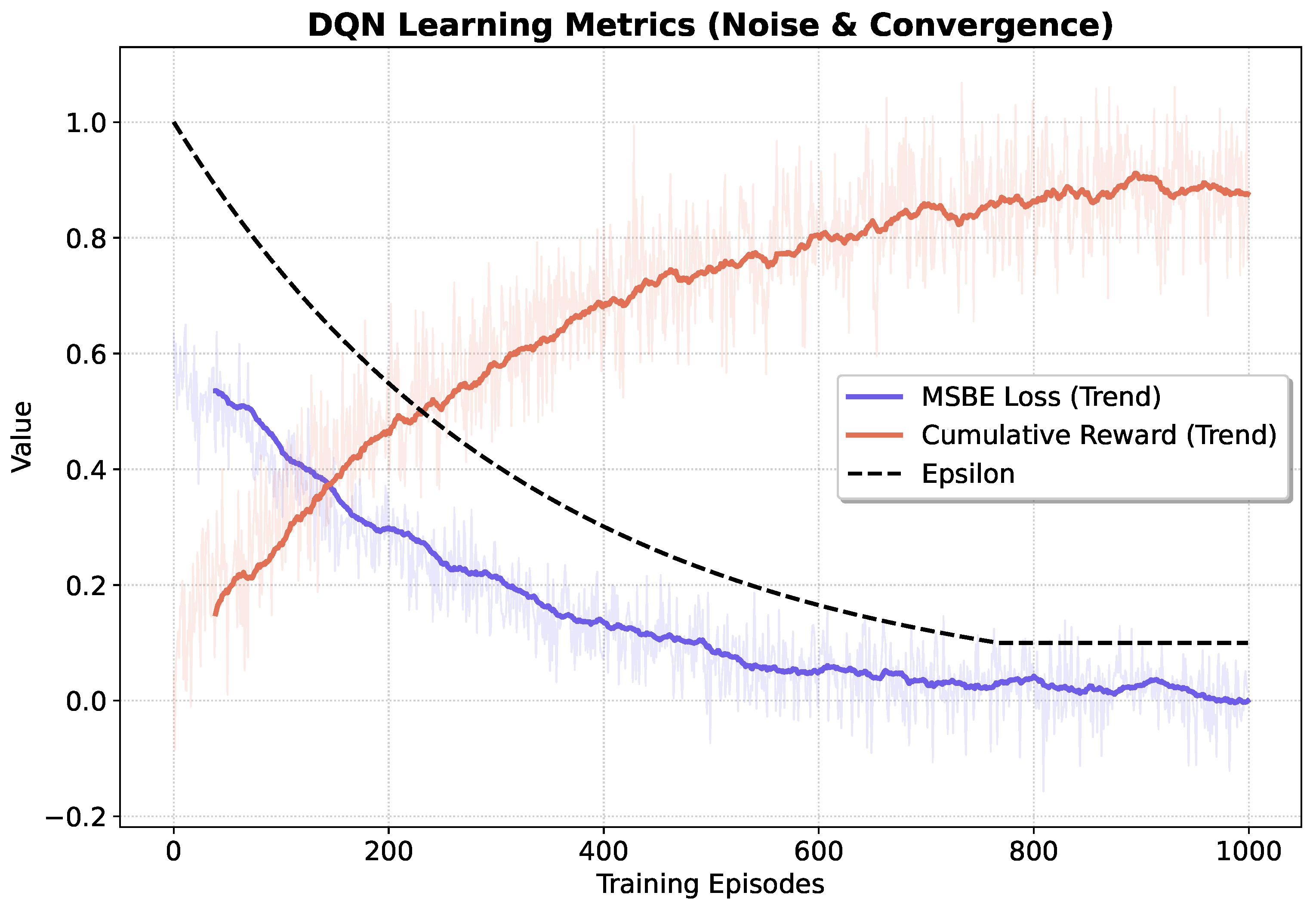

The training process of the DQN agent has been monitored through three key metrics, as illustrated in Figure 11. The Epsilon curve represents the exploration-exploitation trade-off. It follows a planned decay from to a minimum threshold of around episode 700. This schedule ensures that the agent sufficiently explores the state-action space in the early stages before shifting toward exploitation of the learned policy in the final phases of training. The high stability of the reward and loss trends in the last 200 episodes indicates that the agent has reached a reliable, converged operational state.
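The exploration schedule described above can be sketched as follows. Only the decay horizon of about 700 episodes comes from the text; the start and minimum values of the schedule are not reproduced here, so `eps_start=1.0` and `eps_min=0.05` are placeholders, and the linear shape is an assumption (an exponential decay would serve the same purpose). `epsilon_at` and `select_action` are hypothetical helper names.

```python
import random


def epsilon_at(episode, eps_start=1.0, eps_min=0.05, decay_episodes=700):
    """Linearly decayed exploration rate, clamped at eps_min after decay_episodes."""
    progress = min(episode / decay_episodes, 1.0)
    return eps_min + (1.0 - progress) * (eps_start - eps_min)


def select_action(q_values, epsilon, rng=random):
    """Epsilon-greedy choice over a list of Q-values (one per operating state)."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))  # explore: uniform random action
    return max(range(len(q_values)), key=q_values.__getitem__)  # exploit: argmax


print([round(epsilon_at(e), 3) for e in (0, 350, 700, 900)])
```

Early episodes therefore pick random operating states most of the time, while after episode 700 the agent almost always exploits its current Q-value estimates.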

Mean Squared Bellman Error (MSBE) is a fundamental metric [49] for evaluating approximation accuracy, formulating learning objectives, and investigating the theoretical properties of RL algorithms. The MSBE trend shows a consistent downward trajectory, starting from approximately and approaching zero as training progresses. This reduction indicates that the Q-value estimates become increasingly consistent with their Bellman targets, suggesting that the model has captured the underlying dynamics of the context.
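For a transition (s, a, r, s'), the Bellman error compares Q(s, a) with the target r + γ max over a' of Q(s', a'). In practice, DQN minimizes a sample-based estimate of this quantity, namely the mean squared temporal-difference error over a minibatch, with next-state values usually taken from a target network and sometimes with a Huber loss in place of the square. The plain-Python sketch below computes that estimate; the argument names and the default γ are assumptions. Note that the squared TD error is only an estimate of the MSBE, and it is biased when transitions are stochastic.

```python
def msbe(q_sa, rewards, next_q_values, dones, gamma=0.99):
    """Sample estimate of the mean squared Bellman error over a minibatch.

    q_sa:          Q(s, a) for the actions actually taken
    rewards:       observed rewards r
    next_q_values: Q(s', .) vectors, e.g. from a target network
    dones:         True where s' is terminal (no bootstrapping)
    """
    if not q_sa:
        raise ValueError("empty batch")
    errors = []
    for q, r, nq, done in zip(q_sa, rewards, next_q_values, dones):
        target = r if done else r + gamma * max(nq)  # one-step Bellman target
        errors.append((target - q) ** 2)
    return sum(errors) / len(errors)


print(msbe([1.0, 0.5], [1.0, 0.0], [[2.0, 0.0], [1.0, 1.0]], [False, True], gamma=0.5))
```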

Cumulative reward exhibits a strong upward trend, stabilizing at a high plateau (approximately at ) after 800 episodes. This trend confirms that the agent learns a control policy that maximizes the objective function, balancing energy efficiency and service quality according to the defined reward structure.
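The stability claims above (a reward plateau after about 800 episodes and stable trends over the last 200 episodes) can be checked with a simple windowed criterion. The following is a hypothetical heuristic, not the criterion used in this work: it compares the mean reward of the last window with that of the preceding window. `window=200` is borrowed from the text, while `rel_tol` is an arbitrary placeholder.

```python
import statistics


def has_plateaued(rewards, window=200, rel_tol=0.02):
    """True if the last window's mean reward is within rel_tol of the previous window's."""
    if len(rewards) < 2 * window:
        return False  # not enough episodes to compare two full windows
    last = statistics.fmean(rewards[-window:])
    prev = statistics.fmean(rewards[-2 * window:-window])
    return abs(last - prev) <= rel_tol * abs(prev)


# Hypothetical reward curves: one that rises then flattens, one that keeps rising.
print(has_plateaued(list(range(600)) + [600] * 400))
print(has_plateaued(list(range(1000))))
```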

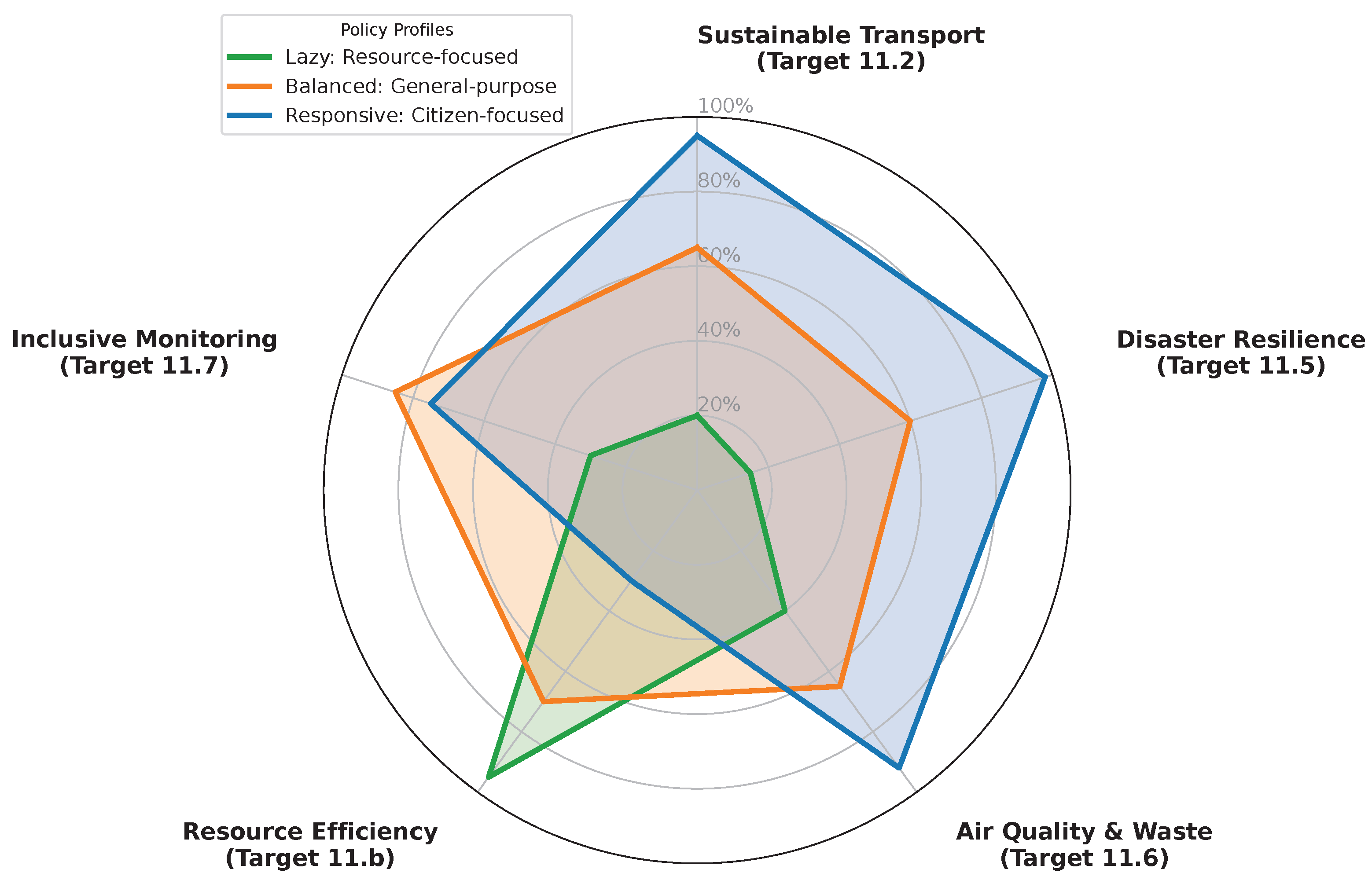

Figure 12 shows a radar chart of the multi-objective optimization policies resulting from our analysis, mapped onto a set of UN SDG 11 targets. This map is obtained by considering the set of heterogeneous metrics of the dynamic urban context defined in Equation (24).
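A radar chart requires metrics with heterogeneous units to share a common scale. A common choice, assumed here because the normalization behind Figure 12 is not detailed in this section, is min-max scaling across policies, inverting metrics for which lower values are better so that 1 always denotes the best policy on that axis. The function `normalize_for_radar`, the metric names, and the values are hypothetical, and the mapping from metrics to specific SDG 11 targets is not modeled.

```python
def normalize_for_radar(scores, lower_is_better):
    """scores: {policy: {metric: value}}; returns values in [0, 1] where 1 is best."""
    metrics = list(next(iter(scores.values())).keys())
    out = {policy: {} for policy in scores}
    for metric in metrics:
        values = [scores[p][metric] for p in scores]
        lo, hi = min(values), max(values)
        for p in scores:
            # Degenerate axis (all policies equal): place everyone at the midpoint.
            x = 0.5 if hi == lo else (scores[p][metric] - lo) / (hi - lo)
            out[p][metric] = 1.0 - x if metric in lower_is_better else x
    return out


# Hypothetical metrics: yearly energy (lower is better) and demand coverage.
scores = {
    "lazy": {"energy_kwh": 40.0, "coverage": 0.5},
    "balanced": {"energy_kwh": 60.0, "coverage": 0.75},
    "responsive": {"energy_kwh": 80.0, "coverage": 1.0},
}
print(normalize_for_radar(scores, lower_is_better={"energy_kwh"}))
```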

The responsive policy demonstrates a clear prioritization of Target 11.2 (Sustainable Transport) and Target 11.5 (Disaster Resilience and Safety). By proactively escalating to the high-performance state during traffic peaks, the agent ensures the information fidelity required to mitigate congestion and reduce emergency response times. This “citizen-centric” behavior acknowledges that a localized increase in energy consumption is a strategic investment to achieve systemic environmental benefits and public safety, directly supporting Target 11.6 (reducing the environmental impact of cities).

Conversely, the lazy policy aligns primarily with Target 11.b (Resource Efficiency). By maintaining the infrastructure in a low-power state for the majority of the operational cycle, it minimizes the carbon footprint and the operational expenditure. While this approach maximizes the physical and financial longevity of the CPS, it comes at the cost of lower performance in real-time mobility management.

Finally, the balanced policy identifies an equilibrium point for Target 11.7 (Inclusive and Reliable Monitoring). By acting as a stable baseline in its steady monitoring state, it provides continuous and reliable data flows without the energy surges of the responsive mode or the data scarcity of the lazy profile.

The analysis confirms that the DQN-based framework does not merely optimize a single technical trade-off but offers a set of alternative optimal strategies. This allows municipal decision-makers to dynamically tune the infrastructure behavior to specific sustainability priorities, from strict resource conservation to high-fidelity urban resilience.