Submitted: 20 April 2026

Posted: 20 April 2026

Abstract

Keywords:

1. Introduction

- 1.

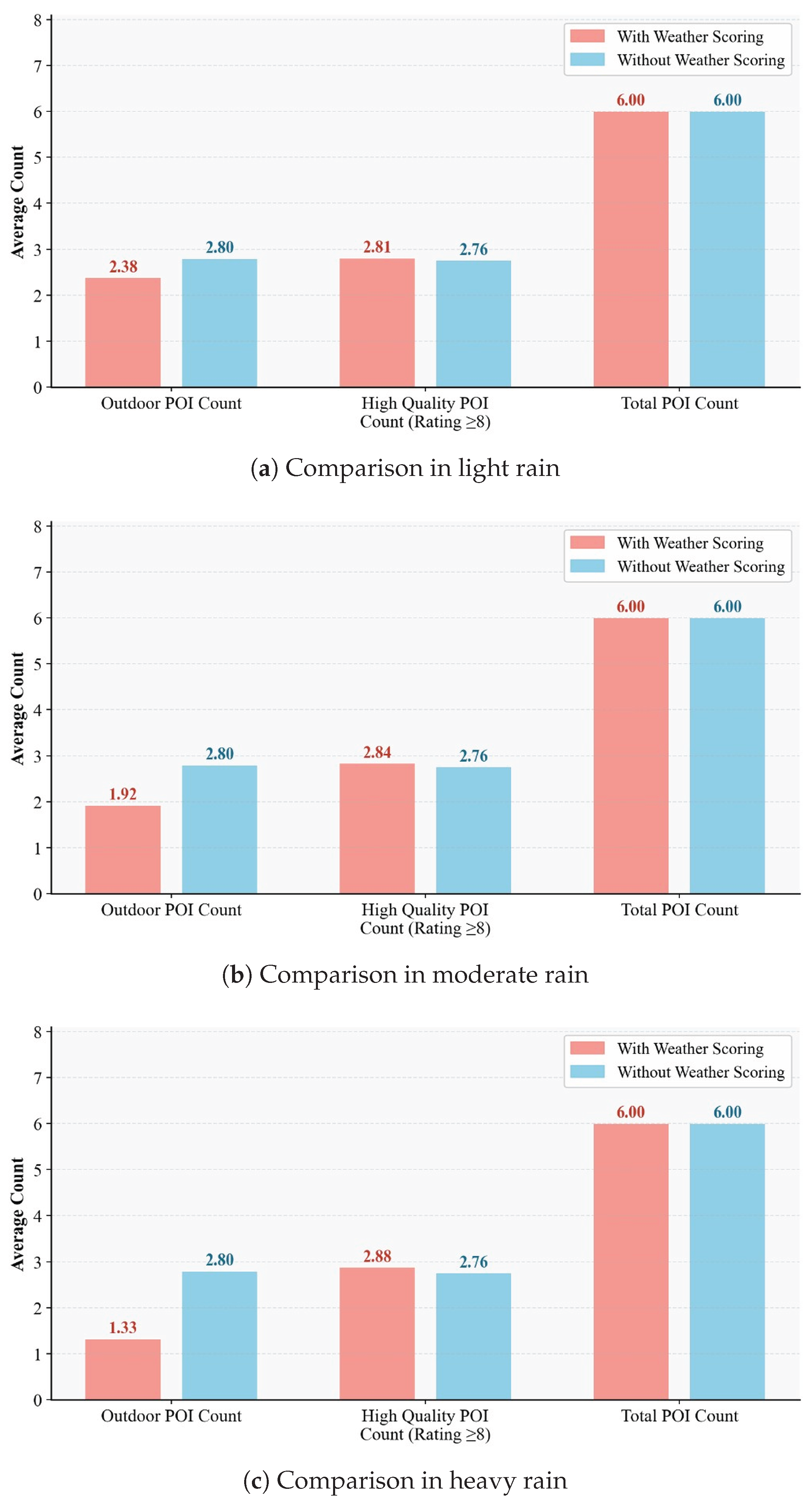

- A weather-adaptive POI scoring framework that maps nine distinct weather conditions (clear, drizzle, heavy drizzle, light shower rain, light rain, shower rain, moderate rain, extreme rain, and extreme weather) to three qualitatively differentiated scoring strategies, all modulated by the local density of indoor alternatives. This resolves the binary exclusion limitation of existing weather-aware systems while preserving qualitatively superior outdoor attractions within generated itineraries under mild adverse conditions.

- 2.

- A user-participatory evaluation architecture in which three integer sliders produce normalized weight coefficients , , (with ) that directly govern the GA fitness function across three sub-objectives: POI quality, traveling efficiency, and preference satisfaction. This mechanism minimizes the information burden on users while generating itineraries that transparently reflect individual traveler priorities.

2. Related Work

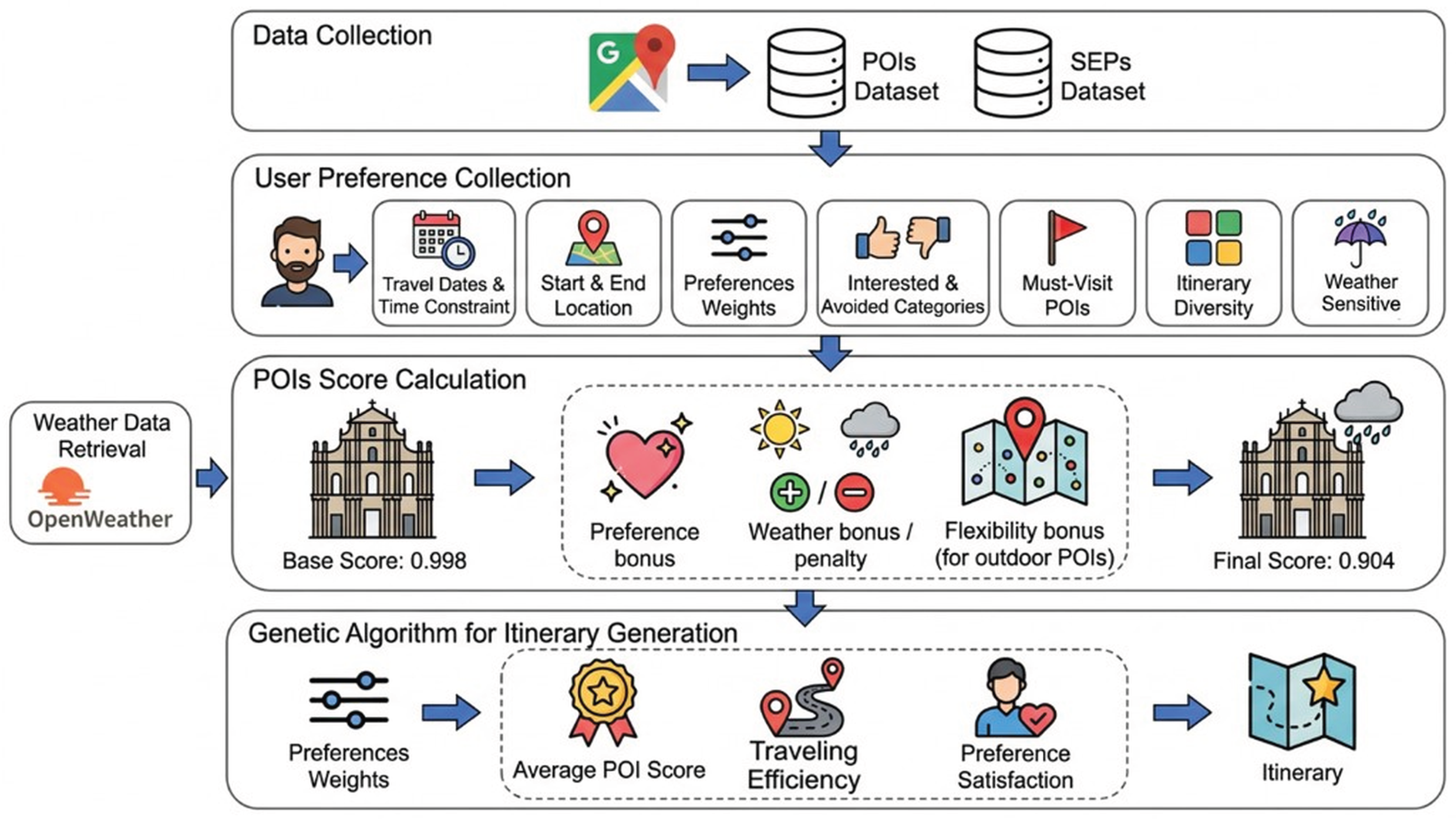

3. Overview Framework

3.1. Data Collection

3.2. User Preference Collection

3.3. Weather Data Retrieval

3.4. POI Score Calculation

3.5. Genetic Algorithm for Itinerary Generation

- Replace: A randomly selected POI in the day’s route is substituted with a new candidate drawn from the scored candidate pool. The replacement POI must not already appear elsewhere in the day’s route, preventing duplicate visits.

- Add: A new POI is appended to the day’s route, drawn from the top-10 highest-scored candidates not already in the route. This operator is only eligible when the current route contains fewer than 5 POIs, ensuring that route length remains manageable.

- Remove: A randomly selected POI is deleted from the day’s route. Must-visit POIs are excluded from removal eligibility, guaranteeing that user-mandated attractions are preserved across all mutations.
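The three mutation operators above can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the function names, the `scored_pool` dictionary layout, and the uniform random choice among eligible candidates are assumptions, while the top-10 pool and the 5-POI limit come from the operator descriptions.

```python
import random

MAX_ROUTE_LEN = 5  # Add is only eligible below this route length (from the text)
TOP_K = 10         # Add draws from the top-10 scored unused candidates (from the text)


def replace_poi(route, scored_pool, rng):
    """Replace: swap one random POI for a candidate not already in the route."""
    unused = [p for p in scored_pool if p not in route]
    if not route or not unused:
        return list(route)
    new_route = list(route)
    # The text states must-visit protection only for Remove, so none is applied here.
    new_route[rng.randrange(len(new_route))] = rng.choice(unused)
    return new_route


def add_poi(route, scored_pool, rng):
    """Add: append one of the top-10 unused candidates if the route has fewer than 5 POIs."""
    if len(route) >= MAX_ROUTE_LEN:
        return list(route)
    unused = [p for p in scored_pool if p not in route]
    if not unused:
        return list(route)
    top = sorted(unused, key=scored_pool.get, reverse=True)[:TOP_K]
    # Assumption: the pick among the top-10 is uniform at random.
    return list(route) + [rng.choice(top)]


def remove_poi(route, must_visit, rng):
    """Remove: delete one random POI, never a must-visit one."""
    removable = [p for p in route if p not in must_visit]
    if not removable:
        return list(route)
    new_route = list(route)
    new_route.remove(rng.choice(removable))
    return new_route


if __name__ == "__main__":
    rng = random.Random(42)
    pool = {f"poi_{i}": i / 20 for i in range(20)}  # hypothetical scored candidates
    day = ["poi_0", "poi_1", "poi_2"]
    print(replace_poi(day, pool, rng))
    print(add_poi(day, pool, rng))
    print(remove_poi(day, {"poi_0"}, rng))
```

Each operator returns a new list instead of mutating its input, which keeps parent routes intact when offspring are generated.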

4. Formal Algorithmic Specification

4.1. POI Score Calculation

4.1.1. Base Score Calculation & Review Count Log Normalization

4.1.2. Rain Penalty

4.1.3. Flexibility Bonus

4.1.4. Preference Score

4.2. Genetic Algorithm Fitness Function

4.2.1. POI Quality

4.2.2. Traveling Efficiency

4.2.3. Preference Satisfaction

5. Results and Discussion

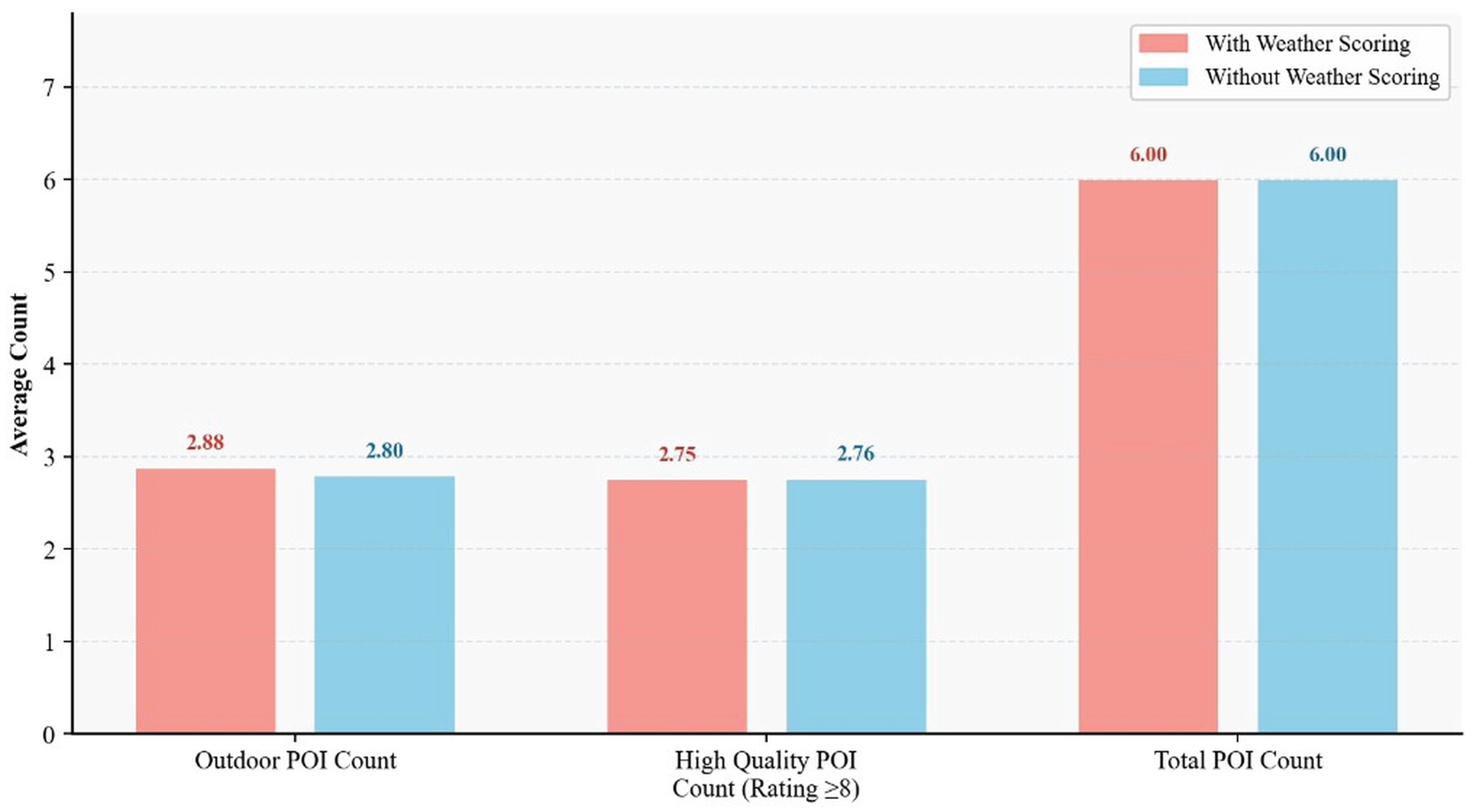

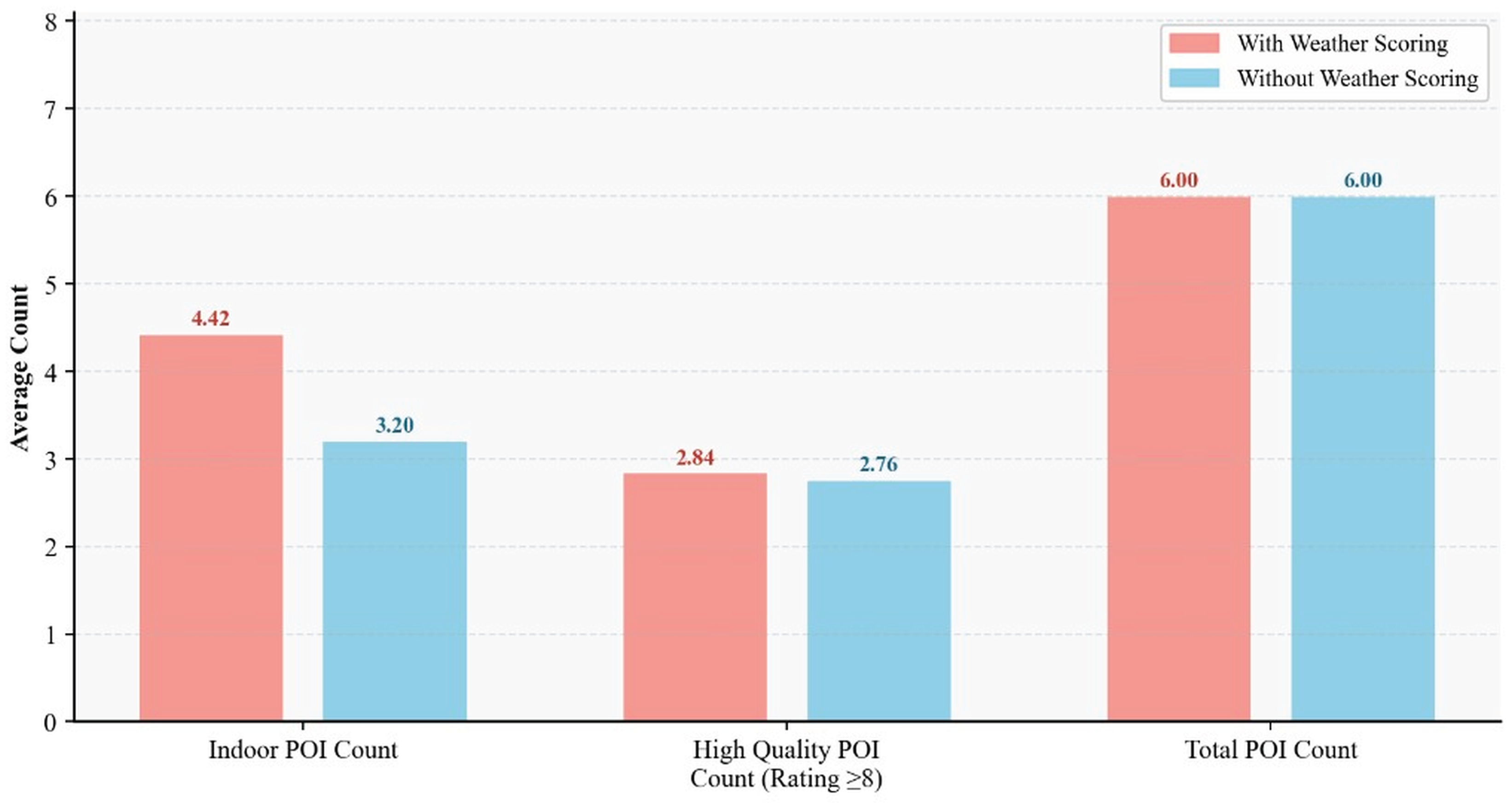

5.1. Weather-Adaptive Scoring Validation

5.2. User Preference Weight Sensitivity Analysis

5.2.1. Quality Weight Sensitivity

5.2.2. Traveling Efficiency Weight Sensitivity

5.2.3. Preference Satisfaction Weight Sensitivity

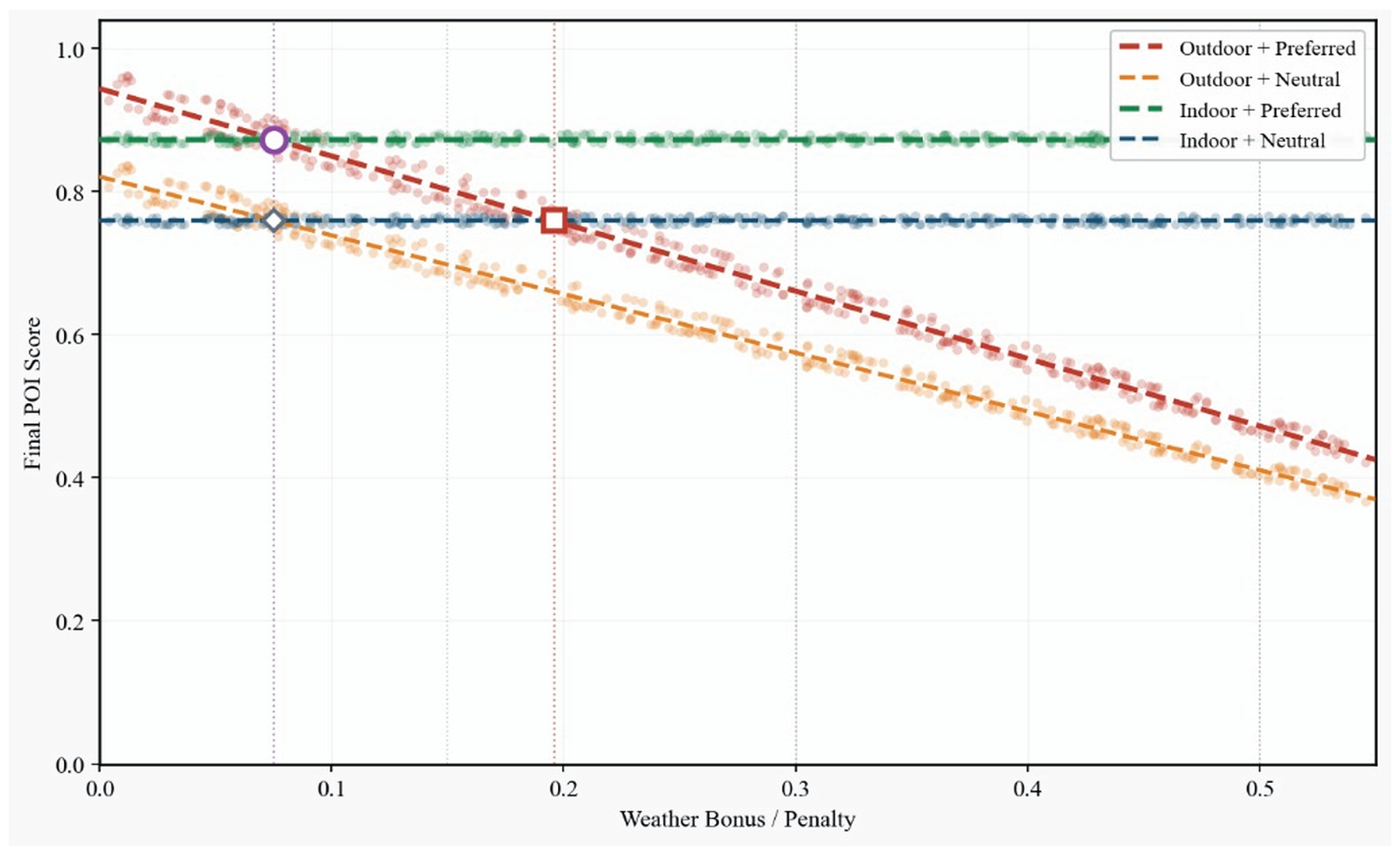

5.3. Weather–Preference Scoring Mechanism Compatibility

5.4. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| Weather Condition | Indoor POI: Pref. bonus | Outdoor POI: Pref. bonus | Outdoor POI: Flex. bonus | Outdoor POI: Rain penalty |
|---|---|---|---|---|
| Weather sensitivity disabled (non-extreme conditions) | ✓ | ✓ | − | − |
| Clear | ✓ | ✓† | − | − |
| Drizzle | ✓ | ✓ | ✓ | 15% |
| Light shower rain | ✓ | ✓ | ✓ | 25% |
| Heavy drizzle | ✓ | ✓ | ✓ | 30% |
| Light rain | ✓ | ✓ | ✓ | 30% |
| Shower rain | ✓ | ✓ | ✓ | 40% |
| Moderate rain | ✓ | ✓ | ✓ | 50% |
| Extreme rain | ✓* | ✓* | ✓* | 60% |
| Extreme weather | ✓* | ✓* | ✓* | 60% |

† Clear: outdoor POIs additionally receive an outdoor log-bonus.

\* Extreme rain and official alert: indoor POIs receive a 10% score boost, and their preference bonus applies at 50% effectiveness. For outdoor POIs, the preference bonus applies at 30% effectiveness, and the flexibility bonus still applies under extreme weather conditions.
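The modifiers in the table above can be encoded as a small lookup, sketched below. The percentages and effectiveness factors are taken from the table and its footnotes; everything else is an assumption made for illustration: the names `RAIN_PENALTY`, `Modifiers`, and `weather_modifiers`, the reading of the rain penalty as a multiplicative reduction of the score, and the `weather_sensitive` flag used to model the first table row. How these modifiers combine with the base score, flexibility bonus, and preference score (Section 4.1) is not reproduced here, and the outdoor log-bonus for clear weather is not modeled.

```python
from typing import NamedTuple

# Rain penalty for outdoor POIs, as fractions (values from the table).
RAIN_PENALTY = {
    "clear": 0.0,
    "drizzle": 0.15,
    "light shower rain": 0.25,
    "heavy drizzle": 0.30,
    "light rain": 0.30,
    "shower rain": 0.40,
    "moderate rain": 0.50,
    "extreme rain": 0.60,
    "extreme weather": 0.60,
}
EXTREME = {"extreme rain", "extreme weather"}


class Modifiers(NamedTuple):
    score_multiplier: float    # assumed multiplicative effect on the POI score
    pref_effectiveness: float  # fraction of the preference bonus that applies
    flex_bonus: bool           # whether the flexibility bonus applies


def weather_modifiers(condition, is_outdoor, weather_sensitive=True):
    """Return the table's modifiers for one POI under one weather condition."""
    penalty = RAIN_PENALTY[condition]  # raises KeyError for unknown conditions
    extreme = condition in EXTREME
    if not weather_sensitive and not extreme:
        # First table row: full preference bonus, no penalty, no flexibility bonus.
        return Modifiers(1.0, 1.0, False)
    if is_outdoor:
        # Outdoor: rain penalty, preference bonus at 30% in extreme conditions.
        return Modifiers(1.0 - penalty, 0.3 if extreme else 1.0, penalty > 0)
    # Indoor: 10% boost and preference bonus at 50% in extreme conditions.
    # The table lists the flexibility bonus for outdoor POIs only.
    return Modifiers(1.10 if extreme else 1.0, 0.5 if extreme else 1.0, False)


if __name__ == "__main__":
    for cond in ("clear", "light rain", "extreme rain"):
        print(cond, weather_modifiers(cond, is_outdoor=True))
```

Keeping the table as data makes the graded penalties easy to audit against the paper and avoids the binary outdoor exclusion that the framework is designed to replace.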

| Slider Label | Integer Input | Weight Coefficient | Sub-Objective |
|---|---|---|---|
| Score | | | POI Quality |
| Distance | | | Traveling Efficiency |
| Preference | | | Preference Satisfaction |
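As a minimal sketch of the slider mechanism in the table above, the three integer inputs can be turned into weights and applied to the three sub-objectives. The symbols and exact formulas are not recoverable from this section, so two assumptions are made: each weight is its slider value divided by the slider total, and the fitness is a weighted sum of the sub-objective values. The function and argument names are hypothetical.

```python
def normalize_sliders(score, distance, preference):
    """Turn three integer slider values into weights that sum to one.

    Assumption: each weight is the slider value divided by the slider total.
    """
    total = score + distance + preference
    if min(score, distance, preference) < 0 or total <= 0:
        raise ValueError("sliders must be non-negative with a positive total")
    return score / total, distance / total, preference / total


def fitness(quality, efficiency, satisfaction, weights):
    """Weighted sum of the three sub-objectives (assumed aggregation form)."""
    w_quality, w_distance, w_preference = weights
    return (w_quality * quality
            + w_distance * efficiency
            + w_preference * satisfaction)


if __name__ == "__main__":
    default = normalize_sliders(4, 3, 3)       # default setting used in Section 5.2
    quality_first = normalize_sliders(8, 1, 1)  # quality-focused setting
    # Hypothetical sub-objective values for one candidate itinerary.
    print(fitness(0.9, 0.5, 0.6, default))
    print(fitness(0.9, 0.5, 0.6, quality_first))
```

Under these assumptions, moving from the 4:3:3 setting to 8:1:1 raises the contribution of POI quality to the fitness from 40% to 80%, which is the kind of shift examined in the sensitivity analysis.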

| Preference Setting | Avg. POI Score (Default slider 4:3:3) | Avg. POI Score (Quality slider 8:1:1) |
|---|---|---|
| No preference | 0.890 | 0.890 |
| Museum | 0.856 | 0.940 |
| Park | 0.759 | 0.931 |
| Tourist attraction | 0.802 | 0.860 |
| Historical landmark | 0.723 | 0.889 |
| Garden | 0.883 | 0.956 |
| Shopping (no museum) | 0.875 | 0.887 |
| Museum+Landmark | 0.679 | 0.806 |
| Park+Garden | 0.769 | 0.898 |
| Attraction+Church | 0.840 | 0.840 |
| Catholic Church | 0.758 | 0.950 |
| Buddhist Temple | 0.747 | 0.865 |
| Taoist Temple | 0.764 | 0.899 |
| Beach | 0.843 | 0.933 |
| Observation Deck | 0.867 | 0.931 |
| Religious (3 faiths) | 0.668 | 0.929 |
| Park+Garden+Beach | 0.704 | 0.938 |
| Museum+Landmark+Church | 0.839 | 0.875 |
| Attraction+Museum (no temples) | 0.869 | 0.869 |
| Attraction+Park+Museum | 0.831 | 0.955 |
| Average | 0.798 | 0.902 |

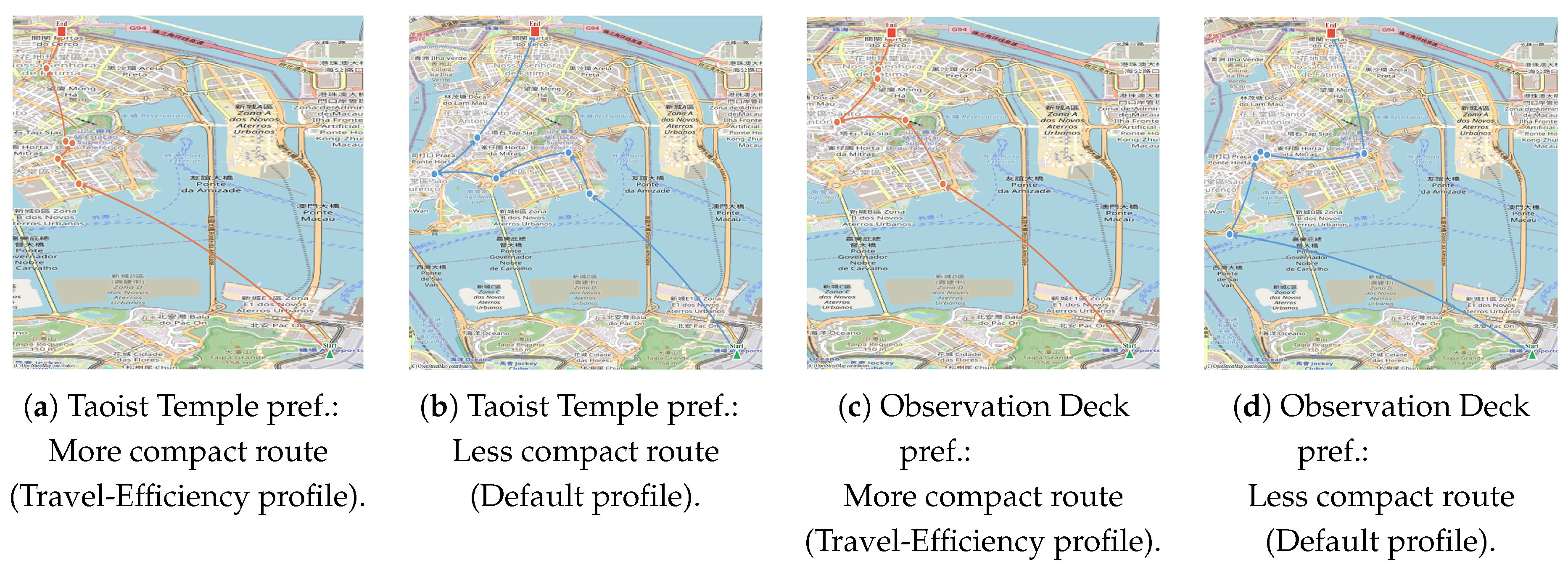

| Preference Setting | Default slider (4:3:3) | Travel-Efficiency slider (1:8:1) |
|---|---|---|
| No preference | 1.207 | 1.207 |
| Museum | 1.288 | 1.071 |
| Park | 1.313 | 1.108 |
| Tourist attraction | 1.369 | 1.112 |
| Historical landmark | 1.057 | 1.057 |
| Garden | 1.301 | 1.072 |
| Shopping (no museum) | 1.197 | 1.106 |
| Museum+Landmark | 1.261 | 1.076 |
| Park+Garden | 1.218 | 1.186 |
| Attraction+Church | 1.327 | 1.159 |
| Catholic Church | 1.275 | 1.157 |
| Buddhist Temple | 1.240 | 1.149 |
| Taoist Temple | 1.379 | 1.057 |
| Beach | 1.286 | 1.114 |
| Observation Deck | 1.448 | 1.146 |
| Religious (3 faiths) | 1.204 | 1.145 |
| Park+Garden+Beach | 1.243 | 1.136 |
| Museum+Landmark+Church | 1.244 | 1.174 |
| Attraction+Museum (no temples) | 1.220 | 1.175 |
| Attraction+Park+Museum | 1.195 | 1.141 |
| Average | 1.264 | 1.127 |

| Preference Setting | Default slider (4:3:3) | Preference slider (1:1:8) |
|---|---|---|
| No preference | 0 | 0 |
| Museum | 3 | 3 |
| Park | 4 | 4 |
| Tourist attraction | 3 | 4 |
| Historical landmark | 1 | 3 |
| Garden | 0 | 3 |
| Shopping (no museum) | 4 | 4 |
| Museum+Landmark | 5 | 5 |
| Park+Garden | 3 | 5 |
| Attraction+Church | 5 | 5 |
| Catholic Church | 3 | 4 |
| Buddhist Temple | 1 | 3 |
| Taoist Temple | 1 | 2 |
| Beach | 0 | 1 |
| Observation Deck | 0 | 2 |
| Religious (3 faiths) | 4 | 5 |
| Park+Garden+Beach | 4 | 4 |
| Museum+Landmark+Church | 4 | 5 |
| Attraction+Museum (no temples) | 4 | 5 |
| Attraction+Park+Museum | 5 | 5 |
| Average (19 with preferences) | 2.84 | 3.79 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).