Submitted: 17 April 2026

Posted: 20 April 2026

Abstract

Keywords:

1. Summary

- The RAW-FABRID dataset provides a publicly available, comprehensively annotated benchmark for raw fabric defect detection. This addresses a major limitation in textile inspection research, where most existing studies rely on private or poorly documented datasets, which restrict objective comparison and reproducibility of results [5].

- The dataset enables researchers to evaluate and compare computer vision and machine learning algorithms under consistent and well-documented acquisition conditions, facilitating fair benchmarking of defect detection and anomaly detection methods.

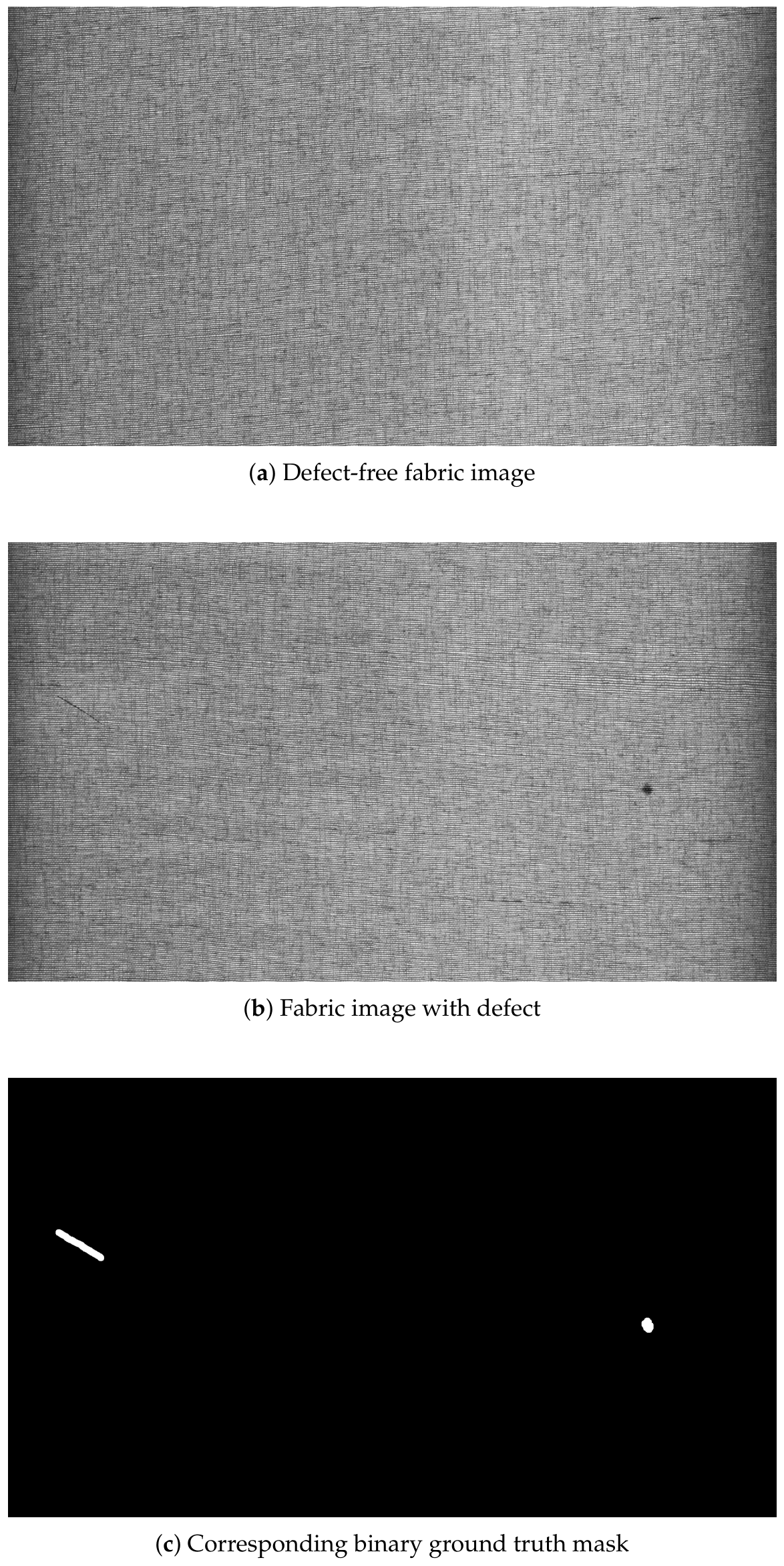

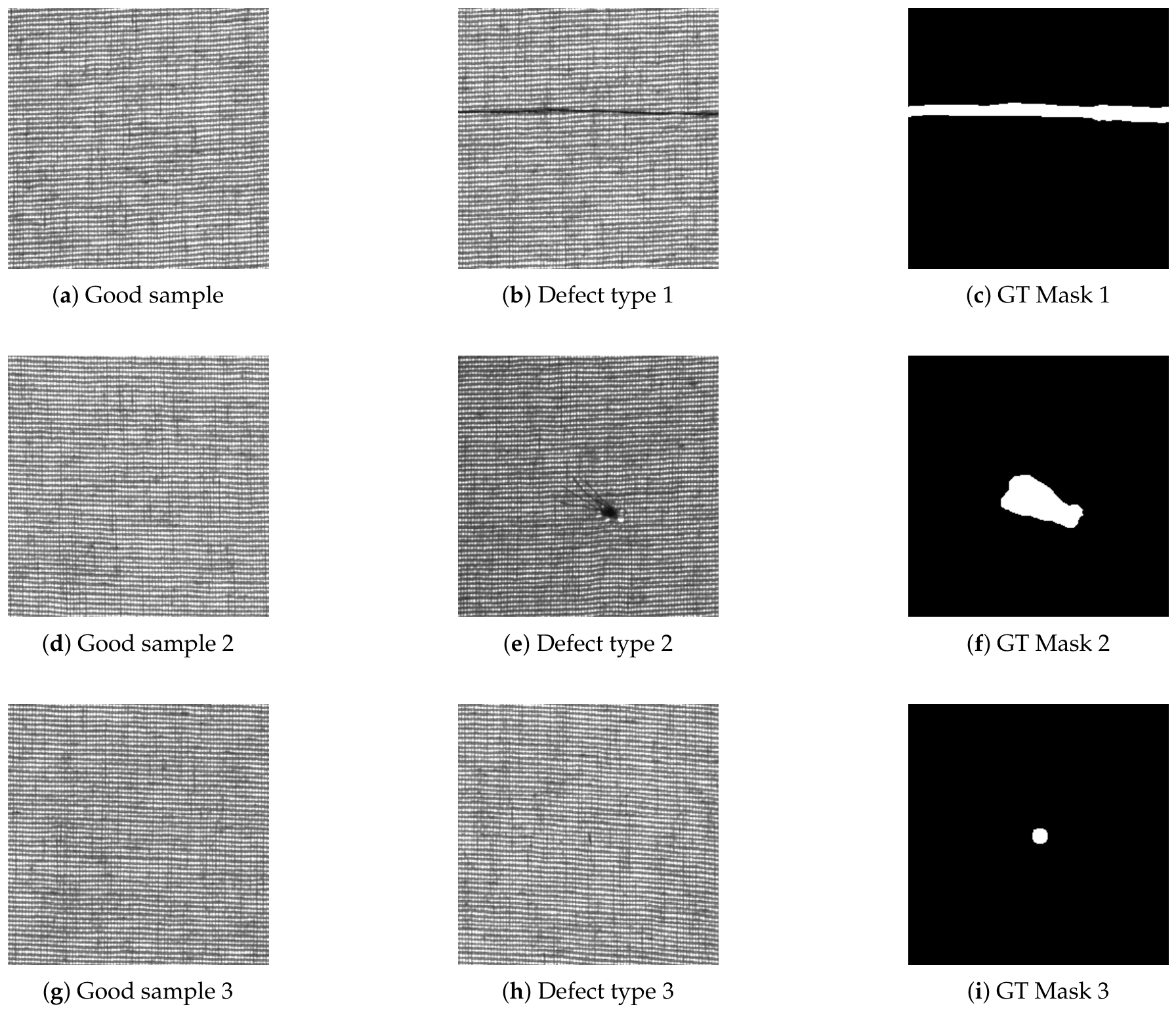

- The inclusion of high-resolution images with precise binary ground truth masks, further enriched by COCO-formatted bounding boxes and polygon annotations, allows for detailed spatial analysis of fabric defects. This supports the development of both traditional segmentation-based methods and advanced high-precision object detection algorithms.

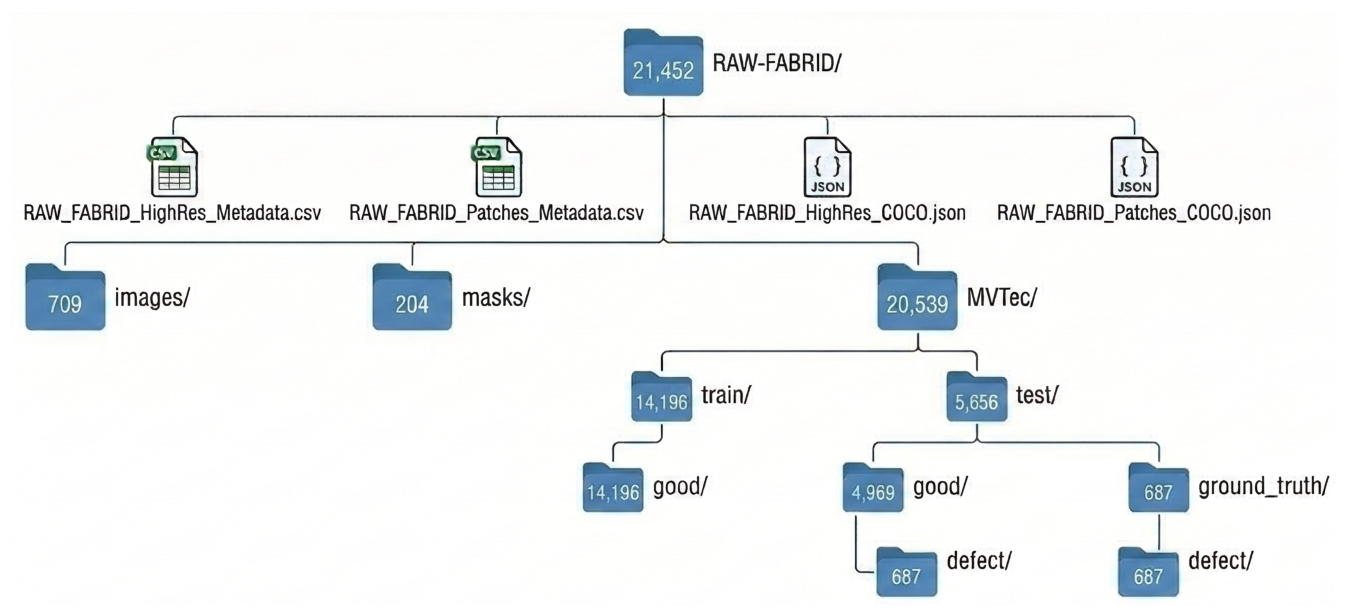

- Reusability is supported by a dual data organization strategy and full data traceability. The dataset is provided both as high-resolution 2D grayscale images (cropped to 1792×1024 pixels to remove peripheral acquisition artifacts) with corresponding masks, and as 256×256 pixel patches organized according to the widely adopted MVTec Anomaly Detection benchmark structure [6]. This dual format, accompanied by detailed CSV metadata, supports both custom high-resolution processing workflows and standardized deep learning pipelines.
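
The relationship between the full-resolution images, the 256×256 patches, and COCO-style bounding boxes can be illustrated with a minimal Python sketch. It is not the authors' preprocessing code: it assumes non-overlapping tiling, assumes that 1792×1024 means width × height, and uses synthetic arrays in place of real dataset files. The function names `tile_image` and `mask_to_coco_bbox` are hypothetical.

```python
import numpy as np

PATCH = 256  # patch side length stated in the text


def tile_image(image: np.ndarray, patch: int = PATCH) -> np.ndarray:
    """Split an (H, W) array into non-overlapping (patch, patch) tiles.

    Returns an array of shape (rows, cols, patch, patch). Requires H and W
    to be exact multiples of `patch`, which holds for 1024 x 1792.
    """
    height, width = image.shape
    if height % patch or width % patch:
        raise ValueError("image size must be a multiple of the patch size")
    rows, cols = height // patch, width // patch
    return image.reshape(rows, patch, cols, patch).swapaxes(1, 2)


def mask_to_coco_bbox(mask: np.ndarray):
    """Return a COCO-style [x, y, width, height] box around nonzero pixels.

    Returns None when the mask contains no defect pixels.
    """
    ys, xs = np.nonzero(mask)
    if ys.size == 0:
        return None
    x0, y0 = int(xs.min()), int(ys.min())
    return [x0, y0, int(xs.max()) - x0 + 1, int(ys.max()) - y0 + 1]


# Synthetic stand-ins for one grayscale image and its binary mask
# (assumed layout: height 1024, width 1792).
image = np.zeros((1024, 1792), dtype=np.uint8)
mask = np.zeros_like(image)
mask[300:340, 500:620] = 255  # hypothetical rectangular defect

tiles = tile_image(image)
mask_tiles = tile_image(mask)

# A patch counts as defective here if its mask tile has any nonzero pixel.
defective = mask_tiles.reshape(-1, PATCH, PATCH).any(axis=(1, 2))
bbox = mask_to_coco_bbox(mask)

print(tiles.shape, int(defective.sum()), bbox)
```

Under these assumptions each image yields 4 × 7 = 28 patches, and a defect that straddles a tile boundary marks more than one patch as defective. The rule used in the dataset to label a patch as defective may differ from the "any nonzero pixel" rule in this sketch.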

2. Data Description

3. Methods

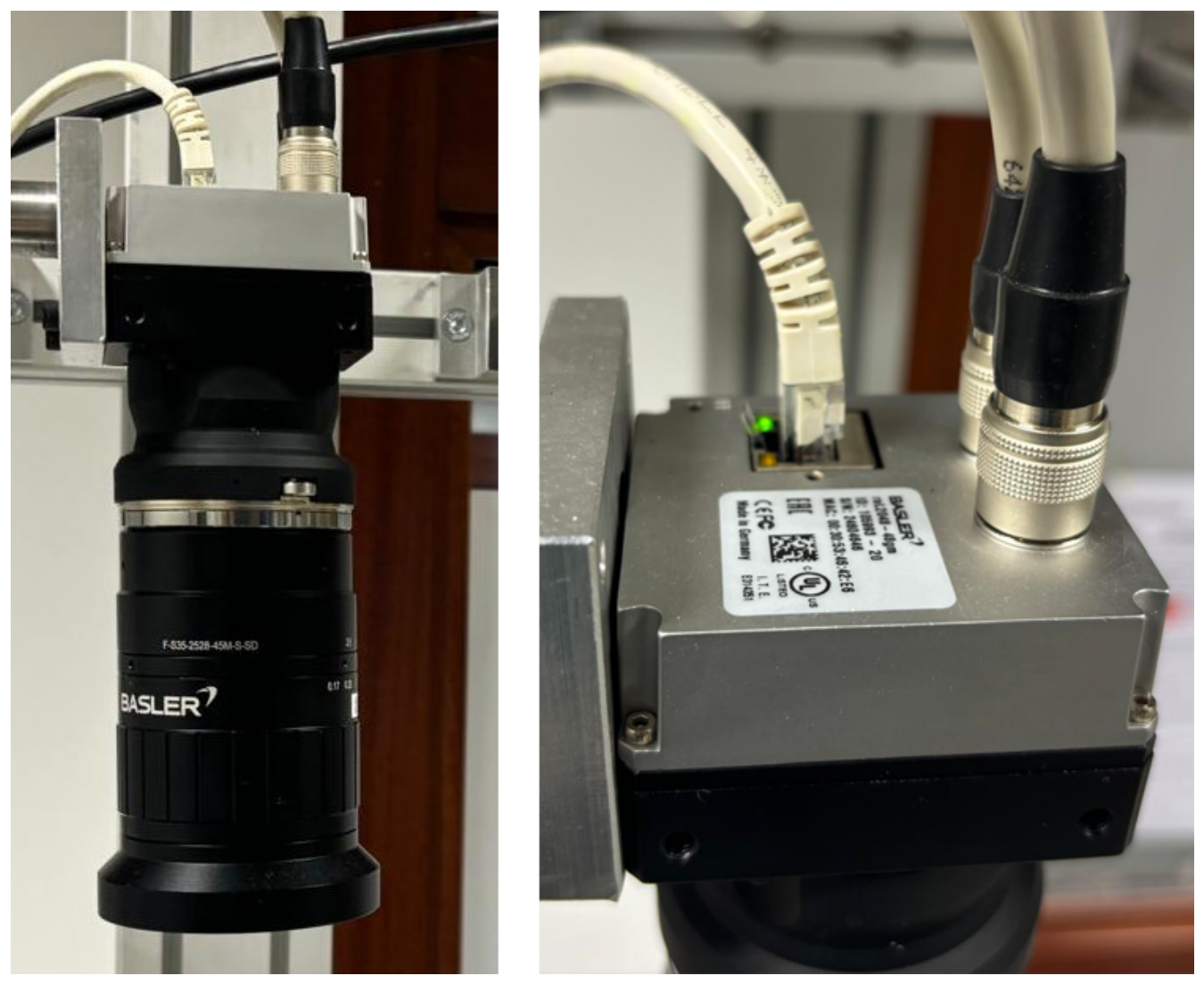

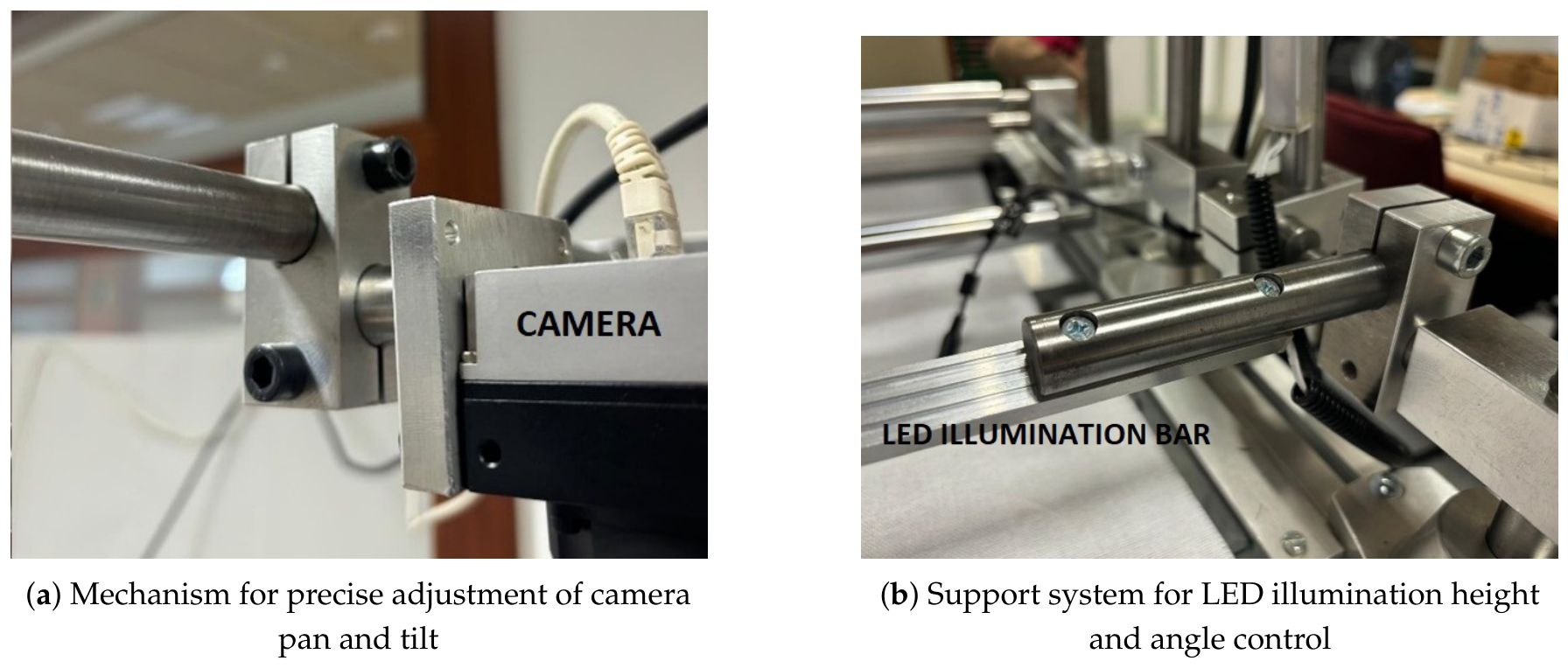

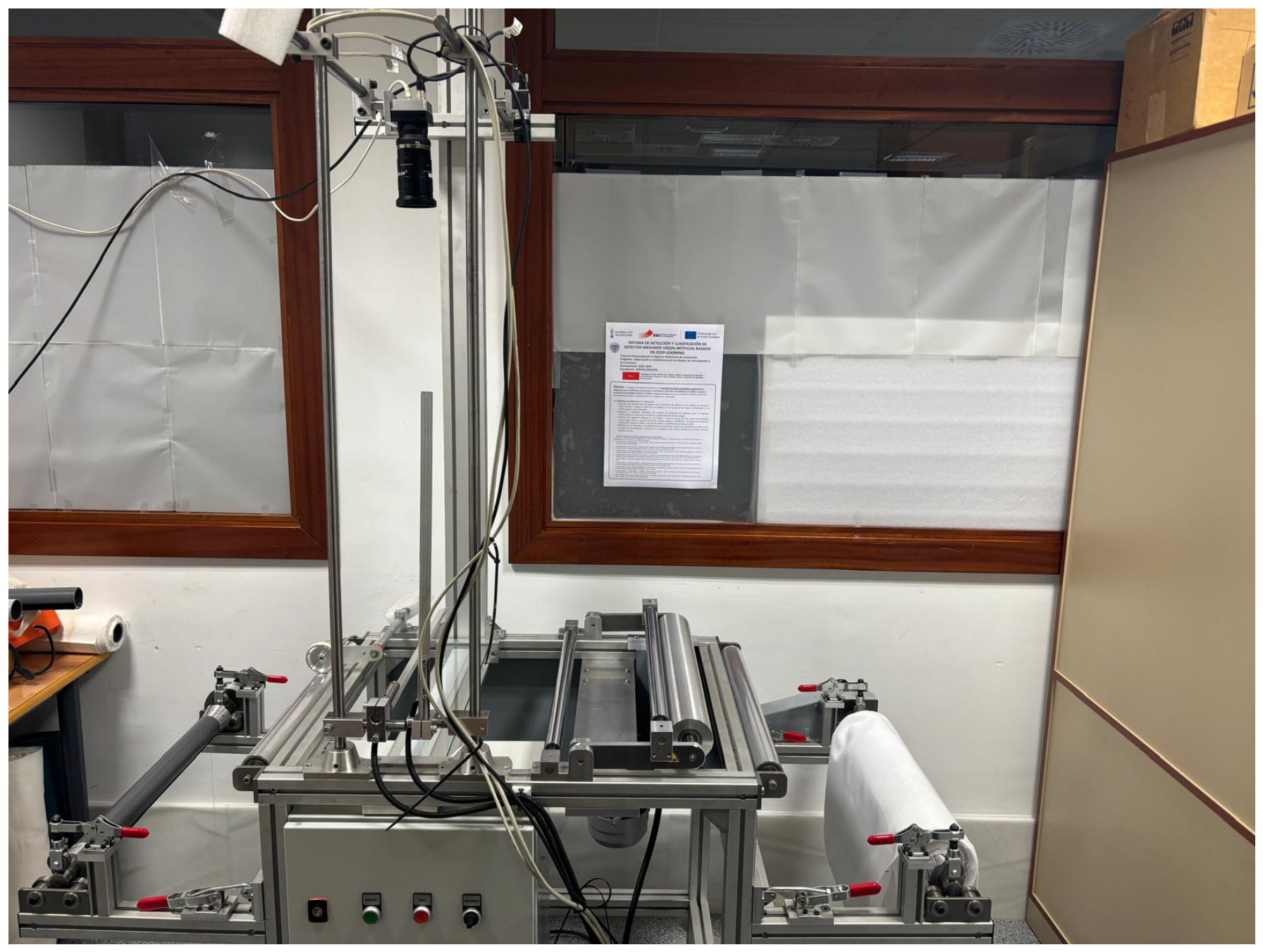

3.1. Data Acquisition System

3.2. Defect Annotation Procedure

3.3. Image Preprocessing and Formatting

4. User Notes

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| RAW-FABRID | RAW FABRic Image Dataset |
| LED | Light-Emitting Diode |
| MVTec | Machine Vision Technologies |
| CSV | Comma-Separated Values |
| COCO | Common Objects in Context |
| JSON | JavaScript Object Notation |
| CNN | Convolutional Neural Network |
| ViT | Vision Transformer |
| GT | Ground Truth |

References

- Pérez-Llorens, R.; Albero-Albero, T.; Silvestre-Blanes, J. RAW-FABRID: RAW FABRic Image Dataset for defect detection. Mendeley Data, V1, 2026.

- Kumar, A. Computer-vision-based fabric defect detection: A survey. IEEE Transactions on Industrial Electronics 2008, 55, 348–363.

- Hanbay, K.; Talu, M.F.; Özgüven, Ö.F. Fabric defect detection systems and methods: A systematic literature review. Optik 2016, 127, 11960–11973.

- Silvestre-Blanes, J.; Pérez-Lloréns, R. System for Defect Detection and Classification Using Artificial Vision Based on Deep Learning [Research Project]. Available at: https://www.upv.es/entidades/epsa/proyectos-de-investigacion-en-curso/tejidos-deep-learning/, 2022. (Accessed: 20 March 2026).

- Ngan, H.Y.T.; Pang, G.K.H.; Yung, N.H.C. Automated fabric defect detection—A review. Image and Vision Computing 2011, 29, 442–458.

- Bergmann, P.; Fauser, M.; Sattlegger, D.; Steger, C. MVTec AD–A Comprehensive Real-World Dataset for Unsupervised Anomaly Detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2019, pp. 9592–9600.

- Lin, T.Y.; Maire, M.; Belongie, S.; Bourdev, L.; Girshick, R.; Hays, J.; Perona, P.; Ramanan, D.; Zitnick, C.L.; Dollár, P. Microsoft COCO: Common Objects in Context. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2014, pp. 740–748.

- COCO Consortium. COCO Dataset Data Format. Available at: https://cocodataset.org/#format-data, 2026. (Accessed: 17 March 2026).

| Category | Original Images | Train Patches | Test Patches | Total Patches |
|---|---|---|---|---|
| Defect-free (Good) | 505 | 14196 | 4969 | 19165 |
| Defective | 204 | - | 687 | 687 |
| Total | 709 | 14196 | 5656 | 19852 |
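
The counts in this table are consistent with each image being split into 28 non-overlapping 256×256 patches (7 × 4 for a 1792×1024 image). This is an inference from the numbers, not a procedure stated in the text. The short Python check below reproduces the arithmetic; it treats the "-" entry as zero, which is also an assumption.

```python
# Patches per image under the assumed non-overlapping 256x256 tiling.
PATCHES_PER_IMAGE = (1792 // 256) * (1024 // 256)  # 7 * 4 = 28

# Values copied from the table; "-" is treated as 0 (assumption).
images = {"good": 505, "defective": 204}
patches = {
    "good": {"train": 14196, "test": 4969},
    "defective": {"train": 0, "test": 687},
}

total_images = sum(images.values())
total_patches = sum(sum(split.values()) for split in patches.values())

# Every image contributes the same number of patches.
assert total_images == 709
assert total_images * PATCHES_PER_IMAGE == total_patches == 19852

# Patches cut from defective images that contain no defect would be
# counted as defect-free, which accounts for the "Good" total.
good_from_defective = (
    images["defective"] * PATCHES_PER_IMAGE - patches["defective"]["test"]
)
good_total = sum(patches["good"].values())
assert images["good"] * PATCHES_PER_IMAGE + good_from_defective == good_total

# Split totals match the last table row.
train_total = sum(split["train"] for split in patches.values())
test_total = sum(split["test"] for split in patches.values())
assert (train_total, test_total) == (14196, 5656)

print(PATCHES_PER_IMAGE, good_from_defective, good_total)
```

If the tiling assumption holds, 5025 of the 19165 defect-free patches come from defect-free regions of defective images, and no defective patches are placed in the training split.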

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).