Submitted: 16 April 2026

Posted: 17 April 2026

Abstract

Keywords:

1. Introduction

2. Background and Related Work

2.1. Adversarial Attacks in Deep Learning

2.2. Transferability of Adversarial Examples

2.3. Medical Image Datasets and Domain Characteristics

2.4. Adversarial Robustness in Medical Imaging

2.5. Evaluation Metrics and Research Gap

3. Methodology

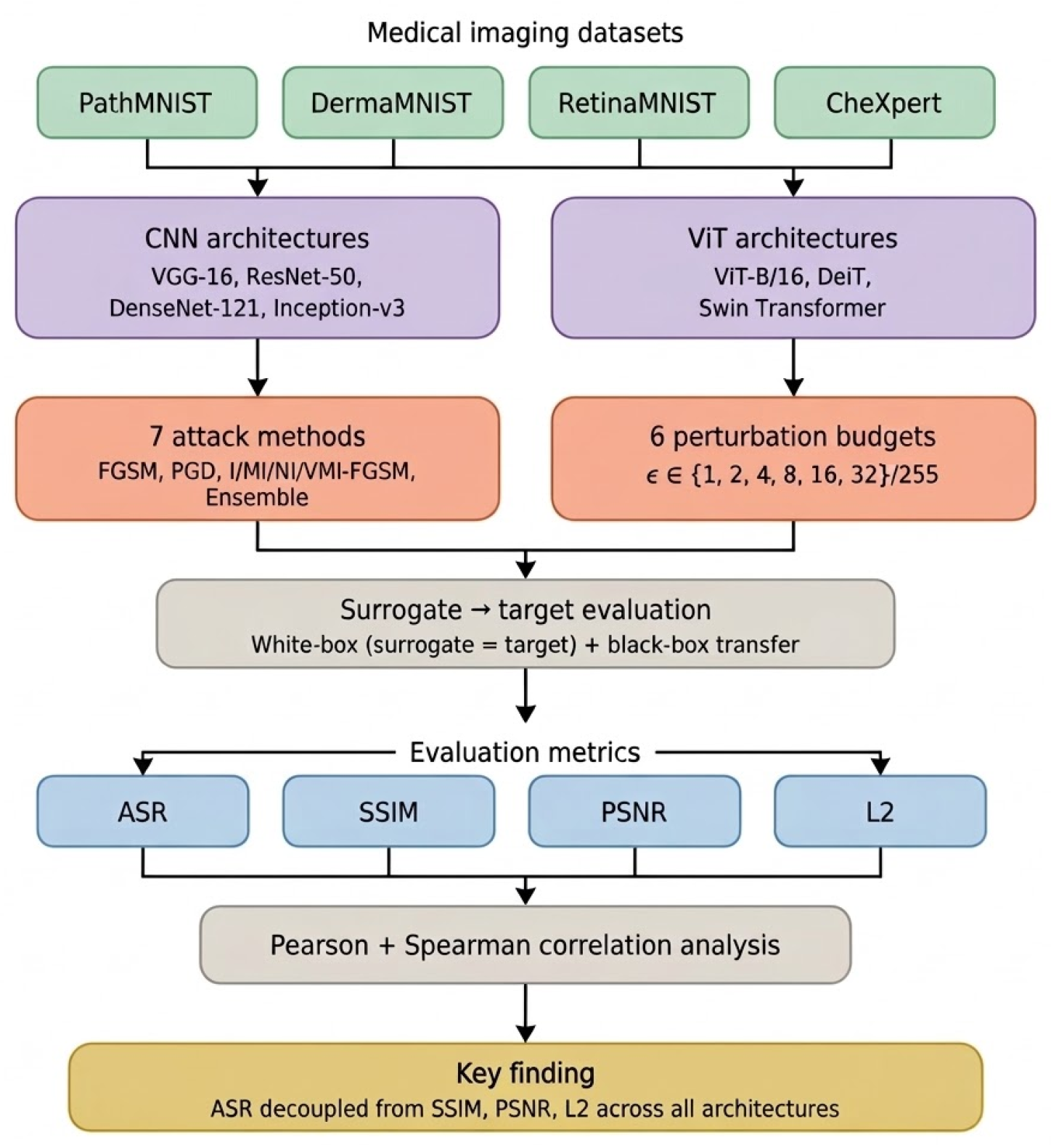

3.1. Overview

3.2. Datasets

3.3. Model Architectures

3.4. Adversarial Attacks

3.5. Perturbation Settings

3.6. Evaluation Metrics

3.7. Threat Model

4. Experiments

4.1. Experimental Setup

4.2. Evaluation Protocol

4.3. Correlation Analysis

4.4. Implementation Details

5. Results

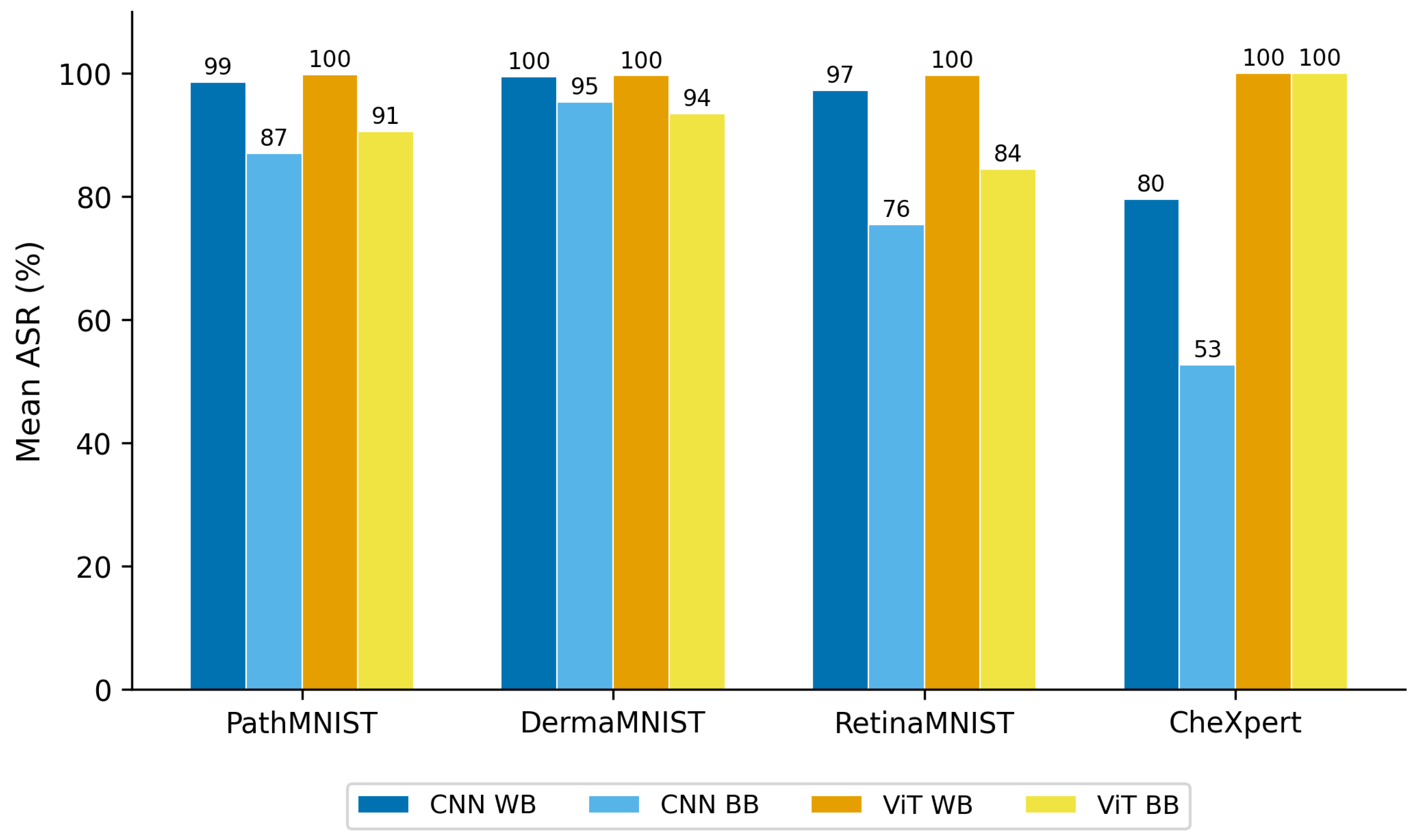

5.1. Dataset-Level Adversarial Effectiveness

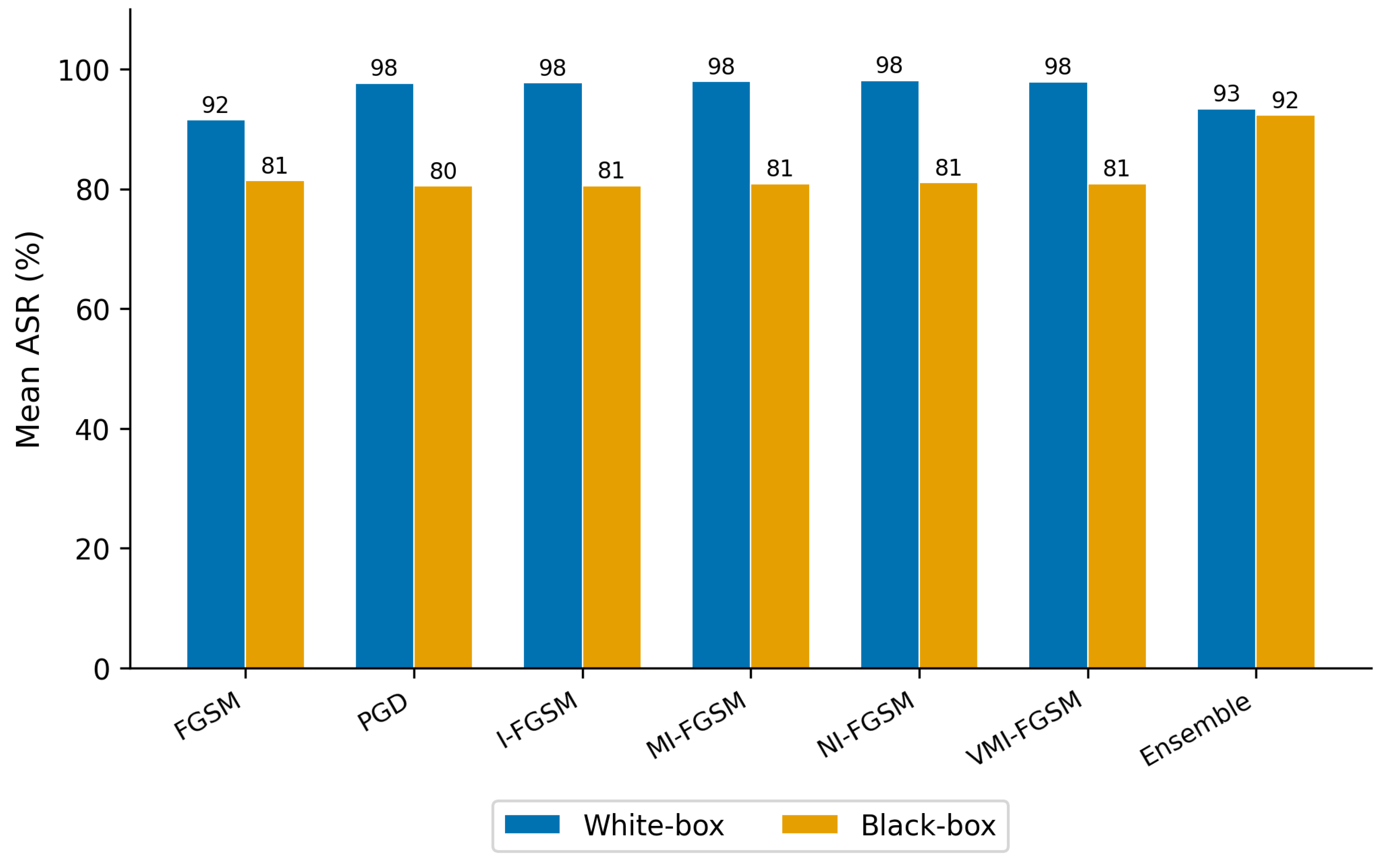

5.2. Attack Method Comparison

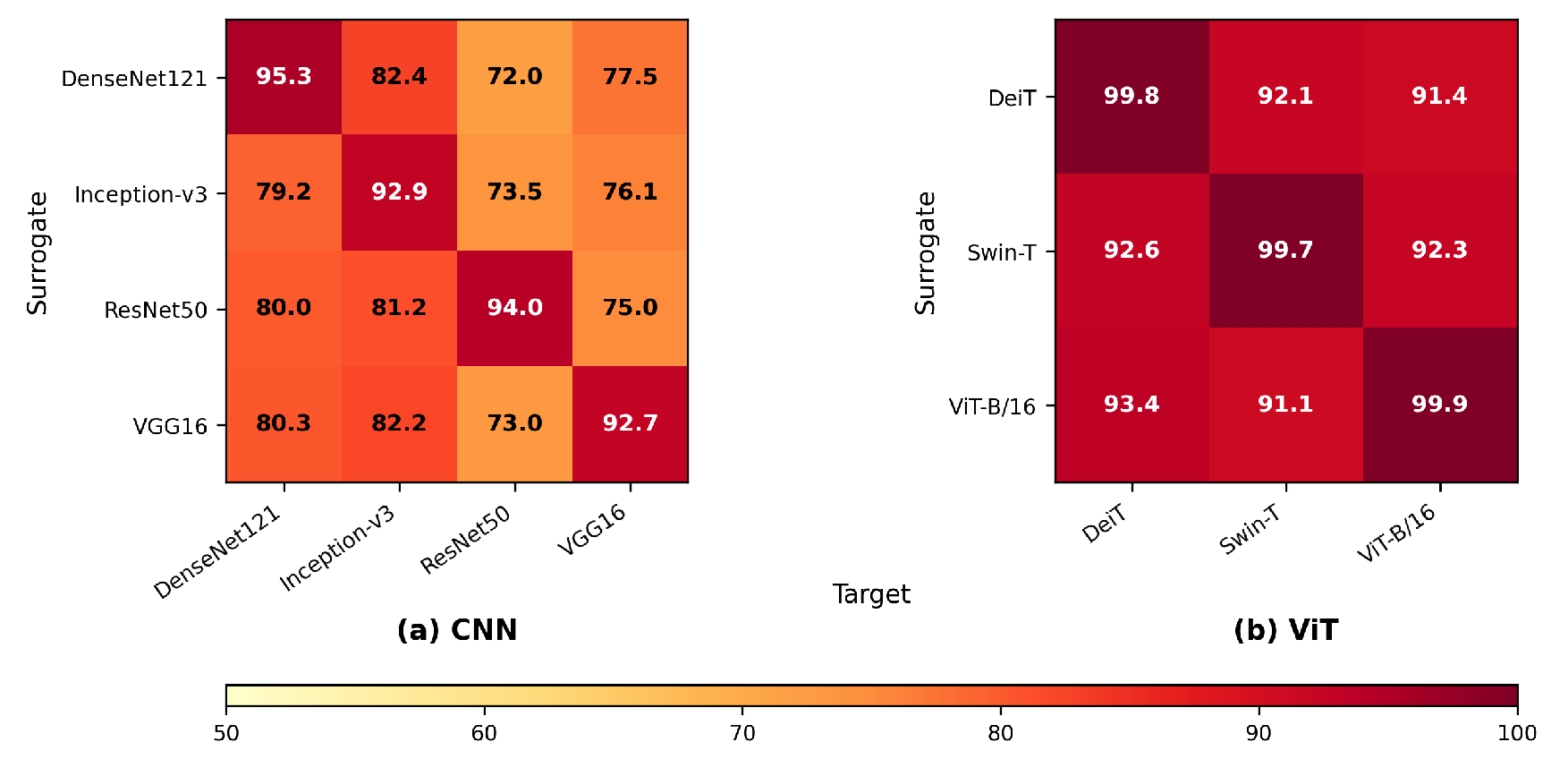

5.3. Adversarial Transferability Across Models

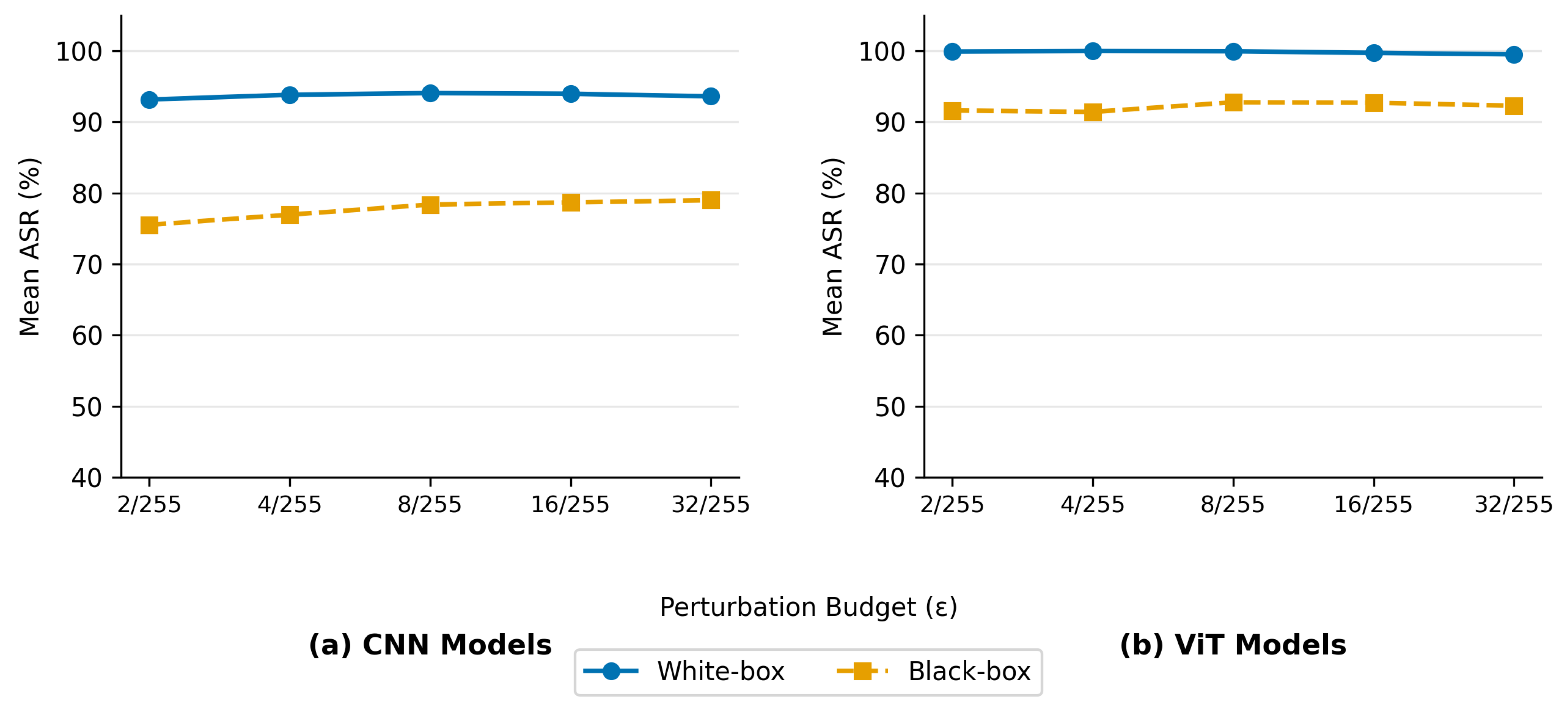

5.4. Effect of Perturbation Budget

5.5. Correlation Analysis: ViT Models

5.6. Correlation Analysis: CNN Models

5.7. Overall Correlation Analysis (CNN + ViT)

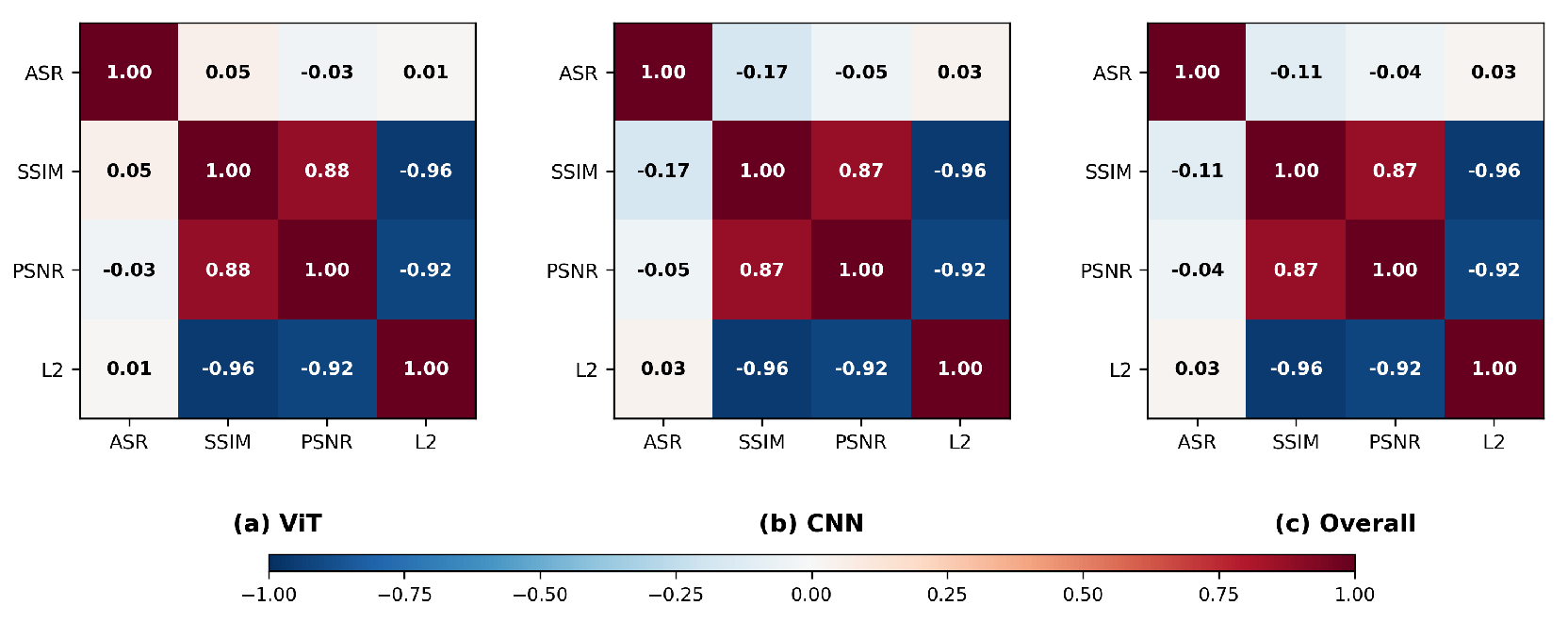

- Metric Coupling: Perceptual and distortion-based metrics form a tightly coupled cluster. Specifically, SSIM, PSNR, and $L_2$ distortion are strongly interrelated ($|r| \geq 0.87$, $|\rho| \geq 0.97$ in every correlation table). This reflects the intuitive relationship between perturbation magnitude and perceptual degradation.

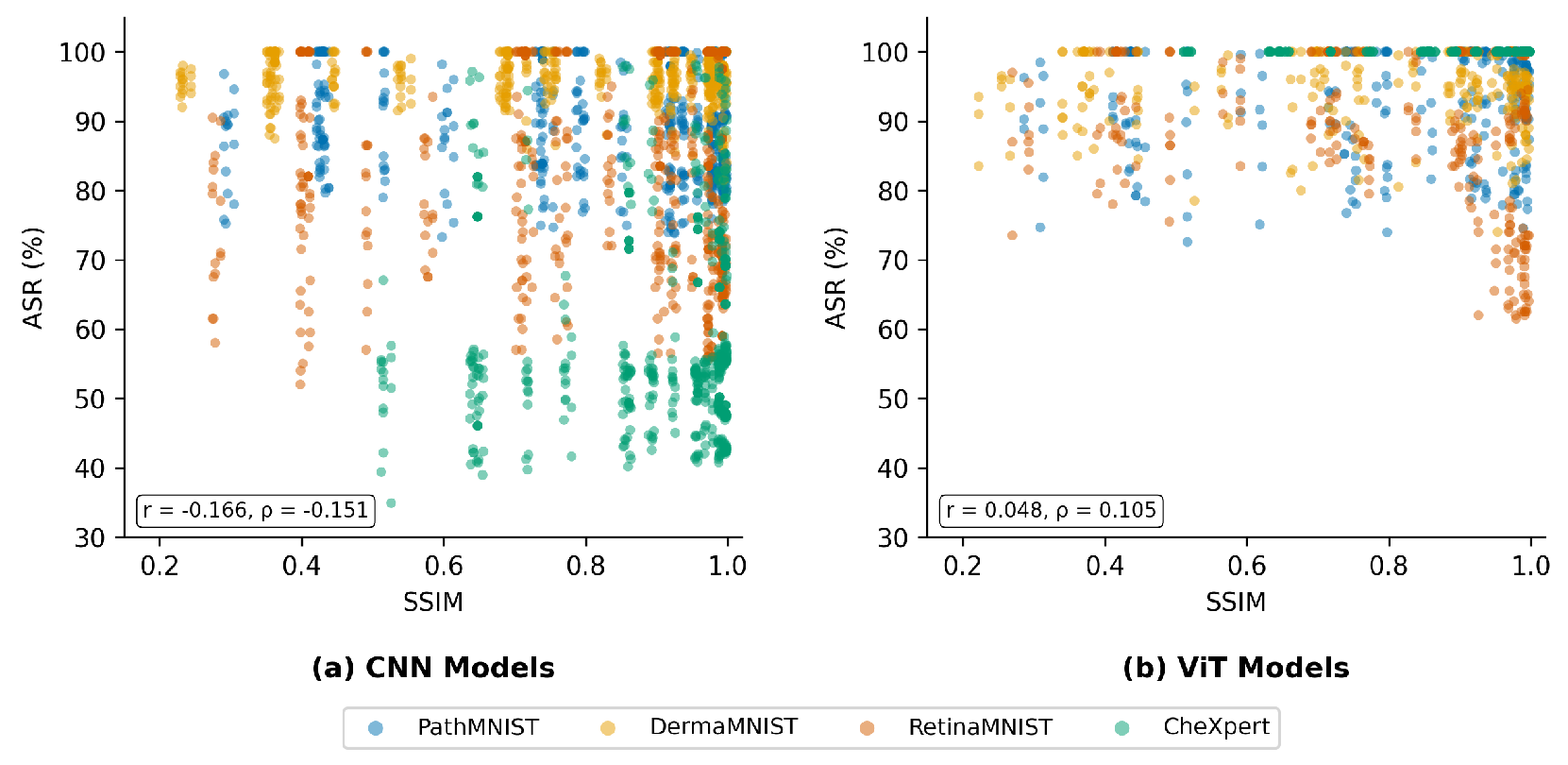

- ASR Decoupling: ASR is consistently decoupled from this cluster, with all ASR correlations remaining below 0.17 in magnitude for Pearson $r$ and below 0.16 for Spearman $\rho$, regardless of architecture or dataset. The scatter plots in Figure 7 make the decoupling visually apparent: data points spread broadly across the ASR axis at every SSIM value, with no discernible trend in either CNN or ViT models.
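The coupling and decoupling described in the two bullets above can be illustrated with a minimal sketch. It uses synthetic data only, not the paper's measurements. The variable `l2` stands in for the distortion metric whose name is missing from the extracted text (assumed here to be an $L_2$ norm), `n_values` is a hypothetical image size, and the helper names `pearson`, `average_ranks`, and `spearman` are introduced purely for illustration.

```python
import numpy as np

def pearson(x, y):
    """Pearson r: covariance divided by the product of standard deviations."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    xc, yc = x - x.mean(), y - y.mean()
    return float((xc @ yc) / np.sqrt((xc @ xc) * (yc @ yc)))

def average_ranks(v):
    """1-based ranks; tied values share the mean of the ranks they span."""
    v = np.asarray(v, dtype=float)
    order = np.argsort(v, kind="mergesort")
    ranks = np.empty(len(v), dtype=float)
    ranks[order] = np.arange(1, len(v) + 1)
    for value in np.unique(v):
        tied = v == value
        ranks[tied] = ranks[tied].mean()
    return ranks

def spearman(x, y):
    """Spearman rho: Pearson r computed on the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Synthetic stand-ins (assumptions, not data from the paper).
rng = np.random.default_rng(0)
n_values = 3 * 224 * 224                      # hypothetical number of pixel values
l2 = rng.uniform(0.5, 8.0, size=200)          # hypothetical L2 distortion per run
psnr = 10 * np.log10(n_values / l2**2)        # PSNR for pixels in [0, 1], MSE = l2^2 / N
asr = rng.uniform(0.0, 1.0, size=200)         # ASR drawn independently of l2

for name, a, b in [("PSNR vs L2", psnr, l2), ("ASR vs L2", asr, l2)]:
    print(f"{name}: r = {pearson(a, b):+.4f}, rho = {spearman(a, b):+.4f}")
```

In this toy setting PSNR is a deterministic function of `l2`, so the two are tightly coupled, while the independently drawn `asr` shows only a weak correlation. The sketch mirrors the qualitative pattern reported above; it says nothing about why ASR behaves this way on real models.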

6. Discussion

6.1. Decoupling of ASR from Perceptual and Distortion Metrics

6.2. Consistency Across Architectures

6.3. The CheXpert Anomaly

6.4. Implications for Medical Image Analysis Systems

7. Conclusions

8. Future Work

Data Availability Statement

References

1. LeCun, Y.; Bottou, L.; Bengio, Y.; Haffner, P. Gradient-based learning applied to document recognition. Proceedings of the IEEE 1998, 86, 2278–2324.
2. Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. Advances in Neural Information Processing Systems 2012, 25.
3. Dibbo, S.V.; Breuer, A.; Moore, J.; Teti, M. Improving robustness to model inversion attacks via sparse coding architectures. In Proceedings of the European Conference on Computer Vision, 2024; Springer; pp. 117–136.
4. Amebley, D.; Dibbo, S. Are Neuro-Inspired Multi-Modal Vision-Language Models Resilient to Membership Inference Privacy Leakage? arXiv 2025, arXiv:2511.20710.
5. Lien, C.W.; Vhaduri, S.; Dibbo, S.V.; Shaheed, M. Explaining vulnerabilities of heart rate biometric models securing IoT wearables. Machine Learning with Applications 2024, 16, 100559.
6. Hinton, G.; Deng, L.; Yu, D.; Dahl, G.E.; Mohamed, A.r.; Jaitly, N.; Senior, A.; Vanhoucke, V.; Nguyen, P.; Sainath, T.N.; et al. Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups. IEEE Signal Processing Magazine 2012, 29, 82–97.
7. Vhaduri, S.; Dibbo, S.V.; Chen, C.Y.; Poellabauer, C. Predicting next call duration: A future direction to promote mental health in the age of lockdown. In Proceedings of the 2021 IEEE 45th Annual Computers, Software, and Applications Conference (COMPSAC); IEEE, 2021; pp. 804–811.
8. Andor, D.; Alberti, C.; Weiss, D.; Severyn, A.; Presta, A.; Ganchev, K.; Petrov, S.; Collins, M. Globally normalized transition-based neural networks. Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics 2016, Volume 1, 2442–2452.
9. Vhaduri, S.; Cheung, W.; Dibbo, S.V. Bag of on-phone ANNs to secure IoT objects using wearable and smartphone biometrics. IEEE Transactions on Dependable and Secure Computing 2023, 21, 1127–1138.
10. Sah, R.K.; Ghasemzadeh, H. Adversarial transferability in wearable sensor systems. arXiv 2020, arXiv:2003.07982.
11. Nasr, M.; Rando, J.; Carlini, N.; Hayase, J.; Jagielski, M.; Cooper, A.F.; Ippolito, D.; Choquette-Choo, C.A.; Tramèr, F.; Lee, K. Scalable extraction of training data from aligned, production language models. In Proceedings of the Thirteenth International Conference on Learning Representations, 2025.
12. Szegedy, C.; Zaremba, W.; Sutskever, I.; Bruna, J.; Erhan, D.; Goodfellow, I.; Fergus, R. Intriguing properties of neural networks. arXiv 2013, arXiv:1312.6199.
13. Liu, Y.; Chen, X.; Liu, C.; Song, D. Delving into transferable adversarial examples and black-box attacks. arXiv 2016, arXiv:1611.02770.
14. Popovic, D.; Sadeghi, A.; Yu, T.; Chawla, S.; Khalil, I. DeBackdoor: A Deductive Framework for Detecting Backdoor Attacks on Deep Models with Limited Data. In Proceedings of the 34th USENIX Security Symposium (USENIX Security 25), 2025; pp. 6419–6438.
15. Sara, U.; Akter, M.; Uddin, M.S.; et al. Image quality assessment through FSIM, SSIM, MSE and PSNR—a comparative study. Journal of Computer and Communications 2019, 7, 8–18.
16. Bilgic, B.; Chatnuntawech, I.; Fan, A.P.; Setsompop, K.; Cauley, S.F.; Wald, L.L.; Adalsteinsson, E. Fast image reconstruction with L2-regularization. Journal of Magnetic Resonance Imaging 2014, 40, 181–191.
17. Benesty, J.; Chen, J.; Huang, Y.; Cohen, I. Pearson correlation coefficient. In Noise Reduction in Speech Processing; Springer, 2009; pp. 1–4.
18. Sedgwick, P. Spearman’s rank correlation coefficient. BMJ 2014, 349.
19. Piet, J.; Alrashed, M.; Sitawarin, C.; Chen, S.; Wei, Z.; Sun, E.; Alomair, B.; Wagner, D. Jatmo: Prompt injection defense by task-specific finetuning. In Proceedings of the European Symposium on Research in Computer Security, 2024; Springer; pp. 105–124.
20. Peellawalage, L.D.; Dibbo, S.; Vhaduri, S. Meta-Research on Backdoors: Dataset and Threat Model Shifts in Multimodal Backdoor Attacks. 2026.
21. Goodfellow, I.J.; Shlens, J.; Szegedy, C. Explaining and harnessing adversarial examples. arXiv 2014, arXiv:1412.6572.
22. Kurakin, A.; Goodfellow, I.; Bengio, S. Adversarial machine learning at scale. arXiv 2016, arXiv:1611.01236.
23. Madry, A.; Makelov, A.; Schmidt, L.; Tsipras, D.; Vladu, A. Towards deep learning models resistant to adversarial attacks. arXiv 2017, arXiv:1706.06083.
24. Dong, Y.; Liao, F.; Pang, T.; Su, H.; Zhu, J.; Hu, X.; Li, J. Boosting adversarial attacks with momentum. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018; pp. 9185–9193.
25. Wang, X.; He, K. Enhancing the transferability of adversarial attacks through variance tuning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021; pp. 1924–1933.
26. Xie, C.; Zhang, Z.; Zhou, Y.; Bai, S.; Wang, J.; Ren, Z.; Yuille, A.L. Improving transferability of adversarial examples with input diversity. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019; pp. 2730–2739.
27. Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv 2020, arXiv:2010.11929.
28. Yang, J.; Shi, R.; Wei, D.; Liu, Z.; Zhao, L.; Ke, B.; Pfister, H.; Ni, B. MedMNIST v2 - a large-scale lightweight benchmark for 2D and 3D biomedical image classification. Scientific Data 2023, 10, 41.
29. Kather, J.N.; Krisam, J.; Charoentong, P.; Luedde, T.; Herpel, E.; Weis, C.A.; Gaiser, T.; Marx, A.; Valous, N.A.; Ferber, D.; et al. Predicting survival from colorectal cancer histology slides using deep learning: A retrospective multicenter study. PLoS Medicine 2019, 16, e1002730.
30. Tschandl, P.; Rosendahl, C.; Kittler, H. The HAM10000 dataset, a large collection of multi-source dermatoscopic images of common pigmented skin lesions. Scientific Data 2018, 5, 180161.
31. Irvin, J.; Rajpurkar, P.; Ko, M.; Yu, Y.; Ciurea-Ilcus, S.; Chute, C.; Marklund, H.; Haghgoo, B.; Ball, R.; Shpanskaya, K.; et al. CheXpert: A large chest radiograph dataset with uncertainty labels and expert comparison. Proceedings of the AAAI Conference on Artificial Intelligence 2019, 33, 590–597.
32. Ma, X.; Niu, Y.; Gu, L.; Wang, Y.; Zhao, Y.; Bailey, J.; Lu, F. Understanding adversarial attacks on deep learning based medical image analysis systems. Pattern Recognition 2021, 110, 107332.
33. Finlayson, S.G.; Bowers, J.D.; Ito, J.; Zittrain, J.L.; Beam, A.L.; Kohane, I.S. Adversarial attacks on medical machine learning. Science 2019, 363, 1287–1289.
34. Huynh-Thu, Q.; Ghanbari, M. Scope of validity of PSNR in image/video quality assessment. Electronics Letters 2008, 44, 800–801.
35. Wang, Z.; Bovik, A.C.; Sheikh, H.R.; Simoncelli, E.P. Image quality assessment: from error visibility to structural similarity. IEEE Transactions on Image Processing 2004, 13, 600–612.
36. Carlini, N.; Wagner, D. Towards evaluating the robustness of neural networks. In Proceedings of the 2017 IEEE Symposium on Security and Privacy (SP); IEEE, 2017; pp. 39–57.
37. Laidlaw, C.; Singla, S.; Feizi, S. Perceptual adversarial robustness: Defense against unseen threat models. arXiv 2020, arXiv:2006.12655.
38. Croce, F.; Andriushchenko, M.; Sehwag, V.; Debenedetti, E.; Flammarion, N.; Chiang, M.; Mittal, P.; Hein, M. RobustBench: a standardized adversarial robustness benchmark. arXiv 2020, arXiv:2010.09670.
39. Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.
40. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016; pp. 770–778.
41. Huang, G.; Liu, Z.; Van Der Maaten, L.; Weinberger, K.Q. Densely connected convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017; pp. 4700–4708.
42. Szegedy, C.; Vanhoucke, V.; Ioffe, S.; Shlens, J.; Wojna, Z. Rethinking the inception architecture for computer vision. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016; pp. 2818–2826.
43. Touvron, H.; Cord, M.; Douze, M.; Massa, F.; Sablayrolles, A.; Jégou, H. Training data-efficient image transformers & distillation through attention. In Proceedings of the International Conference on Machine Learning; PMLR, 2021; pp. 10347–10357.
44. Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021; pp. 10012–10022.
45. Dibbo, S.V.; Moore, J.S.; Kenyon, G.T.; Teti, M.A. LCANets++: Robust audio classification using multi-layer neural networks with lateral competition. In Proceedings of the 2024 IEEE International Conference on Acoustics, Speech, and Signal Processing Workshops (ICASSPW); IEEE, 2024; pp. 129–133.
46. Lad, A.; Bhale, R.; Belgamwar, S. Fast gradient sign method (FGSM) variants in white box settings: A comparative study. In Proceedings of the 2024 International Conference on Inventive Computation Technologies (ICICT); IEEE, 2024; pp. 382–386.

| Claim | Supported By |
|---|---|
| ASR-focused optimization | Madry et al. [23], Carlini & Wagner [36] |
| Transferability via ASR | Liu et al. [13], Dong et al. [24] |
| Perceptual metrics independently | Laidlaw et al. [37], Wang et al. [35] |
| No unified framework | Croce et al. [38] |

| Dataset | Modality | Classes | Key Characteristics |
|---|---|---|---|
| DermaMNIST [30] | Dermatoscopy | 7 | Skin lesion; high inter-class similarity |
| PathMNIST [29] | Histopathology | 9 | Tissue classification; high texture variation |
| RetinaMNIST [28] | Fundus camera | 5 | Retinal disease; subtle structural differences |
| CheXpert [31] | Chest X-ray | 14 | Multi-label clinical; distribution shifts |

| Metric Pair | Pearson ($r$) | Spearman ($\rho$) |
|---|---|---|
| SSIM vs PSNR | 0.8766 | 0.9739 |
| SSIM vs $L_2$ | −0.9596 | −0.9739 |
| PSNR vs $L_2$ | −0.9186 | −1.0000 |
| ASR vs SSIM | 0.0479 | 0.1054 |
| ASR vs PSNR | −0.0338 | 0.0175 |
| ASR vs $L_2$ | 0.0139 | −0.0178 |

| Metric Pair | Pearson ($r$) | Spearman ($\rho$) |
|---|---|---|
| SSIM vs PSNR | 0.8730 | 0.9743 |
| SSIM vs $L_2$ | −0.9595 | −0.9743 |
| PSNR vs $L_2$ | −0.9166 | −1.0000 |
| ASR vs SSIM | −0.1657 | −0.1505 |
| ASR vs PSNR | −0.0461 | −0.0409 |
| ASR vs $L_2$ | 0.0333 | 0.0409 |

| Metric Pair | Pearson ($r$) | Spearman ($\rho$) |
|---|---|---|
| SSIM vs PSNR | 0.8742 | 0.9750 |
| SSIM vs $L_2$ | −0.9595 | −0.9750 |
| PSNR vs $L_2$ | −0.9173 | −1.0000 |
| ASR vs SSIM | −0.1076 | −0.0646 |
| ASR vs PSNR | −0.0422 | −0.0328 |
| ASR vs $L_2$ | 0.0272 | 0.0329 |
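A short derivation may help explain why one PSNR row reaches a Spearman coefficient of exactly −1.0000 in all three correlation tables while its Pearson coefficient stays near −0.92. The metric name in that row is missing from the extracted text; the sketch below assumes it is the $L_2$ norm of the perturbation $\delta$. The symbols $\delta$, $N$ (number of pixel values), and $\mathrm{MAX}$ (maximum pixel value) are introduced here for illustration and do not come from the original.

```latex
\mathrm{MSE} = \frac{1}{N}\,\lVert \delta \rVert_2^{2},
\qquad
\mathrm{PSNR}
  = 10 \log_{10}\frac{\mathrm{MAX}^{2}}{\mathrm{MSE}}
  = 20 \log_{10}\mathrm{MAX} + 10 \log_{10} N - 20 \log_{10}\lVert \delta \rVert_2 .
```

Under these assumptions, and for fixed $N$ and $\mathrm{MAX}$, PSNR is a strictly decreasing function of $\lVert \delta \rVert_2$. The two rankings are therefore exact mirror images, which gives $\rho = -1$. Because the relation is logarithmic rather than linear, the Pearson coefficient has magnitude below 1.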

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).