Submitted: 15 April 2026

Posted: 16 April 2026


Abstract

Keywords:

1. Introduction

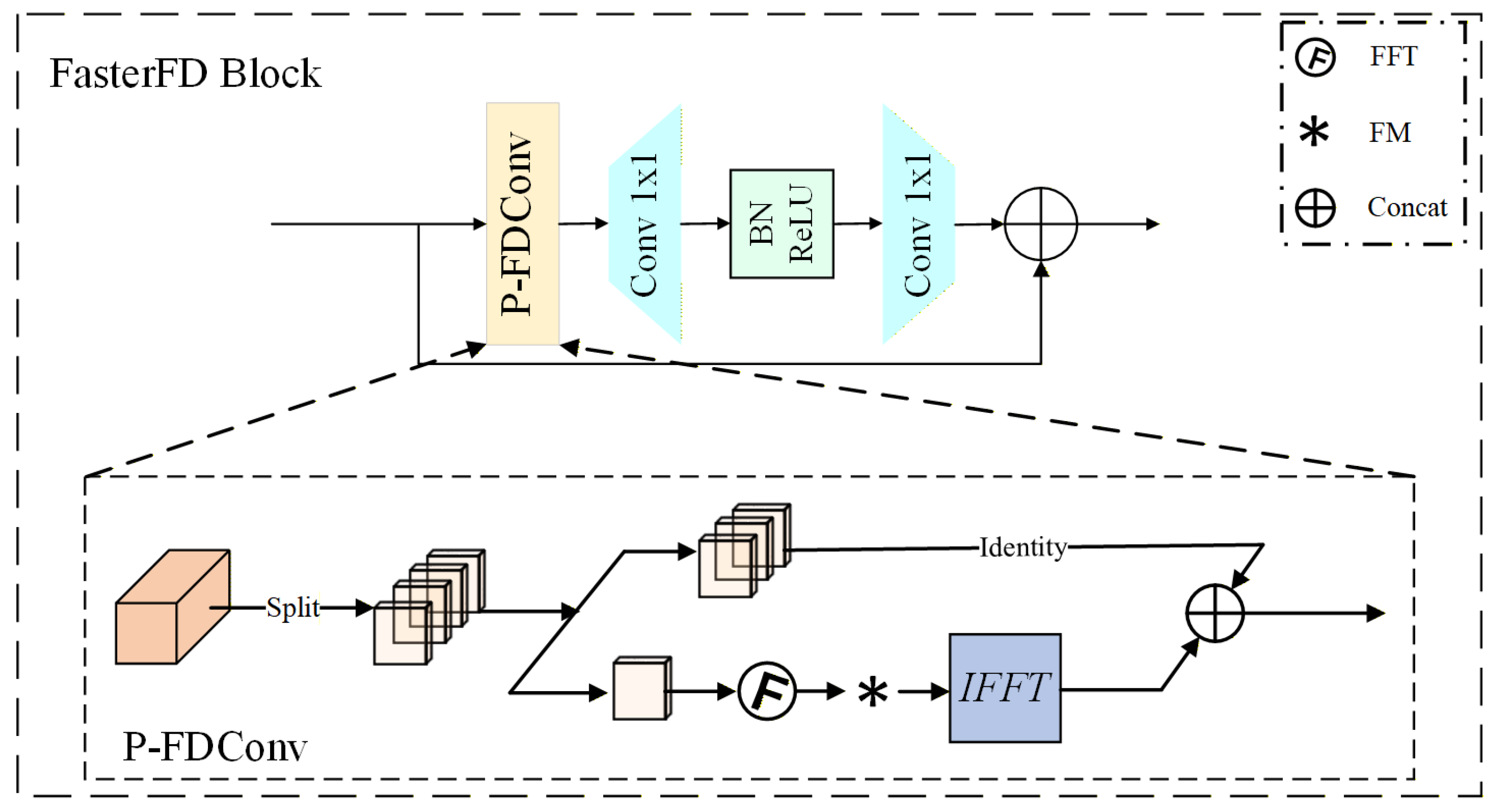

- FasterFD (Frequency-Aware CSP Backbone): This backbone retains the Cross Stage Partial (CSP) hierarchy of YOLOv8 and replaces the standard bottlenecks with C2f-FasterFD modules. Each module combines Partial Convolution (PConv) with Frequency Dynamic Convolution (FDConv) to suppress low-frequency background noise and amplify faint panicle textures, which makes multi-scale feature extraction better suited to low-power edge devices.

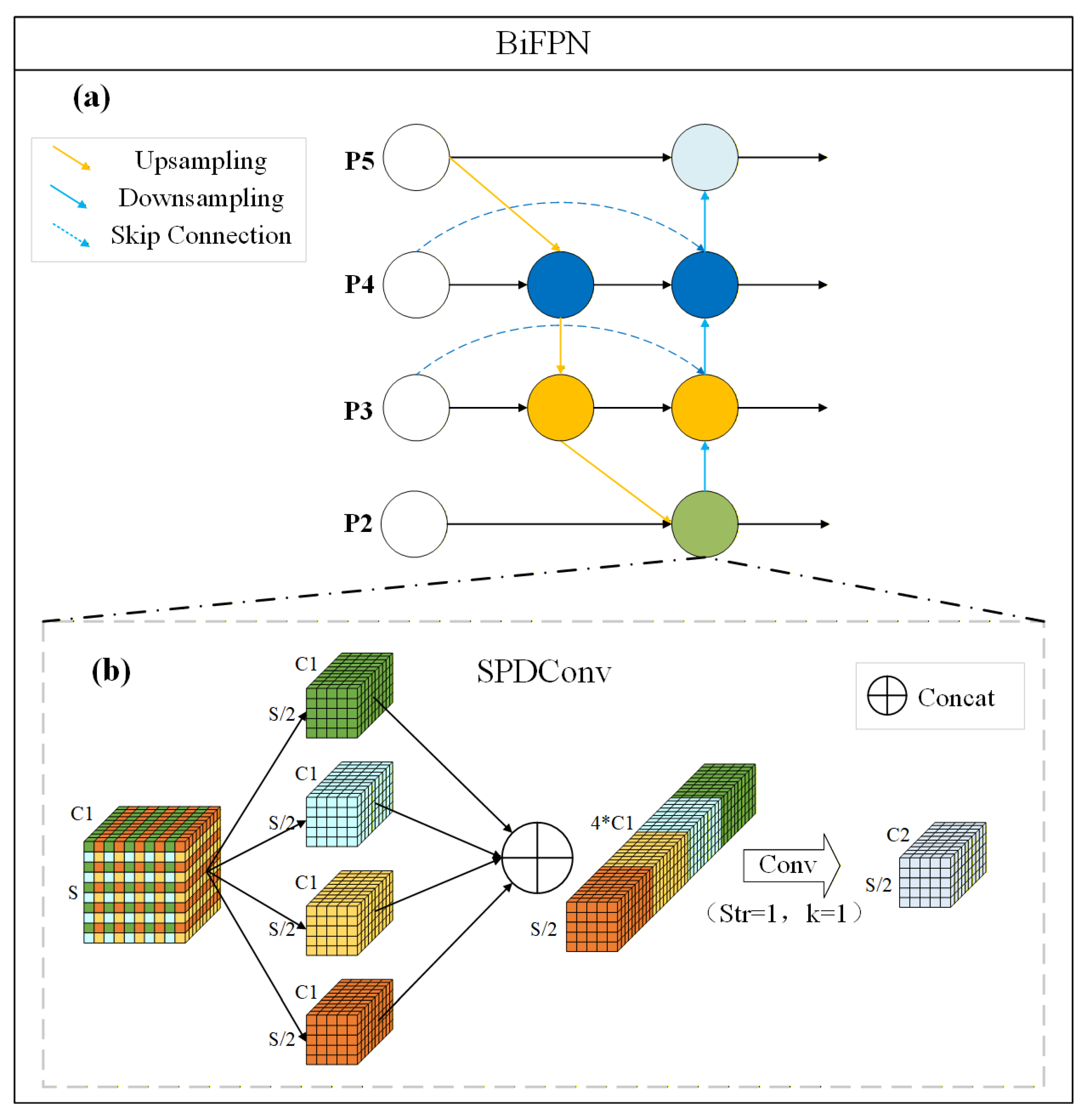

- LFE (Lossless Feature Encoder): By combining Space-to-Depth mapping with a bidirectional feature pyramid, this encoder preserves fine spatial detail during cross-scale feature reconstruction, mitigating the “semantic collapse” of micro-targets captured at high UAV altitudes.

- Composite Metric Loss: Combining the Normalized Wasserstein Distance (NWD) with Inner-IoU, this formulation compensates for the gradient failure of traditional IoU on overlapping targets. It improves bounding box regression and dense counting precision and, because it is applied only during training, adds no inference delay.
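The Space-to-Depth mapping named in the LFE item can be illustrated with a minimal sketch. It assumes a plain channel-first nested list and a block size of 2; the function name `space_to_depth`, the channel ordering, and the toy input are illustrative choices, not the authors' implementation, and the coupling with the bidirectional feature pyramid is not shown.

```python
def space_to_depth(x, block=2):
    """Rearrange a C x H x W nested list into (C*block*block) x (H/block) x (W/block).

    Every input value is kept: spatial resolution is traded for channels
    instead of being discarded by striding or pooling.
    """
    c, h, w = len(x), len(x[0]), len(x[0][0])
    if h % block or w % block:
        raise ValueError("height and width must be divisible by block")
    out = []
    # One output channel per (row offset, column offset, input channel) triple.
    for dy in range(block):
        for dx in range(block):
            for ch in range(c):
                out.append([
                    [x[ch][i][j] for j in range(dx, w, block)]
                    for i in range(dy, h, block)
                ])
    return out


# Hypothetical single-channel 4 x 4 feature map holding the values 0..15.
feature = [[[r * 4 + col for col in range(4)] for r in range(4)]]
packed = space_to_depth(feature, block=2)  # 4 channels, each 2 x 2
```

The output holds exactly the same sixteen values as the input, only redistributed across four half-resolution channels, which is the sense in which the downsampling step loses no information.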

2. Materials and Methods

2.1. Study Area and Data Preparation

2.2. Panicle-DETR Network Framework

2.2.1. Overall Network Architecture

2.2.2. FasterFD: Frequency-Aware CSP Feature Extraction Backbone

2.2.3. LFE: Lossless Feature Encoder

2.2.4. Adjustment of the Loss Function

(1) Normalized Wasserstein Distance Loss

(2) Inner-IoU Loss

(3) Total Loss Formulation
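As a rough illustration of the two loss components listed above, the sketch below follows the published definitions of NWD [33] and Inner-IoU [34] for boxes given as (cx, cy, w, h). The way the two terms are blended (`weight`), the normalizing constant `c`, and the scale `ratio` are placeholder assumptions, since the total loss formulation and its hyperparameters are not reproduced in this section.

```python
import math


def nwd(box_a, box_b, c=12.8):
    """Normalized Wasserstein Distance between two (cx, cy, w, h) boxes.

    Each box is modelled as a 2D Gaussian; `c` is a dataset-dependent
    constant (the value here is only a placeholder).
    """
    (xa, ya, wa, ha), (xb, yb, wb, hb) = box_a, box_b
    w2_sq = (xa - xb) ** 2 + (ya - yb) ** 2 + ((wa - wb) / 2) ** 2 + ((ha - hb) / 2) ** 2
    return math.exp(-math.sqrt(w2_sq) / c)


def inner_iou(box_a, box_b, ratio=0.75):
    """IoU of auxiliary boxes obtained by scaling both inputs by `ratio` about their centres."""
    def corners(box):
        cx, cy, w, h = box
        return cx - w * ratio / 2, cy - h * ratio / 2, cx + w * ratio / 2, cy + h * ratio / 2

    ax1, ay1, ax2, ay2 = corners(box_a)
    bx1, by1, bx2, by2 = corners(box_b)
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def composite_box_loss(pred, target, weight=0.5, c=12.8, ratio=0.75):
    """Hypothetical blend of the two terms; the weighting scheme is an assumption."""
    nwd_term = 1.0 - nwd(pred, target, c)
    iou_term = 1.0 - inner_iou(pred, target, ratio)
    return weight * nwd_term + (1.0 - weight) * iou_term


# Two hypothetical 4 x 4 pixel boxes that do not overlap at all.
pred, target = (10.0, 10.0, 4.0, 4.0), (15.0, 10.0, 4.0, 4.0)
loss_disjoint = composite_box_loss(pred, target)
loss_perfect = composite_box_loss(target, target)
```

For the disjoint pair the Inner-IoU term is saturated at 1, while the NWD term still varies smoothly with the centre distance, so the blended loss continues to provide a regression signal for tiny, non-overlapping boxes.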

3. Results

3.1. Experimental Setup

3.1.1. Experimental Environment

3.1.2. Experimental Parameters

3.1.3. Evaluation Metrics

(1) Fundamental Detection Metrics

(2) Comprehensive Accuracy Metrics

(3) Agronomic Counting Metrics

3.2. Ablation Study of Engineered Innovations

3.2.1. Comparative Analysis of Backbone Architectures

3.3. Comparative Experiments on the Composite Dataset

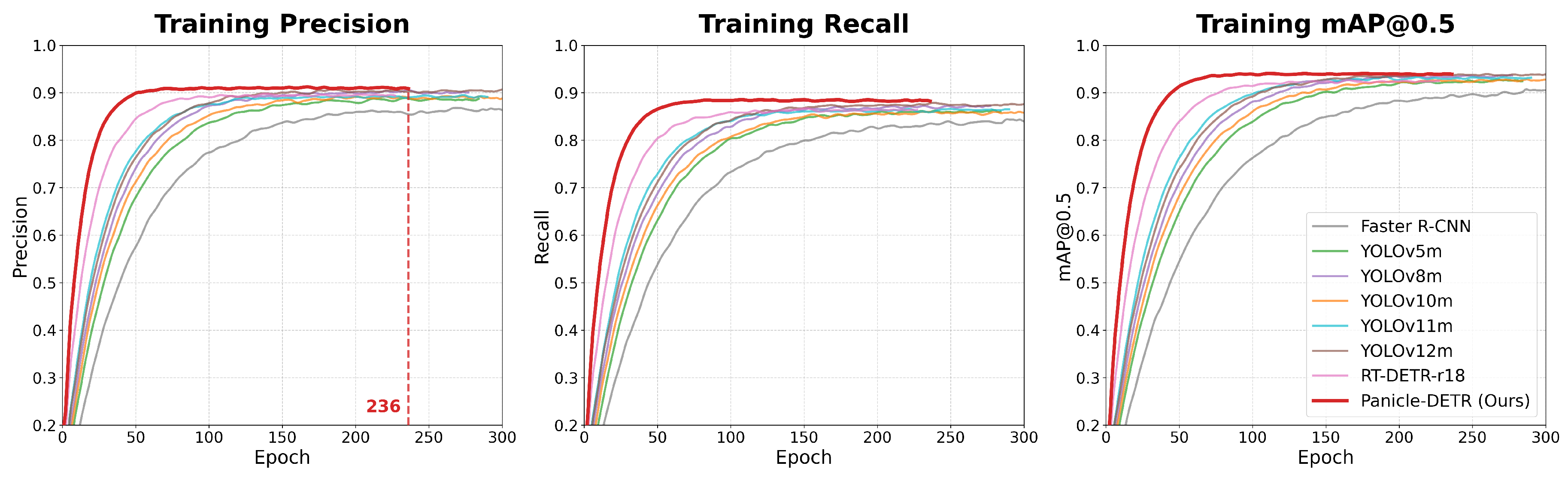

3.3.1. Quantitative Detection Performance and Training Dynamics

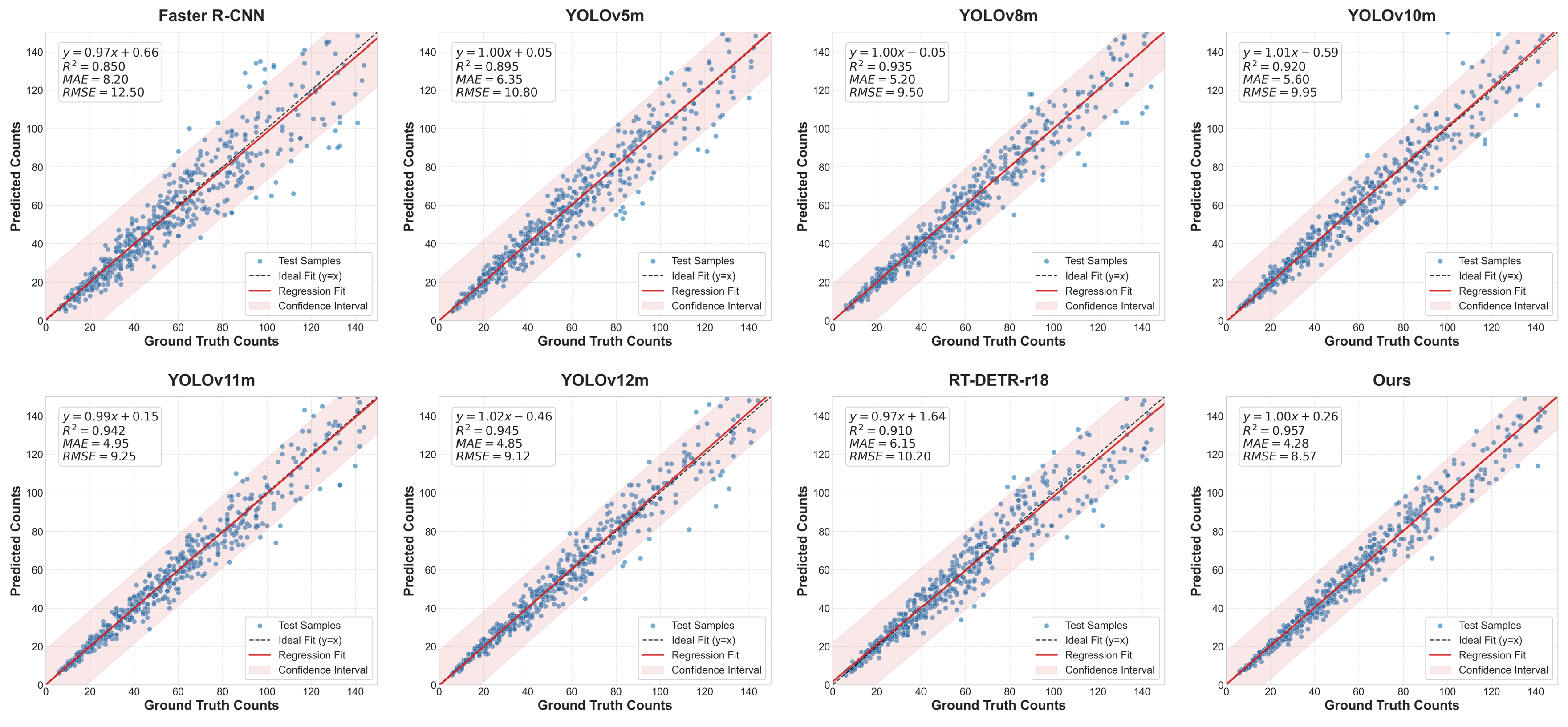

3.3.2. Agronomic Counting Efficacy and Stability

| Model | MAE ↓ | RMSE ↓ | R² ↑ |
|---|---|---|---|
| Faster R-CNN [39] | 8.20 | 12.50 | 0.850 |
| YOLOv5m [40] | 6.35 | 10.80 | 0.895 |
| YOLOv8m [41] | 5.05 | 9.35 | 0.938 |
| YOLOv10m [16] | 5.60 | 9.95 | 0.920 |
| YOLOv11m [42] | 4.95 | 9.25 | 0.942 |
| YOLOv12m [43] | 4.85 | 9.12 | 0.945 |
| RT-DETR-r18 [26] | 6.15 | 10.20 | 0.910 |
| Panicle-DETR (Ours) | 4.28 | 8.57 | 0.957 |
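For readers who want to reproduce the counting scores in the table above on their own data, the following sketch computes MAE, RMSE, and a goodness-of-fit score. It assumes the third score is the coefficient of determination R² in its residual form, which this section does not state explicitly; the function name and the per-image counts are hypothetical and are not taken from the study.

```python
import math


def counting_metrics(true_counts, pred_counts):
    """Return (MAE, RMSE, R2) for paired manual and predicted panicle counts."""
    n = len(true_counts)
    errors = [p - t for t, p in zip(true_counts, pred_counts)]
    mae = sum(abs(e) for e in errors) / n
    ss_res = sum(e * e for e in errors)
    rmse = math.sqrt(ss_res / n)
    mean_true = sum(true_counts) / n
    ss_tot = sum((t - mean_true) ** 2 for t in true_counts)
    r2 = 1.0 - ss_res / ss_tot  # assumed definition of the fit score
    return mae, rmse, r2


# Hypothetical per-image counts, for illustration only.
manual = [100, 120, 80, 150]
predicted = [104, 117, 85, 150]
mae, rmse, r2 = counting_metrics(manual, predicted)
```

MAE weights all miscounts equally, whereas RMSE penalizes occasional large miscounts more heavily, which is why the two are usually reported together.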

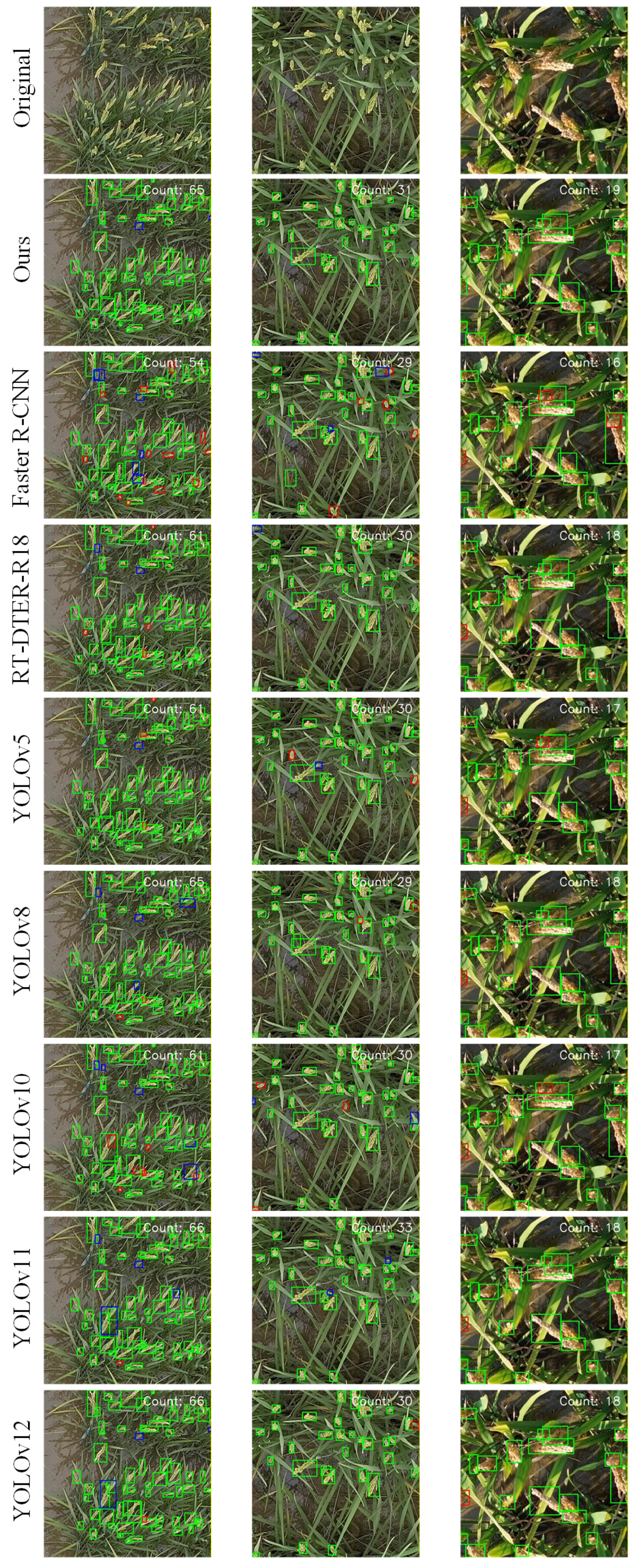

3.3.3. Qualitative Visual Validation Across Altitudes

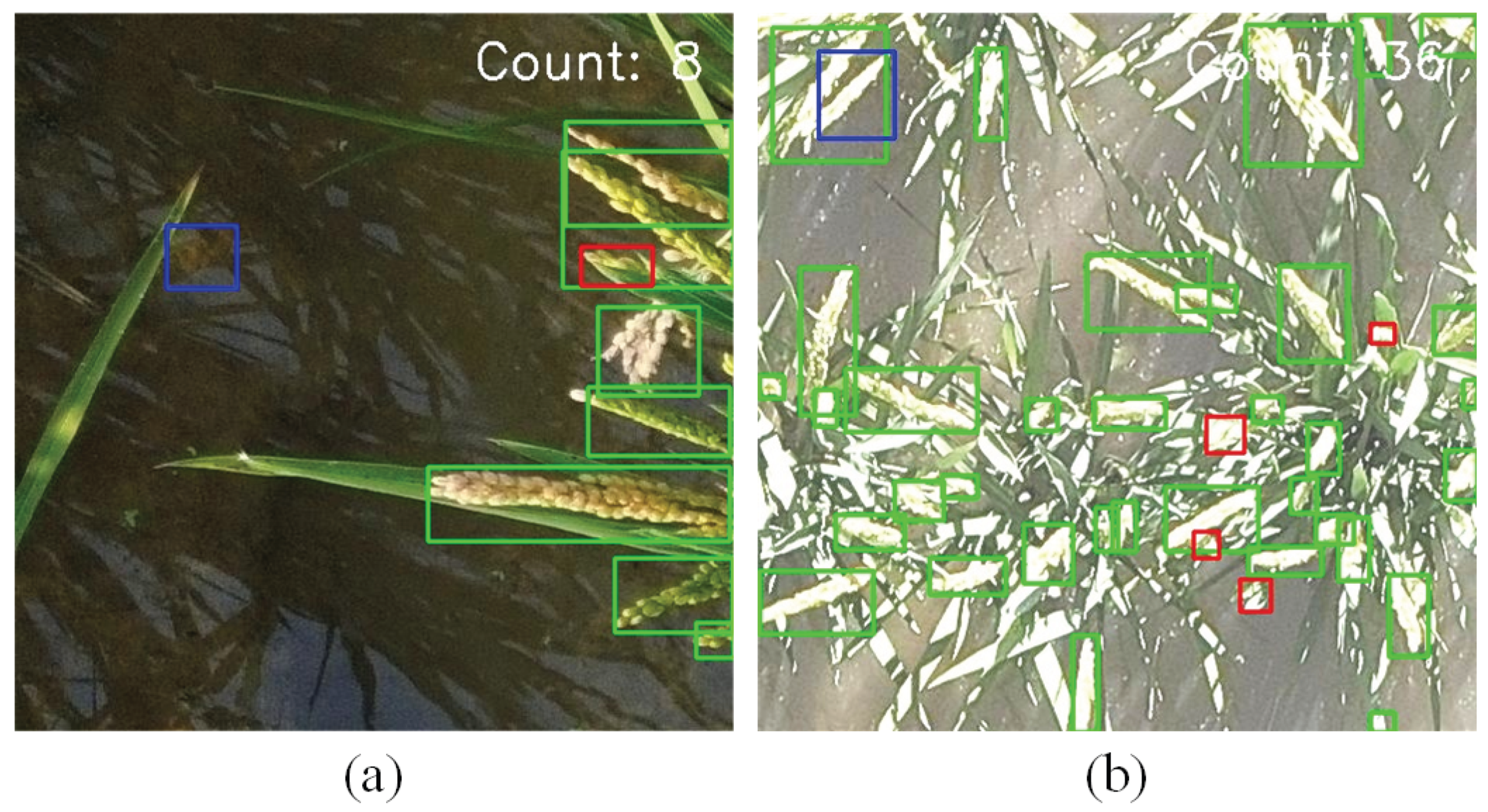

3.4. Error Analysis and Model Limitations

4. Discussion

4.1. Overcoming the Spatial-Semantic Dichotomy in Multi-Altitude Phenotyping

4.2. Bridging the Gap Between Computer Vision and Agronomic Yield Estimation

4.3. Engineering Viability and Edge-Deployment Trade-offs

4.4. Limitations and Future Work

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Madec, S.; Jin, X.; Lu, H.; De Solan, B.; Liu, S.; Duyme, F.; Heritier, E.; Baret, F. Ear density estimation from high resolution RGB imagery using deep learning technique. Agric. For. Meteorol. 2019, 264, 225–234.
2. Ampatzidis, Y.; Partel, V. UAV-based high throughput phenotyping in citrus utilizing multispectral imaging and artificial intelligence. Remote Sens. 2019, 11, 410.
3. Chen, R.; et al. High-throughput UAV-based rice panicle detection and genetic mapping of heading-date-related traits. Front. Plant Sci. 2024, 15, 1327507.
4. Huang, J.; Chen, L.; Zhang, M.; et al. Estimate the Pre-Flowering Specific Leaf Area of Rice Based on Vegetation Indices and Texture Indices Derived from UAV Multispectral Imagery. Agriculture 2025, 15, 2293.
5. Zhou, C.; Ye, H.; Hu, J.; et al. Automated counting of rice panicle by applying deep learning model to images from unmanned aerial vehicle platform. Sensors 2019, 19, 3106.
6. Guo, W.; Fukatsu, T.; Ninomiya, S. Automated characterization of flowering dynamics in rice using field-acquired time-series RGB images. Plant Methods 2015, 11, 7.
7. Shen, J.; Yue, J.; Liu, Y.; Yao, Y.; Feng, H.; Yang, H.; Guo, W.; Ma, X.; Fu, Y.; Shu, M.; Yang, G.; Qiao, H. Analyzing maize stem circumference, stem height, and stem circumference-to-height ratio using UAV, UGV, and deep learning. Comput. Electron. Agric. 2025, 239, 111019.
8. Yuan, Z.; Gong, J.; Guo, B.; Wang, C.; Liao, N.; Song, J.; Wu, Q. Small Object Detection in UAV Remote Sensing Images Based on Intra-Group Multi-Scale Fusion Attention and Adaptive Weighted Feature Fusion Mechanism. Remote Sens. 2024, 16, 4265.
9. Heng, Z.; Xie, Y.; Du, D. MIE-YOLO: A Multi-Scale Information-Enhanced Weed Detection Algorithm for Precision Agriculture. AgriEngineering 2026, 8, 16.
10. Oliveira, T.C.M.; Souza, J.B.C.; Almeida, S.L.H.; et al. Combining Artificial Intelligence and Remote Sensing to Enhance the Estimation of Peanut Pod Maturity. AgriEngineering 2025, 7, 368.
11. Zhang, Y.; Xiao, D.; Liu, Y.; et al. An algorithm for automatic identification of multiple developmental stages of rice spikes based on improved Faster R-CNN. Crop J. 2022, 10, 1323–1333.
12. Tanimoto, Y.; Zhang, Z.; Yoshida, S. Object Detection for Yellow Maturing Citrus Fruits from Constrained or Biased UAV Images: Performance Comparison of Various Versions of YOLO Models. AgriEngineering 2024, 6, 4308–4324.
13. Rodríguez-Lira, D.-C.; Córdova-Esparza, D.-M.; Álvarez-Alvarado, J.M.; Romero-González, J.-A.; Terven, J.; Rodríguez-Reséndiz, J. Comparative Analysis of YOLO Models for Bean Leaf Disease Detection in Natural Environments. AgriEngineering 2024, 6, 4585–4603.
14. Wu, H.; Guan, M.; Chen, J.; Pan, Y.; Zheng, J.; Jin, Z.; Li, H.; Tan, S. OE-YOLO: An EfficientNet-Based YOLO Network for Rice Panicle Detection. Plants 2025, 14, 1370.
15. Huang, D.; Chen, Z.; Zhuang, J.; Song, G.; Huang, H.; Li, F.; Huang, G.; Liu, C. DRPU-YOLO11: A Multi-Scale Model for Detecting Rice Panicles in UAV Images with Complex Infield Background. Agriculture 2026, 16, 234.
16. Wang, A.; Chen, H.; Liu, L.; et al. YOLOv10: Real-time end-to-end object detection. arXiv 2024, arXiv:2405.14458.
17. Guo, Y.; Zhan, W.; Zhang, Z.; Zhang, Y.; Guo, H. FRPNet: A Lightweight Multi-Altitude Field Rice Panicle Detection and Counting Network Based on Unmanned Aerial Vehicle Images. Agronomy 2025, 15, 1396.
18. Wu, P.; Zhao, J. LKD-YOLO: Improved YOLOv8 for Rice Panicle Detection in Drone Images. In Proceedings of the 2025 4th International Symposium on Computer Applications and Information Technology (ISCAIT), Xi’an, China, 21–23 March 2025; pp. 549–553.
19. Liang, Y.; et al. A rotated rice spike detection model and a crop yield estimation application based on UAV images. Comput. Electron. Agric. 2024, 224, 109188.
20. Yao, M.; et al. Rice counting and localization in unmanned aerial vehicle imagery using enhanced feature fusion. Agronomy 2024, 14, 868.
21. Qian, Y.; et al. MFNet: Multi-scale feature enhancement networks for wheat head detection and counting in complex scene. Comput. Electron. Agric. 2024, 225, 109342.
22. Thayananthan, T.; Zhang, X.; Huang, Y.; Chen, J.; Wijewardane, N.K.; Martins, V.S.; Chesser, G.D.; Goodin, C.T. CottonSim: A vision-guided autonomous robotic system for cotton harvesting in Gazebo simulation. Comput. Electron. Agric. 2025, 239, 110963.
23. Lan, M.; Liu, C.; Zheng, H.; et al. RICE-YOLO: In-field rice spike detection based on improved YOLOv5 and drone images. Agronomy 2024, 14, 836.
24. Cai, W.; et al. Rice growth-stage recognition based on improved YOLOv8 with UAV imagery. Agronomy 2024, 14, 2751.
25. Carion, N.; Massa, F.; Synnaeve, G.; et al. End-to-end object detection with transformers. In Proceedings of the European Conference on Computer Vision (ECCV), Glasgow, UK, 23–28 August 2020; pp. 213–229.
26. Zhao, Y.; Lv, W.; Xu, S.; Wei, J.; Wang, G.; Dang, Q.; Liu, Y.; Chen, J. DETRs beat YOLOs on real-time object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 17–21 June 2024; pp. 16965–16974.
27. Guo, Z.; Cai, D.; Jin, Z.; Xu, T.; Yu, F. Research on unmanned aerial vehicle (UAV) rice field weed sensing image segmentation method based on CNN-transformer. Comput. Electron. Agric. 2025, 229, 109719.
28. Teng, Z.; Chen, J.; Wang, J.; Wu, S.; Chen, R.; Lin, Y.; Shen, L.; Jackson, R.; Zhou, J.; Yang, C. Panicle-Cloud: An Open and AI-Powered Cloud Computing Platform for Quantifying Rice Panicles from Drone-Collected Imagery to Enable the Classification of Yield Production in Rice. Plant Phenomics 2023, 5, 0105.
29. Chen, J.; Kao, S.; He, H.; Zhuo, W.; Wen, S.; Lee, C.-H.; Chan, S.-H.G. Run, Don’t Walk: Chasing Higher FLOPS for Faster Neural Networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 18–22 June 2023; pp. 12021–12031.
30. Chen, L.; Gu, L.; Li, L.; Yan, C.; Fu, Y. Frequency Dynamic Convolution for Dense Image Prediction. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 11–15 June 2025.
31. Tan, M.; Pang, R.; Le, Q.V. EfficientDet: Scalable and Efficient Object Detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 10778–10787.
32. Sunkara, R.; Luo, T. No More Strided Convolutions or Pooling: A New CNN Building Block for Low-Resolution Images and Small Objects. arXiv 2022, arXiv:2208.03641.
33. Wang, J.; Xu, C.; Yang, W.; Yu, L. A Normalized Gaussian Wasserstein Distance for Tiny Object Detection. arXiv 2021, arXiv:2110.13389.
34. Zhang, H.; Xu, C.; Zhang, S. Inner-IoU: More Effective Intersection over Union Loss with Auxiliary Bounding Box. arXiv 2023, arXiv:2311.02877.
35. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.
36. Howard, A.; Sandler, M.; Chu, G.; Chen, L.-C.; Chen, B.; Tan, M.; Wang, W.; Zhu, Y.; Pang, R.; Vasudevan, V.; et al. Searching for MobileNetV3. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 1314–1324.
37. Ma, X.; Dai, X.; Bai, Y.; Wang, Y.; Fu, Y. Rewrite the Stars. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 17–21 June 2024; pp. 12051–12061.
38. Tan, M.; Le, Q.V. EfficientNetV2: Smaller Models and Faster Training. arXiv 2021, arXiv:2104.00298.
39. Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 1137–1149.
40. Khanam, R.; Hussain, M. What is YOLOv5: A deep look into the internal features of the popular object detector. arXiv 2024, arXiv:2407.20892.
41. Yaseen, M. What is YOLOv8: An In-Depth Exploration of the Internal Features of the Next-Generation Object Detector. arXiv 2024, arXiv:2408.15857.
42. Khanam, R.; Hussain, M. YOLOv11: An Overview of the Key Architectural Enhancements. arXiv 2024, arXiv:2410.17725.
43. Tian, Y.; Ye, Q.; Doermann, D. YOLOv12: Attention-Centric Real-Time Object Detectors. arXiv 2025, arXiv:2502.12524.
44. Nikouei, M.; et al. Small Object Detection: A Comprehensive Survey on Challenges, Techniques and Real-World Applications. arXiv 2025, arXiv:2503.20516.
45. Ramachandran, A.; Kumar, S. Border Sensitive Knowledge Distillation for Rice Panicle Detection in UAV Images. Comput. Mater. Contin. 2024, 81, 827–842.
46. Lu, X.; Shen, Y.; Cen, H.; et al. Phenotyping of Panicle Number and Shape in Rice Breeding Materials Based on Unmanned Aerial Vehicle Imagery. Plant Phenomics 2024, 6, 0265.
47. Zhu, J.; Lin, Y.; Liu, Y.; Wang, H.; Qin, Y.; Li, M.; Xu, H.; He, Y. Intelligent agriculture: deep learning in UAV-based remote sensing imagery for crop diseases and pests detection. Front. Plant Sci. 2024, 15, 1435016.
48. Gookyi, D.A.N.; Wulnye, F.A.; Wilson, M.; Danquah, P.; Danso, S.A.; Gariba, A.A. Enabling Intelligence on the Edge: Leveraging Edge Impulse to Deploy Multiple Deep Learning Models on Edge Devices for Tomato Leaf Disease Detection. AgriEngineering 2024, 6, 3563–3585.

| Parameter | Value/Description |
|---|---|
| Input Resolution | pixels |
| Optimizer | AdamW |
| Initial Learning Rate | 0.001 |
| Momentum | 0.9 |
| Batch Size | 16 |
| Total Epochs | 300 |
| LR Scheduler | Cosine Annealing |
| Early Stopping Patience | 50 epochs |
| Weight Decay | 0.0001 |

| Variant | Baseline | +FasterFD | +LFE | +Loss | Precision (%) | Recall (%) | mAP@50 (%) | GFLOPs |
|---|---|---|---|---|---|---|---|---|
| Baseline | ✓ | | | | 89.50 | 86.40 | 92.45 | 56.9 |
| Model 1 | ✓ | ✓ | | | 89.80 | 86.80 | 92.95 | 41.8 |
| Model 2 | ✓ | | ✓ | | 89.70 | 87.60 | 93.20 | 64.7 |
| Model 3 | ✓ | | | ✓ | 89.65 | 86.45 | 92.55 | 56.9 |
| Model 4 | ✓ | ✓ | ✓ | | 90.40 | 88.00 | 93.60 | 53.0 |
| Model 5 | ✓ | ✓ | | ✓ | 90.05 | 86.85 | 93.05 | 41.8 |
| Model 6 | ✓ | | ✓ | ✓ | 89.90 | 87.75 | 93.30 | 64.7 |
| Panicle-DETR | ✓ | ✓ | ✓ | ✓ | 90.97 | 88.33 | 93.94 | 53.0 |

| Backbone | Precision (%) | Recall (%) | mAP@50 (%) | GFLOPs |
|---|---|---|---|---|
| ResNet-50 [35] | 90.65 | 87.80 | 93.70 | 108.5 |
| HGNetv2-L [26] | 90.10 | 87.25 | 93.20 | 74.2 |
| MobileNetV3 [36] | 87.80 | 84.15 | 90.50 | 47.7 |
| FasterNet [29] | 89.45 | 86.85 | 92.85 | 41.5 |
| StarNet [37] | 88.50 | 85.10 | 91.80 | 40.0 |
| EfficientNetV2 [38] | 89.25 | 86.80 | 92.80 | 49.5 |
| FasterFD (Ours) | 90.97 | 88.33 | 93.94 | 53.0 |

| Model | Precision (%) | Recall (%) | F1-Score (%) | mAP@50 (%) | mAP@75 (%) | mAP@50-95 (%) | Params (M) | GFLOPs |
|---|---|---|---|---|---|---|---|---|
| Faster R-CNN [39] | 86.50 | 84.20 | 85.33 | 90.50 | 66.80 | 58.90 | 41.35 | 180.6 |
| YOLOv5m [40] | 88.50 | 86.10 | 87.28 | 92.50 | 70.80 | 62.10 | 25.05 | 64.0 |
| YOLOv8m [41] | 90.10 | 87.20 | 88.63 | 93.65 | 71.50 | 62.80 | 25.84 | 78.7 |
| YOLOv10m [16] | 88.80 | 85.90 | 87.33 | 92.70 | 70.90 | 62.30 | 16.45 | 63.4 |
| YOLOv11m [42] | 89.20 | 86.50 | 87.83 | 93.10 | 71.80 | 63.40 | 19.69 | 65.6 |
| YOLOv12m [43] | 90.50 | 87.50 | 88.97 | 93.80 | 72.50 | 63.90 | 19.54 | 58.6 |
| RT-DETR-r18 [26] | 89.50 | 86.40 | 87.92 | 92.45 | 68.10 | 60.50 | 19.87 | 56.9 |
| Panicle-DETR (Ours) | 90.97 | 88.33 | 89.63 | 93.94 | 74.15 | 64.75 | 13.78 | 53.0 |
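The F1-Score column in the table above is consistent with the standard harmonic mean of precision and recall. The short check below recomputes it for three rows using the precision, recall, and F1 values printed in the table; the function and variable names are illustrative.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (both in percent)."""
    return 2.0 * precision * recall / (precision + recall)


# (precision, recall, reported F1) copied from the comparison table.
rows = {
    "Faster R-CNN": (86.50, 84.20, 85.33),
    "RT-DETR-r18": (89.50, 86.40, 87.92),
    "Panicle-DETR": (90.97, 88.33, 89.63),
}

# Recompute F1 for each row and round to the table's two decimals.
recomputed = {name: round(f1_score(p, r), 2) for name, (p, r, _) in rows.items()}
```

Each recomputed value matches the reported figure to two decimal places.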

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).