Submitted: 14 April 2026

Posted: 16 April 2026


Abstract

Keywords:

1. Introduction

- A concrete utility formulation and discrete bundle catalog suited to operational RAG routing, with full token billing accounting.

- An open reference implementation with retrieval backed by FAISS (Facebook AI Similarity Search, an open-source library for efficient approximate nearest-neighbor search over dense vector embeddings [7]), BM25-ready tokenization, OpenAI embedding/chat integration, and a CLI experiment harness.

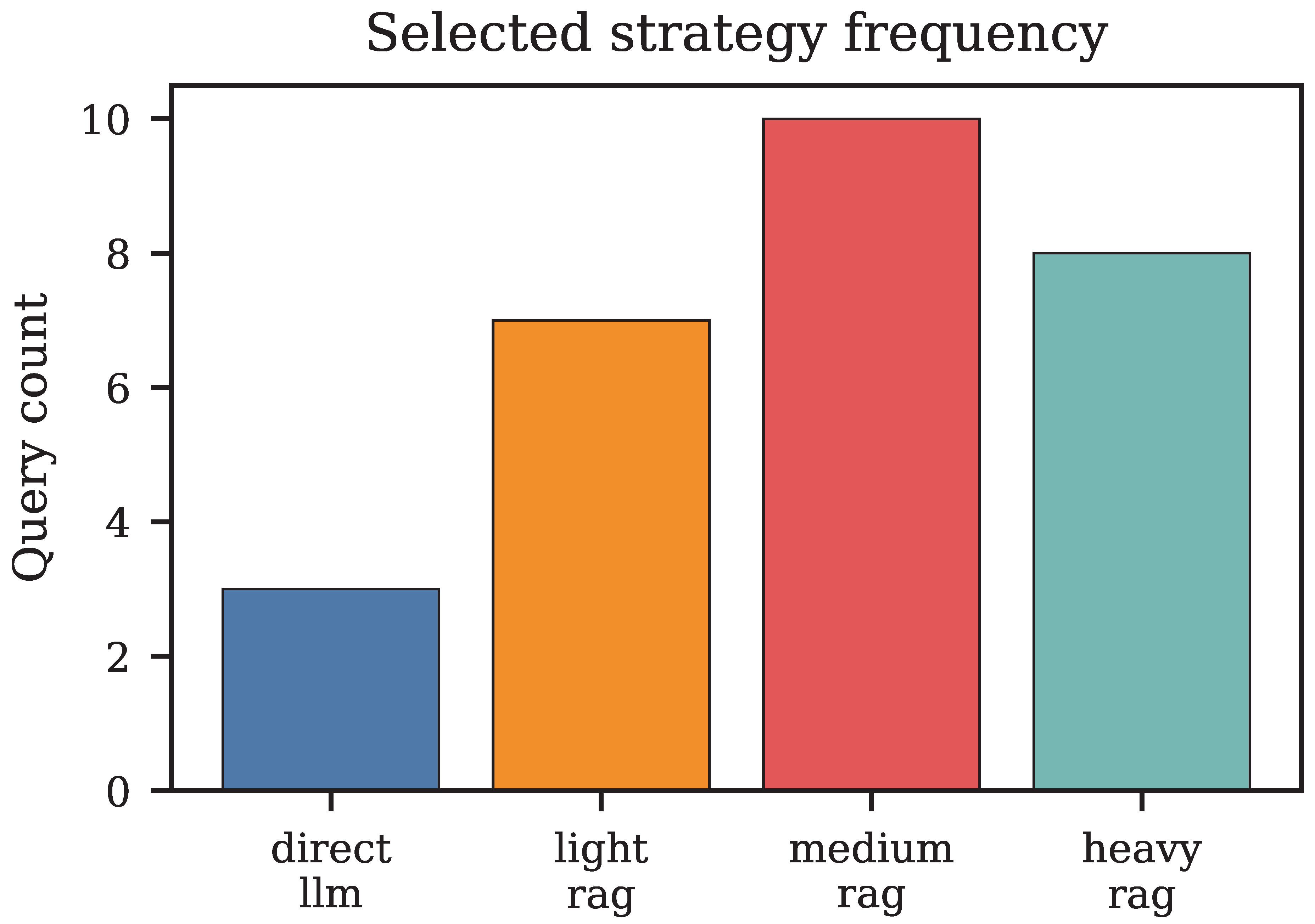

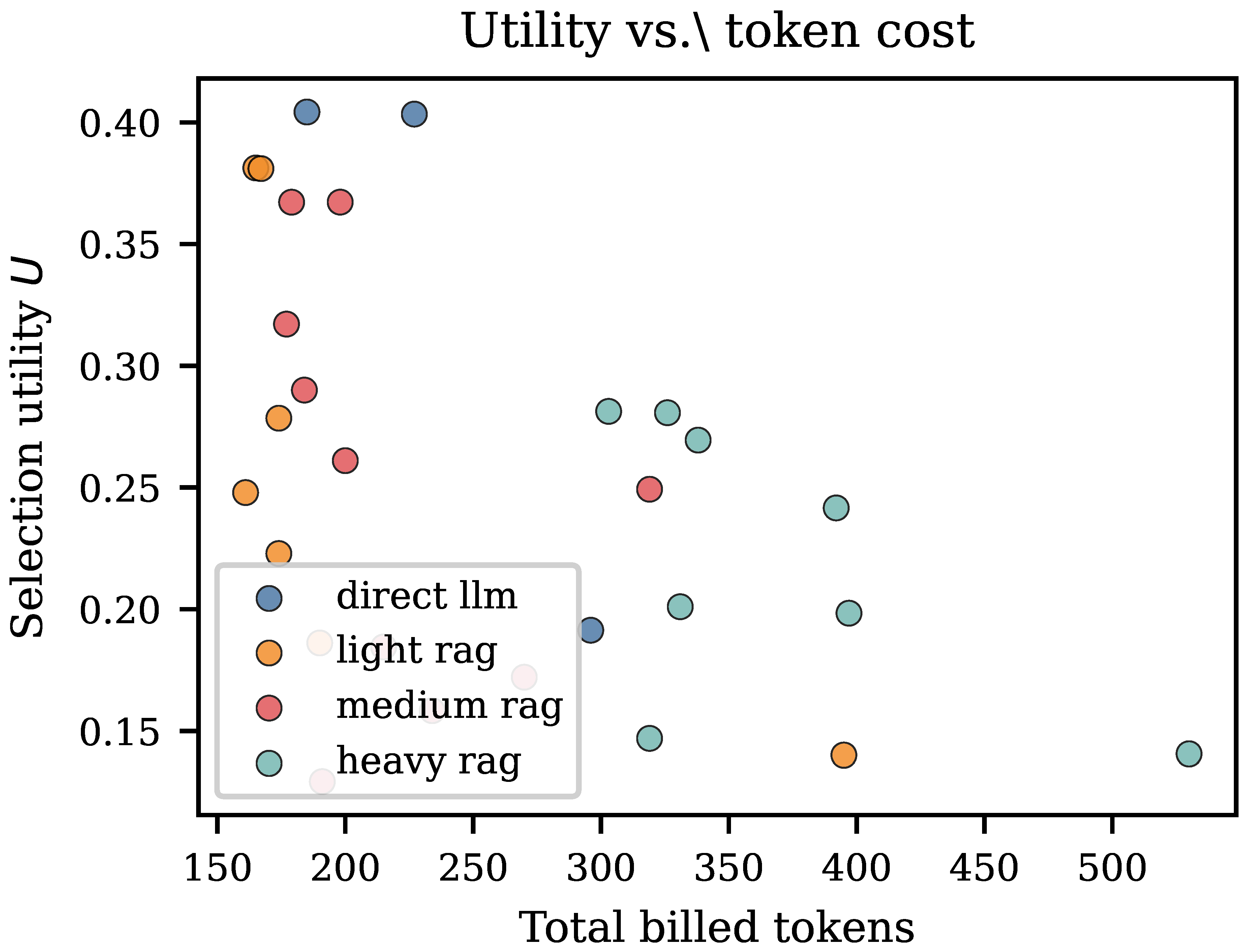

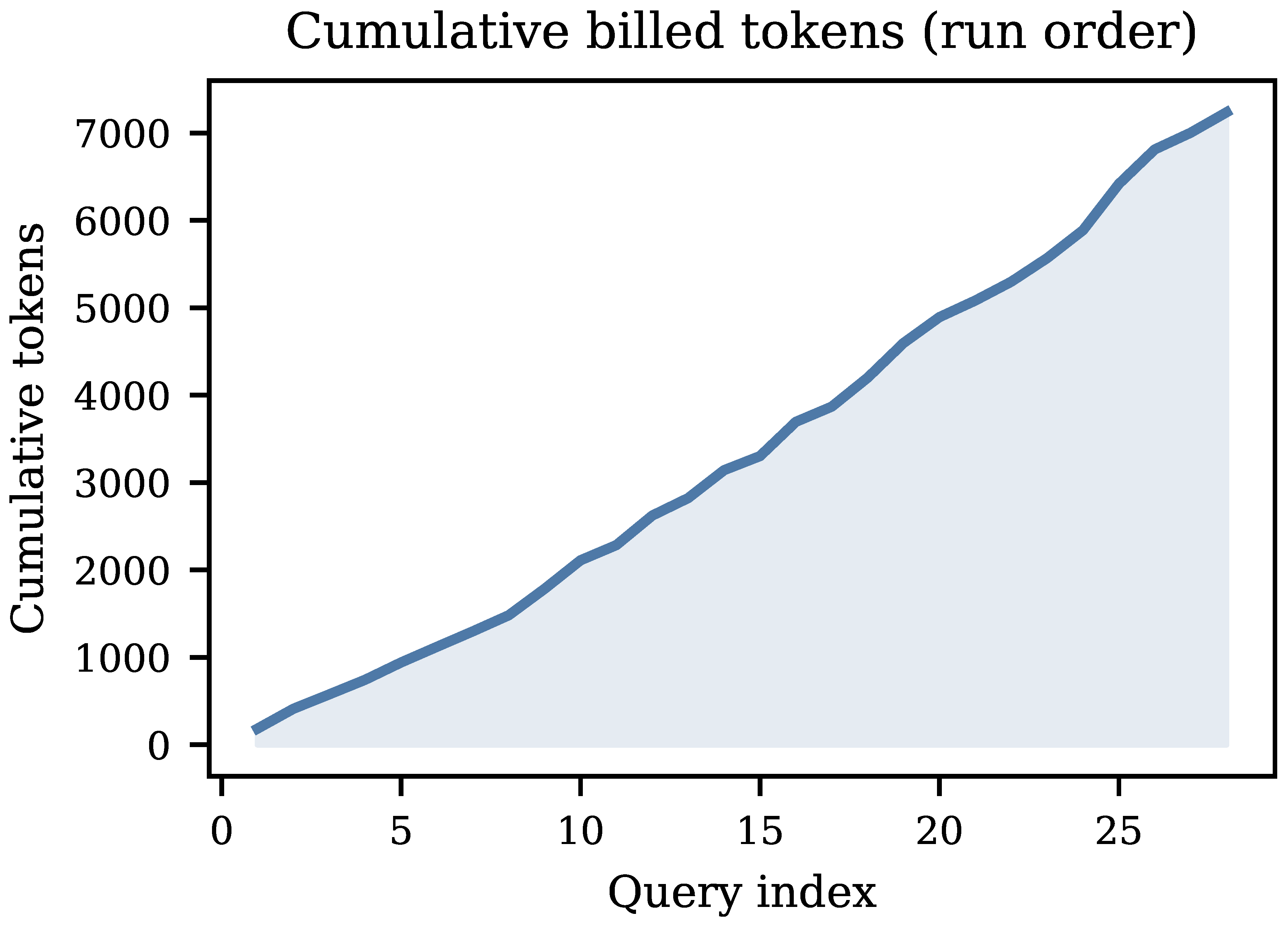

- A 28-query empirical study with ten quantitative figures and five tables, all generated directly from measured CSV logs, covering strategy frequency, token decomposition, latency distributions, utility–cost structure, correlation analysis, and router vs. fixed-baseline comparisons.

- Operational guidance for bundle design, weight calibration, monitoring, and failure-mode triage in deployed RAG systems.

2. Related Work

2.1. Retrieval for Open-Domain QA

2.2. Classical and Hybrid Retrieval

2.3. RAG Surveys and Production Considerations

2.4. Contextual Bandits and Adaptive Decision-Making

3. Problem Formulation

- Quality. A factual, multi-step question needs several retrieved passages to be answered correctly. A simple definitional question does not. Applying deep retrieval uniformly wastes resources on easy questions without improving their answers.

- Latency. Each additional passage in the prompt makes the model take longer to respond. For user-facing applications, response time matters, and a retrieval strategy that is too heavy can push response times beyond acceptable limits.

- Cost. Commercial language model APIs charge by the token. Every retrieved passage injected into the prompt adds billed input tokens. At scale, the difference between retrieving three passages and ten passages per query adds up to a measurable difference in operating cost.
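To make the cost argument concrete, the sketch below estimates billed tokens per query at different retrieval depths, using the same decomposition the logs use (prompt + completion + embedding tokens). All default sizes (tokens per passage, question, instructions, completion, query embedding) are hypothetical placeholders rather than measured values, and the rule that skipping retrieval also skips the query-embedding call is an assumption.

```python
def billed_tokens(k, tokens_per_passage=120, question_tokens=20,
                  instruction_tokens=40, completion_tokens=80,
                  embedding_tokens=20):
    """Total billed tokens for one query that retrieves k passages.

    All default sizes are hypothetical; replace them with measured values.
    """
    prompt_tokens = instruction_tokens + question_tokens + k * tokens_per_passage
    # ASSUMPTION: with k == 0 no retrieval happens, so no query embedding is billed.
    embed = embedding_tokens if k > 0 else 0
    return prompt_tokens + completion_tokens + embed


def token_delta(k_small, k_large, num_queries):
    """Extra billed tokens from using k_large instead of k_small on every query."""
    return (billed_tokens(k_large) - billed_tokens(k_small)) * num_queries


if __name__ == "__main__":
    for k in (0, 3, 5, 10):
        print(f"k={k:2d} -> {billed_tokens(k)} billed tokens per query")
    print("extra tokens, k=10 vs k=3, 1M queries:", token_delta(3, 10, 1_000_000))
```

Under these placeholder sizes the gap between k=3 and k=10 is driven entirely by the passage term, which is why retrieval depth is the natural lever for a cost-aware router.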

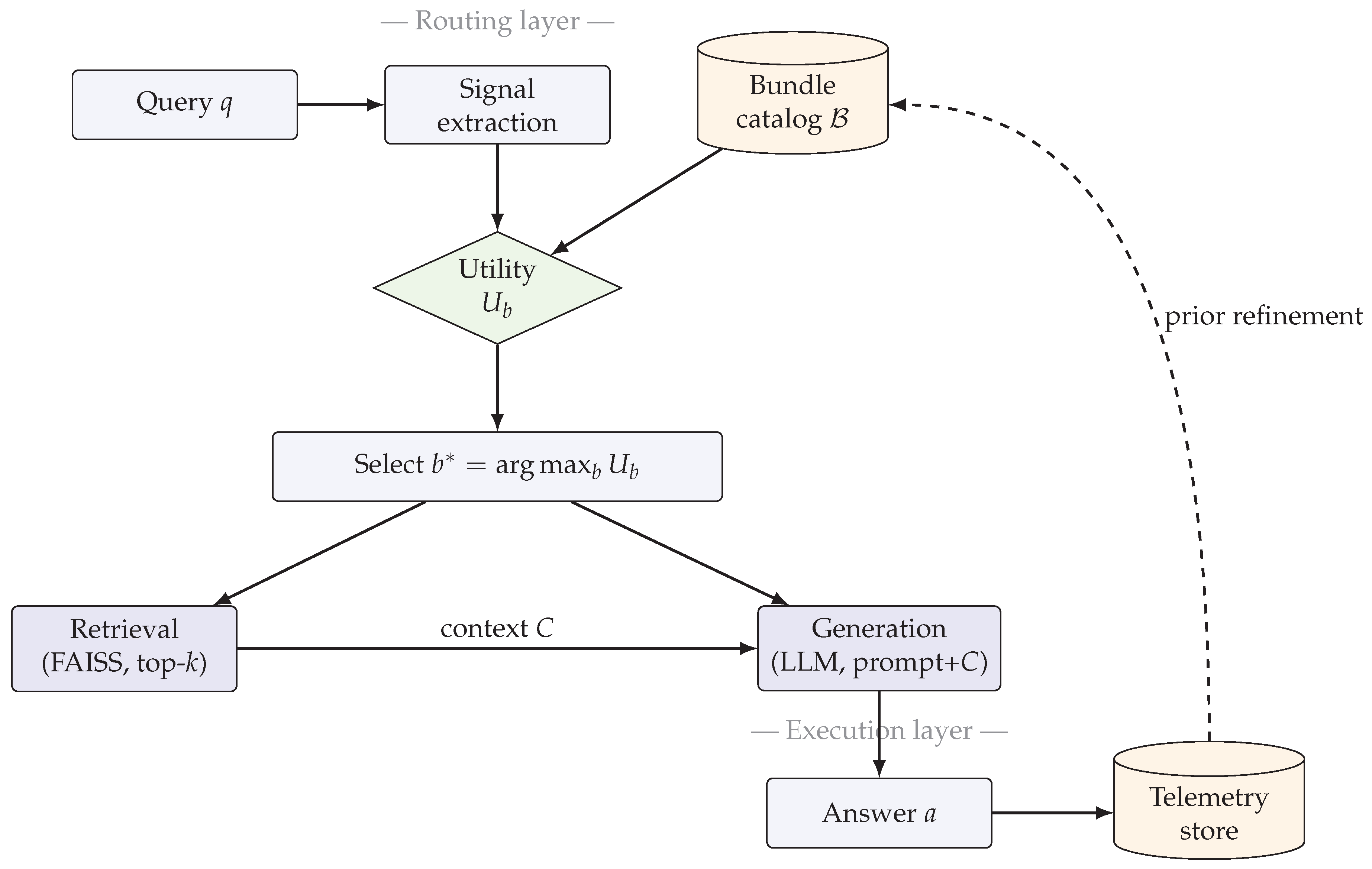

4. System Architecture

4.1. End-to-End Pipeline

1. Signal extraction: Compute QuerySignals (length, cue-word counts) and a heuristic complexity score.

2. Utility estimation: Evaluate the estimated utility of every bundle using priors and optional telemetry.

3. Bundle selection: Dispatch to the bundle with the highest estimated utility.

4. Retrieval: Execute retrieval per the specification of the selected bundle, obtaining context C and retrieval confidence.

5. Generation: Construct a prompt from q and C; call the LLM generator.

6. Telemetry logging: Log prompt, completion, and embedding tokens; end-to-end latency; lexical quality proxy; and realized utility.
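The signal-extraction step can be illustrated with a minimal sketch. The cue-word list, the normalization caps (`max_length`, `max_cues`), and the equal weighting of the two parts are hypothetical; the actual QuerySignals fields and complexity heuristic used in the reference implementation may differ.

```python
import re
from dataclasses import dataclass

# ASSUMPTION: illustrative cue words; the paper does not list its vocabulary here.
CUE_WORDS = {"compare", "contrast", "explain", "derive", "describe", "list", "why", "how"}


@dataclass(frozen=True)
class QuerySignals:
    length: int     # number of word tokens in the query
    cue_count: int  # number of tokens that match a cue word


def extract_signals(query: str) -> QuerySignals:
    """Lowercase the query, split it into alphanumeric tokens, and count cue words."""
    tokens = re.findall(r"[a-z0-9]+", query.lower())
    cue_count = sum(1 for t in tokens if t in CUE_WORDS)
    return QuerySignals(length=len(tokens), cue_count=cue_count)


def complexity_score(signals: QuerySignals, max_length: int = 20, max_cues: int = 2) -> float:
    """Blend capped, normalized length and cue count into a score in [0, 1]."""
    length_part = min(signals.length / max_length, 1.0)
    cue_part = min(signals.cue_count / max_cues, 1.0)
    return 0.5 * length_part + 0.5 * cue_part


if __name__ == "__main__":
    for q in ("What is RAG?", "Compare light versus heavy retrieval for long documents."):
        s = extract_signals(q)
        print(q, s, round(complexity_score(s), 3))
```

Keeping the signals cheap to compute matters because this step runs before any retrieval or generation call.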

5. Methodology

5.1. Query Signals and Complexity

5.2. Strategy Bundles

5.3. Utility Function and Bundle Selection

5.4. Token Billing Model

5.5. Implementation Stack

6. Experimental Setup

6.1. Corpus and Query Set

6.2. Metrics

6.3. Baseline Configurations

6.4. Reproducibility

- Router run: ca-rag-experiment --docs data/documents_benchmark.txt --questions data/questions_benchmark.txt --out results/router_default.csv

- Fixed baselines: ca-rag-experiment --mode fixed --fixed-strategy heavy_rag ...

- Figure generation: python scripts/generate_paper_figures.py --csv results/router_default.csv --results-dir results

| Metric | Value |
|---|---|
| Queries | 28 |
| Unique strategies | 4 |
| Corpus lines (benchmark) | 15 |
| Index embedding tokens (API) | 262 |

7. Results

7.1. Strategy Selection and Routing Behavior

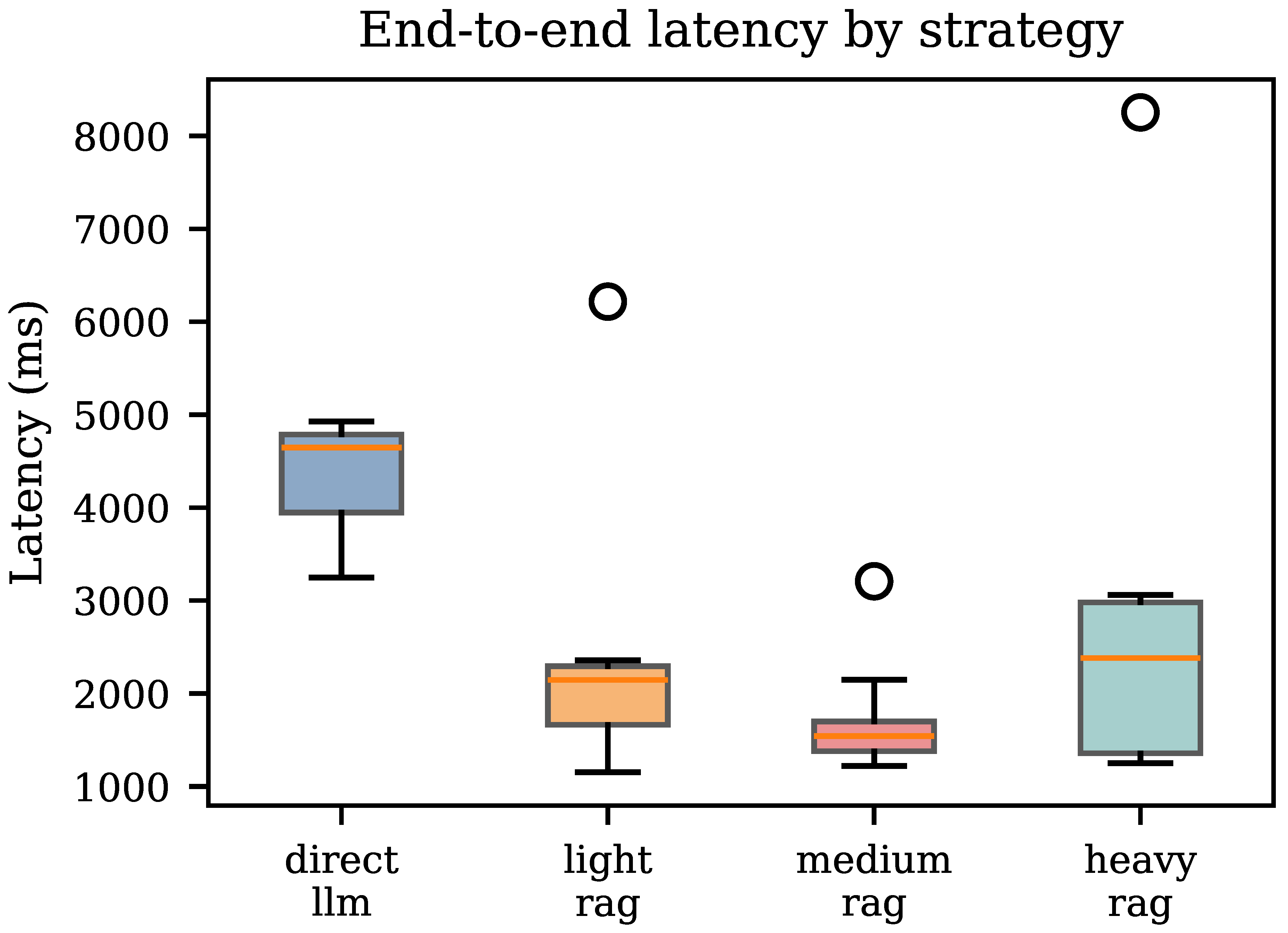

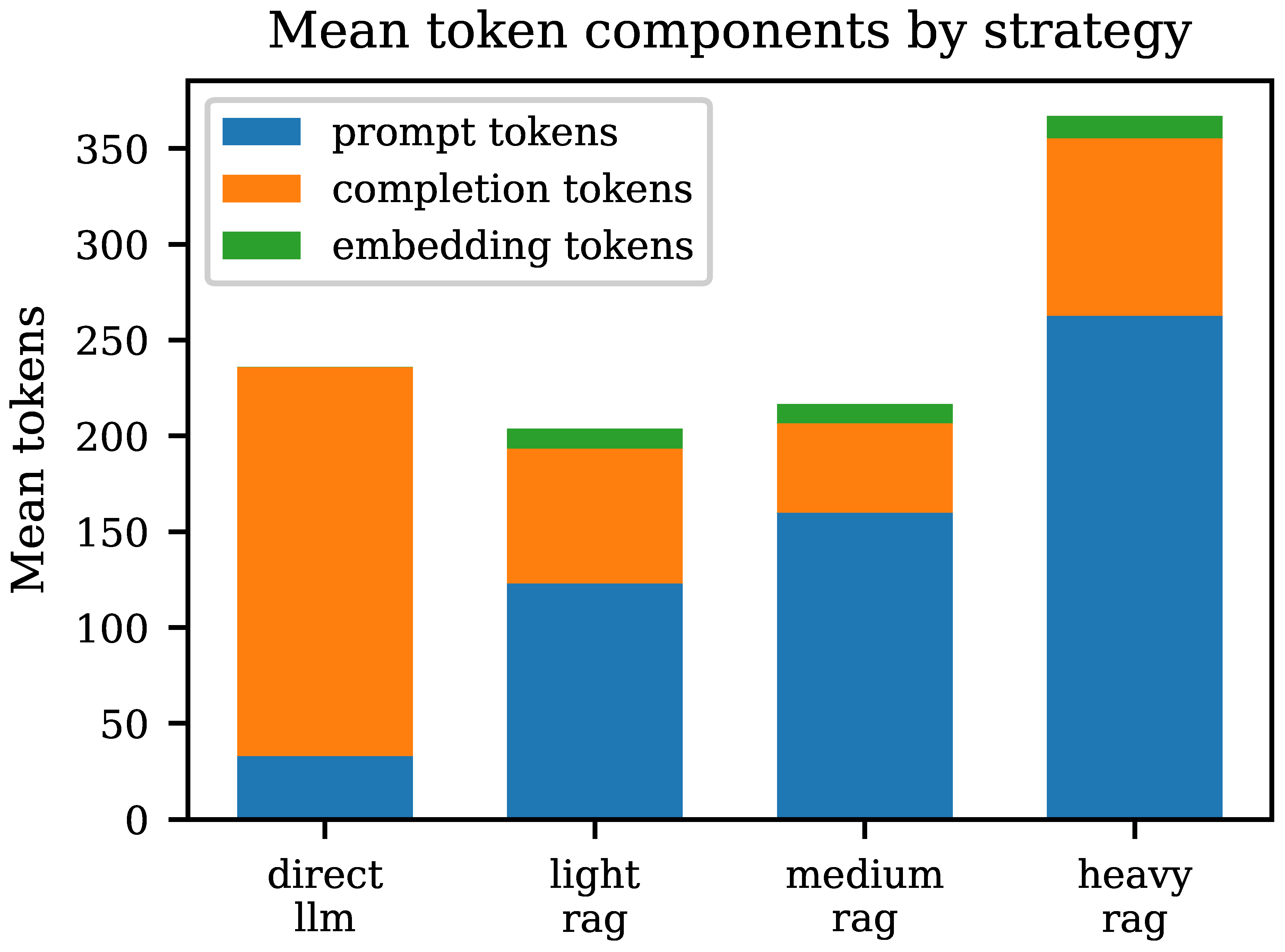

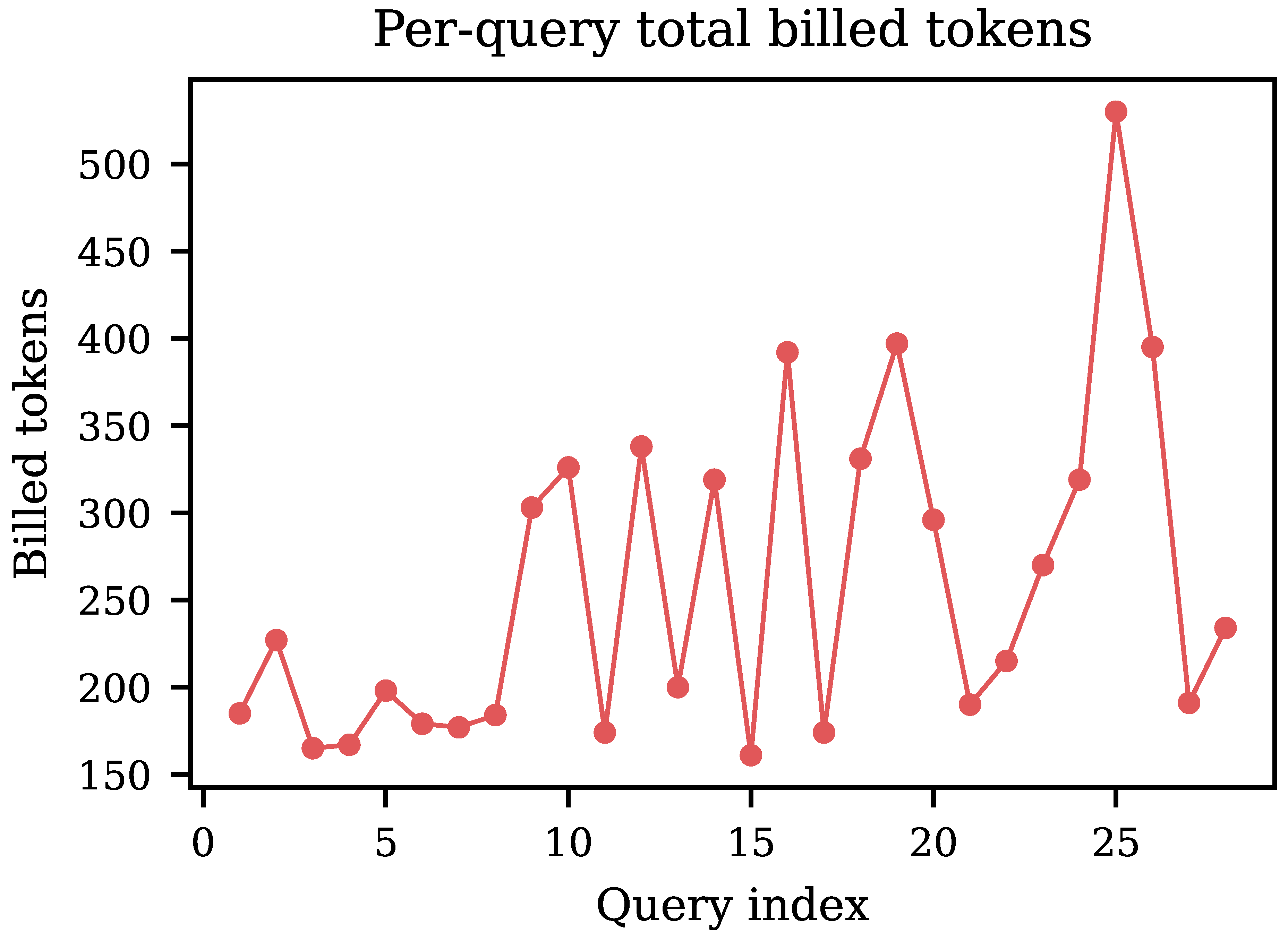

7.2. Latency Profiles and Token Decomposition

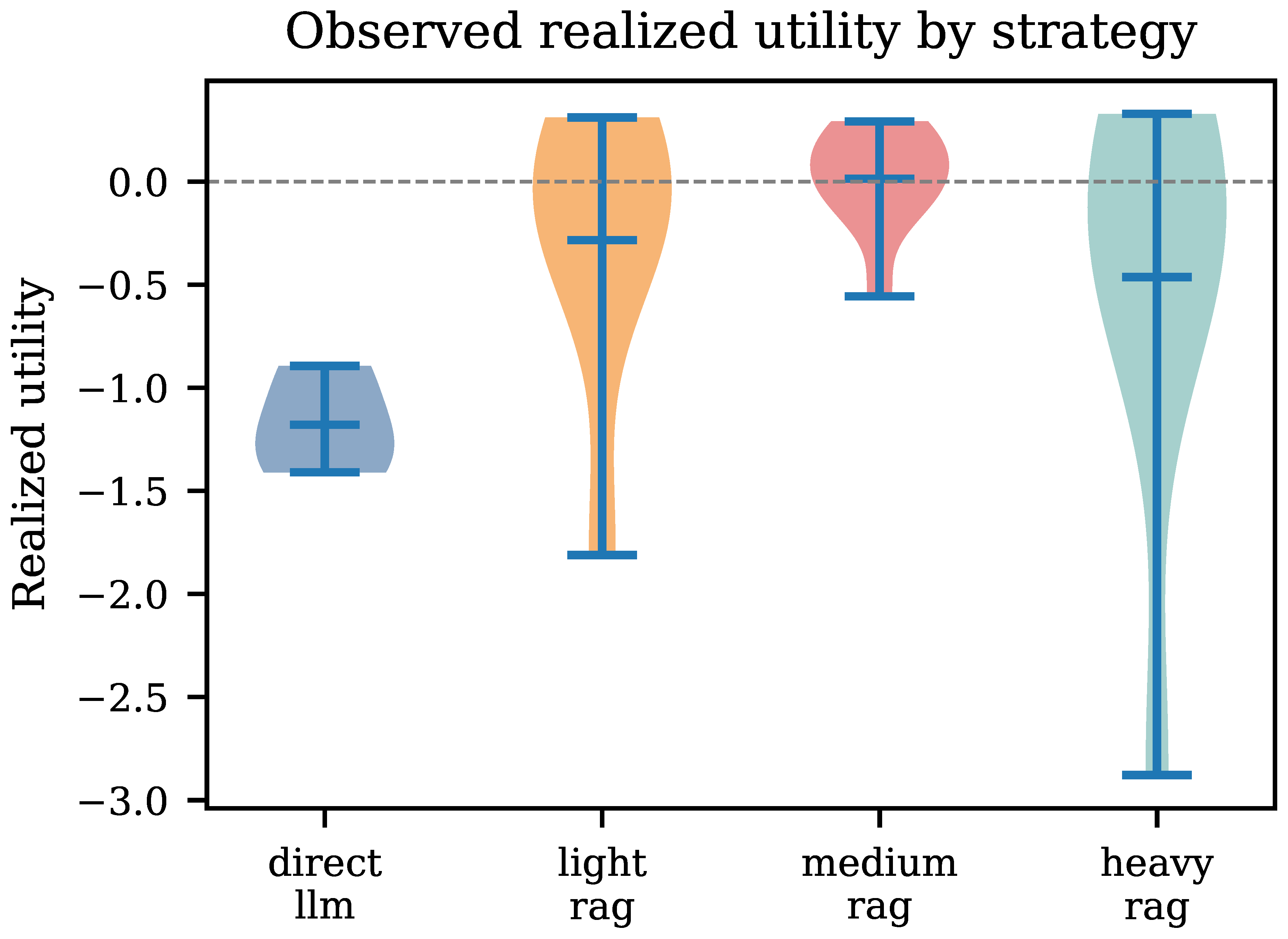

7.3. Utility Distributions and Quality Proxy Analysis

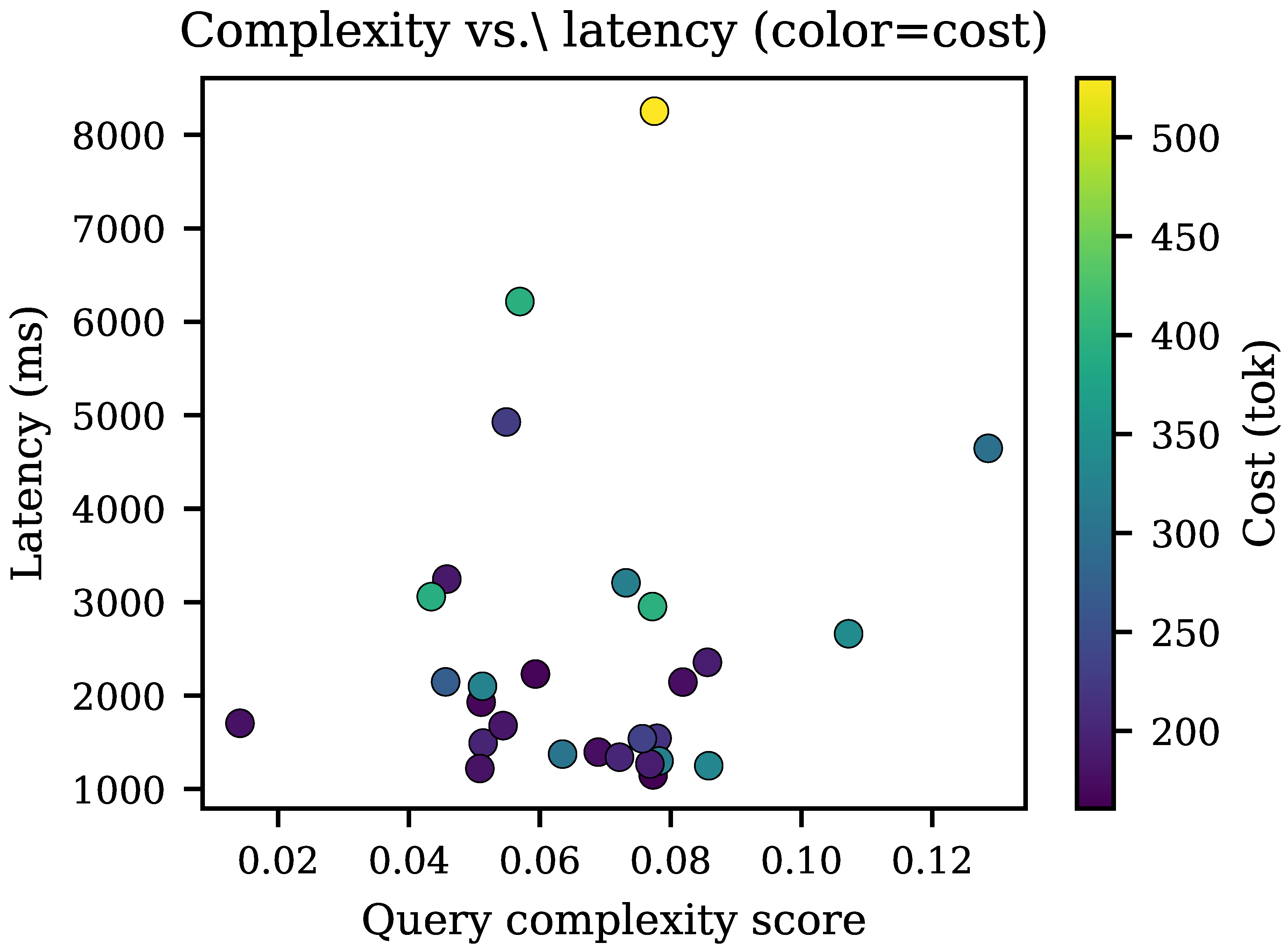

7.4. Complexity, Correlation Structure, and Per-Query Cost

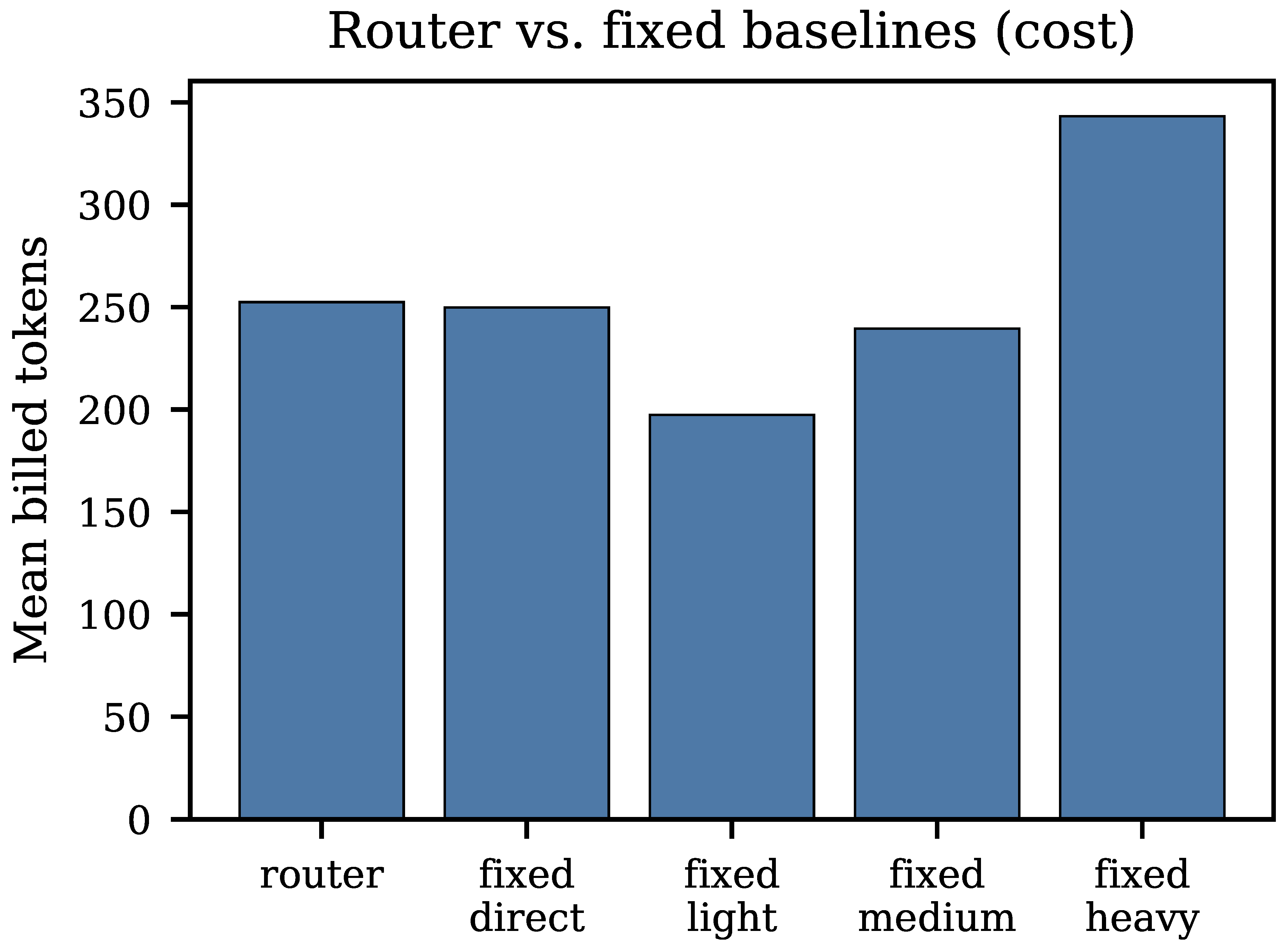

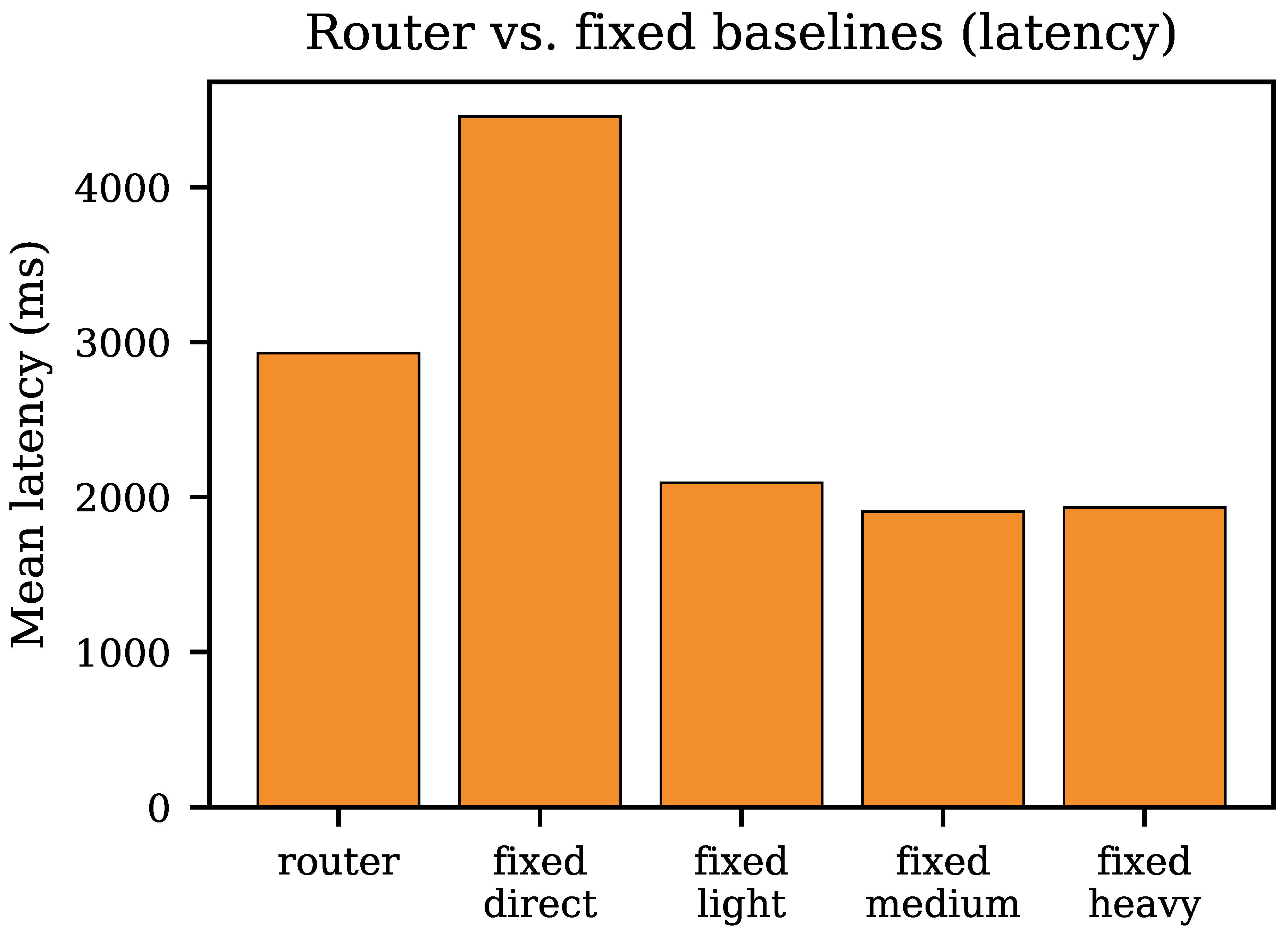

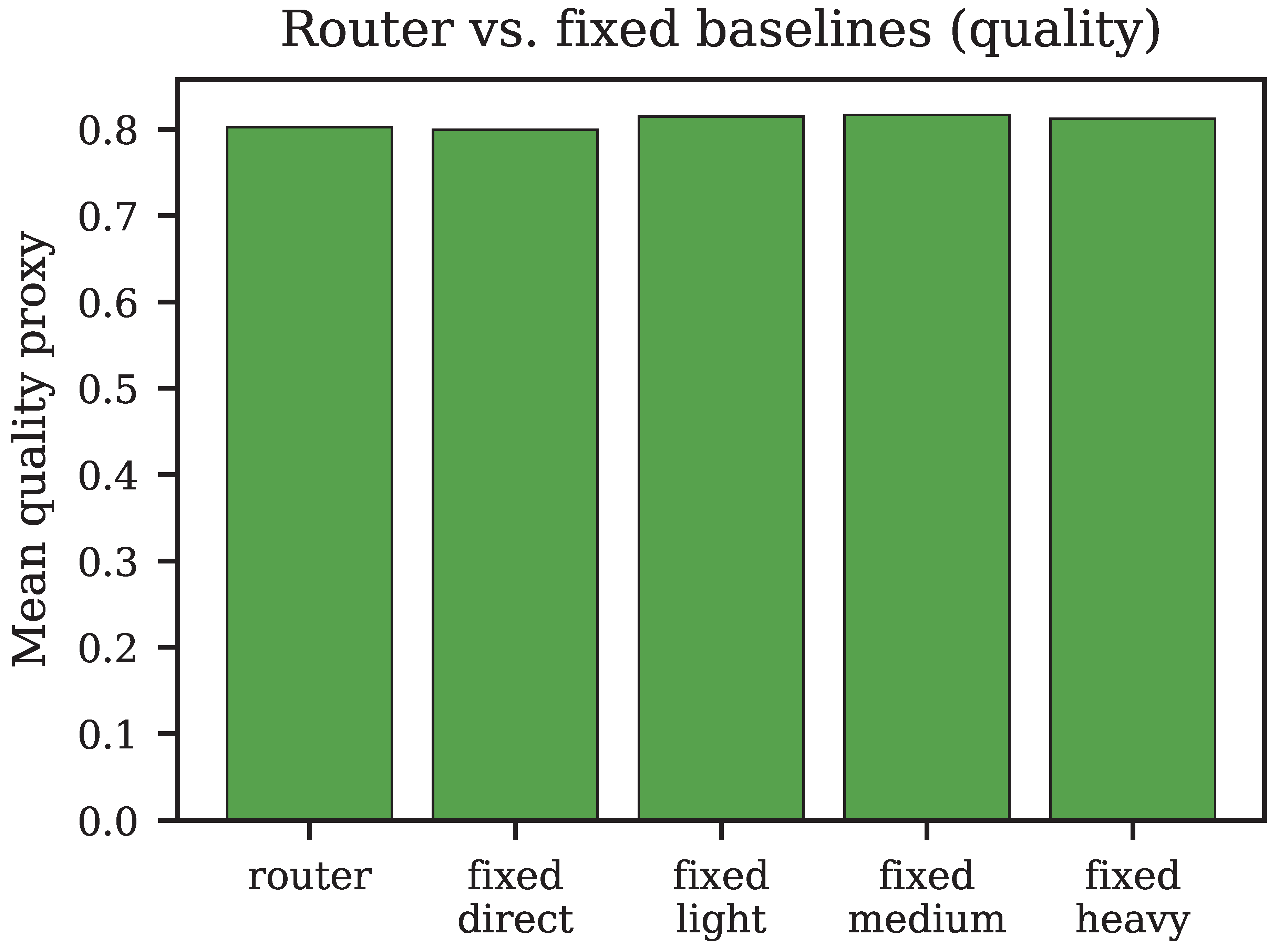

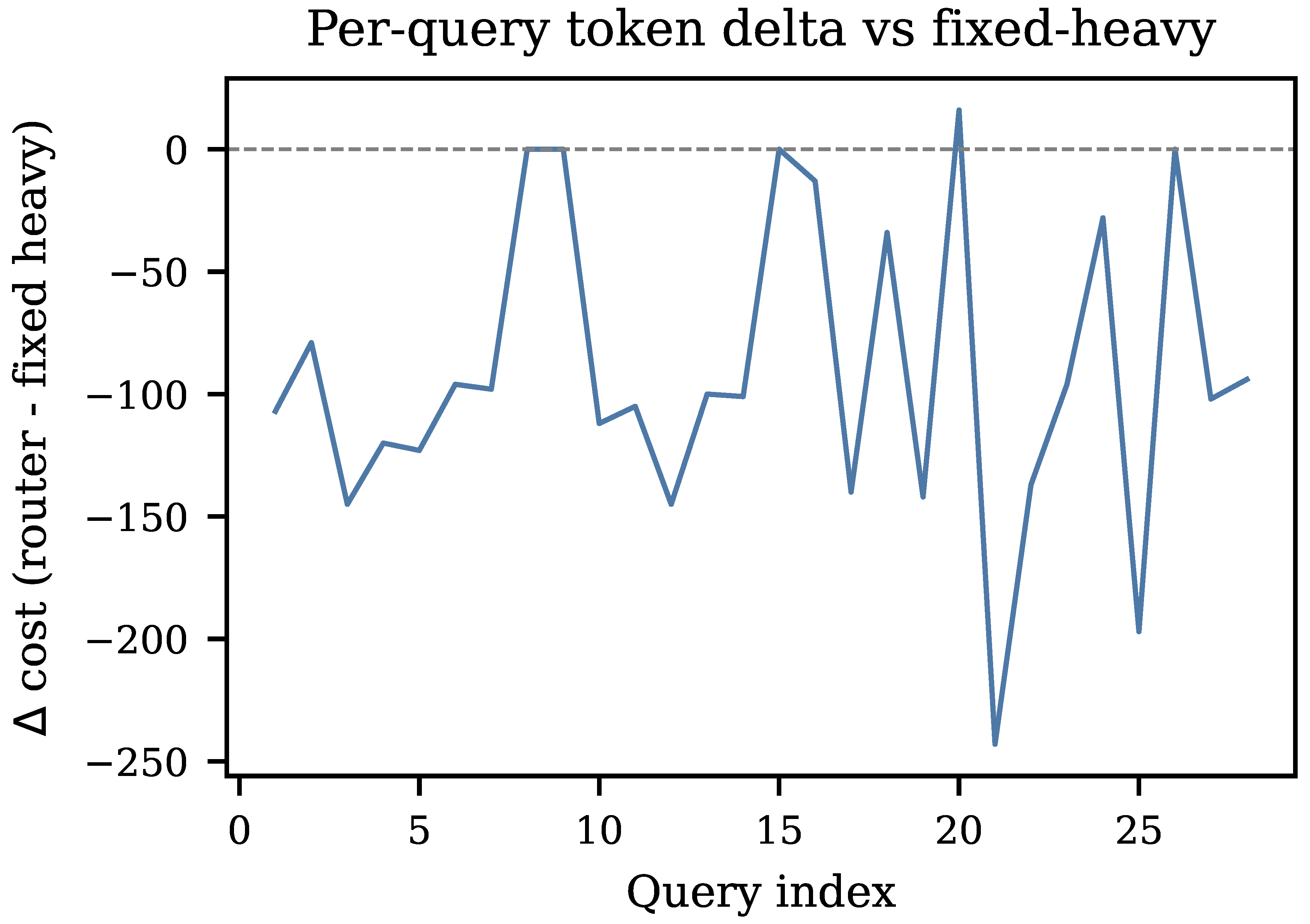

7.5. Router vs. Fixed Baselines

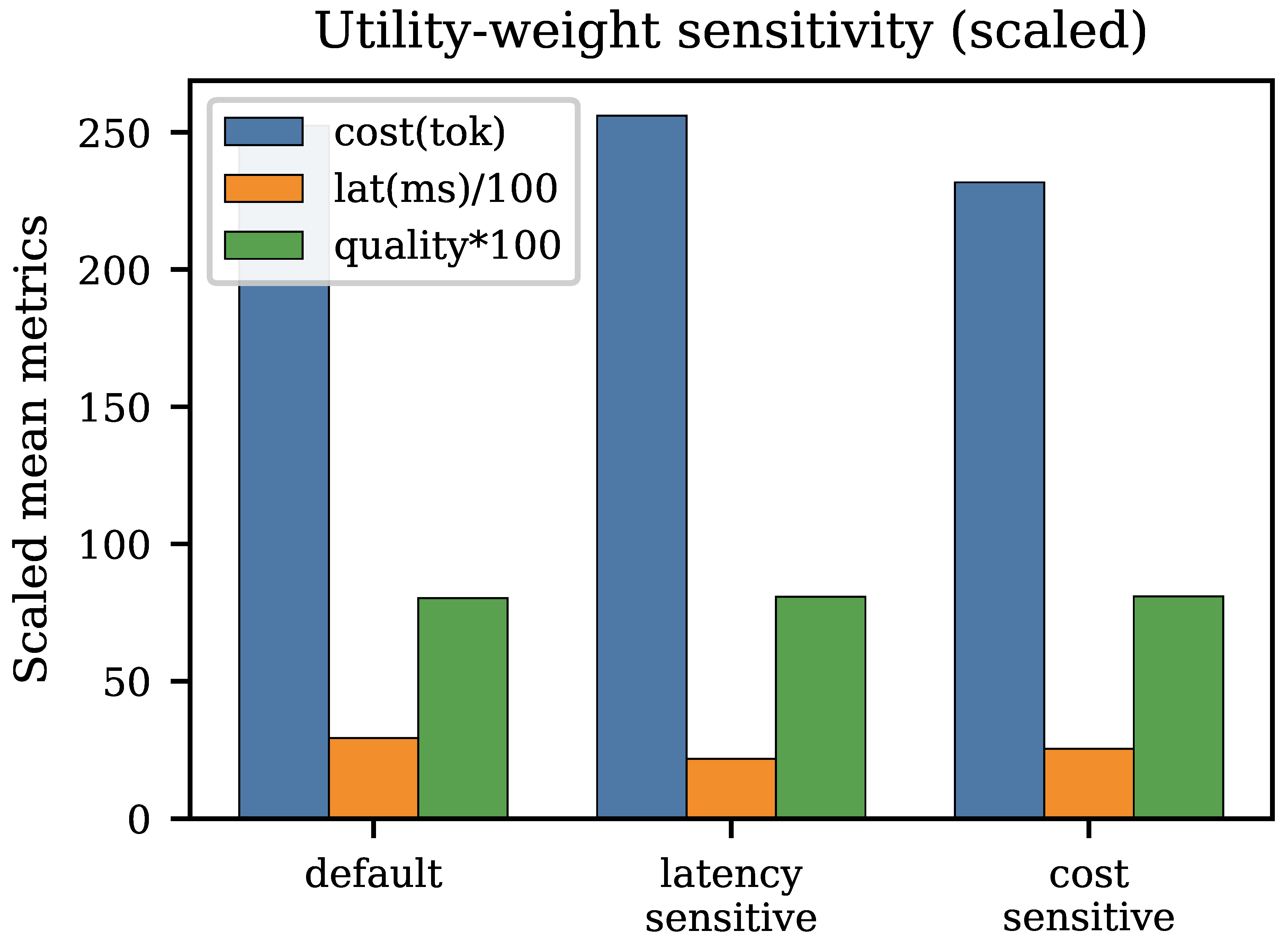

7.6. Utility-Weight Sensitivity

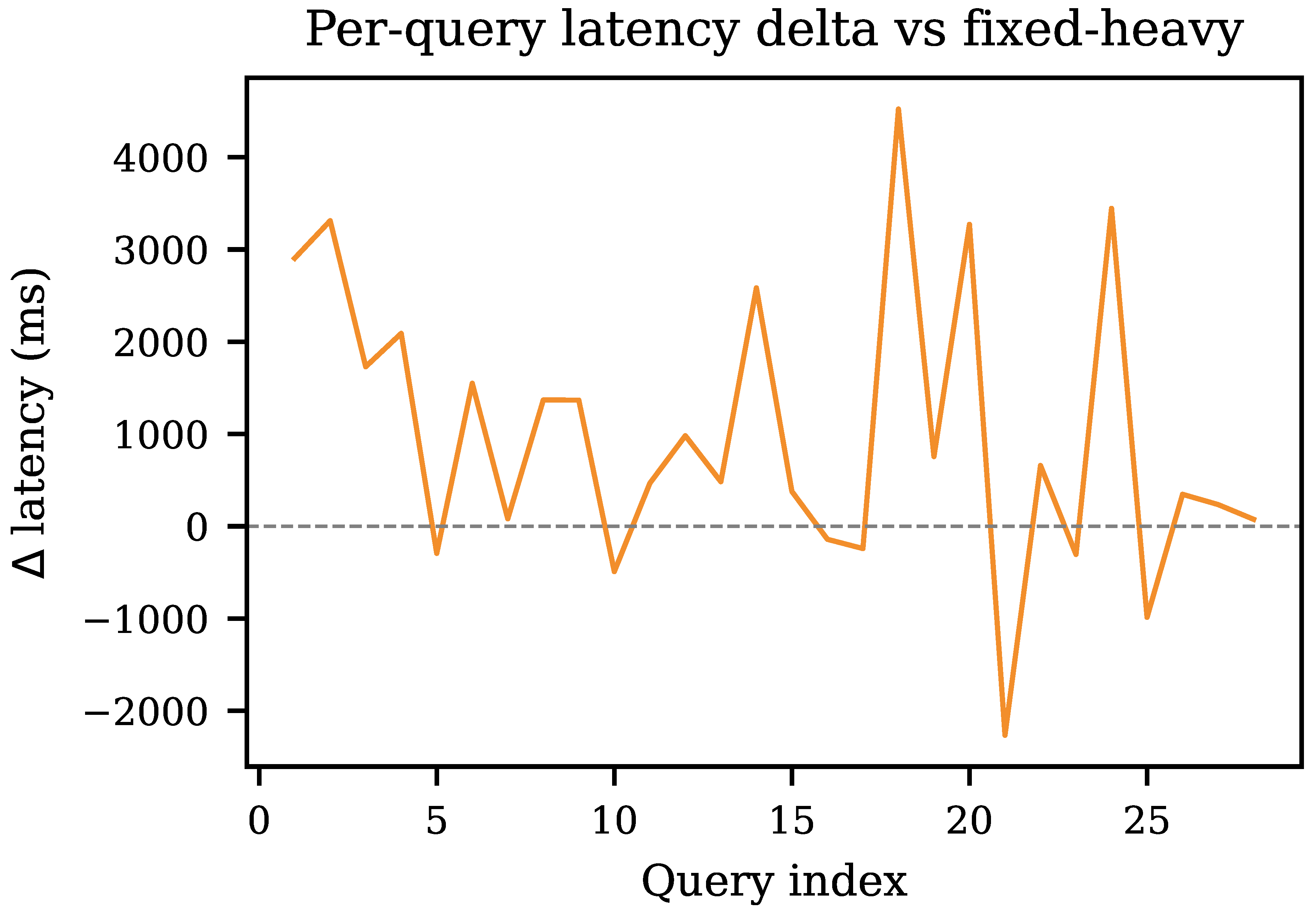

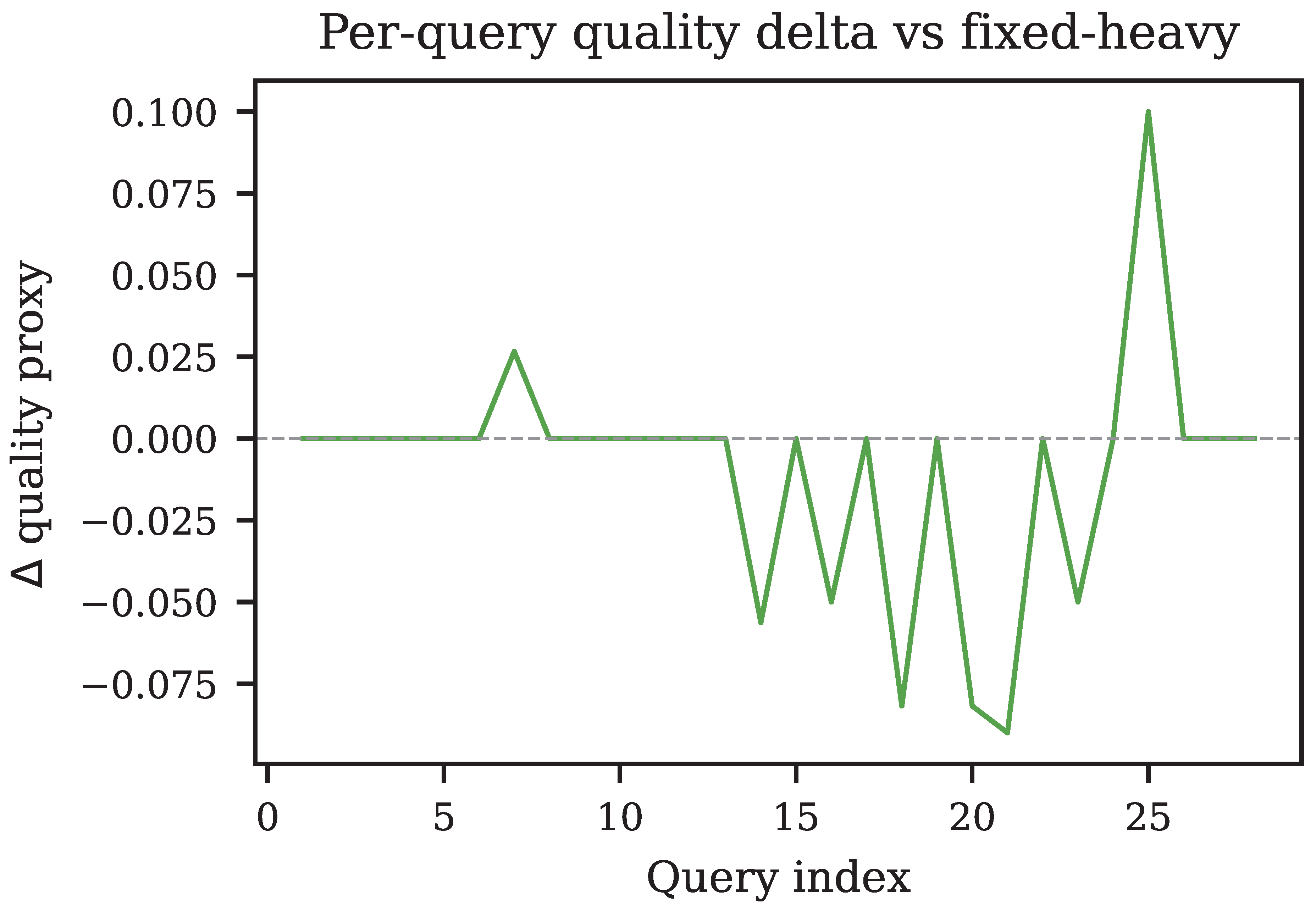

7.7. Per-Query Delta Analysis

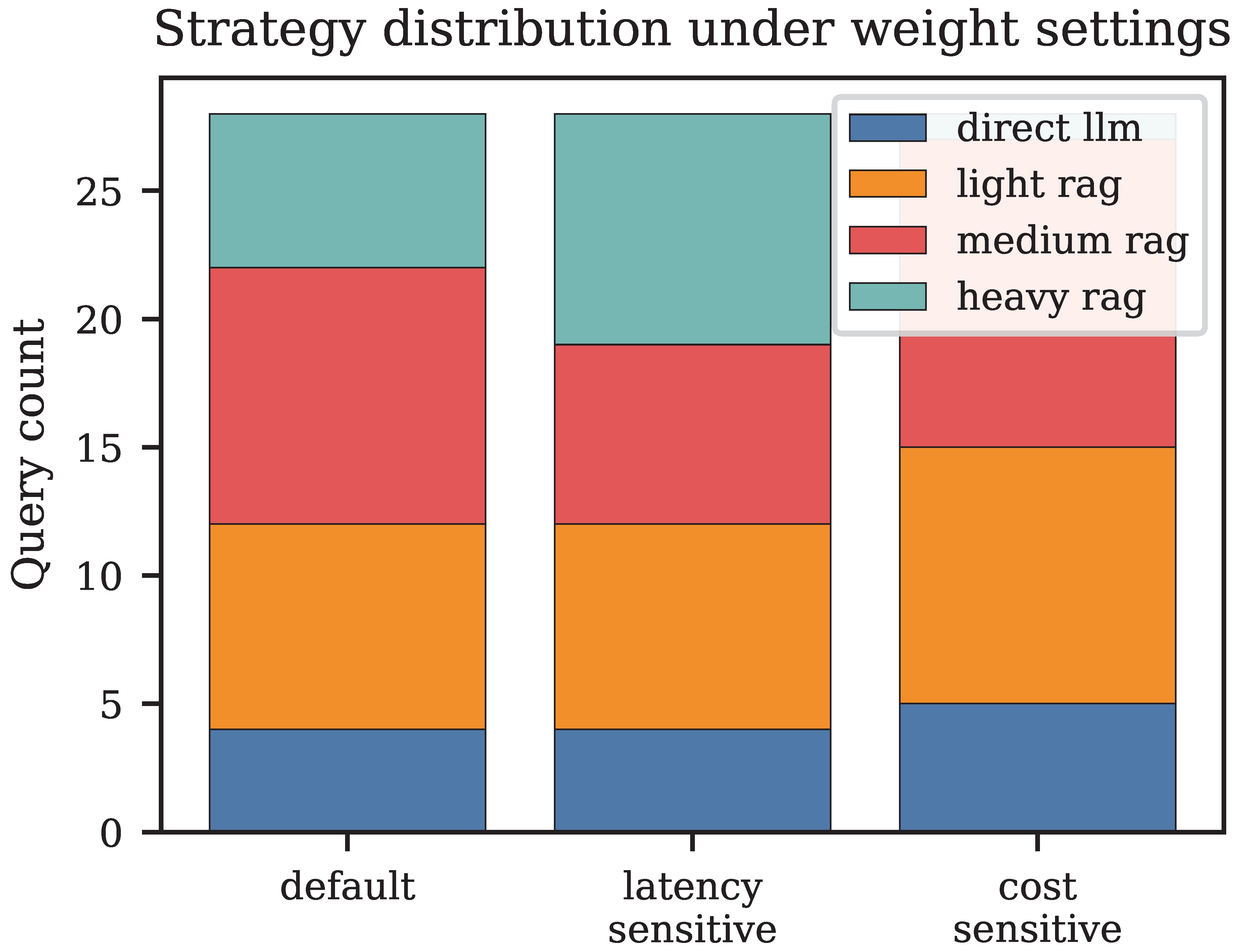

7.8. Strategy Mix Under Alternative Weight Settings

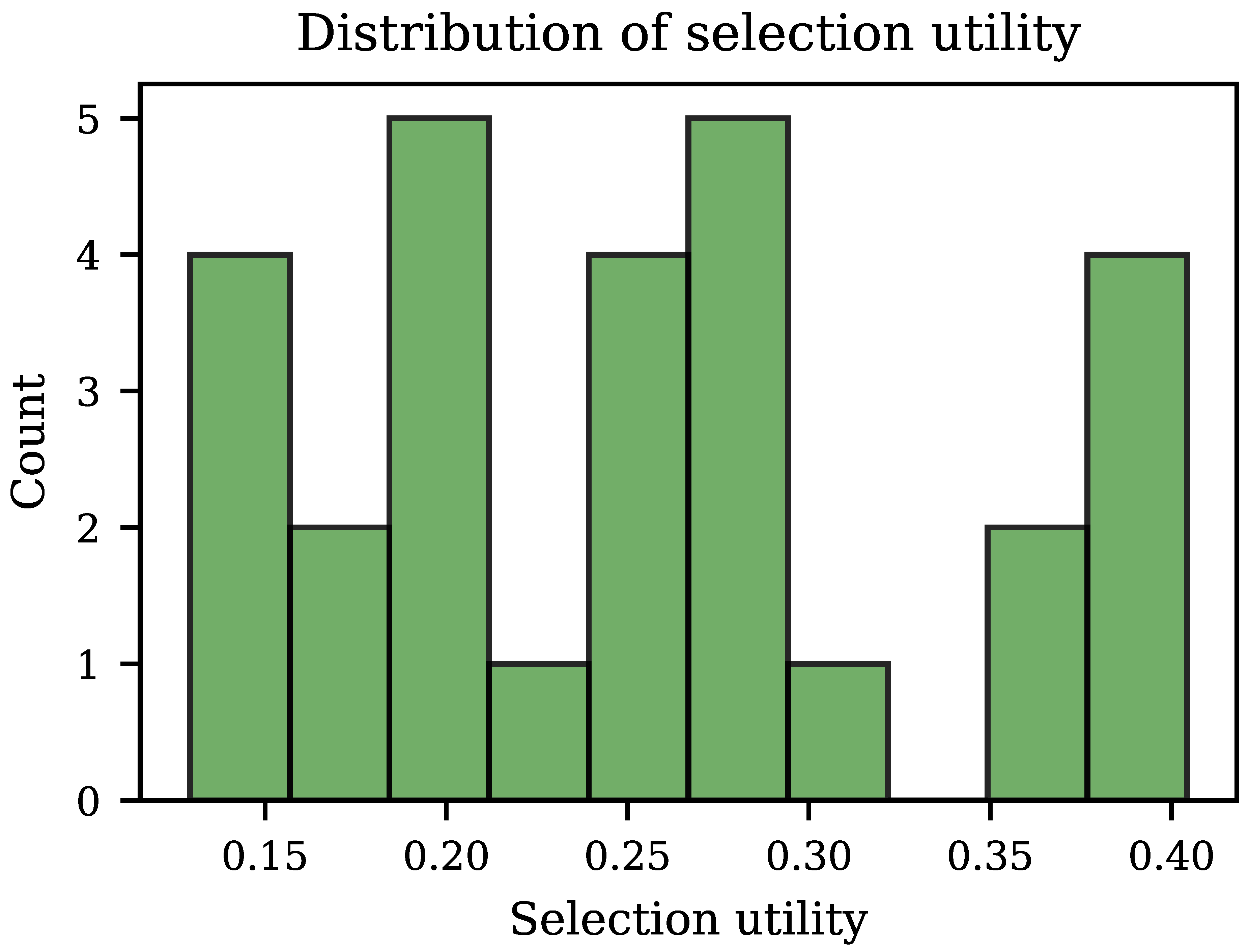

| Variable | Mean | Std | Min | Max |
|---|---|---|---|---|
| cost (tokens) | 258.5 | 94.5 | 161.0 | 530.0 |
| latency (ms) | 2512.3 | 1675.6 | 1151.6 | 8253.4 |
| utility | 0.3 | 0.1 | 0.1 | 0.4 |
| quality_proxy | 0.8 | 0.1 | 0.6 | 1.0 |

| Strategy | Mean cost (tokens) | Mean latency (ms) | Mean U |
|---|---|---|---|
| direct_llm | 236.0 ± 56.0 | 4274 ± 900 | 0.333 ± 0.123 |
| light_rag | 203.7 ± 84.9 | 2490 ± 1703 | 0.263 ± 0.092 |
| medium_rag | 216.7 ± 45.9 | 1714 ± 588 | 0.250 ± 0.086 |
| heavy_rag | 367.0 ± 74.0 | 2869 ± 2298 | 0.220 ± 0.057 |

| | cost | lat. | U | cplx. |
|---|---|---|---|---|
| cost | 1.00 | 0.66 | -0.50 | 0.22 |
| lat. | 0.66 | 1.00 | -0.19 | 0.13 |
| U | -0.50 | -0.19 | 1.00 | -0.48 |
| cplx. | 0.22 | 0.13 | -0.48 | 1.00 |
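A matrix like the one above could be computed from the per-query logs along the following lines. This is a sketch that assumes the reported values are Pearson coefficients, which the table itself does not state; the column values in the usage example are toy numbers, not data from the study.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(vx * vy)


def correlation_matrix(columns):
    """Map {name: values} to a nested dict of pairwise Pearson coefficients."""
    names = list(columns)
    return {a: {b: pearson(columns[a], columns[b]) for b in names} for a in names}


if __name__ == "__main__":
    # Toy values for illustration only.
    toy = {"cost": [100, 150, 200, 250], "latency": [900, 1200, 1100, 1600]}
    for name, row in correlation_matrix(toy).items():
        print(name, {k: round(v, 2) for k, v in row.items()})
```

With only 28 queries, coefficients of this kind carry wide uncertainty, so they are better read as directional indicators than as precise estimates.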

8. Discussion

8.1. Why Routing Matters: The Static Configuration Trap

8.2. Per-Query Regime Analysis

8.3. Signal Quality and Routing Accuracy

8.4. Weight Calibration as a Product Decision

8.5. Failure Modes and Mitigations

8.6. Scalability Pathway

9. Limitations

10. Conclusions

Appendix A. Algorithmic Summary

1. Compute query signals and the complexity score.

2. For each bundle, compute estimated utility via Equation (3).

3. Select the bundle with the highest estimated utility (optionally with ε-greedy exploration).

4. Retrieve context C using the retrieval specification of the selected bundle; generate answer a using the shared generation spec.

5. Log token counts, latency, quality proxy, and realized utility; optionally update telemetry priors.
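The selection steps above might be sketched as follows. The bundle priors are copied from the paper's bundle catalog table; everything else is an illustrative assumption and does not reproduce Equation (3): the utility form (quality minus weighted latency and cost penalties), the way complexity scales the quality gain from retrieval, the weights `w_lat` and `w_cost`, and the use of retrieval depth k as a cost proxy.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Bundle:
    name: str
    k: int                    # retrieval depth (0 means retrieval is skipped)
    quality_prior: float
    latency_prior_ms: float


# Priors taken from the bundle catalog table in this paper.
BUNDLES = [
    Bundle("direct_llm", 0, 0.52, 8),
    Bundle("light_rag", 3, 0.66, 45),
    Bundle("medium_rag", 5, 0.74, 60),
    Bundle("heavy_rag", 10, 0.82, 95),
]


def estimated_utility(bundle, complexity, w_lat=0.1, w_cost=0.05,
                      lat_ref_ms=100.0, k_ref=10):
    """Hypothetical utility: quality minus latency and cost penalties."""
    # ASSUMPTION: retrieval only adds quality in proportion to query complexity.
    base = BUNDLES[0].quality_prior
    quality = base + complexity * (bundle.quality_prior - base)
    latency_penalty = w_lat * bundle.latency_prior_ms / lat_ref_ms
    # ASSUMPTION: retrieval depth k stands in for token cost.
    cost_penalty = w_cost * bundle.k / k_ref
    return quality - latency_penalty - cost_penalty


def select_bundle(complexity, epsilon=0.0, rng=random):
    """Epsilon-greedy: explore uniformly with probability epsilon, else take the argmax."""
    if rng.random() < epsilon:
        return rng.choice(BUNDLES)
    return max(BUNDLES, key=lambda b: estimated_utility(b, complexity))


if __name__ == "__main__":
    for c in (0.0, 0.5, 1.0):
        print(c, select_bundle(c).name)
```

A discrete catalog keeps the argmax a four-way comparison per query, so the router adds negligible overhead compared with retrieval and generation.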

Appendix B. Reproducible Commands

Appendix C. Benchmark Question Set

- What is RAG?

- Why is token cost important?

- How does latency affect AI systems?

- What is adaptive retrieval?

- Explain cost-aware AI systems.

- What is hybrid retrieval?

- Define utility-based routing.

- What is FAISS used for?

- How do strategy bundles work in CA-RAG?

- What is retrieval confidence?

- Compare light versus heavy retrieval for long documents.

- Explain how telemetry refines routing estimates with concrete steps.

- Why might a system skip retrieval for some queries?

- List tradeoffs between large top-k and small top-k retrieval.

- How do embedding tokens differ from completion tokens in billing?

- Describe a municipal RAG use case with forms and citations.

- What are the risks of fixed retrieval depth across heterogeneous queries?

- How does CA-RAG combine quality, latency, and cost in one scalar objective?

- Explain when reranking is worth the extra latency in production.

- Derive an intuitive explanation of why discrete bundles are used instead of continuous search.

- What operational metrics should a team report for a deployed RAG service?

- How does query length influence estimated complexity signals in CA-RAG?

- Contrast direct LLM answers with retrieval-grounded answers for policy questions.

- What limitations apply to lexical quality proxies versus human evaluation?

- How would you tune utility weights for a latency-sensitive chatbot?

- Describe an experiment protocol to log strategy choices and token usage per query.

- What is the role of exploration epsilon in bundle selection?

- Explain retrieval-augmented generation for knowledge-intensive tasks in two sentences.

Appendix D. Benchmark Corpus

- RAG improves LLM accuracy by retrieving relevant documents before generation.

- Token cost is a major concern because embedding and completion APIs bill per token.

- Latency depends on retrieval time, reranking, and model inference time under load.

- Adaptive systems dynamically select strategies based on query complexity and observed telemetry.

- Cost-aware AI systems optimize resource usage while maintaining answer quality under SLO constraints.

- Hybrid dense-sparse retrieval combines embedding similarity with BM25 lexical overlap for robustness.

- Utility-based routing scores each strategy bundle using quality priors minus latency and cost penalties.

- Municipal RAG applications ground answers in ordinances, forms, and public documents with provenance.

- Production RAG should expose retrieval confidence and source citations for auditability and trust.

- Embedding indexes such as FAISS enable approximate nearest neighbor search over chunked corpora.

- Strategy bundles pair retrieval depth with generation budgets to trade accuracy against spend.

- Telemetry can refine latency and quality estimates per bundle after sufficient query volume.

- Skipping retrieval reduces cost for definitional queries but risks hallucination on fact-heavy tasks.

- Large top-k retrieval increases recall but inflates prompt tokens and end-to-end latency.

- Reranking stages reorder candidates using cross-encoders at extra compute cost.

Appendix E. Logged CSV Schema

| query | : input question text |

| strategy | : selected retrieval strategy name |

| bundle | : bundle name (retrieval+generation composite key) |

| utility | : selection utility (prior-based, Eq. 1) |

| quality_proxy | : observed lexical overlap proxy in [0,1] |

| realized_utility | : post-hoc utility computed from observed metrics |

| latency | : total end-to-end latency (ms) |

| cost | : total billed tokens (prompt+completion+embedding) |

| prompt_tokens | : LLM prompt token count |

| completion_tokens | : LLM completion token count |

| embedding_tokens | : query embedding tokens |

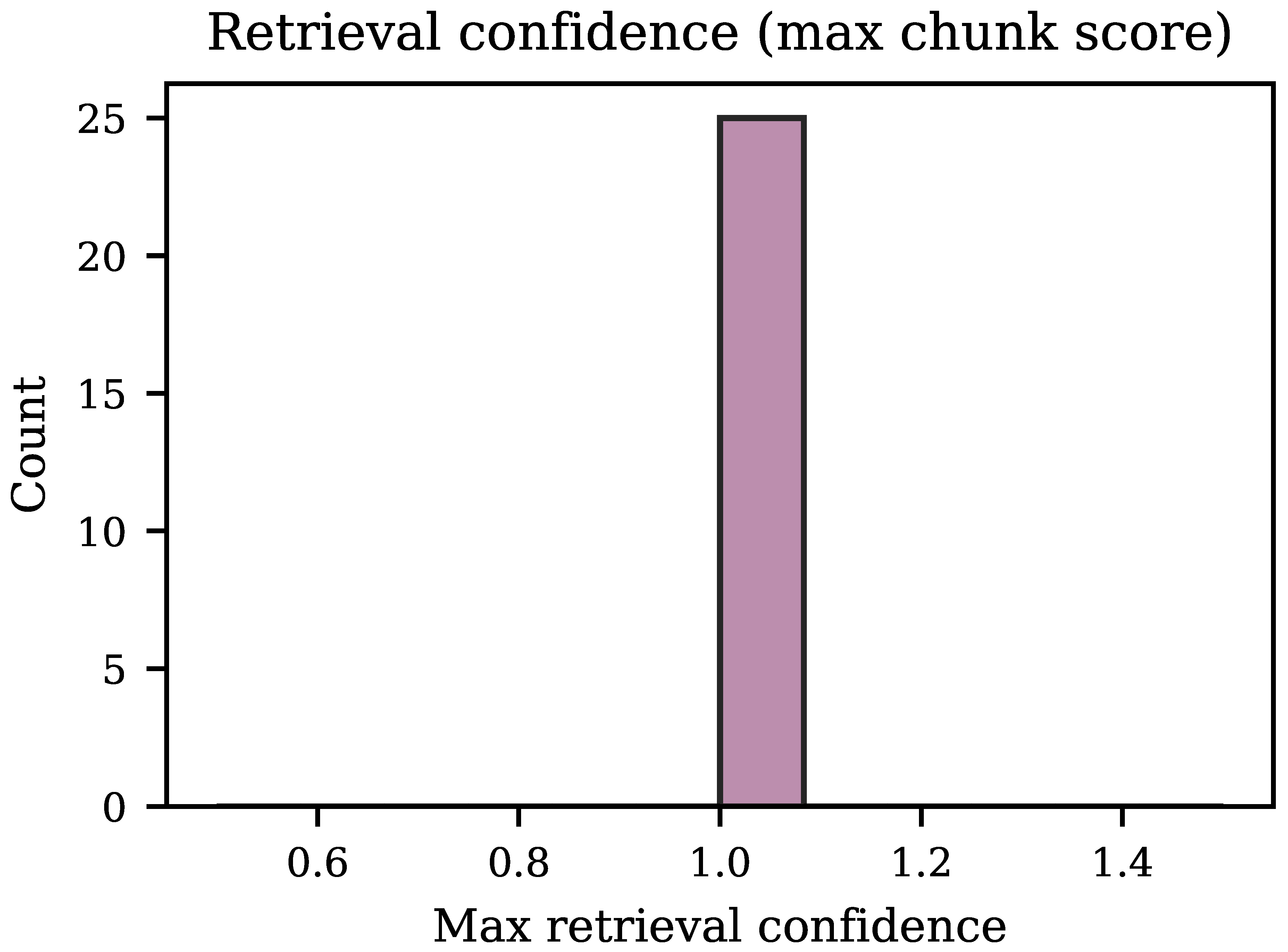

| retrieval_confidence | : max retrieved chunk cosine similarity |

| complexity_score | : heuristic complexity signal in [0,1] |

| index_embedding_tokens | : offline corpus-embed tokens (bookkeeping) |
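As a usage illustration for this schema, the following sketch groups a log by strategy and reports mean cost and latency using only the Python standard library. The function name and the returned dictionary layout are hypothetical; the column names follow the schema above, and the embedded rows are taken from the sample rows in Appendix G.

```python
import csv
import io
from collections import defaultdict
from statistics import mean


def summarize_by_strategy(csv_file):
    """Return {strategy: {"n", "mean_cost", "mean_latency"}} from an open CSV log."""
    groups = defaultdict(list)
    for row in csv.DictReader(csv_file):
        groups[row["strategy"]].append(row)
    summary = {}
    for strategy, rows in groups.items():
        summary[strategy] = {
            "n": len(rows),
            "mean_cost": mean(float(r["cost"]) for r in rows),        # billed tokens
            "mean_latency": mean(float(r["latency"]) for r in rows),  # milliseconds
        }
    return summary


# Three of the sample rows listed in Appendix G.
SAMPLE = """query,strategy,cost,latency,utility,quality_proxy,realized_utility
What is RAG?,direct_llm,185,4051.1,0.4043,0.55,-1.2461
What is hybrid retrieval?,medium_rag,179,1111.8,0.3672,0.85,-0.0159
What is FAISS used for?,heavy_rag,303,1433.3,0.2813,0.91,0.1274
"""

if __name__ == "__main__":
    for name, stats in summarize_by_strategy(io.StringIO(SAMPLE)).items():
        print(name, stats)
```

For a real run, the same function can be given a file opened with `open(path, newline="")` in place of the in-memory sample.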

Appendix F. Per-Query Strategy Assignments

| Query | Selected strategy |
|---|---|
| 1. What is RAG? | direct_llm |
| 2. Why is token cost important? | direct_llm |
| 3. How does latency affect AI systems? | light_rag |
| 4. What is adaptive retrieval? | light_rag |
| 5. Explain cost-aware AI systems. | medium_rag |
| 6. What is hybrid retrieval? | medium_rag |
| 7. Define utility-based routing. | medium_rag |
| 8. What is FAISS used for? | heavy_rag |
| 9. How do strategy bundles work in CA-RAG? | heavy_rag |
| 10. What is retrieval confidence? | medium_rag |
| 11. Compare light vs. heavy retrieval | medium_rag |
| 12. Explain how telemetry refines routing estimates | light_rag |
| 13. Why might a system skip retrieval? | heavy_rag |
| 14. List tradeoffs between large/small top-k | medium_rag |
| 15. How do embedding tokens differ from completion? | medium_rag |
| 16. Describe a municipal RAG use case | medium_rag |
| 17. What are risks of fixed retrieval depth? | medium_rag |
| 18. How does CA-RAG combine quality/latency/cost? | heavy_rag |
| 19. Explain when reranking is worth extra latency | medium_rag |
| 20. Derive intuitive explanation of discrete bundles | direct_llm |
| 21. What operational metrics should teams report? | heavy_rag |
| 22. How does query length influence complexity? | medium_rag |
| 23. Contrast direct vs. retrieval-grounded answers | medium_rag |
| 24. Limitations of lexical quality proxies? | medium_rag |
| 25. How to tune weights for latency-sensitive chat? | medium_rag |
| 26. Describe experiment protocol for token logging | medium_rag |
| 27. Role of exploration epsilon in bundle selection | light_rag |
| 28. Explain RAG for knowledge-intensive tasks | medium_rag |

Appendix G. Sample CSV Rows

- query,strategy,cost,latency,utility,quality_proxy,realized_utility

- What is RAG?,direct_llm,185,4051.1,0.4043,0.55,-1.2461

- How does latency affect AI systems?,light_rag,165,2850.3,0.3813,0.64,-0.3573

- What is hybrid retrieval?,medium_rag,179,1111.8,0.3672,0.85,-0.0159

- What is FAISS used for?,heavy_rag,303,1433.3,0.2813,0.91,0.1274

References

1. Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; Yih, W.-t.; Rocktäschel, T.; et al. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. In Advances in Neural Information Processing Systems (NeurIPS), 2020; Vol. 33, pp. 9459–9474.

2. Gao, Y.; Xiong, Y.; Gao, X.; Jia, J.; Pan, J.; Bi, Y.; Dai, Y.; Sun, J.; Wang, H. Retrieval-Augmented Generation for Large Language Models: A Survey. arXiv 2023, arXiv:2312.10997.

3. Izacard, G.; Grave, E. Leveraging Passage Retrieval with Generative Models for Open Domain Question Answering. arXiv 2021, arXiv:2007.01282.

4. OpenAI. GPT-4 Technical Report. arXiv 2024, arXiv:2303.08774.

5. Karpukhin, V.; Oguz, B.; Min, S.; Lewis, P.; Wu, L.; Edunov, S.; Chen, D.; Yih, W.-t. Dense Passage Retrieval for Open-Domain Question Answering. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), 2020; pp. 6769–6781.

6. Khattab, O.; Zaharia, M. ColBERT: Efficient and Effective Passage Search via Contextualized Late Interaction over BERT. In Proceedings of the 43rd International ACM SIGIR Conference on Research and Development in Information Retrieval, 2020; pp. 39–48.

7. Johnson, J.; Douze, M.; Jégou, H. Billion-Scale Similarity Search with GPUs. IEEE Transactions on Big Data 2021, 7, 535–547.

8. Guu, K.; Lee, K.; Tung, Z.; Pasupat, P.; Chang, M. REALM: Retrieval-Augmented Language Model Pre-Training. In Proceedings of the 37th International Conference on Machine Learning (ICML), 2020; pp. 3929–3938.

9. Robertson, S.; Zaragoza, H. The Probabilistic Relevance Framework: BM25 and Beyond. Foundations and Trends in Information Retrieval 2009, 3, 333–389.

10. Li, L.; Chu, W.; Langford, J.; Schapire, R.E. A Contextual-Bandit Approach to Personalized News Article Recommendation. In Proceedings of the 19th International Conference on World Wide Web (WWW), 2010; pp. 661–670.

11. Lattimore, T.; Szepesvári, C. Bandit Algorithms; Cambridge University Press, 2020.

Short Biography of Authors

Sanjay Mishra is a seasoned technology leader, author, and innovator with over 14 years of expertise spanning data engineering, AI, and agentic systems. As a Principal Software Engineer at Fidelity Investments, he architects and delivers enterprise-scale data solutions and AI-driven platforms that power real-world decision-making. A prolific author, he has written two foundational technical books, The SQL Universe and Oracle Database Performance Tuning: A Checklist Approach, both widely regarded as essential references for engineers building high-performance data platforms. His research on AI and agentic systems has been published in IEEE, reflecting his commitment to advancing the frontier of intelligent systems. He is an active builder in the MCP ecosystem, having designed and deployed MCP servers and agents that connect natural language interfaces to complex data infrastructure, including NL2SQL pipelines powered by Oracle and OpenAI. Beyond engineering, he is a sought-after speaker at industry events and a judge at hackathons, where he mentors the next generation of technologists. Mr. Mishra is a Member of the IEEE.

Ganesh R. Naik is a globally recognized leader in biomedical engineering, ranked among the top 1% of researchers worldwide in his field, and a prominent expert in data science and biomedical signal processing. He earned his Ph.D. in Electronics Engineering, specializing in biomedical engineering and signal processing, from RMIT University, Melbourne, Australia, in 2009, and holds an Associate Professor position in IT and Computer Science at Torrens University, Adelaide, Australia. Previously, he was an academic and research theme co-lead at Flinders University’s sleep institute, a Postdoctoral Research Fellow at the MARCS Institute, Western Sydney University (2017–2020), and a Chancellor’s Post-Doctoral Research Fellow at the Centre for Health Technologies, University of Technology Sydney (2013–2017). Dr. Naik has edited 15 books and authored approximately 200 papers in peer-reviewed journals and conferences. He serves as Associate Editor for IEEE Access, Frontiers in Neurorobotics, and two Springer journals. His contributions have been recognized with the Baden–Württemberg Scholarship (2006–2007), an ISSI overseas fellowship (2010), the BridgeTech industry fellowship from the Medical Research Future Fund, Government of Australia (2023), and the UK Royal Society Fellowship.

| Bundle | k | Skip retrieval | Quality prior | Latency prior (ms) |
|---|---|---|---|---|
| direct_llm | 0 | ✓ | 0.52 | 8 |
| light_rag | 3 | ✗ | 0.66 | 45 |
| medium_rag | 5 | ✗ | 0.74 | 60 |
| heavy_rag | 10 | ✗ | 0.82 | 95 |

| Policy | Cost (tokens) | Latency (ms) | Quality proxy | U |
|---|---|---|---|---|
| router_default | 252.4 | 2927 | 0.80 | 0.192 |
| router_latency_sensitive | 256.0 | 2165 | 0.81 | -0.291 |
| router_cost_sensitive | 231.8 | 2536 | 0.81 | 0.117 |
| fixed_direct | 249.9 | 4457 | 0.80 | -0.367 |
| fixed_light | 197.3 | 2091 | 0.82 | 0.167 |
| fixed_medium | 239.5 | 1906 | 0.82 | 0.177 |
| fixed_heavy | 343.2 | 1932 | 0.81 | 0.132 |

| Baseline | P(cost win) | P(lat win) | P(qual win) |
|---|---|---|---|
| fixed_direct | 0.43 | 0.71 | 0.32 |
| fixed_light | 0.07 | 0.36 | 0.00 |
| fixed_medium | 0.32 | 0.18 | 0.00 |
| fixed_heavy | 0.82 | 0.25 | 0.07 |
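Win probabilities of this kind can be computed from paired per-query logs. The sketch below assumes a "win" means the router is strictly better than the fixed baseline on the same query (lower for cost and latency, higher for quality), with ties counted as non-wins; the table does not state its tie-handling rule, so this is an assumption, and the numbers in the usage example are toy values.

```python
def win_rate(router, baseline, lower_is_better=True):
    """Fraction of paired queries on which the router strictly beats the baseline."""
    if len(router) != len(baseline) or not router:
        raise ValueError("need two non-empty sequences of equal length")
    if lower_is_better:
        wins = sum(r < b for r, b in zip(router, baseline))
    else:
        wins = sum(r > b for r, b in zip(router, baseline))
    return wins / len(router)


if __name__ == "__main__":
    # Toy per-query values for illustration only.
    router_cost = [100, 200, 300, 400]
    fixed_cost = [150, 150, 350, 350]
    print("P(cost win):", win_rate(router_cost, fixed_cost))

    router_quality = [0.8, 0.9]
    fixed_quality = [0.8, 0.7]
    print("P(qual win):", win_rate(router_quality, fixed_quality, lower_is_better=False))
```

Because quality-proxy values are coarse, ties are likely to be common, which is one plausible reason a strict-win rate for quality can sit near zero even when mean quality is nearly identical.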

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).