Submitted: 14 April 2026

Posted: 15 April 2026


Abstract

Keywords:

1. Introduction

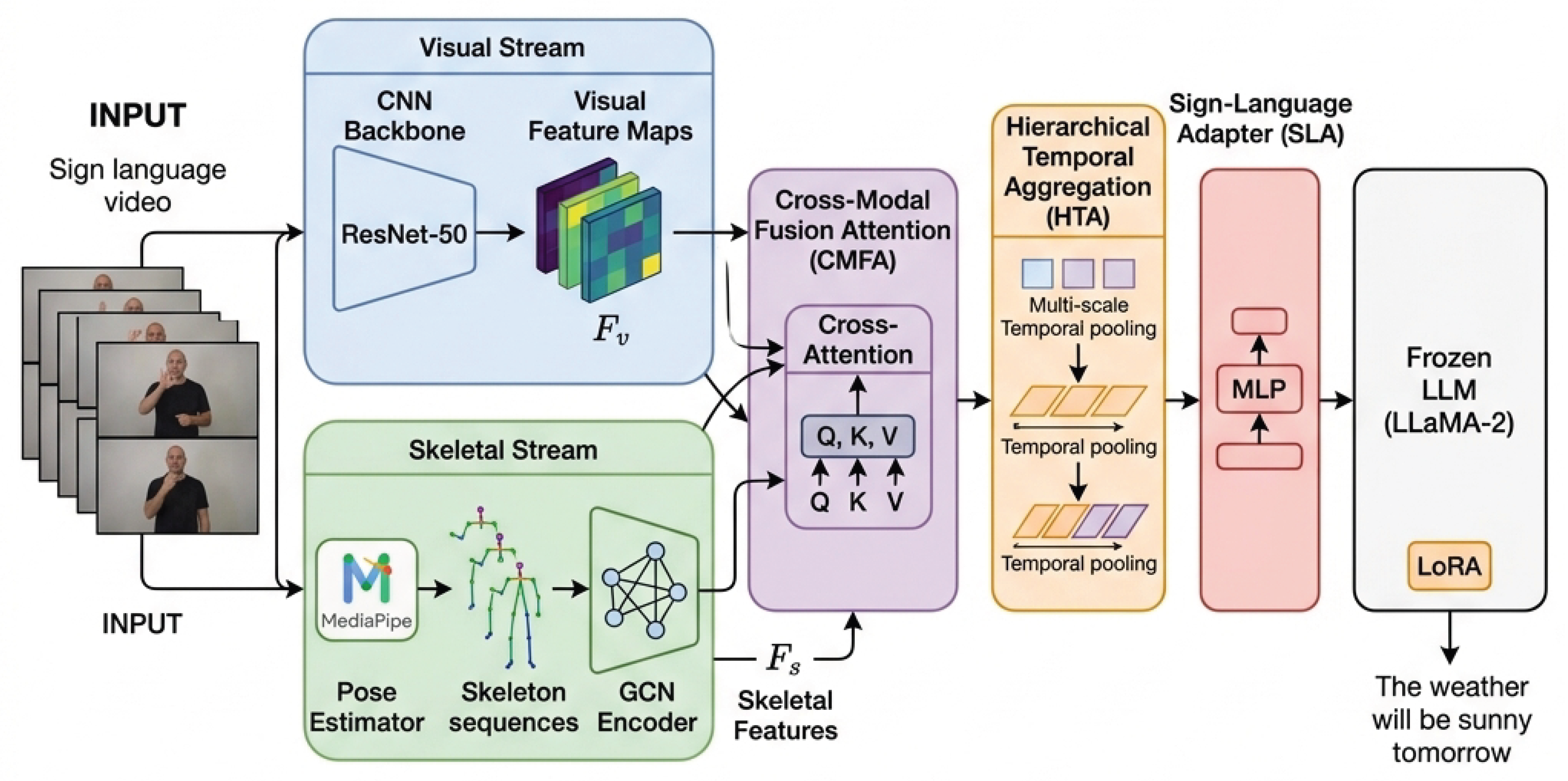

- Dual-Stream Feature Extraction: We employ a CNN-based visual stream (ResNet-50) and a GCN-based skeletal stream (ST-GCN) to extract complementary features. The visual stream captures appearance information (e.g., hand shapes, facial expressions), while the skeletal stream captures structural and kinematic information (e.g., joint angles, movement trajectories).

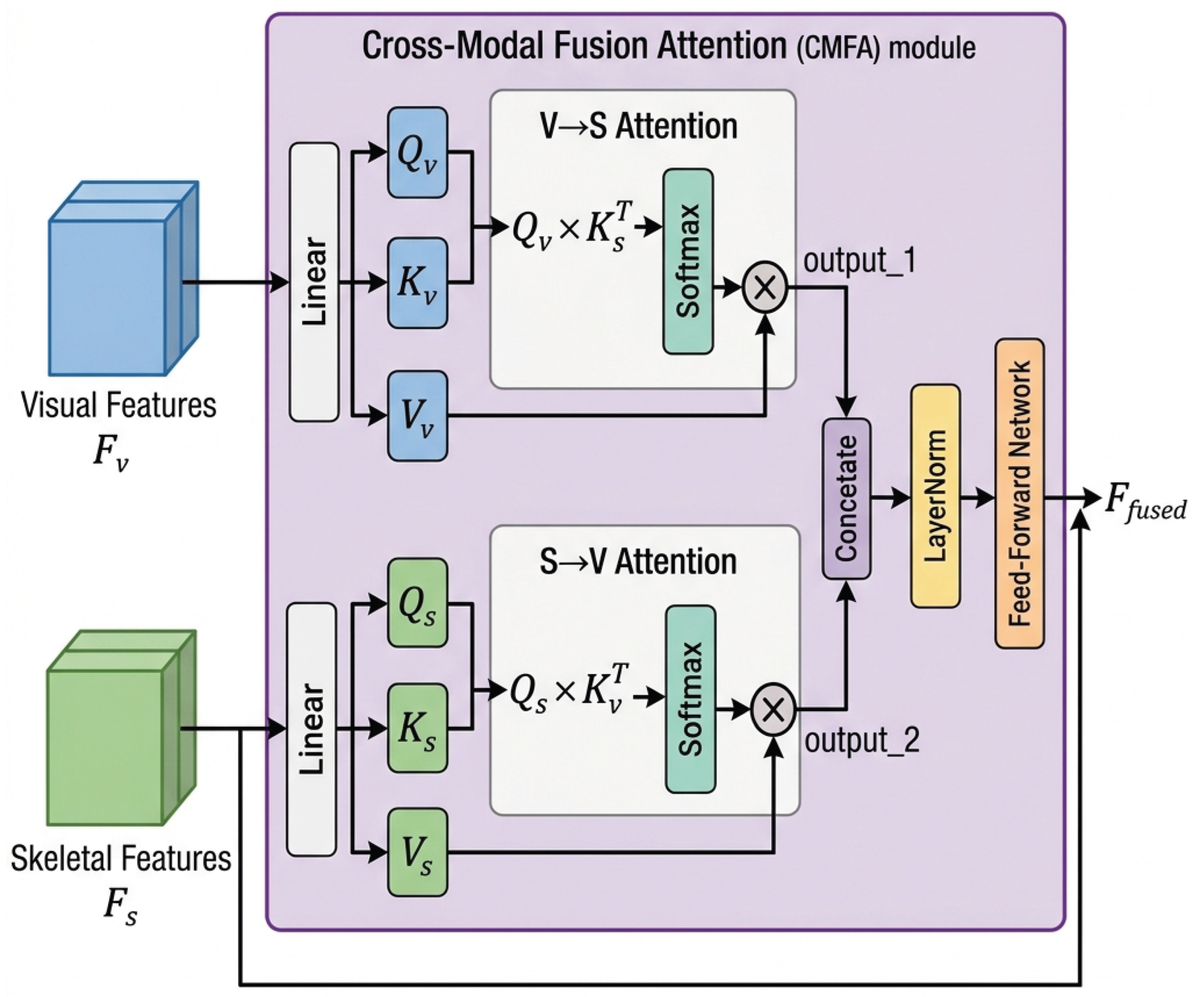

- Cross-Modal Fusion Attention (CMFA): We introduce a novel bidirectional cross-attention module that enables each modality to attend to the other, producing enriched multimodal representations that leverage the strengths of both visual and skeletal features through learned gated aggregation.

- Hierarchical Temporal Aggregation (HTA): We design a multi-scale temporal modeling module that captures sign language dynamics at frame, segment, and sequence levels, enabling the model to understand both fine-grained gestures and long-range contextual dependencies through adaptive temporal fusion.

- We propose SignFuse, a novel dual-stream architecture that, for the first time, integrates CNN-based visual features and GCN-based skeletal features through deep bidirectional cross-modal attention for LLM-based sign language translation.

- We introduce the Cross-Modal Fusion Attention (CMFA) module with gated aggregation and the Hierarchical Temporal Aggregation (HTA) mechanism, both of which are formally specified with complete mathematical formulations.

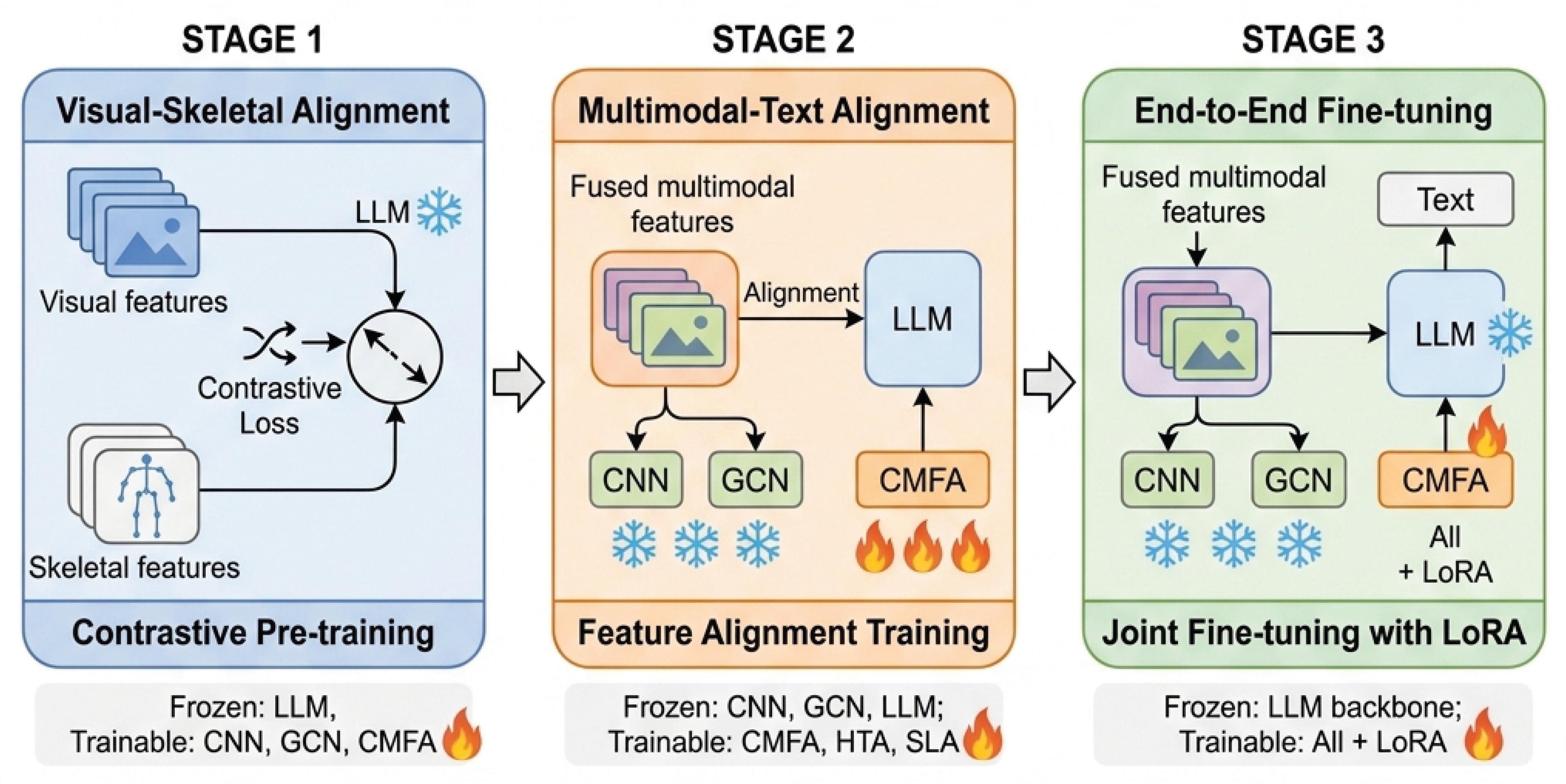

- We design a three-stage progressive training strategy that systematically bridges the gap between multimodal sign language representations and the linguistic space of LLMs.

- We provide a complete, runnable PyTorch implementation and formalize a comprehensive experimental protocol on three standard benchmarks, extending an open call for community-driven empirical validation.
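The bidirectional cross-attention with gated aggregation described for CMFA can be illustrated with a minimal, dependency-free sketch. It is not the authors' `model.py`: the learned query/key/value projections are omitted, both streams are assumed to be aligned to the same number of time steps, and the gate form (a sigmoid over the concatenated features, parameterised by the hypothetical `gate_w` and `gate_b`) is an assumption chosen only to make the idea concrete.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attend(queries, keys_values):
    """Scaled dot-product attention: each query row attends over the other modality."""
    d = len(queries[0])
    out = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys_values]
        weights = softmax(scores)
        out.append([sum(w * kv[j] for w, kv in zip(weights, keys_values)) for j in range(d)])
    return out

def gated_fuse(v_rows, s_rows, gate_w, gate_b):
    """Assumed gate: g = sigmoid(w . [v; s] + b); fused = g * v + (1 - g) * s."""
    fused = []
    for v, s in zip(v_rows, s_rows):
        z = sum(w * x for w, x in zip(gate_w, v + s)) + gate_b
        g = 1.0 / (1.0 + math.exp(-z))
        fused.append([g * a + (1.0 - g) * b for a, b in zip(v, s)])
    return fused

def cmfa_sketch(visual, skeletal, gate_w, gate_b):
    """Both inputs: T rows of d features each (same T and d assumed)."""
    v2s = cross_attend(visual, skeletal)   # visual queries, skeletal keys/values
    s2v = cross_attend(skeletal, visual)   # skeletal queries, visual keys/values
    # Residual connections keep each stream's own information.
    v_enriched = [[a + b for a, b in zip(v, c)] for v, c in zip(visual, v2s)]
    s_enriched = [[a + b for a, b in zip(s, c)] for s, c in zip(skeletal, s2v)]
    return gated_fuse(v_enriched, s_enriched, gate_w, gate_b)

if __name__ == "__main__":
    visual = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    skeletal = [[0.5, 0.5], [1.0, 0.0], [0.0, 2.0]]
    print(cmfa_sketch(visual, skeletal, gate_w=[0.0] * 4, gate_b=0.0))
```

With a zero gate the two enriched streams are averaged; a strongly positive `gate_b` makes the output follow the visual stream, which is the behaviour a learned gate could exploit per time step.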

2. Related Work

2.1. Sign Language Recognition

2.2. Gloss-Free Sign Language Translation

2.3. Large Language Models and Vision–Language Models for Sign Language

2.4. Multimodal Fusion Strategies

3. Proposed Methodology: SignFuse

3.1. Problem Formulation

3.2. Visual Feature Stream

3.3. Skeletal Feature Stream

3.4. Cross-Modal Fusion Attention (CMFA)

3.5. Hierarchical Temporal Aggregation (HTA)

- Frame-level (fine-grained): Individual hand configurations, fingerspelling, and instantaneous facial expressions occur at the frame level (approximately 33 ms per frame at 30 fps). These are critical for distinguishing between signs with similar global motions but different hand shapes.

- Segment-level (mid-grained): Individual sign units typically span 5–30 frames (roughly 170–1000 ms at 30 fps). Capturing segment-level dynamics enables recognition of transitional movements, sign boundaries, and phonological components.

- Sequence-level (coarse-grained): Sentence-level structures, discourse patterns, and long-range dependencies extend across hundreds of frames. Understanding these is essential for generating grammatically correct, contextually appropriate translations.
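The three granularities above can be made concrete with a small sketch that computes the frame duration at a given frame rate and mixes frame-, segment-, and sequence-level summaries of a one-dimensional feature track. The pooling operator (non-overlapping mean pooling), the `segment_window` value, and the softmax mixing weights `scale_logits` are illustrative assumptions, not the kernel sizes or fusion rule of the HTA module.

```python
import math

def frame_duration_ms(fps):
    """Duration of one frame in milliseconds."""
    return 1000.0 / fps

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def mean_pool(seq, window):
    """Non-overlapping mean pooling over time; the last chunk may be shorter."""
    chunks = [seq[i:i + window] for i in range(0, len(seq), window)]
    return [sum(c) / len(c) for c in chunks]

def hta_sketch(frames, segment_window, scale_logits):
    """Blend three temporal views of a scalar feature track, one value per frame."""
    T = len(frames)
    pooled = mean_pool(frames, segment_window)
    # Broadcast each segment summary back to the frames it covers.
    segment_level = [pooled[t // segment_window] for t in range(T)]
    # A single sequence-level summary shared by every frame.
    sequence_level = [sum(frames) / T] * T
    w_frame, w_seg, w_seq = softmax(scale_logits)
    return [w_frame * f + w_seg * s + w_seq * q
            for f, s, q in zip(frames, segment_level, sequence_level)]

if __name__ == "__main__":
    print(frame_duration_ms(30))        # about 33.3 ms per frame
    print(5 * frame_duration_ms(30))    # shortest sign unit, about 167 ms
    print(hta_sketch([0, 0, 0, 0, 4, 4, 4, 4], segment_window=4,
                     scale_logits=[0.0, 0.0, 0.0]))
```

In a trained model the mixing weights would be learned (and possibly input-dependent), which is one way to read "adaptive temporal fusion".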

3.6. Sign-Language Adapter and LLM Integration

3.7. Progressive Multi-Stage Training

4. Implementation Specifications

4.1. Model Configuration

4.2. Proposed Training Hyperparameters

4.3. Software Availability

- model.py: Full architecture implementation including all five proposed components (VisualStream, SkeletalStream, CMFA, HTA, SLA), loss functions (InfoNCE, Alignment, Combined), and a model builder utility. The implementation has been validated for correct tensor shapes through a comprehensive forward-pass test.

- train.py: Complete three-stage training pipeline with stage-specific parameter freezing, optimizer configuration, learning rate scheduling, checkpoint management, and synthetic data fallback for pipeline testing.

- evaluate.py: Evaluation utilities implementing BLEU-1/2/3/4, ROUGE-L, and METEOR metrics from scratch, along with visualization tools for generating comparison charts.
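Of the loss functions listed for `model.py`, InfoNCE is the easiest to illustrate in isolation. The sketch below is a plain-Python version of a symmetric InfoNCE loss over a batch of paired sign and text embeddings. Whether the released code uses the symmetric form, and which temperature it uses, is not stated here, so the symmetric averaging and the default `temperature=0.07` are assumptions.

```python
import math

def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)

def log_softmax(row):
    m = max(row)
    lse = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - lse for x in row]

def info_nce(sign_embs, text_embs, temperature=0.07):
    """Symmetric InfoNCE: pair i (sign_embs[i], text_embs[i]) is the positive."""
    s = [l2_normalize(v) for v in sign_embs]
    t = [l2_normalize(v) for v in text_embs]
    n = len(s)
    # Cosine similarities scaled by the temperature.
    logits = [[sum(a * b for a, b in zip(s[i], t[j])) / temperature
               for j in range(n)] for i in range(n)]
    # Sign-to-text direction: classify the matching text for each sign clip.
    loss_s2t = -sum(log_softmax(logits[i])[i] for i in range(n)) / n
    # Text-to-sign direction: same computation on the transposed matrix.
    columns = [[logits[i][j] for i in range(n)] for j in range(n)]
    loss_t2s = -sum(log_softmax(columns[j])[j] for j in range(n)) / n
    return 0.5 * (loss_s2t + loss_t2s)

if __name__ == "__main__":
    aligned = info_nce([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
    swapped = info_nce([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
    print(aligned, swapped)  # the aligned batch gives the smaller loss
```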

5. Proposed Experimental Protocol

5.1. Target Datasets

5.2. Evaluation Metrics

- BLEU-n (n = 1–4) [42]: Measures n-gram precision between hypothesis and reference translations with a brevity penalty. BLEU-4 is the primary metric for comparison.

- ROUGE-L [43]: Measures the longest common subsequence between hypothesis and reference, capturing sentence-level fluency.

- METEOR [44]: Combines precision, recall, and alignment with synonym matching for more comprehensive evaluation.
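As a reference point for the first two metrics, the following sketch implements corpus-level BLEU with a brevity penalty and sentence-level ROUGE-L on pre-tokenised input. It is an independent illustration, not the code in `evaluate.py`: it assumes a single reference per hypothesis, applies no smoothing, and uses `beta=1.2` for the ROUGE-L F-measure, which is an assumed value. METEOR is omitted because it requires synonym resources.

```python
import math
from collections import Counter

def ngrams(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def corpus_bleu(hypotheses, references, max_n=4):
    """BLEU-max_n on a 0-100 scale; one reference per hypothesis, no smoothing."""
    matched, total = [0] * max_n, [0] * max_n
    hyp_len = ref_len = 0
    for hyp, ref in zip(hypotheses, references):
        hyp_len += len(hyp)
        ref_len += len(ref)
        for n in range(1, max_n + 1):
            h, r = ngrams(hyp, n), ngrams(ref, n)
            # Clipped counts: an n-gram is credited at most as often as in the reference.
            matched[n - 1] += sum(min(c, r[g]) for g, c in h.items())
            total[n - 1] += sum(h.values())
    if hyp_len == 0 or min(matched) == 0:
        return 0.0
    log_precision = sum(math.log(m / t) for m, t in zip(matched, total)) / max_n
    brevity = 1.0 if hyp_len >= ref_len else math.exp(1.0 - ref_len / hyp_len)
    return 100.0 * brevity * math.exp(log_precision)

def lcs_length(a, b):
    """Length of the longest common subsequence (row-by-row dynamic programme)."""
    prev = [0] * (len(b) + 1)
    for x in a:
        cur = [0]
        for j, y in enumerate(b, 1):
            cur.append(prev[j - 1] + 1 if x == y else max(prev[j], cur[j - 1]))
        prev = cur
    return prev[-1]

def rouge_l(hyp, ref, beta=1.2):
    """ROUGE-L F-measure on a 0-100 scale."""
    lcs = lcs_length(hyp, ref)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(hyp), lcs / len(ref)
    return 100.0 * (1 + beta ** 2) * precision * recall / (recall + beta ** 2 * precision)

if __name__ == "__main__":
    hyp = "tomorrow it will rain in the north".split()
    ref = "tomorrow it will rain in the north and east".split()
    print(corpus_bleu([hyp], [ref]), rouge_l(hyp, ref))
```

For reported numbers, an established implementation with fixed tokenisation is generally preferable, since small tokenisation differences change BLEU noticeably.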

5.3. Baseline Methods for Comparison

| Method | BLEU-4 | ROUGE-L |
|---|---|---|
| GFSLT-VLP [6] (ICCV 2023) | 21.44 | 43.78 |
| Sign2GPT [11] (2024) | 22.52 | 44.83 |
| SignLLM [10] (CVPR 2024) | 23.51 | 46.35 |
| SpaMo [12] (NAACL 2025) | 24.32 | 47.56 |
| MMSLT [13] (ICCV 2025) | 25.18 | 48.34 |

5.4. Proposed Ablation Studies

- Component ablation: Progressively add components (Visual-only → +Skeletal → +CMFA → +HTA → +Progressive Training) to measure incremental gains.

- Fusion strategy comparison: Compare CMFA against concatenation, element-wise addition, unidirectional cross-attention (V→S and S→V), and bidirectional without gating.

- Temporal scale analysis: Evaluate different combinations of HTA temporal scales to identify the most impactful granularities.

- Training stage analysis: Measure the benefit of each progressive training stage compared to direct end-to-end training.

- LLM backbone sensitivity: Test with different LLM backbones (Flan-T5, Vicuna, LLaMA-2 7B/13B) to assess the framework’s generalizability.
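The ablation plan above amounts to a small grid of run configurations. The sketch below enumerates that grid so the number of runs can be checked before launching anything. All identifiers (`visual`, `cmfa`, `bidirectional_no_gate`, and so on) are hypothetical labels chosen for this example, not names from the released codebase.

```python
from itertools import combinations

# Hypothetical labels mirroring the ablation list; not identifiers from train.py.
COMPONENT_ORDER = ["visual", "skeletal", "cmfa", "hta", "progressive_training"]
FUSION_VARIANTS = ["concat", "add", "cross_attn_v2s", "cross_attn_s2v",
                   "bidirectional_no_gate", "cmfa"]
TEMPORAL_SCALES = ["frame", "segment", "sequence"]
LLM_BACKBONES = ["flan-t5", "vicuna", "llama-2-7b", "llama-2-13b"]

def component_ablation():
    """Cumulative configurations: visual only, then one more component each step."""
    return [COMPONENT_ORDER[:k] for k in range(1, len(COMPONENT_ORDER) + 1)]

def temporal_scale_subsets():
    """Every non-empty combination of the three HTA granularities."""
    return [list(c) for r in range(1, len(TEMPORAL_SCALES) + 1)
            for c in combinations(TEMPORAL_SCALES, r)]

def ablation_plan():
    return {
        "component": component_ablation(),
        "fusion": FUSION_VARIANTS,
        "temporal_scales": temporal_scale_subsets(),
        "llm_backbone": LLM_BACKBONES,
    }

if __name__ == "__main__":
    for study, runs in ablation_plan().items():
        print(study, len(runs))
```

The training-stage analysis is left out of the grid because it compares training schedules rather than model variants.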

5.5. Expected Theoretical Advantages

6. Discussion

6.1. Theoretical Analysis of CMFA

6.2. Computational Cost Analysis

6.3. Limitations and Future Directions

- Pending empirical validation. The most significant limitation is the absence of empirical results. While the architecture is fully specified and the codebase is complete, the claims remain theoretical until validated on benchmark datasets with real training.

- Pose estimation reliability. Our reliance on MediaPipe for skeleton extraction means that the skeletal stream’s effectiveness is bounded by MediaPipe’s accuracy, which can degrade under poor lighting, occlusion, or non-frontal camera angles.

- Fixed temporal scales. The HTA module uses a fixed set of temporal kernel sizes, which may not be optimal for all signing speeds and styles. Adaptive kernel selection could improve robustness.

- Single sign language. While we propose evaluation on multiple datasets, each represents a different sign language. Cross-lingual transfer between sign languages remains an open challenge.

7. Open Call to the Research Community

- Execute the proposed training pipeline on PHOENIX-14T, CSL-Daily, and/or How2Sign using the provided codebase and hyperparameters.

- Report empirical results following the evaluation protocol defined in Section 5, including all recommended ablation studies.

- Extend or modify the architecture based on empirical findings, e.g., exploring alternative backbone networks, different fusion strategies, or additional modalities.

- Collaborate on joint publications reporting the empirical validation results.

8. Conclusion

Data Availability Statement

References

1. World Health Organization. Deafness and Hearing Loss. 2023. Available online: https://www.who.int/health-topics/hearing-loss (accessed on 15 January 2025).

2. Camgöz, N.C.; Hadfield, S.; Koller, O.; Ney, H.; Bowden, R. Neural Sign Language Translation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2018; pp. 7784–7793.

3. Singh, G.; Banerjee, T.; Ghosh, N. Tracing the Evolution of Artificial Intelligence: A Review of Tools, Frameworks, and Technologies (1950–2025). Preprints 2025.

4. Camgöz, N.C.; Koller, O.; Hadfield, S.; Bowden, R. Sign Language Transformers: Joint End-to-end Sign Language Recognition and Translation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020; pp. 10023–10033.

5. Camgöz, N.C.; Koller, O.; Hadfield, S.; Bowden, R. Multi-channel Transformers for Multi-articulatory Sign Language Translation. arXiv 2020, arXiv:2009.00299.

6. Zhou, B.; Chen, Z.; Clapés, A.; Shao, J.; Wang, J.; Xiao, J.; Zha, Z.J. Gloss-Free Sign Language Translation: Improving From Visual-Language Pretraining. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023; pp. 2816–2825.

7. Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.A.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; et al. LLaMA: Open and Efficient Foundation Language Models. arXiv 2023, arXiv:2302.13971.

8. OpenAI. GPT-4 Technical Report. arXiv 2023, arXiv:2303.08774.

9. Singh, G.; Qamar, L.; Volta, N.V.; Velamuri, A.; Khanyile, A. Vision–Language Foundation Models and Multimodal Large Language Models: A Comprehensive Survey of Architectures, Benchmarks, and Open Challenges. Preprints 2026.

10. Gong, J.; Foo, L.G.; He, Y.; Rahmani, H.; Liu, J. LLMs are Good Sign Language Translators. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024; pp. 14754–14764.

11. Wong, R.; Camgöz, N.C.; Bowden, R. Sign2GPT: Leveraging Large Language Models for Gloss-Free Sign Language Translation. arXiv 2024, arXiv:2405.04164.

12. Hwang, E.J.; Cho, S.; Lee, J.; Park, J.C. An Efficient Gloss-Free Sign Language Translation Using Spatial Configurations and Motion Dynamics with LLMs. In Proceedings of the 2025 Conference of the North American Chapter of the Association for Computational Linguistics (NAACL), 2025.

13. Kim, J.; Jeon, H.; Bae, J.; Kim, H.Y. Leveraging the Power of MLLMs for Gloss-Free Sign Language Translation. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2025; pp. 21048–21058.

14. Liu, Y.; Zhang, W.; Ren, S.; Huang, C.; Yu, J.; Xu, L. SCOPE: Sign Language Contextual Processing with Embedding from LLMs. In Proceedings of the AAAI Conference on Artificial Intelligence (AAAI), 2025; Vol. 39.

15. Jiang, Z.; Sant, G.; Moryossef, A.; Müller, M.; Sennrich, R.; Ebling, S. SignCLIP: Connecting Text and Sign Language by Contrastive Learning. In Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP), 2024.

16. Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; Wang, L.; Chen, W. LoRA: Low-Rank Adaptation of Large Language Models. In Proceedings of the International Conference on Learning Representations (ICLR), 2022.

17. Koller, O.; Forster, J.; Ney, H. Continuous Sign Language Recognition: Towards Large Vocabulary Statistical Recognition Systems Handling Multiple Signers. Computer Vision and Image Understanding 2015, 141, 108–125.

18. Cui, R.; Liu, H.; Zhang, C. Recurrent Convolutional Neural Networks for Continuous Sign Language Recognition by Staged Optimization. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2017; pp. 7361–7369.

19. Huang, J.; Zhou, W.; Zhang, Q.; Li, H.; Li, W. Video-based Sign Language Recognition without Temporal Segmentation. In Proceedings of the AAAI Conference on Artificial Intelligence, 2018.

20. Li, D.; Rodriguez, C.; Yu, X.; Li, H. Word-level Deep Sign Language Recognition from Video: A New Large-scale Dataset and Methods Comparison. In Proceedings of the IEEE Winter Conference on Applications of Computer Vision (WACV), 2020; pp. 1459–1469.

21. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2017.

22. Chen, Y.; Wei, F.; Sun, X.; Wu, Z.; Lin, S. Two-stream Network for Sign Language Recognition and Translation. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2022.

23. Park, M.; Kim, S.; Lee, J. ConSignformer: A Transformer-based Architecture for Continuous Sign Language Recognition. arXiv 2024, arXiv:2405.12018.

24. Chen, Y.; Li, Z.; Wang, M. Skeleton-Based Sign Language Recognition via Graph Convolutional Networks. arXiv 2024, arXiv:2407.11960.

25. Yan, S.; Xiong, Y.; Lin, D. Spatial Temporal Graph Convolutional Networks for Skeleton-Based Action Recognition. In Proceedings of the AAAI Conference on Artificial Intelligence, 2018; Vol. 32.

26. Lugaresi, C.; Tang, J.; Nash, H.; McClanahan, C.; Uboweja, E.; Hays, M.; Zhang, F.; Chang, C.L.; Yong, M.G.; Lee, J.; et al. MediaPipe: A Framework for Building Perception Pipelines. arXiv 2019, arXiv:1906.08172.

27. Kumar, A.; Singh, R.; Patel, N. Continuous Sign Language Recognition System using Deep Learning with MediaPipe Holistic. arXiv 2024, arXiv:2411.04517.

28. Ahmed, Z.; Khan, M.; Ali, T. Enhancing Sign Language Detection through MediaPipe and Convolutional Neural Networks. arXiv 2024, arXiv:2406.03729.

29. Ferreira, P.M.; Cardoso, J.S.; Rebelo, A. Sign Language Recognition Based on Deep Learning and Low-cost Handcrafted Descriptors. arXiv 2024, arXiv:2408.07244.

30. Alyami, S.; Luqman, H. A Comparative Study of Continuous Sign Language Recognition Techniques. arXiv 2024, arXiv:2406.12369.

31. Rastgoo, R.; Kiani, K.; Escalera, S. Recent Advances on Deep Learning for Sign Language Recognition. Computer Modeling in Engineering & Sciences 2024.

32. Yin, A.; Zhong, T.; Tang, L.; Jin, W.; Jin, T.; Zhao, Z. Gloss Attention for Gloss-free Sign Language Translation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023; pp. 2551–2562.

33. Inan, M.; Sicilia, A.; Alikhani, M. SignAlignLM: Integrating Multimodal Sign Language Processing into Large Language Models. In Findings of the Association for Computational Linguistics (ACL), 2025.

34. Zheng, W.; Li, H.; Chen, W.; Wang, Y. CLIP-SLA: Parameter-Efficient CLIP Adaptation for Continuous Sign Language Recognition. arXiv 2024, arXiv:2407.12174.

35. Singh, G. A Review of Multimodal Vision–Language Models: Foundations, Applications, and Future Directions. Preprints 2025.

36. Lu, J.; Batra, D.; Parikh, D.; Lee, S. ViLBERT: Pretraining Task-Agnostic Visiolinguistic Representations for Vision-and-Language Tasks. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2019.

37. Li, J.; Selvaraju, R.R.; Gotmare, A.D.; Joty, S.; Xiong, C.; Hoi, S. Align Before Fuse: Vision and Language Representation Learning with Momentum Distillation. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2021.

38. Alayrac, J.B.; Donahue, J.; Luc, P.; Miech, A.; Barr, I.; Hasson, Y.; Lenc, K.; Mensch, A.; Millican, K.; Reynolds, M.; et al. Flamingo: a Visual Language Model for Few-Shot Learning. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2022.

39. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2016; pp. 770–778.

40. Zhou, H.; Zhou, W.; Qi, W.; Pu, J.; Li, H. Improving Sign Language Translation with Monolingual Data by Sign Back-Translation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2021; pp. 1316–1325.

41. Duarte, A.; Palaskar, S.; Ventura, L.; Ghadiyaram, D.; DeHaan, K.; Metze, F.; Torres, J.; Giro-i-Nieto, X. How2Sign: A Large-scale Multimodal Dataset for Continuous American Sign Language. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2021; pp. 2735–2744.

42. Papineni, K.; Roukos, S.; Ward, T.; Zhu, W.J. BLEU: a Method for Automatic Evaluation of Machine Translation. In Proceedings of the 40th Annual Meeting of the Association for Computational Linguistics (ACL), 2002; pp. 311–318.

43. Lin, C.Y. ROUGE: A Package for Automatic Evaluation of Summaries. In Text Summarization Branches Out: Proceedings of the ACL-04 Workshop, 2004.

44. Banerjee, S.; Lavie, A. METEOR: An Automatic Metric for MT Evaluation with Improved Correlation with Human Judgments. In Proceedings of the ACL Workshop on Intrinsic and Extrinsic Evaluation Measures for Machine Translation and/or Summarization, 2005.

| Component | Specification |
|---|---|
| Visual Stream | |
| Backbone | ResNet-50 (ImageNet pre-trained) |
| Output dimension | |
| Temporal conv | , stride , output |
| Skeletal Stream | |
| Pose estimator | MediaPipe Holistic |
| Number of joints | (hands + body + face) |
| ST-GCN layers | 4 layers, channels: |
| Temporal kernel | , stride 2 at layer 3 |
| CMFA Module | |
| Feature dimension | |
| Number of layers | |
| Attention heads | |
| FFN inner dim | |
| HTA Module | |
| Temporal kernels | |
| Target length | |
| SLA + LLM | |
| Sign tokens | |
| LLM backbone | LLaMA-2 7B (frozen) |
| LLM dimension | |
| LoRA rank / alpha | , |
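The last row of the configuration table refers to LoRA [16], which keeps a weight matrix W frozen and learns a low-rank update, so the adapted layer computes W x + (alpha / r) · B (A x). The sketch below shows that computation on tiny matrices and counts the trainable parameters for one projection. The rank and alpha used in the example are placeholders, since the values in the table did not survive extraction; the 4096 dimension is the hidden size of LLaMA-2 7B.

```python
def matvec(m, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, x)) for row in m]

def lora_forward(W, A, B, x, alpha):
    """y = W x + (alpha / r) * B (A x), with W frozen and A, B trainable.

    W: d_out x d_in, A: r x d_in, B: d_out x r.
    """
    r = len(A)
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]

def lora_trainable_params(d_out, d_in, r):
    """Parameters in A and B for one adapted weight matrix."""
    return r * (d_in + d_out)

if __name__ == "__main__":
    W = [[1.0, 0.0], [0.0, 1.0]]
    A = [[1.0, 1.0]]            # rank r = 1
    B_init = [[0.0], [0.0]]     # B starts at zero, so the adapted layer equals the frozen one
    print(lora_forward(W, A, B_init, [1.0, 2.0], alpha=2.0))
    # Placeholder rank of 16 on a 4096 x 4096 projection:
    print(lora_trainable_params(4096, 4096, 16), "trainable vs", 4096 * 4096, "frozen")
```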

| Parameter | Stage 1 | Stage 2 | Stage 3 |
|---|---|---|---|
| Epochs | 30 | 20 | 15 |
| Learning rate | | | |
| Batch size | 32 | 32 | 32 |
| Warmup epochs | 3 | 2 | 1 |
| Gradient clipping | 1.0 | 1.0 | 1.0 |
| Hardware | 4× NVIDIA A100 80GB | | |
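The per-stage epoch and warmup counts in the table above can be turned into a learning-rate schedule as sketched below. Only the epoch and warmup numbers come from the table. The linear warmup shape, the cosine decay after warmup, and the `base_lr` argument are assumptions, since the learning-rate values and the scheduler type are not recoverable from the table.

```python
import math

# Epochs and warmup epochs per stage, taken from the hyperparameter table.
STAGES = {
    1: {"epochs": 30, "warmup": 3},
    2: {"epochs": 20, "warmup": 2},
    3: {"epochs": 15, "warmup": 1},
}

def lr_at_epoch(epoch, stage, base_lr):
    """Learning rate for a 0-indexed epoch within one training stage.

    Assumed shape: linear warmup to base_lr, then cosine decay towards zero.
    """
    cfg = STAGES[stage]
    if epoch < cfg["warmup"]:
        return base_lr * (epoch + 1) / cfg["warmup"]
    decay_epochs = max(1, cfg["epochs"] - cfg["warmup"])
    progress = (epoch - cfg["warmup"]) / decay_epochs
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * progress))

if __name__ == "__main__":
    base_lr = 1e-4  # placeholder value, not from the paper
    for stage, cfg in STAGES.items():
        schedule = [lr_at_epoch(e, stage, base_lr) for e in range(cfg["epochs"])]
        print(stage, ["%.2e" % lr for lr in schedule[:5]], "...")
```

Restarting the schedule at the beginning of each stage, as done here, is also an assumption; a single schedule spanning all 65 epochs would be an equally valid reading.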

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).