Submitted: 09 April 2026

Posted: 16 April 2026


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Deep Learning for Symbolic Music Generation

2.2. Diffusion Models for Sequence and Music Generation

2.3. Style Conditioning and Feature Disentanglement

2.4. Structural Fidelity and Differentiable Regularization

2.5. Benchmarking and Reproducibility in Creative AI

3. Motivation: Entangled Conditioning and Melody Drift

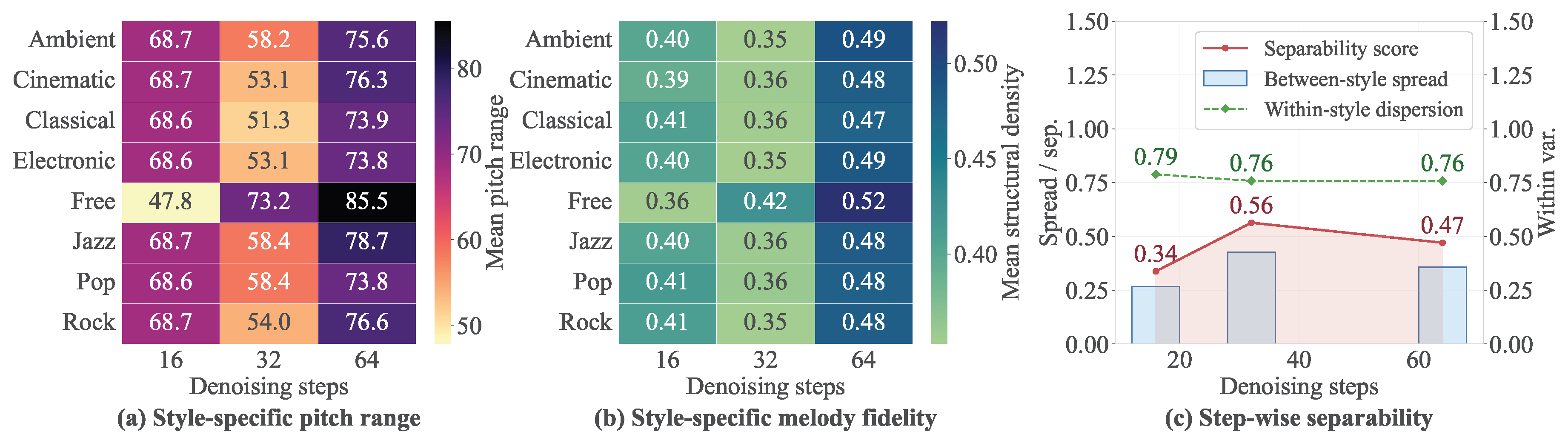

3.1. Weak Style Separability Under Unified Conditioning

3.2. Melody Drift in Iterative Hierarchical Denoising

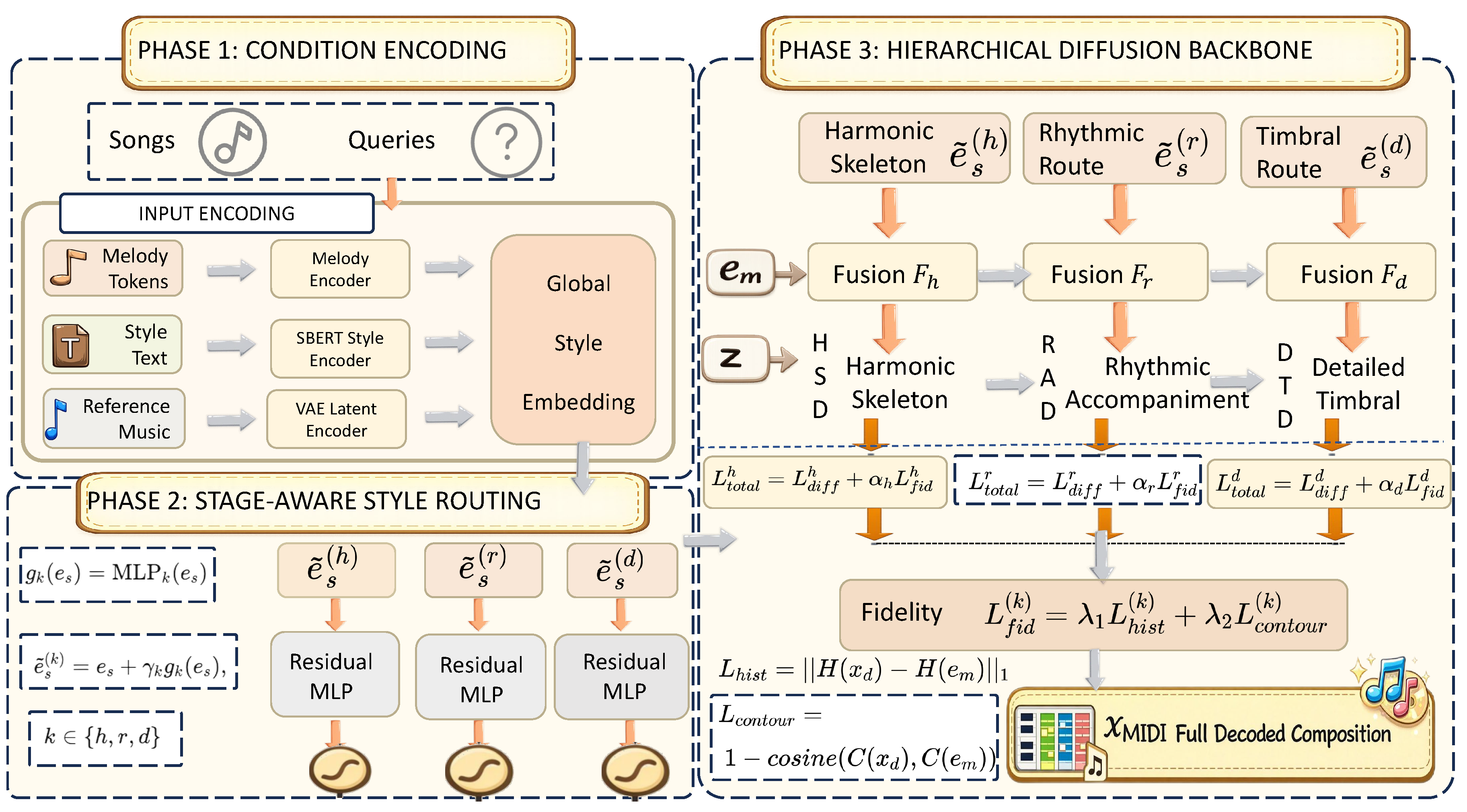

4. Proposed Framework

4.1. Formulation of Hierarchical Symbolic Diffusion

4.2. Stage-Aware Style Routing via Residual Multi-Layer Perceptron

4.3. Differentiable Melody Regularization

4.4. Overall Training Objective

5. Experimental Setup

5.1. Dataset and Inference Configurations

5.2. Objective Evaluation Metrics

5.3. Implementation Details

6. Results

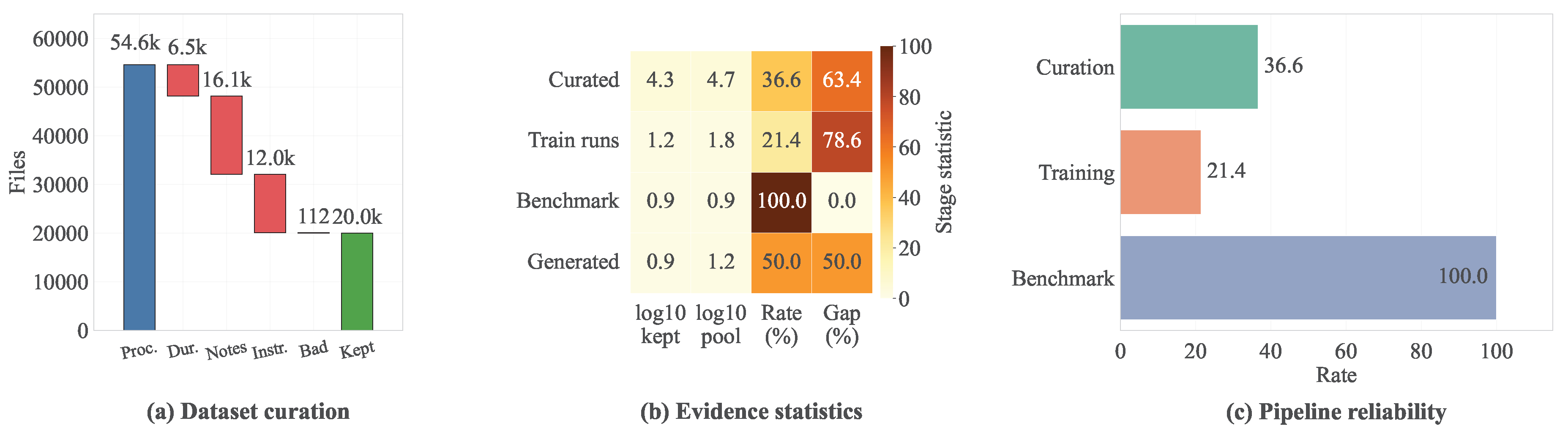

6.1. Benchmark Scope and Corpus Quality

6.2. Legacy-Compatible Baseline Reference

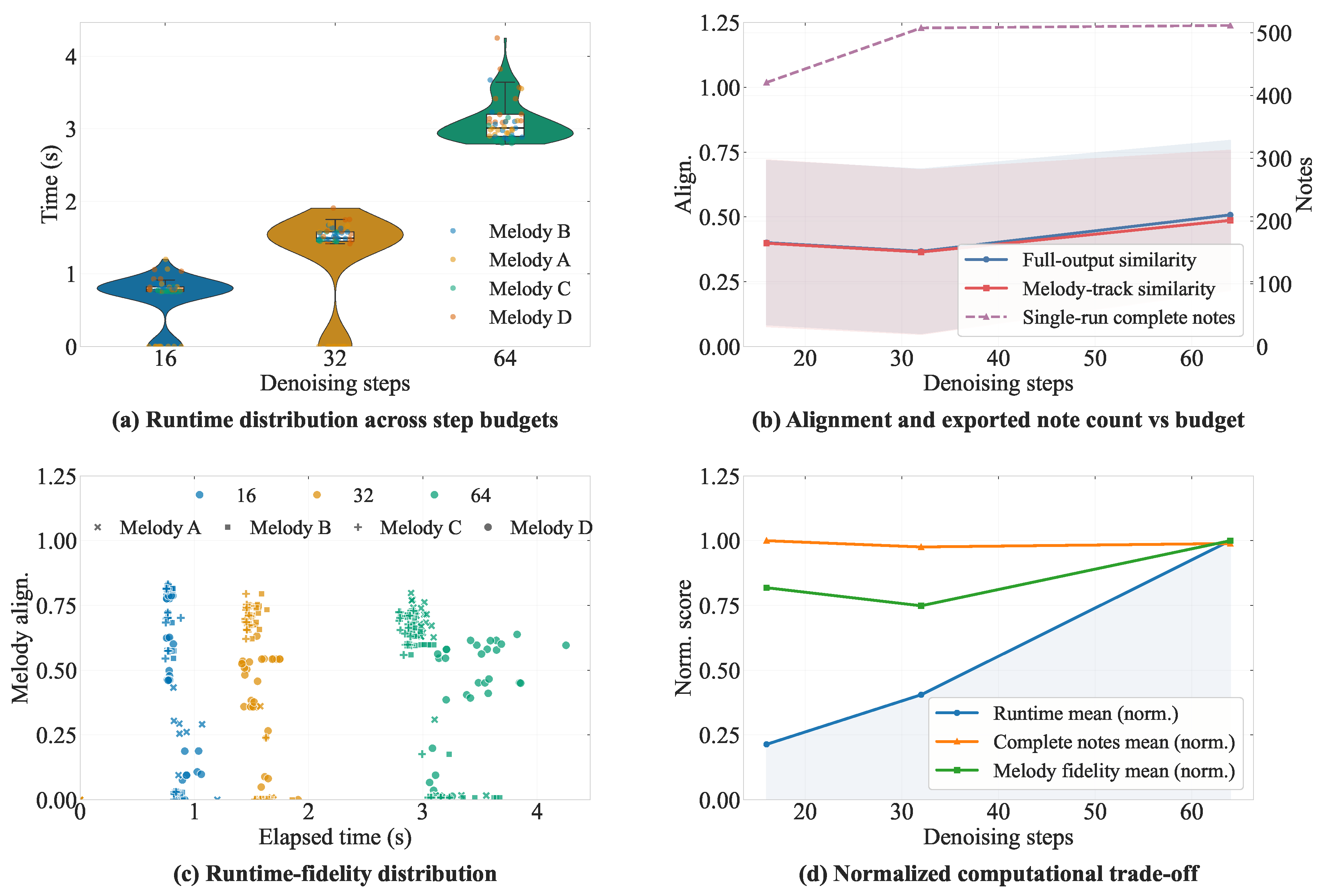

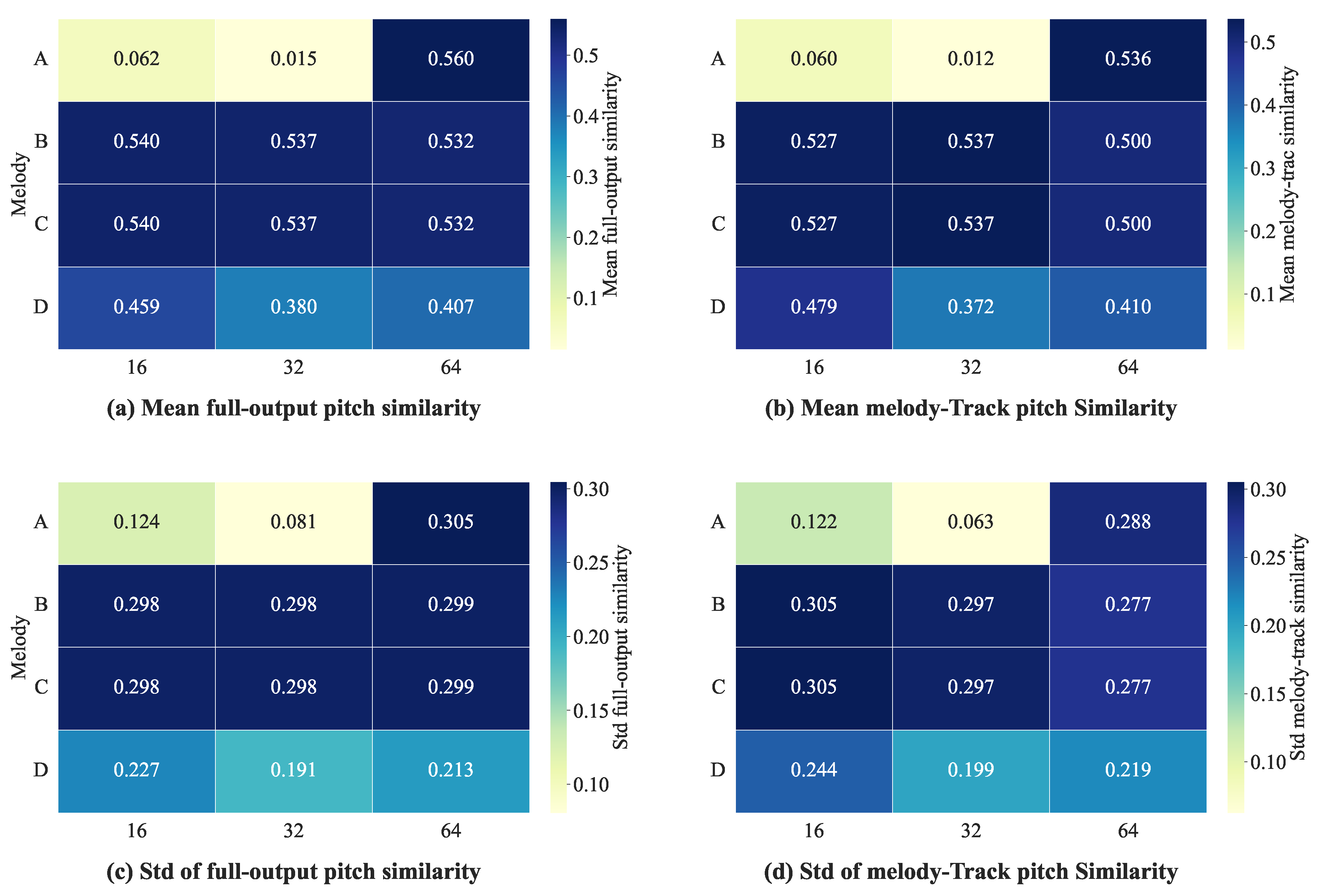

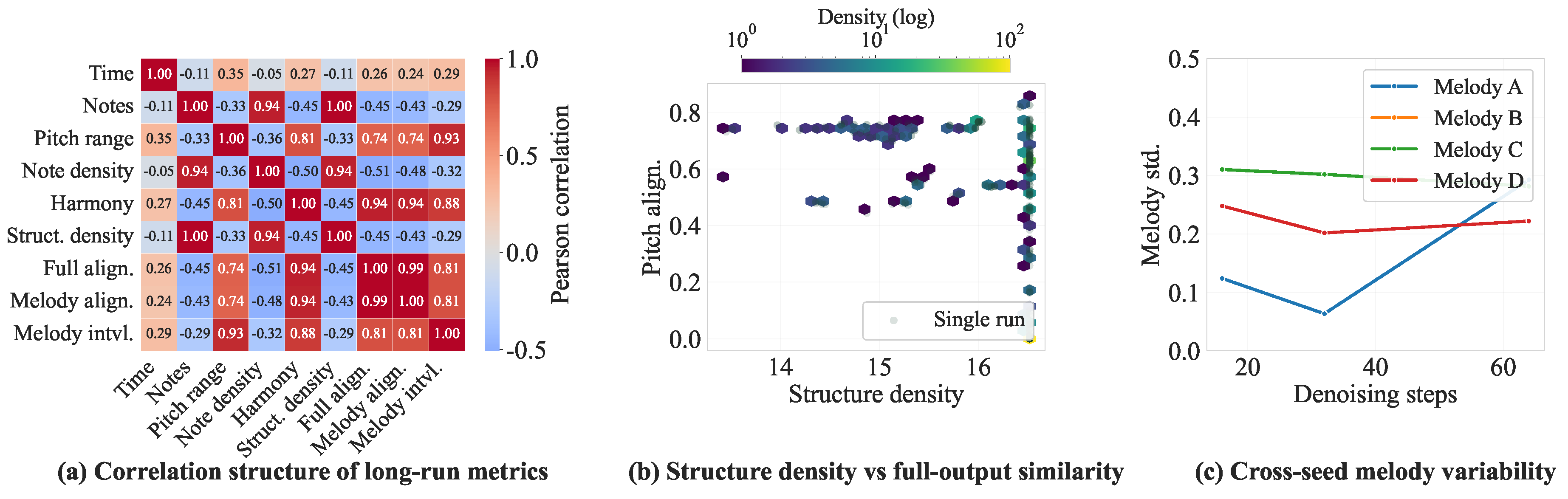

6.3. Long-Run Multi-Melody Evaluation

6.4. Metric Coupling and Efficiency Tradeoffs

7. Discussion

8. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Min, L.; Jiang, J.; Xia, G.; Zhao, J. Polyffusion: A Diffusion Model for Polyphonic Score Generation with Internal and External Controls. In Proceedings of the 24th International Society for Music Information Retrieval Conference (ISMIR), Milan, Italy, 5–9 November 2023.

- Lv, A.; Tan, X.; Lu, P.; Ye, W.; Zhang, S.; Bian, J.; Yan, R. GETMusic: Generating Any Music Tracks with a Unified Representation and Diffusion Framework. arXiv 2023, arXiv:2305.10841.

- Lu, P.; Xu, X.; Kang, C.; Yu, B.; Xing, C.; Tan, X.; Bian, J. MuseCoco: Generating Symbolic Music from Text. arXiv 2023, arXiv:2306.00110.

- Ho, J.; Jain, A.; Abbeel, P. Denoising Diffusion Probabilistic Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Virtual, 6–12 December 2020; pp. 6840–6851.

- Mittal, G.; Engel, J.; Hawthorne, C.; Simon, I. Symbolic Music Generation with Diffusion Models. arXiv 2021, arXiv:2103.16091.

- Wang, Z.; Min, L.; Xia, G. Whole-Song Hierarchical Generation of Symbolic Music Using Cascaded Diffusion Models. arXiv 2024, arXiv:2405.09901.

- Yuan, R.; Lin, H.; Wang, Y.; Tian, Z.; Wu, S.; Shen, T.; et al. ChatMusician: Understanding and Generating Music Intrinsically with LLM. arXiv 2024, arXiv:2402.16153.

- Cífka, O.; Şimşekli, U.; Richard, G. Groove2Groove: One-Shot Music Style Transfer With Supervision From Synthetic Data. IEEE/ACM Trans. Audio Speech Lang. Process. 2020, 28, 2638–2650.

- Dhariwal, P.; Jun, H.; Payne, C.; Kim, J.W.; Radford, A.; Sutskever, I. Jukebox: A Generative Model for Music. arXiv 2020, arXiv:2005.00341.

- Huang, Q.; Park, D.S.; Wang, T.; Denk, T.I.; Ly, A.; Chen, N.; Zhang, Z.; Zhang, Z.; Yu, J.; Frank, C.; Engel, J.; Le, Q.V.; Chan, W.; Chen, Z.; Han, W. Noise2Music: Text-conditioned Music Generation with Diffusion Models. arXiv 2023, arXiv:2302.03917.

- Huang, Y.; Ghatare, A.; Liu, Y.; Hu, Z.; Zhang, Q.; Shama Sastry, C.; Gururani, S.; Oore, S.; Yue, Y. Symbolic Music Generation with Non-Differentiable Rule Guided Diffusion. In Proceedings of the International Conference on Machine Learning (ICML), Vienna, Austria, 21–27 July 2024; pp. 19772–19797.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Long Beach, CA, USA, 4–9 December 2017; pp. 5998–6008.

- Huang, C.-Z.A.; Vaswani, A.; Uszkoreit, J.; Simon, I.; Hawthorne, C.; Dai, A.; Hoffman, M.D.; Dinculescu, M.; Eck, D. Music Transformer: Generating Music with Long-Term Structure. In Proceedings of the International Conference on Learning Representations (ICLR), New Orleans, LA, USA, 6–9 May 2019.

- Huang, Y.-S.; Yang, Y.-H. Pop Music Transformer: Beat-Based Modeling and Generation of Expressive Pop Piano Compositions. In Proceedings of the 28th ACM International Conference on Multimedia (ACM MM), Seattle, WA, USA, 12–16 October 2020; pp. 1180–1188.

- Hsiao, W.-Y.; Liu, J.-Y.; Yeh, Y.-C.; Yang, Y.-H. Compound Word Transformer: Learning to Compose Full-Song Music over Dynamic Directed Hypergraphs. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 2–9 February 2021; pp. 178–186.

- Roberts, A.; Engel, J.; Raffel, C.; Hawthorne, C.; Eck, D. A Hierarchical Latent Vector Model for Learning Long-Term Structure in Music. In Proceedings of the International Conference on Machine Learning (ICML), Stockholm, Sweden, 10–15 July 2018; pp. 4364–4373.

- Liu, J.; Dong, Y.; Cheng, Z.; Zhang, X.; Li, X.; Yu, F.; Sun, M. Symphony Generation with Permutation Invariant Language Model. In Proceedings of the 23rd International Society for Music Information Retrieval Conference (ISMIR), Bengaluru, India, 4–8 December 2022.

- Rombach, R.; Blattmann, A.; Lorenz, D.; Esser, P.; Ommer, B. High-Resolution Image Synthesis with Latent Diffusion Models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 10684–10695.

- Agostinelli, A.; Denk, T.I.; Borsos, Z.; Engel, J.; Verzetti, M.; Caillon, A.; Huang, Q.; Jansen, A.; Roberts, A.; Tagliasacchi, M.; Sharifi, M.; Zeghidour, N.; Frank, C. MusicLM: Generating Music From Text. arXiv 2023, arXiv:2301.11325.

- Li, X.; Thickstun, J.; Gulrajani, I.; Liang, P.S.; Hashimoto, T.B. Diffusion-LM Improves Controllable Text Generation. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), New Orleans, LA, USA, 28 November–9 December 2022; pp. 4328–4343.

- Reimers, N.; Gurevych, I. Sentence-BERT: Sentence Embeddings Using Siamese BERT-Networks. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing (EMNLP), Hong Kong, China, 3–7 November 2019; pp. 3982–3992.

- von Rütte, D.; Biggio, L.; Kilcher, Y.; Hofmann, T. FIGARO: Generating Symbolic Music with Fine-Grained Artistic Control. arXiv 2022, arXiv:2201.10936.

- Dong, H.-W.; Hsiao, W.-Y.; Yang, L.-C.; Yang, Y.-H. MuseGAN: Multi-Track Sequential Generative Adversarial Networks for Symbolic Music Generation and Accompaniment. In Proceedings of the AAAI Conference on Artificial Intelligence, New Orleans, LA, USA, 2–7 February 2018; pp. 34–41.

- Yang, R.; Wang, D.; Wang, Z.; Chen, T.; Jiang, J.; Xia, G. Deep Music Analogy Via Latent Representation Disentanglement. In Proceedings of the 20th International Society for Music Information Retrieval Conference (ISMIR), Delft, The Netherlands, 4–8 November 2019; pp. 596–603.

- Karras, T.; Laine, S.; Aila, T. A Style-Based Generator Architecture for Generative Adversarial Networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 4401–4410.

- Perez, E.; Strub, F.; De Vries, H.; Dumoulin, V.; Courville, A. FiLM: Visual Reasoning with a General Conditioning Layer. In Proceedings of the AAAI Conference on Artificial Intelligence, New Orleans, LA, USA, 2–7 February 2018; pp. 3942–3951.

- Ren, Y.; He, J.; Tan, X.; Qin, T.; Zhao, Z.; Liu, T.-Y. PopMAG: Pop Music Accompaniment Generation. arXiv 2020, arXiv:2008.07703.

- Jang, E.; Gu, S.; Poole, D. Categorical Reparameterization with Gumbel-Softmax. In Proceedings of the International Conference on Learning Representations (ICLR), Toulon, France, 24–26 April 2017.

- Ens, J.; Pasquier, P. Building the MetaMIDI Dataset: Linking Symbolic and Audio Musical Data. In Proceedings of the 22nd International Society for Music Information Retrieval Conference (ISMIR), Online, 7–12 November 2021; pp. 182–188.

| Statistic | Count | Share of processed files (%) |
|---|---|---|
| Processed MIDI files | 54,609 | 100.00 |
| Retained after filtering | 20,000 | 36.62 |
| Filtered out | 34,609 | 63.38 |
| *Primary rejection causes* | | |
| Note-count mismatches | 16,078 | 29.44 |
| Instrument track mismatches | 11,952 | 21.89 |
| Duration anomalies | 6,467 | 11.84 |
| Data corruption | 112 | 0.21 |
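As a quick consistency check on the filtering statistics, the percentages can be recomputed from the raw counts. The sketch below assumes that every percentage is taken relative to all 54,609 processed files and that each rejected file is attributed to exactly one primary cause; under that assumption the four causes sum exactly to the 34,609 filtered-out files, and every rounded share matches the table.

```python
# Counts copied from the filtering table; the variable names are illustrative only.
PROCESSED = 54_609
RETAINED = 20_000
REJECTIONS = {
    "Note-count mismatches": 16_078,
    "Instrument track mismatches": 11_952,
    "Duration anomalies": 6_467,
    "Data corruption": 112,
}


def pct(count: int, total: int = PROCESSED) -> float:
    """Share of all processed MIDI files, in percent, rounded to two decimals."""
    return round(100.0 * count / total, 2)


filtered_out = PROCESSED - RETAINED

# Assumption: each rejected file is counted under exactly one primary cause,
# so the per-cause counts should add up to the filtered-out total.
assert sum(REJECTIONS.values()) == filtered_out

print(f"Retained: {pct(RETAINED)}%  Filtered out: {pct(filtered_out)}%")
for cause, count in REJECTIONS.items():
    print(f"{cause}: {pct(count)}%")
```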

| Statistic | Value |
|---|---|
| Generated samples | 384 |
| Valid outputs | 384 / 384 (100%) |
| Melody inputs | 4 |
| Style presets | 8 |
| Random seeds | 4 |
| Step budgets | 16 / 32 / 64 |
| Mean duration | 31.50 s |
| Mean total notes | 499.68 |
| Mean pitch-histogram similarity | 0.4251 |
| Mean melody-track pitch similarity | 0.4164 |
| Mean interval-histogram similarity | 0.4485 |
| Mean melody-track interval similarity | 0.4023 |
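The sample count of 384 equals 4 melodies × 8 style presets × 4 seeds × 3 step budgets, which is consistent with a full factorial evaluation grid. The sketch below enumerates such a grid under that assumption; the melody and style labels and the seed values are hypothetical placeholders, and only the axis sizes and the step budgets come from the table.

```python
from collections import Counter
from itertools import product

# Hypothetical labels: only the axis sizes (4, 8, 4) and the step budgets come from the table.
MELODIES = [f"melody_{i}" for i in range(4)]
STYLES = [f"style_{i}" for i in range(8)]
SEEDS = [0, 1, 2, 3]
STEP_BUDGETS = [16, 32, 64]

# Assumption: one sample is generated per melody x style x seed x step-budget combination.
runs = list(product(MELODIES, STYLES, SEEDS, STEP_BUDGETS))

# Number of samples that fall under each step budget.
per_budget = Counter(steps for _, _, _, steps in runs)

print(len(runs))         # 384
print(dict(per_budget))  # {16: 128, 32: 128, 64: 128}
```

Under this assumption each step budget contributes 128 samples, so per-budget averages are computed over equally sized groups.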

| Metric | Legacy-HCDMG | HCDMG++ |
|---|---|---|
| Generation success rate (%) | 100.00 | 100.00 |
| Mean pitch-histogram similarity | 0.0043 | 0.3797 |
| Mean interval-histogram similarity | 0.7391 | 0.7310 |
| Mean melody-track pitch similarity | N/A | 0.3720 |
| Style separability score | N/A | 1.0581 |
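The tables report pitch- and interval-histogram similarities without defining them in this section. One common formulation, assumed here and not necessarily the one used for HCDMG++, compares a 12-bin pitch-class histogram (or a histogram of successive melodic intervals) of the input melody against that of the generated piece using cosine similarity. The function names below are hypothetical, the ±12-semitone interval clipping is an illustrative choice, and the "melody-track" variants would presumably apply the same computation to the melody track only.

```python
import math
from collections import Counter


def pitch_class_histogram(pitches):
    """12-bin histogram of MIDI pitch classes, normalized to sum to 1."""
    counts = Counter(p % 12 for p in pitches)
    total = sum(counts.values()) or 1
    return [counts[pc] / total for pc in range(12)]


def interval_histogram(pitches, max_interval=12):
    """Histogram of successive melodic intervals, clipped to +/- max_interval semitones."""
    intervals = [
        max(-max_interval, min(max_interval, b - a))
        for a, b in zip(pitches, pitches[1:])
    ]
    counts = Counter(intervals)
    total = sum(counts.values()) or 1
    return [counts[i] / total for i in range(-max_interval, max_interval + 1)]


def cosine_similarity(u, v):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


# Toy example: a reference melody and a generated variant, as MIDI pitch numbers.
reference = [60, 62, 64, 65, 67, 65, 64, 62, 60]
generated = [60, 62, 64, 67, 69, 67, 64, 62, 60]

pitch_sim = cosine_similarity(pitch_class_histogram(reference), pitch_class_histogram(generated))
interval_sim = cosine_similarity(interval_histogram(reference), interval_histogram(generated))
print(round(pitch_sim, 4), round(interval_sim, 4))
```

Because histograms are non-negative, this formulation yields values in [0, 1]; it does not cover the style separability score, whose value above 1 suggests a different (for example ratio-based) definition.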

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).