Submitted: 13 April 2026

Posted: 14 April 2026

Abstract

Keywords:

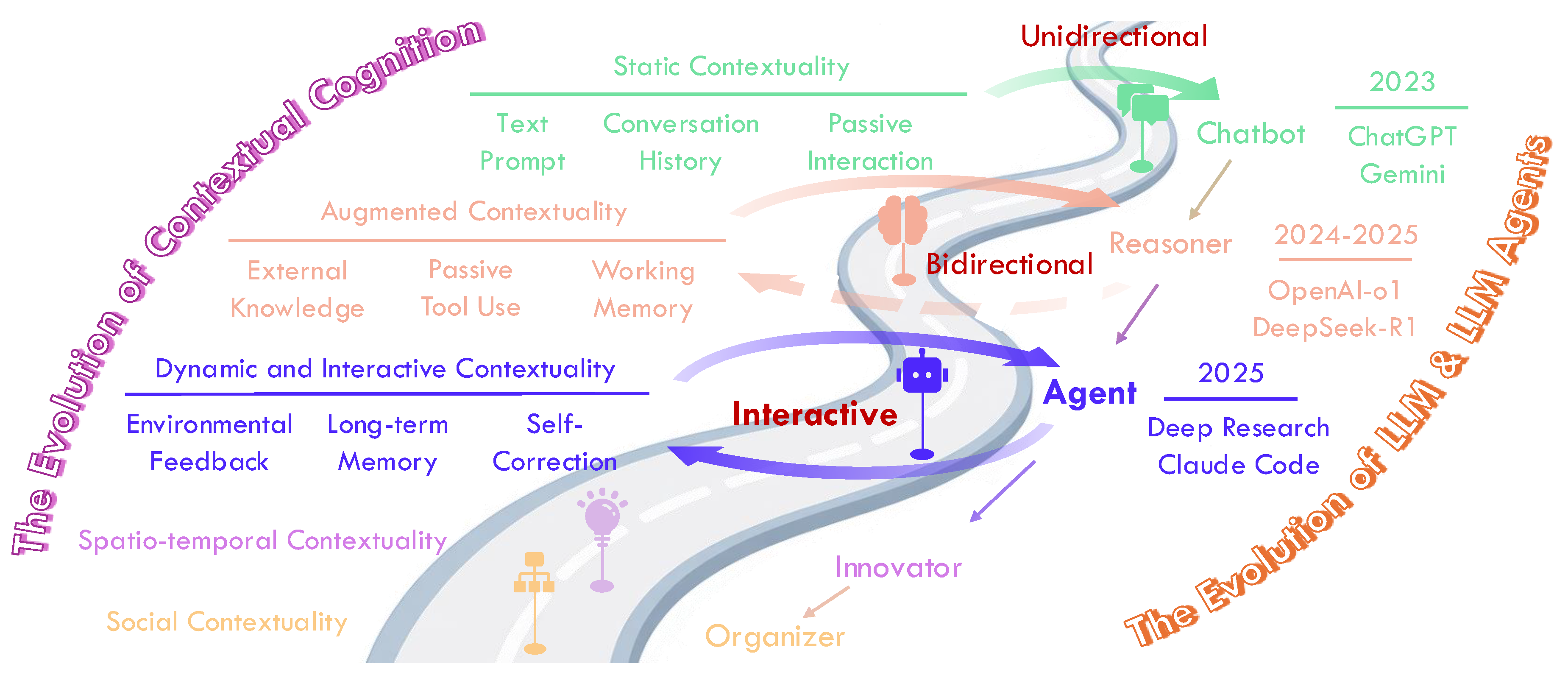

1. Introduction

“Without context, ... actions have no meaning at all.” - Gregory Bateson

2. Background

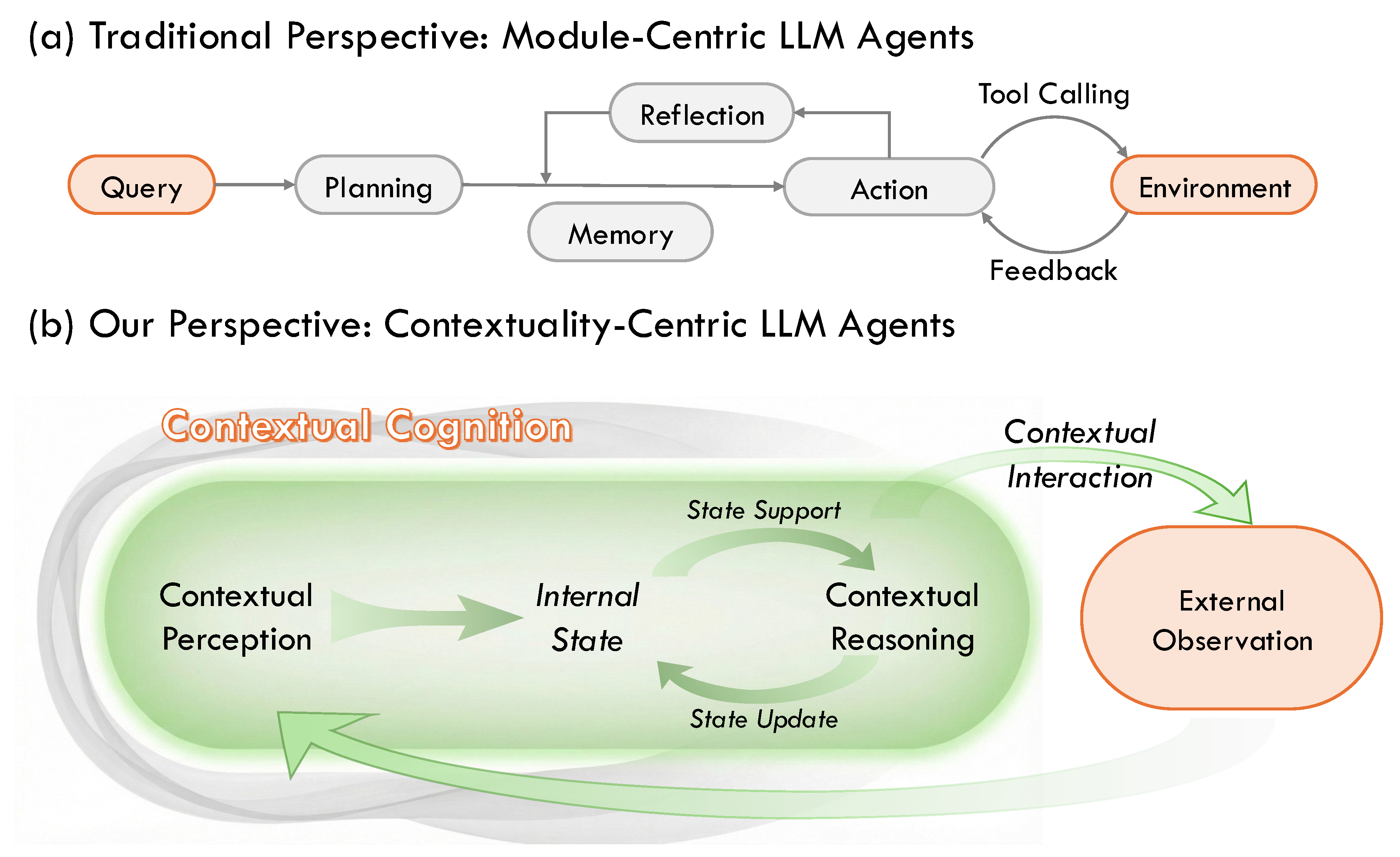

2.1. Concept of LLM Agents

2.2. Existing Surveys

3. Motivation

3.1. Why Contextuality Matters for LLM-Based Agents

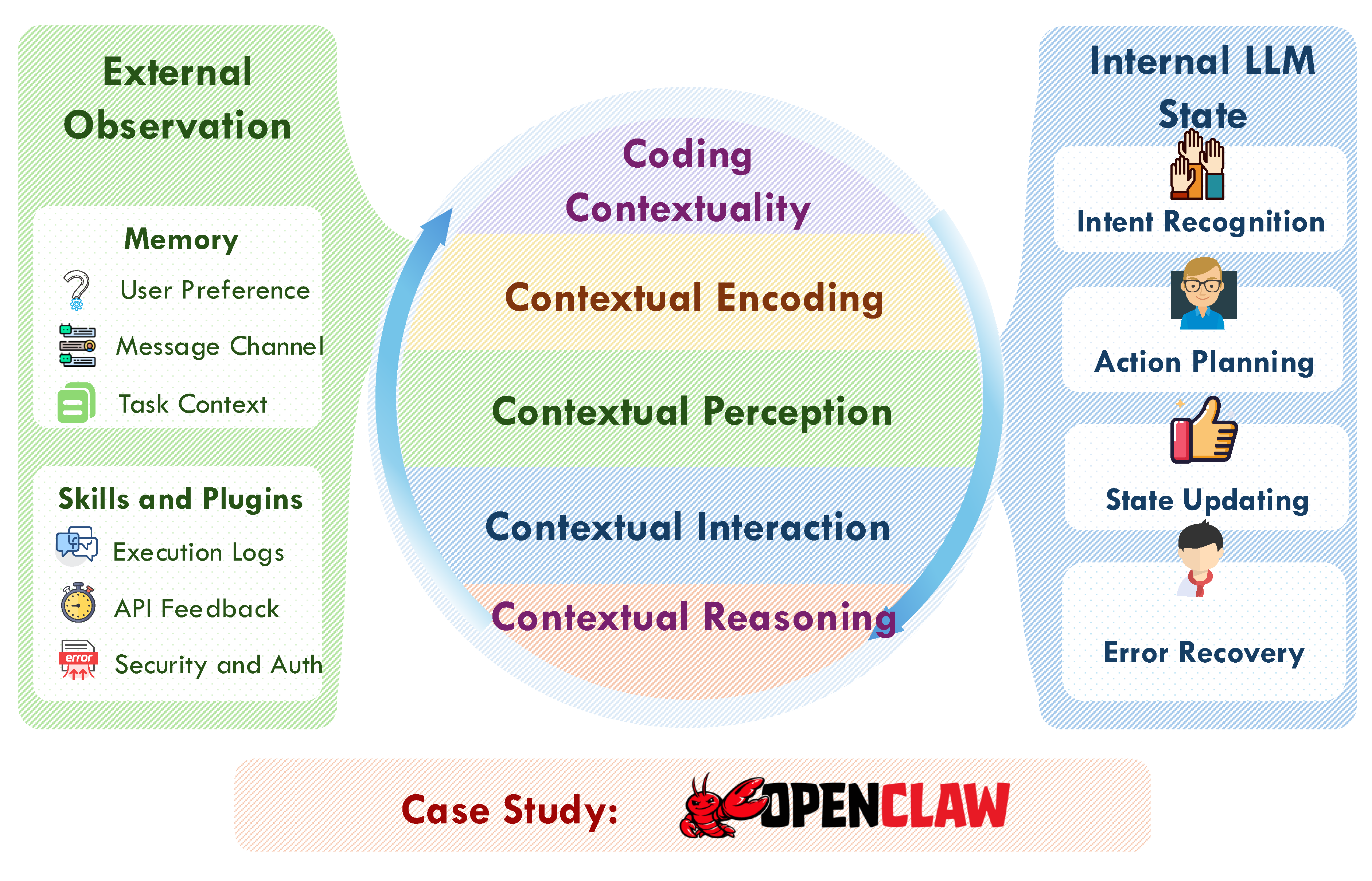

3.2. Definition of Contextuality in LLM-Based Agents

Internal States of LLMs ().

External Observation ().

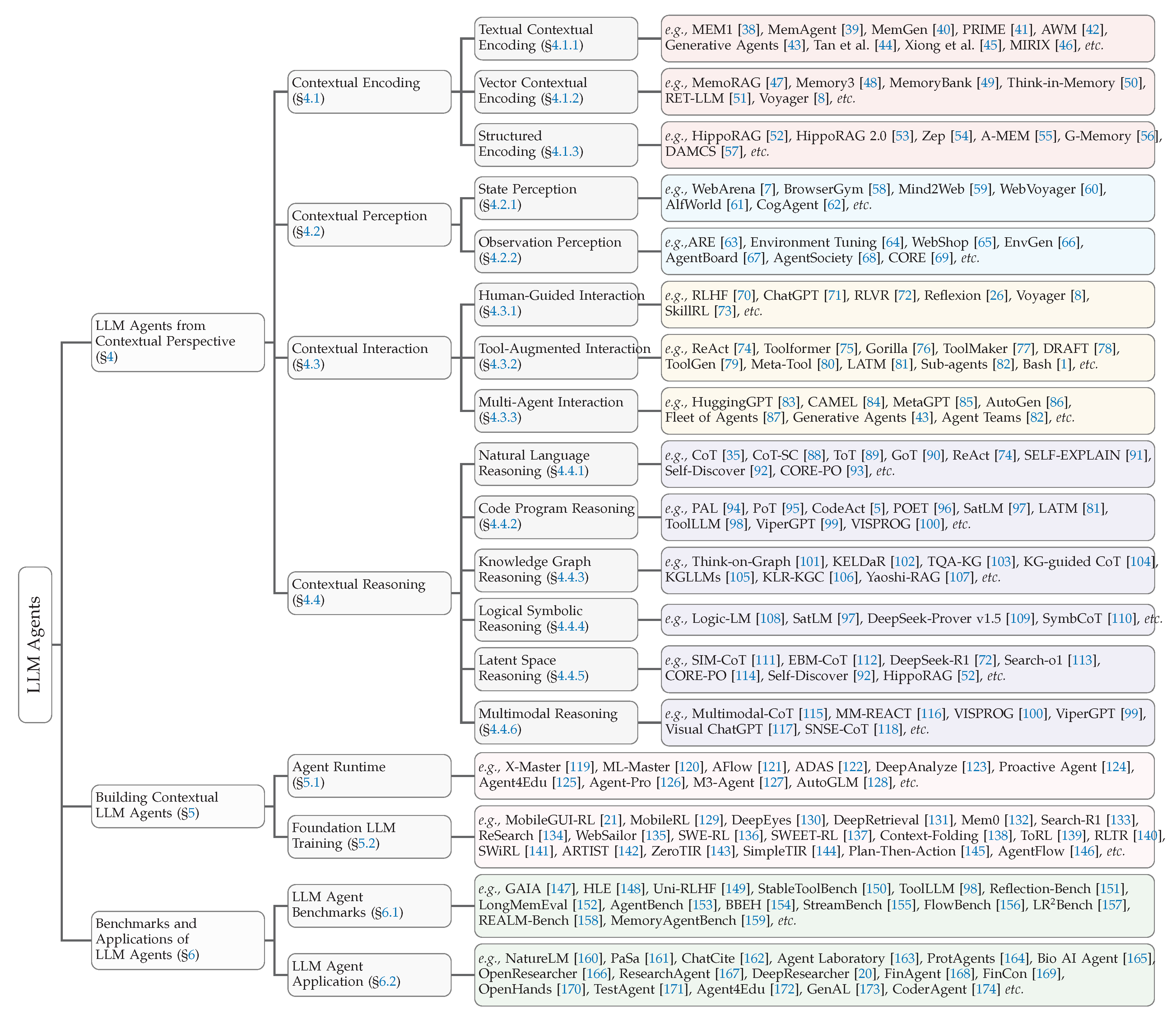

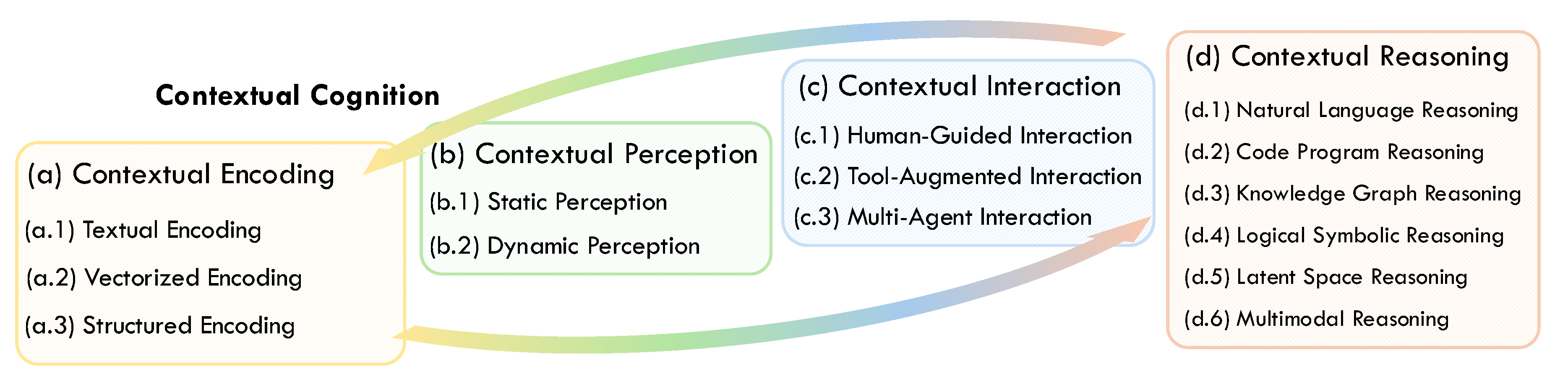

4. LLM Agents from Contextual Perspective

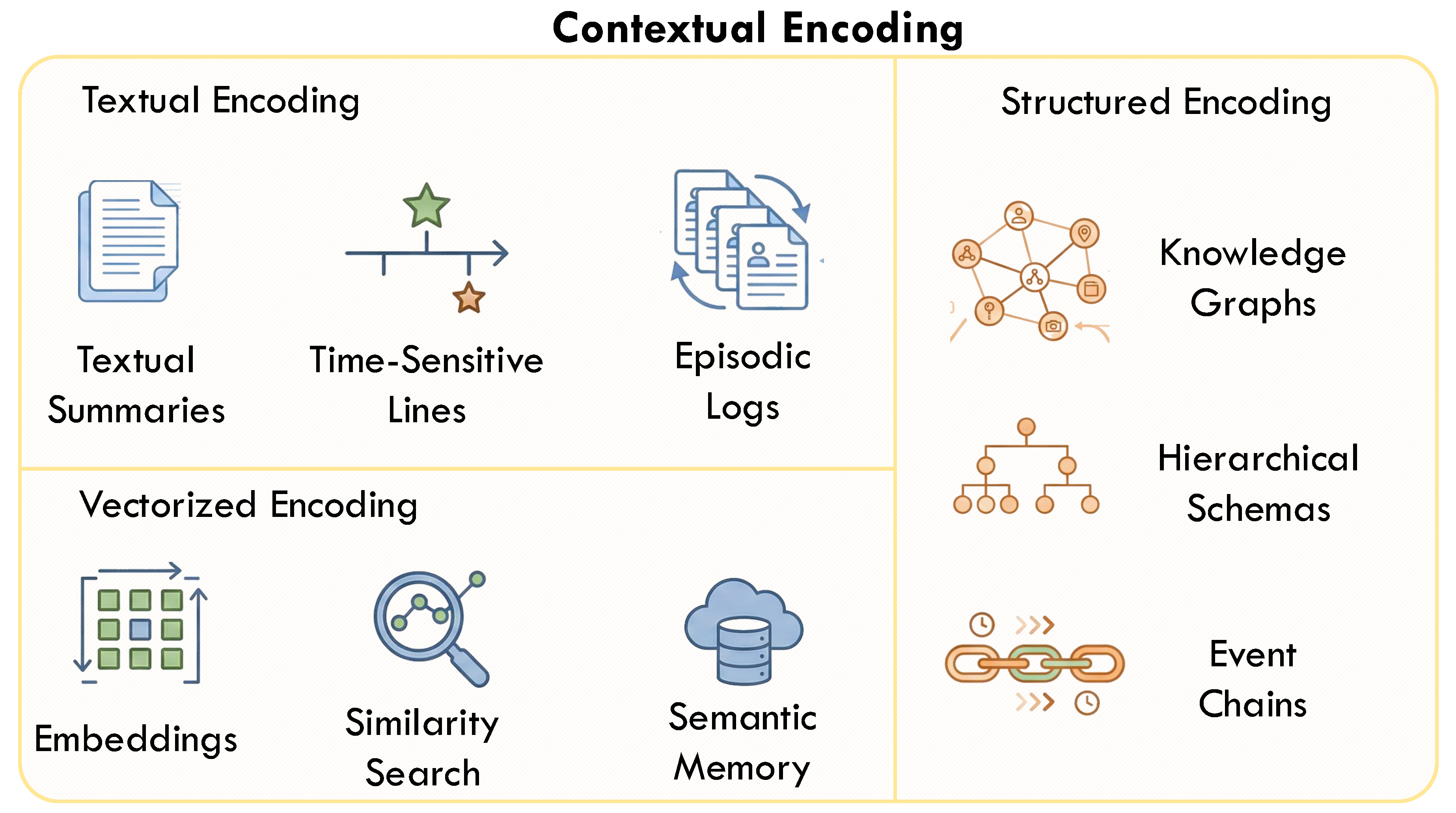

4.1. Contextual Encoding

4.1.1. Textual Encoding

4.1.2. Vector Encoding

4.1.3. Structured Encoding

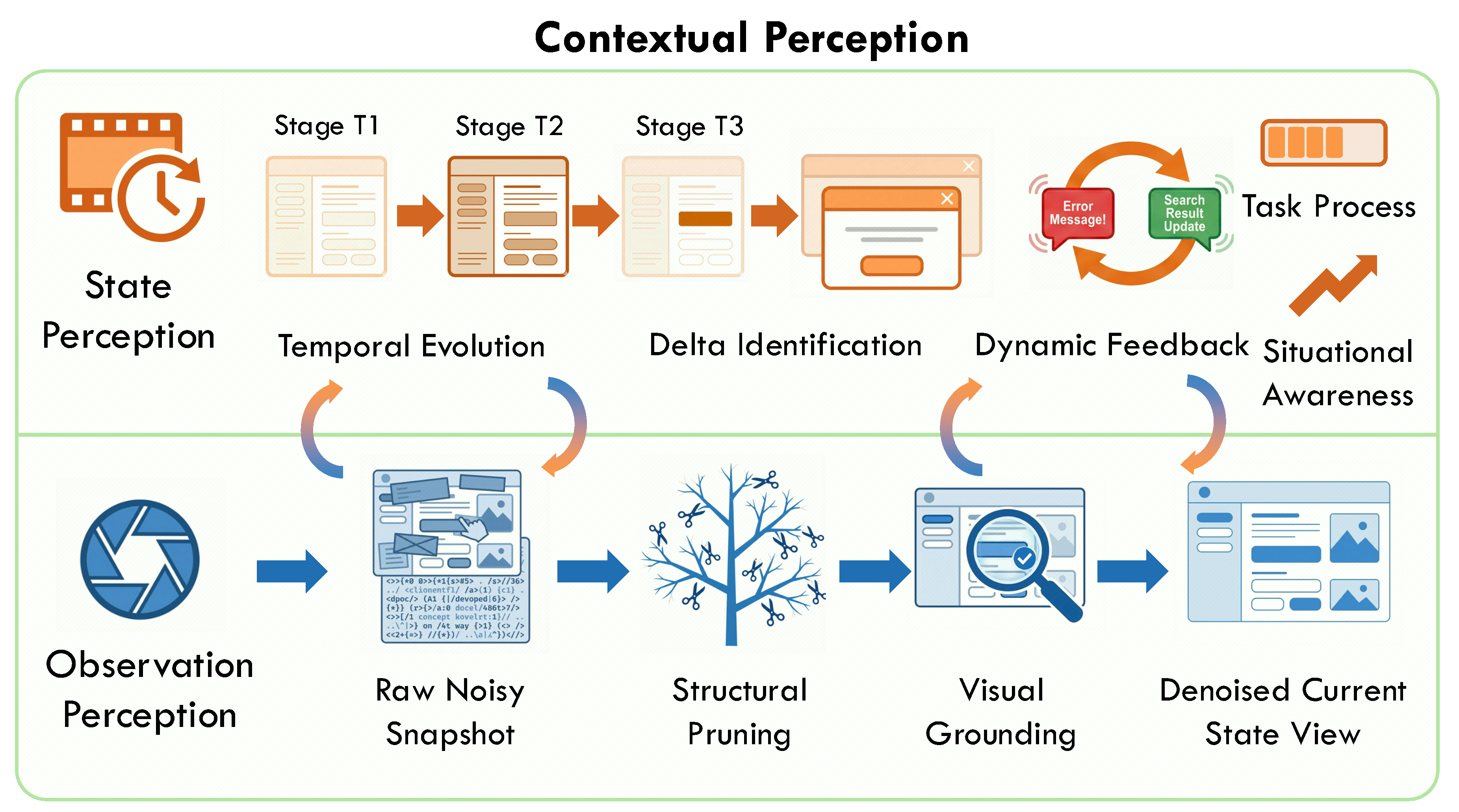

4.2. Contextual Perception

4.2.1. State Perception

4.2.2. Observation Perception

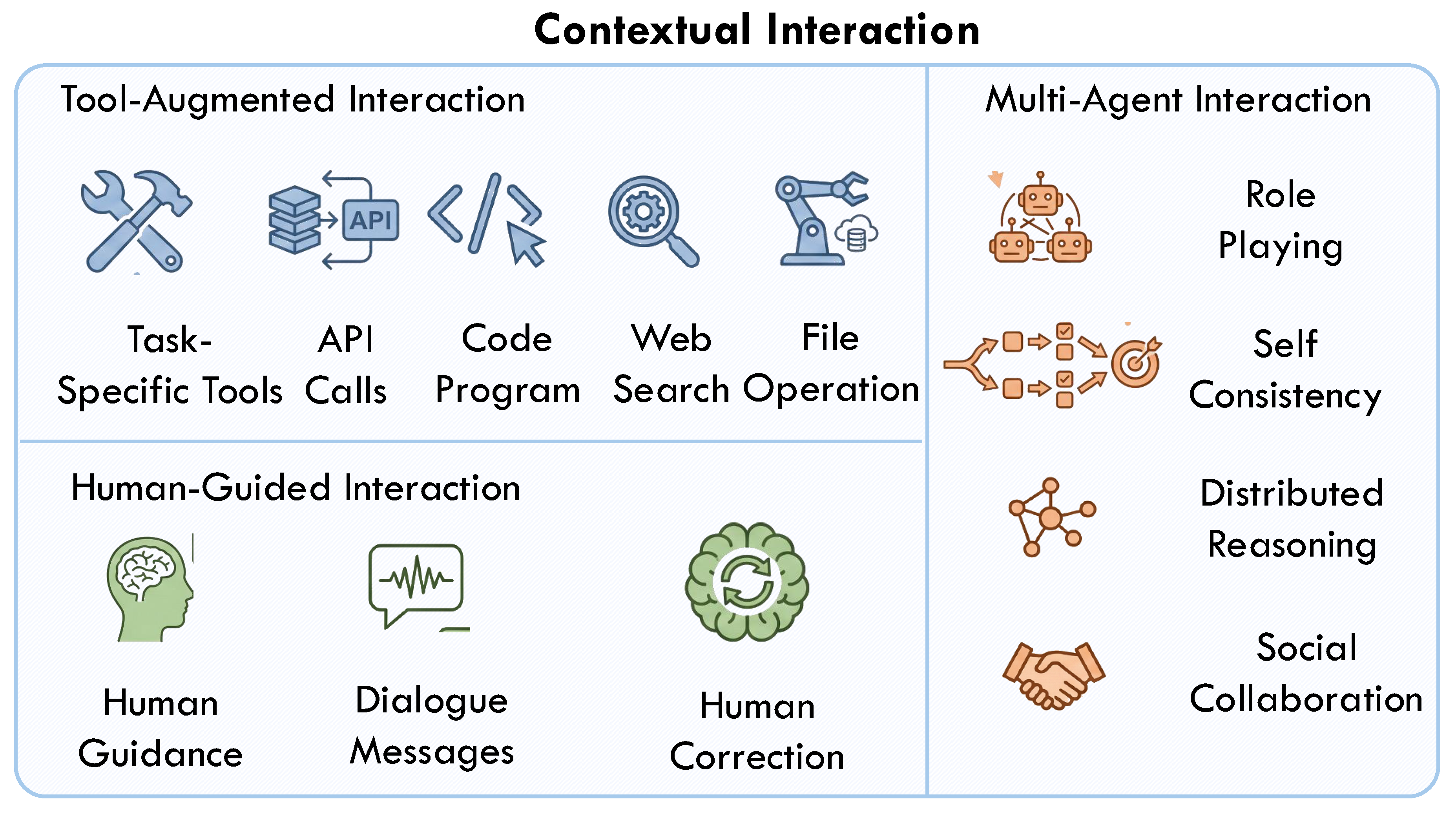

4.3. Contextual Interaction

4.3.1. Human-Guided Interaction

4.3.2. Tool-Augmented Interaction

4.3.3. Multi-Agent Interaction

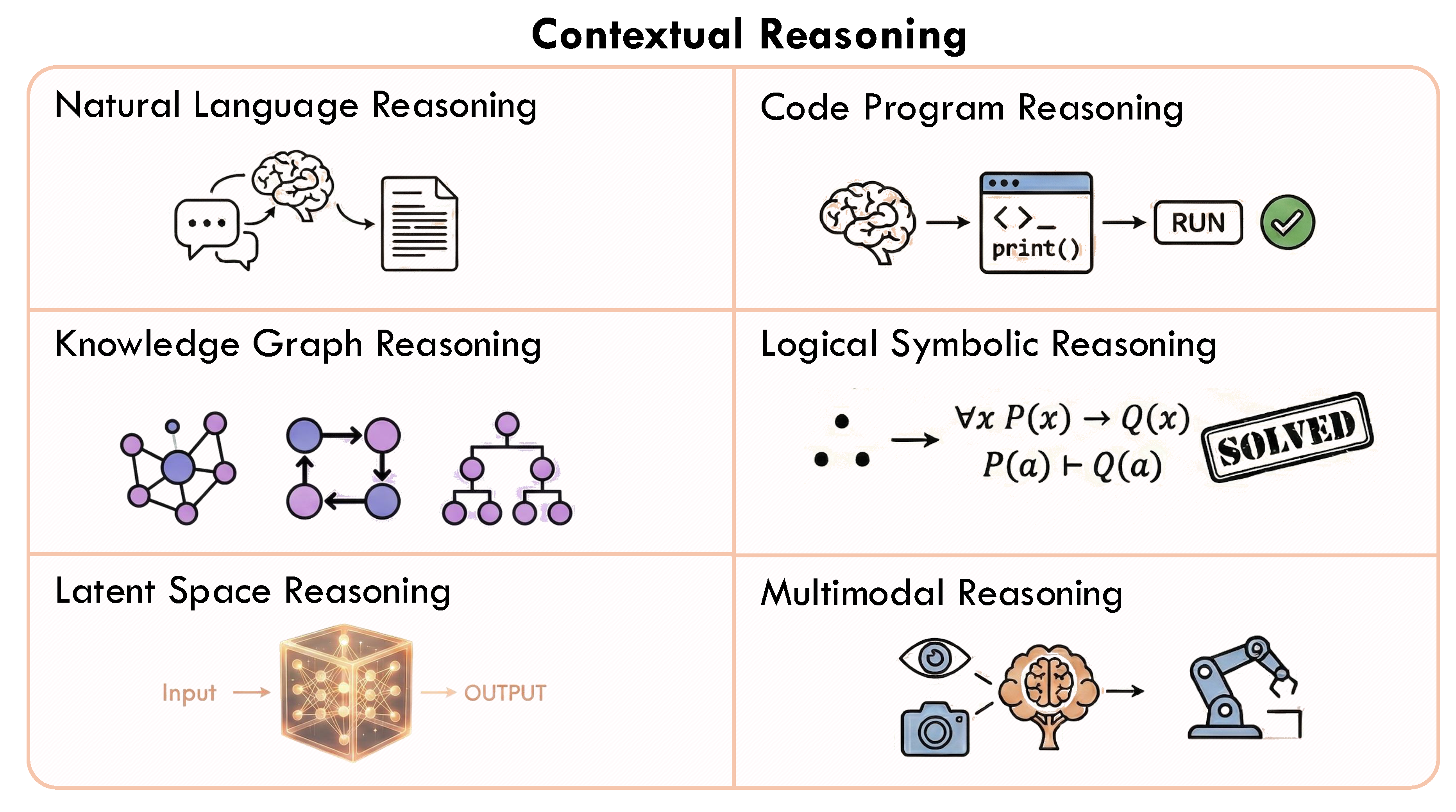

4.4. Contextual Reasoning

4.4.1. Natural Language Reasoning

4.4.2. Code Program Reasoning

4.4.3. Knowledge Graph Reasoning

4.4.4. Logical Symbolic Reasoning

4.4.5. Latent Space Reasoning

4.4.6. Multimodal Reasoning

5. Building Contextual LLM Agents

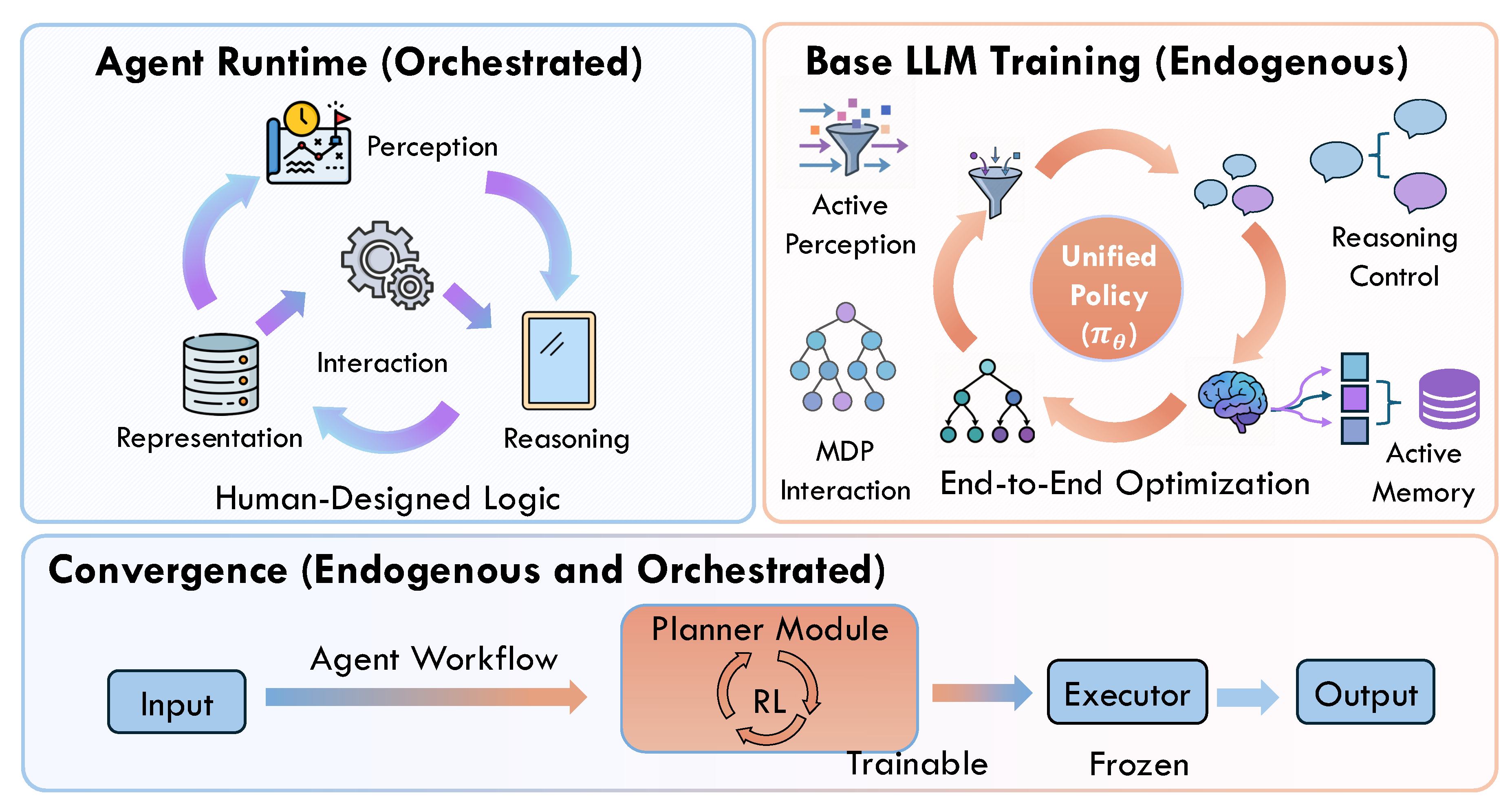

5.1. Agent Runtime

5.1.1. Agent Runtime for Contextual Encoding

5.1.2. Agent Runtime for Contextual Perception

5.1.3. Agent Runtime for Contextual Interaction

5.1.4. Agent Runtime for Contextual Reasoning

5.2. Foundation LLM Training

5.2.1. RL for Contextual Encoding

5.2.2. RL for Contextual Perception

5.2.3. RL for Contextual Interaction

5.2.4. RL for Contextual Reasoning

5.3. Convergence: RL-Optimized Agentic Workflows

6. Benchmarks and Applications

6.1. Datasets and Benchmarks

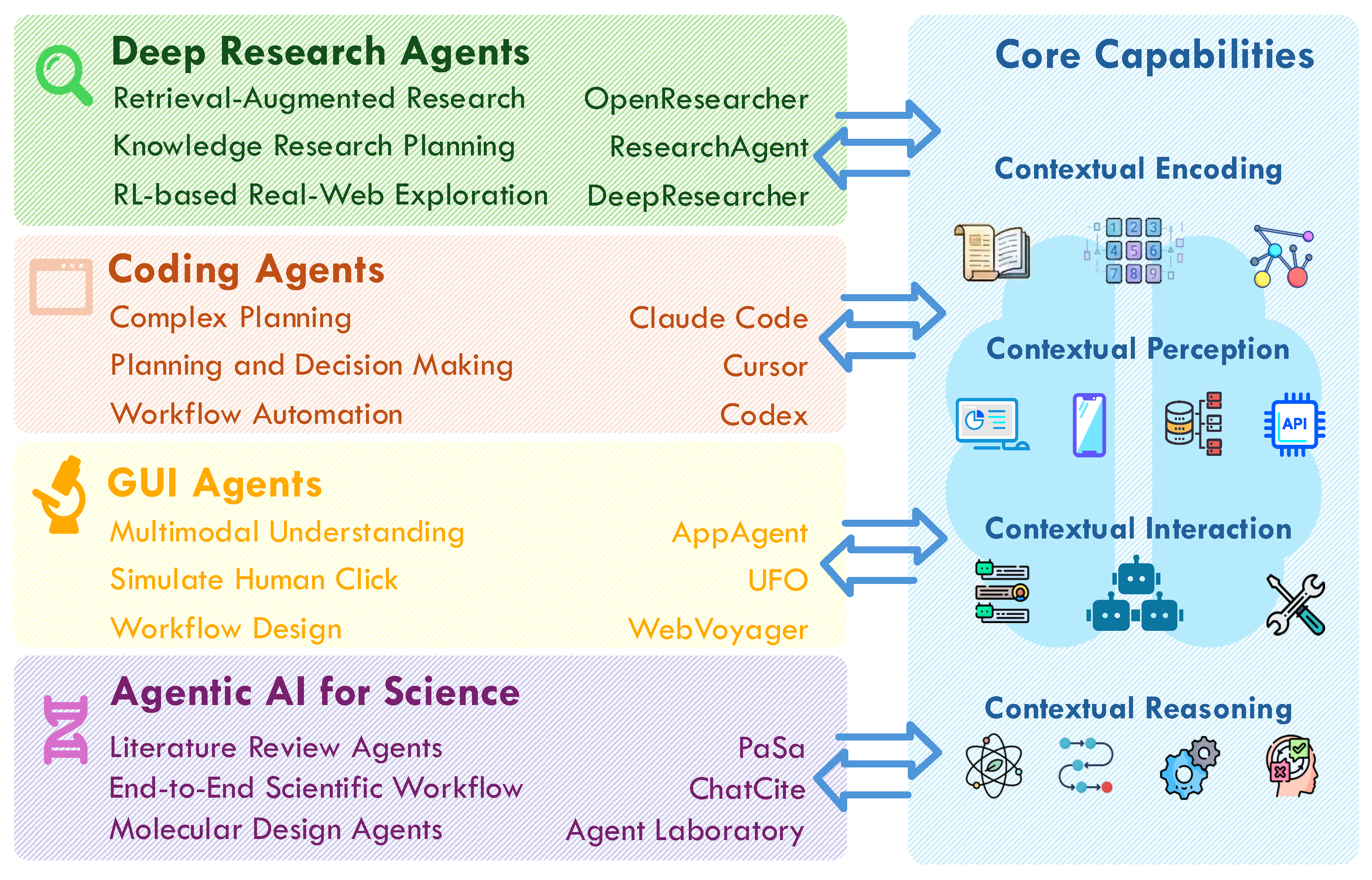

6.2. LLM Agent Applications

6.3. Case Study: Contextual Cognition for OpenClaw

7. Future Research Directions

7.1. Evolving Agentic Runtimes

7.2. Aligning LLM for Contextual Cognition

7.3. Advanced Contextual State Management

7.4. Expanding Contextual Encodings

7.5. Broadening Agent Applications

7.6. Evaluating Dynamic Contextual Interactions

8. Conclusion

References

- Anthropic. Claude Code: Build, debug, and ship from your terminal. 2025. Available online: https://claude.ai/product/claude-code (accessed on 2026-02-06).

- openclaw. openclaw: Your own personal AI assistant. Any OS. Any Platform. The lobster way. 2024. Available online: https://github.com/openclaw/openclaw.

- Kaplan, J.; McCandlish, S.; Henighan, T.; Brown, T.B.; Chess, B.; Child, R.; Gray, S.; Radford, A.; Wu, J.; Amodei, D. Scaling laws for neural language models. arXiv 2020, arXiv:2001.08361. [Google Scholar] [CrossRef]

- Hu, Y.; Liu, S.; Yue, Y.; Zhang, G.; Liu, B.; Zhu, F.; Lin, J.; Guo, H.; Dou, S.; Xi, Z.; et al. Memory in the Age of AI Agents. arXiv 2025, arXiv:2512.13564. [Google Scholar] [CrossRef]

- Wang, X.; Chen, Y.; Yuan, L.; Zhang, Y.; Li, Y.; Peng, H.; Ji, H. Executable code actions elicit better llm agents. In Proceedings of the Forty-first International Conference on Machine Learning, 2024. [Google Scholar]

- Ren, J.; et al. Towards Scientific Intelligence: A Survey of LLM-based Scientific Agents. arXiv 2025, arXiv:2503.24047. [Google Scholar] [CrossRef]

- Zhou, S.; Xu, F.F.; Zhu, H.; Zhou, X.; Lo, R.; Sridhar, A.; Cheng, X.; Ou, T.; Bisk, Y.; Fried, D.; et al. Webarena: A realistic web environment for building autonomous agents. arXiv 2023, arXiv:2307.13854. [Google Scholar]

- Wang, G.; Xie, Y.; Jiang, Y.; Mandlekar, A.; Xiao, C.; Zhu, Y.; Fan, L.; Anandkumar, A. Voyager: An open-ended embodied agent with large language models. arXiv 2023, arXiv:2305.16291. [Google Scholar] [CrossRef]

- Wang, X.; Cui, Z.; Li, H.; Zeng, Y.; Wang, C.; Song, R.; Chen, Y.; Shao, K.; Zhang, Q.; Liu, J.; et al. PerPilot: Personalizing VLM-based Mobile Agents via Memory and Exploration. arXiv 2025, arXiv:2508.18040. [Google Scholar]

- Huo, Y.; Lu, Y.; Zhang, Z.; Chen, H.; Lin, Y. AtomMem: Learnable Dynamic Agentic Memory with Atomic Memory Operation. arXiv 2026, arXiv:2601.08323. [Google Scholar]

- Ding, D.; Liu, S.; Yang, E.; Lin, J.; Chen, Z.; Dou, S.; Guo, H.; Cheng, W.; Zhao, P.; Xiao, C.; et al. OctoBench: Benchmarking Scaffold-Aware Instruction Following in Repository-Grounded Agentic Coding. arXiv 2026, arXiv:2601.10343. [Google Scholar]

- Huang, X.; Liu, W.; Chen, X.; Wang, X.; Wang, H.; Lian, D.; Wang, Y.; Tang, R.; Chen, E. Understanding the planning of LLM agents: A survey. arXiv 2024, arXiv:2402.02716. [Google Scholar] [CrossRef]

- Zhang, G.; et al. The Landscape of Agentic Reinforcement Learning for LLMs: A Survey. arXiv 2025. Key survey for Agentic RL.. arXiv:2509.02547. [CrossRef]

- Gao, H.a. A Survey of Self-Evolving Agents: On Path to Artificial Super Intelligence Key survey for Self-evolution. arXiv 2025, arXiv:2507.21046. [Google Scholar]

- Tran, K.T.; Nguyen, H.D.; et al. Multi-Agent Collaboration Mechanisms: A Survey of LLMs. arXiv 2025, arXiv:2501.06322. [Google Scholar] [CrossRef]

- Hassan, A.; Graham, B. Evaluating LLM-based Agents for Multi-Turn Conversations: A Survey. arXiv 2025, arXiv:2503.22458. [Google Scholar]

- Xi, Z.; Chen, W.; Guo, X.; et al. The Rise and Potential of Large Language Model Based Agents: A Survey. In Frontiers of Computer Science;Comprehensive architecture review; 2024. [Google Scholar]

- Xie, T.; Zhang, D.; Chen, J.; Li, X.; Zhao, S.; Cao, R.; Hua, T.J.; Cheng, Z.; Shin, D.; Lei, F.; et al. Osworld: Benchmarking multimodal agents for open-ended tasks in real computer environments. Advances in Neural Information Processing Systems 2024, 37, 52040–52094. [Google Scholar]

- Mon-Williams, R.; Li, G.; Long, R.; Du, W.; Lucas, C.G. Embodied large language models enable robots to complete complex tasks in unpredictable environments. Nature Machine Intelligence 2025, 1–10. [Google Scholar] [CrossRef]

- Zheng, Y.; Fu, D.; Hu, X.; Cai, X.; Ye, L.; Lu, P.; Liu, P. Deepresearcher: Scaling deep research via reinforcement learning in real-world environments. arXiv 2025, arXiv:2504.03160. [Google Scholar]

- Shi, Y.; Yu, W.; Li, Z.; Wang, Y.; Zhang, H.; Liu, N.; Mi, H.; Yu, D. MobileGUI-RL: Advancing Mobile GUI Agent through Reinforcement Learning in Online Environment. arXiv 2025, arXiv:2507.05720. [Google Scholar]

- Cheng, M.; Luo, Y.; Ouyang, J.; Liu, Q.; Liu, H.; Li, L.; Yu, S.; Zhang, B.; Cao, J.; Ma, J.; et al. A survey on knowledge-oriented retrieval-augmented generation. arXiv 2025, arXiv:2503.10677. [Google Scholar]

- Russell, S.J.; Norvig, P. Artificial Intelligence: A Modern Approach; Pearson Education, 2010. [Google Scholar]

- Wang, L.; Ma, C.; Feng, X.; et al. A Survey on Large Language Model based Autonomous Agents. In Frontiers of Computer Science; 2024. [Google Scholar]

- Ng, A. Agentic workflows: The future of AI automation. In DeepLearning.AI Blog; Concept of Agentic Workflow, 2024. [Google Scholar]

- Shinn, N.; Cassano, F.; Gopinath, A.; Narasimhan, K.; Yao, S. Reflexion: Language agents with verbal reinforcement learning. Advances in Neural Information Processing Systems 2023, 36, 8634–8652. [Google Scholar]

- Sumers, T.; Yao, S.; Narasimhan, K.; Griffiths, T. Cognitive architectures for language agents. Transactions on Machine Learning Research, 2023. [Google Scholar]

- Yang, B.; Xu, L.; et al. ContextAgent: Context-Aware Proactive LLM Agents with Open-World Sensory Perceptions. arXiv 2025, arXiv:2505.12345. [Google Scholar]

- Chawla, R.; Wiest, O.; Zhang, X. Large Language Model Based Multi-agents: A Survey of Progress and Challenges. Proceedings of IJCAI Survey Track, 2024. [Google Scholar]

- Zhu, Y.; Yuan, H.; Wang, S.; Liu, J.; Liu, W.; Deng, C.; Chen, H.; Liu, Z.; Dou, Z.; Wen, J.R. Large language models for information retrieval: A survey. ACM Transactions on Information Systems, 2023. [Google Scholar]

- Wang, Q.; Zhang, L.; Huang, Y. FinAgent: A multimodal foundation agent for financial trading. ACM Transactions on Management Information Systems 2023, 15, 1–19. [Google Scholar]

- Xu, W.; Huang, C.; Gao, S.; Shang, S. LLM-Based Agents for Tool Learning: A Survey: W. Xu et al. Data Science and Engineering 2025, 1–31. [Google Scholar] [CrossRef]

- Mei, L.; Yao, J.; Ge, Y.; Wang, Y.; Bi, B.; Cai, Y.; Liu, J.; Li, M.; Li, Z.Z.; Zhang, D.; et al. A Survey of Context Engineering for Large Language Models. arXiv 2025, arXiv:2507.13334. [Google Scholar] [CrossRef]

- Cao, H.; Jiang, D.; Pei, J.; He, Q.; Liao, Z.; Chen, E.; Li, H. Context-aware query suggestion by mining click-through and session data. In Proceedings of the Proceedings of the 14th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, New York, NY, USA, 2008; KDD ’08, pp. 875–883. [Google Scholar] [CrossRef]

- Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Xia, F.; Chi, E.; Le, Q.V.; Zhou, D.; et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems 2022, 35, 24824–24837. [Google Scholar]

- Asai, A.; Wu, Z.; Wang, Y.; Sil, A.; Hajishirzi, H. Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection. In Proceedings of the The Twelfth International Conference on Learning Representations, 2024. [Google Scholar]

- Lopopolo, R. Harness engineering: leveraging Codex in an agent-first world; OpenAI Blog, 2026; Available online: https://openai.com/index/harness-engineering/.

- Zhou, Z.; Qu, A.; Wu, Z.; Kim, S.; Prakash, A.; Rus, D.; Zhao, J.; Low, B.K.H.; Liang, P.P. MEM1: Learning to Synergize Memory and Reasoning for Efficient Long-Horizon Agents. arXiv 2025, arXiv:2506.15841. [Google Scholar] [CrossRef]

- Yu, H.; Chen, T.; Feng, J.; Chen, J.; Dai, W.; Yu, Q.; Zhang, Y.Q.; Ma, W.Y.; Liu, J.; Wang, M.; et al. MemAgent: Reshaping Long-Context LLM with Multi-Conv RL-based Memory Agent. arXiv 2025, arXiv:2507.02259. [Google Scholar]

- Zhang, G.; Fu, M.; Yan, S. MemGen: Weaving Generative Latent Memory for Self-Evolving Agents. arXiv 2025, arXiv:2509.24704. [Google Scholar]

- Zhang, X.F.; Beauchamp, N.; Wang, L. PRIME: Large Language Model Personalization with Cognitive Dual-Memory and Personalized Thought Process. In Proceedings of the Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing; 2025; pp. 33695–33724. [Google Scholar]

- Wang, Z.Z.; Mao, J.; Fried, D.; Neubig, G. Agent Workflow Memory. In Proceedings of the Forty-second International Conference on Machine Learning, 2025. [Google Scholar]

- Park, J.S.; O’Brien, J.; Cai, C.J.; Morris, M.R.; Liang, P.; Bernstein, M.S. Generative agents: Interactive simulacra of human behavior. In Proceedings of the Proceedings of the 36th annual acm symposium on user interface software and technology, 2023; pp. 1–22. [Google Scholar]

- Tan, Z.; Yan, J.; Hsu, I.H.; Han, R.; Wang, Z.; Le, L.; Song, Y.; Chen, Y.; Palangi, H.; Lee, G.; et al. In prospect and retrospect: Reflective memory management for long-term personalized dialogue agents. Proceedings of the Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics 2025, Volume 1, 8416–8439. [Google Scholar]

- Xiong, Z.; Lin, Y.; Xie, W.; He, P.; Tang, J.; Lakkaraju, H.; Xiang, Z. How Memory Management Impacts LLM Agents: An Empirical Study of Experience-Following Behavior. arXiv 2025, arXiv:2505.16067. [Google Scholar] [CrossRef]

- Wang, Y.; Chen, X. Mirix: Multi-agent memory system for llm-based agents. arXiv 2025, arXiv:2507.07957. [Google Scholar]

- Qian, H.; Liu, Z.; Zhang, P.; Mao, K.; Lian, D.; Dou, Z.; Huang, T. Memorag: Boosting long context processing with global memory-enhanced retrieval augmentation. Proceedings of the Proceedings of the ACM on Web Conference 2025, 2025, 2366–2377. [Google Scholar]

- Yang, H.; Lin, Z.; Wang, W.; Wu, H.; Li, Z.; Tang, B.; Wei, W.; Wang, J.; Tang, Z.; Song, S.; et al. Memory3: Language modeling with explicit memory. arXiv 2024, arXiv:2407.01178. [Google Scholar]

- Zhong, W.; Guo, L.; Gao, Q.; Ye, H.; Wang, Y. Memorybank: Enhancing large language models with long-term memory. In Proceedings of the Proceedings of the AAAI Conference on Artificial Intelligence, 2024; pp. 19724–19731. [Google Scholar]

- Liu, L.; Yang, X.; Shen, Y.; Hu, B.; Zhang, Z.; Gu, J.; Zhang, G. Think-in-memory: Recalling and post-thinking enable llms with long-term memory. arXiv 2023, arXiv:2311.08719. [Google Scholar]

- Modarressi, A.; Imani, A.; Fayyaz, M.; Schütze, H. RET-LLM: Towards a General Read-Write Memory for Large Language Models. arXiv e-prints 2023, arXiv–2305. [Google Scholar]

- Jimenez Gutierrez, B.; Shu, Y.; Gu, Y.; Yasunaga, M.; Su, Y. Hipporag: Neurobiologically inspired long-term memory for large language models. Advances in Neural Information Processing Systems 2024, 37, 59532–59569. [Google Scholar]

- Gutiérrez, B.J.; Shu, Y.; Qi, W.; Zhou, S.; Su, Y. From RAG to Memory: Non-Parametric Continual Learning for Large Language Models. In Proceedings of the Forty-second International Conference on Machine Learning, 2025. [Google Scholar]

- Rasmussen, P.; Paliychuk, P.; Beauvais, T.; Ryan, J.; Chalef, D. Zep: a temporal knowledge graph architecture for agent memory. arXiv 2025, arXiv:2501.13956. [Google Scholar]

- Xu, W.; Mei, K.; Gao, H.; Tan, J.; Liang, Z.; Zhang, Y. A-mem: Agentic memory for llm agents. arXiv 2025, arXiv:2502.12110. [Google Scholar] [CrossRef]

- Zhang, G.; Fu, M.; Wan, G.; Yu, M.; Wang, K.; Yan, S. G-Memory: Tracing Hierarchical Memory for Multi-Agent Systems. arXiv 2025, arXiv:2506.07398. [Google Scholar]

- Yang, H.; Chen, J.; Siew, M.; Botran, T.L.; Joe-Wong, C. LLM-Powered Decentralized Generative Agents with Adaptive Hierarchical Knowledge Graph for Cooperative Planning. In Proceedings of the The First MARW: Multi-Agent AI in the Real World Workshop at AAAI, 2025, 2025. [Google Scholar]

- Chezelles, D.; Le Sellier, T.; Shayegan, S.O.; Jang, L.K.; Lù, X.H.; Yoran, O.; Kong, D.; Xu, F.F.; Reddy, S.; Cappart, Q.; et al. The browsergym ecosystem for web agent research. arXiv 2024, arXiv:2412.05467. [Google Scholar] [CrossRef]

- Deng, X.; Gu, Y.; Zheng, B.; Chen, S.; Stevens, S.; Wang, B.; Sun, H.; Su, Y. Mind2web: Towards a generalist agent for the web. Advances in Neural Information Processing Systems 2023, 36, 28091–28114. [Google Scholar]

- He, H.; Yao, W.; Ma, K.; Yu, W.; Dai, Y.; Zhang, H.; Lan, Z.; Yu, D. Webvoyager: Building an end-to-end web agent with large multimodal models. arXiv 2024, arXiv:2401.13919. [Google Scholar]

- Shridhar, M.; Yuan, X.; Cote, M.A.; Bisk, Y.; Trischler, A.; Hausknecht, M. ALFWorld: Aligning Text and Embodied Environments for Interactive Learning. In Proceedings of the International Conference on Learning Representations, 2020. [Google Scholar]

- Hong, W.; Wang, W.; Lv, Q.; Xu, J.; Yu, W.; Ji, J.; Wang, Y.; Wang, Z.; Dong, Y.; Ding, M.; et al. Cogagent: A visual language model for gui agents. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024; pp. 14281–14290. [Google Scholar]

- Andrews, P.; Benhalloum, A.; Bertran, G.M.T.; Bettini, M.; Budhiraja, A.; Cabral, R.S.; Do, V.; Froger, R.; Garreau, E.; Gaya, J.B.; et al. Are: Scaling up agent environments and evaluations. arXiv 2025, arXiv:2509.17158. [Google Scholar] [CrossRef]

- Lu, S.; Wang, Z.; Zhang, H.; Wu, Q.; Gan, L.; Zhuang, C.; Gu, J.; Lin, T. Don’t Just Fine-tune the Agent, Tune the Environment. arXiv 2025, arXiv:2510.10197. [Google Scholar]

- Yao, S.; Chen, H.; Yang, J.; Narasimhan, K. Webshop: Towards scalable real-world web interaction with grounded language agents. Advances in Neural Information Processing Systems 2022, 35, 20744–20757. [Google Scholar]

- Zala, A.; Cho, J.; Lin, H.; Yoon, J.; Bansal, M. EnvGen: Generating and Adapting Environments via LLMs for Training Embodied Agents. In Proceedings of the First Conference on Language Modeling, 2024. [Google Scholar]

- Chang, M.; Zhang, J.; Zhu, Z.; Yang, C.; Yang, Y.; Jin, Y.; Lan, Z.; Kong, L.; He, J. Agentboard: An analytical evaluation board of multi-turn llm agents. Advances in neural information processing systems 2024, 37, 74325–74362. [Google Scholar]

- Zhang, J.; Yan, Y.; Yan, J.; Zheng, Z.; Piao, J.; Jin, D.; Li, Y. A parallelized framework for simulating large-scale llm agents with realistic environments and interactions. Proceedings of the Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics 2025, Volume 6, 1339–1349. [Google Scholar]

- Wang, B.; Zhang, L.; Wang, Z.; Zhao, Y.; Zhou, T. Core: Cooperative reconstruction for multi-agent perception. In Proceedings of the Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023; pp. 8710–8720. [Google Scholar]

- Ouyang, L.; Wu, J.; Jiang, X.; Almeida, D.; Wainwright, C.; Mishkin, P.; Zhang, C.; Agarwal, S.; Slama, K.; Ray, A.; et al. Training language models to follow instructions with human feedback. Advances in neural information processing systems 2022, 35, 27730–27744. [Google Scholar]

- Achiam, J.; Adler, S.; Agarwal, S.; Ahmad, L.; Akkaya, I.; Aleman, F.L.; Almeida, D.; Altenschmidt, J.; Altman, S.; Anadkat, S.; et al. Gpt-4 technical report. arXiv 2023, arXiv:2303.08774. [Google Scholar] [CrossRef]

- Guo, D.; Yang, D.; Zhang, H.; Song, J.; Zhang, R.; Xu, R.; Zhu, Q.; Ma, S.; Wang, P.; Bi, X.; et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv 2025, arXiv:2501.12948. [Google Scholar]

- Xia, P.; Chen, J.; Wang, H.; Liu, J.; Zeng, K.; Wang, Y.; Han, S.; Zhou, Y.; Zhao, X.; Chen, H.; et al. SkillRL: Evolving Agents via Recursive Skill-Augmented Reinforcement Learning. arXiv 2026, arXiv:2602.08234. [Google Scholar]

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.R.; Cao, Y. React: Synergizing reasoning and acting in language models. In Proceedings of the The eleventh international conference on learning representations, 2022. [Google Scholar]

- Schick, T.; Dwivedi-Yu, J.; Dessì, R.; Raileanu, R.; Lomeli, M.; Hambro, E.; Zettlemoyer, L.; Cancedda, N.; Scialom, T. Toolformer: Language models can teach themselves to use tools. Advances in Neural Information Processing Systems 2023, 36, 68539–68551. [Google Scholar]

- Patil, S.G.; Zhang, T.; Wang, X.; Gonzalez, J.E. Gorilla: Large language model connected with massive apis. Advances in Neural Information Processing Systems 2024, 37, 126544–126565. [Google Scholar]

- Wölflein, G.; Ferber, D.; Truhn, D.; Arandjelovic, O.; Kather, J.N. Llm agents making agent tools. Proceedings of the Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics 2025, Volume 1, 26092–26130. [Google Scholar]

- Qu, C.; Dai, S.; Wei, X.; Cai, H.; Wang, S.; Yin, D.; Xu, J.; Wen, J.R. From Exploration to Mastery: Enabling LLMs to Master Tools via Self-Driven Interactions. In Proceedings of the The Thirteenth International Conference on Learning Representations, 2025. [Google Scholar]

- Wang, R.; Han, X.; Ji, L.; Wang, S.; Baldwin, T.; Li, H. ToolGen: Unified Tool Retrieval and Calling via Generation. In Proceedings of the The Thirteenth International Conference on Learning Representations, 2024. [Google Scholar]

- Qin, S.; Zhu, Y.; Mu, L.; Zhang, S.; Zhang, X. Meta-Tool: Unleash Open-World Function Calling Capabilities of General-Purpose Large Language Models. Proceedings of the Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics 2025, Volume 1, 30653–30677. [Google Scholar]

- Cai, T.; Wang, X.; Ma, T.; Chen, X.; Zhou, D. Large Language Models as Tool Makers. In Proceedings of the The Twelfth International Conference on Learning Representations, 2024. [Google Scholar]

- Piskala, D.B. Agent, Sub-Agent, Skill, or Tool? A Practitioner’s Guide to Extending Agentic AI Systems. In Authorea Preprints; 2026. [Google Scholar]

- Shen, Y.; Song, K.; Tan, X.; Li, D.; Lu, W.; Zhuang, Y. Hugginggpt: Solving ai tasks with chatgpt and its friends in hugging face. Advances in Neural Information Processing Systems 2023, 36, 38154–38180. [Google Scholar]

- Li, G.; Hammoud, H.; Itani, H.; Khizbullin, D.; Ghanem, B. Camel: Communicative agents for" mind" exploration of large language model society. Advances in Neural Information Processing Systems 2023, 36, 51991–52008. [Google Scholar]

- Hong, S.; Zhuge, M.; Chen, J.; Zheng, X.; Cheng, Y.; Wang, J.; Zhang, C.; Wang, Z.; Yau, S.K.S.; Lin, Z.; et al. MetaGPT: Meta programming for a multi-agent collaborative framework. In Proceedings of the The Twelfth International Conference on Learning Representations, 2023. [Google Scholar]

- Wu, Q.; Bansal, G.; Zhang, J.; Wu, Y.; Li, B.; Zhu, E.; Jiang, L.; Zhang, X.; Zhang, S.; Liu, J.; et al. Autogen: Enabling next-gen LLM applications via multi-agent conversations. In Proceedings of the First Conference on Language Modeling, 2024. [Google Scholar]

- Klein, L.H.; Potamitis, N.; Aydin, R.; West, R.; Gulcehre, C.; Arora, A. Fleet of Agents: Coordinated Problem Solving with Large Language Models. In Proceedings of the The Exploration in AI Today Workshop at ICML 2025, 2025. [Google Scholar]

- Wang, X.; Wei, J.; Schuurmans, D.; Le, Q.V.; Chi, E.H.; Narang, S.; Chowdhery, A.; Zhou, D. Self-Consistency Improves Chain of Thought Reasoning in Language Models. In Proceedings of the The Eleventh International Conference on Learning Representations, 2023. [Google Scholar]

- Yao, S.; Yu, D.; Zhao, J.; Shafran, I.; Griffiths, T.; Cao, Y.; Narasimhan, K. Tree of thoughts: Deliberate problem solving with large language models. Advances in neural information processing systems 2023, 36, 11809–11822. [Google Scholar]

- Yao, Y.; Li, Z.; Zhao, H. GoT: Effective graph-of-thought reasoning in language models. Proceedings of the Findings of the Association for Computational Linguistics: NAACL 2024, 2024, 2901–2921. [Google Scholar]

- Jiachen, Z.; Yao, Z.; et al. SELF-EXPLAIN: Teaching large language models to reason complex questions by themselves. In Proceedings of the R0-FoMo: Robustness of Few-shot and Zero-shot Learning in Large Foundation Models, 2023. [Google Scholar]

- Zhou, P.; Pujara, J.; Ren, X.; Chen, X.; Cheng, H.T.; Le, Q.V.; Chi, E.; Zhou, D.; Mishra, S.; Zheng, H.S. Self-discover: Large language models self-compose reasoning structures. Advances in Neural Information Processing Systems 2024, 37, 126032–126058. [Google Scholar]

- Zhao, J.; Xie, Y.; Kawaguchi, K.; He, J.; Xie, M. Automatic model selection with large language models for reasoning. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2023, 2023; pp. 758–783. [Google Scholar]

- Gao, L.; Madaan, A.; Zhou, S.; Alon, U.; Liu, P.; Yang, Y.; Callan, J.; Neubig, G. Pal: Program-aided language models. In Proceedings of the International Conference on Machine Learning. PMLR, 2023; pp. 10764–10799. [Google Scholar]

- Chen, W.; Ma, X.; Wang, X.; Cohen, W.W. Program of Thoughts Prompting: Disentangling Computation from Reasoning for Numerical Reasoning Tasks. Transactions on Machine Learning Research, 2022. [Google Scholar]

- Pi, X.; Liu, Q.; Chen, B.; Ziyadi, M.; Lin, Z.; Fu, Q.; Gao, Y.; Lou, J.G.; Chen, W. Reasoning Like Program Executors. In Proceedings of the Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, 2022; pp. 761–779. [Google Scholar]

- Ye, X.; Chen, Q.; Dillig, I.; Durrett, G. Satlm: Satisfiability-aided language models using declarative prompting. Advances in Neural Information Processing Systems 2023, 36, 45548–45580. [Google Scholar]

- Qin, Y.; Liang, S.; Ye, Y.; Zhu, K.; Yan, L.; Lu, Y.; Lin, Y.; Cong, X.; Tang, X.; Qian, B.; et al. ToolLLM: Facilitating Large Language Models to Master 16000+ Real-world APIs. In Proceedings of the ICLR, 2024. [Google Scholar]

- Surís, D.; Menon, S.; Vondrick, C. Vipergpt: Visual inference via python execution for reasoning. In Proceedings of the Proceedings of the IEEE/CVF international conference on computer vision, 2023; pp. 11888–11898. [Google Scholar]

- Gupta, T.; Kembhavi, A. Visual programming: Compositional visual reasoning without training. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2023; pp. 14953–14962. [Google Scholar]

- Sun, J.; Xu, C.; Tang, L.; Wang, S.; Lin, C.; Gong, Y.; Ni, L.; Shum, H.Y.; Guo, J. Think-on-Graph: Deep and Responsible Reasoning of Large Language Model on Knowledge Graph. In Proceedings of the The Twelfth International Conference on Learning Representations, 2024. [Google Scholar]

- Li, Y.; Song, D.; Zhou, C.; Tian, Y.; Wang, H.; Yang, Z.; Zhang, S. A framework of knowledge graph-enhanced large language model based on question decomposition and atomic retrieval. Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2024, 2024, 11472–11485. [Google Scholar]

- He, M.; Zhou, A.; Shi, X. Enhancing Textbook Question Answering with Knowledge Graph-Augmented Large Language Models. In Proceedings of the Asian Conference on Machine Learning. PMLR, 2025; pp. 639–654. [Google Scholar]

- Wang, R.; Vinh, T.; Xu, R.; Zhou, Y.; Lu, J.; Yang, C.; Pasquel, F. Knowledge Graph Augmented Large Language Models for Next-Visit Disease Prediction. arXiv 2025, arXiv:2512.01210. [Google Scholar]

- Leng, X.; Liang, J.; Mauro, J.; Wang, X.; Bertozzi, A.L.; Chapman, J.; Lin, J.; Chen, B.; Ye, C.; Daniel, T.; et al. Narrative Analysis of True Crime Podcasts With Knowledge Graph-Augmented Large Language Models. CoRR, 2024. [Google Scholar]

- Ji, S.; Liu, L.; Xi, J.; Zhang, X.; Li, X. KLR-KGC: Knowledge-Guided LLM Reasoning for Knowledge Graph Completion. Electronics (2079-9292) 2024, 13. [Google Scholar] [CrossRef]

- Sha, H.; Gong, F.; Liu, B.; Liu, R.; Wang, H.; Wu, T. Leveraging retrieval-augmented large language models for dietary recommendations with traditional Chinese Medicine’s medicine food homology: algorithm development and validation. JMIR Medical Informatics 2025, 13, e75279. [Google Scholar] [CrossRef]

- Pan, L.; Albalak, A.; Wang, X.; Wang, W. Logic-lm: Empowering large language models with symbolic solvers for faithful logical reasoning. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2023, 2023; pp. 3806–3824. [Google Scholar]

- Xin, H.; Ren, Z.; Song, J.; Shao, Z.; Zhao, W.; Wang, H.; Liu, B.; Zhang, L.; Lu, X.; Du, Q.; et al. Deepseek-prover-v1. 5: Harnessing proof assistant feedback for reinforcement learning and monte-carlo tree search. arXiv 2024, arXiv:2408.08152. [Google Scholar]

- Xu, J.; Fei, H.; Pan, L.; Liu, Q.; Lee, M.L.; Hsu, W. Faithful Logical Reasoning via Symbolic Chain-of-Thought. Proceedings of the Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics 2024, Volume 1, 13326–13365. [Google Scholar]

- Wei, X.; Liu, X.; Zang, Y.; Dong, X.; Cao, Y.; Wang, J.; Qiu, X.; Lin, D. SIM-CoT: Supervised Implicit Chain-of-Thought. arXiv 2025, arXiv:2509.20317. [Google Scholar]

- Chen, Z.; Cui, S.; Ye, D.; Zhang, Y.; Bian, Y.; Zhu, T. Think Consistently, Reason Efficiently: Energy-Based Calibration for Implicit Chain-of-Thought. arXiv 2025, arXiv:2511.07124. [Google Scholar] [CrossRef]

- Li, X.; Dong, G.; Jin, J.; Zhang, Y.; Zhou, Y.; Zhu, Y.; Zhang, P.; Dou, Z. Search-o1: Agentic search-enhanced large reasoning models. arXiv 2025, arXiv:2501.05366. [Google Scholar]

- Jang, H.; Jang, Y.; Lee, S.; Ok, J.; Ahn, S. Self-Training Large Language Models with Confident Reasoning. arXiv 2025, arXiv:2505.17454. [Google Scholar] [CrossRef]

- Zhang, Z.; Zhang, A.; Li, M.; Karypis, G.; Smola, A.; et al. Multimodal Chain-of-Thought Reasoning in Language Models. Transactions on Machine Learning Research, 2024. [Google Scholar]

- Yang, Z.; Li, L.; Wang, J.; Lin, K.; Azarnasab, E.; Ahmed, F.; Liu, Z.; Liu, C.; Zeng, M.; Wang, L. Mm-react: Prompting chatgpt for multimodal reasoning and action. arXiv 2023, arXiv:2303.11381. [Google Scholar]

- Wu, C.; Yin, S.; Qi, W.; Wang, X.; Tang, Z.; Duan, N. Visual chatgpt: Talking, drawing and editing with visual foundation models. arXiv 2023, arXiv:2303.04671. [Google Scholar] [CrossRef]

- Zheng, G.; Wang, J.; Zhou, X.; Zhang, X. Enhancing Semantics in Multimodal Chain of Thought via Soft Negative Sampling. In Proceedings of the LREC/COLING, 2024. [Google Scholar]

- Chai, J.; Tang, S.; Ye, R.; Du, Y.; Zhu, X.; Zhou, M.; Wang, Y.; Zhang, Y.; Zhang, L.; Chen, S.; et al. SciMaster: Towards General-Purpose Scientific AI Agents, Part I. X-Master as Foundation: Can We Lead on Humanity’s Last Exam? arXiv 2025, arXiv:2507.05241. [Google Scholar]

- Liu, Z.; Cai, Y.; Zhu, X.; Zheng, Y.; Chen, R.; Wen, Y.; Wang, Y.; Chen, S.; et al. ML-Master: Towards AI-for-AI via Integration of Exploration and Reasoning. arXiv 2025, arXiv:2506.16499. [Google Scholar]

- Curtarolo, S.; Setyawan, W.; Hart, G.L.; Jahnatek, M.; Chepulskii, R.V.; Taylor, R.H.; Wang, S.; Xue, J.; Yang, K.; Levy, O.; et al. AFLOW: An automatic framework for high-throughput materials discovery. Computational Materials Science 2012, 58, 218–226. [Google Scholar] [CrossRef]

- Hu, S.; Lu, C.; Clune, J. Automated Design of Agentic Systems. In Proceedings of the The Thirteenth International Conference on Learning Representations, 2025. [Google Scholar]

- Zhang, S.; Fan, J.; Fan, M.; Li, G.; Du, X. Deepanalyze: Agentic large language models for autonomous data science. arXiv 2025, arXiv:2510.16872. [Google Scholar] [CrossRef]

- Lu, Y.; Yang, S.; Qian, C.; Chen, G.; Luo, Q.; Wu, Y.; Wang, H.; Cong, X.; Zhang, Z.; Lin, Y.; et al. Proactive Agent: Shifting LLM Agents from Reactive Responses to Active Assistance. In Proceedings of the The Thirteenth International Conference on Learning Representations, 2025. [Google Scholar]

- Gao, W.; Liu, Q.; Yue, L.; Yao, F.; Lv, R.; Zhang, Z.; Wang, H.; Huang, Z. Agent4edu: Generating learner response data by generative agents for intelligent education systems. In Proceedings of the Proceedings of the AAAI Conference on Artificial Intelligence, 2025; pp. 23923–23932. [Google Scholar]

- Zhang, W.; Tang, K.; Wu, H.; Wang, M.; Shen, Y.; Hou, G.; Tan, Z.; Li, P.; Zhuang, Y.; Lu, W. Agent-Pro: Learning to Evolve via Policy-Level Reflection and Optimization. In Proceedings of the ICLR 2024 Workshop on Large Language Model (LLM) Agents, 2024. [Google Scholar]

- Long, L.; He, Y.; Ye, W.; Pan, Y.; Lin, Y.; Li, H.; Zhao, J.; Li, W. Seeing, listening, remembering, and reasoning: A multimodal agent with long-term memory. arXiv 2025, arXiv:2508.09736. [Google Scholar] [CrossRef]

- Liu, X.; Qin, B.; Liang, D.; Dong, G.; Lai, H.; Zhang, H.; Zhao, H.; Iong, I.L.; Sun, J.; Wang, J.; et al. Autoglm: Autonomous foundation agents for guis. arXiv 2024, arXiv:2411.00820. [Google Scholar]

- Xu, Y.; Liu, X.; Liu, X.; Fu, J.; Zhang, H.; Jing, B.; Zhang, S.; Wang, Y.; Zhao, W.; Dong, Y. MobileRL: Online Agentic Reinforcement Learning for Mobile GUI Agents. arXiv 2025, arXiv:2509.18119. [Google Scholar]

- Zheng, Z.; Yang, M.; Hong, J.; Zhao, C.; Xu, G.; Yang, L.; Shen, C.; Yu, X. DeepEyes: Incentivizing" Thinking with Images" via Reinforcement Learning. arXiv 2025, arXiv:2505.14362. [Google Scholar] [CrossRef]

- Jiang, P.; Lin, J.; Cao, L.; Tian, R.; Kang, S.; Wang, Z.; Sun, J.; Han, J. Deepretrieval: Hacking real search engines and retrievers with large language models via reinforcement learning. arXiv 2025, arXiv:2503.00223. [Google Scholar]

- Chhikara, P.; Khant, D.; Aryan, S.; Singh, T.; Yadav, D. Mem0: Building production-ready ai agents with scalable long-term memory. arXiv 2025, arXiv:2504.19413. [Google Scholar]

- Jin, B.; Zeng, H.; Yue, Z.; Yoon, J.; Arik, S.; Wang, D.; Zamani, H.; Han, J. Search-r1: Training llms to reason and leverage search engines with reinforcement learning. arXiv 2025, arXiv:2503.09516. [Google Scholar] [CrossRef]

- Chen, M.; Sun, L.; Li, T.; Sun, H.; Zhou, Y.; Zhu, C.; Wang, H.; Pan, J.Z.; Zhang, W.; Chen, H.; et al. Learning to reason with search for LLMs via reinforcement learning. arXiv 2025, arXiv:2503.19470.

- Li, K.; Zhang, Z.; Yin, H.; Zhang, L.; Ou, L.; Wu, J.; Yin, W.; Li, B.; Tao, Z.; Wang, X.; et al. WebSailor: Navigating Super-human Reasoning for Web Agent. arXiv 2025, arXiv:2507.02592.

- Wei, Y.; Duchenne, O.; Copet, J.; Carbonneaux, Q.; Zhang, L.; Fried, D.; Synnaeve, G.; Singh, R.; Wang, S. SWE-RL: Advancing LLM Reasoning via Reinforcement Learning on Open Software Evolution. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Zhou, Y.; Jiang, S.; Tian, Y.; Weston, J.; Levine, S.; Sukhbaatar, S.; Li, X. SWEET-RL: Training multi-turn LLM agents on collaborative reasoning tasks. arXiv 2025, arXiv:2503.15478.

- Sun, W.; Lu, M.; Ling, Z.; Liu, K.; Yao, X.; Yang, Y.; Chen, J. Scaling Long-Horizon LLM Agent via Context-Folding. arXiv 2025, arXiv:2510.11967.

- Li, X.; Zou, H.; Liu, P. ToRL: Scaling tool-integrated RL. arXiv 2025, arXiv:2503.23383.

- Li, Z.; Hu, Y.; Wang, W. Encouraging good processes without the need for good answers: Reinforcement learning for LLM agent planning. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing: Industry Track, 2025; pp. 1654–1666.

- Goldie, A.; Mirhoseini, A.; Zhou, H.; Cai, I.; Manning, C.D. Synthetic data generation & multi-step RL for reasoning & tool use. arXiv 2025, arXiv:2504.04736.

- Singh, J.; Magazine, R.; Pandya, Y.; Nambi, A. Agentic reasoning and tool integration for LLMs via reinforcement learning. arXiv 2025, arXiv:2505.01441.

- Mai, X.; Xu, H.; Li, Z.Z.; Wang, W.; Hu, J.; Zhang, Y.; Zhang, W.; et al. Agent RL scaling law: Agent RL with spontaneous code execution for mathematical problem solving. arXiv 2025, arXiv:2505.07773.

- Xue, Z.; Zheng, L.; Liu, Q.; Li, Y.; Zheng, X.; Ma, Z.; An, B. SimpleTIR: End-to-End Reinforcement Learning for Multi-Turn Tool-Integrated Reasoning. In Proceedings of the NeurIPS 2025 Fourth Workshop on Deep Learning for Code, 2025.

- Dou, Z.; Zhao, Q.; Wan, Z.; Zhang, D.; Wang, W.; Raiyan, T.; Chen, B.; Pan, Q.; Ouyang, Y.; Gao, Z.; et al. Plan Then Action: High-Level Planning Guidance Reinforcement Learning for LLM Reasoning. arXiv 2025, arXiv:2510.01833.

- Li, Z.; Zhang, H.; Han, S.; Liu, S.; Xie, J.; Zhang, Y.; Choi, Y.; Zou, J.; Lu, P. In-the-Flow Agentic System Optimization for Effective Planning and Tool Use. In Proceedings of the NeurIPS 2025 Workshop on Efficient Reasoning, 2025.

- Mialon, G.; Fourrier, C.; Wolf, T.; LeCun, Y.; Scialom, T. GAIA: A benchmark for general AI assistants. In Proceedings of the Twelfth International Conference on Learning Representations, 2023.

- Phan, L.; Gatti, A.; Han, Z.; Li, N.; Hu, J.; Zhang, H.; Zhang, C.B.C.; Shaaban, M.; Ling, J.; Shi, S.; et al. Humanity’s last exam. arXiv 2025, arXiv:2501.14249.

- Yuan, Y.; Hao, J.; Ma, Y.; Dong, Z.; Liang, H.; Liu, J.; Feng, Z.; Zhao, K.; Zheng, Y. Uni-RLHF: Universal Platform and Benchmark Suite for Reinforcement Learning with Diverse Human Feedback. In Proceedings of the Twelfth International Conference on Learning Representations, 2024.

- Guo, Z.; Cheng, S.; Wang, H.; Liang, S.; Qin, Y.; Li, P.; Liu, Z.; Sun, M.; Liu, Y. StableToolBench: Towards Stable Large-Scale Benchmarking on Tool Learning of Large Language Models. In Proceedings of the ACL (Findings), 2024.

- Li, L.; Wang, Y.; Zhao, H.; Kong, S.; Teng, Y.; Li, C.; Wang, Y. Reflection-Bench: Evaluating Epistemic Agency in Large Language Models. In Proceedings of the Forty-second International Conference on Machine Learning, 2025.

- Wu, D.; Wang, H.; Yu, W.; Zhang, Y.; Chang, K.W.; Yu, D. LongMemEval: Benchmarking Chat Assistants on Long-Term Interactive Memory. In Proceedings of the Thirteenth International Conference on Learning Representations, 2025.

- Liu, X.; Yu, H.; Zhang, H.; Xu, Y.; Lei, X.; Lai, H.; Gu, Y.; Ding, H.; Men, K.; Yang, K.; et al. AgentBench: Evaluating LLMs as Agents. In Proceedings of the Twelfth International Conference on Learning Representations, 2024.

- Kazemi, M.; Fatemi, B.; Bansal, H.; Palowitch, J.; Anastasiou, C.; Mehta, S.V.; Jain, L.K.; Aglietti, V.; Jindal, D.; Chen, Y.P.; et al. BIG-Bench Extra Hard. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2025; pp. 26473–26501.

- Wu, C.K.; Tam, Z.R.; Lin, C.Y.; Chen, Y.N.V.; Lee, H.y. StreamBench: Towards benchmarking continuous improvement of language agents. Advances in Neural Information Processing Systems 2024, 37, 107039–107063.

- Xiao, R.; Ma, W.; Wang, K.; Wu, Y.; Zhao, J.; Wang, H.; Huang, F.; Li, Y. FlowBench: Revisiting and Benchmarking Workflow-Guided Planning for LLM-based Agents. In Findings of the Association for Computational Linguistics: EMNLP 2024, 2024; pp. 10883–10900.

- Chen, J.; Wei, Z.; Ren, Z.; Li, Z.; Zhang, J. LR²Bench: Evaluating Long-chain Reflective Reasoning Capabilities of Large Language Models via Constraint Satisfaction Problems. In Proceedings of the ACL (Findings), 2025; pp. 6006–6032.

- Geng, L.; Chang, E.Y. REALM-Bench: A real-world planning benchmark for LLMs and multi-agent systems. arXiv 2025, arXiv:2502.18836.

- Hu, Y.; Wang, Y.; McAuley, J. Evaluating memory in LLM agents via incremental multi-turn interactions. arXiv 2025, arXiv:2507.05257.

- Xia, Y.; Jin, P.; Xie, S.; He, L.; Cao, C.; Luo, R.; Liu, G.; Wang, Y.; Liu, Z.; Chen, Y.J.; et al. Nature Language Model: Deciphering the Language of Nature for Scientific Discovery. arXiv 2025, arXiv:2502.07527.

- He, Y.; Huang, G.; Feng, P.; Lin, Y.; Zhang, Y.; Li, H.; et al. PaSa: An LLM agent for comprehensive academic paper search. arXiv 2025, arXiv:2501.10120.

- Li, Y.; Chen, L.; Liu, A.; Yu, K.; Wen, L. ChatCite: LLM agent with human workflow guidance for comparative literature summary. In Proceedings of the 31st International Conference on Computational Linguistics, 2025; pp. 3613–3630.

- Schmidgall, S.; Su, Y.; Wang, Z.; Sun, X.; Wu, J.; Yu, X.; Liu, J.; Liu, Z.; Barsoum, E. Agent laboratory: Using LLM agents as research assistants. arXiv 2025, arXiv:2501.04227.

- Ghafarollahi, A.; Buehler, M.J. ProtAgents: protein discovery via large language model multi-agent collaborations combining physics and machine learning. Digital Discovery 2024, 3, 1389–1409.

- Ni, Y.; Zhu, L.; Li, S. Bio AI Agent: A Multi-Agent Artificial Intelligence System for Autonomous CAR-T Cell Therapy Development with Integrated Target Discovery, Toxicity Prediction, and Rational Molecular Design. arXiv 2025, arXiv:2511.08649.

- Zheng, Y.; Sun, S.; Qiu, L.; Ru, D.; Jiayang, C.; Li, X.; Lin, J.; Wang, B.; Luo, Y.; Pan, R.; et al. OpenResearcher: Unleashing AI for Accelerated Scientific Research. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing: System Demonstrations, 2024; pp. 209–218.

- Baek, J.; Jauhar, S.K.; Cucerzan, S.; Hwang, S.J. ResearchAgent: Iterative research idea generation over scientific literature with large language models. In Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers), 2025; pp. 6709–6738.

- Zhang, W.; Zhao, L.; Xia, H.; Sun, S.; Sun, J.; Qin, M.; Li, X.; Zhao, Y.; Zhao, Y.; Cai, X.; et al. A multimodal foundation agent for financial trading: Tool-augmented, diversified, and generalist. In Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2024; pp. 4314–4325.

- Yu, Y.; Yao, Z.; Li, H.; Deng, Z.; Jiang, Y.; Cao, Y.; Chen, Z.; Suchow, J.; Cui, Z.; Liu, R.; et al. FinCon: A synthesized LLM multi-agent system with conceptual verbal reinforcement for enhanced financial decision making. Advances in Neural Information Processing Systems 2024, 37, 137010–137045.

- Wang, X.; Li, B.; Song, Y.; Xu, F.F.; Tang, X.; Zhuge, M.; Pan, J.; Song, Y.; Li, B.; Singh, J.; et al. OpenHands: An open platform for AI software developers as generalist agents. arXiv 2024, arXiv:2407.16741.

- Yu, J.; Zhuang, Y.; Sun, Y.; Gao, W.; Liu, Q.; Cheng, M.; Huang, Z.; Chen, E. TestAgent: An Adaptive and Intelligent Expert for Human Assessment. arXiv 2025, arXiv:2506.03032.

- Gao, W.; Liu, Q.; Yue, L.; Yao, F.; Lv, R.; Zhang, Z.; Wang, H.; Huang, Z. Agent4Edu: Generating learner response data by generative agents for intelligent education systems. In Proceedings of the AAAI Conference on Artificial Intelligence, 2025; Vol. 39, pp. 23923–23932.

- Lv, R.; Liu, Q.; Gao, W.; Zhang, H.; Lu, J.; Zhu, L. GenAL: Generative Agent for Adaptive Learning. In Proceedings of the AAAI Conference on Artificial Intelligence, 2025; Vol. 39, pp. 577–585.

- Zhan, Y.; Liu, Q.; Gao, W.; Zhang, Z.; Wang, T.; Shen, S.; Lu, J.; Huang, Z. CoderAgent: simulating student behavior for personalized programming learning with large language models. In Proceedings of the Thirty-Fourth International Joint Conference on Artificial Intelligence (IJCAI ’25), 2025.

- Yu, S.; Cheng, M.; Liu, Q.; Wang, D.; Yang, J.; Ouyang, J.; Luo, Y.; Lei, C.; Chen, E. Multi-source knowledge pruning for retrieval-augmented generation: A benchmark and empirical study. In Proceedings of the 34th ACM International Conference on Information and Knowledge Management, 2025; pp. 3931–3941.

- Ye, J.; Du, Z.; Yao, X.; Lin, W.; Xu, Y.; Chen, Z.; Wang, Z.; Zhu, S.; Xi, Z.; Yuan, S.; et al. ToolHop: A Query-Driven Benchmark for Evaluating Large Language Models in Multi-Hop Tool Use. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics, 2025; Volume 1, pp. 2995–3021.

- Liu, Z.; Chen, B.; Cheng, M.; Chen, E.; Li, L.; Lei, C.; Ou, W.; Li, H.; Gai, K. Towards Context-aware Reasoning-enhanced Generative Searching in E-commerce. arXiv 2025, arXiv:2510.16925.

- Cheng, M.; Wang, J.; Wang, D.; Tao, X.; Liu, Q.; Chen, E. Can slow-thinking LLMs reason over time? Empirical studies in time series forecasting. arXiv 2025, arXiv:2505.24511.

- Jiang, C.; Cheng, M.; Tao, X.; Mao, Q.; Ouyang, J.; Liu, Q. TableMind: An Autonomous Programmatic Agent for Tool-Augmented Table Reasoning. arXiv 2025, arXiv:2509.06278.

- Li, Q.; Cheng, M.; Liu, Z.; Wang, D.; Zeng, Y.; Liu, T. From Hypothesis to Premises: LLM-based Backward Logical Reasoning with Selective Symbolic Translation. arXiv 2025, arXiv:2512.03360.

- Zitkovich, B.; Yu, T.; Xu, S.; Xu, P.; Xiao, T.; Xia, F.; Wu, J.; Wohlhart, P.; Welker, S.; Wahid, A.; et al. RT-2: Vision-language-action models transfer web knowledge to robotic control. In Proceedings of the Conference on Robot Learning. PMLR, 2023; pp. 2165–2183.

- Zhang, X.; Gao, T.; Cheng, M.; Pan, B.; Guo, Z.; Liu, Y.; Tao, X. AlphaCast: A Human Wisdom-LLM Intelligence Co-Reasoning Framework for Interactive Time Series Forecasting. arXiv 2025, arXiv:2511.08947.

- Wang, L.; Xu, W.; Lan, Y.; Hu, Z.; Lan, Y.; Lee, R.K.W.; Lim, E.P. Plan-and-Solve Prompting: Improving Zero-Shot Chain-of-Thought Reasoning by Large Language Models. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2023; pp. 2609–2634.

- Lin, B.Y.; Fu, Y.; Yang, K.; Brahman, F.; Huang, S.; Bhagavatula, C.; Ammanabrolu, P.; Choi, Y.; Ren, X. SwiftSage: A generative agent with fast and slow thinking for complex interactive tasks. Advances in Neural Information Processing Systems 2023, 36, 23813–23825.

- Gao, J.; Juluri, S. From Idea to Co-Creation: A Planner-Actor-Critic Framework for Agent Augmented 3D Modeling. arXiv 2026, arXiv:2601.05016.

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; Yih, W.t.; Rocktäschel, T.; et al. Retrieval-augmented generation for knowledge-intensive NLP tasks. Advances in Neural Information Processing Systems 2020, 33, 9459–9474.

- Cheng, M.; Wang, D.; Liu, Q.; Yu, S.; Tao, X.; Wang, Y.; Chu, C.; Duan, Y.; Long, M.; Chen, E. Mind2Report: A Cognitive Deep Research Agent for Expert-Level Commercial Report Synthesis. arXiv 2026, arXiv:2601.04879.

- Song, C.H.; Wu, J.; Washington, C.; Sadler, B.M.; Chao, W.L.; Su, Y. LLM-Planner: Few-shot grounded planning for embodied agents with large language models. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023; pp. 2998–3009.

- Karimova, S.; Dadashova, U. The model context protocol: a standardization analysis for application integration. Journal of Computer Science and Digital Technologies 2025, 1, 50–59.

- Chen, B.; Shu, C.; Shareghi, E.; Collier, N.; Narasimhan, K.; Yao, S. FireAct: Toward Language Agent Fine-tuning. CoRR 2023, abs/2310.05915.

- Madaan, A.; Tandon, N.; Gupta, P.; Hallinan, S.; Gao, L.; Wiegreffe, S.; Alon, U.; Dziri, N.; Prabhumoye, S.; Yang, Y.; et al. Self-refine: Iterative refinement with self-feedback. Advances in Neural Information Processing Systems 2023, 36, 46534–46594.

- Gou, Z.; Shao, Z.; Gong, Y.; Yang, Y.; Duan, N.; Chen, W.; et al. CRITIC: Large Language Models Can Self-Correct with Tool-Interactive Critiquing. In Proceedings of the Twelfth International Conference on Learning Representations, 2024.

- Zhou, D.; Schärli, N.; Hou, L.; Wei, J.; Scales, N.; Wang, X.; Schuurmans, D.; Cui, C.; Bousquet, O.; Le, Q.V.; et al. Least-to-Most Prompting Enables Complex Reasoning in Large Language Models. In Proceedings of the Eleventh International Conference on Learning Representations, 2023.

- Lin, S.; Hilton, J.; Evans, O. Teaching Models to Express Their Uncertainty in Words. Transactions on Machine Learning Research, 2022.

- Pan, T.; Ouyang, J.; Cheng, M.; Li, Q.; Liu, Z.; Pan, M.; Yu, S.; Liu, Q. PaperScout: An Autonomous Agent for Academic Paper Search with Process-Aware Sequence-Level Policy Optimization. arXiv 2026, arXiv:2601.10029.

- Ye, R.; Zhang, Z.; Li, K.; Yin, H.; Tao, Z.; Zhao, Y.; Su, L.; Zhang, L.; Qiao, Z.; Wang, X.; et al. AgentFold: Long-Horizon Web Agents with Proactive Context Management. arXiv 2025, arXiv:2510.24699.

- Lai, H.; Liu, X.; Zhao, Y.; Xu, H.; Zhang, H.; Jing, B.; Ren, Y.; Yao, S.; Dong, Y.; Tang, J. ComputerRL: Scaling end-to-end online reinforcement learning for computer use agents. arXiv 2025, arXiv:2508.14040.

- Wang, H.; Zou, H.; Song, H.; Feng, J.; Fang, J.; Lu, J.; Liu, L.; Luo, Q.; Liang, S.; Huang, S.; et al. UI-TARS-2 technical report: Advancing GUI agent with multi-turn reinforcement learning. arXiv 2025, arXiv:2509.02544.

- Hong, J.; Zhao, C.; Zhu, C.; Lu, W.; Xu, G.; Yu, X. DeepEyesV2: Toward Agentic Multimodal Model. arXiv 2025, arXiv:2511.05271.

- Ouyang, J.; Yan, R.; Luo, Y.; Cheng, M.; Liu, Q.; Liu, Z.; Yu, S.; Wang, D. Training powerful LLM agents with end-to-end reinforcement learning. 2025.

- Song, H.; Jiang, J.; Min, Y.; Chen, J.; Chen, Z.; Zhao, W.X.; Fang, L.; Wen, J.R. R1-Searcher: Incentivizing the search capability in LLMs via reinforcement learning. arXiv 2025, arXiv:2503.05592.

- Team, T.D.; Li, B.; Zhang, B.; Zhang, D.; Huang, F.; Li, G.; Chen, G.; Yin, H.; Wu, J.; Zhou, J.; et al. Tongyi DeepResearch Technical Report. arXiv 2025, arXiv:2510.24701.

- Zhang, H.; Cheng, M.; Luo, Y.; Tao, X. STaR: Towards Cognitive Table Reasoning via Slow-Thinking Large Language Models. arXiv 2025, arXiv:2511.11233.

- Paglieri, D.; Cupiał, B.; Cook, J.; Piterbarg, U.; Tuyls, J.; Grefenstette, E.; Foerster, J.N.; Parker-Holder, J.; Rocktäschel, T. Learning When to Plan: Efficiently Allocating Test-Time Compute for LLM Agents. arXiv 2025, arXiv:2509.03581.

- Luo, Y.; Zhou, Y.; Cheng, M.; Wang, J.; Wang, D.; Pan, T.; Zhang, J. Time Series Forecasting as Reasoning: A Slow-Thinking Approach with Reinforced LLMs. arXiv 2025, arXiv:2506.10630.

- Luo, X.; Zhang, Y.; He, Z.; Wang, Z.; Zhao, S.; Li, D.; Qiu, L.K.; Yang, Y. Agent Lightning: Train any AI agents with reinforcement learning. arXiv 2025, arXiv:2508.03680.

- Wang, D.; Ouyang, J.; Yu, S.; Cheng, M.; Liu, Q. Claw-R1: Agentic RL for Modern Agents; GitHub repository, 2025; Available online: https://github.com/AgentR1/Claw-R1.

- Ouyang, J.; Pan, T.; Cheng, M.; Yan, R.; Luo, Y.; Lin, J.; Liu, Q. HoH: A dynamic benchmark for evaluating the impact of outdated information on retrieval-augmented generation. arXiv 2025, arXiv:2503.04800.

- Wang, D.; Cheng, M.; Yu, S.; Liu, Z.; Guo, Z.; Liu, Q. PaperArena: An evaluation benchmark for tool-augmented agentic reasoning on scientific literature. arXiv 2025, arXiv:2510.10909.

- Gottweis, J.; Weng, W.H.; Daryin, A.; Tu, T.; Palepu, A.; Sirkovic, P.; Myaskovsky, A.; Weissenberger, F.; Rong, K.; Tanno, R.; et al. Towards an AI co-scientist. arXiv 2025, arXiv:2502.18864.

- Manus. Introducing Manus 1.6: Max Performance, Mobile Dev, and Design View. 2025. Available online: https://manus.im/blog/manus-max-release (accessed on 2026-02-06).

- Yang, J.; Jimenez, C.E.; Wettig, A.; Lieret, K.; Yao, S.; Narasimhan, K.; Press, O. SWE-agent: Agent-computer interfaces enable automated software engineering. Advances in Neural Information Processing Systems 2024, 37, 50528–50652.

- Zhang, C.; Yang, Z.; Liu, J.; Li, Y.; Han, Y.; Chen, X.; Huang, Z.; Fu, B.; Yu, G. AppAgent: Multimodal agents as smartphone users. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, 2025; pp. 1–20.

- Zhang, C.; Li, L.; He, S.; Zhang, X.; Qiao, B.; Qin, S.; Ma, M.; Kang, Y.; Lin, Q.; Rajmohan, S.; et al. UFO: A UI-focused agent for Windows OS interaction. In Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers), 2025; pp. 597–622.

Short Biography of Authors

Mingyue Cheng received his PhD degree in Data Science from the University of Science and Technology of China (USTC). He is currently an Associate Researcher at USTC, affiliated with the State Key Laboratory of Cognitive Intelligence and the School of Computer Science and Technology. His research interests encompass time series and sequence modeling, biomedical big data, and recommender systems. Dr. Cheng has contributed several papers to renowned conference proceedings and journals, including KDD, WWW, SIGIR, WSDM, ICDM, and IEEE Transactions on Knowledge and Data Engineering (TKDE). He has also served on the program committees of top-tier conferences and as a reviewer for international journals.

Daoyu Wang received the B.E. degree in computer science from the University of Science and Technology of China (USTC), Hefei, China, where he is currently pursuing the master’s degree with the School of Computer Science and Technology and the State Key Laboratory of Cognitive Intelligence. His research interests include retrieval-augmented generation and LLM agents. He has authored or co-authored several papers in premier conferences such as ICML and WWW.

Shuo Yu received the B.E. degree in computer science from the University of Science and Technology of China (USTC), Hefei, China, where he is currently pursuing the master’s degree with the School of Artificial Intelligence and Data Science and the State Key Laboratory of Cognitive Intelligence. His research interests include retrieval-augmented generation and LLM agents. He has authored or co-authored several papers in premier conferences such as CIKM and WWW.

Qingchuan Li received the B.E. degree in computer science from the University of Science and Technology of China (USTC), Hefei, China, where he is currently pursuing the master’s degree with the School of Computer Science and Technology and the State Key Laboratory of Cognitive Intelligence. His research interests include LLM logical reasoning and LLM agents. He has authored or co-authored several papers in premier conferences such as AAAI and WWW.

Jie Ouyang received the B.E. degree in computer science from the University of Science and Technology of China (USTC), Hefei, China, where he is currently pursuing the master’s degree with the School of Computer Science and Technology and the State Key Laboratory of Cognitive Intelligence. His research interests include retrieval-augmented generation and reinforcement learning. He has authored or co-authored several papers in premier conferences such as ACL and KDD.

Yucong Luo received his bachelor’s degree in Data Science and Big Data Technology from the University of Science and Technology of China (USTC), Hefei, China. He is currently pursuing a master’s degree at the School of Artificial Intelligence and Data Science, USTC, and the State Key Laboratory of Cognitive Intelligence. His research interests include large language model agents (LLM Agents) and LLM-enhanced recommendation systems (LLM4Rec). He has authored or co-authored several papers published in top-tier international conferences, including AAAI, ICML, WWW, NeurIPS, and ACL.

Yiju Zhang received the B.E. degree from Zhengzhou University. He is currently pursuing the master’s degree with the School of Artificial Intelligence and Data Science, University of Science and Technology of China (USTC), and the State Key Laboratory of Cognitive Intelligence. His research interests include LLM agents and agentic RL.

Qi Liu (Member, IEEE) received the Ph.D. degree in computer science from the University of Science and Technology of China (USTC), Hefei, China, in 2013. He is currently a Professor with the School of Computer Science and Technology. He has published prolifically in refereed journals and conference proceedings, including IEEE Transactions on Knowledge and Data Engineering, ACM Transactions on Information Systems, and the KDD conference. His research interests include data mining and intelligent education. Dr. Liu is an Associate Editor for IEEE Transactions on Big Data and Neurocomputing. He was the recipient of the KDD’18 Best Student Paper Award, the ICDM’11 Best Research Paper Award, and the China Outstanding Youth Science Foundation in 2019. He is an Alibaba DAMO Academy Young Fellow.

Enhong Chen (Fellow, IEEE) received the PhD degree from the University of Science and Technology of China (USTC) in 1996. He is currently a professor at USTC. His research areas include data mining and knowledge discovery, machine learning, and artificial intelligence. His research is supported by the National Science Foundation for Distinguished Young Scholars of China. He has published more than 200 papers in refereed conferences and journals, including IEEE Transactions on Knowledge and Data Engineering, ACM Transactions on Information Systems, IEEE Transactions on Pattern Analysis and Machine Intelligence, IEEE Transactions on Neural Networks and Learning Systems, ICML, NeurIPS, KDD, ICLR, and AAAI. He is an associate editor of IEEE Transactions on Knowledge and Data Engineering, IEEE Transactions on Systems, Man, and Cybernetics, ACM Transactions on Intelligent Systems and Technology, and World Wide Web Journal. He has served regularly on the organization and program committees of numerous conferences, including as a program co-chair of ICKG-2020 and PAKDD-2022. He received the Best Application Paper Award at KDD 2008, the Best Student Paper Award at KDD 2018 (Research), and the Best Research Paper Award at ICDM 2011.

| Key Focus | Representative Work | Year | Categories | Perspective on Contextuality |
|---|---|---|---|---|
| Comprehensive Architecture | Xi et al. [12] | 2023 | General Arch. | Viewed primarily as static prompt/input |
| Module Construction | Wang et al. [18] | 2023 | General Arch. | Viewed primarily as static prompt/input |
| Multi-Agent Collaboration | Tran et al. [10] | 2025 | General Arch. | Limited to conversational history |
| MAS Progress | Chawla et al. [19] | 2024 | General Arch. | Limited to conversational history |
| Long-term Optimization | Zhang et al. [8] | 2025 | Agentic Learning | Focus on scalar rewards over context |
| Self-Evolution | Gao et al. [9] | 2025 | Agentic Learning | Context treated as training data |
| Planning | Huang et al. [7] | 2024 | Memory Systems | Focus on storage rather than interaction |
| Retrieval Augmentation | Zhu et al. [20] | 2023 | Memory Systems | Focus on storage rather than interaction |
| Evaluation | Hassan et al. [11] | 2025 | Domain Apps. | Identifies context loss in long turns |
| Scientific Discovery | Ren et al. [4] | 2025 | Domain Apps. | Context as domain-specific knowledge |
| Contextual Cognition | This Work | 2025 | Unified Framework | Contextuality as the core |

| Agent Paradigm | Core Problem | Applications |
|---|---|---|
| Prompt Engineering | Prompt Optimization | ChatGPT, Claude |
| Context Engineering | Context Augmentation | Perplexity, Copilot |
| Harness Engineering | Contextual Cognition | OpenClaw, Claude Code |

| Dimension | RL | LLM Agents |
|---|---|---|
| Formulation | Policy Optimization | Search Optimization |
| State | Fully Markovian () | Partially Observable () |
| Action | Environmental Primitives | Contextual Interaction |
| Policy | Learned Weights () | Prior () with Search |
| Constraint | Training Efficiency | Inference Budget (B) |

| Category | Benchmark | Task Type | Key Focus |
|---|---|---|---|
| Contextual Encoding | BBEH [154] | Hard reasoning set | Stress-testing world-model consistency. |
| Contextual Encoding | LongMemEval [152] | Long-term interactive dialogue | Long-term memory stability and drift resistance. |
| Contextual Encoding | MemoryAgentBench [159] | Incremental multi-turn tasks | Accurate recall and cross-turn integration. |
| Contextual Encoding | StreamBench [155] | Streaming input–feedback tasks | Continuous improvement via streaming feedback. |
| Contextual Interaction | GAIA [147] | Real-world multi-step tasks | Open-world tasks with intention following and tool use. |
| Contextual Interaction | HLE [148] | Human-level exams | Broad situational cognition in human-level tasks. |
| Contextual Interaction | Uni-RLHF [149] | Feedback-driven adaptation | Adapting behavior based on user feedback. |
| Contextual Interaction | ToolLLM [98] | API/function calling | Reliable API calling and tool invocation. |
| Contextual Interaction | StableToolBench [150] | Virtual API environment | Stable, reproducible large-scale tool use. |
| Contextual Interaction | AgentBench [153] | Multi-environment agent tasks | Acting across diverse interactive environments. |
| Contextual Reasoning | REALM-Bench [158] | Real-world planning | Dynamic planning and replanning. |
| Contextual Reasoning | FlowBench [156] | Workflow-guided planning | Workflow-guided multi-step reasoning. |
| Contextual Reasoning | Reflection-Bench [151] | Cognitive psychology tasks | Epistemic reasoning and belief updates. |
| Contextual Reasoning | LR²Bench [157] | Consistency-based reasoning | Long-chain reflective reasoning. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).