Submitted: 13 April 2026

Posted: 14 April 2026

Abstract

Keywords:

1. Introduction

- 1.

- We propose a model-based stochastic augmented Lagrangian method (MSALM) for online stochastic optimization. In each round, we construct model functions to approximate the stochastic objective and constraint functions, which are sampled from time-varying distributions. This construction reduces computational complexity. The step size is designed in a dynamic form and decreases as t increases to accelerate convergence.

- 2.

- We adopt dynamic regret and constraint violation as our performance metrics. These measures are particularly suited for online stochastic optimization with time-varying distributions. Under the assumptions, we prove that the algorithm’s regret and constraint violation have sublinear bounds in terms of the total number of rounds T.

- 3.

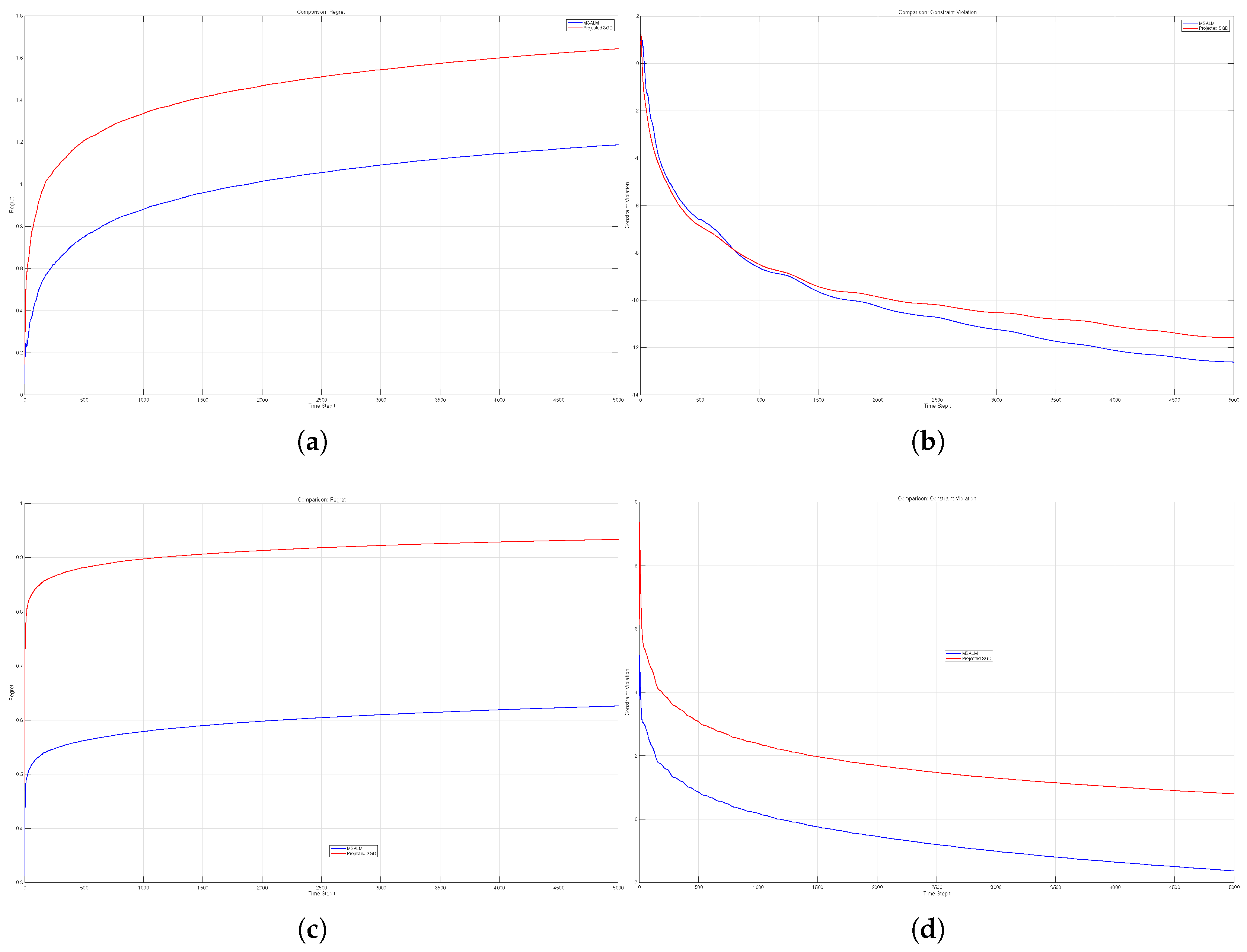

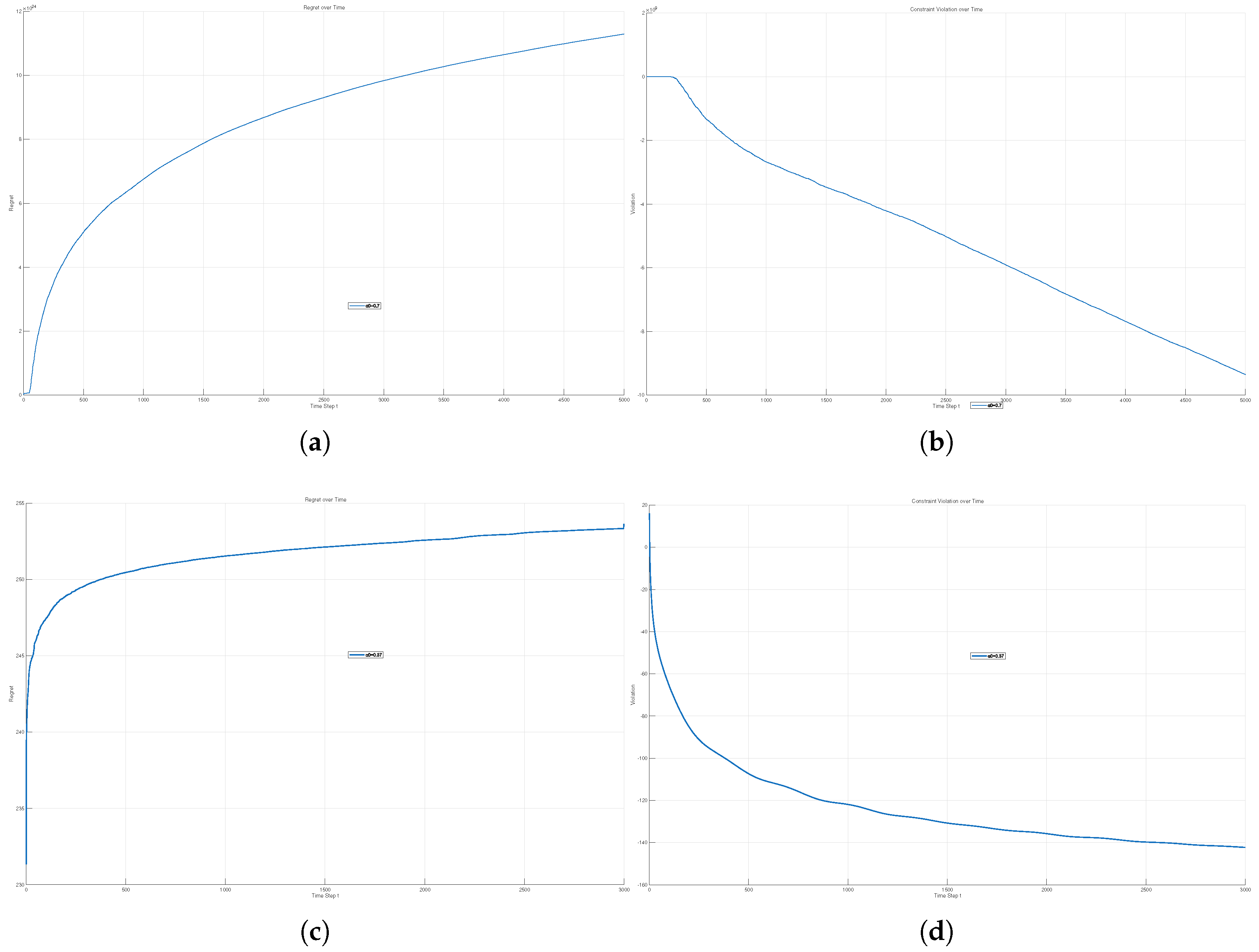

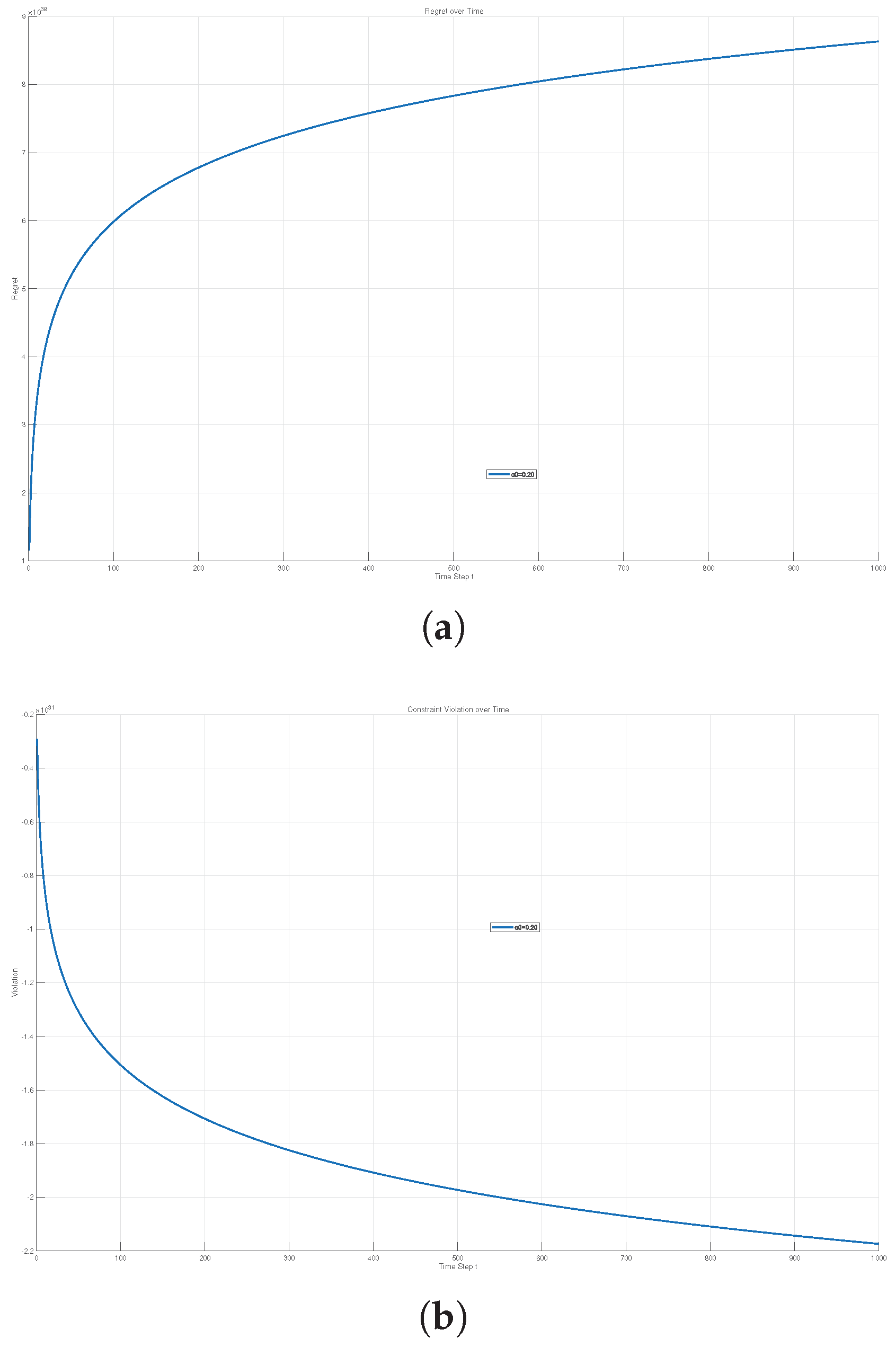

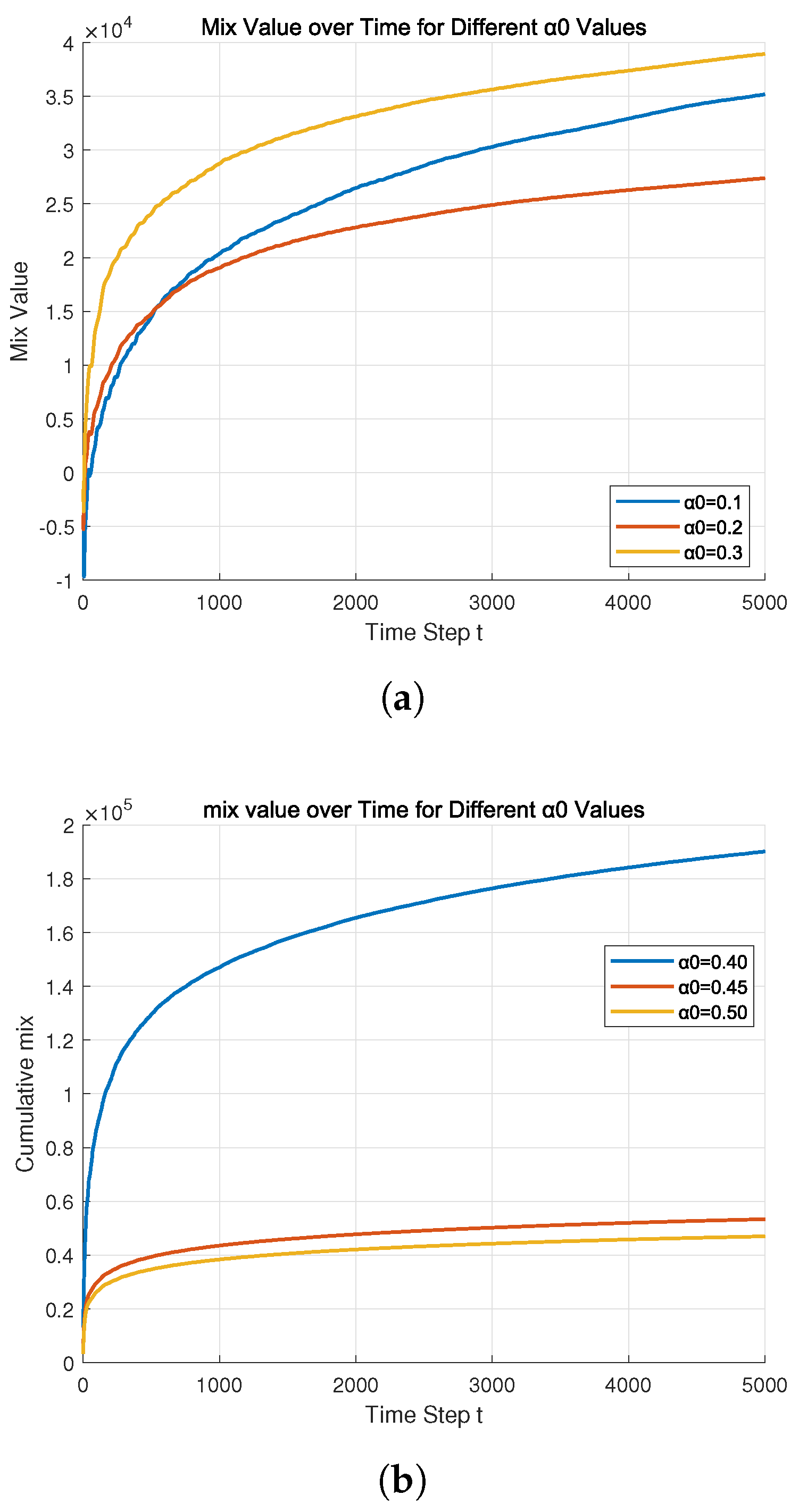

- We demonstrate the practical efficacy of our proposed algorithm through a series of simulation experiments. In the contexts of adaptive filtering and online logistic regression, we compare our method with PSGD. The results show that MSALM attains lower regret and constraint violation bounds than PSGD, indicating that MSALM converges more rapidly toward the theoretical optimum while maintaining stricter adherence to constraints. In addition, results from the time-varying smart grid energy dispatch, online network resource allocation, and path planning problems collectively confirm that regret and constraint violation have bounds .
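The two metrics named in the second contribution can be made concrete with a small sketch. The definitions below are common forms from the online optimization literature and are assumptions here, since this section does not state the paper's exact formulas: dynamic regret is taken as the cumulative gap between the incurred loss and the per-round optimal loss, and constraint violation as the Euclidean norm of the positive part of the summed constraint values. The function names are hypothetical.

```python
import math

def dynamic_regret(losses, optimal_losses):
    """Sum over rounds of (incurred loss - loss of that round's own minimizer).

    Assumed definition; the comparator changes every round, which is what
    distinguishes dynamic regret from static regret.
    """
    return sum(l - l_star for l, l_star in zip(losses, optimal_losses))

def constraint_violation(constraint_values):
    """Norm of the positive part of the summed constraint values.

    constraint_values[t][i] holds the value of constraint i at the round-t
    decision (assumed convention: feasible means <= 0).
    """
    totals = [sum(column) for column in zip(*constraint_values)]
    return math.sqrt(sum(max(s, 0.0) ** 2 for s in totals))

# Toy data: three rounds of losses, two rounds of two constraints.
regret = dynamic_regret([1.0, 0.5, 0.25], [0.0, 0.0, 0.0])
violation = constraint_violation([[0.5, -1.0], [0.5, -1.0]])
print(regret, violation)
```

Under these definitions, a sublinear bound in T means that both quantities divided by T tend to zero as the number of rounds grows.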

2. MSALM for Online Stochastic Optimization

2.1. The Online Stochastic Optimization Problem

2.2. MSALM Algorithm

- Linearized model:

- Quadratic model:

- Truncated model:

- Plain model:
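The formulas for the four models are not reproduced in this section. As a rough illustration only, the sketch below uses the definitions that are standard in the model-based stochastic optimization literature, which may differ from the ones the paper adopts. All function names are hypothetical, the quadratic model uses a scalar curvature (a simplification of a general curvature matrix), and the truncated model assumes a known lower bound on the function.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def linearized_model(f, grad, x):
    """First-order model: f(x) + <g, y - x> with g a (sub)gradient at x."""
    fx, gx = f(x), grad(x)
    return lambda y: fx + dot(gx, [yi - xi for yi, xi in zip(y, x)])

def quadratic_model(f, grad, x, curvature):
    """Linearized model plus (curvature / 2) * ||y - x||^2 (assumed scalar curvature)."""
    lin = linearized_model(f, grad, x)
    def model(y):
        d = [yi - xi for yi, xi in zip(y, x)]
        return lin(y) + 0.5 * curvature * dot(d, d)
    return model

def truncated_model(f, grad, x, lower_bound=0.0):
    """Linearized model clipped from below at a known lower bound of f."""
    lin = linearized_model(f, grad, x)
    return lambda y: max(lin(y), lower_bound)

def plain_model(f):
    """The function itself, used without approximation."""
    return f

# Example with f(x) = 0.5 * ||x||^2, whose gradient is x.
f = lambda x: 0.5 * dot(x, x)
grad = lambda x: list(x)
x, y = [1.0, 2.0], [0.0, 0.0]
print(linearized_model(f, grad, x)(y))      # -2.5
print(quadratic_model(f, grad, x, 1.0)(y))  # 0.0
print(truncated_model(f, grad, x)(y))       # 0.0
print(plain_model(f)(y))                    # 0.0
```

In this example the linearized model falls below the true minimum value of 0, while the truncated and quadratic models do not, which is the usual motivation for preferring them when a lower bound or curvature estimate is available.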

Algorithm 1 MSALM

3. Convergence Analysis

4. Numerical Experiments

4.1. Comparative Experiment with the Existing Algorithm

4.2. Experiments Under Existing Models

4.3. Experiment Combining Our Algorithm with Supervised Learning

| Number of Control Points | 3D RMSE (m) | Mean Error (m) | Max Error (m) |
|---|---|---|---|
| 6 | 16212.93 | 14630.05 | 42953.94 |
| 7 | 15278.20 | 13697.46 | 28088.96 |
| 8 | 10301.73 | 9577.57 | 20571.04 |
| 9 | 7767.29 | 7094.96 | 15264.24 |
| 10 | 6056.13 | 5466.36 | 12094.13 |
| 11 | 6474.88 | 5956.19 | 12599.98 |
| 12 | 6416.03 | 5793.27 | 12159.70 |
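The three error columns above are not defined in this section. A minimal sketch of how such summaries are typically computed, assuming each error is the 3D Euclidean distance between an estimated point and its reference point, is given below. The function name and the toy points are hypothetical and are not taken from the experiment.

```python
import math

def error_summary(estimated, reference):
    """Return 3D RMSE, mean error, and max error over paired 3D points.

    Assumes each per-point error is the Euclidean distance between the
    estimated point and the corresponding reference point.
    """
    dists = [math.dist(p, q) for p, q in zip(estimated, reference)]
    n = len(dists)
    return {
        "rmse_3d": math.sqrt(sum(d * d for d in dists) / n),
        "mean_error": sum(dists) / n,
        "max_error": max(dists),
    }

# Toy points only: distances are 5 and 0.
summary = error_summary([(3.0, 4.0, 0.0), (0.0, 0.0, 0.0)],
                        [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)])
print(summary)
```

With these definitions the mean error can never exceed the RMSE, and the RMSE can never exceed the max error. Every row of the table satisfies that ordering.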

| Control Point | X (m) | Y (m) | Z (m) |
|---|---|---|---|
| 1 | 478288.923 | 4423806.307 | 1199.714 |
| 2 | 483007.653 | 4412762.620 | 1939.867 |
| 3 | 491176.780 | 4411013.545 | 1022.683 |
| 4 | 540450.109 | 4386975.935 | 6298.608 |
| 5 | 613313.197 | 4283290.653 | 6170.731 |
| 6 | 753322.541 | 4268797.429 | 6188.807 |
| 7 | 792318.111 | 4171779.364 | 6157.780 |
| 8 | 773451.251 | 4061749.319 | 3778.580 |
| 9 | 782481.198 | 4033694.042 | 2106.506 |
| 10 | 782988.940 | 4014086.869 | 1142.003 |

5. Conclusions

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).