Figure 1.

Overall architecture of WDA-TNET. The pipeline consists of four stages: (1) WGSR preprocessing for selective motion-blur restoration, (2) a GSCIM-based backbone for glare-suppressed feature extraction, (3) a DCPAF neck for direction-aware multi-scale fusion, and (4) an ATSS detection head trained with the MPDIoU loss for stable supervision on slender, densely packed targets.

Figure 2.

Three-stage pipeline of WGSR. The frequency-domain branch computes a wavelet-energy degradation mask; the spatial reconstruction branch recovers high-frequency textures with a lightweight three-layer CNN; and the gated fusion stage injects the reconstructed residual only into degraded regions.
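
The gated fusion stage can be read as a per-pixel blend. The sketch below is a minimal illustration, not the WGSR implementation: it assumes a mask M that is near 1 on clear pixels and near 0 on degraded ones (the convention shown in Figure 5), treats the reconstruction branch output as an additive residual R, and applies the assumed rule X_out = X + (1 − M) ⊙ R through a hypothetical `gated_fusion` helper.

```python
from typing import List

Image = List[List[float]]


def gated_fusion(x: Image, residual: Image, mask: Image) -> Image:
    """Inject the residual only where the mask flags degradation.

    Assumed convention (hypothetical): mask ~1 for clear pixels, ~0 for
    degraded pixels, so (1 - mask) acts as the injection gate.
    """
    out: Image = []
    for x_row, r_row, m_row in zip(x, residual, mask):
        out.append([xv + (1.0 - mv) * rv for xv, rv, mv in zip(x_row, r_row, m_row)])
    return out


if __name__ == "__main__":
    x = [[0.5, 0.5], [0.5, 0.5]]
    residual = [[0.2, 0.2], [0.2, 0.2]]
    mask = [[1.0, 0.0], [0.5, 1.0]]  # clear, degraded, transition, clear
    print(gated_fusion(x, residual, mask))
```

Clear pixels pass through untouched, which is how specular highlights can survive restoration unchanged.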

Figure 3.

Wavelet sub-band decomposition and degradation mask generation. From left to right: input image; low-frequency approximation; horizontal, vertical, and diagonal high-frequency components; aggregated energy map; and the final degradation mask M after normalization and Sigmoid gating.
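
The mask-generation steps can be sketched in plain Python. This is a minimal sketch under stated assumptions: a single-level orthonormal Haar transform, an energy map equal to the sum of squared horizontal, vertical, and diagonal detail coefficients, z-score normalization, and a gate M = σ(k·z). With this sign convention high-energy (sharp) cells get M near 1 and low-energy (blurred) cells near 0. The wavelet family, normalization, and any offset used in WGSR are not given in the caption, and `haar_dwt2` and `degradation_mask` are hypothetical names.

```python
import math
from typing import List, Tuple

Image = List[List[float]]


def haar_dwt2(img: Image) -> Tuple[Image, Image, Image, Image]:
    """Single-level orthonormal 2D Haar transform (height and width must be even)."""
    ll: Image = []
    lh: Image = []
    hl: Image = []
    hh: Image = []
    for i in range(0, len(img), 2):
        r_ll, r_lh, r_hl, r_hh = [], [], [], []
        for j in range(0, len(img[0]), 2):
            a, b = img[i][j], img[i][j + 1]
            c, d = img[i + 1][j], img[i + 1][j + 1]
            r_ll.append((a + b + c + d) / 2)  # low-frequency approximation
            r_lh.append((a + b - c - d) / 2)  # row difference: horizontal edges
            r_hl.append((a - b + c - d) / 2)  # column difference: vertical edges
            r_hh.append((a - b - c + d) / 2)  # diagonal detail
        ll.append(r_ll)
        lh.append(r_lh)
        hl.append(r_hl)
        hh.append(r_hh)
    return ll, lh, hl, hh


def degradation_mask(img: Image, k: float) -> Image:
    """Detail-band energy -> z-score -> sigmoid gate, at half resolution."""
    _, lh, hl, hh = haar_dwt2(img)
    energy = [
        [lh[i][j] ** 2 + hl[i][j] ** 2 + hh[i][j] ** 2 for j in range(len(lh[0]))]
        for i in range(len(lh))
    ]
    flat = [e for row in energy for e in row]
    mean = sum(flat) / len(flat)
    std = math.sqrt(sum((e - mean) ** 2 for e in flat) / len(flat)) or 1.0
    # Sharp cells -> M near 1 (clear); blurred cells -> M near 0 (degraded).
    return [[1.0 / (1.0 + math.exp(-k * (e - mean) / std)) for e in row] for row in energy]


if __name__ == "__main__":
    # Left half: sharp checkerboard; right half: flat (as if fully blurred).
    img = [[0, 1, 0.5, 0.5], [1, 0, 0.5, 0.5], [0, 1, 0.5, 0.5], [1, 0, 0.5, 0.5]]
    print(degradation_mask(img, k=4.0))  # k=4.0 is illustrative only
```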

Figure 4.

Degradation masks generated under different values of the gating steepness k. A small k (left) includes too many low-contrast regions; a large k (right) over-clips the transition zone. At the intermediate setting (middle), the mask covers approximately one standard deviation below the energy mean, which matches the measured blur-kernel-to-edge-width ratio in the CTT dataset.
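
The effect of k can be quantified if the gate is assumed to be a logistic function of the z-scored wavelet energy, M = σ(k·z). Under that assumption M rises from 0.1 to 0.9 as z goes from −ln 9 / k to +ln 9 / k, so the transition band is 2 ln 9 / k standard deviations wide: a small k leaves many low-contrast cells at intermediate mask values, while a large k collapses the band toward a hard threshold. The helper names are hypothetical and the k values below are illustrative, not the setting used for this figure.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def logit(p: float) -> float:
    return math.log(p / (1.0 - p))


def transition_width(k: float, lo: float = 0.1, hi: float = 0.9) -> float:
    """Width, in energy standard deviations, of the z-range where lo < sigmoid(k*z) < hi.

    Equals 2*ln(9)/k for the default 10%-90% band.
    """
    return (logit(hi) - logit(lo)) / k


if __name__ == "__main__":
    for k in (1.0, 4.0, 16.0):  # illustrative values only
        print(f"k={k:5.1f}  band={transition_width(k):.3f} std  M(z=-1)={sigmoid(-k):.3f}")
```

The `M(z=-1)` column shows how strongly a cell one standard deviation below the mean is flagged as degraded for each k.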

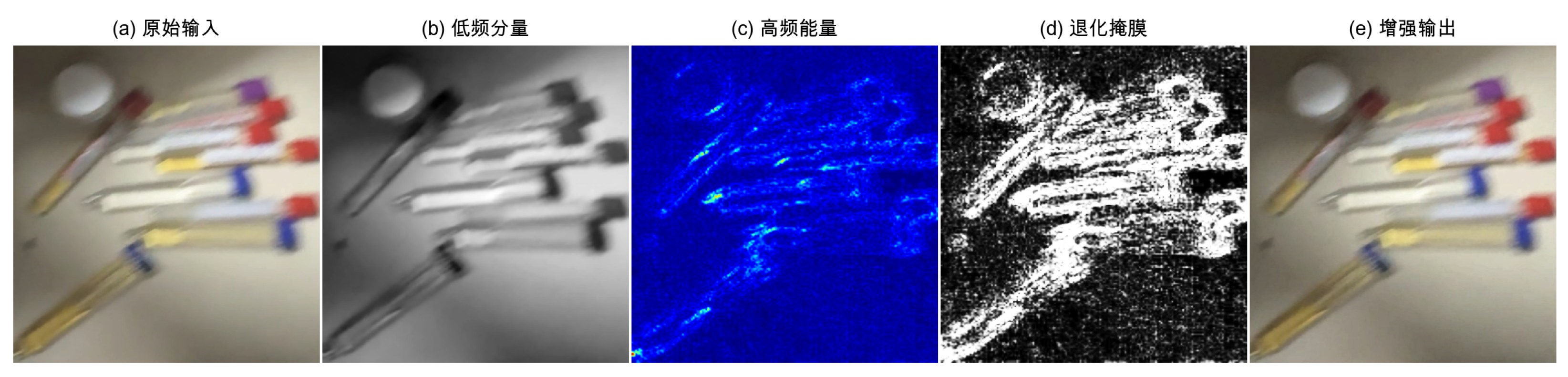

Figure 5.

Visualization of the three-stage collaborative processing in WGSR. From left to right: original blurred input; degradation mask M (bright = clear, dark = degraded); reconstruction output Y; and the final restored image. Restoration is concentrated in blur-affected zones, while specular highlights remain unchanged.

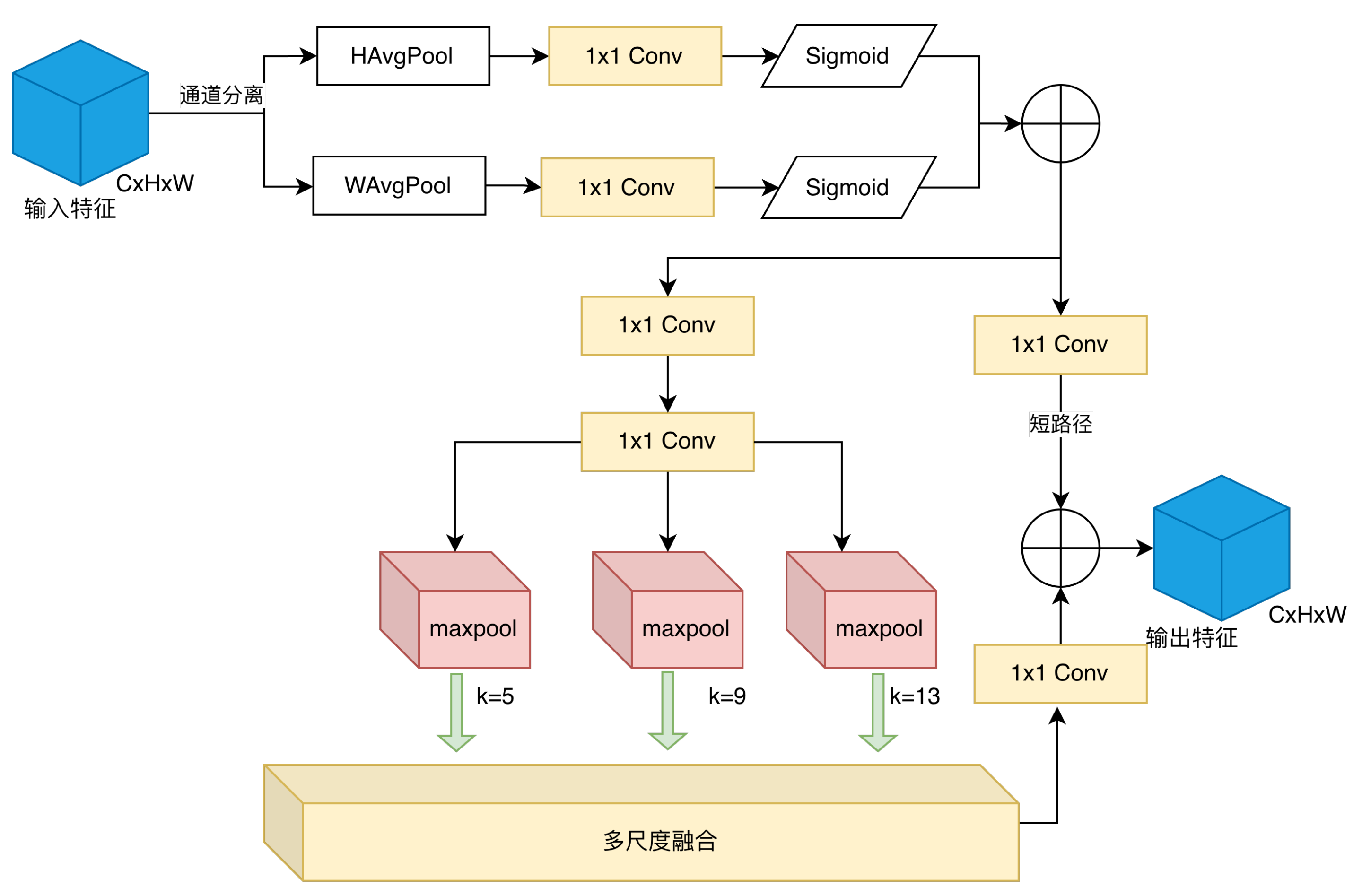

Figure 6.

Structure of the Direction-Aware Cross-Stage Pyramid Attention Fusion (DCPAF) module. The input feature is first recalibrated by two independent 1D pooling branches along height and width. The recalibrated feature then goes through chained max-pooling for multi-scale context, which is concatenated with a cross-stage skip path and fused by convolution.
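
A minimal single-channel sketch of the two DCPAF stages follows. It assumes the directional recalibration behaves like coordinate attention: the map is average-pooled along the width (one value per row) and along the height (one value per column), each profile passes through a sigmoid, and the two gates rescale every position. The learned layers, pooling window, number of pooling steps, and fusion convolution are not specified in the caption, so the learned layers are omitted and three chained stride-1 5×5 max-pools (as in SPPF) are assumed; all function names are hypothetical.

```python
import math
from typing import List

Map = List[List[float]]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def directional_recalibrate(x: Map) -> Map:
    """Scale x[i][j] by sigmoid(mean of row i) * sigmoid(mean of column j)."""
    h, w = len(x), len(x[0])
    row_gate = [sigmoid(sum(row) / w) for row in x]  # 1D pooling along width
    col_gate = [sigmoid(sum(x[i][j] for i in range(h)) / h) for j in range(w)]  # along height
    return [[x[i][j] * row_gate[i] * col_gate[j] for j in range(w)] for i in range(h)]


def max_pool_same(x: Map, size: int = 5) -> Map:
    """Stride-1 max pooling; the window is clipped at borders, so the shape is kept."""
    h, w, r = len(x), len(x[0]), size // 2
    return [
        [
            max(
                x[a][b]
                for a in range(max(0, i - r), min(h, i + r + 1))
                for b in range(max(0, j - r), min(w, j + r + 1))
            )
            for j in range(w)
        ]
        for i in range(h)
    ]


def dcpaf_channels(x: Map, steps: int = 3) -> List[Map]:
    """Return [skip, recalibrated, pool1, ..., pool_steps] for channel concatenation."""
    y = directional_recalibrate(x)
    pyramid = [y]
    for _ in range(steps):
        pyramid.append(max_pool_same(pyramid[-1]))  # chained: each pool sees the previous output
    return [x] + pyramid  # cross-stage skip path + multi-scale context
```

The returned maps are the channels the fusion convolution would mix. Chaining the pools grows the receptive field to 5, 9, and then 13 pixels without using larger kernels.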

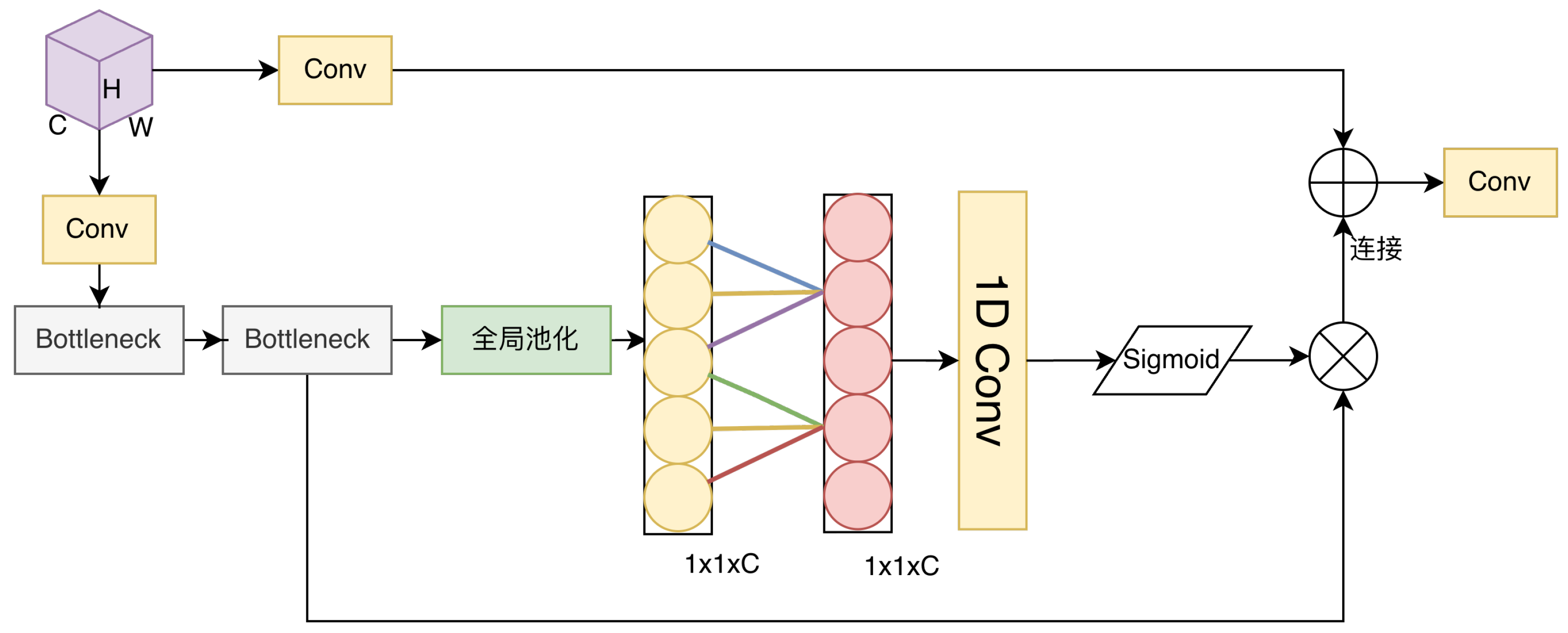

Figure 7.

Architecture of the Glare-Suppressed Channel Interaction Module (GSCIM). The input is split into a transformation branch (cascade of convolutions and ECA channel-weighting units) and a shallow preservation branch. Both branches are concatenated and compressed by a convolution. The ECA unit computes local cross-channel weights via adaptive 1D convolution, suppressing glare-dominated responses while retaining liquid-level edge cues.
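
The adaptive 1D convolution in an ECA unit chooses its kernel size from the channel count and then produces one sigmoid weight per channel. A minimal sketch, assuming GSCIM keeps the ECA-Net default mapping k = |log2(C)/γ + b/γ| rounded up to an odd number with γ = 2 and b = 1; the kernel weights passed in are stand-ins for learned parameters, and `eca_kernel_size` and `eca_weights` are hypothetical names.

```python
import math
from typing import List


def eca_kernel_size(channels: int, gamma: int = 2, b: int = 1) -> int:
    """ECA-Net adaptive kernel size |(log2(C) + b) / gamma|, forced to be odd."""
    t = int(abs((math.log2(channels) + b) / gamma))
    return t if t % 2 == 1 else t + 1


def eca_weights(descriptor: List[float], kernel: List[float]) -> List[float]:
    """Per-channel weights: zero-padded 1D conv across channels, then sigmoid.

    `descriptor` holds each channel's global-average-pooled value; `kernel`
    stands in for the learned 1D convolution weights (odd length).
    """
    c, r = len(descriptor), len(kernel) // 2
    weights = []
    for i in range(c):
        acc = 0.0
        for t, w in enumerate(kernel):
            j = i + t - r  # neighbouring channel index
            if 0 <= j < c:
                acc += w * descriptor[j]
        weights.append(1.0 / (1.0 + math.exp(-acc)))
    return weights


if __name__ == "__main__":
    for c in (64, 128, 256, 512):
        print(c, eca_kernel_size(c))
```

Each weight multiplies its channel's feature map. Which channels end up down-weighted (for example, glare-dominated ones) is decided by the learned kernel, not by the size formula.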

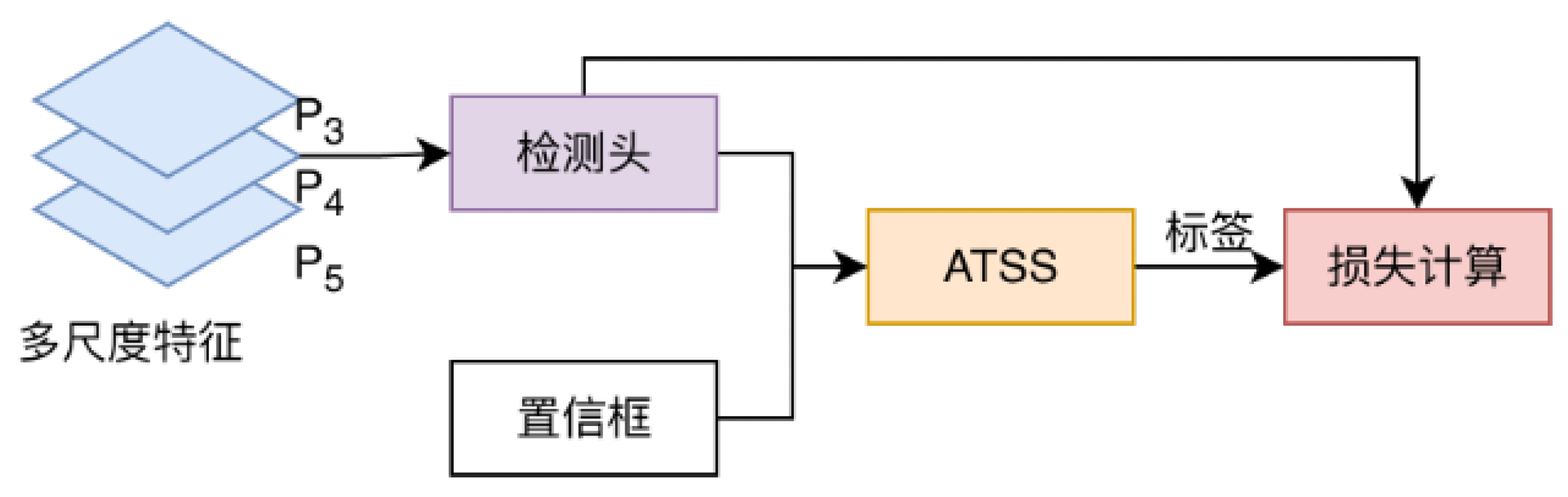

Figure 8.

Position of the ATSS module within the detection head. Multi-scale features P3–P5 pass through the prediction module; the outputs feed into both the loss computation branch and the ATSS assigner, which dynamically determines positive/negative labels based on candidate-set statistics rather than a fixed IoU threshold.

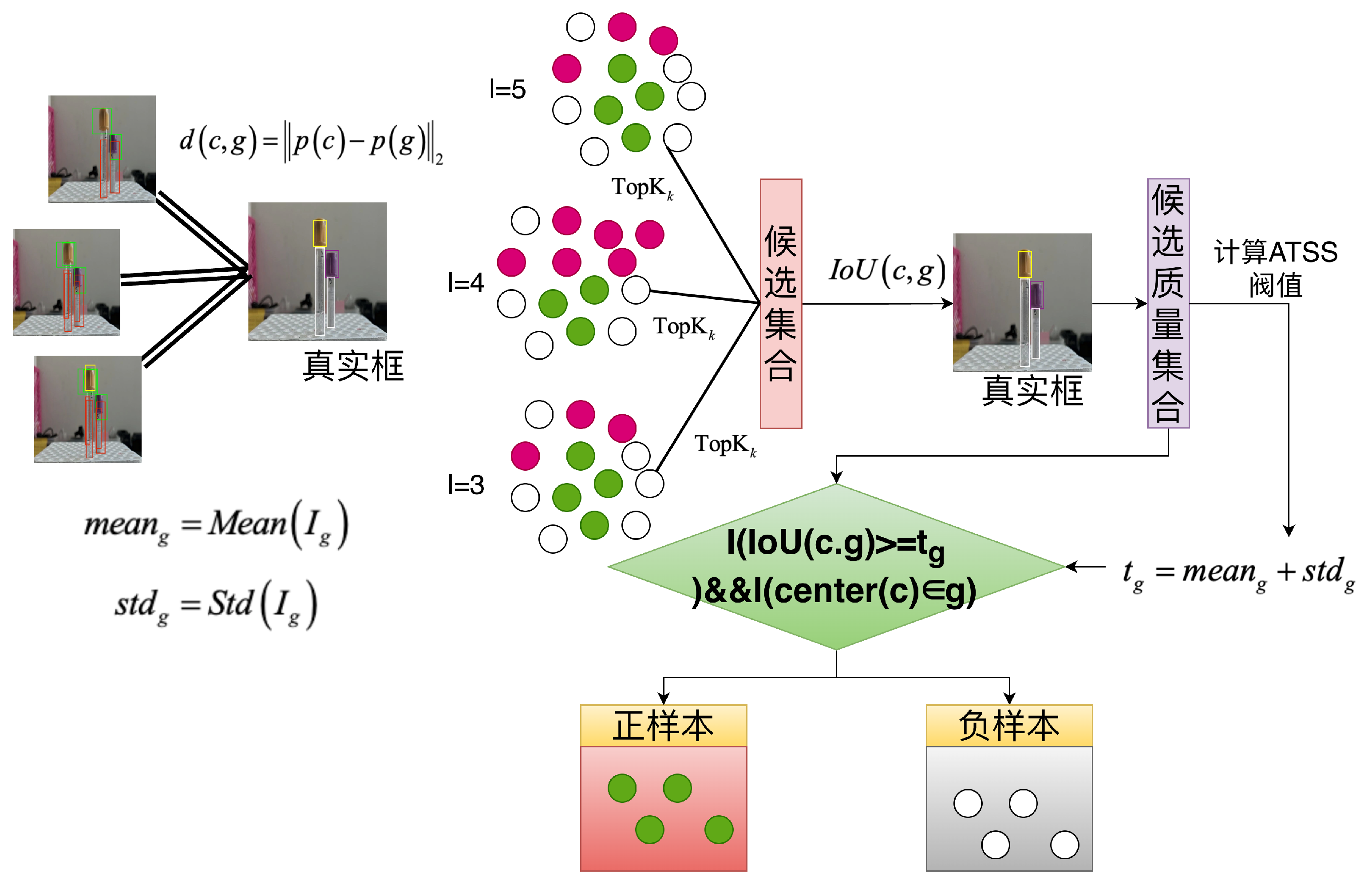

Figure 9.

Flowchart of ATSS adaptive sample assignment. For each ground-truth box, top-k candidates are selected per feature level by center distance, merged across levels, and an adaptive IoU threshold is computed from the candidate set. A candidate is labeled positive only when it simultaneously satisfies the IoU condition and the center-in-box constraint; conflicts from dense tube layouts are resolved by assigning each candidate to the ground truth with the highest IoU.
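
The assignment rule can be sketched in plain Python. This follows the published ATSS procedure (top-k candidates per level by center distance, IoU threshold equal to the mean plus the standard deviation of the candidate IoUs, a center-in-box check, and a highest-IoU tie-break); the default topk = 9 is the ATSS paper's setting, not necessarily the one used here, and all function names are hypothetical.

```python
import statistics
from typing import Dict, List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def center(b: Box) -> Tuple[float, float]:
    return ((b[0] + b[2]) / 2, (b[1] + b[3]) / 2)


def atss_assign(levels: Sequence[Sequence[Box]], gts: Sequence[Box],
                topk: int = 9) -> Dict[Tuple[int, int], int]:
    """Return {(level, anchor_idx): gt_idx} for positives; unlisted anchors are negatives."""
    best: Dict[Tuple[int, int], Tuple[float, int]] = {}
    for g, gt in enumerate(gts):
        gx, gy = center(gt)

        def dist2(box: Box) -> float:
            cx, cy = center(box)
            return (cx - gx) ** 2 + (cy - gy) ** 2

        # 1) top-k closest anchor centers on every level, merged into one set
        candidates: List[Tuple[int, int]] = []
        for lvl, anchors in enumerate(levels):
            ranked = sorted(range(len(anchors)), key=lambda i: dist2(anchors[i]))
            candidates += [(lvl, i) for i in ranked[:topk]]
        ious = [iou(levels[lvl][i], gt) for lvl, i in candidates]
        if not ious:
            continue

        # 2) adaptive threshold = mean + std of the candidates' IoU with this GT
        std = statistics.stdev(ious) if len(ious) > 1 else 0.0
        thr = statistics.mean(ious) + std

        # 3) positive if IoU >= thr and the anchor center lies inside the GT;
        #    an anchor claimed by several GTs keeps the one with the highest IoU
        for (lvl, i), v in zip(candidates, ious):
            cx, cy = center(levels[lvl][i])
            inside = gt[0] < cx < gt[2] and gt[1] < cy < gt[3]
            if inside and v >= thr and v > best.get((lvl, i), (-1.0, -1))[0]:
                best[(lvl, i)] = (v, g)
    return {key: g for key, (_, g) in best.items()}
```

Because the threshold is recomputed per ground truth, a slender tube whose candidates all have modest IoU still receives positives, which a single fixed IoU cut-off might not grant.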

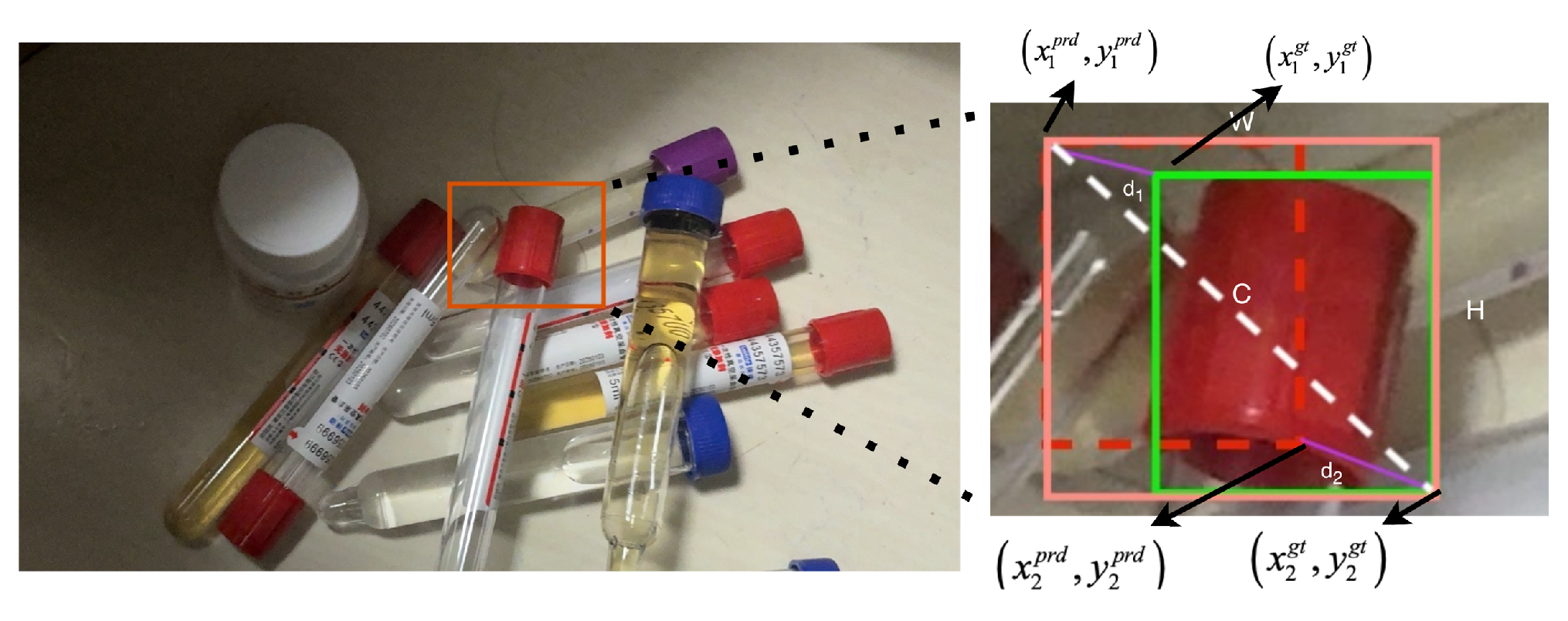

Figure 10.

Corner-distance constraint of MPDIoU. The distances between the predicted and ground-truth top-left corners and between their bottom-right corners are computed and then normalized by the diagonal of the minimum enclosing rectangle. This formulation yields non-zero gradients even when the boxes do not overlap, and it penalizes short-axis and long-axis deviations equally.
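
A minimal sketch of the loss as described in this caption: L = 1 − (IoU − d1²/c² − d2²/c²), where d1 and d2 are the top-left and bottom-right corner distances and c is the diagonal of the minimum enclosing rectangle. Note that the original MPDIoU formulation normalizes by the squared width and height of the input image (w² + h²) instead; swapping the denominator is a one-line change. `mpdiou_loss` is a hypothetical name.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2): top-left and bottom-right corners


def mpdiou_loss(pred: Box, gt: Box) -> float:
    """1 - MPDIoU, normalizing squared corner distances by the enclosing-box diagonal squared."""
    iw = max(0.0, min(pred[2], gt[2]) - max(pred[0], gt[0]))
    ih = max(0.0, min(pred[3], gt[3]) - max(pred[1], gt[1]))
    inter = iw * ih
    union = ((pred[2] - pred[0]) * (pred[3] - pred[1])
             + (gt[2] - gt[0]) * (gt[3] - gt[1]) - inter)
    iou = inter / union if union > 0 else 0.0
    d1_sq = (pred[0] - gt[0]) ** 2 + (pred[1] - gt[1]) ** 2  # top-left corners
    d2_sq = (pred[2] - gt[2]) ** 2 + (pred[3] - gt[3]) ** 2  # bottom-right corners
    ew = max(pred[2], gt[2]) - min(pred[0], gt[0])  # enclosing-rectangle width
    eh = max(pred[3], gt[3]) - min(pred[1], gt[1])  # enclosing-rectangle height
    diag_sq = ew ** 2 + eh ** 2
    return 1.0 - (iou - d1_sq / diag_sq - d2_sq / diag_sq)


if __name__ == "__main__":
    gt = (5.0, 5.0, 6.0, 6.0)
    # Both predictions are disjoint from gt (IoU = 0), yet the loss still decreases
    # as the prediction moves closer, so the regression signal does not vanish.
    print(mpdiou_loss((0.0, 0.0, 1.0, 1.0), gt), mpdiou_loss((3.0, 3.0, 4.0, 4.0), gt))
```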

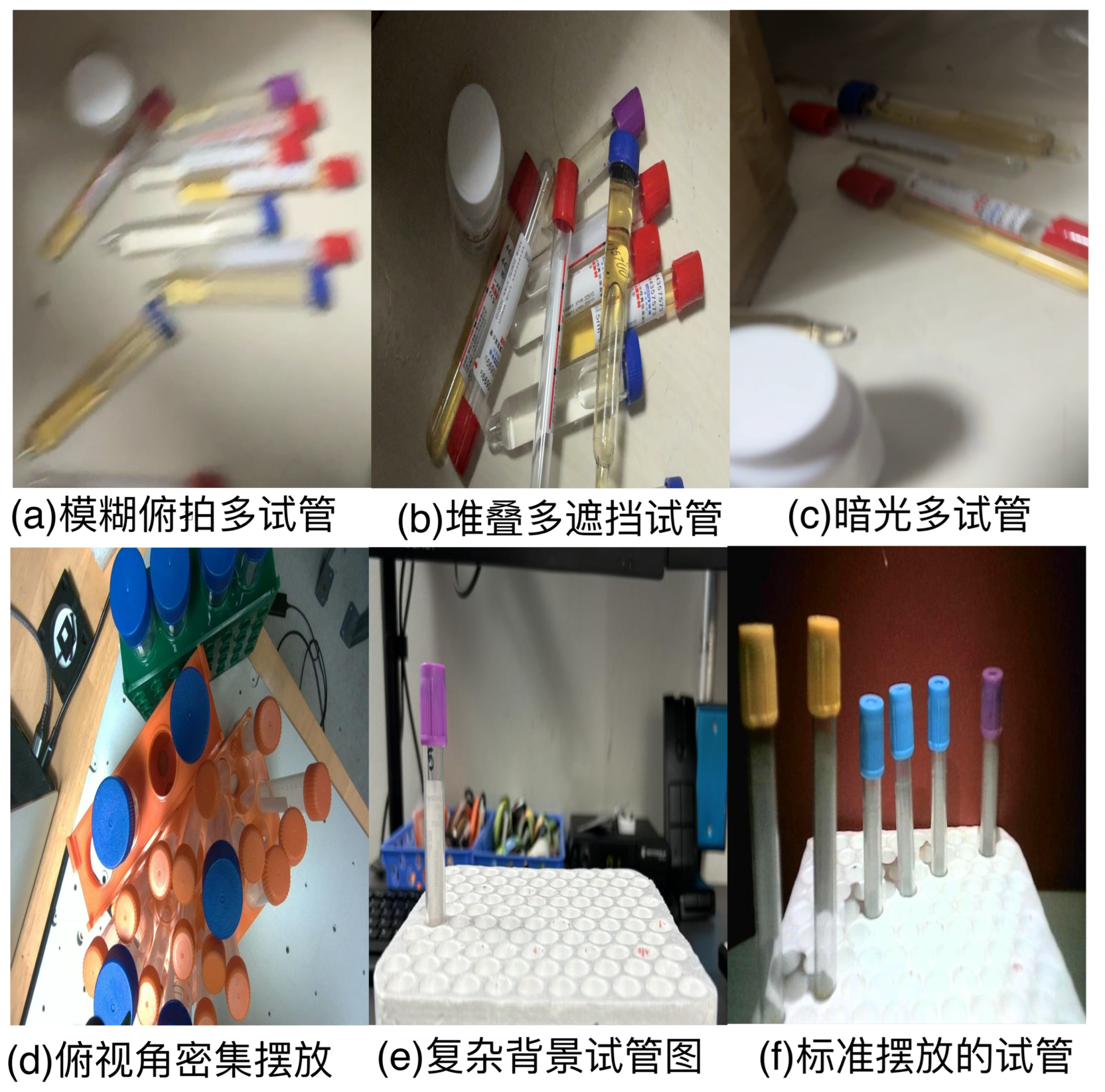

Figure 11.

CTT dataset sample collection scenes. The top row shows pre-sorting layouts with tubes in mixed, unordered states; the bottom row shows rack-loaded configurations ready for robotic scanning. Both stages span hospital laboratory and ward settings.

Figure 12.

CTT dataset annotation examples. Four annotation dimensions are shown: physical specification (tube size), internal sample state (blood/urine/empty), external barcode label, and cap color. Each dimension contributes complementary information to support robot-assisted tube sorting.

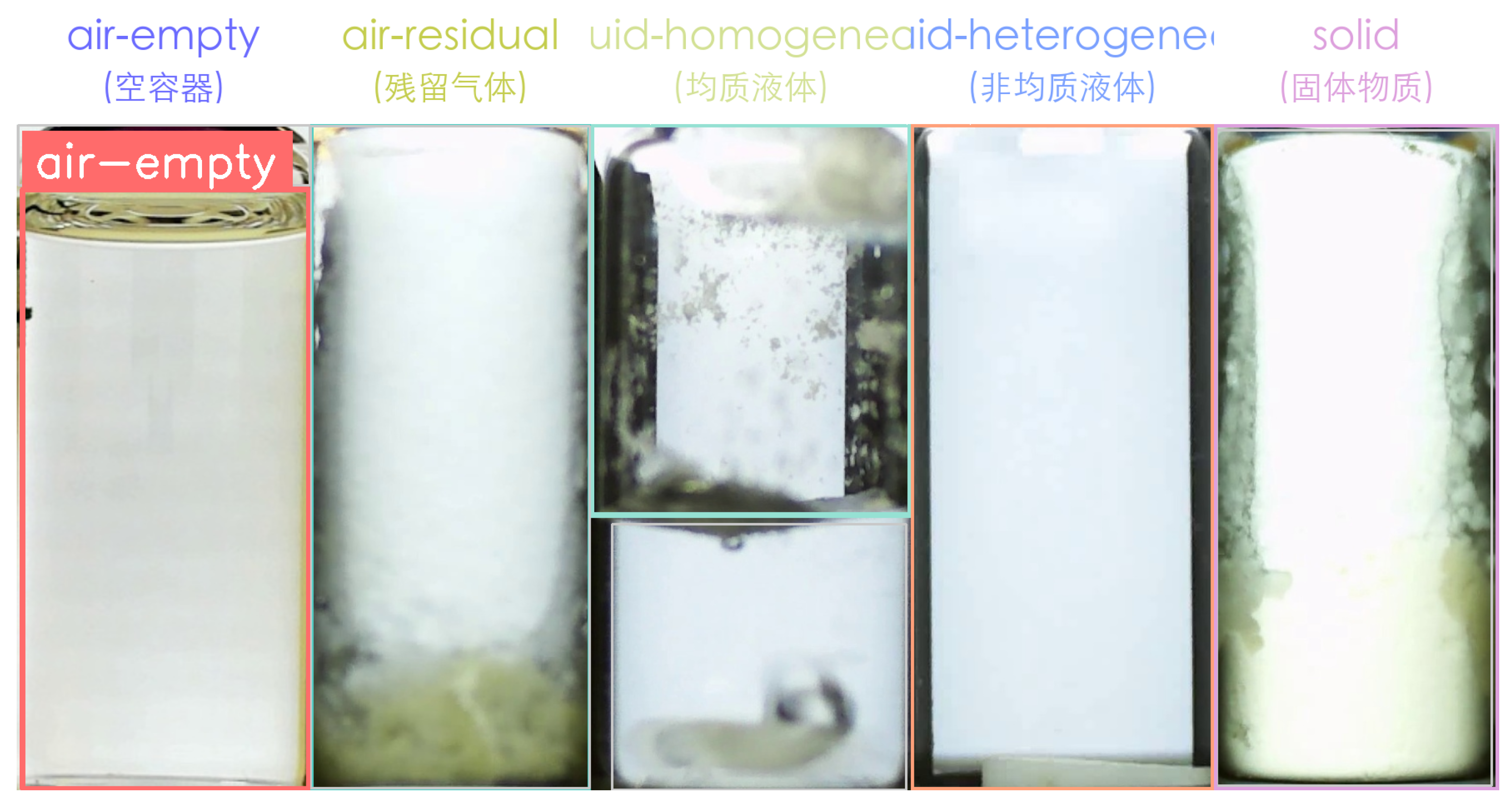

Figure 13.

HeinSight4.0 dataset: five-category annotation examples. From left to right: air (empty container), air with residual gas, clear liquid, turbid liquid, and solid/particle phase. The transparent container walls introduce strong reflection and refraction in all categories.

Figure 14.

Primary data augmentation examples. Each column illustrates one transform: horizontal flip, vertical flip, shift-scale-rotate, brightness perturbation, contrast perturbation, gamma correction, Mosaic, and MixUp. All transformations maintain annotation consistency via coordinate remapping.
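
Coordinate remapping for the geometric transforms is arithmetic on the box corners. A minimal sketch, assuming axis-aligned (x1, y1, x2, y2) pixel boxes on an image of width W and height H, and remapping shift-scale-rotate by transforming the four corners and taking their axis-aligned hull (a common choice, not necessarily the one used here). Photometric transforms (brightness, contrast, gamma) leave boxes unchanged. All function names are hypothetical.

```python
import math
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def hflip_boxes(boxes: List[Box], width: float) -> List[Box]:
    """Mirror left-right: x -> W - x, swapping x1/x2 so that x1 < x2."""
    return [(width - x2, y1, width - x1, y2) for x1, y1, x2, y2 in boxes]


def vflip_boxes(boxes: List[Box], height: float) -> List[Box]:
    """Mirror top-bottom: y -> H - y, swapping y1/y2 so that y1 < y2."""
    return [(x1, height - y2, x2, height - y1) for x1, y1, x2, y2 in boxes]


def shift_scale_rotate_box(box: Box, angle_deg: float, scale: float,
                           dx: float, dy: float, cx: float, cy: float) -> Box:
    """Rotate/scale the four corners about (cx, cy), shift by (dx, dy), take the hull."""
    a = math.radians(angle_deg)
    cos_a, sin_a = math.cos(a) * scale, math.sin(a) * scale
    x1, y1, x2, y2 = box
    xs, ys = [], []
    for x, y in ((x1, y1), (x2, y1), (x1, y2), (x2, y2)):
        u, v = x - cx, y - cy
        xs.append(cx + cos_a * u - sin_a * v + dx)
        ys.append(cy + sin_a * u + cos_a * v + dy)
    return (min(xs), min(ys), max(xs), max(ys))
```

For slender tubes, the hull of a rotated box grows quickly with the angle, which is one reason to keep rotation ranges small.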

Figure 15.

Composition-level augmentation examples. Left: Mosaic (four images stitched), simulating dense and multi-scale tube layouts. Middle: MixUp (two images blended), training the model on overlapping or partially visible tubes. Right: Copy-Paste (tube instances transplanted), increasing instance diversity in under-represented cap-color categories.
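
The label bookkeeping behind Mosaic and MixUp can be sketched as follows, assuming a 2×2 Mosaic of four equal tiles with no random center jitter or cropping, and MixUp with a fixed blend weight λ (implementations commonly sample λ from a Beta distribution instead). Both function names are hypothetical.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)
Image = List[List[float]]


def mosaic_boxes(tile_boxes: List[List[Box]], tile_w: float, tile_h: float) -> List[Box]:
    """Shift each tile's boxes into its quadrant; tile order is TL, TR, BL, BR."""
    offsets = [(0, 0), (tile_w, 0), (0, tile_h), (tile_w, tile_h)]
    merged: List[Box] = []
    for boxes, (ox, oy) in zip(tile_boxes, offsets):
        merged += [(x1 + ox, y1 + oy, x2 + ox, y2 + oy) for x1, y1, x2, y2 in boxes]
    return merged


def mixup(img_a: Image, boxes_a: List[Box], img_b: Image, boxes_b: List[Box],
          lam: float) -> Tuple[Image, List[Box]]:
    """Blend pixels as lam*A + (1 - lam)*B and keep the union of both label sets."""
    blended = [[lam * a + (1.0 - lam) * b for a, b in zip(ra, rb)]
               for ra, rb in zip(img_a, img_b)]
    return blended, boxes_a + boxes_b
```

Keeping both label sets in MixUp is what exposes the detector to overlapping or partially visible tubes.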

Figure 16.

Synthetic patch augmentation pipeline. From left to right: source annotated image; GrabCut foreground extraction; morphological opening to smooth mask boundaries; Gaussian feathering to produce a soft alpha channel; background image with color perturbation; final composited sample with retained bounding-box annotation.
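
The final two stages amount to alpha-blending a feathered foreground patch onto a color-perturbed background and recording where it landed. A minimal sketch, assuming single-channel intensities in [0, 1], a multiplicative gain as a stand-in for the color perturbation, and a patch cropped to its annotated box so that the new box is simply the paste rectangle; `composite` is a hypothetical name.

```python
from typing import List, Tuple

Image = List[List[float]]
Box = Tuple[int, int, int, int]  # (x1, y1, x2, y2)


def composite(background: Image, patch: Image, alpha: Image, x0: int, y0: int,
              gain: float = 1.0) -> Tuple[Image, Box]:
    """Alpha-blend `patch` at (x0, y0) and return the new image and the patch's box.

    Assumes the patch fits inside the background and was cropped to its annotated
    box; `gain` is a simple stand-in for color perturbation of the background.
    """
    out = [[min(1.0, v * gain) for v in row] for row in background]
    ph, pw = len(patch), len(patch[0])
    for i in range(ph):
        for j in range(pw):
            a = alpha[i][j]
            out[y0 + i][x0 + j] = a * patch[i][j] + (1.0 - a) * out[y0 + i][x0 + j]
    return out, (x0, y0, x0 + pw, y0 + ph)
```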

Figure 17.

Detail of GrabCut-based foreground segmentation and Gaussian feathering. Left: initial hard binary mask from GrabCut. Middle: mask after morphological opening removing boundary artifacts. Right: soft alpha channel after Gaussian convolution, enabling seamless Alpha-blend compositing.
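
The two post-processing steps after GrabCut can be sketched without OpenCV. A minimal version, assuming a 3×3 square structuring element for the opening and a separable Gaussian kernel with clamp-to-edge borders for the feathering. In an OpenCV pipeline the corresponding calls would be `cv2.grabCut`, `cv2.morphologyEx(mask, cv2.MORPH_OPEN, kernel)`, and `cv2.GaussianBlur`; the kernel sizes and σ actually used are not stated in the caption, and the function names below are hypothetical.

```python
import math
from typing import List

Mask = List[List[float]]


def _erode_or_dilate(mask: Mask, erode: bool) -> Mask:
    """3x3 erosion (min) or dilation (max); pixels outside the image are ignored."""
    h, w = len(mask), len(mask[0])
    pick = min if erode else max
    return [[pick(mask[a][b]
                  for a in range(max(0, i - 1), min(h, i + 2))
                  for b in range(max(0, j - 1), min(w, j + 2)))
             for j in range(w)] for i in range(h)]


def morph_open(mask: Mask) -> Mask:
    """Opening = erosion then dilation: removes specks smaller than the 3x3 element."""
    return _erode_or_dilate(_erode_or_dilate(mask, erode=True), erode=False)


def gaussian_feather(mask: Mask, sigma: float = 1.0) -> Mask:
    """Separable Gaussian blur turning a hard 0/1 mask into a soft alpha channel."""
    r = max(1, int(3 * sigma))
    k = [math.exp(-(t * t) / (2 * sigma * sigma)) for t in range(-r, r + 1)]
    s = sum(k)
    k = [v / s for v in k]  # normalized, so a constant mask stays constant
    h, w = len(mask), len(mask[0])

    def blur_1d(line: List[float]) -> List[float]:
        n = len(line)
        return [sum(k[t + r] * line[min(n - 1, max(0, i + t))] for t in range(-r, r + 1))
                for i in range(n)]

    rows = [blur_1d(row) for row in mask]                           # horizontal pass
    cols = [blur_1d([rows[i][j] for i in range(h)]) for j in range(w)]  # vertical pass
    return [[cols[j][i] for j in range(w)] for i in range(h)]
```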

Figure 18.

Wavelet-enhanced augmentation examples for motion-blur simulation. Left pair: a dense, relatively uniform tube arrangement yields mild blur. Right pair: a sparse or clustered arrangement triggers stronger blur. WGSR restores the degraded training samples before they enter detector training.
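
A linear motion blur is an averaging kernel laid along the motion direction, with its length controlling blur strength. A minimal horizontal-motion sketch, assuming a box-shaped centered kernel and clamp-to-edge borders. How the tube-arrangement statistic is mapped to a blur length is not recoverable from this caption, so the length is left as a free parameter, and `horizontal_motion_blur` is a hypothetical name.

```python
from typing import List

Image = List[List[float]]


def horizontal_motion_blur(img: Image, length: int) -> Image:
    """Box-filter each row with a centered kernel of odd `length` (edges clamped).

    A larger `length` simulates a longer motion trail, i.e. stronger blur.
    """
    if length < 1 or length % 2 == 0:
        raise ValueError("length must be a positive odd integer")
    r = length // 2
    out: Image = []
    for row in img:
        w = len(row)
        out.append([
            sum(row[min(w - 1, max(0, j + t))] for t in range(-r, r + 1)) / length
            for j in range(w)
        ])
    return out


if __name__ == "__main__":
    edge = [[0, 0, 0, 1, 1, 1]]
    print(horizontal_motion_blur(edge, 1))  # mild: unchanged
    print(horizontal_motion_blur(edge, 5))  # strong: the edge is smeared
```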

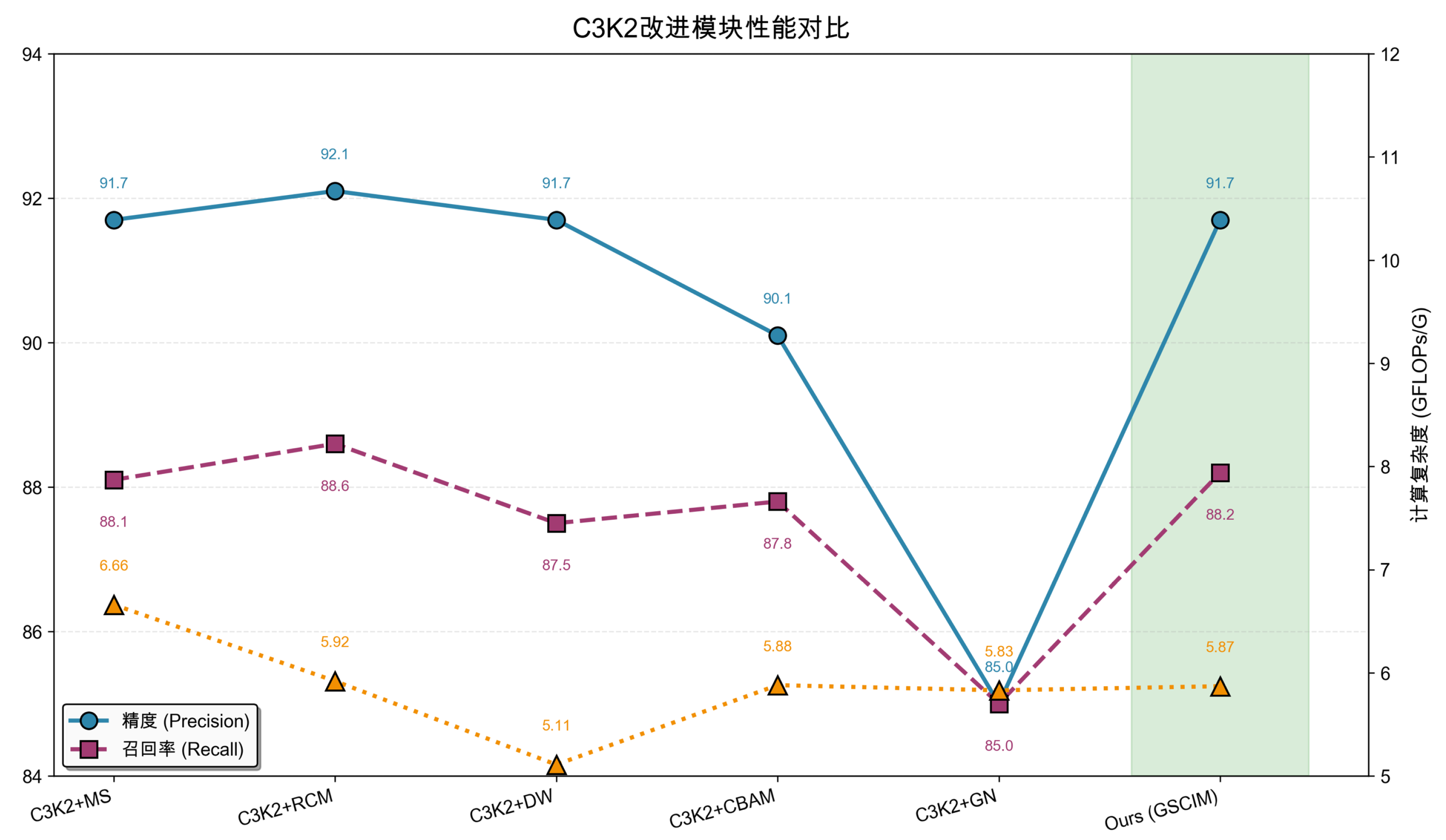

Figure 19.

Precision, recall, and GFLOPs of C3k2 backbone variants on the CTT dataset. GSCIM achieves the best balance: precision comparable to C3k2+MS at lower GFLOPs, and consistently higher recall than all norm-based alternatives.

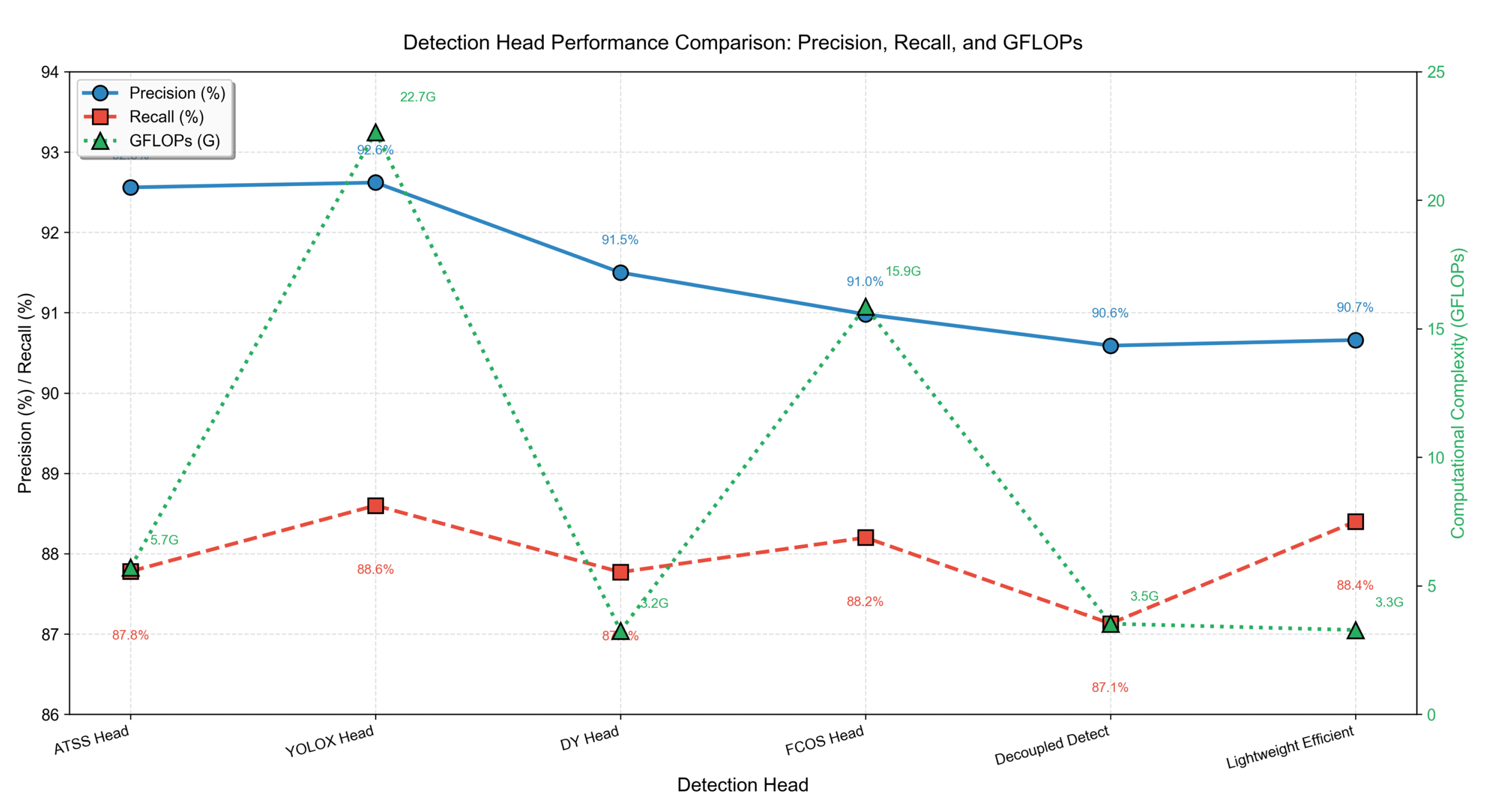

Figure 20.

Precision (P), recall (R), and FLOPs of six detection heads on CTT. The ATSS Head achieves the highest precision and recall while requiring far fewer FLOPs than the YOLOX Head (approximately one-quarter of its computational cost).

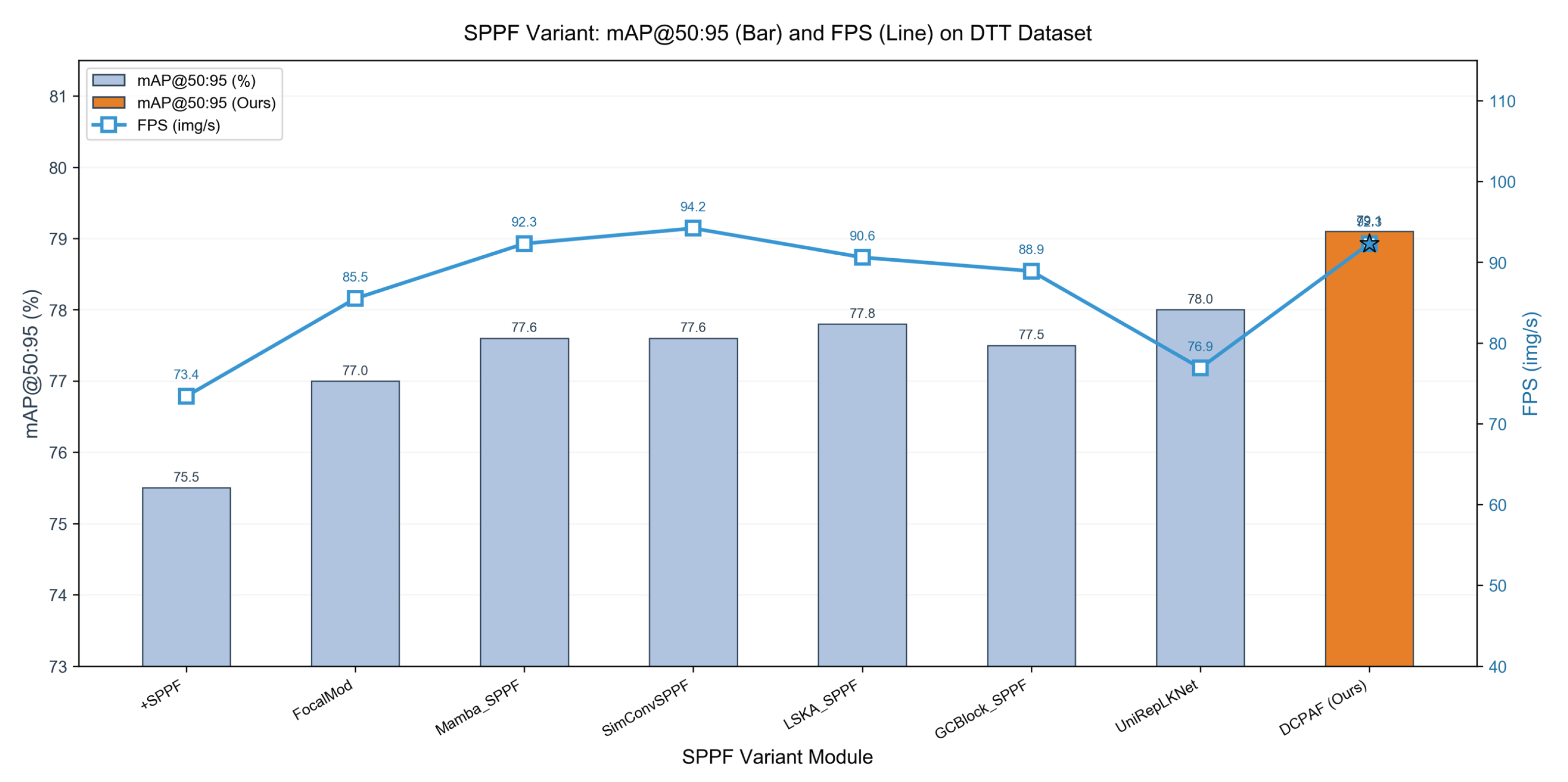

Figure 21.

mAP@50:95 (bars) and FPS (line) comparison of SPPF variants and DCPAF on CTT. DCPAF reaches the highest mAP@50:95 at a competitive inference speed.

Table 1.

Performance comparison on the CTT dataset.

| Model | Precision (%) | Recall (%) | mAP@50 (%) | mAP@50:95 (%) | Params (M) |
|---|---|---|---|---|---|
| yolov5_liquid_level | 93.40 | 89.23 | 92.63 | 78.79 | 9.13 |
| YOLOv8 [33] | 91.64 | 87.99 | 91.19 | 76.22 | 3.01 |
| YOLOv11 [34] | 92.17 | 87.44 | 90.87 | 75.50 | 2.59 |
| YOLOv8aa | 91.90 | 87.96 | 90.85 | 75.71 | 2.94 |
| yolov8_improved_pip | 91.13 | 88.82 | 90.63 | 75.78 | 3.02 |
| 06_gold_yolo_style | 91.15 | 88.17 | 90.61 | 74.46 | 2.59 |
| yolov5_shufflenet | 90.39 | 88.54 | 90.40 | 75.31 | 6.85 |
| YOLOv9t [35] | 90.14 | 88.36 | 90.19 | 75.21 | 2.01 |
| YOLOv12 [36] | 91.12 | 88.52 | 90.14 | 75.02 | 2.57 |
| yolov8n_expiry_date | 89.61 | 88.14 | 89.63 | 73.21 | 2.91 |
| TubeDet-YOLO [13] | 88.40 | 85.82 | 88.31 | 69.83 | 1.03 |
| YOLOv5 liquid-level localization | 87.97 | 79.24 | 83.30 | 66.20 | 2.75 |
| WDA-TNET (Ours) | 94.08 | 88.91 | 92.77 | 79.08 | 8.46 |
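
The margins in Table 1 can be checked directly. The snippet below copies the four accuracy columns of Table 1 and, for each column, reports the best baseline and WDA-TNET's difference from it in percentage points; it is a convenience calculation on the published numbers, not part of the original evaluation, and `margins_over_best` is a hypothetical name.

```python
from typing import Dict, Tuple

# (precision, recall, mAP@50, mAP@50:95) in %, copied from Table 1.
BASELINES: Dict[str, Tuple[float, float, float, float]] = {
    "yolov5_liquid_level": (93.40, 89.23, 92.63, 78.79),
    "YOLOv8": (91.64, 87.99, 91.19, 76.22),
    "YOLOv11": (92.17, 87.44, 90.87, 75.50),
    "YOLOv8aa": (91.90, 87.96, 90.85, 75.71),
    "yolov8_improved_pip": (91.13, 88.82, 90.63, 75.78),
    "06_gold_yolo_style": (91.15, 88.17, 90.61, 74.46),
    "yolov5_shufflenet": (90.39, 88.54, 90.40, 75.31),
    "YOLOv9t": (90.14, 88.36, 90.19, 75.21),
    "YOLOv12": (91.12, 88.52, 90.14, 75.02),
    "yolov8n_expiry_date": (89.61, 88.14, 89.63, 73.21),
    "TubeDet-YOLO": (88.40, 85.82, 88.31, 69.83),
    "YOLOv5 liquid-level localization": (87.97, 79.24, 83.30, 66.20),
}
OURS = (94.08, 88.91, 92.77, 79.08)
COLUMNS = ("Precision", "Recall", "mAP@50", "mAP@50:95")


def margins_over_best() -> Dict[str, Tuple[str, float]]:
    """For each column: (best baseline, WDA-TNET minus that baseline, in points)."""
    result: Dict[str, Tuple[str, float]] = {}
    for idx, name in enumerate(COLUMNS):
        best_model, best_row = max(BASELINES.items(), key=lambda kv: kv[1][idx])
        result[name] = (best_model, round(OURS[idx] - best_row[idx], 2))
    return result


if __name__ == "__main__":
    for column, (model, delta) in margins_over_best().items():
        print(f"{column:>10}: {delta:+.2f} vs {model}")
```

The strongest baseline in every accuracy column is yolov5_liquid_level: WDA-TNET leads it by 0.68 points in precision, 0.14 in mAP@50, and 0.29 in mAP@50:95, trails it by 0.32 points in recall, and uses 0.67 M fewer parameters (8.46 M vs. 9.13 M).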