Submitted: 09 April 2026

Posted: 10 April 2026


Abstract

Keywords:

1. Summary

2. Data Description

2.1. Structure of the Dataset

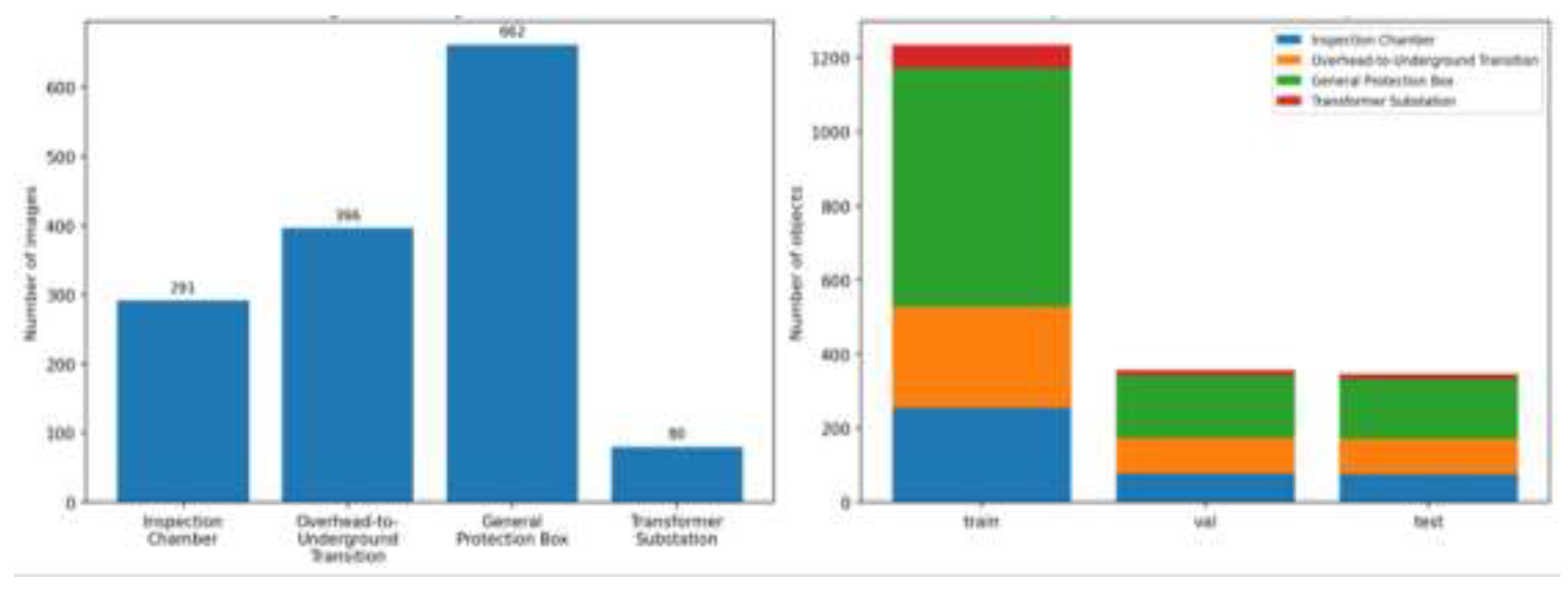

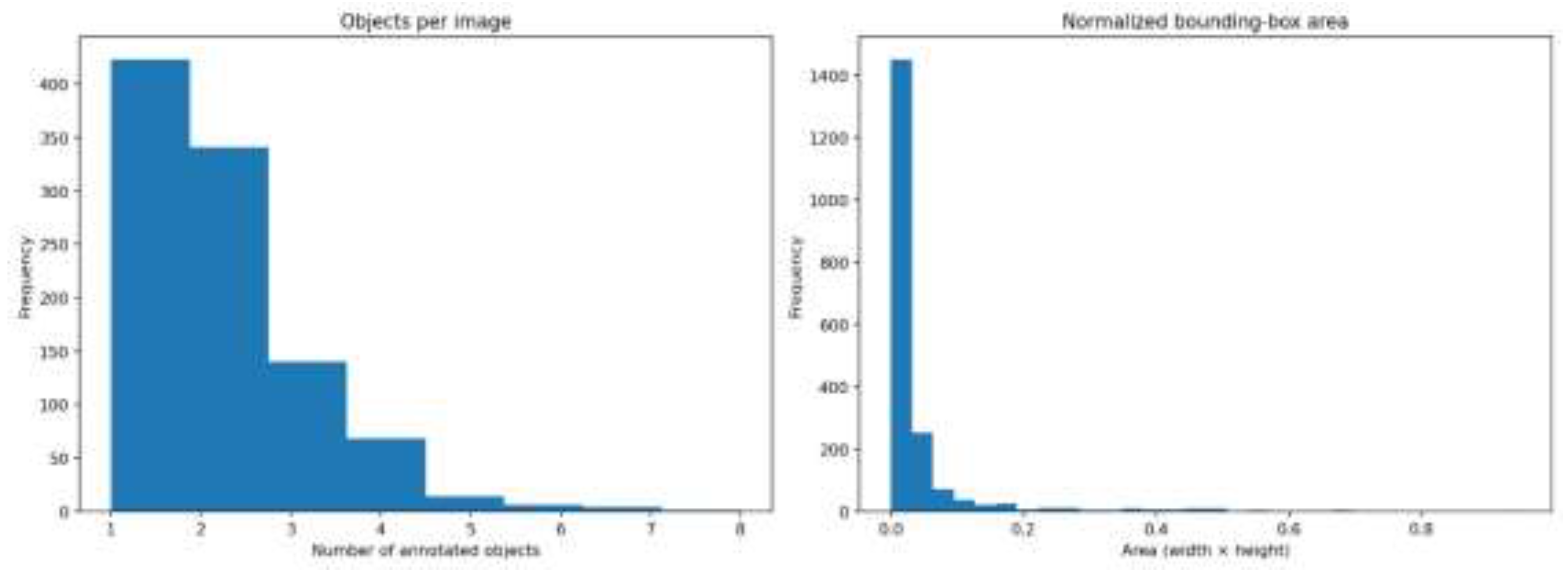

2.2. Class Distribution and Annotation Characteristics

3. Methods

3.1. Image Sources and Acquisition Context

3.2. Annotation Format and Class Ontology

3.3. Curation, Standardization, and Split Generation

3.4. Technical Validation

4. User Notes

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Folder | Image-label pairs | Purpose | Recommended use |
|---|---|---|---|
| train | 698 | Official training subset | Use for model fitting |
| val | 150 | Official validation subset | Use for model selection and early stopping |
| test | 149 | Official hold-out subset | Use only for final evaluation |
| public release | 997 | Complete benchmark release (train + val + test) | Use according to the train/val/test protocol |
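The split sizes in the table above correspond to roughly a 70/15/15 partition of the 997 image-label pairs. The short Python sketch below reproduces that arithmetic and adds a simple leakage check, since the "use only for final evaluation" recommendation for `test` only holds if no image appears in more than one split. The function names `split_fractions` and `overlapping_items` are hypothetical helpers, and the leakage check assumes that items are identified by a shared name (for example, the image file stem) across folders.

```python
# Split sizes copied from the split table (image-label pairs per folder).
SPLITS = {"train": 698, "val": 150, "test": 149}


def split_fractions(splits):
    """Return the total number of pairs and each split's share of that total."""
    total = sum(splits.values())
    return total, {name: n / total for name, n in splits.items()}


def overlapping_items(split_members):
    """Return item names that occur in more than one split.

    split_members maps a split name to a set of item identifiers
    (hypothetically, image file stems). An empty result means the
    splits are disjoint.
    """
    seen = set()
    duplicates = set()
    for members in split_members.values():
        duplicates |= seen & members  # names already seen in an earlier split
        seen |= members
    return duplicates


if __name__ == "__main__":
    total, fractions = split_fractions(SPLITS)
    print(total)
    for name, frac in fractions.items():
        print(f"{name}: {frac:.2%}")
```

Run as a script, it prints the total (997) and shares of about 70.01 % (train), 15.05 % (val) and 14.94 % (test).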

| Class ID | Class | Images | Objects | Objects (%) | Mean bbox area | Median bbox area |
|---|---|---|---|---|---|---|
| 0 | Inspection Chamber | 291 | 409 | 21.09 | 0.0483 | 0.0198 |
| 1 | Overhead-to-Underground Transition | 396 | 465 | 23.98 | 0.0193 | 0.0107 |
| 2 | General Protection Box | 662 | 974 | 50.23 | 0.0238 | 0.0114 |
| 3 | Transformer Substation | 80 | 91 | 4.69 | 0.2926 | 0.2353 |
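As a hedged illustration of how the per-class figures in the class-distribution table could be derived, the sketch below assumes YOLO-style label files (one `class_id cx cy w h` line per object, with `w` and `h` normalized to the image size) and takes bbox area as `w * h`, i.e. a fraction of the image area. The annotation format and area definition are assumptions here, as are the helper names `parse_label_lines` and `class_statistics`. "Images" is counted as the number of images containing at least one object of the class, which is consistent with the per-class image counts summing to more than the 997 images in the release.

```python
from statistics import mean, median

# Class IDs and names as listed in the class-distribution table.
CLASS_NAMES = {
    0: "Inspection Chamber",
    1: "Overhead-to-Underground Transition",
    2: "General Protection Box",
    3: "Transformer Substation",
}


def parse_label_lines(lines):
    """Parse assumed YOLO-style lines 'class cx cy w h' into (class_id, area).

    Assumes w and h are normalized to the image size, so w * h is the
    box area as a fraction of the image area.
    """
    boxes = []
    for line in lines:
        parts = line.split()
        if not parts:
            continue  # ignore blank lines
        cls = int(parts[0])
        w, h = float(parts[3]), float(parts[4])
        boxes.append((cls, w * h))
    return boxes


def class_statistics(label_files):
    """Compute per-class image count, object count, object share and bbox areas.

    label_files maps an image name to the list of label lines for that image.
    """
    areas = {c: [] for c in CLASS_NAMES}
    images = {c: set() for c in CLASS_NAMES}
    for image_name, lines in label_files.items():
        for cls, area in parse_label_lines(lines):
            if cls not in areas:
                raise ValueError(f"unknown class id {cls} in {image_name}")
            areas[cls].append(area)
            images[cls].add(image_name)  # image counted once per class
    total_objects = sum(len(a) for a in areas.values())
    stats = {}
    for cls, a in areas.items():
        stats[cls] = {
            "images": len(images[cls]),
            "objects": len(a),
            "objects_pct": 100.0 * len(a) / total_objects if total_objects else 0.0,
            "mean_area": mean(a) if a else None,
            "median_area": median(a) if a else None,
        }
    return stats
```

Applied to every label file in the release, this would produce one row per class in the same layout as the table; whether it reproduces the published values exactly depends on the assumed format and area definition matching the authors' own procedure.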

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.