Submitted: 09 April 2026

Posted: 10 April 2026


Abstract

Keywords:

1. Introduction

1. Truthfulness and Factuality: The minimisation of stochastic hallucinations and adherence to verifiable facts.

2. Integrity and Curation: The resilience of the system against data poisoning [3] and context degradation.

3. Representational Fairness: The mitigation of algorithmic biases inherited from source data or amplified during augmentation [9].

- A Novel Perspective: Unifying disparate augmentation methods (RAG, Fine-Tuning, Hybrid) under the single, critical umbrella of data fidelity and safety.

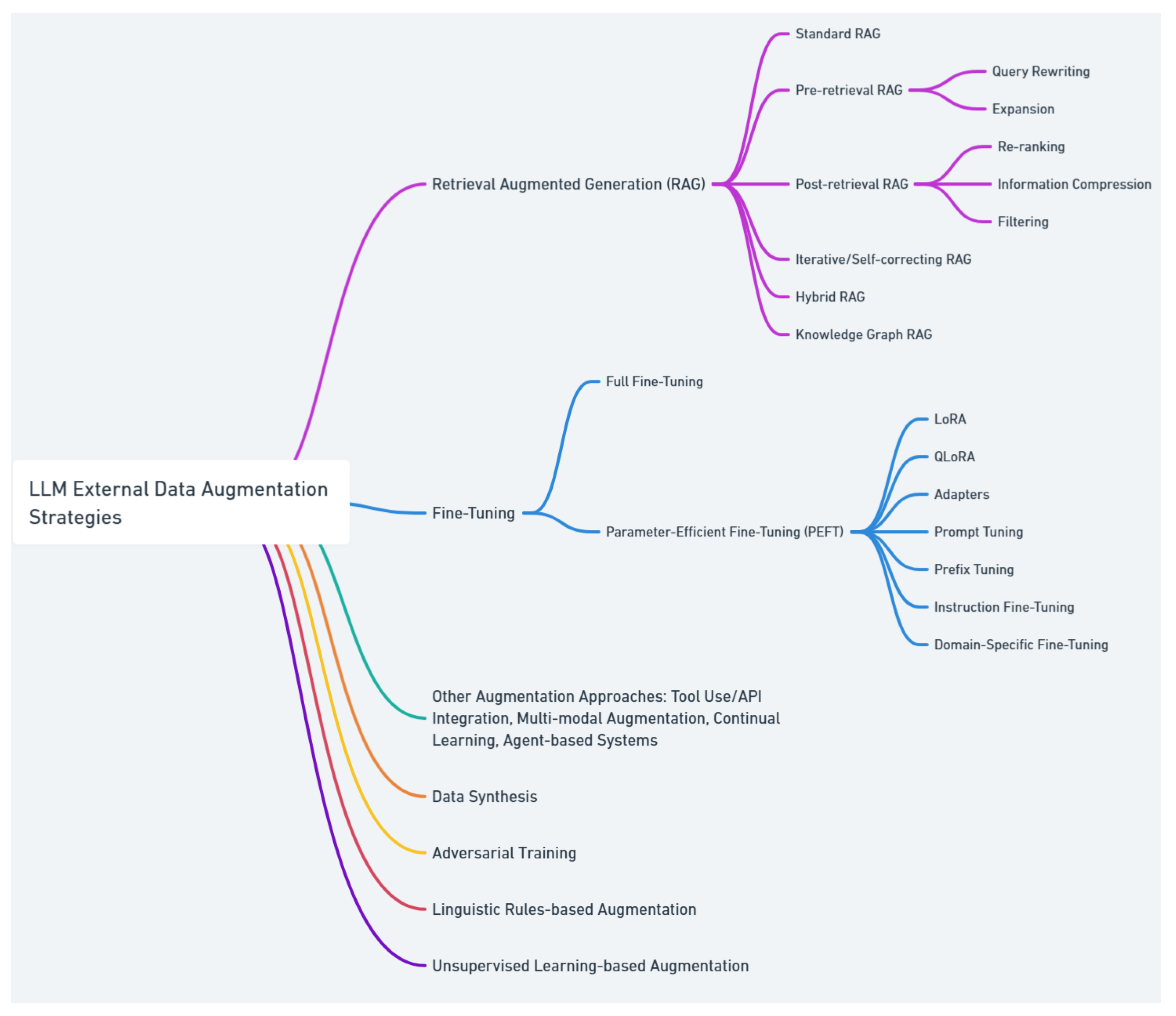

- A Structured Taxonomy: Clarifying the relationships, evolution, and architectural nuances of modern approaches through conceptual diagrams and structured categorisation.

- Actionable Insights: Providing a synthesised comparative framework to guide researchers and practitioners in selecting the optimal architecture based on their specific constraints (e.g., latency vs. provenance).

- RQ-A: What are the main methodologies for integrating external data into LLMs? (Addressed in Section 4, providing a taxonomy of RAG, Fine-tuning, and Hybrid approaches).

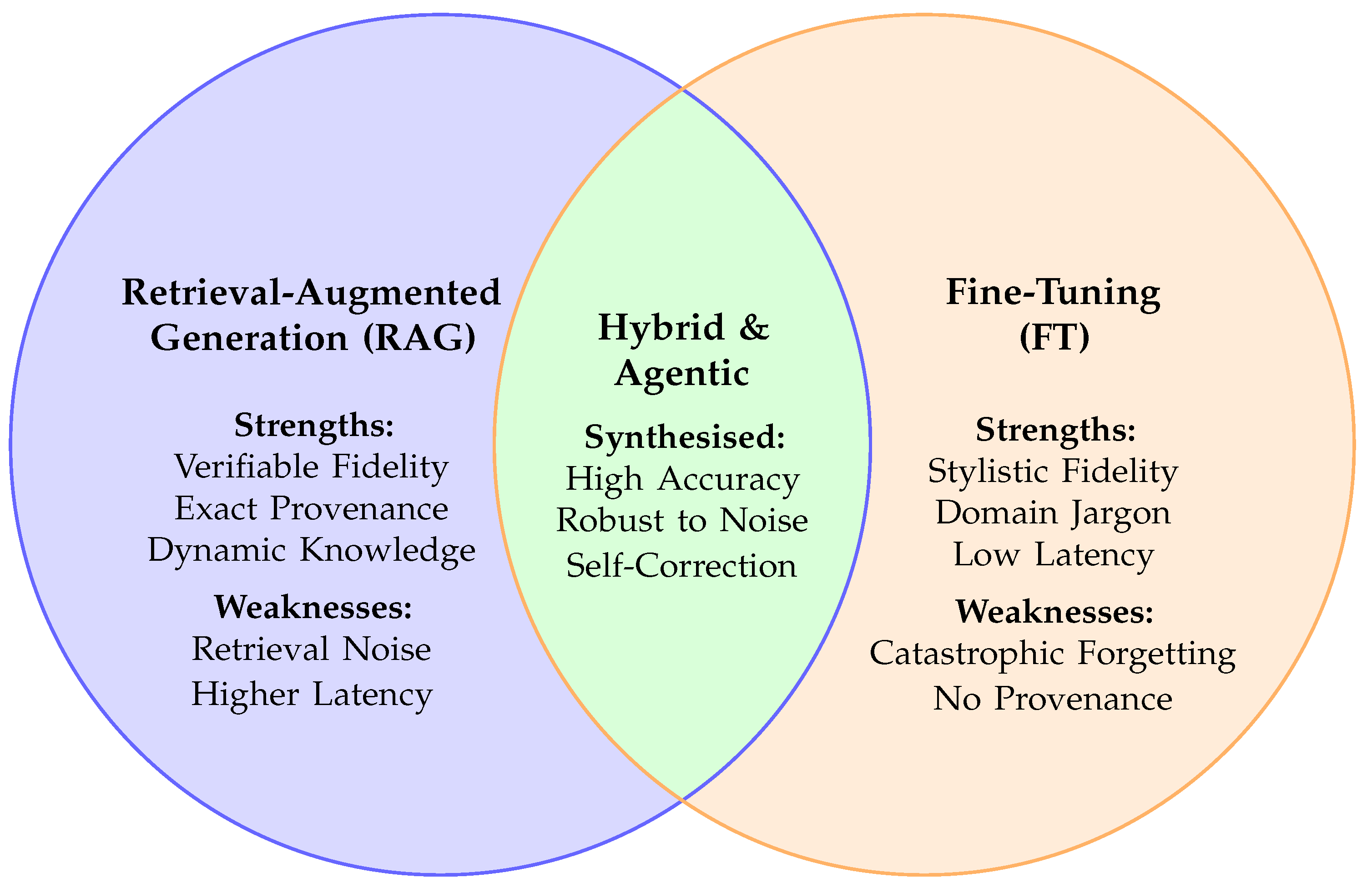

- RQ-B: How do RAG, Fine-tuning, and Hybrid strategies compare in terms of fidelity and performance? (Addressed in Section 6, which synthesises comparative performance metrics).

- RQ-C: What open challenges and research gaps remain for developing trustworthy and high-fidelity augmented LLMs? (Addressed in Section 7, outlining future research categories).

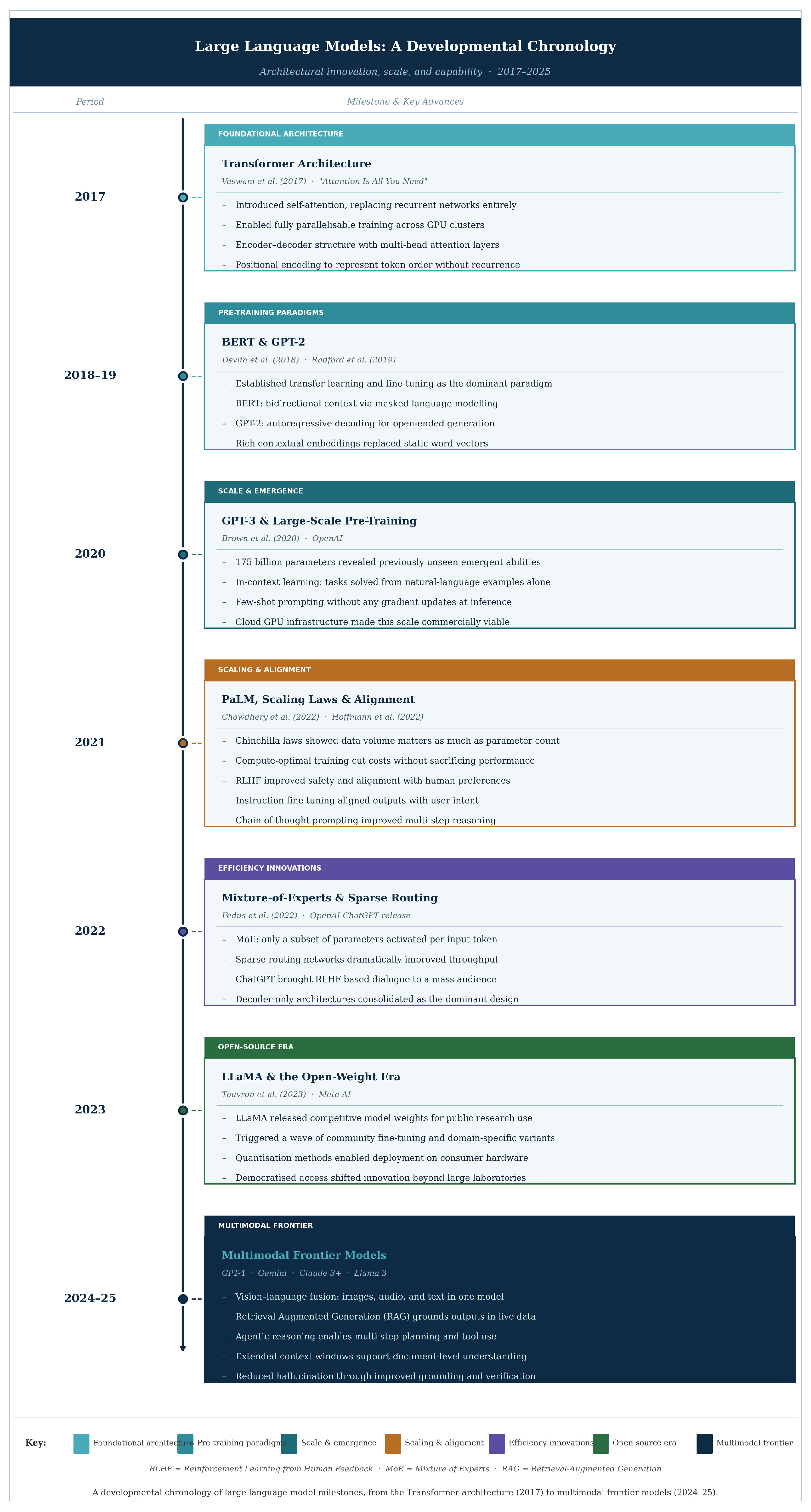

2. Conceptual Framework and Background

2.1. The Need for Augmentation

2.2. Cross-Cutting Fidelity Concerns

2.2.1. Security: Vulnerabilities and Integrity

2.2.2. Privacy and Confidentiality

2.2.3. Bias and Fairness

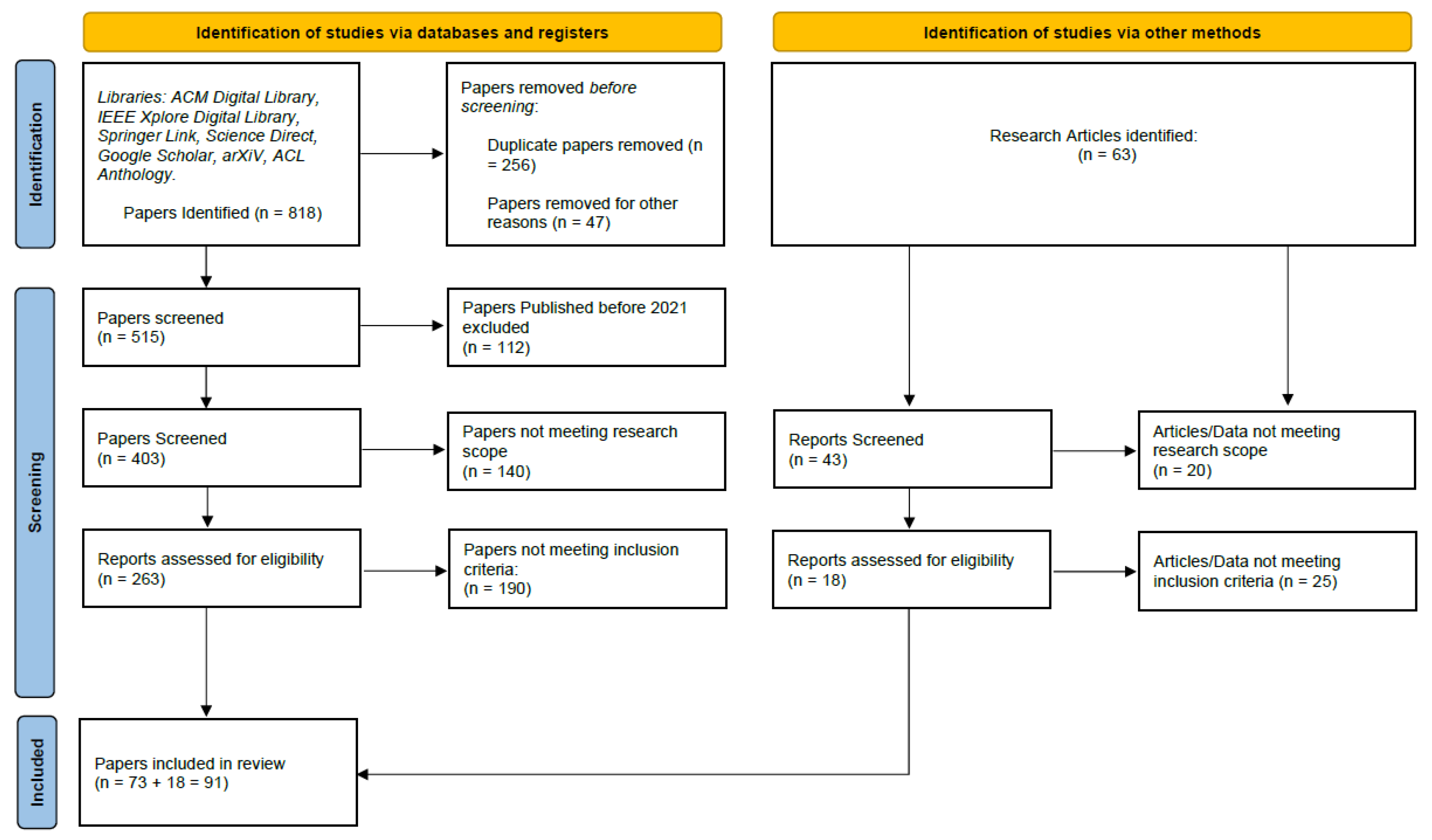

3. Review Methodology

3.1. Search Strategy and Selection

3.2. Inclusion and Exclusion Criteria

- Inclusion Criteria:

  - Publication Date: Articles published between January 1, 2021, and December 31, 2025, to capture the most recent advancements in LLM capabilities.

  - Relevance: Papers explicitly discussing "Retrieval-Augmented Generation," "Fine-Tuning," or "External Data Augmentation" in the context of Large Language Models.

  - Focus on Fidelity: Studies that include evaluations or discussions regarding data fidelity, factual consistency, hallucination mitigation, or information loss.

  - Source Type: Peer-reviewed journal articles and conference proceedings (e.g., ACL, NeurIPS, ICLR) to ensure academic rigor.

- Exclusion Criteria:

  - Language: Non-English publications.

  - Scope: Papers focusing solely on pre-training architectures without addressing external data integration.

  - Format: Opinion pieces, editorials, and non-peer-reviewed preprints (unless widely cited as seminal works in the field).

  - Redundancy: Duplicate studies or earlier versions of papers that have been subsequently published in journals.
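The inclusion and exclusion criteria above can be read as a screening predicate applied to each candidate record. The following is a minimal sketch under stated assumptions, not the review's actual tooling: the `Paper` record, its field names, and the `seminal_preprint` flag are hypothetical, and de-duplication of earlier versions is left out.

```python
from dataclasses import dataclass
from datetime import date

# Publication window taken from the inclusion criteria.
WINDOW_START = date(2021, 1, 1)
WINDOW_END = date(2025, 12, 31)


@dataclass
class Paper:
    """Hypothetical screening record; the field names are illustrative only."""
    title: str
    published: date
    language: str
    peer_reviewed: bool
    seminal_preprint: bool = False       # widely cited preprint exception
    discusses_augmentation: bool = True  # RAG, fine-tuning, or external data
    addresses_fidelity: bool = True      # fidelity, factuality, hallucination


def passes_screening(paper: Paper) -> bool:
    """Return True if the paper meets every inclusion rule and no exclusion rule."""
    in_window = WINDOW_START <= paper.published <= WINDOW_END
    acceptable_source = paper.peer_reviewed or paper.seminal_preprint
    return (
        in_window
        and paper.language == "English"
        and acceptable_source
        and paper.discusses_augmentation
        and paper.addresses_fidelity
    )


# Made-up candidates, used only to exercise the predicate.
candidates = [
    Paper("Peer-reviewed RAG study", date(2024, 5, 1), "English", True),
    Paper("Pre-window study", date(2020, 12, 31), "English", True),
    Paper("Ordinary preprint", date(2024, 5, 1), "English", False),
]
selected = [p.title for p in candidates if passes_screening(p)]
print(selected)
```

Encoding the criteria as a single predicate keeps the screening decision reproducible; judgement-based fields such as relevance would still need to be filled in by a human reviewer.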

4. Taxonomy of Augmentation Methodologies

4.1. Retrieval-Augmented Generation (RAG)

4.1.1. Naive RAG

4.1.2. Advanced RAG

- Query Transformation and Decomposition: Query Decomposition breaks a complex question into sub-queries, retrieves documents for each sub-query, and combines the retrieved information into a single answer. HyDE (Hypothetical Document Embeddings) takes a different approach: the LLM first generates a hypothetical answer, which is then used to guide retrieval, so that the semantic search aligns with the answer space rather than just the question space [27].

- Re-Ranking: A second-stage process that uses cross-encoders to score the retrieved documents for relevance. Unlike the bi-encoders used in the initial search, which embed the query and each document separately, cross-encoders process the query and document together, allowing a deeper analysis of relevance. RankRAG [28] demonstrates that re-ranking significantly reduces noise, which directly improves the factual accuracy of the generation by filtering out irrelevant "distractors."

- Knowledge Fusion: Methods like RAG-Fusion [29] generate multiple variants of the query and fuse the retrieved results using Reciprocal Rank Fusion (RRF). Combining diverse sets of retrieved documents provides a richer context, but the multiple retrieval operations increase the system’s latency.
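The fusion step named in the last item can be illustrated with a short sketch of Reciprocal Rank Fusion. This is a generic illustration rather than the RAG-Fusion implementation: the function name, the document IDs, and the smoothing constant `k=60` (a commonly used default) are assumptions made for the example.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document receives sum(1 / (k + rank)) over the lists in which it
    appears, with ranks starting at 1. The constant k is an assumed default.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical retrieval results for three rewritten versions of one query.
rankings = [
    ["doc_a", "doc_b", "doc_c"],
    ["doc_b", "doc_a", "doc_d"],
    ["doc_b", "doc_c", "doc_a"],
]
fused = reciprocal_rank_fusion(rankings)
print(fused)
```

Because RRF uses only rank positions, it can combine result lists whose raw similarity scores are not comparable. Each additional query variant adds one more retrieval call, which is where the latency cost comes from.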

4.2. Fine-Tuning Strategies

4.2.1. Knowledge Injection and Domain Adaptation

4.2.2. Parameter-Efficient Fine-Tuning (PEFT)

4.2.3. Continual Learning with New Data

4.3. Hybrid, Agentic, and Structured Approaches

4.3.1. Knowledge Graph Integration

4.3.2. Agentic Frameworks and Plugins

5. Evaluation Metrics for Fidelity

5.1. Retrieval-Level Metrics

5.2. Generation-Level Metrics

5.3. End-to-End Evaluation

5.4. Computational Efficiency of Evaluation

6. Comparative Analysis and Discussion

6.1. Accuracy vs. Factual Fidelity

6.2. Contextual Robustness and Recall

6.3. Interpretability and Safety (Exact Match)

6.4. When to Use What?

- Use RAG when: data changes frequently (news, stock prices); provenance, interpretability, and citation are required; or the knowledge base is vast (web-scale). RAG is essential for dynamic, "knowledge-intensive" tasks where output must be strictly verified.

- Use Fine-Tuning when: the domain is static (medical terminology, legal syntax); low latency is critical (fine-tuning eliminates retrieval steps); or the goal is stylistic adaptation or instruction following. Fine-tuning offers superior stylistic robustness and latency but poor interpretability.

- Use Hybrid when: high precision is required on complex, domain-specific tasks that demand both deep structural understanding (fine-tuning) and up-to-date facts (RAG).

7. Future Research Directions

7.1. Quantifying Data Fidelity

7.2. Continual Learning Without Forgetting

7.3. Privacy-Preserving Augmentation

7.4. Agentic Reliability and Safety

8. Conclusion

Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| API | Application Programming Interface |
| CL | Continual Learning |
| DP | Differential Privacy |
| EM | Exact Match |
| KG | Knowledge Graph |
| LLM | Large Language Model |
| LoRA | Low-Rank Adaptation |
| PEFT | Parameter-Efficient Fine-Tuning |
| RAG | Retrieval-Augmented Generation |
| ReAct | Reasoning and Acting |

References

- Zhang, Z.; Han, X.; Liu, Z.; Jiang, X.; Sun, M.; Liu, Q. ERNIE: Enhanced Language Representation with Informative Entities. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics; Korhonen, A., Traum, D., Màrquez, L., Eds.; Florence, Italy, 2019; pp. 1441–1451.

- Sun, Y.; Wang, S.; Feng, S.; Ding, S.; Pang, C.; Shang, J.; Liu, J.; Chen, X.; Zhao, Y.; Lu, Y.; et al. ERNIE 3.0: Large-scale Knowledge Enhanced Pre-training for Language Understanding and Generation. ArXiv 2021, abs/2107.02137.

- Xue, J.; Zheng, M.; Hu, Y.; Liu, F.; Chen, X.; Lou, Q. BadRAG: Identifying Vulnerabilities in Retrieval Augmented Generation of Large Language Models. CoRR 2024, abs/2406.00083.

- Su, W.; Tang, Y.; Ai, Q.; Wu, Z.; Liu, Y. DRAGIN: Dynamic Retrieval Augmented Generation based on the Real-time Information Needs of Large Language Models. 2024; pp. 12991–13013.

- Chauhan, P.; Sahani, R.K.; Datta, S.; Qadir, A.; Raj, M.; Ali, M.M. Evaluating Top-k RAG-based approach for Game Review Generation. In Proceedings of the 2024 IEEE International Conference on Computing, Power and Communication Technologies (IC2PCT), 2024; Volume 5, pp. 258–263.

- Wang, C.; Long, Q.; Xiao, M.; Cai, X.; Wu, C.; Meng, Z.; Wang, X.; Zhou, Y. BioRAG: A RAG-LLM Framework for Biological Question Reasoning. CoRR 2024, abs/2408.01107.

- Salemi, A.; Zamani, H. Evaluating Retrieval Quality in Retrieval-Augmented Generation. In Proceedings of the 47th International ACM SIGIR Conference on Research and Development in Information Retrieval, New York, NY, USA, 2024; SIGIR ’24, pp. 2395–2400.

- Spennemann, D.H.R. Generative Artificial Intelligence and the Future of Public Knowledge. Knowledge 2025, 5.

- Koo, M. ChatGPT Research: A Bibliometric Analysis Based on the Web of Science from 2023 to June 2024. Knowledge 2025, 5.

- Ovadia, O.; Brief, M.; Mishaeli, M.; Elisha, O. Fine-Tuning or Retrieval? Comparing Knowledge Injection in LLMs. In Proceedings of the Conference on Empirical Methods in Natural Language Processing, 2023.

- Gao, Y.; Xiong, Y.; Gao, X.; Jia, K.; Pan, J.; Bi, Y.; Dai, Y.; Sun, J.; Guo, Q.; Wang, M.; et al. Retrieval-Augmented Generation for Large Language Models: A Survey. CoRR 2023, abs/2312.10997.

- Azaria, A.; Mitchell, T. The Internal State of an LLM Knows When It’s Lying. arXiv 2023, arXiv:cs.

- Raj, G.; Hamzah; Raj, N.; Ranjan, N. Hacking LLMs: A Technical Analysis of Security Vulnerabilities and Defense Mechanisms. In Proceedings of the 2025 2nd International Conference on Computational Intelligence, Communication Technology and Networking (CICTN), 2025; pp. 555–560.

- Fasha, M.; Rub, F.A.; Matar, N.; Sowan, B.; Al Khaldy, M.; Barham, H. Mitigating the OWASP Top 10 For Large Language Models Applications using Intelligent Agents. In Proceedings of the 2024 2nd International Conference on Cyber Resilience (ICCR), 2024; pp. 1–9.

- Gummadi, V.; Udayaraju, P.; Sarabu, V.R.; Ravulu, C.; Seelam, D.R.; Venkataramana, S. Enhancing Communication and Data Transmission Security in RAG Using Large Language Models. In Proceedings of the 2024 4th International Conference on Sustainable Expert Systems (ICSES), 2024; pp. 612–617.

- M, L.; Agarwal, V.; Kamthania, S.; Vutkur, P.; M.C., S. Benchmarking LLM for Zero-day Vulnerabilities. In Proceedings of the 2024 IEEE International Conference on Electronics, Computing and Communication Technologies (CONECCT), 2024; pp. 1–6.

- Afsharmazayejani, R.; Shahmiri, M.M.; Link, P.; Pearce, H.; Tan, B. Toward Hardware Security Benchmarking of LLMs. In Proceedings of the 2024 IEEE LLM Aided Design Workshop (LAD), 2024; pp. 1–7.

- Rathod, V.; Nabavirazavi, S.; Zad, S.; Iyengar, S.S. Privacy and Security Challenges in Large Language Models. In Proceedings of the 2025 IEEE 15th Annual Computing and Communication Workshop and Conference (CCWC), 2025; pp. 00746–00752.

- Pan, Q.; Wu, J. Selective Privacy-Preserving Federated Learning for Large Language Model Fine-Tuning. In Proceedings of the 2025 International Wireless Communications and Mobile Computing (IWCMC), 2025; pp. 1626–1631.

- Gallegos, I.O.; Rossi, R.A.; Barrow, J.; Tanjim, M.M.; Kim, S.; Dernoncourt, F.; Yu, T.; Zhang, R.; Ahmed, N.K. Bias and Fairness in Large Language Models: A Survey. Computational Linguistics 2024, 50, 1097–1179. Available online: https://direct.mit.edu/coli/article-pdf/50/3/1097/2471010/coli_a_00524.pdf.

- Sharma, N.; Liao, Q.V.; Xiao, Z. Generative Echo Chamber? Effect of LLM-Powered Search Systems on Diverse Information Seeking. In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems, New York, NY, USA, 2024; CHI ’24.

- Sakurai, T.; Shiramatsu, S.; Kinoshita, R. LLM-based Agent for Recommending Information Related to Web Discussions at Appropriate Timing. In Proceedings of the 2024 IEEE International Conference on Agents (ICA), 2024; pp. 120–123.

- Zhang, W.; Liu, H.; Dong, Z.; Du, Y.; Zhu, C.; Song, Y.; Zhu, H.; Wu, Z. Bridging the Information Gap Between Domain-Specific Model and General LLM for Personalized Recommendation. In Proceedings of the Web and Big Data; Zhang, W., Tung, A., Zheng, Z., Yang, Z., Wang, X., Guo, H., Eds.; Singapore, 2024; pp. 280–294.

- Jin, B.; Yoon, J.; Han, J.; Arik, S.O. Long-Context LLMs Meet RAG: Overcoming Challenges for Long Inputs in RAG. arXiv 2024, arXiv:2410.05983.

- Jiang, Z.; Ma, X.; Chen, W. LongRAG: Enhancing Retrieval-Augmented Generation with Long-context LLMs. arXiv 2024, arXiv:cs.

- Rivera, J.; Zapata, S.; Pizarro, R.; Keith, B. Enhancing Chatbot Performance in a SaaS Platform Through Retrieval-Augmented Generation and Prompt Engineering: A Case Study in Behavioral Safety Analysis. Knowledge 2025, 5.

- Zhang, J.; Cui, W.; Huang, Y.; Das, K.; Kumar, S. Synthetic Knowledge Ingestion: Towards Knowledge Refinement and Injection for Enhancing Large Language Models. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing; Al-Onaizan, Y., Bansal, M., Chen, Y.N., Eds.; Miami, Florida, USA, 2024; pp. 21456–21473.

- Yu, Y.; Ping, W.; Liu, Z.; Wang, B.; You, J.; Zhang, C.; Shoeybi, M.; Catanzaro, B. RankRAG: unifying context ranking with retrieval-augmented generation in LLMs. In Proceedings of the 38th International Conference on Neural Information Processing Systems, Red Hook, NY, USA, 2025; NIPS ’24.

- Rackauckas, Z. Rag-Fusion: A New Take on Retrieval Augmented Generation. International Journal on Natural Language Computing 2024, 13, 37–47.

- Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; Wang, L.; Chen, W. LoRA: Low-Rank Adaptation of Large Language Models. In Proceedings of the International Conference on Learning Representations, 2022.

- Dong, H.; Xiong, W.; Goyal, D.; Pan, R.; Diao, S.; Zhang, J.; Shum, K.; Zhang, T. RAFT: Reward rAnked FineTuning for Generative Foundation Model Alignment. ArXiv 2023, abs/2304.06767.

- Yin, F.; Ye, X.; Durrett, G. LOFIT: localized fine-tuning on LLM representations. In Proceedings of the 38th International Conference on Neural Information Processing Systems, Red Hook, NY, USA, 2025; NIPS ’24.

- Tonolini, F.; Massiah, J.; Aletras, N.; Kazai, G. Multi-Fidelity Fine-Tuning of Pre-Trained Language Models. 2024.

- Pillai, S. Replay to Remember: Retaining Domain Knowledge in Streaming Language Models. arXiv 2025, arXiv:2504.17780.

- Hu, Y.; Lei, Z.; Zhang, Z.; Pan, B.; Ling, C.; Zhao, L. GRAG: Graph Retrieval-Augmented Generation. In Proceedings of the Findings of the Association for Computational Linguistics: NAACL 2025; Chiruzzo, L., Ritter, A., Wang, L., Eds.; Albuquerque, New Mexico, 2025; pp. 4145–4157.

- Peters, M.E.; Neumann, M.; Logan, R.; Schwartz, R.; Joshi, V.; Singh, S.; Smith, N.A. Knowledge Enhanced Contextual Word Representations. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP); Inui, K., Jiang, J., Ng, V., Wan, X., Eds.; Hong Kong, China, 2019; pp. 43–54.

- Saeid, Y.; Kopinski, T. AgentFusion: A Multi-Agent Approach to Accurate Text Generation. In Proceedings of the 2024 International Conference on Electrical and Computer Engineering Researches (ICECER), 2024; pp. 1–8.

- Parthasarathy, K.; Vaidhyanathan, K.; Dhar, R.; Krishnamachari, V.; Kakran, A.; Akshathala, S.; Arun, S.; Karan, A.; Muhammed, B.; Dubey, S.; et al. Engineering LLM Powered Multi-Agent Framework for Autonomous CloudOps. In Proceedings of the 2025 IEEE/ACM 4th International Conference on AI Engineering – Software Engineering for AI (CAIN), 2025; pp. 201–211.

- Afzal, A.; Vladika, J.; Fazlija, G.; Staradubets, A.; Matthes, F. Towards Optimizing a Retrieval Augmented Generation using Large Language Model on Academic Data. In Proceedings of the 2024 8th International Conference on Natural Language Processing and Information Retrieval, New York, NY, USA, 2025; NLPIR ’24, pp. 250–257.

- Roychowdhury, S.; Krema, M.; Mahammad, A.; Moore, B.; Mukherjee, A.; Prakashchandra, P. ERATTA: Extreme RAG for enterprise-Table To Answers with Large Language Models. In Proceedings of the 2024 IEEE International Conference on Big Data (BigData), 2024; pp. 4605–4610.

- Gekhman, Z.; Yona, G.; Aharoni, R.; Eyal, M.; Feder, A.; Reichart, R.; Herzig, J. Does Fine-Tuning LLMs on New Knowledge Encourage Hallucinations? In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing; Al-Onaizan, Y., Bansal, M., Chen, Y.N., Eds.; Miami, Florida, USA, 2024; pp. 7765–7784.

- Jiang, X.; Fang, Y.; Qiu, R.; Zhang, H.; Xu, Y.; Chen, H.; Zhang, W.; Zhang, R.; Fang, Y.; Ma, X.; et al. TC–RAG: Turing–Complete RAG’s Case study on Medical LLM Systems. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics; Che, W., Nabende, J., Shutova, E., Pilehvar, M.T., Eds.; Vienna, Austria, 2025; Volume 1, pp. 11400–11426.

- Ye, F.; Li, S.; Zhang, Y.; Chen, L. R2AG: Incorporating Retrieval Information into Retrieval Augmented Generation. arXiv 2024, arXiv:cs.

- Du, X.; Zheng, G.; Wang, K.; Feng, J.; Deng, W.; Liu, M.; Chen, B.; Peng, X.; Ma, T.; Lou, Y. Vul-RAG: Enhancing LLM-based Vulnerability Detection via Knowledge-level RAG. CoRR 2024, abs/2406.11147.

- Huang, Y.; Sun, L.; Wang, H.; Wu, S.; Zhang, Q.; Li, Y.; Gao, C.; Huang, Y.; Lyu, W.; Zhang, Y.; et al. Position: TRUSTLLM: trustworthiness in large language models. In Proceedings of the 41st International Conference on Machine Learning. JMLR.org, 2024; ICML’24.

- Hagen, M.; Völske, M.; Göring, S.; Stein, B. Axiomatic Result Re-Ranking. In Proceedings of the 25th ACM International on Conference on Information and Knowledge Management, New York, NY, USA, 2016; CIKM ’16, pp. 721–730.

- Dai, S.; Xu, C.; Xu, S.; Pang, L.; Dong, Z.; Xu, J. Bias and Unfairness in Information Retrieval Systems: New Challenges in the LLM Era. In Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2024; KDD ’24, pp. 6437–6447.

- Razuvayevskaya, O.; Wu, B.; Leite, J.A.; Heppell, F.; Srba, I.; Scarton, C.; Bontcheva, K.; Song, X. Comparison between parameter-efficient techniques and full fine-tuning: A case study on multilingual news article classification. PLOS ONE 2024, 19, e0301738.

- Wei, J.; Yang, C.; Song, X.; Lu, Y.; Hu, N.Z.; Huang, J.; Tran, D.; Peng, D.; Liu, R.; Huang, D.; et al. Long-form factuality in large language models. In Proceedings of the Thirty-eighth Annual Conference on Neural Information Processing Systems, 2024.

- Huang, C.W.; Chen, Y.N. FactAlign: Long-form Factuality Alignment of Large Language Models. arXiv 2024, arXiv:cs.

- Madabushi, H.T. FS-RAG: A Frame Semantics Based Approach for Improved Factual Accuracy in Large Language Models. arXiv 2024, arXiv:2406.16167.

- Wang, Y.; Wang, M.; Manzoor, M.A.; Liu, F.; Georgiev, G.N.; Das, R.J.; Nakov, P. Factuality of Large Language Models: A Survey. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing; Al-Onaizan, Y., Bansal, M., Chen, Y.N., Eds.; Miami, Florida, USA, 2024; pp. 19519–19529.

- He, X.; Tian, Y.; Sun, Y.; Chawla, N.V.; Laurent, T.; LeCun, Y.; Bresson, X.; Hooi, B. G-retriever: retrieval-augmented generation for textual graph understanding and question answering. In Proceedings of the 38th International Conference on Neural Information Processing Systems, Red Hook, NY, USA, 2025; NIPS ’24.

- Han, H.; Wang, Y.; Shomer, H.; Guo, K.; Ding, J.; Lei, Y.; Halappanavar, M.; Rossi, R.A.; Mukherjee, S.; Tang, X.; et al. Retrieval-Augmented Generation with Graphs (GraphRAG). arXiv 2025, arXiv:cs.

- Gao, L.; Ma, X.; Lin, J.; Callan, J. Precise Zero-Shot Dense Retrieval without Relevance Labels. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics; Rogers, A., Boyd-Graber, J., Okazaki, N., Eds.; Toronto, Canada, 2023; Volume 1, pp. 1762–1777.

- Tian, S.; Luo, Y.; Xu, T.; Yuan, C.; Jiang, H.; Wei, C.; Wang, X. KG-Adapter: Enabling Knowledge Graph Integration in Large Language Models through Parameter-Efficient Fine-Tuning. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2024; Ku, L.W., Martins, A., Srikumar, V., Eds.; Bangkok, Thailand, 2024; pp. 3813–3828.

- Raza, S.; Raval, A.; Chatrath, V. MBIAS: Mitigating Bias in Large Language Models While Retaining Context. In Proceedings of the 14th Workshop on Computational Approaches to Subjectivity, Sentiment, & Social Media Analysis; De Clercq, O., Barriere, V., Barnes, J., Klinger, R., Sedoc, J., Tafreshi, S., Eds.; Bangkok, Thailand, 2024; pp. 97–111.

- Wang, Y.; Shi, X.; Zhao, X. MLLM4Rec: multimodal information enhancing LLM for sequential recommendation. J. Intell. Inf. Syst. 2025, 63, 745–761.

- Lee, C.; Roy, R.; Xu, M.; Raiman, J.; Shoeybi, M.; Catanzaro, B.; Ping, W. NV-Embed: Improved Techniques for Training LLMs as Generalist Embedding Models. arXiv 2025, arXiv:cs.

- Bin Islam, S.; Rahman, M.; Hossain, K.; Hoque, E.; Joty, S.; Parvez, M.R. Open-RAG: Enhanced Retrieval Augmented Reasoning with Open-Source Large Language Models. 2024; pp. 14231–14244.

- Jiang, W.; Zhang, S.; Han, B.; Wang, J.; Wang, B.; Kraska, T. PipeRAG: Fast Retrieval-Augmented Generation via Adaptive Pipeline Parallelism. In Proceedings of the 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining V.1, New York, NY, USA, 2025; KDD ’25, pp. 589–600.

- Wang, L.; Chen, S.; Jiang, L.; Pan, S.; Cai, R.; Yang, S.; Yang, F. Parameter-efficient fine-tuning in large language models: a survey of methodologies. Artif. Intell. Rev. 2025, 58.

- Seo, S.; Noh, S.; Lee, J.; Lim, S.; Lee, W.H.; Kang, H. REVECA: adaptive planning and trajectory-based validation in cooperative language agents using information relevance and relative proximity. In Proceedings of the Thirty-Ninth AAAI Conference on Artificial Intelligence and Thirty-Seventh Conference on Innovative Applications of Artificial Intelligence and Fifteenth Symposium on Educational Advances in Artificial Intelligence, 2025; AAAI’25/IAAI’25/EAAI’25; AAAI Press.

- Chan, C.M.; Xu, C.; Yuan, R.; Luo, H.; Xue, W.; Guo, Y.; Fu, J. RQ-RAG: Learning to Refine Queries for Retrieval Augmented Generation. In Proceedings of the First Conference on Language Modeling, 2024.

- Wei Jie, Y.; Ferdinan, T.; Kazienko, P.; Satapathy, R.; Cambria, E. Self-training Large Language Models through Knowledge Detection. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2024; Al-Onaizan, Y., Bansal, M., Chen, Y.N., Eds.; Miami, Florida, USA, 2024; pp. 15033–15045.

- Cosme, D.; Galvao, A.; e Abreu, F.B. A Systematic Literature Review on LLM-Based Information Retrieval: The Issue of Contents Classification. In Proceedings of the International Conference on Knowledge Discovery and Information Retrieval, 2024.

- Choi, Y.; Asif, M.A.; Han, Z.; Willes, J.; Krishnan, R.G. Teaching LLMs How to Learn with Contextual Fine-Tuning. arXiv 2025, arXiv:2503.09032.

- Wu, Z.; Hao, Y.; Mou, L. ULPT: Prompt Tuning with Ultra-Low-Dimensional Optimization. arXiv 2025, arXiv:cs.

- Ning, L.; Liu, L.; Wu, J.; Wu, N.; Berlowitz, D.; Prakash, S.; Green, B.; O’Banion, S.; Xie, J. User-LLM: Efficient LLM Contextualization with User Embeddings. In Proceedings of the Companion Proceedings of the ACM on Web Conference 2025, New York, NY, USA, 2025; WWW ’25, pp. 1219–1223.

- Zhang, Y.; Wu, Y.; Hua, W.; Lu, X.; Hu, X. Understanding Dynamic Diffusion Process of LLM-based Agents under Information Asymmetry. arXiv 2025, arXiv:2502.13160.

- Li, Y.; Tan, Z.; Xiao, W. LLM for Uniform Information Extraction Using Multi-task Learning Optimization. In Proceedings of the Web and Big Data. APWeb-WAIM 2024 International Workshops; Zhang, W., Tung, A., Zheng, Z., Yang, Z., Wang, X., Guo, H., Eds.; Singapore, 2025; pp. 17–29.

- Cambon, A.; Hecht, B.; Edelman, B.; Ngwe, D.; Jaffe, S.; Heger, A.; Vorvoreanu, M.; Peng, S.; Hofman, J.; Farach, A.; et al. Early LLM-based Tools for Enterprise Information Workers Likely Provide Meaningful Boosts to Productivity; Technical Report MSR-TR-2023-43; Microsoft, 2023.

- Purohit, S.; Chin, G.; Mackey, P.S.; Cottam, J.A. GraphAide: Advanced Graph-Assisted Query and Reasoning System. In Proceedings of the 2024 IEEE International Conference on Big Data (BigData), 2024; pp. 3485–3493.

- Patel, V.; Tejani, P.; Parekh, J.; Huang, K.; Tan, X. Developing A Chatbot: A Hybrid Approach Using Deep Learning and RAG. In Proceedings of the 2024 IEEE/WIC International Conference on Web Intelligence and Intelligent Agent Technology (WI-IAT), 2024; pp. 273–280.

- Renney, H.; Nethercott, M.; Williams, O.; Evetts, J.; Lang, J. Reimagining the Data Landscape: A Multi-Agent Paradigm for Data Interfacing. In Proceedings of the 2025 8th International Conference on Data Science and Machine Learning Applications (CDMA), 2025; pp. 114–119.

- Xu, J.; Wang, J.; Leung, J.; Gu, J. GRASP: Municipal Budget AI Chatbots for Enhancing Civic Engagement. In Proceedings of the 2024 IEEE International Conference on Big Data (BigData), 2024; pp. 7438–7442.

- Xu, X.; Zhang, D.; Liu, Q.; Lu, Q.; Zhu, L. Agentic RAG with Human-in-the-Retrieval. In Proceedings of the 2025 IEEE 22nd International Conference on Software Architecture Companion (ICSA-C), 2025; pp. 498–502.

- Honnalli, R.; Farooq, J. LLM-Powered Agentic AI Approach to Securing EV Charging Systems Against Cyber Threats. In Proceedings of the 2025 IEEE 26th International Symposium on a World of Wireless, Mobile and Multimedia Networks (WoWMoM), 2025; pp. 266–274.

- Allan, K.; Azcona, J.; Sripada, S.; Leontidis, G.; Sutherland, C.A.M.; Phillips, L.H.; Martin, D. Stereotypical bias amplification and reversal in an experimental model of human interaction with generative artificial intelligence. R. Soc. Open Sci. 2025, 12, 241472.

- Mondal, D.; Lipizzi, C. Mitigating Large Language Model Bias: Automated Dataset Augmentation and Prejudice Quantification. Computers 2024, 13.

- Su, C.; Wen, J.; Kang, J.; Wang, Y.; Su, Y.; Pan, H.; Zhong, Z.; Shamim Hossain, M. Hybrid RAG-Empowered Multimodal LLM for Secure Data Management in Internet of Medical Things: A Diffusion-Based Contract Approach. IEEE Internet of Things Journal 2025, 12, 13428–13440.

- Lei, Y.; Ding, L.; Cao, Y.; Zan, C.; Yates, A.; Tao, D. Unsupervised Dense Retrieval with Relevance-Aware Contrastive Pre-Training. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2023; Rogers, A., Boyd-Graber, J., Okazaki, N., Eds.; Toronto, Canada, 2023; pp. 10932–10940.

- Shi, Y.; Zi, X.; Shi, Z.; Zhang, H.; Wu, Q.; Xu, M. Enhancing Retrieval and Managing Retrieval: A Four-Module Synergy for Improved Quality and Efficiency in RAG Systems. ArXiv 2024, abs/2407.10670.

- Yuan, X.J.; Guo, Q.; Dadson, Y.A.; Goodarzi, M.; Jung, J.; Dong, Y.; Albert, N.; Bennett Gayle, D.; Sharma, P.; Ogunbayo, O.T.; et al. A Review of Ethical Challenges in AI for Emergency Management. Knowledge 2025, 5.

- Tsoulos, I.G.; Charilogis, V. Gen2Gen: Efficiently Training Artificial Neural Networks Using a Series of Genetic Algorithms. Knowledge 2025, 5.

- Soudani, H.; Kanoulas, E.; Hasibi, F. Fine Tuning vs. Retrieval Augmented Generation for Less Popular Knowledge. In Proceedings of the 2024 Annual International ACM SIGIR Conference on Research and Development in Information Retrieval in the Asia Pacific Region, New York, NY, USA, 2024; SIGIR-AP 2024, pp. 12–22.

- Edge, D.; Trinh, H.; Cheng, N.; Bradley, J.; Chao, A.; Mody, A.N.; Truitt, S.; Larson, J. From Local to Global: A Graph RAG Approach to Query-Focused Summarization. ArXiv 2024, abs/2404.16130.

- Wang, S.; Xiang, Y. Research on Data Augmentation Techniques for Text Classification Based on Antonym Replacement and Random Swapping. In Proceedings of the International Conference on Modeling, Natural Language Processing and Machine Learning, New York, NY, USA, 2024; CMNM ’24, pp. 103–108.

- Liu, Y.; Cao, J.; Liu, C.; Ding, K.; Jin, L. Datasets for Large Language Models: A Comprehensive Survey. ArXiv 2024, abs/2402.18041.

- Mialon, G.; Dessì, R.; Lomeli, M.; Nalmpantis, C.; Pasunuru, R.; Raileanu, R.; Rozière, B.; Schick, T.; Dwivedi-Yu, J.; Celikyilmaz, A.; et al. Augmented Language Models: a Survey. arXiv 2023, arXiv:cs.

- Nia, N.; Amiri, A.; Luo, Y.; Kline, A. Ethical perspectives on deployment of large language model agents in biomedicine: a survey. AI and Ethics 2025, 6.

| Feature | Retrieval-Augmented Generation (RAG) | Fine-Tuning |
|---|---|---|
| Knowledge Updates | Directly updates the retrieval knowledge base, ensuring information remains current without retraining. Highly suitable for dynamic data. | Requires continuous retraining to update knowledge. Not suitable for adding new knowledge or for scenarios requiring rapid iteration. |
| Reducing Hallucinations | Inherently less prone to hallucinations since each answer is grounded in verifiable retrieved evidence. | Reduces hallucinations on specific domain data but may still exhibit severe hallucinations when faced with unfamiliar input. |
| Ethical and Privacy | Privacy concerns arise primarily from storing and retrieving text from external databases, which can be secured via access controls. | Ethical and privacy concerns arise from permanently embedding sensitive training content directly into the model’s parameters. |

| Paper | Methodology | Application / Benchmark | Key Results | Limitations & Future Scope |
|---|---|---|---|---|
| LoRA [30] | Freezes pre-trained weights and injects trainable low-rank matrices. | GPT-3 175B on RoBERTa/DeBERTa tasks. | Reduces trainable parameters by 10,000x while matching full fine-tuning (FFT) performance. | Rank r is a sensitive hyperparameter. |
| RAFT [31] | Rejection-sampling fine-tuning via reward-model ranking. | Generative alignment on LLaMA-7B. | More stable than PPO-based RLHF for alignment tasks. | Overhead of generating k samples during training. |
| LoFiT [32] | Fine-tunes a sparse subset (3-10%) of attention heads. | TruthfulQA on Llama2-7B. | Improves TruthfulQA accuracy from 62.2% to 74.4%. | Locating the optimal "locus" of heads is non-trivial. |
| Multi-Fidelity FT [33] | Sequential training: low-fidelity data first, then high-fidelity data. | Noise robustness benchmarks. | Outperforms naive mixing and leverages noisy data effectively. | Risk of catastrophic forgetting of the initial stage. |
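
The LoRA row above describes freezing a pre-trained weight matrix and training only two low-rank factors. A minimal sketch of the resulting parameter arithmetic for a single matrix follows. The function name `lora_param_counts` and the dimensions (4096 x 4096, rank 8) are illustrative assumptions, not values taken from [30]; the 10,000x figure in the table refers to a full-model setting and is not reproduced by this single-matrix example.

```python
def lora_param_counts(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Return (full, lora) trainable-parameter counts for one weight matrix.

    Full fine-tuning trains every entry of W (d_out x d_in).
    LoRA trains only B (d_out x rank) and A (rank x d_in), with W frozen.
    """
    if rank <= 0 or rank > min(d_out, d_in):
        raise ValueError("rank must satisfy 0 < rank <= min(d_out, d_in)")
    full = d_out * d_in           # all entries of W are trainable
    lora = rank * (d_out + d_in)  # entries of B plus entries of A
    return full, lora


# Hypothetical layer size, chosen only for illustration.
full, lora = lora_param_counts(4096, 4096, 8)
reduction = full / lora
print(f"full={full:,} lora={lora:,} reduction={reduction:.0f}x")
```

Because the LoRA count grows linearly in the rank while the full count is fixed, the reduction factor scales inversely with r, which is one reason the table lists the rank as a sensitive hyperparameter.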

| Paper | Methodology | Application / Benchmark | Key Results | Limitations & Future Scope |
|---|---|---|---|---|
| ERNIE [1] | Pre-training with KGs, fuses entity info via TransE. | Entity Typing, Relation Classification. | Significant improvements on knowledge-driven tasks. | Static knowledge injection requires costly retraining. |
| GRAG [35] | Retrieves k-hop ego-graphs, "soft pruning". | Graph QA (WebQSP). | Outperforms standard RAG by preserving topological structure. | Subgraph retrieval is computationally intensive. |
| BIORAG [6] | Domain-Specific RAG, MeSH hierarchy refinement. | Biomedical QA. | 73-90% accuracy on medical datasets. | Highly domain-coupled maintenance. |
| AgentFusion [37] | Multi-agent collaboration for retrieval/validation. | Technical documentation. | Significantly reduces hallucinations in non-English contexts. | High complexity in agent orchestration. |

| Dataset | Runtime E2E (s) | Runtime eRAG (s) | Memory E2E (GB) | Memory eRAG-Doc (GB) |
|---|---|---|---|---|
| Natural Qs (NQ) | 918 | 351 | 75.0 | 1.5 |
| TriviaQA | 1819 | 686 | 46.2 | 1.5 |
| HotpotQA | 1844 | 712 | 52.4 | 1.5 |
| FEVER | 3395 | 1044 | 66.5 | 1.5 |
| Wizard of Wiki | 912 | 740 | 47.9 | 1.5 |
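
The runtime and memory figures above are easier to compare as ratios. The short sketch below derives the speedup (E2E runtime divided by eRAG runtime) and the memory reduction factor for each dataset, using only the numbers in the table; the names `ROWS` and `efficiency_ratios` are illustrative.

```python
# (dataset, E2E runtime s, eRAG runtime s, E2E memory GB, eRAG memory GB)
# Values are copied from the table above.
ROWS = [
    ("Natural Qs (NQ)", 918, 351, 75.0, 1.5),
    ("TriviaQA", 1819, 686, 46.2, 1.5),
    ("HotpotQA", 1844, 712, 52.4, 1.5),
    ("FEVER", 3395, 1044, 66.5, 1.5),
    ("Wizard of Wiki", 912, 740, 47.9, 1.5),
]


def efficiency_ratios(rows):
    """Map dataset -> (runtime speedup, memory reduction factor)."""
    return {
        name: (round(e2e_s / erag_s, 2), round(e2e_gb / erag_gb, 1))
        for name, e2e_s, erag_s, e2e_gb, erag_gb in rows
    }


ratios = efficiency_ratios(ROWS)
for name, (speedup, mem_factor) in ratios.items():
    print(f"{name:16s} speedup={speedup:.2f}x memory={mem_factor:.1f}x")
```

On these figures the runtime speedup ranges from about 1.23x (Wizard of Wiki) to about 3.25x (FEVER), while the memory reduction is between roughly 31x and 50x.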

| Paradigm | Accuracy & Factual Fidelity | Robustness (to noise/decay) | Latency & Cost | Interpretability & Provenance |
|---|---|---|---|---|
| Naive RAG | High for retrieved facts, prone to hallucination if retrieval fails. | Low, highly susceptible to irrelevant "distractor" documents. | Medium, depends on vector search speed. | High, directly traces output to source chunks. |
| Advanced RAG (e.g., RankRAG) | Very High, re-ranking filters noise before generation. | High, robust against poorly phrased queries via query expansion. | High, re-ranking and multi-queries add significant delay. | High, maintains clear provenance to filtered sources. |
| Fine-Tuning (PEFT) | Medium, good for stylistic alignment but struggles with novel facts. | Low to Medium, prone to catastrophic forgetting over time. | Low, no retrieval step required at inference. | Low, knowledge is parametrically embedded (black-box). |
| Hybrid / Agentic | Very High, combines parametric reasoning with external facts. | Very High, agents can verify and self-correct retrieved data. | Very High, multiple LLM calls and tool uses required. | Medium to High, execution traces provide some transparency. |

| Framework | Target Domain | Evaluation Dataset | Evaluation Metric | Reported Performance |
|---|---|---|---|---|
| TC-RAG [42] | Medical QA | MMCU-Medical | Exact Match (EM) | 83.15% |
| R2AG [43] | Open-domain QA | NQ-10 | Exact Match (EM) | 69.30% |
| VUL-RAG [44] | Software Security | PairVul | Precision | 61.00% |
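
Two of the rows above report Exact Match (EM). As a rough illustration of how such a score is commonly computed, the sketch below uses SQuAD-style answer normalisation (lowercasing, stripping punctuation and English articles). This normalisation is an assumption; the cited works may normalise answers differently, and the function names are hypothetical.

```python
import re
import string


def normalize(text: str) -> str:
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, references: list[str]) -> bool:
    """True if the normalised prediction equals any normalised reference."""
    pred = normalize(prediction)
    return any(pred == normalize(ref) for ref in references)


def em_score(predictions: list[str], references: list[list[str]]) -> float:
    """Percentage of predictions that exactly match a reference answer."""
    if not predictions or len(predictions) != len(references):
        raise ValueError("need equally sized, non-empty inputs")
    hits = sum(exact_match(p, refs) for p, refs in zip(predictions, references))
    return 100.0 * hits / len(predictions)


# Toy data, invented purely for illustration.
preds = ["The Eiffel Tower", "Paris.", "1990"]
golds = [["Eiffel Tower"], ["paris"], ["1989"]]
score = em_score(preds, golds)
print(f"EM = {score:.2f}%")
```

EM is strict by design: a prediction that is correct but phrased differently from every reference counts as a miss, so EM figures across different datasets and normalisation schemes are not directly comparable.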

| Training Dataset Variant | HighlyKnown (Test) | MaybeKnown (Test) | WeaklyKnown (Test) | Unknown (Test) |
|---|---|---|---|---|
| Early Stopping (Optimal Generalisation) | | | | |
| Trained on | 98.7% | 60.1% | 9.0% | 0.6% |
| Trained on | 95.6% | 52.9% | 6.5% | 0.6% |
| Convergence (Overfitting on New Knowledge) | | | | |
| Trained on | 98.4% | 58.8% | 8.5% | 0.7% |
| Trained on | 55.8% | 36.6% | 12.2% | 3.2% |

| Model Architecture | RAG Only (Ideal) | Fine-Tuning (PEFT) Only | Hybrid Approach |
|---|---|---|---|
| StableLM2 (1.6B) | 0.761 | 0.217 | 0.821 |
| Llama3 (8B) | 0.813 | 0.569 | 0.833 |

| Model / Method | Dataset | Metric | Score (%) |
|---|---|---|---|
| LONGRAG [25] | Natural Questions (NQ) | Answer Recall@1 | 71.7 |
| LONGRAG [25] | HotpotQA | Answer Recall@2 | 72.5 |
| RankRAG (8B) [28] | TriviaQA | Recall@5 | 93.2 |
| RankRAG (8B) [28] | TriviaQA | Recall@10 | 95.4 |
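
The retrieval scores above are recall-at-k figures. One common reading of answer recall@k is the percentage of questions for which at least one gold answer string appears in the top-k retrieved passages. The sketch below implements that reading with case-insensitive substring matching, which is an assumption; the cited works may define or match answers differently, and `answer_recall_at_k` is a hypothetical name.

```python
def answer_recall_at_k(
    retrieved: list[list[str]], answers: list[list[str]], k: int
) -> float:
    """Percentage of questions whose top-k passages contain a gold answer.

    retrieved[i] is the ranked passage list for question i;
    answers[i] is the list of acceptable answer strings for question i.
    """
    if k <= 0:
        raise ValueError("k must be positive")
    if not retrieved or len(retrieved) != len(answers):
        raise ValueError("need equally sized, non-empty inputs")
    hits = 0
    for passages, golds in zip(retrieved, answers):
        top_k = [p.lower() for p in passages[:k]]
        # A question counts as a hit if any gold answer occurs in any top-k passage.
        if any(g.lower() in p for g in golds for p in top_k):
            hits += 1
    return 100.0 * hits / len(retrieved)


# Toy data, invented purely for illustration.
retrieved = [
    ["Paris is the capital of France.", "Lyon is a city in France."],
    ["Berlin is in Germany.", "The Rhine flows through Cologne."],
]
answers = [["Paris"], ["Cologne"]]
r1 = answer_recall_at_k(retrieved, answers, k=1)
r2 = answer_recall_at_k(retrieved, answers, k=2)
print(f"recall@1={r1:.1f}% recall@2={r2:.1f}%")
```

Under this definition recall can only stay equal or increase as k grows, which is consistent with the Recall@5 and Recall@10 rows for the same model and dataset.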

| Model Architecture | Multi-Choice (MC) | Open QA | Dialogue (KGD) | Summarisation |
|---|---|---|---|---|
| GPT-4 | 0.835 | 0.320 | 0.150 | 0.760 |
| ChatGPT (GPT-3.5) | 0.557 | 0.500 | 0.430 | 0.630 |
| ChatGLM2 | 0.557 | 0.600 | 0.500 | 0.510 |
| Llama2-70B | 0.256 | 0.370 | 0.440 | 0.540 |
| Mistral-7B | 0.412 | 0.480 | 0.450 | 0.490 |
| Vicuna-33B | 0.412 | 0.410 | 0.420 | 0.450 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).