Submitted: 08 April 2026

Posted: 09 April 2026


Abstract

Keywords:

1. Introduction

- We design a sparse self-prompt mechanism based on vision foundation models to achieve strong generalization for in-the-wild stereo matching.

- For stereo matching, we investigate whether each token produced by a vision foundation model can represent the coordinate information of all pixels within its original patch.

- We collect a set of in-the-wild stereo pairs and evaluate our model on more than eight public datasets; the results highlight its potential for direct deployment in real-world stereo perception systems.
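The second contribution concerns how ViT-style tokens relate to pixel coordinates. The sketch below is not the authors' implementation: it assumes a square patch size of 14 pixels (the value used by the DINOv2 ViT backbones [9]), and all helper names are hypothetical. It shows which pixels a single token covers and the within-patch offset that a token would have to encode implicitly to support pixel-accurate matching.

```python
"""Hypothetical sketch: how ViT-style patch tokens relate to pixel coordinates.

Assumes a patch size of 14 pixels (as in DINOv2 ViT backbones); the helper
names are illustrative and are not taken from the paper.
"""

PATCH = 14  # assumed ViT patch size in pixels


def token_of_pixel(x: int, y: int, patch: int = PATCH) -> tuple[int, int]:
    """Return the (row, col) grid position of the token whose patch contains pixel (x, y)."""
    return y // patch, x // patch


def offset_in_patch(x: int, y: int, patch: int = PATCH) -> tuple[int, int]:
    """Return the (dy, dx) position of pixel (x, y) inside its patch.

    One token is shared by all patch * patch pixels, so this offset is the
    information a token would need to encode implicitly for pixel-level matching.
    """
    return y % patch, x % patch


def pixels_of_token(row: int, col: int, patch: int = PATCH) -> list[tuple[int, int]]:
    """List every (x, y) pixel covered by the token at grid position (row, col)."""
    return [
        (col * patch + dx, row * patch + dy)
        for dy in range(patch)
        for dx in range(patch)
    ]


def disparity_in_tokens(disparity_px: float, patch: int = PATCH) -> float:
    """Express a horizontal disparity given in pixels in units of token columns."""
    return disparity_px / patch


if __name__ == "__main__":
    x, y = 100, 37
    print(token_of_pixel(x, y))        # (2, 7)
    print(offset_in_patch(x, y))       # (9, 2)
    print(len(pixels_of_token(2, 7)))  # 196
    print(disparity_in_tokens(7.0))    # 0.5
```

Under this assumed patch size, a 7-pixel disparity shifts a match by only half a token column, so the sub-patch offset cannot be read from token positions alone.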

2. Related Work

3. Methodology

3.1. Overview

3.2. Model Architecture

3.3. Loss Functions

4. Experiments

4.1. Datasets & Evaluation Metrics

4.2. Main Properties

4.3. Comparisons

5. Discussion

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


Appendix A. Implementation Details

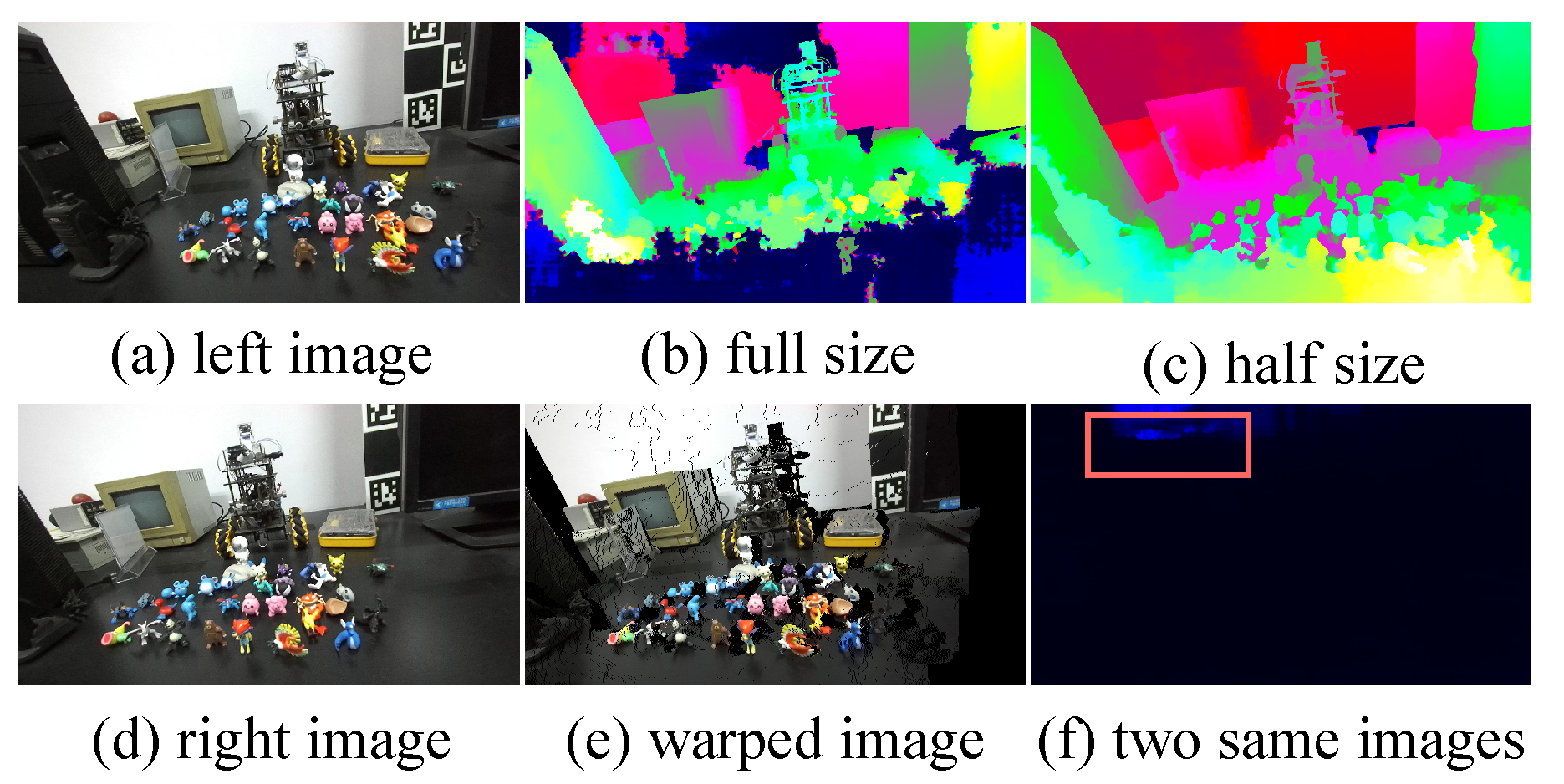

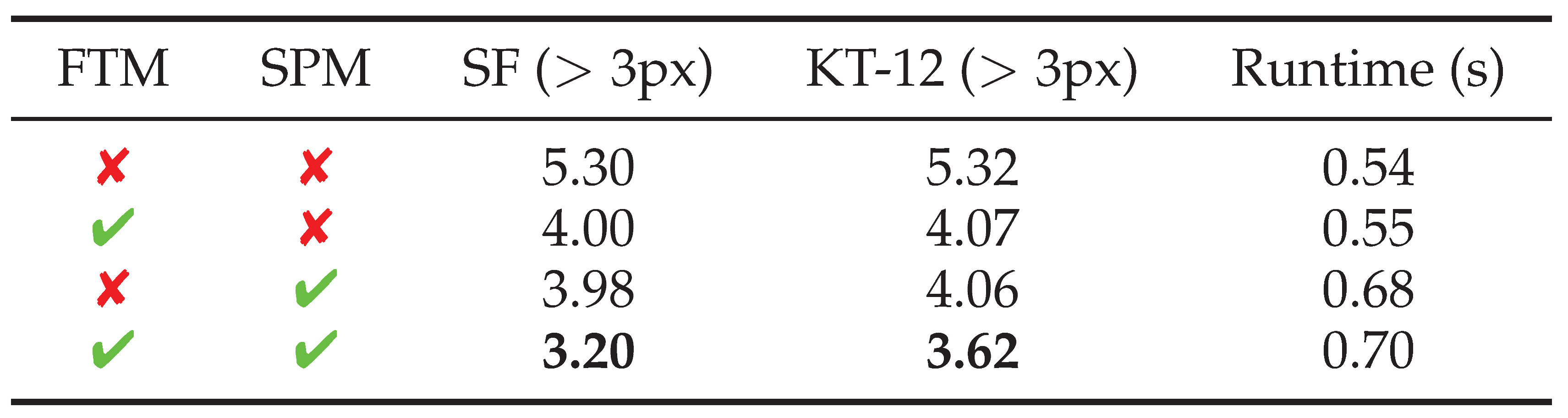

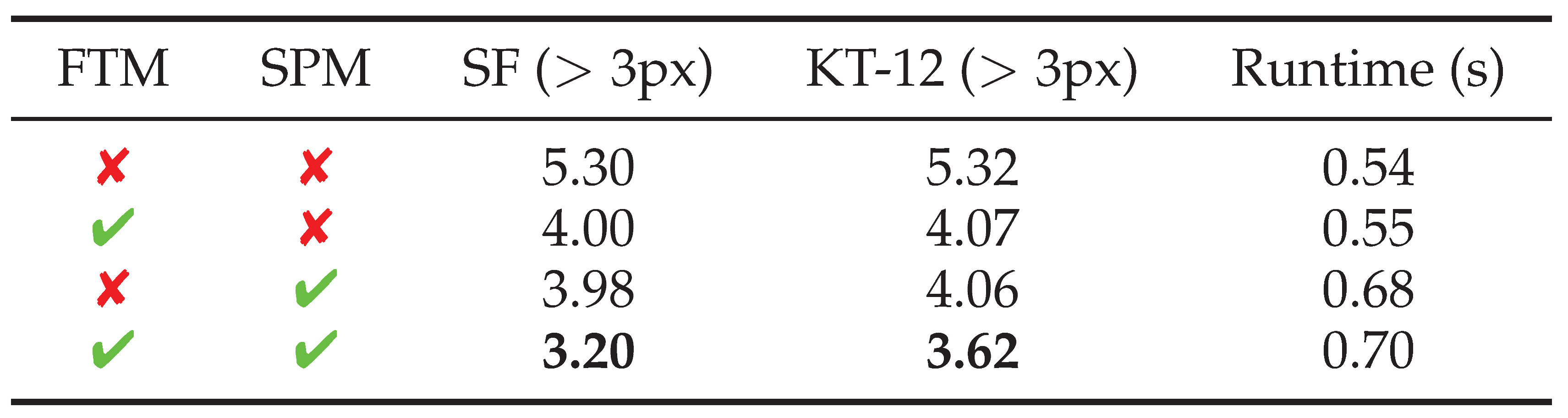

Appendix B. Additional Experiments

| Pre-trained dataset | KT-12 (px) | KT-15 (px) | MB (px) | ET (px) |
|---|---|---|---|---|
| SceneFlow [11] | | | | |
| CREStereo [10] | | | | |

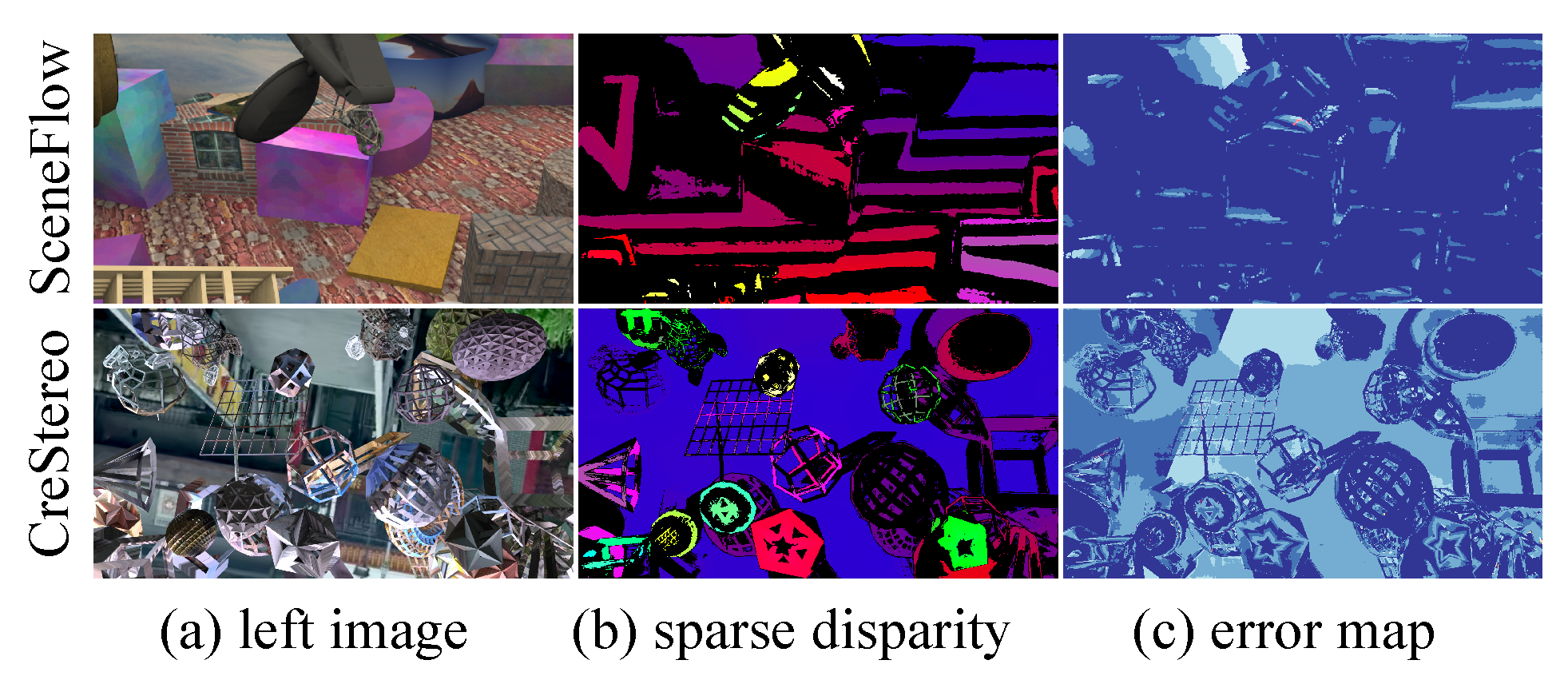

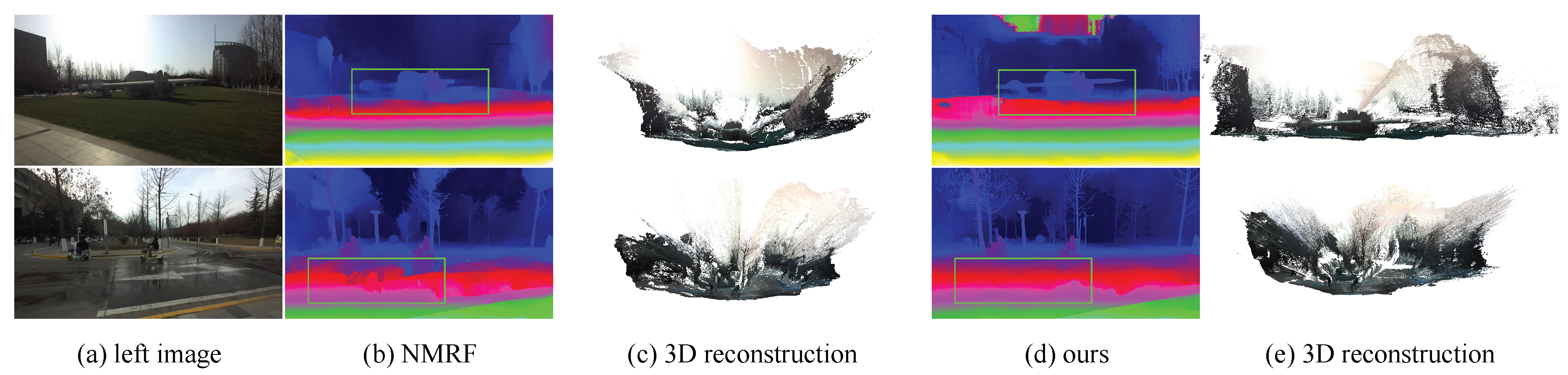

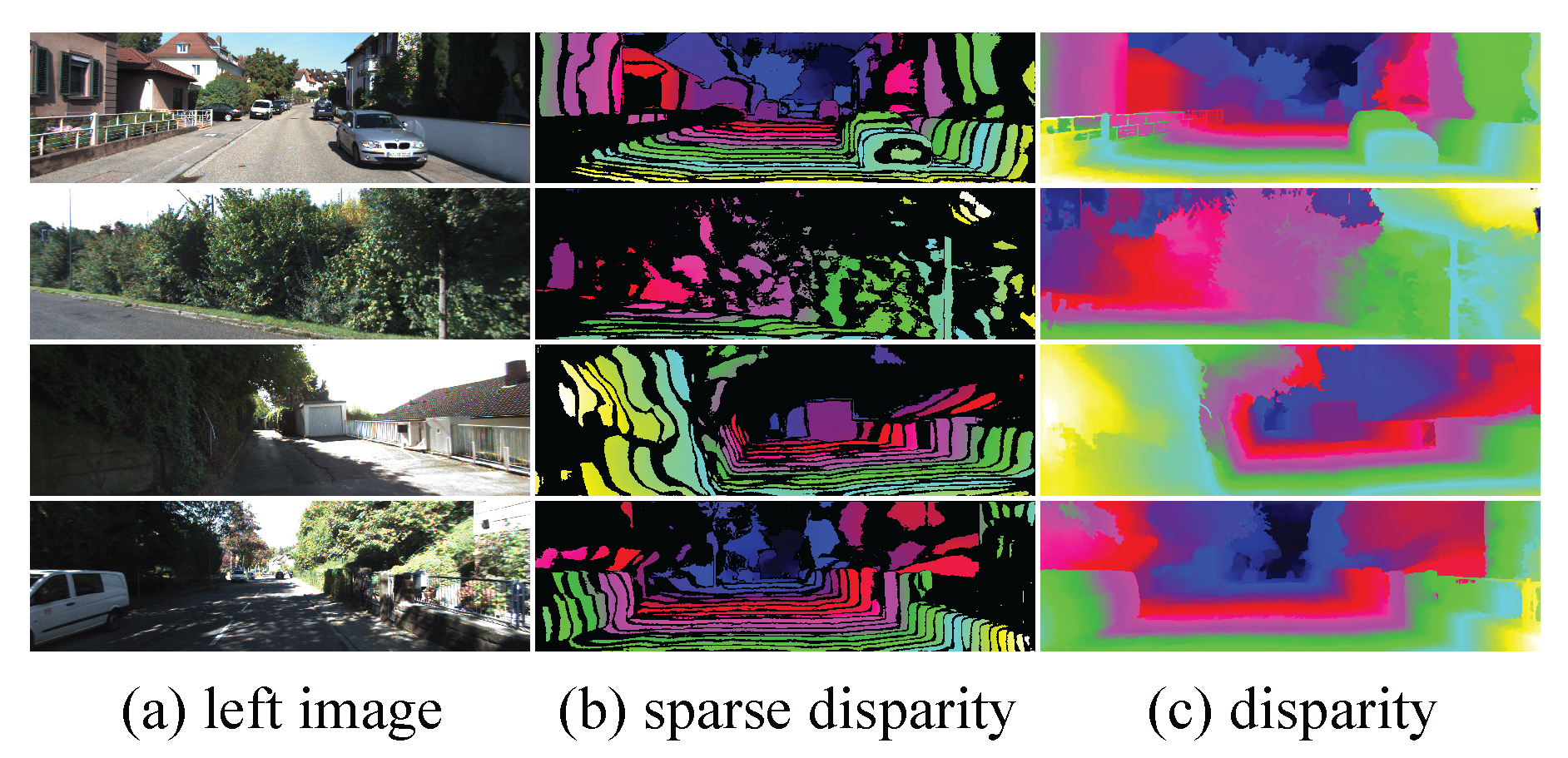

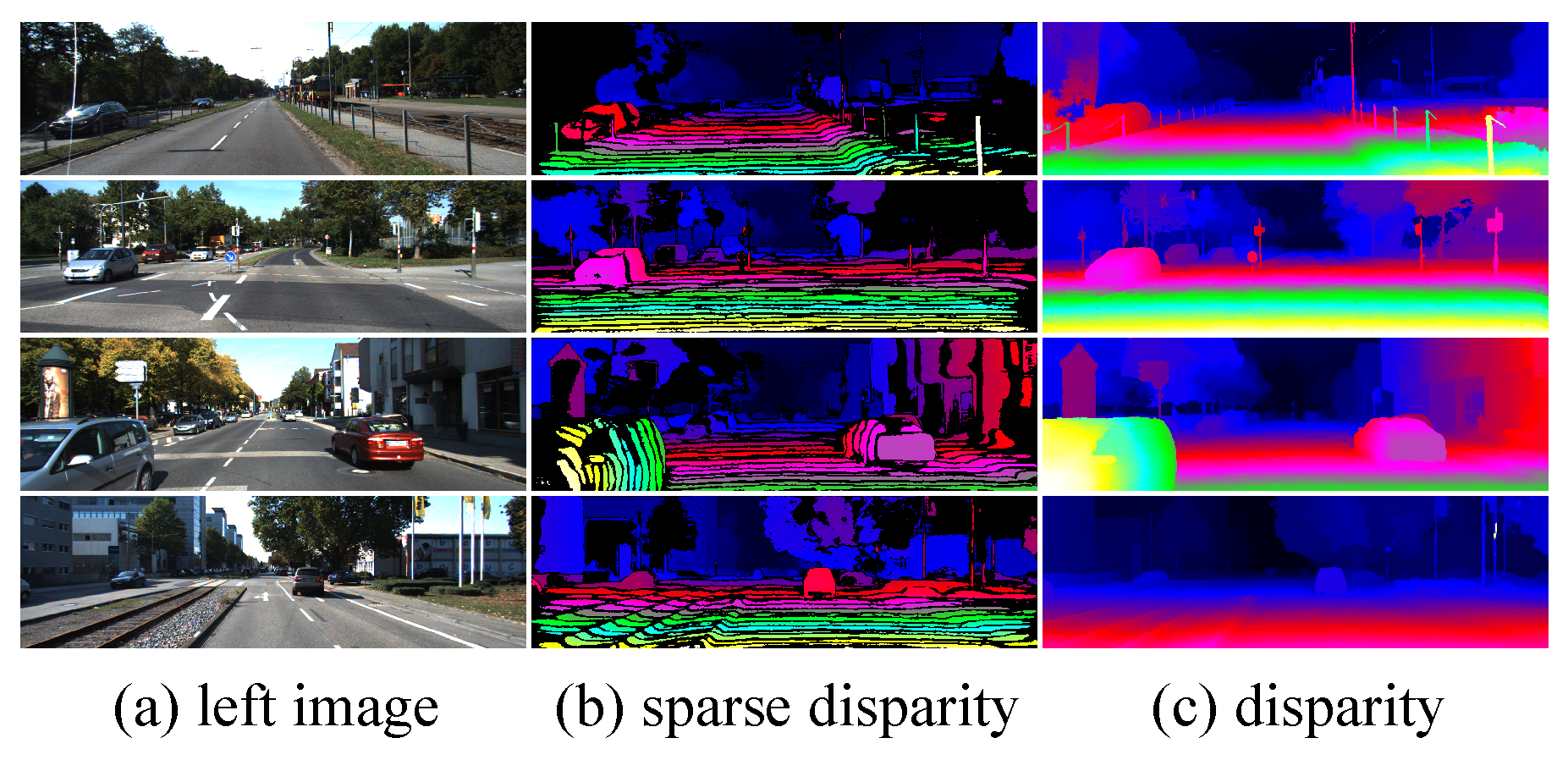

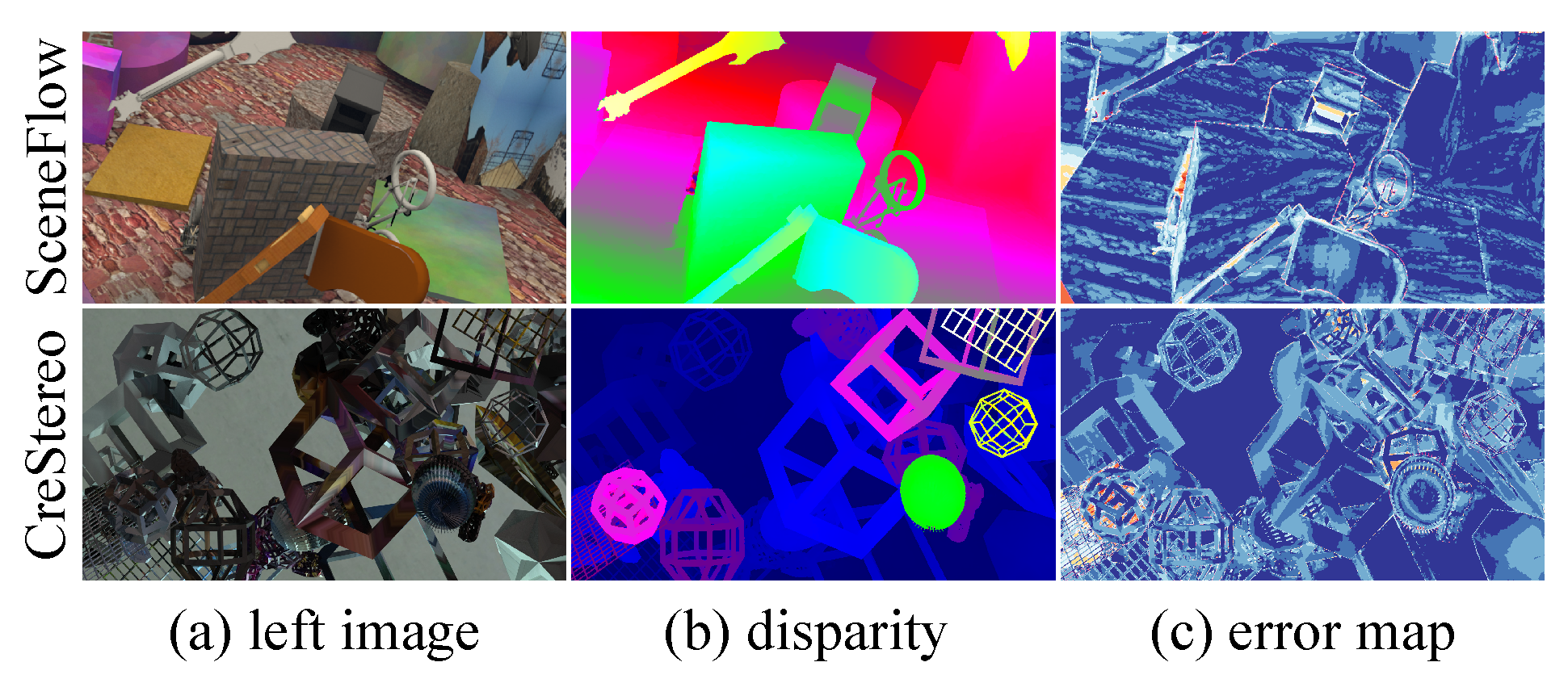

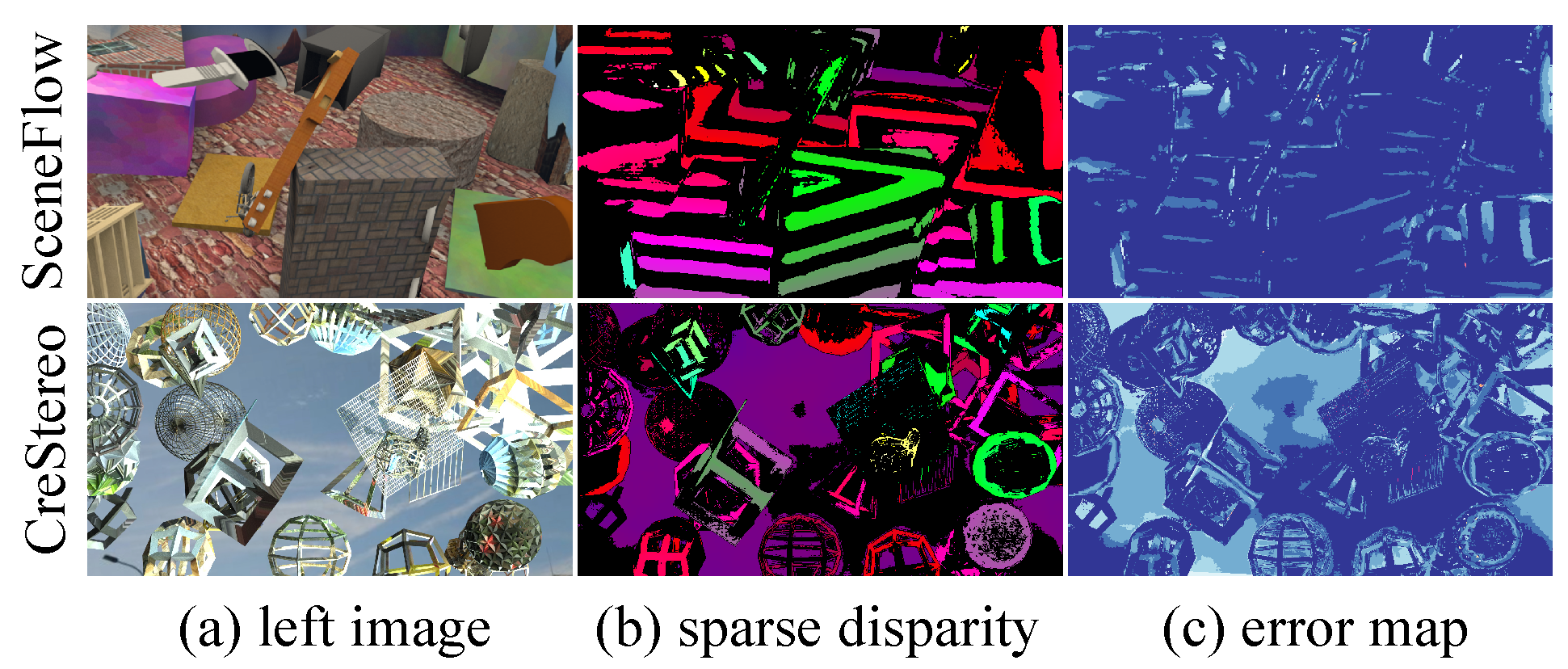

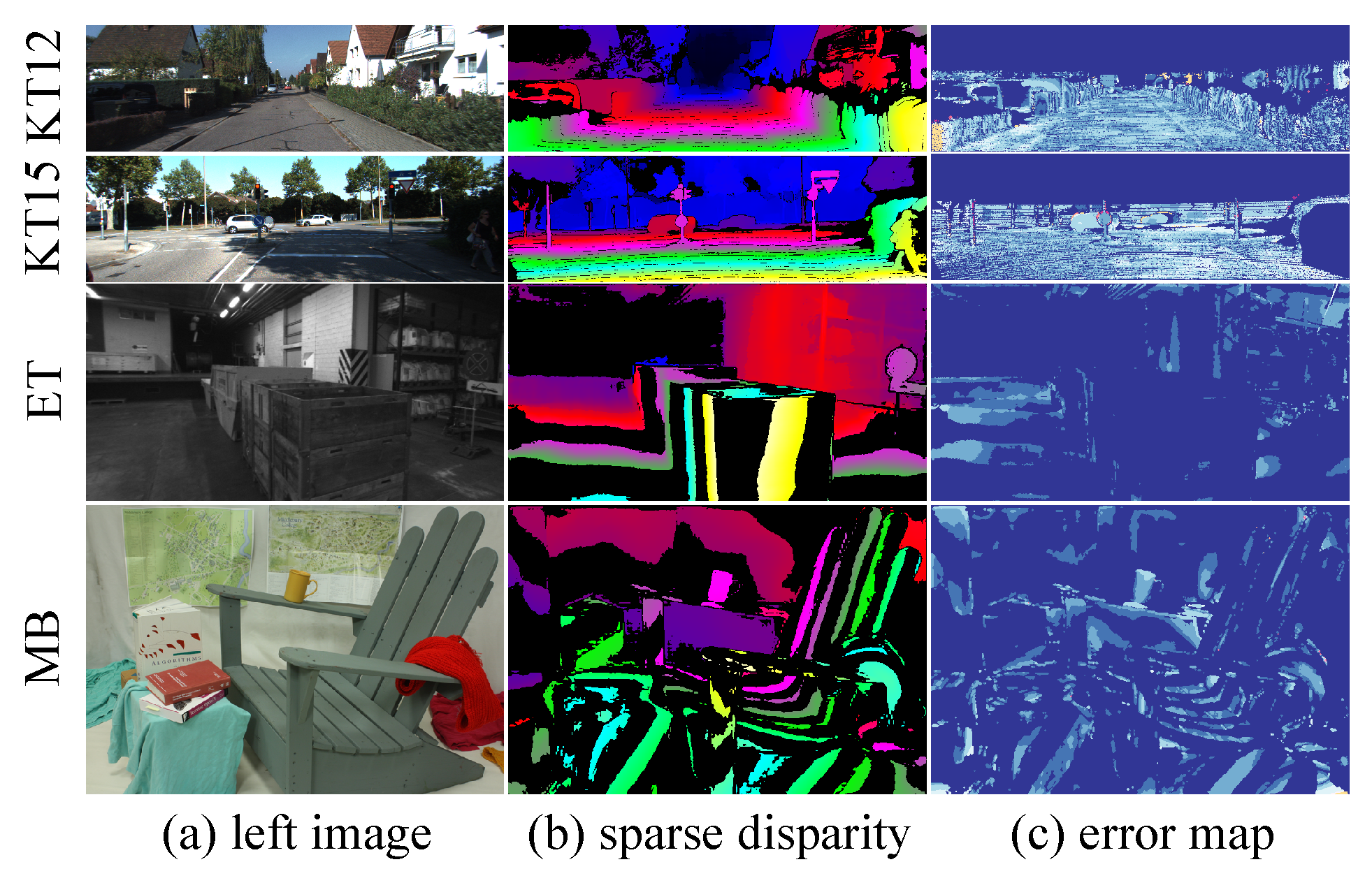

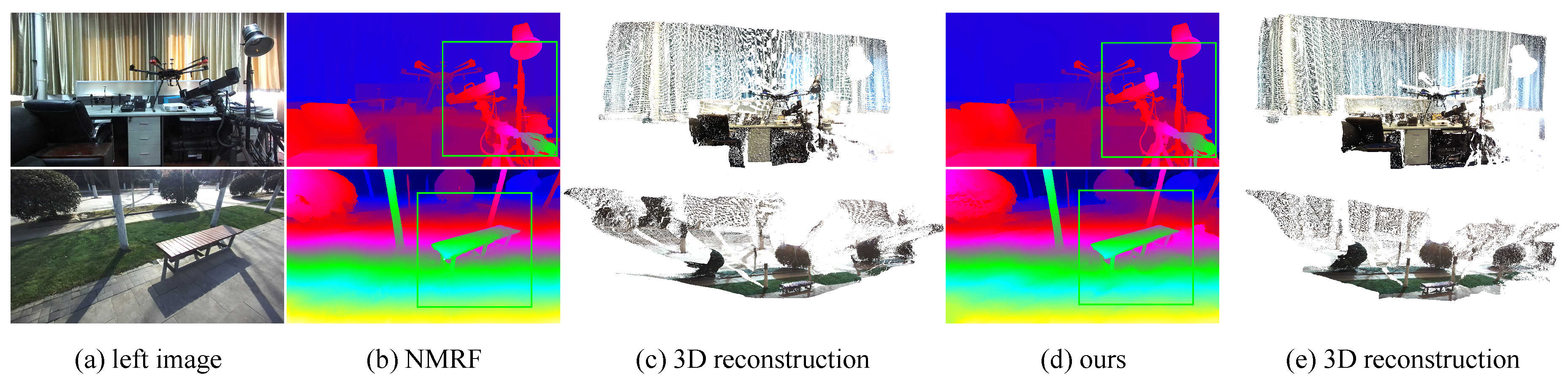

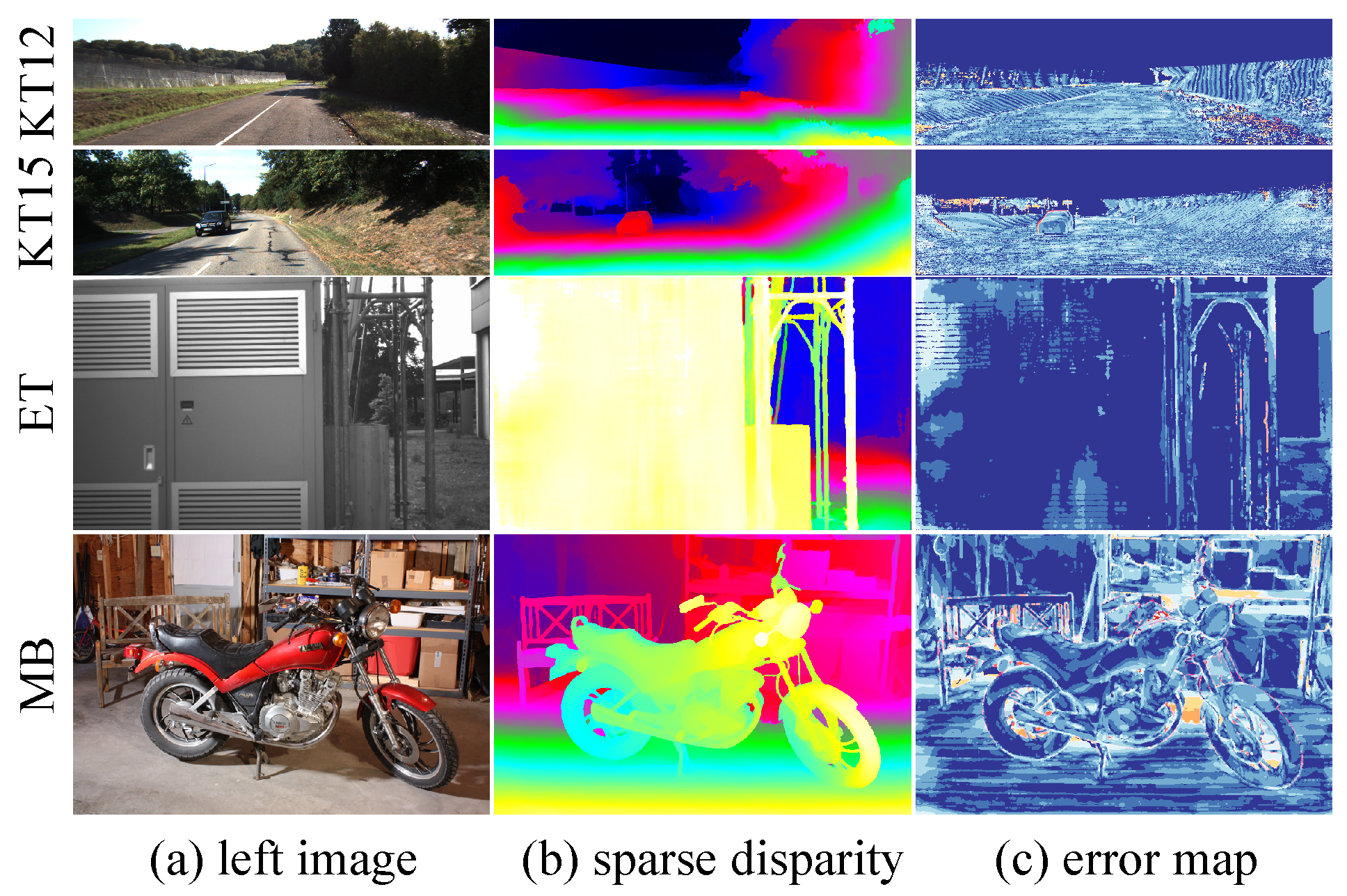

Appendix C. More Visualization Results

References

- Chen, C.; Zhao, L.; He, Y.; Long, Y.; Chen, K.; Wang, Z.; Hu, Y.; Sun, X. SemStereo: Semantic-Constrained Stereo Matching Network for Remote Sensing. Proceedings of the AAAI Conference on Artificial Intelligence (AAAI) 2025, 39, 15758–15766.

- Rao, Z.; Dai, Y.; Shen, Z.; He, R. Rethinking Training Strategy in Stereo Matching. IEEE Transactions on Neural Networks and Learning Systems (TNNLS) 2023, 34, 7796–7809.

- Liang, Z.; Li, C. Any-Stereo: Arbitrary-Scale Disparity Estimation for Iterative Stereo Matching. Proceedings of the AAAI Conference on Artificial Intelligence (AAAI) 2024, 38, 3333–3341.

- Li, X.; Zhang, C.; Su, W.; Tao, W. II-Net: Implicit Intra-Inter Information Fusion for Real-Time Stereo Matching. Proceedings of the AAAI Conference on Artificial Intelligence (AAAI) 2024, 38, 3225–3233.

- Zhou, J.; Zhang, H.; Yuan, J.; Ye, P.; Chen, T.; Jiang, H.; Chen, M.; Zhang, Y. All-in-One: Transferring Vision Foundation Models into Stereo Matching. Proceedings of the AAAI Conference on Artificial Intelligence (AAAI) 2025, 39, 10797–10805.

- Yang, L.; Kang, B.; Huang, Z.; Xu, X.; Feng, J.; Zhao, H. Depth Anything: Unleashing the Power of Large-Scale Unlabeled Data. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024; pp. 10371–10381.

- Kirillov, A.; Mintun, E.; Ravi, N.; Mao, H.; Rolland, C.; Gustafson, L.; Xiao, T.; Whitehead, S.; Berg, A.C.; Lo, W.Y.; et al. Segment Anything. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023; pp. 4015–4026.

- Ruan, J.; Weng, H.; Yuan, Z.; Jin, G.; Zhou, L. Lightweight Stereo Vision for Obstacle Detection and Range Estimation in Micro-Mobility Vehicles. Sensors 2026, 26, 1988.

- Oquab, M.; Darcet, T.; Moutakanni, T.; Vo, H.; Szafraniec, M.; Khalidov, V.; Fernandez, P.; Haziza, D.; Massa, F.; El-Nouby, A.; et al. DINOv2: Learning Robust Visual Features without Supervision. arXiv 2023. arXiv:2304.07193.

- Li, J.; Wang, P.; Xiong, P.; Cai, T.; Yan, Z.; Yang, L.; Liu, J.; Fan, H.; Liu, S. Practical Stereo Matching via Cascaded Recurrent Network with Adaptive Correlation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2022; pp. 16263–16272.

- Mayer, N.; Ilg, E.; Hausser, P.; Fischer, P.; Cremers, D.; Dosovitskiy, A.; Brox, T. A Large Dataset to Train Convolutional Networks for Disparity, Optical Flow, and Scene Flow Estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2016; pp. 4040–4048.

- Geiger, A.; Lenz, P.; Urtasun, R. Are We Ready for Autonomous Driving? The KITTI Vision Benchmark Suite. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2012; pp. 3354–3361.

- Menze, M.; Geiger, A. Object Scene Flow for Autonomous Vehicles. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2015; pp. 3061–3070.

- Schöps, T.; Schönberger, J.L.; Galliani, S.; Sattler, T.; Schindler, K.; Pollefeys, M.; Geiger, A. A Multi-View Stereo Benchmark with High-Resolution Images and Multi-Camera Videos. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2017; pp. 3260–3269.

- Scharstein, D.; Hirschmüller, H.; Kitajima, Y.; Krathwohl, G.; Nešić, N.; Wang, X.; Westling, P. High-Resolution Stereo Datasets with Subpixel-Accurate Ground Truth. In Proceedings of the German Conference on Pattern Recognition, 2014; pp. 31–42.

- Wang, Y.; Wang, L.; Yang, J.; An, W.; Guo, Y. Flickr1024: A Large-Scale Dataset for Stereo Image Super-Resolution. In Proceedings of the International Conference on Computer Vision Workshops (ICCVW), 2019; pp. 3852–3857.

- Kendall, A.; Martirosyan, H.; Dasgupta, S.; Henry, P.; Kennedy, R.; Bachrach, A.; Bry, A. End-to-End Learning of Geometry and Context for Deep Stereo Regression. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2017; pp. 66–75.

- Shen, Z.; Dai, Y.; Rao, Z. CFNet: Cascade and Fused Cost Volume for Robust Stereo Matching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2021; pp. 13906–13915.

- Zhao, H.; Zhou, H.; Zhang, Y.; Chen, J.; Yang, Y.; Zhao, Y. High-Frequency Stereo Matching Network. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023; pp. 1327–1336.

- Guan, T.; Wang, C.; Liu, Y.H. Neural Markov Random Field for Stereo Matching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024; pp. 5459–5469.

- Wang, X.; Xu, G.; Jia, H.; Yang, X. Selective-Stereo: Adaptive Frequency Information Selection for Stereo Matching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024; pp. 19701–19710.

- Zhou, J.; Ye, P.; Zhang, H.; Yuan, J.; Qiang, R.; YangChenXu, L.; Cailin, W.; Xu, F.; Chen, T. Consistency-Aware Self-Training for Iterative-Based Stereo Matching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025; pp. 16641–16650.

- Zhang, J.; Wang, X.; Bai, X.; Wang, C.; Huang, L.; Chen, Y.; Gu, L.; Zhou, J.; Harada, T.; Hancock, E.R. Revisiting Domain Generalized Stereo Matching Networks from a Feature Consistency Perspective. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2022; pp. 13001–13011.

- Rao, Z.; Xiong, B.; He, M.; Dai, Y.; He, R.; Shen, Z.; Li, X. Masked Representation Learning for Domain Generalized Stereo Matching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023; pp. 5435–5444.

- Zhang, Y.; Wang, L.; Li, K.; Wang, Y.; Guo, Y. Learning Representations from Foundation Models for Domain Generalized Stereo Matching. In Proceedings of the European Conference on Computer Vision (ECCV), 2024; pp. 146–162.

- Wen, B.; Trepte, M.; Aribido, J.; Kautz, J.; Gallo, O.; Birchfield, S. FoundationStereo: Zero-Shot Stereo Matching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025; pp. 5249–5260.

- Bartolomei, L.; Tosi, F.; Poggi, M.; Mattoccia, S. Stereo Anywhere: Robust Zero-Shot Deep Stereo Matching Even Where Either Stereo or Mono Fail. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025; pp. 1013–1027.

- Jiang, H.; Lou, Z.; Ding, L.; Xu, R.; Tan, M.; Jiang, W.; Huang, R. DEFOM-Stereo: Depth Foundation Model Based Stereo Matching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025; pp. 21857–21867.

- Cheng, J.; Liu, L.; Xu, G.; Wang, X.; Zhang, Z.; Deng, Y.; Zang, J.; Chen, Y.; Cai, Z.; Yang, X. MONSter: Marrying Monodepth to Stereo Unleashes Power. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025; pp. 6273–6282.

- Jia, M.; Tang, L.; Chen, B.C.; Cardie, C.; Belongie, S.; Hariharan, B.; Lim, S.N. Visual Prompt Tuning. In Proceedings of the European Conference on Computer Vision (ECCV), 2022; pp. 709–727.

- Shen, Z.; Song, X.; Dai, Y.; Zhou, D.; Rao, Z.; Zhang, L. Digging into Uncertainty-Based Pseudo-Labels for Robust Stereo Matching. IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI) 2023, 45, 14301–14320.

- Yang, S.; Wu, J.; Liu, J.; Li, X.; Zhang, Q.; Pan, M.; Gan, Y.; Chen, Z.; Zhang, S. Exploring Sparse Visual Prompt for Domain Adaptive Dense Prediction. In Proceedings of the AAAI Conference on Artificial Intelligence (AAAI), 2024; pp. 16334–16342.

- Guo, Y.; Yang, C.; Rao, A.; Agrawala, M.; Lin, D.; Dai, B. SparseCtrl: Adding Sparse Controls to Text-to-Video Diffusion Models. In Proceedings of the European Conference on Computer Vision (ECCV), 2024; pp. 330–348.

- Li, H.; Liu, H.; Hu, D.; Wang, J.; Oguz, I. PRISM: A Promptable and Robust Interactive Segmentation Model with Visual Prompts. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention (MICCAI), 2024; pp. 389–399.

- Huang, Z.; Yu, H.; Shentu, Y.; Yuan, J.; Zhang, G. From Sparse to Dense: Camera Relocalization with a Scene-Specific Detector from Feature Gaussian Splatting. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025; pp. 27059–27069.

- He, K.; Chen, X.; Xie, S.; Li, Y.; Dollár, P.; Girshick, R. Masked Autoencoders Are Scalable Vision Learners. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2022; pp. 16000–16009.

- Liu, B.; Yu, H.; Qi, G. GraftNet: Towards Domain Generalized Stereo Matching with a Broad-Spectrum and Task-Oriented Feature. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2022; pp. 13012–13021.

- Yang, L.; Kang, B.; Huang, Z.; Zhao, Z.; Xu, X.; Feng, J.; Zhao, H. Depth Anything V2. Advances in Neural Information Processing Systems (NeurIPS) 2025, 37, 21875–21911.

- Liu, Z.; Qiao, L.; Chu, X.; Ma, L.; Jiang, T. Towards an Efficient Foundation Model for Zero-Shot Amodal Segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025; pp. 20254–20264.

- Liang, Y.; Hu, Y.; Shao, W.; Fu, Y. Distilling Monocular Foundation Models for Fine-Grained Depth Completion. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025; pp. 22254–22265.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An Image Is Worth 16x16 Words: Transformers for Image Recognition at Scale. In Proceedings of the International Conference on Learning Representations (ICLR), 2021; pp. 1–21.

- Liu, B.; Yu, H.; Long, Y. Local Similarity Pattern and Cost Self-Reassembling for Deep Stereo Matching Networks. Proceedings of the AAAI Conference on Artificial Intelligence (AAAI) 2022, 36, 1647–1655.

- Xu, P.; Xiang, Z.; Qiao, C.; Fu, J.; Pu, T. Adaptive Multi-Modal Cross-Entropy Loss for Stereo Matching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024; pp. 5135–5144.

| t | Cre (px) | SF (px) | KT-12 (px) | KT-15 (px) | MB (px) | ET (px) |
|---|---|---|---|---|---|---|

| Backbone | Size | SF (px) | KT-12 (px) |
|---|---|---|---|
| CNN | - | 3.90 | 4.98 |
| DINO v2 | S | 3.65 | 4.84 |
| DINO v2 | L | 3.22 | 4.54 |
| DepthAnything v2 | S | 3.61 | 3.79 |
| DepthAnything v2 | L | 3.20 | 3.62 |
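The backbone comparison can be summarized as a relative change with respect to the CNN baseline. The short calculation below uses only the values reported in that table; because the table does not state which error metric it reports (only that lower is better), it computes ratios alone. The dictionary and function names are illustrative, not from the paper.

```python
"""Relative error reduction of each backbone versus the CNN baseline.

Values are copied from the backbone comparison table (lower is better).
Only ratios are computed, so the result does not depend on the metric's unit.
"""

# (backbone, size) -> (SF error, KT-12 error), copied from the table
RESULTS: dict[tuple[str, str], tuple[float, float]] = {
    ("CNN", "-"): (3.90, 4.98),
    ("DINO v2", "S"): (3.65, 4.84),
    ("DINO v2", "L"): (3.22, 4.54),
    ("DepthAnything v2", "S"): (3.61, 3.79),
    ("DepthAnything v2", "L"): (3.20, 3.62),
}

BASELINE = ("CNN", "-")


def relative_reduction(baseline: float, value: float) -> float:
    """Percentage by which `value` is lower than `baseline` (positive means better)."""
    return 100.0 * (baseline - value) / baseline


def reductions_vs_cnn(
    results: dict[tuple[str, str], tuple[float, float]],
) -> dict[tuple[str, str], tuple[float, float]]:
    """Return (SF, KT-12) relative reductions for every non-baseline backbone."""
    base_sf, base_kt = results[BASELINE]
    return {
        key: (relative_reduction(base_sf, sf), relative_reduction(base_kt, kt))
        for key, (sf, kt) in results.items()
        if key != BASELINE
    }


if __name__ == "__main__":
    for (name, size), (sf, kt) in reductions_vs_cnn(RESULTS).items():
        print(f"{name} ({size}): SF {sf:.1f}% lower, KT-12 {kt:.1f}% lower")
```

Under this calculation, the two large backbones reduce the SF error by a similar margin (about 17% for DINO v2 (L) and 18% for DepthAnything v2 (L)), whereas on KT-12 DepthAnything v2 (L) reduces the error by about 27% against about 9% for DINO v2 (L).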

| Method | KT-12 (px) | KT-15 (px) | MB (px) | ET (px) |
|---|---|---|---|---|
| HVT-RAFT | | | | |
| NMRF | | | | |
| Selective-IGEV | | | | |
| Former-RAFT-DAM | | | | |
| DEFOM-Stereo | | | | |
| CST-IGEV | | | | |
| SSPGNet | | | | |

| Method | KT-12 (px) | KT-15 (px) | MB (px) | ET (px) |
|---|---|---|---|---|
| PSMNet | | | | |
| GANet | | | | |
| DSMNet | | | | |
| GF-PSMNet | | | | |
| LacGwcNet | | | | |
| CFNet | | | | |
| Mask-CFNet | | | | |
| SSPGNet | | | | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).