1. Introduction

Establishing dense spatial correspondences between volumetric medical images is central to many clinical and research workflows, from longitudinal brain atrophy monitoring to atlas-based segmentation propagation [1, 2]. Deformable registration seeks a dense displacement field that warps one volume onto another while respecting anatomical plausibility, which imposes two competing demands: the field must be flexible enough to capture inter-subject variability yet smooth enough to avoid topological violations such as tissue folding [3, 4].
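To make the notion of tissue folding concrete, the following minimal sketch counts grid cells where the Jacobian determinant of the mapping x + u(x) is non-positive, which is the standard indicator of a local fold. It is a 2D illustration only, using forward differences on nested lists; the function name `count_folded_cells` and the data layout are assumptions made for this example and are not taken from any method discussed here.

```python
def count_folded_cells(ux, uy):
    """Count cells where det(J) <= 0 for the mapping phi(x) = x + u(x).

    ux[y][x] and uy[y][x] are the displacement components in voxel units
    (hypothetical layout chosen for this sketch). Forward differences are
    used, so the last row and column are skipped.
    """
    rows, cols = len(ux), len(ux[0])
    folded = 0
    for y in range(rows - 1):
        for x in range(cols - 1):
            # Spatial derivatives of the displacement field.
            dux_dx = ux[y][x + 1] - ux[y][x]
            dux_dy = ux[y + 1][x] - ux[y][x]
            duy_dx = uy[y][x + 1] - uy[y][x]
            duy_dy = uy[y + 1][x] - uy[y][x]
            # Jacobian of phi is the identity plus the gradient of u.
            det = (1.0 + dux_dx) * (1.0 + duy_dy) - dux_dy * duy_dx
            if det <= 0.0:
                folded += 1
    return folded
```

A zero displacement field yields no folded cells, whereas a field that reverses the order of neighbouring voxels along one axis yields a negative determinant in every cell.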

Classical iterative optimization methods provide strong diffeomorphic guarantees but are prohibitively slow for large-scale studies. Convolutional neural networks (CNNs) address this bottleneck by predicting displacement fields in a single forward pass [5, 6]. VoxelMorph [6] demonstrated that training with image similarity and smoothness objectives alone, without segmentation supervision, can yield competitive accuracy. Nonetheless, the limited effective receptive field of purely convolutional encoders constrains their capacity to resolve large or spatially heterogeneous deformations [7].

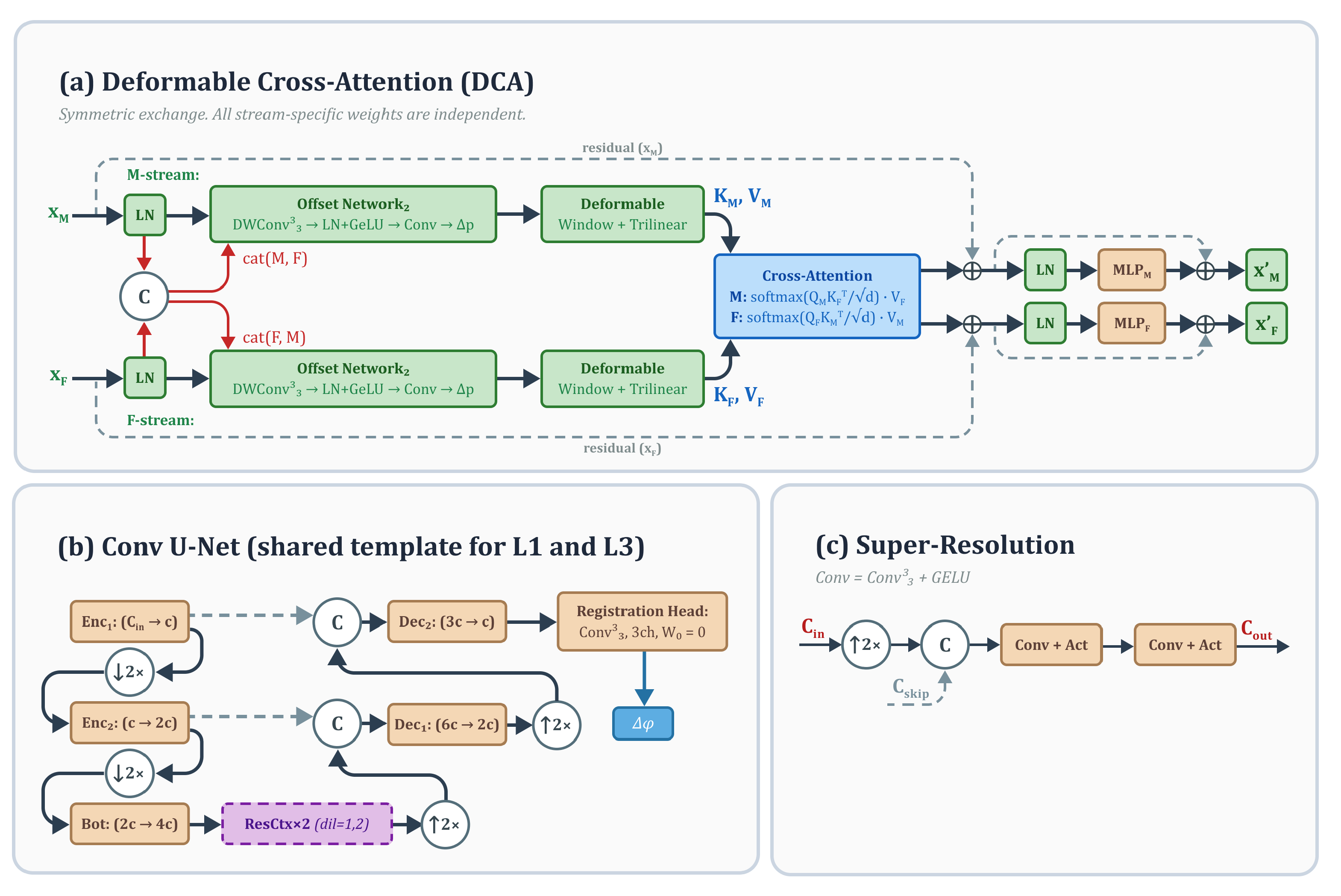

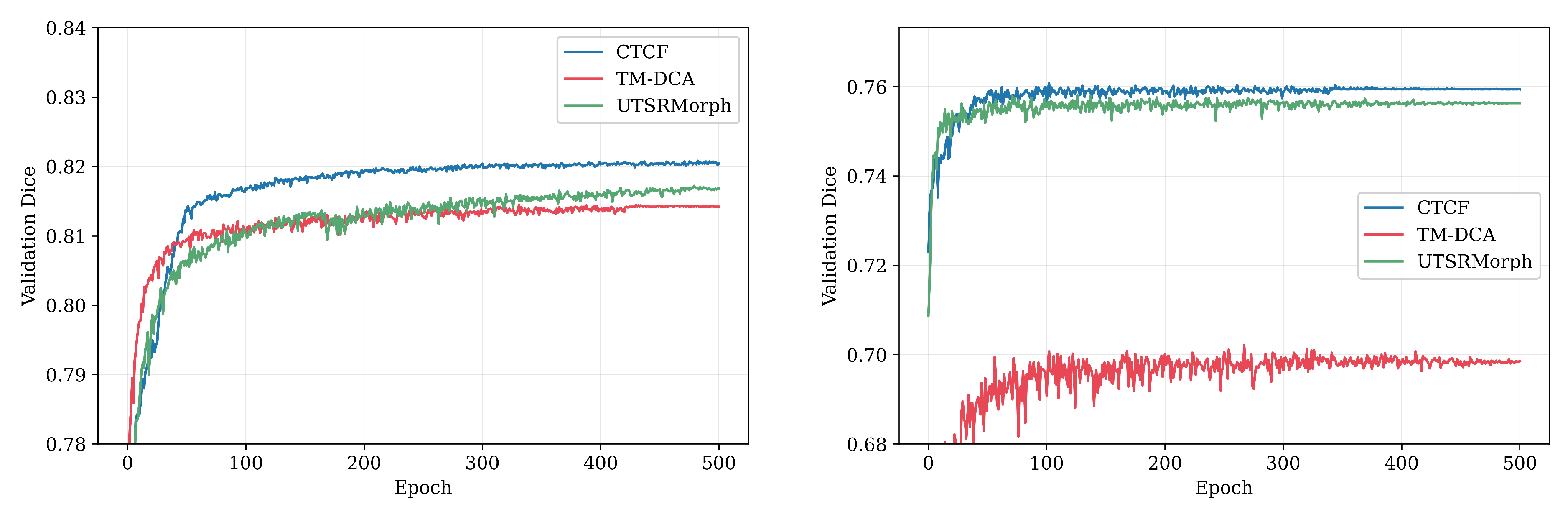

Vision transformers overcome this limitation through self-attention over patch tokens. TransMorph [7] paired a Swin Transformer encoder with a convolutional decoder and achieved substantial accuracy gains on brain MRI benchmarks. TransMorph-DCA [8] further replaced window-based self-attention with deformable cross-attention (DCA), allowing each encoder to selectively sample anatomically relevant tokens from the opposite image stream through learned offsets. This sparse adaptive sampling improves robustness to large deformations while keeping attention complexity comparable to the standard windowed variant. However, both methods still rely on convolutional decoders with interpolation-based upsampling, which may introduce aliasing artifacts and limit the spatial fidelity of the recovered displacement field.

An alternative line of work addresses the decoder bottleneck. UTSRMorph [9] recast displacement field reconstruction as a super-resolution (SR) problem, replacing trilinear interpolation with learned upsampling modules that progressively reconstruct high-resolution fields from coarse feature maps. The resulting deformation fields exhibit improved smoothness and spatial detail. Yet the encoder of UTSRMorph uses overlapping window self-attention rather than deformable cross-attention, which may limit the capacity for capturing sparse long-range correspondences between distant anatomical structures.

These two advances—deformable cross-attention and learned super-resolution—address complementary bottlenecks but remain single-pass architectures that must resolve global alignment and local detail simultaneously.

Coarse-to-fine decomposition has a long history in registration. Classical methods such as SyN [3] perform multi-resolution optimization, solving for large-scale deformations on downsampled grids before refining at finer scales. In the learning-based setting, Zhao et al. [10] proposed recursive cascaded networks that stack identical registration subnetworks, each refining the previous stage’s output, demonstrating that cascading improves accuracy on brain MRI. Mok and Chung [11] introduced LapIRN, which combines a Laplacian pyramid decomposition with multi-resolution registration to capture deformations at different spatial scales. However, both approaches replicate the same architecture at each level, resulting in proportional parameter growth with each added stage.

While these cascaded approaches demonstrate the value of multi-resolution decomposition, they share two limitations. First, they replicate a uniform architecture at every level, so each added stage incurs the full parameter and compute cost of the base network. Second, the refinement signal is implicit: later stages simply re-register warped images without an explicit measure of where the current alignment is deficient. Analogous benefits of stage-wise decomposition have been observed in other medical imaging tasks: for example, Nefediev et al. [12] reported that a cascaded pipeline combining prostate localization and subsequent segmentation improved prostate cancer segmentation on T2-weighted MRI, supporting the view that explicit stage decomposition is beneficial when a model must capture both global context and precise local boundaries. Additionally, topology-preserving constraints (inverse consistency, Jacobian penalties) are typically applied as auxiliary losses but are not tightly coupled with architectural design.

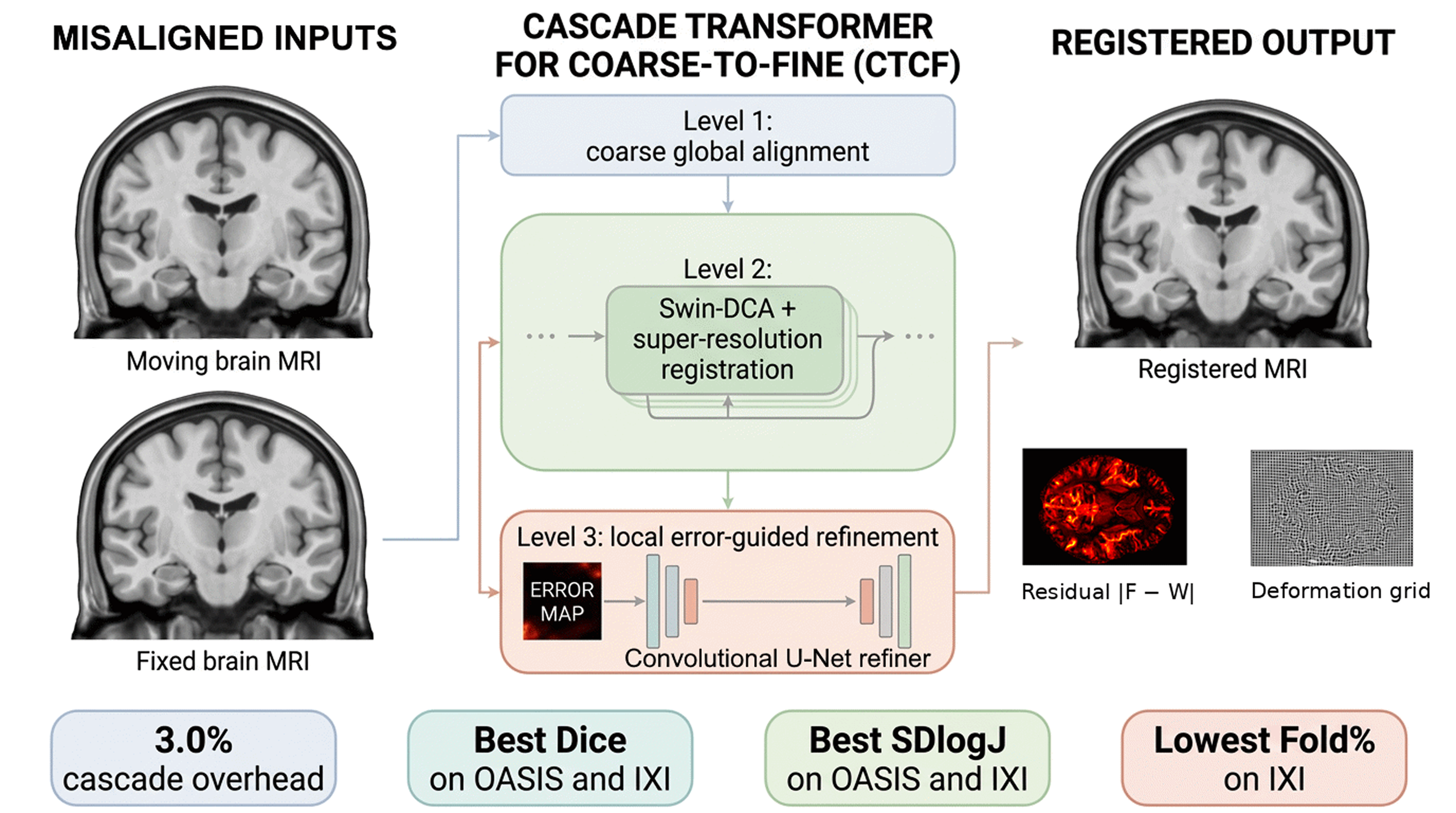

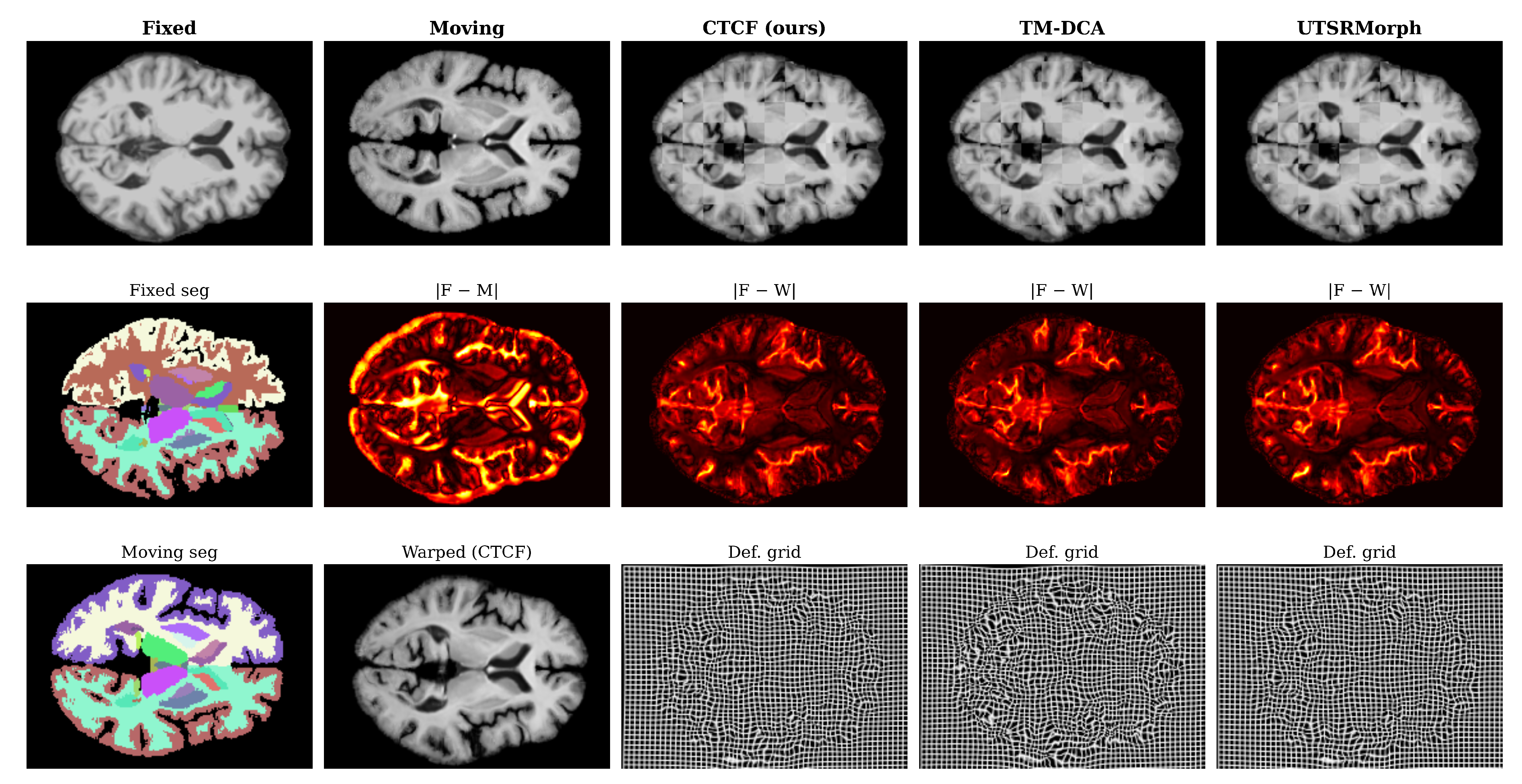

In this work, we propose CTCF (Cascade Transformer for Coarse-to-Fine registration), a three-level cascade framework that jointly addresses correspondence modeling, high-resolution deformation reconstruction, and topology-preserving regularization. Unlike single-pass methods, CTCF explicitly decomposes registration into three stages:

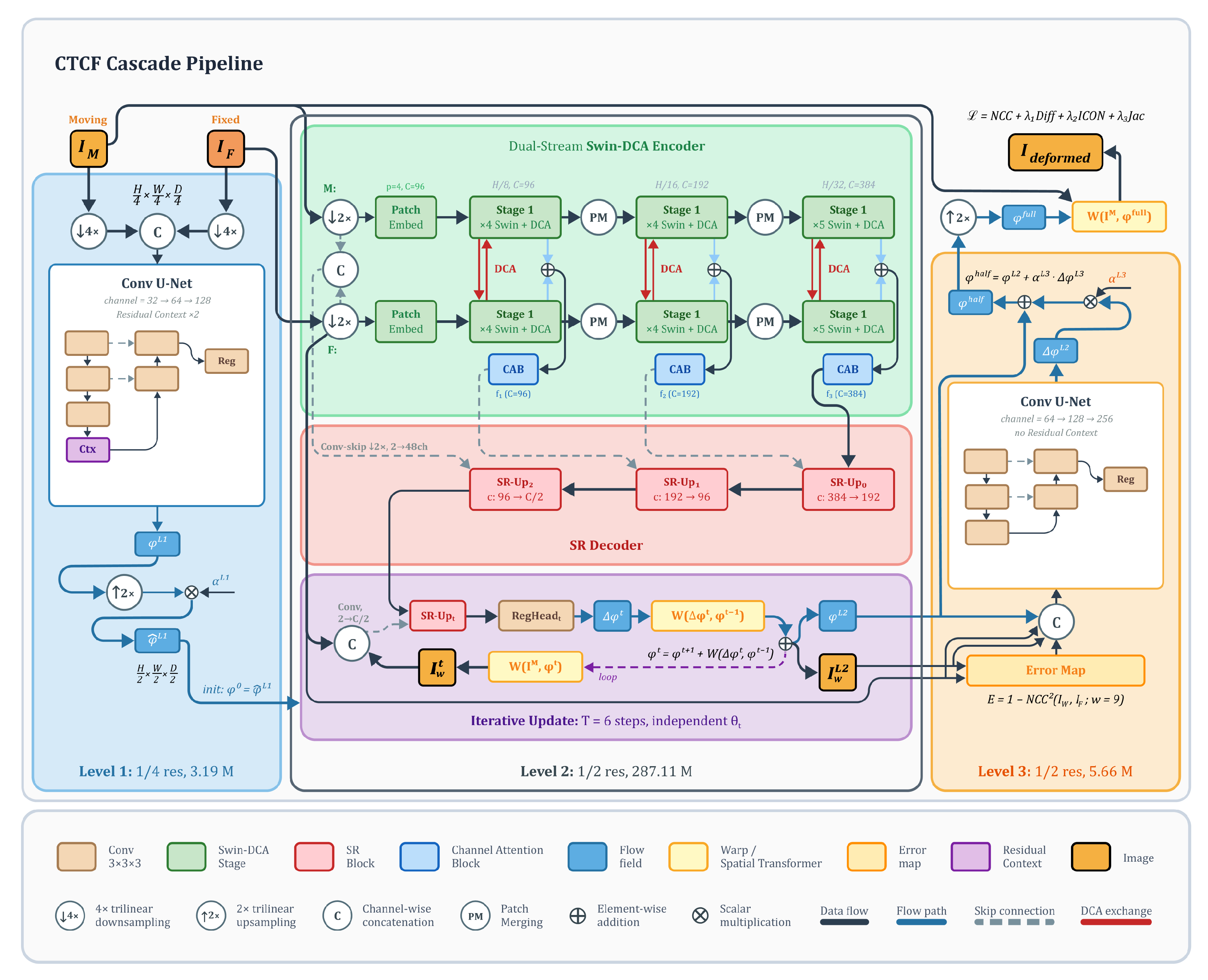

Level 1: A lightweight convolutional network at quarter resolution that predicts a coarse displacement field, providing a global initialization for subsequent stages.

Level 2: A Swin Transformer encoder with deformable cross-attention and a super-resolution decoder at half resolution, performing the main registration with iterative flow integration.

Level 3: An error-driven convolutional refiner at half resolution that detects residual misalignment using local NCC error maps and predicts a corrective displacement update.
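The idea of a local NCC error map, as used by Level 3, can be illustrated with a minimal 1D sketch. The version below computes a windowed normalized cross-correlation between a fixed signal and a warped moving signal and reports 1 − NCC at each position. The window radius, the 1 − NCC form of the error, the handling of textureless windows, and the function name `local_ncc_error` are all assumptions made for illustration; the formulation actually used in CTCF operates on 3D volumes and may differ.

```python
import math


def local_ncc_error(fixed, warped, radius=2, eps=1e-8):
    """Per-sample error 1 - NCC over a sliding window (1D illustration).

    Values near 0 indicate good local agreement; values near 2 indicate
    anti-correlated intensities. Windows are truncated at the borders.
    """
    if len(fixed) != len(warped):
        raise ValueError("signals must have the same length")
    n = len(fixed)
    errors = []
    for i in range(n):
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        f, w = fixed[lo:hi], warped[lo:hi]
        mean_f = sum(f) / len(f)
        mean_w = sum(w) / len(w)
        cov = sum((a - mean_f) * (b - mean_w) for a, b in zip(f, w))
        var_f = sum((a - mean_f) ** 2 for a in f)
        var_w = sum((b - mean_w) ** 2 for b in w)
        denom = math.sqrt(var_f * var_w)
        if denom < eps:
            # Assumption: a window with no intensity variation carries no
            # alignment evidence, so it is assigned zero error.
            errors.append(0.0)
        else:
            errors.append(1.0 - cov / denom)
    return errors
```

In a refiner of this kind, such a map would presumably be supplied alongside the images so that the corrective update concentrates on regions where the error is high.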

A smoothstep cascade warmup schedule progressively activates Level 1 and Level 3 during training, enabling stable optimization of the full cascade. The model is trained end-to-end with a composite unsupervised loss that enforces image similarity, deformation smoothness, inverse consistency, and topology preservation—no segmentation labels are used during training.
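A smoothstep warmup of this kind can be sketched as follows. The cubic 3t² − 2t³ is the standard smoothstep polynomial; the parameters `start_epoch` and `ramp_epochs`, the function name `cascade_weight`, and the per-epoch granularity are hypothetical choices for this example, since the exact schedule and how the resulting weight is applied to Levels 1 and 3 are not specified here.

```python
def smoothstep(t: float) -> float:
    """Cubic smoothstep 3t^2 - 2t^3 with the input clamped to [0, 1]."""
    t = min(1.0, max(0.0, t))
    return t * t * (3.0 - 2.0 * t)


def cascade_weight(epoch: int, start_epoch: int, ramp_epochs: int) -> float:
    """Hypothetical activation weight for one cascade level.

    Returns 0 before start_epoch, rises smoothly over ramp_epochs,
    and stays at 1 afterwards.
    """
    if ramp_epochs <= 0:
        # Degenerate case: switch the level on abruptly.
        return 1.0 if epoch >= start_epoch else 0.0
    return smoothstep((epoch - start_epoch) / ramp_epochs)
```

Because the derivative of the smoothstep is zero at both ends of the ramp, the contribution of a newly activated level changes gradually at the start and at the end of the warmup, which is one plausible reason for preferring it over a linear ramp.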

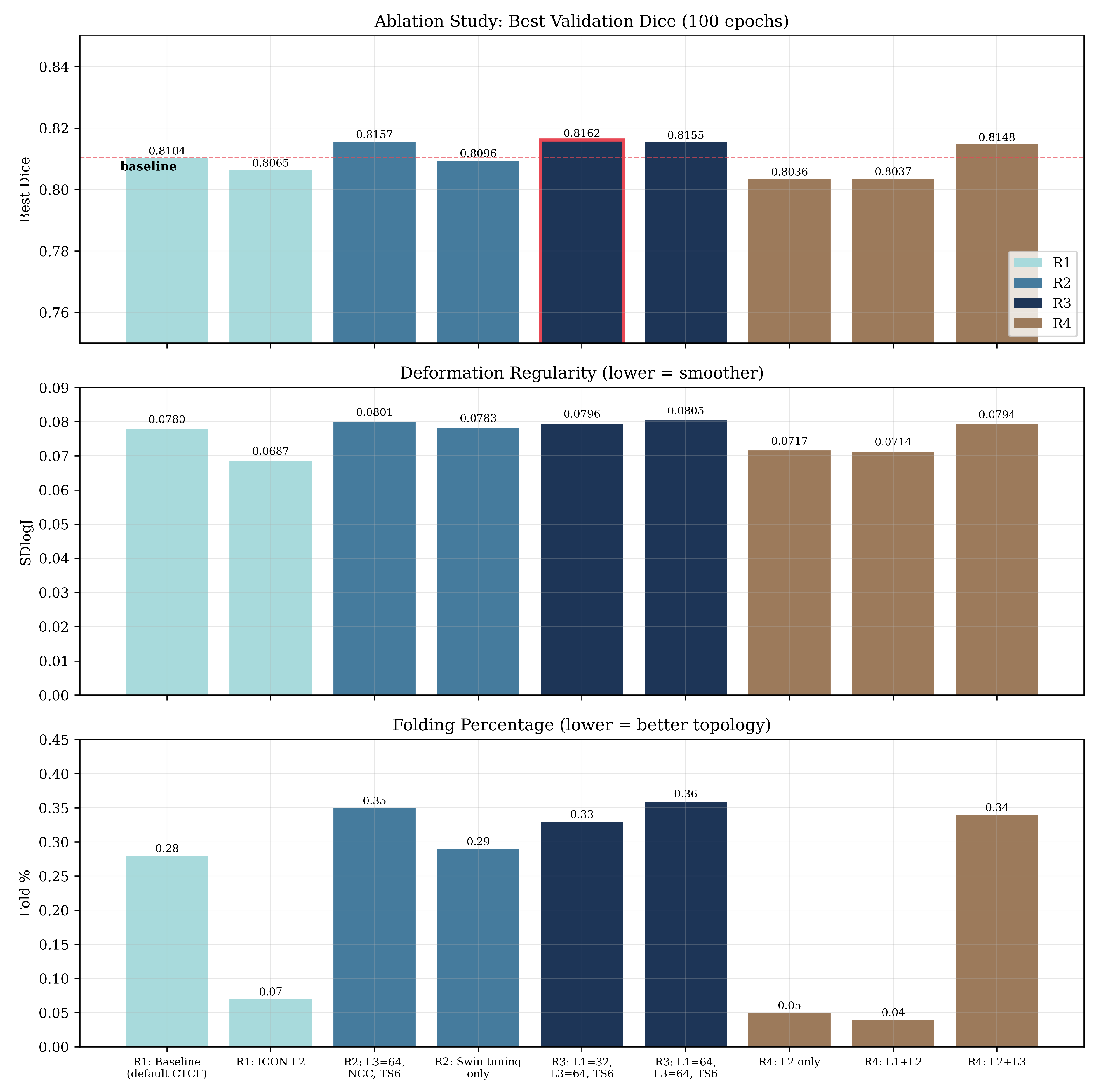

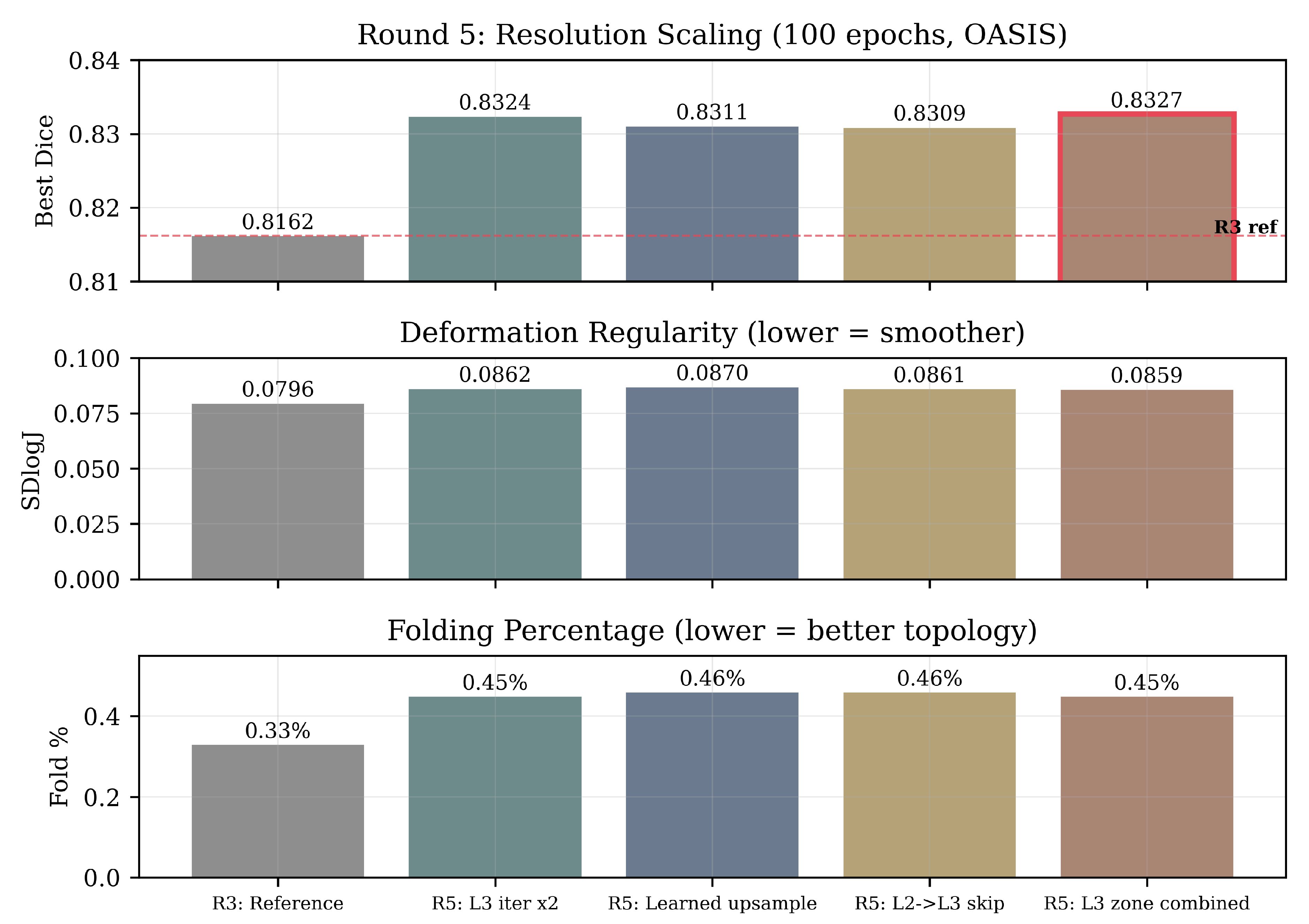

In our preliminary work [13], we presented an early version of CTCF with a single-stage refinement loop on OASIS. The present study extends this with an explicit three-level cascade, a stronger regularization strategy (inverse consistency + Jacobian penalty replacing cycle consistency), evaluation on both OASIS and IXI, and a five-round ablation study including cascade decomposition and resolution scaling.

The main contributions are:

1. A modular coarse-to-fine cascade (Levels 1 and 3) that adds only 3.0% parameter overhead to a Swin-DCA encoder with a learned super-resolution decoder. The cascade envelope is architecturally separable from the core module and, in principle, portable to alternative backbones.

2. An error-driven flow refinement module (Level 3) that uses per-voxel local NCC error maps to detect and correct residual misalignment, validated against two alternative error formulations.