Empirical Results: ENS 2016–2017

Using data from the Chilean National Health Survey (ENS) 2016–2017 and explicitly accounting for its complex multistage sampling design, we estimated survey-weighted prevalence figures and regression models for chronic kidney disease (CKD) and high cardiovascular (CV) risk in the adult population. CKD was defined as an estimated glomerular filtration rate below 60 mL/min/1.73 m², while high cardiovascular risk was defined as the highest risk category (category 3) of the Chilean-adapted Framingham 10-year cardiovascular risk score.

The analytic sample comprised adults, including approximately individuals with CKD events. The survey-weighted prevalence of CKD was 3.1% (95% CI: 2.4–3.8), and the prevalence of high cardiovascular risk was 23.9% (95% CI: 21.5–26.3).

All variance estimates fully accounted for the complex survey design, including stratification, clustering, unequal sampling weights, and strata containing a single primary sampling unit, which were handled using conservative variance adjustments.
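As an illustration of the design-based estimation described above, the following minimal sketch computes a weighted prevalence and a Taylor-linearized standard error from stratum, PSU, and weight information. It is not the code used for the reported estimates. The function name `weighted_prevalence`, the toy records, and the specific single-PSU rule (centring the lone PSU at the grand mean instead of letting its stratum contribute zero variance) are assumptions chosen for illustration; the text only states that a conservative adjustment was used.

```python
from collections import defaultdict
from math import sqrt


def weighted_prevalence(records):
    """Weighted prevalence and linearized SE for a stratified cluster sample.

    records: iterable of (stratum, psu, weight, y) with y in {0, 1}.
    PSUs are treated as sampled with replacement within strata.
    """
    records = list(records)
    total_w = sum(w for _, _, w, _ in records)
    p_hat = sum(w * y for _, _, w, y in records) / total_w

    # Linearized contribution of each PSU to the ratio estimator.
    psu_totals = defaultdict(lambda: defaultdict(float))
    for stratum, psu, w, y in records:
        psu_totals[stratum][psu] += w * (y - p_hat) / total_w

    all_z = [z for psus in psu_totals.values() for z in psus.values()]
    grand_mean = sum(all_z) / len(all_z)

    variance = 0.0
    for psus in psu_totals.values():
        z = list(psus.values())
        n_h = len(z)
        if n_h > 1:
            mean_h = sum(z) / n_h
            variance += n_h / (n_h - 1) * sum((v - mean_h) ** 2 for v in z)
        else:
            # Lone PSU (assumed rule): centre at the grand mean across all
            # PSUs so the stratum still contributes to the variance.
            variance += (z[0] - grand_mean) ** 2
    return p_hat, sqrt(variance)


# Toy data: stratum "A" has two PSUs, stratum "B" has a single PSU.
toy = [
    ("A", 1, 1.0, 1), ("A", 1, 1.0, 0),
    ("A", 2, 1.0, 0), ("A", 2, 1.0, 0),
    ("B", 3, 2.0, 1), ("B", 3, 2.0, 0),
]
p_hat, se = weighted_prevalence(toy)
```

Dropping single-PSU strata or assigning them zero variance would understate uncertainty, which is why an adjustment of this kind is described as conservative.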

Table 5 presents survey-weighted baseline characteristics stratified by CKD status. Individuals with CKD were substantially older than those without CKD, with a mean age of 74.8 years compared to 42.3 years in the non-CKD group. The proportion of women was similar across groups (50.9% vs. 51.6%), and mean body mass index (BMI) did not differ significantly despite the large age gap (28.9 vs. 28.5 kg/m²).

In contrast, cardiometabolic conditions were markedly more prevalent among individuals with CKD. Hypertension affected 86.7% of participants with CKD compared to 25.5% among those without CKD, while diabetes prevalence was nearly three times higher in the CKD group (31.1% vs. 11.2%). Most notably, the prevalence of high cardiovascular risk exceeded 90% among individuals with CKD, compared to 21.5% in the non-CKD population.

Taken together, these findings reveal a pronounced clustering of cardiovascular risk factors and predicted cardiovascular risk among individuals with CKD in the Chilean adult population, providing a strong empirical motivation for the multivariable and regularized modeling strategies developed in the subsequent analyses.

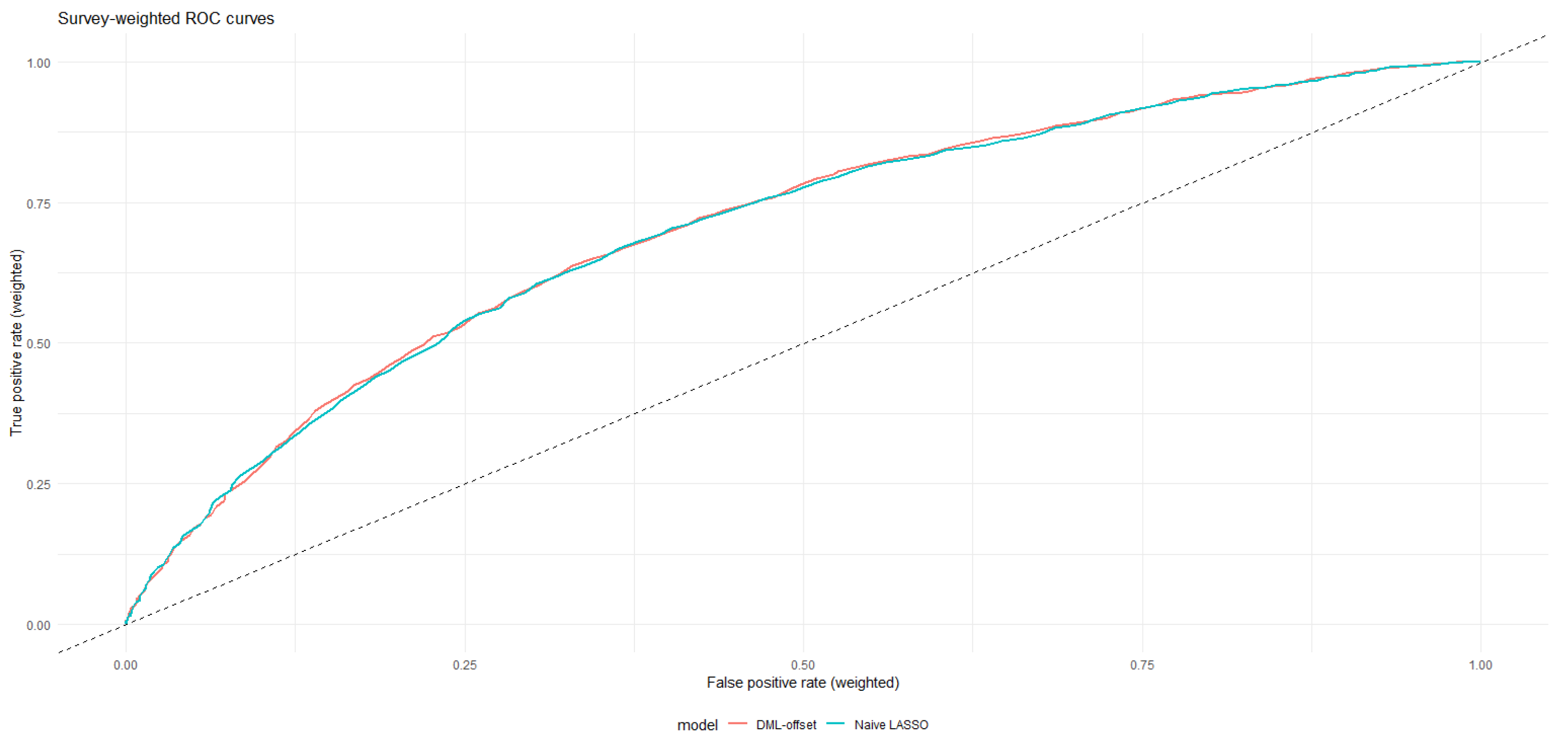

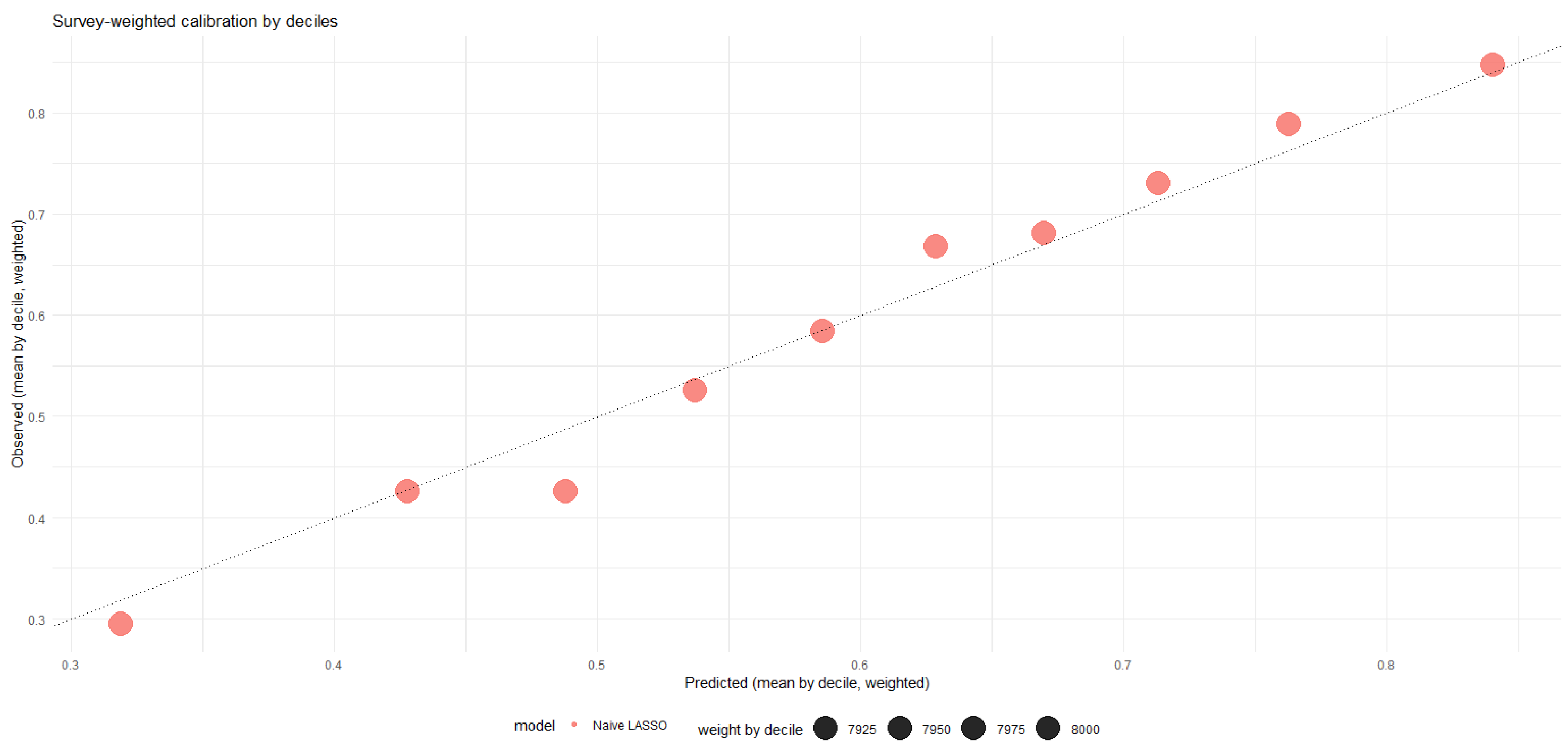

Naive survey-weighted model

As an initial benchmark, we estimated a survey-weighted logistic regression model treating CKD status as exogenous. The model adjusted for age, sex, hypertension, diabetes, and body mass index, while fully accounting for the complex survey design.

In this naive specification, CKD was strongly associated with high cardiovascular risk. Individuals with CKD exhibited substantially higher odds of being classified as high cardiovascular risk compared to those without CKD (OR = 5.66; 95% CI: 2.71–11.82). Age and diabetes emerged as the strongest predictors, while hypertension also showed a positive and statistically significant association. Sex and body mass index were not independently associated with high cardiovascular risk in this model.
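For readers who want to move between the odds-ratio scale and the log-odds scale, the short sketch below backs out the coefficient, its standard error, and the Wald statistic from a reported odds ratio and confidence interval. It assumes a symmetric Wald interval on the log scale with a normal critical value of 1.96; design-based intervals may instead use a t reference distribution with design degrees of freedom, in which case the recovered standard error is only approximate. The helper name `wald_from_or` is hypothetical.

```python
from math import log


def wald_from_or(or_hat, ci_low, ci_high, z_crit=1.96):
    """Recover the log-odds coefficient, its SE, and the Wald z statistic."""
    beta = log(or_hat)
    # A Wald interval is symmetric on the log scale: width = 2 * z_crit * SE.
    se = (log(ci_high) - log(ci_low)) / (2.0 * z_crit)
    return beta, se, beta / se


# CKD odds ratio from the naive survey-weighted model.
beta_ckd, se_ckd, z_ckd = wald_from_or(5.66, 2.71, 11.82)
```

Under these assumptions the CKD coefficient is roughly 1.73 on the log-odds scale with a standard error of about 0.38, so the interval is wide even though the association is clearly distinguishable from zero.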

While these results indicate a robust association between CKD and predicted cardiovascular risk, the naive specification does not address potential overfitting or multicollinearity among cardiometabolic covariates. These limitations motivate the use of regularized variable selection techniques in the subsequent analysis.

Regularized variable selection via LASSO

Under the conservative criterion, LASSO selected a compact and clinically interpretable set of predictors, including chronic kidney disease (CKD), age, hypertension, and diabetes. This subset captures the core cardiometabolic pathways linking renal dysfunction and cardiovascular risk.

Using the more permissive criterion, additional variables entered the model, including body mass index and selected metabolic and laboratory indicators, as well as regional fixed effects. While this specification improved in-sample fit, it did so at the cost of increased model complexity.

Table 6 reports the survey-weighted logistic regression results re-estimated using the variables selected by each LASSO rule. Across both specifications, CKD remained a strong and statistically significant predictor of high cardiovascular risk. In the model selected under the conservative criterion, individuals with CKD exhibited more than fivefold higher odds of being classified as high cardiovascular risk compared to those without CKD (OR = 5.73; 95% CI: 2.80–11.73). Nearly identical effect sizes were observed under the more permissive specification (OR = 5.62; 95% CI: 2.72–11.60), indicating substantial robustness of the CKD association to alternative model selection criteria.

Age and diabetes consistently emerged as the strongest predictors in both models, while hypertension retained a moderate but statistically significant association. Importantly, the sign and magnitude of the CKD coefficient were stable across specifications, and all shared covariates exhibited identical coefficient signs, underscoring the structural robustness of the estimated relationships.

Model performance metrics were similar across the two specifications. The McFadden pseudo-R² was 0.445 for the model selected under the conservative criterion and 0.449 for the model selected under the more permissive criterion, suggesting that the additional covariates selected under the latter provided only marginal improvements in explanatory power.
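McFadden's measure compares the log-likelihood of the fitted model with that of an intercept-only model, R² = 1 − ln L(model) / ln L(null). The sketch below shows the calculation with sampling weights, in which case both terms are weighted pseudo-log-likelihoods. Whether the reported values were computed in exactly this way is an assumption, and the function names and toy inputs are illustrative only.

```python
from math import log


def weighted_loglik(y, p, w):
    """Weighted Bernoulli (pseudo-)log-likelihood for outcomes y and fitted p."""
    return sum(
        wi * (yi * log(pi) + (1 - yi) * log(1.0 - pi))
        for yi, pi, wi in zip(y, p, w)
    )


def mcfadden_r2(y, p, w):
    """McFadden pseudo-R2: 1 - lnL(model) / lnL(intercept-only)."""
    p_null = sum(wi * yi for yi, wi in zip(y, w)) / sum(w)
    ll_model = weighted_loglik(y, p, w)
    ll_null = weighted_loglik(y, [p_null] * len(y), w)
    return 1.0 - ll_model / ll_null


# Toy example: informative predictions versus the weighted mean.
y = [1, 0, 1, 0]
w = [1.0, 2.0, 1.0, 2.0]
r2_informative = mcfadden_r2(y, [0.9, 0.1, 0.8, 0.2], w)
r2_null = mcfadden_r2(y, [1 / 3] * 4, w)
```

A model that predicts only the weighted outcome mean scores zero, and the measure approaches one as fitted probabilities approach the observed outcomes.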

Overall, these results support the use of the parsimonious specification as the primary empirical model, while the more permissive specification serves as a sensitivity analysis confirming the stability of the CKD effect.

In the empirical application, LASSO is used exclusively as a data-driven variable screening step. All reported odds ratios, confidence intervals, and causal estimates are obtained from unpenalized survey-weighted models refit on the covariate sets selected under the conservative criterion (main specification) and the more permissive criterion (sensitivity analysis).
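The screen-then-refit logic can be sketched as follows: an L1-penalized weighted logistic regression picks the covariates with nonzero coefficients, and an unpenalized model is then refit on that subset so that the reported coefficients carry no shrinkage. This is a minimal illustration on synthetic data, not the estimation code behind Table 6. The proximal-gradient solver, the fixed penalty `lam = 0.05`, and all function names are assumptions; in practice the penalty would be chosen by cross-validation and standard errors would come from the survey design.

```python
import random
from math import exp


def expit(t):
    return 1.0 / (1.0 + exp(-t))


def fit_logit_l1(X, y, w, lam, n_iter=200):
    """Weighted logistic regression with an L1 penalty on the slopes,
    fitted by proximal gradient descent. lam=0 gives an unpenalized fit.
    X holds covariate rows without an intercept; returns [intercept, slopes...].
    """
    k = len(X[0])
    sw = sum(w)
    # Step size from a trace bound on the Lipschitz constant of the gradient.
    lip = 0.25 * (1.0 + sum(wi * v * v for xi, wi in zip(X, w) for v in xi) / sw)
    step = 1.0 / lip
    beta = [0.0] * (k + 1)
    for _ in range(n_iter):
        slopes = beta[1:]
        grad = [0.0] * (k + 1)
        for xi, yi, wi in zip(X, y, w):
            eta = beta[0] + sum(b * v for b, v in zip(slopes, xi))
            r = wi * (expit(eta) - yi) / sw
            grad[0] += r
            for j, v in enumerate(xi):
                grad[j + 1] += r * v
        beta[0] -= step * grad[0]  # the intercept is not penalized
        for j in range(1, k + 1):
            u = beta[j] - step * grad[j]
            # Soft-thresholding sets small coefficients exactly to zero.
            beta[j] = max(abs(u) - step * lam, 0.0) * (1.0 if u > 0 else -1.0)
    return beta


def screen_then_refit(X, y, w, lam):
    """Select covariates with the penalized fit, then refit without penalty."""
    penalized = fit_logit_l1(X, y, w, lam)
    selected = [j for j in range(len(X[0])) if penalized[j + 1] != 0.0]
    X_sel = [[xi[j] for j in selected] for xi in X]
    return selected, fit_logit_l1(X_sel, y, w, lam=0.0)


# Synthetic data: columns 0 and 1 matter, columns 2 and 3 are pure noise.
rng = random.Random(0)
n = 2000
X = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(n)]
w = [rng.uniform(0.5, 2.0) for _ in range(n)]
y = [1 if rng.random() < expit(1.5 * xi[0] - 1.0 * xi[1]) else 0 for xi in X]
selected, refit = screen_then_refit(X, y, w, lam=0.05)
```

Refitting without the penalty removes the shrinkage bias in the selected coefficients, but inference after data-driven selection remains conditional on the selected set.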

Both naive and LASSO-refitted survey-weighted models yield similar odds ratios, indicating a strong associational relationship between CKD and high cardiovascular risk. These models are interpreted as predictive or associational and do not admit a causal interpretation. See Table 7.

Endogeneity assessment via two-stage residual inclusion

To explore potential endogeneity between chronic kidney disease (CKD) status and cardiovascular risk, we implemented a two-stage residual inclusion (2SRI) approach adapted to the complex ENS sampling design. In this framework, CKD status was treated as potentially endogenous.

In the first stage, CKD was modeled as a function of age, sex, hypertension, and diabetes, which act as clinically motivated predictors of kidney dysfunction and are strongly related to CKD prevalence. The first-stage model was estimated using survey-weighted logistic regression without penalization. First-stage relevance was assessed using design-based Wald tests that account for stratification, clustering, and survey weights. Coefficient estimates, standard errors, effective sample size, and joint relevance diagnostics are reported in Table S1.

The resulting first-stage residuals were then included as a control function in the second-stage outcome model. In this specification, the residual term was not statistically significant, providing no strong evidence of residual endogeneity after conditioning on observed covariates.
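The mechanics of the two stages can be sketched as below: a weighted logistic first stage for the exposure, raw residuals from that fit, and a weighted logistic outcome model that includes both the exposure and the residual. This is a simplified illustration on synthetic data and returns point estimates only; it does not reproduce the design-based standard errors or the exact covariate sets used in the analysis. The synthetic example gives the first stage one extra variable, `z_excl`, which is purely hypothetical (the text lists only age, sex, hypertension, and diabetes as first-stage predictors). All function names are likewise assumptions.

```python
import random
from math import exp


def expit(t):
    return 1.0 / (1.0 + exp(-t))


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n + 1):
                m[r][j] -= f * m[c][j]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][j] * x[j] for j in range(r + 1, n))) / m[r][r]
    return x


def fit_logit(X, y, w, n_iter=25):
    """Weighted logistic regression by Newton-Raphson; rows of X include the intercept."""
    k = len(X[0])
    beta = [0.0] * k
    for _ in range(n_iter):
        grad = [0.0] * k
        hess = [[0.0] * k for _ in range(k)]
        for xi, yi, wi in zip(X, y, w):
            p = expit(sum(b * v for b, v in zip(beta, xi)))
            for a in range(k):
                grad[a] += wi * (yi - p) * xi[a]
                for c in range(k):
                    hess[a][c] += wi * p * (1.0 - p) * xi[a] * xi[c]
        beta = [b + s for b, s in zip(beta, solve(hess, grad))]
    return beta


def two_stage_residual_inclusion(X1, X2, d, y, w):
    """X1: first-stage rows, X2: outcome covariate rows (both with intercept)."""
    gamma = fit_logit(X1, d, w)
    resid = [di - expit(sum(g * v for g, v in zip(gamma, xi))) for xi, di in zip(X1, d)]
    # Second stage: outcome covariates, then the exposure, then the residual.
    design = [xi + [di, ri] for xi, di, ri in zip(X2, d, resid)]
    return fit_logit(design, y, w), resid


# Synthetic data with an unobserved confounder u.
rng = random.Random(1)
n = 1500
age = [rng.gauss(0, 1) for _ in range(n)]
z_excl = [rng.gauss(0, 1) for _ in range(n)]  # hypothetical first-stage-only variable
u = [rng.gauss(0, 1) for _ in range(n)]
w = [rng.uniform(0.5, 2.0) for _ in range(n)]
d = [1 if rng.random() < expit(-1.0 + 0.5 * a + z + 0.8 * c) else 0
     for a, z, c in zip(age, z_excl, u)]
y = [1 if rng.random() < expit(-0.5 + 0.7 * a + t + 0.8 * c) else 0
     for a, t, c in zip(age, d, u)]
X1 = [[1.0, a, z] for a, z in zip(age, z_excl)]
X2 = [[1.0, a] for a in age]
beta, resid = two_stage_residual_inclusion(X1, X2, d, y, w)
```

In this layout `beta[2]` is the exposure coefficient and `beta[3]` the residual coefficient; a test of the latter is the usual endogeneity check. When the first and second stages share the same covariates, the residual is distinguished from the exposure only through the nonlinearity of the first stage, which is one route to unstable second-stage estimates.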

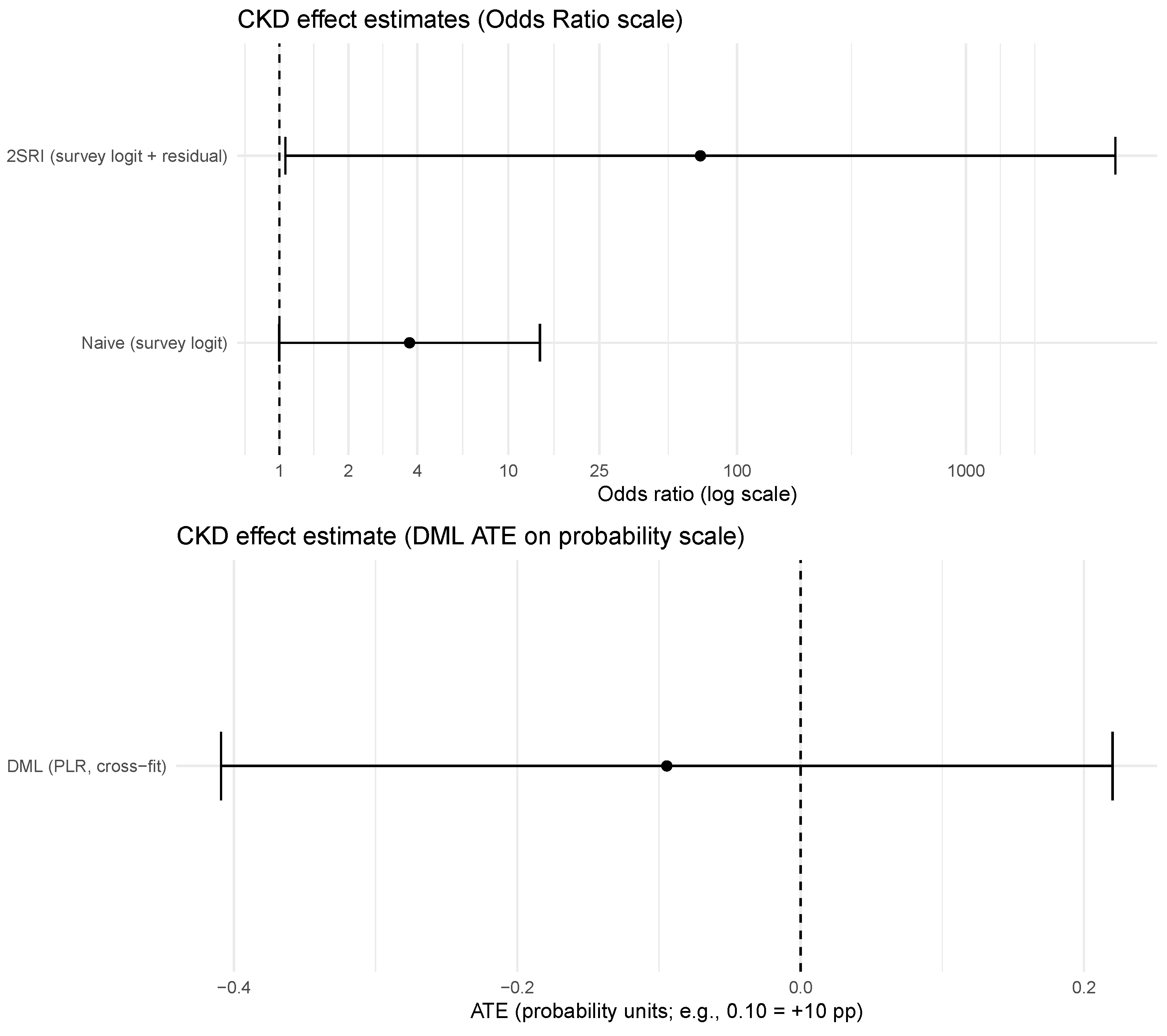

The estimated effect of CKD increased markedly in magnitude (OR = 69.1), but with a very wide confidence interval (95% CI: 1.06–4502.7), reflecting numerical instability due to limited overlap and the small effective sample size of individuals with CKD. These results indicate that conventional control-function approaches may be unreliable in this setting and motivate the use of orthogonalized machine learning estimators as the primary causal strategy.

Diagnostics for extreme odds ratios.

The extreme odds ratio observed in the 2SRI specification reflects sparse-data issues and limited overlap between CKD and non-CKD groups. The number of CKD events per covariate was below conventional thresholds, and quasi-separation was detected in the second-stage model. Mean variance inflation factors were below 5, indicating limited multicollinearity. Penalized likelihood corrections (e.g., Firth) were not applied, as the 2SRI results are reported solely for diagnostic comparison.

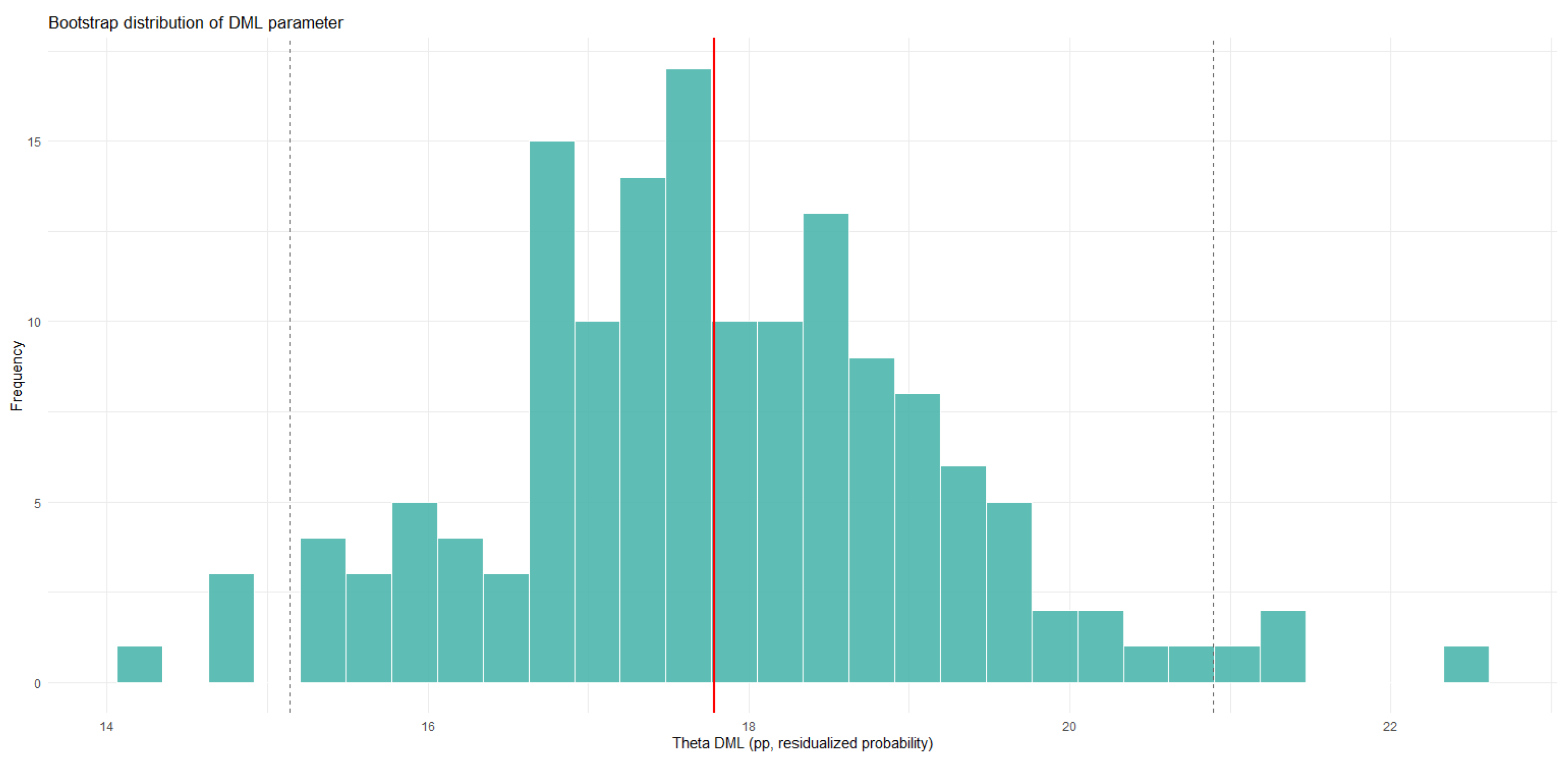

Double/Debiased Machine Learning estimation

Finally, we estimated the effect of CKD on high cardiovascular risk using a Double/Debiased Machine Learning (DML) approach based on the partially linear regression (PLR) framework with cross-fitting. This specification avoids reliance on inverse propensity weighting, which can be unstable in the presence of rare treatments and complex survey designs.

Nuisance components for the outcome and treatment models were estimated via survey-weighted logistic regressions, and the causal effect of CKD was obtained from a second-stage regression on orthogonalized residuals. This strategy yields a stable and approximately unbiased estimate of the average treatment effect under weak regularity conditions.
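A minimal sketch of the cross-fitted PLR estimator is given below. Outcome and treatment are each residualized using nuisance predictions learned on the other folds, and the effect is the weighted slope of outcome residuals on treatment residuals. To keep the example short, weighted cell means over a discrete covariate stand in for the survey-weighted logistic nuisance models described in the text; this substitution, the function names, the fold rule, and the synthetic data are all assumptions. The standard error shown treats observations as independent and therefore ignores strata and clusters.

```python
import random
from math import sqrt


def cell_mean_predictor(cells, values, w, train):
    """Weighted mean of `values` within each cell, learned on `train` indices."""
    num, den = {}, {}
    for i in train:
        num[cells[i]] = num.get(cells[i], 0.0) + w[i] * values[i]
        den[cells[i]] = den.get(cells[i], 0.0) + w[i]
    overall = sum(num.values()) / sum(den.values())
    # Fall back to the overall mean for cells unseen in the training folds.
    return lambda c: num[c] / den[c] if c in den else overall


def dml_plr(y, d, cells, w, n_folds=5):
    """Cross-fitted partially linear regression: returns (theta, se)."""
    n = len(y)
    y_res, d_res = [0.0] * n, [0.0] * n
    for k in range(n_folds):
        train = [i for i in range(n) if i % n_folds != k]
        ell = cell_mean_predictor(cells, y, w, train)  # estimate of E[Y | X]
        m = cell_mean_predictor(cells, d, w, train)    # estimate of E[D | X]
        for i in range(k, n, n_folds):                 # held-out fold k
            y_res[i] = y[i] - ell(cells[i])
            d_res[i] = d[i] - m(cells[i])
    denom = sum(wi * v * v for wi, v in zip(w, d_res))
    theta = sum(wi * v * r for wi, v, r in zip(w, d_res, y_res)) / denom
    # Sandwich SE under independence (no stratification or clustering).
    meat = sum((wi * v * (r - theta * v)) ** 2 for wi, v, r in zip(w, d_res, y_res))
    return theta, sqrt(meat) / denom


# Synthetic data: the true effect on the probability scale is 0.3.
rng = random.Random(2)
n = 4000
cells = [rng.randrange(4) for _ in range(n)]
w = [rng.uniform(0.5, 2.0) for _ in range(n)]
d = [1 if rng.random() < 0.1 + 0.2 * c else 0 for c in cells]
y = [1 if rng.random() < 0.1 + 0.1 * c + 0.3 * t else 0 for c, t in zip(cells, d)]
theta_hat, se_hat = dml_plr(y, d, cells, w)
```

Because the outcome is binary, the PLR coefficient is read as a difference in probability, which is why the estimate is reported on the probability scale and is not directly comparable to the odds ratios from the logistic specifications.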

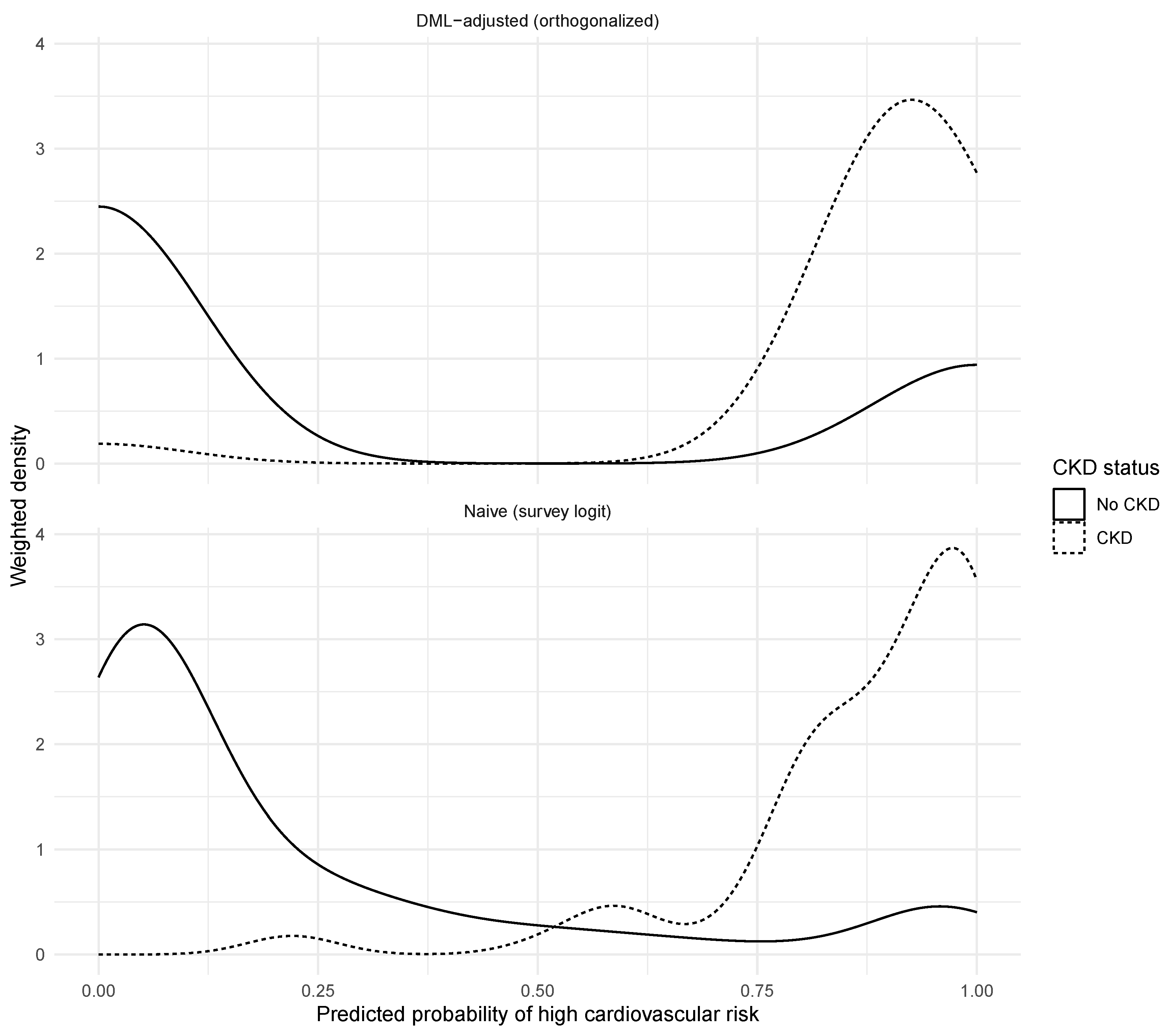

The DML estimate suggests that CKD is not associated with a statistically significant increase in the probability of being classified as high cardiovascular risk once demographic characteristics and cardiometabolic comorbidities are accounted for (SE = 0.161). While naive and control-function specifications indicate a strong association between CKD and cardiovascular risk, the DML results indicate that this relationship is largely explained by confounding factors, particularly age and diabetes.

Table S2 reports the empirical modeling results for the association between CKD and high cardiovascular risk.

Figure 5 provides a visual comparison of the CKD effect across modeling strategies, while Figure 6 illustrates how predicted cardiovascular risk distributions differ between naive and DML-adjusted specifications.

Given the low prevalence of CKD (approximately 3%) and the resulting limited effective sample size, the minimum detectable effect for the DML estimator is on the order of 0.25–0.30 probability points. Smaller causal effects cannot be reliably distinguished from zero under the observed design, and estimates should be interpreted with this power limitation in mind.