Submitted: 07 April 2026

Posted: 08 April 2026

Abstract

Keywords:

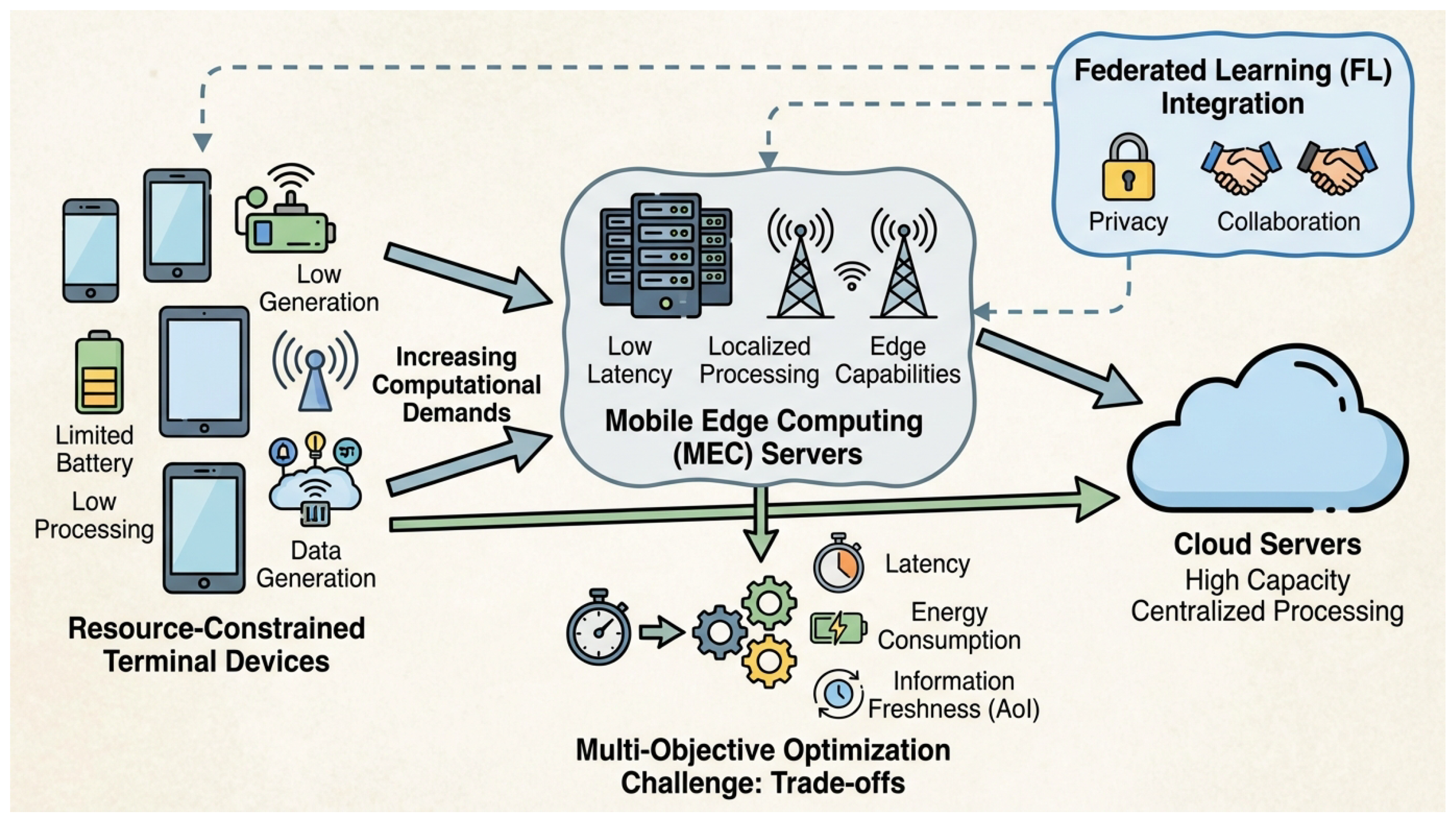

1. Introduction

- We formulate a novel Contextual Risk-aware Multi-objective Markov Decision Process (MOMDP) for MEC offloading, explicitly incorporating environmental uncertainties and multiple conflicting objectives.

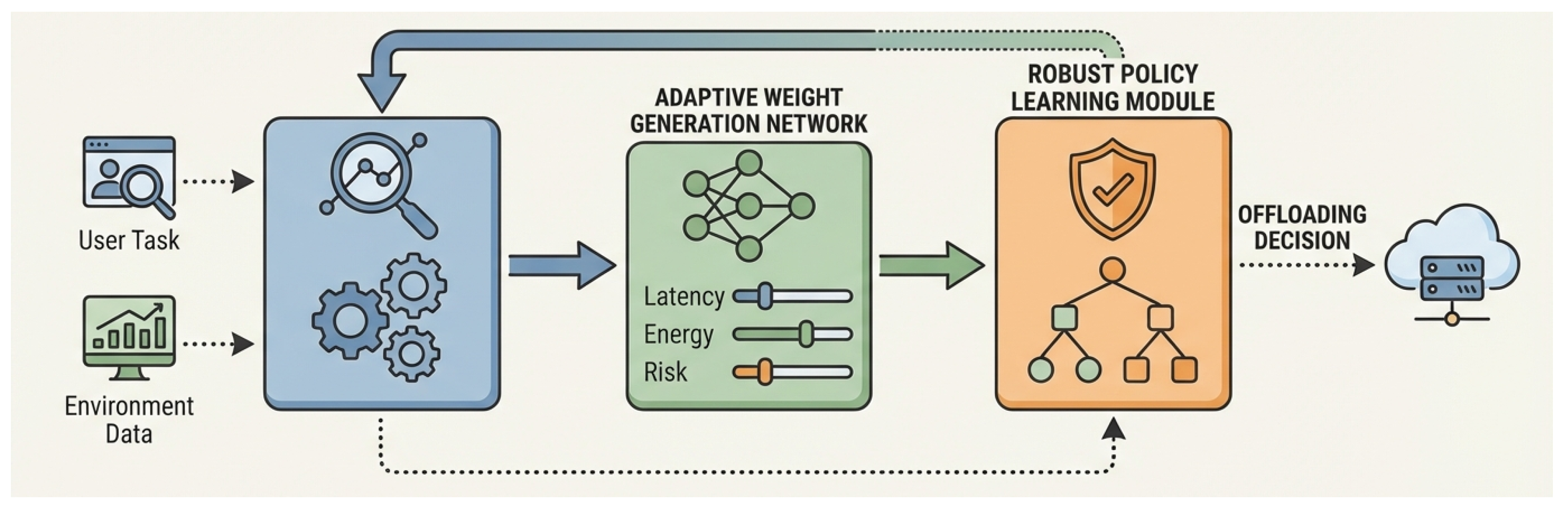

- We propose ADAPT-ROMA, a sophisticated deep reinforcement learning algorithm featuring a CVaR-based risk prediction module, an adaptive weight generation network for dynamic multi-objective optimization, and a robust distributed DRL framework with contextual encoding.

- We rigorously evaluate ADAPT-ROMA in a simulated dynamic MEC environment, demonstrating its superior performance in terms of balanced latency and energy, high task completion rate, and significantly enhanced robustness against uncertainties as quantified by its low risk-aware score.

2. Related Work

2.1. Deep Reinforcement Learning for MEC Offloading

2.2. Multi-Objective and Risk-Aware Reinforcement Learning

3. Method

3.1. System Model and Problem Formulation

3.1.1. Communication and Computation Model

3.1.2. Contextual Risk-aware Multi-objective Markov Decision Process

3.2. Risk-Aware Modeling with CVaR

3.3. Adaptive Dynamic Risk-aware Multi-objective Offloading Algorithm (ADAPT-ROMA)

3.3.1. Adaptive Weight Generation for Multi-Objective Scalarization

3.3.2. Robust Policy Learning via Distributed DRL and Adversarial Training

3.3.3. Contextual Encoding with Hierarchical Attention Network

3.3.3.1. Intra-Component Attention

3.3.3.2. Inter-Component Attention

4. Experiments

4.1. Experimental Setup

- Mobile Users: 15 mobile users are simulated, each generating computational tasks according to a Poisson process with an average arrival rate of 0.5 tasks/second. Task characteristics are heterogeneous: input data size ranges from 100 KB to 2 MB, and required CPU cycles range from 0.5 Gcycles to 5 Gcycles. Each user's local CPU frequency is set to 1 GHz, and transmit power is 100 mW. The energy coefficient is .

- MEC Servers: 3 edge servers are distributed within a 500 m × 500 m area. Each edge server has a distinct computational capability (CPU frequencies: 5 GHz, 7 GHz, 10 GHz) and communication bandwidth (20 MHz, 30 MHz, 40 MHz). Server load states are updated dynamically based on executed and queued tasks. A remote cloud server is also available with far greater computational power (100 GHz), but it incurs higher communication latency.

- Channel Model: Wireless channels between users and servers are modeled as time-varying Rayleigh fading channels with path loss and shadow fading. The background noise power spectral density is set to -174 dBm/Hz. The fading introduces significant uncertainty into transmission rates and latencies.

- Simulation Horizon: Each simulation run lasts 5000 time slots of 1 second each, allowing sufficient time for the DRL agents to learn and adapt. Results are averaged over 10 independent runs to reduce the effect of run-to-run randomness.

- DRL Training: The DRL agents are trained for episodes, with a learning rate of for both actor and critic networks. The replay buffer size is , and mini-batch size is 128. For CVaR calculation, the confidence level is set to 0.9.
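
As a rough illustration of the setup above, the following sketch samples Poisson task arrivals for 15 users over 5000 one-second slots, computes local execution latency as required CPU cycles divided by the 1 GHz local frequency, and evaluates an empirical CVaR at the 0.9 confidence level. Uniform sampling over the stated ranges, the tail-mean CVaR estimator, and all names (`poisson_arrivals`, `sample_task`, `local_latency`, `empirical_cvar`) are assumptions made for illustration; the source does not specify them.

```python
import math
import random

# Values taken from the experimental setup; names are illustrative only.
NUM_USERS = 15
NUM_SLOTS = 5000                         # each slot is 1 second
ARRIVAL_RATE = 0.5                       # tasks per second per user
DATA_SIZE_BITS = (100e3 * 8, 2e6 * 8)    # 100 KB to 2 MB
CPU_CYCLES = (0.5e9, 5e9)                # 0.5 to 5 Gcycles
LOCAL_FREQ_HZ = 1e9                      # 1 GHz local CPU
CVAR_ALPHA = 0.9


def poisson_arrivals(rate, rng):
    """Sample a Poisson count for one slot using Knuth's multiplication method."""
    threshold = math.exp(-rate)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def sample_task(rng):
    """Draw one task. Uniform sampling over the ranges is an assumption."""
    return {
        "bits": rng.uniform(*DATA_SIZE_BITS),
        "cycles": rng.uniform(*CPU_CYCLES),
    }


def local_latency(task):
    """Local execution time in seconds: required cycles / local CPU frequency."""
    return task["cycles"] / LOCAL_FREQ_HZ


def empirical_cvar(losses, alpha=CVAR_ALPHA):
    """Mean of the worst (1 - alpha) fraction of losses (larger loss is worse)."""
    ordered = sorted(losses)
    tail_start = min(int(math.floor(alpha * len(ordered))), len(ordered) - 1)
    tail = ordered[tail_start:]
    return sum(tail) / len(tail)


rng = random.Random(0)
latencies = []
for _slot in range(NUM_SLOTS):
    for _user in range(NUM_USERS):
        for _ in range(poisson_arrivals(ARRIVAL_RATE, rng)):
            latencies.append(local_latency(sample_task(rng)))

mean_latency = sum(latencies) / len(latencies)
cvar_latency = empirical_cvar(latencies)
print(f"tasks={len(latencies)} mean={mean_latency:.2f}s CVaR@0.9={cvar_latency:.2f}s")
```

The CVaR value is never below the mean because it averages only the slowest tenth of the sampled tasks, which is why it serves as a tail-risk measure rather than an average-case one.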

4.2. Baseline Methods

- Greedy Offloading: A simple heuristic in which each user independently assigns its task to the execution target (local device, edge server, or cloud) that currently appears to have the lowest load. It aims for immediate minimal latency or energy without considering future states or uncertainties.

- Delay-first DRL: A Deep Reinforcement Learning (DRL) strategy optimized solely to minimize the total task execution latency across all users. This baseline serves as a reference for the lowest latency achievable when energy and risk are not considered.

- Energy-first DRL: Similar to Delay-first DRL, but this DRL strategy is trained exclusively to minimize the total energy consumption of user devices. It provides a benchmark for energy efficiency without explicit consideration for latency or risk.

- Basic Multi-objective DRL (Basic MORL): This method employs a standard DRL framework with a fixed, predefined set of weights (e.g., ) to scalarize the multiple objectives (latency, energy, and a rudimentary risk term or a static approximation). It lacks the adaptive weight generation and sophisticated risk perception of ADAPT-ROMA.
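
To make two of the baselines concrete, here is a minimal sketch of a greedy lowest-load choice and of a fixed-weight scalarized reward. The CPU frequencies come from the experimental setup; the `Server` class, the load measure (queued cycles divided by CPU frequency), the queue contents, and the weight values are hypothetical placeholders, not the definitions or values used in the paper.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    cpu_hz: float
    queued_cycles: float = 0.0

    def load(self) -> float:
        """Seconds of queued work; a hypothetical load measure."""
        return self.queued_cycles / self.cpu_hz


def greedy_choice(servers):
    """Pick the target with the lowest current load, ignoring future states."""
    return min(servers, key=lambda s: s.load())


def scalarize(latency, energy, risk, weights):
    """Fixed-weight reward: negative weighted sum of the three costs."""
    w_latency, w_energy, w_risk = weights
    return -(w_latency * latency + w_energy * energy + w_risk * risk)


# Queue contents below are made up for the example.
servers = [
    Server("edge-1", 5e9, queued_cycles=10e9),    # load 2.0 s
    Server("edge-2", 7e9, queued_cycles=7e9),     # load 1.0 s
    Server("edge-3", 10e9, queued_cycles=15e9),   # load 1.5 s
    Server("cloud", 100e9, queued_cycles=200e9),  # load 2.0 s
]

first = greedy_choice(servers)
first.queued_cycles += 7e9          # enqueue a 7 Gcycle task on the chosen server
second = greedy_choice(servers)
print(first.name, second.name)

# Placeholder weights, NOT the values used in the paper.
FIXED_WEIGHTS = (0.4, 0.4, 0.2)
reward = scalarize(latency=0.05, energy=0.12, risk=0.2, weights=FIXED_WEIGHTS)
print(round(reward, 3))
```

The greedy rule reacts only to the current queue state, and the scalarized reward keeps the same trade-off in every context, which is the limitation that adaptive weight generation is meant to address.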

4.3. Performance Evaluation

4.4. Ablation Study

4.5. Perceived User Experience Evaluation

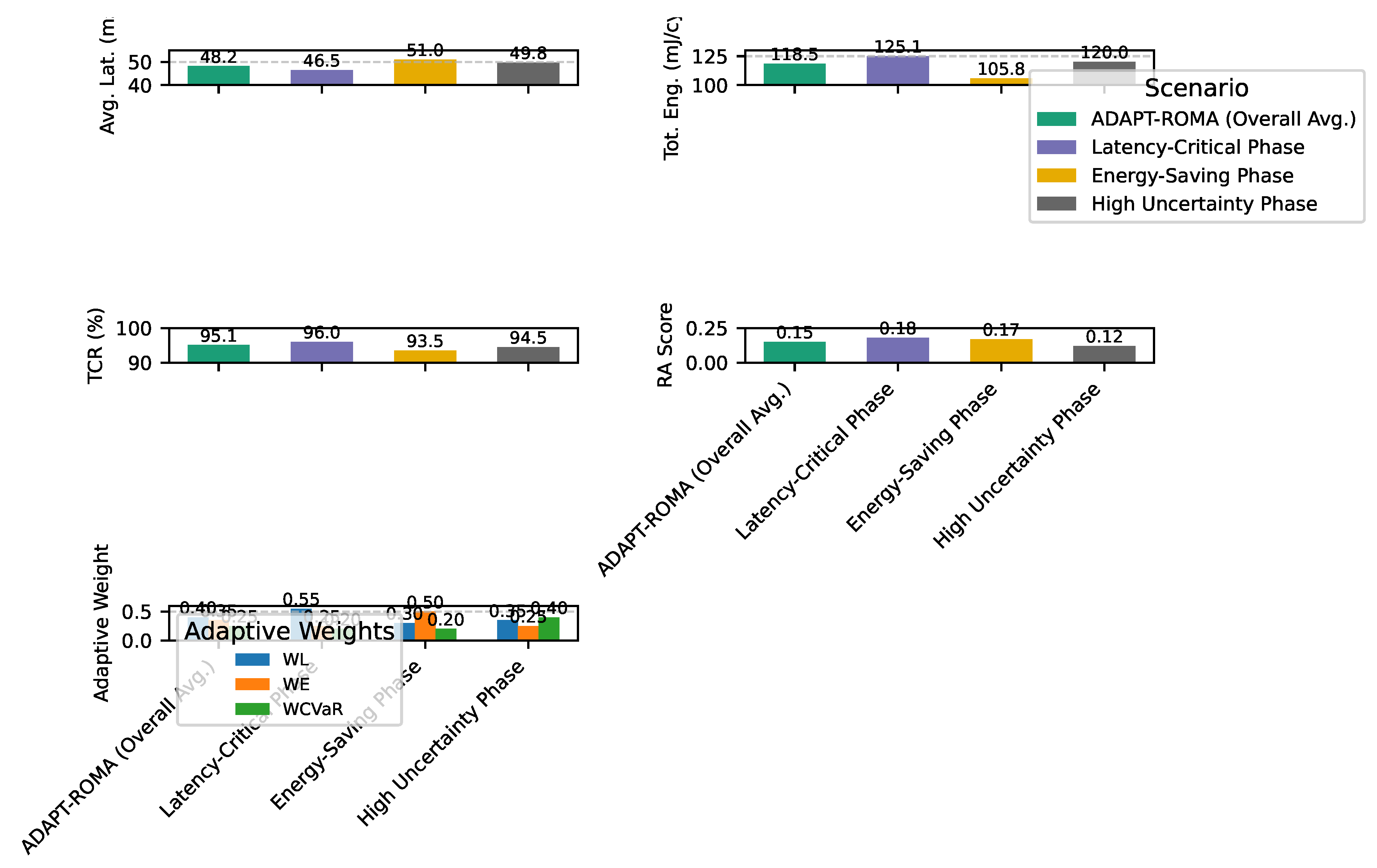

4.6. Adaptability to Dynamic Environments

4.7. Robustness Analysis under Extreme Conditions

4.8. Scalability Analysis

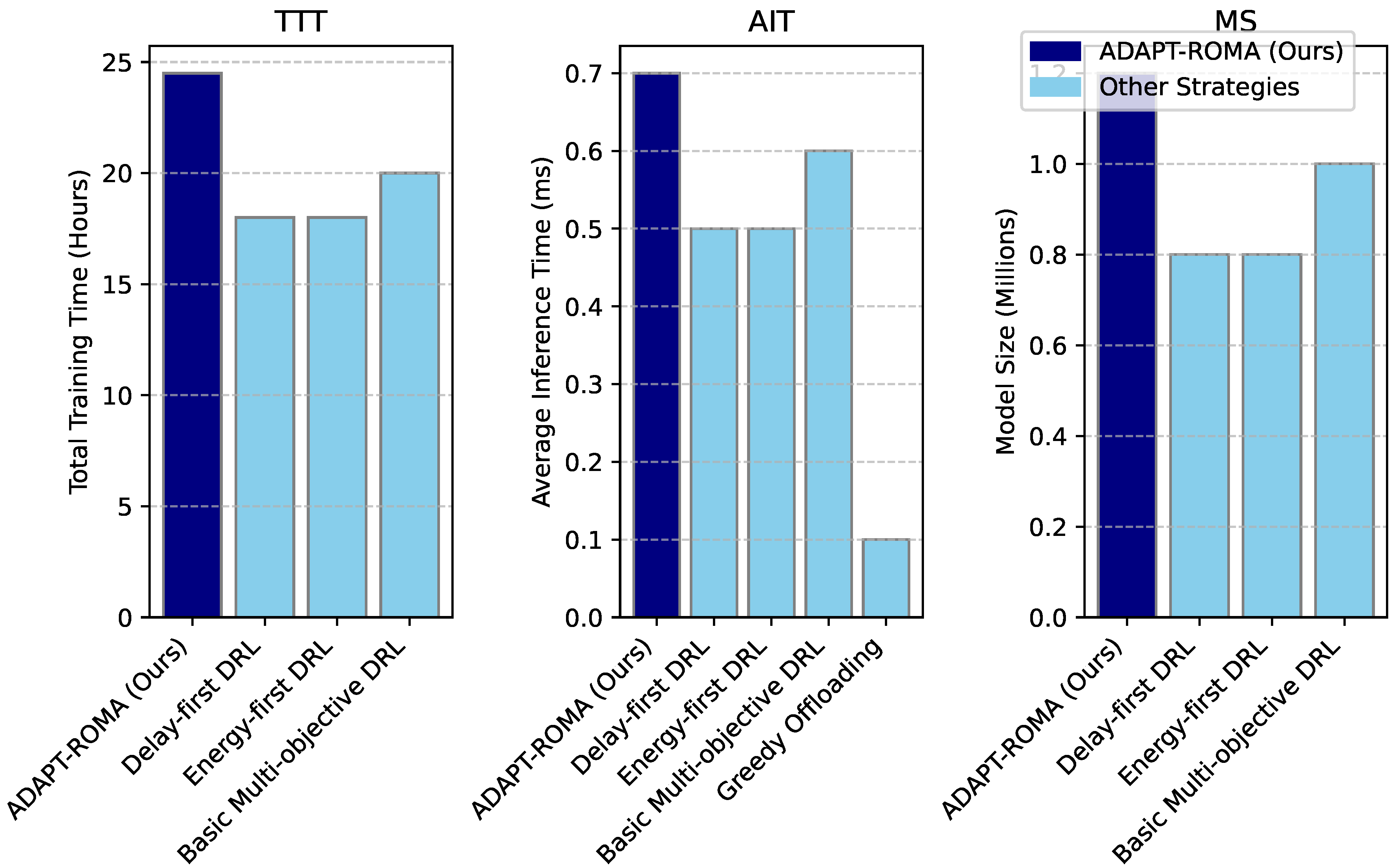

4.9. Computational Overhead Analysis

5. Conclusions

| Strategy | Avg. Task Latency (ms) | Total Energy (mJ/cycle) | Task Completion Rate (%) | Risk-aware Score (lower is better) |
| --- | --- | --- | --- | --- |
| ADAPT-ROMA (Ours) | 48.2 | 118.5 | 95.1 | 0.15 |
| Greedy Offloading | 75.3 | 165.2 | 82.7 | 0.40 |
| Delay-first DRL | 45.1 | 142.8 | 92.5 | 0.28 |
| Energy-first DRL | 60.7 | 98.1 | 88.0 | 0.35 |
| Basic Multi-objective DRL | 52.0 | 125.7 | 90.3 | 0.22 |
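
The gaps in the comparison table above are easier to read as relative differences. The short sketch below computes, for each baseline, the percentage by which ADAPT-ROMA lowers latency, energy, and risk-aware score, plus the completion-rate gain in percentage points. The numbers are copied from the table; the `relative_reduction` helper and the dictionary layout are illustrative assumptions.

```python
# Rows copied from the comparison table:
# (latency ms, energy mJ/cycle, completion rate %, risk-aware score)
RESULTS = {
    "ADAPT-ROMA": (48.2, 118.5, 95.1, 0.15),
    "Greedy Offloading": (75.3, 165.2, 82.7, 0.40),
    "Delay-first DRL": (45.1, 142.8, 92.5, 0.28),
    "Energy-first DRL": (60.7, 98.1, 88.0, 0.35),
    "Basic Multi-objective DRL": (52.0, 125.7, 90.3, 0.22),
}


def relative_reduction(baseline, ours):
    """Percentage by which `ours` is lower than `baseline` (negative if higher)."""
    return 100.0 * (baseline - ours) / baseline


ours = RESULTS["ADAPT-ROMA"]
for name, row in RESULTS.items():
    if name == "ADAPT-ROMA":
        continue
    latency = relative_reduction(row[0], ours[0])
    energy = relative_reduction(row[1], ours[1])
    risk = relative_reduction(row[3], ours[3])
    completion_gain = ours[2] - row[2]  # percentage points
    print(
        f"{name:26s} latency {latency:+6.1f}%  energy {energy:+6.1f}%  "
        f"risk {risk:+6.1f}%  completion {completion_gain:+.1f} pts"
    )
```

Negative values appear for latency against Delay-first DRL and for energy against Energy-first DRL, since each single-objective baseline reports a lower value than ADAPT-ROMA on the one metric it optimizes.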

| Strategy Variant | Avg. Task Latency (ms) | Total Energy (mJ/cycle) | Task Completion Rate (%) | Risk-aware Score (lower is better) |
| --- | --- | --- | --- | --- |
| ADAPT-ROMA (Full) | 48.2 | 118.5 | 95.1 | 0.15 |
| w/o Risk Perception (CVaR) | 50.1 | 120.3 | 93.5 | 0.25 |
| w/o Adaptive Weights | 51.5 | 124.0 | 92.8 | 0.20 |
| w/o Adversarial Training | 49.5 | 119.8 | 94.0 | 0.22 |
| w/o Contextual Encoder | 53.8 | 128.1 | 91.0 | 0.27 |

| Strategy | Perceived Latency | Perceived Energy Efficiency | System Reliability | Overall Satisfaction |
| --- | --- | --- | --- | --- |
| ADAPT-ROMA (Ours) | 1.8 | 2.1 | 4.5 | 4.3 |
| Delay-first DRL | 1.5 | 3.0 | 3.2 | 3.5 |
| Energy-first DRL | 2.5 | 1.7 | 3.0 | 3.3 |
| Basic Multi-objective DRL | 2.0 | 2.5 | 3.8 | 3.9 |

| Strategy | Condition | Avg. Task Latency (ms) | Total Energy (mJ/cycle) | Task Completion Rate (%) | Risk-aware Score |
| --- | --- | --- | --- | --- | --- |
| ADAPT-ROMA (Full) | Normal | 48.2 | 118.5 | 95.1 | 0.15 |
| ADAPT-ROMA (Full) | High Interference | 55.0 | 130.2 | 92.8 | 0.18 |
| ADAPT-ROMA (Full) | Server Overload | 58.5 | 135.5 | 91.5 | 0.20 |
| ADAPT-ROMA (Full) | Task Burst | 62.1 | 140.8 | 90.2 | 0.21 |
| Basic Multi-objective DRL | High Interference | 65.2 | 155.0 | 85.0 | 0.35 |
| Basic Multi-objective DRL | Server Overload | 68.9 | 160.1 | 83.5 | 0.38 |
| Basic Multi-objective DRL | Task Burst | 72.5 | 168.0 | 81.0 | 0.40 |
| w/o Risk Perception (CVaR) | High Interference | 60.5 | 140.0 | 89.0 | 0.30 |
| w/o Adversarial Training | High Interference | 57.5 | 135.0 | 90.0 | 0.25 |

| Strategy | # Users | Avg. Task Latency (ms) | Total Energy (mJ/cycle) | Task Completion Rate (%) | Inference Time (ms) |
| --- | --- | --- | --- | --- | --- |
| ADAPT-ROMA (Ours) | 5 | 40.5 | 95.2 | 98.0 | 0.5 |
| ADAPT-ROMA (Ours) | 15 | 48.2 | 118.5 | 95.1 | 0.7 |
| ADAPT-ROMA (Ours) | 30 | 55.7 | 135.0 | 92.5 | 1.2 |
| ADAPT-ROMA (Ours) | 50 | 65.1 | 150.3 | 89.0 | 2.0 |
| Basic Multi-objective DRL | 15 | 52.0 | 125.7 | 90.3 | 0.8 |
| Basic Multi-objective DRL | 30 | 62.5 | 145.1 | 88.0 | 1.5 |
| Basic Multi-objective DRL | 50 | 75.0 | 165.8 | 84.5 | 2.5 |
| Greedy Offloading | 15 | 75.3 | 165.2 | 82.7 | 0.1 |
| Greedy Offloading | 30 | 90.1 | 190.5 | 78.0 | 0.1 |
| Greedy Offloading | 50 | 110.5 | 220.0 | 70.5 | 0.2 |

| Strategy | # Servers | Avg. Task Latency (ms) | Total Energy (mJ/cycle) | Task Completion Rate (%) | Inference Time (ms) |
| --- | --- | --- | --- | --- | --- |
| ADAPT-ROMA (Ours) | 1 | 65.8 | 150.0 | 88.0 | 0.6 |
| ADAPT-ROMA (Ours) | 3 | 48.2 | 118.5 | 95.1 | 0.7 |
| ADAPT-ROMA (Ours) | 5 | 42.1 | 105.0 | 96.5 | 0.9 |
| Basic Multi-objective DRL | 1 | 75.0 | 168.0 | 82.0 | 0.7 |
| Basic Multi-objective DRL | 3 | 52.0 | 125.7 | 90.3 | 0.8 |
| Basic Multi-objective DRL | 5 | 45.0 | 110.0 | 92.0 | 1.1 |
| Greedy Offloading | 1 | 85.0 | 180.0 | 75.0 | 0.1 |
| Greedy Offloading | 3 | 75.3 | 165.2 | 82.7 | 0.1 |
| Greedy Offloading | 5 | 68.0 | 150.0 | 86.0 | 0.2 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).