Submitted:

14 April 2026

Posted:

16 April 2026


Abstract

Keywords:

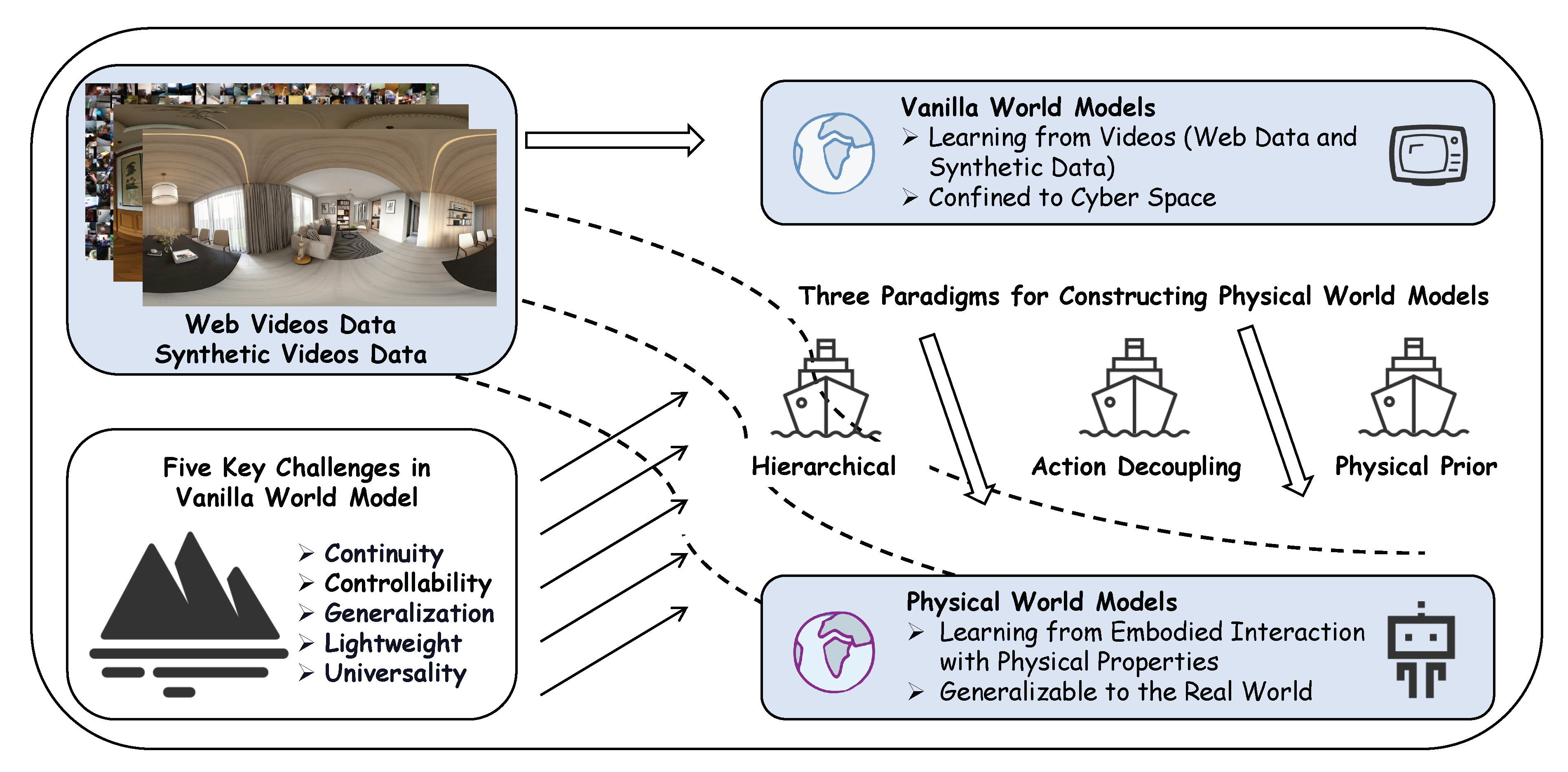

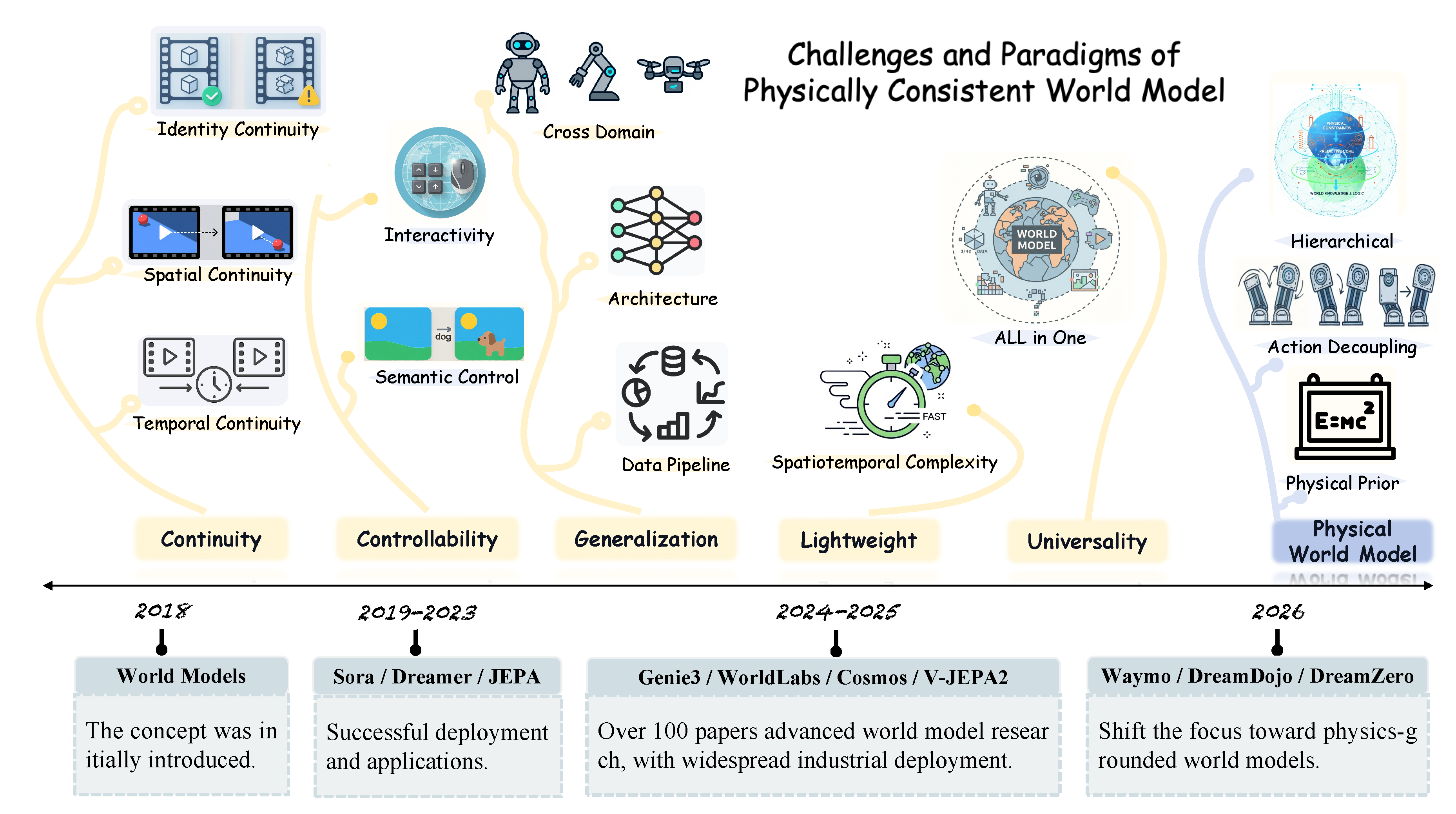

1. Introduction

- Pixel–Physics Challenges: We distill five core challenges (continuity, controllability, generalization, lightweight design, and universality) and, through the lens of physical consistency, systematically summarize the sub-problems and representative solutions for each.

- Three Paradigms of Physical World Models: Existing approaches to physically grounded modeling can be broadly categorized into three classes: prior injection, dynamic–static decoupling, and hierarchical abstraction.

- Future Directions: We identify five major open problems for future research and provide a systematic discussion of industrial deployment and current safety issues.

2. Challenges of Learning from Video

2.1. Physical Continuity

2.1.1. Temporal Continuity

Modality symbols in the Input / Output and Conditions columns indicate: video or multi-frame; single-frame or image; latent representation; spatial representation; text modality; action representation; camera (pose) information; depth feature; neural signal; object-level feature; physical information; and historical memory feature. Evaluations: V assesses visual quality, generation, prediction, and control in downstream tasks, including qualitative evaluations. R evaluates robotic tasks in simulation, while a separate symbol denotes real-robot experiments. P measures physical understanding and perception. C evaluates planning and decision-making in games, tasks, and navigation.

| Work | Venue | Main Solution | Method | Input / Output | Conditions | Evals |
|---|---|---|---|---|---|---|
| Temporal Consistency | | | | | | |
| EnerVerse [26] | NeurIPS’25 | Autoregression Improvements | Sparse Chunks | | | V |
| EVA [27] | arXiv’25 | | Reflection | | | V |
| Yume [28] | arXiv’25 | | Framepack | | | V |
| SAMPO [24] | NeurIPS’25 | | Scale-Wise | | None | V |
| GEM [34] | CVPR’25 | Diffusion Schedules | Increasing Noise | | | V |
| Diamond [33] | NeurIPS’24 | | Adaptive Noise | | None | V |
| Epona [32] | ICCV’25 | | Diffusion Forcing | | | VC |
| Pathdreamer [35] | ICCV’21 | Condition Constraints | HR. Modeling | | | VC |
| PlayerOne [36] | NeurIPS’25 | | Rec. Constraints | | | V |
| VRAG [23] | NeurIPS’25 | | Global Constraints | | None | CV |
| Vid2World [38] | ICLR’26 | Optimization Level | Loss-based | | | V |
| SSD [37] | NeurIPS’25 | | State-space | | None | C |
| SGF [39] | ICLR’25 | | Regularization | | None | C |
| Spatial Consistency | | | | | | |
| RoboScape [40] | NeurIPS’25 | Implicit Alignment | HR. Modeling | | None | VR |
| WorldGrow [41] | AAAI’26 | | Block Inpainting | | | V |
| WVD [42] | CVPR’25 | Explicit Alignment | Spatial Joint Modeling | | None | V |
| FlashWorld [43] | ICLR’26 | | Dual-mode Pre-training | | | V |
| Geom. Forcing [44] | ICLR’26 | | Rep. Alignment | | None | V |
| InfiniCube [45] | ICCV’25 | | HR. Constraints | | | V |
| MindJourney [46] | NeurIPS’25 | | Language Guidance | | | VCP |
| UniFuture [47] | ICRA’26 | | Multi-modal | | | V |
| Edeline [48] | NeurIPS’25 | | Mem. Enhancement | | None | C |
| Ctrl-World [49] | ICLR’26 | | Space Constraints | | | V |
| Spatial-Mem [50] | NeurIPS’25 | | Semantic Alignment | | | V |
| WorldMEM [51] | NeurIPS’25 | Memory Mechanism | Memory Bank | | | V |
| Voyager (LLM) [52] | TMLR’24 | | Skill Library | | | C |
| SSM-World [53] | ICCV’25 | | State-Space Models | | | V |
| Identity Consistency | | | | | | |
| Loci-v1 [54] | ICLR’23 | Occlusion | Imagination Tracking | | | C |
| SAVi++ [55] | NeurIPS’22 | Tracking | Identity Tracking | | None | P |
| ForeDiff [56] | arXiv’25 | Anchors | Arch. Decoupling | | | V |

2.1.2. Spatial Continuity

2.1.3. Identity Continuity

2.2. Controllability

2.2.1. Semantic Control

2.2.2. Interactivity

2.3. Generalization

2.3.1. Data

2.3.2. Architecture Generalization

2.3.3. Behavioral and Environmental Generalization

| Work | Venue | GPUs | Batch Size | Training Steps |
|---|---|---|---|---|
| DINO-world [105] | arXiv’25 | H100*16 | 1024 | 350K Iter. |
| HWM [106] | arXiv’25 | A6000*2 | 128 | – |
| MinD [107] | arXiv’25 | A40*4 | – | 9 Hours |
| Sparse Imagin. [108] | ICLR’26 | 3090*4 | 32 | 100 Epochs |
| Simulus [109] | arXiv’25 | 4090*1 | 8 | 100 Epochs |
| EMERALD [110] | ICML’25 | 3090*1 | 16 | – |
| -World [111] | arXiv’24 | V100*8 | 24 | 24 Epochs |
| AVID [71] | RLC’25 | A100*4 | 64 | 7 Days |
| ScaleZero [112] | arXiv’25 | A100*8 | 512 | – |
| KeyWorld [113] | arXiv’25 | A800*8 | 1 | 100 Epochs |
| TWIST [114] | ICRA’24 | 3090*1 | – | 500K Iter. |
| IRIS [115] | ICLR’23 | A100*8 | 256 | 3.5 Days |
| -IRIS [116] | ICML’24 | A100*1 | 32 | 1K Epochs |
| HERO [117] | arXiv’25 | A100*1 | – | – |
| PosePilot [118] | IROS’25 | A100*8 | – | – |
| OCWM [119] | ICLR’25 | H100*4 | 32 | 40 Epochs |
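The training-resource table reports budgets in mixed units (iterations, epochs, hours, days) and on different GPU models, which makes direct comparison awkward. As a rough normalization, the minimal Python sketch below converts only the rows that report wall-clock time into allocated GPU-hours. It assumes every listed GPU was busy for the full reported duration; the helper names (`parse_gpus`, `wall_clock_hours`, `gpu_hours`) are illustrative and not taken from any of the cited works. Rows reported in iterations or epochs are left unconverted because the table gives no per-step timing.

```python
from __future__ import annotations

import re

# Conversion factors for the wall-clock units that appear in the table.
HOURS_PER_UNIT = {"Hours": 1.0, "Days": 24.0}


def parse_gpus(spec: str) -> tuple[str, int]:
    """Split a GPU cell such as 'A100*8' into ('A100', 8)."""
    model, count = spec.split("*")
    return model, int(count)


def wall_clock_hours(steps: str) -> float | None:
    """Return hours for cells like '9 Hours' or '3.5 Days'; None for iterations, epochs, or '–'."""
    match = re.fullmatch(r"([\d.]+) (Hours|Days)", steps)
    if match is None:
        return None
    value, unit = match.groups()
    return float(value) * HOURS_PER_UNIT[unit]


def gpu_hours(gpus: str, steps: str) -> float | None:
    """Allocated GPU-hours, assuming every listed GPU ran for the whole reported duration."""
    hours = wall_clock_hours(steps)
    if hours is None:
        return None
    _, count = parse_gpus(gpus)
    return count * hours


# Rows copied from the table above that report a wall-clock budget.
ROWS = [
    ("MinD [107]", "A40*4", "9 Hours"),
    ("AVID [71]", "A100*4", "7 Days"),
    ("IRIS [115]", "A100*8", "3.5 Days"),
]

if __name__ == "__main__":
    for work, gpus, steps in ROWS:
        model, _ = parse_gpus(gpus)
        print(f"{work}: {gpu_hours(gpus, steps):.0f} GPU-hours on {model}")
```

Under these assumptions, MinD comes out at 36 GPU-hours, while AVID and IRIS both come out at 672 GPU-hours. A40 and A100 hours are not equivalent units of compute, so the figures are only a coarse indication of relative cost.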

2.4. Lightweight

Modality symbols in the Input / Output and Conditions columns indicate: video or multi-frame; single-frame or image; latent representation; spatial representation; text modality; action representation; camera (pose) information; depth feature; neural signal; object-level feature; physical information; and historical memory feature. Evals and Downstream Apps: physical generation, question answering, interaction, understanding, and attributes each have a dedicated abbreviation; A refers to action prediction, M to motion planning, and F to fluid dynamics. In downstream applications, W denotes the real world, R robotics, D autonomous driving, and O objects.

| Work | Venue | Method | Input / Output | Conditions | Evals and Apps |
|---|---|---|---|---|---|
| Explicit Priors & Feedback Integration | | | | | |
| Pandora [125] | arXiv’24 | Physical Prompts | | | |
| WorldGPT [126] | MM’24 | Modality Alignment | | | |
| LLMPhy [127] | arXiv’24 | Engine Integration | | | |
| DrivePhysica [128] | arXiv’24 | Positional Constraints | | | |
| PhysTwin [129] | ICCV’25 | Attribute Fusion | | None | |
| SlotPi [130] | SIGKDD’25 | Physical Constraints | | None | |
| S2-SSM [131] | arXiv’25 | Sparse Regularization | | None | |
| RenderWorld [132] | ICRA’25 | Pretraining | | | |
| DINO-WM [95] | ICML’25 | Pretraining Priors | | None | |
| HERMES [133] | ICCV’25 | Multi-view Modeling | | | |
| Cosmos [91] | arXiv’25 | Multimodal Constraints | | | |
| Disentangling Static and Dynamic Factors | | | | | |
| AdaWorld [103] | ICML’25 | Action Decoupling | | | |
| Dyn-O [62] | NeurIPS’25 | Dynamic Decoupling | | | |
| ContextWM [134] | NeurIPS’23 | Dynamic Decoupling | | | |
| DisWM [135] | ICCV’25 | Dynamic Decoupling | | None | |
| DreamDojo [67] | RAL’26 | Explicit Action Modeling | | | |
| DreamZero [30] | arXiv’26 | Action Decoupling | | | |
| OC-STORM [136] | arXiv’25 | Object Extraction | | None | |
| AD3 [137] | ICML’24 | Action Decoupling | | | |
| LongDWM [138] | arXiv’25 | Action Decoupling | | | |
| Vidar [93] | arXiv’25 | Action Decoupling | | | |
| DreamGen [85] | arXiv’25 | Pseudo Action Estimation | | | |
| VLMWM [88] | arXiv’25 | Fine-tuning | | None | |
| WorldDreamer [96] | arXiv’24 | Disentangled Modeling | | | |
| Simulus [109] | arXiv’25 | Dynamic Decoupling | | | |
| SCALOR [139] | ICLR’20 | Background Modeling | | | |
| AETHER [140] | ICCV’25 | Unified Modeling | | None | |
| UWM [29] | RSS’25 | Action Decoupling | | None | |
| FLARE [87] | CoRL’25 | Unified Modeling | | | |
| Progressive Constraints & Hierarchical Abstraction | | | | | |
| DWS [73] | AAAI’26 | Regularization | | | |
| Dreamland [92] | arXiv’25 | Engine Simulation | | | |
| GWM [141] | ICCV’25 | Hierarchical Abstraction | | | |
| PIWM [142] | arXiv’24 | Interpretability | | None | |
| Ross et al. [143] | ICLR’25 | Theoretical Framework | | None | |
| SimWorld [90] | arXiv’25 | Simulation-based Modeling | | | |
| MoSim [98] | CVPR’25 | Multi-constraint | | None | |
| WALL-E [144] | NeurIPS’25 | Rule Learning | | | |
| FOLIAGE [145] | arXiv’25 | Hierarchical Abstraction | | | |
| LLMPhy [127] | arXiv’24 | Hierarchical Abstraction | | | |
| V-JEPA 2 [12] | arXiv’25 | Hierarchical Pretraining | | | |

2.5. Universality

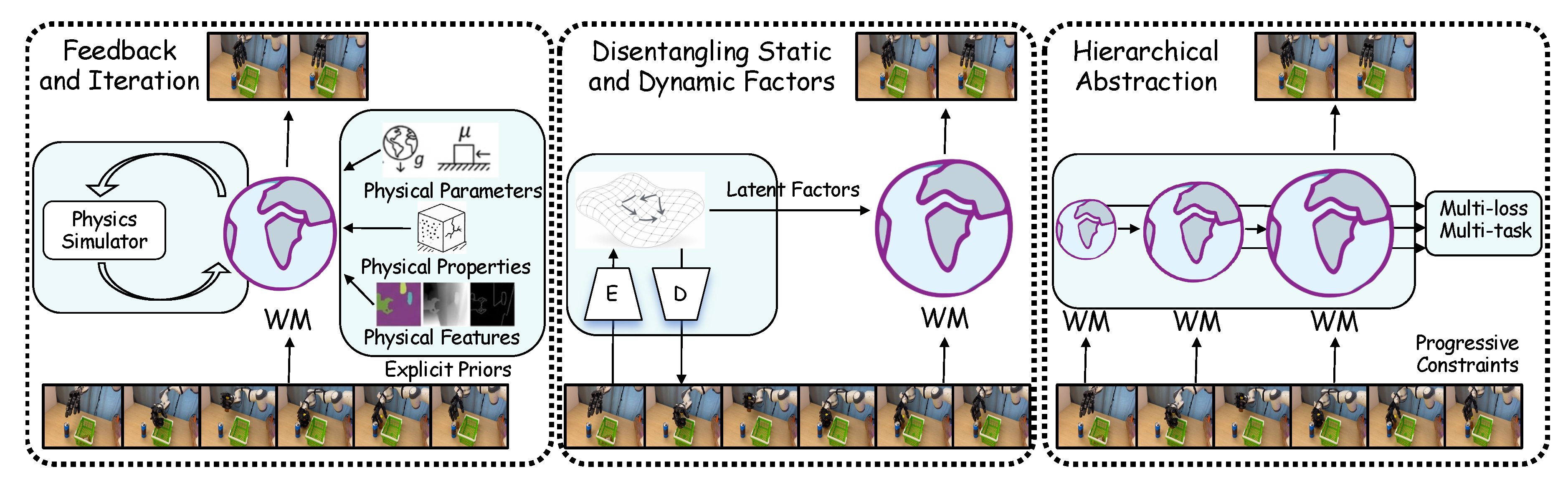

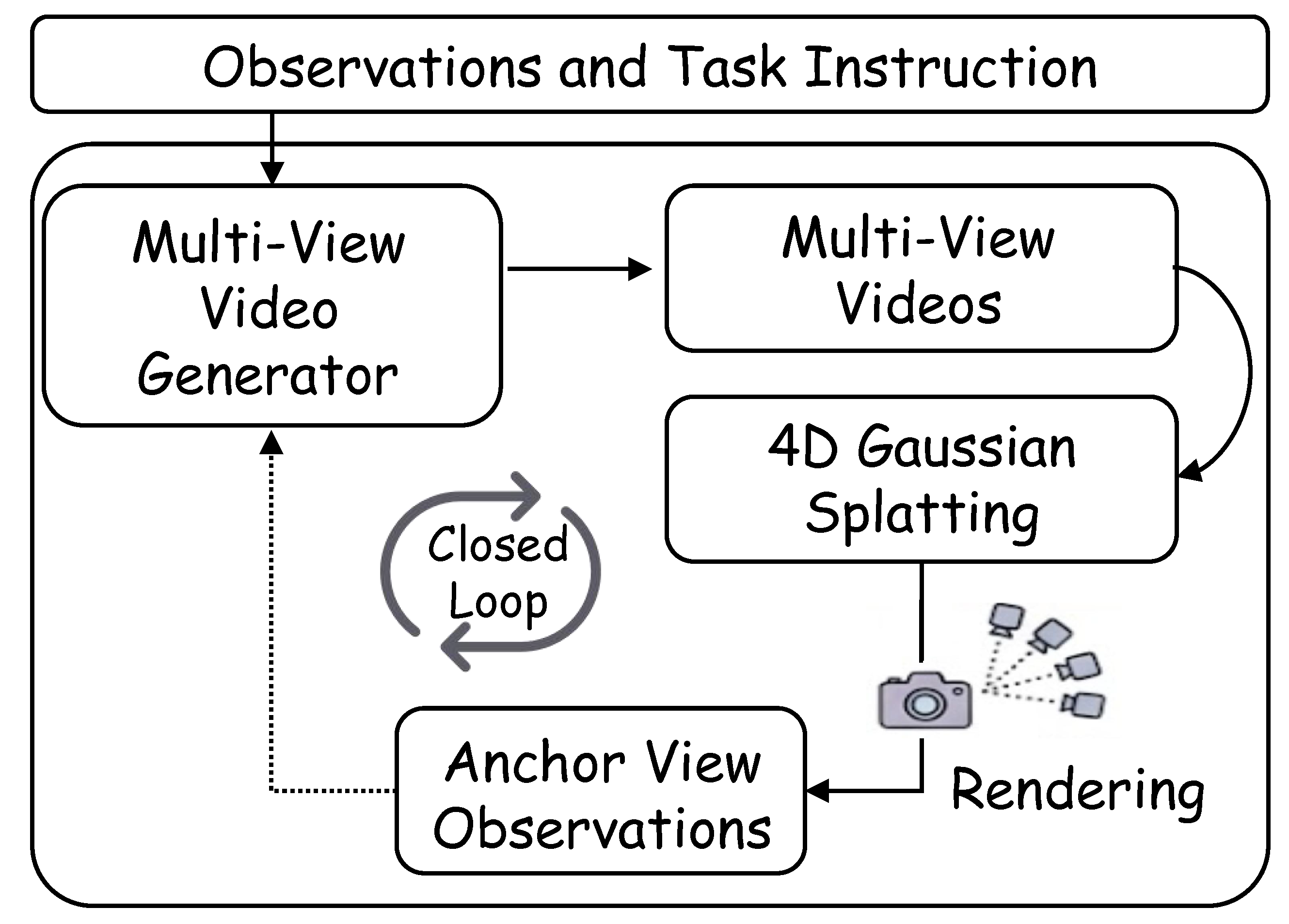

3. Three Paradigms of Physical World Models

3.1. Learning from Physical Priors

3.2. Learning from Action Decoupling

3.3. Hierarchical Progressive Learning

4. Future Directions and Discussion

4.1. Open Challenges

4.2. Industrialization and Deployment

4.3. Safety and Ethical Challenges

5. Conclusions

Conflicts of Interest

References

- Ha, D.; Schmidhuber, J. World models. arXiv 2018, arXiv:1803.10122.

- Zhang, P.F.; Cheng, Y.; Sun, X.; Wang, S.; Zhu, L.; Shen, H.T. A Step Toward World Models: A Survey on Robotic Manipulation. arXiv 2025, arXiv:2511.02097.

- Tu, S.; Zhou, X.; Liang, D.; Jiang, X.; Zhang, Y.; Li, X.; Bai, X. The role of world models in shaping autonomous driving: A comprehensive survey. arXiv 2025, arXiv:2502.10498.

- Feng, T.; Wang, W.; Yang, Y. A survey of world models for autonomous driving. arXiv 2025, arXiv:2501.11260.

- Guan, Y.; Liao, H.; Li, Z.; Hu, J.; Yuan, R.; Zhang, G.; Xu, C. World models for autonomous driving: An initial survey. IEEE Transactions on Intelligent Vehicles 2024.

- Li, J.; Tang, J.; Xu, Z.; Wu, L.; Zhou, Y.; Shao, S.; Yu, T.; Cao, Z.; Lu, Q. Hunyuan-GameCraft: High-dynamic Interactive Game Video Generation with Hybrid History Condition. arXiv 2025, arXiv:2506.17201.

- Zhang, Y.; Peng, C.; Wang, B.; Wang, P.; Zhu, Q.; Kang, F.; Jiang, B.; Gao, Z.; Li, E.; Liu, Y.; et al. Matrix-Game: Interactive World Foundation Model. arXiv 2025, arXiv:2506.18701.

- Medsker, L.R.; Jain, L.; et al. Recurrent neural networks. Design and Applications 2001, 5, 2.

- Hafner, D.; Lillicrap, T.; Ba, J.; Norouzi, M. Dream to Control: Learning Behaviors by Latent Imagination. In Proceedings of the International Conference on Learning Representations, 2020.

- Hafner, D.; Lillicrap, T.; Norouzi, M.; Ba, J. Mastering Atari with discrete world models. arXiv 2020, arXiv:2010.02193.

- Hafner, D.; Pasukonis, J.; Ba, J.; Lillicrap, T. Mastering diverse domains through world models. arXiv 2023, arXiv:2301.04104.

- Assran, M.; Bardes, A.; Fan, D.; Garrido, Q.; Howes, R.; Muckley, M.; Rizvi, A.; Roberts, C.; Sinha, K.; Zholus, A.; et al. V-JEPA 2: Self-supervised video models enable understanding, prediction and planning. arXiv 2025, arXiv:2506.09985.

- OpenAI. Sora 2 is here. 2025. Available online: https://openai.com/index/sora-2/ (accessed on 2025-06-05).

- DeepMind. Genie 3: A new frontier for world models. 2025. Available online: https://deepmind.google/discover/blog/genie-3-a-new-frontier-for-world-models/ (accessed on 2025-06-05).

- Ma, X.; Shen, Y.; Liu, P.; Zhan, J. Recent Advances, Critical Reflections, and Future Directions in Large-Scale Group Decision-Making: A Comprehensive Survey. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2025.

- Yue, J.; Huang, Z.; Chen, Z.; Wang, X.; Wan, P.; Liu, Z. Simulating the Visual World with Artificial Intelligence: A Roadmap. arXiv 2025, arXiv:2511.08585.

- Ding, J.; Zhang, Y.; Shang, Y.; Zhang, Y.; Zong, Z.; Feng, J.; Yuan, Y.; Su, H.; Li, N.; Sukiennik, N.; et al. Understanding world or predicting future? A comprehensive survey of world models. ACM Computing Surveys 2024.

- Zhu, Z.; Wang, X.; Zhao, W.; Min, C.; Deng, N.; Dou, M.; Wang, Y.; Shi, B.; Wang, K.; Zhang, C.; et al. Is Sora a world simulator? A comprehensive survey on general world models and beyond. arXiv 2024, arXiv:2405.03520.

- Lin, M.; Wang, X.; Wang, Y.; Wang, S.; Dai, F.; Ding, P.; Wang, C.; Zuo, Z.; Sang, N.; Huang, S.; et al. Exploring the evolution of physics cognition in video generation: A survey. arXiv 2025, arXiv:2503.21765.

- Liu, D.; Zhang, J.; Dinh, A.D.; Park, E.; Zhang, S.; Mian, A.; Shah, M.; Xu, C. Generative physical AI in vision: A survey. arXiv 2025, arXiv:2501.10928.

- Xie, N.; Tian, Z.; Yang, L.; Zhang, X.P.; Guo, M.; Li, J. From 2D to 3D Cognition: A Brief Survey of General World Models. arXiv 2025, arXiv:2506.20134.

- Hu, M.; Zhu, M.; Zhou, X.; Yan, Q.; Li, S.; Liu, C.; Chen, Q. Efficient text-driven motion generation via latent consistency training. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2026.

- Chen, T.; Hu, X.; Ding, Z.; Jin, C. Learning World Models for Interactive Video Generation. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Wang, S.; Tian, J.; Wang, L.; Liao, Z.; Li, J.; Dong, H.; Xia, K.; Zhou, S.; Tang, W.; Hua, G. SAMPO: Scale-wise Autoregression with Motion Prompt for Generative World Models. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Xiang, J.; Gu, Y.; Liu, Z.; Feng, Z.; Gao, Q.; Hu, Y.; Huang, B.; Liu, G.; Yang, Y.; Zhou, K.; et al. PAN: A World Model for General, Interactable, and Long-Horizon World Simulation. arXiv 2025, arXiv:2511.09057.

- Huang, S.; Chen, L.; Zhou, P.; Chen, S.; Liao, Y.; Jiang, Z.; Hu, Y.; Gao, P.; Li, H.; Yao, M.; et al. EnerVerse: Envisioning Embodied Future Space for Robotics Manipulation. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Chi, X.; Fan, C.K.; Zhang, H.; Qi, X.; Zhang, R.; Chen, A.; Chan, C.M.; Xue, W.; Liu, Q.; Zhang, S.; et al. EVA: An embodied world model for future video anticipation. arXiv 2024, arXiv:2410.15461.

- Mao, X.; Lin, S.; Li, Z.; Li, C.; Peng, W.; He, T.; Pang, J.; Chi, M.; Qiao, Y.; Zhang, K. Yume: An interactive world generation model. arXiv 2025, arXiv:2507.17744.

- Zhu, C.; Yu, R.; Feng, S.; Burchfiel, B.; Shah, P.; Gupta, A. Unified world models: Coupling video and action diffusion for pretraining on large robotic datasets. arXiv 2025, arXiv:2504.02792.

- Zhi, H.; Chen, P.; Zhou, S.; Dong, Y.; Wu, Q.; Han, L.; Tan, M. 3DFlowAction: Learning Cross-Embodiment Manipulation from 3D Flow World Model. arXiv 2025, arXiv:2506.06199.

- Chen, B.; Martí Monsó, D.; Du, Y.; Simchowitz, M.; Tedrake, R.; Sitzmann, V. Diffusion forcing: Next-token prediction meets full-sequence diffusion. Advances in Neural Information Processing Systems 2024, 37, 24081–24125.

- Zhang, K.; Tang, Z.; Hu, X.; Pan, X.; Guo, X.; Liu, Y.; Huang, J.; Yuan, L.; Zhang, Q.; Long, X.X.; et al. Epona: Autoregressive diffusion world model for autonomous driving. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 27220–27230.

- Alonso, E.; Jelley, A.; Micheli, V.; Kanervisto, A.; Storkey, A.J.; Pearce, T.; Fleuret, F. Diffusion for world modeling: Visual details matter in Atari. Advances in Neural Information Processing Systems 2024, 37, 58757–58791.

- Hassan, M.; Stapf, S.; Rahimi, A.; Rezende, P.; Haghighi, Y.; Brüggemann, D.; Katircioglu, I.; Zhang, L.; Chen, X.; Saha, S.; et al. GEM: A generalizable ego-vision multimodal world model for fine-grained ego-motion, object dynamics, and scene composition control. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 22404–22415.

- Koh, J.Y.; Lee, H.; Yang, Y.; Baldridge, J.; Anderson, P. Pathdreamer: A world model for indoor navigation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021; pp. 14738–14748.

- Tu, Y.; Luo, H.; Chen, X.; Bai, X.; Wang, F.; Zhao, H. PlayerOne: Egocentric World Simulator. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Savov, N.; Kazemi, N.; Zhang, D.; Paudel, D.P.; Wang, X.; Gool, L.V. StateSpaceDiffuser: Bringing Long Context to Diffusion World Models. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Huang, S.; Wu, J.; Zhou, Q.; Miao, S.; Long, M. Vid2World: Crafting Video Diffusion Models to Interactive World Models. arXiv 2025, arXiv:2505.14357.

- Robine, J.; Höftmann, M.; Harmeling, S. Simple, Good, Fast: Self-Supervised World Models Free of Baggage. In Proceedings of the Thirteenth International Conference on Learning Representations, 2025.

- Shang, Y.; Zhang, X.; Tang, Y.; Jin, L.; Gao, C.; Wu, W.; Li, Y. RoboScape: Physics-informed Embodied World Model. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Li, S.; Yang, C.; Fang, J.; Yi, T.; Lu, J.; Cen, J.; Xie, L.; Shen, W.; Tian, Q. WorldGrow: Generating infinite 3D world. In Proceedings of the AAAI Conference on Artificial Intelligence, 2026; Vol. 40, pp. 6433–6441.

- Zhang, Q.; Zhai, S.; Martin, M.A.B.; Miao, K.; Toshev, A.; Susskind, J.; Gu, J. World-consistent video diffusion with explicit 3D modeling. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 21685–21695.

- Li, X.; Wang, T.; Gu, Z.; Zhang, S.; Guo, C.; Cao, L. FlashWorld: High-quality 3D Scene Generation within Seconds. In Proceedings of the Fourteenth International Conference on Learning Representations, 2026.

- Wu, H.; Wu, D.; He, T.; Guo, J.; Ye, Y.; Duan, Y.; Bian, J. Geometry Forcing: Marrying Video Diffusion and 3D Representation for Consistent World Modeling. In Proceedings of the Fourteenth International Conference on Learning Representations, 2026.

- Lu, Y.; Ren, X.; Yang, J.; Shen, T.; Wu, Z.; Gao, J.; Wang, Y.; Chen, S.; Chen, M.; Fidler, S.; et al. InfiniCube: Unbounded and controllable dynamic 3D driving scene generation with world-guided video models. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 27272–27283.

- Yang, Y.; Liu, J.; Zhang, Z.; Zhou, S.; Tan, R.; Yang, J.; Du, Y.; Gan, C. MindJourney: Test-Time Scaling with World Models for Spatial Reasoning. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Liang, D.; Zhang, D.; Zhou, X.; Tu, S.; Feng, T.; Li, X.; Zhang, Y.; Du, M.; Tan, X.; Bai, X. Seeing the Future, Perceiving the Future: A unified driving world model for future generation and perception. arXiv 2025, arXiv:2503.13587.

- Lee, J.H.; Lin, B.J.; Sun, W.F.; Lee, C.Y. EDELINE: Enhancing Memory in Diffusion-based World Models via Linear-Time Sequence Modeling. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Guo, Y.; Shi, L.X.; Chen, J.; Finn, C. Ctrl-World: A Controllable Generative World Model for Robot Manipulation. In Proceedings of the International Conference on Learning Representations, 2026.

- Wu, T.; Yang, S.; Po, R.; Xu, Y.; Liu, Z.; Lin, D.; Wetzstein, G. Video World Models with Long-term Spatial Memory. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Xiao, Z.; Lan, Y.; Zhou, Y.; Ouyang, W.; Yang, S.; Zeng, Y.; Pan, X. WorldMem: Long-term Consistent World Simulation with Memory. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Wang, G.; Xie, Y.; Jiang, Y.; Mandlekar, A.; Xiao, C.; Zhu, Y.; Fan, L.; Anandkumar, A. Voyager: An Open-Ended Embodied Agent with Large Language Models. Transactions on Machine Learning Research 2024.

- Po, R.; Nitzan, Y.; Zhang, R.; Chen, B.; Dao, T.; Shechtman, E.; Wetzstein, G.; Huang, X. Long-context state-space video world models. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 8733–8744.

- Traub, M.; Otte, S.; Menge, T.; Karlbauer, M.; Thuemmel, J.; Butz, M.V. Learning What and Where: Disentangling Location and Identity Tracking Without Supervision. In Proceedings of the Eleventh International Conference on Learning Representations, 2023.

- Elsayed, G.; Mahendran, A.; Van Steenkiste, S.; Greff, K.; Mozer, M.C.; Kipf, T. SAVi++: Towards end-to-end object-centric learning from real-world videos. Advances in Neural Information Processing Systems 2022, 35, 28940–28954.

- Zhang, Y.; Guo, X.; Xu, H.; Long, M. Consistent World Models via Foresight Diffusion. arXiv 2025, arXiv:2505.16474.

- Chen, J.; Zhu, H.; He, X.; Wang, Y.; Zhou, J.; Chang, W.; Zhou, Y.; Li, Z.; Fu, Z.; Pang, J.; et al. DeepVerse: 4D Autoregressive Video Generation as a World Model. arXiv 2025, arXiv:2506.01103.

- Russell, L.; Hu, A.; Bertoni, L.; Fedoseev, G.; Shotton, J.; Arani, E.; Corrado, G. GAIA-2: A controllable multi-view generative world model for autonomous driving. arXiv 2025, arXiv:2503.20523.

- Hu, W.; Wen, X.; Li, X.; Wang, G. DSG-World: Learning a 3D Gaussian World Model from Dual State Videos. arXiv 2025, arXiv:2506.05217.

- Huang, T.; Zheng, W.; Wang, T.; Liu, Y.; Wang, Z.; Wu, J.; Jiang, J.; Li, H.; Lau, R.; Zuo, W.; et al. Voyager: Long-range and world-consistent video diffusion for explorable 3D scene generation. ACM Transactions on Graphics (TOG) 2025, 44, 1–15.

- Zhou, S.; Du, Y.; Yang, Y.; Han, L.; Chen, P.; Yeung, D.Y.; Gan, C. Learning 3D Persistent Embodied World Models. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Wang, Z.; Wang, K.; Zhao, L.; Stone, P.; Bian, J. Dyn-O: Building Structured World Models with Object-Centric Representations. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Ferraro, S.; Mazzaglia, P.; Verbelen, T.; Dhoedt, B. FOCUS: Object-centric world models for robotic manipulation. Frontiers in Neurorobotics 2025, 19, 1585386.

- Barcellona, L.; Zadaianchuk, A.; Allegro, D.; Papa, S.; Ghidoni, S.; Gavves, S. Dream to Manipulate: Compositional World Models Empowering Robot Imitation Learning with Imagination. In Proceedings of the Greeks in AI Symposium, 2025.

- Oquab, M.; Darcet, T.; Moutakanni, T.; Vo, H.; Szafraniec, M.; Khalidov, V.; Fernandez, P.; Haziza, D.; Massa, F.; El-Nouby, A.; et al. DINOv2: Learning Robust Visual Features without Supervision. Transactions on Machine Learning Research 2024.

- Huang, Y.; Zhang, J.; Zou, S.; Liu, X.; Hu, R.; Xu, K. LaDi-WM: A Latent Diffusion-Based World Model for Predictive Manipulation. In Proceedings of the 9th Conference on Robot Learning; Lim, J., Song, S., Park, H.W., Eds.; Proceedings of Machine Learning Research, Vol. 305; PMLR, 27–30 Sep 2025; pp. 1726–1743.

- Guo, J.; Ma, X.; Wang, Y.; Yang, M.; Liu, H.; Li, Q. FlowDreamer: An RGB-D world model with flow-based motion representations for robot manipulation. IEEE Robotics and Automation Letters 2026, 11, 2466–2473.

- Bar, A.; Zhou, G.; Tran, D.; Darrell, T.; LeCun, Y. Navigation world models. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 15791–15801.

- Peebles, W.; Xie, S. Scalable diffusion models with transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023; pp. 4195–4205.

- Chen, Y.; Li, H.; Jiang, Z.; Wen, H.; Zhao, D. TeViR: Text-to-video reward with diffusion models for efficient reinforcement learning. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2025.

- Rigter, M.; Gupta, T.; Hilmkil, A.; Ma, C. AVID: Adapting Video Diffusion Models to World Models. In Proceedings of the Reinforcement Learning Conference, 2024.

- Wu, J.; Yin, S.; Feng, N.; He, X.; Li, D.; Hao, J.; Long, M. iVideoGPT: Interactive VideoGPTs are scalable world models. Advances in Neural Information Processing Systems 2024, 37, 68082–68119.

- He, H.; Zhang, Y.; Lin, L.; Xu, Z.; Pan, L. Pre-trained video generative models as world simulators. In Proceedings of the AAAI Conference on Artificial Intelligence, 2026; Vol. 40, pp. 4645–4653.

- Zhao, B.; Tang, R.; Jia, M.; Wang, Z.; Man, F.; Zhang, X.; Shang, Y.; Zhang, W.; Wu, W.; Gao, C.; et al. AirScape: An Aerial Generative World Model with Motion Controllability. In Proceedings of the 33rd ACM International Conference on Multimedia, 2025; pp. 12519–12528.

- Unitree Robotics. UnifoLM-WMA-0: A World-Model-Action (WMA) Framework under UnifoLM Family. Open-source world-model–action architecture spanning multiple types of robotic embodiments. 2025. Available online: https://github.com/unitreerobotics/unifolm-world-model-action.

- Hayashi, K.; Koyama, M.; Guerreiro, J.J.A. Inter-environmental world modeling for continuous and compositional dynamics. arXiv 2025, arXiv:2503.09911.

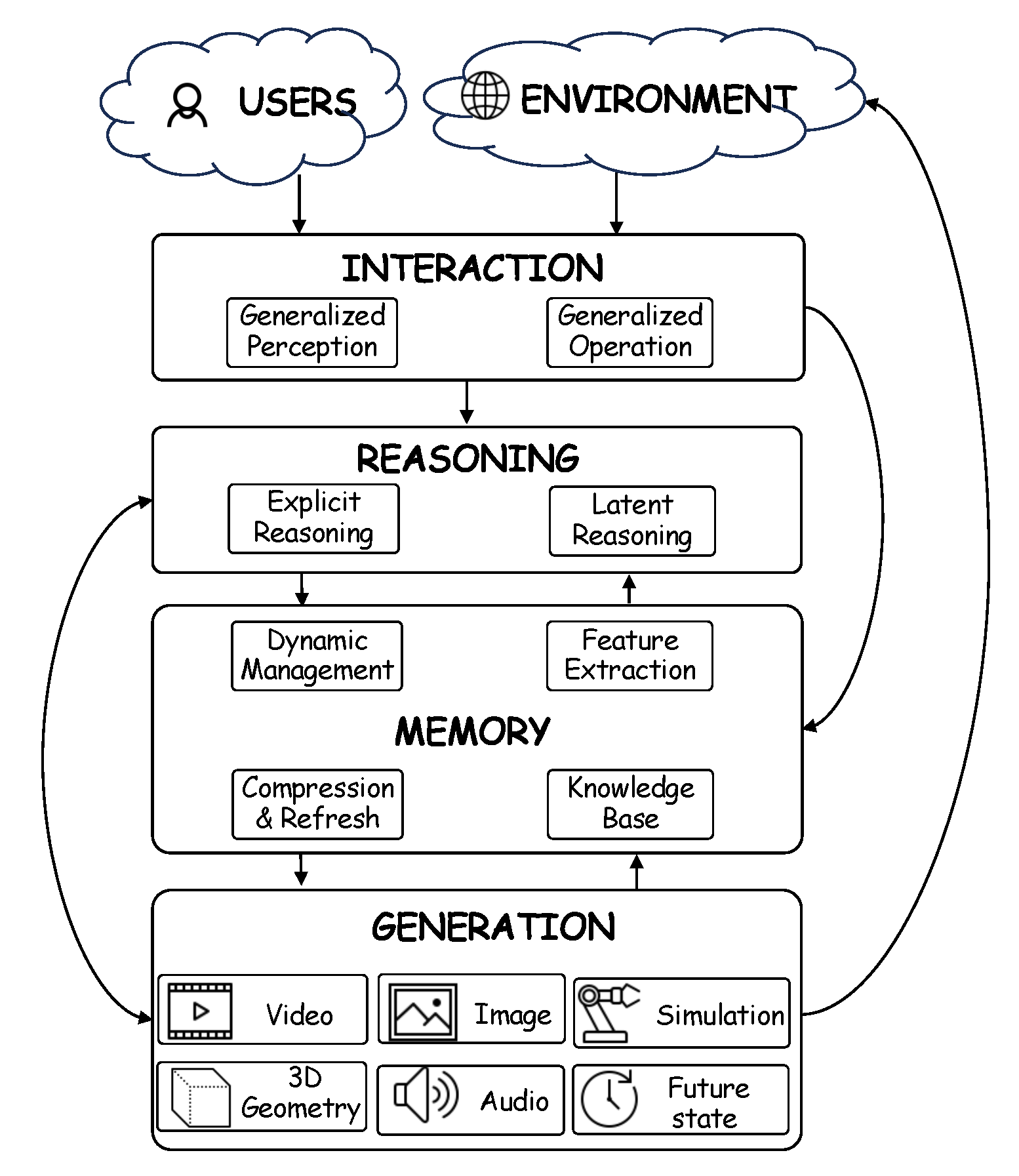

- Durante, Z.; Gong, R.; Sarkar, B.; Wake, N.; Taori, R.; Tang, P.; Lakshmikanth, S.; Schulman, K.; Milstein, A.; Vo, H.; et al. An interactive agent foundation model. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 3652–3662.

- Zhen, H.; Sun, Q.; Zhang, H.; Li, J.; Zhou, S.; Du, Y.; Gan, C. TesserAct: learning 4D embodied world models. arXiv 2025, arXiv:2504.20995.

- Duan, Y.; Zou, Z.; Gu, T.; Jia, W.; Zhao, Z.; Xu, L.; Liu, X.; Lin, Y.; Jiang, H.; Chen, K.; et al. LatticeWorld: A Multimodal Large Language Model-Empowered Framework for Interactive Complex World Generation. arXiv 2025, arXiv:2509.05263.

- Guo, J.; Ye, Y.; He, T.; Wu, H.; Jiang, Y.; Pearce, T.; Bian, J. MineWorld: a real-time and open-source interactive world model on Minecraft. arXiv 2025, arXiv:2504.08388.

- Li, J.; Tang, J.; Xu, Z.; Wu, L.; Zhou, Y.; Shao, S.; Yu, T.; Cao, Z.; Lu, Q. Hunyuan-GameCraft: High-dynamic Interactive Game Video Generation with Hybrid History Condition. arXiv 2025, arXiv:2506.17201.

- Dynamics Lab. Mirage 2: Generative World Engine. Browser-based system to generate explorable 3D worlds from images/text. 2025. Available online: https://www.mirage2.org/.

- World Labs. World Labs: spatial intelligence for large world models. 2025. Available online: https://www.worldlabs.ai/ (accessed on 2025-06-05).

- Yang, Z.; Ge, W.; Li, Y.; Chen, J.; Li, H.; An, M.; Kang, F.; Xue, H.; Xu, B.; Yin, Y.; et al. Matrix-3D: Omnidirectional explorable 3D world generation. arXiv 2025, arXiv:2508.08086.

- Jang, J.; Ye, S.; Lin, Z.; Xiang, J.; Bjorck, J.; Fang, Y.; Hu, F.; Huang, S.; Kundalia, K.; Lin, Y.C.; et al. DreamGen: Unlocking Generalization in Robot Learning through Video World Models. In Proceedings of the 9th Annual Conference on Robot Learning, 2025.

- Kerbl, B.; Kopanas, G.; Leimkühler, T.; Drettakis, G. 3D Gaussian splatting for real-time radiance field rendering. ACM Trans. Graph. 2023, 42, 139:1–139:14.

- Zheng, R.; Wang, J.; Reed, S.; Bjorck, J.; Fang, Y.; Hu, F.; Jang, J.; Kundalia, K.; Lin, Z.; Magne, L.; et al. FLARE: Robot Learning with Implicit World Modeling. In Proceedings of the 9th Conference on Robot Learning; Lim, J., Song, S., Park, H.W., Eds.; Proceedings of Machine Learning Research, PMLR, 27–30 Sep 2025; Vol. 305, pp. 3952–3971.

- Qiu, Y.; Ziser, Y.; Korhonen, A.; Cohen, S.B.; Ponti, E.M. Bootstrapping World Models from Dynamics Models in Multimodal Foundation Models. arXiv 2025, arXiv:2506.06006.

- Kadian, A.; Truong, J.; Gokaslan, A.; Clegg, A.; Wijmans, E.; Lee, S.; Savva, M.; Chernova, S.; Batra, D. Sim2Real predictivity: Does evaluation in simulation predict real-world performance? IEEE Robotics and Automation Letters 2020, 5, 6670–6677.

- Li, X.; Song, R.; Xie, Q.; Wu, Y.; Zeng, N.; Ai, Y. SimWorld: A unified benchmark for simulator-conditioned scene generation via world model. In Proceedings of the 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS); IEEE, 2025; pp. 927–934.

- Agarwal, N.; Ali, A.; Bala, M.; Balaji, Y.; Barker, E.; Cai, T.; Chattopadhyay, P.; Chen, Y.; Cui, Y.; Ding, Y.; et al. Cosmos world foundation model platform for physical AI. arXiv 2025, arXiv:2501.03575.

- Mo, S.; Leng, Z.; Liu, L.; Wang, W.; He, H.; Zhou, B. Dreamland: Controllable World Creation with Simulator and Generative Models. arXiv 2025, arXiv:2506.08006.

- Feng, Y.; Tan, H.; Mao, X.; Liu, G.; Huang, S.; Xiang, C.; Su, H.; Zhu, J. Vidar: Embodied video diffusion model for generalist bimanual manipulation. arXiv 2025, arXiv:2507.12898.

- Wang, Y.; Yu, R.; Wan, S.; Gan, L.; Zhan, D.C. Founder: Grounding foundation models in world models for open-ended embodied decision making. In Proceedings of the Forty-second International Conference on Machine Learning, 2025.

- Zhou, G.; Pan, H.; LeCun, Y.; Pinto, L. DINO-WM: World Models on Pre-trained Visual Features enable Zero-shot Planning. In Proceedings of the Forty-second International Conference on Machine Learning, 2025.

- Wang, X.; Zhu, Z.; Huang, G.; Wang, B.; Chen, X.; Lu, J. WorldDreamer: Towards general world models for video generation via predicting masked tokens. arXiv 2024, arXiv:2401.09985.

- Schiewer, R.; Subramoney, A.; Wiskott, L. Exploring the limits of hierarchical world models in reinforcement learning. Scientific Reports 2024, 14, 26856.

- Hao, C.; Lu, W.; Xu, Y.; Chen, Y. Neural Motion Simulator: Pushing the Limit of World Models in Reinforcement Learning. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 27608–27617.

- Song, Y.; Sohl-Dickstein, J.; Kingma, D.P.; Kumar, A.; Ermon, S.; Poole, B. Score-based generative modeling through stochastic differential equations. arXiv 2020, arXiv:2011.13456.

- Mazzaglia, P.; Verbelen, T.; Dhoedt, B.; Courville, A.; Rajeswar, S. GenRL: Multimodal-foundation world models for generalization in embodied agents. Advances in Neural Information Processing Systems 2024, 37, 27529–27555.

- Fang, F.; Liang, W.; Wu, Y.; Xu, Q.; Lim, J.H. Improving generalization of reinforcement learning using a bilinear policy network. In Proceedings of the 2022 IEEE International Conference on Image Processing (ICIP); IEEE, 2022; pp. 991–995.

- Fang, Q.; Du, W.; Wang, H.; Zhang, J. Towards Unraveling and Improving Generalization in World Models. arXiv 2024, arXiv:2501.00195.

- Gao, S.; Zhou, S.; Du, Y.; Zhang, J.; Gan, C. AdaWorld: Learning Adaptable World Models with Latent Actions. In Proceedings of the Forty-second International Conference on Machine Learning, 2025.

- Prasanna, S.; Farid, K.; Rajan, R.; Biedenkapp, A. Dreaming of Many Worlds: Learning Contextual World Models aids Zero-Shot Generalization. In Proceedings of the Seventeenth European Workshop on Reinforcement Learning, 2024.

- Baldassarre, F.; Szafraniec, M.; Terver, B.; Khalidov, V.; Massa, F.; LeCun, Y.; Labatut, P.; Seitzer, M.; Bojanowski, P. Back to the features: DINO as a foundation for video world models. arXiv 2025, arXiv:2507.19468.

- Ali, M.Q.; Sridhar, A.; Matiana, S.; Wong, A.; Al-Sharman, M. Humanoid World Models: Open World Foundation Models for Humanoid Robotics. arXiv 2025, arXiv:2506.01182.

- Chi, X.; Ge, K.; Liu, J.; Zhou, S.; Jia, P.; He, Z.; Liu, Y.; Li, T.; Han, L.; Han, S.; et al. MinD: Unified Visual Imagination and Control via Hierarchical World Models. arXiv 2025, arXiv:2506.18897.

- Chun, J.; Jeong, Y.; Kim, T. Sparse Imagination for Efficient Visual World Model Planning. In Proceedings of the Fourteenth International Conference on Learning Representations, 2026.

- Cohen, L.; Wang, K.; Kang, B.; Gadot, U.; Mannor, S. Uncovering Untapped Potential in Sample-Efficient World Model Agents. arXiv 2025, arXiv:2502.11537.

- Burchi, M.; Timofte, R. Accurate and Efficient World Modeling with Masked Latent Transformers. In Proceedings of the 42nd International Conference on Machine Learning; Singh, A., Fazel, M., Hsu, D., Lacoste-Julien, S., Berkenkamp, F., Maharaj, T., Wagstaff, K., Zhu, J., Eds.; Proceedings of Machine Learning Research, PMLR, 13–19 Jul 2025; Vol. 267, pp. 5894–5912.

- Zhang, H.; Yan, X.; Xue, Y.; Guo, Z.; Cui, S.; Li, Z.; Liu, B. D2-World: An Efficient World Model through Decoupled Dynamic Flow. arXiv 2024, arXiv:2411.17027.

- Pu, Y.; Niu, Y.; Tang, J.; Xiong, J.; Hu, S.; Li, H. One Model for All Tasks: Leveraging Efficient World Models in Multi-Task Planning. arXiv 2025, arXiv:2509.07945.

- Li, S.; Hao, Q.; Shang, Y.; Li, Y. KeyWorld: Key Frame Reasoning Enables Effective and Efficient World Models. arXiv 2025, arXiv:2509.21027.

- Yamada, J.; Rigter, M.; Collins, J.; Posner, I. TWIST: Teacher-student world model distillation for efficient sim-to-real transfer. In Proceedings of the 2024 IEEE International Conference on Robotics and Automation (ICRA); IEEE, 2024; pp. 9190–9196.

- Micheli, V.; Alonso, E.; Fleuret, F. Transformers are Sample-Efficient World Models. In Proceedings of the Eleventh International Conference on Learning Representations, 2023.

- Micheli, V.; Alonso, E.; Fleuret, F. Efficient World Models with Context-Aware Tokenization. In Proceedings of the International Conference on Machine Learning; PMLR, 2024; pp. 35623–35638.

- Song, Q.; Wang, X.; Zhou, D.; Lin, J.; Chen, C.; Ma, Y.; Li, X. Hero: Hierarchical extrapolation and refresh for efficient world models. arXiv 2025, arXiv:2508.17588.

- Jin, B.; Li, W.; Yang, B.; Zhu, Z.; Jiang, J.; Gao, H.a.; Sun, H.; Zhan, K.; Hu, H.; Zhang, X.; et al. PosePilot: Steering camera pose for generative world models with self-supervised depth. In Proceedings of the 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS); IEEE, 2025; pp. 8051–8058.

- Jeong, Y.; Chun, J.; Cha, S.; Kim, T. Object-Centric World Model for Language-Guided Manipulation. In Proceedings of the ICLR 2025 Workshop on World Models: Understanding, Modelling and Scaling, 2025.

- Akbulut, T.; Merlin, M.; Parr, S.; Quartey, B.; Thompson, S. Sample Efficient Robot Learning with Structured World Models. arXiv 2022, arXiv:2210.12278.

- Van Den Oord, A.; Vinyals, O.; et al. Neural discrete representation learning. Advances in Neural Information Processing Systems 2017, 30.

- Chen, R.; Ko, Y.; Zhang, Z.; Cho, C.; Chung, S.; Giuffré, M.; Shung, D.L.; Stadie, B.C. LAMP: Extracting Locally Linear Decision Surfaces from LLM World Models. arXiv 2025, arXiv:2505.11772.

- Hu, W.; Wang, H.; Wang, J. Joint Optimization Time-Slotted Computing Offloading and V2X Resource Allocation by Reinforcement Learning. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2026.

- Zeng, B.; Zhu, K.; Hua, D.; Li, B.; Tong, C.; Wang, Y.; Huang, X.; Dai, Y.; Zhang, Z.; Yang, Y.; et al. Research on World Models Is Not Merely Injecting World Knowledge into Specific Tasks. arXiv 2026, arXiv:2602.01630.

- Xiang, J.; Liu, G.; Gu, Y.; Gao, Q.; Ning, Y.; Zha, Y.; Feng, Z.; Tao, T.; Hao, S.; Shi, Y.; et al. Pandora: Towards general world model with natural language actions and video states. arXiv 2024, arXiv:2406.09455.

- Ge, Z.; Huang, H.; Zhou, M.; Li, J.; Wang, G.; Tang, S.; Zhuang, Y. WorldGPT: Empowering LLM as multimodal world model. In Proceedings of the 32nd ACM International Conference on Multimedia, 2024; pp. 7346–7355.

- Cherian, A.; Corcodel, R.; Jain, S.; Romeres, D. LLMPhy: Complex physical reasoning using large language models and world models. arXiv 2024, arXiv:2411.08027.

- Yang, Z.; Guo, X.; Ding, C.; Wang, C.; Wu, W. Physical informed driving world model. arXiv 2024, arXiv:2412.08410.

- Jiang, H.; Hsu, H.Y.; Zhang, K.; Yu, H.N.; Wang, S.; Li, Y. PhysTwin: Physics-informed reconstruction and simulation of deformable objects from videos. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 7219–7230.

- Li, J.; Wan, H.; Lin, N.; Zhan, Y.L.; Chengze, R.; Wang, H.; Zhang, Y.; Liu, H.; Wang, Z.; Yu, F.; et al. SlotPi: Physics-informed Object-centric Reasoning Models. In Proceedings of the 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining V.2, 2025; pp. 1376–1387.

- Petri, F.; Asprino, L.; Gangemi, A. Learning Local Causal World Models with State Space Models and Attention. arXiv 2025, arXiv:2505.02074.

- Yan, Z.; Dong, W.; Shao, Y.; Lu, Y.; Liu, H.; Liu, J.; Wang, H.; Wang, Z.; Wang, Y.; Remondino, F.; et al. RenderWorld: World model with self-supervised 3D label. In Proceedings of the 2025 IEEE International Conference on Robotics and Automation (ICRA); IEEE, 2025; pp. 6063–6070.

- Zhou, X.; Liang, D.; Tu, S.; Chen, X.; Ding, Y.; Zhang, D.; Tan, F.; Zhao, H.; Bai, X. HERMES: A unified self-driving world model for simultaneous 3D scene understanding and generation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 27817–27827.

- Wu, J.; Ma, H.; Deng, C.; Long, M. Pre-training contextualized world models with in-the-wild videos for reinforcement learning. Advances in Neural Information Processing Systems 2023, 36, 39719–39743.

- Wang, Q.; Zhang, Z.; Xie, B.; Jin, X.; Wang, Y.; Wang, S.; Zheng, L.; Yang, X.; Zeng, W. Disentangled world models: Learning to transfer semantic knowledge from distracting videos for reinforcement learning. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 2599–2608.

- Zhang, W.; Jelley, A.; McInroe, T.; Storkey, A. Objects matter: object-centric world models improve reinforcement learning in visually complex environments. arXiv 2025, arXiv:2501.16443.

- Wang, Y.; Wan, S.; Gan, L.; Feng, S.; Zhan, D.C. AD3: implicit action is the key for world models to distinguish the diverse visual distractors. In Proceedings of the 41st International Conference on Machine Learning, 2024; pp. 51546–51568.

- Wang, X.; Wu, Z.; Peng, P. LongDWM: Cross-Granularity Distillation for Building a Long-Term Driving World Model. arXiv 2025, arXiv:2506.01546.

- Jiang, J.; Janghorbani, S.; De Melo, G.; Ahn, S. SCALOR: Generative World Models with Scalable Object Representations. In Proceedings of the International Conference on Learning Representations, 2020.

- Zhu, H.; Wang, Y.; Zhou, J.; Chang, W.; Zhou, Y.; Li, Z.; Chen, J.; Shen, C.; Pang, J.; He, T. Aether: Geometric-aware unified world modeling. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 8535–8546.

- Lu, G.; Jia, B.; Li, P.; Chen, Y.; Wang, Z.; Tang, Y.; Huang, S. GWM: Towards scalable Gaussian world models for robotic manipulation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 9263–9274.

- Mao, Z.; Ruchkin, I. Towards Physically Interpretable World Models: Meaningful Weakly Supervised Representations for Visual Trajectory Prediction. arXiv 2024, arXiv:2412.12870.

- Ross, E.; Drygala, C.; Schwarz, L.; Kaiser, S.; di Mare, F.; Breiten, T.; Gottschalk, H. When do World Models Successfully Learn Dynamical Systems? arXiv 2025, arXiv:2507.04898.

- Zhou, S.; Zhou, T.; Yang, Y.; Long, G.; Ye, D.; Jiang, J.; Zhang, C. WALL-E: World Alignment by NeuroSymbolic Learning improves World Model-based LLM Agents. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Liu, X.; Tang, H. FOLIAGE: Towards Physical Intelligence World Models Via Unbounded Surface Evolution. arXiv 2025, arXiv:2506.03173.

- Huh, M.; Cheung, B.; Wang, T.; Isola, P. The platonic representation hypothesis. arXiv 2024, arXiv:2405.07987.

- Zhao, Y.; Scannell, A.; Hou, Y.; Cui, T.; Chen, L.; Büchler, D.; Solin, A.; Kannala, J.; Pajarinen, J. Generalist World Model Pre-Training for Efficient Reinforcement Learning. In Proceedings of the ICLR 2025 Workshop on World Models: Understanding, Modelling and Scaling, 2025.

- 1X Technologies. 1X World Model. 2024. Available online: https://www.1x.tech/discover/1x-world-model (accessed on 2025-11-14).

- Guo, W.; Liu, G.; Zhou, Z.; Wang, J.; Tang, Y.; Wang, M. Robust training in multiagent deep reinforcement learning against optimal adversary. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2025.

- Chen, C.; Wu, Y.F.; Yoon, J.; Ahn, S. TransDreamer: Reinforcement learning with transformer world models. arXiv 2022, arXiv:2202.09481.

- Wu, H.; Guo, M.; Li, Z.; Dou, Z.; Long, M.; He, K.; Matusik, W. GeoPT: Scaling Physics Simulation via Lifted Geometric Pre-Training. arXiv 2026, arXiv:2602.20399.

- Jin, C.; Lin, M.; Wu, F.; Wu, X.; Zhou, Y.; Wang, J. TVMTrailer: a text-video-music AIGC framework for film trailer generation. IEEE Transactions on Systems, Man, and Cybernetics: Systems 2025.

- Gu, A.; Dao, T. Mamba: Linear-time sequence modeling with selective state spaces. In Proceedings of the First Conference on Language Modeling, 2024.

- Ye, S.; Ge, Y.; Zheng, K.; Gao, S.; Yu, S.; Kurian, G.; Indupuru, S.; Tan, Y.L.; Zhu, C.; Xiang, J.; et al. World Action Models are Zero-shot Policies. arXiv 2026, arXiv:2602.15922.

- Gao, S.; Liang, W.; Zheng, K.; Malik, A.; Ye, S.; Yu, S.; Tseng, W.C.; Dong, Y.; Mo, K.; Lin, C.H.; et al. DreamDojo: A Generalist Robot World Model from Large-Scale Human Videos. arXiv 2026, arXiv:2602.06949.

- Kotar, K.; Lee, W.; Venkatesh, R.; Chen, H.; Bear, D.; Watrous, J.; Kim, S.; Aw, K.L.; Chen, L.N.; Stojanov, S.; et al. World modeling with probabilistic structure integration. arXiv 2025, arXiv:2509.09737.

- Saxena, D.; Cao, J. Generative adversarial networks (GANs): challenges, solutions, and future directions. ACM Computing Surveys (CSUR) 2021, 54, 1–42.

- Wang, M.; Jin, W.; Cao, K.; Xie, L.; Hong, Y. ContactGaussian-WM: Learning Physics-Grounded World Model from Videos. arXiv 2026, arXiv:2602.11021.

- Henderson, P.; Chang, W.D.; Shkurti, F.; Hansen, J.; Meger, D.; Dudek, G. Benchmark environments for multitask learning in continuous domains. arXiv 2017, arXiv:1708.04352.

- NVIDIA. PhysX SDK. 2025. Available online: https://developer.nvidia.com/physx-sdk (accessed on 2025-11-15).

- Makoviychuk, V.; Wawrzyniak, L.; Guo, Y.; Lu, M.; Storey, K.; Macklin, M.; Hoeller, D.; Rudin, N.; Allshire, A.; Handa, A. Isaac Gym: High Performance GPU Based Physics Simulation for Robot Learning. In Proceedings of the NeurIPS 2021 Track on Datasets and Benchmarks, 2021; arXiv:2110.13563.

- Sun, P.; Kretzschmar, H.; Dotiwalla, X.; Chouard, A.; Patnaik, V.; Tsui, P.; Guo, J.; Zhou, Y.; Chai, Y.; Caine, B.; et al. Scalability in perception for autonomous driving: Waymo open dataset. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020; pp. 2446–2454.

- Wang, Y.; Yan, H.; Park, J.H.; Hu, Y.; Shen, H. Asynchronous control of cyber–physical systems with quantized measurements and stochastic multimode attacks. IEEE Transactions on Cybernetics 2025.

- Duan, H.; Yu, H.X.; Chen, S.; Fei-Fei, L.; Wu, J. WorldScore: A unified evaluation benchmark for world generation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 27713–27724.

- Li, D.; Fang, Y.; Chen, Y.; Yang, S.; Cao, S.; Wong, J.; Luo, M.; Wang, X.; Yin, H.; Gonzalez, J.E.; et al. WorldModelBench: Judging Video Generation Models As World Models. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems Datasets and Benchmarks Track, 2025.

- Bengio, Y.; Clare, S.; Prunkl, C.; Andriushchenko, M.; Bucknall, B.; Murray, M.; Bommasani, R.; Casper, S.; Davidson, T.; Douglas, R.; et al. International AI Safety Report 2026. arXiv 2026, arXiv:2602.21012.

| Challenges | Importance | Classes | The Pixel–Physics Gap | Solutions |
|---|---|---|---|---|
| Continuity | Physical continuity is fundamental to stable world models. Maintaining continuity across time, space, and object identity is crucial for reliable long-term prediction and decision-making. | Temporal (Section 2.1.1) | Videos sample continuous processes as discrete frames and lack causality, causing autoregressive models to accumulate errors and break physical continuity. | Autoregression improvements; Optimization of schedules; Conditional constraints; Optimization-level |
| | | Spatial (Section 2.1.2) | Videos are 2D projections that lose key 3D geometry (e.g., depth and structure). | Implicit alignment; Explicit alignment; Memory mechanisms |
| | | Identity (Section 2.1.3) | Videos lack explicit object properties (e.g., mass, material, shape). | Identity perception |
| Controllability | Videos passively record events without action–outcome causality, limiting world models in controllability, causal reasoning, and goal-directed behavior. | Semantic (Section 2.2.1) | Videos lack semantic–physical alignment, hindering grounded instruction following. | Semantic alignment mechanism |
| | | Interactivity (Section 2.2.2) | Videos record past events and cannot model counterfactuals or real-time responses to interventions. | Low-level control signals; Global control |
| Generalization | Videos capture appearance rather than underlying physics, leading to overfitting. Generalization requires learning physics-grounded representations that transfer across scenes and tasks. | Data Augmentation (Section 2.3.1) | Physically diverse, well-annotated video data are scarce, limiting coverage of rare dynamics. | Automated data pipeline; Simulation-to-real engine; Foundation models |
| | | Architecture (Section 2.3.2) | Naive architectures capture visual correlations rather than physical invariances. | Advanced modeling |
| | | Behavioral & Environmental (Section 2.3.3) | Videos from different embodiments and environments exhibit large distribution shifts in physical dynamics. | Regularization and disentangling; Context adaptation |
| Lightweight | Lightweight models save resources, enable real-time interaction, and learn compact, generalizable dynamics, balancing efficiency and performance in resource-constrained settings. | Representation & Efficiency (Section 2.4) | Videos are high-dimensional and redundant, containing much task-irrelevant pixel data that hinders efficient extraction of physically meaningful representations. | Latent modeling; Sequence optimization; Parameter-efficient / transfer; Training and sample efficiency |
| Universality | Videos capture a limited slice of reality and lack unified cross-modal representations. Universal world models need shared physical abstractions that generalize. | Modeling Multi-task Architecture (Section 2.5) | Videos’ passive, single-view, and modality-limited nature prevents learning unified physical representations. | Shared representations; Multi-task learning; Knowledge generalization and transfer |
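The Temporal entry in the table states that autoregressive models accumulate errors over a rollout. The Python sketch below makes that mechanism concrete under explicitly hypothetical assumptions: a toy 1D object in free fall stands in for the dynamics a video depicts, and a "learned" model is simulated by biasing the gravity constant by 1%. The names `step`, `rollout_errors`, `DT`, `G_TRUE`, and `G_MODEL` are illustrative only and do not come from any surveyed method. The sketch compares teacher-forced one-step prediction, where every step starts from the true previous state, with an autoregressive rollout in which the model consumes its own predictions.

```python
# Toy illustration of autoregressive error accumulation (hypothetical setup):
# a 1D object in free fall (no ground contact) and a "learned" model whose
# gravity estimate is biased by 1%. Neither is taken from the surveyed works.

DT = 0.05                # frame interval in seconds (assumed)
G_TRUE = 9.81            # true gravitational acceleration, m/s^2
G_MODEL = G_TRUE * 1.01  # hypothetical learned model with a 1% bias


def step(state, g):
    """Advance (position, velocity) by one frame using semi-implicit Euler."""
    pos, vel = state
    vel = vel - g * DT
    pos = pos + vel * DT
    return (pos, vel)


def rollout_errors(x0, horizon):
    """Return absolute position errors for teacher-forced and autoregressive prediction."""
    # Ground-truth trajectory generated with the true dynamics.
    true_traj = [x0]
    for _ in range(horizon):
        true_traj.append(step(true_traj[-1], G_TRUE))

    # Teacher forcing: every prediction starts from the true previous frame.
    one_step = [abs(step(true_traj[t], G_MODEL)[0] - true_traj[t + 1][0])
                for t in range(horizon)]

    # Autoregressive rollout: the model consumes its own previous prediction,
    # so the velocity error carried in the state compounds over time.
    pred = x0
    autoreg = []
    for t in range(horizon):
        pred = step(pred, G_MODEL)
        autoreg.append(abs(pred[0] - true_traj[t + 1][0]))
    return one_step, autoreg


if __name__ == "__main__":
    one_step, autoreg = rollout_errors((10.0, 0.0), horizon=40)
    for t in (0, 9, 19, 39):
        print(f"frame {t + 1:2d}: one-step error {one_step[t]:.5f} m, "
              f"rollout error {autoreg[t]:.5f} m")
```

Under these assumptions the one-step error stays fixed at (G_MODEL - G_TRUE)·DT², about 2.5e-4 m, while the rollout error after n frames is n(n+1)/2 times that value (about 0.20 m at frame 40), because the velocity error carried in the predicted state keeps compounding. Even a small per-step bias therefore becomes a visible break in physical continuity over long horizons.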

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.