Submitted: 06 April 2026

Posted: 08 April 2026


Abstract

Keywords:

1. Introduction

1.1. ChatGPT’s Impact on Student Motivation and Autonomy

1.2. Theoretical Framework: Self-Determination Theory

1.3. Generative AI Through the Lens of SDT

2. Materials and Methods

2.1. Participants

2.2. Measures

- Accomplishment: Intrinsic Motivation Toward Accomplishment (e.g., Q2: “ChatGPT provides valuable tips to help me maintain my motivation when working toward long-term goals.”);

- Desire to Know: Intrinsic Motivation Based on the Desire to Know (e.g., Q6: “I turn to ChatGPT for advice on specific learning methods that enhance the joy of acquiring knowledge.”);

- Stimulation: Intrinsic Motivation Based on the Desire to Experience Stimulation (e.g., Q8: “With ChatGPT’s input, I incorporate activities and approaches that infuse excitement into the learning process.”);

- Rewards: Extrinsic Motivation Through Rewards and Constraints (e.g., Q10: “ChatGPT suggests creative ways to reward myself when I achieve specific milestones or goals.”);

- Value: Extrinsic Motivation Based on Personal Value (e.g., Q15: “With ChatGPT’s insights, I reframe tasks to make them more personally significant.”);

- Amotivation (e.g., Q17: “ChatGPT provides guidance on overcoming a persistent lack of motivation and regaining a sense of purpose.”).

2.3. Procedure

2.4. Data Analysis

2.5. Data Availability

3. Results

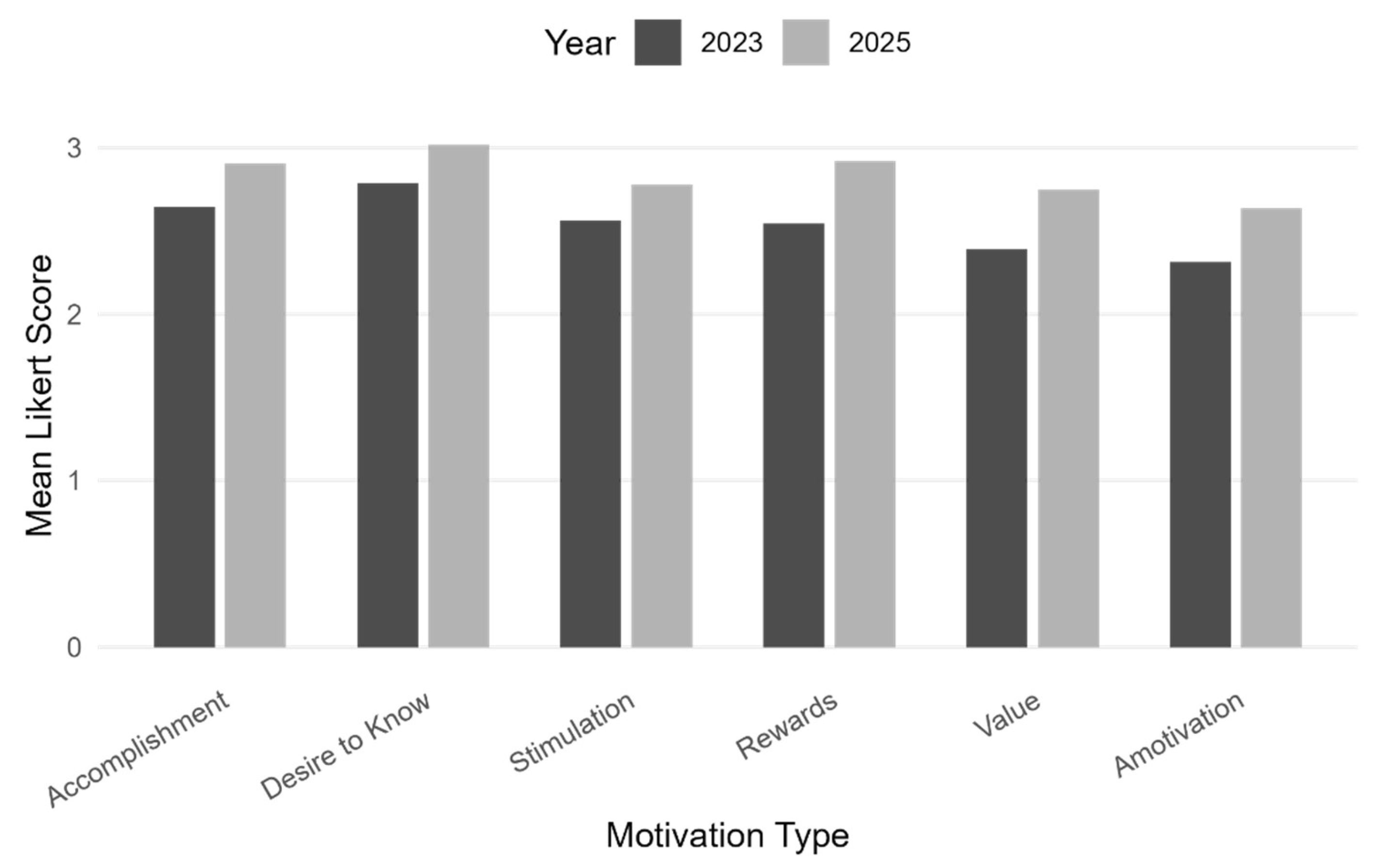

3.1. Descriptive Statistics

3.2. Inferential Analysis

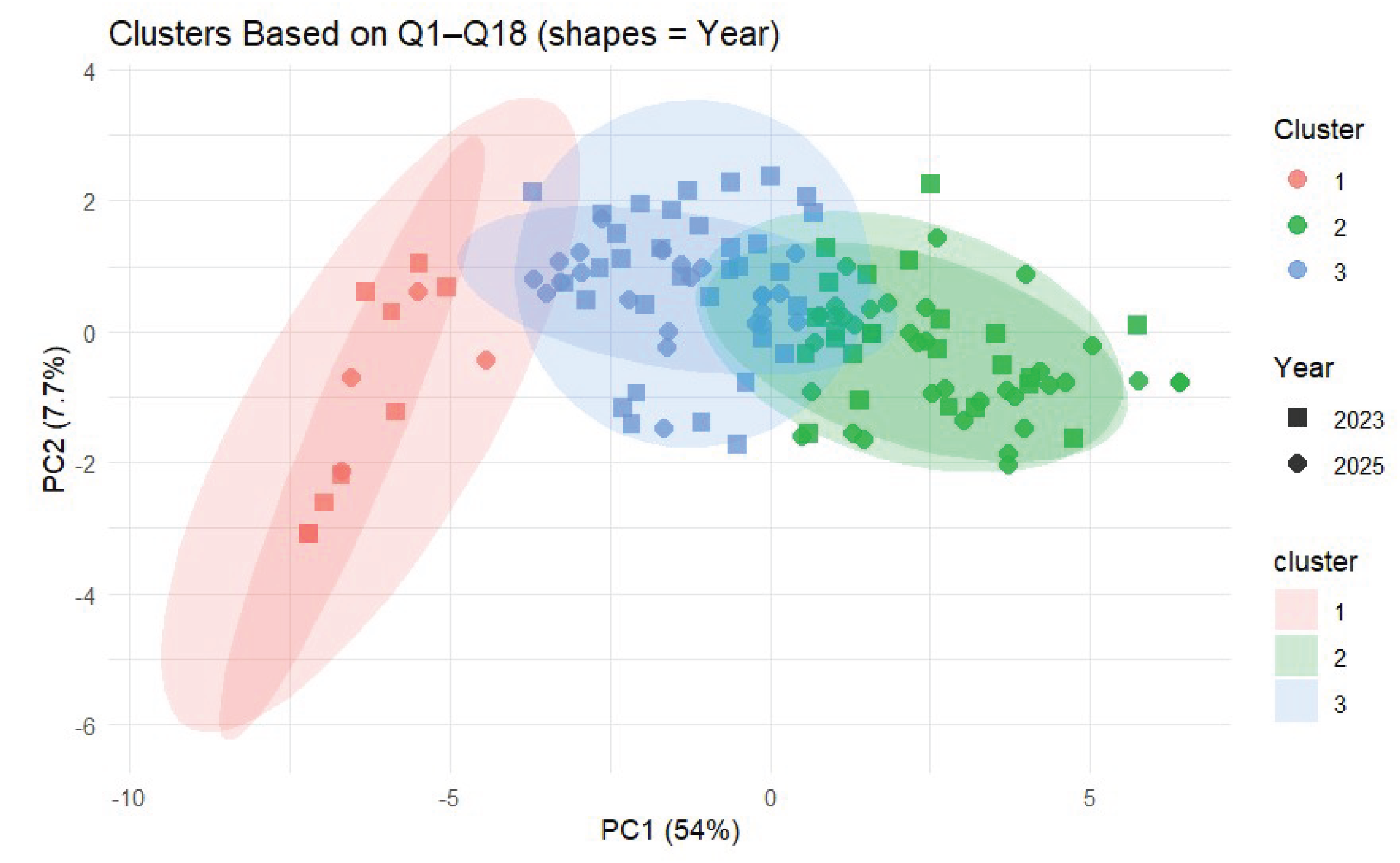

3.3. Cluster Analysis

4. Discussion

Author contributions

Conflict of interest

| Motivation type | Mean (2023) | SD (2023) | Mean (2025) | SD (2025) |
| --- | --- | --- | --- | --- |
| Accomplishment | 2.65 | 0.73 | 2.90 | 0.59 |
| Desire to Know | 2.79 | 0.61 | 3.02 | 0.58 |
| Stimulation | 2.56 | 0.75 | 2.78 | 0.70 |
| Rewards | 2.54 | 0.56 | 2.92 | 0.62 |
| Value | 2.39 | 0.85 | 2.75 | 0.81 |
| Amotivation | 2.31 | 0.82 | 2.63 | 0.81 |

| Motivation type | Mean (2023) | Mean (2025) | t-value | df | p-value | Cohen’s d | Effect size |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Accomplishment | 2.65 | 2.90 | 2.29 | 129.46 | .023 | 0.39 | Small |
| Desire to Know | 2.79 | 3.02 | 2.27 | 137.00 | .025 | 0.38 | Small |
| Stimulation | 2.56 | 2.78 | 1.81 | 136.40 | .073 | 0.31 | Small |
| Rewards | 2.54 | 2.92 | 3.75 | 138.94 | <.001 | 0.63 | Medium |
| Value | 2.39 | 2.75 | 2.59 | 137.22 | .011 | 0.44 | Small |
| Amotivation | 2.31 | 2.63 | 2.34 | 137.95 | .021 | 0.39 | Small |
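As a rough cross-check on the effect sizes above, Cohen's d can be approximated from the reported means and standard deviations alone. The sketch below assumes equal group sizes, because the per-year sample sizes are not stated in this section, so its values differ slightly from the reported ones. The function names and the 0.2 / 0.5 / 0.8 cut-offs (Cohen's conventional benchmarks) are illustrative choices and not necessarily the authors' procedure.

```python
import math

# (mean 2023, SD 2023, mean 2025, SD 2025), copied from the descriptive statistics table.
SUMMARY = {
    "Accomplishment": (2.65, 0.73, 2.90, 0.59),
    "Desire to Know": (2.79, 0.61, 3.02, 0.58),
    "Stimulation": (2.56, 0.75, 2.78, 0.70),
    "Rewards": (2.54, 0.56, 2.92, 0.62),
    "Value": (2.39, 0.85, 2.75, 0.81),
    "Amotivation": (2.31, 0.82, 2.63, 0.81),
}


def cohens_d_equal_n(mean_a, sd_a, mean_b, sd_b):
    """Cohen's d with a pooled SD, ASSUMING both groups have the same size."""
    pooled_sd = math.sqrt((sd_a ** 2 + sd_b ** 2) / 2)
    return (mean_b - mean_a) / pooled_sd


def effect_label(d):
    """Map |d| to Cohen's conventional benchmark labels."""
    size = abs(d)
    if size < 0.2:
        return "Negligible"
    if size < 0.5:
        return "Small"
    if size < 0.8:
        return "Medium"
    return "Large"


if __name__ == "__main__":
    for name, (m23, sd23, m25, sd25) in SUMMARY.items():
        d = cohens_d_equal_n(m23, sd23, m25, sd25)
        print(f"{name}: d = {d:.2f} ({effect_label(d)})")
```

Under the equal-size assumption the approximation gives about 0.64 for Rewards (reported 0.63) and about 0.38 for Accomplishment (reported 0.39), and the benchmark labels agree with the table for all six subscales.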

| Motivation type | Estimate (b) | Std. Error | df | t-value | p-value |
| --- | --- | --- | --- | --- | --- |
| Accomplishment | 0.27 | 0.10 | 87 | 2.69 | .009 |
| Desire to Know | 0.23 | 0.09 | 97 | 2.43 | .017 |
| Stimulation | 0.22 | 0.10 | 69 | 2.14 | .036 |
| Rewards | 0.35 | 0.09 | 92 | 3.80 | <.001 |
| Value | 0.34 | 0.12 | 83 | 2.75 | .007 |
| Amotivation | 0.33 | 0.12 | 74 | 2.79 | .007 |

| Cluster | Accomplishment | Desire to Know | Stimulation | Rewards | Value | Amotivation | GPA |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 1 | 1.56 | 1.93 | 1.31 | 1.96 | 1.04 | 1.09 | 3.38 |
| 2 | 3.13 | 3.29 | 3.20 | 3.17 | 3.23 | 3.09 | 3.24 |
| 3 | 2.68 | 2.68 | 2.39 | 2.43 | 2.18 | 2.11 | 3.43 |
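One way to read the cluster table is to treat each row of subscale means as a profile and ask which profile a given set of scores sits closest to. The sketch below does this with plain Euclidean distance over the six motivation subscales (GPA is left out). The clustering method used in the study is not described in this section, so the distance measure, the function name, and the example respondent are all assumptions made for illustration.

```python
import math

# Cluster means from the table above, in the order:
# Accomplishment, Desire to Know, Stimulation, Rewards, Value, Amotivation.
CLUSTER_MEANS = {
    1: (1.56, 1.93, 1.31, 1.96, 1.04, 1.09),
    2: (3.13, 3.29, 3.20, 3.17, 3.23, 3.09),
    3: (2.68, 2.68, 2.39, 2.43, 2.18, 2.11),
}


def nearest_cluster(scores):
    """Return the cluster whose mean profile is closest (Euclidean) to `scores`."""
    if len(scores) != 6:
        raise ValueError("expected six subscale scores")
    return min(CLUSTER_MEANS, key=lambda c: math.dist(scores, CLUSTER_MEANS[c]))


if __name__ == "__main__":
    # Hypothetical respondent with uniformly high subscale scores.
    respondent = (3.0, 3.2, 3.1, 3.0, 3.3, 3.0)
    print(nearest_cluster(respondent))
```

A respondent with uniformly high scores lands nearest cluster 2 and one with uniformly low scores nearest cluster 1, which matches the ordering of the three profiles in the table.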

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).