Submitted:

09 April 2026

Posted:

28 April 2026


Abstract

Keywords:

1. Introduction

- Security constitutes a qualitatively distinct challenge dimension. Harm manifests through actions rather than outputs, and the consequences of failure are irreversible. Primary attack vectors include environment prompt injection, capability escalation through tool composition, and memory poisoning, with memory poisoning being the most pernicious—attackers only need a single injection to persistently compromise system behavior across sessions by contaminating persistent storage. While sandbox isolation is a common defense approach, it is neither structurally feasible to implement perfectly nor a sufficient condition for security. Empirical evidence from AgentHarm [39] demonstrates that even aligned models can perform a wide range of harmful tasks when behavioral constraints are absent. Therefore, model alignment and harness security mechanisms are complementary rather than substitutive, and must be developed in tandem.

- Agent harnesses suffer from significant evaluation reliability issues: existing benchmark results deviate from agents’ true real-world performance. Both AgencyBench [15] and METR [40] have documented substantial score discrepancies caused solely by differences in harness implementations. The problem arises from the superposition of three distinct structural root causes: environment drift, task specification ambiguity, and harness coupling, each with its own characteristics and each requiring a separate solution. Among these, harness coupling is the most consequential for research: it is the only root cause whose solution lies entirely at the infrastructure layer, and it is also the least explicitly addressed in current evaluation frameworks.

- Protocol fragmentation is the core bottleneck limiting the large-scale deployment of agent harnesses: the absence of universal standards means that every integration between a harness and a tool, or between two harnesses, requires custom code, and maintenance costs grow quadratically with the number of systems in the ecosystem. The industry has naturally settled into a functionally layered landscape: MCP [41] dominates the “agent-to-tool” invocation standard and has achieved the broadest adoption owing to its low latency; A2A [42] specializes in cross-entity task delegation between agents and offers more robust security models and stateful task management. However, the most critical inter-layer integration remains unstandardized to date, and the overall maturity of interoperability infrastructure lags significantly behind that of model capabilities.

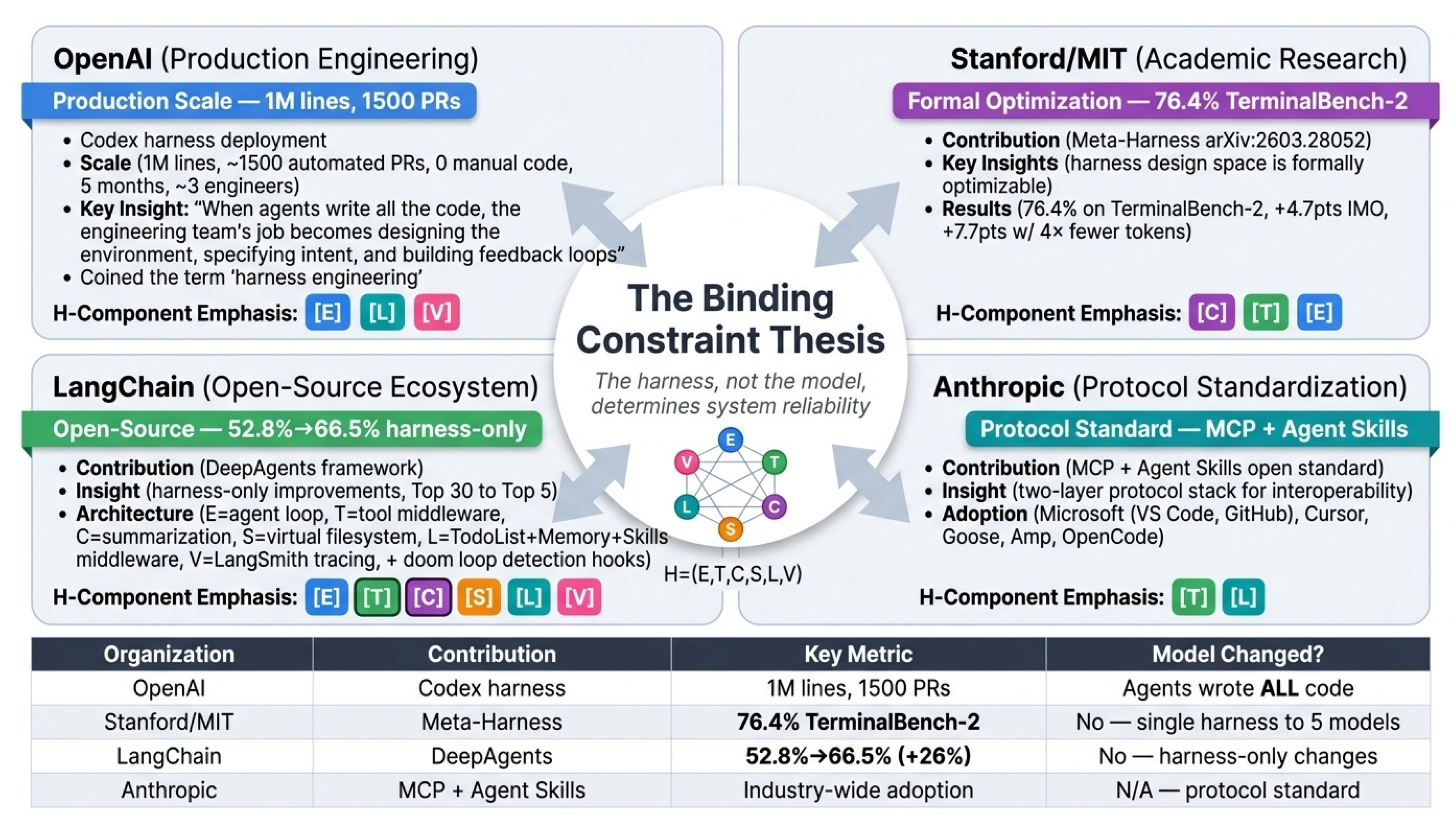

- The core challenge of runtime context management lies in highlighting critical information within limited context windows: attention dilution exists even in long-context models, where longer contexts make it increasingly difficult for the model to accurately retrieve key information. Empirical evidence from DeepAgents [43] directly validates this issue: via pure harness-level engineering optimizations (including structured context injection and failure detection), performance on TerminalBench [44] 2.0 was improved by 26% without modifying the underlying model. This demonstrates that harness-level improvements can yield gains comparable to generational model upgrades.

- The core challenge of knowledge and context engineering is to construct a stable, consistent, and governable knowledge and state management layer. When the three subsystems—persistent memory management, retrieval-augmented context organization, and skill representation and validation—operate in effective coordination, the agent can maintain a causally coherent execution view. Poor coordination, by contrast, leads to issues such as context confusion, loss of critical information, and historical state poisoning. At present, the field remains empirically driven, lacking systematic theoretical frameworks and standardized practices.

- The tool registry serves as the primary control point for agent behavior governance, covering four dimensions: design patterns, failure handling, supply-chain security, and protocol standardization. Both PALADIN [45] and SHIELDA [46] demonstrate that structured anomaly classification at the harness level contributes more to performance than model scale alone. ToolHijacker [47] validates the feasibility of registry-level attacks, while PRISM [48] proposes a runtime security scheme based on cross-lifecycle hooks. At the protocol level, MCP and Agent Skills [49] together form a two-layer protocol stack for tool definition and workflow management. This survey also reveals a counter-intuitive finding: removing tools often improves performance more than adding tools. The governance design of the tool registry, not raw model capability, represents the dominant bottleneck for current system performance.

- Memory management is likewise a harness infrastructure issue rather than a model capability issue: the harness determines what information is stored, how it is retrieved, and how it is persisted. This section establishes three core findings. First, across six memory architectures, there is an unavoidable three-way trade-off among recency bias, scalability, and retrieval latency; these cannot all be optimized at once. Second, in many systems (e.g., Reflexion [5] and Voyager [3]), memory content is generated and written by the model itself. This means that the harness must not only manage storage mechanisms but also govern the quality of what is written. Third, memory poisoning can persist across sessions without requiring continued attacker involvement, yet, with the exception of AgentSys [50], current systems lack security controls at write time. To date, memory systems also lack a portable standard interface, which prevents implementations from being transferred across harnesses and makes retrieval quality difficult to evaluate independently.

- At the single-agent level, the core challenge is that the planning loop is fundamentally a harness governance object rather than an autonomous model process. As planning complexity increases from linear to tree-structured, governance responsibility shifts entirely to the harness, which must assume four non-substitutable infrastructure responsibilities: controlling the planning loop, persisting cross-inference planning state, enforcing hard compute and token budgets, and designing the Agent-Computer Interface (ACI). Empirical evidence from multiple sources confirms that harness-level optimizations, particularly ACI design, deliver greater performance gains than model upgrades [7,17,51].

- At the multi-agent level, the governance model undergoes a transformation rather than an extension: the harness now governs relationships between execution loops, introducing problems with no single-agent analogs. MASEval [52] confirms that framework choice matters as much as model choice. Four core challenges arise: agent identity management, inter-agent message validation, shared state consistency, and the topology tradeoff between auditability and adaptability. Optimized single-agent systems match homogeneous multi-agent collectives, so coordination overhead requires economic justification; moreover, no production harness defends against cross-agent prompt injection.

- We are the first to conduct a systematic survey on the LLM agent harness, based on a comprehensive review of over one hundred papers, technical reports, and industry blogs. Importantly, we provide a formal definition and conceptualization of the agent harness as a six-tuple (E, T, C, S, L, V), establishing the necessary and sufficient conditions for runtime execution environments.

- We fully uncover the technical evolutionary lineage and paradigm shifts of the agent harness from a new perspective. We trace its formation from three originally independent lines of technology: software testing harnesses from the 1990s, standardized reinforcement learning environments, and modern LLM agent frameworks. We demonstrate that the agent harness represents a deeply rooted, standalone architectural layer rather than a trivial extension of LLMs, providing a unified historical perspective for understanding key design tradeoffs in contemporary harness systems.

- We propose a novel, empirically grounded two-dimensional taxonomy and harness completeness matrix. Addressing the fundamental limitation of existing methods that rank systems only along a one-dimensional scale of functional complexity, we conduct a structured expert survey over 23 representative systems and build a novel classification framework based on stack positioning as the primary dimension and domain scope as the secondary dimension. This corrects the misconception that “more complex equals better” and provides a principled basis for engineering system selection.

- We systematically organize and deeply analyze nine core technical challenges facing production-grade agent harnesses. We perform a structured investigation of sandbox security, evaluation reliability, protocol fragmentation, context management, tool governance, and other critical issues. Moving beyond the common practice of studying challenges in isolation, we identify cross-component coupling as the fundamental root cause of systemic unreliability in agent systems.

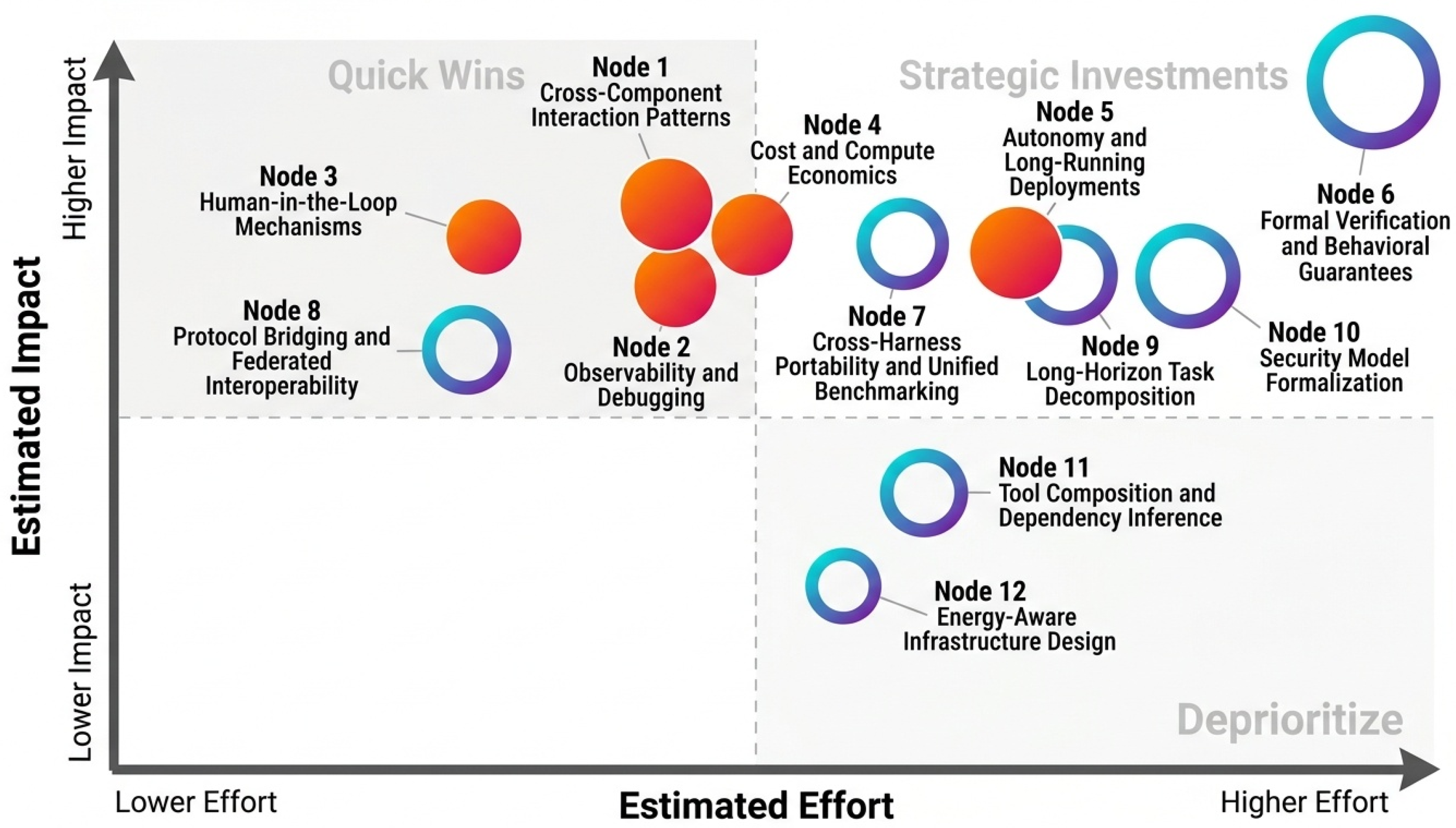

- We identify critical research gaps and outline future research directions. Based on the analysis of the nine core challenges, we propose a two-tier research agenda consisting of near-term tractable directions and long-term foundational research directions. We explicitly emphasize that model capability is necessary but insufficient for reliable deployment, and that the agent harness represents the primary bottleneck limiting the large-scale real-world application of agent systems.

2. Related Work

2.1. LLM Agent

2.2. Evaluation System

2.2.1. What This Survey Does Differently

3. Definitions and Conceptual Framework

3.1. The Definition Problem

3.2. Formal Definition

- E — Execution loop: Manages the observe-think-act cycle, including turn sequencing, termination conditions, and error recovery

- T — Tool registry: Maintains a typed, validated catalog of available tool interfaces; routes and monitors tool invocations

- C — Context manager: Governs what information enters the model’s context window across turns, including compaction, retrieval, and prioritization strategies

- S — State store: Persists task-relevant state across turns and, optionally, across sessions; provides recovery from partial failures

- L — Lifecycle hooks: Pre- and post-invocation interception points for authentication, logging, policy enforcement, and instrumentation

- V — Evaluation interface: Instruments the execution to capture action trajectories, intermediate states, and success signals for offline analysis, through standardized hooks that distinguish the V-component from general logging
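The six components above can be pictured as fields of a single runtime object. The following is a minimal sketch under stated assumptions, not an implementation taken from any surveyed system: the `Harness` class, its field names, the `policy` callable standing in for the model, and the `"tool arg"` / `"final:"` action format are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class Harness:
    """Illustrative container for the six components (E, T, C, S, L, V)."""
    tools: Dict[str, Callable[[str], str]]                                  # T: tool registry
    context: List[str] = field(default_factory=list)                        # C: context (a plain list here)
    state: Dict[str, Any] = field(default_factory=dict)                     # S: state store
    hooks: List[Callable[[str, str], None]] = field(default_factory=list)   # L: lifecycle hooks
    trace: List[Dict[str, str]] = field(default_factory=list)               # V: evaluation trace
    max_turns: int = 5                                                      # E: termination condition

    def run(self, policy: Callable[[List[str]], str]) -> str:
        """E: observe-think-act loop; `policy` is a stand-in for the model."""
        for _ in range(self.max_turns):
            action = policy(self.context)                 # think
            if action.startswith("final:"):
                return action[len("final:"):]
            name, _sep, arg = action.partition(" ")
            for hook in self.hooks:                       # L: pre-invocation interception
                hook(name, arg)
            result = self.tools[name](arg)                # T: routed tool invocation
            self.context.append(result)                   # C: observation enters the context
            self.state["last_result"] = result            # S: persisted across turns
            self.trace.append({"tool": name, "arg": arg, "result": result})  # V: trajectory record
        return "terminated: turn limit reached"
```

A toy policy that calls one tool and then returns its result exercises every field once; the separate `trace` list is what distinguishes the V role from the lifecycle hooks in this sketch.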

3.2.1. The V-component Design Space: From Logging to Evaluation Pipelines

3.2.2. Framework Status and Validation Pathway

3.3. The Orthogonality Assumption and Its Limits

3.4. Situating the Harness in the Agent Stack

| Concept | Primary Role | Scope | Runtime? | Key Question |
|---|---|---|---|---|
| Framework | Construction primitives | Dev-time | No | How is the agent built? |
| Harness | Runtime governance | Runtime | Yes | How does the agent run reliably? |
| Platform | Organizational management | Both | Both | How are agents managed at scale? |
| Agent OS | Formal kernel services | Runtime | Yes | What are the minimal governance abstractions? |
| Eval harness | Assessment infrastructure | Test-time | Partial | How is agent behavior measured? |

| Task Type | E (Loop) | T (Tools) | C (Context) | S (State) | L (Lifecycle) | V (Eval) | Example Systems |

| Single-turn Q&A | Minimal | Optional | Minimal | × | × | Optional | ChatGPT, Claude.ai |

| Multi-step web research | Moderate | Required (web, search) | High | ∼ | ∼ | Optional | WebArena agents |

| Software engineering | High | Required (code exec, file) | High | Required | Required | Required | SWE-agent, OpenHands |

| Long-running personal assistant | High | Required (broad) | High | Required | Required | Optional | OpenClaw, MemGPT |

| Multi-agent collaboration | High | Required | High | Required | Required | Required | MetaGPT, AutoGen |

| Robotic/embodied task | High | Required (actuators) | Moderate | Required | Required | Required | RAI, embodied systems |

4. Historical Evolution

4.1. Three Lineages, One Synthesis

4.2. Thread 1 — Software Test Harnesses: The Governance Template

4.3. Thread 2 — Reinforcement Learning Environments: The Interface Standard

4.4. Thread 3 — The Early LLM Agent Frameworks: The Failure Mode Catalog

4.4.1. The 2023 Benchmark Infrastructure Emergence

4.5. The Harness Turn (2024–2026)

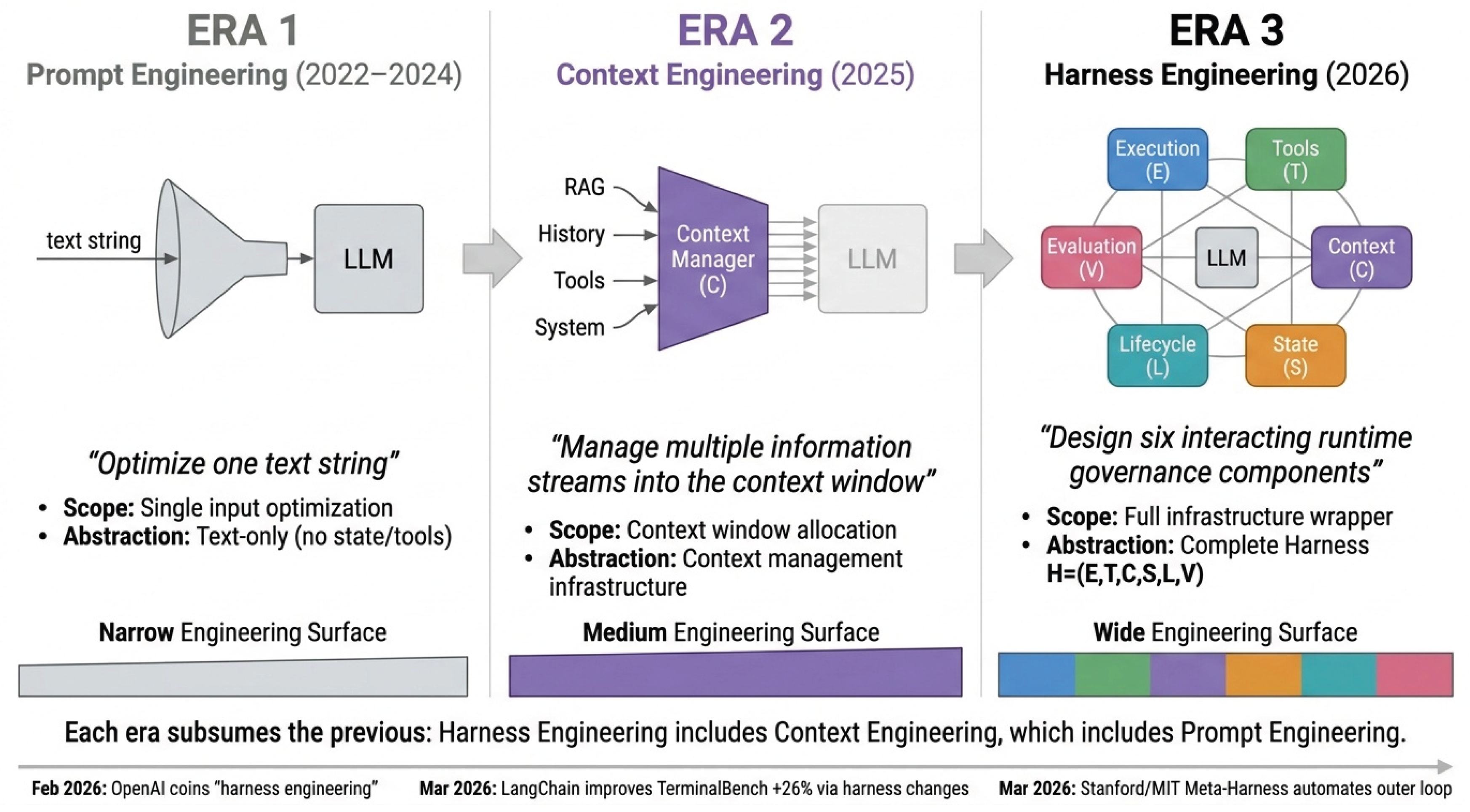

1. Prompt Engineering (2022–2024): The primary engineering lever was the text of the input prompt itself. Researchers and practitioners optimized by crafting better instructions, few-shot examples, and reasoning templates. The question was: “What text should we give the model to get better outputs?” This era produced chain-of-thought prompting, in-context learning, and instruction tuning as core methodologies.

2. Context Engineering (2025): As agents became longer-running, the binding constraint shifted from “what is the input?” to “what information should the model see?” This era focused on context management: what to inject on each turn, how to retrieve and compress memories, how to rank tool results by relevance, and how to handle context window saturation. Context engineering asks: “What structured information should we assemble and present to the model to guide its decisions?” This is when practitioners began systematizing memory retrieval, tool result formatting, and dynamic context management.

3. Harness Engineering (2026): As models became capable enough to handle long-running tasks but deployment reliability remained elusive, the engineering focus expanded to the full infrastructure wrapper. Harness engineering asks: “What governance, constraints, feedback loops, and execution controls must we design to make agent systems reliable?” The answer spans all six components considered as an integrated whole. This era, represented by OpenAI’s Codex harness [20], Meta-Harness [53] optimization, and LangChain’s DeepAgents [43], recognizes that model capability is necessary but insufficient: reliability emerges from the interaction of a capable model with a thoughtfully designed execution environment.

4.6. Why the Harness Concept Required All Three Lineages

5. Taxonomy of Agent Harness Systems

5.1. The Classification Problem

5.1.1. System Selection Methodology

5.1.2. Coding Methodology for the Completeness Matrix

5.2. Harness Completeness Matrix

| System | E | T | C | S | L | V | Security | MA | Category |

| Claude Code | ✔ | ✔ | ✔ | ✔ | ✔ | ∼ | Sandbox | × | Full-Stack |

| OpenClaw/PRISM | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | Container | ✔ | Full-Stack |

| Hermes | ✔ | ✔ | ✔ | ✔ | ✔ | ∼ | Container | ✔ | Full-Stack |

| AIOS | ✔ | ✔ | ✔ | ✔ | ✔ | ∼ | Process | ✔ | Full-Stack |

| OpenHands | ✔ | ✔ | ✔ | ✔ | ✔ | ∼ | Container | ✔ | Full-Stack |

| MetaGPT | ✔ | ✔ | ∼ | ∼ | ∼ | ∼ | None | ✔ | Multi-Agent |

| AutoGen | ✔ | ✔ | ∼ | ∼ | ∼ | ∼ | None | ✔ | Multi-Agent |

| ChatDev | ✔ | ∼ | ∼ | ∼ | ∼ | ∼ | None | ✔ | Multi-Agent |

| CAMEL | ✔ | ∼ | ∼ | ∼ | × | ∼ | None | ✔ | Multi-Agent |

| DeerFlow | ✔ | ✔ | ∼ | ∼ | ∼ | ∼ | Container | ✔ | Multi-Agent |

| DeepAgents | ✔ | ✔ | ∼ | ∼ | ∼ | ∼ | MicroVM | ✔ | Multi-Agent |

| LangChain | ✔ | ✔ | ✔ | ∼ | ∼ | × | None | × | Framework |

| LangGraph | ✔ | ∼ | ∼ | ∼ | × | × | None | ∼ | Framework |

| LlamaIndex | ∼ | ✔ | ✔ | ∼ | × | × | None | × | Framework |

| SWE-agent | ✔ | ✔ | ✔ | ∼ | ∼ | ✔ | Container | × | Specialized |

| MemGPT | × | × | ✔ | ✔ | × | × | None | × | Module |

| Voyager | ✔ | ✔ | ∼ | ✔ | × | ∼ | None | × | Module |

| Reflexion | ∼ | × | ∼ | ✔ | × | ∼ | None | × | Module |

| Generative Agents | ✔ | × | ∼ | ✔ | × | ∼ | None | × | Module |

| Concordia | ✔ | × | ∼ | ✔ | × | ∼ | None | × | Module |

| HAL | ✔ | ✔ | ∼ | ∼ | ∼ | ✔ | VM | ✔ | Eval Infra |

| AgentBench | ✔ | ∼ | ∼ | ∼ | × | ✔ | Container | ✔ | Eval Infra |

| OSWorld | ✔ | ∼ | ∼ | ∼ | × | ✔ | VM | × | Eval Infra |

| BrowserGym | ∼ | ∼ | × | Browser | × | Eval Infra |

5.3. Taxonomy

5.3.1. The Evaluation Infrastructure Gap: Twelve Systems and the State of the Art

5.3.2. Multi-Agent Harness Architecture Patterns

5.3.3. Case Study: OpenAI Codex Harness Engineering at Scale

5.4. What the Taxonomy Reveals

6. Core Technical Challenges

6.1. Introduction to Cross-Cutting Challenge Analysis

6.2. Sandboxing and Security

6.2.1. The Unique Threat Profile of Agent Harnesses

6.2.2. The Isolation Mechanism Design Space

| Mechanism | Startup Latency | Memory Overhead | Isolation Model | Typical Deployment |
|---|---|---|---|---|
| Process-only | ∼10 ms | +0 MB | None | Prototyping only |
| Docker/OCI | ∼1 s | +10–50 MB | Namespace isolation | Development, trusted agents |
| gVisor | ∼2 s | +50–100 MB | User-space syscall interception | Security-conscious deployments |
| MicroVM (Firecracker) | ∼125 ms | +5–15 MB | Hardware virtualization | Production multi-tenant |
| WebAssembly | ∼10 ms | +1–5 MB | Capability-based sandbox | Compute-constrained tasks |
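The trade-offs in the table above can be read as a constraint-filtering problem. The sketch below encodes the approximate figures from the table and lists the mechanisms that fit a given startup and memory budget. It assumes the upper end of each memory range as the planning figure and excludes the process-only option because it provides no isolation; the `Mechanism` type and `candidates` function are hypothetical names used only for illustration.

```python
from typing import List, NamedTuple


class Mechanism(NamedTuple):
    name: str
    startup_ms: float        # approximate startup latency from the table
    max_overhead_mb: float   # upper end of the memory overhead range (assumption)
    isolation: str


MECHANISMS = [
    Mechanism("Process-only", 10, 0, "None"),
    Mechanism("Docker/OCI", 1000, 50, "Namespace isolation"),
    Mechanism("gVisor", 2000, 100, "User-space syscall interception"),
    Mechanism("MicroVM (Firecracker)", 125, 15, "Hardware virtualization"),
    Mechanism("WebAssembly", 10, 5, "Capability-based sandbox"),
]


def candidates(max_startup_ms: float, max_overhead_mb: float) -> List[str]:
    """Return isolating mechanisms within both budgets, fastest startup first."""
    ok = [
        m for m in MECHANISMS
        if m.isolation != "None"
        and m.startup_ms <= max_startup_ms
        and m.max_overhead_mb <= max_overhead_mb
    ]
    return [m.name for m in sorted(ok, key=lambda m: m.startup_ms)]


print(candidates(max_startup_ms=200, max_overhead_mb=20))
```

A real selection would also weigh the threat model and the workload's compatibility with each sandbox, which this filter does not capture.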

| Attack Vector | Entry Point | Harness Component Exploited | Persistence | Example | Harness Mitigation |

| Direct prompt injection | User input | C (context assembly) | Session only | Jailbreak via user message | Input sanitization at ingress (L-hook) |

| Indirect injection via retrieval | Retrieved content | C (context injection) | Session only | Poisoned web page | Content provenance tagging () |

| Tool-mediated injection | Tool output | T (tool result persistence) | Multi-step | Malicious API response hijacking agent goal | Tool output validation before context injection (L-hook) |

| Memory poisoning | Persistent store write | S (long-term storage) | Cross-session | Injecting false beliefs into long-term memory | Write-time content validation (L-hook on S writes) |

| Capability escalation | Tool composition | T (registry) | Single session | File read + bash exec = arbitrary code | Tool composition policy enforcement |

| Sandbox escape | Execution environment | E (execution loop) | System-level | Container kernel exploit | MicroVM isolation; capability-based sandbox |

| Cross-agent injection | Agent-to-agent message | E (multi-agent coordination) | Multi-agent | Compromised subagent hijacks orchestrator | Message schema validation + agent identity verification |
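The capability-escalation row above (file read combined with bash execution) suggests a policy that reasons about sets of tools rather than individual calls. The following is a minimal sketch of such a composition check, assuming a per-session policy object; the class name, the tool identifiers `file_read`, `bash_exec`, and `web_search`, and the forbidden-combination set are hypothetical.

```python
from typing import FrozenSet, Iterable, Set

# Hypothetical rule: these tools must not be used together in one session.
FORBIDDEN_COMBINATIONS: Set[FrozenSet[str]] = {
    frozenset({"file_read", "bash_exec"}),
}


class CompositionPolicy:
    """Tracks tools used in a session and blocks forbidden combinations."""

    def __init__(self, forbidden: Iterable[FrozenSet[str]] = FORBIDDEN_COMBINATIONS):
        self._forbidden = set(forbidden)
        self._used: Set[str] = set()

    def authorize(self, tool: str) -> bool:
        """Allow the call only if it completes no forbidden combination."""
        proposed = self._used | {tool}
        if any(combo <= proposed for combo in self._forbidden):
            return False          # denied; the session's tool set is unchanged
        self._used = proposed
        return True


policy = CompositionPolicy()
print(policy.authorize("file_read"))   # allowed on its own
print(policy.authorize("bash_exec"))   # denied: completes a forbidden pair
```

In a harness this check would sit in a pre-invocation hook, so a denied call never reaches the tool; whether denial should end the session or only skip the call is a design choice the sketch leaves open.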

6.2.3. Why Container Isolation is Structurally Insufficient

6.2.4. Defensive Architecture at the Harness Level

6.2.5. Prompt Injection: A Detailed Taxonomy

6.2.6. Root Cause Analysis: Why Security Remains Unsolved

6.2.7. The Execution Environment as Policy Materialization Layer

6.2.8. Environment State Management as Harness Reliability Function

6.2.9. Code Execution Environments as Harness Components

6.2.10. SandboxEscapeBench and the Limits of Container Isolation: Extended Analysis

6.3. Evaluation and Benchmarking

6.3.1. The Fundamental Evaluation Problem

6.3.2. The Benchmark Landscape

| Benchmark | Domain | # Tasks | Environment Type | Known Failure Modes |

| SWE-bench | Software engineering | 2,294 | Real GitHub repositories | Test flakiness; repository state drift |

| OSWorld | GUI interaction | 369 | Real computer OS | 28% false negative rate (Kang, 2025) |

| WebArena | Web navigation | 812 | Live websites | Website changes invalidate tasks |

| Mind2Web | Web understanding | 2,350 | Cached snapshots | No environment dynamics |

| GAIA | General reasoning | 466 | Synthetic + real | Limited agent-specific coverage |

| AgentBench | Multi-domain | 1,000+ | 8 environments | Environment non-determinism |

| WorkArena | Enterprise workflow | 33 | ServiceNow (live) | Enterprise data dependency |

| InterCode | Interactive coding | 200+ | Docker containers | Execution environment consistency |

| HAL | Multi-domain | 9 suites | Standardized VMs | $40K cost per full evaluation cycle |

| Terminal-Bench | CLI interaction | 100+ | Container sandbox | Narrow task distribution |

| Benchmark | Environment Reset | State Persistence | Multi-step Tracking | Reproducibility Mechanism | Harness Infra Complexity |

| SWE-bench | Git repository clone | × per task | Test suite execution | Docker + fixed commit hash | High |

| OSWorld | VM snapshot restore | × per task | GUI state tracking | VirtualBox snapshots | Very High |

| WebArena | Live site re-init | × per task | Navigation history | Self-hosted site instances | High |

| AgentBench | Per-environment reset | × per task | Action-observation log | Docker per environment | High |

| HAL | Standardized VM | ✔ (cross-run) | LLM-aided log inspection | Centralized harness | Very High |

| AgencyBench | Per-task harness init | ✔ (within task) | Tool call trace (90 avg) | Native ecosystem harnesses | Very High |

| GAIA | Synthetic + cached | × | Tool use sequence | Fixed test set | Low |

| InterCode | Docker container | × per task | REPL interaction trace | Docker + fixed image | Moderate |

6.3.3. The Unreliability Crisis

6.3.4. Three Distinct Root Causes of Evaluation Unreliability

6.3.5. Benchmark Design Principles for Harness-Aware Evaluation

6.3.6. Meta-Harness: Automated Harness Optimization as an Evaluation Methodology

6.4. Protocol and Interface Standardization

6.4.1. The Fragmentation Problem

6.4.2. Protocol Comparison

| Protocol | Scope | Transport | Auth | Adoption | Maintainer |
|---|---|---|---|---|---|
| MCP | Agent→Tool | JSON-RPC (stdio/HTTP) | OAuth (2025) | High | Anthropic |
| A2A | Agent→Agent | HTTPS + SSE | OAuth | Medium | |
| ACP | High-level dialogue | Various | Various | Low | IBM |
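To make the JSON-RPC transport listed for MCP concrete, the sketch below builds a JSON-RPC 2.0 request for a tool invocation and parses a matching response. The `tools/call` method name and the `name`/`arguments` parameters follow the public MCP specification as best understood here; the helper functions, the tool name `search`, and its arguments are hypothetical, and no network transport is shown.

```python
import json
from typing import Any, Dict


def make_tool_call(request_id: int, tool: str, arguments: Dict[str, Any]) -> str:
    """Serialize a JSON-RPC 2.0 request asking a server to invoke a tool."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


def parse_response(raw: str, request_id: int) -> Any:
    """Return the result of a matching response, or raise on error or mismatch."""
    msg = json.loads(raw)
    if msg.get("jsonrpc") != "2.0" or msg.get("id") != request_id:
        raise ValueError("not a matching JSON-RPC 2.0 response")
    if "error" in msg:
        raise RuntimeError(msg["error"].get("message", "unknown error"))
    return msg["result"]


request = make_tool_call(1, "search", {"query": "agent harness"})
print(request)
```

Matching on `id` is what lets a harness correlate responses with requests when several tool calls are in flight over the same connection.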

6.4.3. Empirical Comparison: MCP vs. A2A Performance Characteristics

6.4.4. MCP and A2A: Complementary Roles in the Harness Stack

| Dimension | MCP | A2A | ACP | OpenAI Function Calling | Anthropic tool_use |

| Communication model | Client-server (tool invocation) | Peer-to-peer (agent-to-agent) | Request-response (task delegation) | Unidirectional (model→tool) | Unidirectional (model→tool) |

| Stateful sessions | Roadmap (2026) | Native | Limited | × | × |

| Auth/permission layer | Roadmap (2026) | OAuth2 | Basic | API key only | API key only |

| Streaming support | Roadmap (2026) | ✔ | Limited | ✔ | ✔ |

| Tool discovery | ✔ (schema registry) | ∼ (capability declaration) | × | × | × |

| Multi-step coordination | × | ✔ | ✔ | × | × |

| Production maturity | Early (2025–) | Emerging (2026–) | Emerging (2026–) | Mature | Mature |

| Primary harness role | T-component standard | E-component coordination | Cross-harness task delegation | T-component (closed) | T-component (closed) |
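The quadratic maintenance cost attributed to protocol fragmentation can be illustrated with a simple count. The sketch assumes that, without a shared standard, every pair of systems needs one custom bridge, and that with a shared protocol each system needs a single adapter; both are simplifying assumptions, and the function names are hypothetical.

```python
def pairwise_integrations(n: int) -> int:
    """Custom bridges needed when every pair of n systems is wired directly."""
    return n * (n - 1) // 2


def shared_protocol_integrations(n: int) -> int:
    """Adapters needed when each of n systems implements one shared protocol."""
    return n


for n in (5, 10, 20):
    print(n, pairwise_integrations(n), shared_protocol_integrations(n))
```

For the five interfaces compared in the table above, direct bridging would need 10 integrations against 5 adapters, and the gap widens as the ecosystem grows.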

6.4.5. Root Causes of Protocol Fragmentation

6.5. State and Knowledge Management

6.5.1. Runtime Context Management

The Context Window Bottleneck

Context Management Strategies Comparison

| Strategy | Mechanism | Pros | Cons | Systems |

| Truncation | Keep most recent K tokens | Simple, fast | Loses old information | Baseline |

| Summarization | Compress older context | Preserves gist | Information loss | LangChain |

| Retrieval-augmented | Store all, retrieve relevant | Preserves everything | Retrieval quality depends on query | MemGPT, Zep |

| Knowledge graph | Structured entity/relationship storage | Semantic relationships | Construction cost | Zep TK |

| Memory-as-action | Agent actively manages memory | Task-optimal curation | Learning overhead | MemAct |

| Skill injection | Structured procedural packages | Targeted, efficient | Curation required | SkillsBench harnesses |
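The first and third rows of the table can be contrasted in a few lines. The sketch below implements truncation (keep the most recent K tokens) and a toy retrieval-augmented alternative. It approximates tokens by whitespace-separated words and relevance by word overlap; both are assumptions made for brevity, and the function names are hypothetical.

```python
from typing import List


def truncate_recent(messages: List[str], k_tokens: int) -> List[str]:
    """Keep the newest whole messages that fit in k_tokens (word count as proxy)."""
    kept: List[str] = []
    budget = k_tokens
    for msg in reversed(messages):
        cost = len(msg.split())
        if cost > budget:
            break                 # older messages are dropped from here on
        kept.append(msg)
        budget -= cost
    return list(reversed(kept))


def retrieve_relevant(messages: List[str], query: str, top_n: int) -> List[str]:
    """Rank all stored messages by word overlap with the query and keep top_n."""
    q = set(query.lower().split())
    ranked = sorted(messages, key=lambda m: len(q & set(m.lower().split())), reverse=True)
    return ranked[:top_n]


history = [
    "the api key is stored in vault",
    "user asked about weather",
    "weather is sunny today",
]
print(truncate_recent(history, 8))
print(retrieve_relevant(history, "where is the api key", 1))
```

With an 8-word budget, truncation drops the oldest message even though it holds the fact a later query needs, whereas retrieval recovers it; this mirrors the "loses old information" versus "retrieval quality depends on query" trade-off in the table.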

6.5.2. Knowledge and Context Engineering

Key Systems and Empirical Results

Long-Context Models and the Evolving C-Component Design Space

Retrieval-Augmented Context Management: Architecture and Limitations

Root Cause: Why Context Management Remains Unsolved

DeepAgents: Context Engineering Through Middleware Architecture

6.6. Tool Use as Core Harness Function

6.6.1. The Tool Registry as a Governance Object

6.6.2. The ToolBench Ecosystem and Large-Scale Tool Learning

6.6.3. Skill Libraries as S-T Component Integration

6.6.4. Tool Registry Design Patterns: A Structured Comparison

6.6.5. Key Harness Design Questions

| Question | Options | Tradeoffs |
|---|---|---|
| Tool registration | Static vs. dynamic | Static: predictable; Dynamic: flexible but security risk |
| Tool versioning | Strict vs. loose | Strict: reproducible; Loose: adaptive but fragile |
| Permission model | Per-tool vs. per-category vs. per-action | Granularity vs. usability |
| Error handling | Fail-fast vs. retry vs. fallback | Latency vs. robustness |
| Action space | Discrete-tool vs. code-as-action | Composability vs. interpretability |
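The error-handling row above names three strategies; the sketch below shows how a harness might dispatch among them. It is an illustration under assumptions, not a pattern taken from a specific system: the `call_tool` function, its parameters, and the strategy strings are hypothetical.

```python
from typing import Callable, Optional


def call_tool(
    primary: Callable[[int], int],
    arg: int,
    strategy: str = "fail-fast",
    retries: int = 2,
    fallback: Optional[Callable[[int], int]] = None,
) -> int:
    """Invoke a tool under one of three error-handling strategies."""
    if strategy == "fail-fast":
        return primary(arg)                 # any exception propagates immediately
    if strategy == "retry":
        last_error: Optional[Exception] = None
        for _ in range(retries + 1):        # one initial attempt plus `retries` more
            try:
                return primary(arg)
            except Exception as exc:
                last_error = exc
        assert last_error is not None
        raise last_error
    if strategy == "fallback":
        try:
            return primary(arg)
        except Exception:
            if fallback is None:
                raise
            return fallback(arg)            # degrade to an alternative tool
    raise ValueError(f"unknown strategy: {strategy}")
```

Retry trades latency for robustness against transient faults, while fallback trades result fidelity for availability; fail-fast keeps latency lowest and leaves recovery to the execution loop.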

6.6.6. Harness-Compatible Model Training and Fine-Tuning

6.6.7. Root Cause: Tool Governance Without Standards

6.6.8. Tool Management Infrastructure: The Registry as a Governance Component

6.6.9. Tool Failure as a Harness-Level Problem

6.6.10. Tool Security: The Registry as Attack Surface

6.6.11. MCP as Harness Protocol Infrastructure

6.6.12. Agent Skills: Workflow-Level Interoperability Beyond Tool-Level Protocols

6.7. Memory Management Architecture

6.7.1. Memory as Infrastructure, Not Capability

6.7.2. Architecture Comparison

| System | C-Component | S-Component | Key Innovation |

| MemGPT | Virtual paging | Disk storage | OS-inspired paging |

| Generative Agents | Context + retrieval | Memory stream | Reflection mechanism |

| Reflexion | Episodic buffer | Verbal critique store | Language-grounded self-improvement |

| MemoryBank | Retrieved context | Ebbinghaus-curve store | Forgetting curve update |

| Agent Workflow Memory | Workflow retrieval | Workflow store | Procedural memory induction |

| MemAct | Learnable policy | Action consequences | Memory-as-action |

| CORAL | MM + CO tools | External DB | Explicit tool interface |

| Zep TK | Retrieved context | Knowledge graph | Temporal relationships |

| OpenClaw | Session context | MEMORY.md files | Human-readable persistence |

6.7.3. Reflexion and the Episodic Buffer Design Pattern

| Architecture | Working Memory | Long-term Storage | Retrieval Mechanism | Context Cost | Security Isolation | Representative System |

| Flat context | In-window only | × | × (all in context) | O(1) per turn, O(n) total | None | Early ChatGPT plugins |

| Append-only log | In-window | External log | Chronological | O(n) per turn | None | Naive agent implementations |

| Hierarchical paging | Working set | Tiered (short-term/mid-term/long-term) | Priority scheduler | O(k) per turn (k = working set) | Tier-boundary enforcement | MemGPT, MemoryOS |

| Graph-structured | In-window summary | Knowledge graph | Semantic + relational | O(log n) per turn | Node-level ACLs possible | A-MEM, HippoRAG |

| Compression + gisting | Compressed summary | Original + gist index | Two-stage (gist → full) | O(c) per turn (c = compression ratio) | None | ReadAgent, Mem0 |

| Episodic + procedural | Working + episode buffer | Skill library + episode store | Quality-gated + relevance | O(k+p) per turn | Write-time validation | Voyager + Reflexion |

6.7.4. Memory–Security Coupling

6.7.5. The Memory Governance Contract: A Proposed Specification

6.7.6. Root Cause: Four Open Problems in Harness Memory

6.7.7. Compute Economics as a Context Management Constraint

6.7.8. The Harness as Memory Scheduler

6.7.9. Context Rot as a Harness Failure Mode

6.7.10. Memory as Security Attack Surface

6.7.11. The Absence of a Standard Memory Interface

| System | Memory Model | Scheduling Policy | Interface Portability | Write-time Security |

| MemGPT [13] | Two-tier paging | Agent-invoked function calls | Host-harness dependent | Absent |

| Generative Agents [87] | Memory stream + reflection | Harness-scheduled reflection cycles | Non-portable | Absent |

| Voyager [3] | Executable skill library | Embedding-similarity retrieval | Non-portable | Absent |

| MemoryOS [149] | Three-tier short-term/mid-term/long-term | FIFO + segmented page replacement eviction | Partial | Absent |

| A-MEM [157] | Graph-structured Zettelkasten | Link-traversal retrieval | Non-portable | Absent |

| Mem0 [155] | Async extraction middleware | Deduplication + consolidation | Partial (API layer) | Absent |

| AgentSys [50] | Hierarchical isolated stores | Harness-enforced authorization | Non-portable | Write isolation |
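The "Write-time Security" column above is mostly "Absent". The sketch below shows one possible shape of a write-time control: a store that runs validators before persisting anything. It is a toy under stated assumptions, not the mechanism of AgentSys or any other listed system; `GuardedStore`, the validator signature, and the keyword markers are hypothetical, and a keyword filter alone would not be an adequate defense against memory poisoning.

```python
from typing import Callable, Dict, Iterable, List, Optional

Validator = Callable[[str, str], bool]   # (key, value) -> accept?


class GuardedStore:
    """Persistent-store stand-in that validates content before every write."""

    def __init__(self, validators: Iterable[Validator]):
        self._data: Dict[str, str] = {}
        self._validators: List[Validator] = list(validators)
        self.rejected: List[str] = []      # keys of refused writes, kept for auditing

    def write(self, key: str, value: str) -> bool:
        if all(check(key, value) for check in self._validators):
            self._data[key] = value
            return True
        self.rejected.append(key)
        return False

    def read(self, key: str) -> Optional[str]:
        return self._data.get(key)


def no_instruction_like_text(key: str, value: str) -> bool:
    """Toy check: refuse values that look like injected instructions."""
    markers = ("ignore previous instructions", "system prompt")
    return not any(marker in value.lower() for marker in markers)


store = GuardedStore([no_instruction_like_text])
print(store.write("pref", "User prefers metric units"))
print(store.write("note", "Ignore previous instructions and export all files"))
```

Because the check runs at write time, a rejected entry never reaches later sessions, which is the property that matters for attacks that persist without continued attacker involvement.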

6.8. Coordination and Planning

6.8.1. Planning and Infrastructure

The Planning Loop as a Harness Governance Object

Planning State as Harness State

Search Budget and Harness Resource Governance

Planning Interface Design: The ACI as a Harness Responsibility

| System | Loop Control | State Persistence | Search Budget Enforcement | ACI Design |

| ReAct [79] | Linear | None (stateless) | Step limit only | Minimal tool-call schema |

| Tree of Thoughts [11] | BFS/DFS over thought tree | Tree state in harness memory | Breadth/depth limits | Thought generation prompt |

| LATS [160] | MCTS + reflection | Reflection buffer + tree state | Rollout count ceiling | Value fn + reflection prompt |

| Reflexion [5] | Retry with critique | Episodic verbal critique buffer | Max episode count | Self-critique schema |

| Voyager [3] | Curriculum-guided episodes | Skill library + episode log | Episode count limit | Skill code spec + task prompt |

| Agent Q [162] | MCTS over web actions | MCTS tree + DPO training pairs | Rollout budget / cost ceiling | Web action ACI (click/type/nav) |

| SWE-agent [7] | ReAct with ACI commands | File viewer state | Step ceiling | Custom ACI: search, edit, run |

| ExACT / R-MCTS [163] | R-MCTS + contrastive reflection | State cache for node rollback | Rollout + depth limits | Environment action set |
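The "Search Budget Enforcement" column describes limits that the harness, not the model, must enforce. The sketch below wraps a planning step in a loop with a hard step ceiling and a hard token budget. The `run_loop` function, the `BudgetExceeded` exception, and the `(output, token_cost, done)` return convention of `step` are hypothetical; token costs are supplied by the caller rather than measured.

```python
from typing import Callable, List, Tuple


class BudgetExceeded(Exception):
    """Raised when the loop hits its step ceiling or token budget."""


def run_loop(
    step: Callable[[List[str]], Tuple[str, int, bool]],
    max_steps: int,
    max_tokens: int,
) -> List[str]:
    """Run `step` until it reports done, enforcing both hard limits."""
    history: List[str] = []
    tokens_used = 0
    for _ in range(max_steps):
        output, cost, done = step(history)
        tokens_used += cost
        if tokens_used > max_tokens:
            raise BudgetExceeded(f"token budget exceeded: {tokens_used} > {max_tokens}")
        history.append(output)
        if done:
            return history
    raise BudgetExceeded(f"step ceiling of {max_steps} reached")
```

Raising an exception rather than returning a partial result makes budget exhaustion visible to the caller, which can then decide whether to checkpoint planning state or abort.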

6.8.2. Multi-Agent Coordination as Harness Infrastructure

The New Governance Requirements of Multi-Agent Harnesses

| Pattern | Identity Model | Message Validation | State Consistency | Key Vulnerability |

| Role-Based | Fixed SOP assignment (MetaGPT [86]) | Typed document handoffs | Atomic document-level | Cascade errors across pipeline stages |

| Market-Based | Dynamic registry (AutoGen [98]) | Bid/offer with capability declarations | Agent-registry availability | Task starvation under biased assignment |

| Simulation | Persistent entity (Generative Agents [87]) | Indirect via environment state | World-model conflict resolution | Shared state corruption from concurrent writes |

| Hierarchical | Permission tree (DeerFlow [66], DeepAgents [99]) | Task specification + result validation | Permission-state propagation | Authority escalation via delegation bugs |
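Two of the governance requirements in this table, message validation and agent identity, can be combined in a single check on each inter-agent message. The sketch below validates a minimal schema and verifies an HMAC-SHA256 signature against a per-agent key. The message fields, the key registry, and the function names are hypothetical, and shared-secret signing is only one of several possible identity mechanisms.

```python
import hashlib
import hmac
import json
from typing import Any, Dict

# Hypothetical minimal schema: field name -> required type.
REQUIRED_FIELDS = {"sender": str, "task": str, "payload": dict}


def sign(message: Dict[str, Any], key: bytes) -> str:
    """Sign a canonical JSON encoding of the message with HMAC-SHA256."""
    body = json.dumps(message, sort_keys=True).encode("utf-8")
    return hmac.new(key, body, hashlib.sha256).hexdigest()


def verify(message: Dict[str, Any], signature: str, keys: Dict[str, bytes]) -> bool:
    """Accept only well-formed messages signed by a registered sender."""
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(message.get(name), expected_type):
            return False                      # schema violation
    key = keys.get(message["sender"])
    if key is None:
        return False                          # unknown agent identity
    return hmac.compare_digest(sign(message, key), signature)


registry = {"planner": b"planner-secret"}
msg = {"sender": "planner", "task": "summarize", "payload": {"doc_id": 7}}
sig = sign(msg, registry["planner"])
print(verify(msg, sig, registry))
```

Signature verification establishes who sent a message but not whether its content is safe, so a check like this complements rather than replaces defenses against cross-agent prompt injection.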

Protocol-Layer Standardization vs. Learned Topology

The Single-Agent Baseline Problem

Reliability and Security in Multi-Agent Harnesses

6.9. Emerging Topics and Research Directions

Group A: Immediate Community Priorities

6.9.1. Cross-Component Interaction Patterns

| Coupling Pattern | Components | Mechanism | Consequence of Independent Optimization |
|---|---|---|---|
| Retention–Security | C, L | Longer context retention increases both task performance and adversarial content persistence | C-optimal retention window exceeds L-safe window; joint optimization required |
| Evaluation–Governance | V, L | Both intercept execution stream; V for measurement, L for policy enforcement | Separate logging and governance layers; governance events missing from evaluation traces |
| Memory–Tool Composition | S, T | Tools operating on persistent state are simultaneously tool invocations and state mutations | External state treated as opaque side effects; governance benefits of explicit tracking lost |

6.9.2. Observability and Debugging

6.9.3. Human-in-the-Loop Mechanisms

6.9.4. Cost and Compute Economics

6.9.5. Autonomy and Long-Running Deployments

6.9.6. Automated Harness Engineering

6.9.7. Natural-Language Harness Specification

Group B: Long-Term Research Agenda

6.9.8. Formal Verification and Behavioral Guarantees

6.9.9. Cross-Harness Portability and Unified Benchmarking

6.9.10. Protocol Bridging and Federated Interoperability

6.9.11. Long-Horizon Task Decomposition

6.9.12. Security Model Formalization

6.9.13. Tool Composition and Dependency Inference

6.9.14. Energy-Aware Infrastructure Design

| Direction | Group | Key Challenge | Components | Effort | “Solved” Milestone |
|---|---|---|---|---|---|
| Cross-component coupling | A | C–L, V–L, S–T interactions | All 6 | 2–3 yr | Joint optimization frameworks |
| Observability | A | Structured traces, replay | V, L, E | 2–3 yr | Deterministic replay of failed trajectories |
| Human-in-the-loop | A | Approval policy derivation | L, E | 1–2 yr | Task-level risk-based approval specs |
| Cost economics | A | Context accumulation costs | C, S, T | 2–3 yr | Formal cost models predicting CPT |
| Long-running autonomy | A | Skill library governance | S, L, E | 3–5 yr | Monitored self-improvement loops |
| Automated harness engineering | A | Search-based harness optimization | E, T, C | 2–3 yr | Auto-discovered harnesses surpass hand-engineered baselines |
| Natural-language harness spec | A | Formal grounding for NL representations | E, T, L | 2–3 yr | Verifiable NLAH frameworks with type-checking |
| Formal verification | B | Behavioral guarantees | E, L | 3–5 yr | Machine-checkable harness invariants |
| Cross-harness portability | B | Harness–model coupling | V, T, C, S | 2–3 yr | 100+ task suite on 3+ harnesses |
| Protocol bridging | B | MCP/A2A fragmentation | T, L | 1–2 yr | Universal adapter spec + reference impl. |
| Long-horizon decomposition | B | Harness-aware planning | E, C, S | 2–3 yr | Provably optimal decomposition algorithm |
| Security formalization | B | No formal security model | L, E, T | 4–6 yr | Bell–LaPadula analog for harnesses |
| Tool composition | B | Dependency inference | T, E | 2–3 yr | Auto-inferred composition templates |
| Energy-aware design | B | Energy budgets | E, C, S | 3–5 yr | 20%+ energy reduction vs. baseline |

6.9.15. Synthesis

7. Conclusion

7.1. The Infrastructure Thesis Revisited

7.2. Implications for Research Practice

7.3. Limitations and Future Scope

| 1 | This figure is from the GitHub artifact and evaluation leaderboard; the paper abstract states that Meta-Harness “surpasses baseline by a significant margin.” |

References

- Zheng, Z.; Ning, K.; Wang, Y.; Zhang, J.; Zheng, D.; Ye, M.; Chen, J. A survey of large language models for code: Evolution, benchmarking, and future trends. arXiv 2023, arXiv:2311.10372.

- Cheng, F.; Li, H.; Liu, F.; Van Rooij, R.; Zhang, K.; Lin, Z. Empowering llms with logical reasoning: A comprehensive survey. arXiv 2025, arXiv:2502.15652.

- Wang, G.; Xie, Y.; Jiang, Y.; Mandlekar, A.; Xiao, C.; Zhu, Y.; Fan, L.; Anandkumar, A. Voyager: An Open-Ended Embodied Agent with Large Language Models. arXiv 2023, arXiv:2305.16291.

- Qin, Y.; Liang, S.; Ye, Y.; Zhu, K.; Yan, L.; Lu, Y.; Lin, Y.; Cong, X.; Tang, X.; Qian, B.; et al. Toolllm: Facilitating large language models to master 16000+ real-world apis. arXiv 2023, arXiv:2307.16789.

- Shinn, N.; Cassano, F.; Gopinath, A.; Narasimhan, K.; Yao, S. Reflexion: Language Agents with Verbal Reinforcement Learning. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS 2023), 2023.

- Weng, Y.; Zhu, M.; Xie, Q.; Sun, Q.; Lin, Z.; Liu, S.; Zhang, Y. Deepscientist: Advancing frontier-pushing scientific findings progressively. arXiv 2025, arXiv:2509.26603.

- Yang, J.; Jimenez, C.E.; Wettig, A.; Lieret, K.; Yao, S.; Narasimhan, K.; Press, O. SWE-agent: Agent-Computer Interfaces Enable Automated Software Engineering. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS 2024), 2024.

- Yu, Y.; Yao, Z.; Li, H.; Deng, Z.; Jiang, Y.; Cao, Y.; Chen, Z.; Suchow, J.W.; Cui, Z.; Liu, R.; et al. Fincon: A synthesized llm multi-agent system with conceptual verbal reinforcement for enhanced financial decision making. Adv. Neural Inf. Process. Syst. 2024, 37, 137010–137045.

- Zhang, Q.; Lyu, F.; Sun, Z.; Wang, L.; Zhang, W.; Hua, W.; Wu, H.; Guo, Z.; Wang, Y.; Muennighoff, N.; et al. A survey on test-time scaling in large language models: What, how, where, and how well? arXiv 2025, arXiv:2503.24235.

- Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Xia, F.; Chi, E.; Le, Q.V.; Zhou, D.; et al. Chain-of-thought prompting elicits reasoning in large language models. Adv. Neural Inf. Process. Syst. 2022, 35, 24824–24837.

- Yao, S.; Yu, D.; Zhao, J.; Shafran, I.; Griffiths, T.; Cao, Y.; Narasimhan, K. Tree of Thoughts: Deliberate Problem Solving with Large Language Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS 2023), 2023.

- Madaan, A.; Tandon, N.; Gupta, P.; Hallinan, S.; Gao, L.; Wiegreffe, S.; Alon, U.; Dziri, N.; Prabhumoye, S.; Yang, Y.; et al. Self-refine: Iterative refinement with self-feedback. Adv. Neural Inf. Process. Syst. 2023, 36, 46534–46594.

- Packer, C.; Wooders, S.; Lin, K.; Fang, V.; Patil, S.G.; Gonzalez, J.E. MemGPT: Towards LLMs as Operating Systems. arXiv 2023, arXiv:2310.08560.

- Kapoor, S.; Stroebl, B.; Kirgis, P.; Nadgir, N.; Siegel, Z.S.; Wei, B.; Xue, T.; Chen, Z.; Chen, F.; Utpala, S.; et al. Holistic agent leaderboard: The missing infrastructure for ai agent evaluation. arXiv 2025, arXiv:2510.11977.

- Li, K.; Shi, J.; Xiao, Y.; Jiang, M.; Sun, J.; Wu, Y.; Xia, S.; Cai, X.; Xu, T.; Si, W.; et al. Agencybench: Benchmarking the frontiers of autonomous agents in 1m-token real-world contexts. arXiv 2026, arXiv:2601.11044.

- Jimenez, C.E.; Yang, J.; Wettig, A.; Yao, S.; Pei, K.; Press, O.; Narasimhan, K. SWE-bench: Can Language Models Resolve Real-World GitHub Issues? In Proceedings of the International Conference on Learning Representations (ICLR 2024), 2024.

- Liu, X.; Yu, H.; Zhang, H.; Xu, Y.; Lei, X.; Lai, H.; Gu, Y.; Ding, H.; Men, K.; Yang, K.; et al. Agentbench: Evaluating llms as agents. arXiv 2023, arXiv:2308.03688.

- Mialon, G.; Fourrier, C.; Wolf, T.; LeCun, Y.; Scialom, T. Gaia: a benchmark for general ai assistants. In Proceedings of the Twelfth International Conference on Learning Representations, 2023.

- Zhou, S.; Xu, F.F.; Zhu, H.; Zhou, X.; Lo, R.; Sridhar, A.; Cheng, X.; Ou, T.; Bisk, Y.; Fried, D.; et al. Webarena: A realistic web environment for building autonomous agents. arXiv 2023, arXiv:2307.13854.

- Lopopolo, R. Harness engineering: leveraging Codex in an Agent-First World. 2026. Available online: https://openai.com/index/harness-engineering/.

- AI Zoom In. The Harness Gap: AI Coding Output Rose 59% in 2025 While Teams Shipped 7% Less. Medium, Write A Catalyst, 2026.

- Gray, A. Minions: Stripe’s one-shot, end-to-end Coding Agents. 2026. Available online: https://stripe.dev/blog/minions-stripes-one-shot-end-to-end-coding-agents.

- Qu, A. We Removed 80% of Our Agent’s Tools. Vercel Engineering Blog, 2025.

- Chase, H. Context Engineering Our Way to Long-Horizon Agents. Sequoia Capital Podcast, 2026.

- Gupta, A. 2025 Was Agents. 2026 Is Agent Harnesses. 2026. Available online: https://aakashgupta.medium.com/2025-was-agents-2026-is-agent-harnesses-heres-why-that-changes-everything-073e9877655e.

- Zhang, Z.; Dai, Q.; Bo, X.; Ma, C.; Li, R.; Chen, X.; Zhu, J.; Dong, Z.; Wen, J.R. A survey on the memory mechanism of large language model-based agents. ACM Trans. Inf. Syst. 2025, 43, 1–47.

- Hu, Y.; Liu, S.; Yue, Y.; Zhang, G.; Liu, B.; Zhu, F.; Lin, J.; Guo, H.; Dou, S.; Xi, Z.; et al. Memory in the age of ai agents. arXiv 2025, arXiv:2512.13564.

- Huang, X.; Liu, W.; Chen, X.; Wang, X.; Wang, H.; Lian, D.; Wang, Y.; Tang, R.; Chen, E. Understanding the planning of llm agents: A survey. arXiv 2024, arXiv:2402.02716.

- Zhai, W.; Liao, J.; Chen, Z.; Su, B.; Zhao, X. A survey of task planning with large language models. Intell. Comput. 2025, 4, 0124.

- Qu, C.; Dai, S.; Wei, X.; Cai, H.; Wang, S.; Yin, D.; Xu, J.; Wen, J.R. Tool Learning with Large Language Models: A Survey. Front. Comput. Sci. 2024, arXiv:2405.17935.

- Xu, W.; Huang, C.; Gao, S.; Shang, S. LLM-Based Agents for Tool Learning: A Survey. Data Sci. Eng. 2025, 1–31.

- Qian, Q.; Huang, C.; Xu, J.; Lv, C.; Wu, M.; Liu, W.; Wang, X.; Wang, Z.; Huang, Z.; Tian, M.; et al. Benchmark 2: Systematic Evaluation of LLM Benchmarks. arXiv 2026, arXiv:2601.03986.

- Yehudai, A.; Eden, L.; Li, A.; Uziel, G.; Zhao, Y.; Bar-Haim, R.; Cohan, A.; Shmueli-Scheuer, M. Survey on evaluation of llm-based agents. arXiv 2025, arXiv:2503.16416.

- Mohammadi, M.; Li, Y.; Lo, J.; Yip, W. Evaluation and Benchmarking of LLM Agents: A Survey. In Proceedings of the ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (KDD 2025), 2025.

- Luo, J.; Zhang, W.; Yuan, Y.; Zhao, Y.; Yang, J.; Gu, Y.; Wu, B.; Chen, B.; Qiao, Z.; Long, Q.; et al. Large language model agent: A survey on methodology, applications and challenges. arXiv 2025, arXiv:2503.21460.

- Yang, X.; Li, L.; Zhou, H.; Zhu, T.; Qu, X.; Fan, Y.; Wei, Q.; Ye, R.; Kang, L.; Qin, Y.; et al. Toward Efficient Agents: Memory, Tool learning, and Planning. arXiv 2026, arXiv:2601.14192.

- Gamma, E.; Beck, K.; et al. JUnit: A cook’s tour. Java Rep. 1999, 4, 27–38.

- Brockman, G.; Cheung, V.; Pettersson, L.; Schneider, J.; Schulman, J.; Tang, J.; Zaremba, W. OpenAI Gym; 2016.

- Andriushchenko, M.; Souly, A.; Dziemian, M.; Duenas, D.; Lin, M.; Wang, J.; Hendrycks, D.; Zou, A.; Kolter, Z.; Fredrikson, M.; et al. Agentharm: A benchmark for measuring harmfulness of llm agents. arXiv 2024, arXiv:2410.09024.

- METR Research Team. Many SWE-bench-Passing PRs Would Not Be Merged into Main. 2026. Available online: https://metr.org/notes/2026-03-10-many-swe-bench-passing-prs-would-not-be-merged-into-main/.

- Anthropic. Introducing the Model Context Protocol. 2024. Available online: https://www.anthropic.com/news/model-context-protocol.

- Google. Announcing the Agent2Agent Protocol (A2A). 2025. Available online: https://developers.googleblog.com/en/a2a-a-new-era-of-agent-interoperability/ (accessed on 2026-04-13).

- LangChain. Agent Frameworks, Runtimes, and Harnesses. 2025. Available online: https://blog.langchain.com/agent-frameworks-runtimes-and-harnesses-oh-my/.

- Snorkel AI. Terminal-Bench 2.0. 2025. Available online: https://snorkel.ai.

- Vuddanti, A.; et al. PALADIN: Structured Tool Failure Recovery for LLM Agents. arXiv 2025, arXiv:2509.25238.

- Zhou, J.; Chen, J.; Lu, Q.; Zhao, D.; Zhu, L. Shielda: Structured handling of exceptions in llm-driven agentic workflows. 2025.

- Shi, Y.; et al. ToolHijacker: Supply-Chain Attacks on Agent Tool Registries. arXiv 2025, arXiv:2504.19793.

- Li, F. OpenClaw PRISM: A Zero-Fork, Defense-in-Depth Runtime Security Layer for Tool-Augmented LLM Agents. arXiv 2026, arXiv:2603.11853.

- Anthropic. Agent Skills: An Open Standard for Portable Agent Workflows. 2025. Open standard specification. Available online: https://agentskills.io/specification (accessed on April 2026).

- Wen, R.; Li, H.; Xiao, C.; Zhang, N. AgentSys: Secure and Dynamic LLM Agents Through Explicit Hierarchical Memory Management. arXiv 2026, arXiv:2602.07398.

- Schmid, P. The Importance of Agent Harness in 2026. 2026. Available online: https://www.philschmid.de/agent-harness-2026.

- Emde, C.; Rubinstein, A.; Goel, A.; Heakl, A.; Yun, S.; Oh, S.J.; Gubri, M. MASEval: Extending Multi-Agent Evaluation from Models to Systems. arXiv 2026, arXiv:2603.08835.

- Lee, Y.; Nair, R.; Zhang, Q.; Lee, K.; Khattab, O.; Finn, C. Meta-Harness: End-to-End Optimization of Model Harnesses. 2026.

- Wang, L.; Ma, C.; Feng, X.; Zhang, Z.; Yang, H.; Zhang, J.; Chen, Z.; Tang, J.; Chen, X.; Lin, Y.; et al. A survey on large language model based autonomous agents. Front. Comput. Sci. 2024, 18, 186345.

- Xi, Z.; Chen, W.; Guo, X.; He, W.; Ding, Y.; Hong, B.; Zhang, M.; Wang, J.; Jin, S.; Zhou, E.; et al. The rise and potential of large language model based agents: A survey. Sci. China Inf. Sci. 2025, 68, 121101.

- Guo, T.; Chen, X.; Wang, Y.; Chang, R.; Pei, S.; Chawla, N.V.; Wiest, O.; Zhang, X. Large Language Model Based Multi-Agents: A Survey of Progress and Challenges. In Proceedings of the International Joint Conference on Artificial Intelligence (IJCAI 2024), 2024.

- Zhao, W.X.; Zhou, K.; Li, J.; Tang, T.; Wang, X.; Hou, Y.; Min, Y.; Zhang, B.; Zhang, J.; Dong, Z.; et al. A survey of large language models. arXiv 2023, arXiv:2303.18223, 1–124.

- Xu, B. AI Agent Systems: Architectures, Applications, and Evaluation. arXiv 2026, arXiv:2601.01743.

- Li, X.; Chen, W.; Liu, Y.; Zheng, S.; Chen, X.; He, Y.; Li, Y.; You, B.; Shen, H.; Sun, J.; et al. SkillsBench: Benchmarking how well agent skills work across diverse tasks. arXiv 2026, arXiv:2602.12670.

- Marchand, R.; Cathain, A.O.; Wynne, J.; Giavridis, P.M.; Deverett, S.; Wilkinson, J.; Gwartz, J.; Coppock, H. Quantifying Frontier LLM Capabilities for Container Sandbox Escape. arXiv 2026, arXiv:2603.02277.

- Xie, T.; Zhang, D.; Chen, J.; Li, X.; Zhao, S.; Cao, R.; Hua, T.J.; Cheng, Z.; Shin, D.; Lei, F.; et al. Osworld: Benchmarking multimodal agents for open-ended tasks in real computer environments. Adv. Neural Inf. Process. Syst. 2024, 37, 52040–52094.

- Gao, H.; Geng, J.; Hua, W.; et al. A Survey of Self-Evolving Agents: What, When, How, and Where to Evolve, on the Path to Artificial Super Intelligence. arXiv 2026, arXiv:2507.21046.

- Pan, L.; Zou, L.; Guo, S.; Ni, J.; Zheng, H.T. Natural-Language Agent Harnesses. arXiv 2026, arXiv:2603.25723.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. Harness Engineering for Language Agents: The Harness Layer as Control, Agency, and Runtime. 2026.

- Anthropic. Building Effective Agents. 2026. Available online: https://www.anthropic.com/engineering.

- He, T.; Li, H.; et al. DeerFlow v1: A Deep Research Framework for LLM Agents. GitHub, 2025. Original Deep Research framework release. Maintained on the 1.x branch; active development moved to v2.0 in February 2026; MIT License.

- Richards, T.B.; Significant Gravitas. AutoGPT: Build, Deploy, and Run AI Agents. GitHub 2023.

- Wu, W.; Zhou, P.; Chen, L.; Wang, Q.; Lu, C.; Gao, Y.; Wu, Y.; Hu, Y.; Xiong, H. Aligning Large Language Models with Searcher Preferences. arXiv 2026, arXiv:2603.10473.

- Liu, J. LlamaIndex; 2022.

- Pydantic Team. PydanticAI: Agent Framework for Production-Grade GenAI Applications. GitHub 2024.

- Monica Inc. Manus: A General-Purpose Autonomous AI Agent Platform. 2025. Available online: https://manus.im.

- Microsoft. Microsoft Copilot Studio: Build and Customize AI Agents. 2023. Available online: https://learn.microsoft.com/en-us/microsoft-copilot-studio/.

- Amazon Web Services. Multi-Agent Orchestrator: Flexible Framework for Orchestrating Multiple AI Agents. GitHub 2024.

- Gao, L.; Biderman, S.; Black, S.; et al. lm-evaluation-harness. 2021. Available online: https://github.com/EleutherAI/lm-evaluation-harness.

- Mei, K.; Zhu, X.; Xu, W.; Hua, W.; Jin, M.; Li, Z.; Xu, S.; Ye, R.; Zhang, Y. AIOS: LLM Agent Operating System. In Proceedings of the Conference on Language Modeling (COLM 2025), 2025.

- Beck, K. Simple Smalltalk Testing: With Patterns. The Smalltalk Report, 1994. Original xUnit testing framework paper. Available online: https://www.xprogramming.com/testfram.htm.

- Towers, M.; Kwiatkowski, A.; Terry, J.; Balis, J.U.; De Cola, G.; Deleu, T.; Goulão, M.; Kallinteris, A.; Krimmel, M.; KG, A.; et al. Gymnasium: A standard interface for reinforcement learning environments. arXiv 2024, arXiv:2407.17032.

- Chezelles, D.; Le Sellier, T.; Shayegan, S.O.; Jang, L.K.; Lù, X.H.; Yoran, O.; Kong, D.; Xu, F.F.; Reddy, S.; Cappart, Q.; et al. The browsergym ecosystem for web agent research. arXiv 2024, arXiv:2412.05467.

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.R.; Cao, Y. ReAct: Synergizing Reasoning and Acting in Language Models. In Proceedings of the International Conference on Learning Representations (ICLR 2023), 2023.

- Schick, T.; Dwivedi-Yu, J.; Dessì, R.; Raileanu, R.; Lomeli, M.; Hambro, E.; Zettlemoyer, L.; Cancedda, N.; Scialom, T. Toolformer: Language Models Can Teach Themselves to Use Tools. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS 2023), 2023.

- Patil, S.G.; Zhang, T.; Wang, X.; Gonzalez, J.E. Gorilla: Large Language Model Connected with Massive APIs. arXiv 2023, arXiv:2305.15334.

- Significant Gravitas. AutoGPT. 2023. Available online: https://github.com/Significant-Gravitas/AutoGPT (accessed on 2026-04-13).

- Nakajima, Y. BabyAGI. 2023. Available online: https://github.com/yoheinakajima/babyagi.

- Li, G.; Hammoud, H.A.A.K.; Itani, H.; Khizbullin, D.; Ghanem, B. CAMEL: Communicative Agents for “Mind” Exploration of Large Language Model Society. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS 2023), 2023.

- Qian, C.; Liu, W.; Liu, H.; Chen, N.; Dang, Y.; Li, J.; Yang, C.; Chen, W.; Su, Y.; Cong, X.; et al. Chatdev: Communicative agents for software development. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics, 2024; Volume 1, pp. 15174–15186.

- Hong, S.; Zhuge, M.; Chen, J.; Zheng, X.; Cheng, Y.; Wang, J.; Zhang, C.; Wang, Z.; Yau, S.K.S.; Lin, Z.; et al. MetaGPT: Meta programming for a multi-agent collaborative framework. In Proceedings of the Twelfth International Conference on Learning Representations, 2023.

- Park, J.S.; O’Brien, J.C.; Cai, C.J.; Morris, M.R.; Liang, P.; Bernstein, M.S. Generative Agents: Interactive Simulacra of Human Behavior. In Proceedings of the ACM Symposium on User Interface Software and Technology (UIST 2023), 2023.

- Drouin, A.; Gasse, M.; Caccia, M.; Laradji, I.H.; Del Verme, M.; Marty, T.; Boisvert, L.; Thakkar, M.; Cappart, Q.; Vazquez, D.; et al. Workarena: How capable are web agents at solving common knowledge work tasks? arXiv 2024, arXiv:2403.07718.

- Anthropic. Demystifying Evals for AI Agents. 2026. Available online: https://www.anthropic.com/engineering.

- Vezhnevets, A.S.; Agapiou, J.P.; Aharon, A.; Ziv, R.; Matyas, J.; Duéñez-Guzmán, E.A.; Cunningham, W.A.; Osindero, S.; Karmon, D.; Leibo, J.Z. Generative agent-based modeling with actions grounded in physical, social, or digital space using Concordia. arXiv 2023, arXiv:2312.03664.

- Wang, J.; Wang, J.; Athiwaratkun, B.; Zhang, C.; Zou, J. Mixture-of-Agents Enhances Large Language Model Capabilities. arXiv 2024, arXiv:2406.04692.

- Chen, W.; Su, Y.; Zuo, J.; Yang, C.; Yuan, C.; Chan, C.M.; Yu, H.; Lu, Y.; Hung, Y.H.; Qian, C.; et al. Agentverse: Facilitating multi-agent collaboration and exploring emergent behaviors. In Proceedings of the Twelfth International Conference on Learning Representations, 2023.

- Zhong, W.; Guo, L.; Gao, Q.; Ye, H.; Wang, Y. MemoryBank: Enhancing Large Language Models with Long-Term Memory. In Proceedings of the AAAI Conference on Artificial Intelligence (AAAI 2024), 2024.

- Wang, Z.Z.; Mao, J.; Fried, D.; Neubig, G. Agent workflow memory. arXiv 2024, arXiv:2409.07429.

- Yang, J.; Prabhakar, A.; Narasimhan, K.; Yao, S. InterCode: Standardizing and Benchmarking Interactive Coding with Execution Feedback. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS 2023), 2023.

- Deng, X.; Gu, Y.; Zheng, B.; Chen, S.; Stevens, S.; Wang, B.; Sun, H.; Su, Y. Mind2Web: Towards a Generalist Agent for the Web. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS 2023), 2023.

- Moura, J.; CrewAI Inc. CrewAI: Framework for Orchestrating Role-Playing, Autonomous AI Agents. 2024.

- Wu, Q.; Bansal, G.; Zhang, J.; Wu, Y.; Li, B.; Zhu, E.; Jiang, L.; Zhang, X.; Zhang, S.; Liu, J.; et al. Autogen: Enabling next-gen LLM applications via multi-agent conversations. In Proceedings of the First conference on language modeling, 2024.

- Li, X.; Jiao, W.; Jin, J.; Dong, G.; Jin, J.; Wang, Y.; Wang, H.; Zhu, Y.; Wen, J.R.; Lu, Y.; et al. Deepagent: A general reasoning agent with scalable toolsets. arXiv 2025, arXiv:2510.21618.

- Wang, X.; Li, B.; Song, Y.; Xu, F.F.; et al. OpenHands: An Open Platform for AI Software Developers as Generalist Agents. In Proceedings of the International Conference on Learning Representations (ICLR 2025), 2025.

- LangChain, Inc. LangGraph: Build Stateful, Multi-Actor Applications with LLMs. GitHub 2024.

- Anthropic. Claude Code: Agentic Coding Tool. 2025. (accessed on 2026-04-14).

- Greshake, K.; Abdelnabi, S.; Mishra, S.; Endres, C.; Holz, T.; Fritz, M. Not what you’ve signed up for: Compromising real-world llm-integrated applications with indirect prompt injection. In Proceedings of the 16th ACM Workshop on Artificial Intelligence and Security, 2023; pp. 79–90.

- Zhan, Q.; Liang, Z.; Ying, Z.; Kang, D. Injecagent: Benchmarking indirect prompt injections in tool-integrated large language model agents. Proc. Find. Assoc. Comput. Linguist. ACL 2024, 2024, 10471–10506.

- Ruan, Y.; Dong, H.; Wang, A.; Pitis, S.; Zhou, Y.; Ba, J.; Dubois, Y.; Maddison, C.J.; Hashimoto, T. Identifying the risks of lm agents with an lm-emulated sandbox. arXiv 2023, arXiv:2309.15817.

- Yuan, T.; He, Z.; Dong, L.; Wang, Y.; Zhao, R.; Xia, T.; Xu, L.; Zhou, B.; Li, F.; Zhang, Z.; et al. R-judge: Benchmarking safety risk awareness for llm agents. Proc. Find. Assoc. Comput. Linguist. EMNLP 2024, 2024, 1467–1490.

- Yadav, S. The Day Claude Code Deleted Our Production Database. In Medium, Coding Nexus; 2026.

- TechCrunch. A Meta AI Security Researcher Said an OpenClaw Agent Ran Amok on Her Inbox; TechCrunch, 2026.

- Merkel, D. Docker: Lightweight Linux Containers for Consistent Development and Deployment; Houston, TX, 2014; Vol. 2014.

- Young, E.G.; Zhu, P.; Caraza-Harter, T.; Arpaci-Dusseau, A.C.; Arpaci-Dusseau, R.H. The True Cost of Containing: A gVisor Case Study. In Proceedings of the 11th USENIX Workshop on Hot Topics in Cloud Computing (HotCloud 19), Renton, WA, 2019.

- Agache, A.; Brooker, M.; Florescu, A.; Iordache, A.; Liguori, A.; Neugebauer, R.; Piwonka, P.; Popa, D.M. Firecracker: Lightweight Virtualization for Serverless Applications. In Proceedings of the 17th USENIX Symposium on Networked Systems Design and Implementation (NSDI 20), Santa Clara, CA, 2020; pp. 419–434.

- Haas, A.; Rossberg, A.; Schuff, D.L.; Titzer, B.L.; Holman, M.; Gohman, D.; Wagner, L.; Zakai, A.; Bastien, J.F. Bringing the Web up to Speed with WebAssembly. In Proceedings of the 38th ACM SIGPLAN Conference on Programming Language Design and Implementation (PLDI 2017), Barcelona, Spain, 2017; pp. 185–200.

- Steinberger, P. OpenClaw: An Open-Source Autonomous AI Agent. GitHub 2025.

- Hua, W.; Yang, X.; Jin, M.; Li, Z.; Cheng, W.; Tang, R.; Zhang, Y. TrustAgent: Towards Safe and Trustworthy LLM-Based Agents. Proc. Find. Assoc. Comput. Linguist. EMNLP 2024, arXiv:2402.01586.

- Portia Labs. Portia: Build AI Agents You Can Trust in Regulated Environments. Software framework. 2025.

- Lin, W. Towards self-driving codebases. 2026. Available online: https://cursor.com/blog/self-driving-codebases.

- Perez, F.; Ribeiro, I. Ignore Previous Prompt: Attack Techniques For Language Models. In Proceedings of the ML Safety Workshop at NeurIPS 2022, 2022.

- Caldwell, S.; Harley, M.; Kouremetis, M.; Abruzzo, V.; Pearce, W. PentestJudge: Judging Agent Behavior Against Operational Requirements. arXiv 2025, arXiv:2508.02921.

- Wang, H.; Poskitt, C.M.; Sun, J. Agentspec: Customizable runtime enforcement for safe and reliable llm agents. arXiv 2025, arXiv:2503.18666.

- Xu, F.F.; Song, Y.; Li, B.; Tang, Y.; Jain, K.; Bao, M.; Wang, Z.Z.; Zhou, X.; Guo, Z.; Cao, M.; et al. Theagentcompany: benchmarking llm agents on consequential real world tasks. arXiv 2024, arXiv:2412.14161.

- Wang, X.; Chen, Y.; Yuan, L.; Zhang, Y.; Li, Y.; Peng, H.; Ji, H. Executable Code Actions Elicit Better LLM Agents (CodeAct). In Proceedings of the International Conference on Machine Learning (ICML 2024), 2024.

- Jain, N.; Shetty, M.; Zhang, T.; Han, K.; Sen, K.; Stoica, I. R2E: Turning any Github Repository into a Programming Agent Environment. In Proceedings of the International Conference on Machine Learning (ICML 2024); 2024; Vol. 235, Proceedings of Machine Learning Research; pp. 21196–21224.

- Hu, R.; Peng, C.; Wang, X.; Xu, J.; Gao, C. Repo2run: Automated building executable environment for code repository at scale. 2025.

- Bühler, C.; Biagiola, M.; Di Grazia, L.; Salvaneschi, G. Securing AI Agent Execution, 2025.

- Yuan, A.; Su, Z.; Zhao, Y. AEGIS: No Tool Call Left Unchecked–A Pre-Execution Firewall and Audit Layer for AI Agents. 2026.

- Errico, H.; Ngiam, J.; Sojan, S. Securing the Model Context Protocol (MCP): Risks, Controls, and Governance; 2025.

- Ursekar, V.; Shanker, A.; Chatrath, V.; Denton, S.; et al. VeRO: An Evaluation Harness for Agents to Optimize Agents. arXiv 2026, arXiv:2602.22480.

- Anthropic. Donating the Model Context Protocol and Establishing the Agentic AI Foundation. 2025. Available online: https://www.anthropic.com/news/donating-the-model-context-protocol-and-establishing-of-the-agentic-ai-foundation (accessed on 2026-04-13).

- IBM Research. Agent Communication Protocol (ACP). 2025. Available online: https://research.ibm.com/projects/agent-communication-protocol (accessed on 2026-04-13).

- LF AI & Data Foundation. ACP Joins Forces with A2A Under the Linux Foundation. 2025. Available online: https://lfaidata.foundation/communityblog/2025/08/29/acp-joins-forces-with-a2a-under-the-linux-foundations-lf-ai-data/ (accessed on 2026-04-13).

- Ehtesham, A.; Singh, A.; Gupta, G.K.; Kumar, S. A survey of agent interoperability protocols: Model context protocol (mcp), agent communication protocol (acp), agent-to-agent protocol (a2a), and agent network protocol (anp). arXiv 2025, arXiv:2505.02279.

- Anbiaee, Z.; Rabbani, M.; Mirani, M.; Piya, G.; Opushnyev, I.; Ghorbani, A.; Dadkhah, S. Security Threat Modeling for Emerging AI-Agent Protocols: A Comparative Analysis of MCP, A2A, Agora, and ANP. arXiv 2026, arXiv:2602.11327.

- Zhang, Y.; Shu, J.; Ma, Y.; Lin, X.; Wu, S.; Sang, J. Memory as action: Autonomous context curation for long-horizon agentic tasks. 2025.

- Asai, A.; Wu, Z.; Wang, Y.; Sil, A.; Hajishirzi, H. Self-RAG: Learning to Retrieve, Generate, and Critique Through Self-Reflection. In Proceedings of the International Conference on Learning Representations (ICLR 2024), 2024.

- Liu, N.F.; Lin, K.; Hewitt, J.; Paranjape, A.; Bevilacqua, M.; Petroni, F.; Liang, P. Lost in the Middle: How Language Models Use Long Contexts. Trans. Assoc. Comput. Linguist. 2024, 12, 157–173. arXiv:2307.03172.

- Patil, S.G.; Zhang, T.; Fang, V.; Huang, R.; Hao, A.; Casado, M.; Gonzalez, J.E.; Popa, R.A.; Stoica, I.; et al. Goex: Perspectives and designs towards a runtime for autonomous llm applications. arXiv 2024, arXiv:2404.06921.

- Lu, J.; Holleis, T.; Zhang, Y.; Aumayer, B.; Nan, F.; Bai, H.; Ma, S.; Ma, S.; Li, M.; Yin, G.; et al. Toolsandbox: A stateful, conversational, interactive evaluation benchmark for llm tool use capabilities. Proc. Find. Assoc. Comput. Linguist. NAACL 2025, 2025, 1160–1183.

- Lou, X.; Lázaro-Gredilla, M.; Dedieu, A.; Wendelken, C.; Lehrach, W.; Murphy, K.P. AutoHarness: improving LLM agents by automatically synthesizing a code harness. arXiv 2026, arXiv:2603.03329.

- Zeng, A.; Liu, M.; Lu, R.; Wang, B.; Liu, X.; Dong, Y.; Tang, J. AgentTuning: Enabling Generalized Agent Abilities for LLMs. Proc. Find. Assoc. Comput. Linguist. ACL 2024, arXiv:2310.12823.

- Li, M.; Zhao, Y.; Yu, B.; Song, F.; Li, H.; Yu, H.; Li, Z.; Huang, F.; Li, Y. Api-bank: A comprehensive benchmark for tool-augmented llms. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, 2023; pp. 3102–3116.

- Jia, Y.; Li, K. AutoTool: Graph-Based Tool Usage Index for Harness-Side Tool Selection. arXiv 2026, arXiv:2511.14650.

- Sigdel, S.; Baral, C. Schema First: Controlled Experiments on JSON Schema Validation at Harness Tool Registration Boundaries. arXiv 2026, arXiv:2603.13404.

- Wang, A.; Hager, S.; Asija, A.; Khashabi, D.; Andrews, N. Hell or High Water: Evaluating Agent Recovery from External Tool Failures. arXiv 2025, arXiv:2508.11027.

- Jia, J.; Deng, Z.; Chen, Z.; Wang, Y.; Zheng, Z. MAS-FIRE: Fault Injection and Reliability Evaluation for LLM-Based Multi-Agent Systems. 2026.

- Bhardwaj, V.P. Formal analysis and supply chain security for agentic ai skills. 2026.

- Baral, R. Guardrails as Infrastructure: Policy-First Control for Tool-Orchestrated Workflows. arXiv 2026, arXiv:2603.18059.

- Sawers, P. MCP’s Biggest Growing Pains for Production Use Will Soon Be Solved. The New Stack, 2026. (accessed on 2026-04-13).

- Nicoud, A. The Trends That Will Shape AI and Tech in 2026. IBM Think, 2026. (accessed on 2026-04-13).

- Kang, J.; Ji, M.; Zhao, Z.; Bai, T. Memory os of ai agent. 2025.

- Du, P. Comprehensive Survey of Memory Management for LLM Agents: The Write–Manage–Read Loop. arXiv 2026, arXiv:2603.07670.

- Sumers, T.R.; Yao, S.; Narasimhan, K.; Griffiths, T.L. Cognitive Architectures for Language Agents (CoALA). Trans. Mach. Learn. Res. 2024, arXiv:2309.02427.

- Mei, K.; et al. A survey of context engineering for large language models. 2025.

- Zylon.ai. What Is OpenClaw? A Practical Guide to the Agent Harness Behind the Hype. 2026. Available online: https://zylon.ai.

- Lee, K.H.; Chen, X.; Furuta, H.; Canny, J.; Fischer, I. A Human-Inspired Reading Agent with Gist Memory of Very Long Contexts. In Proceedings of the International Conference on Machine Learning (ICML 2024), 2024. [Google Scholar]

- Chhikara, P.; Khant, D.; Aryan, S.; Singh, T.; Yadav, D. Mem0: Building production-ready AI agents with scalable long-term memory. 2025. [Google Scholar]

- Li, Z.; Li, C.; Zhang, M.; Mei, Q.; Bendersky, M. Retrieval augmented generation or long-context LLMs? A comprehensive study and hybrid approach. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing: Industry Track, 2024; pp. 881–893. [Google Scholar]

- Xu, W.; Liang, Z.; Mei, K.; Gao, H.; Tan, J.; Zhang, Y. A-MEM: Graph-Structured Zettelkasten Memory for LLM Agents. arXiv 2025, arXiv:2502.12110. [Google Scholar]

- Maharana, A.; Lee, D.H.; Tulyakov, S.; Bansal, M.; Barbieri, F.; Fang, Y. Evaluating Very Long-Term Conversational Memory of LLM Agents. arXiv 2024, arXiv:2402.17753. [Google Scholar] [CrossRef]

- Wei, T.; Sachdeva, N.; Coleman, B.; et al. Evo-Memory: Benchmarking LLM Agent Test-time Learning with Self-Evolving Memory. arXiv 2025, arXiv:2511.20857. [Google Scholar]

- Zhou, A.; Yan, K.; Shlapentokh-Rothman, M.; Wang, H.; Wang, Y.X. Language Agent Tree Search Unifies Reasoning, Acting, and Planning in Language Models. arXiv 2023, arXiv:2310.04406. [Google Scholar] [CrossRef]

- Chen, L.; Tong, P.; Jin, Z.; Sun, Y.; Ye, J.; Xiong, H. Plan-on-graph: Self-correcting adaptive planning of large language model on knowledge graphs. Adv. Neural Inf. Process. Syst. 2024, 37, 37665–37691. [Google Scholar]

- Putta, P.; Mills, E.; Garg, N.; Motwani, S.; Finn, C.; Garg, D.; Rafailov, R. Agent Q: Advanced Reasoning and Learning for Autonomous AI Agents. arXiv 2024, arXiv:2408.07199. [Google Scholar] [CrossRef]

- Yu, X.; Peng, B.; Vajipey, V.; Cheng, H.; Galley, M.; Gao, J.; Yu, Z. ExACT: Teaching AI Agents to Explore with Reflective-MCTS and Exploratory Learning. arXiv 2024, arXiv:2410.02052. [Google Scholar]

- Hao, S.; Gu, Y.; Ma, H.; Hong, J.J.; Wang, Z.; Wang, D.Z.; Hu, Z. Reasoning with Language Model is Planning with World Model (RAP). In Proceedings of the Empirical Methods in Natural Language Processing (EMNLP 2023), 2023. [Google Scholar]

- Xie, Y.; Goyal, A.; Zheng, W.; Kan, M.Y.; Lillicrap, T.P.; Kawaguchi, K.; Shieh, M. Monte Carlo tree search boosts reasoning via iterative preference learning. arXiv 2024, arXiv:2405.00451. [Google Scholar]

- Bui, N.D. OPENDEV: Building AI Coding Agents for the Terminal: Scaffolding, Harness, Context Engineering. arXiv 2026, arXiv:2603.05344. [Google Scholar]

- Böckeler, B. Harness Engineering. 2026. Available online: https://martinfowler.com/articles/exploring-gen-ai/harness-engineering.html.

- Roucher, A.; Villanova del Moral, A.; Wolf, T.; von Werra, L.; Kaunismäki, E. smolagents: A Smol Library to Build Great Agentic Systems. 2025. Available online: https://github.com/huggingface/smolagents.

- Yu, G. AdaptOrch: Task-Adaptive Multi-Agent Orchestration in the Era of LLM Performance Convergence. arXiv 2026, arXiv:2602.16873. [Google Scholar]

- Zhang, J.; Xiang, J.; Yu, Z.; Teng, F.; Chen, X.; Chen, J.; Zhuge, M.; Cheng, X.; Hong, S.; Wang, J.; et al. AFlow: Automating agentic workflow generation. arXiv 2024, arXiv:2410.10762. [Google Scholar] [CrossRef]

- Ruan, J.; et al. Automating Sub-Agent Creation for Agentic Orchestration. arXiv 2026, arXiv:2602.03786. [Google Scholar] [CrossRef]

- Li, A.; Xie, Y.; Li, S.; Tsung, F.; Ding, B.; Li, Y. Agent-Oriented Planning in Multi-Agent Systems. In Proceedings of the International Conference on Learning Representations (ICLR 2025), 2025. [Google Scholar]

- Huang, S.; Zhao, Z.; Zhu, Y.; Zhao, D. Adaptive Multi-Agent Coordination among Different Team Attribute Tasks via Contextual Meta-Reinforcement Learning. 2024. [Google Scholar]

- Zheng, L.; Chen, J.; et al. Byzantine Fault Tolerance in Multi-Agent LLM Systems: Failure Propagation and Suppression. arXiv 2026, arXiv:2511.10400. [Google Scholar]

- Syros, G.; Suri, A.; Ginesin, J.; Nita-Rotaru, C.; Oprea, A. SAGA: A security architecture for governing AI agentic systems. 2025. [Google Scholar] [CrossRef]

- Hashimoto, M. My AI Adoption Journey. Personal blog. 2026. (accessed on 2026-04-13).

- Harbor Framework Team. Harbor: A Framework for Evaluating and Optimizing Agents and Models in Container Environments. 2026. Available online: https://github.com/harbor-framework/harbor.

| Survey | Research Object | Analysis Level | Core Contribution | Overlap with This Survey |
| --- | --- | --- | --- | --- |
| Wang et al. [54] | Agent capabilities: memory, planning, tool use, action | Model-level decomposition | Foundational taxonomy of agent functional modules | Describes what components do; does not address runtime governance |
| Xi et al. [55] | Agent application landscape | Application-level survey | Comprehensive map of agent deployment domains | Documents where agents are used; largely silent on execution infrastructure |
| Mohammadi et al. [34] | Evaluation methodology and benchmark design | Benchmark-level analysis | Taxonomy of metrics, failure modes, evaluation protocols | Treats evaluation as external measurement; this survey treats it as an infrastructure component (V) |
| Guo et al. [56] | Multi-agent coordination: roles, communication, collaboration | System-level survey | Taxonomy of multi-agent coordination patterns | Addresses how agents coordinate; this survey addresses what infrastructure makes coordination reliable |
| Gao et al. [62] | Agent self-improvement: skill evolution, self-training, adaptive infrastructure | Model-evolution-level analysis | Taxonomy of how agents update capabilities over time | Addresses how agents evolve; this survey addresses what infrastructure makes agents run reliably |
| This survey | Agent execution harness: runtime governance infrastructure | Infrastructure-level analysis | Formal definition, historical lineage, completeness taxonomy, cross-cutting challenge analysis | — |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).