4.1. The Classification Problem

Any taxonomy of agent harness systems must resolve a prior question: what dimension of variation is being classified? The existing ecosystem includes systems that differ along at least three independent axes—functional completeness (how many of the six governance components they implement), domain specialization (whether they are designed for general or specific task types), and stack position (whether they operate as runtime environments, development tools, or capability components). We adopt a two-dimensional classification: stack position as the primary dimension and domain scope as the secondary dimension. This organization reflects the question practitioners most frequently ask: “Is this system something I can deploy directly, or something I build with?”

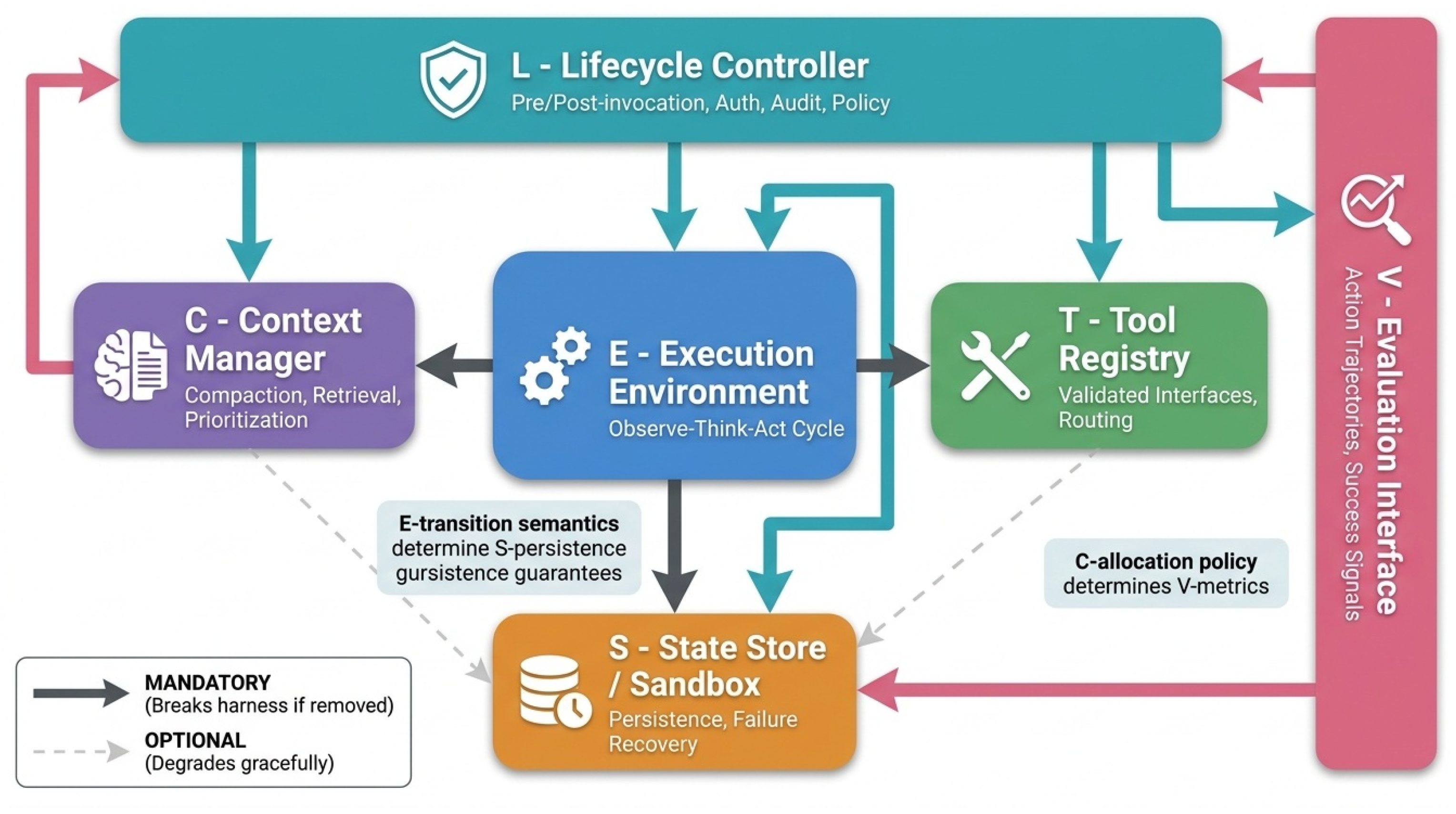

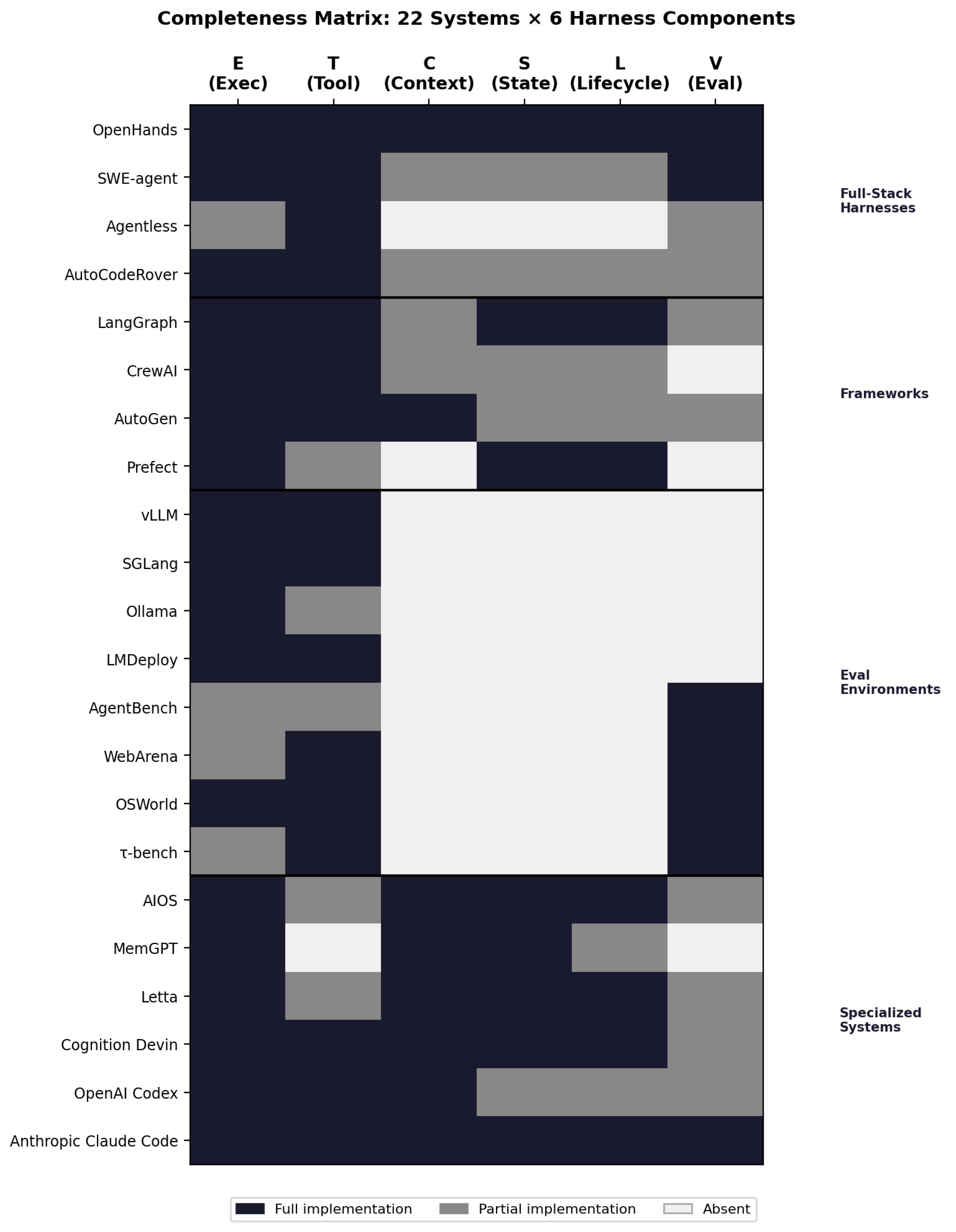

Figure 11 presents the completeness matrix as a heatmap across 22 systems and the six harness components (E, T, C, S, L, V). Each cell encodes one of three states: full implementation (dark), partial implementation (medium), or absent (light). Reading the heatmap by row reveals each system’s architectural profile: the uniformly dark rows of full-stack harnesses contrast sharply with the sparse coverage of frameworks (dark only in the E and T columns) and the inverse sparseness of capability modules (dark only in C and S). Reading by column reveals which governance functions are most frequently under-implemented across the ecosystem: the V column (evaluation interface) and L column (lifecycle hooks) exhibit the highest proportion of partial or absent implementations, indicating that evaluation instrumentation and lifecycle governance are the most systematically neglected harness functions. The security model column, shown separately to the right of the six-component block, shows a bimodal distribution: full-stack harnesses cluster at high isolation (MicroVM or Container), while frameworks and modules uniformly show “None”, a gap that the security analysis in §5.1 explains in structural terms.

4.1.1. System Selection Methodology

This survey analyzes 22 agent harness systems, but the selection process itself requires explicit justification. We adopt a structured expert survey approach rather than a PRISMA-compliant systematic review, reflecting the nascency of the agent harness literature and the prevalence of grey literature (practitioner reports, developer blogs, GitHub repositories) as primary sources.

Inclusion criteria. Systems are included if: (1) they implement publicly documented architectures; (2) they instantiate at least three of the six harness components (E, T, C, S, L, V); and (3) they are accessible to researchers through source code, official documentation, or published academic descriptions. This threshold of “≥3 components” operationalizes the distinction between agent infrastructure and general-purpose application frameworks: a system implementing only E and T is a workflow orchestrator, not an agent harness. Systems are categorized by their primary design intent (full-stack harness, framework, module, or evaluation infrastructure), but component adoption is the technical criterion for inclusion.
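
The component threshold in criterion (2) can be expressed as a simple predicate. The sketch below is illustrative only: the rating labels, the function names, and the choice to count partial implementations toward the threshold are assumptions, since the criterion does not state whether a partial implementation counts as instantiating a component.

```python
from typing import Dict

COMPONENTS = ("E", "T", "C", "S", "L", "V")


def instantiated_components(ratings: Dict[str, str]) -> int:
    """Count components rated "full" or "partial" (assumption: partial counts)."""
    return sum(
        1 for c in COMPONENTS if ratings.get(c, "absent") in ("full", "partial")
    )


def meets_inclusion_threshold(ratings: Dict[str, str], minimum: int = 3) -> bool:
    """Apply the ">=3 components" inclusion criterion to one system's ratings."""
    return instantiated_components(ratings) >= minimum


# Hypothetical profiles, not ratings of any surveyed system.
workflow_orchestrator = {"E": "full", "T": "full"}
candidate_harness = {"E": "full", "T": "full", "S": "partial"}

print(meets_inclusion_threshold(workflow_orchestrator))  # E and T only
print(meets_inclusion_threshold(candidate_harness))      # E, T, and partial S
```

Under these assumptions the E-and-T-only profile falls below the threshold, matching the workflow-orchestrator example in the text.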

Search strategy. Systems were identified through four complementary pathways: (1) systematic search of academic literature from ACL, NeurIPS, ICLR, ICML, and AAAI conferences published between January 2023 and March 2026, with keyword queries combining “agent,” “harness,” “orchestration,” “framework,” and “orchestration infrastructure”; (2) snowball sampling from references in agent surveys and position papers (Wang et al., 2024; Si et al., 2024; Tavčar et al., 2024); (3) systematic GitHub repository enumeration filtering for repositories with publication history and ≥ 500 stars within the search window, applying labels “agent,” “agent-framework,” “agentic,” and “autonomous-agent”; and (4) documented practitioner deployments from practitioner reports, engineering blog posts, and industry white papers published by major organizations (Anthropic, OpenAI, Google, DeepMind, Microsoft, Meta).

Time window and scope. The survey spans January 2023 through March 2026, marking the period from the emergence of ReAct (Yao et al., 2023) through the current landscape. This window captures the rapid proliferation of agent architectures following the release of GPT-4 in March 2023 and Claude 3 in March 2024.

Exclusion criteria. We exclude: (1) proprietary enterprise-internal systems without published documentation (limiting us to systems with public architectures); (2) single-component libraries (e.g., pure memory packages like chromadb, pure tool-use packages like tool_use implementations in LLM SDKs) that do not cross the ≥3-component threshold; and (3) early-stage prototypes without public deployment or documentation at time of writing. The first exclusion criterion introduces a systematic bias: the most mature real-world agent harnesses are typically enterprise-internal (e.g., internal orchestration systems at Anthropic, Google, Meta), and this survey captures only what is publicly documentable. This limitation is acknowledged and addressed in the directions section.

Assessment confidence. For open-source systems, component assessments reflect code-backed evidence and are high-confidence. For closed-source systems, assessments rely on official documentation and published specifications, introducing inherent limitations: public documentation may understate or overstate implementation sophistication. For these systems, we treat component ratings as claims about public architectural description, not certainty about deployed reality.

4.1.2. Coding Methodology for the Completeness Matrix

Before presenting the matrix, we make our classification methodology explicit. Each component rating was determined through a three-source verification process: (1) primary documentation review—official documentation, API specifications, and architectural descriptions published by the system’s developers; (2) open-source code inspection where available (applicable to LangGraph, AutoGen, OpenHands, AIOS, MemGPT, SWE-agent, Voyager, and all evaluation infrastructure systems); and (3) published academic papers where available (MemGPT, AIOS, CAMEL, MetaGPT, and evaluation systems). In cases of disagreement across sources, official documentation was treated as authoritative; where ambiguity persisted after consulting all three sources, the component is annotated [D] (disputed) in the extended data. For closed-source systems (Claude Code, DeepAgents, Browser-Use), ratings derive from official documentation, public API specifications, and published blog posts; documentation may overstate deployed sophistication, and we acknowledge this risk explicitly.
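
One possible reading of this resolution rule is sketched below. It is an interpretation, not the coding procedure actually used: the function name, the use of `None` for a source that is silent or ambiguous, and the rule that a component is marked disputed only when documentation is silent and the remaining sources fail to agree are all assumptions.

```python
from typing import Optional, Tuple


def resolve_rating(
    documentation: Optional[str],
    code: Optional[str] = None,
    paper: Optional[str] = None,
) -> Tuple[Optional[str], bool]:
    """Return (rating, disputed) for one component of one system.

    Each argument is "full", "partial", "absent", or None when that
    source is unavailable or ambiguous (hypothetical encoding).
    """
    # Official documentation is authoritative whenever it gives a rating.
    if documentation is not None:
        return documentation, False
    # Otherwise fall back to the remaining sources if they agree.
    others = {r for r in (code, paper) if r is not None}
    if len(others) == 1:
        return others.pop(), False
    # No source settles the question: annotate as [D] (disputed).
    return None, True


print(resolve_rating("full", code="partial"))
print(resolve_rating(None, code="full", paper="partial"))
```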

This matrix is an analytical framework for surfacing recurring architectural patterns, not an ontological claim about system capabilities or a normative judgment of any system’s completeness. Classifying a system as lacking a given component reflects the state of its public documentation at time of writing, not a claim that the capability could not exist internally or in future releases. Future meta-analyses should treat ratings for closed-source systems as “at least partial” rather than definitive.

4.2. Harness Completeness Matrix

Figure 12: Harness Architecture Taxonomy Tree. A hierarchical classification tree showing how systems cluster into three architectural families based on their §4 completeness profile. The top-level distinction separates full-stack harnesses (E, T, C, S, L, V all substantially implemented) from frameworks (E and T implemented, C/S/L/V often partial) and capability modules (C, S implemented, others minimal). The second level distinguishes frameworks by deployment context: orchestration frameworks (LangGraph, Prefect) implement L and E separately, while inference frameworks (vLLM, SGLang) implement E and T but delegate L to the calling application. Examples of each class are placed at leaf nodes, showing system name and reference. This taxonomic organization reveals that systems do not fall on a single “completeness spectrum”; rather, they optimize for different use cases by excising different components, resulting in qualitatively different architectural patterns.

The following matrix maps each system against the six harness components defined in Section 2, plus security model and multi-agent support:

Table 4.

Harness Completeness Matrix: 22 Systems × 6 Components. Each system rated on E, T, C, S, L, V plus security model and multi-agent support. Full (✓), partial (∼), or absent (×).


| System | E | T | C | S | L | V | Security | MA | Category |
|---|---|---|---|---|---|---|---|---|---|
| DeepAgents | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | MicroVM | ✓ | Full-Stack |
| Claude Code | ✓ | ✓ | ✓ | ✓ | ✓ | ∼ | Sandbox | × | Full-Stack |
| OpenClaw | ✓ | ✓ | ✓ | ✓ | ✓ | ∼ | Container | ✓ | Full-Stack |
| DeerFlow | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | Container | ✓ | Full-Stack |
| OpenHands | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | Container | ✓ | Full-Stack |
| AIOS | ✓ | ✓ | ✓ | ✓ | ✓ | ∼ | Process | ✓ | Full-Stack |
| SWE-agent | ✓ | ✓ | ∼ | ✓ | ∼ | ✓ | Container | × | Specialized |
| Browser-Use | ✓ | ✓ | ∼ | × | × | ∼ | MicroVM | × | Specialized |
| RAI | ✓ | ✓ | ∼ | ∼ | ∼ | ∼ | Physical | ∼ | Specialized |
| PortiaAI | ✓ | ✓ | ∼ | ✓ | ✓ | ∼ | Container | × | Specialized |
| TrustAgent | ✓ | ✓ | ∼ | ∼ | ✓ | ∼ | Policy | × | Specialized |
| LangGraph | ∼ | ∼ | × | × | × | × | None | ∼ | Framework |
| LlamaIndex | ∼ | ✓ | ✓ | ∼ | × | × | None | × | Framework/Module |
| AutoGen | ∼ | ∼ | ∼ | ∼ | ∼ | × | None | ✓ | Framework |
| Google ADK | ∼ | ∼ | ∼ | ∼ | ∼ | × | ∼ | ✓ | Framework |
| CrewAI | ∼ | ∼ | ∼ | ∼ | × | × | None | ✓ | Framework |
| MemGPT | × | × | ✓ | ✓ | × | × | ∼ | × | Module |
| MCP Servers | × | ✓ | × | × | × | × | ∼ | × | Module |
| Voyager | × | ∼ | × | ✓ | × | × | None | × | Module |
| HAL | ✓ | ∼ | ∼ | ✓ | ✓ | ✓ | VM | ✓ | Eval Infra |
| AgencyBench | ✓ | ∼ | ∼ | ✓ | ∼ | ✓ | Container | ✓ | Eval Infra |
| SkillsBench | ✓ | ∼ | ∼ | ∼ | ∼ | ✓ | Container | × | Eval Infra |
| Harbor (TB2) |  | ∼ | × | ∼ | ∼ |  | Container | × | Eval Infra |

Legend: ✓ Full implementation | ∼ Partial | × Absent

Matrix methodology. Component ratings were determined through a three-source process: (1) primary documentation review (official documentation, API specifications, and published architectural descriptions); (2) open-source code inspection where available (applicable to LangGraph, AutoGen, OpenHands, AIOS, MemGPT, SWE-agent, Voyager, and all evaluation infrastructure systems); and (3) published academic papers where available (applicable to MemGPT, AIOS, CAMEL, MetaGPT, and evaluation systems). For closed-source systems (Claude Code, DeepAgents, Browser-Use), ratings were derived from official documentation, public API specifications, published blog posts, and, where available, academic papers authored by the developers. For these systems, closed-source assessment introduces an inherent limitation: documentation may overstate implementation sophistication relative to deployed reality. We acknowledge this risk explicitly and recommend that future meta-analyses treat closed-source component ratings as “at least partial” rather than definitive. The V-component rating for Claude Code (∼ Partial) reflects documented trajectory logging but no publicly documented standardized evaluation interface that is compatible with external benchmark frameworks; this may understate its internal evaluation tooling, which is not publicly described.

Three patterns in this matrix deserve attention. The five full-stack harnesses cluster distinctly—all implement E, T, C, S, L fully, diverging only on V and security model. This clustering validates the category: full-stack harnesses form a coherent class that is not an artifact of our definition. The framework row shows a characteristic profile: partial E and T, absent or minimal C, S, L, V—confirming that frameworks solve the what of agent architecture but not the how of reliable execution. The module row shows the inverse: strong on specific governance functions but absent on orchestration. Modules are the building blocks that full-stack harnesses assemble.
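
The row-wise and column-wise readings of the matrix can be reproduced mechanically. The sketch below encodes a five-row excerpt of Table 4 (the single-letter encoding and the function names are illustrative assumptions) and counts, per component, how many systems fall short of a full implementation.

```python
from typing import Dict, List

COMPONENTS: List[str] = ["E", "T", "C", "S", "L", "V"]

# Excerpt of Table 4, one letter per component in E, T, C, S, L, V order.
# "F" = full, "P" = partial, "A" = absent (hypothetical encoding).
MATRIX_EXCERPT: Dict[str, str] = {
    "DeepAgents": "FFFFFF",
    "Claude Code": "FFFFFP",
    "SWE-agent": "FFPFPF",
    "LangGraph": "PPAAAA",
    "MemGPT": "AAFFAA",
}


def row_profile(system: str) -> Dict[str, str]:
    """Row reading: one system's rating for each component."""
    return dict(zip(COMPONENTS, MATRIX_EXCERPT[system]))


def non_full_counts() -> Dict[str, int]:
    """Column reading: number of systems rated partial or absent per component."""
    counts = {c: 0 for c in COMPONENTS}
    for ratings in MATRIX_EXCERPT.values():
        for component, rating in zip(COMPONENTS, ratings):
            if rating != "F":
                counts[component] += 1
    return counts


print(row_profile("LangGraph"))
print(non_full_counts())
```

On this excerpt the L and V columns carry the most non-full ratings, but five rows are too few to support any ecosystem-wide conclusion; the same functions would need the complete table as input.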

4.3. Taxonomy

The ecosystem divides into five categories. Full-Stack Harnesses implement all six governance components with production-grade reliability. The five systems in this category (DeepAgents, Claude Code, OpenClaw, DeerFlow, and OpenHands) differ primarily in design philosophy and target context. DeepAgents emphasizes context engineering and sub-agent orchestration. Claude Code is a closed-source harness optimized for software engineering tasks, notable as the only major commercial system to explicitly self-identify as an “agent harness” in its official engineering documentation.6 OpenClaw introduces a skill marketplace architecture. DeerFlow implements a hierarchical multi-agent pipeline with explicit role separation. OpenHands provides the most comprehensive evaluation integration, making it the primary platform for agent research benchmarking in software engineering.

6 [Practitioner Report] Anthropic. (2026). Demystifying evals for AI agents. Anthropic Engineering Blog. (Non-peer-reviewed official documentation.)

OpenClaw represents the most complete open-source implementation of the harness concept in current deployment. Its architecture directly instantiates the five necessary conditions identified in §2: a persistent execution loop governed by event triggers (heartbeats, webhooks, and scheduled jobs); a tool management layer built on a skill registry with native MCP integration; a three-tier memory architecture that separates working memory, retrieved memory, and long-term distilled memory (embodied in its MEMORY.md convention and session context management); a sub-agent spawning mechanism enabling dynamic multi-agent coordination; and, as of March 2026, an external runtime security layer—PRISM (Li et al., arXiv:2603.11853, 2026)—that distributes enforcement across ten lifecycle hooks without forking the host framework. PRISM’s zero-fork architecture, implementing a hybrid heuristic-plus-LLM scanning pipeline with session-scoped risk accumulation, represents the first systematic published treatment of production runtime security for a deployed, open-source agent harness. The coexistence of OpenClaw as a production system and PRISM as the first openly documented production runtime security layer for an open-source agent harness marks a new phase in harness research: practitioner-scale deployment generating academic infrastructure that feeds back into the research community, closing the loop between empirical harness engineering and systematic academic analysis.

General Frameworks provide construction primitives for agent logic but delegate runtime governance to the deployer. LangGraph’s DAG-based execution graphs give developers explicit control over agent flow but implement no context management or security model. AutoGen’s role-based conversation management handles multi-agent coordination but treats state persistence and evaluation as the developer’s responsibility. Google ADK integrates the A2A protocol natively, positioning itself as the standard harness for agents that must communicate across organizational boundaries.

LlamaIndex occupies a position analogous to LangChain in the harness taxonomy: strong T and C component support through its retriever and query engine abstractions, but without the L and V components that would elevate it to full harness status. Its E-component is partial, realized through the QueryPipeline workflow orchestration interface, which provides DAG-style task sequencing without the execution loop semantics (error recovery, termination conditions, state machine formalization) that distinguish a harness E-component from a framework primitive. Its S-component is likewise partial, supported through storage integrations (vector stores, docstores, index stores) that provide persistence for retrieval artifacts but do not constitute a general-purpose cross-session state store. LlamaIndex’s primary contribution to the harness ecosystem is as a C-component provider: a sophisticated context assembly layer that many full-stack harnesses (including LangGraph-based systems) integrate as a retrieval backend rather than implement natively. The retriever, query engine, and response synthesizer abstractions constitute what is arguably the most mature off-the-shelf C-component subsystem in the open-source ecosystem; harness builders adopting LlamaIndex as a C-component dependency gain substantial context management sophistication without implementing it from scratch.

The practitioner harness engineering movement has produced a recognizable category of “harness-first” production systems that sit at the “full harness” end of the ETCSLV completeness spectrum, distinguished from “model-first” systems by the primacy given to environmental specification and architectural constraint over model selection. The OpenAI Codex harness exemplifies this category: its architecture enforces a linter-validated six-layer dependency ordering (Types → Config → Repo → Service → Runtime → UI) through Codex-generated structural tests, wires Chrome DevTools Protocol directly into the agent runtime for DOM-level environment access, and exposes LogQL and PromQL to Codex for live observability of its own execution environment (Lopopolo, 2026) [Practitioner report]. Stripe’s Minions Blueprint architecture is a second exemplar: deterministic harness nodes govern CI/linting and PR templating; agentic nodes handle implementation; a harness-enforced maximum of two CI rounds before escalation to human review implements a policy decision at the infrastructure layer rather than delegating it to model judgment; and each agent instance receives its own devbox environment, which spins up in under ten seconds (Gray, 2026) [Practitioner report]. These systems instantiate all six ETCSLV components (execution environment isolation, curated tool registries, context management via AGENTS.md conventions, persistent state per agent instance, lifecycle hooks enforcing deterministic steps, and automated CI evaluation), and their performance characteristics are a product of harness architecture as much as model capability.
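
The two-round CI cap illustrates how a policy can live in the harness rather than in the model. The following is a minimal sketch of that idea, not Stripe’s implementation: the function names, the callback signatures, and the returned status strings are hypothetical.

```python
from typing import Callable

MAX_CI_ROUNDS = 2  # cap stated in the text; enforced by the harness, not the model


def run_with_ci_cap(
    implement: Callable[[int], None],
    run_ci: Callable[[], bool],
    max_rounds: int = MAX_CI_ROUNDS,
) -> str:
    """Alternate an agentic step and a deterministic CI step up to a fixed cap."""
    for round_number in range(1, max_rounds + 1):
        implement(round_number)  # agentic node: model writes or revises code
        if run_ci():             # deterministic node: lint and test
            return "open_pr"
    # The cap is reached regardless of what the model would prefer to do next.
    return "escalate_to_human"


# Usage with stand-in callbacks: CI fails on the first round and passes on the second.
attempts = []
ci_results = iter([False, True])
outcome = run_with_ci_cap(attempts.append, lambda: next(ci_results))
print(outcome, attempts)
```

Because the loop bound is a harness constant, the agent cannot extend its own retry budget; changing the policy means changing infrastructure configuration rather than a prompt.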

Specialized Harnesses implement full or near-full governance for a specific domain. SWE-agent couples the Agent-Computer Interface (ACI) interaction model with a container-based execution environment for repository manipulation. Browser-Use, with 87K+ GitHub stars, provides a complete execution environment for web navigation and deliberately omits persistent state and lifecycle hooks, as appropriate for stateless tasks. RAI extends the harness concept to physical robotic systems via ROS2, where the tool registry maps to sensor and actuator interfaces.

Capability Modules implement one or two governance functions exceptionally well and are designed for integration into harnesses rather than standalone deployment. MemGPT’s virtual context management is the strongest available C-component implementation. MCP servers constitute the standard T-component interface. Voyager’s skill library represents a validated architecture for the S-component—storing not raw interaction history but abstracted, reusable capabilities.

Three additional multi-agent systems—Concordia (Vezhnevets et al., 2023), Mixture-of-Agents (Wang et al., 2024), and AgentVerse (Chen et al., 2023)—occupy the capability module category with a multi-agent specialization. Concordia (DeepMind) provides a simulation substrate for LLM agents acting in physical, social, or digital spaces; its shared Associative Memory component enables persistent state synchronization across agent ensembles, addressing the multi-agent S-component challenge of cross-agent state consistency without requiring a centralized harness. Mixture-of-Agents (MoA) structures agent collaboration as a layered pipeline in which proposer agents generate candidate answers and aggregator agents synthesize them across rounds; its collective intelligence effect—demonstrating that iterative cross-agent synthesis outperforms any single agent—establishes a motivating case for the harness interoperability work discussed in §7 Direction 4 (Protocol Interoperability). AgentVerse introduces dynamic agent recruitment, where the agent ensemble composition adapts to task demands at runtime; this requires harness-level agent registry management analogous to dynamic tool registration in the T-component, but applied to agents rather than tools.

Two memory-focused capability modules warrant explicit inclusion: MemoryBank (Zhong et al., 2023) and Agent Workflow Memory (Wu et al., 2024). MemoryBank implements an Ebbinghaus-inspired forgetting curve that governs when memories are retained versus decayed, providing the first principled long-term memory update mechanism for LLM agents and directly addressing the memory bloat problem identified in §6.3.4. Agent Workflow Memory (AWM) takes a different approach: rather than storing individual experiences, AWM induces reusable workflow abstractions from past task trajectories, then retrieves and executes appropriate workflows for new tasks. AWM’s strong results on Mind2Web (+14.9% success) and WebArena (+8.9% success) demonstrate that procedural memory—not just episodic memory—is a first-class S-component design concern.
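
A forgetting-curve policy of the kind described for MemoryBank can be sketched as follows. The sketch assumes the exponential Ebbinghaus form R = exp(-t / S), with strength S reinforced on recall; the class name, the time unit, the reinforcement increment, and the pruning threshold are illustrative assumptions rather than details taken from the text.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class MemoryItem:
    text: str
    strength: float = 1.0      # S: grows each time the memory is recalled
    elapsed_days: float = 0.0  # t: time since the last recall

    def retention(self) -> float:
        """Ebbinghaus-style retention R = exp(-t / S)."""
        return math.exp(-self.elapsed_days / self.strength)

    def recall(self) -> None:
        """Recalling a memory reinforces it and resets its clock."""
        self.strength += 1.0
        self.elapsed_days = 0.0


def prune(items: List[MemoryItem], threshold: float = 0.2) -> List[MemoryItem]:
    """Keep only memories whose retention is still at or above the threshold."""
    return [m for m in items if m.retention() >= threshold]


weak = MemoryItem("mentioned once", strength=1.0, elapsed_days=2.0)
strong = MemoryItem("recalled before", strength=2.0, elapsed_days=2.0)
print([m.text for m in prune([weak, strong])])
```

With the same elapsed time, the reinforced memory survives pruning while the unreinforced one decays below the threshold, which is the mechanism that bounds memory growth.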

Evaluation Infrastructure constitutes a distinct category: these systems require substantial harness engineering to assess other agents, while not being deployed as production operating environments. The breadth of this category is underappreciated—by our count, at least twelve distinct evaluation infrastructure systems have been deployed with production-grade harness engineering, representing a de facto specialization of the harness concept for assessment rather than task execution. HAL (Kapoor et al., 2026) represents the current state of the art: a standardized evaluation harness that orchestrates parallel evaluations across hundreds of VMs, conducting 21,730 rollouts across nine benchmarks to demonstrate what robust infrastructure can achieve. AgencyBench (Li et al., 2026) extends this with cross-harness evaluation capability, simultaneously running agents in their native and foreign harness environments to isolate the harness–model coupling effect. SkillsBench (Li et al., 2026) deploys seven agent-model configurations across 7,308 trajectories using a model-agnostic harness (based on Harbor) to separate skill effects from harness effects—a methodology that represents the field’s most rigorous approach to controlled cross-harness comparison. Terminal-Bench 2.0’s Harbor framework provides containerized reproducible execution for CLI-focused tasks. SWE-bench’s evaluation harness orchestrates test execution against real GitHub repositories with explicit sandboxing to prevent contamination. OSWorld’s evaluation harness must manage screenshot capture, action injection, and state verification across live GUI environments—the most technically demanding evaluation substrate currently in operation. AgentBench (Liu et al., 2023) coordinates eight parallel evaluation environments ranging from operating system tasks to database manipulation, requiring a multi-environment orchestration harness distinct from any single-domain evaluation system. 
WorkArena (Drouin et al., 2024) introduces BrowserGym—a unified browser environment interface providing standardized observations and actions for web-based agent evaluation—that functions as a harness-level abstraction over live web applications, making it directly comparable to the environment-standardization role that OpenAI Gym played for RL. InterCode (Yang et al., 2023) provides a Docker-containerized interactive coding benchmark in which code is the action space and execution output is the observation; its containerized environment design is a direct model for harness code-execution sandbox architecture, combining state persistence across turns with execution isolation and scripted reward functions. The emerging consensus across these systems is that evaluation infrastructure is not merely a testing concern but a scientific infrastructure problem: the reliability of the field’s capability claims is bounded by the reliability of the evaluation harnesses producing them.

4.3.1. The Evaluation Infrastructure Gap: Twelve Systems and the State of the Art

The depth of engineering investment in evaluation infrastructure is underappreciated precisely because these systems are classified as “benchmarks” in academic discourse, which obscures their character as harness-engineering achievements. We enumerate the twelve major evaluation infrastructure systems in the corpus and characterize the specific harness engineering innovation that each represents.

SWE-bench (Jimenez et al., ICLR 2024): The innovation is reproducible repository state management—orchestrating test execution against 2,294 real GitHub repositories, each pinned to a specific commit hash and associated with a specific failing test that the agent must fix. The harness must check out the repository, install dependencies, run tests before and after agent intervention, and compare test output to determine whether the fix is valid. The challenge is dependency management at scale: each repository has distinct dependency trees that must be isolated from each other and from the host environment.
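
The before-and-after comparison can be sketched as a predicate over two test runs. This is a simplified illustration, not the SWE-bench harness code: the function name and the dictionary encoding of test results are hypothetical, and the requirement that previously passing tests keep passing is an assumption added here to make the validity check concrete.

```python
from typing import Dict, Set


def fix_is_valid(
    before: Dict[str, bool],
    after: Dict[str, bool],
    target_tests: Set[str],
) -> bool:
    """Decide whether an agent's patch resolves the task.

    `before` and `after` map test names to pass (True) or fail (False),
    recorded before and after the agent's intervention.
    """
    if not target_tests:
        return False
    # Every target test must have failed before the patch and pass after it.
    targets_fixed = all(
        before.get(t) is False and after.get(t) is True for t in target_tests
    )
    # Assumption: tests that passed before must still pass (no regressions).
    no_regressions = all(
        after.get(t) is True for t, passed in before.items() if passed
    )
    return targets_fixed and no_regressions


before_run = {"test_bug": False, "test_existing": True}
print(fix_is_valid(before_run, {"test_bug": True, "test_existing": True}, {"test_bug"}))
print(fix_is_valid(before_run, {"test_bug": True, "test_existing": False}, {"test_bug"}))
```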

OSWorld (Xie et al., NeurIPS 2024): The innovation is live GUI environment management—managing screenshot capture, action injection via platform-specific automation APIs (xdotool on Linux, AppleScript on macOS, UIAutomation on Windows), and state verification across arbitrary applications. The V-component must parse raw pixel arrays into semantically meaningful success signals, a challenge that HAL’s LLM-aided log inspection only partially addresses.

WebArena (Zhou et al., ICLR 2024): The innovation is self-hosted live environment deployment—running four complete web applications (shopping site, code repository, social forum, corporate wiki) as Docker containers with scripted initial state, enabling ecological validity without external service dependency. The harness manages container lifecycle, inter-container networking, user account state across 812 tasks, and action injection via Playwright browser automation.

Mind2Web (Deng et al., NeurIPS 2023): The innovation is web interaction annotation at scale—3,500+ annotated action sequences across 137 real websites, with offline snapshots enabling reproducible evaluation without live environment dependency. The harness challenge is snapshot fidelity: JavaScript-rendered content requires full browser execution to reproduce, and snapshot-based harnesses must decide how much rendering to include in the archived snapshot.

AgentBench (Liu et al., NeurIPS 2023): The innovation is multi-environment orchestration—simultaneously managing eight structurally different environments through a unified evaluation interface. The harness abstraction layer must normalize eight different observation schemas, eight different action schemas, and eight different success criteria into comparable evaluation records.

GAIA (Mialon et al., 2023): The innovation is difficulty calibration via human annotation—each GAIA task is accompanied by a human solution time estimate and a tool-use annotation indicating which tools the human required. The harness infrastructure manages multi-tool execution (web search, Python REPL, file processing) while recording which tools the agent used and comparing usage patterns to the human-annotated expected pattern.

WorkArena/BrowserGym (Drouin et al., 2024): The innovation is enterprise software integration—deploying a fully functional ServiceNow instance as the evaluation substrate, populating it with realistic enterprise data, and defining 33 knowledge worker tasks that require navigating real enterprise workflows. BrowserGym’s standardized observation and action format is designed for reuse across web agent benchmarks, representing the first step toward a shared evaluation harness for the web domain.

InterCode (Yang et al., NeurIPS 2023): The innovation is interactive code execution with state persistence—a Docker-containerized benchmark in which each task is a multi-turn interaction with a Bash, SQL, or Python interpreter, where each agent action changes the interpreter state and subsequent observations reflect that accumulated state. The harness must manage Docker lifecycle, state snapshotting for rollback, and programmatic reward computation from execution output.

HAL (Kapoor et al., ICLR 2026): The innovation is infrastructure standardization—a single evaluation harness that can run nine structurally different benchmarks through a common execution environment, enabling fair cross-benchmark comparison by controlling for harness variation. The 2.5 billion token log archive is a secondary innovation: it enables post-hoc behavioral analysis at a scale and richness that individual benchmark datasets cannot support.

AgencyBench (Li et al., 2026): The innovation is cross-harness experimental design—simultaneously running the same agent in its native ecosystem and in an independent model-agnostic harness, enabling controlled measurement of the harness coupling effect. This is the first evaluation infrastructure explicitly designed to measure the harness as an experimental variable rather than as a controlled constant.

SkillsBench (Li et al., 2026): The innovation is skill effect isolation—separating the contribution of the harness’s skill library from the contribution of the underlying model by running the same model with and without skill augmentation across 86 tasks. The 7,308 trajectory corpus, produced through a standardized Harbor-based harness, is the largest controlled experiment on harness skill management currently available.

Terminal-Bench 2.0 / Harbor (Snorkel AI, 2025): The innovation is containerized reproducibility for CLI-focused tasks—a harness framework that manages Docker container lifecycle, provides deterministic environment initialization, and enables complete trajectory capture for offline analysis. Harbor’s design as a reusable harness framework (rather than a task-specific evaluation system) makes it the evaluation infrastructure analog of the general-purpose harnesses surveyed in §4.3.

4.3.2. Multi-Agent Harness Architecture Patterns

The multi-agent dimension of the harness taxonomy deserves specific treatment because it introduces governance requirements that single-agent harnesses do not face. When multiple agents execute within or across harness boundaries, three new governance problems emerge: agent identity management (which agent is performing which action, and with what authority?); inter-agent message validation (what schema and content constraints apply to messages between agents?); and shared state consistency (when multiple agents update shared state, what consistency guarantees must the S-component provide?).

The systems surveyed reveal four distinct multi-agent harness patterns. The role-based orchestration pattern (MetaGPT, ChatDev, CrewAI) assigns fixed roles with fixed communication schemas: each agent-role has a defined input document format and output document format, and the harness validates schema compliance at message boundaries. This pattern achieves predictable coordination at the cost of flexibility—adding a new role requires updating the harness’s schema registry. The market-based coordination pattern (AutoGen, Mixture-of-Agents) enables dynamic agent selection based on task requirements: the harness maintains an agent registry analogous to a tool registry, and agents are selected and invoked based on capability matching and task state. The simulation-substrate pattern (Concordia, Generative Agents) provides a shared world model that all agents read from and write to; the harness governs access to the shared world state through read/write APIs that enforce consistency. The hierarchical delegation pattern (DeerFlow, DeepAgents) distinguishes orchestrator agents from worker agents, with the harness enforcing that worker agents can only take actions authorized by their orchestrator—a permission model analogous to Unix process hierarchies applied to agent ensembles.
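
The hierarchical delegation pattern can be illustrated with a small permission table. The sketch below is a generic illustration and does not describe how DeerFlow or DeepAgents implement delegation; the class, method names, and action strings are hypothetical, and the rule that an agent may only delegate actions it already holds is an assumption that follows the Unix process analogy.

```python
from typing import Dict, Iterable, Set


class DelegationHarness:
    """Tracks which actions each agent is authorized to take."""

    def __init__(self, root: str, root_actions: Iterable[str]) -> None:
        self._grants: Dict[str, Set[str]] = {root: set(root_actions)}

    def delegate(self, orchestrator: str, worker: str, actions: Iterable[str]) -> None:
        """Grant a worker a subset of the orchestrator's own authority."""
        requested = set(actions)
        held = self._grants.get(orchestrator, set())
        if not requested <= held:
            raise PermissionError(
                f"{orchestrator} cannot delegate {sorted(requested - held)}"
            )
        self._grants.setdefault(worker, set()).update(requested)

    def is_authorized(self, agent: str, action: str) -> bool:
        """Check an action against the agent's current grants."""
        return action in self._grants.get(agent, set())


harness = DelegationHarness("orchestrator", {"read_repo", "write_file", "run_tests"})
harness.delegate("orchestrator", "worker-1", {"read_repo"})
print(harness.is_authorized("worker-1", "read_repo"))
print(harness.is_authorized("worker-1", "write_file"))
```

Authority can only narrow as it moves down the hierarchy: a worker that attempts to delegate an action it does not hold raises `PermissionError`.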

Each pattern makes different S-component demands. Role-based orchestration requires document-level state consistency: the output of one agent’s role is the input of the next, and the harness must ensure that document transitions are atomic. Market-based coordination requires agent-registry state: the harness must maintain current availability, capability, and load information for each agent in the registry. Simulation-substrate patterns require world-model consistency: the harness must provide conflict resolution for concurrent writes to shared world state. Hierarchical delegation requires permission-state consistency: the harness must maintain current authorization grants and ensure that delegations are propagated correctly through the agent hierarchy. The absence of a standard multi-agent S-component interface—analogous to MCP’s standardization of the T-component—is a significant gap that the field has not yet addressed.
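For the simulation-substrate case, one common way to provide conflict resolution for concurrent writes is optimistic concurrency with per-key version numbers. The sketch below assumes that technique; it is not the mechanism used by Concordia or Generative Agents, and `WorldState` and `VersionConflict` are hypothetical names.

```python
import threading


class VersionConflict(Exception):
    """Raised when a write is based on a stale read of the shared state."""


class WorldState:
    """Shared key-value world model with optimistic, versioned writes."""

    def __init__(self):
        self._lock = threading.Lock()
        self._data = {}  # key -> (version, value)

    def read(self, key):
        """Return (version, value); unseen keys report version 0 and value None."""
        with self._lock:
            return self._data.get(key, (0, None))

    def write(self, key, value, expected_version):
        """Apply the write only if the key is still at the version the agent read."""
        with self._lock:
            current, _ = self._data.get(key, (0, None))
            if current != expected_version:
                raise VersionConflict(
                    f"{key}: expected version {expected_version}, found {current}"
                )
            self._data[key] = (current + 1, value)
            return current + 1
```

An agent whose write is rejected re-reads the key and retries with the new version, so no update silently overwrites another agent's change.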

4.3.3. Case Study: OpenAI Codex Harness Engineering at Scale

The most ambitious production deployment of agent harness infrastructure to date is OpenAI’s Codex agent system, as documented in practitioner reports by Lopopolo (2026) and in the broader “Harness Engineering” discipline that OpenAI explicitly named in February 2026. The Codex harness demonstrates all six governance components operating in concert at production scale, and provides quantitative validation of the binding constraint thesis through a deployment metric that has no model-capability correlate.

Scale and Architecture. Over a five-month period (August 2025 through February 2026), a team of three to seven engineers built approximately one million lines of production code (application logic, infrastructure, tooling, and documentation) through Codex-generated code, producing approximately 1,500 merged pull requests. At an average of 3.5 PRs per engineer per day, this deployment is the most comprehensive test of agent-at-scale operation reported to date. Critically, every line of code in production was generated by Codex; no lines were hand-written. This makes the Codex harness a forcing function for understanding what infrastructure is necessary to make code-generation agents deployable.

Harness Architecture. The Codex harness implements all six ETCSLV components through a linter-validated dependency ordering: Types → Config → Repo → Service → Runtime → UI. Each layer is generated by Codex, validated by structural tests, and integrated into the next layer. The E-component enforces execution flow through dependency constraints: lower layers must be complete before higher layers execute, preventing race conditions and state corruption. The T-component implements a curated tool registry including source code modification tools (AST-aware patches), testing tools (Python pytest, JavaScript Jest), and observability tools (Chrome DevTools Protocol for DOM access). The C-component provides context through live code introspection: the harness exposes the current repository state, recent CI logs, and execution traces to Codex via a code-generation context API. The S-component persists agent state through Git: each agent iteration commits to a branch, and the harness rolls back commits that fail structural validation. The L-component enforces lifecycle constraints: agents move through planning → implementation → testing → integration phases, with harness-enforced gates preventing progression to the next phase until the current phase passes validation. The V-component is automated CI/CD validation: Codex’s generated code is evaluated through both structural tests (linting, type checking) and behavioral tests (execution against the test suite).
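The layer ordering above can be sketched as a simple dependency check. The sketch assumes that a layer may depend only on itself or on layers earlier in the ordering; that rule, the `check_dependencies` function, and the pair-based input format are illustrative assumptions, not the Codex team's actual linter.

```python
# Layer ordering as given in the text, from lowest to highest.
LAYERS = ["Types", "Config", "Repo", "Service", "Runtime", "UI"]
RANK = {name: index for index, name in enumerate(LAYERS)}


def check_dependencies(deps):
    """Return the (importer, imported) pairs that point up the layer ordering.

    deps is an iterable of (importer_layer, imported_layer) pairs.
    Assumption: same-layer and downward dependencies are allowed.
    """
    violations = []
    for importer, imported in deps:
        if RANK[imported] > RANK[importer]:
            violations.append((importer, imported))
    return violations
```

For example, `check_dependencies([("Service", "Types"), ("Types", "UI")])` flags only the second pair, because `Types` sits below `UI` in the ordering.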

The Harness as the Binding Constraint. The Codex team’s own retrospective explicitly frames harness quality as the binding constraint, not model capability. Lopopolo (2026) states: “Early progress was slower than we expected, not because Codex was incapable, but because the environment was underspecified.” This observation points to a critical insight: once models reach a threshold capability level (and Codex appears to have crossed that threshold), the limiting factor is how well the harness channels that capability toward productive action. The team further reports that throughput increased rather than saturated as the team grew from three to seven engineers—a violation of Brooks’s Law that the team attributes to each engineer operating an independent harness instance. This finding suggests that the harness design enables parallelism in agent execution that would be impossible with a shared codebase and single agent: each agent has its own branch, its own execution environment, and its own validation loop, reducing contention and enabling independent progress.

Implications for Harness Design. The Codex deployment demonstrates that successful agent-at-scale deployment depends critically on three harness properties: (1) elimination of environment underspecification: the harness must make all necessary constraints explicit in machine-readable form (linter rules, type signatures, schema definitions) rather than leaving them implicit in practitioner knowledge; (2) action validation before execution: the harness validates generated code for structural correctness before executing it, so that failures surface at static validation rather than as runtime crashes; and (3) feedback loop closure: the harness provides immediate, actionable feedback (test failures, type errors, linting violations) that guides the next iteration without human intervention. These three properties are not model-capability requirements; they are harness-engineering requirements that become critical constraints at production scale.
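Properties (2) and (3) can be sketched together: validate generated code statically, and return the findings as feedback instead of executing code that fails. The example uses Python's standard `ast` module; the specific rule (every function needs a return annotation) and the names `static_feedback` and `run_if_valid` are hypothetical stand-ins for a real linter and type checker.

```python
import ast


def static_feedback(source: str) -> list[str]:
    """Return actionable messages; an empty list means the code passed static checks."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"line {exc.lineno}: syntax error: {exc.msg}"]
    feedback = []
    for node in ast.walk(tree):
        # Illustrative rule only: require a return annotation on every function.
        if isinstance(node, ast.FunctionDef) and node.returns is None:
            feedback.append(
                f"line {node.lineno}: function {node.name} lacks a return annotation"
            )
    return feedback


def run_if_valid(source: str, namespace: dict):
    """Execute the code only if static validation passes; otherwise return feedback."""
    feedback = static_feedback(source)
    if feedback:
        return False, feedback  # fed back to the agent for the next iteration
    exec(compile(source, "<generated>", "exec"), namespace)
    return True, []
```

The returned messages carry line numbers so that the agent's next attempt can target the reported locations directly.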

4.4. What the Taxonomy Reveals

The taxonomy makes several structural observations visible that individual system descriptions obscure. Before presenting them, we note the representativeness limitations of a 22-system corpus. The taxonomy covers all major open-source harness systems (OpenHands, AIOS, SWE-agent, Voyager, MemGPT, LangGraph, AutoGen) and the major commercial systems whose documentation is publicly available (Claude Code, DeepAgents, DeerFlow). It includes the most widely cited evaluation infrastructure systems (HAL, AgencyBench, SWE-bench) and the most widely deployed MCP/A2A protocol implementations. However, it almost certainly misses enterprise-internal harnesses deployed within large organizations that have not published their designs, and it may undercount specialized domain harnesses (medical AI, financial AI, robotics) that do not use the “agent harness” terminology. The structural patterns identified below should be understood as robust across the covered systems but potentially unrepresentative of the broader deployed ecosystem.

The first and most consequential observation is the modularity gap: full-stack harnesses are built largely from proprietary implementations of each governance component, despite the existence of excellent shareable implementations of specific components, such as MemGPT for C and MCP servers for T. The ecosystem lacks comparable standard components for S (state store), L (lifecycle hooks), and V (evaluation interface). Every full-stack harness re-implements these functions independently, with no shared foundation and no portability between harnesses.

A second observation concerns the framework-harness boundary as a design decision: several systems (AutoGen, Google ADK) occupy an ambiguous position, suggesting this boundary is not fixed but reflects a choice about where to draw the line between “things developers configure” and “things the system handles.”

Third, evaluation infrastructure is a forcing function: because benchmark results must be reproducible and comparable, evaluation harnesses impose strict requirements on environment isolation and state management that production deployment harnesses often relax, producing some of the most carefully engineered execution environments in the field.

Having established that the six-component model accurately describes the architectural landscape, we now shift perspective from structure to dynamics: what technical challenges emerge from the interactions between these components when they operate at production scale? The completeness matrix shows which components each system implements; the next section examines the substantive engineering problems that arise when those components are implemented together.

Figure 13.

Cross-component challenge coupling matrix (9×6). Rows represent the nine technical challenge areas analyzed in §5–7; columns represent the six harness components (E, T, C, S, L, V). Cell shading indicates coupling strength: dark = mandatory coupling (removing this component breaks the harness for this challenge), medium = typical coupling present in most production deployments, light = optional coupling present only in specialized systems. Row 2 (Evaluation & Benchmarking) is the most densely coupled challenge, coupling mandatorily to all six components—explaining why evaluation remains the hardest unsolved problem in harness design. The density of cross-cell couplings also explains why monolithic harness implementations have outperformed modular component reuse: clean component interfaces are structurally infeasible given this coupling density.
