Submitted: 07 April 2026

Posted: 09 April 2026

Abstract

Keywords:

1. Introduction

1.1. The Model Selection Problem

1.2. Why Quantum?

1.3. Contributions

- 1.

- Novel problem formulation: The first QUBO encoding of the LLM Cascade Routing Problem, with cost minimization, quality constraints, budget limits, and latency SLAs.

- 2.

- Real quantum hardware evaluation: QAOA executed on three IBM Heron 156-qubit processors via IBM Quantum, providing verifiable hardware results (16 quantum jobs).

- 3.

- Shallow circuit advantage: Empirical demonstration that QAOA significantly outperforms deeper circuits on NISQ hardware for this problem class.

- 4.

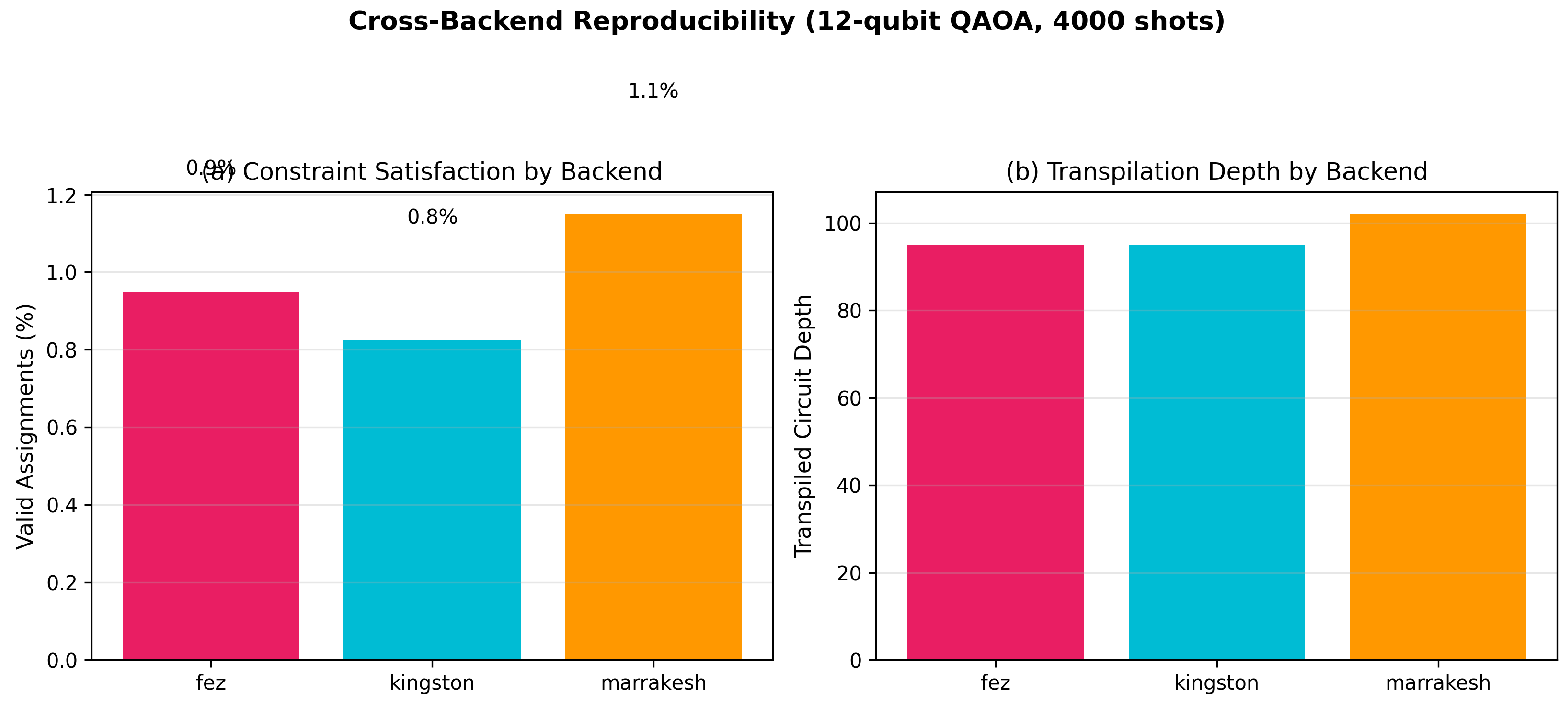

- Cross-backend reproducibility: Consistent results across three distinct quantum processors.

- 5.

- Open-source implementation: Complete Cirq/Qiskit code and benchmark suite for reproducibility.

2. Related Work

2.1. Quantum Optimization for Scheduling

2.2. Classical LLM Routing

2.3. Quantum Machine Learning

2.4. Research Gap

3. Problem Formulation

3.1. The LLM Cascade Routing Problem

3.2. Objective Function

3.3. QUBO Encoding

3.4. Complexity

4. Quantum Solution: QAOA

4.1. Algorithm Overview

4.2. Circuit Construction for LCRP

1. Cost layer: RZ rotations on each qubit (linear terms) and RZZ rotations on coupled pairs (one-hot enforcement).

2. Mixer layer: RX rotations on all qubits.
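The two layers can be illustrated with a small noiseless simulation. The sketch below is not the paper's Cirq/Qiskit implementation; it is a pure-Python state-vector model of a single QAOA layer (p = 1), assuming the cost is supplied as a classical function of the bitstring. The function name `qaoa_p1_probabilities` and its parameters are hypothetical. The diagonal phase applied in the cost layer is what single-qubit Z rotations and two-qubit ZZ rotations implement on hardware, up to a global phase.

```python
import cmath
import math


def qaoa_p1_probabilities(cost, n_qubits, gamma, beta):
    """Return measurement probabilities after one QAOA layer (p = 1).

    cost: function mapping an integer bitstring z (0 <= z < 2**n_qubits)
          to its classical energy (hypothetical interface for this sketch).
    """
    dim = 2 ** n_qubits

    # Initial state: uniform superposition over all bitstrings.
    amp = 1.0 / math.sqrt(dim)
    state = [complex(amp, 0.0)] * dim

    # Cost layer: diagonal phase exp(-i * gamma * C(z)) on each basis state.
    state = [a * cmath.exp(-1j * gamma * cost(z)) for z, a in enumerate(state)]

    # Mixer layer: exp(-i * beta * X) applied to every qubit in turn.
    c, s = math.cos(beta), math.sin(beta)
    for q in range(n_qubits):
        mask = 1 << q
        new_state = list(state)
        for z in range(dim):
            if (z & mask) == 0:
                a0, a1 = state[z], state[z | mask]
                new_state[z] = c * a0 - 1j * s * a1
                new_state[z | mask] = -1j * s * a0 + c * a1
        state = new_state

    return [abs(a) ** 2 for a in state]


# Toy cost: penalize any bitstring that does not have exactly one bit set
# (a one-hot constraint over 3 qubits, with an illustrative weight of 2.0).
def one_hot_cost(z):
    return 2.0 * (bin(z).count("1") - 1) ** 2


probs = qaoa_p1_probabilities(one_hot_cost, n_qubits=3, gamma=0.4, beta=0.3)
print([round(p, 4) for p in probs])
```

With `beta = 0` the mixer is the identity and the output distribution stays uniform regardless of `gamma`, which is a quick sanity check that the cost layer only changes phases.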

4.3. Constraint Penalty Calibration

- Assignment penalty: must dominate the other terms to enforce valid solutions

- Quality penalty: must exceed the maximum single-assignment cost savings

- Budget penalty: soft constraint

- Latency penalty: soft constraint, rarely binding
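The calibration rules above can be checked on a toy instance. The following is a minimal sketch, not the paper's encoding: it assumes a one-hot variable x[t][m] per task and model, a quadratic assignment penalty, and a linear quality penalty proportional to the quality shortfall. The budget and latency terms are omitted. The names `lcrp_energy`, `brute_force`, `a_assign`, and `b_quality` are hypothetical, and the choice of three models and the per-task quality requirements are illustrative assumptions (costs and quality scores are taken from the model table).

```python
from itertools import product


def lcrp_energy(x, cost, quality, required, a_assign, b_quality):
    """Energy of a 0/1 assignment matrix x[t][m] under the assumed penalties."""
    total = 0.0
    for t, row in enumerate(x):
        for m, bit in enumerate(row):
            # Linear terms: model cost plus a penalty on any quality shortfall.
            shortfall = max(0.0, required[t] - quality[m])
            total += bit * (cost[m] + b_quality * shortfall)
        # Quadratic term: exactly one model must be selected per task.
        total += a_assign * (sum(row) - 1) ** 2
    return total


def brute_force(n_tasks, cost, quality, required, a_assign, b_quality):
    """Enumerate all bitstrings and return (energy, assignment) of the minimum."""
    n_models = len(cost)
    best = None
    for bits in product((0, 1), repeat=n_tasks * n_models):
        x = [bits[t * n_models:(t + 1) * n_models] for t in range(n_tasks)]
        energy = lcrp_energy(x, cost, quality, required, a_assign, b_quality)
        if best is None or energy < best[0]:
            best = (energy, x)
    return best


cost = [0.0, 0.25, 3.0]        # gemma2:2b, Claude Haiku, Claude Sonnet
quality = [0.30, 0.60, 0.82]
required = [0.5, 0.8]          # assumed per-task quality requirements

print(brute_force(2, cost, quality, required, a_assign=10.0, b_quality=20.0))
print(brute_force(2, cost, quality, required, a_assign=10.0, b_quality=1.0))
```

In this instance a quality weight of 20 selects Haiku for the first task and Sonnet for the second, while a weight of 1 lets the optimizer trade quality for cost and pick cheaper models that miss the requirements, which is the failure mode the second calibration rule guards against.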

5. Classical Baselines

6. Experimental Setup

6.1. Data Source

6.2. Quantum Hardware

- ibm_fez: Heron r2, heavy-hex topology

- ibm_kingston: Heron r2, heavy-hex topology

- ibm_marrakesh: Heron r2, heavy-hex topology

6.3. Experiment Design

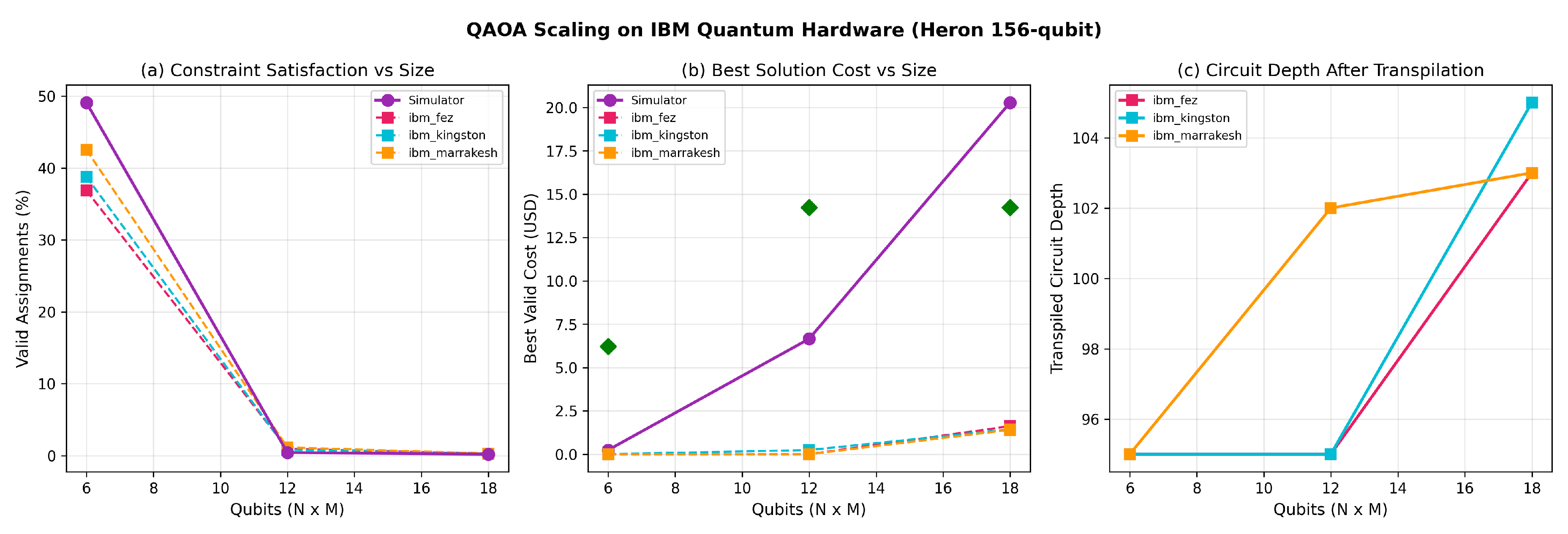

- Experiment A — Scaling: 6, 12, and 18 qubits (, , ) on all 3 backends. Tests noise impact as problem size grows.

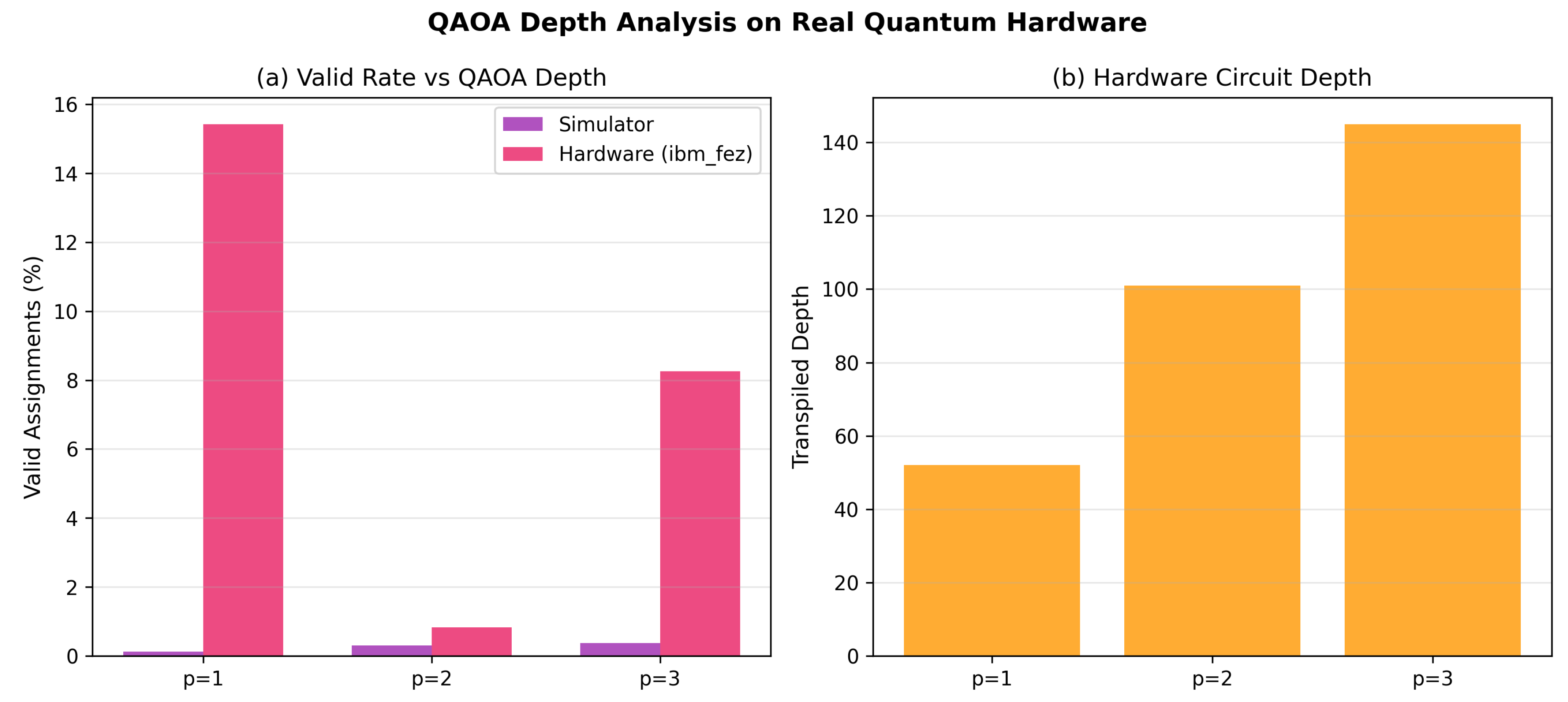

- Experiment B — Circuit Depth: on 12 qubits (ibm_fez). Tests whether deeper QAOA improves solutions on noisy hardware.

- Experiment C — High-Shot: 8000 shots at 12 qubits (ibm_fez). Tests whether more samples compensate for noise.

7. Results

7.1. Penalty Weight Sensitivity

7.2. Hardware Scaling (Experiment A)

7.3. Circuit Depth Analysis (Experiment B)

7.4. Cross-Backend Reproducibility

7.5. High-Shot Experiment (Experiment C)

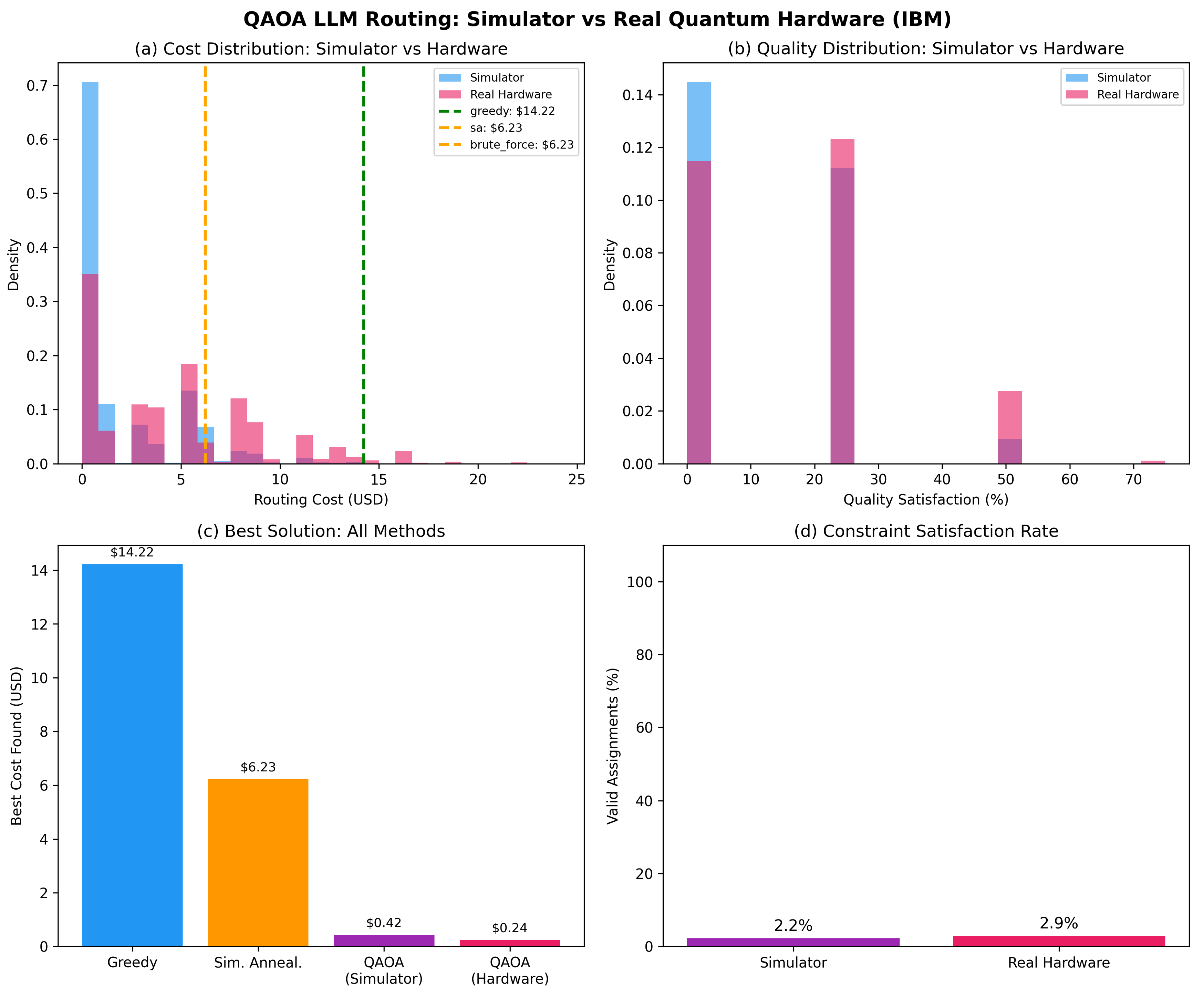

7.6. Comparison Summary

8. Discussion

8.1. The Shallow Circuit Advantage

1. Fewer layers, more shots: Rather than deepening the circuit, run many more shots at p = 1 to better sample the solution space.

2. Error mitigation at p = 1: Apply error mitigation techniques (zero-noise extrapolation, probabilistic error cancellation) to the shallow circuit, where they are most effective.

3. Warm-starting: Initialize parameters from classical solutions [13] rather than random restarts.

8.2. Constraint Satisfaction on NISQ

1. Feasibility-first decoding: Post-process measurements by projecting each invalid bitstring to its nearest valid assignment (e.g., for each task, keep only the model with the highest measurement probability).

2. Quantum-aware constraint mixers: Replace the standard mixer with a constraint-preserving mixer [14] that only explores the feasible subspace, ensuring every measurement is valid by construction.

3. Hybrid approach: Use QAOA to generate a set of candidate solutions, then classically refine the top candidates to satisfy constraints.
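Feasibility-first decoding could look roughly like the sketch below. It is one possible reading of the rule in the first item, not the authors' code: per-qubit marginal probabilities are estimated from the measured counts, and within each task block only the selected model with the highest marginal is kept (or the highest-marginal model overall if none was selected). The helper names are hypothetical, and the bit order assumes the leftmost character is qubit 0; Qiskit count keys are little-endian, so they would need reversing first.

```python
from collections import Counter


def marginals(counts, n_bits):
    """Estimate P(bit i = 1) for each qubit from a counts dictionary."""
    shots = sum(counts.values())
    ones = [0] * n_bits
    for bits, c in counts.items():
        for i, ch in enumerate(bits):
            if ch == "1":
                ones[i] += c
    return [o / shots for o in ones]


def repair(bits, n_tasks, n_models, marg):
    """Project one bitstring onto a one-hot assignment per task block."""
    out = []
    for t in range(n_tasks):
        block = range(t * n_models, (t + 1) * n_models)
        chosen = [i for i in block if bits[i] == "1"]
        # If no model was selected, consider every model in the block.
        candidates = chosen if chosen else list(block)
        keep = max(candidates, key=lambda i: marg[i])
        out.extend("1" if i == keep else "0" for i in block)
    return "".join(out)


def decode(counts, n_tasks, n_models):
    """Repair every measured bitstring and re-aggregate the counts."""
    marg = marginals(counts, n_tasks * n_models)
    repaired = Counter()
    for bits, c in counts.items():
        repaired[repair(bits, n_tasks, n_models, marg)] += c
    return repaired


# Made-up counts for a 2-task x 3-model problem (illustration only).
example_counts = {"010001": 40, "110001": 30, "000001": 20, "010011": 10}
print(decode(example_counts, n_tasks=2, n_models=3))
```

Bitstrings that already satisfy the one-hot condition pass through unchanged, so the decoder only alters the invalid share of the shots.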

8.3. When Will Quantum Be Practical for LCRP?

- Current: 6 qubits yield a 37–43% valid rate on Heron (∼0.5% two-qubit gate error)

- Needed: 40+ tasks × 10+ models = 400+ qubits at <0.1% gate error

- Timeline: IBM targets 100,000+ qubit systems by 2033; error rates are improving ∼2× per generation

- Projected: practical advantage for LCRP-scale problems in 2030–2035

8.4. Practical Implications

1. Formal problem structure: LCRP as a QUBO enables any combinatorial solver (quantum annealing, QAOA, or classical SAT), not just heuristics.

2. Solver-agnostic architecture: Production systems can implement a solver interface that swaps between greedy (today), SA (near-term), and QAOA (future).

3. Quantum-ready encoding: The QUBO is directly executable on D-Wave quantum annealers or future fault-tolerant gate-based machines.
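A solver-agnostic architecture of the kind described in the second item might be sketched as follows. All names here (`RoutingSolver`, `GreedySolver`, `ExhaustiveSolver`, `route`) are hypothetical, and the exhaustive solver is only a stand-in for an SA or QAOA backend; the point is that callers depend on the interface rather than on a specific solver. Budget and latency constraints are left out to keep the sketch short.

```python
from itertools import product
from typing import List, Protocol, Sequence


class RoutingSolver(Protocol):
    """Anything that maps per-task quality requirements to model indices."""

    def solve(self, cost: Sequence[float], quality: Sequence[float],
              required: Sequence[float]) -> List[int]:
        ...


class GreedySolver:
    def solve(self, cost, quality, required):
        choice = []
        for q_min in required:
            ok = [m for m in range(len(cost)) if quality[m] >= q_min]
            if ok:
                # Cheapest model that meets the task's quality requirement.
                choice.append(min(ok, key=lambda m: cost[m]))
            else:
                # No model qualifies: fall back to the highest-quality model.
                choice.append(max(range(len(cost)), key=lambda m: quality[m]))
        return choice


class ExhaustiveSolver:
    """Stand-in for a global optimizer (SA, QAOA); enumerates all assignments."""

    def solve(self, cost, quality, required):
        best, best_cost = None, float("inf")
        for choice in product(range(len(cost)), repeat=len(required)):
            if any(quality[m] < q for m, q in zip(choice, required)):
                continue  # skip assignments that violate a quality requirement
            total = sum(cost[m] for m in choice)
            if total < best_cost:
                best, best_cost = list(choice), total
        if best is None:
            raise ValueError("no assignment satisfies the quality requirements")
        return best


def route(solver: RoutingSolver, cost, quality, required) -> List[int]:
    """Production code calls this and never names a concrete solver."""
    return solver.solve(cost, quality, required)


cost = [0.0, 0.25, 3.0]
quality = [0.30, 0.60, 0.82]
required = [0.5, 0.8, 0.2]     # assumed per-task requirements
print(route(GreedySolver(), cost, quality, required))
print(route(ExhaustiveSolver(), cost, quality, required))
```

Without coupling constraints such as a shared budget, the two solvers agree on this instance; a global solver only pays off once tasks compete for a shared resource.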

8.5. Limitations

1. Small scale: Problem sizes up to 18 qubits are within classical solvability. The quantum advantage hypothesis requires testing at 100+ qubits.

2. Static routing: We optimize batch assignment at a single time point, not dynamic real-time routing.

3. Simplified quality model: A single quality score per model; real quality varies by task domain.

4. No error mitigation: We report raw hardware results without applying quantum error mitigation techniques, which would likely improve valid rates.

5. Pre-optimized parameters: Parameters were tuned on a noiseless simulator; noise-aware parameter optimization could yield better hardware results.

9. Conclusion and Future Work

Appendix A. Reproducibility

Appendix B. IBM Quantum Job IDs

References

1. Xi, Z. The Rise and Potential of Large Language Model Based Agents: A Survey. arXiv 2023, arXiv:2309.07864.

2. Farhi, E.; Goldstone, J.; Gutmann, S. A Quantum Approximate Optimization Algorithm. arXiv 2014, arXiv:1411.4028.

3. Deller, A. Quantum Approximate Optimization for the Job Shop Scheduling Problem. European Journal of Operational Research 2023.

4. IBM Research. Workforce Task Execution Scheduling Using Quantum Computers. IEEE QCE 2024.

5. QTIS: A QAOA-Based Time-Interval Scheduler. arXiv 2025, arXiv:2511.15590.

6. D-Wave Systems. Workforce Scheduling with Quantum Optimization. D-Wave Quantum 2024.

7. Ong, I. RouteLLM: Learning to Route LLMs with Preference Data. arXiv 2024, arXiv:2406.18665.

8. Chen, L. FrugalGPT: How to Use Large Language Models While Reducing Cost and Improving Performance. arXiv 2023, arXiv:2305.05176.

9. Havlicek, V. Supervised Learning with Quantum-Enhanced Feature Spaces. Nature 2019, 567, 209–212.

10. IonQ. Supercharging AI with Quantum Computing: Quantum-Enhanced Large Language Models. IonQ Blog 2025.

11. Martello, S.; Toth, P. Knapsack Problems: Algorithms and Computer Implementations; Wiley, 1990.

12. Guerreschi, G.; Matsuura, A. QAOA for Max-Cut Requires Hundreds of Qubits for Quantum Speed-up. Scientific Reports 2019, 9.

13. Egger, D. Warm-starting Quantum Optimization. Quantum 2021, 5, 479.

14. Hadfield, S. From the Quantum Approximate Optimization Algorithm to a Quantum Alternating Operator Ansatz. Algorithms 2019, 12(2).

15. Quantum Machine Learning for Anomaly Detection: A Comprehensive Survey. Future Generation Computer Systems 2024, arXiv:2408.11047.

16. Hu, S. RouterBench: A Benchmark for Multi-LLM Routing System. arXiv 2024, arXiv:2403.12031.

17. Powell, M. J. D. A Direct Search Optimization Method That Models the Objective and Constraint Functions by Linear Interpolation; 1994.

| Model | Cost/1K | Quality | Latency | Context |
|---|---|---|---|---|
| gemma2:2b | $0.000 | 0.30 | 50ms | 8K |
| Claude Haiku | $0.250 | 0.60 | 200ms | 200K |
| Claude Sonnet | $3.000 | 0.82 | 500ms | 200K |
| Claude Opus | $15.00 | 0.95 | 1500ms | 200K |
| o1 | $60.00 | 0.99 | 3000ms | 128K |

| Penalty weight | Cost ($) | Quality | Observation |
|---|---|---|---|
| 5 | 0.24 | 25% | Cost-dominant |
| 10 | 0.24 | 25% | Cost-dominant |
| 20 | 6.23 | 50% | Transition region |
| 40 | 14.22 | 75% | Matches greedy |
| 80 | 14.22 | 75% | Saturated |

| Qubits (tasks × models) | Simulator valid rate | Simulator best cost | ibm_fez valid rate | ibm_kingston valid rate | ibm_marrakesh valid rate |
|---|---|---|---|---|---|
| 6 (2×3) | 49.1% | $0.24 | 36.9% | 38.8% | 42.5% |
| 12 (4×3) | 0.4% | $6.65 | 0.9% | 0.8% | 1.1% |
| 18 (6×3) | 0.2% | $20.28 | 0.3% | 0.2% | 0.3% |
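One reference point for reading these valid rates is the share of bitstrings that are valid by chance. The sketch below assumes that "valid" means exactly one model selected per task (the one-hot condition); if validity also includes quality or budget checks, the chance level would be lower. Under that assumption a uniformly random bitstring over T tasks and M models is valid with probability (M / 2^M)^T. The function name is hypothetical.

```python
def uniform_valid_fraction(n_tasks, n_models):
    """Fraction of all bitstrings with exactly one 1 in each task block."""
    # Each block of n_models bits has n_models one-hot patterns out of 2**n_models.
    return (n_models / 2 ** n_models) ** n_tasks


for n_tasks in (2, 4, 6):
    frac = uniform_valid_fraction(n_tasks, 3)
    print(f"{n_tasks}x3 ({3 * n_tasks} qubits): {100 * frac:.2f}%")
```

For the three problem sizes this gives roughly 14.1%, 2.0%, and 0.28%, which can be set against the measured rates to judge how much of the valid fraction reflects optimization rather than random sampling.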

| p | Simulator valid rate | Simulator depth | Hardware (ibm_fez) valid rate | Hardware (ibm_fez) depth | Hardware (ibm_fez) best cost |
|---|---|---|---|---|---|
| 1 | 0.1% | — | 15.4% | 52 | $0.50 |
| 2 | 0.3% | — | 0.8% | 101 | N/A |
| 3 | 0.4% | — | 8.2% | 145 | $0.42 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).